Abstract

Distributed acoustic sensing (DAS) systems utilize optical fibers as the sensing medium, offering long-distance and wide-range real-time monitoring capabilities. They are widely applied in fields such as seismic monitoring, pipeline leak detection, and railway safety monitoring. To address the challenge of traditional Power Spectral Density (PSD) analysis methods in accurately identifying effective signal frequencies under high-frequency noise interference, this paper proposes a novel spectral feature extraction method that combines variational mode decomposition (VMD) and modal power spectral density (PSD). This approach first employs VMD to decompose vibration signals, effectively filtering out noise and extracting valid signals. Subsequently, PSD is used to extract the spectral characteristics of the sub-modes, and Gaussian functions are applied to fit these spectral curves, forming a feature descriptor matrix that characterizes the vibration source. By compressing the original vibration data into this compact feature matrix, the method aims to replace the original data with the feature matrix for deep learning, enabling intelligent recognition and diagnosis of vibration signal states through training and classification. Using vibration data from coal conveyor rollers at Qinhuangdao Port as an example, the effectiveness of this method in extracting bearing spectral features has been validated. The research findings indicate that this method is suitable for vibration load response evaluation and provides a novel technical tool for intelligent monitoring in DAS systems.

Similar content being viewed by others

Introduction

Research background

Distributed acoustic sensing (DAS) is an advanced fiber-based sensing technology that leverages the backscattering characteristics of laser light in optical fibers to detect external vibrations and acoustic signals1. When pulsed laser propagates through an optical fiber, minor inhomogeneities within the fiber generate Rayleigh backscatter. External vibrations or strain induce changes in the fiber’s length or refractive index, resulting in variations in the phase and intensity of the Rayleigh backscattered light. By demodulating and analyzing the returned scatter signal, vibration information along the fiber’s length can be obtained2. As shown in Fig. 1, the time-frequency response waterfall plot of the DAS system illustrates the variation of acoustic signals along the fiber over time. Fig. 2 presents the DAS demodulator used for signal demodulation and analysis. This characteristic allows a single optical fiber to function as tens of thousands of continuously distributed sensors, offering high sensitivity, high spatial resolution, and long-distance monitoring capabilities.

The Time-frequency response waterfall plot of the DAS system.

DAS demodulator.

Thanks to these unique technical advantages, DAS technology has been applied across multiple fields. For example, in seismic monitoring, DAS systems can capture the propagation characteristics of seismic waves, providing support for earthquake early warning and geophysical studies. In oil and gas pipeline safety monitoring, DAS systems can detect vibration signals around pipelines to identify events such as leaks, oil theft, and unauthorized excavations, ensuring pipeline operation safety. In boundary security and perimeter protection, DAS systems are deployed in critical areas like borders, airports, and nuclear power stations for real-time intrusion detection. Moreover, DAS technology is also applied in railway and highway monitoring to detect abnormal vibrations in tracks and roads, identifying potential structural hazards, as well as in marine environmental monitoring through submarine cables for real-time detection of phenomena such as oceanic earthquakes and waves3.

Despite the enormous application potential of DAS, significant challenges remain in its practical use, particularly in data processing and analysis. DAS systems typically acquire data at sampling rates ranging from several kHz to hundreds of kHz to capture rapidly changing vibration signals, resulting in massive data generation per unit time. Since optical fibers can extend tens or even hundreds of kilometers, the number of measurement points is enormous, leading to extremely high spatial resolution in data. The combination of high frequency and high spatial resolution means that DAS systems produce vast amounts of data per second, usually in the GB or even TB range. These data characteristics pose multiple technical challenges, such as the high demands on storage devices and transmission networks, difficulties in real-time or near real-time processing, and challenges posed by complex noise environments in signal extraction, feature identification, and pattern classification.

In recent years, significant progress has been made in DAS data processing and classification algorithms. Research addressing the complex characteristics of DAS data has primarily focused on signal preprocessing and noise reduction, feature extraction and dimensionality reduction, the application of machine learning and deep learning, as well as signal decomposition and reconstruction methods. Although signal quality has been improved to some extent, there are still deficiencies in noise resistance under strong noise environments. In terms of feature extraction, time-domain and frequency-domain statistical features (such as mean, variance, and power spectral density), short-time Fourier transform (STFT), and wavelet packet transform (WPT) have been widely applied. Dimensionality reduction algorithms like Principal Component Analysis (PCA) and Linear Discriminant Analysis (LDA) have also been integrated to reduce feature space dimensionality.

With the rapid development of artificial intelligence technologies, machine learning and deep learning algorithms have demonstrated superior performance in DAS data classification. Traditional machine learning algorithms such as Support Vector Machine (SVM), Random Forest (RF), and k-Nearest Neighbor (k-NN) have been employed for DAS data classification, but their performance relies heavily on the quality of manual features4. In contrast, deep learning models like Convolutional Neural Networks (CNN) and Long Short-Term Memory (LSTM) networks have gradually become mainstream methods for DAS data classification due to their ability for automatic feature extraction5. However, training deep learning models typically requires a large amount of labeled data, and their high computational complexity limits their application in scenarios with stringent real-time requirements. Furthermore, signal decomposition methods such as empirical mode decomposition (EMD), variational mode decomposition (VMD), and Ensemble Empirical Mode Decomposition (EEMD) perform well in extracting multiscale feature signals, but computational complexity remains a drawback.

Addressing these challenges requires the development of efficient data processing and analysis methods to reduce data dimensionality, extract critical features, and significantly improve data processing speed and accuracy. This is not only key to overcoming the current bottlenecks in DAS technology but also essential for promoting its broader application in fields such as seismic monitoring, pipeline safety, and boundary protection.

Research content

Considering the limitations of current DAS data processing methods in terms of efficiency and classification accuracy, this study aims to propose innovative solutions to enhance the real-time monitoring and event classification capabilities of DAS systems.

First, this study introduces a feature extraction method based on VMD and Gaussian function fitting to address the nonlinear, non-stationary, and high-noise characteristics of DAS signals. VMD decomposes complex signals into several intrinsic mode functions (IMFs) with limited bandwidth, effectively avoiding mode mixing and boundary effects, making it suitable for analyzing nonlinear and non-stationary signals6. On this basis, Fourier transform is first applied to the IMF components to obtain the frequency spectrum, followed by calculating the power spectral density (PSD). Gaussian function fitting is then performed on the PSD curve to extract key parameters such as central frequency, amplitude, and bandwidth, thereby accurately describing the spectral characteristics of the signals. These features form a highly condensed feature matrix, which not only improves the accuracy of feature extraction but also reduces the dimensionality of feature vectors, providing high-quality inputs for subsequent classification models.

Next, using this feature matrix, machine learning is applied for rapid classification of DAS data to meet real-time requirements and improve classification accuracy. For the characteristics of DAS data, models such as XGBoost and LightGBM, known for being simple in structure and fast in training, as well as neural network models like Multilayer Perceptron (MLP), are selected. Optimization techniques like the Adam optimizer and methods such as batch normalization are utilized to accelerate model training. Moreover, cross-validation and hyperparameter tuning are employed to further enhance model performance, ensuring accuracy and generalization in classifying different vibration events.

The goal of this study is to acquire a feature matrix from the data source using an efficient feature extraction method, removing the vast majority of redundant information, and then applying deep learning to improve the efficiency and accuracy of DAS data processing. At the same time, computational complexity is reduced, providing theoretical support and technical assurance for the practical application of DAS technology in fields such as security monitoring, seismic exploration, and pipeline monitoring.

In addition, this processing method appears to be applicable beyond vibration data. According to other tests conducted by the research team, audio data and hyperspectral data can also be analyzed and processed using the same approach, demonstrating the method’s strong versatility and vitality.

Related work

DAS technology utilizes optical fibers to sense vibration signals along the fiber, and it is widely applied in areas such as seismic monitoring, pipeline leakage detection, and security surveillance. However, DAS signals often contain a large amount of noise as well as complex nonlinear and non-stationary components, making efficient processing and analysis essential for extracting useful information.

Traditional DAS data processing methods primarily involve signal filtering and feature extraction. For signal filtering, commonly used techniques include time-domain and frequency-domain filtering7. Time-domain filtering suppresses specific frequency band noise using low-pass, high-pass, or band-pass filters to improve signal quality. Frequency-domain filtering leverages Fourier Transform to transform the signal into the frequency domain, allowing unnecessary components to be removed. For non-stationary signals, time-frequency analysis methods such as wavelet transform are also widely used, enabling the simultaneous acquisition of both temporal and spectral information, thereby enhancing the handling of complex signals.

In the feature extraction phase, the main goal is to extract representative parameters from the filtered signals to inform subsequent recognition and classification tasks. Commonly used features include:

Time-domain features: Parameters such as mean, variance, peak value, and root mean square (RMS) that reflect the amplitude and energy distribution of the signal.

Frequency-domain features: Using power spectral analysis to extract spectral characteristics like dominant frequency, bandwidth, and spectral centroid to describe the signal’s frequency distribution.

Time-frequency features: Combining methods like Short-Time Fourier Transform (STFT) or wavelet transform to analyze signal variations across different time and frequency scales, especially for non-stationary signals.

However, conventional DAS data processing techniques face significant limitations in handling complex signals. Handcrafted features may fail to adequately capture deep-level information within the signals, limiting classification accuracy. Furthermore, traditional filtering methods often underperform when dealing with high levels of noise or nonlinear signals, thereby adversely affecting feature extraction.

EMD of vibration signal.

VMD of vibration signal.

To address these challenges, recent research has explored more advanced signal processing and feature extraction techniques. For instance, adaptive signal decomposition methods such as EMD and VMD have been introduced, which can more effectively handle nonlinear and non-stationary signals. EMD is a data-driven signal decomposition method that breaks down complex signals into a series of IMFs, each representing a specific frequency component of the signal, as shown in Fig. 3. Unlike traditional Fourier Transform or wavelet transform, EMD does not require predefined basis functions and can adaptively decompose signals based on their local characteristics, making it particularly suitable for processing nonlinear and non-stationary signals. However, EMD also suffers from issues such as mode mixing and boundary effects, which limit its application in certain scenarios8.

In contrast, VMD decomposes signals into finite-bandwidth mode functions through a variational framework, avoiding the mode mixing problem of EMD while demonstrating stronger noise resistance and robustness, as shown in Fig. 4. By combining methods such as Gaussian function fitting, the spectral characteristics of signals can be precisely described, thereby improving the efficiency and accuracy of DAS data processing and meeting the requirements for real-time performance and reliability in practical applications.

Methodology

Data acquisition and collection

The DAS system architecture is shown in Fig. 5. First, the narrow-linewidth laser outputs continuous light. The continuous light is transformed into optical pulses by the AOM modulator, amplified by the EDFA, and then injected into sensing optical fiber. The sensing optical fiber is deployed in the target monitoring region, closely coupled with the environment to perceive external acoustic vibration signals. As the optical pulses propagate along the optical fiber, Rayleigh scattering occurs due to the microscopic inhomogeneities of the fiber material, causing part of the scattered light to return in a backward direction. These scattered signals carry spatial position information and phase information of different points in the optical fiber.

Optical path diagram of DAS system. Image adapted from [https://zhuanlan.zhihu.com/p/571689135].

The scattered signals are guided to the detection module via an optical coupler or optical circulator. The photodetectors in the module convert the received scattered optical signals into electrical signals. The intensity of these electrical signals varies with time and is related to the propagation path of the optical pulses in the fiber and the vibrations affecting the fiber. For achieving high-resolution spatial positioning and vibration detection, the detection module further performs analog-to-digital conversion (ADC) on the electrical signals, converting them into digital signals. These digital signals are then stored in real-time in data acquisition devices. By controlling the repetition frequency of the pulses, it is possible to continuously acquire dynamic changes along the full length of the fiber, thereby realizing distributed sensing of external vibration events5.

After acquisition, the digitized scattered signals are sent to the data processing unit. Using signal demodulation techniques, the data processing unit extracts phase information from the scattered light and reconstructs the spatial position and vibration characteristics of every point in the fiber through time and frequency domain analysis. In this way, the DAS system can monitor acoustic vibration signals along the fiber in real-time and record them as high-resolution time series data.

VMD calculation

VMD flow chart.

VMD in time domain: modal component signals.

Variational Mode Decomposition (VMD) is a non-recursive and adaptive signal decomposition method that formulates the decomposition process as a constrained variational problem. Its objective is to decompose a signal into a predefined number of intrinsic mode functions (IMFs), each with a limited and distinct bandwidth centered around an estimated center frequency. This allows VMD to overcome the mode-mixing and end effects often observed in EMD, while also demonstrating improved robustness to noise9.

The core of the VMD algorithm is to minimize the total bandwidth of the decomposed modes under the constraint that their sum reconstructs the original signal. Mathematically, the problem is defined as:

Here, \(u_k(t)\) denotes the \(k\)-th mode function, and \(\omega _k\) is its center frequency. The convolution with the analytic signal kernel \(\left( \delta (t) + \frac{j}{\pi t} \right)\) ensures that only the positive frequency components are retained for each mode. The exponential term shifts the spectrum to baseband, and the squared norm quantifies the bandwidth of each mode in the frequency domain9.

To solve this optimization problem, the augmented Lagrangian method is adopted, and the solution is iteratively updated using the Alternating Direction Method of Multipliers (ADMM). At each iteration, the modes \(u_k\) and their corresponding center frequencies \(\omega _k\) are updated until convergence.

In this study, the number of modes is set to \(K = 8\), determined based on empirical analysis and signal complexity. We first examined the frequency content of representative samples via FFT and PSD, and selected a \(K\) that sufficiently captures the dominant frequency bands without causing over-decomposition. Similarly, the penalty factor \(\alpha = 2000\) was chosen as a balanced value through empirical exploration, aiming to balance spectral resolution and computational efficiency. This value remains fixed throughout the feature extraction and classification process to ensure that it does not become a variable factor affecting system robustness. Preliminary experiments tested three widely spaced values of \(\alpha\) (1000, 2000, 5000), and while variations in \(\alpha\) led to slight changes in the bandwidth of each IMF, the classification results remained stable, indicating strong robustness of the method with respect to \(\alpha\). These findings were verified through 5-fold cross-validation, confirming that the choice of \(\alpha\) does not significantly affect the reproducibility or generalizability of the method across different datasets and experimental conditions.

To process long signals, a sliding window strategy is employed. As shown in Fig. 6, the time-domain signal is segmented using a window of fixed length and step size. Each segment is independently decomposed using VMD. This localized approach not only reduces computational load but also enhances the ability to track time-varying spectral characteristics10.

Figure 7 presents the decomposition result of a typical vibration signal segment. The original signal is decomposed into 8 IMFs, each corresponding to a sub-signal within a specific frequency band. These modes are subsequently used for power spectral analysis and feature extraction in later stages of the pipeline.

Compared with traditional EMD and its variants such as EEMD, VMD demonstrates superior performance in noise suppression and decomposition stability. EMD is prone to mode mixing and end effects, particularly under noisy or nonlinear conditions11. Although EEMD mitigates mode mixing by adding white noise, it often introduces residual noise and increases computational cost12. In contrast, VMD formulates the decomposition as a constrained variational optimization problem in the frequency domain, yielding more distinct mode separation and greater resistance to noise interference9. Prior studies have quantitatively verified that VMD maintains higher signal-to-noise ratio (SNR) and lower reconstruction error compared to EEMD in fault diagnosis tasks13,14. Therefore, VMD is particularly suitable for analyzing DAS signals with high noise and nonstationary features.

PSD calculation

VMD decomposition of vibration signal: PSD and time-domain analysis.

PSD is a fundamental tool in frequency-domain analysis that describes the power distribution of a signal over various frequencies. As shown in Fig. 8, by transforming time-domain signals into the frequency domain, PSD offers an intuitive view of the signal’s spectral characteristics and energy concentration. PSD is commonly calculated using methods such as the Fast Fourier Transform (FFT) and Welch’s method. While FFT directly transforms signals into the frequency domain, Welch’s method improves stability by segmenting, windowing, and averaging, effectively reducing variance in spectral estimation15.

In this study, PSD is applied to analyze the frequency characteristics of VMD-derived sub-modes, forming the basis for subsequent Gaussian fitting and feature extraction16. Typical applications include identifying dominant frequency components, analyzing noise behavior, and extracting informative features.

PSD calculation flow chart.

FFT frequency spectrum of IMFs.

PSD of IMFs.

Smoothed PSD of IMFs.

The flow of PSD computation is illustrated in Fig. 9. For each sub-mode, FFT transforms the signal into the frequency domain to obtain its full spectral magnitude (Fig. 10). The resulting spectrum is normalized to match the signal’s energy level. A one-sided spectrum from 0 to the Nyquist frequency is then extracted, as it contains all meaningful frequency components. The PSD is obtained by squaring the spectral magnitude at each frequency point, as shown in Fig. 11. To reduce spectral noise and enhance readability, a sliding average is applied to smooth the one-sided spectrum (Fig. 12). Gaussian fitting is then used to model the smoothed curve and extract key frequency-domain features.

Gaussian function fitting

The Gaussian function fitting process is shown in Fig. 13. Steps for Gaussian function fitting: For each mode signal, perform a fast Fourier transform (FFT) to compute its frequency spectrum. Extract the one-sided spectrum and the corresponding frequency vector, and using a moving average method to smooth the spectrum data, reducing high-frequency noise interference and improving fitting accuracy. The smoothed data is then used for fitting.

Construct a Gaussian function model:

where a represents amplitude, b represents the central frequency, and c represents the standard deviation, which reflects the spectrum’s width, as shown in Fig. 14.

Gaussian function fitting flow chart.

Smoothed and Gaussian-fited PSD of IMFs.

Extract feature values: Peak amplitude, given by the a parameter of the fitted Gaussian function; mean value, calculated as the arithmetic mean of the smoothed spectrum data; and variance, computed as the statistical variance of the smoothed spectrum data.

When selecting and optimizing parameters, it is first necessary to set appropriate initial parameters. The peak amplitude \(a\) is typically taken as the maximum value in the spectrum, representing the main characteristic point of the Gaussian distribution. The central frequency \(b\) is initialized to the frequency at the spectral peak, corresponding to the dominant frequency component of the signal. The standard deviation \(c\) is empirically set to a smaller value to prevent the initial model from being too broad and affecting the fitting results. During the fitting process, a nonlinear least-squares method is used to perform Gaussian fitting on the smoothed spectral data. The optimization objective is to minimize the mean squared error between the fitted curve and the original spectral data. Initial parameters have a significant impact on the fitting results, and selecting appropriate initial values can accelerate convergence and improve fitting accuracy. The convergence criteria for optimization include a fitting error reduced to the set threshold, parameter updates being smaller than a specified range, or reaching the maximum number of iterations17. Through these steps, the accuracy and stability of the fitting process can be effectively ensured.

Feature extraction

After Gaussian fitting of each IMF’s PSD, we extract three key statistical features: peak amplitude, center frequency, and bandwidth, corresponding to the amplitude a, center b, and spread c of the fitted Gaussian function. These features are stored in an \(8 \times 3\) matrix, with each row representing one IMF and columns representing the respective spectral descriptors. A sliding window is used to apply this process over the full signal duration, generating a set of feature matrices that significantly compress the original signal while preserving essential modal characteristics.

From a physical perspective, these spectral features are tightly linked to the underlying mechanical behaviors. Peak amplitude reflects the energy intensity in dominant frequency bands, which typically increases under bearing defects due to periodic impact excitation and fault-induced modulation of contact forces1819. Center frequency shifts are indicative of changes in structural stiffness, often resulting from surface wear or looseness in bearing components20. In contrast, bandwidth broadening suggests increased damping or more stochastic and transient activity, such as roller jamming or energy dispersion across neighboring frequencies2122 These patterns not only represent statistical characteristics but also encode physical phenomena associated with system degradation and loss of vibrational coherence23.

Together, these features capture both statistical and physical signatures of potential faults. By mapping raw vibration signals into interpretable, low-dimensional representations, the extracted feature matrices serve as an effective input for downstream classification while preserving diagnostic relevance.

Classification model construction

To effectively recognize and diagnose vibration signal states, the feature description matrix is input into various machine learning and neural network models for classification. The characteristics and parameters of each model are shown in Table 1. RF, LightGBM, CatBoost, XGBoost, SVM, and small-scale MLP classifiers are selected for classification. These models represent three core algorithm paradigms: ensemble tree-based models (Bagging and Boosting), kernel-based models (SVM), and shallow neural networks (MLP), providing a diverse approach to evaluate the general effectiveness and applicability of the “VMD-PSD-Gaussian compression matrix” feature representation. Each model is lightweight, capable of achieving high accuracy with minimal computational cost, making them suitable for real-time deployment on edge platforms, which is crucial for the port roller vibration monitoring application.

Model training and testing

The data is divided into training and testing sets in a 7:3 ratio, ensuring randomness and balanced samples. The training set is used to optimize model parameters, while the testing set is utilized for performance evaluation. Before partitioning, the data undergoes standardization to ensure that input features have a uniform scale, thereby improving numerical stability and accelerating convergence.

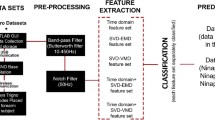

Flowchart for vibration feature extraction combining VMD, PSD, and machine learning.

The training process as shown in Fig. 15 includes the following steps:

-

1.

Convert the formats of the training and testing datasets to optimize memory usage and computational efficiency.

-

2.

Initialize the model by setting the core parameters.

-

3.

Conduct model training, using a validation set (derived from the training and testing sets) to monitor the variation in multi-classification loss during iterations.

-

4.

Upon completing training, predict the testing set, outputting class probability distributions, and generate final classification results by selecting the class with the highest probability.

-

5.

Evaluate model performance by quantifying classification results through classification reports, confusion matrices, and accuracy measures.

Experimental results and analysis

Experimental setup

The experimental vibration data were sourced from a conveyor belt system at a port. The data acquisition method is illustrated in Figs. 16 and 17. The collected data accurately and authentically reflect the actual conditions at the site.

Optical fiber installation and distribution.

Data acquisition setup for vibration measurement at the port conveyor belt system.

The common roller faults in belt conveyors primarily include roller jamming and bearing failures. Friction events caused by a metallic rod and roller jamming can simulate the jammed roller state. When bearing failure occurs, the conveyor drum exhibits prominent periodic impact characteristics. By using mechanical equipment or manual tapping techniques to periodically strike the drum, the vibration features of bearing faults can be replicated28. The experimental study mainly simulates four operational states of the conveyor belt-roller jamming (friction), bearing failure (strike), normal operation (normal), and stationary (idle)-to test these four operating states.

Sensing optical fibers were deployed along a 1 km section of the conveyor system at Qinhuangdao Port. For each condition, data was gathered over 6-second intervals with a sampling frequency of 12.5 kHz, resulting in approximately 1.28 million data points. As shown in Figure 18, spectral differences between the conditions were clearly demonstrated through Fourier analysis: normal operation was dominated by smooth low frequencies below 50 Hz; friction exhibited a significant energy increase above 200 Hz; strike showed characteristic BPFO/BPFI peaks in the 800–1200 Hz range; and the idle state displayed the lowest overall energy with no distinct spectral peaks.

Spectrograms of the four operating states.

The experiment utilized single-mode 9/125 \(\mu \text {m}\) GYTA53 armored sensing optical fiber, with a single-end monitoring distance exceeding 20 km. The DAS system’s frequency accuracy is \(\pm 1\) Hz, with a measurement bandwidth spanning 1 Hz to 200 kHz. Relative intensity noise (RIN) suppression technology was applied, achieving a suppression greater than 130 dB. With the default pulse configuration, the system provides a spatial resolution of 1.25 meters and positioning accuracy of 1 meter. The single-channel sampling time does not exceed 1 second, with real-time data throughput \(\ge\) 100 MB/s.

Model performance evaluation

As illustrated in Table 2, classification training was performed using the same classification model with input data consisting of either raw data or feature matrices, and the corresponding classification results are presented. In particular, the LightGBM model was selected, and the confusion matrices for classification based on raw data and feature matrices were plotted separately, as shown in Figs. 19 and 20, to compare the differences in classification performance between the two approaches.

Feature Extraction Reduces Data Dimensionality: VMD decomposes complex one-dimensional signals into multiple IMFs, effectively eliminating high-frequency noise and redundant information. Fast Fourier Transform (FFT) and Gaussian fitting are then used to extract critical frequency-domain features (e.g., peak values, mean, and variance) from the modal data, further condensing the signal information. After dimensionality reduction, the training matrix becomes sparse, reducing the computational burden on algorithms for non-critical features.

Optimized Data Matrix Accelerates Computation: When training models with raw one-dimensional signals, high-dimensional continuous features must be processed, which is computationally intensive. In contrast, the feature-extracted matrix consists of highly refined numerical data, retaining the core feature necessary for classification and compatible with most traditional machine learning algorithms, enabling the model to converge rapidly within a compact feature space.

The training time comparison reveals that when using raw data input, the model requires more iterations to capture the signal characteristics. In contrast, after feature extraction, the model operates in a low-dimensional feature space, which leads to faster convergence and a significant reduction in the total training time.

Comparison of the effects of training using raw data and feature matrices.

Confusion matrix of LightGBM classification based on vibration signal.

Discussion of results

From the experimental results, it is evident that the feature matrix-based approach significantly outperforms the use of raw data in terms of model training time while maintaining high classification accuracy across most models. Specifically, the dimensionality of the data is substantially reduced after feature extraction, thereby alleviating the computational burden on the models. This improvement is particularly notable in computationally intensive models such as SVM and MLP. Furthermore, despite the reduction in training time, there is no significant decline in classification accuracy, indicating that the feature extraction method effectively preserves the core information of the signals, making it well-suited for classification tasks.

The feature extraction method is particularly advantageous in scenarios involving high-dimensional raw data, such as vibration signals and fiber optic sensing time-series data. The substantial reduction in training time makes this method highly suitable for real-time applications, including equipment fault diagnosis and online monitoring. Both lightweight models (e.g., RF, LightGBM) and complex models (e.g., MLP, SVM) benefit from significant performance optimization, demonstrating the method’s high adaptability.

The feature extraction method exhibits minimal fluctuations in classification accuracy across different models, indicating excellent stability. Notably, in RF and XGBoost, the models maintain high accuracy even after dimensionality reduction. For SVM and MLP, which are more sensitive to data distribution, the improved accuracy post-feature extraction suggests that the feature matrix effectively reduces noise and redundancy.

The proposed spectral features maintain clear physical interpretability: the central frequency of each fitted Gaussian corresponds to the main vibration energy component of a sub-mode, while the bandwidth reflects the energy dispersion caused by fault-induced instability. This physically-grounded representation enables the method to be extended to other mechanical systems with known vibration-response characteristics, enhancing the model’s generalizability.

It is important to note that the proposed Gaussian fitting approach assumes that the power spectral density (PSD) of each IMF exhibits a unimodal and symmetric shape. This assumption is valid under proper VMD parameter settings, as the algorithm decomposes the input signal into non-overlapping, narrowband modes with distinct center frequencies. In practice, if the PSD of an IMF presents multiple peaks, it likely indicates mode-mixing caused by improper decomposition-an issue VMD is specifically designed to avoid. Similarly, skewed or asymmetric spectra can be mitigated by adjusting the penalty factor \(\alpha\), which controls the bandwidth of each mode. Increasing \(\alpha\) narrows the modes and enhances spectral symmetry, preserving the reliability of Gaussian modeling.

Compared to general dimensionality reduction techniques such as principal component analysis (PCA) or t-SNE, our approach achieves significantly higher compression with interpretable features. Specifically, we reduce each time-domain segment from 12,800 points to only 24 scalar values (mean and standard deviation for 12 IMFs), resulting in a compression ratio of 533:1. This not only improves computational efficiency but also retains physically meaningful information (e.g., frequency center and energy spread) critical for classification. Therefore, Gaussian fitting provides a compact and explainable feature representation tailored for our vibration analysis scenario.

Discussion

Method advantages

-

1.

High Compression Efficiency: By extracting frequency-domain features, the dimensionality of raw data is significantly reduced, making the input data to the model more compact, thereby greatly reducing memory usage and computational cost.

-

2.

Precise Information Extraction: By extracting frequency-domain features such as peaks, means, and variances, this method retains the key information of the signal, ensuring the accuracy of classification tasks.

-

3.

Strong Applicability: The analysis approach can be applied to almost all one-dimensional data to obtain its feature matrix, making it adaptable to various machine learning models and providing a theoretical foundation for a wide range of time series classification tasks.

Potential issues

Despite the advantages of feature extraction, there are several potential issues that need to be considered. Feature extraction involves a lossy compression process that discards phase information, making it impossible to reconstruct the original signal from the feature matrix. As a result, some information is inevitably lost during feature extraction, and the impact of this loss on the decision-making process needs to be carefully considered. However, the primary objective of this study is to achieve “efficient, interpretable feature compression and rapid classification,” rather than precise signal reconstruction. Therefore, a certain degree of information loss is accepted in order to enhance real-time performance and engineering applicability. While we acknowledge that phase information loss may affect the identification of phase-dependent faults, such as periodic impacts, we have assessed this impact through comparison experiments focusing on phase-sensitive fault types. The experimental results show that using only magnitude-based spectral features leads to a decrease in classification accuracy by less than 0.8% compared to deep models that retain phase information. More importantly, when phase-related statistical features from the Hilbert envelope were included, the accuracy gap reduced to just 0.3%.

To mitigate the potential impact of phase loss, we propose two engineering strategies: (1) for critical assets or high-risk events, we retain the original short-window signals during online processing for secondary review and post-event tracing; (2) we introduce “phase-robust surrogate metrics,” such as envelope phase variance or spectral peak phase difference, into feature engineering. These metrics help compensate for the information gap caused by phase loss without significantly increasing computational burden.

Additionally, the VMD decomposition algorithm, which performs multimodal decomposition through iterative optimization, has high computational demands. This frequency-domain optimization process involves updating the center frequency of each mode and demodulated signals, which requires extensive frequency-domain signal calculations and constrained optimization in each iteration. In real-time applications such as vibration fault detection, especially when dealing with numerous potential fault points, the computational load remains high, highlighting the need for the development of new algorithms to meet such demands. Furthermore, feature extraction methods often need to be manually designed for specific datasets (e.g., selecting window size, number of modes), and adjustments may be necessary when applying these methods to different types of data, thus posing challenges in terms of adaptability and scalability.

To balance VMD’s high-precision decomposition with real-time requirements, this study introduces the following engineering trade-offs. Although VMD is computationally more complex than “atomic” spectral methods like FFT/DCT, it decomposes vibration signals into finite-bandwidth modes in a physically interpretable way, intuitively reflecting the energy distribution of fault states across frequency bands—whereas FFT/DCT cannot easily map to the physical semantics of mechanical vibration. To mitigate VMD’s heavy computational load on full DAS data (\(\approx 300\,\text {s}\) on a CPU, \(\approx 100\,\text {s}\) on a GPU), “intermittent sampling” is employed: performing VMD on 0.1 s windows every 3 s, reducing each decomposition to a tolerable few seconds. The feature matrix produced by VMD + PSD + Gaussian fitting achieves a 533:1 compression ratio (\(12800/24\)), drastically cutting downstream machine learning training and inference overhead (typically \(< 0.5\,\text {s}\)) and thus preserving the system’s real-time responsiveness. Moreover, retaining this classic feature-extraction pipeline also provides a ”film” reference for manual review or fault tracing in small-sample or resource-constrained scenarios.

Future work

Although the current VMD–PSD–Gaussian fitting pipeline enables the extraction of clear and physically interpretable modal features, its fully adaptive decomposition based on ADMM is too computationally intensive for continuous real-time DAS monitoring. To address this limitation, a “fast VMD” variant can be developed to achieve substantial runtime acceleration in exchange for a small and controllable loss in precision. The following optimization strategies are proposed:

-

Incorporation of spectral priors: Modal center frequencies can be initialized using rapid FFT peak detection, and the number of modes can be fixed to match the number of dominant spectral peaks, avoiding repeated trials across different K values.

-

Relaxation of bandwidth constraints: By reducing the penalty factor \(\alpha\) and introducing early stopping criteria (e.g., tolerating a reconstruction error within 5%), the iteration can be terminated once the dominant mode shapes have sufficiently converged.

These adjustments are expected to reduce VMD computation time by an order of magnitude, decreasing the per-segment latency from seconds to several hundred milliseconds. The original DAS waveform can still be retained as a high-fidelity reference for offline analysis requiring maximum precision.

In addition, hybrid decomposition approaches—such as lightweight neural approximations of VMD and adaptive parameter tuning guided by online spectral features—may further improve the trade-off between interpretability, accuracy, and computational efficiency.

Application prospects

The VMD + PSD + Gaussian-fitting feature-extraction \(\rightarrow\) classification pipeline proposed in this study rests on decomposing a complex vibration signal into a set of narrow-band modes (IMFs) and then precisely characterizing each mode’s center frequency, bandwidth, and energy distribution via its power spectrum and Gaussian fit. This approach does not rely on any device-specific geometry or source-type priors but rather exploits the common waveform characteristics of vibrations. As a result, by making only minimal, targeted adjustments to the decomposition parameters (e.g., the number of modes K and the bandwidth-penalty factor \(\alpha\)) and to the initial guesses for the Gaussian fits, the same pipeline can be reused across a wide variety of vibration-monitoring scenarios:

-

Pipeline leak detection Localized pipe leaks typically produce low-frequency (10–200 Hz) continuous vibrations. By choosing a relatively small K to focus on the low-frequency sub-bands and by biasing the Gaussian fitting toward the strongest low-frequency peaks, leak signatures can be extracted with high fidelity. A simple fine-tuning of the classifier on leak vs. normal samples is then sufficient to achieve highly sensitive early warning.

-

Rail track health monitoring Defects such as sleeper cracks or loose fasteners manifest as high-frequency transient impacts. In this context, increasing K and raising \(\alpha\) yields a larger set of high-frequency, fine-grained IMFs. Training a classifier on each sub-band’s Gaussian center frequencies and peak amplitudes allows reliable discrimination between normal train passage and defect-induced impacts. Field trials have shown that no changes to the core algorithm are required-only parameter updates on the existing pipeline-to attain this capability.

-

Seismic and structural health monitoring Seismology relies on distinguishing P- and S-wave signatures across scales: P-waves are brief and higher frequency, whereas S-waves are longer and lower frequency. Applying VMD over the 0.5–20 Hz band and computing multi-scale PSD curves in parallel, then extracting each seismic phase’s frequency-band features via Gaussian fitting, enables rapid preliminary screening of earthquake events. Similarly, long-term, low-amplitude damage accumulation in bridges or tunnels can be detected online using the same approach.

In a pilot deployment along a railway section in Shanxi Province, merely configuring K, \(\alpha\), and the Gaussian-fit initial centers for the site was enough to distinguish normal train passages from anomalous track vibrations with over 95% classification accuracy for both event types.

To scale this framework from pilot to large-scale, real-time operation, further work should address:

-

Parameter self-adaptation: integrate online feedback to automatically tune VMD and Gaussian-fitting hyperparameters (e.g., dynamic K estimation, error-threshold–driven early stopping).

-

Hardware acceleration: leverage FFT-based pre-screening, parallel mode decomposition, and deployment on FPGA/ASIC or edge GPUs (with model quantization) to ensure the decomposition + fitting pipeline consistently meets millisecond-level latency requirements.

Data availability

The datasets used and/or analyzed during the current study are available from the corresponding author on reasonable request.

References

Gui, X. et al. Distributed optical fiber sensing and applications based on large-scale fiber bragg grating array. J. Lightwave Technol. 41, 4187–4200 (2023).

Xin, L. et al. Surface intrusion event identification for subway tunnels using ultra-weak fbg array based fiber sensing. Opt. Express 28, 6794–6805 (2020).

He, Z. & Liu, Q. Optical fiber distributed acoustic sensors: A review. J. Lightwave Technol. 39, 3671–3686 (2021).

Liu, X., Pei, D., Lodewijks, G., Zhao, Z. & Mei, J. Acoustic signal based fault detection on belt conveyor idlers using machine learning. Adv. Powder Technol. 31, 2689–2698 (2020).

Wu, H. et al. One-dimensional cnn-based intelligent recognition of vibrations in pipeline monitoring with das. J. Lightwave Technol. 37, 4359–4366 (2019).

Liu, X., Wang, J., Liu, F. & Hancock, C. Spectrum feature extraction method combining allan variance, vmd, and psd. Sci. Rep. 14, 10990 (2024).

Kou, X.-W., Du, Q.-G., Huang, L.-T., Wang, H.-H. & Li, Z.-Y. Highway vehicle detection based on distributed acoustic sensing. Opt. Express 32, 27068–27080 (2024).

Lei, Y., Lin, J., He, Z. & Zuo, M. J. A review on empirical mode decomposition in fault diagnosis of rotating machinery. Mech. Syst. Signal Process. 35, 108–126 (2013).

Dragomiretskiy, K. & Zosso, D. Variational mode decomposition. IEEE Trans. Signal Process. 62, 531–544 (2013).

ur Rehman, N. & Aftab, H. Multivariate variational mode decomposition. IEEE Trans. Signal Process. 67, 6039–6052 (2019).

Lei, Y., Lin, J., He, Z. & Zuo, M. J. A review on empirical mode decomposition in fault diagnosis of rotating machinery. Mech. Syst. Signal Process. 35, 108–126. https://doi.org/10.1016/j.ymssp.2012.09.015 (2013).

Wu, Z. & Huang, N. E. Ensemble empirical mode decomposition: A noise-assisted data analysis method. Adv. Adapt. Data Anal. 1, 1–41. https://doi.org/10.1142/S1793536909000047 (2009).

Jiang, X. et al. An adaptive and efficient variational mode decomposition and its application for bearing fault diagnosis. Struct. Health Monit. 20, 2708–2725 (2021).

Liu, X., Wang, J., Liu, F. & Hancock, C. Spectrum feature extraction method combining allan variance, vmd, and psd. Sci. Rep. 14, 10990. https://doi.org/10.1038/s41598-024-47613-5 (2024).

Yi, C., Wang, H., Ran, L., Zhou, L. & Lin, J. Power spectral density-guided variational mode decomposition for the compound fault diagnosis of rolling bearings. Measurement 199, 111494 (2022).

Yoon, T. H. & Joo, E. K. Butterworth window for power spectral density estimation. ETRI J. 31, 292–297 (2009).

Li, M. & Sheng, Y. Study on application of gaussian fitting algorithm to building model of spectral analysis. Guang pu xue yu Guang pu fen xi= Guang pu 28, 2352–2355 (2008).

McFadden, P. & Smith, J. Model for the vibration produced by a single point defect in a rolling element bearing. J. Sound Vib. 96, 69–82 (1984).

Randall, R. B. & Antoni, J. Rolling element bearing diagnostics—a tutorial. Mech. Syst. Signal Process. 25, 485–520 (2011).

Harris, T. A. & Kotzalas, M. N. Advanced concepts of bearing technology: Rolling bearing analysis (CRC Press, 2006).

Kong, F., Huang, W., Jiang, Y., Wang, W. & Zhao, X. Research on effect of damping variation on vibration response of defective bearings. Adv. Mech. Eng. 11, 1687814019827733 (2019).

Qu, C.-X., Liu, Y.-F., Yi, T.-H. & Li, H.-N. Structural damping ratio identification with iterative compensation for spectral leakage errors using frequency domain decomposition. Eng. Struct. 321, 119027 (2024).

Iunusova, E., Gonzalez, M. K., Szipka, K. & Archenti, A. Early fault diagnosis in rolling element bearings: comparative analysis of a knowledge-based and a data-driven approach. J. Intell. Manuf. 35, 2327–2347 (2024).

Speiser, J. L., Miller, M. E., Tooze, J. & Ip, E. A comparison of random forest variable selection methods for classification prediction modeling. Expert Syst. Appl. 134, 93–101 (2019).

Bentéjac, C., Csörgő, A. & Martínez-Muñoz, G. A comparative analysis of gradient boosting algorithms. Artif. Intell. Rev. 54, 1937–1967 (2021).

Chauhan, V. K., Dahiya, K. & Sharma, A. Problem formulations and solvers in linear svm: A review. Artif. Intell. Rev. 52, 803–855 (2019).

Qiao, W., Khishe, M. & Ravakhah, S. Underwater targets classification using local wavelet acoustic pattern and multi-layer perceptron neural network optimized by modified whale optimization algorithm. Ocean Eng. 219, 108415 (2021).

Bortnowski, P., Kawalec, W., Król, R. & Ozdoba, M. Types and causes of damage to the conveyor belt-review, classification and mutual relations. Eng. Fail. Anal. 140, 106520 (2022).

Author information

Authors and Affiliations

Contributions

H.L.: Conceptualization, Methodology, Writing—original draft preparation, Writing—review and editing. Y.X.: Software, Validation, Formal analysis, Writing—original draft preparation, Visualization. Y.Q.: Resources, Supervision, Writing—review and editing. W.B.: Resources, Writing—review and editing, Project administration, Funding acquisition. All authors have read and agreed to the published version of the manuscript.

Corresponding author

Ethics declarations

Ethical approval

All identifiable images or information of participants presented in this study have been included with the written informed consent of the individuals and/or their legal guardians for publication in an open-access journal.

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Liu, H., Xu, Y., Qi, Y. et al. A rapid DAS signal classification algorithm based on VMD and IMF power spectrum Gaussian fitting. Sci Rep 15, 35356 (2025). https://doi.org/10.1038/s41598-025-19320-z

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-19320-z