Abstract

Channel estimation constitutes a pivotal concern within the realm of practical massive multiple-input multiple-output (MIMO) systems. Recently, numerous studies have been conducted to harness the power of deep neural networks for better channel estimation and feedback. However, they often overlook a crucial factor: the intrinsic correlation features present in downlink channel state information (CSI). As a consequence, in challenging environments, the performance of channel estimation and feedback frequently falls short of expectations. To achieve joint channel estimation and feedback, this paper proposes an encoder-decoder based network that unveils the intrinsic frequency-domain correlation within the CSI matrix. The entire encoder-decoder network is utilized for channel compression. To efficiently capture and reconstruct correlation features, we propose a self-mask-attention coding mechanism, augmented by an active masking strategy aimed at enhancing operational efficiency. Besides, this paper employs a streamlined multilayer perceptron denoising module to achieve more precise estimations in the decoder part for channel estimation. Extensive experiments demonstrate that our method not only outperforms state-of-the-art channel estimation and feedback techniques in joint tasks but also achieves beneficial performance in individual tasks.

Introduction

Massive multiple-input multiple-output (MIMO) technology is a crucial component of 5G-Advanced and 6G networks. It increases the number of transmission antennas and improves cell transmission quality and cell system capacity1,2. The utilization of large arrays of analog antennas in MIMO systems introduces transmission overhead and noise interference, leading to notable performance degradation, particularly in frequency division duplex (FDD) systems. There is no channel reciprocity3 in FDD systems, and as a result, the base station requires the user to feed back the downlink (DL) channel state information (CSI) in order to build the communication link and obtain the MIMO gain. This process poses several challenges. First, the large overhead imposed by the size of the DL CSI matrix conflicts with the limited available bandwidth. Therefore, it is necessary to compress the channel information. Second, in practical operation, pilot signals, which are known signals used to estimate the effect of the channel on the transmission, occupy only a portion of the complete signal. It is therefore necessary to estimate the complete channel from these pilot signals.

Previous studies have explored various kinds of methods for optimizing channel estimation and/or feedback to improve the accuracy of the downlink channel. For the channel estimation, the least squares (LS)4 and minimum mean square error (MMSE)5 are two commonly used classical methods, but LS estimations suffer from significant noise, and applying the MMSE method in practice poses challenges due to the lack of access to accurate channel information beforehand. Recently, learning-based methods including CNN-based6,7 and attention-based8 networks can effectively increase the estimation accuracy. For channel compression, the 3GPP release-16 (Rel-16) standards specify the utilization of Type I and improved Type II (eType II) codebooks for the CSI feedback system9. The encoder-decoder based structure10,11,12,13,14 can further improve the CSI feedback performance. For joint channel estimation and feedback, deep learning-based methods15,16 achieve significant improvement. Attention-based methods are the most well-known deep learning-based methods, which improve the performance of channel estimation and feedback by strengthening the connection between different channels17,18,19,20. However, previous methods have been limited to performing either a single task or joint tasks with two independent networks or methods.

To explicitly incorporate channel estimation and feedback into one unified structure, we propose an attention-based method named FlowMat. It is based on the encoder-decoder architecture and makes full use of the characteristics of CSI and each part of the network. Based on the observation that inherent frequency-domain correlations exist among channel matrices, frequency-domain data can be mutually substituted. We leverage this characteristic of the channel matrix and propose self-attention coding to facilitate the acquisition of relevant features. Channel compression can then be achieved by extracting highly correlated features. We propose an active mask technique to acquire these highly correlated features. The learnable mask tokens make the encoder learn a better mapping between deep features and channel information. Channel completion and recovery are then achieved by decoding the mask tokens. For channel estimation, we use the decoder part. To reduce environmental noise and complete the channel matrix H, we introduce a lightweight multilayer perceptron denoising module. The proposed FlowMat framework enables estimation and feedback with high performance and low computational overhead, and it outperforms state-of-the-art approaches. Our main contributions are summarized as follows:

- We reveal the inherent frequency-domain correlation that exists among channel matrices. To leverage this correlation effectively, we adopt the frequency-domain vector as the fundamental base unit of channel features.

- We propose an innovative token transformer named FlowMat, which can achieve simultaneous channel estimation and feedback in the downlink scenario. FlowMat is designed to harness the inherent correlation of the frequency domain to achieve both completion and compression tasks efficiently.

- Extensive experiments on joint channel estimation and feedback tasks show that our proposed method outperforms state-of-the-art approaches. Moreover, applying the proposed method to individual channel estimation or feedback achieves performance gains as well.

Related work

Channel estimation

Recently, deep learning has emerged as the principal tool in numerous studies, offering a promising avenue for channel estimation21. These studies can be categorized into two types: non-attention-based and attention-based methods. Among non-attention-based methods, ChannelNet6 is the first deep-learning-based work to solve the channel estimation problem. It utilizes a super-resolution network, SRCNN22, and a denoising network, DnCNN, for channel completion and denoising, respectively. Chun et al.23 propose a two-phase model that estimates channels in the time domain. The first phase employs a pilot-aided model consisting of a two-layer MLP and a CNN, while the second phase utilizes a data-aided model. Jin et al.7 exploit an image-denoising network called CBDNet for channel estimation. The network is composed of two main sub-networks: a noise level estimation sub-network and a non-blind denoising sub-network. He et al.24 utilize a learned denoising-based approximate message-passing network for beamspace millimeter-wave (mmWave) MIMO systems. Among attention-based methods, AttentionNet8 utilizes transformer blocks for channel attention. Gao et al.20 and Mashhadi et al.25 propose the CNN channel attention module and CNN non-local blocks for channel estimation, respectively.

Channel feedback

In addition to channel estimation, channel feedback is also a crucial technology in the physical layer of MIMO systems. Wen et al.10 propose CsiNet, which is based on a CNN autoencoder structure. In CsiNet, the encoder at the user equipment (UE) is designed for CSI compression, while the decoder at the base station (BS) is responsible for CSI recovery. Subsequently, inspired by CsiNet, a series of studies11,12,13,14 design various kinds of CNNs to further improve the CSI feedback performance. Mashhadi et al.26 propose an image compression approach, which incorporates rate-distortion cost and arithmetic entropy coding to achieve the minimum bit overhead. Long short-term memory (LSTM) networks27,28 are introduced into the encoder and decoder to make full use of the correlation extracted from subcarriers. The majority of CNN- and LSTM-based methods concentrate on full channel state information (F-CSI) feedback without considering channel characteristics such as correlation, which can lead to unrepresentative compressed features and low recovery accuracy. As stated in 3GPP, the current 5G system primarily uses CSI feedback based on the compression and feedback of the eigenvectors of the channel matrix. Liu et al.29 propose EVCsiNet, which is a CNN-based structure that utilizes eigenvector features. Recently, attention-based techniques have gained significant popularity in the domains of computer vision (CV) and natural language processing (NLP). Xiao et al.18 propose EVCsiNet-T for channel feedback, which compresses the channel eigenvector and uses the attention mechanism for channel recovery.

Joint channel estimation and feedback

All of the deep-learning-based CSI feedback schemes mentioned above assume that the downlink channels are accurately known to the user, which is not feasible in practical communication systems. Obtaining precise channel information is crucial for base stations. Therefore, recent investigations have explored the application of neural networks for joint channel estimation and feedback optimization30. Ma et al.15 propose a deep neural network (DNN) architecture that jointly designs the pilot signals and channel estimation module end-to-end to avoid performance loss caused by separate designs. Chen Tong et al.16 propose JCEF, where two networks are constructed to perform explicit and implicit channel estimation and feedback, respectively.

Preliminaries

Massive MIMO system

For a typical massive MIMO system in FDD mode, we consider that the system is equipped with \(N_t(\ge 1)\) transmit antennas at the BS and \(N_r(\ge 1)\) receive antennas at the UE side. Orthogonal frequency division multiplexing (OFDM) with \(N_c(\ge 1)\) subcarriers is adopted, consisting of 4 resource blocks (RBs). In the downlink phase, the i-th received signal component \({y_i}\in {\mathbb {C}^{N_c\times {1}}}\) can be denoted as,

where \({s_i}\in {\mathbb {C}^{N_c\times {1}}}\) is the vector of the signal transmitted to the i-th receive antenna. \(H_i\in {\mathbb {C}^{N_c\times {N_t}}}\) represents the channel frequency response matrix, which is estimated by the pilot-based channel estimation. \(P_i\in {\mathbb {C}^{N_t\times {N_c}}}\) is the corresponding precoding matrix. \(n_i\in {\mathbb {C}^{N_c\times {1}}}\) represents the additive noise. The channel matrix \(H_i\) in the frequency-spatial domain can then be obtained by stacking the per-subcarrier vectors \(h_{i,k}\) along the frequency dimension: \(H_i=[h_{i,1}, h_{i,2},...,h_{i,N_c}]^{\textrm{T}}, h_{i,k}\in {\mathbb {C}^{N_t\times {1}}}\). However, this matrix size is unacceptably large for direct feedback in a massive MIMO system.
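One form of the received-signal relation that is consistent with the dimensions stated above is sketched below. It is an assumption inferred from those dimensions, and the exact expression intended here may differ.

```latex
y_i = H_i P_i s_i + n_i, \qquad
H_i P_i \in \mathbb{C}^{N_c \times N_c}, \quad
y_i,\; s_i,\; n_i \in \mathbb{C}^{N_c \times 1}
```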

The potential of eigenvector decomposition (e.g., EZF31) can be exploited to achieve lightweight precoding and establish efficient communication links. After channel estimation at the UE, the corresponding eigenvector matrix \(w\in {\mathbb {C}^{N_t\times {N_c}}}\) is composed of \(w_{k}\in {\mathbb {C}^{N_t\times {1}}}\) with normalization \(\Vert {w_{k}}\Vert ^2=1\), where \(w_{k}\) is the eigenvector for the k-th subcarrier of the CSI. w can be utilized as the downlink precoding vector and is calculated using eigenvector decomposition. The eigenvector-decomposition-based precoding model can be calculated as:

where \(\lambda _i\) represents the maximum eigenvalue of \(H_k^HH_k, H_k\in {\mathbb {C}^{N_r\times {N_t}}}\), and \(()^{\textrm{H}}\) is the Hermitian (conjugate) transpose. Therefore, to build an accurate communication link and obtain MIMO gain, pilot-based channel estimation and channel feedback are key challenges in FDD systems.
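As a minimal sketch of the eigenvector computation described above, the following NumPy function takes the eigenvector associated with the largest eigenvalue of \(H_k^HH_k\) for each subcarrier. The function name `dominant_eigenvectors` and the array layout `(N_c, N_r, N_t)` are illustrative assumptions, not part of the proposed method.

```python
import numpy as np

def dominant_eigenvectors(H):
    """Per-subcarrier dominant eigenvector of H_k^H H_k.

    H: complex array of shape (N_c, N_r, N_t), one N_r x N_t channel
       matrix per subcarrier (this layout is an assumption).
    Returns w of shape (N_t, N_c) whose columns have unit norm.
    """
    n_c, _, n_t = H.shape
    w = np.empty((n_t, n_c), dtype=complex)
    for k in range(n_c):
        gram = H[k].conj().T @ H[k]        # H_k^H H_k, Hermitian, (N_t, N_t)
        _, eigvecs = np.linalg.eigh(gram)  # eigenvalues returned in ascending order
        w[:, k] = eigvecs[:, -1]           # eigenvector of the largest eigenvalue
    return w
```

Because `numpy.linalg.eigh` returns orthonormal eigenvectors, the normalization \(\Vert {w_{k}}\Vert ^2=1\) holds without an extra step. Each eigenvector is defined only up to a complex phase.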

Channel estimation

Before building the complete communication link, the BS needs to transmit pilots to the receiver, because the downlink channels are unknown to the transmitter. Pilot-symbol-based channel estimation often performs quite well at tracking sudden changes of the channel, especially for fading channels, using \(N_p (1\le {N_p}\le {N_c})\) equispaced pilot subcarriers. However, the pilot density and the spatial link noise directly affect the accuracy of channel recovery. Conventional channel estimation methods such as the least-squares (LS) technique are widely used. LS minimizes the squared error on the received pilot signals \(y_{i,p}\in {\mathbb {C}^{N_p\times {N_t}}}\) to give an estimate \(\hat{H}_{i,p}\). The frequency-domain LS estimate is given by

where \(\hat{H}_{i,p}, s_{i,p}\in {\mathbb {C}^{N_p\times {N_t}}}\) denote the estimated channel at the pilot subcarriers and the transmitted pilot signals, respectively, and \(\odot\) denotes the Hadamard product. Interpolation is then conducted to obtain the channel responses at the other subcarriers and thus the whole \(H_i\). The LS technique is known for its ease of implementation and extremely low complexity.
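The two steps above, LS estimation at the pilot subcarriers followed by interpolation, can be sketched as follows. The sketch assumes an element-wise pilot model (received pilot equals channel times known pilot symbol, plus noise), so the LS estimate reduces to an element-wise division, and it assumes linear interpolation across subcarriers. The function names are hypothetical.

```python
import numpy as np

def ls_estimate(y_p, s_p):
    """Element-wise LS estimate at the pilot subcarriers: H_p = y_p / s_p."""
    return y_p / s_p

def interpolate_channel(H_p, pilot_idx, n_c):
    """Linearly interpolate pilot estimates to all n_c subcarriers.

    H_p: complex (N_p, N_t) estimates at the pilot positions.
    pilot_idx: increasing integer indices of the pilot subcarriers.
    """
    all_idx = np.arange(n_c)
    H_full = np.empty((n_c, H_p.shape[1]), dtype=complex)
    for t in range(H_p.shape[1]):
        # np.interp is real-valued, so real and imaginary parts are handled separately.
        re = np.interp(all_idx, pilot_idx, H_p[:, t].real)
        im = np.interp(all_idx, pilot_idx, H_p[:, t].imag)
        H_full[:, t] = re + 1j * im
    return H_full
```

Note that `numpy.interp` holds the edge values constant outside the pilot range, so subcarriers beyond the first or last pilot are not extrapolated.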

Channel feedback

In FDD systems, the UE sends the estimated downlink CSI back to the BS after receiving the pilot symbols and estimating the downlink channel matrix. The BS can then create the relevant precoding vectors to reduce user interference and enhance communication quality. In massive MIMO systems, the CSI matrix \(H\in {\mathbb {C}^{N_c\times {N_t}\times {N_r}}}\), which has a total of \(2\times {N_c}\times {N_t}\times {N_r}\) real-valued components, results in significant feedback overhead. The compressibility of the CSI matrix has been extensively studied in the literature, since it is desirable in actual systems to minimize the feedback parameters. When multi-stream downlink transmission is adopted, the eigenvectors computed at the UE side for the subcarriers can be used directly by the BS as the downlink precoding vectors. Feeding back the eigenvectors instead of the channel matrix also removes the \(N_r\) receive-antenna dimension, so the size of the feedback is \(2\times {N_c}\times {N_t}\). To further decrease the feedback overhead of DL CSI and enable accurate CSI recovery at the BS, we apply the typical DNN-based method for CSI compression and reconstruction.

Inherent frequency-domain correlation in wireless channel

Empirical support

We conducted a comprehensive analysis of wireless channel and eigenvector characteristics, which motivates us to pursue carefully crafted network designs.

Fig. 1: Illustration of correlation features in the spatial-frequency domain.

The base station builds communication links by recovering the DL CSI matrix. We mainly analyze the measured noise-free DL CSI matrix \(H\in {\mathbb {C}^{N_c\times {N_t}\times {N_r}}}\) and the eigenvector w in the spatial-frequency domain. We perform frequency-domain correlation calculations on \(H_i, i=1\) and w separately. Figures 1a and 1b represent the H matrix and the correlation matrix of its frequency domain features, respectively. Figures 1c and 1d depict the w matrix and the correlation matrix of its frequency domain features. From Fig. 1d, we can observe that the responses near the diagonal of the matrix are relatively larger, indicating that there is significant local correlation in the frequency domain features. Additionally, in Fig. 1d, there is one yellow line on each side of the diagonal. This suggests that there is also long-distance correlation between frequency domain features, although it is not as pronounced as the local correlation. Therefore, to leverage the long-distance correlation properties of frequency domain features, we take each frequency-domain unit as the base unit and its spatial data as the vector content. This feature token vector is analogous to a patch in CV and a word token in NLP. We therefore utilize the frequency token unit and its underlying self-correlation to build the joint model for channel compression and channel estimation.
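A correlation matrix of the kind discussed above can be sketched as follows. The exact correlation measure is not specified in the text, so this example assumes the magnitude of the normalized inner product between the spatial vectors of every pair of subcarriers. The function name is hypothetical.

```python
import numpy as np

def frequency_correlation(X):
    """Pairwise correlation between subcarrier vectors.

    X: complex (N_c, N_t), one spatial vector per subcarrier.
    Returns a real (N_c, N_c) matrix with entries
    |x_r^H x_h| / (||x_r|| ||x_h||), each in [0, 1].
    """
    norms = np.linalg.norm(X, axis=1, keepdims=True)
    Xn = X / np.maximum(norms, 1e-12)   # guard against all-zero rows
    return np.abs(Xn @ Xn.conj().T)
```

Large entries close to the diagonal correspond to local correlation between neighbouring subcarriers, and large off-diagonal entries correspond to long-distance correlation.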

FlowMat for joint channel estimation and feedback

Problem definition

We propose FlowMat, an active masking and self-mask-attention coding method that achieves compression and completion, and we verify it on channel estimation and feedback reconstruction. We use a sequence \(X=\{x_1,...,x_N\}, x_n\in {\mathbb {C}^{1\times N_t}}\) to uniformly express the channel matrix H and the eigenvector matrix V. We organize the data as two-dimensional information over the frequency domain and the spatial domain. The frequency domain vector \(x_n\) serves as the basic channel unit, which is analogous to a token in the fields of CV and NLP. Traditional DNN compression techniques primarily involve flattening all the encoder data, downsampling it through a DNN, and then reconstructing the compressed data using upsampling methods. However, such an operation does not make full use of the characteristics of the wireless channel. To make full use of the correlation found across the frequency domain, we actively mask a part of the tokens to achieve compression, and then insert mask tokens alongside the retained subset of tokens to form a complete matrix, which is decoded to recover the whole original channel matrix.

System design

Fig. 2: The compressing and completing processes of FlowMat. In a hierarchical data-encoding process, the latent variables (e.g., Z) represent high-level information. After active masking, \(Z_{Part}\) is the compact representation, from which the original data are recovered by embedding mask tokens and decoding.

Our idea of the masked token is inspired by Masked AutoEncoders (MAE) from He et al.32, where a self-supervised learning method in CV is proposed. They divide an image into non-overlapping patches and mask a random subset of them, and the encoder processes only the unmasked, visible patches. The decoder reconstructs the original image from the deep representations of the encoder and the mask tokens. Each mask token is a shared learned vector indicating the existence of a missing patch to be restored. The loss function is the mean squared error (MSE) between the reconstructed images and the original images. During pre-training, a large proportion of the input image (about 75%) is masked, allowing the model to generalize better. The pre-trained encoder can be applied to downstream tasks such as classification and segmentation and achieves better performance than many other pre-training pipelines, such as MoCo v3 (ref. 33) and BEiT (ref. 34).

Network structure design

The encoder-decoder framework for compression and completion is formulated as:

where X, Z, \(Z_{Part}\), and \(Z_{Mask}\) are input data, compressed data, learnable useful data, and mask data, respectively. \(E_\theta\) and \(Q_{\psi }\) denote encoder and query. To reconstruct and restore the latent variable \(Z_{Part}\), we first substitute the upsampling technique with the incorporation of mask token. Subsequently, this modified latent variable is transmitted to the decoder. This process is mathematically expressed as:

The entire compressing and completing processes are illustrated in Fig. 2. The objective of our study is to identify a set of encoding, query, and decoding functions, whose parameters are denoted as \(\theta\), \(\phi\) and \(\psi\), respectively. This parameter set can be obtained by minimizing the discrepancy between the original matrix X and its reconstructed counterpart \(X'\):

Query, encoder and decoder in FlowMat

For effective compression and completion through subset acquisition and mask token insertion, meticulous design of querying strategies, the encoder, and decoder is essential. This section introduces these three components in detail.

Query: According to the number of frequency-domain units targeted for compression, we generate a random real-valued query vector, \(query\in {\mathbb {R}^N}\). The query is a learnable vector that is updated during training. Each time the target subset \(Z_{Part}\) is selected, the top-K positions of the query are chosen according to the compression rate to achieve compression.
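The selection step described above can be sketched in NumPy as follows. This shows only the forward top-K selection for a fixed query. How gradients reach the query during training is not described here and is left out, and the function name `select_top_k` is a hypothetical choice.

```python
import numpy as np

def select_top_k(Z, query, k):
    """Keep the k tokens whose query scores are largest.

    Z: (N, d) latent tokens, one per frequency-domain unit.
    query: (N,) real-valued scores (learnable in the actual model).
    Returns the retained tokens Z_part (k, d) and their indices,
    sorted so that the original subcarrier order is preserved.
    """
    keep_idx = np.sort(np.argsort(query)[-k:])
    return Z[keep_idx], keep_idx
```

For example, with `N = 6` tokens and `k = 3`, half of the frequency-domain units are retained and only those need to be fed back.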

Encoder: We achieve compression by selecting a subset of X. To obtain high-quality reconstruction, Z should be compact and representative enough. We leverage the self-attention mechanism within the transformer structure to obtain fully correlated latent variables. Moreover, we introduce a specialized transmission path mask \(M'\) at the last block to facilitate the coding of global information to the target set. The calculation of the encoder is:

where Pos is the position embedding. \(W_{*}, (*={1,2,Q,K,V})\) are learnable parameters. In Eq. 8, the mask \(M'\) is:

where \(r, h \le {N_c}\) represents the number of the subcarriers. Fig. 4 illustrates this single mask-attention stage.

Decoder: We initialize the completion matrix by inserting mask tokens. The decoder also uses the self-attention mechanism to pass effective information in \(Z_{Part}\) to the mask tokens. Data reconstruction is realized through multi-layer message passing. We further introduce a transfer path into the first layer specifically for embedding the inverse mask \(M'\). This transfer path enhances the transfer of relevant information to the mask tokens while preventing the contamination of valid information by the initial invalid data. The process of the decoder is formulated as:

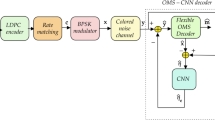

Fig. 3: The structure of FlowMat. Our approach applies the typical DNN-based encoder-decoder framework and embeds a novel learnable query vector (\(Q_{\varphi }\)) for active masking. First, the latent variables are obtained using multiple common attention blocks (ATT) and a mask-attention block (MAT). Then, we apply the active mask to the latent variables and obtain the compact representation. Finally, the decoder, which embeds the mask tokens and concatenates them with the compact representation, recovers the completed matrix.

Fig. 4: The structure of a single mask-attention stage in MAT. ‘\({{\varvec{M}}}'\)’ represents the proposed mask attention matrix.
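The decoder's initialization step, in which mask tokens fill the positions that were not retained, can be sketched as follows. A single shared mask-token vector is assumed, in the spirit of MAE. The attention layers and the mask \(M'\) are not modelled, and the function name is hypothetical.

```python
import numpy as np

def insert_mask_tokens(Z_part, keep_idx, n_tokens, mask_token):
    """Build the decoder input from the retained tokens.

    Z_part: (k, d) retained tokens.
    keep_idx: (k,) positions of the retained tokens in the full sequence.
    mask_token: (d,) shared vector placed at every other position
                (learnable in the actual model).
    Returns an (n_tokens, d) array.
    """
    full = np.tile(mask_token, (n_tokens, 1))  # start with mask tokens everywhere
    full[keep_idx] = Z_part                    # restore retained tokens in place
    return full
```

The decoder then operates on this full-length sequence, so that information from the retained tokens can be passed to the mask-token positions.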

Joint channel estimation and feedback

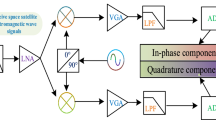

Channel estimation

Channel estimation aims to recover the channel matrix on all \(N_c\) subcarriers from the information of the \(N_p\) pilots. However, the pilots obtained by the receiver contain significant environmental noise, which further hinders accurate estimation. To recover DL CSI with high quality, we need to build a denoising network to remove noise from the pilots. Then, the clean pilots can be used to recover the complete DL CSI with the help of a completion network. As shown in Fig. 5, our channel estimation network includes three main components: denoising block, mask token embedding block, and recovery decoder. Note that we do not artificially add noise during training or testing, since the dataset already provides pilot signals with realistic noise. These are directly used as inputs in both the training and evaluation phases.

For the complex values of the CSI matrix, we first concatenate the real and imaginary parts as inputs (\(\mathbb {R}^{N_c\times {N_t}\times {2}}\)). The channel estimation in our system is performed at the user equipment (UE) side, taking into account the constraint of computational resources. Our design focuses on achieving low complexity, and to achieve denoising, we utilize the lightweight MLP-Mixer. The network employed in our system reduces the dimension of the pilot information from the feature dimension to the original dimension. Additionally, we use a pixel-wise loss to ensure that the pilot information aligns with the noise-free full information provided in the ground truth. After obtaining the clean pilots, we embed the mask tokens and apply the recovery decoder in the same way as the FlowMat decoder (\(D_\phi\)). Finally, the NMSE between the output and the noiseless full information is calculated. The decoder used for channel estimation shares the same architecture as the decoder used for channel feedback. This architectural unification allows us to reuse learned parameters and ensures consistent feature transformation across both tasks, while also reducing model complexity.
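The real/imaginary concatenation mentioned at the start of the paragraph above can be sketched as a pair of helper functions. The names are hypothetical, and placing the real and imaginary parts on the last axis follows the \(N_c\times {N_t}\times {2}\) shape given above.

```python
import numpy as np

def complex_to_real_channels(H):
    """(N_c, N_t) complex -> (N_c, N_t, 2) real; last axis is [real, imag]."""
    return np.stack([H.real, H.imag], axis=-1)

def real_channels_to_complex(X):
    """Inverse mapping: (N_c, N_t, 2) real -> (N_c, N_t) complex."""
    return X[..., 0] + 1j * X[..., 1]
```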

Fig. 5: The structure of the channel estimation network. Denoising is needed before completion. We use the lightweight MLP-Mixer to implement the denoising encoder \(D_e\), and the complete channel matrix is recovered with FlowMat’s decoder \(D_\phi\).

Channel feedback

The purpose of the channel compression and feedback network is to compress the wireless information at the user equipment and recover it as well as possible at the base station under the given number of feedback bits. In our work, we adopt a transformer-based architecture in the encoder and decoder. As shown in Fig. 3, the input is an \(N_c\times {N_t}\) eigenvector matrix. Because the values of the eigenvector are complex, we concatenate the real part and the imaginary part to obtain the \(N_c\times {2}\times {N_t}\) input, which is then sent to the encoder. We regard the subcarriers as the tokens of FlowMat’s encoder (\(E_\theta\)). After obtaining the deep features Z, the network learns a query vector to select specific deep subcarrier features and retains only m of them (m is a hyperparameter), which are denoted as \(Z_{Part}\). For quantization, there are two options: uniform quantization and vector quantization (VQ)35. The bit stream transmitted by uniform quantization is the quantized sequence of \(Z_{Part}\), while VQ transmits the indexes of the codebook vectors corresponding to \(Z_{Part}\).
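The uniform quantization option can be sketched as follows. The value range `[lo, hi]`, the bit width `n_bits`, and the function names are illustrative assumptions, since the quantizer settings are not given here.

```python
import numpy as np

def uniform_quantize(z, n_bits, lo=-1.0, hi=1.0):
    """Map real values in [lo, hi] to integer indices in [0, 2**n_bits - 1]."""
    levels = 2 ** n_bits
    z = np.clip(z, lo, hi)                      # values outside the range saturate
    scaled = (z - lo) / (hi - lo) * (levels - 1)
    return np.round(scaled).astype(np.int64)

def uniform_dequantize(idx, n_bits, lo=-1.0, hi=1.0):
    """Map integer indices back to the centres of the quantization levels."""
    levels = 2 ** n_bits
    return lo + idx / (levels - 1) * (hi - lo)
```

With this scheme each retained real value costs `n_bits` bits, so the total feedback is the number of retained values times `n_bits`. For in-range inputs the reconstruction error is at most half a quantization step.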

Following quantization, masked tokens are utilized to recover the information, similar to the decoder process in channel estimation. Finally, the decoder is employed to recover the full information.

Joint and individual tasks

Firstly, the channel estimation model is employed to estimate the missing channels, thereby accomplishing frequency domain interpolation and denoising. Then, based on the estimated channel, the eigenvectors to be fed back on different subcarriers are calculated. Finally, the channel feedback model is used to implement the channel eigenvector compression and feedback. We build a model in an end-to-end training manner, without modifying the network structure, to realize estimation and feedback. The model takes pilots as input and produces eigenvectors as output. We also separately train a channel estimation network and a channel feedback network, a configuration referred to as ‘splited’, and subsequently evaluate their performance together.

Training strategy

All training is conducted offline. The deployed model operates in inference mode without real-time parameter updates, ensuring low latency in practical systems. The proposed network uses progressive training and joint training. The progressive training strategy initially employs the normalized mean square error (NMSE) loss to train both the encoder and the denoised constrained network, which is formulated as:

where \(D_e\) is the ‘Denoise’ network in Fig. 5, \(y_{n,k}\) is the k-th channel of the input pilot, T is the number of training samples, and \(H^{\prime }_{i,k}\) is the selected ideal channel vector corresponding to \(y_{n,k}\). The decoder is then trained with the NMSE loss while keeping the parameters of the encoder and the denoised constrained network fixed. The loss function can be formulated as:

where T is the number of training samples, and \(H_n\) is the whole ideal channel matrix. The joint training is to train all the parts in the channel estimation network, simultaneously, which combines Eqn. 11 and Eqn. 12 as loss functions.

The loss function of channel compression and feedback network is,

where \(N_c\) is the number of subcarriers of each sample, \(w_{i, j}\) and \(w_{i, j}^{\prime }\) are the eigenvectors of labels and predicted eigenvectors, respectively.

Experiments

Data description

We use the Mobile Communication Open Dataset (2022). It has 32 transmit antennas and 4 receive antennas. Channel estimation and channel feature feedback are required according to specific pilots. This dataset provides two different received pilots, namely, high-density (HD) pilots and low-density (LD) pilots, as the input information. Each of them includes 300,000 samples. The high-density pilots occupy 26 of the 52 resource blocks (the odd-numbered resource blocks) to send pilot information. The dimension of each data sample is \(4 \times 208 \times 4\). The first ‘4’ refers to four receiving antennas, ‘208’ refers to 208 subcarriers (26 resource blocks, each of which has 8 subcarriers with pilot information), and the second ‘4’ refers to 4 OFDM symbols. For low-density pilots, 6 of the 52 resource blocks are occupied (serial numbers: 7, 15, 23, 31, 39, 47). The dimension of each data sample is \(4 \times 48 \times 4\), where ‘48’ refers to 6 resource blocks, each of which has eight subcarriers with pilots.

The time-domain full channel information contains a total of 300,000 samples as the unified information for both the high-density pilots and low-density pilots. The dimension of each sample is \(4 \times 32 \times 64\), corresponding to 4 receiving antennas, 32 transmitting antennas, and 64 delay samples.

The time-domain full channel information can be converted to the eigenvector information for better performance in channel compression and feedback. These channel eigenvectors are given by the dataset. The transmission bandwidth is divided into 13 subcarriers, each of which contains 32-dimensional eigenvectors. There are 300,000 samples in this part, which are used as unified labels for high-density and low-density pilots. The dimension of each sample is \(32 \times 13\).

We use the first 95% of the samples in the dataset for training and the last 5% for testing, namely, the test set contains 15,000 samples. This split is chosen considering the large dataset size (300,000 samples), which allows us to retain a sufficiently large test set (15,000 samples) for reliable evaluation, while maximizing training diversity and data utilization.

Comparison results

We first compare our method with the SOTA methods of the two tasks individually and then conduct experiments on the joint channel estimation and feedback.

Comparison metrics

For channel estimation, we use NMSE to evaluate the error between the desired and estimated channels, which is calculated by:

where y is the input pilots and \(H^{\prime }\) is the ideal channel matrix. To present the results clearly, we express the NMSE in decibels (dB).
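A common way to compute the NMSE in dB is sketched below. It assumes the per-sample normalized squared error is averaged over samples before conversion to dB, which may differ in detail from the exact expression used here. The function name is hypothetical.

```python
import numpy as np

def nmse_db(H_true, H_est):
    """NMSE in dB between ideal and estimated channels.

    H_true, H_est: complex arrays of shape (T, ...), T samples.
    Computes 10*log10( mean_t ||H_t - H_est_t||^2 / ||H_t||^2 ).
    """
    T = H_true.shape[0]
    err = np.sum(np.abs(H_true - H_est).reshape(T, -1) ** 2, axis=1)
    ref = np.sum(np.abs(H_true).reshape(T, -1) ** 2, axis=1)
    return 10.0 * np.log10(np.mean(err / ref))
```

Under this definition, an estimate whose error energy is 1% of the channel energy gives an NMSE of −20 dB, and lower values indicate better estimation.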

For channel feedback, we employ the cosine similarity (Rho) between the channel eigenvector of the feedback and the label as metric, which is calculated as:

where T is the number of tested samples, \(N_{c}\) is the number of subcarriers of each sample, \(w_{i, j}\) and \(w_{i, j}^{\prime }\) are the eigenvectors of labels and predicted eigenvectors, respectively.
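A sketch of the cosine-similarity metric over samples and subcarriers follows. It assumes the unsquared form, the magnitude of the inner product divided by the product of the norms. Some definitions in the CSI feedback literature square this ratio, and the exact form used here is not shown. The function name is hypothetical.

```python
import numpy as np

def cosine_similarity_rho(w_true, w_pred):
    """Mean cosine similarity between label and predicted eigenvectors.

    w_true, w_pred: complex arrays of shape (T, N_c, N_t).
    Averages |w^H w'| / (||w|| ||w'||) over all T samples and N_c subcarriers.
    """
    inner = np.abs(np.sum(w_true.conj() * w_pred, axis=-1))
    denom = np.linalg.norm(w_true, axis=-1) * np.linalg.norm(w_pred, axis=-1)
    return float(np.mean(inner / denom))
```

Taking the magnitude of the inner product makes the metric insensitive to a common complex phase, which suits eigenvectors because they are defined only up to such a phase.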

Channel estimation

We compare our method with four SOTA channel estimation methods: ChannelNet6, AttentionNet8, CBDNet7, and Attention-Aid20. ChannelNet treats the problem as image super-resolution and image denoising: the pilots are regarded as low-resolution images, and the super-resolution network SRCNN36, cascaded with the denoising network DNCNN37, is used to estimate the channels. AttentionNet proposes a hybrid encoder-decoder structure that contains a transformer encoder and a residual CNN decoder. CBDNet proposes an improved CNN that uses a noise level estimation subnetwork, a non-blind denoising subnetwork, and an asymmetric joint loss function for channel estimation. Attention-Aid introduces an attention-aided deep learning channel estimation framework for traditional large-scale MIMO systems, which includes channel attention modules.

We use NMSE for evaluation, and the results are presented in Table 1. Our method outperforms the other models in the channel-estimation-only task. It achieves −6.6106 dB and −5.9438 dB on the high-density and low-density test sets, respectively (lower is better), improving on CBDNet by about 14% and 11%. Compared with the attention-based method Attention-Aid, our method improves by 21% and 39%. By adding learnable tokens, our masked token scheme learns a better mapping between deep features and channel information, which yields better estimation results. ChannelNet is a CNN-based method that performs denoising and estimation in two separate stages. Although it achieves excellent results on the high-density dataset (ranked second among all methods), its performance on the low-density dataset is unsatisfactory (ranked last among all methods). In contrast, our method performs well in both settings. For computational complexity, the floating point operations (denoted as ‘FLOPs’) and network parameters (denoted as ‘Params’) are shown in Table 2. Among the compared methods, FlowMat exhibits the lowest FLOPs with slightly higher Params.

Quantitative comparison of joint channel estimation and channel compression on the dataset. Higher is better.

CSI compression and feedback only

For CSI compression and feedback, we compare with six SOTA methods: CsiNet10, CsiNet+14, ImCsiNet38, DCRNet39, EVCsiNet29, and EVCsiNet-T40. CsiNet and CsiNet+ are deep-learning-based feedback networks that contain convolutional, fully connected, and BatchNorm layers. ImCsiNet is an implicit feedback network that contains an LSTM structure, fully connected layers, and BatchNorm layers. DCRNet is a CSI feedback network based on dilated convolution, which enlarges the receptive field without increasing the kernel size. EVCsiNet is an eigenvector-based deep learning CSI feedback method, in which the joint eigenvectors cascaded from multiple subcarriers are compressed at the encoder and restored at the decoder. The EVCsiNet network adopts the residual block architecture41. EVCsiNet-T extends EVCsiNet with i) channel data analysis and pre-processing, ii) neural network design, and iii) quantization enhancement.

We use Rho for evaluation, and the results are presented in Table 3. Here, ‘bit’ refers to the bit budget allocated for compressed CSI transmission, corresponding to different compression levels (64, 128, and 256 bits). We report the performance of compressing and recovering the original eigenvectors. The results show that our approach outperforms the other models at every bit budget. For example, it achieves 0.9015 at 64 bits, which improves on CsiNet by 14.53%. FlowMat and EVCsiNet-T use the same transformer structure, yet FlowMat improves on the latter by 3.59% at 64 bits. The main reason is that the last encoder layer in EVCsiNet-T directly reduces its output to a low-dimensional vector. This results in significant information loss and makes it difficult for the decoder to recover the original data. Eigenvector data are denser than DFT-domain data; thus, the CNN-based methods perform worse than the LSTM- and Transformer-based methods, which exploit the correlation across subcarriers. Among the CNN-based methods, CsiNet+ uses a 7×7 kernel to extract key features, which loses more information on eigenvector data than the 3×3 kernel of CsiNet. As in the channel-estimation-only task, computational complexity in terms of FLOPs and Params is reported in Table 4. The FLOPs of our method are lower than those of EVCsiNet and CsiNet, and its Params are lower than those of EVCsiNet-T and ImCsiNet.
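To make the remark on dilated convolution concrete, the following sketch computes the effective span of a one-dimensional dilated kernel using the standard relation \(k_{\text{eff}} = d\,(k-1)+1\). The function name is hypothetical, and the dilation rates shown are illustrative values, not the settings used by DCRNet.

```python
def effective_kernel_size(kernel_size: int, dilation: int) -> int:
    """Span covered by a dilated kernel; the number of weights stays `kernel_size`."""
    if kernel_size < 1 or dilation < 1:
        raise ValueError("kernel_size and dilation must be positive")
    # Each of the (kernel_size - 1) gaps between taps is stretched to `dilation`.
    return dilation * (kernel_size - 1) + 1


for d in (1, 2, 3):
    # A 3-tap kernel covers 3, 5, and 7 positions, respectively.
    print(d, effective_kernel_size(3, d))
```

The kernel keeps three weights in every case, which is why the receptive field grows without additional parameters.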

Joint channel estimation and feedback

Since there are few joint channel estimation and feedback methods, we compare with only one such method, namely JCEF16. JCEF completes the full channel information, compresses it using uniform quantization, and finally restores it. In addition, we combine channel estimation methods with channel feedback methods as further baselines; these are denoted as ‘AttentionNet+EVCsiNet-T’ and ‘AttentionNet+DCRNet’.

We use Eqn. 15 for evaluation, and the performance is shown in Fig. 6(a). With low-density pilots (Fig. 6(b)), Rho increases by 0.0378, 0.0526, and 0.053 at 64, 128, and 256 bits, respectively, when using our model. With high-density pilots (Fig. 6(a)), Rho increases by 0.0227, 0.0223, and 0.0149 at 64, 128, and 256 bits, respectively. These results show the effectiveness of FlowMat in joint channel estimation and channel compression.
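For readers unfamiliar with uniform quantization, a minimal generic sketch follows. It is not the JCEF implementation; the function names, the value range `[lo, hi]`, and the mid-point reconstruction rule are assumptions chosen for illustration.

```python
import numpy as np


def uniform_quantize(x: np.ndarray, n_bits: int, lo: float = 0.0, hi: float = 1.0) -> np.ndarray:
    """Map values in [lo, hi] to integer indices in [0, 2**n_bits - 1]."""
    levels = 2 ** n_bits
    step = (hi - lo) / levels
    idx = np.floor((np.clip(x, lo, hi) - lo) / step)
    # The upper edge x == hi would land in bin `levels`; fold it into the last bin.
    return np.minimum(idx, levels - 1).astype(int)


def uniform_dequantize(idx: np.ndarray, n_bits: int, lo: float = 0.0, hi: float = 1.0) -> np.ndarray:
    """Reconstruct each index as the mid-point of its quantization bin."""
    step = (hi - lo) / (2 ** n_bits)
    return lo + (idx + 0.5) * step
```

With mid-point reconstruction, the error of any in-range value is at most half a quantization step, so each extra bit per value halves the worst-case error.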

Ablation study

In this subsection, we conduct ablation studies on the low-density dataset.

End-to-end solution vs. split training

To show the effectiveness of the split training pipeline, we include a model named ‘Ours: splited’ in Fig. 6(a) and (b); panel (b) is tested on high-density pilots. We compare it with an end-to-end training scheme that performs the estimation without modifying the network structure: the pilots are fed back as the input, the output is the eigenvectors, and Eqn. 13 serves as the loss function. With low-density pilots, the Rho of the split scheme is higher than that of the end-to-end scheme at 64, 128, and 256 bits. The same holds for high-density pilots. Our method with split training performs best among the competing methods. Our end-to-end variant also outperforms the other end-to-end methods (e.g., JCEF and AttentionNet+DCRNet), making it the most effective approach within the end-to-end framework.

The loss function for channel estimation

For channel estimation, we also try other loss functions in the ablation study. In the original model, the NMSE loss is used for both channel denoising and channel estimation; in Table 5, this configuration is denoted as ‘NMSE+NMSE’. For comparison, we build two further models, ‘L1+L1’ and ‘L1+NMSE’. The term before the ‘+’ refers to the loss used for denoising, and the term after the ‘+’ refers to the loss used for estimation. Among these configurations, ‘NMSE+NMSE’ performs best. For example, it exceeds ‘L1+L1’ and ‘L1+NMSE’ by about 0.0036 and 0.0003, respectively, on the low-density test set.

Ablation study: The way of token reduction in CSI compression.

The way of token reduction in CSI feedback

In our channel compression and feedback model, we propose a channel selection technique and mask embedding for token reduction and expansion, respectively. Other methods can also perform token reduction and expansion. For example, MLP layers are a common choice for dimension reduction and expansion8,16. We use MLP layers and token average merging for comparison. For MLP layers, we use one MLP for token reduction and another MLP for token expansion. For token average merging, we divide the input tokens into equal groups and take the average of each group for token reduction; token expansion is the same as in the original model. We run these experiments on channel compression and feedback only, and the results show the effectiveness of our proposed method. In Fig. 7, ‘MLP’ and ‘Token merging’ denote the use of MLP layers and token merging, respectively. Although the MLP method has an advantage of 0.0011 over our original method for low-bit compression, our method exceeds the MLP method for middle- and high-bit compression (by 0.0038 and 0.0014, respectively). Compared with ‘Token merging’, our method performs equally well at lower bit rates but exhibits a clear advantage (0.006) at higher bit rates.
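The token average merging baseline described above can be sketched as follows. The function name is hypothetical, and the sketch assumes that groups are formed from contiguous tokens and that the number of tokens is divisible by the number of groups; the grouping order used in the experiments is not specified here.

```python
import numpy as np


def average_merge_tokens(tokens: np.ndarray, n_groups: int) -> np.ndarray:
    """Reduce (n_tokens, dim) tokens to (n_groups, dim) by averaging equal groups."""
    n_tokens, dim = tokens.shape
    if n_tokens % n_groups != 0:
        raise ValueError("n_tokens must be divisible by n_groups")
    # Assumption: each group consists of contiguous tokens.
    grouped = tokens.reshape(n_groups, n_tokens // n_groups, dim)
    return grouped.mean(axis=1)


tokens = np.arange(12, dtype=float).reshape(6, 2)
print(average_merge_tokens(tokens, 3))  # rows: [1, 2], [5, 6], [9, 10]
```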

Tokens’ content and update

The content of the masked tokens, and whether they are updated, also affects performance. We therefore initialize the masked tokens either with all zeros (‘zero’) or from a standard normal distribution (‘randn’), and either update or do not update the token values during training. This gives four experimental combinations: ‘zero+update’, ‘randn+update’, ‘zero+without update’, and ‘randn+without update’. The results are shown in Table 6. Random initialization and updating affect performance differently for high-bit and low-bit compression. When the compression bit is low (64-bit), the standard normal initialization (‘randn’) has the dominant impact, and updating has only a weak effect on the results. When the compression bit is high (128-bit), updating has the dominant impact, and updating the tokens’ parameters boosts the performance.
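The two initialization options can be illustrated with a small NumPy sketch of mask-token expansion. All names here are hypothetical and the sketch is not the FlowMat implementation; in a training framework, the ‘update’ setting would correspond to registering the mask token as a trainable parameter, and ‘without update’ to keeping it frozen.

```python
import numpy as np


def init_mask_token(dim: int, mode: str, rng: np.random.Generator) -> np.ndarray:
    """Create one shared mask token: all zeros ('zero') or standard normal ('randn')."""
    if mode == "zero":
        return np.zeros(dim)
    if mode == "randn":
        return rng.standard_normal(dim)
    raise ValueError(f"unknown mode: {mode}")


def expand_with_mask_token(kept_tokens, kept_positions, n_total, mask_token):
    """Rebuild a length-n_total sequence, filling dropped positions with the mask token."""
    out = np.tile(mask_token, (n_total, 1))  # every row starts as the mask token
    out[kept_positions] = kept_tokens        # kept tokens overwrite their original positions
    return out
```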

Which tokens should be masked?

Finally, we study which tokens should be masked in the mask token embedding operation. In our original model, we learn a query vector to determine which tokens should be masked. Without loss of generality, we build a comparison model whose query vector is randomly initialized and kept fixed during training and testing. The performance of the learnable and fixed query vectors is almost the same. However, because the learnable query vector performs slightly better in some specific cases, we adopt the learnable query vector in this paper.
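One plausible way for a query vector to decide which tokens are kept and which are masked is sketched below. The dot-product scoring and the top-k rule are assumptions made for illustration only; the names are hypothetical, and the actual selection mechanism may differ. A fixed query corresponds to sampling `query` once and never changing it, whereas a learnable query would be optimized with the rest of the network.

```python
import numpy as np


def select_tokens(tokens: np.ndarray, query: np.ndarray, n_keep: int):
    """Keep the n_keep tokens with the highest query score; the rest are masked."""
    scores = tokens @ query                      # one scalar score per token (assumed rule)
    top = np.argsort(scores)[::-1][:n_keep]      # indices of the highest scores
    keep = np.sort(top)                          # restore the original token order
    return keep, tokens[keep]


tokens = np.diag([1.0, 4.0, 2.0, 3.0])
keep, kept_tokens = select_tokens(tokens, np.ones(4), n_keep=2)
print(keep)  # prints "[1 3]"
```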

Conclusions

In this paper, we leverage the inherent frequency-domain correlation among channel matrices and propose a unified framework named FlowMat for joint channel estimation and feedback in massive MIMO systems. FlowMat takes advantage of the encoder-decoder architecture. The entire encoder-decoder network is dedicated to channel feedback using a learnable mask token, which achieves excellent channel feedback performance. Channel estimation is achieved by a decoder with the same structure as in channel feedback, in which a lightweight multilayer perceptron denoising module is used for more accurate estimation. We have conducted extensive experiments, which demonstrate superior performance on channel estimation and feedback in both joint and separate tasks. In addition, FlowMat offers a significant reduction in computational complexity, particularly for channel estimation.

Data availability

The dataset used in this study is publicly available and can be downloaded from the Mobile Communication Open Dataset at: https://www.mobileai-dataset.cn/html/default/zhongwen/shujuji/index.html?index=1.

References

Marzetta, T. L. Massive mimo: an introduction. Bell Labs Tech. J. (2015).

Tariq, F., et al. A speculative study on 6g. IEEE Wirel. Commun. (2020).

Rusek, F., et al. Scaling up mimo: Opportunities and challenges with very large arrays. signal processing magazine (2012).

Song, W. G. & Lim, J. T. Channel estimation and signal detection for mimo-ofdm with time varying channels. IEEE Transactions on Signal Processing (2006).

Ma, J. & Ping, L. Data-aided channel estimation in large antenna systems. IEEE Transactions on Signal Processing (2014).

Soltani, M., Pourahmadi, V., Mirzaei, A. & Sheikhzadeh, H. Deep learning-based channel estimation. IEEE Commun. Lett. (2019).

Jin, Y., Zhang, J., Ai, B. & Zhang, X. Channel estimation for mmwave massive mimo with convolutional blind denoising network. IEEE Commun. Lett. (2020).

Luan, D. & Thompson, J. Attention based neural networks for wireless channel estimation. vehicular technology conference (2022).

3GPP. Technical specification group radio access network; NR; physical layer procedures for data (release 16). 3GPP Rep. TS 38.214 V16.1.0 (2020).

Wen, C.-K., Shih, W.-T. & Jin, S. Deep learning for massive mimo csi feedback. IEEE Wirel. Commun. Lett. 7, 748–751 (2018).

Lu, Z., Wang, J. & Song, J. Multi-resolution csi feedback with deep learning in massive mimo system. In ICC 2020-2020 IEEE International Conference on Communications (ICC), 1–6 (IEEE, 2020).

Cao, Z., Shih, W.-T., Guo, J., Wen, C.-K. & Jin, S. Lightweight convolutional neural networks for csi feedback in massive mimo. IEEE Commun. Lett. 25, 2624–2628 (2021).

Sun, Y., Xu, W., Fan, L., Li, G. Y. & Karagiannidis, G. K. Ancinet: An efficient deep learning approach for feedback compression of estimated csi in massive mimo systems. IEEE Wirel. Commun. Lett. 9, 2192–2196 (2020).

Guo, J., Wen, C.-K., Jin, S. & Li, G. Y. Convolutional neural network-based multiple-rate compressive sensing for massive mimo csi feedback: Design, simulation, and analysis. IEEE Transactions on Wirel. Commun. 19, 2827–2840 (2020).

Ma, X. & Gao, Z. Data-driven deep learning to design pilot and channel estimator for massive mimo. IEEE Transactions on Veh. Technol. (2020).

Tong, C. et al. Deep learning for joint channel estimation and feedback in massive mimo systems. arXiv: Information Theory (2020).

Vaswani, A. et al. Attention is all you need. In Advances in Neural Information Processing Systems (2017).

Xiao, H. et al. Ai enlightens wireless communication: A transformer backbone for csi feedback. arXiv preprint arXiv (2022).

Xu, Y., Yuan, M. & Pun, M.-O. Transformer empowered csi feedback for massive mimo systems. wireless and optical communications conference (2021).

Gao, J., Hu, M., Zhong, C., Li, G. Y. & Zhang, Z. An attention-aided deep learning framework for massive mimo channel estimation. IEEE Transactions on Wirel. Commun. (2021).

Hu, Q., Gao, F., Zhang, H., Jin, S. & Li, G. Y. Deep learning for channel estimation: Interpretation, performance, and comparison. IEEE Transactions on Wirel. Commun. 20, 2398–2412 (2020).

Dong, C., Loy, C. C., He, K. & Tang, X. Image super-resolution using deep convolutional networks. IEEE transactions on pattern analysis and machine intelligence 38, 295–307 (2015).

Chun, C.-J., Kang, J.-M. & Kim, I.-M. Deep learning-based channel estimation for massive mimo systems. IEEE Wirel. Commun. Lett. 8, 1228–1231 (2019).

He, H., Wen, C.-K., Jin, S. & Li, G. Y. Deep learning-based channel estimation for beamspace mmwave massive mimo systems. IEEE Wirel. Commun. Lett. (2018).

Mashhadi, M. B. & Gündüz, D. Pruning the pilots: Deep learning-based pilot design and channel estimation for mimo-ofdm systems. IEEE Transactions on Wirel. Commun. (2021).

Mashhadi, M. B., Yang, Q. & Gündüz, D. Distributed deep convolutional compression for massive mimo csi feedback. IEEE Transactions on Wirel. Commun. (2020).

Lu, C., Xu, W., Shen, H., Zhu, J. & Wang, K. Mimo channel information feedback using deep recurrent network. IEEE Commun. Lett 23, 188–191 (2018).

Chen, M. et al. Deep learning-based implicit csi feedback in massive mimo. IEEE Transactions on Commun. 70, 935–950 (2021).

Liu, W. et al. Evcsinet: Eigenvector-based csi feedback under 3gpp link-level channels. IEEE Wirel. Commun. Lett. (2021).

Xiao, Z. et al. Channel estimation for movable antenna communication systems: A framework based on compressed sensing. IEEE Transactions on Wirel. Commun. (2024).

Jindal, N. Mimo broadcast channels with finite-rate feedback. IEEE Transactions on information theory 52, 5045–5060 (2006).

He, K. et al. Masked autoencoders are scalable vision learners. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 16000–16009 (2022).

Chen, X., Xie, S. & He, K. An empirical study of training self-supervised vision transformers. In International Conference on Computer Vision, 9620–9629 (IEEE, 2021).

Bao, H., Dong, L. & Wei, F. BEiT: BERT pre-training of image transformers. arXiv (2021).

van den Oord, A., Vinyals, O. & Kavukcuoglu, K. Neural discrete representation learning. In Advances in Neural Information Processing Systems, 6306–6315 (2017).

Dong, C., Loy, C. C., He, K. & Tang, X. Image super-resolution using deep convolutional networks. IEEE Transactions on Pattern Analysis Mach. Intell. (2014).

Zhang, K., Zuo, W., Chen, Y., Meng, D. & Zhang, L. Beyond a gaussian denoiser: Residual learning of deep cnn for image denoising. IEEE transactions on image processing 26, 3142–3155 (2017).

Chen, M. et al. Deep learning-based implicit csi feedback in massive mimo. IEEE Transactions on Commun. (2022).

Tang, S. et al. Dilated convolution based csi feedback compression for massive mimo systems. IEEE Transactions on Vehicular Technology (2022).

Xiao, H. et al. Ai enlightens wireless communication: Analyses, solutions and opportunities on csi feedback. China Commun. (2021).

He, K., Zhang, X., Ren, S. & Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE conference on computer vision and pattern recognition, 770–778 (2016).

Author information

Contributions

Mei Yin: Methodology, Programming, Writing-Original Draft Preparation. Mingming Zhao: Data Preparation, Writing-Revision and Response. Lin Liu: Visualization, Writing-Reviewing and Editing. Lifu Liu: Supervision, Validation, Conceptualization, Reviewing and Editing.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Yin, M., Zhao, M., Liu, L. et al. Joint channel estimation and feedback with masked token transformers in massive MIMO systems. Sci Rep 15, 41512 (2025). https://doi.org/10.1038/s41598-025-25256-1

DOI: https://doi.org/10.1038/s41598-025-25256-1