Abstract

Understanding perception and communication gaps between patients with neurological diseases and their treating physicians is essential for optimizing patient-centered care. The GAP-AI study aimed to identify these gaps in a cohort of patients with Parkinson’s disease, multiple sclerosis, or epilepsy. This single-center observational study involved patients (N = 197) and their treating physicians (N = 12) answering questionnaires (18-item Patient Satisfaction Questionnaire Short Form, 9-item Shared Decision Making Questionnaire for patients and physicians, Barthel Index, and 36-item Short Form subdomains) over two clinic visits. The primary outcome was the difference between pairwise items in the questionnaires (perception gap). Perception gaps, albeit minimal, were identified for patient satisfaction, shared decision-making, activities of daily living, and quality of life. Attributes that significantly influenced perception gaps included physician’s age, years of experience/holding a neurologist qualification, disease area, and the number of patients treated, with experienced physicians tending to provide more rigorous evaluations than their patients’ self-assessments. Multiple machine learning algorithms were used to develop predictive models based on study data. The k-nearest neighbors algorithm demonstrated the best performance in predicting a patient–physician perception gap. Insights from our study highlight the potential to recognize, predict, and ultimately address these gaps, thus enhancing clinical practice by increasing the level of understanding between patients and their physicians.

Similar content being viewed by others

Introduction

Neurological diseases, such as Parkinson’s disease, multiple sclerosis, and epilepsy are chronic in nature with complex symptomology. As these diseases progress, their negative impacts on activities of daily living (ADLs), and thus quality of life (QoL), become increasingly severe1,2,3,4.

Understanding the complex symptoms of neurological diseases, which constantly evolve, and how they affect daily functioning is important for effective decision-making in clinical practice, which in turn influences patient treatment satisfaction. Recently, ‘shared decision-making’ (SDM), whereby healthcare professionals and patients share evidence and determine treatment decisions together, has been recognized as playing an important role in achieving patient treatment satisfaction5. SDM encompasses patient-centered care by incorporating both the patient’s pathologic condition and clinical symptoms (from the viewpoint of a physician) and the patient’s preferences, values, and goals. Implementation of SDM has been reported to improve patient outcomes and reduce medical expenses6,7. However, most clinical decisions are based on information obtained only at the time of medical examination, thus limiting physicians’ ability to grasp the full spectrum of patients’ symptoms and their impacts on daily life. It therefore seems likely that perception gaps exist between patients and physicians in terms of their communication, and recognition of disease status and treatment goals. Studies have identified such gaps in both non-neurological8,9,10 and neurological disease settings10,11,12,13,14,15.

Perception gaps have been identified between patients with Parkinson’s disease and their physicians in terms of the relative importance of different disease symptoms and treatment delivery options, and their knowledge of support networks11,12. In addition, some aspects of QoL have been rated as more important by patients with multiple sclerosis than by their treating physicians14. Similarly, patients with epilepsy in another study rated reducing seizure severity as a more important treatment goal than seizure frequency, which was reversed with physicians15. Patients with multiple sclerosis have also self-reported more relapses than physician-documented relapses during routine clinical practice, and perception gaps have been found to be more pronounced in patients with greater disability or decreased treatment satisfaction13 and in certain ethnicities10.

Most studies have compared perception gaps in disease recognition and communication indirectly between groups of patients and physicians. However, studies that have directly identified perception gaps between patients with neurological diseases and their treating physicians are limited, particularly in Japan12,15. An evidence-based, patient-centric approach that takes into consideration the characteristics of individual patients and their physicians is required to optimize their outcomes16. Hence, understanding the gaps in the overall management of neurological diseases is an area warranting further research. We hypothesized that patient and physician factors can influence whether a perception gap exists.

There is increasing evidence that artificial intelligence (AI) and machine learning could be used to enhance the management of neurological diseases17,18. For example, machine learning algorithms trained on patient survey responses can help identify patients at risk of responding negatively to a survey19, and models trained on patient self-reported surveys and electronic health records can predict patient satisfaction20. We hypothesized that machine learning can generate a predictive model for identifying gaps in perception and communication during patient–physician consultations.

The aim of this observational study was to investigate the perception gaps between patients and physicians in the neurological disease setting and to develop a predictive model using AI (GAP-AI study). The objective of this study was to identify perception gaps between patients with Parkinson’s disease, multiple sclerosis, or epilepsy, and their treating physicians regarding patient satisfaction, SDM, assessment of ADLs, and QoL. Additionally, we investigated factors influencing these gaps and whether they could be predicted using an AI machine learning model.

Results

Patient characteristics

This study was conducted between January 2023 and August 2023. In total, 198 patients were enrolled and 197 patients were included for subsequent analyses (one patient withdrew their consent). Of these patients, the mean (SD) age was 58.1 (16.4) years and most were women (60.4%; Table 1). The most common diagnosis was Parkinson’s disease (69.5%), followed by multiple sclerosis (20.3%) and epilepsy (10.2%), with an overall mean disease duration of 7.4 years. Over one-third of patients had their disease for less than 5 years (38.0%). Most patients reported living with someone (82.4%) and not requiring caregiver assistance (61.0%). The mean 36-item Short Form (SF-36) Physical Component Summary (43.2) and Role/Social Component Summary (46.8) scores were slightly below the average of 50 in the general population, whereas the Mental Component Summary (51.4) score was slightly above the average of 50. Most patients had mild-to-moderate disease severity of Parkinson’s disease, multiple sclerosis, or epilepsy (Supplementary Table 1).

Physician characteristics

Half the physicians were between the ages of 35 and 44 years and most (83.3%) were men (Table 2). More than 40% of physicians had over 20 years of experience and most specialized in Parkinson’s disease (75.0%). Of the 11 physicians (91.7%) who were certified neurologists by the Japanese Society of Neurology, one-third had held their certification for 5–10 years. Most physicians (75.0%) had treated fewer than 500 cumulative patients in their career and one physician had treated more than 2000 patients.

Perception gaps in pairwise questionnaire items

The mean (standard deviation [SD]) sum of relative difference between patient and physician responses (Yr) to the 18-item Patient Satisfaction Questionnaire Short Form (PSQ-18), 9-item Shared Decision Making Questionnaire for patients (SDM-Q-9) and physicians (SDM-Q-Doc), Barthel Index, and original questionnaire were 3.4 (9.8), 7.2 (12.2), −0.3 (9.2), and 4.4 (6.4), respectively (Table 3). The mean (SD) sum of the absolute difference between patient and physician responses (Ya) to the PSQ-18, SDM-Q-9/SDM-Q-Doc, Barthel Index, and original questionnaire were 16.3 (5.7), 12.7 (7.8), 3.6 (9.4), and 8.4 (3.9), respectively.

Overall, 19.3% of patients’ SF-36 subdomain responses matched those of their physicians (Table 3). The subdomains most frequently prioritized by patients were role physical (25.4%), physical functioning (19.3%), and general health (18.8%). These SF-36 subdomains were also most frequently prioritized by physicians, but at different rates: 39.0%, 12.8%, and 15.5%, respectively.

Comparison of the total scores of patient and physician responses to each of the questionnaires, as well as the degree of correlation between the responses, is another approach to evaluating perception gaps. There were significant differences between the mean total scores of patients (Yp) and physicians (Yi) for PSQ-18, SDM-Q-9/SDM-Q-Doc, and the original questionnaire (P < 0.001; Supplementary Table 2). Similarly, there were low correlations between patient and physician responses to the individual items of the PSQ-18 (κ = 0.030), SDM-Q-9/SDM-Q-Doc (κ = 0.021), Barthel Index (κ = 0.172), the original questionnaire (κ = 0.039), and SF-36 subdomains (κ = 0.203).

Factors that influenced perception gaps

Cross-tabulation analyses identified the following patient attributes that significantly influenced the perception gap between the concordant and discordant groups for PSQ-18: caregiver status (present vs absent), age group, and the type of diagnosis (all P ≤ 0.002); for SDM-Q-9/SDM-Q-Doc: age group (P = 0.020), type of diagnosis (P < 0.001), and frequency of hospital visits (P = 0.002); for the Barthel Index: caregiver status (P = 0.008), age group (P = 0.003), occupation (P = 0.001), method of transportation to the hospital (P < 0.001), and duration of disease (P = 0.001); for the original questionnaire: age group (P = 0.025) and type of diagnosis (P = 0.017); and for SF-36 subdomains: type of diagnosis (P = 0.030; Table 4).

Physician attributes that significantly influenced the perception gap in the five outcomes were similar across the questionnaires and included: physician’s age, years of experience, disease area, years holding a neurologist qualification, years of experience in treating the target disease, and cumulative patients treated (Table 4).

Multiple regression analyses across the PSQ-18, SDM-Q-9/SDM-Q-Doc, and Barthel Index for all patients identified patient age, years of experience as a physician, years of holding a neurologist qualification, physician-reported time for outpatient consultation, and caregiver status as independent variables that significantly influenced the perception gap (Table 5).

Independent variables that significantly influenced the perception gap in Parkinson’s disease included years of experience as a physician, years of holding a neurologist qualification, years of experience in the treatment of Parkinson’s disease, cumulative number of patients treated, time allocated for outpatient consultations, and patient’s disease stage (Hoehn and Yahr; Table 5). Similarly, patient’s disability status (Expanded Disability Status Scale [EDSS]) was an independent variable that influenced the perception gap in patients with multiple sclerosis, and patient’s annual income for patients with epilepsy. Multiple regression analysis was not performed for the Barthel Index in patients with epilepsy as none of the variables showed significance in the univariate analysis.

Correlation of perception gaps between questionnaires

There were significant correlations between the mean Ya in PSQ-18 and SDM-Q-9/SDM-Q-Doc (ρ = 0.285; P < 0.001); PSQ-18 and the original questionnaire (ρ = 0.295; P < 0.001); and SDM-Q-9/SDM-Q-Doc and the original questionnaire (ρ = 0.400; P < 0.001; Supplementary Table 3). However, there were no significant correlations between patient and physician SF-36 subdomain rankings and the Ya of the PSQ-18 (P = 0.488), SDM-Q-9/SDM-Q-Doc (P = 0.758), Barthel Index (P = 0.829), or the original questionnaire (P = 0.701) (Supplementary Table 4).

Model performance

Multiple machine learning algorithms were evaluated to ensure robust cross-testing. The log loss values for the holdout set among all tested algorithms are shown in Supplementary Table 5 and the area under the curve (AUC) of the receiver operating characteristics (ROC) curves are shown in Supplementary Fig. S1. The k-nearest neighbors model showed the best performance (i.e., smallest log loss values for the holdout set) in predicting the presence or absence of a perception gap identified in the primary outcome from PSQ-18, SDM-Q-9/SDM-Q-Doc, Barthel Index, the original questionnaire, and the SF-36 subdomain of interest (Table 6).

The feature importance was measured by SHapley Additive exPlanations (SHAP) values. The top five important features of the k-nearest neighbors predictive model for identifying a perception gap are shown in Fig. 1. For PSQ-18, these features were ‘years of treatment experience for target disease’, ‘patient age’, ‘annual income’, ‘presence or absence of a caregiver’, and ‘cumulative number of patients treated’. For SDM-Q-9/SDM-Q-Doc, the features were ‘Ya for the original questionnaire’, ‘current occupation’, ‘certificate for recipient of welfare services’, ‘highest level of education’, and ‘years of treatment experience for target disease’. For the Barthel Index, the features were ‘current occupation’, ‘previous occupation’, ‘patient’s other treatments’, ‘annual income’, and ‘cumulative number of patients treated’. For the original questionnaire, the features were ‘annual income’, ‘Ya for the SDM-Q-9/SDM-Q-Doc questionnaires’, ‘patient age’, ‘years of treatment experience for target disease’, and ‘highest level of education’. For the SF-36 subdomains, the features were ‘current occupation’, ‘annual income’, ‘highest level of education’, ‘presence or absence of a caregiver’, and ‘previous occupation.’

Highest impact features for the specified target measured by SHAP values for the k-nearest neighbors model. (a) PSQ-18, (b) SDM-Q-9/SDM-Q-Doc, (c) Barthel index, (d) original questionnaire (e) SF-36 subdomains.PSQ-18, Patient Satisfaction Questionnaire-18; SDM-Q-9; 9-item Shared Decision Making Questionnaire from the patient perspective; SDM-Q-Doc, Shared Decision Making Questionnaire from the physician perspective; SF-36, 36-item Short Form; SHAP, SHapley Additive exPlanations; Ya, sum of the absolute difference between patient and physician responses.

Discussion

This is the first study to examine the perception gaps between patients with neurological diseases (Parkinson’s disease, multiple sclerosis, and epilepsy) and their treating physicians using validated patient-reported outcomes (PROs)/observer-reported outcomes (OROs) and an original questionnaire, and to develop a predictive model using machine learning for recognizing these gaps. Perception gaps between patients and physicians have previously been reported in neurological diseases, particularly in the perceived importance of symptoms, relapse reporting, and QoL determinants11,12,13,14. Such discrepancies can compromise treatment decisions, patient satisfaction, and overall care quality. For example, discrepancies in what patients and physicians prioritize for QoL can skew assessments, while differences in relapse reporting may lead to under- or overtreatment. Addressing these differences is therefore essential for improving communication and fostering patient-centered care. Although studies have shown that perception gaps exist, few have evaluated the reasons behind a perception gap in neurological diseases.

In our study, we identified perception gaps in patient satisfaction, SDM, ADLs, and QoL. These were evident across multiple instruments including the PSQ-18, SDM-Q-9/SDM-Q-Doc, and Barthel Index, with poor agreement between patient and physician responses. Factors that significantly influenced the perception gap in patient satisfaction were caregiver status, disease severity, type of diagnosis, years with a specialist qualification, and consultation time. Similarly, factors that significantly influenced the perception gap in SDM were caregiver status, patient age, type of diagnosis, age of the physician, years of neurologist qualification, cumulative total patients treated, consultation time, and years of experience with the relevant disease. For patient QoL, caregiver status, disease severity, type of diagnosis, and consultation time significantly influenced the perception gap. In a previous cross-sectional survey, differences in relapse reporting in patients with multiple sclerosis and their physicians were observed, and this perception gap was more pronounced in patients with greater disability levels, decreased QoL or treatment satisfaction13. The finding that severity affects QoL is consistent with the results of this study.

There is limited research examining the factors that influence perception gaps from a physician perspective. In our study, experience-related factors of physicians were frequently seen as strong predictors in correlation analyses, multiple regression analyses, and machine learning. Physicians with more clinical experience may provide more rigorous evaluations compared with patients’ responses, generating a perception gap. While perception gaps are often viewed negatively, our findings suggest that they may reflect a more cautious or more uncompromising clinical stance by experienced physicians. Another possible reason for this disconnect is that patients may be relatively satisfied with their current management (as these neurological diseases are chronic and progressive) and not seek further improvement in their disease status. This interpretation is important, as the presence of a perception gap does not necessarily indicate poor patient care but may highlight differing priorities or expectations; therefore, it would be meaningful to investigate whether perception gaps affect patient care in the future.

The original questionnaire developed for this study captured aspects of the patient–physician relationship not addressed by standard tools. Hence, some items in this questionnaire could potentially be used as scales to measure perception gaps that are not included in other validated questionnaires, such as communication status and trust between patients and their treating physicians, and clinical environment.

The strong performance of the k-nearest neighbors algorithm in this study can be attributed to its non-parametric nature, meaning it does not rely on assumptions about the underlying data distribution or linear relationships. Instead, it makes predictions based on the proximity of data points, allowing it to adapt to the local structure of the data. This characteristic is particularly beneficial in scenarios with limited data, such as in our study, where it can effectively leverage local patterns and similarities to make accurate inferences. While our machine learning model demonstrated strong predictive performance in identifying perception gaps, we acknowledge the ethical imperative to address potential biases inherent in AI systems. As highlighted in recent literature, such biases can arise from non-representative training data, underrepresentation of minority groups, or unclear model development processes, potentially leading to prejudiced outcomes or reinforcing existing disparities in patient care21,22. To mitigate these risks, our model incorporated diverse patient and physician attributes, and future iterations should include fairness audits, subgroup performance evaluations, and transparent reporting to ensure unbiased application in clinical settings.

A strength in our study was the direct pairing of patient and physician responses, allowing for a more accurate assessment of perception gaps in real-world clinical interactions compared with studies using unmatched samples in cross-sectional surveys. The use of multiple validated instruments and a novel questionnaire further strengthen our findings. However, the study was limited to a single center, and the sample size for epilepsy was relatively small, which may affect generalizability. A further limitation is the use of PRO data in machine learning models, as PROs are inherently subjective and may vary across individuals and contexts, potentially introducing variability that could affect model generalizability. Additionally, the PROs and the original questionnaire were not validated for physician use, with the exception of the SDM-Q-Doc, which may influence the interpretation of perception gaps.

Identifying patient characteristics associated with perception gaps may help physicians anticipate where these gaps are likely to occur in clinical practice, thereby supporting more aligned treatment planning and goal setting. The application of machine learning to predict perception gaps could offer a promising avenue for clinical decision support. However, implementation of the prediction model in the clinic will require further data accumulation (i.e. larger and diverse datasets) and external validation for generalization to different clinical settings, as well as the development of an intuitive interface for practicality and user-friendliness. Further research should explore whether perception gaps are associated with clinical outcomes such as adherence, satisfaction, or disease progression. Longitudinal studies could assess how these gaps evolve over time and whether interventions, such as communication training or decision aids, can reduce them.

In conclusion, the GAP-AI study highlights the existence and complexity of perception gaps between patients with neurological diseases and their treating physicians. These gaps are influenced by multiple factors (e.g. caregiver status, disease severity, type of diagnosis, physician’s experience level, and consultation time) and can be predicted using machine learning. The application of our prediction model showcases the potential for AI to enhance clinical decision-making processes. Recognizing and addressing perception gaps may enhance patient-centered care, potentially leading to improved outcomes in managing neurological diseases. Our data and insights may open new possibilities in patient care.

Methods

Study design

This was a prospective observational study involving patients with neurological diseases and their treating physicians at the Juntendo University Hospital (Tokyo, Japan). The study design was based on previous studies that evaluated perceptions of patient health status between patients and physicians10,13,14,23. Patients and their physicians answered questionnaires on PROs and OROs at two regular outpatient check-up visits. Patients completed the questionnaires during each visit, whereas physicians completed the questionnaires immediately after each visit and answered only once for common items between questionnaires that were not patient-dependent. Differences between questionnaire responses for each patient and their treating physician were analyzed as ‘gaps’. Although the validity of physicians providing responses to a PRO and patients providing responses to an ORO has not been evaluated, this approach has been undertaken in other studies focusing on perception gaps between patients and physicians8,9,14, and was considered necessary and appropriate for achieving the objectives of this study.

The study protocol and all amendments were approved by the Juntendo University Hospital ethics committee (approval number E22-0162). The study was conducted according to the ethical principles of the Declaration of Helsinki. Informed consent was obtained from each patient at visit 1 before participating in the study. This study is registered on the Japan Registry of Clinical Trials: jRCT1030220258 (https://jrct.mhlw.go.jp/en-latest-detail/jRCT1030220258).

Study population

Patients with Parkinson’s disease, multiple sclerosis, or epilepsy visiting the Department of Neurology at Juntendo University Hospital for at least 3 months and who visited at least once in the past 3 months were eligible for inclusion. Patients were excluded if they were under 18 years of age at the time of consent, unable to answer questionnaires (e.g., owing to cognitive dysfunction), or deemed unsuitable for inclusion by the study investigator.

Procedures

Patients and their treating physicians were given validated questionnaires to capture their perceptions of patient satisfaction (PSQ-18, SDM-Q-9/SDM-Q-Doc)24, ADLs (Barthel Index) and patient QoL (36-item Short Form [SF-36]; Supplementary Table 6). Additionally, disease-specific scales were used for items that could not be generalized across different neurological conditions. These included the Parkinson’s Disease Questionnaire-39 (PDQ-39)25, Movement Disorder Society Unified Parkinson’s Disease Rating Scale (MDS-UPDRS)26, Hoehn and Yahr scale27, Multiple Sclerosis Quality of Life-54 (MSQOL-54)28, EDSS29, and Patient-weighted Quality of Life in Epilepsy (QOLIE-31-P)30. Physician information (e.g., age, sex, specialist qualification, years of experience, number of patients treated) was collected separately. An original questionnaire was also distributed to assess items for which no validated assessment scale exists.

Responses to questionnaires were obtained at two outpatient visits (visit 1 and visit 2), which followed each patient’s regular appointments. Patients provided informed consent and were enrolled in the study at visit 1. Visit 2 was defined as the next scheduled appointment visit. No evaluations were performed between visits. Patients and physicians each inputted their responses onto an electronic device. Data were managed on a cloud-based system and collected through an Electronic Data Capture (EDC) platform (hashPeak, Tokyo, Japan), which employs blockchain technology to ensure data integrity and security. Data entries were timestamped, encrypted, and stored in a decentralized ledger, allowing for secure tracking and auditing throughout the study.

Outcomes

The primary outcome was the difference between scores of pairwise items in the questionnaires (PSQ-18, SDM-Q-9/SDM-Q-Doc, Barthel Index, and the original questionnaire) answered by both patients and physicians (i.e. perception gap). Patients and physicians were also asked to rank the SF-36 subdomain that they prioritized as the most important in terms of their or their patient’s QoL, respectively.

The PSQ-18 is a tool used to assess patient satisfaction with healthcare services. It measures general satisfaction, interpersonal manner, communication effectiveness, financial consideration, duration of interaction, and ease of access31. The SDM-Q-9 and SDM-Q-Doc are questionnaires that measure the extent to which patients are involved in the process of decision-making and the extent to which physicians involve patients in the decision-making process, respectively32. The Barthel Index is an ordinal scale that measures a person’s ability to complete ADLs, including feeding, bathing, grooming, dressing, bowel control, bladder control, toileting, chair transfer, ambulation, and stair climbing33. The SF-36 is a commonly used questionnaire that measures overall health and well-being. It assesses eight different subdomains: physical functioning, role physical, bodily pain, general health, vitality, social functioning, role emotional, and mental health34. The original questionnaire developed for the current study was used to assess items for which no validated assessment scales exist. This included items about patient characteristics, disease, or treatment questions, the relationship with the physician, the physician’s clinical policy, and feelings about the medical practice.

Secondary outcomes included factors influencing perception gaps in patient satisfaction, SDM, assessment of ADLs, pairwise items assessed in the original questionnaire, and QoL that were identified in the primary outcome.

Data on potential factors influencing perception gaps were collected using disease-specific tools, including the PDQ-39 and MDS-UPDRS for Parkinson’s disease; MSQOL-54 for multiple sclerosis; QOLIE-31-P for epilepsy; the full SF-36 survey34, original questionnaire, and medical record data for all patients (regardless of disease); and physician information.

An exploratory outcome was the development and evaluation of a predictive model for patient–physician gap recognition using AI machine learning.

Statistical analysis

A total sample size of 200 patients (Parkinson’s disease, 140; multiple sclerosis, 40; and epilepsy, 20), based on the historical number of outpatients at the Juntendo University Hospital, as well as 13 physicians were planned for recruitment. All enrolled participants, excluding those who withdrew consent and refused use of their data or had no data recorded after enrollment, were included for study analyses. Questionnaire items that were not answered or could not be obtained were treated as missing values. Missing values were neither assigned nor imputed. Patient and physician responses were analyzed as individual and total scores. For the primary outcome, the ‘sum of the relative difference in patient and physician responses (Yr)’ to each questionnaire was calculated using the following formula.

k: Question k, n: Number of questions in the questionnaire, Xpk: The kth question score of the questionnaire answered by the patient (according to the scoring of the questionnaire), Xik: Score of question k of the questionnaire answered by the physician (according to the scoring of the questionnaire).

Because subtracting a patient response from a physician response can result in a negative or positive value, there is potential for scores of individual questions to offset each other when summing across the entire questionnaire for obtaining the ‘relative difference’. For this reason, we also calculated the ‘sum of the absolute difference between patient and physician responses (Ya)’ to each questionnaire using the following formula, where paratheses of the Yr formula are replaced with absolute value signs to obtain a positive value:

In addition to the primary analysis, the total score of patients (Yp) and the total score of physicians (Yi) were calculated. Both total scores were defined as the sum of the scores of individual questions of each questionnaire. Differences between the total scores of patients and physicians were compared using the Mann–Whitney U test. The degree of correlation between patient and physician responses for each questionnaire was calculated using the kappa coefficient.

The distribution of the perception gap was classified into ‘concordant’ and ‘discordant’ groups, based on the median value of the Ya. Differences smaller than or equal to the median were included in the concordant group and differences larger than the median were included in the discordant group. A cross-tabulation analysis of patient and physician background factors was performed between the concordant and discordant groups to determine their potential influence on the perception gap using Fisher’s exact test.

Correlations of differences between questionnaires (except for SF-36 subdomains) in terms of the Ya were evaluated using Spearman’s correlation coefficients. For the correlation of the SF-36 subdomain with other questionnaires, summary statistics for concordance/discordance of the SF-36 subdomain with each questionnaire in terms of the Ya were calculated and compared using the Mann–Whitney U test.

A univariate analysis was conducted to assess the association between patient characteristics and physician attributes that could potentially influence the perception gap using the Spearman’s correlation coefficient. A subsequent multiple regression analysis was performed using disease type as the dependent variable and significant patient characteristics/physician attributes (P < 0.05; as determined from the univariate analysis) as independent variables.

SPSS Statistics 26 (IBM Corporation, Armonk, NY, USA) was used for statistical analyses.

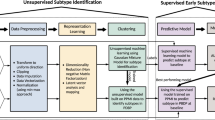

Model development

The patient and physician data set was used to develop and evaluate predictive models for recognizing perception gaps between patients and physicians. A supervised machine learning model was developed and trained on primary outcome data and secondary outcome response data. The perception gap was treated as a binary variable (concordant and discordant groups). To ensure robust cross-testing and efficacy in predicting perception gaps, we employed multiple machine learning algorithms, which included k-nearest neighbors, random forest, ensemble methods, neural networks, logistic regression, boosting, linear support vector machines, decision trees, and naïve bayes.

The target of the analysis was the difference between the patient and physician scores for the PSQ-18, SDM-9-Q/SDM-Q-Doc, Barthel Index, the original questionnaire (pairwise items evaluated by patients and physicians), and the responses to the SF-36 subdomain. The features of the learning model were patient and physician total scores of PSQ-18, SDM-9-Q/SDM-Q-Doc, Barthel Index, the original questionnaire, and the SF-36 subdomain; physician attribute information; patient-specific questionnaires (excluding patient–physician pairwise assessments and disease-specific assessments); and relevant medical record data. To enhance model inference accuracy, we performed feature selection using SHAP values to identify and retain the top 15 most influential features. This helped to reduce dimensionality while preserving the most predictive variables. To improve model training stability and generalization, the dataset was duplicated to increase the number of epochs. This approach aimed to mitigate overfitting and enhance model robustness in a high-dimensional context with small sample sizes.

Model validation

To evaluate model accuracy, fivefold cross-validation was used, with each fold serving once as a validation set while being trained on the remaining folds. Additionally, 20% of the data were withheld as a separate test set to further assess model generalization. Model performance was measured using log loss, which evaluates the degree to which predicted probabilities diverge from actual class labels, and AUC-ROC, indicative of the model’s ability to distinguish between classes35. To ensure the best balance between true and false positives and negatives, the Matthew’s correlation coefficient (MCC) was considered for selecting the classification threshold using the following formula36.

TP: True Positive, TN: True Negative, FP: False Positive, FN: False Negative.

Initial hyperparameter values were adopted from default settings provided by the respective machine learning libraries. Subsequent tuning was conducted on the model with the lowest log loss to refine performance, utilizing a grid search approach over a defined parameter space. This process was iteratively performed with varying parameters to identify the model configuration with the highest predictive accuracy. The final model development and analysis were conducted using Python 3.8.17 (Python Software Foundation).

Data availability

The datasets used and/or analyzed during the current study are available from the corresponding author (g_oyama@juntendo.ac.jp) on reasonable request.

References

Armstrong, M. J., Rastgardani, T., Gagliardi, A. R. & Marras, C. Barriers and facilitators of communication about off periods in Parkinson’s disease: Qualitative analysis of patient, carepartner, and physician interviews. PLoS ONE 14, e0215384 (2019).

Beghi, E., Giussani, G. & Sander, J. W. The natural history and prognosis of epilepsy. Epileptic Disord. 17, 243–253 (2015).

Doshi, A. & Chataway, J. Multiple sclerosis, a treatable disease. Clin. Med. (Lond). 16, s53–s59 (2016).

Port, R. J. et al. People with Parkinson’s disease: What symptoms do they most want to improve and how does this change with disease duration?. J. Parkinsons Dis. 11, 715–724 (2021).

Elwyn, G. et al. Shared decision making: A model for clinical practice. J. Gen. Intern. Med. 27, 1361–1367 (2012).

Lu, C., Li, X. & Yang, K. Trends in shared decision-making studies from 2009 to 2018: A bibliometric analysis. Front. Public Health 7, 384 (2019).

Stacey, D. et al. Decision aids for people facing health treatment or screening decisions. Cochrane Database Syst. Rev. 4, CD001431 (2017).

Barata, A. et al. Do patients and physicians agree when they assess quality of life?. Biol. Blood Marrow Transplant. 23, 1005–1010 (2017).

Olson, D. P. & Windish, D. M. Communication discrepancies between physicians and hospitalized patients. Arch. Intern. Med. 170, 1302–1307 (2010).

Burt, J., Lloyd, C., Campbell, J., Roland, M. & Abel, G. Variations in GP-patient communication by ethnicity, age, and gender: Evidence from a national primary care patient survey. Br. J. Gen. Pract. 66, e47–e52 (2016).

Hermanowicz, N., Castillo-Shell, M., McMean, A., Fishman, J. & D’Souza, J. Patient and physician perceptions of disease management in Parkinson’s disease: Results from a US-based multicenter survey. Neuropsychiatr. Dis. Treat. 15, 1487–1495 (2019).

Ogura, H. et al. Evaluation of motor complications in Parkinson’s disease: Understanding the perception gap between patients and physicians. Parkinson’s Dis. 2021, 1599477 (2021).

Schriefer, D., Haase, R., Ettle, B. & Ziemssen, T. Patient- versus physician-reported relapses in multiple sclerosis: Insights from a large observational study. Eur. J. Neurol. 27, 2531–2538 (2020).

Ysrraelit, M. C., Fiol, M. P., Gaitan, M. I. & Correale, J. Quality of life assessment in multiple sclerosis: Different perception between patients and neurologists. Front. Neurol. 8, 729 (2017).

Yutaka, A. & Hideki, K. Large-scale survey on the quality of life (QOL) of epilepsy patients - Differences in perception between patients and their doctors. J. Jpn. Epilepsy Soc. 25, 414–424 (2008).

Sacristán, J. A. Exploratory trials, confirmatory observations: A new reasoning model in the era of patient-centered medicine. BMC Med. Res. Methodol. 11, 57 (2011).

Landolfi, A. et al. Machine learning approaches in Parkinson’s disease. Curr. Med. Chem. 28, 6548–6568 (2021).

Patel, U. K. et al. Artificial intelligence as an emerging technology in the current care of neurological disorders. J. Neurol. 268, 1623–1642 (2021).

Bari, V. et al. An approach to predicting patient experience through machine learning and social network analysis. J. Am. Med. Inform. Assoc. 27, 1834–1843 (2020).

Liu, N., Kumara, S. & Reich, E. Gaining insights into patient satisfaction through interpretable machine learning. IEEE J. Biomed. Health Inform. 25, 2215–2226 (2021).

Cross, J. L., Choma, M. A. & Onofrey, J. A. Bias in medical AI: Implications for clinical decision-making. PLOS Digit. Health 3, e0000651 (2024).

Weiner, E. B., Dankwa-Mullan, I., Nelson, W. A. & Hassanpour, S. Ethical challenges and evolving strategies in the integration of artificial intelligence into clinical practice. PLOS Digit. Health 4, e0000810 (2025).

Neter, E., Glass-Marmor, L., Haiien, L. & Miller, A. Concordance between persons with multiple sclerosis and treating physician on medication effects and health status. Patient Prefer Adherence 15, 939–943 (2021).

Kriston, L. et al. The 9-item Shared Decision Making Questionnaire (SDM-Q-9). Development and psychometric properties in a primary care sample. Patient Educ. Couns. 80, 94–99 (2010).

Jenkinson, C., Fitzpatrick, R., Peto, V., Greenhall, R. & Hyman, N. The Parkinson’s Disease Questionnaire (PDQ-39): Development and validation of a Parkinson’s disease summary index score. Age Ageing 26, 353–357 (1997).

Goetz, C. G. et al. Movement disorder society-sponsored revision of the unified Parkinson’s disease rating scale (MDS-UPDRS): Scale presentation and clinimetric testing results. Mov. Disord. 23, 2129–2170 (2008).

Hoehn, M. M. & Yahr, M. D. Parkinsonism: Onset, progression and mortality. Neurology 17, 427–442 (1967).

Vickrey, B. G., Hays, R. D., Harooni, R., Myers, L. W. & Ellison, G. W. A health-related quality of life measure for multiple sclerosis. Qual. Life Res. 4, 187–206 (1995).

Kurtzke, J. F. Rating neurologic impairment in multiple sclerosis: An expanded disability status scale (EDSS). Neurology 33, 1444–1452 (1983).

Cramer, J. A. & Van Hammée, G. Maintenance of improvement in health-related quality of life during long-term treatment with levetiracetam. Epilepsy Behav. 4, 118–123 (2003).

Ware, J. E. Jr., Snyder, M. K., Wright, W. R. & Davies, A. R. Defining and measuring patient satisfaction with medical care. Eval. Program. Plann. 6, 247–263 (1983).

Patient ALS partner. SDM-Q-9 / SDM-Q-Doc. https://www.patient-als-partner.de/index.php?article_id=20&clang=2 (2024).

Mahoney, F. I. & Barthel, D. W. Functional evaluation: The Barthel index. Md. State Med. J. 14, 61–65 (1965).

Ware, J. E. Jr. & Sherbourne, C. D. The MOS 36-item short-form health survey (SF-36). I. Conceptual framework and item selection. Med. Care 30, 473–483 (1992).

Mao, A., Mohri, M. & Zhong, Y. In Proceedings of the 40th International Conference on Machine Learning Vol. 202 Article 992 (JMLR.org, Honolulu, Hawaii, USA, 2023).

Chicco, D. & Jurman, G. The advantages of the Matthews correlation coefficient (MCC) over F1 score and accuracy in binary classification evaluation. BMC Genom. 21, 6 (2020).

Acknowledgements

Medical writing support was provided by Henry Chung PhD of Oxford PharmaGenesis, Melbourne, Australia, under the guidance of the authors in accordance with Good Publication Practice (GPP 2022) guidelines (www.ismpp.org/gpp-2022), and was funded by Takeda Pharmaceutical Company Ltd.

Funding

The study was funded by Takeda Pharmaceutical Company Limited, Tokyo, Japan.

Author information

Authors and Affiliations

Contributions

Conceptualisation: G.O., Y.T., Y.F., N.H.; data curation: G.O., T.M.; formal analysis: T.M.; funding acquisition: M.I.; investigation: G.O., Y.T., T.T., T.H., W.S., Y.H., S.U., D.K., Y.O., A.O., D.T., H.H.; methodology: G.O., Y.T., T.T., S.N., T.M., Y.F.; project administration: G.O., Y.T., M.I.; resources: G.O., Y.T., T.T., T.H., W.S., Y.H., S.U., D.K., Y.O., A.O., D.T., H.H., M.I.; software: T.M.; supervision: G.O., N.H.; validation: T.M., Y.F., M.I.; visualisation: T.M.; writing – original draft: G.O., Y.T., M.I.; writing – review and editing: G.O., Y.T., T.T., S.N., T.H., W.S., Y.H., S.U., D.K., Y.O., A.O., D.T., H.H., T.M., Y.F., M.I., N.H.

Corresponding authors

Ethics declarations

Competing interests

G.O. has received honoraria from AbbVie, Abbott, Boston Scientific, Eisai Co. Ltd., FP Pharmaceutical Corporation, Kyowa Hakko Kirin, Medtronic, Ono Pharmaceutical Co. Ltd, Sumitomo Pharma, Takeda Pharmaceuticals Co. Ltd., and Viatris. T.M. has received consulting fees from Takeda Pharmaceuticals Co. Ltd. Y.F. and M.I. are employees of Takeda Pharmaceutical Co. Ltd. Y.T., T.T., S.N., T.H., W.S., Y.H., S.U., D.K., Y.O., A.O., D.T., H.H. have no conflicts to declare. N.H. has received consulting fees from AbbVie Inc., Eisai Co. Ltd., FP Pharmaceutical Corporation, Kyowa Kirin Co. Ltd., Ono Pharmaceutical Co. Ltd., Otsuka Pharmaceutical Co. Ltd., and Sumitomo Pharma Co. Ltd.; lecture fees from Daiichi Sankyo Co. Ltd., Eisai Co. Ltd., FP Pharmaceutical Corporation, Kyowa Kirin Co. Ltd., Nihon Medi-Physics Co. Ltd., Novartis Pharma K.K., Otsuka Pharmaceutical Co. Ltd., Sumitomo Pharma Co. Ltd., and Takeda Pharmaceutical Co. Ltd.; honoraria from AbbVie Inc., FP Pharmaceutical Corporation, Kyowa Kirin Co. Ltd., and Novartis Pharma K.K.; and grants from Boston Scientific Corporation, Eisai Co. Ltd., Kyowa Kirin Co. Ltd., Medtronic Japan Co. Ltd., Novartis Pharma K.K., Otsuka Pharmaceutical Co. Ltd., Sumitomo Pharma Co. Ltd., and Takeda Pharmaceutical Co. Ltd.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary Information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Oyama, G., Tomizawa, Y., Tsunemi, T. et al. Identification of perception gaps between physicians and patients with neurological diseases and the prediction of these gaps using machine learning. Sci Rep 16, 5394 (2026). https://doi.org/10.1038/s41598-025-33500-x

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-33500-x