Abstract

Potato plants are highly vulnerable to numerous diseases that can substantially affect both yield and quality. Conventional approaches for detecting these diseases are often labor-intensive, slow, and prone to inaccuracies, particularly under variable environmental conditions. This study presents a hybrid deep learning architecture, termed potato leaf diseases DenseNet (PLDNet), which integrates a DenseNet-based convolutional neural network with a Transformer-based attention module to accurately classify potato leaf diseases. Furthermore, an adaptive parametric activation function, referred to as Adaptive Flatten p-Mish (AFpM), is proposed to enhance the model’s learning flexibility and representational capacity. When evaluated on the PlantVillage and Mendeley datasets, PLDNet attains classification accuracies of 99.54% and 87.50%, respectively, surpassing contemporary state-of-the-art models and activation functions. The proposed framework exhibits strong generalization performance and offers a scalable, efficient approach for automated plant disease identification. The novelty of AFpM lies in a learnable parameter that enables adaptive nonlinearity, improving over the Mish, Swish, and PFpM activation functions through dynamic gradient control. Compared to PFpM, AFpM improves accuracy by 2.52% on the Mendeley dataset and by 1.93% on the PlantVillage dataset, and it improves accuracy by more than 3% compared to Swish and Mish.


Introduction

Agricultural productivity remains vital for ensuring global food security, sustaining economic development, and promoting rural prosperity. With the continuous rise in population and the growing impact of climate change, maintaining plant health has become increasingly essential1,2. Contemporary technologies, particularly machine learning and deep learning, are increasingly used in agriculture to enhance disease identification and improve crop productivity1,2,3. These technologies hold significant promise for smallholder farmers in developing nations, who often lack access to expert agronomic support. Agriculture remains a cornerstone of human civilization, particularly within developing economies, where it underpins both national income and the livelihoods of large portions of the population4. Nonetheless, the sector faces persistent constraints such as climatic variability, soil degradation, and the proliferation of plant pathogens5. Among major food crops, the potato is one of the most economically important, ranking fourth in global consumption6. It is highly susceptible to numerous diseases caused by fungi, bacteria, nematodes, and viruses, all of which can drastically reduce yield and quality7. Hence, timely and accurate disease detection is essential for effective management. Traditional diagnostic techniques, however, often rely on manual observation or laboratory testing, which are laborious, time-consuming, and prone to human error8,9. Furthermore, the visual resemblance of disease symptoms, such as those of early and late blight, makes precise identification difficult without specialized expertise or molecular tools10. In addition, variations in environmental parameters, including humidity, temperature, and soil composition, can alter disease expression and hinder prompt diagnosis11,12.

To address these challenges, machine learning techniques have emerged as reliable and efficient tools for identifying plant diseases. In recent years, several researchers have developed automated systems for detecting potato leaf infections13,14,15,16,17,18,19,20,21,22,23,24,25,26,27,28,29,30,31. Early studies depended primarily on handcrafted feature extraction and conventional machine learning classifiers13,14, which made feature design laborious and sensitive to variations in image quality. To overcome these limitations, Convolutional Neural Networks (CNNs) have been adopted because they learn discriminative features automatically and have delivered high classification accuracy across a range of image recognition applications, including plant leaf disease categorization15,16,17,18,19,20,21,22,23,24,25,26,27,28,29,30,31. Nevertheless, these models still struggle to perform consistently on complex, real-world leaf images and to maintain high classification accuracy under varying environmental conditions.

Motivated by recent advances in deep learning32,33,34,35,36,37,38,39, particularly in two-dimensional Convolutional Neural Networks (2-D CNNs), we propose an automatic potato leaf disease image classification framework named PLDNet. In addition, we introduce a new activation function for deep learning, termed Adaptive Flatten p-Mish (AFpM), which enhances the adaptability and precision of disease prediction from image data. To assess the effectiveness of the proposed approach, two widely recognized and distinct potato leaf datasets were employed: the PlantVillage dataset40, containing images captured under controlled conditions, and the Mendeley dataset28, featuring images with diverse and complex natural backgrounds. Experimental evaluations demonstrate that PLDNet achieves an overall accuracy of 99.54% on the PlantVillage dataset and 87.50% on the Mendeley dataset, surpassing existing state-of-the-art architectures. Unlike existing CNN- or Transformer-based approaches, the novelty of the proposed work lies in (a) a hybrid DenseNet–Transformer architecture designed specifically to address real-world variability in potato disease images, and (b) a learnable activation function (AFpM) that adapts its nonlinear behavior during training. To the best of our knowledge, no prior work has jointly explored hybrid feature extraction and adaptive activations for potato leaf disease classification. The primary contributions of this study are summarized as follows:

-

Development of a hybrid CNN-Transformer architecture, PLDNet, optimized for automated potato leaf disease classification.

-

Design of a novel activation function, Adaptive Flatten p-Mish (AFpM), aimed at enhancing CNN learning efficiency in challenging visual contexts.

-

Comprehensive evaluation of the proposed model on two benchmark datasets, PlantVillage (controlled setting) and Mendeley (uncontrolled setting), demonstrating superior classification accuracy.

-

Comparative evaluation with established models to validate the robustness and generalization capability of PLDNet across varying environmental conditions.

-

Presentation of a reproducible and extensible framework applicable to disease identification in other crop species.

The remainder of this paper is organized as follows. Section 2 provides a detailed review of related literature. Section 3 outlines the proposed methodology. Section 4 reports and discusses the experimental outcomes along with potential directions for improvement. Finally, Section 5 concludes the study.

Related work

Over the last decade, significant progress has been observed in the field of automated plant disease detection, particularly through the use of leaf imagery. This advancement has been primarily facilitated by deep learning techniques and the availability of extensive benchmark datasets such as PlantVillage40 and Mendeley28. Traditional machine learning algorithms, including Support Vector Machines (SVMs) and Random Forests, initially established the foundation for this research area by illustrating that handcrafted image descriptors could deliver reliable classification results. However, with the emergence of deep learning, Convolutional Neural Networks (CNNs) have revolutionized the domain by learning discriminative hierarchical features directly from image data, thereby surpassing the performance of conventional methods.

Numerous investigations have reported high classification accuracies on well-controlled datasets such as PlantVillage, employing architectures that range from basic CNNs to advanced variants such as ResNet, DenseNet, EfficientNet, and hybrid ensemble frameworks. Despite these improvements, the generalization capability of such models remains a challenge, as evident from their reduced performance on complex, realistic datasets such as Mendeley. Moreover, most studies emphasize architectural optimization and ensemble learning, while the influence of activation functions, which are fundamental to non-linearity and model convergence, has received comparatively little attention. This constitutes a notable research gap, as prevailing models predominantly employ standard activation functions such as ReLU or Mish without systematically assessing their behavior under noisy or imbalanced agricultural imaging conditions.

To bridge this gap, the present study proposes a novel activation function (AFpM) and a CNN-based model (PLDNet) to improve robustness and generalization across both controlled and natural environments. The following sections provide a comprehensive analysis of recent works, emphasizing datasets, model architectures, performance outcomes, and emerging research trends.

Traditional machine learning approaches

Initial efforts in potato leaf disease recognition primarily relied on conventional machine learning algorithms, which achieved commendable performance but suffered from limited scalability. For instance, Islam et al. (2017)13 implemented a multiclass Support Vector Machine (SVM), obtaining an accuracy of 95% on the PlantVillage dataset. Similarly, Iqbal et al. (2020)14 combined global image descriptors with a Random Forest classifier and achieved 97% accuracy. These findings illustrate that well-crafted feature extraction pipelines enabled traditional approaches to achieve competitive results in structured image datasets.

Deep learning and CNN-based approaches

With the growing prominence of deep learning, neural network-based approaches have become the leading choice for automated feature extraction and classification. Tiwari et al. (2020)15 integrated VGG19 as a feature extractor with logistic regression, reporting an accuracy of 97.8% on the PlantVillage dataset. Kumar et al. (2021)16 adopted a Feedforward Neural Network (FFNN), achieving 96.5% accuracy on the same dataset. Tambe et al. (2023)19 attained an accuracy of 99.18% using a conventional CNN model, while Lanjewar et al. (2024)27 reported 99% using a modified DenseNet structure. Additional CNN-based studies by Patil et al. (2023)18, Kumar et al. (2023)22, Khan et al. (2024)25, and Roy et al. (2024)24 achieved accuracies between 97.16% and 98.83%, reaffirming the effectiveness of CNN architectures on well-curated datasets such as PlantVillage.

Advanced architectures and hybrid models

Deeper and optimized networks have also demonstrated strong performance in potato leaf disease recognition. Paul et al. (2024)26 implemented ResNet-50, achieving 98.79% overall accuracy, while Muthuraja et al. (2023)20 applied ResNet-152 and obtained 98.78%. Similarly, Ali et al. (2022)17 utilized an Enhanced EfficientNet model that reached 97.22%, suggesting that deeper, fine-tuned architectures yield improved performance. Mathur et al. (2024)23 compared multiple CNN variants and found that VGG19 delivered the best performance at 93%, pointing to the limitations of earlier CNN designs. Furthermore, hybrid and ensemble networks have contributed additional accuracy gains. For example, Arshad et al. (2023)21 combined VGG19 and Inception-V3, achieving 98.66%, while Shabrina et al. (2023)28 employed EfficientNetV2B3 to reach 98.15% on the PlantVillage dataset. These findings collectively suggest that model fusion and architectural integration can further improve accuracy, at least on controlled datasets.

In contrast, results on the real-world Mendeley dataset show a noticeable reduction in accuracy owing to uncontrolled imaging conditions and environmental variability. Shabrina et al. (2023)28 observed an accuracy of 73.63% using EfficientNetV2B3 on Mendeley, compared to 98.15% on PlantVillage with the same model. Sinamenye et al. (2025)29 improved on this with a hybrid EfficientNetV2B3–Vision Transformer (ViT) architecture, achieving 85.06%. Similarly, Mhala et al. (2025)30 and Park et al. (2025)31 reported 81.31% and 78.14% accuracy using DenseNet201 and RCA-Net, respectively. Despite their architectural differences, these models share limitations: DenseNet models tend to rely on dense feature reuse, ResNet variants often struggle with shallow texture cues, EfficientNet’s compound scaling does not generalize well beyond clean datasets, and recent ViT-based hybrids require large, high-quality training sets. As a result, their strong performance on controlled datasets translates into only modest gains under real-world variability. These outcomes indicate that while CNN-based and hybrid models dominate in structured scenarios, their robustness declines under non-uniform conditions such as those represented in Mendeley. Therefore, developing architectures capable of adapting to diverse real-world environments remains a critical direction for future work.

Applications of existing models, research gaps and limitations

The reviewed models have been widely applied across various agricultural contexts. One of the primary applications lies in early disease diagnosis within precision agriculture, where timely identification of infected plants contributes to improved crop yield and sustainable farming practices. Additionally, these models have been deployed in mobile and unmanned aerial vehicle (UAV)-based systems, enabling real-time field monitoring and rapid detection of disease symptoms across large cultivation areas. Beyond detection, deep learning frameworks such as CNNs have also been integrated into smart farming platforms that support automated disease alerting and decision-making processes, thereby enhancing the overall efficiency of agricultural management and reducing manual inspection efforts. Collectively, these applications highlight the transformative role of AI-driven visual recognition systems in modern agriculture.

CNN-based frameworks such as ResNet, DenseNet, and EfficientNet have shown particular utility in embedded systems owing to their balance between accuracy and computational efficiency. Hybrid approaches further extend these applications to scenarios demanding adaptability under natural lighting and occlusion.

A review of existing studies reveals several persistent gaps in automatic potato leaf disease classification. Existing models have achieved strong classification performance mainly on controlled datasets such as PlantVillage, where lighting and background conditions are uniform. However, they often underperform on datasets such as Mendeley, whose images are captured under uncontrolled conditions and exhibit background clutter, uneven lighting, symptom similarity, and class imbalance. These challenges remain inadequately addressed in prior literature, which motivates a more adaptive feature extraction mechanism. Another key shortcoming is the limited investigation of activation functions tailored to plant disease imagery. Although numerous architectures and optimization strategies have been tested, most research continues to rely on conventional functions such as ReLU41 without assessing their suitability for the domain. The potential advantages of alternative activations, such as mitigating bias shift42 and neuron inactivity43, have not been extensively explored. Moreover, many high-performing ensemble and feature-fusion methods impose considerable computational costs, limiting their real-time applicability in agricultural contexts.

These limitations indicate that no single family of models is sufficient to capture all relevant image patterns. CNN models are efficient at extracting fine-grained local textures but often fail to capture long-range relationships. Transformer-based models provide global reasoning but require large, clean datasets to remain stable, while fixed-form activation functions lack adaptability under noisy real-world imaging conditions. This gap motivates the integration of CNNs for localized feature enrichment, a Transformer network for global context modelling, and the AFpM activation for adaptive nonlinear representation. Together, these components are intended to address domain shift, lighting variability, and feature ambiguity.

Novel contribution: AFpM and PLDNet

To address these challenges, the present study introduces a novel activation function termed Adaptive Flatten p-Mish (AFpM) and a customized hybrid architecture called PLDNet, built upon a densely connected framework44. PLDNet combines dense local feature extraction with global contextual modelling, which allows the architecture to cope with background clutter, uneven lighting, and symptom similarity. In addition, the AFpM activation introduces adaptive nonlinear behaviour intended to alleviate gradient saturation and instability, issues frequently observed in existing models under real-world imaging conditions. Using the PlantVillage40 and Mendeley28 datasets as evaluation benchmarks, we comprehensively assess the performance of the proposed model. The comparative analysis includes state-of-the-art deep CNN architectures such as DenseNet20144, DenseNet16944, InceptionV345, ResNet5046, VGG1646, and MobileNetV247. Table 1 summarizes previous research on the PlantVillage and Mendeley datasets, detailing the models used, performance metrics, and whether activation functions were investigated in each case.

Furthermore, the AFpM activation function was evaluated against six well-established activation functions: ReLU41, Leaky ReLU48, GELU49, Swish50, Mish51, and PFpM52. The experimental analysis demonstrates that PLDNet combined with AFpM attains strong classification performance, achieving 99.54% overall accuracy on the PlantVillage dataset40 and 87.50% on the Mendeley dataset28. These results highlight the robustness and effectiveness of the developed framework for potato leaf disease classification. These contributions aim to advance automated agricultural vision systems toward improved generalization and real-time feasibility. The main contributions of this study are summarized as follows:

-

A detailed comparative evaluation of the PLDNet model incorporating the AFpM activation function with several state-of-the-art architectures,

-

A systematic comparison of the AFpM activation function with other contemporary activation functions, considering PLDNet as the baseline network, and

-

Provision of the PLDNet implementation on GitHub for public access upon acceptance of the manuscript.

Methods

This section elucidates the proposed methodology adopted for the automatic classification of potato leaf diseases. We have designed a novel activation function named AFpM and proposed a hybrid CNN-Transformer model, PLDNet. We have also implemented appropriate pre-processing and data augmentation techniques to enhance classification accuracy, as discussed in the following subsections.

Dataset description

In this study, two well-established datasets were employed to assess the effectiveness of the proposed approach for potato leaf disease classification. The first is the PlantVillage dataset40, which comprises 2,152 images divided into three categories: Early Blight, Late Blight, and Healthy. Each of the two diseased classes contains 1,000 images, while the Healthy class contains 152 samples. The Early Blight class represents potato leaves affected by the fungal pathogen Alternaria solani, typically characterized by concentric brown lesions and chlorotic zones around infected areas. The Late Blight category includes leaves infected by Phytophthora infestans, which manifests as irregular dark patches and rapid tissue decay under humid conditions. The Healthy class consists of disease-free potato leaves exhibiting uniform green coloration and intact surface morphology. The PlantVillage dataset40 was acquired under controlled laboratory conditions at an experimental farm at Penn State University, USA. All images were captured using DSLR cameras under consistent illumination, with uniform backgrounds and minimal environmental interference. Each image is stored in RGB format with a spatial resolution of 256 \(\times\) 256 pixels.

The second dataset, referred to as the Potato Leaf Disease dataset28, is a recently published collection gathered under natural field conditions. This open-access dataset, hosted on Mendeley28, consists of 3,076 images distributed across seven categories: healthy, virus, phytophthora, nematode, fungi, bacteria, and pest. The images were captured on multiple potato farms in Magelang and Wonosobo, Central Java, Indonesia, using various smartphone cameras in uncontrolled environments. All images are provided in JPG format with a resolution of 1500 \(\times\) 1500 pixels. The class distribution of both datasets is illustrated in Figure 1, where the X-axis denotes the class labels and the Y-axis the corresponding image counts. The Mendeley dataset exhibits notable class imbalance: the virus, phytophthora, nematode, fungi, bacteria, pest, and healthy classes contain 532, 347, 68, 748, 569, 611, and 201 images, respectively. Figure 2 presents representative samples from each class in both datasets, highlighting the diversity of images across the prediction categories. As Figure 2 shows, the Mendeley dataset contains substantially more real-world variation, such as uneven lighting, partial occlusion of leaves, shadows, and complex backgrounds. These factors introduce substantial noise into the data, making the Mendeley dataset considerably more challenging and a realistic benchmark for evaluating model robustness.

Class-wise data size: (a) The PlantVillage dataset; (b) The Mendeley dataset.

Sample images from each class for both datasets. (a) The Mendeley dataset; (b) The PlantVillage dataset.
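As a quick consistency check on the class counts reported above, the short Python sketch below totals each dataset and computes its imbalance ratio (largest class divided by smallest). The per-class PlantVillage figures of 1,000, 1,000, and 152 are those implied by the 2,152-image total; the dictionary and function names are illustrative only.

```python
# Per-class image counts for the two potato leaf datasets described in the text.
MENDELEY_COUNTS = {
    "virus": 532, "phytophthora": 347, "nematode": 68, "fungi": 748,
    "bacteria": 569, "pest": 611, "healthy": 201,
}
PLANTVILLAGE_COUNTS = {"early_blight": 1000, "late_blight": 1000, "healthy": 152}


def summarize(counts):
    """Return (total images, largest/smallest class ratio, per-class share)."""
    total = sum(counts.values())
    ratio = max(counts.values()) / min(counts.values())
    shares = {name: n / total for name, n in counts.items()}
    return total, ratio, shares


for name, counts in (("Mendeley", MENDELEY_COUNTS), ("PlantVillage", PLANTVILLAGE_COUNTS)):
    total, ratio, shares = summarize(counts)
    smallest = min(shares, key=shares.get)
    print(f"{name}: {total} images, imbalance ratio {ratio:.1f}, "
          f"smallest class '{smallest}' holds {shares[smallest]:.1%}")
```

For Mendeley this yields 3,076 images and a ratio of 11.0 (748 fungi images against 68 nematode images), which quantifies the imbalance noted above.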

Data pre-processing

As previously mentioned, the images in the PlantVillage dataset40 have a resolution of 256\(\times\)256 pixels, whereas those in the more recently introduced Mendeley dataset28 possess a resolution of 1500\(\times\)1500 pixels. For consistency in model input dimensions, all images from both datasets were resized to 224\(\times\)224 pixels. Each image was normalized to a pixel intensity range between 0 and 1 using min–max normalization. The datasets were then divided into training and testing subsets following an 80:20 stratified split, ensuring proportional representation of each class across both subsets. Additionally, to improve model robustness and generalization, several data augmentation strategies were applied to the training data. These included random zooming within a factor of 0.2–0.3, rotations between \(-40^{\circ }\) and \(40^{\circ }\), and both vertical and horizontal flipping.
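The normalization and stratified 80:20 split described above can be sketched in plain Python as follows. This is a minimal illustration, not the authors' pipeline: resizing and augmentation are omitted (they would normally be done with an image library), per-image min–max scaling is our assumption (with a fixed [0, 255] range it reduces to dividing by 255), and the function names and random seed are hypothetical.

```python
import random
from collections import defaultdict


def min_max_normalize(pixels):
    """Scale a flat list of pixel intensities to [0, 1] (per-image min-max, assumed)."""
    lo, hi = min(pixels), max(pixels)
    if hi == lo:  # constant image: avoid division by zero
        return [0.0 for _ in pixels]
    return [(v - lo) / (hi - lo) for v in pixels]


def stratified_split(labels, test_fraction=0.2, seed=42):
    """Return (train_idx, test_idx), holding out about test_fraction of every class."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    rng = random.Random(seed)
    train_idx, test_idx = [], []
    for label in sorted(by_class):
        indices = by_class[label][:]
        rng.shuffle(indices)
        n_test = round(len(indices) * test_fraction)
        test_idx.extend(indices[:n_test])
        train_idx.extend(indices[n_test:])
    return train_idx, test_idx


# Example: labels reproducing the Mendeley class counts given in the text.
counts = {"virus": 532, "phytophthora": 347, "nematode": 68, "fungi": 748,
          "bacteria": 569, "pest": 611, "healthy": 201}
labels = [name for name, n in counts.items() for _ in range(n)]
train_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(test_idx))  # 2461 615
print(min_max_normalize([0, 128, 255]))
```

Splitting each class separately keeps every class's share of the test set close to 20%, so even the 68-image nematode class contributes 14 test images.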

Proposed activation function

The activation function41 plays a fundamental role in convolutional neural networks (CNNs), as it introduces non-linearity that enables the network to model complex relationships between inputs and outputs. Among various options, the Rectified Linear Unit (ReLU)41 remains one of the most widely adopted due to its computational efficiency and simplicity. Nevertheless, ReLU suffers from certain limitations, including the problem of inactive neurons43 and the bias shift phenomenon42. To mitigate these issues, the Leaky ReLU function48 was introduced, allowing a small gradient to flow even for negative inputs. Further enhancements were achieved through the Gaussian Error Linear Unit (GELU) proposed by Hendrycks et al.49, which improves learning behavior but lacks adaptability because of its fixed formulation. Subsequently, several non-monotonic activation functions, such as Swish50 and Mish51, were developed, offering superior classification accuracy compared to ReLU and Leaky ReLU in many deep learning tasks. Despite this, Eger et al.53 reported instability concerns with the Swish function. The mathematical forms of these established activation functions are presented in Table 2.
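For reference, the standard forms of the baseline activations discussed above can be written in a few lines of Python. This sketch uses the common textbook definitions (exact GELU via the error function, Swish with \(\beta = 1\), and Mish as \(x \tanh(\mathrm{softplus}(x))\)); the Leaky ReLU slope of 0.01 is an assumed default, and Table 2 may use different constants.

```python
import math


def relu(x):
    return max(0.0, x)


def leaky_relu(x, alpha=0.01):
    # alpha = 0.01 is an assumed, commonly used negative slope
    return x if x > 0 else alpha * x


def gelu(x):
    # Exact GELU: x * Phi(x), where Phi is the standard normal CDF
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def sigmoid(x):
    # Numerically stable logistic function
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def swish(x, beta=1.0):
    return x * sigmoid(beta * x)


def softplus(x):
    # Stable log(1 + exp(x)) that avoids overflow for large |x|
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def mish(x):
    return x * math.tanh(softplus(x))


for x in (-2.0, -0.5, 0.0, 1.0, 2.0):
    print(f"{x:5.1f}  relu={relu(x):.4f}  leaky={leaky_relu(x):.4f}  "
          f"gelu={gelu(x):.4f}  swish={swish(x):.4f}  mish={mish(x):.4f}")
```

Printing the functions side by side makes the qualitative difference visible: ReLU and Leaky ReLU are piecewise linear, whereas GELU, Swish, and Mish dip slightly below zero for moderate negative inputs.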

In this study, we introduce a non-linear, parametric activation function termed Adaptive Flatten p-Mish (AFpM), developed as an improved variant of the earlier PFpM function52. The mathematical formulation of AFpM is presented in Equation (1), where z denotes the input to a neuron and p represents a trainable parameter associated with each neuron. The parameter p is initialized from the GlorotNormal distribution54, i.e., \(p \sim \mathcal {N}\left( 0, \sigma ^2\right)\) with mean zero and variance \(\sigma ^2 = \frac{2}{n_{in} + n_{out}}\), and is then optimized throughout training via backpropagation55. The terms \(n_{in}\) and \(n_{out}\) represent the number of input and output units (neurons) connected to a given layer, respectively. This initialization helps keep the variance of activations stable, facilitating faster convergence and improved gradient flow during training.
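A minimal sketch of this initialization in plain Python is shown below. It draws one \(p_j\) per neuron from an untruncated normal with \(\sigma^2 = 2/(n_{in} + n_{out})\), as stated in the text; the choice \(n_{in} = 1664\), \(n_{out} = 512\) (the pooled DenseNet169 features feeding the 512-unit dense layer) is a hypothetical example, since the text does not specify which layer sizes enter the formula for p.

```python
import math
import random


def glorot_normal_std(n_in, n_out):
    """Standard deviation sigma such that sigma^2 = 2 / (n_in + n_out)."""
    return math.sqrt(2.0 / (n_in + n_out))


def init_afpm_params(n_neurons, n_in, n_out, seed=0):
    """Draw one p_j ~ N(0, sigma^2) per neuron (plain, untruncated normal)."""
    rng = random.Random(seed)
    sigma = glorot_normal_std(n_in, n_out)
    return [rng.gauss(0.0, sigma) for _ in range(n_neurons)]


# Hypothetical example: a 512-unit layer fed by 1664 pooled features.
sigma = glorot_normal_std(1664, 512)
p = init_afpm_params(512, n_in=1664, n_out=512)
print(f"sigma = {sigma:.5f}")  # sigma = 0.03032
```

With these sizes the initial shifts are small (a standard deviation of about 0.03), so every neuron starts close to an unshifted activation and training decides how far each \(p_j\) moves.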

The subscript j in the equations refers to the \(j^{th}\) neuron within a given layer. Equation (2) describes the optimization update rule for the parameter \(p_j\) during model training, where \(\lambda\) represents the learning rate, E is the objective (or loss) function, and \(\frac{\partial y(z_j)}{\partial p_j}\) defines the sensitivity of the AFpM output with respect to \(p_j\). The derivative term \(\frac{\partial y(z_j)}{\partial p_j}\) is equal to 1 for all input instances, while the derivative of AFpM with respect to z is provided in Equation (3). A graphical representation of the proposed AFpM activation function is shown in Figure 3.

Here, y(z) represents the output of the AFpM activation function, z is the pre-activation input, \(p_j\) is the learnable parameter corresponding to the \(j^{th}\) neuron, \(\lambda\) is the learning rate controlling the update step, and E denotes the loss function minimized during training. The functions \(\tanh (\cdot )\) and \(\text {sech}(\cdot )\) denote the hyperbolic tangent and hyperbolic secant, respectively.

Proposed activation function, AFpM.
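Because \(\partial y(z_j)/\partial p_j = 1\), the chain rule reduces the gradient of the loss with respect to \(p_j\) to the sum of the upstream gradients \(\partial E/\partial y(z_j)\) over all positions where that neuron's activation is applied. The sketch below illustrates this with a plain gradient-descent step \(p_j \leftarrow p_j - \lambda\, \partial E/\partial p_j\); the batch layout and function names are hypothetical, and in practice a framework's optimizer (possibly with momentum or adaptive step sizes) would perform the update.

```python
def afpm_p_gradients(upstream):
    """dE/dp_j = sum over the batch of dE/dy_j * dy_j/dp_j, with dy_j/dp_j = 1.

    `upstream` is a batch-by-neurons nested list holding dE/dy for each neuron.
    """
    n_neurons = len(upstream[0])
    return [sum(row[j] for row in upstream) for j in range(n_neurons)]


def sgd_update(p, grads, lr):
    """Plain gradient-descent step: p_j <- p_j - lr * dE/dp_j."""
    return [pj - lr * gj for pj, gj in zip(p, grads)]


upstream = [[0.5, -1.0], [0.5, 2.0]]  # two samples, two neurons
grads = afpm_p_gradients(upstream)
p_new = sgd_update([0.0, 0.1], grads, lr=0.1)
print(grads, p_new)  # [1.0, 1.0] [-0.1, 0.0]
```

Since the local derivative is constant, updating \(p_j\) costs only one summation per neuron on top of the gradients that backpropagation already computes.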

While most existing parametric activation functions primarily adjust the slopes of their functional segments, AFpM instead modifies the hinge point, denoted as p, thereby preserving the overall structural behavior of the function. The main difference between PFpM52 and AFpM lies in the treatment of p. In the PFpM formulation52, this parameter is fixed at a constant value of \(-0.2\). In contrast, AFpM initializes p using the GlorotNormal approach and subsequently refines it through backpropagation, jointly with the network weights55. The learnable nature of p enhances adaptability and flexibility, allowing the model to adjust its activation behavior to the underlying feature distribution. This parametric design permits controlled vertical shifts of the activation curve, so that an appropriate value of p can be learned for each context. As a result, individual layers of the network exhibit distinct activation characteristics. In terms of cost, AFpM has computational complexity similar to that of Mish and Swish, as it relies on the same fundamental operations (exponential, logarithm, and hyperbolic tangent) and differs only in the inclusion of a learnable scalar term. This addition does not alter the asymptotic complexity and introduces negligible runtime overhead. Table 3 presents a qualitative comparison of AFpM with other activation functions, highlighting the key distinctions that emphasize its novelty.
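Equation (1) is not reproduced in this section, so the following Python sketch uses an assumed form modelled on Flatten-T Swish: \(y(z) = z \tanh(\mathrm{softplus}(z)) + p\) for \(z \ge 0\) and \(y(z) = p\) for \(z < 0\). This assumption is chosen only because it matches the properties stated in the text (\(\partial y/\partial p = 1\) for all inputs, a derivative in \(z\) built from tanh and sech terms, and a vertical shift controlled by p, fixed at \(-0.2\) in PFpM); it should not be read as the authors' exact definition. If Equation (1) differs, only `afpm` and `afpm_grad_z` need to change.

```python
import math


def softplus(z):
    # Stable log(1 + exp(z))
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def sigmoid(z):
    # Stable logistic function; this is d softplus(z) / dz
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def afpm(z, p):
    """ASSUMED form: z * tanh(softplus(z)) + p for z >= 0, flat value p for z < 0."""
    if z >= 0:
        return z * math.tanh(softplus(z)) + p
    return p


def afpm_grad_z(z):
    """dy/dz of the assumed form (independent of p); zero on the flat branch."""
    if z < 0:
        return 0.0
    t = math.tanh(softplus(z))
    sech2 = 1.0 - t * t  # sech^2(u) = 1 - tanh^2(u)
    return t + z * sech2 * sigmoid(z)


def afpm_grad_p(z):
    """dy/dp = 1 on both branches; z is unused because it does not affect this term."""
    return 1.0


PFPM_P = -0.2  # PFpM keeps p fixed at -0.2; AFpM learns p per neuron
for z in (-2.0, 0.0, 1.0):
    print(z, afpm(z, PFPM_P), afpm_grad_z(z))
```

Under this assumed form the curve has a kink at \(z = 0\): the left derivative is 0 and the right derivative is \(\tanh(\ln 2) = 0.6\).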

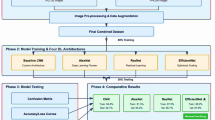

Proposed PLDNet model description

PLDNet is a novel deep learning framework developed for the classification of potato leaf disease images. The model integrates the strengths of CNNs and a Transformer-based attention mechanism to extract both localized visual features and long-range contextual information from leaf imagery56. The overall architecture of PLDNet is illustrated in Figure 4. In constructing PLDNet, the DenseNet169 network44, pre-trained on the ImageNet dataset, serves as the feature extraction backbone to derive detailed hierarchical representations of leaf textures and disease characteristics. DenseNet16944 employs densely connected layers that promote efficient feature reuse, mitigate the vanishing gradient issue, reduce parameter redundancy, and improve gradient propagation during training. The 7×7×1664 convolutional feature maps generated by DenseNet169 are forwarded directly into a lightweight customized Transformer block56, enabling the attention module to operate on spatially structured feature representations without flattening. The Transformer block incorporates a single multi-head attention layer with eight heads, each attending to a 208-dimensional subspace (the 1664 input channels divided across eight heads), followed by a 2048-unit feedforward module and standard residual connections with normalization. This Transformer component enables the model to capture global contextual dependencies and relationships among fine-grained patterns within the leaf images. Following the Transformer block, global average pooling compresses the spatial dimensions into a compact feature vector. The final classification module includes a dense layer with 512 units, followed by an activation layer, a dropout layer (dropout rate of 0.6), and a softmax-activated output layer for multi-class classification. In total, PLDNet contains approximately 32 million trainable parameters, reflecting the combination of the DenseNet backbone and the additional capacity introduced by the Transformer layer.

Proposed PLDNet Model architecture.
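The approximate parameter count can be checked with back-of-the-envelope arithmetic. The sketch below counts the layers added on top of the backbone, assuming Keras-style dense and multi-head attention layers with biases, a key dimension of 1664 / 8 = 208 per head, an output projection back to 1664 channels, and two layer-normalization layers; these layer details are our assumptions. The backbone figure is approximate: DenseNet169 has about 14.3M parameters with its 1000-class ImageNet head, i.e., roughly 12.6M without it.

```python
def mha_params(d_model, num_heads, key_dim):
    """Query, key and value projections plus the output projection, all with biases."""
    qkv = 3 * (d_model * num_heads * key_dim + num_heads * key_dim)
    out = num_heads * key_dim * d_model + d_model
    return qkv + out


def ffn_params(d_model, d_ff):
    """Two dense layers, d_model -> d_ff -> d_model, with biases."""
    return (d_model * d_ff + d_ff) + (d_ff * d_model + d_model)


def added_params(n_classes, d_model=1664, num_heads=8, d_ff=2048, dense_units=512):
    """Parameters of the Transformer block and classifier head (assumed layout)."""
    key_dim = d_model // num_heads          # 1664 / 8 = 208
    layer_norms = 2 * (2 * d_model)         # assumed: two LayerNorms, scale + offset each
    head = (d_model * dense_units + dense_units) + (dense_units * n_classes + n_classes)
    return mha_params(d_model, num_heads, key_dim) + ffn_params(d_model, d_ff) + layer_norms + head


DENSENET169_NO_TOP_APPROX = 12_600_000  # approximate backbone size (see lead-in)

for n_classes in (3, 7):  # PlantVillage and Mendeley
    extra = added_params(n_classes)
    total = extra + DENSENET169_NO_TOP_APPROX
    print(f"{n_classes} classes: added {extra:,} params, total ~{total / 1e6:.1f}M")
```

Under these assumptions the added layers contribute about 18.8M parameters (the attention layer alone about 11.1M), bringing the total to roughly 31.4M, close to the approximately 32 million reported above.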

Justification of AFpM for potato leaf classification

The AFpM activation function addresses challenges specific to agricultural image classification, such as high intra-class variability, noise, and uneven illumination. Potato leaf diseases often exhibit overlapping visual symptoms, as with early and late blight, so the decision boundaries between classes are subtle. Traditional activation functions (such as ReLU or Mish) apply fixed nonlinear transformations, which limit the network’s ability to adapt to these subtle differences. AFpM introduces a learnable parameter, \(p\), which is adjusted during training. This flexibility enables the model to tailor the activation response to the data distribution, thereby improving feature recognition in noisy settings such as real-world agricultural conditions. Another advantage of AFpM lies in its smooth and non-monotonic nature, which preserves gradient flow and reduces vanishing or exploding gradients, common problems in deep networks. When integrated with PLDNet, AFpM improves convergence and enhances learning robustness, particularly on field images such as those in the Mendeley dataset. Compared to standard activation functions, AFpM can adapt to the statistical patterns of the potato leaf disease datasets, enabling the model to focus on the complex textures and disease patterns that are critical for accurate classification. Although its effectiveness has been demonstrated in this domain, the proposed activation function was designed with generality in mind, enabling its extension to other agricultural and non-agricultural image classification tasks.

Algorithmic description of PLDNet with AFpM

Algorithm 1 summarizes how PLDNet combines a DenseNet169 backbone, a Transformer-based attention block, and the AFpM activation function to classify potato leaf diseases from RGB images. The overall time complexity of PLDNet is \(O(L \cdot D^2 \cdot K^2 + H \cdot N^2 \cdot d)\), where \(L\) is the number of CNN layers, \(D\) is the spatial dimension, \(K\) is the convolution kernel size, \(H\) is the number of attention heads, \(N\) is the sequence length, and \(d\) is the feature dimension. The AFpM activation adds \(O(n)\) time for \(n\) neurons. Accounting for the model parameters and activations of the convolutional and Transformer components, the space complexity is \(O(N \cdot d^2 + L \cdot D^2 + C)\). AFpM itself adds only one learnable scalar per activation layer.
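As a rough illustration of the attention term, assume that the 7×7 DenseNet169 feature map is attended as \(N = 49\) positions, with \(H = 8\) heads and \(d = 208\) taken as the per-head dimension (the values come from the architecture description; reading \(d\) as the per-head dimension is an assumption). The query-key score computation then costs:

```latex
H \cdot N^2 \cdot d = 8 \cdot 49^2 \cdot 208 = 8 \cdot 2401 \cdot 208 = 3{,}995{,}264 \ \text{multiply-accumulate operations}
```

The value-weighting step costs the same amount again. Because \(N\) enters quadratically, attending over the compact 7×7 feature map rather than over raw pixels keeps this term small.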

PLDNet with Adaptive Flatten p-Mish (AFpM) Activation

Optimization objective: constraint programming formulation

Let \(\theta\) denote the model parameters and \(p\) the AFpM parameter. Training minimizes the categorical cross-entropy classification loss over the training set.
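For reference, the standard form of this objective is \(\min_{\theta, p}\; \mathcal{L}(\theta, p) = -\frac{1}{M}\sum_{i=1}^{M}\sum_{c=1}^{C} y_{ic}\log \hat{y}_{ic}(\theta, p)\), where \(M\) is the number of training images, \(C\) the number of classes, \(y_{ic}\) the one-hot label, and \(\hat{y}_{ic}\) the softmax output of PLDNet. This notation is assumed here, and any additional constraints on \(p\) are not shown. The sketch below computes the loss for a batch of logits in plain Python, using integer labels instead of one-hot vectors (the two are equivalent for this loss). In TensorFlow, the corresponding built-ins are tf.keras.losses.CategoricalCrossentropy (one-hot labels) and tf.keras.losses.SparseCategoricalCrossentropy (integer labels).

```python
import math


def softmax(logits):
    """Convert raw scores to probabilities; subtracting the max avoids overflow."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    s = sum(exps)
    return [e / s for e in exps]


def categorical_cross_entropy(batch_logits, labels, eps=1e-12):
    """Mean of -log(probability of the true class) over a batch.

    labels are integer class indices, equivalent to one-hot y_ic.
    eps guards against log(0) for extremely confident wrong predictions.
    """
    total = 0.0
    for logits, y in zip(batch_logits, labels):
        probs = softmax(logits)
        total += -math.log(max(probs[y], eps))
    return total / len(labels)
```

A uniform prediction over three classes gives a loss of log 3 (about 1.0986), while a confident correct prediction drives the loss toward zero.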

Computational complexity classification

Inference with a trained CNN is computationally tractable: its cost is polynomial in the input size and the number of parameters, so prediction runs in polynomial time. Training is harder. Optimizing the parameters of a deep network, especially with a non-convex loss landscape and learnable activation functions such as AFpM, involves the following considerations:

- NP-hard subproblems: training deep networks with non-convex objectives and learnable activation parameters is known to be NP-hard in the worst case.

- Practical convergence: gradient-descent heuristics nonetheless reach good solutions in practice, although without guarantees of global optimality.

- Regularization: tuning regularization settings to limit overfitting (e.g., choosing dropout rates) is a combinatorial search that is computationally intensive.

Thus, inference with PLDNet runs in polynomial time, whereas finding optimal parameters is NP-hard in the worst case because of the learnable activation parameters and the depth of the architecture. In short, prediction is efficient, but the training dynamics are difficult to optimize.

Experimental setup

This section describes the experimental configuration used to train and evaluate the proposed approach. The available data were divided into training and testing subsets using an 80:20 stratified split. Models were trained on the PlantVillage and Mendeley datasets separately, using identical training configurations, to assess controlled and real-world performance independently. Model optimization used the Adam optimizer with a learning rate \((\alpha)\) of 0.0001, the categorical cross-entropy loss, a batch size of 32, and a maximum of 100 training epochs. To improve convergence and avoid stagnation during training, the ReduceLROnPlateau strategy57 was applied, which multiplies \(\alpha\) by a factor of 0.2 when the validation loss fails to improve for three consecutive epochs. To reduce overfitting and improve generalization, the Early Stopping mechanism57 was used with a patience of 15 epochs and a minimum delta of 0.001, terminating training when the validation loss ceased to improve. The ModelCheckpoint callback was also used to save the model weights corresponding to the lowest validation loss. Models were developed with the TensorFlow framework in the Google Colaboratory environment; Seaborn and Matplotlib were used for visualization, and Scikit-learn and NumPy for data preprocessing.
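In TensorFlow, this configuration corresponds to tf.keras.callbacks.ReduceLROnPlateau(monitor="val_loss", factor=0.2, patience=3), tf.keras.callbacks.EarlyStopping(monitor="val_loss", patience=15, min_delta=0.001), and tf.keras.callbacks.ModelCheckpoint(filepath, monitor="val_loss", save_best_only=True), where filepath is a placeholder. To make the interaction of the three rules concrete without a TensorFlow dependency, the sketch below replays a validation-loss curve through simplified versions of them. It assumes ReduceLROnPlateau's Keras default min_delta of 1e-4 and no cooldown, and it mirrors the logic rather than the exact Keras source; simulate_callbacks is a hypothetical helper.

```python
def simulate_callbacks(val_losses, lr=1e-4, factor=0.2, lr_patience=3, lr_min_delta=1e-4,
                       stop_patience=15, stop_min_delta=1e-3):
    """Replay a validation-loss curve through simplified plateau and early-stopping rules.

    Returns (lrs, best_epoch, stopped_epoch): the learning rate used in each epoch,
    the epoch whose weights a best-only checkpoint would keep, and the epoch at which
    training stops (None if it runs through all of val_losses).
    """
    lrs = []
    best_ckpt, best_epoch = float("inf"), None
    best_lr, wait_lr = float("inf"), 0
    best_stop, wait_stop = float("inf"), 0
    for epoch, loss in enumerate(val_losses):
        lrs.append(lr)
        # ModelCheckpoint(save_best_only=True): keep the lowest validation loss seen so far.
        if loss < best_ckpt:
            best_ckpt, best_epoch = loss, epoch
        # ReduceLROnPlateau: multiply lr by `factor` after `lr_patience` stagnant epochs.
        if loss < best_lr - lr_min_delta:
            best_lr, wait_lr = loss, 0
        else:
            wait_lr += 1
            if wait_lr >= lr_patience:
                lr *= factor
                wait_lr = 0
        # EarlyStopping: stop after `stop_patience` epochs without a min_delta improvement.
        if loss < best_stop - stop_min_delta:
            best_stop, wait_stop = loss, 0
        else:
            wait_stop += 1
            if wait_stop >= stop_patience:
                return lrs, best_epoch, epoch
    return lrs, best_epoch, None


if __name__ == "__main__":
    lrs, best_epoch, stopped = simulate_callbacks([1.0, 0.8] + [0.8] * 20)
    print(best_epoch, stopped, lrs[:6])
```

For a curve that improves once and then stays flat, the sketch reduces the learning rate at epochs 4, 7, 10, 13, and 16 (0-based), stops at epoch 16, and keeps the checkpoint from epoch 1. With a patience of 15 versus 3, several learning-rate reductions always get a chance to rescue training before early stopping ends it.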

Performance metrics

We evaluated the proposed methodology using several metrics58,59,60, including the confusion matrix, precision, specificity, sensitivity, F1-score, Matthews correlation coefficient (MCC), overall accuracy, and balanced accuracy58.

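Precision58 measures the proportion of true positive predictions among all positive predictions:

\[ \text{Precision} = \frac{TP}{TP + FP} \]

Sensitivity (Recall)58 measures the proportion of true positive predictions among all actual positives:

\[ \text{Sensitivity} = \frac{TP}{TP + FN} \]

Specificity58 measures the proportion of true negative predictions among all actual negative instances:

\[ \text{Specificity} = \frac{TN}{TN + FP} \]

F1-score58 is the harmonic mean of precision and recall, providing a balanced measure between the two:

\[ \text{F1} = \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}} \]

Overall accuracy58 measures the proportion of correct predictions among all predictions:

\[ \text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN} \]

MCC58 is a balanced measure that takes into account all four confusion-matrix counts, TP, TN, FP, and FN:

\[ \text{MCC} = \frac{TP \cdot TN - FP \cdot FN}{\sqrt{(TP + FP)(TP + FN)(TN + FP)(TN + FN)}} \]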

Although accuracy and F1-score58 are useful metrics, they may not give a complete picture of a classifier's performance, especially under class imbalance. MCC58 addresses this limitation by providing a balanced measure that accounts for both positive and negative classes, which makes it a more reliable metric for evaluating classification models; for this robustness, MCC58 is often preferred over accuracy and F1-score58. We also report balanced accuracy59, defined as the average of the per-class sensitivity scores, so that each class contributes equally to the overall score regardless of its size. This makes it particularly useful for assessing the reliability and generalizability of classification models when class distributions are imbalanced.

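Balanced accuracy is computed as

\[ \text{Balanced accuracy} = \frac{1}{K}\sum_{k=1}^{K} \text{Sensitivity}_k, \]

where \(K\) is the number of classes, and TP, TN, FP, and FN denote the numbers of true positives, true negatives, false positives, and false negatives, respectively; for multi-class problems these counts are typically computed per class in a one-vs-rest manner.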
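The definitions above can be turned into a short, dependency-free routine that works directly on a confusion matrix such as those in Figure 5. This is a sketch under stated assumptions: per-class precision, sensitivity, specificity, and F1-score are computed one-vs-rest and macro-averaged (our reading of the "average" values reported in the tables), and multi-class MCC uses the generalization from the trace and row/column sums that scikit-learn's matthews_corrcoef also implements, which reduces to the binary formula for two classes. classification_metrics is a hypothetical helper name.

```python
import math


def _mean(values):
    return sum(values) / len(values)


def classification_metrics(cm):
    """Compute the metrics above from a K x K confusion matrix.

    cm[i][j] counts samples of true class i predicted as class j.
    """
    k = len(cm)
    total = sum(sum(row) for row in cm)
    true_totals = [sum(row) for row in cm]                              # row sums
    pred_totals = [sum(cm[i][j] for i in range(k)) for j in range(k)]  # column sums

    precision, sensitivity, specificity, f1 = [], [], [], []
    for c in range(k):
        # One-vs-rest counts for class c.
        tp = cm[c][c]
        fn = true_totals[c] - tp
        fp = pred_totals[c] - tp
        tn = total - tp - fn - fp
        prec = tp / (tp + fp) if tp + fp else 0.0
        sens = tp / (tp + fn) if tp + fn else 0.0
        spec = tn / (tn + fp) if tn + fp else 0.0
        precision.append(prec)
        sensitivity.append(sens)
        specificity.append(spec)
        f1.append(2 * prec * sens / (prec + sens) if prec + sens else 0.0)

    correct = sum(cm[i][i] for i in range(k))
    # Multi-class MCC from the trace, the total, and the row/column sums.
    numerator = correct * total - sum(p * t for p, t in zip(pred_totals, true_totals))
    denominator = math.sqrt((total ** 2 - sum(p * p for p in pred_totals))
                            * (total ** 2 - sum(t * t for t in true_totals)))
    return {
        "precision": _mean(precision),
        "sensitivity": _mean(sensitivity),
        "specificity": _mean(specificity),
        "f1": _mean(f1),
        "accuracy": correct / total,
        "balanced_accuracy": _mean(sensitivity),  # mean per-class recall
        "mcc": numerator / denominator if denominator else 0.0,
    }


if __name__ == "__main__":
    # Toy 2-class matrix (rows: true class, columns: predicted class).
    print(classification_metrics([[5, 0], [1, 4]]))
```

For the toy matrix, accuracy and balanced accuracy are both 0.9 and MCC is 20/√600 ≈ 0.816, matching the binary formula.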

Results and discussion

In this section, we discuss the experimental findings in detail. First, we present the classification performance of the proposed PLDNet model with the newly developed AFpM activation function on both potato leaf disease datasets. Next, we show the effect of data augmentation on the classification performance of PLDNet. We then compare AFpM with several existing activation functions41,48,49,50,51,52 using PLDNet on both datasets to evaluate its effectiveness. Finally, we compare the classification performance of PLDNet with several established deep CNN models, namely DenseNet20144, DenseNet16944, InceptionV345, ResNet5046, VGG1646, and MobileNetV247, as well as with previous works.

Classification performance of PLDNet with AFpM

We applied the proposed PLDNet model with the AFpM activation function and validated the methodology on two potato leaf image classification datasets: a new dataset available on Mendeley28 and the PlantVillage dataset40. Table 4 reports the precision, specificity, sensitivity, F1-score, MCC, overall accuracy, and balanced accuracy obtained by PLDNet on both datasets. PLDNet achieved an overall classification accuracy of approximately 87.50% on the Mendeley dataset and 99.54% on the PlantVillage dataset. Figure 5 shows the confusion matrices obtained by PLDNet on both datasets. Figure 5(a) shows that PLDNet correctly classified all Nematode images, while the lowest class-wise accuracy was obtained for the Healthy class. Similarly, Figure 5(b) shows that, on the PlantVillage dataset, PLDNet classified all images correctly except two healthy leaf images. Figure 6 presents the receiver operating characteristic (ROC) curves and the area under the curve (AUC) values for each class on both datasets. On the Mendeley dataset, the class-wise AUC values achieved by PLDNet are 0.981 for Bacteria, 0.895 for Fungi, 0.890 for Healthy, 0.996 for Nematode, 0.887 for Pest, 0.943 for Phytopthora, and 0.941 for Virus. On the PlantVillage dataset, the class-wise AUC values are 1.00 for Early blight, 0.996 for Late blight, and 0.968 for Healthy.

Confusion matrix obtained by PLDNet model on: (a) Mendeley dataset; (b) PlantVillage dataset.

ROC curve obtained by PLDNet model on: (a) Mendeley dataset; (b) PlantVillage dataset.
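The per-class AUC values above summarize one-vs-rest ROC curves. The tool used to produce Figure 6 is not stated, so the following is only an illustrative, dependency-free sketch of how such values can be computed from softmax outputs. For class c, each image's predicted probability for c is its score, images of class c are positives, and all other images are negatives; the AUC equals the probability that a randomly chosen positive is scored higher than a randomly chosen negative, with ties counted as one half. The function names are hypothetical.

```python
def binary_auc(pos_scores, neg_scores):
    """AUC as P(positive score > negative score), counting ties as 1/2."""
    wins = 0.0
    for sp in pos_scores:
        for sn in neg_scores:
            if sp > sn:
                wins += 1.0
            elif sp == sn:
                wins += 0.5
    return wins / (len(pos_scores) * len(neg_scores))


def one_vs_rest_auc(labels, probs):
    """Per-class AUC: the softmax probability for class c ranks class c against all others."""
    num_classes = len(probs[0])
    aucs = []
    for c in range(num_classes):
        pos = [p[c] for y, p in zip(labels, probs) if y == c]
        neg = [p[c] for y, p in zip(labels, probs) if y != c]
        aucs.append(binary_auc(pos, neg))
    return aucs
```

The pairwise loop costs O(n_pos · n_neg), which is adequate for test sets of this size; library routines such as scikit-learn's roc_auc_score compute the same quantity more efficiently.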

Effect of data augmentation

This section examines the effect of data augmentation on potato leaf disease classification. Table 5 compares the classification performance of PLDNet on the Mendeley and PlantVillage datasets before and after applying data augmentation to the training data. With data augmentation, PLDNet achieved approximately 87.50% overall accuracy on the Mendeley dataset and 99.54% on the PlantVillage dataset. Without data augmentation, it achieved approximately 86.04% and 96.55% overall accuracy on the Mendeley and PlantVillage datasets, respectively.

The observed performance gains can be attributed to the model's greater exposure to varied leaf images, including variations in orientation, scale, and texture. These augmentations improve generalization by emulating real-world variability and reducing overfitting, especially for underrepresented classes. The benefit is particularly evident on the PlantVillage dataset, where augmentation helps balance learning given the small number of samples in the Healthy class. Similar results are observed on the more complex and noisier Mendeley dataset, where augmentation supports better feature learning and class separation despite differences in image background and lighting. These results highlight data augmentation as a key step in training deep learning models for robust agricultural applications.
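The exact augmentation pipeline is not specified in this section. As a hedged illustration of the orientation part only, the sketch below applies random flips and 90-degree rotations to an image stored as a list of pixel rows; the scale and texture augmentations mentioned above are omitted, and all function names are hypothetical. In TensorFlow, comparable transformations are available as preprocessing layers such as tf.keras.layers.RandomFlip and tf.keras.layers.RandomRotation.

```python
import random


def hflip(img):
    """Mirror each row (left-right flip)."""
    return [row[::-1] for row in img]


def vflip(img):
    """Reverse the row order (top-bottom flip)."""
    return img[::-1]


def rot90(img):
    """Rotate 90 degrees clockwise: reverse the rows, then transpose."""
    return [list(col) for col in zip(*img[::-1])]


def random_orientation(img, rng):
    """Apply a random combination of flips and a random multiple of 90-degree rotation."""
    out = img
    if rng.random() < 0.5:
        out = hflip(out)
    if rng.random() < 0.5:
        out = vflip(out)
    for _ in range(rng.randrange(4)):
        out = rot90(out)
    return out


if __name__ == "__main__":
    leaf = [[1, 2, 3], [4, 5, 6]]  # stand-in for a tiny image
    print(random_orientation(leaf, random.Random(0)))
```

These transformations only rearrange pixels, so disease symptoms are preserved while their position and orientation vary, which is why they are safe label-preserving augmentations for leaf images.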

Comparison of AFpM with different activation functions

We compared the classification performance of AFpM with six existing activation functions, namely ReLU41, Leaky ReLU48, GELU49, Swish50, Mish51, and PFpM52, within the PLDNet model. Table 6 presents the classification performance of PLDNet on both potato leaf disease datasets with each activation function. PLDNet with AFpM attained the highest overall classification accuracy: 87.50% on the Mendeley dataset and 99.54% on the PlantVillage dataset. Among the existing activation functions, PLDNet with PFpM achieved 85.06% and 97.68% overall accuracy on the Mendeley and PlantVillage datasets, respectively. In contrast, PLDNet with ReLU achieved only 83.12% and 92.81% on the same datasets. Overall, PLDNet performs well on both datasets with all tested activation functions, and AFpM yields the best results.
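Accuracy differences can be read either as absolute (percentage-point) gains or as relative gains, and the two differ noticeably here. The small worked computation below uses only the Table 6 accuracies quoted in the paragraph above; the dictionary and function names are illustrative.

```python
# Overall accuracies (%) of PLDNet quoted above from Table 6.
TABLE6_ACCURACY = {
    "Mendeley": {"AFpM": 87.50, "PFpM": 85.06, "ReLU": 83.12},
    "PlantVillage": {"AFpM": 99.54, "PFpM": 97.68, "ReLU": 92.81},
}


def afpm_gain(dataset, baseline):
    """Return (absolute gain in percentage points, relative gain in % of the baseline)."""
    acc = TABLE6_ACCURACY[dataset]
    absolute = acc["AFpM"] - acc[baseline]
    relative = 100.0 * absolute / acc[baseline]
    return round(absolute, 2), round(relative, 2)


if __name__ == "__main__":
    for dataset in TABLE6_ACCURACY:
        for baseline in ("PFpM", "ReLU"):
            print(dataset, baseline, afpm_gain(dataset, baseline))
```

For example, on Mendeley AFpM is 2.44 percentage points above PFpM, which is a 2.87% relative improvement; stating which of the two is meant avoids ambiguity when gains are summarized.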

Comparison of PLDNet with different state-of-the-art CNN models

Table 7 compares the classification performance of PLDNet with several state-of-the-art deep CNN models, namely DenseNet20144, DenseNet16944, InceptionV345, ResNet5046, VGG1646, and MobileNetV247, on the Mendeley and PlantVillage datasets. PLDNet outperformed these models on both datasets in terms of average precision, specificity, sensitivity, F1-score, MCC, overall accuracy, and balanced accuracy. PLDNet attained the highest overall classification accuracy of 87.50% on the Mendeley dataset and 99.54% on the PlantVillage dataset. Among the existing CNN models, DenseNet169 achieved the highest overall accuracy, with 82.47% and 91.88% on the Mendeley and PlantVillage datasets, respectively.

Contextualization of PlantVillage vs. Mendeley results

The very high accuracy achieved on PlantVillage (99.54%) should be interpreted in light of the dataset characteristics. PlantVillage images are largely studio-quality, captured under controlled illumination with clean, homogeneous backgrounds and limited acquisition noise; therefore, near-ceiling performance is commonly reported on this benchmark and does not necessarily reflect real-world robustness. In contrast, the Mendeley dataset contains field images with substantial domain shift, including background clutter, lighting variability (shadows and over/under-exposure), viewpoint changes, partial occlusions, and sensor/compression artifacts. The reduced accuracy on Mendeley (87.50%) thus primarily reflects this increased difficulty rather than a failure of the proposed approach.

Despite these challenges, PLDNet remains comparatively robust on Mendeley due to three design choices. First, the applied data augmentation (Table 5) exposes the model to synthetic variations in orientation, scale, and appearance that partially emulate field conditions. Second, the hybrid CNN–Transformer structure supports both local texture learning and global contextual reasoning, which helps suppress irrelevant background patterns and improves class separation under clutter. Third, the adaptive AFpM activation improves nonlinear feature shaping and gradient flow, enabling more discriminative representations in noisy settings, as reflected by consistent gains over ReLU, Mish, Swish, and PFpM (Table 6). Overall, the PlantVillage–Mendeley gap highlights the importance of evaluating on real-world imagery; accordingly, we emphasize that the practical contribution of PLDNet lies in its improved generalization on challenging field data while maintaining strong performance on curated benchmarks.

Ablation study

An ablation study was conducted to systematically evaluate the contribution of each architectural component of the proposed PLDNet framework. Starting from the DenseNet169 backbone as the baseline, components were added incrementally, namely a Transformer module and different activation functions, to assess their individual and combined impact on classification performance. Experiments were conducted on the Mendeley and PlantVillage datasets using the same training and evaluation protocol to ensure a fair comparison. The baseline DenseNet169 model provides strong initial performance on both datasets; however, adding the Transformer module with a standard ReLU activation yields only marginal improvements, indicating limited gains from contextual modeling when paired with conventional activations. Replacing ReLU with Mish leads to a more noticeable improvement across most metrics, consistent with smoother feature representations and improved gradient flow.

The best performance is achieved by integrating the proposed AFpM activation function into the DenseNet169 + Transformer architecture, forming the complete PLDNet model. As shown in Table 8, PLDNet consistently outperforms all other configurations on both datasets across all evaluation metrics, including precision, specificity, sensitivity, F1-score, Matthews correlation coefficient (MCC), overall accuracy, and balanced accuracy. Notably, PLDNet achieves near-perfect performance on the PlantVillage dataset, highlighting the effectiveness of the proposed architectural design and activation strategy. These results indicate that each component contributes positively to the final performance, with the combination of the Transformer module and the AFpM activation function playing a critical role in enhancing discriminative capability and robustness.

Comparison with existing models

To evaluate the effectiveness of the proposed PLDNet model, we compared its performance with that of previously published methods for potato leaf disease classification. Table 9 summarizes existing models, including the datasets used, the model architectures, and their classification accuracies. Notably, most prior studies focused solely on the PlantVillage dataset, an idealized, controlled dataset with minimal noise. Although some methods, such as those of Tambe et al. (2023)19 and Lanjewar et al. (2024)27, achieved high accuracies of 99.18% and 99.00%, respectively, they were evaluated only on this curated dataset. In contrast, PLDNet performs robustly on both PlantVillage and Mendeley, attaining 99.54% accuracy on PlantVillage and 87.50% on the more challenging Mendeley dataset, which contains real-world images with complex backgrounds, lighting variations, and noise. Models such as EfficientNetV2B328 and RCA-Net31 reported lower accuracies on Mendeley, 73.63% and 78.14%, respectively, which highlights PLDNet's stronger generalization to diverse field conditions. The improved classification accuracy of PLDNet can be attributed to its hybrid CNN-Transformer architecture and to the Adaptive Flatten p-Mish (AFpM) activation function, which learns its nonlinearity during training. This data-driven activation mechanism contributes to the model's adaptability and classification accuracy under real-world variations.

Statistical analysis

To evaluate the AFpM-based PLDNet model robustly, we computed statistics across five independent experiments with random training and test splits. On the PlantVillage dataset, PLDNet achieved a mean accuracy of 99.54% with a standard deviation of 0.18%, indicating high consistency across experiments. On the Mendeley dataset, the model achieved a mean accuracy of 87.50% with a standard deviation of 0.47%. The small standard deviations on both datasets indicate that the model is stable across different training conditions. In addition, 90% confidence intervals were computed to quantify the uncertainty of these estimates. For the PlantVillage dataset, the confidence interval for overall accuracy ranged from 99.36% to 99.72%, and for the Mendeley dataset from 86.93% to 88.08%. These narrow intervals suggest that the reported performance is not an artifact of a particular random initialization or data split, but reflects the underlying architecture and activation function. The consistency of metrics such as precision, recall, and F1-score further supports the reliability of PLDNet and its potential for practical application in potato disease diagnosis (see Fig. 7).

Statistical Analysis of PLDNet Performance.
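This section does not specify how the 90% intervals were constructed. One common choice for a handful of runs is a Student-t interval, mean ± t(0.95, n−1) · s/√n, where s is the sample standard deviation. The sketch below implements it with a small table of standard two-sided 90% critical values. The per-run accuracies themselves are not listed here, so the function is shown without the paper's data, and summarize_runs is a hypothetical helper name.

```python
import math
import statistics

# Two-sided 90% critical values of Student's t, keyed by degrees of freedom (n - 1).
T_CRIT_90 = {1: 6.314, 2: 2.920, 3: 2.353, 4: 2.132, 5: 2.015,
             6: 1.943, 7: 1.895, 8: 1.860, 9: 1.833}


def summarize_runs(accuracies):
    """Mean, sample standard deviation, and t-based 90% CI over independent runs."""
    n = len(accuracies)
    if n - 1 not in T_CRIT_90:
        raise ValueError("add the t critical value for this number of runs")
    mean = statistics.mean(accuracies)
    sd = statistics.stdev(accuracies)  # sample SD with n - 1 in the denominator
    half_width = T_CRIT_90[n - 1] * sd / math.sqrt(n)
    return mean, sd, (mean - half_width, mean + half_width)
```

With five runs the critical value is 2.132, so the interval half-width is about 0.95 times the sample standard deviation; reporting which construction was used lets readers reproduce the intervals from the run-level results.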

Limitations and future work

Although the proposed PLDNet framework demonstrates strong performance on both a controlled benchmark dataset (PlantVillage) and a real-world dataset (Mendeley), certain limitations remain and point to important directions for future research. First, the current evaluation is limited to two publicly available datasets. While these datasets capture complementary characteristics (studio-quality images versus field-acquired images), they do not fully encompass the diversity of real-world agricultural conditions. In particular, no cross-dataset generalization experiment (e.g., training on PlantVillage and testing on Mendeley) was conducted in this study, as such a setting would introduce severe domain mismatch and label distribution shifts. Consequently, although PLDNet exhibits improved robustness on the more challenging Mendeley dataset compared to existing methods, its generalization across unseen domains cannot yet be fully guaranteed.

Second, despite the use of extensive data augmentation to simulate variations in orientation, scale, and illumination, PLDNet still experiences performance degradation under extreme field conditions, such as heavy occlusion, overlapping leaves, severe background clutter, and poor or uneven lighting. These challenges highlight the inherent difficulty of deploying vision-based disease classification models in unconstrained agricultural environments. Additionally, the proposed AFpM activation function, while enhancing adaptability through learnable nonlinearities, increases computational complexity and may raise the risk of overfitting when training data are scarce. Similarly, the inclusion of a Transformer module improves contextual modeling but introduces additional computational overhead, which may limit real-time deployment on resource-constrained or embedded devices.

Future work will therefore focus on several complementary directions to strengthen generalization claims. First, we plan to perform explicit cross-dataset and cross-domain evaluations, including training on curated datasets and testing on field images, to better quantify domain shift effects. Second, domain adaptation and domain generalization techniques, such as feature alignment, adversarial learning, or self-supervised pretraining on unlabeled field data, will be explored to improve robustness under real-world conditions. Third, model optimization strategies, including pruning, quantization, and lightweight attention mechanisms, will be investigated to reduce computational cost and enable deployment on edge devices. Finally, expanding the dataset to include images from multiple geographical regions, growth stages, and environmental conditions, together with the integration of explainable AI methods (e.g., Grad-CAM or attention visualization), will further enhance model interpretability, reliability, and practical applicability in real agricultural settings.

Conclusion

This study presents a novel deep learning framework, termed PLDNet, which integrates Convolutional Neural Network (CNN) and Transformer components along with a newly designed activation function, AFpM, to achieve precise classification of potato leaf diseases. The proposed PLDNet model has achieved 99.54% accuracy on PlantVillage dataset and 87.50% on Mendeley dataset, demonstrating clear improvements over existing CNN, hybrid, and activation-based approaches, particularly under complex real-world imaging conditions. The AFpM activation function enhances the network’s adaptability by enabling dynamic nonlinear transformations, which contribute to improved classification performance. Despite its relatively higher computational cost resulting from the inclusion of the Transformer layer and trainable activation parameters, PLDNet establishes a strong benchmark in terms of generalization and robustness. Future research directions include refining the model architecture for edge-level deployment, incorporating explainable artificial intelligence techniques, and investigating self-supervised learning strategies to minimize dependence on annotated agricultural datasets.

Data availability

The datasets used in this study are available at: https://data.mendeley.com/datasets/ptz377bwb8/1 and https://www.kaggle.com/datasets/emmarex/plantdisease.

Code availability

The GitHub codebase will be made public once the paper is accepted.

References

Huang, Z. et al. Advanced deep learning algorithm for instant discriminating of tea leave stress symptoms by smartphone-based detection. Plant Physiology and Biochemistry 212, 108769 (2024).

Shehu, H. A., Ackley, A., Mark, M. & Eteng, O. E. Artificial intelligence for early detection and management of tuta absoluta-induced tomato leaf diseases: A systematic review. European Journal of Agronomy 170, 127669 (2025).

Klingler, A. et al. Prediction of grassland yield in austria: A machine learning approach based on satellite, weather, and extensive in situ data. European Journal of Agronomy 170, 127701 (2025).

Kamilaris, A. & Prenafeta-Boldú, F. X. Deep learning in agriculture: A survey. Computers and electronics in agriculture 147, 70–90 (2018).

Oerke, E.-C. Crop losses to pests. The Journal of agricultural science 144(1), 31–43 (2006).

Haverkort, A. et al. Societal costs of late blight in potato and prospects of durable resistance through cisgenic modification. Potato research 51, 47–57 (2008).

Fry, W. Phytophthora infestans: the plant (and r gene) destroyer. Molecular plant pathology 9(3), 385–402 (2008).

Arnal Barbedo, J. G. Digital image processing techniques for detecting, quantifying and classifying plant diseases. SpringerPlus 2(1), 660 (2013).

Ramcharan, A. et al. Deep learning for image-based cassava disease detection. Frontiers in plant science 8, 1852 (2017).

Too, E. C., Yujian, L., Njuki, S. & Yingchun, L. A comparative study of fine-tuning deep learning models for plant disease identification. Computers and Electronics in Agriculture 161, 272–279 (2019).

Velásquez, A. C., Castroverde, C. D. M. & He, S. Y. Plant-pathogen warfare under changing climate conditions. Current biology 28(10), 619–634 (2018).

Yan, H. & Nelson, B. Jr. Effects of soil type, temperature, and moisture on development of fusarium root rot of soybean by fusarium solani (fssc 11) and fusarium tricinctum. Plant Disease 106(11), 2974–2983 (2022).

Islam, M., Dinh, A., Wahid, K., & Bhowmik, P. Detection of potato diseases using image segmentation and multiclass support vector machine. In 2017 IEEE 30th Canadian Conference on Electrical and Computer Engineering (CCECE), 1–4 (IEEE, 2017).

Iqbal, M.A., & Talukder, K.H. Detection of potato disease using image segmentation and machine learning. In 2020 International Conference on Wireless Communications Signal Processing and Networking (WiSPNET), 43–47 (IEEE, 2020).

Tiwari, D., et al. Potato leaf diseases detection using deep learning. In 2020 4th International Conference on Intelligent Computing and Control Systems (ICICCS), 461–466 (IEEE, 2020).

Sanjeev, K., Gupta, N. K., Jeberson, W. & Paswan, S. Early prediction of potato leaf diseases using ann classifier. Oriental journal of computer science and technology 13(2, 3), 129–134 (2021).

Ali, W. et al. Potato plant leaf disease classification using an enhanced deep learning model. Journal of Information Communication Technologies and Robotic Applications 13(1), 23–30 (2022).

Patil, S., Korgaonkar, A., Nadankar, S. & Ekbote, A. Potato leaf disease and its classification using deep learning. Indian Journal of Computer Science 8(4), 8–17 (2023).

Tambe, U. Y., Shobanadevi, A., Shanthini, A. & Hsu, H.-C. Potato leaf disease classification using deep learning: A convolutional neural network approach. arXiv preprint arXiv:2311.02338 (2023).

Muthuraja, M., et al. An explainable approach for detecting potato leaf disease using ensemble model. In 2023 Third International Conference on Ubiquitous Computing and Intelligent Information Systems (ICUIS), 70–76 (IEEE, 2023).

Arshad, F. et al. Pldpnet: End-to-end hybrid deep learning framework for potato leaf disease prediction. Alexandria Engineering Journal 78, 406–418 (2023).

Kumar, R., Agrawal, T., Dwivedi, V.D., & Khatter, H. Potato leaf disease classification using deep learning model. In International Conference on Machine Learning, Image Processing, Network Security and Data Sciences, 186–200 (Springer, 2023).

Mathur, P., Kumar, S., Yadav, V., & Sangwan, D. Analysis of deep learning models for potato leaf disease classification and prediction. In International Conference on Advances in Data-driven Computing and Intelligent Systems, 355–365 (Springer, 2023).

Kyamelia, R., Subharthy, R., Kumar, P.T., & Sheli, S.C. Potato plant leaf disease detection using custom cnn deep net: A step towards sustainable agriculture. In 2024 ITU Kaleidoscope: Innovation and Digital Transformation for a Sustainable World (ITU K), 1–7 (IEEE, 2024).

Khan, F. et al. Enabling early treatment: A deep learning approach to multi-class potato leaf disease identification. Int. J. Innov. Sci. Technol 6(2), 1–11 (2024).

Paul, L., et al. Self-supervised contrastive learning for potato leaf disease classification. In 2024 27th International Conference on Computer and Information Technology (ICCIT), 2050–2055 (IEEE, 2024).

Lanjewar, M. G., Morajkar, P. & P, P. Modified transfer learning frameworks to identify potato leaf diseases. Multimedia Tools and Applications 83(17), 50401–50423 (2024).

Shabrina, N. H. et al. A novel dataset of potato leaf disease in uncontrolled environment. Data in brief 52, 109955 (2024).

Sinamenye, J. H., Chatterjee, A. & Shrestha, R. Potato plant disease detection: leveraging hybrid deep learning models. BMC Plant Biology 25(1), 1–15 (2025).

Mhala, P., Bilandani, A. & Sharma, S. Enhancing crop productivity with fined-tuned deep convolution neural network for potato leaf disease detection. Expert Systems with Applications 267, 126066 (2025).

Tariq, M. H. et al. Estimation of fractal dimensions and classification of plant disease with complex backgrounds. Fractal and Fractional 9(5), 315 (2025).

Upadhyay, N. & Gupta, N. Detecting fungi-affected multi-crop disease on heterogeneous region dataset using modified resnext approach. Environmental Monitoring and Assessment 196(7), 610 (2024).

Upadhyay, N. & Bhargava, A. Artificial intelligence in agriculture: applications, approaches, and adversities across pre-harvesting, harvesting, and post-harvesting phases. Iran Journal of Computer Science, 1–24 (2025).

Upadhyay, N. & Gupta, N. Seglearner: A segmentation based approach for predicting disease severity in infected leaves. Multimedia Tools and Applications, 1–24 (2025).

Upadhyay, N., Sharma, D. K. & Bhargava, A. 3sw-net: A feature fusion network for semantic weed detection in precision agriculture. Food Analytical Methods 18(10), 2241–2257 (2025).

Jain, M. A. et al. Money plant leaf (epipremnum aureum): A comprehensive study of raw datasets with manual classification. Data in Brief, 112066 (2025).

Koparde, S. et al. A conditional generative adversarial networks and yolov5 darknet-based skin lesion localization and classification using independent component analysis model. Informatics in Medicine Unlocked 47, 101515 (2024).

Kotwal, J. et al. Sensor infused quantum cnn for diabetes disease prediction and diet recommendation. International Journal of Computational Intelligence Systems 18(1), 113 (2025).

Kotwal, J.G., et al. Sadccnet: self-attention-based dense cascaded capsule network for bone cancer detection using deep learning approach. Iran Journal of Computer Science, 1–21 (2025)

Hughes, D. et al. An open access repository of images on plant health to enable the development of mobile disease diagnostics. arXiv preprint arXiv:1511.08060 (2015).

Dubey, S. R., Singh, S. K. & Chaudhuri, B. B. Activation functions in deep learning: A comprehensive survey and benchmark. Neurocomputing 503, 92–108 (2022).

Clevert, D.-A., Unterthiner, T. & Hochreiter, S. Fast and accurate deep network learning by exponential linear units (elus). arXiv preprint arXiv:1511.07289 (2015).

Lu, L., Shin, Y., Su, Y. & Karniadakis, G. E. Dying relu and initialization: Theory and numerical examples. arXiv preprint arXiv:1903.06733 (2019).

Jaiswal, A., Gianchandani, N., Singh, D., Kumar, V. & Kaur, M. Classification of the covid-19 infected patients using densenet201 based deep transfer learning. Journal of Biomolecular Structure and Dynamics 39(15), 5682–5689 (2021).

Demir, A., Yilmaz, F., & Kose, O. Early detection of skin cancer using deep learning architectures: resnet-101 and inception-v3. In 2019 Medical Technologies Congress (TIPTEKNO), 1–4 (IEEE, 2019).

Mascarenhas, S. & Agarwal, M. A comparison between vgg16, vgg19 and resnet50 architecture frameworks for image classification. In 2021 International Conference on Disruptive Technologies for Multi-disciplinary Research and Applications (CENTCON), 1, 96–99 (IEEE, 2021).

Nguyen, H. Fast object detection framework based on mobilenetv2 architecture and enhanced feature pyramid. J. Theor. Appl. Inf. Technol 98(05), 812–824 (2020).

Maas, A. L. et al. Rectifier nonlinearities improve neural network acoustic models. In Proc. ICML 30, 3 (Atlanta, GA, 2013).

Hendrycks, D. & Gimpel, K. Gaussian error linear units (gelus). arXiv preprint arXiv:1606.08415 (2016).

Ramachandran, P., Zoph, B. & Le, Q. V. Searching for activation functions. arXiv preprint arXiv:1710.05941 (2017).

Misra, D. Mish: A self regularized non-monotonic activation function. arXiv preprint arXiv:1908.08681 (2019).

Mondal, A. & Shrivastava, V. K. A novel parametric flatten-p mish activation function based deep cnn model for brain tumor classification. Computers in Biology and Medicine 150, 106183 (2022).

Eger, S., Youssef, P. & Gurevych, I. Is it time to swish? Comparing deep learning activation functions across NLP tasks. arXiv preprint arXiv:1901.02671 (2019).

Desai, C. & Desai, C. Impact of weight initialization techniques on neural network efficiency and performance: A case study with MNIST dataset. International Journal of Engineering and Computer Science 13(04) (2024).

Sibi, P., Jones, S. A. & Siddarth, P. Analysis of different activation functions using back propagation neural networks. Journal of Theoretical and Applied Information Technology 47(3), 1264–1268 (2013).

Reis, H. C. & Turk, V. Potato leaf disease detection with a novel deep learning model based on depthwise separable convolution and transformer networks. Engineering Applications of Artificial Intelligence 133, 108307 (2024).

Thakur, A. et al. Transformative breast cancer diagnosis using CNNs with optimized ReduceLROnPlateau and early stopping enhancements. International Journal of Computational Intelligence Systems 17(1), 14 (2024).

Chatterjee, A., Pahari, N., Prinz, A. & Riegler, M. AI and semantic ontology for personalized activity eCoaching in healthy lifestyle recommendations: a meta-heuristic approach. BMC Medical Informatics and Decision Making 23(1), 278. https://doi.org/10.1186/s12911-023-02396-5 (2023).

Brodersen, K. H., Ong, C. S., Stephan, K. E. & Buhmann, J. M. The balanced accuracy and its posterior distribution. In 2010 20th International Conference on Pattern Recognition, 3121–3124 (IEEE, 2010). https://doi.org/10.1109/ICPR.2010.764.

Mondal, A., Shrivastava, V. K., Chatterjee, A. & Ramachandra, R. Sb-piplu: A novel parametric activation function for deep learning. IEEE Access (2025).

Funding

Open access funding provided by University of Inland Norway. INN has a subscription for open-access (OA) publication in Scientific Reports, Nature.

Author information

Contributions

A.M. and A.C. formulated the concept and designed the methodology. A.M. collected the data and performed the experiments and data analysis. A.C. provided supervision, along with guidance and suggestions on the experiments and analysis. A.M. drafted the initial manuscript, and all authors (A.M., A.C. and N.A.) reviewed and edited it.

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethics approval and consent to participate

Not applicable.

Consent for publication

Not applicable.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Mondal, A., Chatterjee, A. & Avazov, N. A hybrid CNN-transformer model with adaptive activation function for potato leaf disease classification. Sci Rep 16, 4282 (2026). https://doi.org/10.1038/s41598-025-34406-4

DOI: https://doi.org/10.1038/s41598-025-34406-4