Abstract

The closed-set assumption often fails in practical industrial applications, especially under diverse working conditions where the data distribution may differ significantly. In light of this, a domain adaptation method with adversarial learning is designed for open-set fault diagnosis. First, a convolutional autoencoder is developed to distill the fault features. Second, an unknown-class boundary is established by weighting the similarity between known and unknown classes, which aligns the shared classes between domains while classifying known classes across domains and identifying unknown fault samples. Finally, the diagnostic performance is evaluated on three rolling bearing datasets. The proposed method achieved average diagnostic F1-scores of 96.60%, 96.56%, and 96.62% on these datasets, respectively. The results demonstrate that the method effectively rejects unknown fault data in the target domain while aligning known classes, validating its fault diagnosis capability under the open-world assumption.


Introduction

Rolling bearings in rotary machinery are fundamental and critical components in key areas such as high-end manufacturing equipment, smart devices, high-tech maritime equipment, and advanced rail transportation1. The performance of bearings largely determines the performance of the entire mechanical system or components, playing a crucial role in modern industrial machinery operations2. Consequently, monitoring the operational status of bearings and developing intelligent fault diagnosis technology for bearing vibration signals have gained increasing attention, making bearing fault diagnosis a research hotspot in the mechanical field3,4.

Deep learning has garnered growing interest in fault diagnosis5. By constructing non-linear neural networks with many hidden layers, deep learning can adaptively distill key information layer by layer, eliminating the need for manual feature construction. It maps data from the original feature space to a new one that better represents the intrinsic structure and characteristics of the data, thereby enhancing classification or prediction accuracy. Therefore, “deep models” are the means to achieve automatic feature learning6,7. Against the backdrop of smart manufacturing and the Industry 4.0 strategy, deep learning-based intelligent fault diagnosis is at the research forefront, becoming increasingly intelligent and effective. However, the success of deep learning hinges on two fundamental assumptions: sufficient labeled data are available, and the training and test data follow the same distribution, which means the diagnosis tasks must satisfy the independent and identically distributed condition8,9. Fault diagnosis models developed based on these assumptions, although potentially achieving diagnostic accuracy as high as 99%, have limited practical significance. In real industrial environments, machines operate under varying working conditions, where continuous or intermittent changes in load and speed cause the collected data to follow different distributions, resulting in lower diagnostic accuracy. Thus, extending fault diagnosis models trained on historical data so that they remain accurate under varying conditions has become a research hotspot in intelligent fault diagnosis10,11.

Transfer learning is one of the technologies addressing these issues, and its methods can be categorized into four types12,13. Feature mapping-based transfer learning techniques include Chen et al.'s use of transfer component analysis to distill transferable features14. Zhao et al. applied joint maximum mean discrepancy to identify bearing failures15. Qian et al. incorporated a Maximum Mean Discrepancy (MMD)-based regularization term into the optimization objective of convolutional neural networks to train end-to-end domain-adaptive fault diagnosis models16. Qian et al. adopted Kullback-Leibler divergence to help deep models obtain similar features17. Wang et al. designed a transfer network based on Correlation Alignment to minimize domain covariance differences18. Instance-based transfer learning techniques include the TrAdaBoost algorithm proposed by Wan et al., a typical method of this type19. Zhou et al. utilized TrAdaBoost to identify the health status of motor bearings under different rotational speeds20. Parameter-based transfer learning techniques, for variable-condition motor bearing fault diagnosis, include Zhang et al. and Hasan et al.'s fine-tuning of fault diagnosis models using samples under target working conditions21,22. Shao et al. applied parameter transfer to VGG-16, achieving extremely high diagnostic accuracy by fine-tuning the source model with a small number of samples23. Generative adversarial network-based transfer learning techniques learn discriminative transferable features across domains24. Luo et al. conducted adversarial learning to distill the domain-invariant features of different domains25. Guo et al. constructed a transfer convolutional network for transferring knowledge between mechanical devices26.

Comparison of closed-set and open-set domain adaptation.

Most existing fault diagnosis models are based on the closed-world assumption, which assumes that the fault categories in the test data are identical to those in the training data. In other words, all fault categories appear during the training phase, and no new, unknown faults are encountered during the diagnostic process27,28. While fault detection models perform well under this assumption, it does not hold in real-world industrial applications. In industrial equipment fault diagnosis, new and unknown fault types are often encountered, particularly with new equipment or under novel operating conditions where fault types or patterns differ from those in the training data. Consequently, diagnostic models based on the closed-world assumption are inadequate for these scenarios.

In contrast to traditional closed-world assumption methods, the open-set assumption is better suited for handling unknown categories, especially in complex and dynamically changing industrial environments29. In the open environment of the Industrial Internet, fault diagnosis tasks are typically open and non-stationary, with new unknown fault types emerging as equipment operating states or conditions change. Under such circumstances, traditional closed-world models are prone to misclassifying unknown faults as known categories, preventing the identification of new faults and compromising diagnostic accuracy and reliability. Therefore, fault diagnosis models designed for industrial equipment in open environments must not only accurately recognize known faults from training samples but also effectively address the challenges posed by unknown fault categories. Open-set assumption methods, with their more flexible model design, can better meet these challenges and are of significant practical importance in real-world industrial applications. Comparison of closed-set and open-set domain adaptation is presented in Fig. 1.

While adversarial learning and convolutional autoencoders have been applied in deep learning, these methods generally focus on specific category identification or adversarial training. However, systematic analysis of domain and category differences in the context of the open-set assumption remains insufficient. To address this gap, this study proposes a novel domain-adversarial model for open-set fault diagnosis, designed to simultaneously diagnose known and unknown fault categories in open-set environments. Unlike existing methods, this approach integrates both domain and category differences, introducing an adversarial learning framework to adapt target domain samples. Specifically, the proposed model builds upon the traditional autoencoder structure and introduces a weighted mechanism. This weighting scheme evaluates the differences between source and target domain samples and dynamically adjusts the weights of target domain samples based on different label information. This strategy assigns higher weights to target samples that are more identifiable, based on their similarity to known and unknown classes. This enhances the transfer learning effect for known classes and simultaneously optimizes the distribution of unknown class samples through adversarial training. This strategy effectively promotes positive transfer from the source domain to the target domain, improving the model’s classification performance in open-set scenarios. In the testing phase, the model utilizes an introduced unknown-class classifier to automatically identify the class of input samples, accurately classifying known samples and effectively distinguishing unknown categories in the target domain. As a result, the proposed method demonstrates significant advantages in open-set fault diagnosis. The comparison between the proposed method and the three existing methods is shown in Table 1.

The remainder of this study is organized as follows: Sect. 2 introduces the overall framework of the domain-adversarial learning model. Section 3 provides a detailed explanation of the proposed method’s design. Section 4 presents experimental validation, and Sect. 5 concludes the study.

Domain adversarial learning

Domain adversarial learning leverages adversarial mechanisms to extract similar features from both the source and target domains. This is achieved by confusing the domain discriminator D, making the features extracted by the feature extractor G from different domains as similar as possible, thereby enabling the transfer of fault knowledge from the source domain to the target domain. The two networks are therefore trained with opposing objectives: the discriminator D learns to tell the domains apart, while the feature extractor G learns to make them indistinguishable. The loss function is:

where \({G_d}\left( \cdot \right)\) and \({G_f}\left( \cdot \right)\) stand for the outputs of D and G, and \({d_i}\) is the binary label for the ith sample.
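As an illustration of the kind of loss described here, the following is a minimal sketch that assumes the usual binary cross-entropy form over the discriminator outputs \({G_d}\left( {{G_f}\left( {{x_i}} \right)} \right)\) and the domain labels \({d_i}\). The function name, the 0/1 label assignment, and the numerical values are hypothetical and chosen only for demonstration.

```python
import math

def domain_adversarial_loss(domain_probs, domain_labels, eps=1e-12):
    """Mean binary cross-entropy between discriminator outputs and domain labels.

    domain_probs  -- discriminator outputs G_d(G_f(x_i)), each in (0, 1)
    domain_labels -- binary domain labels d_i (assumed here: 0 and 1 for the two domains)
    """
    total = 0.0
    for p, d in zip(domain_probs, domain_labels):
        p = min(max(p, eps), 1.0 - eps)  # clamp so that log() stays finite
        total += -(d * math.log(p) + (1 - d) * math.log(1.0 - p))
    return total / len(domain_probs)

labels = [0, 0, 1, 1]
# A fully confused discriminator outputs 0.5 for every sample.
confused = domain_adversarial_loss([0.5, 0.5, 0.5, 0.5], labels)
# A discriminator that separates the domains well yields a lower loss.
separated = domain_adversarial_loss([0.1, 0.1, 0.9, 0.9], labels)
```

Under this form, the discriminator is trained to lower the loss, whereas the feature extractor is trained to push it back toward the value reached when the two domains cannot be told apart.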

Fault diagnosis process based on open-set domain adversarial learning.

Most domain adversarial adaptation methods are designed to solve cross-domain alignment problems under the closed-set assumption. When dealing with bearing datasets under different working conditions, these methods often produce unreliable prediction results. This paper therefore aims to bring the open-world assumption into domain adaptation. To mitigate the issue, a domain-independent alternative discrimination loss is needed. Previous studies33,34 have shown that autoencoders can be used for discrimination purposes instead of traditional neural network structures. Based on this, we introduce autoencoders into domain adversarial learning.

An autoencoder consists of two parts: the first part maps the original data to a high-dimensional space, and the second part reconstructs the data using the high-dimensional features. To identify target private classes, we combine multiple classifiers to evaluate the domain discrepancy. This process assigns a corresponding weight to each target sample, distinguishing between shared and target private classes. These weights are used to establish boundaries for unknown classes, enhancing the differentiation between known and unknown classes and improving the effectiveness of domain adaptation. The corresponding process is depicted in Fig. 2.

The open-world intelligent fault diagnosis framework.

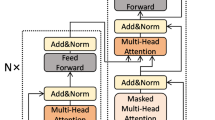

The proposed model architecture, presented in Fig. 3, extends the adversarial network with five components. The first component is the encoder \({G_{ae}}\), which automatically learns deeper and better representations from the raw inputs of both domains. The second component is the decoder \({G_{de}}\), which reconstructs the spatial dimensions of the reduced features by minimizing the reconstruction error loss. The third component is the known class classifier \({G_c}\), which minimizes the source classification loss. The fourth component is the extended classifier \({G_{cu}}\), which covers both known and unknown classes by expanding the final layer’s dimension from K to K + 1. This component calculates the similarity probability weight of an input sample belonging to the unknown class. The fifth component is the auxiliary domain discriminator \({G_{ad}}\), which outputs the similarity weight of a sample belonging to the shared classes. Similar to the role of the domain discriminator, its weight and domain loss are combined to further distinguish the distribution differences, aligning the domains by training to differentiate the domain features.

In the testing phase, the model learned during the training phase is applied to diagnose target-domain samples. The extended classifier outputs a probability distribution over K + 1 classes, classifying known classes and identifying the unknown class, which is essential for practical industrial machinery scenarios. The following sections elaborate on the training and testing phases in detail.

Open-set domain adaptation

Source domain loss and reconstruction error

To begin with, it is necessary to obtain the mapping relationship between the input and labels to classify known faults. The classifier learns the feature distribution with the classification optimized using a standard cross-entropy loss.

where \({\theta _{ae}}\) and \({\theta _{cu}}\) stand for the parameters for deep convolutional autoencoder and extended classifier, respectively. \({n_s}\) denotes the number of source domain samples, \({L_{{G_{cu}}}}\) represents the cross-entropy loss function and \(y_{i}^{s}\) denotes the labels corresponding to source sample \(x_{i}^{s}\).
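The source classification loss described above can be sketched as follows. This is a minimal illustration of a standard softmax cross-entropy averaged over the \({n_s}\) labeled source samples, not the authors' implementation; the function names and the example logits are hypothetical.

```python
import math

def softmax(logits):
    """Numerically stable softmax over one sample's classifier outputs."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    s = sum(exps)
    return [e / s for e in exps]

def source_classification_loss(batch_logits, labels):
    """Mean cross-entropy over the labeled source samples.

    batch_logits -- one list of K + 1 raw outputs of the extended classifier per sample
    labels       -- integer class index y_i^s of each source sample
    """
    n_s = len(labels)
    return sum(-math.log(softmax(z)[y]) for z, y in zip(batch_logits, labels)) / n_s

# Hypothetical batch: K = 3 known classes plus one extra output for the unknown class.
uninformed = source_classification_loss([[0.0, 0.0, 0.0, 0.0]], [1])
confident = source_classification_loss([[0.0, 6.0, 0.0, 0.0]], [1])
```

An uninformed classifier with equal outputs incurs a loss of log(K + 1), and the loss falls as the correct class receives more probability mass.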

Next, to capture the complex distributions in the latent space, we use unsupervised learning with a convolutional autoencoder to reconstruct the data. This approach learns the intrinsic feature variations of data samples from both domains, making the reconstructed data close to the raw data, thereby jointly optimizing the learning objectives of the encoder and the decoder. Specifically, source and target domain data are input into the deep convolutional autoencoder model, and the encoder and decoder process these inputs to produce reconstructed data. The reconstruction loss is computed using the scale-invariant mean squared error function. Unlike traditional mean squared error, scale-invariant mean squared error effectively addresses the issue of scale variations in data during loss computation. This is particularly important in scenarios involving multi-source data distributions and cross-domain learning, as it prevents excessive penalization caused by discrepancies in data scales. Therefore, the scale-invariant mean squared error term is employed to calculate the reconstruction loss for both the source and target domains, as shown in the following equation:

where k represents the dimensionality of the batch of data, \(x_{i}^{s}\) denotes the source domain data, \(\hat {x}_{i}^{s}\) is the reconstructed data from the source domain, \(x_{i}^{t}\) is the target domain data, and \(\hat {x}_{i}^{t}\) is the reconstructed data from the target domain. \(x\) and \(\hat {x}\) represent the original and reconstructed data, respectively, while \(\left\| {x - \hat {x}} \right\|_{2}^{2}\) refers to the squared \(L_2\) norm, and \({L_{s{i_{mse}}}}\) indicates the scale-invariant mean squared error. While traditional mean squared error is commonly used in reconstruction tasks, it penalizes predictions that are correct up to a scaling term. In contrast, scale-invariant mean squared error penalizes the discrepancies between frequency components, enabling the model to learn to reproduce the overall shape of the modeled object. This helps reduce the reconstruction error, ensuring that the reconstructed data closely aligns with the original input data.
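To make the difference from plain mean squared error concrete, the sketch below assumes the scale-invariant form commonly used in domain separation networks, \(\frac{1}{k}\left\| {x - \hat {x}} \right\|_{2}^{2} - \frac{1}{{{k^2}}}{\left( {\sum {\left( {x - \hat {x}} \right)} } \right)^2}\). Whether this matches the authors' equation term by term is an assumption, and the function name and example vectors are hypothetical.

```python
def si_mse(x, x_hat):
    """Scale-invariant MSE between an input x and its reconstruction x_hat.

    Assumed form: (1/k) * ||x - x_hat||^2 - (1/k^2) * (sum of element-wise errors)^2,
    where k is the number of elements in x.
    """
    k = len(x)
    diff = [a - b for a, b in zip(x, x_hat)]
    return sum(d * d for d in diff) / k - (sum(diff) ** 2) / (k * k)

x = [1.0, 2.0, 3.0, 4.0]
# Reconstruction with the right shape but a constant offset of 0.5.
offset_only = si_mse(x, [1.5, 2.5, 3.5, 4.5])
# Reconstruction with the right overall level but two elements swapped.
shape_error = si_mse(x, [1.0, 2.0, 4.0, 3.0])
```

Under this assumed form, a uniform offset is not penalized at all, while an error in the shape of the signal is, which is the behavior the paragraph above attributes to the scale-invariant term.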

Similarity weight calculation

To avoid negative transfer caused by misclassification, this study uses similarity weight calculation to learn the likelihood of a sample being shared. By capturing the similarity between cross-domain samples, this method attaches weights to the target domain samples, allowing the loss calculation to ignore unknown samples and align the known samples in both domains.

To effectively utilize class differentiation and cross-domain information for developing transferable metric weights, the known class classifier \({G_c}\) employs a leaky-softmax activation function35 as an auxiliary label predictor. This converts the features extracted by the encoder into an \(\left| {{C_s}} \right|\)-dimensional space, as shown in the formula below:

where \({z_c}\) represents the value corresponding to class c in the z-dimensional space, and the denominator includes a constant \(\left| {{C_s}} \right|\) to ensure that the outputs sum to less than 1. The higher the value for class c, the higher the confidence that the sample belongs to class c.

Based on the known source domain label information, the similarity weight \({w_1}\left( x \right)\) for an input sample is represented by summing the known class probabilities:

where \(G_{c}^{K}\left( {{G_{ae}}\left( x \right)} \right)\) is the probability that \(x\) belongs to the K-th known class. The sum of these probabilities serves as the similarity weight of the sample belonging to the shared labels of the source domain. For inputs similar to the source samples, the weight is high or close to 1, while for inputs that differ significantly from the source samples it is close to 0. Thus, \({w_1}\left( x \right)\) quantifies the transferability of cross-domain samples.
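The leaky-softmax and the resulting weight \({w_1}\left( x \right)\) can be sketched as below. The code assumes the form \(\exp \left( {{z_c}} \right)/\left( {\left| {{C_s}} \right| + \sum\nolimits_{c'} {\exp \left( {{z_{c'}}} \right)} } \right)\), which is consistent with the description of a constant \(\left| {{C_s}} \right|\) in the denominator; the function names and logit values are hypothetical.

```python
import math

def leaky_softmax(logits):
    """Leaky-softmax over the |C_s| known-class outputs of G_c.

    The constant |C_s| added to the denominator keeps the sum of outputs below 1.
    """
    n_classes = len(logits)
    exps = [math.exp(z) for z in logits]
    denom = n_classes + sum(exps)
    return [e / denom for e in exps]

def w1(logits):
    """Similarity weight: sum of the leaky-softmax known-class probabilities."""
    return sum(leaky_softmax(logits))

neutral = w1([0.0, 0.0, 0.0])             # no class stands out
source_like = w1([8.0, 0.0, 0.0])         # one known class responds strongly
unlike_source = w1([-8.0, -8.0, -8.0])    # no known class responds
```

With three known classes and all-zero logits, the weight is exactly 0.5; a strong response for any known class drives it toward 1, and uniformly weak responses drive it toward 0.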

The classifier \({G_c}\) is trained as a \(\left| {{C_s}} \right|\)-class classifier on the source domain class labels, as shown in Eq. (7):

where \(y_{{i,K}}^{s}\) stands for the one-hot encoded labels of the source domain samples. However, since this similarity measure is defined using only the source samples for training, it is difficult to determine \({w_1}\left( {{x_t}} \right)\) for the target samples. Therefore, it is necessary to further explore the intrinsic knowledge of the target domain to enhance the training effect.

To further learn the distributional changes of unknown target samples, the extended classifier \({G_{cu}}\) is used to measure the weight of shared target samples. The goal is to develop a weight metric \({w_2}\left( {{x_t}} \right)\) for each target sample based on distinguishing labels and domain information. To enhance the accuracy of similarity metric learning, the \(\left( {K+1} \right)\)-th dimension of the extended classifier \({G_{cu}}\) is utilized to measure the similarity probability weight \({w_2}\left( {{x_t}} \right)\) of the target sample belonging to the shared space. Target domain samples belonging to the shared classes yield higher \({w_2}\left( {{x_t}} \right)\) values than samples of the unknown target class, as depicted in Eq. (8):

Based on the domain adaptation framework, \({G_c}\) is used to evaluate the similarity of each class in the target and source domains, and \({G_{cu}}\) is used to assess the probability that a target sample belongs to an unknown class. By obtaining the weight information of the target samples and combining \({G_c}\) and \({G_{cu}}\), the similarity measure is derived.

The higher the corresponding \(w\left( {{x_t}} \right)\) value, the greater the probability that the sample belongs to a shared class and should be aligned with the known classes. Conversely, if the similarity value \(w\left( {{x_t}} \right)\) is close to 0, the sample likely belongs to the unknown class and will not be aligned with any known class.
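The following sketch illustrates how the two weights might be computed and combined for one target sample. Both formulas are assumptions made for illustration only: \({w_2}\) is taken as one minus the \(\left( {K+1} \right)\)-th output of \({G_{cu}}\), and the combined weight as the product of \({w_1}\) and \({w_2}\). The text does not state either form explicitly, and the function names are hypothetical.

```python
def w2(extended_probs):
    """Assumed form: 1 minus the unknown-class probability (last of the K + 1 outputs)."""
    return 1.0 - extended_probs[-1]

def combined_weight(w1_value, w2_value):
    """Assumed combination rule: product of the two similarity weights."""
    return w1_value * w2_value

# Hypothetical target sample that resembles the shared classes.
shared_like = combined_weight(0.9, w2([0.3, 0.3, 0.3, 0.1]))
# Hypothetical target sample that looks like an unknown fault.
unknown_like = combined_weight(0.2, w2([0.05, 0.05, 0.1, 0.8]))
```

Under these assumptions, the first sample receives a weight near 0.81 and takes part in alignment, while the second receives a weight near 0.04 and is effectively excluded from it.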

Closed-set domain adaptation typically uses domain loss training methods to align shared samples between two domains. This training strategy is unsuitable for open-set problems, as unknown classes can lead to incorrect alignment. This study employs multiple classifiers and designs the “similarity weight” metric to quantify the similarity between the target and source domains. To ensure that the assigned weights better reflect the domain distribution, we further train the auxiliary domain discriminator \({G_{ad}}\) using Eq. (10). This process promotes the allocation of distinct known and unknown class label information weights to shared and unknown target samples, respectively. During training, the auxiliary domain discriminator \({G_{ad}}\) converges to an optimal value, further evaluating the input target sample weights.

where \({G_{ad}}\left( {{G_{ae}}\left( {x_{i}^{s}} \right)} \right)\) is the weight assigned to the source sample, and \(d_{i}^{s}\) is 0 because the sample belongs to the source domain. Similarly, \({G_{ad}}\left( {{G_{ae}}\left( {x_{j}^{t}} \right)} \right)\) represents the weight assigned to the corresponding target domain sample, and \(d_{j}^{t}\) is set to 1 because the sample belongs to the target domain.

Establishing the boundary for unknown classes

The binary cross-entropy loss function is introduced to learn an accurate hyperplane that separates known and unknown samples, thereby establishing the boundary for unknown classes. Unlike the OSBP method36, which sets a predefined boundary threshold to distinguish between shared and private labels, this study uses the combined similarity weight values \(w\left( {{x_t}} \right)\) to identify unknown classes more precisely. This method further learns potential domain information, optimizing the boundary establishment process by incorporating the estimated combined similarity weight measure \(w\left( {x_{j}^{t}} \right)\) for target domain samples, as shown below.

Through this learning process, the shared parameters obtained during training extend the classifier \({G_{cu}}\) from source samples to both shared and private target samples. Its output includes the probability that an input sample belongs to the unknown class. Therefore, to determine whether an input sample belongs to any known class or to the unknown class, it is only necessary to use the output probability from the extended classifier \({G_{cu}}\) during the testing phase.
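The test-time decision described above amounts to taking the most probable of the K + 1 outputs of \({G_{cu}}\). A minimal sketch follows, assuming the unknown class occupies the last output position; the function name and probability values are hypothetical.

```python
def diagnose(extended_probs, num_known):
    """Return the predicted known-class index, or "unknown" for the (K+1)-th output.

    extended_probs -- K + 1 probabilities produced by the extended classifier
    num_known      -- K, the number of known (shared) classes
    """
    if len(extended_probs) != num_known + 1:
        raise ValueError("expected K + 1 probabilities")
    best = max(range(len(extended_probs)), key=extended_probs.__getitem__)
    return "unknown" if best == num_known else best

known_case = diagnose([0.1, 0.7, 0.1, 0.1], num_known=3)
unknown_case = diagnose([0.1, 0.1, 0.1, 0.7], num_known=3)
```

No separate threshold is applied at this stage in the sketch, since the rejection boundary is assumed to have been learned during training.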

Experiments

Problem statement

Domain adaptation under the open-world assumption involves a labeled source domain set \({D_s}=\left( {x_{i}^{s},y_{i}^{s}} \right)_{{i=1}}^{{{n_s}}}\), consisting of \({C_s}\) classes drawn from distribution \({p_s}\), and an unlabeled target domain set \({D_t}=\left( {x_{j}^{t}} \right)_{{j=1}}^{{{n_t}}}\), consisting of \({C_t}\) classes drawn from distribution \({p_t}\). Here, \({p_s} \ne {p_t}\) and \({C_s} \subset {C_t}\); \(C={C_s} \cap {C_t}\) denotes the shared label set and \(\bar {C}={C_t}\backslash {C_s}\) the private label set. The goal is to use the source domain knowledge to accurately classify target samples from the shared label set, despite the different distributions, and to accurately identify the private target data. In this context, the K shared fault classes of the source and target domains are the known classes, while the private target classes are grouped into a single \(\left( {K+1} \right)\)-th unknown class.
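The label-set relations above can be illustrated with a small example. The split below, in which the rolling element fault appears only in the target domain, is hypothetical and is not one of the transfer tasks defined later.

```python
# Hypothetical open-set task using the bearing state abbreviations from the datasets.
source_classes = {"H", "IF", "OF"}
target_classes = {"H", "IF", "OF", "BF"}

# Open-set condition: the source label set is a proper subset of the target label set.
is_open_set = source_classes < target_classes

shared = source_classes & target_classes    # C = C_s ∩ C_t
private = target_classes - source_classes   # C̄ = C_t \ C_s

# Known classes take indices 0..K-1; every private class maps to index K (unknown).
num_known = len(shared)
label_index = {name: i for i, name in enumerate(sorted(shared))}
label_index.update({name: num_known for name in private})
```

In this example K equals 3, and any target sample with a rolling element fault should be assigned to the unknown index 3.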

Dataset description

To thoroughly evaluate the diagnostic performance of the proposed method, this study introduces three rolling bearing datasets for experimental validation: the Case Western Reserve University (CWRU) dataset37, Southeast University (SEU) dataset38, and Padua University (PU) dataset39. The descriptions of the three datasets are as follows:

CWRU Bearing Dataset37: The experimental setup for the CWRU bearing dataset is shown in Fig. 4. It consists of a 2-horsepower motor (on the left), a torque sensor/encoder (in the middle), and a dynamometer (on the right). The collected vibration signals come from the drive end of the motor on the test bench. The dataset contains four operational states: (1) Normal state (H), (2) Inner race fault (IF), (3) Outer race fault (OF), and (4) Rolling element fault (BF). For IF, OF, and BF, three different fault severities are considered (0.007 inches, 0.014 inches, and 0.021 inches), resulting in ten categories: one normal state and nine fault categories. The signals are sampled at a frequency of 12 kHz and collected under four different motor loads and speeds, corresponding to the following operating conditions: Condition 1: 0 hp / 1797 rpm, Condition 2: 1 hp / 1772 rpm, Condition 3: 2 hp / 1750 rpm, and Condition 4: 3 hp / 1730 rpm. This provides four sets of data with different distributions.

CWRU bearing test bench.

SEU bearing test bench.

SEU Bearing Dataset38: The experimental setup for the SEU bearing dataset is shown in Fig. 5. It includes a motor controller, motor, planetary gearbox, parallel gearbox, brake, and brake controller. Four bearing states are defined: (1) Normal state (H), (2) Inner race fault (IF), (3) Outer race fault (OF), and (4) Rolling element fault (BF). The signals are sampled at a frequency of 12 kHz and collected under two different motor speeds and loads, corresponding to the following operating conditions: Condition 1: 20 Hz − 0 V, and Condition 2: 30 Hz − 2 V. This results in two datasets with different distributions.

PU bearing test bench.

PU Bearing Dataset39: The experimental setup for the PU bearing dataset is shown in Fig. 6, which includes a test motor, measuring shaft, bearing module, flywheel, and load motor. The dataset is sourced from 32 rolling bearings, consisting of 6 normal bearings, 12 artificially damaged bearings, and 14 bearings damaged through accelerated life testing. In this study, three bearing states are selected: (1) Normal state (H), (2) Inner race fault (IF), and (3) Outer race fault (OF). The signals are sampled at a frequency of 64 kHz, with data collected under four different operating conditions. The detailed information for the four operating conditions is provided in Table 2.

Because frequency-domain information is often more sensitive to the health status of machinery and generally leads to better fault diagnosis performance, this study converts the time-domain vibration data of the bearings into frequency-domain data using the Fast Fourier Transform (FFT). For each dataset, raw signal segments of 4096 samples are selected and transformed by FFT into 4096 Fourier coefficients. As the coefficients are symmetric, only 2048 coefficients are retained per sample. With 100 samples per category in the CWRU, SEU, and PU bearing datasets, each category of time-domain data is thus converted into frequency-domain data with dimensions of 100 × 2048.
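The preprocessing step can be sketched as follows. To stay self-contained, the example uses a small recursive radix-2 FFT written with the standard library; in practice an optimized FFT routine would normally be used. Taking the magnitude of the coefficients and omitting normalization are assumptions, as are the function names and the synthetic test signal.

```python
import cmath
import math

def fft(signal):
    """Recursive radix-2 Cooley-Tukey FFT; the length must be a power of two."""
    n = len(signal)
    if n == 1:
        return [complex(signal[0])]
    even = fft(signal[0::2])
    odd = fft(signal[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out

def to_frequency_sample(segment):
    """Convert one time-domain segment into its first-half magnitude spectrum."""
    spectrum = fft(segment)
    half = len(segment) // 2  # the spectrum of a real signal is symmetric
    return [abs(c) for c in spectrum[:half]]

# Synthetic 4096-point segment: a pure tone completing 50 cycles over the window.
n = 4096
segment = [math.sin(2 * math.pi * 50 * t / n) for t in range(n)]
sample = to_frequency_sample(segment)
peak_bin = max(range(len(sample)), key=sample.__getitem__)
```

Each 4096-point segment yields a 2048-element vector, so stacking 100 such vectors gives the 100 × 2048 array per category described above.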

To evaluate the performance of the proposed algorithm framework, nine migration tasks were set for the CWRU dataset, nine for the JNU dataset, and eight for the SEU dataset. The specific tasks are outlined in Tables 3, 4 and 5.

Experimental results analysis

To clearly demonstrate the diagnostic results of the proposed method across various transfer tasks, we use K to represent the diagnostic accuracy for known classes, U for unknown classes, and F1 for overall diagnostic accuracy. The diagnostic results for each dataset are shown in Tables 6, 7 and 8.
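As a rough illustration of the K and U metrics, the sketch below takes K as the fraction of known-class target samples that are classified correctly and U as the fraction of unknown-class target samples that are rejected as unknown. These definitions, the function name, and the toy labels are assumptions; the aggregation into F1 is not specified in the text and is therefore not computed here.

```python
def known_unknown_accuracy(y_true, y_pred, unknown_label):
    """Return (K, U): accuracy on known-class samples and on unknown-class samples."""
    known = [(t, p) for t, p in zip(y_true, y_pred) if t != unknown_label]
    unknown = [(t, p) for t, p in zip(y_true, y_pred) if t == unknown_label]
    k_acc = sum(t == p for t, p in known) / len(known)
    u_acc = sum(t == p for t, p in unknown) / len(unknown)
    return k_acc, u_acc

# Toy target set with three known classes (0, 1, 2) and unknown label 3.
y_true = [0, 0, 1, 1, 2, 2, 3, 3]
y_pred = [0, 0, 1, 2, 2, 2, 3, 0]
k_acc, u_acc = known_unknown_accuracy(y_true, y_pred, unknown_label=3)
```

In this toy case five of the six known-class samples are correct and one of the two unknown samples is rejected, so K is 5/6 and U is 0.5.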

As shown in Table 6, the test accuracy of the CWRU dataset across different tasks is presented, with results for target data sharing the healthy state with the source domain, target outlier data, and overall performance. It is evident that, except for the relatively lower unknown class recognition result in task C5, the classification accuracy for known classes and recognition of unknown classes in the other tasks all exceeded 96.7%, demonstrating high test accuracy. In task C5, the known classes only include the healthy state and two types of outer race faults. As a result, the model can only learn features from the known classes, mastering the distribution patterns between the outer race faults and the healthy state. However, due to the lack of prior knowledge of inner race faults and rolling element faults, the discriminative power for the remaining seven classes is insufficient in the feature space. Additionally, inner race and rolling element faults each contain three different severity levels, and the pattern differences between these faults further exacerbate classification confusion. For the SEU and PU datasets, which exhibit more complex distributions compared to the CWRU dataset, the overall experimental accuracy is slightly lower than that of the CWRU dataset. Nevertheless, the accuracy across most tasks exceeds 90%, with only a few exceptions. The experimental results from these three datasets largely fulfill the objectives of this study, achieving accurate classification of known class data and good recognition ability for unknown classes under the open-set domain adaptation framework.

To further validate the proposed open-world assumption-based domain adaptation method for bearing fault diagnosis, five open-set domain adaptation methods are introduced for comparison. The details of the five methods are as follows:

OSBP36: An open-set domain adaptation technique that trains both a classifier and a generator. The classifier distinguishes known and unknown samples based on a predefined threshold, while the generator produces unknown samples that can deceive the classifier.

Improved OSBP40: Built upon OSBP, this method replaces the binary cross-entropy loss with the symmetric Kullback-Leibler (KL) divergence loss.

STA41: Utilizes a multi-level weighted strategy to separate known and unknown samples and improves alignment by evaluating the contribution of different features to distribution alignment.

DODAN42: Determines the boundaries of each category by detecting outliers and applies an adversarial mechanism to transfer fault knowledge for known categories.

ANMAC28: Employs multiple auxiliary classifiers to identify unknown categories in the target domain and introduces a weighting scheme to improve alignment for known categories.

The experimental results are presented in Figs. 7, 8 and 9, which show a comparison of the F1 scores for the proposed method and the five existing methods. As observed, the proposed method demonstrates a significant performance advantage in most tasks, especially in tasks with large domain distribution differences (e.g., tasks C0 and S3), where the improvement in F1 scores is particularly notable. This indicates that the proposed method exhibits stronger adaptability when addressing cross-domain distribution changes and challenges posed by unknown category samples. Compared to OSBP, Improved OSBP, STA, and DODAN, the proposed method achieves higher precision in aligning shared samples and isolating unknown categories through dynamic similarity weight computation and unknown category boundary optimization. This effectively reduces the occurrence of negative transfer. Although OSBP and Improved OSBP enhance the detection capability of unknown category samples by incorporating additional category distribution information, they fall short in aligning the shared sample space in the target domain, leading to class overlap issues in high-dimensional feature spaces. While STA and DODAN demonstrate good classification ability in certain tasks, their fixed-threshold boundary construction strategy lacks dynamic adjustment capabilities, causing performance degradation in tasks with complex label distributions. In contrast, the proposed method, through dynamic similarity weight computation and joint classifier optimization, can more flexibly adapt to the complex distribution characteristics of target domain samples. Compared to the latest fault diagnosis method, ANMAC, the proposed method not only achieves comparable performance but also outperforms it in some tasks. 
The comparison with state-of-the-art algorithms demonstrates that the proposed method can effectively tackle the challenges in complex open-world scenarios and provides a new solution for cross-domain fault diagnosis in practical industrial applications.

In conclusion, the superiority of the proposed method lies in its ability to address the following key aspects: (1) The combination of similarity weights and the dynamic boundary division strategy of the extended classifier enables more precise alignment and isolation of target domain samples. (2) The dynamic adjustment of the weight mechanism and the optimization of unknown class probabilities significantly reduce the negative transfer caused by distribution differences and unknown classes. (3) The superior performance across multiple tasks and datasets demonstrates the strong cross-scenario applicability and stability of the proposed method.

Accuracy of transfer tasks on the CWRU dataset using different methods.

Accuracy of transfer tasks on the SEU dataset using different methods.

Accuracy of transfer tasks on the PU dataset using different methods.

The confusion matrices of all methods on task C0.

To visually compare the diagnostic results of all compared methods, confusion matrices for tasks C0, S0, and P0 are presented in Figs. 10, 11 and 12. The proposed method achieves an accuracy of approximately 90% in identifying known categories, while its recognition accuracy for unknown categories is 100%, 100%, and 96% on the three tasks, respectively. This performance significantly exceeds that of the OSBP, Improved OSBP, STA, and DODAN methods. Compared to the latest fault diagnosis method, ANMAC, the proposed method demonstrates superior recognition capability for unknown categories.
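The two quantities read off the confusion matrices above, known-category accuracy and unknown-category recognition rate, can be derived as in the following minimal sketch. The label encoding (integers for known classes, with 3 standing for the unknown category) and the toy predictions are hypothetical and do not reproduce the reported figures.

```python
def confusion_matrix(y_true, y_pred, labels):
    """Rows are true labels, columns are predicted labels."""
    index = {c: i for i, c in enumerate(labels)}
    matrix = [[0] * len(labels) for _ in labels]
    for t, p in zip(y_true, y_pred):
        matrix[index[t]][index[p]] += 1
    return matrix


def known_and_unknown_rates(matrix):
    """Assumes the last row and column correspond to the unknown category."""
    known_rows = matrix[:-1]
    known_correct = sum(row[i] for i, row in enumerate(known_rows))
    known_total = sum(sum(row) for row in known_rows)
    unknown_row = matrix[-1]
    unknown_total = sum(unknown_row)
    known_acc = known_correct / known_total if known_total else 0.0
    unknown_rate = unknown_row[-1] / unknown_total if unknown_total else 0.0
    return known_acc, unknown_rate


# Hypothetical toy task: known classes 0 and 1, unknown category encoded as 3.
labels = [0, 1, 3]
y_true = [0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 3, 3, 3, 3]
y_pred = [0, 0, 0, 0, 1, 1, 1, 1, 1, 0, 3, 3, 3, 3]

cm = confusion_matrix(y_true, y_pred, labels)
known_acc, unknown_rate = known_and_unknown_rates(cm)
print(cm, known_acc, unknown_rate)
```

Reporting the two rates separately is useful because a method can score well on one while failing on the other, for example by rejecting too many known samples as unknown.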

The confusion matrices of all methods on task S0.

The confusion matrices of all methods on task P0.

Feature visualization

To illustrate the feature extraction process of the proposed method, this study uses t-SNE to visualize the extracted features and thereby assess the method’s capability in handling open-set tasks. The visualization results are shown in Figs. 13, 14 and 15. Panels (a)-(c) display the source and target domain data before feature extraction, while panels (d)-(f) show the source and target domain features after distribution alignment. Three tasks are illustrated for each dataset: C0, C4, C8, S0, S3, S5, F0, F1, and F3. Comparing the original data with the distribution-aligned features across these panels reveals the following key observations: (1) Separation of raw data: In panels (a)-(c), source and target domain samples of the same class are spatially separated, as though they belonged to distinct categories. This demonstrates a significant distribution discrepancy between the source and target domains before feature extraction and distribution alignment. (2) Overlap after distribution alignment: In panels (d)-(f), following distribution alignment, samples of the same class from the source and target domains overlap in the feature space and are therefore treated as the same category. This shows that the proposed method effectively mitigates distribution differences between domains, so that same-class data cluster together in the feature space. (3) Clustering effect of unknown classes: The figures also show that all unknown class data form their own clusters without overlapping with known class data. This further confirms that the proposed method not only identifies the known portion of the target domain but also separates the unknown portion with high precision.

These visualization results support the conclusion that the proposed method excels in open-set tasks by effectively identifying and classifying data in the target domain, achieving precise diagnostics. Overall, these findings highlight the robust capabilities of the proposed method in feature extraction and distribution alignment, as well as its potential in practical applications.

Visualization results on the CWRU dataset.

Visualization results on the SEU dataset.

Visualization results on the PU dataset.

To evaluate the effectiveness of the designed adaptive weight allocation strategy, the weights learned for each fault category are presented as bar charts in Figs. 16, 17 and 18. The transfer tasks for each dataset are the same as those used in the preceding visualization analysis. The three sets of figures show that the adaptive weight allocation strategy assigns significantly higher weights to common categories than to private categories. For example, in Fig. 16, the weights for common categories in the three tasks are close to or exceed 0.4, while the weights for private categories are around 0.2, a clear difference. These results demonstrate that the designed adaptive weight allocation strategy effectively distinguishes between common and private category data, which validates the use of the weight allocation mechanism to separate the shared and private parts of the target domain.
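The per-category averages and deviations shown in such bar charts can be summarized as in the sketch below. The category names and weight values are hypothetical and were chosen only to mirror the magnitudes quoted above (roughly 0.4 for common categories and 0.2 for private ones); the use of the sample standard deviation is likewise an assumption.

```python
from statistics import mean, stdev

# Hypothetical similarity weights collected over three repeated runs.
weights_by_category = {
    "common_1": [0.41, 0.39, 0.43],
    "common_2": [0.40, 0.42, 0.38],
    "private_1": [0.21, 0.19, 0.20],
}

# Mean and sample standard deviation per category.
summary = {
    name: (mean(values), stdev(values))
    for name, values in weights_by_category.items()
}

# Simple separation check: every common mean exceeds every private mean.
common_means = [m for name, (m, _) in summary.items() if name.startswith("common")]
private_means = [m for name, (m, _) in summary.items() if name.startswith("private")]
well_separated = min(common_means) > max(private_means)

for name, (m, s) in summary.items():
    print(f"{name}: mean={m:.3f}, std={s:.3f}")
print("common and private categories separated:", well_separated)
```

A gap between the smallest common mean and the largest private mean that is large relative to the deviations is what makes the weights usable for telling shared and private categories apart.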

Average values and deviations of weights on the CWRU dataset.

Average values and deviations of weights on the SEU dataset.

Average values and deviations of weights on the PU dataset.

Conclusion

This study proposes a domain-adversarial diagnostic framework for rolling bearing fault diagnosis under varying operational conditions within the open-world assumption. By utilizing a joint mechanism of a known-class classifier and an extended classifier, the model estimates the similarity between target domain samples and shared classes from the source domain, assigning similarity weights to each target domain sample. This mechanism effectively aligns and classifies the known classes in the target domain while simultaneously identifying the unknown classes. Experimental results demonstrate that the proposed method exhibits superior performance across multiple task scenarios, significantly improving both the classification of known fault classes and the recognition of unknown fault classes under the open-world assumption.

Despite these significant achievements, some limitations remain. First, the framework is somewhat reliant on the alignment of feature distributions between the target and source domains’ shared classes. When the source domain’s knowledge is insufficient, the performance of identifying unknown target domain classes may degrade. Second, the method’s applicability is limited when there are substantial distribution differences between the source and target domains. Additionally, while the proposed method has been validated on the CWRU, SEU, and PU datasets, which cover typical operating conditions, its scalability in more complex or unknown scenarios still needs further validation. Furthermore, the method involves multiple steps, including feature extraction, domain alignment, and classifier training, leading to relatively high computational costs. It requires further optimization for deployment on resource-constrained edge devices.

Future work will explore the integration of graph networks, contrastive learning, and self-supervised learning techniques to enhance the feature representation of target domain samples. Additionally, a multi-source domain transfer learning model will be developed, integrating information from multiple source domains to improve diagnostic accuracy. Moreover, models capable of online updates will be developed to adapt to dynamically changing operational conditions, further enhancing the method’s practical applicability in industrial environments.

Data availability

All data used in the paper can be found in https://github.com/cathysiyu/Mechanical-datasets.

References

Zhao, K., Liu, Z., Zhao, B. & Shao, H. Class-aware adversarial multiwavelet convolutional neural network for Cross-domain Fault diagnosis. IEEE Trans. Ind. Inf. 20, 4492–4503 (2024).

Chen, X. et al. Bearing remaining useful life prediction using client selection and personalized aggregation enhancement in Federated Learning[J]. IEEE Internet Things J. 11 (24), 40888–40896 (2024).

Liu, Z. et al. Reinforced fuzzy domain adaptation: revolutionizing data-unaccessible rotating machinery fault diagnosis across multiple domains[J]. Expert Syst. Appl. 252, 124094 (2024).

Dong, Y. et al. Rolling bearing intelligent fault diagnosis towards variable speed and imbalanced samples using multiscale dynamic supervised contrast learning[J]. Reliab. Eng. Syst. Saf. 243, 109805 (2024).

Upadhyay, N. & Chourasiya, S. K. Extreme learning machine and ensemble techniques for classification of rolling element bearing defects[J]. Life Cycle Reliab. Saf. Eng. 11 (2), 189–201 (2022).

Wei, H. et al. Extreme learning Machine-based classifier for fault diagnosis of rotating Machinery using a residual network and continuous wavelet transform[J]. Measurement 183, 109864 (2021).

Liu, Y. et al. Counterfactual-augmented few-shot contrastive learning for machinery intelligent fault diagnosis with limited samples[J]. Mech. Syst. Signal Process. 216, 111507 (2024).

Zhou, F. et al. Fault diagnosis based on federated learning driven by dynamic expansion for model layers of imbalanced client[J]. Expert Syst. Appl. 238, 121982 (2024).

Zhao, K. et al. Self-paced decentralized federated transfer framework for rotating machinery fault diagnosis with multiple domains[J]. Mech. Syst. Signal Process. 211, 111258 (2024).

Lu, H. et al. Federated learning with uncertainty-based client clustering for fleet-wide fault diagnosis[J]. Mech. Syst. Signal Process. 210, 111068 (2024).

Li, Z. et al. Intelligent fault diagnosis under imbalanced multivariate working conditions leveraging dynamic unsupervised domain adaptation with sample and margin regularization[J]. Meas. Sci. Technol. 35 (7), 076128 (2024).

Ren, X. et al. Multi-source domain self-supervised enhanced transfer fault diagnosis approach with source sample refinement strategy[J]. Reliab. Eng. Syst. Saf. 110380 (2024).

Shen, J. et al. Fedled: label-free equipment fault diagnosis with vertical federated transfer learning. IEEE Trans. Instrum. Meas. 73, 1–10 (2024).

Chen, C. et al. A cross domain feature extraction method based on transfer component analysis for rolling bearing fault diagnosis[C]//2017 29th Chinese Control and Decision Conference (CCDC). IEEE, 5622–5626 (2017).

Zhao, K. et al. Joint distribution adaptation network with adversarial learning for rolling bearing fault diagnosis[J]. Knowl. Based Syst. 222, 106974 (2021).

Qian, Q. et al. Maximum mean square discrepancy: a new discrepancy representation metric for mechanical fault transfer diagnosis[J]. Knowl. Based Syst. 276, 110748 (2023).

Qian, W., Li, S. & Jiang, X. Deep transfer network for rotating machine fault analysis[J]. Pattern Recogn. 96, 106993 (2019).

Wang, X., He, H. & Li, L. A hierarchical deep domain adaptation approach for fault diagnosis of power plant thermal system[J]. IEEE Trans. Industr. Inf. 15 (9), 5139–5148 (2019).

Wan, B. et al. Thermoelectric monitoring main bearing using TrAdaboost learning algorithm in diesel engine[C]//2023 IEEE 18th Conference on Industrial Electronics and Applications (ICIEA). IEEE, 1321–1326 (2023).

Zhou, H. et al. Cross-domain intelligent fault diagnosis of rolling bearing based on distance metric transfer learning[J]. Adv. Mech. Eng. 14 (11), 16878132221135740 (2022).

Zhang, R. et al. Transfer learning with neural networks for bearing fault diagnosis in changing working conditions[J]. IEEE Access. 5, 14347–14357 (2017).

Hasan, M. J. & Kim, J. M. Bearing fault diagnosis under variable rotational speeds using stockwell transform-based vibration imaging and transfer learning[J]. Appl. Sci. 8 (12), 2357 (2018).

Shao, S. et al. Highly accurate machine fault diagnosis using deep transfer learning[J]. IEEE Trans. Industr. Inf. 15 (4), 2446–2455 (2018).

Ganin, Y. et al. Domain-adversarial training of neural networks[J]. J. Mach. Learn. Res. 17 (59), 1–35 (2016).

Luo, J., Huang, J. & Li, H. A case study of conditional deep convolutional generative adversarial networks in machine fault diagnosis[J]. J. Intell. Manuf. 32 (2), 407–425 (2021).

Guo, L. et al. Deep convolutional transfer learning network: a new method for intelligent fault diagnosis of machines with unlabeled data[J]. IEEE Trans. Industr. Electron. 66 (9), 7316–7325 (2018).

Chen, Z. et al. A multi-source weighted deep transfer network for open-set fault diagnosis of rotary machinery[J]. IEEE Trans. Cybernetics. 53 (3), 1982–1993 (2022).

Zhu, J. et al. Cross-domain open-set machinery fault diagnosis based on adversarial network with multiple auxiliary classifiers[J]. IEEE Trans. Industr. Inf. 18 (11), 8077–8086 (2021).

Jian, C. et al. Open-set domain generalization for fault diagnosis through data augmentation and a dual-level weighted mechanism[J]. Adv. Eng. Inform. 62, 102703 (2024).

San Martin, G. et al. Deep variational auto-encoders: a promising tool for dimensionality reduction and ball bearing elements fault diagnosis[J]. Struct. Health Monit. 18 (4), 1092–1128 (2019).

Dave, V. et al. Deep learning-enhanced small-sample Bearing Fault Analysis using Q-Transform and HOG image features in a GRU-XAI Framework[J]. Machines 12 (6), 373 (2024).

Kahr, M. et al. Condition monitoring of ball bearings based on machine learning with synthetically generated data[J]. Sensors 22 (7), 2490 (2022).

Zhao, K., Jia, F. & Shao, H. A novel conditional weighting transfer Wasserstein auto-encoder for rolling bearing fault diagnosis with multi-source domains[J]. Knowl. Based Syst. 262, 110203 (2023).

Wang, X. et al. A trackable multi-domain collaborative generative adversarial network for rotating machinery fault diagnosis[J]. Mech. Syst. Signal Process. 224, 111950 (2025).

Cao, Z. et al. Learning to transfer examples for partial domain adaptation[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2985–2994 (2019).

Saito, K. et al. Open set domain adaptation by backpropagation[C]//Proceedings of the European Conference on Computer Vision (ECCV), 153–168 (2018).

Case Western Reserve University Bearing Dataset. Available online: https://engineering.case.edu/bearingdatacenter (accessed on 3 July 2020).

SEU bearing datasets. [Online], Available: https://github.com/cathysiyu/Mechanical-datasets (accessed 2019, September).

Lessmeier, C. et al. Condition Monitoring of Bearing Damage in Electromechanical Drive Systems by Using Motor Current Signals of Electric Motors: A Benchmark Data Set for Data-Driven Classification. European Conference of the Prognostics and Health Management Society, Bilbao (Spain) (2016). [Online]. Available: mb.uni-paderborn.de/kat/datacenter

Fu, J. et al. Improved open set domain adaptation with backpropagation[C]//2019 IEEE International Conference on Image Processing (ICIP). IEEE, 2506–2510 (2019).

Liu, H. et al. Separate to adapt: Open set domain adaptation via progressive separation[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2927–2936 (2019).

Su, Y. et al. A Novel Open Set Adaptation Network for Marine Machinery Fault Diagnosis[J]. J. Mar. Sci. Eng. 12 (8), 1382 (2024).

Acknowledgements

This research is supported by the Natural Science Foundation of Shaanxi Province of China (2023-JC-YB-547).

Author information

Contributions

Writing (original draft), Tongfei Lei; software, Feng Pan; validation, Jiabei Hu; writing (review and editing), Xu He; conceptualization, Bing Li. All authors have read and agreed to the published version of the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Lei, T., Pan, F., Hu, J. et al. A fault diagnosis method for rolling bearings in open-set domain adaptation with adversarial learning. Sci Rep 15, 10793 (2025). https://doi.org/10.1038/s41598-025-88353-1

DOI: https://doi.org/10.1038/s41598-025-88353-1
