Abstract

Artificial intelligence has significantly accelerated the development of hydrological forecasting. However, research on how to efficiently identify the physical characteristics of runoff sequences and develop forecasting models that simultaneously address both global and local features of the sequences is still lacking. To address these issues, this study proposes a new PCPFN (PolyCyclic Parallel Fusion Network) prediction model that leverages the multi-periodic characteristics of runoff sequences and shares global features through a dual-architecture parallel computation approach. Unlike existing models, the PCPFN model can extract both the periodic and trend-based evolution features of runoff sequences. It constructs a multi-feature set in a “sequence-to-sequence” manner and employs a parallel structure of an Encoder and BiGRU (Bidirectional Gated Recurrent Unit) to simultaneously capture changes in both local, adjacent features and global characteristics, ensuring comprehensive attention to the sequence features. When predicting runoff data for three different hydrological conditions, the PCPFN model achieved R2 values of 0.97, 0.98, and 0.97, respectively, with other evaluation indicators significantly outperforming the benchmark models. Additionally, due to the opacity in feature distribution processes of AI models, SHAP (Shapley Additive exPlanations) analysis was used to evaluate the contribution of each feature variable to long-term runoff trends. The proposed PCPFN model, during parallel computation, not only utilizes the intrinsic features of sequences and efficiently handles the distribution of local and global features but also shares predictive information in the output module, achieving accurate runoff forecasting and providing crucial references for timely warning and forecasting.

Similar content being viewed by others

Introduction

Short-term runoff forecasting plays a crucial role in water resource development and management1,2, the operation and maintenance of hydraulic engineering3, and flood and drought disaster prevention4,5. However, due to the complex influence of global climate change6 and human activities7, extreme floods occur frequently8,9,10, and the evolutionary pattern of the runoff process has become increasingly complex, resulting in the forecast results of short-term runoff being difficult to meet the real needs within the practical accuracy range. Therefore, it is essential to consider hydrometeorological and physical characteristics in achieving accurate runoff forecasts.

To improve the accuracy of runoff forecasting, scholars have developed a series of technical methods, primarily focusing on data pre-processing and model selection (evaluation and improvement).

Related research on data preprocessing techniques

Pre-processing techniques include the selection of various influencing factors11,12, the identification of evolution patterns13,14, and the treatment of sequence noise15,16, etc. With the rapid development of monitoring technology, most watersheds now have comprehensive hydrological observation facilities that can collect essential data on watershed surface conditions, rainfall, temperature, and evaporation to meet the requirements of forecasting tasks17,18, by leveraging these feature elements, more information options can be provided for the forecasting task, enabling accurate runoff prediction19,20. However, some watersheds globally still have sparse hydrological stations and limited monitoring equipment, making it challenging to obtain hydrological data17 effectively. For example, in this study21, the Nepal watershed had to rely on limited rainfall and temperature data for hydrological simulation due to the scarcity of hydrological stations and sensors. In another study22, researchers used a range of hydrometeorological factors and developed an “encoder-decoder” based Long Short-Term Memory (LSTM) network model to address flood forecasting in data-scarce regions worldwide. Considering that all factors influencing runoff changes ultimately feedback into the runoff evolution23,24, focusing on extracting intrinsic features from a single runoff sequence is both valuable and efficient. To identify runoff evolution patterns and obtain sequence characteristics, many studies have adopted the “decomposition-prediction-reconstruction” model25,26, opening new avenues for improving hydrological forecast accuracy. However, sequences processed by decomposition techniques still exhibit extreme noise for different runoff periods. Therefore, this study27 extracted hydrological signal features using two decomposition methods or performed high and low-frequency feature selection on the decomposed sequences28 to achieve secondary denoising. These data pre-processing methods provide effective means for attaining high-accuracy runoff forecasts. Decomposition methods typically highlight periodic, trend, and seasonal features in time series. In practical applications, runoff prediction models that account for multiple periodic characteristics are better suited to capture the dynamics of hydrological systems, leading to improved accuracy and reliability. The “decompose-predict-reconstruct” approach often underutilizes the diverse feature components. Converting multiple periodic components into a “multi-feature set” enhances the model’s ability to learn interactions between features and boosts prediction efficiency.

Related research on model evaluation and improvement techniques

Research on model evaluation and improvement techniques can reflect the applicability of different forecasting models and the advantageous effects of considering different improvement techniques29,30. Process-driven models, as the prior foundation of model research, are often used in hydrological forecasting tasks. Conceptual models (such as the Xinanjiang Model31 are constructed based on a deep understanding of the mechanisms of watershed hydrological processes, predicting future runoff conditions by simulating components of the hydrological cycle like rainfall, evaporation, and soil moisture content. Distributed hydrological models (such as the SWAT Model32) overcome the limitations of computational capacity and data collection, clearly presenting the spatial variation of hydrological elements, thus addressing the shortcomings of conceptual hydrological models in considering spatial characteristics.

Currently, theoretical research on the hydrological cycle process is not perfect, and unknown datasets and unclear quantitative relationships still exist in some aspects. This directly results in process-driven hydrological models being constrained by time and space, with their internal structures unable to generate relative responses, leading to high uncertainty in simulation results33. Data-driven hydrological models do not need to consider complex hydrological cycle processes34, and they focus on the relationships among data, establishing hydrological models by analyzing the relationships between runoff and precipitation, effectively covering unexplored physical relationships and making flow predictions more effective35. Depending on the complexity of the model, data-driven models are classified into three types: traditional single-model algorithms Support Vector Machine36, neural networks (Back Propagation Neural Network37), and ensemble learning (Wavelet Networks38. At the present stage, neural network models are common data-driven models for flood forecasting. Many scholars use gated recurrent units (GRU) to handle hydrological forecasting tasks39,40,41, but single forward structures cannot meet accuracy requirements. BiGRU integrates past and future information through a bidirectional recurrent structure, achieving ideal results in many forecasting tasks42.

Presently, data-driven models also have certain limitations. Idealized assumptions and approximations in model building can lead to excessive state equations and parameters, discrepancies between the model and actual situations, and high computational complexity and difficulty43. This method requires a specific sample size to construct input-output relationships, but runoff and forecasting factor data usually suffer from missing data, short observation series, and data biases. Therefore, data-driven model prediction accuracy is influenced by data quality, the model’s adaptability to data44, its ability to extract global features45, and the need to capture long-term dependencies between inputs and outputs46. In recent years, the Transformer model47 from deep learning has been widely used in prediction fields. It relies entirely on attention modules to compute the representation of its inputs and outputs without using sequence-aligned recurrent neural networks (such as LSTM). This architecture can directly connect any two positions in a time series process using self-attention modules, meaning the Transformer can strengthen or weaken connections between any two positions, making it more flexible than traditional neural network models. This study48 first applied the Transformer model to flood forecasting tasks. Its internal attention architecture effectively addresses the lack of ability in traditional models to capture global features. For the Transformer model, the self-attention mechanism module is its crucial module, and it is often used in models to enhance performance. This study49 showed good predictive performance by reallocating feature weights to the hidden vectors output by the GRU model using an attention mechanism when predicting runoff in the Yangtze River basin with limited data. Another study50 proposed a spatiotemporal attention LSTM, independently generating attention weight matrices through spatial and temporal attention modules, focusing on more valuable feature factors in both time and space.

However, merely evaluating the applicability of different forecasting models does not meet the needs of modern, precise hydrological forecasting. In recent studies, using “series” or “parallel” integration concepts, combining various model architectures and data processing techniques, has shown superior performance in different forecasting tasks. In one study51, a slight water storage effect was incorporated into the original Xinanjiang model to explain small-scale water storage structures’ regulation and storage effect on surface runoff. Another study52 combined a remote sensing-enhanced SWAT model with a bidirectional long short-term memory model to improve daily flow simulation. A study using the “series” prediction concept53 proposed a model that combines multiple grid-based data (precipitation, EVI, soil moisture) to drive CNN-LSTM predictions of daily runoff, achieving ideal prediction results. Advances in hydrological and hydrodynamic models and computational capabilities have also enabled high-resolution flood inundation and impact forecasting in operational flood warning systems, providing more detailed and real-time information for flood management54. However, in the current field of hydrological forecasting, there is still a lack of research on how to utilize the intrinsic features of runoff efficiently and reasonably couple improved “encoding-decoding” structures to propose models that adapt to runoff characteristics.

Introduction to model structure and innovations

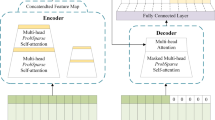

This paper proposes a new PCPFN forecasting model based on the “encoding-decoding” structure, which directly captures various periodic signals of runoff sequences and efficiently utilizes both global and proximal features of the runoff sequence. The model first processes the runoff data into multiple harmonic signals and then inputs the processed sequences as multi-feature factors into the “position encoding-encoder” module and multi-layer bidirectional gated recurrent unit (BiGRU) module. The output results obtained through parallel computation of these two modules are concatenated at the same latitude to share predictive information. Finally, the dimensional matrix is flattened, and the final prediction result is obtained through the BatchNorm1d and linear layers. To validate the practicality of PCPFN in daily runoff forecasting tasks, we used four evaluation indicators to assess the prediction model’s performance. Comparing the results with those of GRU, BiGRU, Attention-BiGRU, Transformer, PFN, and PC-PFN, the proposed PCPFN model demonstrated superior predictive performance for daily runoff sequences.

The main contributions of this study are summarized as follows:

-

(1)

A new PCPFN daily runoff sequence prediction model is proposed. This model adapts to the time-frequency local structure of a single runoff sequence, precisely separating modal functions of different time scales in the data, allowing the adjustment of regularization coefficients to control the sparsity and smoothness of multiple periodic characteristics of non-stationary sequences. The processed periodic components are used as multi-feature factors affecting the prediction results, and Shapley analysis is used to evaluate the contribution of each feature variable to long-term runoff trends. Through this method of processing sequences, the PCPFN model constructs an efficient forecasting method that uses the intrinsic features of runoff sequences to predict runoff (“sequence to sequence”).

-

(2)

The model proposes updating the Transformer’s decoder module using a multi-layer BiGRU module, allowing the encoder and multi-layer BiGRU modules to compute in parallel. This enables the model to focus on global information while effectively utilizing proximal features. The parallel integration method optimizes the correlation between different positions of time series information, enhancing the model’s utilization of time series features and emphasizing modeling capabilities for specific tasks, addressing the shortcomings of single models in predicting highly fluctuating runoff sequences.

-

(3)

A method for sharing predictive information is proposed, where the sequence matrix obtained from parallel module training is transferred to the same-dimensional concatenation layer. The sharing of predictive information is achieved by screening the different information in the output results from each module. Moreover, a Batch Normalization layer is added before the linear layer outputs the prediction results, effectively solving the internal covariate shift problem during parallel training, accelerating training convergence speed, and optimizing the model’s ability to extract common features from most data.

The rest of this paper is organized as follows: Section “Methods” introduces the methods and indicators for evaluating model performance. Section “Study areas and dataset” describes the study area and dataset. Section “Experiments and model settings” presents the model and experimental settings. Section “Results” analyzes the results of the model tests. Section “Discussions” discusses the research process, and section “Conclusions” concludes the paper.

Methods

Problem definition

Despite the rapid advancements in deep learning technologies in recent years, offering new possibilities for improving forecasting accuracy, existing studies indicate that traditional deep learning models have certain limitations in extracting runoff evolution patterns, dynamically adjusting focus on crucial information, and fully mining local essential information in sequences. These models find it challenging to adapt to modern data changes55,56. The advent of the Transformer model has provided a broad perspective of applications. Although the Transformer may show advantages in predicting extreme values57 in hydrological forecasting, each model design has its specific application scenarios and limitations.

Therefore, investigating whether constructing novel coupled models can adapt to specific domain forecasting tasks is valuable. This study aims to build a new artificial intelligence forecasting model using a “sequence to sequence” feature set, the Transformer model, and the BiGRU model. VMD is a popular decomposition technique that can effectively capture the intrinsic periodic characteristics of sequences. Previous studies49 have shown that BiGRU performs better when considering the direct dependencies between time steps in a time series. At the same time, the Transformer is more suitable for handling complex and multi-layered relationships within sequences. Based on the above theories, reasonably configuring and adjusting these methods can effectively focus on the peak and valley characteristic signals of runoff, achieving high fitting of the runoff sequence. To clearly define the problem and research objectives, this paper provides a quantitative description using the following formula. Figure 1 illustrates the target state displayed by the constructed model during testing. Figure 1a represents the peak section of the runoff series; Fig. 1 b depicts the valley section of the runoff series; and Fig. 1c illustrates a generalized view of the feature distribution in the data matrix, where color intensity indicates different feature attributes: darker colors represent peak features, while lighter colors indicate valley features.

-

(1)

Sequence decomposition and feature extraction

The runoff time series, X= [x1, x2, …, xt], is decomposed into k intrinsic mode functions (IMFs) using Variational Mode Decomposition (VMD), as shown in the following equation:

$$X=\sum\limits_{{k=1}}^{K} {{\mathbf{IM}}{{\mathbf{F}}_k}} +r,$$(1)where IMFk represents the k-th intrinsic mode function, and r is the residual term, representing the error in the decomposition process.

-

(2)

Model input and target sequence definition

After decomposition, [IMF1, IMF2, …, IMFk] are used as feature inputs for the model. Let the input feature sequence be Xin= [x1, x2, …, xn], where at each time step \({x_i} \in {{\mathbb{R}}^d}\)contains multiple d-dimensional features. The goal is to predict the target sequence Ytarget= [yt+1, yt+2, …, yt+m] after time step t, defined as:

$${Y_{{\text{target}}}}={f_{{\text{model}}}}({X_{{\text{in}}}};\theta )$$(2)where fmodel represents the mapping function of the coupled model, \(\theta\) is the model parameter, and m is the forecasted time step.

-

(3)

Model structure and output

The constructed model includes modules for extracting global and local features, with the detailed process provided in section “Construction of the PCPFN Model”. The overall output of the model is the feature combination, defined as follows:

$${\widehat {Y}_{{\text{target}}}}=Concat({\widehat {Y}_{{\text{Encoder}}}},{\widehat {Y}_{{\text{BiGRU}}}})$$(3)where \({\widehat {Y}_{{\text{Encoder}}}}\) and \({\widehat {Y}_{{\text{BiGRU}}}}\)represent the outputs of the global and local feature extraction modules, respectively.

The target state of the model focuses on specific global information areas.

Construction of the PCPFN model

Extraction of multi-feature components

The features influencing runoff evolution are diverse, and since all factors affecting runoff changes ultimately contribute to runoff evolution, we use the Variational Mode Decomposition (VMD) method to extract the complex components from the runoff sequence. The components obtained through VMD decomposition have a single-band frequency characteristic, concentrating on a specific frequency range. They exhibit the properties of analytic signals, making it easy to extract instantaneous frequency and amplitude. The components are orthogonal, avoiding modal aliasing, and they show good localization in both time and frequency domains. This method uses a variational principle for adaptive decomposition, with components that have clear physical meaning, reflecting the signal’s trend, periodicity, or high-frequency random fluctuations. Using different components as multi-feature inputs allows for the simultaneous consideration of the complex variations in the runoff sequence, simplifying the sequence’s complex components, and thereby improving the ability to predict complex runoff sequences. The computational process for extracting the multi-feature components is shown below:

VMD employs a non-recursive method to decompose the original hydrological sequence into modal components by constructing and solving a constrained variational problem.

The constructed constrained variational expression is:

where \(\left\{ {{u_k}} \right\}\) and \(\left\{ {{\omega _k}} \right\}\) correspond to the k-th modal component and central frequency after decomposition; ∂t is the partial derivative concerning t; δ(t) is the Dirac function; f is the original signal; \(\otimes\) denotes the convolution operation.

Variationally constrained problem solving:

Introducing a quadratic penalty term α and Lagrange multipliers λ to convert the extremum constraint problem into an unconstrained problem, as shown in Eq. (5):

The Alternating Direction Method of Multipliers (ADMM) is used to update the modal components and central frequencies and to search for the saddle points of the augmented Lagrange function, function \({u_{{\text{ }}k}}\), \({\omega _k}\) and λ after alternating optimization are shown in Eq. (6):

where γ is the noise tolerance, satisfying the fidelity requirements of signal decomposition; n is the number of iterations; \(u_{k}^{{n+1}}\left( \omega \right)\), \({u_i}\left( \omega \right)\), \(f\left( \omega \right)\) and \(\widehat {\lambda }\left( \omega \right)\) correspond to the Fourier transforms of \(u_{k}^{{n+1}}\left( t \right)\), \({u_i}\left( t \right)\), \(f\left( t \right)\) and \(\lambda \left( \omega \right)\), respectively.

Local feature extraction module

In time series data, data from adjacent time steps often exhibit strong correlations. To effectively identify features within a local neighborhood, we introduce the BiGRU model as the module for extracting local features. The BiGRU model is a type of recurrent neural network consisting of two independent GRU units: one processes data in the forward direction along the time series, while the other processes data in the reverse direction. Through this bidirectional structure, BiGRU excels at capturing local neighboring features because its memory unit design and bidirectional architecture allow it to automatically focus on the importance of adjacent information. The computation process is as follows:

where zt is the output of the update gate at time t; rt is the output of the reset gate at time t; ht is the hidden state; ht is the candidate state; f is the Sigmoid activation function; W is the weight matrix; and b is the bias vector.

Global feature extraction module

Global features can capture the relationships between distant elements in the data, preventing the overlook of important dependencies that are far apart. This is particularly important in runoff sequence forecasting tasks, where global features are necessary to capture patterns that span across regions or time steps. By extracting global features, the model can gain a holistic understanding of the data distribution and global patterns, rather than being confined to local information. We introduce the Encoder module from the Transformer model as the global feature extraction module. Before inputting it to the Encoder layer for encoding, dynamically adapt the number of input features through the LazyLinear layer, expanding the feature dimensions to the specified embedding dimension. At the same time, we use learnable positional embeddings to provide positional information, adding the results of input transformation and positional embeddings so that the matrix form received by the encoding layer is InputE = (Bs, WL, Dm), generating positional information for each dimension according to the rules in Eqs. (11) and (12). Meanwhile, the matrix form input to the BiGRU module remains unchanged, InputBG = (Bs, WL, i).

where pos is the position index of the data at a specific time step in the input sequence, Dm is the embedding dimension of the input sequence, and i is a particular vector dimension.

The self-attention mechanism, as the core component of the Encoder module, assigns different weights to different positions while processing sequence data. The process is: first, put all positional encoding vectors into matrix X, multiply with three weight matrices obtained from linear transformations to get the Query (Q), Key (K), and Value (V) vector matrices; then, perform dot product calculations between the corresponding Q vector in the sequence matrix and the other positional K vectors, the resulting attention scores are passed to the Softmax normalization layer; finally, each position’s normalized scores are multiplied with the V vectors to get the “single-head” self-attention output. In global information, the “single-head” mechanism can lead to excessive concentration of model attention, while the “multi-head” mechanism can effectively construct dependency relationships for each position, achieving high and low weight distribution of essential and general information, thereby achieving deep extraction of nonlinear features. The calculation process is as follows:

where \(W_{i}^{Q} \in {R^{{D_m} \times {d_k}}}\), \(W_{i}^{\kappa } \in {R^{{D_m} \times {d_k}}}\), \(W_{i}^{\nu } \in {R^{{D_m} \times {d_r}}}\), \({W^o} \in {R^{h{d_r} \times {D_m}}}\) are parameter vectors, h represents the number of attention heads, set dk=Dm/h, d is the dimension of the vector, and T denotes the transpose of the matrix.

Similar to traditional deep learning models, the feedforward neural network structure of the encoding layer also consists of input, hidden, and output layers. Still, each sub-layer of the encoder (Self-Attention layer and FFN layer) has a residual connection. As the number of sub-layers increases, there may be a problem of excessive attention value differences when fitting the high-dimensional array attention matrix, and performing layer normalization can distribute the attention values within an appropriate range, thereby improving the stability and generalization ability of the model’s prediction results. The attention output is non-linearly transformed through the feedforward network, and the residual connection process is shown in Eqs. (17) to (19).

where Zi is the attention output, \({Z_i}^\prime\) is the sub-layer residual connection output, Hi is the final output result; X is the high-dimensional input matrix, LayerNorm is the normalization layer, FFN is the feedforward layer.

The internal structure of the encoder layer and the transformation process of matrix dimensions are illustrated in Fig. 2.

Internal structure of the encoding layer and the transformation process of matrix dimensions.

Feature sharing layer

It is worth noting that although the internal structures of the two modules are similar, from the perspectives of model input and specific information recognition, the Global Feature Extraction Module needs to project the input data matrix to high dimensions through positional encoding and then obtain global information through the multi-head attention module. This allows the model to assign higher attention weights to extreme values during the training process of the runoff sequence. Dimension enhancement methods are not required for the Local Feature Extraction Module input. Its internal recurrent unit structure can fully consider the relevant information of neighboring positions in the sequence. Therefore, the recurrent unit module can efficiently capture the proximal features of the runoff sequence. By combining the advantages and disadvantages of the two modules, the predicted feature outputs from both modules are passed to the Concat layer, where they are concatenated along the last dimension and flattened into a one-dimensional matrix. The concatenated features are then further processed, and the final result is output through a linear layer. The feature sharing layer built using this method enables the new architecture to not only focus on global information but also effectively utilize neighboring features, ensuring accurate simulation of the varying fluctuation processes in the runoff sequence. The equation is as follows:

where\({\text{dim}}= - 1\) represents the concatenation along the last dimension, \(Conca{t_O}\) represents the concatenated output, and \({F_O}\) represents the final linear output.

The pseudocode for the PCPFN model is shown in Fig. 3, the pseudocode illustrates the parameters involved in training and the model training process. The overall framework of the IMCAEN model is shown in Fig. 4. In Fig. 4, (a) Demonstrates the flow of running the PCPFN model. X1-Xn represent different runoff sequences input into the model. (b) The M-F (Multi-Feature) Layer shows the process of constructing the multi-feature set. (c) The F-S (Feature-Sharing) Layer demonstrates the process of sharing predictive information through dimensional concatenation, tiling, and projection transformations of the output matrices from the two modules.

Pseudocode of VBGfoccrmer.

Network architecture of the proposed PCPFN.

Evaluation indicators

To more directly reflect the reliability of the prediction results of each model, the Root Mean Square Error (RMSE), Mean Absolute Error (MAE), Coefficient of Determination (R²), Nash-Sutcliffe efficiency coefficient (NSEC), and Peak Error (EP) are selected as model evaluation indicators. The smaller the RMSE, MAE, and EP, the smaller the error. The closer R² and NSEC are to 1, the better the model fits. Their calculation formulas are as follows:

where y(i) is the observed value for the sample i-th, \({\hat {y}_{_{{}}^{{(i)}}}}\) is the forecast value for the sample i-th, yavy is the average of the observed value, \({\widehat {y}_{avy}}\) is the average of the forecast values, yp is the predicted peak or valley runoff, and yf is the actual observed peak or valley runoff.

Study areas and dataset

Selection of study areas

This study selected the Leishui Basin above the Dongjiang Hydropower Station in the Yangtze River system in Zixing City, Hunan Province, China, the Quinebaug River Basin58 in New London County, Connecticut, USA, and the Housatonic River Basin near Great Barrington, Massachusetts, USA as the study areas. The Dongjiang Hydropower Station is located at 113°30′58.63″E, 25°87′87.91″N, in a subtropical monsoon humid climate. The basin above the dam has an area of 4719 km2, with an average annual flow of 144 m3/s and an annual runoff of 4.54 billion m3. The basin has a wide catchment area, frequent rainfall, and is greatly affected by precipitation, making it prone to extreme peak runoff events. The Quinebaug River is located at 71°59′02.74″ W, 41°35′50.97″ N, with a basin area of 1,846 km². It has a humid continental climate, hot summers, and high annual precipitation. The basin’s annual precipitation ranges from 763 mm to 1701 mm, with frequent and unevenly distributed rainfall, making the runoff evolution process complex and variable. The Housatonic River is located at 73°21′16.8″ W, 42°13′54.9″ N, approximately 240 km long, with a basin area of 730 km2, and a recent average flow of 13.93 m3/s. The peak value from 1914 to 2023 is 33.53 m (data from https://www.usgs.gov/). It has a temperate continental climate with significant seasonal and interannual precipitation variations, though the annual precipitation is relatively low. These three basins have distinct characteristics and exhibit different fluctuation patterns in runoff processes, making them suitable for testing the generality and robustness of the proposed PCPFN model.

Pre-processing and training preparation of datasets

This study collected 1461 daily runoff data points from Dongjiang Hydropower Station (1999–2002), 1826 daily runoff data points from the Quinebaug River Basin (1997–2001), and 1826 daily runoff data points from the Housatonic River Basin (1997–2001). Figure 5 shows the daily runoff time series and distribution for the three sites.

Original runoff series for the three sites.

The daily runoff data sets come from different basins with distinct hydrological conditions, so the model is trained separately on the three data sets to capture their unique features. Each dataset is divided into separate subsets, with 60% as the training set, 20% as the validation set, and the remaining as the test set. Table 1 provides statistical descriptions of the three datasets.

In preparing the data for model training, we need to specify the input dates and convert the data to a sliding window format, creating observational samples from continuous time series data, as shown in Fig. 6. In this study, the window length is set to 7, meaning the data from the previous 7 days (Q (t-1, t-2, t-3, t-4, t-5, t-6, t-7)) is used to predict the 8th day’s data. In daily runoff prediction, data collection (e.g., precipitation, temperature, and evapotranspiration) is typically recorded daily. A 7-day lag can effectively use the past week’s data for prediction, ensuring enough information in the runoff data while balancing computational complexity and prediction performance. We also experimented with lags of 3, 7, 15, 30, and 60, and found that a lag of 7 produced the best prediction results. The data input format, the number of features, and the output format are shown in Table 2. The format of the data matrix constructed using the sliding window is X= [Wt−n+1, WL, i], where Wt−n+1 represents the number of windows, WL represents the window length, and i represents the number of features. As a key dataset for model evaluation, the validation set is used to confirm the lag time since the PCPFN model is trained using the MSE loss function. By examining the MSE results from the validation set, it can be determined that, as shown in Table 3, the model performs best when the lag time is set to 7 at all three sites.

Sliding window dataset construction.

To accelerate model convergence, all data is normalized to the [0,1] range using the maximum and minimum values of the training set, according to the following Eq.

where xi is the input value of the i-th sample sequence, xmax and xmin are the maximum and minimum values of the training set, respectively, and \({x^{\prime}_t}\) is the output value of the i-th sample sequence.

Experiments and model settings

Development environment

The programming language used in this work is Python 3.9. Data pre-processing and management were done using the Pandas and NumPy libraries. The deep learning framework used is Pytorch, and training was conducted on an NVIDIA RTX 3080Ti GPU.

Model parameter settings

This study evaluates the performance of the PCPFN model by comparing it against seven benchmark models:

GRU (Model 8): A gated recurrent unit (GRU) structure that offers higher computational efficiency and faster training speed compared to traditional recurrent unit structures. It is particularly advantageous when balancing model complexity, as it requires less effort in hyperparameter tuning.

BiGRU (Model 7): A bidirectional gated recurrent unit (BiGRU) structure. This bidirectional architecture allows the model to capture richer feature representations, particularly when identifying dependencies in sequential data.

AT-BiGRU (Model 6): This model integrates an attention mechanism with the BiGRU architecture. After the model outputs hidden layer information, attention is applied to assign feature weights, enhancing the model’s predictive performance.

Transformer (Model 5): An advanced “encoder-decoder” model based on a multi-head attention mechanism, known for its outstanding performance.

PFN (Model 4): The Parallel Fusion Network (PFN) is built on the encoder module and a bidirectional GRU structure. It combines the strengths of both modules to capture global and local features.

PC-PFN (Model 3): The PolyCyclic-Parallel Fusion Network (PC-PFN) differs from the PCPFN model by processing various periodic components extracted from the sequence individually. These components are fed into the PFN model for prediction, and the final prediction is obtained by aggregating the predicted components.

PCPFN (Model 2) and MA-PCPFN (Model 1): See section “Problem definition” for a detailed description of these models.

This study uses the MA59 (Mayfly Algorithm). The dual population structure of MA allows it to effectively balance exploration (global search) and exploitation (local search). Male mayflies adjust their positions based on their own experience and the positions of other males (local optimal positions), while female mayflies move towards the most attractive male mayflies (global optimal positions60). By dynamically adjusting the search range and introducing elite selection strategies, MA effectively escapes local optima, enhancing global search capability, which improves the solution quality and convergence speed61.

The activation function used in model training is the Sigmoid function, the loss function is the MSE function, and the Adam algorithm is used to update parameters. The learning rate is set to 0.001, the number of iterations is set to 100, and the batch size is set to 256. The iteration number for the MA optimization algorithm is 5, the total population size is 10, and the random seed number is set to 2023. Parameter settings are shown in Table 4. The optimization range of the MA algorithm and the hyperparameter optimization results of the PCPFN model are shown in Table 5.

Experimental settings

This study conducted three sets of experiments:

-

Experiment 1 compared the performance of four models (GRU, BiGRU, AT-BiGRU, Transformer) to evaluate the significant effects of the attention module in prediction tasks.

-

Experiment 2 compared the performance of three models (Transformer, BiGRU, PFN) to explore the sensitivity of the two benchmark models to peaks and valleys in the sequence and to evaluate the comprehensive predictive performance of the PFN model after combining the advantageous modules of the two models.

-

Experiment 3 compared the performance of four models (MA-PCPFN, PCPFN, PC-PFN, PFN) to study the impact of two different input methods on the prediction results after pre-processing the runoff sequence, analyze the efficiency of the “sequence to sequence” feature input method in model training, and evaluate whether the optimized model can achieve the best generalization performance.

-

Experiment 4 compared the performance of two models (PCPFN and PFN), verifying whether the models maintain performance when predicting at different time steps and highlighting the differences in error accumulation between the two models.

Experiment 1 investigates the attention mechanism’s ability to focus on global features, Experiment2 evaluates PFN’s comprehensive ability to predict peaks and valleys in the sequence, and Experiment 3 verifies PCPFN’s efficient and accurate prediction of runoff sequences and the highest prediction accuracy achieved by the model after optimization. Experiment 4 analyzes the model’s multi-step prediction performance and error accumulation characteristics, emphasizing the impact of multi-feature inputs on the prediction results. The methods and parameter settings used in the experiments are described in section “Study areas and dataset” and “Model parameter settings”.

Results

To demonstrate the comprehensive performance of the models, this study selected runoff data from three basins and four evaluation indicators for assessment. Table 6 shows the evaluation metric results for all models on the training, validation, and test sets. Section “Experiment 1: verification of bidirectional unit structure and attention mechanism effects” to “Experiment 4: analysis of multi-step prediction performanceand error accumulation characteristics” provide detailed analyses of the test results and the outstanding performance of each model.

Experiment 1: verification of bidirectional unit structure and attention mechanism effects

Figure 7 illustrates the comparison of the predicted results of the four models Attention-BiGRU, Transformer, BiGRU, and GRU with the actual observations (Observed) in three different watersheds. Figure 7a in the figure represents the model’s experimental results on the Dongjiang Hydropower Station dataset; Fig. 7b represents the model’s test results on the Quinebaug River dataset; and Fig. 7c represents the model’s test results on the Housatonic River dataset.

-

(1)

From Fig. 7, it can be seen that both BiGRU and GRU can track the overall trend of the time series. However, at some critical moments (peak and valley flow), the BiGRU prediction curve is closer to the observed data, especially in the runoff data of Dongjiang Hydropower Station. In all three subplots, where data volatility is greater, BiGRU can better adapt to such fluctuations. The results shown in Table 6 indicate that the NSEC values for BiGRU after predicting the three different runoff datasets are 0.69, 0.84, and 0.88, respectively, representing improvements of 4.55%, 1.21%, and 4.76% compared to GRU predictions.

The quantitative indicators obtained from the above analysis and charts confirm that BiGRU can more accurately capture patterns in time series data with strong temporal dependencies, especially when considering forward and backward dependencies in the time series. BiGRU improves prediction performance by utilizing the complete neighboring information of the sequence.

-

(2)

In all three subplots, when facing larger peak flows, AT-BiGRU’s prediction curve at peaks is closer to the observed values, providing a tighter fit. When dealing with dynamic changes in each sequence, the attention mechanism can focus on time points where significant flow changes occur, especially when the flow fluctuates rapidly over time. By incorporating the attention module, AT-BiGRU can quickly respond to sudden changes in flow. The quantitative indicators in Table 6 indicate that the NSEC values for AT-BiGRU after predicting the three different runoff datasets are 0.77, 0.86, and 0.90, representing improvements of 11.59%, 2.38%, and 2.27% compared to BiGRU predictions.

From the above analysis, it is confirmed that introducing the attention mechanism into the bidirectional GRU structure allows the model to dynamically allocate different weights at each time point in the time series. This enables the model to adjust the importance of each time point based on its impact on the prediction results, identifying the past and future time features that are most critical for the current prediction.

-

(3)

From Fig. 7, it can be seen that when dealing with flow volatility, the Transformer’s prediction curve is smooth and closely follows the actual observed curve, especially in fitting extreme peaks and valleys. The Transformer can focus on time points where significant flow changes occur through the multi-head attention mechanism. For example, in the 150–250 day interval of Dongjiang’s extreme peak values, the Transformer model shows the best simulation effect compared to other models, and it almost perfectly fits the peak curve of Quinebaug on day 170. The quantitative indicators shown in Table 6 indicate that the NSEC values for the Transformer after predicting the three different runoff datasets are 0.80, 0.88, and 0.95, representing improvements of 3.89%, 2.33%, and 5.56% compared to AT-BiGRU predictions.

The Transformer’s ability to achieve high simulation accuracy for runoff is partly due to its multi-head attention mechanism, which can capture multiple patterns and relationships, preventing the model from over-relying on specific features in the training data. Another reason is that the internal multi-head attention mechanism allows multiple heads to process information in parallel, improving computational efficiency and potentially providing a more comprehensive representation during model learning.

Comparison of model test results in Experiment 1.

Experiment 2: focus on peaks and valleys by PFN

Figure 8 compares the prediction results of the Transformer, BiGRU, and PFN models with actual observed values in three different basins and the comparison results of local peaks and valleys. Figure 8a in the figure shows the model’s experimental results on the Dongjiang Hydropower Station dataset; Fig. 8b displays the model’s test results on the Quinebaug River dataset; and Fig. 8c presents the model’s test results on the Housatonic River dataset. The black dashed boxes indicate the enlarged selected peak and valley regions. The right side displays the predicted curves of local peaks and valleys. The red dashed boxes highlight the peak-valley areas, emphasizing the fitting performance of each model. Table 7 analyzes the error results of the predicted peaks and valleys in different periods (31 days per period) for the three models in each basin.

-

(1)

The core multi-head self-attention mechanism within the Transformer model allows the model to fully consider extreme peaks in the runoff sequence when processing each element of the sequence. Additionally, the model’s ability to process sequence data in parallel can simultaneously consider information from multiple time points, particularly when predicting peaks. As shown in Fig. 8, the Transformer model’s predicted peak results are significantly better than those of the BiGRU when faced with runoff data from three different sites. BiGRU’s internal gating mechanism allows it to more effectively control information flow, determining the relevance of neighboring information to predicting the current step. It can be observed from Fig. 8 that BiGRU performs better in fitting smoother runoff. However, the Transformer model overlearns the peak conditions in the training data, capturing complex patterns of peaks but possibly also learning noise or anomalies that should not be generalized, leading to overreactions in predicting smoother actual conditions. In recurrent networks, each prediction step depends on the previous step’s output. If early predictions in the sequence are erroneous, these errors accumulate within the network, reducing prediction accuracy when dealing with high peaks. The peak and valley error results shown in Table 7 indicate that the Transformer’s valley prediction errors are high, and BiGRU cannot achieve ideal accuracy in peak predictions.

-

(2)

By combining the multi-head attention mechanism and parallel computing strategy of the Transformer model with the BiGRU model’s efficient utilization of neighboring information through its gating mechanism, the PFN model shows good results in predicting peaks and valleys for different datasets. From the local magnification part in Fig. 8, it can be seen that the PFN model shows the highest fitting effect when simulating peak values in the runoff sequence and does not overreact when predicting valleys. Analyzing the quantitative indicators in Table 7, the PFN model’s prediction errors for peaks and valleys at the three different sites significantly outperform those of the two individual models in most cases. Referring to the evaluation indicators in Table 6 and the fitted curves of the prediction results in Fig. 8, the NSEC values obtained by the PFN model after predicting the three different runoff datasets are 0.83, 0.92, and 0.95, making it the best-performing model in this experiment.

Comprehensive analysis shows that the PFN model, through a parallel coupling of multiple modules, dynamically adjusts the use of different training modules based on the input data characteristics during training, thereby automatically selecting the most suitable method for different runoff data. Therefore, the PFN model excels at handling long-distance dependencies and accurately predicting peaks while also demonstrating high accuracy in the normal variations of runoff sequences.

Comparison of model test results in Experiment 2.

Experiment 3: Verifying the superiority of the “sequence to sequence” feature input method and the impact of hyperparameter optimization on test results

Figure 9 compares the prediction results of MA-PCPFN, PCPFN, PC-PFN, and PFN models with actual observed values in three different basins and compares the distribution of predictions. Figure 9a in the figure presents the model’s experimental results on the Dongjiang Hydropower Station dataset; Fig. 9 b displays the model’s test results on the Quinebaug River dataset; and Fig. 9c shows the model’s test results on the Housatonic River dataset. The right side features a box plot illustrating the distribution of the predicted and original sequences. Table 8 shows the runtime during the training process for the PCPFN and PC-PFN models (using six processed components as an example).

-

(1)

Experiment 2 confirmed that PFN has a high fit for both peak and valley curves in daily runoff forecasting tasks, but there are still observable deviations when predicting peaks. To meet practical requirements within the acceptable accuracy range for short-term runoff forecasting, PC-PFN effectively separates noise from runoff data using decomposition methods, decomposing the mixed signal into a predetermined number of intrinsic mode components. These smooth components are then fed into the model for training, and the weighted results show higher prediction accuracy. As shown in the box plots in Fig. 9, the highest points of PC-PFN’s predictions are closer to observed peaks, and the overall distribution trend of predictions aligns more closely with observed values. From the line chart, the visual deviation between PC-Bformer’s peak predictions and observed values is smaller. According to the conclusions in Table 6, the R2 values obtained by PC-PFN after predicting the three different runoff datasets are 0.92, 0.95, and 0.94, respectively, representing improvements of 15.00%, 3.26%, and 3.30% compared to PFN predictions.

-

(2)

The method of feeding the processed mode components one by one into the model to predict the final ensemble prediction results significantly improves prediction accuracy. However, the periodic components in different frequency domains, when predicted one by one, may lead the model to overly adapt to the specific characteristics of a single component, failing to fully utilize the interaction effects among various features. By using decomposition methods to obtain multimodal components of flow changes and incorporating them as multi-feature inputs for runoff prediction, the model’s ability to capture complex dynamic features is optimized. PCPFN uses this method to simultaneously receive information from all relevant features, capturing correlations and potential interactions between different sequences. From the model runtime shown in Table 8, it can be seen that PCPFN’s runtime is controlled within 15 s, significantly more efficient than PC-PFN. Analyzing the results shown in Fig. 9, peak prediction results may appear higher or lower in the three stations, but these deviations are within a controllable range. PCPFN shows a high consistency between predicted and observed values for smooth values. According to the conclusions in Table 6, the R² values obtained by PCPFN after predicting the three different runoff datasets are 0.93, 0.97, and 0.97, representing improvements of 1.08%, 2.11%, and 3.19% compared to PCPFN predictions.

-

(3)

The PCPFN model shows efficient and superior performance in predicting three different runoff datasets, but its internal random hyperparameter variations can cause unstable differences in prediction results. The MA-PCPFN model finds the optimal hyperparameter configuration through the MA algorithm, enabling the model to capture general patterns in the data rather than features specific to the training data, accurately predicting target variables, reducing prediction errors, and improving model fit to the data. As shown in Fig. 9, MA-PCPFN performs more stably, reducing fluctuations in prediction results, particularly in different data environments, by controlling peak and valley deviations. According to the conclusions in Table 6, the R2 values obtained by MA-PCPFN after predicting the three different runoff datasets are 0.97, 0.98, and 0.97. Compared to all the prediction models shown in the table, the PCPFN model optimized by the MA algorithm achieves the best prediction results.

Comprehensive analysis shows that integrating multiple mode sequences obtained through decomposition into a composite feature set maintains data integrity, ensuring that the model comprehensively considers the interrelated information of each periodic sequence. The “sequence to sequence” method proposed in this study not only achieves higher daily runoff forecasting accuracy but also reduces the computational resources and time required for model training and prediction, improving prediction efficiency. Additionally, the inclusion of algorithms to optimize model parameters enables the model to adapt to different types of data distributions and changes more quickly, adjusting its internal parameters to fit new data structures, and enhancing prediction stability and accuracy.

Comparison of model test results in Experiment 3.

Experiment 4: analysis of multi-step prediction performance and error accumulation characteristics

Multi-step prediction reflects the dynamic change trends of a system over future time periods, which is particularly important for runoff forecasting tasks that require continuous decision-making. However, multi-step prediction often faces higher computational complexity compared to single-step prediction. The quality of the model’s predictions directly determines its performance in solving such complex tasks. In this section, we will discuss the performance of the PCPFN and PFN models in multi-step prediction tasks using two different prediction horizons. Table 9 shows the evaluation indicators for both models in predicting runoff at three different sites, while Fig. 10 visualizes the error accumulation process for both models in a three-step forecast.

-

(1)

The information in Table 9 shows that although the PFN model uses only the runoff sequence as input, it still demonstrates a certain level of stability in both 2-step and 3-step predictions. Notably, in the Quinebaug and Housatonic basins, the R2 values are 0.82 and 0.86 (for 2-step prediction) and 0.59 and 0.69 (for 3-step prediction), indicating the model’s applicability in medium- to short-term forecasting. However, compared to the PFN model, the PCPFN model, which incorporates multi-feature inputs through VMD decomposition, shows higher accuracy and stability in 2-step- and 3-step predictions. In all the studied basins, the R2 values for the PCPFN model are significantly higher than those of the PFN model, demonstrating a clear advantage in controlling error accumulation. For example, in the 3-step prediction for the Dongjiang basin, the NSEC for the PCPFN model is 0.74, a 13.51% improvement over the PFN model. Furthermore, in the 3-step prediction for the Quinebaug basin, the R² for PCPFN reached 0.81, significantly outperforming PFN’s 0.59.

-

(2)

Compared to the single-step prediction results in Table 6, the model’s prediction accuracy decreases significantly as the number of prediction steps increases. This is due to the accumulation of errors amplified with each additional prediction step. Subfigures a and b in Fig. 10 represent the error accumulation processes for the PFN and PCPFN models, respectively. The figure shows that both models exhibit larger prediction errors when faced with extreme peaks, a phenomenon particularly noticeable in the Dongjiang basin. However, the PCPFN model effectively suppresses error propagation through its ability to extract multi-sequence features. Specifically, during peak prediction periods, its prediction error is reduced by more than 50% compared to the PFN model, and during trough periods, the prediction error approaches zero.

In summary, our developed model shows strong performance in multi-step prediction tasks. The PCPFN model, based on its ability to capture global and local features from multi-feature sequences, is better equipped to capture the complex evolution characteristics of the watershed. This indicates that the model is well-suited for medium- to short-term forecasting.

Error accumulation process during model prediction.

Evaluation metric analysis

RMSE gives higher weight to larger errors, meaning that large prediction errors are emphasized through squaring, thus having a greater impact on model evaluation. This is suitable for applications where large deviations need special attention. However, the daily runoff evolution process involves extreme changes between peaks and valleys over time, and RMSE data changes can determine the model’s ability to predict extreme and anomalous values in sequences. In the results shown in Table 6, the RMSE values of the PFN model for the Dongjiang, Quinebaug River, and Housatonic River datasets are 67.78, 11.75, and 5.57, respectively, averaging 16.28%, 28.02%, and 27.53% lower than those of Transformer, AT-BiGRU, BiGRU, and GRU. This indicates that the PFN model has significant sensitivity in predicting extreme values.

MAE provides the average level of error, giving equal weight to all errors compared to RMSE, without being overly influenced by individual large errors. Therefore, it can measure the overall level of the model in prediction tasks. In the results shown in Table 6, the MAE values of the PCPFN model for the Dongjiang, Quinebaug River, and Housatonic River datasets are 25.35, 6.21, and 1.72, showing lower average errors, indicating that the PCPFN model has high robustness in handling average errors.

In summary, analysis of the RMSE and MAE results shows that the PFN model has significant effectiveness in predicting extreme values compared to other benchmark models. The PCPFN model, incorporating “sequence to sequence” multi-feature prediction, not only achieves lower RMSE values but also demonstrates excellent performance in handling overall errors, as confirmed by the MAE results. Figure 11 shows bar and line charts of RMSE and MAE, and radar distribution charts of R2 and NSEC. From the visual structure in the figures, it can be seen that after optimizing the PCPFN’s hyperparameters using the MA algorithm, all evaluation indicators achieve optimal results. Figures 12, 13, and 14 show scatter density plots of observed and predicted flows in the test set for the three stations, where R2 is the coefficient of determination, indicating the proportion of the variance in the dependent variable y that can be explained by the independent variable x. Among the three stations, the MA-PCPFN model shows the best fit.

Comparison of results of model evaluation indicators.

The regression images of each model on the testing set of Dongjiang hydropower station.

The regression images of each model on the testing set of Quinebaug River station.

The regression images of each model on the testing set of the Housatonic River station.

Discussions

Feasibility of using “sequence to sequence” feature input method

In practical runoff forecasting applications, runoff time series data often contain random fluctuations and abnormal noise62. VMD’s adaptability ensures it can be optimized for the specific characteristics of the data, effectively separating noise63. By reducing noise levels, the model can more accurately identify and respond to the true patterns in the runoff data rather than errors caused by noise.

However, feeding the decomposed single sequence individually as input may not provide sufficient depth of information for model64. Processed multiple modal sequences can represent dynamic changes at different time scales, showing sequences with different periodic frequencies, and these multimodal sequences are strongly correlated with the original runoff sequence. As shown in Fig. 15, using Pearson correlation coefficients to verify the correlation between different decomposed components and the original sequence, most components have a correlation coefficient with the original value in the range of 0.3 to 0.8, and the components also have correlations among them. Therefore, using multimodal components decomposed by VMD as multi-feature inputs for the model is practical.

Components (IMF1–MF6) of the three runoff datasets after decomposition and the correlation between features.

Model implication

Explainable Artificial Intelligence (XAI) has garnered attention for its ability to provide explanations for model results and enhance model credibility65,66,67. The PCPFN data-driven AI model proposed in this study can explore the relationships between various predictive factors and target variables after data pre-processing by analyzing historical data. However, evaluating which factors affect the simulation accuracy at specific moments during the simulation of runoff evolution68 and exploring the positive and negative feedback relationships among multiple features in the target variable69 are major challenges in balancing model complexity and interpretability.

The PCPFN, constructed using the “sequence to sequence” approach and optimized for global feature focus, achieved R2 values of 0.97, 0.98, and 0.97 on the Dongjiang, Quinebaug River, and Housatonic River datasets, respectively, demonstrating significant reliability. However, considering the limited interpretability of our proposed data-driven AI model, SHAP value analysis was introduced to measure the feedback mechanism of multiple variable features obtained through data pre-processing on the long-term trends of daily runoff. From the SHAP value swarm plot shown in Fig. 16, it can be observed that most components used in the runoff simulation process exhibit a strong positive feedback mechanism with high feature values. The dense concentration of SHAP values for IMF1 to IMF3 and IMF5 on the right side suggests that these periodic features contribute positively to the prediction results. In contrast, IMF4 and IMF6 show some negative feedback on the predictions, with IMF4, in particular, having a significant negative impact when forecasting data for the three stations.

Contribution of each input variable to the long-term trend in runoff.

Analysis of runoff characteristics in different basins

Different basins have distinct topography, climate, land use, and hydrological characteristics, leading to significant differences in their runoff processes70. Studying data from different basins helps train models to understand the spatial heterogeneity in runoff and flood processes. Comparative analysis of different basins can also reveal regional differences and their impact on forecasting models, thereby enhancing model robustness71.

Table 10 shows the statistical results of three segments of daily runoff data, with the calculation process shown in Eq. (25). The Dongjiang basin has a subtropical monsoon humid climate, with a high average daily runoff (168.38 m3/s) and large variance, indicating significant fluctuations in runoff. The highest daily runoff reaches 1170 m3/s, showing that the basin is strongly affected by extreme weather events. The Quinebaug River has a humid subtropical climate, with a relatively low average daily runoff (34.91 m3/s) and high variance (1365.39 m3/s), indicating that although the flow is low, its variability is still significant, showing that this basin is also affected by certain extreme weather events. The Housatonic River has a temperate continental climate, with the lowest average daily runoff (14.30 m3/s) and small variance (217.08 m3/s), indicating less fluctuation in runoff compared to the other two basins. The highest runoff is 120.06 m3/s, relatively low.

Figure 17 shows the linear and nonlinear trend results of the three different daily runoff sequences. The Dongjiang basin’s daily runoff exhibits high-frequency peaks, with the nonlinear trend curve showing relatively stable runoff throughout the monitoring period, and the linear trend line also stable, indicating relatively uniform daily runoff in the Dongjiang basin except for extreme events. The Quinebaug River’s daily runoff shows sharp peaks, which are significant although not as high as those in the Dongjiang basin, with the highest point near 300 m3/s. The relatively low trend line indicates that the basin is sensitive to rainfall events. The Housatonic River’s daily runoff peaks are smaller compared to the other two basins, showing low-frequency peaks, and both trend lines are relatively flat, indicating that runoff is more stable with less fluctuation most of the time.

From the above analysis, the three basins exhibit different characteristics due to their geographical locations and climatic conditions. However, the MA-PCPFN model proposed in this study achieves NSEC values of 0.94, 0.98, and 0.98 when predicting different sequences, indicating that the model accurately captures runoff changes under different climatic conditions and handles significant differences in average flow, flow variability, extreme values, and time series fluctuations across different basins. This difference demonstrates the generalization capability of the MA-PCPFN model under various conditions.

Visualization of linear and nonlinear trends in runoff data at each station.

Purpose of model parameter optimization

By observing changes in training loss and validation loss, it can be determined whether the model is overfitting or underfitting the training data72. The iterative process of these two indicators can better judge the stability performance of the model during training.

Figure 18 shows the iterative process of training loss and validation loss for the PCPFN and MA-PCPFN models during training. From the figure, it can be seen that without hyperparameter optimization, the PCPFN model shows rapid convergence during the training process for three different datasets, with training loss quickly decreasing and stabilizing at a low level, indicating that the model effectively learns the data characteristics of each basin. However, the validation loss fluctuates greatly, suggesting that the PCPFN model tends to overfit. The MA-PCPFN shows a slightly higher initial training loss than the PCPFN but quickly reduces and approaches zero, with the validation loss exhibiting lower volatility and better stability in the later stages. Additionally, with the same prediction period, the results obtained by testing the Quinebaug River runoff data using the MA-PCPFN model proposed in this study (NSEC: 0.98) are better than the test results (NSEC: 0.84) of that study73.

In summary, hyperparameter optimization significantly improves the model’s generalization ability, particularly in terms of stability and low volatility of validation loss. This indicates that the optimized model is better suited to handle diverse and complex basin data, reducing overfitting to the training data and making it more applicable to generalization and stability requirements in real-world environments.

Iterative process of model training loss.

Performance study of the model for the problem of data-less (PUB) regions

The data-less (PUB) problem is a central challenge in hydrological research, particularly in regions lacking long-term hydrological observation data74. In this context, the application of advanced deep learning models offers a novel approach to addressing the PUB issue75. This section discusses the selection of the Cow Creek watershed in the Azalea region of southern Oregon as a case study to validate the superiority of the PCPFN model (data and watershed information sourced from https://ral.ucar.edu/solutions/products/camels). The watershed has a climate characterized by abundant winter precipitation and dry summers, leading to significant temporal variability in its hydrological response. In particular, runoff fluctuates sharply in spring due to the combined effects of precipitation and snowmelt. Although meteorological data is available, long-term hydrological observation data for the watershed is limited. In this situation, relying on meteorological and other auxiliary data to predict runoff becomes the only viable option. Therefore, this study uses daily solar hours, rainfall, wind speed, maximum and minimum temperatures, and atmospheric pressure data from January 1, 2002, to December 31, 2009, as well as physical feature components derived from the PCPFN model, to predict daily runoff from May 28, 2008, to December 31, 2009. Table 11 presents the evaluation indicators for each model under the same input conditions, while Fig. 19 illustrates the feature distribution during the training of the PCPFN model.

According to the results in Table 11, even without using the original sequence as feature input, the PCPFN model achieved the best performance across four evaluation indicators in predictions at different time steps. In the one-step prediction, the PCPFN model achieved an R² value of 0.86, significantly outperforming Transformer (0.43), AT-BiGRU (0.73), BiGRU (0.70), and GRU (0.59). In the multi-step prediction experiments, particularly the three-step prediction, performance declined for all models as errors accumulated. However, the PCPFN model consistently outperformed the others in terms of average prediction error, with MAE of 18.56 and RMSE of 33.08, both notably lower than those of the Transformer (39.67, 79.17). In comparison, although AT-BiGRU and BiGRU performed relatively well at this step length, they still lagged behind the PCPFN model. This result suggests that, compared to other individual models, the PCPFN model is better at leveraging limited hydrometeorological data. It utilizes bidirectional gated units and encoder modules to deeply uncover hidden patterns in the flow sequence, thus providing a better fit for short-term flow variations. Notably, although Transformer is considered one of the most advanced deep learning models and has demonstrated strong capabilities in many fields, its performance in this task was underwhelming, with an R² of only 0.43 in the one-step prediction task. The likely reason is that the Transformer model failed to properly understand the inherent relationship between meteorological data and hydrological response when assigning feature weights. Therefore, the development of novel deep learning models can effectively overcome the limitations of using a single model for such tasks.

To better illustrate the feature distribution of the PCPFN model during the training process with hydrometeorological data, we used Principal Component Analysis (PCA) and t-Distributed Stochastic Neighbor Embedding (t-SNE) to visualize the global and local perspectives of feature distribution for the BiGRU and Encoder modules during parallel computation76. This helps demonstrate the model’s superiority in addressing the PUB problem. PCA and t-SNE utilize linear and nonlinear dimensionality reduction techniques, respectively, to display the distribution of global and local features along the principal component directions. Subfigure a and b of Fig. 19 show the visualization of feature distribution during the training of Cow Creek watershed meteorological data for both modules. In this, PCA Component 1–3 represents the projection of the feature matrix onto the first, second, and third principal components, with the components ordered by variance. t-SNE Component 1–3 represents the coordinates of the feature matrix in the first, second, and third dimensions after t-SNE dimensionality reduction. The PCA analysis results from the figure indicate that the feature points for the Encoder module are more dispersed within the region, suggesting superior diversity in capturing global features. In contrast, the features extracted by the BiGRU module are more densely distributed within the principal component space, indicating lower variability along the second principal component direction. The t-SNE analysis reveals that, from a local spatial perspective, both modules exhibit distinct clustering of feature distributions. However, the dimensions and ranges of these clusters differ significantly.EncoderThe Encoder module shows a broader range of clustering in all three dimensions, while the BiGRU module’s clustering is more concentrated. Combined with the global PCA analysis, this suggests that the BiGRU module places greater emphasis on recognizing nearby local features.

Based on the above analysis, the results from the four evaluation indicators and the visualization of feature distribution confirm that the PCPFN model demonstrates clear superiority in feature recognition from both global and local perspectives, while also achieving numerical results within the desired range. According to our discussion in this section, the performance of the PCPFN model slightly decreased when addressing the PUB problem, but it still outperformed several of the most advanced deep learning models.

Feature distribution after training data with BiGRU and Encoder modules.

Conclusions

This study used the “sequence to sequence” approach to construct multi-feature inputs and built the PCPFN model, which shares global features through parallel computation with the encoder module and recurrent unit components. This model is tested on daily runoff data from three basins. The validation showed that models built on the attention mechanism (Transformer, AT-BiGRU) could effectively focus on global features compared to traditional GRU. Additionally, the PCPFN model could fully capture the sequence’s various periodic and trend features. Based on the encoder module, it can provide encoded features from different subspaces, and the bidirectional recurrent unit structure can efficiently capture proximal features of the runoff sequence. This balances the weight allocation of peak and valley features during model training, achieving precise prediction of sequence peaks and valleys. To obtain a more generalized prediction model, the MA algorithm is used to optimize the model’s hyperparameters, achieving optimal fitting of the runoff. Furthermore, SHAP analysis was introduced to visualize feature distribution and evaluate the positive and negative feedback of feature sets constructed using the “sequence to sequence” method during runoff simulation.

Experimental results show:

-

In validating the four models (Transformer, AT-BiGRU, BiGRU, and GRU), BiGRU based on the bidirectional recurrent unit structure outperformed the single-loop structure GRU, with BiGRU’s NSEC results being on average 3.36% higher than those of GRU. Introducing attention to the bidirectional structure achieved an NSEC of up to 0.90. However, single-head attention tends to focus on its position overly. The Transformer, using its multi-head attention mechanism, obtained the best evaluation metric results in this set of experiments.

-

To verify PCPFN’s efficient use of multimodal features and ability to capture global-specific regional information, the peak and valley error results from PFN, Transformer, and BiGRU predictions showed that PFN had the smallest average error. This model effectively balanced the feature attention levels of the two modules. In Experiment 3, the method of feeding decomposed components one by one into the model for final ensemble prediction resulted in a 3.95% reduction in R2 compared to the “sequence-to-sequence” feature construction method. Additionally, PCPFN improved average operational efficiency by 82.49% compared to PC-PFN.

-

To validate the superiority of the PCPFN model in multi-step prediction tasks and PUB problems, it maintains a certain level of stability despite the accumulation of errors. Specifically, in the Quinebaug and Housatonic watersheds, the R² values reached 0.91 and 0.95 (for two-step predictions) and 0.81 and 0.87 (for three-step predictions). For the PUB problem in the Cow Creek watershed, the PCPFN model consistently outperforms other deep learning models at any prediction step, with an average NSCE result of 0.81.

-

When constructing the “sequence to sequence” feature set, the Pearson correlation coefficient verified a correlation coefficient value between 0.3 and 0.8 between each feature component and the original runoff sequence. To enhance model interpretability, SHAP analysis of the input features showed that most feature values captured by the model were positive, indicating that the constructed feature set had a positive feedback impact on model prediction.

The method proposed in this study provides a new approach for accurate daily runoff forecasting, but it still has shortcomings. Future research can delve into the hydrological and physical characteristics of runoff sequences, extend the prediction period, further enhance the interpretability of the model training process. At the same time, to address the issue of data-scarce regions, additional variables are incorporated, and artificial intelligence forecasting models with enhanced feature learning capabilities are developed, thereby overcoming the limitations of traditional hydrological models.

Data availability

Runoff data from the two watersheds used in this study (Quinebaug River, Housatonic River) are publicly available in https://hydrology.nws.noaa.gov/pub/ and https://www.usgs.gov/. Runoff data from Dongjiang Hydropower Station may be obtained from the corresponding author authors upon request.

References

Sheffield, J. et al. Satellite remote sensing for water resources management: Potential for supporting sustainable development in data-poor regions. Water Resour. Res. 54, 9724–9758. https://doi.org/10.1029/2017WR022437 (2018).

Mirchi, A., Watkins Jr, D. W., Huckins, C. J., Madani, K. & Hjorth, P. Water resources management in a homogenizing world: Averting the growth and underinvestment trajectory. Water Resour. Res. 50, 7515–7526. https://doi.org/10.1002/2013WR015128 (2014).

Xu, B. et al. Scenario-based multiobjective robust optimization and decision-making framework for optimal operation of a cascade hydropower system under multiple uncertainties. Water Resour. Res. 58 https://doi.org/10.1029/2021WR030965 (2022). e2021WR030965.

Dąbrowska, J. et al. Between flood and drought: How cities are facing water surplus and scarcity. J. Environ. Manage. 345, 118557. https://doi.org/10.1016/j.jenvman.2023.118557 (2023). https://doi.org:.

Fang, W. et al. Assessment of dynamic drought-induced ecosystem risk: Integrating time-varying hazard frequency, exposure and vulnerability. J. Environ. Manage. 342, 118176. https://doi.org/10.1016/j.jenvman.2023.118176 (2023).

Waidelich, P., Batibeniz, F., Rising, J., Kikstra, J. S. & Seneviratne, S. I. Climate damage projections beyond annual temperature. Nat. Clim. Change https://doi.org/10.1038/s41558-024-01990-8 (2024).