Abstract

This study explores the performance of deep learning models, specifically Convolutional Neural Networks (CNN) and XGBoost, in predicting alpha and beta thalassemia using both public and private datasets. Thalassemia is a genetic disorder that impairs hemoglobin production, leading to anemia and other health complications. Early diagnosis is essential for effective management and prevention of severe health issues. The study applied CNN and XGBoost to two case studies: one for alpha-thalassemia and the other for beta-thalassemia. Public datasets were sourced from medical databases, while private datasets were collected from clinical records, offering a more comprehensive feature set and larger sample sizes. After data preprocessing and splitting, model performance was evaluated. XGBoost achieved 99.34% accuracy on the private dataset for alpha thalassemia, while CNN reached 98.10% accuracy on the private dataset for beta-thalassemia. The superior performance on private datasets was attributed to better data quality and volume. This study highlights the effectiveness of deep learning in medical diagnostics, demonstrating that high-quality data can significantly enhance the predictive capabilities of AI models. By integrating CNN and XGBoost, this approach offers a robust method for detecting thalassemia, potentially improving early diagnosis and reducing disease-related mortality.

Similar content being viewed by others

Introduction

Thalassemia is a genetic blood disorder that gets its name from two Greek words: “thalassa,” meaning “the ocean,” and “haima,” meaning “blood.”1This condition is marked by the body’s inability to produce enough hemoglobin, which is a protein in red blood cells. Hemoglobin is essential for carrying oxygen from the lungs to the rest of the body and for bringing carbon dioxide back to the lungs to be exhaled2.

Thalassemia is one of the most common diseases globally, especially in Mediterranean regions. Many countries are currently facing a growing number of thalassemia cases, making it a major public health issue and a significant cause of disability and death worldwide. Detecting thalassemia early can help lower mortality rates, so it’s crucial for healthcare providers to make accurate diagnoses. This is particularly important when identifying carriers of genetic disorders, like thalassemia, and distinguishing them from healthy individuals3.

Currently, thalassemia screening and diagnosis methods include the osmotic fragility test, blood smear examination for target Red Blood Cells (RBCs), detection of inclusion bodies, RBC indices, hemoglobin electrophoresis, High-Performance Liquid Chromatography (HPLC), genetic testing, and next-generation sequencing11,12,13,14. Of these, RBC indices and genetic testing are crucial for prevention and diagnosis in thalassemia screening programs. Genetic testing considered the gold standard, is widely used for accurate diagnosis3,4,5,6,7,8,9,10, while RBC indices are part of automated Complete Blood Count (CBC) tests, providing a quantitative and precise measure. Although mathematical formulas based on RBC indices have been proposed for screening, their effectiveness remains limited without validation in larger populations15,16,17,18. In clinical practice, thalassemia screening often relies on mean corpuscular volume (MCV) and Mean Corpuscular Hemoglobin (MCH)19,20,21,22,23,24,25, which are straightforward methods for identifying microcytosis and hypochromia and deciding if further testing is needed. Additionally, RBC distribution width (RDW) can help differentiate thalassemia from iron deficiency anemia26,27. A more comprehensive diagnosis can be achieved by evaluating multiple parameters simultaneously, a process that can be enhanced using machine learning models.

A CNN is a powerful model within the machine learning framework that is particularly effective for analyzing visual data. Unlike traditional neural networks, CNNs have a specialized architecture designed to automatically and adaptively learn spatial hierarchies of features from input data, which makes them especially suitable for image recognition and classification tasks. During the training process, CNNs utilize convolutional layers to extract important features from data, followed by pooling layers that reduce dimensionality and computational complexity. This ability to recognize complex patterns and features in images makes CNNs an advanced tool for medical imaging analysis, such as identifying disease markers in radiology or pathology images28,29,30. Furthermore, as more data becomes available, the performance of CNN models typically improves, allowing for more accurate and reliable predictions in medical diagnostics.

XGBoost, short for Extreme Gradient Boosting, is a highly efficient and scalable machine learning algorithm that has become popular for its performance in classification and regression tasks. Unlike traditional decision trees, XGBoost employs a gradient boosting framework, which builds an ensemble of weak decision trees sequentially, optimizing each new tree to correct the errors of the previous ones31,32,33. This approach allows XGBoost to effectively model complex relationships and patterns in data, making it suitable for predictive tasks in various fields, including healthcare. Due to its robust handling of missing values, feature importance ranking, and ability to prevent overfitting through regularization, XGBoost is particularly well-suited for large datasets and high-dimensional data. As the volume of data increases, XGBoost continues to deliver high accuracy and reliability, making it a powerful tool for advanced diagnostics and predictive modeling in medical research.

The current study is aligned with two case studies as primary contributions:

-

Case study 1: To find out the best deep learning model in a term of alpha thalassemia prediction accuracy across public datasets and privately aligned datasets.

-

Case study 2: To find out the best deep learning model in a term of beta thalassemia prediction accuracy across public datasets and privately aligned datasets.

-

This study will provide the best deep learning models from both case studies which perform exceptionally on alpha thalassemia patients and beta thalassemia patients.

In this study we aim to determine the suitable deep learning models for predicting alpha and beta thalassemia using both public and private datasets. It includes two case studies: one predicting models for alpha and the other for beta thalassemia. The objective is to identify the best set of models for each conditions for improving prediction.

Literature review

The primary focus of this study is to demonstrate deep learning among alpha thalassemia and beta thalassemia patients using the public dataset and the proposed private data.

The article34 “Fuzzy-Based Fusion Model for β-Thalassemia Carrier’s Prediction Using Machine Learning” presents a machine learning approach to predict β-thalassemia carriers by analyzing red blood cells. The study emphasizes the need for cost-effective and rapid screening methods for β-thalassemia, a genetic disorder characterized by abnormal hemoglobin production. It proposes a late fusion model that combines four machine learning algorithms—logistic regression, Naïve Bayes, decision tree, and neural network—to improve prediction accuracy. The model achieved an overall accuracy of 96% in detecting β-thalassemia carriers, outperforming existing approaches in terms of efficiency, reliability, and precision. The research highlights the potential of machine learning and fuzzy logic in enhancing medical diagnostics and reducing the mortality associated with thalassemia through early intervention and genetic counseling.

The article35 “An Application of Machine Learning to Thalassemia Diagnosis” explores two machine learning techniques for predicting thalassemia, a genetic blood disorder. The study proposes using Principal Component Analysis (PCA) followed by Logistic Regression (PCA-LR) and Partial Least Squares Regression (PLS) to enhance diagnostic accuracy. The PCA-LR model, which reduces data dimensionality before applying logistic regression, achieved a prediction accuracy of 87.5%. In contrast, the PLS model, which handles multicollinearity and small sample sizes effectively, reached a higher accuracy of 92.5%. The research highlights the potential of these statistical models to improve thalassemia diagnosis by offering reliable and efficient alternatives to traditional methods reliant on expert clinical judgment.

The article36 “Ascertaining Genetics of β-Thalassemia and Sickle Cell Disease Using Machine Learning Heuristics” explores how machine learning algorithms can improve the screening and diagnosis of genetic blood disorders like β-thalassemia and sickle cell disease. Focusing on a North Indian cohort, the study employs methods such as Random Forest, Logistic Regression, Support Vector Machines, and Decision Trees to predict carrier states and pathogenic variants based on simple blood test parameters. The results show that Decision Trees achieved high accuracy for hemoglobin variant prediction (96%), while Random Forest was the most accurate for predicting β-thalassemia carriers (87.39%). These findings suggest that integrating bioinformatics with machine learning could significantly enhance genetic screening programs in Europe by providing more accurate, efficient, and cost-effective diagnostic tools. This could be especially valuable given the genetic diversity and demographic changes in Europe, offering improved early diagnosis and management of these disorders across diverse populations.

The study37 “Enhancing Thalassemia Gene Carrier Identification in Non-Anemic Populations Using Artificial Intelligence Erythrocyte Morphology Analysis and Machine Learning” addresses the challenge of detecting Thalassemia Trait (TT) carriers, particularly non-anemic individuals, using an AI-based approach. Traditional genetic testing, while effective, is expensive and resource-intensive, making it less accessible in underdeveloped regions. To improve detection, the researchers developed the TT@Normal model, leveraging digital erythrocyte morphology data and machine learning, specifically the Random Forest algorithm. The study analyzed data from 76 TT gene carriers and 97 healthy controls, finding that the model accurately identified TT carriers with the presence of target cells, microcytes, and teardrop cells as key indicators. The model demonstrated outstanding performance, with high sensitivity and specificity, even in an external validation cohort. The TT@Normal tool, accessible online, offers a cost-effective, scalable solution for identifying TT carriers in non-anemic populations, particularly in regions with limited medical resources.

Sadiq et al.38 developed an ensemble classifier model that combines a random forest, support vector machine, and gradient boosting machine to detect patients with thalassemia using CBC data. This model was applied to a dataset containing CBC results from 5,066 patients, which were obtained through the Punjab Thalassemia Prevention Program (PTPP). The input parameters for the study included red blood cell count, hemoglobin level, hematocrit, mean cell volume, mean cell hemoglobin concentration, mean cell hemoglobin, red blood cell distribution width, platelet count, and white blood cell count. The model successfully identified β-thalassemia carriers with an accuracy of 93% as shown in Table 1.

Table 1depicts the limitations of previous studies. It shows that study34used logistic regression, naïve bayes, decision tree and neural network to detect beta thalassemia using private data and it achieved 96% detection accuracy but in this study deep learning case study is missing which incorporate as its limitation, the study35used logistic regression to detect thalassemia using private data and it achieved 92.5% detection accuracy but in this study deep learning case study is missing which incorporate as its limitation, the study36used decision tree to detect beta thalassemia using private data and it achieved 96% detection accuracy but in this study deep learning case study is missing which incorporate as its limitation, the study37used artificial intelligence erythrocyte morphology analysis to detect thalassemia traits using private data and it achieved 76 TT and 97 healthy controls but in this study deep learning case study is missing which incorporate as its limitation and the study38 used ensemble classifier to detect beta thalassemia using private data and it achieved 93% detection accuracy but in this study deep learning case study is missing which incorporate as its limitation.

Methodology

Figure 1 shows that this study focuses on evaluating two deep learning models, CNN and XGBoost, using both private and public datasets to detect alpha and beta thalassemia. By analyzing these models, the research aims to improve the accuracy of thalassemia detection and provide insights into the effectiveness of different datasets.

The proposed methodology to analyze the case studies of deep learning algorithms.

Case study 1: alpha thalassemia

The first case study evaluates CNN and XGBoost models using private and public datasets for alpha thalassemia detection. The goal is to compare how well these models perform on different types of datasets to identify strengths and weaknesses.

Case study 2: Beta thalassemia

In the second case study, the same CNN and XGBoost models are applied to private and public datasets focused on beta-thalassemia. Again, the models are compared to assess their effectiveness and consistency across both types of datasets.

Methodology

Dataset

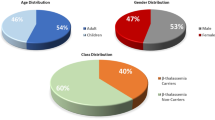

The proposed model used a dataset which is collected from PTPP. PTPP is taking measures towards a thalassemia-free country. The primary goal of PTPP is to screen the alpha thalassemia and beta thalassemia carriers with the help of state-of-the-art types of equipment. In cascade screening, whenever an alpha thalassemia or beta thalassemia patient is diagnosed, the complete screening of both parents’ families is performed. PTPP performs more than 400,000 tests every year. The record of 10,060 alpha thalassemia patients who are diagnosed after the CBC test and 9,981 beta thalassemia patients who are diagnosed after the CBC test. Table 2 depicts the private dataset of alpha thalassemia and beta thalassemia. Table 339 depicts the public dataset features of alpha thalassemia, and Table 440 depicts the public dataset features of beta thalassemia.

Figure 1 depicts the structure of the given study which is explained below in different phases:

Case study 1: alpha thalassemia

The first case study evaluates CNN and XGBoost models using private and public datasets for alpha thalassemia detection. The goal is to compare how well these models perform on different types of datasets to identify strengths and weaknesses. The proposed model also shows the results of overfitting and underfitting models.

Case study 2: Beta thalassemia

In the second case study, the same CNN and XGBoost models are applied to private and public datasets focused on beta thalassemia. Again, the models are compared to assess their effectiveness and consistency across both types of datasets. The proposed model also shows the results of overfitting and underfitting models.

Methodology workflow

Data collection and preprocessing

The study begins by gathering datasets from two sources:

Private datasets

These are obtained from clinical records, which include genetic markers, blood test results, and patient demographics related to alpha and beta-thalassemia.

Public datasets

Publicly available medical datasets related to thalassemia, such as those found in repositories like Kaggle and GitHub, are also used for comparison.

Once collected, the data is preprocessed to ensure it is ready for training:

Handling missing data

Missing values are addressed using methods such as mean or median imputation.

Encoding categorical data

Categorical variables such as gender or blood type are transformed into numerical values through techniques like one-hot encoding.

Data splitting

Each dataset is then split into two parts: The first part is a training set (70%) this portion is used to train the deep learning models and the second part is a testing set (30%) this subset is reserved for testing the models on new, unseen data to evaluate their performance.

Model training

The study uses two models:

Convolutional neural networks (CNN)

Best suited for clinical data, this model is used if the dataset includes clinical records.

XGBoost

A powerful tree-based algorithm, XGBoost is used for tabular data that includes patient information, genetic markers, and lab test results.

The models are trained on 70% of training data. During training, the accuracy and other performance metrics are monitored to ensure the models are learning effectively.

Accuracy validation and testing

Validation

As the models train, they are validated using metrics such as accuracy, precision, recall, and loss. The goal is to reach a pre-defined accuracy threshold (e.g., 90%). If the models meet this threshold, they are then tested on the remaining 30% of the dataset.

Testing

After training, the models are evaluated on the test set to measure their real-world performance. The results are analyzed using a confusion matrix, precision, recall, and F1-score.

Retraining if necessary

If the models fail to achieve the desired accuracy or other performance measures, retraining is initiated. This may involve tuning hyperparameters like the learning rate or batch size, adjusting the neural network architecture, or applying regularization techniques (e.g., dropout).

Results comparison

The final step in the research involves comparing the performance of the CNN and XGBoost models across both private and public datasets for alpha and beta-thalassemia. The study focuses on:

Performance metrics

Accuracy, precision, recall, F1-score, sensitivity, and specificity are recorded.

Figure 2 depicts the architecture of the CNN model used in this study.

CNN

Data preprocessing

Feature and label extraction

The proposed method extracted the feature set X and the label vector y from the available dataset, selecting columns n to m for features and column y for labels.

X = available dataset (:,n:m).

y = available dataset (:,y).

Conversion to arrays

The extracted data was converted to arrays, if initially provided as tables, to facilitate further processing.

X = table2array(X) if X is a table.

y = table2array(y) if y is a table.

Categorization of labels

The label vector y was ensured to be categorical, as required for classification tasks.

y = categorical(y) if y is not logical or numeric.

Data splitting

The dataset was split into training and testing subsets using a 70%− 30% split:

cv = cv-partition (size(X,1),’HoldOut’,0.3).

The indices were used to separate training and testing data:

Xtrain = X(not idx,:)

ytrain = y(not idx,:)

Xtest = X(idx,:)

ytest = y(idx,:)

Data reshaping for CNN input

The training and testing feature sets were reshaped into 4D arrays for input into the Convolutional Neural Network (CNN):

Xtrain = reshape(\({\bf X}^{\prime}_{{\rm test}}\),[\(\:{\eta\:}_{features}\),1,1, \(\:{\eta\:}_{train}\)])

Xtest = reshape(\({\bf X}^{\prime}_{{\rm test}}\),[\(\:{\eta\:}_{features}\),1,1, \(\:{\eta\:}_{test}\)])

where \(\:{\eta\:}_{features}\) is the number of features, \(\:{\eta\:}_{train}\) and \(\:{\eta\:}_{test}\) are the number of observations in training and testing sets, respectively.

CNN architecture

The CNN was defined with the following architecture:

-

Input Layer: imageInputLayer([\(\:{\eta\:}_{features}\),1,1])

-

Convolutional Layers: Two layers with 8 and 16 filters respectively, using ReLU activation and batch normalization: convolution2 dLayer1,3,8,’Padding’, ’same’) convolution2 dLayer1,3,16,’Padding’, ’same’)

-

Fully Connected Layers: One layer with 10 units, followed by a dropout layer with a rate of 0.50, and another fully connected layer matching the number of classes: fullyConnectedLayer(10).

-

dropoutLayer(0.5.

-

fullyConnectedLayer(\(\:{\eta\:}_{classes}\))

-

Output Layer: softmaxLayer and classificationLayer.

Model training

The CNN was trained using the Adam optimizer for 20 epochs, with a mini-batch size of 32 and an initial learning rate of 0.01: options = trainingOptions(’adam’,’MaxEpochs’, 20,’MiniBatchSize’, 32,’InitialLearnRate’, 1e- 2).

The model M was trained with: M = trainNetwork(Xtrain,ytrain,layers, options).

Model evaluation

Training data accuracy

The accuracy on the training set was calculated as.

Testing data accuracy

Similarly, the accuracy on the testing set was calculated.

Confusion matrices

Confusion matrices were plotted for both training and testing sets to visualize the classification performance.

XGBoost

Data preprocessing

Feature and label extraction

The feature set X and the label vector y were extracted from the dataset available dataset, selecting columns n to m for features and column y for labels.

X = available dataset(:,n:m).

y = IDABTT(:,y).

Conversion to arrays

The extracted data was converted to arrays if they were initially provided as tables to facilitate further processing.

X = table2array(X) if X is a table.

y = table2array(y) if y is a table.

Categorization of labels

The label vector y was ensured to be categorical to be suitable for classification tasks.

y = categorical(y) if y is not logical or numeric.

Data splitting

The dataset was split into training and testing subsets using a 70%− 30% split:

cv = cv-partition (size(X,1),’HoldOut’,0.3).

The indices were used to separate training and testing data:

Xtrain = X(not idx,:)

ytrain = y(not idx,:)

Xtest = X(idx,:)

ytest = y(idx,:)

Gradient boosting machine (GBM) model training

A Gradient Boosting Machine (GBM) was trained using the LogitBoost method with 100 learning cycles and decision trees as the base learners:

M = fitcensemble(Xtrain,ytrain,’Method’, ’LogitBoost’,’NumLearningCycles’, 100,’Learners’, ’Tree’)

where M denotes the trained GBM model.

Model evaluation

Training data prediction and accuracy

The trained GBM model was used to predict the labels of the training data.

ypred_train = predict(M,Xtrain).

The training accuracy was then calculated as:

Confusion matrix for training data

A confusion matrix was plotted for the training data to visualize the classification performance.

Testing data prediction and accuracy

The GBM model was then used to predict the labels of the testing data.

ypred_test = predict(M,Xtest).

The testing accuracy was calculated as:

Confusion matrix for testing data

A confusion matrix was also plotted for the testing data to evaluate the model’s performance on unseen data.

The proposed study of CNN architecture.

Results

The proposed model used two deep learning models to elaborate on the case studies of private and public datasets of alpha-thalassemia and beta-thalassemia patients. The proposed model used a MacBook Pro 2017 and MATLAB 2022 for simulations and obtaining the results. The proposed model used two deep learning models, CNN and XGBoost, and was applied to four datasets, two are private datasets of alpha thalassemia and beta thalassemia, and two are public datasets of alpha thalassemia and beta thalassemia. The private alpha thalassemia dataset has 10,060 instances with 14 independent features, the private beta thalassemia dataset has 9981 instances with 14 independent features, and the parallel public alpha thalassemia dataset has 147 instances with 15 independent features, and the public beta thalassemia dataset has 250 instances with 13 independent features. The proposed model used different statistical matrices to evaluate the performance of models, which are already defined in the research methodology section.

Table 5 states the training and testing performance metrics results of the private alpha thalassemia dataset using CNN and it shows that the model achieves 71.47%, 28.53%, Nil, 71.47%, 0, 28.53, Nil, 0 and 100 of training classification accuracy, misclassification rate, sensitivity, specificity, F1-score, false positive rate, false negative rate, false omission rate and false discovery rate respectively and model achieves 70.87%, 29.13%, Nil, 70.87%, 0, 29.13, Nil, 0 and 100 of testing classification accuracy, misclassification rate, sensitivity, specificity, F1-score, false positive rate, false negative rate, false omission rate and false discovery rate respectively.

Figure 3 depicts the training progress of the CNN using a private alpha thalassemia dataset, and it shows that the proposed model takes 20 epochs to train the model with 4400 iterations and 220 iterations per epoch with a 0.01 learning rate on a single CPU. So, training progress shows a little bit of distortion, which causes a lower training model classification accuracy.

Training progress of CNN on private alpha thalassemia dataset.

Table 6 states the training and testing performance metrics results of the public alpha thalassemia dataset using CNN and it shows that the model achieves 68.93%, 31.07%, Nil, 68.93%, 0, 31.07, Nil, 0 and 100 of training classification accuracy, misclassification rate, sensitivity, specificity, F1-score, false positive rate, false negative rate, false omission rate and false discovery rate respectively and model achieves 77.27%, 22.37%, Nil, 77.27%, 0, 22.37, Nil, 0 and 100 of testing classification accuracy, misclassification rate, sensitivity, specificity, F1-score, false positive rate, false negative rate, false omission rate and false discovery rate respectively. So, the proposed model is underfitting on publicly available datasets.

Figure 4 depicts the training progress of the CNN using the public alpha thalassemia dataset, and it shows that the proposed model takes 20 epochs to train the model with 60 iterations and 3 iterations per epoch with a 0.01 learning rate on a single CPU. So, training progress shows almost a wavy pattern in the same sequence due to fewer instances, which causes less training model classification accuracy and underfitting.

Table 7 States the training and testing performance metrics results of the private alpha thalassemia dataset using XGBoost and it shows that the model achieves 100%, 0%, 100%, 100%, 100%, 0, 0, 0 and 0 of training classification accuracy, misclassification rate, sensitivity, specificity, F1-score, false positive rate, false negative rate, false omission rate and false discovery rate respectively and the model achieves 99.34%, 0.66%, 99.32%, 99.34%, 98.88%, 0.66, 0.68, 0.28 and 1.54 of testing classification accuracy, misclassification rate, sensitivity, specificity, F1-score, false positive rate, false negative rate, false omission rate and false discovery rate respectively.

Training progress of CNN on public alpha thalassemia dataset.

Table 8 states the training and testing performance metrics results of the public alpha thalassemia dataset using XGBoost and it shows that the model achieves 100%, 0%, 100%, 100%, 100%, 0, 0, 0 and 0 of training classification accuracy, misclassification rate, sensitivity, specificity, F1-score, false positive rate, false negative rate, false omission rate and false discovery rate respectively and model achieves 75%, 25%, 50%, 84.38%, 52.17%, 15.63, 50, 18.18 and 45.45 testing classification accuracy, misclassification rate, sensitivity, specificity, F1-score, false positive rate, false negative rate, false omission rate and false discovery rate respectively. So, the overall performance shows that the model is overfitted.

Table 9 States the training and testing performance metrics results of the private beta thalassemia dataset using XGBoost and it shows that the model achieves 98.38%, 1.62%, 98.20%, 98.57%, 98.38%, 1.43, 1.80, 1.79 and 1.43 of training classification accuracy, misclassification rate, sensitivity, specificity, F1-score, false positive rate, false negative rate, false omission rate and false discovery rate respectively and the model achieves 97.60%, 2.40%, 97.42%, 97.77%, 97.62%, 2.23, 2.58, 2.62 and 2.18 of testing classification accuracy, misclassification rate, sensitivity, specificity, F1-score, false positive rate, false negative rate, false omission rate and false discovery rate respectively.

Table 10 states the training and testing performance metrics results of the private beta thalassemia dataset using CNN and it shows that the model achieves 98.35%, 1.65%, 98.48%, 98.23%, 98.35%, 1.77, 1.52, 1.51 and 1.77 of training classification accuracy, misclassification rate, sensitivity, specificity, F1-score, false positive rate, false negative rate, false omission rate and false discovery rate respectively and model achieves 98.10%, 1.90%, 97.80%, 98.39%, 98.10%, 1.61, 2.20, 2.2 and 1.60 of testing classification accuracy, misclassification rate, sensitivity, specificity, F1-score, false positive rate, false negative rate, false omission rate and false discovery rate respectively.

Figure 5 depicts the training progress of CNN using a private beta thalassemia dataset and it shows that the proposed model takes 20 epochs to train the model with 4360 iterations and 218 iterations per epoch with 0.01 learning rate on a single CPU. So, training progress shows smoothness and little distortion which causes less training model classification accuracy.

Training progress of CNN on private beta thalassemia dataset.

Table 11 states the training and testing performance metrics results of the public beta thalassemia dataset using XGBoost and it shows that the model achieves 100%, 0%, 100%, 100%, 100%, 0, 0, 0 and 0 of training classification accuracy, misclassification rate, sensitivity, specificity, F1-score, false positive rate, false negative rate, false omission rate and false discovery rate respectively and the model achieves 88%, 12%, 93.33%, 66.67%, 92.56%, 33.33, 6.67, 28.57 and 8.19 of testing classification accuracy, misclassification rate, sensitivity, specificity, F1-score, false positive rate, false negative rate, false omission rate and false discovery rate respectively.

Table 12 states the training and testing performance metrics results of the public beta thalassemia dataset using CNN and it shows that the model achieves 93.71%, 6.29%, 92.76%, 100%, 96.25%, 0, 7.24, 32.35 and 0 of training classification accuracy, misclassification rate, sensitivity, specificity, F1-score, false positive rate, false negative rate, false omission rate and false discovery rate respectively and model achieves 96%, 4%, 95.52%, 100%, 97.71%, 0, 4.48, 27.27 and 0 testing classification accuracy, misclassification rate, sensitivity, specificity, F1-score, false positive rate, false negative rate, false omission rate and false discovery rate respectively. The results show that the proposed model underfits on the public dataset.

Table 13 depicts the comparative analysis of the proposed model with previous studies with accuracy. It shows that all previous studies worked on various classification problems like alpha thalassemia and beta thalassemia but did not work in a single study and also did not perform any deep learning study. On the other side, the proposed model achieved the highest accuracy as compared to the previous studies, it achieves 99.34% classification accuracy of alpha thalassemia using XGBoost and 98.10% classification accuracy of beta-thalassemia using CNN also the proposed model comprises a deep learning case study. So, the case study shows deep learning performs well on thalassemia private features data as compared to the public due to fewer instances.

Conclusion

This study has demonstrated the potential of deep learning models, specifically CNN and XGBoost, in accurately predicting alpha and beta thalassemia using both private and public datasets. XGBoost achieved a remarkable 99.34% accuracy on the private alpha thalassemia dataset, while CNN reached an accuracy of 98.10% on the private beta thalassemia dataset. These high accuracies highlight the effectiveness of deep learning in medical diagnostics, especially when trained on high-quality, feature-rich private datasets. The comparison between public and private datasets revealed that private datasets, due to their size and richness, consistently yield better model performance. While the results are promising, there is room for future work to further enhance model generalization on smaller and more varied public datasets. Future research can focus on:

-

1.

Data Augmentation: Implementing techniques to synthetically increase the size of public datasets, improving model accuracy on smaller datasets.

-

2.

Hybrid Models: Combining CNN and XGBoost in an ensemble approach to leverage their strengths for more robust predictions.

-

3.

Explainability: Developing interpretable AI models to provide insights into which features most significantly contribute to accurate thalassemia detection.

-

4.

Real-World Application: Deploying these models in clinical settings to validate their effectiveness in large-scale screenings and early detection efforts. The proposed model helps the doctors and clinicians to detect the thalassemia in its early stages with the input of patients blood reports. This approach can save million of lives.

Data availability

The original contributions presented in the study are included in the article; We utilized two publicly available dataset from the Kaggle data repository at https://www.kaggle.com/datasets/letslive/alpha-thalassemia-dataset and Github data reposiotory at https://github.com/mahnoor-dotcom/analyzing-thalassemia-with-ML/blob/main/IDA_BTT.csv. Furthermore, we collect private data and utilizied this data in this study. Private data and source code will be available on the request from corresponding author.

References

Hossain, M. S., Hasan, M. M., Petrou, M., Telfer, P. & Al Mosabbir, A. Te parental perspective of thalassaemia in Bangladesh: lack of knowledge, regret, and barriers. Orphanet J. Rare Dis. 16 (1), 1–10 (2021).

Hirimutugoda, Y. M. & Wijayarathna, G. Image analysis system for detection of red cell disorders using artifcial neural networks. Sri Lanka J. Bio-Medical Inf. 1 (1), 35 (2010).

Zhuang, Q. et al. Te value of combined detection of HbA2 and HbF for the screening of thalassemia among individuals of childbearing ages. Zhonghua Yi Xue Yi Chuan Xue Za Zhi. 39 (1), 16–20 (2022).

Yin, A. et al. The prevalence and molecular spectrum of alpha- and beta-globin gene mutations in 14,332 families of Guangdong Province, China. PLoS One, 9, e89855. (2014).

Lai, K., Huang, G., Su, L. & He, Y. The prevalence of thalassemia in Mainland China: evidence from epidemiological surveys. Sci. Rep. 7, 920 (2017).

He, S. et al. Molecular characterization of alpha- and beta-thalassaemia among children from 1 to 10 years of age in Guangxi, a multi-ethnic region in Southern China. Front. Pediatr. 9, 724196 (2021).

Zheng, L. et al. Screening of some indicators for alpha-thalassemia in Fujian Province of Southern China. Int. J. Gen. Med. 14, 7329–7335 (2021).

Wang, Z. et al. Prevalence and molecular spectrum of alpha- and beta-globin gene mutations in Hainan, China. Int. J. Hematol. 114, 307–318 (2021).

Wang, M., Zhang, X., Zhang, Y. & Xiao, M. Prevalence and genetic analysis of thalassemia and hemoglobinopathy in different ethnic groups and regions in Hainan Island, Southeast China. Front. Genet. 13, 874624 (2022).

Huang, T. L. et al. Gene mutation spectrum of thalassemia among children in Yunnan Province. Front. Pediatr., 8, (2020).

Viprakasit, V. & Ekwattanakit, S. Clinical classification, screening and diagnosis for thalassemia. Hematol. Oncol. Clin. North. Am. 32 (2), 193–211 (2018).

Rund, D. Thalassemia 2016: modern medicine battles an ancient disease. Am. J. Hematol. 91, 15–21 (2016).

Achour, A., Koopmann, T. T., Baas, F. & Harteveld, C. L. The evolving role of next-generation sequencing in screening and diagnosis of hemoglobinopathies. Front. Physiol. 12, 686689 (2021).

Munkongdee, T., Chen, P., Winichagoon, P., Fucharoen, S. & Paiboonsukwong, K. Update in laboratory diagnosis of thalassemia. Front. Mol. Biosci. 7, 74 (2020).

Laengsri, V. et al. ThalPred: a web-based prediction tool for discriminating thalassemia trait and iron deficiency anemia. BMC Med. Inf. Decis. Mak. 19, 212 (2019).

Urrechaga, E. & Hoffmann, J. Critical appraisal of discriminant formulas for distinguishing thalassemia from iron deficiency in patients with microcytic anemia. Clin. Chem. Lab. Med. 55, 1582–1591 (2017).

Hoffmann, J. J., Urrechaga, E. & Aguirre, U. Discriminant indices for distinguishing thalassemia and iron deficiency in patients with microcytic anemia: a meta-analysis. Clin. Chem. Lab. Med. 53, 1883–1894 (2015).

Tong, L. et al. A new red cell index and portable RBC analyzer for screening of iron deficiency and thalassemia minor in a Chinese population. Sci. Rep. 7, 10510 (2017).

Li, B., Han, X., Ma, J. & Yang, D. Mutation spectrum and erythrocyte indices characterization of alpha-thalassemia and beta-thalassemia in Sichuan women in China: a thalassemia screening survey of 42,155 women. J. Clin. Pathol. 74, 182–186 (2021).

Saleh-Gohari, N., Khademi Bami, M., Nikbakht, R. & Karimi-Maleh, H. Effects of alpha-thalassemia mutations on the hematological parameters of beta-thalassemia carriers. J. Clin. Pathol. 68, 562–566 (2015).

Singha, K., Taweenan, W., Fucharoen, G. & Fucharoen, S. Erythrocyte indices in a large cohort of beta-thalassemia carriers: implication for population screening in an area with high prevalence and heterogeneity of thalassemia. Int. J. Lab. Hematol. 41, 513–518 (2019).

Mamtani, M., Jawahirani, A., Rughwani, V., Das, K. & Kulkarni, H. Value of mean corpuscular volume and mean corpuscular hemoglobin in screening for beta-thalassemia trait. Acta Haematol. 116, 223–225 (2006).

Sirichotiyakul, S., Wanapirak, C., Srisupundit, K., Luewan, S. & Tongsong, T. A comparison of the accuracy of the corpuscular fragility and mean corpuscular volume tests for the alpha-thalassemia 1 and beta-thalassemia traits. Int. J. Gynaecol. Obstet. 107 (1), 26–29 (2009).

Singha, K., Taweenan, W., Fucharoen, G. & Fucharoen, S. Erythrocyte indices in a large cohort of beta-thalassemia carrier: implication for population screening in an area with high prevalence and heterogeneity of thalassemia. Int. J. Lab. Hematol. 41, 513–518 (2019).

Brancaleoni, V., Di Pierro, E., Motta, I. & Cappellini, M. D. Laboratory diagnosis of thalassemia. Int. J. Lab. Hematol. 38 (Suppl. 1), 32–40 (2016).

Hoffmann, J. J. M. L. & Urrechaga, E. Role of RDW in mathematical formulas aiding the differential diagnosis of microcytic anemia. Scand. J. Clin. Lab. Invest. 80 (6), 464–469 (2020).

Lian, Y. et al. Reticulocyte hemoglobin equivalent (Ret-He) combined with red blood cell distribution width has a differentially diagnostic value for thalassemias. Hemoglobin 43, 4–5 (2019).

Krizhevsky, A., Sutskever, I. & Hinton, G. E. ImageNet classification with deep convolutional neural networks. Adv. Neural. Inf. Process. Syst. 25, 1097–1105 (2012).

Litjens, G. et al. A survey on deep learning in medical image analysis. Med. Image Anal. 42, 60–88 (2017).

LeCun, Y., Bengio, Y. & Hinton, G. Deep learning. Nature 521 (7553), 436–444 (2015).

Chen, T. & Guestrin, C. XGBoost: A scalable tree boosting system, Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pp. 785–794. (2016).

Lundberg, S. M. & Lee, S. I. A unified approach to interpreting model predictions. Adv. Neural. Inf. Process. Syst., pp. 4765–4774. (2017).

Pirracchio, R., Petersen, M. L. & van der Laan, M. Improving propensity score estimators’ robustness to model misspecification using super learner. Am. J. Epidemiol. 181 (2), 141–149 (2015).

Ibrahim, M. et al. Fuzzy-based fusion model for β-thalassemia carriers prediction using machine learning technique, Advances in Fuzzy Systems, vol. 2024, p. 4468842. (2024).

Liu, S. T. An application of machine learning to thalassemia diagnosis. J. Comput. Commun. 12, 211–230 (2024).

Kumar, A. P. S. R. A. S. et al. Ascertaining genetics of β-thalassemia and sickle cell disease using machine learning heuristics. Preprints, (2024).

Zhang, F. et al. Enhancing thalassemia gene carrier identification in non-anemic populations using artificial intelligence erythrocyte morphology analysis and machine learning. Eur. J. Haematol. 112 (5), 692–700 (2024).

Sadiq, S. et al. Classification of β-thalassemia carriers from red blood cell indices using ensemble classifier. IEEE Access. 9, 45528–45538 (2021).

Kolambage, N. Alpha Thalassemia Dataset-Carriers Vs Normal, Accessed [02 September 2024] https://www.kaggle.com/datasets/letslive/alpha-thalassemia-dataset

Mahnoor Analyzing Thalassemia with ML, Accessed [ 02 September 2024] https://github.com/mahnoor-dotcom/analyzing-thalassemia-with-ML/blob/main/IDA_BTT.csv

Funding

No funding is involved in this study.

Author information

Authors and Affiliations

Contributions

Conceptualization M.U.N, M.T.N, and M.A.K.; methodology, M.U.N, M.A.K, and M.Z; validation, S.A, M.A, O.A., and K.M.A.; formal analysis, M.U.N, T.M.G., and S.A.; investigation, M.A., and T.M.G.; resources, M.U.N., T.M.G., and M.T.N; data curation, M.U.N, M.T.N and M.A.K; writing—original draft preparation, M.Z., S.A., O.A., and M.A.K.; writing—review and editing, M.U.N, M.A.K, and M.A; visualization, M.A.K., S.A; supervision, M.Z, and M.A.K. All authors have read and agreed to the published version of the manuscript.

Corresponding authors

Ethics declarations

Competing interests

The authors declare no competing interests.

Conflicts of Interest

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Ethics approval and consent to participate

This study was approved by the Ethics committee of Riphah International University, Pakistan. Informed consent was obtained from all subjects. All the experimental procedures were performed by the standards of the Declaration of Helsinki 1964.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Electronic supplementary material

Below is the link to the electronic supplementary material.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Nasir, M.U., Naseem, M.T., Ghazal, T.M. et al. A comprehensive case study of deep learning on the detection of alpha thalassemia and beta thalassemia using public and private datasets. Sci Rep 15, 13359 (2025). https://doi.org/10.1038/s41598-025-97353-0

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-97353-0