Abstract

Video classification is an important domain within computer vision. It categorizes video content into meaningful classes such as actions or emotional states. In relation to image classification, it has to deal with the problem of spatiotemporal dimensions as well as a large data volume that is present in a video. In this work we introduce a novel distance metric based video summarization technique which minimizes the size of the dataset while maintaining key temporal information. We performed our experiments using distance metrics such as norm of rows distance other than euclidean distance, norm of columns distance and eigenvalue based distance metrics. Our results show that the norm of rows distance performed well and provides a suitable balance between efficiency and accuracy. Our proposed method achieved significant accuracy of \(81.23\%\), \(92.42\%\), \(98.89\%\) and \(90.27\%\) on MMAC, UCF101, UCF11 and HMDB51 benchmark datasets. Our proposed technique continuously tracked temporal information while recalculating the distance from each key frame. Due to less computational demands, our approach performs effectively in real-world application scenarios.

Similar content being viewed by others

Introduction

After the emergence of deep learning techniques, video classification task has gained much popularity among researchers. It has become an active area of research with applications in diverse fields1. The main goal of video classification is to recognize and categorize videos according to the actions present in it. Meanwhile, it should also address the challenge of temporal information2. This means that spatial and temporal features must be captured together to understand activities such as walking or sitting etc3,4. Managing this spatio temporal information makes the task computationally cumbersome. It also needs considerable storage and processing power due to large number of frames present in video datasets. Moreover, the classification process is made even more intricate due to variations in illumination, camera angles and frame rates5. This variability and complexity are still a big challenge in computer vision6. Several solutions have been explored in literature for these challenges. One common approach involves dimensionality reduction which simplifies the data by retaining only its most crucial features. Linear methods such as Principal Component Analysis (PCA) project data to maximize variance while nonlinear techniques such as t-SNE7 and UMAP8 focus on local structure with better scalability for large datasets. Linear Discriminant Analysis (LDA) maximizes class separability and Independent Component Analysis (ICA) extracts independent factors. Similarly, autoencoders use an encoder-decoder structure for hierarchical feature learning. More recent works involve spectral embedding techniques such as Isomap and Laplacian eigenmaps which model data manifolds to capture global geometry. In parallel, deep learning approaches capture spatial and temporal features simultaneously. CNNs excel at spatial patterns while RNNs handle sequential dependencies. 3D CNNs extend 2D filters temporally while two-Stream networks combine RGB inputs with optical flow. Moreover, recent works on transformer networks utilize self-attention for long range dependencies while STGCNs9 analyze spatio temporal signals in pose based tasks. Despite significant progress in video classification, many existing methods still face practical limitations particularly concerning computational efficiency and handling of temporal information. Many recent models, such as 3D CNNs and transformer based architectures are computationally intensive and memory hungry. Additionally, most models are dependent on dense frame sequences or long temporal windows. This leads to temporal redundancy and limited interpretability.

To address the limitations that we mentioned above especially high computational cost and temporal redundancy, we propose a lightweight summarization technique based on the Norm of Rows (Max) distance. Unlike deep learning models that require large datasets to perform well, our method retains temporal information and provides better classification with significantly less data. Thus, our technique is very well suited for deployment in real-time applications. The key contributions of our work are as follows.

-

1.

A new distance metric: We propose a new distance metric that provides better classification performance in video classification applications.

-

2.

Efficiency without compromising accuracy: Our results highlight the robustness of our summarization approach which proves that key information is retained even with reduced input size of videos. This can definitely save storage and computational resources.

-

3.

Integration with deep learning models: Our method is scalable which allows the integration of deep learning models into resource limited applications while maintaining high performance.

The remainder of the paper is organized as follows. The section “Related work” refers to the related work. In the section “Methodology”,the methodology of our work is described. The section “Evaluation and results” presents the evaluation and results of our work, while the section “Discussion and future work” is dedicated to discussion and future work on the results presented in the previous section. The section “Conclusion” concludes our work.

Related work

Before the advent of deep learning methods, most video classification tasks relied on manually extracted features. Out of those, few of the notable methods include the Histogram of Oriented Gradients (HOG) as proposed by Dalal et al.10 and Optical Flow method which is proposed by Fleet et al.11. These two methods became highly notable in extracting motion and visual cues from video sequences. Similarly, other feature encoding techniques such as histogram of pyramids were utilized to represent key video characteristics. Subsequently, these encoded features were combined with classifiers such as Support Vector Machines (SVMs) and Gaussian Mixture Models (GMMs) as presented in Nguyen et al.12 to achieve classification. Additionally, temporal dependencies were addressed using techniques like Hidden Markov Models (HMMs) and Dynamic Time Warping (DTW) as proposed by Muller et al.13. This led to slight improvements in the accuracy of the temporal modeling. Moreover,Kumar et al. 14 proposed a binary-classifier-enabled filtering approach for semi supervised learning which is designed to refine pseudo labels and remove uncertain predictions. The above selective learning mechanism improved performance in cases with limited labeled data. However, there were still challenges related to scalability and generalization for large datasets. Some other methods include Spatial-temporal Interest Points (STIPs)15, Improved Dense Trajectories (iDT)16, SIFT-3D17 and ActionBank18 which offer alternative ways to capture spatiotemporal patterns in video content.

Over time with the rise of deep learning methods, video classification also gained attraction again19. For instance, convolutional neural networks (CNN) and recurrent neural networks (RNN)20 allowed the extraction of hierarchical spatial features and modeled sequential dependencies. Although CNNs were originally developed for image recognition tasks which later on excelled at capturing spatial features in video frames too. However, they failed to account for temporal dependencies. This shortcoming was addressed with the introduction of 3D Convolutional Neural Networks (C3D)21. This technique applied convolutional filters in both spatial and temporal dimensions. This method demonstrated much better performance on benchmark datasets such as UCF10122 and HMDB5123. However, C3D faced significant challenges with particularly high computational cost and extensive data requirements 21.

The literature also reports various techniques to handle the limitations of C3D. In one of the works, Long Short-Term Memory (LSTM) networks were introduced to model long term temporal dependencies, overcoming issues such as vanishing gradient problem in traditional RNNs. By integrating CNNs for spatial extraction with LSTMs for temporal modeling, architectures such as Long term Recurrent Convolutional Networks (LRCN)24 achieved state-of-the-art performance in action recognition. However, training LSTMs on long video sequences posed computational challenges, and their lack of parallelizability slowed training and inference25. Moreover, two-Stream Networks presented by Gupta et al.26 advanced video classification by combining spatial and temporal features through separate streams for RGB frames and optical flow. Similarly,the two Stream ConvNet proposed by Zisserman et al.27 demonstrated notable success in capturing both appearance and motion, achieving competitive results across several datasets. However, the resource intensive computation of optical flow and its susceptibility to noise, as well as challenges in handling long range temporal dependencies, limited its effectiveness in recognizing extended actions. Further improvements in video classification led to the development of Inflated 3D ConvNets (I3D)28, which combined the benefits of 2D and 3D CNNs. I3D offered enhanced spatiotemporal learning capabilities, but this came with the drawback of large model sizes and heavy computational requirements. Additionally, I3D struggled to recognize fine grained temporal variations that occur over short periods, limiting its ability to capture subtle movements essential for some action recognition tasks. Singh et al. 29 also used light CNN based architectures to localize objects. In other work, Kumar30 also presented some future directions for deep learning models to improve the generalization and robustness of the model. In another paper Kumar31 also discussed some adversarial techniques to reduce biases in models. Some other works such as anjbarzadeh et al.32 and khan et al.33 also proposed CNN and SQL-based architectures to improve model classification and scalability.

However, in recent years, transformer based models, capture both spatial and temporal relationships without relying on convolutional or recurrent layers34. Another method, TimeSformer35 which is an adaptation of Vision Transformer (ViT)36 for video data, divides each video frame into patches and applies self attention mechanisms in both spatial and temporal dimensions. The above architecture allowed the model to process long video sequences more efficiently. It also demonstrated better performance on several video action recognition datasets. However, it is also associated with high computational cost due to self attention mechanism that it deploys. This becomes more severe when applied to long sequences. Additionally, transformers also require large datasets with labels during training of the algorithm which is also another limiting factor. A similar work on MViTv237, the contributors developed an enhanced version of the Multiscale Vision Transformer. They introduced decomposed positional embeddings and residual pooling connections. These changes helped the model to capture spatial and temporal information in a better way for video classification.

Below, we will now present some distance metrics which are used in our work.

Distance metrics

The most important part in identifying the key segments in a video is to calculate the distance between it’s frames. Normally, euclidean distance has been used previously but it often falls short when dealing with complex variations in high dimensional video data. To solve this challenge, we propose several alternative distance metrics including Norm of Rows, Norm of Columns, Max of Eigenvalue and Sum of Eigenvalues in addition to the Euclidean distance. We applied these distances to capture different spatial and structural variations among frames to improve summarization process. Here, we present the mathematical explanation of these distances.

a. Euclidian Distance: We define the Euclidean distance between two frames \(F_i\) and \(F_j\) as an \(n\) dimensional vectors as stated below:

where \(d\) is the distance between frames and \(f_{i,k}\) and \(f_{j,k}\) are the \(k\)-th components of the frames \(F_i\) and \(F_j\), respectively. The above metric is used to calculate the direct pixel to pixel distance between two frames.

b. Norm of Rows Distance: This is our proposed Norm of Rows (Max) distance which offers a new approach by focusing on the largest deviation between corresponding rows of two frames.

In order to calculate the distance between frames our proposed technique follows below steps:

-

Step 1: Firstly, we calculate the difference between elements (pixel values) of the two matrices (frames).

-

Step 2: Now, calculate the Norm of Rows distance by applying a norm operation to each row of the difference matrix.

-

Step 3: Then select the maximum row norm as the final distance value.

In order to explain our proposed concept mathematically, we define \(F_1 \in \mathbb {R}^{m \times n}\) and \(F_2 \in \mathbb {R}^{m \times n}\) as two frames or matrices with \(m\) rows and \(n\) columns (e.g.,pixel data). The steps for computing the proposed norm of rows (Max) distance are as follows:

First, we calculate the difference between the elements of the two frames.

where \(D \in \mathbb {R}^{m \times n}\) is the difference matrix.

Now, for each row \(i\) of the difference matrix \(D\), we then compute the norm. We used the \(L_2\) norm (Euclidean norm):

where \(D_i \in \mathbb {R}^n\) is the \(i\)th row of matrix \(D\), and \(D_{i,j}\) is the \(j\)th element of row \(i\).

Now, we determine the final distance by the maximum norm among all rows:

This maximum norm identifies the row with the largest deviation between the two frames and uses that as the distance measure.

c. Norm of Column Distance: As with the the norm of rows (Max) distance, this norm of columns (Max) distance is designed to measure the dissimilarity between two frames (or matrices) by focusing on the largest deviation between corresponding columns instead of rows. Mathematically, we define \(F_1 \in \mathbb {R}^{m \times n}\) and \(F_2 \in \mathbb {R}^{m \times n}\) as two frames or matrices, as in the case previously while calculating the distance norm of rows. Here, the steps for computing the Norm of Columns (Max) distance are as follows:

First, we calculate the element wise difference between the two frames:

where \(D \in \mathbb {R}^{m \times n}\) is the difference matrix.

Now, for each column \(j\) of the difference matrix \(D\), we compute the norm as:

where \(D_j \in \mathbb {R}^m\) is the \(j\)th column of matrix \(D\), and \(D_{i,j}\) is the \(i\)th element of column \(j\).

We determine the final distance by the maximum norm among all columns:

This distance is usually good for action recognition applications where individual columns correspond to specific features for instance joint angles or limb positions.

d. Max of Eigen value Distance: This distance uses the concept of eigenvalues to quantify the dissimilarity between two frames. It calculates the difference between two frames by forming a square matrix and multiplying this difference matrix by its transpose. Then, it calculates the eigen values from this result and the maximum eigenvalue becomes the new distance. Mathematically, we define \(F_1 \in \mathbb {R}^{m \times n}\) and \(F_2 \in \mathbb {R}^{m \times n}\) as two frames and where \(m\) is the number of rows and \(n\) is the number of columns. Here, we present the steps to calculate above distance:

First, we calculate the element wise difference between the two frames:

where \(D \in \mathbb {R}^{m \times n}\) is the difference matrix.

Next, we compute the product of the difference matrix \(D\) and its transpose:

Here, \(A \in \mathbb {R}^{m \times m}\) is a square matrix that encapsulates the covariance like structure of the differences between the frames.

Now, we calculate the eigenvalues of the matrix \(A\):

where \(\lambda _i\) represents the \(i\)th eigenvalue of matrix \(A\). Here, the eigenvalues capture the amount of variance along each principal direction of the difference matrix.

Now, we select the largest eigenvalue as our distance measure, i.e.,

where \(k\) is the number of eigenvalues. Here, the maximum eigenvalue represents the principal mode of variation between frames, capturing the most significant deviation. By summarizing frame differences through the largest eigenvalue, it retains meaningful action information while being computationally efficient.

e. Sum of Eigen value Distance: This distance metric is the same as the above Max of Eigen value distance except that choosing the maximum eigen value as distance, it sums all the eigen values to represent the distance. Mathematically, we calculate the sum as follows:

This sum of eigenvalues distance metric captures all directions of variance in the data, providing a broader measure of dissimilarity between the frames compared to just the maximum eigenvalue.

Properties of distance metrics

A valid distance metric must satisfy the following three fundamental properties as suggested in the literature. To this aim, we present our findings on the distance metrics as follows:

a. Non-negativity: This property states that the distance measured between two points \(x\) and \(y\) is always zero or positive. Furthermore, the distance is equal to zero if and only if the two points are identical, i.e., \(d(x, y) = 0\) if and only if \(x == y\).

b. Symmetry: According to this symmetric property of the metric, the distance from point \(x\) to point \(y\) should be identical to the distance from point \(y\) to point \(x\) .

c. Triangle Inequality: This property show that the distance between two points \(x\) and \(z\) must be less than or equal to the sum of the distances between \(x\) and \(y\) and \(y\) and \(z\). This property ensures consistency and coherence when measuring distances.

The above properties ensure that the metric is mathematically well defined and suitable for measuring distances in various applications. We tested all the above properties on a video that had 140 frames and found that our distance metric satisfies all the above criteria to be considered a valid distance metric. Figure 1 also verifies our claim, where we can see the symmetry of all the distances and non-negativity as well. The x-axis and y-axis refer to the frames. This also holds for the triangle inequality.

Symmetry of distances between all frames of a video using various distances.

In order to visualize our approach, we tested our Norm of Rows distance on one of the videos from the TVSum dataset38 in the following, where Fig. 2 shows frames from the complete video (we trimmed the video to 517 frames only to test our idea), and Fig. 3 shows the summary frames. Visual results suggest that our distance metric captures the key frames efficiently.

Complete Video frames with numbers.

Summary frames.

Methodology

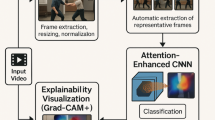

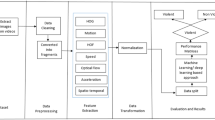

The high-level block diagram for our technique is presented in Fig. 4. In conventional methodologies, such as the upper flow of Fig. 4 for video classification, a video is provided as input. The frames of the video are then treated by a neural network to classify the content employing feature extraction techniques. In contrast, our approach seeks to optimize data efficiency by significantly reducing the size of the input dataset by summarizing only the most pertinent frames, as shown in the lower half of Fig. 4. The proposed summarization process starts with selecting the first frame as the initial key frame. Then we start calculating the distances between this key frame and the next frames to track any possible visual changes. The peaks in these distances refer to shifts in information and we identify them as our new key frames. Once we identify the new keyframe by selecting the first peak then we again start calculating the distance between this newly selected keyframe and the rest of the frames present in the video. In this way, we calculate all the key frames of the video. This newly created summarized dataset is now fed into the CNN-LSTM model where we extract features and do classification. In this whole process,our main goal is to achieve classification accuracy comparable to that achieved using the full dataset.

Block Diagram of the proposed architecture.

The threshold parameter in our algorithm to obtain the key frames actually defines the peak and dictates the sensitivity of key frame detection. It controls the overall summarization ratio (e.g., 20%, 30%, 50%, etc.). If the value of this threshold is low, it means that we are going to select more peaks and therefore, more frames are shortlisted for the summary and vice versa. However, we steadily varied the threshold to generate summaries between 20% and 80% of the original data size. This scheme ensured temporal granularity and quality keyframe selection in different datasets.

In addition to this sensitivity, our method is robust against noise. It minimizes the sensitivity related to variations or background fluctuations. Meanwhile, we utilized transfer learning to extract features and a pre trained model (Resnet50) for spatial information from summarized videos. Afterward, during temporal feature extraction , we grouped four powerful feature vectors for each video. These features are then passed onto an LSTM model for classification.

Algorithmic description of keyframe selection process

For the sake of reproducibilty, we present our algorithm 1 below:

Keyframe Selection Algorithm

The above algorithm starts by initializing the first frame as the initial keyframe. Then it calculates distances between this reference frame and all remaining frames using the selected distance metric (e.g., Norm of Rows). When this distance surpasses a threshold \(\tau\), the corresponding frame is marked as a new keyframe. We repeat this process until the end of the video and ensure that the key frames capture significant temporal transitions.

Evaluation and results

We implemented our proposed framework on four datasets that includes UCF101, UCF1139, and the CMU Multimodal Activity (MMAC)40 dataset. We also tested our method on HMDB5123 for cross domain evaluation. We chose these datasets because they offer diverse range of activities and allow a comprehensive assessment of our methodology. Firstly, the CMU MMAC dataset includes various tasks such as cutting, stirring, pouring and cooking among others. All these tasks capture natural human activities in real world settings. Similarly, the UCF101 dataset also has wide variety of activities including sports and everyday actions. Nevertheless, the UCF11 dataset is used for ablation study along with comparisons with recent state-of-the-art methods.

We performed all the experiments on a system running Ubuntu 20.04 with an AMD Ryzen 7 4800H CPU and 8 GB of RAM, with and without GPU acceleration. We implemented and trained deep learning models using Python and PyTorch. Additional libraries such as NumPy, Pandas, and Matplotlib were used for data pre-processing and visualization.The input video frames were resized to \(64 \times 64 \times 3\), and spatial-motion fusion was applied. Convolutional layers with \(3 \times 3\) kernels were initialized using the Glorot initializer, with the number of filters doubling at each layer. Max pooling layers of size \(2 \times 2\) were used for downsampling. The hyperparameters were experimentally tuned and the final configuration used a learning rate of 0.01, a momentum of 0.5, and a batch size of 32. We extracted spatial features using a pre trained ResNet-50 model, and temporal modeling was performed using an LSTM network. Moreover, we extracted individual frames from all videos present in the datasets. Through this we treated the videos as a sequence of images and making it easier to analyze the content frame by frame. Then, we also converted the frames from RGB to grayscale for the sake of simplifying the data and to reduce the input dimensionality. Below, we will now present our results on selected datasets:

Evaluation on MMAC dataset

CMU Multimodal Activity (MMAC) dataset is a collection of recordings of participants who are performing real-world tasks such as cutting, stirring and cooking. These tasks are stored in the following forms of data:

-

Video Data: This data is captured from multiple cameras including stationary and portable cameras. It also includes visual data from various angles.

-

Audio Data: This data is collected using five microphones that were placed at different places around that setting of the recording.

-

Motion Capture Data: This data is saved using 12 cameras with a 4-megapixel resolution and a 120 Hz frame rate.

-

IMU Data: This data is collected using wired (3DMGX) and Bluetooth (6DOF) IMUs.

-

Wearable Device Data: This data comes from BodyMedia sensors and eWatch devices.

The CMU MMAC dataset was collected from 55 participants, each participating in multiple sub experiments. For this research, we focused on the visual data only that is provided by the onboard RGB camera. We made summaries of the dataset using our distances and then used a pre-trained neural network to classify the actions. For the CMU-MMAC dataset, the participants contributed to making five different types of food, namely: Brownies, Pizzas, Sandwich, Salad, and Scrambled Eggs. There were 42 different classes for this action dataset provided in the supplementary file 1. We also studied the effect of the number of epochs and the size of the dataset size on the F1 score. Table 1 below presents the F1 score for 20% of the Summary dataset size with 5 and 100 epochs.

The F1 score for the complete dataset was 0.7104. We can see from Table 1 that the summaries were unable to reach the F1 score of the complete dataset in 5 epochs. Therefore, we increased the number of epochs to study its impact on the F1 score. However, there was no notable improvement, as we can see in the 100 epochs column of Table 1. As we observed that increasing the number of epochs did not lead to any significant improvement in the F1 score, we shifted our focus to enhancing the dataset size to boost the model’s performance. We increased the size of the summarized dataset, incorporating more key frames to provide the model with richer and more representative information from the video sequences. This allowed us to evaluate the impact of summary size on the F1 score and compare the results against the full dataset. By using a larger summary, we hope to improve generalization and get closer to the F1 score of the full dataset as shown in Table 2.

Next, in the following Table 3 (also shown in Fig. 5 as a graph), we present the Epochs vs F1 score to see a complete picture of increasing the epochs on our MMAC dataset.

No. of Epochs vs F1 Score on MMAC Dataset.

Next,in Table 4 below, the effect of increasing the size of the dataset vs F1 score is presented with Fig. 6 explaining the same, where the x-axis is the data point number and the y-axis represents the actual data. In Table 5 below, we compared our results with the existing work in literature. It is worth noting here that we have achieved the desired results with 13 classes, whereas others have only achieved this with 9 classes. The results are for 5 epochs only with a Norm of Rows based distance. We can further improve the results by increasing the epochs.

Summary Dataset size vs F1 Score on MMAC Dataset.

Evaluation on UCF101 dataset

In addition to our experiments with the CMU Multimodal Activity (MMAC) dataset, we extended our simulations to the UCF101 dataset to further evaluate the performance and generalizability of our proposed methodology. The UCF101 dataset is widely used in the field of action recognition and contains 13,320 videos from 101 different action categories. We utilized the same framework on UCF101 dataset as we applied to MMAC dataset. We tested the performance of our algorithm on a range of actions present in UCF101 dataset beyond kitchen activities which further validated our approach. The results for the UCF101 dataset are presented below in Table 6 and Fig. 7 respectively, and the detailed results are presented in Table 7.

Dataset size vs F1 Score on UCF101 Dataset.

Our experiments on the UCF101 dataset showed better performance overall as was also the case with MMAC dataset. Here, our keyframe extraction approach also utilized the norm-of-rows distance metric to identify meaningful actions within videos. We also noticed improvements in F1 scores even as we increased the size of the data. This observation also confirmed that our approach scales well with larger and diverse datasets. The results improved further with more epochs. By analyzing the above results, we are certain that our technique is well suited for various real world applications in video summarization and action recognition tasks. We also compared our findings with the work presented by Gowda et al.43 on UCF101. The results show that our technique achieved better classification accuracy. In Table 8 we compare the results with some base selection techniques presented by Gowda et al.43.

To further extend our comparison with the literature, we compared our results in Table 9 with other techniques in the literature.

Evaluation on UCF11 dataset

We chose the UCF11 dataset for our ablation study with Rahnama et al.54 as this dataset poses greater challenges due to its wide variations in camera movement, lighting, viewing angles, and cluttered backgrounds. It comprises of 1,600 videos, each classified into one of 11 action sports categories as presented in the supplementary file 1. The comparison of the ablation study is presented in Table 10 where all parameters and techniques are the same as those presented by Rahnama et al.54. The only difference is that we trained the classification model on our own summary instead of using their summarization technique. The results presented in Table 10 reflects the improved performance of our method. We also compared our results with other techniques in literature on the same dataset in Table 11.

Cross-domain evaluation on HMDB51 dataset

We conducted additional experiments on the HMDB51 dataset for the sake of cross domain evaluation of our method. This dataset contains videos with background variations, camera motion and illumination changes which makes it suitable for our cross domain evaluation.

Here, we applied our summarization approach using 50% of the video frames and evaluated multiple distance metrics as we applied to our previous experiments. Table 12 summarizes the results of our algorithm on this dataset.

Here, the results prove that our proposed Norm of Rows distance metric achieves the highest F1 score of 0.9027. The results confirm that our summarization approach also performs well in cross domain scenarios.

Evaluation of summary quality metrics

The quality of any summary is usually evaluated on three metrics that include coverage, redundancy and diversity. These metrics measure how well the selected frames represent the original content. To this aim, we also tested our technique on these three metrics and presented our results in Table 13. Here, we define our original set of frames as \(F = \{f_1, f_2, \ldots , f_N\}\). The summarized subset is defined as \(S = \{s_1, s_2, \ldots , s_K\}\), where \(K < N\). In the following we will provide the basic definitions of the above three metrics and then we will present the results.

Coverage. This metric elaborates how well the summary captured the overall visual information of the full video and is defined as:

where \(\text {Sim}(f_i, s_j)\) represents the cosine similarity between the feature vectors of the original and summarized frames. Here, higher coverage values mean that the summary effectively represented the content of the entire video.

Redundancy. This metric calculates the similarity within frames of a video and is given by:

The lower values of redundancy metric tells that there is a very minor overlap between frames.

Diversity. This metric is a complement to the redundancy metric. It is the degree of variation within the summary and defined as follows:

When a summary is diverse, it better captures the temporal and spatial features of the video. That is why it is important to have as many diverse frames in the video as possible. We used cosine similarity metric to compute the above metrics. Table 13 below presents the results on the HMDB51 dataset using a summarization ratio of 50%.

The results presented in Table 13 show that the proposed Norm of Rows distance metric achieves the highest coverage (0.903) with lowest redundancy (0.143). This resulted in the greatest diversity among summarized frames. Our results confirm that the method effectively captured essential spatiotemporal variations and minimized the redundant frames.

Runtime evaluation and real-time applicability

To test the performance of our algorithm on different hardware setups we simulated further experiments on Ubuntu 20.04 system with an AMD Ryzen 7 4800H CPU and 8 GB RAM. We also tested it on a GPU which had NVIDIA RTX 3060 (6 GB) processor.Here, we calculated the inference time per frame as the average duration required to process all frames of a test video divided by the total number of frames after summarization. Then we repeated the same simulation for three times and averaged over ten randomly selected videos for each dataset.

As shown in Table 14 our proposed summarization method reduces the inference time in all datasets. In our setup on CPU, the computational load was decreased by nearly 50% for 50% of the summary size. For example, the inference time dropped from 32.5 ms to 17.4 ms per frame for UCF11 dataset. This gain is particularly important for real time applications where resources to run these algorithms are limited.

During the simulations on GPU settings we noticed that our improvement is smaller but still noticeable (approximately 15–25%). The reason for this can be that GPUs already parallelize frame level computations. However, the reduction in inference time across datasets confirms that our summarization technique not only maintains classification accuracy but also enhances runtime performance. This makes our model more suitable for deployment in practical real time systems.

Discussion and future work

During our experiments on the above datasets we noticed that training the model for more epochs had no major impact on the F1 score. The proposed model already has learned most of the features in the earlier epochs. Therefore, there was no need to train further as it did not help to reduce overfitting. This can be confirmed from the results shown in Table 3 and Fig. 5. However, when we increased the size of the summarized dataset, we can see a notable improvement in the F1 score. Therefore, from this observation we concluded that a larger and more diverse dataset helped the model in capturing the variations in activities. This conclusion is derived from the results presented in Table 4 and Fig. 6. We also tested our summarization model on different summarization ratios including 20%, 30%, 40%, 50% and 80% of the original frames. The results obtained on this setting across all benchmark datasets showed that higher summarization levels retain more temporal context. This further led to improved classification accuracy. In fact, when the size of the dataset was reduced to around 50% of the original, the results turned out to be the most balanced in terms of computational complexity. This observation makes it clear that our approach can maintain temporal representation while still reducing data volume.

During our course of evaluation on different datasets we compared various distance metrics for key frame extraction in the section “Evaluation and results”. Those metrics include the Norm of rows, Norm of columns, Euclidean distance, Max of Eigenvalues and the Sum of Eigenvalues distance. From the results based on above mentioned distance metrics, it was evident that Norm of rows metric performed best. It captured structural changes across video frames and identified key frames related to spatial relationships and object movements. These features later helped in improving the results which can be verified in the section “Evaluation and results”. The main reason of this metric to perform better than rest seems to be that it pays attention to the small frame to frame shifts. It does not blend all changes into one average value but rather looks at how much motion happens along each spatial or feature direction. Then it pulls out the bigger deviations from that direction. This unique feature helps it to catch the noticeable movements such as hand motion, body shifts or other interactions. In this context, both the theory and the practical results support the claim that this row wise norm is highly sensitive to localized motion energy. As a result, it identified more representative key frames. This led to improved classification accuracy and F1 scores in all datasets that we tested.

We also compared our results with some existing techniques asserted in literature as presented in Tables 5, 9, 10 and 11. From our comparison with these techniques of literature, we derived that our approach of combining video summarization with a pre trained neural network model outperforms existing methods in classification accuracy and F1 score. As we can see in Table 5 for MMAC dataset, our proposed approach reached a classification accuracy of 89.23 which is pretty much higher than the rest of the methods. Similarly, for the UCF101 dataset, our approach achieved an accuracy of 92.42, which is slightly better than the rest, as we can see in Table 9. Moreover, for the UCF11 dataset, our accuracy score is 98.89 which is again better than the other models as shown in Table 11.

Later on, we also tested our model on HMDB51 dataset to make sure that our method works well in cross-domain scenarios. This dataset is full of challenges such as different lighting, blurry motion and cluttered backgrounds. Our results on this dataset also proved to be better than others in literature. Another challenging factor in real video applications is the noise which can significantly affect the quality of the visual features being extracted. Although our study focuses on benchmark datasets with relatively clean data, however, our proposed summarization pipeline is flexible. It can be integrated with recent video restoration methods to improve robustness. Some recent studies, including MB-TaylorFormer V268 and MC-Blur69 have made significant progress in addressing these common video problems of blurriness, changing light conditions and adverse weather effects. We will also incorporate these restoration methods before running the summarization process in future. This will lead to produce cleaner and more consistent key frames.

In future work we will also evaluate our summarization approach under more strict conditions to further establish its robustness to noise. Moreover,we also acknowledge that the rapid evolution of lightweight and transformer based architectures presents good opportunities for further enhancement of our method. We will integrate our methods with such models to improve the efficiency of our system. This relatively new direction forms a key component of our future research. Another future direction can be to work with regularization effect that comes from the summarization itself. When a dataset becomes larger, these deep models often start to cling to redundant visual patterns. This overfitting becomes an easy trap. However, distance based key frame selection reduces that risk by removing frames that do not offer much new information. In our case, we observed consistent improvements in the F1 score in all datasets without any signs of overfitting. This indicates that the proposed summarization process acts as a form of temporal and spatial regularization. Thus, our method promotes a better generalization to unseen video sequences.

Moreover, our framework shows strong resilience to visual noise. In the next stage of our work, we plan to run controlled experiments by intentionally adding issues such as blur, compression, and lighting changes to the videos. This will help us better understand how effectively our summarization method performs under less than perfect conditions. Furthermore,in practical applications, videos often differ in quality, frame rate, and degree of motion or occlusion. Although the datasets used in this study (UCF11, UCF101, MMAC, and HMDB51) include moderate variations in these aspects, our proposed summarization method demonstrates consistent behavior. we also observed that the summarization process maintained temporal coherence and keyframe consistency across videos with slight frame rate irregularities. However, in some cases as severe blur or heavy occlusion, further enhancements are needed. These directions remain part of our planned future work to ensure broader real world scalability.

Conclusion

This work is focused on developing a video classification framework that uses an efficient summarization technique. To this aim, we tried to address the challenges of handling large video datasets while keeping the temporal relationship intact between frames. Our proposed method with distance metric (Norm of Rows distance) outperforms existing metrics in terms of accuracy. Additionally, we developed a unique key frame extraction technique that selects representative frames and maintains the necessary temporal information of the video. To this end, we deployed a CNN-LSTM architecture for classification of the actions present in each video. In our experiments, we proved that neural networks trained on reduced datasets using our summarization method perform equally well as those trained on full datasets. Our analysis showed video summarization as a powerful tool to achieve both efficiency and accuracy in video classification. In future work, our aim is to improve this summarization technique and find its use across a wider range of video analysis tasks. We also plan to test its effectiveness on larger video datasets. In addition, we intend to integrate our method with advanced deep learning models to further strengthen its robustness and improve its accuracy.

Data availability

This study did not generate or analyze any new data. All data used in this study are publicly available from the following sources, while additional information is available from the corresponding author on reasonable request. UCF101 Dataset: https://www.crcv.ucf.edu/data/UCF101.php UCF11 Dataset: https://www.crcv.ucf.edu/data/UCF_YouTube_Action.php CMU MMAC Dataset: https://kitchen.cs.cmu.edu HMDB51 Dataset:https://serre.lab.brown.edu/hmdb51.html

References

ZainEldin, H. et al. Silent no more: a comprehensive review of artificial intelligence, deep learning, and machine learning in facilitating deaf and mute communication. Artif. Intell. Rev.57, 188 (2024).

Ur Rehman, A., Belhaouari, S. B., Kabir, M. A. & Khan, A. On the use of deep learning for video classification. Appl. Sci.13, 2007 (2023).

Rani, R. D. & Prabhakar, C. Combining handcrafted spatio-temporal and deep spatial features for effective human action recognition. Hum.-Centric. Intell. Syst.5, 123–150 (2025).

Xie, J. et al. A transformer-based approach combining deep learning network and spatial-temporal information for raw EEG classification. IEEE Trans. Neural Syst. Rehabil. Eng.30, 2126–2136. https://doi.org/10.1109/TNSRE.2022.3194600 (2022).

Zhang, L., Li, S. Z., Yuan, X. & Xiang, S. Real-time object classification in video surveillance based on appearance learning. In 2007 IEEE conference on computer vision and pattern recognition, 1–8 (IEEE, 2007).

Khan, K. et al. S-kmc: A non-parametric split and kernel-merge clustering algorithm. IEEE Trans. Artif. Intell.5(9), 4443–57 (2024).

Van der Maaten, L. & Hinton, G. Visualizing data using t-sne. J. Mach. Learn. Res.9, 2579–605 (2008).

McInnes, L., Healy, J. & Melville, J. Umap: Uniform manifold approximation and projection for dimension reduction. arXiv preprint, arXiv:1802.03426 (2018).

Han, H. et al. Stgcn: a spatial-temporal aware graph learning method for poi recommendation. In 2020 IEEE International Conference on Data Mining (ICDM), 1052–1057 (IEEE, 2020).

Dalal, N. & Triggs, B. Histograms of oriented gradients for human detection. In 2005 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR’05), vol. 1, 886–893. https://doi.org/10.1109/CVPR.2005.177 (2005).

Fleet, D. & Weiss, Y. Optical Flow Estimation 237–257 (Springer, US, Boston, MA, 2006).

Nguyen, H. & McLachlan, G. Maximum likelihood estimation of gaussian mixture models without matrix operations. Adv. Data Anal. Classif.9, 371–394. https://doi.org/10.1007/s11634-015-0209-7 (2015).

Müller, M. Dynamic Time Warping. In Information retrieval for music and motion 69–84 (Springer, 2007).

Kumar, T. et al. Binary-classifiers-enabled filters for semi-supervised learning. IEEE Access9, 167663–167673 (2021).

Laptev, I. On space-time interest points. Int. J. Comput. Vis.64, 107–123 (2005).

Wang, H. & Schmid, C. Action recognition with improved trajectories. In Proceedings of the IEEE international conference on computer vision, 3551–3558 (2013).

Scovanner, P., Ali, S. & Shah, M. A 3-dimensional sift descriptor and its application to action recognition. In Proceedings of the 15th ACM international conference on Multimedia, 357–360 (2007).

Sadanand, S. & Corso, J. J. Action bank: A high-level representation of activity in video. In 2012 IEEE Conference on Computer Vision and Pattern Recognition, 1234–1241. https://doi.org/10.1109/CVPR.2012.6247806 (2012).

Jing, T., Xia, H., Hamm, J. & Ding, Z. Marginalized augmented few-shot domain adaptation. IEEE Trans. Neural Netw. Learn. Syst.35(9), 12459–69 (2023).

Marhon, S. A., Cameron, C. J. F. & Kremer, S. C. Recurrent Neural Networks 29–65 (Springer, Berlin, Heidelberg, 2013).

Siebert, J. & Urquhart, C. C3d: a novel vision-based 3d data acquisition system. In Proceedings of the Mona Lisa European workshop, combined real and synthetic image processing for broadcast and video production, Hamburg, Germany, 170–180 (Springer, 1994).

Soomro, K., Zamir, A. R. & Shah, M. Ucf101: A dataset of 101 human actions classes from videos in the wild. Preprint. arXiv:1212.0402 (2012).

Kuehne, H., Jhuang, H., Garrote, E., Poggio, T. & Serre, T. Hmdb: a large video database for human motion recognition. In 2011 International conference on computer vision, 2556–2563 (IEEE, 2011).

Donahue, J. et al. Long-term recurrent convolutional networks for visual recognition and description. 2015 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2625–2634 (2014).

Greff, K., Srivastava, R., Koutník, J., Steunebrink, B. & Schmidhuber, J. Lstm: A search space odyssey. IEEE Trans. Neural Netw. Learn. Syst.28, 1. https://doi.org/10.1109/TNNLS.2016.2582924 (2015).

Gupta, P. & Rajput, N. Two-stream emotion recognition for call center monitoring. In Interspeech 2007, 2241–2244. https://doi.org/10.21437/Interspeech.2007-609 (2007).

Simonyan, K. & Zisserman, A. Two-stream convolutional networks for action recognition in videos. In Advances in Neural Information Processing Systems Vol. 27 (eds Ghahramani, Z. et al.) (Curran Associates Inc, 2014).

Carreira, J. & Zisserman, A. Quo vadis, action recognition: a new model and the kinetics dataset. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 6299–6308 (2017).

Singh, A., Raj, K., Kumar, T., Verma, S. & Roy, A. M. Deep learning-based cost-effective and responsive robot for autism treatment. Drones7, 81 (2023).

Kumar, T., Brennan, R., Mileo, A. & Bendechache, M. Image data augmentation approaches: A comprehensive survey and future directions. IEEE Access12, 187536–187571 (2024).

Kumar, T. et al. Debiasing computer vision models using data augmentation based adversarial techniques. In Proceedings of the 3rd Workshop on Bias, Ethical AI, Explainability and the Role of Logic and Logic Programming (BEWARE24), co-located with AIxIA 2024 (Bolzano, Italy, 2024).

Ranjbarzadeh, R. et al. Me-ccnn: Multi-encoded images and a cascade convolutional neural network for breast tumor segmentation and recognition. Artif. Intell. Rev.56, 10099–10136 (2023).

Khan, W. et al. Sql and nosql database software architecture performance analysis and assessments: a systematic literature review. Big Data Cogn. Comput.7, 97 (2023).

Liu, Z. et al. Video swin transformer. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 3202–3211 (2022).

Bertasius, G., Wang, H. & Torresani, L. Is space-time attention all you need for video understanding?. ICML2, 4 (2021).

Ramachandran, P. et al. Stand-alone self-attention in vision models. In Advances in Neural Information Processing Systems32 (2019).

Li, Y. et al. Mvitv2: Improved multiscale vision transformers for classification and detection. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 4804–4814 (2022).

Song, Y., Vallmitjana, J., Stent, A. & Jaimes, A. Tvsum: Summarizing web videos using titles. In Proceedings of the IEEE conference on computer vision and pattern recognition, 5179–5187 (2015).

Liu, J., Luo, J. & Shah, M. Recognizing realistic actions from videos “in the wild”. In 2009 IEEE conference on computer vision and pattern recognition, 1996–2003 (IEEE, 2009).

De la Torre, F. et al. Guide to the carnegie mellon university multimodal activity (cmu-mmac) database (Tech. Rep., Carnegie Mellon University, 2009).

Lu, Y. & Velipasalar, S. Human activity classification incorporating egocentric video and inertial measurement unit data. In 2018 IEEE Global Conference on Signal and Information Processing (GlobalSIP), 429–433 (IEEE, 2018).

Alhersh, T., Brahim Belhaouari, S. & Stuckenschmidt, H. Action recognition using local visual descriptors and inertial data. In European Conference on Ambient Intelligence, 123–138 (Springer, 2019).

Gowda, S. N., Rohrbach, M. & Sevilla-Lara, L. Smart frame selection for action recognition. Proc. AAAI Conf. Artif. Intell.35, 1451–1459 (2021).

Noroozi, M. & Favaro, P. Unsupervised learning of visual representations by solving jigsaw puzzles. In European conference on computer vision, 69–84 (Springer, 2016).

Xu, D. et al. Self-supervised spatiotemporal learning via video clip order prediction. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 10334–10343 (2019).

Gao, R., Oh, T.-H., Grauman, K. & Torresani, L. Listen to look: Action recognition by previewing audio. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 10457–10467 (2020).

Shu, Y., Shi, Y., Wang, Y., Huang, T. & Tian, Y. P-odn: Prototype-based open deep network for open set recognition. Sci. Rep.10, 7146 (2020).

Nguyen, H. P. & Ribeiro, B. Video action recognition collaborative learning with dynamics via pso-convnet transformer. Sci. Rep.13, 14624 (2023).

Zhang, B., Wang, L., Wang, Z., Qiao, Y. & Wang, H. Real-time action recognition with enhanced motion vector cnns. In Proceedings of the IEEE conference on computer vision and pattern recognition, 2718–2726 (2016).

Zhang, B., Wang, L., Wang, Z., Qiao, Y. & Wang, H. Real-time action recognition with deeply transferred motion vector cnns. IEEE Trans. Image Process.27, 2326–2339 (2018).

Wu, C.-Y. et al. Compressed video action recognition. In Proceedings of the IEEE conference on computer vision and pattern recognition, 6026–6035 (2018).

Shou, Z. et al. Dmc-net: Generating discriminative motion cues for fast compressed video action recognition. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 1268–1277 (2019).

Shalmani, S. M., Chiang, F. & Zheng, R. Efficient action recognition using confidence distillation. In 2022 26th International Conference on Pattern Recognition (ICPR), 3362–3369 (IEEE, 2022).

Rahnama, A. & Mansouri, A. Temporal relations of informative frames in action recognition. In 2024 13th Iranian/3rd International Machine Vision and Image Processing Conference (MVIP), 1–5 (IEEE, 2024).

Liu, A.-A., Su, Y.-T., Nie, W.-Z. & Kankanhalli, M. Hierarchical clustering multi-task learning for joint human action grouping and recognition. IEEE Trans. Pattern Anal. Mach. Intell.39, 102–114 (2016).

Ye, J. et al. Learning compact recurrent neural networks with block-term tensor decomposition. In Proceedings of the IEEE conference on computer vision and pattern recognition, 9378–9387 (2018).

Ullah, A., Muhammad, K., Haq, I. U. & Baik, S. W. Action recognition using optimized deep autoencoder and cnn for surveillance data streams of non-stationary environments. Future Gener. Comput. Syst.96, 386–397 (2019).

Dai, C., Liu, X. & Lai, J. Human action recognition using two-stream attention based lstm networks. Appl. Soft Comput.86, 105820 (2020).

Afza, F. et al. A framework of human action recognition using length control features fusion and weighted entropy-variances based feature selection. Image Vis. Comput.106, 104090 (2021).

Muhammad, K. et al. Human action recognition using attention based lstm network with dilated cnn features. Future Gener. Comput. Syst.125, 820–830 (2021).

Ullah, A. et al. Efficient activity recognition using lightweight cnn and ds-gru network for surveillance applications. Appl. Soft Comput.103, 107102 (2021).

Al-Obaidi, S., Al-Khafaji, H. & Abhayaratne, C. Making sense of neuromorphic event data for human action recognition. IEEE Access9, 82686–82700 (2021).

Nasaoui, H., Bellamine, I. & Silkan, H. Human action recognition using squeezed convolutional neural network. In 2022 11th International Symposium on Signal, Image, Video and Communications (ISIVC), 1–5 (IEEE, 2022).

Karuppannan, K., Darmanayagam, S. E. & Cyril, S. R. R. Human action recognition using fusion-based discriminative features and long short term memory classification. Concurr. Comput.: Pract. Exp.34, e7250 (2022).

Wang, Z., Lu, H., Jin, J. & Hu, K. Human action recognition based on improved two-stream convolution network. Appl. Sci.12, 5784 (2022).

Vrskova, R., Hudec, R., Kamencay, P. & Sykora, P. Human activity classification using the 3dcnn architecture. Appl. Sci.12, 931 (2022).

Ullah, H. & Munir, A. Human action representation learning using an attention-driven residual 3dcnn network. Algorithms16, 369 (2023).

Jin, Z., Qiu, Y., Zhang, K., Li, H. & Luo, W. Mb-taylorformer v2: improved multi-branch linear transformer expanded by Taylor formula for image restoration. IEEE Trans. Pattern Anal. Mach. Intell.47, 5990–6005 (2025).

Zhang, K. et al. Mc-blur: A comprehensive benchmark for image deblurring. IEEE Trans. Circuits Syst. Video Technol.34, 3755–3767. https://doi.org/10.1109/TCSVT.2023.3319330 (2024).

Funding

This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Author information

Authors and Affiliations

Contributions

A.K. and S.B.B. conceived the research design, and A.K. performed the simulations. A.K. and A.R. conducted the ablation study. A.K. wrote the original research article, while the finalized draft is reviewed by A.I. and S.B.B.

Corresponding authors

Ethics declarations

Competing interests

The authors declare no competing interests.

Consent for publication

The authors have used the ChatGPT tool as an assistance for proofreading. The authors have carefully reviewed and edited all the research content.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary Information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Khan, A., Rahnama, A., Islam, A. et al. Innovative temporal summarization for complex video classification. Sci Rep 16, 7970 (2026). https://doi.org/10.1038/s41598-026-37111-y

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-026-37111-y