Abstract

Electric vehicle (EV) charging station load prediction is crucial for ensuring the stable operation of power grids and optimizing charging infrastructure. However, the stochastic nature of charging behavior and the complex influence of external factors pose significant challenges to accurate prediction. To address these issues, this study proposes a novel Transformer-based architecture, the Multi-scale Fusion Transformer (MFT), which integrates a Multi-scale Modeling Mechanism (3M), a Feature-correlation Analysis Module (FAM), and a Multi-variable Fusion Module (MFM). The 3M enhances the model’s ability to capture temporal dependencies across varying granularities, while the FAM identifies key external features such as weather and traffic patterns. The MFM uses a cross-attention mechanism to dynamically fuse these features according to their relevance to each sample. Experimental evaluations on real-world data from Norway demonstrate that MFT significantly outperforms baseline models over both short-term and long-term forecasting horizons. Notably, MFT exhibits superior stability and accuracy, especially in long-term prediction tasks, with up to 25.59% average performance improvement over competing models. These results confirm the effectiveness of MFT in modeling complex, multi-scale, and externally influenced load patterns, offering a robust solution for intelligent grid scheduling and energy resource management in an EV-dominated future.


Introduction

As a prominent symbol of modern technological advancement, the automobile has evolved continuously since its inception, profoundly reshaping human mobility and the socioeconomic structure. It has markedly improved transportation efficiency and travel convenience, effectively loosening geographical constraints. Meanwhile, the expansion of the automotive industry has driven the rapid development of urban transportation networks and supporting infrastructure, making it a pivotal force behind urban expansion and industrial agglomeration. As such, it holds a critical position in the modern economic system. However, conventional automobiles have also led to unsustainable energy consumption and substantial greenhouse gas emissions. According to the CO\(_{2}\) Emissions in 2022 report released by the International Energy Agency (IEA), global carbon emissions from the transportation sector increased by 2.1% in 2022, an increase of approximately 137 million tons. This growth poses a significant challenge for countries worldwide in meeting their emission-peaking and carbon-neutrality targets1.

With the continuous advancement of electric vehicle (EV) technologies, their advantages in environmental sustainability and energy efficiency have positioned them as a key solution to global challenges such as energy scarcity, climate change, and environmental pollution2,3. However, the large-scale adoption of EVs has also introduced new challenges to the secure and stable operation of power systems, particularly through the load fluctuations caused by EV charging behavior4,5,6,7. Against this backdrop, high-precision forecasting of EV charging demand is not only a fundamental prerequisite for stable grid dispatch and sustainable operation but also a critical enabler for exploiting EVs as flexible energy resources and formulating scientifically grounded grid integration strategies8,9. Furthermore, accurate demand estimation plays an essential role in guiding the optimal deployment of charging infrastructure and informing long-term investment decisions10,11.

To achieve accurate load prediction for EV charging stations, existing studies have proposed two main categories of approaches: statistical methods and learning-based models. Among statistical approaches, Buzna et al. evaluated various modeling strategies for predicting EV charging demand over 28 days in the Netherlands, and found that the Seasonal Autoregressive Integrated Moving Average (SARIMA) model outperformed alternatives such as Random Forest (RF) and Gradient Boosted Regression Trees (GBRT)12. Similarly, Louie et al. applied a seasonal ARIMA model to predict the total energy consumption of 2,400 charging stations across Washington State and San Diego over a two-year period, achieving promising results13. Qin et al. developed a Monte Carlo-based model that integrates users’ daily travel patterns and charging behavior to enable rapid load estimation at the distribution network level14. Wang et al. further advanced this line of work by modeling users’ probability distributions across multiple dimensions (charging start time, initial state of charge, power level, and duration) and incorporating logistic functions to model the economic aspects of charging load, leading to more accurate predictions15. Despite their simplicity and low training cost, statistical models struggle to capture the nonlinear evolution patterns inherent in charging load sequences.

With the rapid development of deep learning (DL), neural networks have demonstrated remarkable capability in modeling complex temporal dependencies and nonlinear features, thereby enabling more generalizable load prediction16,17,18. For example, Chang et al. proposed an LSTM-based model to address the volatility of peak periods and power distributions in fast-charging loads, achieving strong prediction performance on a real-world dataset from Jeju Island19. Lu et al. applied various neural network architectures to hourly aggregated load prediction and verified through backtesting that the LSTM model offered superior accuracy compared with the other architectures20. Guo et al. designed a multithreaded load prediction model that integrates user behavioral preferences by incorporating spatiotemporal travel patterns across different seasons, weekdays, and weekends, thereby enhancing the adaptability of the model21. Koohfar et al. introduced the Transformer architecture to leverage its strength in modeling long-range temporal dependencies, and demonstrated its superior performance over traditional LSTM models in multi-step load prediction22. Despite these advances, existing deep learning models still struggle to capture the multi-scale temporal dependencies of charging load and fail to fully incorporate the dynamic influence of external factors such as weather and traffic conditions. These limitations constrain further improvement in prediction accuracy.

In response to these challenges, this paper proposes the Multi-scale Fusion Transformer for EV Charging Station Load Prediction (MFT). By extending the Transformer architecture, MFT models both the multi-scale temporal features of charging load and the influence of external factors such as weather conditions and traffic density, with the aim of achieving accurate charging load prediction.

Method

Model framework

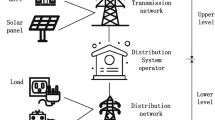

Figure 1 illustrates the overall framework of the proposed MFT, which is composed of the Multi-scale Modeling Mechanism (3M), the Feature-correlation Analysis Module (FAM), and the Multi-variable Fusion Module (MFM). First, 3M uses predefined scale masks to capture the temporal dependencies of the load sequence in parallel at different temporal granularities. These multi-scale features are then concatenated and fused to form a multi-scale dependency representation. Next, FAM performs a Pearson correlation analysis over all training samples to evaluate how various weather features and the traffic densities of different surrounding regions relate to the charging load, and assigns each external feature a base weight according to its relevance. MFM then adopts a cross-attention mechanism, with the charging load as the query, to assess the real-time impact of the external features (weather features and traffic densities) on the prediction for the current sample. It fine-tunes the base weights into dynamic weights, which are used to aggregate the external features into an external fusion representation. Finally, the model concatenates and fuses the multi-scale dependency representation and the external fusion representation, and the result serves as the input to the decoder that generates the future charging load prediction.

The framework of Multi-scale Fusion Transformer.

Multi-scale modeling mechanism

In the EV charging load sequence, temporal information at different scales encapsulates heterogeneous feature representations and latent patterns. Large-scale temporal dependencies typically capture the global evolution trend of the load, which helps mitigate the impact of local fluctuations and reflects the macroscopic pattern of charging behavior. However, because they focus on the long-term evolution, they often fail to capture fine-grained local variations. In contrast, small-scale temporal dependencies emphasize short-term dynamics within a local time window; they contain rich short-term detail that is valuable for revealing micro-level fluctuations in complex charging behavior, yet they are limited in modeling overarching trends.

Effectively extracting and integrating temporal dependencies across multiple scales is therefore critical for accurate EV charging load prediction. Although multi-resolution and dilated-attention mechanisms have made notable progress in temporal modeling in recent years23,24, the former typically requires online scale selection and incurs computational redundancy, while the latter relies on progressively dilated patterns and is prone to losing local details. In contrast, multi-scale attention can capture fine-to-coarse temporal representations through parallel branches, balancing local–global coupling against computational efficiency. To this end, this paper develops an enhanced approach based on a scale mask, which extends the padding mask mechanism in the multi-head attention module. Under the constraint of a predefined scale mask, each attention head independently models temporal dependencies at a specific temporal scale. This design allows the model to jointly learn and integrate multi-scale temporal features from the charging load sequence, thereby improving its ability to capture global trends and local fluctuations together.

Specifically, given the charging load sequence \(l \in {\mathbb {R}^{{T_h}{{ \times }}1}}\), we first compute the query vector set \(Q = \left\{ {{q_1},{q_2},...,{q_N}} \right\}\), key vector set \(K = \left\{ {{k_1},{k_2},...,{k_N}} \right\}\), and value vector set \(V = \left\{ {{v_1},{v_2},...,{v_N}} \right\}\) for the N attention heads.
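The projection equation is not reproduced in this version of the text. One form consistent with the definitions given next, assuming that the positional encoding is added to the MLP embedding before the per-head projections, would be:

\[
q_n = \gamma_Q\big(\eta(l) + Pos;\, W_n^Q\big), \qquad
k_n = \gamma_K\big(\eta(l) + Pos;\, W_n^K\big), \qquad
v_n = \gamma_V\big(\eta(l) + Pos;\, W_n^V\big), \qquad n = 1, \dots, N
\]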

where \({T_h}\) is the length of the charging load sequence. \(\eta\) denotes the multi-layer perceptron (MLP) that projects the one-dimensional load sequence into a higher-dimensional feature space to enhance its representation capacity. \({q_n}\), \({k_n}\), and \({v_n}\) represent the query, key, and value vectors of attention head \(n\), respectively. \(W_n^Q\), \(W_n^K\), and \(W_n^V\) are the learnable parameters of the transformation functions \({\gamma _Q}\), \({\gamma _K}\), and \({\gamma _V}\). Because the Transformer processes input sequences in parallel, it lacks an inherent mechanism for capturing the order of sequence elements. To address this limitation, positional encoding \(Pos = \left\{ {{p^1},{p^2},...,{p^{{T_h}}}} \right\}\) is introduced to inject position information into the load sequence. \({p^t}\) denotes the positional encoding of the \(t\)-th element in the sequence, which is computed as follows:
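Assuming the standard sinusoidal form of the original Transformer (the base 10000 is part of that assumption), the even and odd entries of \(p^t\) would be:

\[
p^t_{2q} = \sin\!\left(\frac{t}{10000^{2q/d_{\textrm{model}}}}\right), \qquad
p^t_{2q+1} = \cos\!\left(\frac{t}{10000^{2q/d_{\textrm{model}}}}\right)
\]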

where \(d_{\textrm{model}}\) is the dimension of the positional encoding vector, and \(2q\) and \(2q+1\) index its even and odd dimensions, respectively. Using \({T_h} = 24\) and \(d_{\textrm{model}} = 128\) as an example, the encoding matrix is visualized as a heat map in Fig. 2.

The heat map of positional encoding.
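Assuming the standard sinusoidal encoding, the matrix visualized in Fig. 2 can be generated with a short NumPy sketch. Positions are indexed from 0 here, the base 10000 is assumed, and the function name is illustrative rather than taken from the authors' code.

```python
import numpy as np


def sinusoidal_positional_encoding(t_h: int, d_model: int) -> np.ndarray:
    """Return a (t_h, d_model) matrix of sinusoidal positional encodings.

    Assumes the standard Transformer form: even indices 2q use sine,
    odd indices 2q+1 use cosine, with base 10000.
    """
    if d_model % 2 != 0:
        raise ValueError("d_model must be even")
    positions = np.arange(t_h)[:, None]           # (t_h, 1)
    two_q = np.arange(0, d_model, 2)[None, :]     # (1, d_model / 2)
    angles = positions / np.power(10000.0, two_q / d_model)
    pe = np.zeros((t_h, d_model))
    pe[:, 0::2] = np.sin(angles)  # even indices 2q
    pe[:, 1::2] = np.cos(angles)  # odd indices 2q + 1
    return pe


# Same setting as Fig. 2: T_h = 24, d_model = 128.
pe = sinusoidal_positional_encoding(24, 128)
print(pe.shape)  # (24, 128)
```

The matrix depends only on \(T_h\) and \(d_{\textrm{model}}\), so it can be computed once and added to every embedded load window.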

Furthermore, we predefine the scale masks \(M = \left\{ {{m_1},{m_2},...,{m_N}} \right\}\) with varying temporal granularities for the N attention heads, constraining each attention head to model temporal dependencies at a specific temporal scale. Here, \({m_n} \in {\mathbb {R}^{{T_h}{\mathrm{\times }}{T_h}}}\) is the scale mask assigned to attention head \(n\), and the value \(m_n^{a,b}\) at position \(\left( {a,b} \right)\) in \({m_n}\) can be expressed as:

where \(\mathbb{Z}\) denotes the set of integers.
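The exact mask definition is not reproduced in this text, so the NumPy sketch below uses a hypothetical scheme purely for illustration: head \(n\) may attend only within a band of width \(w_n\) around each step, with allowed entries set to 0 and blocked entries to \(-\infty\), as in an additive padding mask. Small windows give fine-grained, local heads, and a window covering the whole sequence gives a global head. The window sizes and the function name are assumptions.

```python
from typing import Sequence

import numpy as np


def build_scale_masks(t_h: int, windows: Sequence[int]) -> np.ndarray:
    """Return an (N, t_h, t_h) stack of additive masks, one per attention head.

    HYPOTHETICAL scheme for illustration: head n may attend from step a to
    step b only if |a - b| < windows[n]. Allowed entries are 0 and blocked
    entries are -inf, so the mask can be added to the attention logits in the
    same way as a padding mask.
    """
    idx = np.arange(t_h)
    dist = np.abs(idx[:, None] - idx[None, :])  # |a - b| for every pair (a, b)
    masks = np.full((len(windows), t_h, t_h), -np.inf)
    for n, w in enumerate(windows):
        masks[n][dist < w] = 0.0  # open the band |a - b| < w for head n
    return masks


# Four heads on a 6-step sequence, from purely local (w = 1) to global (w = 6).
M = build_scale_masks(6, [1, 2, 4, 6])
allowed_pairs = (M == 0).sum(axis=(1, 2))  # attendable (a, b) pairs per head
```

Every row keeps at least its diagonal entry open, which guarantees that the subsequent SoftMax never sees a row made entirely of \(-\infty\).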

Finally, the dot-product weights of the N attention heads are reset using the scale mask M, guiding attention heads to extract multi-scale temporal dependency from the load sequence at different temporal granularities. The resulting features are then concatenated and fused to obtain the multi-scale dependency representation R.
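The reset equations are not reproduced in this version of the text. Assuming the scale mask is added to the scaled dot-product logits in the same way as a padding mask (entries of 0 or \(-\infty\)), a form consistent with the definitions that follow is:

\[
\alpha_n = \frac{q_n k_n^{\top}}{\sqrt{d_n^k}}, \qquad
\alpha'_n = \alpha_n + m_n, \qquad
r_n = \textrm{SoftMax}\big(\alpha'_n\big)\, v_n, \qquad
R = \eta\big(\textrm{Concat}(r_1, r_2, \dots, r_N)\big)
\]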

where \({\alpha _n}\) and \({\alpha '_n}\) are the dot-product weights of attention head \(n\) before and after the reset, respectively. \(d_n^k\) is the dimension of the key vector \({k_n}\), and \({r_n}\) denotes the dependency representation modeled by attention head \(n\). The function \(\eta\) is the multi-layer perceptron (MLP) used to fuse the concatenated features into the final multi-scale dependency representation R.

For the sake of clarity, we visualize the calculation process of attention head 2 using \(T_h=6\) as an example, as shown in Fig. 3.

The calculation process of attention head 2.
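A compact PyTorch sketch of the masked multi-head attention described above follows. The layer sizes, the ReLU MLPs, and the additive masking are assumptions; the masks are passed in as an argument, so any scale-mask definition can be plugged in. The usage lines build a hypothetical window-based mask on a 6-step sequence, matching the \(T_h = 6\) setting of Fig. 3.

```python
import math

import torch
import torch.nn as nn


class ScaleMaskedAttention(nn.Module):
    """Multi-head self-attention in which head n is constrained by its own additive mask m_n.

    Illustrative sketch: layer sizes and the ReLU MLPs are assumptions.
    """

    def __init__(self, d_model: int, n_heads: int):
        super().__init__()
        assert d_model % n_heads == 0, "d_model must be divisible by n_heads"
        self.n_heads, self.d_k = n_heads, d_model // n_heads
        # eta: lifts the 1-D load value at each step into d_model dimensions
        self.embed = nn.Sequential(nn.Linear(1, d_model), nn.ReLU(), nn.Linear(d_model, d_model))
        # gamma_Q, gamma_K, gamma_V for all heads at once (split into heads below)
        self.gamma_q = nn.Linear(d_model, d_model)
        self.gamma_k = nn.Linear(d_model, d_model)
        self.gamma_v = nn.Linear(d_model, d_model)
        # MLP that fuses the concatenated head outputs into R
        self.fuse = nn.Sequential(nn.Linear(d_model, d_model), nn.ReLU(), nn.Linear(d_model, d_model))

    def forward(self, load, pos, masks):
        # load: (B, T_h, 1); pos: (T_h, d_model); masks: (N, T_h, T_h) with entries 0 or -inf
        b, t, _ = load.shape
        h = self.embed(load) + pos  # eta(l) + Pos

        def split(x):  # (B, T_h, d_model) -> (B, N, T_h, d_k)
            return x.view(b, t, self.n_heads, self.d_k).transpose(1, 2)

        q, k, v = split(self.gamma_q(h)), split(self.gamma_k(h)), split(self.gamma_v(h))
        alpha = q @ k.transpose(-2, -1) / math.sqrt(self.d_k)  # dot-product weights alpha_n
        alpha_reset = alpha + masks.unsqueeze(0)  # reset with the scale masks
        attn = torch.softmax(alpha_reset, dim=-1)
        r = attn @ v  # per-head representations r_n: (B, N, T_h, d_k)
        r = r.transpose(1, 2).reshape(b, t, -1)  # concatenate the heads
        return self.fuse(r), attn


# Usage with a hypothetical window-based mask on a 6-step sequence.
T, N, D = 6, 4, 32
idx = torch.arange(T)
dist = (idx[:, None] - idx[None, :]).abs()
masks = torch.full((N, T, T), float("-inf"))
for n, w in enumerate([1, 2, 4, 6]):
    masks[n][dist < w] = 0.0
layer = ScaleMaskedAttention(D, N)
R, attn = layer(torch.rand(2, T, 1), torch.zeros(T, D), masks)
```

Because masked logits become exactly zero after the SoftMax, head 0 (window 1) reduces to an identity mapping over time steps, while the widest head behaves like ordinary full attention.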

Feature-correlation analysis module

In addition to the inherent periodic fluctuations in its temporal pattern, EV charging load exhibits strong randomness and non-stationarity, largely because of its sensitivity to external factors such as weather conditions and traffic density. For example, under adverse weather such as rainfall or high temperatures, users tend to reduce outdoor activities, which lowers the frequency of EV use, decreases charging demand, and leads to a drop in overall load. Conversely, high traffic density in the vicinity of a charging station may indicate a greater number of EVs in operation or waiting to charge, thereby increasing charging demand and causing a surge in load. Therefore, analyzing the correlation between external features and charging load, and modeling and fusing these multidimensional external features through appropriate mechanisms, is crucial for improving the accuracy of load forecasting models.

To quantify the influence of external features on charging load, we conduct a feature correlation analysis based on actual charging load data collected from a large residential area in Norway. The dataset includes two types of external features with a total of 14 dimensions, namely 9 weather features and 5 traffic density features. The traffic density features are the numbers of vehicles within 5 fixed areas surrounding the charging station. Note that the weather features are provided at a daily resolution, on the assumption that weather conditions remain relatively stable throughout a single day. In contrast, the traffic density features are sampled hourly, aligned with the charging load sequence, enabling fine-grained modeling of the dynamic external environment. Detailed definitions of the external features are given in Table 1.

Based on these external features and the charging load data, we use the Pearson Correlation Coefficient (PCC) as a statistical tool to quantify the correlation between each external feature and the charging load. PCC reveals the strength and direction of the linear association between each external feature and load fluctuations, thereby providing a quantitative basis for the subsequent feature weighting and fusion. The calculation process is as follows:
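Assuming the standard sample definition, with \(X_t\) and \(Y_t\) the values of one external feature and of the load in training sample \(t\), and \(\bar{X}\), \(\bar{Y}\) their means (notation introduced here for illustration), the coefficient reads:

\[
\rho_{X,Y} = \frac{\sum_{t}\big(X_t - \bar{X}\big)\big(Y_t - \bar{Y}\big)}{\sqrt{\sum_{t}\big(X_t - \bar{X}\big)^2}\,\sqrt{\sum_{t}\big(Y_t - \bar{Y}\big)^2}}
\]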

where X denotes one of the 14 external features (weather or traffic density features), while Y represents the charging load data. The coefficient \({\rho _{X,Y}}\) indicates the correlation between the given external feature and the load sequence; a positive value denotes a positive correlation and a negative value a negative correlation.

The above correlation analysis yields a 14-dimensional vector \(\textrm{P} = \left\{ {{\rho _1},{\rho _2},...,{\rho _{14}}} \right\}\) of correlation coefficients between each external feature and the charging load, as shown in Fig. 4. Among the weather features, the sunshine index exhibits the strongest (negative) correlation with the charging load, with a PCC value of – 0.239. This suggests that intense sunlight may reduce users’ outdoor activities and charging frequency, leading to lower load demand. In addition, average temperature (– 0.137), maximum temperature (– 0.126), and average humidity (0.131) show weaker but non-negligible correlations, indicating that thermal and humidity conditions can influence user travel and charging behavior to a certain extent. In contrast, average precipitation (0.004) and average snowfall (– 0.029) are only weakly correlated with the load, possibly because of limited weather variability in the study region or a relatively weak user response to such meteorological conditions. The traffic density features show low correlations overall, although certain regions still show noticeable patterns. Specifically, Region 4 and Region 5 have PCC values of 0.066 and 0.063, respectively, slightly higher than those of the other regions. This suggests a weak but observable coupling between local traffic activity and charging load.

The correlation coefficients between each external feature and the charging load.

Furthermore, we obtain the base weight set \(W = \left\{ {{w_1},{w_2},...,{w_{14}}} \right\}\) by normalizing \(\textrm{P}\) with the SoftMax function. The base weights are positive, sum to one, and preserve the ordering of the correlation coefficients, giving a probabilistic interpretation of feature impact that serves as a prior for the subsequent feature weighting and fusion process.
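Written out under the usual definition of SoftMax, this normalization would be:

\[
w_i = \frac{\exp(\rho_i)}{\sum_{j=1}^{14} \exp(\rho_j)}, \qquad i = 1, \dots, 14
\]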

where \({w_i}\) denotes the base weight of the \(i\)-th external feature.
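The FAM statistics can be reproduced in a few lines of NumPy. The sketch computes \(\rho\) for every column of a feature matrix (14 columns in the paper; daily weather values would presumably be repeated for each hour of the day to align with the hourly load, which is an assumption here) and then applies a numerically stable SoftMax. The function name and the synthetic data are illustrative.

```python
import numpy as np


def base_weights(features: np.ndarray, load: np.ndarray):
    """Pearson correlation of each feature column with the load, then SoftMax.

    features: (n_samples, n_features); load: (n_samples,).
    Returns (rho, w): correlation coefficients and base weights, each (n_features,).
    """
    xc = features - features.mean(axis=0)  # centre each feature column
    yc = load - load.mean()
    rho = (xc * yc[:, None]).sum(axis=0) / (
        np.sqrt((xc ** 2).sum(axis=0)) * np.sqrt((yc ** 2).sum())
    )
    e = np.exp(rho - rho.max())  # subtracting the max keeps SoftMax numerically stable
    return rho, e / e.sum()


# Synthetic check with three columns: the load itself, its negation, and pure noise.
rng = np.random.default_rng(0)
load = rng.random(500)
features = np.column_stack([load, -load, rng.random(500)])
rho, w = base_weights(features, load)
```

With coefficients of the magnitude shown in Fig. 4 (all below 0.25 in absolute value), the resulting base weights stay close to the uniform value 1/14 ≈ 0.071, so the prior nudges rather than dominates the weighting that MFM later refines.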

Multi-variable fusion module

The base weight set \(W = \left\{ {{w_1},{w_2},...,{w_{14}}} \right\}\) reflects the contribution of the external features to the charging load at the level of full-sample statistics. However, individual samples often deviate from these full-sample statistics. For instance, in some cases, rapid fluctuations in weather conditions may coincide with relatively stable traffic density, making weather features more influential for prediction. In other cases, traffic congestion may vary dramatically while the weather remains stable, increasing the importance of traffic-related features. Consequently, base weights derived from static correlations alone cannot accurately capture the actual influence of external features in a given sample. To address this issue, the proposed Multi-variable Fusion Module (MFM) dynamically learns variance weights that fine-tune the base weights at the sample level. This dynamic adjustment enables the model to quantify the real-time influence of weather and traffic features on charging load for each individual sample, which is critical for accurately modeling and effectively fusing external features.

Specifically, the charging load sequence l is first encoded into a high-dimensional query vector \({l_Q}\). Correspondingly, the 14-dimensional external features \(x = \left\{ {{x_1},{x_2},...,{x_{14}}} \right\}\) are encoded into the high-dimensional key vector \({x_k} = \left\{ {{x_{k1}},{x_{k2}},...,{x_{k14}}} \right\}\) and value vector \({x_v} = \left\{ {{x_{v1}},{x_{v2}},...,{x_{v14}}} \right\}\) to enhance their feature representation capabilities.
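In the notation of the definitions that follow, and assuming each multi-layer perceptron is applied independently to its input, the encoding step can be written as:

\[
l_Q = \varphi_Q\big(l;\, W_Q^l\big), \qquad
x_{ki} = \varphi_K\big(x_i;\, W_K^x\big), \qquad
x_{vi} = \varphi_V\big(x_i;\, W_V^x\big), \qquad i = 1, \dots, 14
\]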

where \({x_i}\) denotes the \(i - th\) external feature, while \({x_{ki}}\) and \({x_{vi}}\) represent its corresponding key vector and value vector, respectively. \(W_Q^l\), \(W_K^x\), and \(W_V^x\) are the learnable parameters associated with the multi-layer perceptrons \({\varphi _Q}\), \({\varphi _K}\), and \({\varphi _V}\), respectively.

Subsequently, a variance weight set \(\Sigma = \left\{ {\sigma _1^w,\sigma _2^w,...,\sigma _{14}^w} \right\}\) is computed over the external features and used to fine-tune the base weights \(W = \left\{ {{w_1},{w_2},...,{w_{14}}} \right\}\), yielding the dynamic weights \(\tilde{W} = \left\{ {{{\tilde{w}}_1},{{\tilde{w}}_2},...,{{\tilde{w}}_{14}}} \right\}\).

where \(\sigma _i^w\) and \({\tilde{w}_i}\) represent the variance weight and the dynamic weight of the \(i\)-th external feature, respectively.

Finally, the external features are weighted and aggregated based on the dynamic weight to obtain the external fusion representation E. This representation is then concatenated with the multi-scale dependency representation R and jointly fed into an LSTM decoder Dec to predict the future charging load \(\hat{l}\).
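Assuming the weighted aggregation is a plain weighted sum followed by the activation described next, and that R and E are concatenated before decoding, these two steps would read:

\[
E = \textrm{LeakyReLU}\Big(\sum_{i=1}^{14} \tilde{w}_i\, x_{vi}\Big), \qquad
\hat{l} = \textrm{Dec}\big(\textrm{Concat}(R, E)\big)
\]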

where \(\textrm{LeakyReLU}\) is the activation function used to enhance the non-linear representation capability of the external fusion representation. The process of computing the external fusion representation E in MFM is visualized in Fig. 5.

The process of computing external fusion representation E in MFM.
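The sketch below illustrates the MFM idea in PyTorch: the load acts as the query and each external feature supplies a key and a value, as described above. How the variance weights are computed and how they fine-tune the base weights is not specified in this text, so the sketch assumes a scaled dot-product SoftMax for \(\sigma_i^w\) and a renormalized product \(\tilde{w}_i \propto w_i \sigma_i^w\). Each external feature is assumed to arrive as a length-\(T_h\) hourly series, and the LSTM decoder is omitted. Class and helper names are hypothetical.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


def mlp(d_in: int, d: int) -> nn.Sequential:
    """Two-layer MLP used for the hypothetical phi_Q / phi_K / phi_V encoders."""
    return nn.Sequential(nn.Linear(d_in, d), nn.ReLU(), nn.Linear(d, d))


class MultiVariableFusion(nn.Module):
    """Sample-level reweighting of external features via cross-attention (sketch).

    ASSUMED, not taken from the paper: sigma = SoftMax of scaled query-key scores,
    and dynamic weight = base weight * sigma, renormalised to sum to one.
    """

    def __init__(self, t_h: int, d: int, base_w: torch.Tensor):
        super().__init__()
        self.phi_q, self.phi_k, self.phi_v = mlp(t_h, d), mlp(t_h, d), mlp(t_h, d)
        self.register_buffer("base_w", base_w)  # fixed FAM prior, shape (n_feat,)
        self.scale = d ** -0.5

    def forward(self, load, x):
        # load: (B, T_h); x: (B, n_feat, T_h), each external feature as an hourly series
        l_q = self.phi_q(load).unsqueeze(1)  # query from the load: (B, 1, d)
        x_k, x_v = self.phi_k(x), self.phi_v(x)  # keys and values: (B, n_feat, d)
        scores = (l_q @ x_k.transpose(1, 2)).squeeze(1) * self.scale  # (B, n_feat)
        sigma = torch.softmax(scores, dim=-1)  # variance weights per sample
        w_dyn = self.base_w * sigma
        w_dyn = w_dyn / w_dyn.sum(dim=-1, keepdim=True)  # dynamic weights
        e = F.leaky_relu((w_dyn.unsqueeze(-1) * x_v).sum(dim=1))  # fusion representation E: (B, d)
        return e, w_dyn


# Usage: 14 external features, 24-hour window, uniform base weights as a placeholder.
mfm = MultiVariableFusion(t_h=24, d=32, base_w=torch.full((14,), 1 / 14))
E, w_dyn = mfm(torch.rand(8, 24), torch.rand(8, 14, 24))
```

Keeping the base weights as a non-trainable buffer means the FAM prior stays fixed while only the per-sample variance weights are learned, which mirrors the split between static and dynamic weighting described in the text.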

Experiments

Implementation details

The proposed MFT model is implemented in the PyTorch25 deep learning framework and trained on a workstation equipped with an NVIDIA GeForce RTX 3090 GPU. Model construction, debugging, and result analysis are all carried out in the PyCharm integrated development environment.

Regarding the training configuration, the batch size is set to 64, the number of training epochs is 50, and the initial learning rate is 0.0005. The Adam optimizer26 is employed to update the model parameters, with mean squared error (MSE) used as the loss function to quantify the discrepancy between predicted and actual values. To mitigate the risk of overfitting, a Dropout mechanism with a dropout rate of 0.05 is applied to the model’s output layer. Additionally, the training samples are randomly shuffled at the beginning of each epoch to reduce the influence of sample ordering and enhance the model’s generalization ability.
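The training configuration above maps directly onto standard PyTorch components. In this sketch only the hyperparameters (batch size 64, 50 epochs, Adam with learning rate 0.0005, MSE loss, dropout 0.05, per-epoch shuffling) come from the text; the data are synthetic, and the small feed-forward network is a stand-in for MFT with the dropout placed just before its final layer.

```python
import torch
import torch.nn as nn
from torch.utils.data import DataLoader, TensorDataset

torch.manual_seed(0)

# Synthetic stand-in data: 24-step inputs and 24-step targets.
x = torch.rand(512, 24)
y = torch.rand(512, 24)
loader = DataLoader(TensorDataset(x, y), batch_size=64, shuffle=True)  # reshuffled each epoch

# Stand-in network; dropout (p = 0.05) sits just before the output layer.
model = nn.Sequential(nn.Linear(24, 64), nn.ReLU(), nn.Dropout(0.05), nn.Linear(64, 24))
optimizer = torch.optim.Adam(model.parameters(), lr=5e-4)
criterion = nn.MSELoss()

for epoch in range(50):
    model.train()
    for xb, yb in loader:
        optimizer.zero_grad()
        loss = criterion(model(xb), yb)
        loss.backward()
        optimizer.step()
```

With `shuffle=True`, the `DataLoader` draws a new random order at the start of every pass, which corresponds to the per-epoch shuffling described above.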

From a deployment perspective, the scale masks used in MFT’s multi-head attention do not add computational complexity compared with the standard Transformer. Consequently, for 96-step prediction, MFT requires 17.12 MB of model parameters and 3.29 G floating-point operations (GFLOPs), only slightly more than the standard Transformer baseline (16.04 MB and 3.05 GFLOPs, respectively). On the NVIDIA GeForce RTX 3090 GPU used in our experiments, MFT completes a single 96-step prediction inference in less than 100 \(\mu \textrm{s}\), indicating that it can meet the requirements of online deployment in practical applications.
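The text does not state how the parameter size in MB was obtained. A common convention, assumed here, is the parameter count multiplied by 4 bytes (float32) and divided by \(2^{20}\); the helper below is a hypothetical illustration of that convention, not the authors' measurement script.

```python
import torch.nn as nn


def parameter_megabytes(model: nn.Module, bytes_per_param: int = 4) -> float:
    """Parameter memory in MB (2**20 bytes); 4 bytes per parameter assumes float32."""
    n_params = sum(p.numel() for p in model.parameters())
    return n_params * bytes_per_param / 2 ** 20


# A 1024 -> 1024 linear layer has 1024 * 1024 weights + 1024 biases.
print(parameter_megabytes(nn.Linear(1024, 1024)))  # 4.00390625
```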

For data preprocessing, min–max normalization is applied to rescale all input features to the interval [0, 1], aiming to improve training stability and convergence speed while eliminating the effect of different feature magnitudes. To evaluate the model’s generalization capability and stability across different prediction horizons, the proposed method utilizes 24-hour historical charging load data from charging stations to predict the future load over 24, 48, 72, and 96 hours, covering both short-term and medium-to-long-term forecasting tasks. Finally, to comprehensively assess the model’s prediction performance, three evaluation metrics are adopted: mean squared error (MSE), root mean squared error (RMSE), and mean absolute error (MAE). The formulas are as follows:
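Assuming the usual definitions, averaged over all N samples and all K horizon steps, the three metrics would read:

\[
\textrm{MSE} = \frac{1}{NK}\sum_{i=1}^{N}\sum_{k=1}^{K}\big(y_i^k - \hat{y}_i^k\big)^2, \qquad
\textrm{RMSE} = \sqrt{\textrm{MSE}}, \qquad
\textrm{MAE} = \frac{1}{NK}\sum_{i=1}^{N}\sum_{k=1}^{K}\big|y_i^k - \hat{y}_i^k\big|
\]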

where N denotes the total number of samples and K the prediction horizon. \(y_i^k\) and \(\hat{y}_i^k\) are the ground-truth and predicted values at time step k for sample i, respectively.
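A NumPy sketch of the preprocessing and evaluation pipeline follows. The sliding-window stride of 1 and computing the min–max statistics on the full series are simplifying assumptions (in practice the statistics are usually fitted on the training split only), and RMSE is taken as the square root of the pooled MSE. Function names are illustrative.

```python
import numpy as np


def min_max_scale(series: np.ndarray):
    """Rescale to [0, 1]; also return (min, max) so predictions can be mapped back."""
    lo, hi = series.min(), series.max()
    return (series - lo) / (hi - lo), (lo, hi)


def make_windows(series: np.ndarray, t_h: int = 24, k: int = 24):
    """Stride-1 sliding windows: t_h past steps as input, the next k steps as target."""
    n = len(series) - t_h - k + 1
    x = np.stack([series[i:i + t_h] for i in range(n)])
    y = np.stack([series[i + t_h:i + t_h + k] for i in range(n)])
    return x, y


def mse(y, y_hat):
    return float(np.mean((y - y_hat) ** 2))  # averaged over all N samples and K steps


def rmse(y, y_hat):
    return float(np.sqrt(mse(y, y_hat)))


def mae(y, y_hat):
    return float(np.mean(np.abs(y - y_hat)))


# Example: 24-hour history, 96-hour horizon.
scaled, (lo, hi) = min_max_scale(np.arange(200.0))
x, y = make_windows(scaled, t_h=24, k=96)
```

For instance, with targets `[[1, 2], [3, 4]]` and predictions `[[1, 3], [3, 2]]`, the errors are 0, 1, 0, and 2, giving MSE = 1.25 and MAE = 0.75.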

Quantitative assessment of prediction performance

Incremental experiments

Incremental experiments are conducted on three models: TF, MT, and MFT. Specifically, TF refers to the standard Transformer model; MT extends TF by incorporating a multi-scale modeling mechanism (3M); and MFT further enhances MT by introducing a multi-variable fusion module (MFM). The performance comparison between TF and MT can validate the effectiveness of the multi-scale modeling mechanism, while the comparison between MT and MFT can demonstrate the contribution of the multi-variable fusion module. The results of the ablation study are summarized in Table 2. As shown in Table 2, both MT and MFT outperform the baseline TF model to varying degrees, confirming the positive impact of the proposed 3M and MFM modules on the performance of charging load forecasting.

To quantify the contribution of each module more intuitively, the relative performance improvement brought by a module S is computed as follows:
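Since MSE, RMSE, and MAE are all error measures (lower is better), a relative improvement consistent with the definitions of \(m\) and \(m_S\) given below would be:

\[
I_S = \frac{m_S - m}{m_S} \times 100\%
\]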

where m and \(m_S\) are the performance evaluations of the models with and without module S, respectively. \(I_S\) represents the performance improvement brought by the module S.

As shown in Fig. 6, in terms of evaluation metrics, the multi-scale modeling mechanism improves MSE, RMSE, and MAE by 6.27%, 3.19%, and 3.86%, respectively, indicating its effectiveness in capturing multi-scale temporal dependencies. The multi-variable fusion module yields larger improvements of 6.42%, 3.34%, and 5.49% on the same three metrics. In terms of prediction horizons, the multi-scale modeling mechanism achieves improvements of 5.72%, 5.06%, 3.51%, and 3.48% for the 24-hour, 48-hour, 72-hour, and 96-hour predictions, respectively, whereas the multi-variable fusion module delivers improvements of 4.35%, 1.43%, 4.83%, and 9.73% over the same horizons. Notably, for long-term forecasts (\(K = 72\) and \(K = 96\)), the improvements contributed by the multi-variable fusion module clearly exceed those of the multi-scale modeling mechanism, suggesting that external factors such as weather conditions and traffic density exert a stronger influence on long-term charging behavior. Effectively integrating these external variables therefore substantially enhances the accuracy and stability of long-term load prediction.

The performance improvement brought by different modules at different prediction horizons.

Comparative experiments

In the comparative experiments, several classical time series prediction models are selected as baselines, namely the Gated Recurrent Unit (GRU)27, Long Short-Term Memory (LSTM)28, Bidirectional LSTM (BiLSTM)29, and Transformer30, to comprehensively evaluate the performance of the proposed MFT model. Note that, in the evaluation, each baseline architecture serves as both the encoder and the decoder. The results of the comparative experiments are presented in Table 3. MFT consistently outperforms all baseline models across all experimental settings, including different prediction horizons and evaluation metrics. These results provide strong evidence of MFT’s effectiveness in both short-term and long-term charging load prediction.

The prediction error curves of the models are shown in Fig. 7, where the curve corresponding to MFT remains the flattest and grows the slowest throughout. From the perspective of model architecture, although the baseline models achieve strong temporal modeling capabilities through gated recurrence (GRU and LSTM) or multi-head attention (Transformer), they remain limited in capturing multi-scale temporal dependencies and integrating multi-variable information. In contrast, MFT, through the multi-scale modeling mechanism and the multi-variable fusion module, achieves average performance gains of 26.54%, 21.88%, 12.85%, and 10.79% over GRU, LSTM, BiLSTM, and Transformer, respectively. In terms of evaluation metrics, MFT achieves average improvements of 23.73%, 12.58%, and 17.73% in MSE, RMSE, and MAE, respectively. These results indicate that the proposed method offers clear advantages in reducing overall prediction error, enhancing forecasting stability, and mitigating extreme deviations, reflecting both higher prediction accuracy and better adaptability to the temporal fluctuations of charging load data. Moreover, for prediction horizons of 24, 48, 72, and 96 hours, MFT achieves average improvements of 17.30%, 19.14%, 22.69%, and 35.78% in MSE; 9.15%, 10.30%, 12.34%, and 18.54% in RMSE; and 16.66%, 14.21%, 17.61%, and 22.44% in MAE, respectively. Notably, the improvements generally grow with the prediction horizon: they increase monotonically for MSE and RMSE, and for MAE they rise after a slight dip at 48 hours. This indicates that MFT is particularly effective for long-term prediction, consistently outperforming existing models and alleviating the common problem of sharp accuracy degradation as the prediction horizon extends.

The prediction error curves and the performance improvement of MFT.

Qualitative assessment of prediction performance

Figures 8, 9, 10, and 11 illustrate the prediction results of the different models at the 24-hour, 48-hour, 72-hour, and 96-hour horizons as line plots. The curves predicted by MFT closely follow the ground-truth load curves at all horizons; they are smooth yet respond sensitively to fluctuations, clearly outperforming the baseline models GRU, LSTM, BiLSTM, and Transformer. Notably, in the 72-hour and 96-hour prediction tasks, most baseline models exhibit noticeable lag, whereas MFT continues to accurately track the rhythm and magnitude of load variations. This demonstrates MFT’s superior capability in capturing long-term dependencies and visually corroborates its advantages in MSE, RMSE, and MAE. Overall, the results highlight MFT’s comprehensive superiority in both short-term and long-term prediction, further supporting its potential for practical deployment in complex EV charging load prediction tasks.

The prediction results of different models at \(K=24\).

The prediction results of different models at \(K=48\).

The prediction results of different models at \(K=72\).

The prediction results of different models at \(K=96\).

Conclusions

To address the challenges of insufficient multi-scale dependency modeling and limited utilization of external factors in electric vehicle (EV) charging load prediction, this paper proposes a novel Transformer-based model, named Multi-scale Fusion Transformer (MFT), which integrates both the multi-scale modeling mechanism and multi-variable fusion module. By introducing a multi-scale modeling mechanism, MFT enhances the model’s ability to capture load variation patterns across different temporal granularities, enabling it to model both long-term trend and short-term fluctuation in the load sequence. Additionally, a multi-variable fusion module is designed to dynamically weight and integrate external feature information, including weather and traffic conditions, thereby strengthening the model’s capacity to perceive and represent complex influencing factors. Incremental and comparative experiments conducted across multiple prediction horizons (24-hour, 48-hour, 72-hour, and 96-hour) demonstrate that MFT outperforms classical time series models such as GRU, LSTM, BiLSTM, and Transformer in terms of key evaluation metrics, including MSE, RMSE, and MAE, exhibiting superior accuracy and stability. Notably, MFT shows significantly greater advantages in long-term prediction tasks, verifying its effectiveness in modeling long-term load variation. Moreover, visual analysis provides an intuitive illustration of MFT’s outstanding performance in both short-term and long-term charging load prediction. The proposed MFT model offers an effective solution for high-precision load forecasting and holds substantial theoretical and practical potential for intelligent grid scheduling and the optimization of charging infrastructure.

Furthermore, existing studies have shown that the charging and discharging processes of electric vehicles are influenced by battery characteristics31,32. In future work, we will incorporate battery-related factors to further improve the prediction accuracy of electric vehicle charging load.

Data availability

The data used in this study are included within the manuscript. The data used for implementing the forecasting algorithms are available at https://github.com/shivkumarjadon6/Hourly_EV.

References

International Energy Agency. CO2 Emissions in 2022 (IEA, 2023). https://www.iea.org/reports/co2-emissions-in-2022

Wang, Y. et al. Service-quality based pricing approach for charging electric vehicles in smart energy communities. J. Clean. Prod. 420, 138416 (2023).

Xiang, Y. et al. Economic planning of electric vehicle charging stations considering traffic constraints and load profile templates. Appl. Energy 178, 647–659 (2016).

Lokhande, S., Bichpuriya, Y. & Sarangan, V. Real-time management of deviations in the demand of electric vehicle charging stations by utilizing EV flexibility. J. Energy Storage 97, 112719 (2024).

Yin, W., Ji, J., Wen, T. & Zhang, C. Study on orderly charging strategy of EV with load forecasting. Energy 278, 127818 (2023).

Wu, Y., Cong, P. & Wang, Y. Charging load forecasting of electric vehicles based on VMD-SSA-SVR. IEEE Trans. Transport. Electrif. 10, 3349–3362 (2023).

Cui, D. et al. Stacking regression technology with event profile for electric vehicle fast charging behavior prediction. Appl. Energy 336, 120798 (2023).

Benavides, D., Arévalo, P., Villa-Ávila, E., Aguado, J. A. & Jurado, F. Predictive power fluctuation mitigation in grid-connected PV systems with rapid response to EV charging stations. J. Energy Storage 86, 111230 (2024).

Zhang, J. et al. Charging demand prediction in Beijing based on real-world electric vehicle data. J. Energy Storage 57, 106294 (2023).

Dubey, A. & Santoso, S. Electric vehicle charging on residential distribution systems: Impacts and mitigations. IEEE Access 3, 1871–1893 (2015).

Moon, H., Park, S. Y., Jeong, C. & Lee, J. Forecasting electricity demand of electric vehicles by analyzing consumers’ charging patterns. Transp. Res. Part D: Transp. Environ. 62, 64–79 (2018).

Buzna, L., De Falco, P., Khormali, S., Proto, D. & Straka, M. Electric vehicle load forecasting: A comparison between time series and machine learning approaches. in 2019 1st International Conference on Energy Transition in the Mediterranean Area (SyNERGY MED), 1–5 (IEEE, 2019).

Louie, H. M. Time-series modeling of aggregated electric vehicle charging station load. Electr. Power Compd. Syst. 45, 1498–1511 (2017).

Qin, Y., Wang, J., Ren, S. & Li, Z. Prediction of EV random charging load based on Monte Carlo simulation method. in 2023 3rd International Conference on New Energy and Power Engineering (ICNEPE), 295–298 (IEEE, 2023).

Wang, S. et al. EV charging behavior analysis and load prediction via order data of charging stations. Sustainability 17, 1807 (2025).

Khodayar, M., Liu, G., Wang, J. & Khodayar, M. E. Deep learning in power systems research: A review. CSEE J. Power Energy Syst. 7, 209–220 (2020).

LeCun, Y., Bengio, Y. & Hinton, G. Deep learning. Nature 521, 436–444 (2015).

Jahangir, H. et al. Charging demand of plug-in electric vehicles: Forecasting travel behavior based on a novel rough artificial neural network approach. J. Clean. Prod. 229, 1029–1044 (2019).

Chang, M., Bae, S., Cha, G. & Yoo, J. Aggregated electric vehicle fast-charging power demand analysis and forecast based on LSTM neural network. Sustainability 13, 13783 (2021).

Lu, F. et al. Ultra-short-term prediction of EV aggregator’s demand response flexibility using ARIMA, Gaussian-ARIMA, LSTM and Gaussian-LSTM. in 2021 3rd International Academic Exchange Conference on Science and Technology Innovation (IAECST), 1775–1781 (IEEE, 2021).

Guo, Z., Bian, H., Zhou, C., Ren, Q. & Gao, Y. An electric vehicle charging load prediction model for different functional areas based on multithreaded acceleration. J. Energy Storage 73, 108921 (2023).

Koohfar, S., Woldemariam, W. & Kumar, A. Prediction of electric vehicles charging demand: A transformer-based deep learning approach. Sustainability 15, 2105 (2023).

Zhang, Y., Ma, L., Pal, S., Zhang, Y. & Coates, M. Multi-resolution time-series transformer for long-term forecasting. in International Conference on Artificial Intelligence and Statistics, 4222–4230 (PMLR, 2024).

Zhou, Z. et al. SDformer: Transformer with spectral filter and dynamic attention for multivariate time series long-term forecasting. in Proceedings of the Thirty-Third International Joint Conference on Artificial Intelligence (IJCAI-24), Jeju, Republic of Korea, 3–9 August (2024).

Paszke, A. et al. PyTorch: An imperative style, high-performance deep learning library. Advances in Neural Information Processing Systems 32 (2019).

Kingma, D. P. & Ba, J. Adam: A method for stochastic optimization. arXiv:1412.6980 (2014).

Chung, J., Gulcehre, C., Cho, K. & Bengio, Y. Empirical evaluation of gated recurrent neural networks on sequence modeling. arXiv:1412.3555 (2014).

Hochreiter, S. & Schmidhuber, J. Long short-term memory. Neural Comput. 9, 1735–1780 (1997).

Graves, A. & Schmidhuber, J. Framewise phoneme classification with bidirectional LSTM and other neural network architectures. Neural Netw. 18, 602–610 (2005).

Vaswani, A. et al. Attention is all you need. Advances in Neural Information Processing Systems 30 (2017).

Liu, Q. et al. Physics-guided TL-LSTM network for early-stage degradation trajectory prediction of lithium-ion batteries. J. Energy Storage 106, 114736 (2025).

Qiao, D. et al. Mechanism of battery expansion failure due to excess solid electrolyte interphase growth in lithium-ion batteries. eTransportation https://doi.org/10.1016/j.etran.2025.100450 (2025).

Acknowledgements

This research was funded by the Key R&D Program Project of Shaanxi Province (2024GX-ZDCYL-02-14), the Qinchuangyuan Cites High-level Innovation and Entrepreneurship Talent Project (QCYRCXM2023-110), the General funding project of China Postdoctoral Science Foundation (2024M752739), and the Research Funds for the Interdisciplinary Projects, CHU (300104240924).

Author information

Contributions

W.L. conceived the experiment(s), J.Q. and X.W. conducted the experiment(s), X.Z. analysed the results. All authors reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Liu, W., Qiao, J., Wang, W. et al. Multi-scale fusion transformer for EV charging station load prediction. Sci Rep 16, 8609 (2026). https://doi.org/10.1038/s41598-026-38562-z


DOI: https://doi.org/10.1038/s41598-026-38562-z