Abstract

Focal cortical dysplasia (FCD) is a developmental disorder frequently linked to drug-resistant focal epilepsy, for which surgical intervention is often the most promising treatment. Conventional neuroradiological methods often fail to detect subtle FCD cases, which may result in missed surgical opportunities for patients. To address this challenge, we developed an approach to FCD lesion detection using conditional diffusion models guided by complementary location and modality information. Our method employs a classifier to identify epileptic sites while directing the diffusion model to generate pseudo-healthy images. Exploiting the distinct features of FCD in T1 and FLAIR images, the T1 image conditions the diffusion model to produce the corresponding FLAIR image, effectively removing abnormal tissue. Because epileptic lesions are subtle and hard to detect, we incorporate histogram matching to correct the color distortion commonly associated with diffusion models, so that chromatic aberration does not hinder the identification of lesions. The method was validated on the UHB FCD MRI dataset, achieving an image-level recall of 0.952 and a pixel-level Dice score of 0.245. These results surpass those of four comparative methods. Our code is available at https://github.com/CodePYJ/FCD-Detection.

Introduction

Epilepsy is a prevalent and serious neurological disorder that affects more than 70 million people worldwide1. Among these, one-third suffer from drug-resistant focal epilepsy, with focal cortical dysplasia (FCD) being the leading cause of refractory epilepsy in children, contributing to more than 30% of cases2. Although surgical intervention is an effective treatment for drug-resistant epilepsy, its success depends on the precise localization of the epileptic lesions. Unfortunately, FCD lesions often present as subtle structural changes in MRI scans, such as increased cortical thickness and indistinct gray-white matter junctions, which are challenging to detect manually3,4. In addition, the existing MRI-based radiological classification and diagnostic criteria for patients with FCD are inconsistent. This inconsistency arises from the subtle nature of the image features, individual variation, and data privacy limitations, which complicate the labeling process for clinicians and result in too few publicly available labeled datasets for model training and evaluation5. Consequently, there is a pressing need for unsupervised or weakly supervised algorithms that reduce the dependence on precise labeling in the detection of epileptic lesions.

In recent years, anomaly detection methods have gained prominence in medical imaging due to their adaptability, especially when pixel-level annotations are unavailable. The high cost of medical image annotation and inconsistent results among physicians pose challenges to fully supervised learning in clinical settings, encouraging the development of unsupervised or weakly supervised approaches. Generative model-based techniques, such as GANs 6, VAEs 7, and diffusion models 8, detect abnormalities by learning the distribution of normal structures and contrasting abnormal regions with these normal representations. While these methods are effective in identifying large lesions such as tumors, they encounter significant difficulties with small lesions that have indistinct borders, such as FCD, and they require further validation. Moreover, diffusion models have become state-of-the-art generative models in terms of sample quality. Different types of noise can be used in the forward diffusion process9. To generate healthy images, images10 or classification labels11 are usually used as conditions to guide the diffusion model. In the reverse denoising process, the sampling property of Denoising Diffusion Implicit Models (DDIM)12 can be used to convert abnormal tissue to normal.

To address the challenges in detecting subtle lesions, we propose a weakly supervised anomaly detection method built on diffusion modeling. This approach uses coarse lesion location labels as weak supervision to train a classifier and to guide the diffusion model in generating pseudo-healthy images. By integrating the distinct features of FCD observed in T1 and FLAIR images, the T1 image serves as a condition to produce the corresponding FLAIR image, effectively removing abnormal tissue. Given the difficulty of recognizing epileptic lesions, we introduce histogram matching to address the color distortion commonly associated with diffusion models, thereby enhancing lesion detection accuracy. In summary, the key contributions of this work are as follows:

- We employ a weakly supervised training strategy using a location-guided diffusion model to generate pseudo-healthy images, addressing the limitations in detection performance due to the lack of precise FCD annotations.

- Our modality translation approach, based on diffusion models, preserves image generation quality while incorporating multimodal FCD manifestations, thereby improving detection accuracy.

- The use of histogram matching effectively eliminates color deviation issues caused by the diffusion model, further enhancing lesion identification accuracy.

Related work

Epilepsy

Focal cortical dysplasia is a surgically treatable developmental epileptogenic brain malformation and a common cause of drug-resistant epilepsy13. Treatment success depends critically on accurate localization and delineation of FCD lesions. FCD manifests as cortical thickening on T1-weighted MRI and as high signal intensity with blurring at the gray matter-white matter interface on FLAIR images. However, recognizing FCD lesions in clinical practice remains challenging14. Because of privacy and other issues, publicly available epilepsy datasets are currently very sparse, so current methods are based on monocentric datasets. FCD detection algorithms trained and validated on monocentric datasets suffer from three main problems. First, the datasets are too small to develop powerful classification algorithms. Second, studies may recruit training and testing data from the same sample of epileptic patients, resulting in data leakage and overestimated performance. Third, radiologic diagnosis or MRI ratings of individuals with FCD may vary by site. The MRI volume of an individual with FCD can be described as "MR negative" at one site and "radiologically described FCD" at another, with corresponding implications for the evaluation of the detection algorithm. Therefore, it is important that the same well-annotated and sufficiently large dataset is used to validate the different algorithms and that this dataset is not used to train them. New methods based on machine learning and deep learning have greatly impacted the field of automated FCD detection in MRI-negative focal epilepsy15.

FCD lesion localization

In recent years, voxel-based morphometry16 and surface-based morphometry17 have been employed for computer-aided detection of FCD lesions. These traditional methods rely on manually crafted low-level features, which often struggle to differentiate FCD lesions from normal structures. The advent of deep learning has led to the widespread adoption of automatic detection and segmentation techniques in medical imaging. Convolutional neural networks (CNNs), trained on T1-weighted and FLAIR MR images from multiple centers, have become common tools for identifying FCD lesions18. Furthermore, David et al. developed an artificial neural network for detecting FCD using morphometric maps19. In segmentation tasks, significant advances have been achieved by combining CNN-based encoder-decoder architectures with multiscale transformers to enhance lesion feature representation through global context14. Nonetheless, these methods rely on supervised learning, which demands extensive and accurate labeling, a particularly challenging requirement for FCD. Consequently, anomaly detection techniques are needed to address the labeling issue.

Anomaly detection

The DenoisingAE model20 explored the classic denoising approach of autoencoders, incorporating variational autoencoders and noise injection mechanisms to enhance sensitivity to subtle variations. The introduction of diffusion models21 revolutionized anomaly detection tasks with their robust generative capabilities. Wolleb et al. were the first to apply a diffusion model to anomaly detection11. Their method introduced a joint training paradigm, training the diffusion generation network on both healthy and pathological images while constructing a binary classifier on noisy samples. The innovation lies in integrating classifier gradients with the denoising trajectory of the diffusion model to form a conditional generation framework. AnoDDPM9 employs an unsupervised training method, substituting Gaussian noise with simplex noise in traditional diffusion models to control the size of anomalous targets. While these techniques are effective in detecting prominent abnormalities such as tumors, they struggle with subtle epileptogenic anomalies.

Diffusion model

The origins of diffusion models can be traced back to the diffusion probabilistic model introduced by Sohl-Dickstein et al.22 in 2015. This model established a framework for data generation by simulating thermodynamic diffusion processes. However, it initially garnered little attention due to limitations in computational efficiency and generation quality. A major advance occurred in 2020 with the introduction of the denoising diffusion probabilistic model by Ho et al.8. This model used a Markov chain to define a step-by-step process of noise addition and removal, achieving stable training in image generation for the first time and marking the entry of diffusion models into a practical phase. In 2021, OpenAI further advanced the field by introducing a refined diffusion model21. This version incorporated explicit classifier guidance and corrected the generation direction using the classifier gradient. Remarkably, it surpassed the generation quality of GANs on the ImageNet dataset, which sparked significant academic interest in diffusion models. To address the limitations of existing anomaly detection methods on subtle lesions, we introduce a novel model that combines the detection capability of a classifier with the generative strengths of diffusion models, achieving excellent performance in FCD lesion detection without increasing the labeling effort.

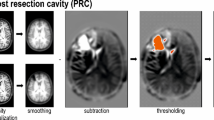

Fig. 1. The overall framework of our proposed model for anomaly detection: (1) Diffusion model based on position-guided modality transformation, using a classifier to detect abnormal positions in FLAIR and guiding the diffusion model with modality transformation to generate pseudo-healthy images. (2) Histogram matching algorithm, which matches the generated pseudo-healthy image with the original image to eliminate color deviation.

Methods

The proposed framework is illustrated in Fig. 1. It employs a diffusion model guided by a classifier to translate MR images from T1 to FLAIR, leveraging the distinct characteristics of FCD in both modalities. The integration of a classifier capable of distinguishing between abnormal and normal tissue features facilitates the generation of pseudo-healthy MR images by converting abnormal tissues to resemble normal ones. During the reverse denoising process, DDIM sampling is used to preserve the integrity of healthy tissues. To address color discrepancies, histogram matching is applied to the generated FLAIR images. The anomaly map is then defined by calculating the differences between the generated images and the original images.
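The final step of this pipeline, the difference between the original and the generated image, can be sketched as follows. This is only a minimal illustration: it assumes both images are 2D lists of intensities already normalized to [0, 1] and that the absolute difference is used, and the function name `anomaly_map` is hypothetical rather than taken from the released code.

```python
def anomaly_map(original, pseudo_healthy):
    """Pixel-wise absolute difference between two equally sized 2D images.

    Assumption: absolute difference; the text only says "differences".
    """
    if len(original) != len(pseudo_healthy):
        raise ValueError("images must have the same number of rows")
    result = []
    for row_o, row_p in zip(original, pseudo_healthy):
        if len(row_o) != len(row_p):
            raise ValueError("images must have the same number of columns")
        result.append([abs(o - p) for o, p in zip(row_o, row_p)])
    return result


# Toy 2x2 example: only the bottom-right pixel was changed by the generator.
flair = [[0.25, 0.5], [0.5, 1.0]]
generated = [[0.25, 0.5], [0.5, 0.5]]
amap = anomaly_map(flair, generated)
```

In this toy case the map is zero everywhere except at the altered pixel, which is the behavior the method relies on: healthy tissue should be reproduced unchanged, so only the lesion region stands out.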

Location-guided diffusion model

We follow the diffusion model formulation proposed in the classifier-guided diffusion literature21. This approach introduces noise to an input image, denoted as x, to create a sequence of progressively noisier images: \(D=\{x_i\}_{i=1}^{N}\). At each time step t, a U-Net is trained to predict \(x_{t-1}\) from the current \(x_t\). The mean squared error (MSE) serves as the loss function during this training phase.

In the reverse process, we employ deterministic DDIM sampling guided by a classifier. This process iteratively predicts \(x_{t-1}\) from \(x_t\) until the original image \(x_0\) is reconstructed. The forward process can be expressed mathematically as follows:

\[q(x_t \mid x_0) = \mathscr {N}\left( x_t; \sqrt{\bar{\alpha }_t}\, x_0, (1-\bar{\alpha }_t) I\right)\]

where \(\alpha _t=1-\beta _t\) and \(\bar{\alpha }_t = \prod _{i=1}^{t}\alpha _i\). \(\beta _t\) is the noise coefficient, representing the proportion of noise added to the data during the t-th step of diffusion. \(\alpha _t\) is the data retention coefficient, representing the proportion of the original data retained after the t-th step of diffusion. The MSE loss for training is:

\[L = \mathbb {E}_{x_0, \epsilon , t}\left[ \left\| \epsilon - \epsilon _\theta (x_t, t, c)\right\| ^2\right]\]

where \(\epsilon\) represents noise sampled from a Gaussian distribution \(\mathscr {N}(0, I)\), and \(\epsilon _\theta\) is the U-net parameterized by \(\theta\). The variable c includes T1 image and positional label information.
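The forward noising and the noise-prediction loss described above can be illustrated with a small standard-library sketch. It is not the authors' implementation: the linear \(\beta _t\) schedule (1e-4 to 0.02 over 1000 steps) is an assumption borrowed from the original DDPM setup, the image is a flattened toy list, the conditioning c is omitted, and all function names are hypothetical.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Linearly spaced noise coefficients beta_1..beta_T (assumed schedule)."""
    if num_steps == 1:
        return [beta_start]
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """alpha_bar_t = prod_{i<=t} (1 - beta_i), returned for t = 1..T."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def q_sample(x0, alpha_bar_t, noise):
    """Draw x_t from q(x_t | x_0) given an explicit noise vector."""
    a, b = math.sqrt(alpha_bar_t), math.sqrt(1.0 - alpha_bar_t)
    return [a * x + b * e for x, e in zip(x0, noise)]


def mse(pred, target):
    """Mean squared error between predicted and true noise."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


rng = random.Random(0)
alpha_bars = cumulative_alphas(linear_beta_schedule(1000))
x0 = [0.2, 0.8, 0.5, 0.1]                   # a flattened toy "image"
noise = [rng.gauss(0.0, 1.0) for _ in x0]   # epsilon ~ N(0, I)
t = 100                                     # 1-indexed time step
x_t = q_sample(x0, alpha_bars[t - 1], noise)

# A perfect noise predictor would return `noise`, giving zero loss;
# a predictor that always outputs zeros is penalised by the noise energy.
loss_perfect = mse(noise, noise)
loss_zero = mse([0.0] * len(noise), noise)
```

Because \(\bar{\alpha }_t\) decreases monotonically with t, later steps retain less of \(x_0\); limiting the number of noising steps therefore limits how far the sample can drift from the input image.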

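Inverse DDIM computes \(x_{t+1}\) from \(x_t\) with the following formula:

\[x_{t+1} = \sqrt{\bar{\alpha }_{t+1}}\left( \frac{x_t - \sqrt{1-\bar{\alpha }_t}\,\epsilon _\theta (x_t,t,c)}{\sqrt{\bar{\alpha }_t}}\right) + \sqrt{1-\bar{\alpha }_{t+1}}\,\epsilon _\theta (x_t,t,c)\]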

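The iterative denoising process yields samples as follows:

\[x_{t-1} = \sqrt{\bar{\alpha }_{t-1}}\left( \frac{x_t - \sqrt{1-\bar{\alpha }_t}\,\epsilon _\theta (x_t,t,c)}{\sqrt{\bar{\alpha }_t}}\right) + \sqrt{1-\bar{\alpha }_{t-1}-\sigma _t^2}\,\epsilon _\theta (x_t,t,c) + \sigma _t \epsilon\]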

where \(\sigma _t = \sqrt{\frac{(1 - \bar{\alpha }_{t-1})}{(1 - \bar{\alpha }_t)}} \sqrt{1 - \frac{\bar{\alpha }_t}{\bar{\alpha }_{t-1}}}\). In DDIM, setting \(\sigma _{t} = 0\) results in deterministic sampling. We adopt the classifier guidance method detailed in DDPM-DDIM11 to direct the image generation towards the desired healthy class, denoted as h. To achieve this, we pre-train a classifier network, C, on the noisy image \(x_t\) to predict class labels for abnormal regions, including the frontal, temporal, parietal, occipital, and insular lobes, as well as healthy cases. During denoising, the scaled gradient of the classifier \(s\nabla _{x_{t}}\log C(h|x_{t},t)\) is used to update \(\epsilon _{\theta }(x_t,t,c)\).
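A minimal sketch of deterministic DDIM (\(\sigma _t = 0\)) and of the classifier-guided noise update may help make these steps concrete. Several things here are assumptions for illustration only: the constant `toy_eps_model` stands in for the trained U-Net, the \(\bar{\alpha }_t\) values and the classifier gradient are made up, and the guidance rule \(\hat{\epsilon } = \epsilon _\theta - s\sqrt{1-\bar{\alpha }_t}\,\nabla _{x_t}\log C\) is the standard form from classifier-guided diffusion21 rather than a line copied from the released code.

```python
import math


def predict_x0(x_t, eps, alpha_bar_t):
    """Estimate of the clean image implied by x_t and the predicted noise."""
    a, b = math.sqrt(alpha_bar_t), math.sqrt(1.0 - alpha_bar_t)
    return [(x - b * e) / a for x, e in zip(x_t, eps)]


def ddim_move(x_t, eps, alpha_bar_from, alpha_bar_to):
    """Deterministic DDIM update (sigma = 0) between two noise levels.

    With alpha_bar_to < alpha_bar_from this is one inversion (noising) step;
    with alpha_bar_to > alpha_bar_from it is one denoising step.
    """
    x0_hat = predict_x0(x_t, eps, alpha_bar_from)
    a, b = math.sqrt(alpha_bar_to), math.sqrt(1.0 - alpha_bar_to)
    return [a * x + b * e for x, e in zip(x0_hat, eps)]


def guided_eps(eps, grad_log_c, alpha_bar_t, scale):
    """Classifier guidance: eps - s * sqrt(1 - alpha_bar_t) * grad log C."""
    b = math.sqrt(1.0 - alpha_bar_t)
    return [e - scale * b * g for e, g in zip(eps, grad_log_c)]


def toy_eps_model(x_t, t):
    """Stand-in for the U-Net: a constant prediction (assumption)."""
    return [0.3 for _ in x_t]


alpha_bars = [1.0, 0.9, 0.7, 0.4]   # alpha_bar_0..alpha_bar_3, toy values
x = [0.2, 0.8, 0.5]
original = list(x)

# Inversion: encode x_0 into x_3 step by step.
for t in range(3):
    x = ddim_move(x, toy_eps_model(x, t), alpha_bars[t], alpha_bars[t + 1])
encoded = list(x)

# Denoising without guidance: decode x_3 back to x_0.
for t in range(3, 0, -1):
    x = ddim_move(x, toy_eps_model(x, t), alpha_bars[t], alpha_bars[t - 1])
decoded = x

# One guided noise estimate with scale s = 5 and a made-up classifier gradient.
eps_hat = guided_eps([1.0], [2.0], alpha_bar_t=0.75, scale=5.0)
```

With the constant toy predictor the round trip is exact; with a real network the noise estimate depends on \(x_t\) and t, so inversion followed by denoising reproduces the input only approximately. Guidance then changes the noise estimate, and hence the decoded image, only where the classifier gradient is non-zero.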

Modality conversion

Research by Hong et al. indicates that FCD, particularly FCD II-B lesions, displays notable variability across different MRI sequences23. For instance, the FLAIR modality is highly sensitive to changes in both cortical and subcortical water content. In regions of FCD II-B lesions, this results in a distinctive pattern that includes high intracortical signals, abnormal high signal strips in the subcortical area, and blurring at the cortical-white matter boundary, which enhances visualization significantly. Conversely, T1-weighted images are more effective in revealing anatomical changes, such as increased cortical thickness, the absence of vertical structures (radial bands), and blurring at the gray-white matter junction within the lesion area 24. This complementary information forms the basis of our multimodal imaging analysis.

In tasks such as reconstruction and modality mapping for anomaly detection, particularly where lesions occupy a small portion of the image, models often resort to a conservative identity mapping strategy. This leads to what is termed an identity shortcut, where the model reconstructs inputs with minimal alterations, overlooking subtle yet crucial abnormal features. This is particularly problematic in detecting FCD II lesions. Figure 2 illustrates the clear distinction between T1 and FLAIR images, with epileptic features being more pronounced in FLAIR.

We consider two MRI modality spaces: \(\mathscr {X}\) and \(\mathscr {Y}\). When training a model to transform images between these modalities, denoted as \(f: \mathscr {X} \rightarrow \mathscr {Y}\), the model learns how specific tissue responses change with different radio frequency pulses. Our dataset comprises N pairs of images from both modalities, represented as \(D=\{x_i,y_i\}_{i=1}^{N}\), where \(x \in \mathscr {X}\) refers to the T1 image and \(y \in \mathscr {Y}\) refers to the FLAIR image.

Fig. 2. T1 and FLAIR present different appearances at the lesion area. (a) T1 image, (b) FLAIR image, and (c) FCD lesion map.

Histogram matching

Histogram matching (HM) is a technique in image processing that aligns the histogram of an input image with that of a target image, aiming to achieve similar tone or intensity distributions between the two. The fundamental concept involves mapping the pixel values of the input image to those of the target image using a specific mapping function.

The HM process encompasses three essential steps. The first step involves calculating both the grayscale histogram and the Cumulative Distribution Function (CDF) for the input and target images. The CDF is derived by cumulatively summing the Probability Density Function of each grayscale level. Next, a gray mapping table is constructed. This involves iterating over each grayscale level in the CDF of the input image to identify the closest match in the CDF of the target image, thus establishing a nonlinear pixel mapping relationship. Finally, global pixel replacement is applied to the input image in accordance with the lookup table, thereby specifying the histogram’s shape.

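The CDFs of the input and target images are computed using the following equations:

\[\textrm{CDF}_r(r_k) = \sum _{j=0}^{k} p_r(r_j), \qquad \textrm{CDF}_z(z_k) = \sum _{j=0}^{k} p_z(z_j)\]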

where \(r_k\) and \(z_k\) represent grayscale levels, and p refers to the frequency of occurrence in either the input or target image. For each grayscale level \(r_k\) in the input image, the corresponding grayscale level \(z_k\) in the target image is identified by finding the CDF value closest to that of \(r_k\). This results in mapping the grayscale level \(r_k\) from the input image to the nearest grayscale level \(z_k\) in the target image.
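The three steps above (CDF computation, lookup table construction, global pixel replacement) can be sketched with the standard library alone. This is an illustrative sketch, not the released implementation: it assumes images are flattened lists of integer gray levels (images normalized to [0, 1] would first need to be quantized), and the names `cdf`, `build_lookup`, and `match_histogram` are hypothetical.

```python
from bisect import bisect_left


def cdf(pixels, levels):
    """Cumulative distribution of integer gray levels 0..levels-1."""
    hist = [0] * levels
    for p in pixels:
        hist[p] += 1
    total, running, out = len(pixels), 0, []
    for count in hist:
        running += count
        out.append(running / total)
    return out


def build_lookup(source_cdf, target_cdf):
    """Map each source level to the target level with the closest CDF value."""
    last = len(target_cdf) - 1
    table = []
    for value in source_cdf:
        j = min(bisect_left(target_cdf, value), last)
        # The closest value is either at j or just below it.
        if j > 0 and value - target_cdf[j - 1] < target_cdf[j] - value:
            j -= 1
        table.append(j)
    return table


def match_histogram(source, target, levels=256):
    """Remap `source` pixels so their histogram resembles that of `target`."""
    table = build_lookup(cdf(source, levels), cdf(target, levels))
    return [table[p] for p in source]


# Toy example with 4 gray levels: a dark image matched to a bright one.
generated = [0, 0, 1, 1]
reference = [2, 2, 3, 3]
matched = match_histogram(generated, reference, levels=4)
```

In the setting of this paper, the generated pseudo-healthy FLAIR image plays the role of `source` and the original FLAIR image the role of `target`, so that a global intensity shift introduced by the diffusion model does not show up in the anomaly map.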

Fig. 3. Visualization of typical anomaly detection results on the UHB dataset. Red in the anomaly map means that the reconstructed image differs significantly from the original image, while blue means that there is essentially no difference.

Experiments

Datasets and implementation details

Datasets

This study utilizes the publicly available UHB dataset, provided by the Epilepsy Unit at the University Hospital Bonn (UHB)5. The dataset comprises MRI data of 85 patients diagnosed with FCD II between 2006 and 2021, and includes 85 healthy controls. Each subject’s data contains two types of MRI sequences, T1 and FLAIR, along with clinical information. Seven individuals categorized as MRI-negative or non-lesional were excluded from the study.

To ensure uniformity across all MRI sequences, we performed affine transformations to align the brain scans with the SRI24 atlas 25. Skull stripping was then carried out using HD-BET 26, and we extracted axial slices because our method operates in 2D. Each image slice was resized to 256 \(\times\) 256 pixels and normalized to a range between 0 and 1. Since FCD primarily affects the central brain area, we retained slices numbered 75 to 125. Aiming to maintain a 1:1 ratio of abnormal to normal slices, the training set included 842 slices from FCD patients and 900 slices from a subset of the normal individuals, while the test set contained 118 slices from epileptic patients. We used stratified sampling at the patient level so that no patient appears in both the training and test sets.
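The slice selection and intensity normalization described above can be sketched as follows. Registration, skull stripping, and resizing are omitted. The sketch assumes min-max scaling per slice and an inclusive, 0-based slice range, neither of which is stated in the text, and the helper names are hypothetical.

```python
def normalize_slice(slice_2d):
    """Min-max scale a 2D slice to [0, 1]; constant slices map to all zeros."""
    flat = [v for row in slice_2d for v in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:
        return [[0.0 for _ in row] for row in slice_2d]
    return [[(v - lo) / (hi - lo) for v in row] for row in slice_2d]


def select_slices(volume, first=75, last=125):
    """Keep axial slices first..last (inclusive, 0-based index assumed)."""
    return volume[first:last + 1]


# Toy volume: 155 axial "slices" of size 2x2 whose values grow with depth.
volume = [[[z, z + 1], [z + 2, z + 4]] for z in range(155)]
kept = [normalize_slice(s) for s in select_slices(volume)]
```

Under these assumptions each subject contributes 51 slices, all scaled to the same [0, 1] range before being passed to the model.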

Implementation details

All experiments were conducted using the PyTorch 1.12 framework on a single Tesla V100s GPU. The model was trained for 100 epochs using the Adam optimizer with a learning rate of 0.00005 and a batch size of 12 slices. During the sampling phase, to minimize alterations to the original images and thereby reduce false positives, we employed 100 steps of noise addition and denoising, with a classifier scale of 5.

Evaluation metrics

We evaluate our model’s performance using recall (denoted as R@k), measured at the pixel level when k false positives are allowed per image, alongside the Dice metric. A lesion is considered recalled if there is at least one predicted point within 10 pixels of the lesion, aligning with the 5th percentile of lesion radius across both datasets. The Dice metric is computed by binarizing the anomaly map, with the threshold chosen as the value that maximizes the Dice metric over the range of anomalous pixel values. Measurements were conducted on slices sized 256 \(\times\) 256 pixels.
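The Dice computation with threshold selection and the 10-pixel recall criterion can be sketched as below. This is a simplified illustration under stated assumptions: distances are Euclidean and measured to the nearest lesion pixel, candidate thresholds are supplied by the caller, and the false-positive budget k of R@k is not modeled. All function names are hypothetical.

```python
import math


def dice(pred, truth):
    """Dice overlap between two sets of (row, col) pixel coordinates."""
    if not pred and not truth:
        return 1.0
    return 2.0 * len(pred & truth) / (len(pred) + len(truth))


def binarize(anomaly_map, threshold):
    """Coordinates of pixels whose anomaly score exceeds the threshold."""
    return {(r, c) for r, row in enumerate(anomaly_map)
            for c, score in enumerate(row) if score > threshold}


def best_dice(anomaly_map, truth, thresholds):
    """Highest Dice over the candidate thresholds, with its threshold."""
    return max((dice(binarize(anomaly_map, t), truth), t) for t in thresholds)


def lesion_recalled(pred_points, truth, max_dist=10.0):
    """True if any predicted point lies within max_dist of a lesion pixel."""
    return any(math.dist(p, q) <= max_dist for p in pred_points for q in truth)


amap = [[0.1, 0.2, 0.1],
        [0.2, 0.9, 0.6],
        [0.1, 0.7, 0.3]]
lesion = {(1, 1), (1, 2)}
score, threshold = best_dice(amap, lesion, thresholds=[0.25, 0.5, 0.8])
miss = lesion_recalled([(12, 1)], lesion)   # 11 pixels from (1, 1)
hit = lesion_recalled([(7, 1)], lesion)     # 6 pixels from (1, 1)
```

In the toy map, a threshold of 0.5 keeps three pixels, two of which belong to the lesion, which gives the best Dice among the three candidates. The recall check illustrates why a detection can count as a hit even when the pixel-level overlap is small.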

Comparative study

We compare several models on the UHB dataset, including diffusion-based and autoencoder-based methods: AnoDDPM 9, ImgCondDDPM 10, DDPM-DDIM 11, and DenoisingAE 20. To ensure a fair comparison, we used the default parameters from the open-source code of each competing method and kept the training data and training duration the same. Our method demonstrated superior performance, achieving higher recall rates and fewer false positives than the alternative approaches, as summarized in Table 1. Furthermore, Fig. 3 illustrates that our method closely approximates the true location of epileptic foci.

Compared with the four other methods, our method achieved recall scores of 0.856 (R@5) and 0.952 (R@10) and a Dice score of 0.245, the highest in each case. On average, recall exceeded that of the other methods by 0.318 and 0.173, while the Dice score was higher by 0.099. These results indicate that AnoDDPM, which employs an unsupervised algorithm, struggles to identify epileptic features and suffers from error accumulation due to Markov chain denoising. The unsupervised algorithm ImgCondDDPM, which uses image conditioning, significantly reduces false positives but still produces broken-loop anomalies rather than transformed anomalies. Compared to the DDPM-DDIM method, which uses a weakly supervised strategy, our approach integrates positional and modality information for enhanced detection accuracy. Additionally, compared to DenoisingAE, which relies on a classical self-supervised denoising architecture with a U-Net at its core, our method employs a more strongly generative diffusion model architecture, resulting in improved anomaly transformation.

Fig. 4. Comparison of results with and without histogram matching. (a) The original image, (b) the result using both modality transformation and histogram matching, (c) the result without histogram matching, and (d) the result without modality transformation.

Ablation study

We conducted an ablation study to evaluate the effects of three critical components: positional information guidance, modality conversion, and histogram matching. As detailed in Table 2, a diffusion model using only FLAIR modality guidance fails to integrate information from the T1 image, significantly reducing the detection of abnormal locations. Its R@5 and R@10 were 0.301 and 0.726, respectively, in stark contrast to the rates of 0.852 and 0.956 achieved using the T1 image. This limitation may impair the model’s accuracy in aiding physicians and increase the risk of misdiagnosis.

Incorporating positional information enhances the model’s ability to focus on areas with a high incidence of epileptic lesions by exploiting knowledge of the brain’s division into lobes. This addition improved the recall rates by 0.13 and 0.096 compared to the setting without location information. Although the Dice score decreased by 0.045, recall is more important for providing physicians with pertinent information during diagnosis, so prioritizing a higher recall over the Dice score is justified. Histogram matching further reduces the likelihood of overlooking lesions due to color variations, with recall improvements of 0.329 and 0.027 and a Dice score improvement of 0.101. These results are visualized in Fig. 4.

We also conducted experiments to select the guidance strength and noise addition level, as shown in Tables 3 and 4. The best results were achieved with a guidance strength of 5 and a noise addition level of 100.

Conclusion

This study proposes a highly interpretable method that does not require voxel-level annotations to effectively detect abnormalities in the brains of epilepsy patients. Comprehensive experimental results demonstrate superior performance compared to DenoisingAE 20, AnoDDPM 9, DDPM-DDIM 11, and ImgCondDDPM 10, while exhibiting clinical relevance.

The method proposed in this paper is a diffusion model with position guidance and modality conversion capabilities. It improves detection accuracy by using a position classifier to focus on brain regions with a high incidence of epilepsy, designing a modality conversion strategy adapted to the characteristics of focal cortical dysplasia type II, and applying histogram matching technology to eliminate color differences. However, this method has limitations: the inherent differences between T1 and FLAIR modalities can introduce false positives and noise in the detection of small lesions, and some lesions that are similar to normal tissues in the T1 modality will be weakened in the FLAIR modality generated through modality conversion, which in turn leads to detection errors. Future work will focus on improving these shortcomings to achieve accurate detection.

Data availability

The data can be retrieved from the OpenNeuro repository via the following link: https://openneuro.org/datasets/ds004199/versions/1.0.5.

Code availability

Our code is available at https://github.com/CodePYJ/FCD-Detection.

References

1. Mesraoua, B. et al. Drug-resistant epilepsy: Definition, pathophysiology, and management. J. Neurol. Sci. 452, 120766 (2023).

2. Xu, L., Li, M., Wang, Z. & Li, Q. Global trends and burden of idiopathic epilepsy: Regional and gender differences from 1990 to 2021 and future outlook. J. Health Popul. Nutr. 44, 45 (2025).

3. Widdess-Walsh, P., Diehl, B. & Najm, I. Neuroimaging of focal cortical dysplasia. J. Neuroimaging 16, 185–196 (2006).

4. Tassi, L. et al. Focal cortical dysplasia: Neuropathological subtypes, EEG, neuroimaging and surgical outcome. Brain 125, 1719–1732 (2002).

5. Schuch, F. et al. An open presurgery MRI dataset of people with epilepsy and focal cortical dysplasia type II. Sci. Data 10, 475 (2023).

6. Goodfellow, I. et al. Generative adversarial networks. Commun. ACM 63, 139–144 (2020).

7. Kingma, D. P. & Welling, M. Auto-encoding variational Bayes. arXiv preprint arXiv:1312.6114 (2013).

8. Ho, J., Jain, A. & Abbeel, P. Denoising diffusion probabilistic models. Adv. Neural Inf. Process. Syst. 33, 6840–6851 (2020).

9. Wyatt, J., Leach, A., Schmon, S. M. & Willcocks, C. G. AnoDDPM: Anomaly detection with denoising diffusion probabilistic models using simplex noise. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 650–656 (2022).

10. Baugh, M. et al. Image-conditioned diffusion models for medical anomaly detection. In International Workshop on Uncertainty for Safe Utilization of Machine Learning in Medical Imaging. 117–127 (Springer, 2024).

11. Wolleb, J., Bieder, F., Sandkühler, R. & Cattin, P. C. Diffusion models for medical anomaly detection. In International Conference on Medical Image Computing and Computer-Assisted Intervention. 35–45 (Springer, 2022).

12. Song, J., Meng, C. & Ermon, S. Denoising diffusion implicit models. arXiv preprint arXiv:2010.02502 (2020).

13. Zhang, X. et al. GLFF: A global-local feature fusion model to segment epileptic focus of focal cortical dysplasia from multi-channel MR images. In 2024 IEEE International Symposium on Biomedical Imaging (ISBI). 1–5 (IEEE, 2024).

14. Zhang, X. et al. Focal cortical dysplasia lesion segmentation using multiscale transformer. Insights Imaging 15, 222 (2024).

15. House, P. M. et al. Automated detection and segmentation of focal cortical dysplasias (FCDs) with artificial intelligence: Presentation of a novel convolutional neural network and its prospective clinical validation. Epilepsy Res. 172, 106594 (2021).

16. Mechelli, A., Price, C. J., Friston, K. J. & Ashburner, J. Voxel-based morphometry of the human brain: Methods and applications. Curr. Med. Imaging 1, 105–113 (2005).

17. Thesen, T. et al. Detection of epileptogenic cortical malformations with surface-based MRI morphometry. PLoS One 6, e16430 (2011).

18. Gill, R. S. et al. Multicenter validation of a deep learning detection algorithm for focal cortical dysplasia. Neurology 97, e1571–e1582 (2021).

19. David, B. et al. External validation of automated focal cortical dysplasia detection using morphometric analysis. Epilepsia 62, 1005–1021 (2021).

20. Kascenas, A., Pugeault, N. & O’Neil, A. Q. Denoising autoencoders for unsupervised anomaly detection in brain MRI. In International Conference on Medical Imaging with Deep Learning. 653–664 (PMLR, 2022).

21. Dhariwal, P. & Nichol, A. Diffusion models beat GANs on image synthesis. Adv. Neural Inf. Process. Syst. 34, 8780–8794 (2021).

22. Sohl-Dickstein, J., Weiss, E., Maheswaranathan, N. & Ganguli, S. Deep unsupervised learning using nonequilibrium thermodynamics. In International Conference on Machine Learning. 2256–2265 (PMLR, 2015).

23. Hong, S.-J. et al. Multimodal MRI profiling of focal cortical dysplasia type II. Neurology 88, 734–742 (2017).

24. Wong-Kisiel, L. C. et al. Morphometric analysis on T1-weighted MRI complements visual MRI review in focal cortical dysplasia. Epilepsy Res. 140, 184–191 (2018).

25. Rohlfing, T., Zahr, N. M., Sullivan, E. V. & Pfefferbaum, A. The SRI24 multichannel atlas of normal adult human brain structure. Hum. Brain Mapp. 31, 798–819 (2010).

26. Isensee, F. et al. Automated brain extraction of multisequence MRI using artificial neural networks. Hum. Brain Mapp. 40, 4952–4964 (2019).

Acknowledgements

The authors would like to convey their profound appreciation to the editors and anonymous reviewers.

Funding

This research was supported by the Medical Research Project of Xi’an Science and Technology Bureau (24YXYJ0006), Guangxi Science and Technology Program (No. FN2504240022), Guangxi Key R&D Project (No. AB24010167), and Guangdong Basic and Applied Basic Research Foundation (No. 2025A1515011617).

Author information

Contributions

Conceptualization, Y.P., C.W., R.G. and Y.L.; methodology, Y.P., R.G., C.W. and X.Z.; software, Y.P., C.W. and R.G.; validation, Y.P. and Y.L.; formal analysis, Q.L. and Y.L.; investigation, Y.P., Q.L. and R.G.; resources, C.W. and R.G.; data curation, X.Z., Y.L. and Y.P.; writing (original draft preparation), Y.L., Y.P., X.Z., Q.L. and C.W.; writing (review and editing), Y.P., Y.L., C.W. and R.G.; visualization, Y.P., Q.L. and C.W.; supervision, R.G. and C.W.; project administration, C.W. and R.G.; funding acquisition, Y.L. All authors reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethical approval

This research complies with the principles of the Declaration of Helsinki.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Li, Y., Pan, Y., Zhang, X. et al. Pseudo-healthy image synthesis via location-guided diffusion models for focal cortical dysplasia lesion localization. Sci Rep 16, 8101 (2026). https://doi.org/10.1038/s41598-026-38981-y
