Abstract

Understanding how landscape composition relates to aesthetic perception remains a challenge in landscape design research. While semantic segmentation methods have achieved strong performance in scene understanding, they are rarely used to analyze design structure and aesthetic interpretation in garden landscapes. This study introduces a computational framework that integrates semantic segmentation with compositional design descriptors and social aesthetic indicators for the analysis of garden design language. A dataset containing more than 2,000 garden images was constructed with pixel-level semantic annotations and associated aesthetic labels. Based on the segmentation outputs, a set of quantitative descriptors was derived to represent spatial composition of landscape elements, including spatial distribution, proportional composition, and element adjacency relationships. These compositional descriptors were further combined with social aesthetic indicators obtained from public surveys and textual analysis to explore relationships between landscape structure and perceived aesthetic qualities. Experimental results show that the proposed framework achieves competitive performance in semantic segmentation while providing interpretable representations of landscape design composition. The analysis further reveals consistent associations between certain compositional patterns and aesthetic perception. The proposed approach demonstrates the potential of integrating semantic scene understanding with design-aware feature representation for data-driven analysis of landscape aesthetics and garden design language.

Similar content being viewed by others

Introduction

Landscape design reflects cultural values, aesthetic principles, and social identity through the spatial organization of natural and built elements. Gardens and parks can therefore be interpreted as visual compositions in which vegetation, water, pathways, and structures collectively form a design language that conveys aesthetic and cultural meaning. Understanding how these compositional structures influence aesthetic perception remains an important question in landscape architecture research. However, most existing studies rely on qualitative interpretation and visual heuristics, which limits the ability to analyze landscape composition in a systematic and quantitative manner1,2,3,4.

Recent advances in computer vision, particularly semantic segmentation, have enabled detailed pixel-level understanding of complex visual scenes. These techniques have been widely applied in domains such as autonomous driving, medical imaging, and urban analysis5,6,7. By identifying objects and materials at the pixel level, semantic segmentation provides a powerful foundation for analyzing spatial structure within images. Nevertheless, its application in landscape design research remains limited. Existing studies often focus on ecological monitoring, vegetation classification, or land-use mapping8,9,10. These approaches typically treat landscape elements as independent categories rather than components of a broader compositional structure.

In landscape design, however, aesthetic perception often emerges from spatial relationships between elements rather than from the presence of individual objects alone. For example, the proximity of water and vegetation, the arrangement of pathways and pavilions, or the balance between built structures and open space can significantly influence how a landscape is perceived. Capturing such compositional relationships requires representations that go beyond pixel-level classification.

To address this challenge, this study proposes a computational framework that links semantic scene understanding with compositional analysis of landscape design. Instead of relying solely on object recognition, the proposed approach derives a set of compositional descriptors from semantic segmentation outputs to represent spatial organization of landscape elements. These descriptors include spatial distribution measures, proportional composition of elements, and adjacency relationships between landscape components. Together they provide a structured representation of landscape design language.

In addition to visual composition, aesthetic perception is influenced by cultural context and public interpretation. To incorporate this dimension, the proposed framework integrates compositional design descriptors with social aesthetic indicators derived from surveys and textual analysis. By combining visual and perceptual data, the framework enables the exploration of relationships between landscape composition and aesthetic evaluation.

The main contributions of this study can be summarized as follows:

-

A computational framework is introduced that integrates semantic segmentation with compositional descriptors for analyzing landscape design language.

-

A dataset of more than 2,000 garden landscape images with semantic annotations and aesthetic indicators is constructed to support design-oriented visual analysis.

-

A set of quantitative descriptors is proposed to represent spatial organization of landscape elements, including spatial distribution, proportional composition, and adjacency relationships.

-

The relationship between compositional landscape features and social aesthetic perception is explored through integrated visual and perceptual analysis.

By combining computer vision techniques with landscape design analysis, this work provides a data-driven approach for studying how spatial composition in garden landscapes relates to aesthetic perception and cultural interpretation.

Related work

Semantic segmentation in environmental and design applications

Semantic segmentation is a widely used technique in computer vision that enables pixel-level understanding of complex images. Valada et al.11 propose AdapNet, a semantic segmentation architecture designed for adverse environmental conditions. The model uses pooling indices in the decoder to improve robustness under varying illumination and weather scenarios. Their work addresses performance degradation problems encountered by conventional models in challenging outdoor environments.

Spasev et al.12 provide a comprehensive review of semantic segmentation in remote sensing, covering definitions, algorithms, datasets, and key application domains such as disaster management, environmental monitoring, and precision agriculture. Their study provides an important overview of how segmentation methods support scene interpretation in geospatial analysis. Qin et al.13 present a deep convolutional neural network based method for semantic segmentation of building roofs in dense urban environments using high resolution GF2 satellite imagery. Their model demonstrates high accuracy in identifying architectural structures and highlights the practical role of semantic segmentation in urban planning and three dimensional reconstruction. Tian and Slaughter14 develop an environmentally adaptive segmentation algorithm for outdoor agricultural scenes. Their approach combines traditional image segmentation with morphology based pattern recognition to improve plant identification in complex environments. Zamani et al.15 apply deep semantic segmentation techniques to soil type recognition in construction and agricultural environments. Their model extracts contextual features from visual scenes and supports land classification and resource management.

Despite these advances, most existing studies focus on ecological or infrastructural aspects of landscapes, such as vegetation monitoring, species identification, or urban infrastructure detection. Comparatively little attention has been given to the aesthetic and cultural dimensions embedded within designed landscapes. In particular, the application of semantic segmentation to analyze landscape design language remains limited. Landscape design often depends on the spatial relationships among elements, including how vegetation frames water bodies, how pathways guide movement, and how architectural structures organize spatial hierarchy. These compositional relationships are rarely addressed in conventional segmentation applications.

Recent studies have also explored the incorporation of structural priors into visual segmentation tasks, particularly for culturally structured imagery. For example, the SPIRIT framework introduces symmetry informed priors for segmenting traditional Thangka paintings27, demonstrating that incorporating structural knowledge can improve segmentation performance and interpretability in visually structured scenes. These findings suggest that segmentation methods may benefit from integrating compositional information when applied to culturally meaningful visual domains such as garden landscapes.

These observations indicate that semantic segmentation has the potential to move beyond ecological classification tasks and contribute to the analysis of spatial composition and design semantics in landscape architecture.

Computational analysis in landscape architecture and garden design

Computational approaches have increasingly been introduced into landscape architecture to support quantitative analysis and spatial modeling. Cantrell and Mekies16 present a conceptual framework in Codify that highlights the generative and computational potential of landscape design. Their work suggests that computational abstraction allows designers to explore spatial configurations in real time and supports more adaptive design processes. Luo17 investigates the integration of machine learning and computer simulation in the design of urban green landscapes. The study compares algorithmic methods with traditional computer aided design tools and demonstrates the potential of computational techniques for improving efficiency and decision making in spatial planning.

Ackerman et al.18 examine the role of computational modeling in climate resilient landscape architecture. By combining building information modeling and computational fluid dynamics, their work simulates landscape responses to environmental changes and supports adaptive design strategies. Busón et al.19 propose a computational methodology for landscape design that focuses on site specific spatial analysis. Their approach emphasizes analytical tools that assist designers in understanding spatial structure and improving design decisions at a local scale. Ervin20 introduces the concept of Turing landscapes, where algorithmic design systems and sensor based technologies enable responsive landscape environments. This perspective highlights the growing integration of computation, ecology, and environmental feedback in contemporary landscape design practice. Luminiitty21 studies computational planting design within the broader landscape design workflow. The research demonstrates how parametric tools and data driven methods can enhance the precision and flexibility of planting strategies while maintaining compatibility with traditional horticultural principles.

Although computational approaches have improved landscape analysis and design processes, most existing methods rely on simulation, parametric modeling, or rule based design systems. Only a limited number of studies have explored the use of computer vision techniques for analyzing landscape imagery. Image based analyses often rely on low level features such as color, texture, or shape descriptors. These approaches rarely capture the semantic relationships among landscape elements that form the basis of design language. Therefore, integrating semantic image understanding with landscape design analysis remains an important research direction.

Social aesthetics and design language in landscape studies

Social aesthetics examines how landscapes embody cultural values, social identities, and collective meanings. This perspective emphasizes the reciprocal relationship between spatial design and social interpretation.

Kühne22 discusses landscape and power as a social aesthetic construct and highlights how cultural narratives and social structures shape the interpretation of landscapes. His work connects sociology, aesthetics, and geography to provide a theoretical framework for understanding landscape as a socio cultural artifact. Perry et al.23 explore the relationship between aesthetic expression and ecological sustainability in landscape design. Their study argues that visual design language can promote ecological awareness and encourage sustainable values among designers and the public. Raaphorst et al.24 analyze the semiotics of landscape design communication and propose a critical visual research approach. Their work emphasizes how visual media convey design intentions and encourages more reflective design communication practices. Thompson25 conducts a discourse analysis of aesthetic, social, and ecological values among professional landscape architects. The study identifies dominant professional perspectives and shows how aesthetic considerations influence practical design decisions. Wang and Yu26 propose a landscape aesthetic structure model that describes spatial patterns of urban landscape aesthetics over time and space. Their work incorporates citizen perspectives and highlights the role of public perception in shaping landscape aesthetics.

Although these studies provide valuable theoretical insights into the social and cultural dimensions of landscape design, few works have attempted to quantitatively link visual landscape composition with social aesthetic perception. Integrating semantic image analysis with social aesthetic research may help bridge this gap. By identifying landscape elements and analyzing their spatial relationships, computational approaches can provide a more systematic way to explore how design configurations relate to public aesthetic preferences and cultural interpretation.

Such interdisciplinary integration remains relatively limited in current research. However, it provides a promising direction for combining computer vision techniques with landscape design theory and social aesthetic analysis.

Methods

Framework overview

This study employs a multi-stage computational framework that combines computer vision and social aesthetic analysis to explore the relationship between landscape design language and aesthetic perception. The workflow integrates semantic scene understanding with compositional feature extraction and social perception modeling to provide a systematic approach for analyzing how spatial organization in garden landscapes relates to public aesthetic evaluation.

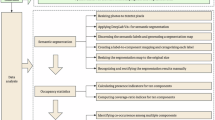

As illustrated in Fig. 1, the process begins with the collection of garden landscape images followed by pixel-level semantic annotation. The annotated dataset is then used to train a semantic segmentation model capable of automatically identifying major landscape components such as vegetation, water bodies, and architectural structures. Based on the segmentation outputs, a set of compositional descriptors is extracted to represent spatial organization and structural characteristics of landscape design. These descriptors quantify spatial distribution patterns, proportional composition of elements, and adjacency relationships between landscape components.

Overall framework of the proposed approach integrating semantic segmentation, design language feature extraction, and social aesthetic analysis. The pipeline processes landscape images through semantic annotation, compositional feature extraction, and aesthetic modeling to establish relationships between visual design elements and public perception.

In parallel, social aesthetic indicators are collected from public surveys and textual sources including landscape descriptions and cultural narratives. Natural language processing techniques are applied to extract thematic aesthetic indicators from textual data. Finally, the visual design features and social aesthetic indicators are integrated through statistical modeling to analyze relationships between landscape composition and public aesthetic perception. This framework allows garden landscapes to be interpreted not only in terms of visual elements but also in terms of compositional structure and perceived aesthetic qualities, bridging the gap between objective spatial analysis and subjective aesthetic evaluation.

Data collection and semantic annotation

To support semantic analysis of landscape scenes, a dataset containing more than 2,000 high-resolution garden images was constructed. The dataset includes diverse landscape environments such as traditional classical gardens, ecological parks, and contemporary urban green spaces. Images were collected from multiple sources including field photography conducted across multiple seasons, landscape design archives from professional practices, and publicly available scene datasets such as ADE20K and Cityscapes, which provide structural references for outdoor environments. Image resolution ranges from 1024\(\times\)768 to 2048\(\times\)1536 pixels to ensure sufficient detail for fine-grained semantic annotation.

The dataset comprises images from three primary geographic regions: Eastern China classical gardens (n=850, 42.5 percent), Northern European ecological parks (n=680, 34 percent), and North American urban green spaces (n=470, 23.5 percent). This distribution reflects both the availability of high-quality annotated imagery and the goal of representing major landscape design traditions. In terms of design style, the dataset includes traditional classical gardens (35 percent), modern ecological parks (28 percent), contemporary urban parks (25 percent), and mixed-use green spaces (12 percent). To mitigate potential class imbalance effects during training, weighted random sampling was applied with sampling probabilities inversely proportional to class frequency. Specifically, sampling weights were computed as \(w_i = N / (K \cdot n_i)\), where N is the total number of samples, K is the number of classes, and \(n_i\) is the number of samples in class i.

Representative examples of images included in the dataset are shown in Fig. 2. These examples illustrate variations in vegetation distribution, water features, architectural elements, and spatial organization across different garden styles, demonstrating the visual diversity captured in the dataset.

Representative examples of garden landscape images used in the dataset, showing diverse landscape types including classical Chinese gardens, European ecological parks, and contemporary urban green spaces. The images demonstrate variations in vegetation density, water feature configurations, architectural styles, and spatial compositions that characterize different landscape design traditions.

A semantic annotation schema was developed based on landscape architecture literature and consultation with domain experts. Four primary semantic categories were defined to capture the essential compositional elements of garden landscapes. The vegetation category includes trees, shrubs, grass, and flower beds. The water bodies category encompasses ponds, lakes, streams, and fountains. The built structures category covers pavilions, bridges, walls, and pathways. The other elements category includes sculptures, benches, plazas, and open ground. This categorization scheme balances semantic granularity with annotation feasibility while capturing the key elements that define landscape composition.

Pixel-level annotations were created using the LabelMe annotation tool. Three trained annotators independently labeled each image using a unified color-coded labeling protocol. Annotators received training on landscape architecture terminology and annotation guidelines to ensure consistency. Annotation disagreements were resolved through consensus discussion with reference to landscape design principles and photographic evidence. To evaluate annotation reliability, inter-annotator agreement was measured using Cohen’s kappa coefficient computed on a randomly selected subset of 200 images annotated independently by all three annotators. The resulting kappa value of 0.82 indicates strong agreement among annotators. Disagreements primarily occurred at object boundaries, particularly between vegetation and built structures, and in regions with mixed vegetation types where species boundaries were ambiguous. These cases were resolved through consensus discussion with reference to landscape architecture literature and consultation with domain experts.

Each annotated sample consists of an original image and a corresponding semantic segmentation mask, as illustrated in Fig. 3. The segmentation mask uses distinct color codes for each semantic category, enabling direct visual verification of annotation quality.

Example of semantic annotation for garden landscape images, showing the original photograph alongside its corresponding pixel-level segmentation mask. Different colors represent distinct semantic categories: green for vegetation, blue for water bodies, orange for built structures, and purple for other elements, enabling precise quantification of landscape composition.

The dataset was randomly divided into training (70 percent), validation (15 percent), and testing (15 percent) subsets. The split was stratified by landscape style to ensure balanced representation across all subsets. The training set contains 1,400 images, the validation set contains 300 images, and the test set contains 300 images. All evaluation metrics reported in this study were computed on the test set to ensure unbiased performance assessment.

Design language feature extraction

Semantic segmentation results provide pixel-level classification of landscape components. To translate these predictions into interpretable design descriptors, a structured feature extraction process was developed to quantify spatial organization and compositional characteristics of landscape elements. This process transforms raw segmentation masks into a compact set of numerical descriptors that capture the essential compositional properties of landscape design.

For each semantic category c, the centroid location is calculated as

where \(N_c\) represents the number of pixels belonging to class c, and \((x_i, y_i)\) denotes the spatial coordinates of each pixel. The centroid provides a measure of the spatial center of mass for each landscape element, indicating whether elements are positioned centrally or peripherally within the scene.

Spatial dispersion is defined as

which measures how concentrated or dispersed each element is within the scene. Low dispersion values indicate that an element is spatially concentrated in a compact region, while high dispersion values suggest that the element is distributed across multiple scattered locations. This metric captures the degree of spatial fragmentation or cohesion for each landscape component.

The proportional composition of landscape elements is calculated using the area ratio

where \(N_{total}\) denotes the total number of pixels in the image. This ratio quantifies the relative coverage of each semantic category within the scene, providing a measure of compositional balance between different landscape elements.

Spatial relationships between elements are further characterized using adjacency statistics that record the frequency of neighboring pixels belonging to different semantic classes. An adjacency matrix \(\textbf{M} \in \mathbb {R}^{C \times C}\) is constructed where \(M_{ij}\) represents the number of boundary pixels shared between classes i and j. This matrix captures spatial proximity relationships such as water-vegetation interfaces, pathway-structure connections, and vegetation-open space transitions, which are important compositional features in landscape design.

The overall feature extraction process is illustrated in Fig. 4. Starting from semantic segmentation results, spatial distribution metrics, proportional composition indicators, and adjacency relationships are computed to form a quantitative representation of landscape design language. These descriptors are concatenated into a feature vector that serves as input for subsequent aesthetic analysis and style classification.

Workflow of design language feature extraction from semantic segmentation outputs, illustrating the parallel computation of three complementary feature types. Spatial distribution metrics capture element centroids and dispersion patterns, proportional composition quantifies area ratios and coverage statistics, and adjacency relationships encode boundary interactions between semantic classes, collectively forming a comprehensive compositional descriptor.

Social aesthetic modeling

To investigate how landscape composition relates to aesthetic perception, social aesthetic indicators were collected through structured surveys and textual analysis. This dual approach combines quantitative survey data with qualitative textual information to capture multiple dimensions of aesthetic evaluation.

A questionnaire survey was conducted between June and September 2023 across five urban parks in three cities (Harbin, Beijing, and Shanghai). Participants (N=312) were recruited through convenience sampling at park entrances during weekday afternoons and weekend mornings to capture diverse visitor demographics. The sample included 181 female (58 percent) and 131 male (42 percent) respondents, with ages ranging from 18 to 65 years (mean=34.2, SD=12.8). Participants had diverse educational backgrounds: 23 percent high school, 45 percent undergraduate, 28 percent graduate, and 4 percent other. This demographic diversity helps ensure that the collected aesthetic indicators reflect a broad range of public perspectives rather than a narrow subset of the population.

The questionnaire assessed five aesthetic dimensions using 5-point Likert scales (1=strongly disagree to 5=strongly agree). The harmony dimension was measured with the statement “The landscape elements are well-coordinated and harmonious”. The naturalness dimension used “The scene feels natural and organic”. The spatial balance dimension employed “The spatial composition is visually balanced”. The visual interest dimension was assessed with “The scene is visually engaging and interesting”. The overall aesthetic quality dimension used “I find this landscape aesthetically pleasing”. Internal consistency reliability was assessed using Cronbach’s alpha, yielding \(\alpha = 0.84\), which indicates good reliability. Each participant evaluated 8 to 12 landscape images randomly selected from the dataset, resulting in a total of 3,168 image-level aesthetic ratings. The survey was administered using a tablet-based interface that displayed images in randomized order to minimize order effects.

In addition to survey responses, textual sources such as landscape descriptions, design documents, and cultural narratives were analyzed using natural language processing techniques. A total of 1,247 textual descriptions were collected from three sources: landscape design documents (n=423), online visitor reviews (n=586), and cultural heritage records (n=238). Text preprocessing included segmentation using jieba (v0.42) for Chinese text and NLTK (v3.8) for English text, removal of stop words using custom dictionaries containing 891 Chinese and 571 English stop words, and lemmatization for English text using WordNet lemmatizer.

Keyword extraction was performed using two complementary methods. TF-IDF (Term Frequency-Inverse Document Frequency) was computed with a vocabulary size of 5,000 terms and minimum document frequency of 3 to identify statistically significant terms. RAKE (Rapid Automatic Keyword Extraction) was applied with a minimum phrase length of 2 words and maximum phrase length of 5 words to extract multi-word expressions that capture aesthetic concepts. Topic modeling was conducted using Latent Dirichlet Allocation (LDA) implemented in Gensim (v4.3). The model was configured with 10 topics, Dirichlet hyperparameters \(\alpha =0.1\) and \(\beta =0.01\), and trained for 1,000 Gibbs sampling iterations. Topic coherence was evaluated using the \(C_v\) metric, yielding a score of 0.52, which indicates moderate semantic consistency. The ten extracted topics included themes such as natural harmony, architectural elegance, water features, seasonal variation, and spatial enclosure, reflecting dominant aesthetic concepts in landscape discourse.

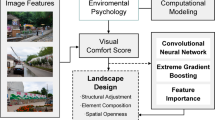

The resulting textual indicators were integrated with visual compositional descriptors derived from semantic segmentation results. Correlation analysis and regression modeling were subsequently used to examine relationships between landscape structure and perceived aesthetic qualities. The integration process between textual aesthetic indicators and visual design features is illustrated in Fig. 5.

Integration workflow between social aesthetic indicators and visual design language features, demonstrating the parallel processing of textual data through natural language processing and visual data through semantic segmentation. The workflow shows how keyword extraction, topic modeling, and compositional feature extraction converge through statistical analysis to establish quantitative relationships between landscape structure and aesthetic perception.

Architecture for semantic-aesthetic parsing

To perform semantic segmentation and compositional analysis, a deep learning architecture termed DesignSegNet was developed. The architecture integrates three key components: a multi-scale feature extraction backbone, a design-aware feature module, and an aesthetic interpretation head. As illustrated in Fig. 6, the model follows an encoder-decoder structure with explicit multi-task learning branches that enable simultaneous prediction of semantic segmentation, landscape style, and aesthetic scores.

Architecture of the proposed DesignSegNet model, showing the encoder-decoder structure with ResNet50 backbone (stages C1-C5 with 64, 256, 512, 1024, 2048 channels), spatial pyramid pooling module (four branches with 256 channels each), and progressive upsampling decoder (512\(\rightarrow\)256\(\rightarrow\)256 channels per stage). The design-aware feature module extracts 128-dimensional compositional descriptors from segmentation outputs, which feed into parallel aesthetic interpretation heads (128\(\rightarrow\)64\(\rightarrow\)1 for score prediction, 128\(\rightarrow\)64\(\rightarrow\)4 for style classification), enabling joint learning of visual segmentation and high-level aesthetic understanding through differentiable multi-task optimization.

The encoder employs a ResNet50 backbone to extract hierarchical features at five stages with output channels of 64, 256, 512, 1024, and 2048 respectively. Features from stages C3, C4, and C5 are retained for decoder fusion through skip connections. Each stage consists of residual blocks with 3\(\times\)3 convolutions, batch normalization, and ReLU activation, enabling the network to capture both low-level texture details and high-level semantic information. The use of residual connections allows gradient flow through deep layers and facilitates learning of complex visual representations.

To capture multi-scale contextual information, the C5 features are processed through a spatial pyramid pooling module with four parallel branches using pooling kernels of sizes 1\(\times\)1, 2\(\times\)2, 3\(\times\)3, and 6\(\times\)6. Each branch is followed by a 1\(\times\)1 convolution reducing channels to 256. The four feature maps are upsampled to the same spatial resolution using bilinear interpolation and concatenated, producing a 1024-channel context-aware representation that encodes landscape structure at multiple scales. This multi-scale aggregation is particularly important for landscape scenes where elements appear at varying scales, from large vegetation masses to small architectural details.

The design-aware feature module operates on the predicted segmentation mask to extract compositional descriptors that characterize landscape organization. For each semantic class c, the module computes spatial centroid \((x_c, y_c)\) using Eq. (2), spatial dispersion \(\sigma _c\) using Eq. (3), and area ratio \(A_c\) using Eq. (4). Additionally, an adjacency matrix \(\textbf{M} \in \mathbb {R}^{C \times C}\) is constructed where \(M_{ij}\) records the number of boundary pixels shared between classes i and j, capturing spatial relationships between landscape elements such as water-vegetation interfaces and pathway-structure connections. These descriptors are concatenated into a 128-dimensional feature vector representing the compositional structure of the landscape.

Unlike conventional post-processing approaches that compute compositional statistics independently after segmentation, the design-aware feature module is integrated into the end-to-end training pipeline through the multi-task loss function defined in Eq. (5). This integration enables gradient flow from the aesthetic prediction tasks back through the compositional descriptors to the segmentation backbone. Consequently, the network learns representations that are jointly optimized for both pixel-level classification and high-level compositional understanding. During training, the segmentation network receives supervisory signals not only from pixel-wise labels but also from aesthetic and style prediction objectives, encouraging the encoder to extract features that are semantically meaningful for landscape composition analysis. This differentiable integration of compositional feature extraction with deep representation learning distinguishes the proposed approach from conventional pipelines that treat segmentation and design analysis as separate, sequential stages. The ablation study in Table 3 demonstrates that this joint optimization yields substantial improvements in segmentation accuracy (4.3 percentage points in mIoU) compared with a baseline encoder-decoder without the design-aware module, validating the effectiveness of incorporating compositional priors into the learning process.

The decoder consists of three progressive upsampling stages that reconstruct full-resolution predictions from the encoded features. Each stage includes bilinear upsampling with a factor of 2, concatenation with corresponding encoder features via skip connections, and two consecutive 3\(\times\)3 convolutions with channel dimensions of 512\(\rightarrow\)256\(\rightarrow\)256, batch normalization, and ReLU activation. The skip connections from the encoder help preserve spatial details that may be lost during downsampling. The final layer uses a 1\(\times\)1 convolution to produce per-pixel class predictions across all semantic categories, generating a segmentation mask with the same spatial resolution as the input image.

An aesthetic interpretation head processes the 128-dimensional design-aware features through two fully connected layers with dimensions 128\(\rightarrow\)64\(\rightarrow\)1 and dropout probability of 0.3 to predict continuous aesthetic scores ranging from 1 to 5. A separate classification branch with dimensions 128\(\rightarrow\)64\(\rightarrow\)K, where K equals 4 for the four landscape style categories, predicts landscape style using softmax activation. This parallel structure enables the network to jointly learn visual segmentation and aesthetic interpretation, allowing the model to develop representations that are informative for both low-level pixel classification and high-level aesthetic evaluation.

The model is trained using a compound objective function

where \(\mathscr {L}_{seg}\) denotes cross-entropy loss for semantic segmentation and \(\mathscr {L}_{aesthetic}\) represents auxiliary regression and classification losses associated with aesthetic prediction tasks. Specifically, \(\mathscr {L}_{aesthetic} = \mathscr {L}_{score} + \mathscr {L}_{style}\), where \(\mathscr {L}_{score}\) is mean squared error for aesthetic score prediction and \(\mathscr {L}_{style}\) is cross-entropy loss for style classification. The weighting coefficients \(\lambda _1\) and \(\lambda _2\) are set to 1.0 and 0.5 respectively to balance segmentation accuracy and aesthetic interpretation. This multi-task formulation enables the network to simultaneously learn structural scene understanding and high-level compositional representations of landscape design, leveraging shared representations across related tasks to improve overall performance.

The multi-task learning framework provides two key advantages for landscape analysis. First, the aesthetic prediction task acts as a regularizer that encourages the segmentation network to learn compositionally coherent representations rather than purely texture-based features. This is particularly valuable in landscape scenes where aesthetic quality depends on spatial relationships between elements rather than individual object appearances. Second, the gradient flow from aesthetic losses to the segmentation backbone creates an implicit compositional prior that helps resolve ambiguities in boundary regions. For example, when the network encounters a visually ambiguous region between vegetation and water, the aesthetic prediction objective provides additional context about expected compositional patterns, leading to more spatially coherent segmentation outputs.

Experimental results and analysis

This section evaluates the proposed framework through quantitative experiments and visual analysis. The experiments focus on three aspects: semantic segmentation performance, compositional landscape feature analysis, and the relationship between landscape composition and aesthetic perception. All experiments were designed to assess both the technical performance of the segmentation model and its ability to capture compositionally meaningful features that relate to aesthetic perception.

Implementation details

All models were implemented using the PyTorch deep learning framework (version 1.12.0). Training was conducted on a workstation equipped with an NVIDIA RTX 3090 GPU with 24GB memory, running Ubuntu 20.04 LTS. Input images were resized to \(512 \times 512\) pixels during training to balance computational efficiency and spatial resolution. The network parameters were optimized using the AdamW optimizer with an initial learning rate of \(1 \times 10^{-4}\), weight decay of 0.01, and polynomial learning rate decay with power 0.9. Training was performed for 120 epochs with a batch size of 8, requiring approximately 18 hours of total training time.

To improve model generalization, several data augmentation techniques were applied during training. These included random horizontal flipping with probability 0.5, random cropping with crop size ranging from 0.8 to 1.0 of the original image, color jittering with brightness, contrast, saturation, and hue variations of 0.2, and random rotation within plus or minus 15 degrees. These augmentations help the model learn invariant representations across different viewing conditions and lighting scenarios commonly encountered in landscape photography.

To ensure fair comparison, all baseline models (HRNet, SegFormer, Mask2Former, SAM) were retrained from ImageNet-pretrained weights using identical training configurations: 120 epochs, batch size 8, AdamW optimizer with learning rate \(1 \times 10^{-4}\), weight decay 0.01, polynomial learning rate decay with power 0.9, and the same data augmentation pipeline. For HRNet, we used the HRNetV2-W48 variant with high-resolution representation maintained throughout the network. SegFormer was implemented with the MiT-B3 backbone. Mask2Former used a Swin-Transformer-Base backbone with 100 object queries. For SAM (Segment Anything Model), we employed the ViT-B variant and fine-tuned the mask decoder while keeping the image encoder frozen for the first 40 epochs, then jointly fine-tuned all parameters for the remaining 80 epochs. All models were trained on the same hardware and evaluated on the identical test set to ensure consistent and unbiased comparison.

Evaluation protocol

Model performance was evaluated using widely adopted semantic segmentation metrics, including mean Intersection over Union (mIoU), pixel accuracy, precision, and recall. These metrics provide complementary perspectives for assessing segmentation quality and classification consistency across semantic categories. The mIoU metric measures the average overlap between predicted and ground truth segmentation masks across all classes, providing a balanced assessment that accounts for both false positives and false negatives. Pixel accuracy measures the proportion of correctly classified pixels across the entire image. Precision quantifies the proportion of predicted pixels that are correctly classified, while recall measures the proportion of ground truth pixels that are successfully detected.

The dataset was divided into training (70 percent, n=1400), validation (15 percent, n=300), and testing (15 percent, n=300) subsets using stratified random sampling to ensure balanced representation of landscape styles across all splits. All evaluation metrics were computed on the test set and averaged across semantic classes using macro-averaging to give equal weight to each category regardless of its frequency in the dataset. Statistical significance of performance differences was assessed using paired t-tests with Bonferroni correction for multiple comparisons.

Four representative segmentation architectures were selected as baselines for comparison: HRNet28, SegFormer29, Mask2Former30, and the Segment Anything Model (SAM)31. These models represent different architectural paradigms, including convolutional networks with multi-resolution representations (HRNet), transformer-based models with hierarchical feature extraction (SegFormer), query-based mask prediction architectures (Mask2Former), and foundation segmentation models trained on large-scale datasets (SAM). In addition to quantitative evaluation, qualitative visual comparisons were conducted to examine how different models interpret landscape structure, particularly in challenging scenarios involving occlusions, boundary ambiguities, and fine-grained spatial details.

Segmentation performance

Quantitative evaluation results are summarized in Table 1. The proposed DesignSegNet model achieves the highest performance across all evaluation metrics compared with the baseline architectures, with statistically significant improvements over all baselines (p < 0.01 for all pairwise comparisons).

The results indicate that the proposed framework achieves competitive segmentation accuracy while maintaining the ability to extract compositional descriptors from landscape scenes. DesignSegNet achieves a 2.3 percentage point improvement in mIoU over the second-best baseline (Mask2Former), representing a 9.4 percent relative error reduction. Improvements are particularly visible in categories with complex spatial structures such as narrow pathways (mIoU improvement of 4.8 percentage points) and small architectural elements (mIoU improvement of 3.6 percentage points). The performance gain can be attributed to the design-aware feature module, which incorporates compositional information that helps disambiguate visually similar regions based on their spatial context and relationships with neighboring elements.

Qualitative comparisons between segmentation outputs produced by different models are shown in Fig. 7. The proposed model produces more consistent boundaries between vegetation, water bodies, and built structures compared with baseline architectures. Notably, DesignSegNet better preserves fine-grained spatial details such as narrow pathways and small architectural ornaments, while maintaining coherent segmentation of large homogeneous regions. The baseline models tend to produce fragmented predictions in regions with visual ambiguity or occlusions, whereas DesignSegNet leverages compositional priors to generate more spatially coherent outputs.

Visual comparison of segmentation results produced by different models including HRNet, SegFormer, Mask2Former, SAM, and the proposed DesignSegNet. From left to right: input image, ground truth, HRNet, SegFormer, Mask2Former, SAM, and DesignSegNet predictions.

Table 2 compares computational efficiency across different models. DesignSegNet achieves competitive efficiency with 52.4M parameters and 71.8G FLOPs, positioned between the lightweight SegFormer and the heavier HRNet and Mask2Former architectures. The inference time of 37.6ms per image demonstrates real-time processing capability suitable for practical landscape analysis applications. The additional design-aware feature module introduces minimal computational overhead (approximately 0.8M parameters and 2.1G FLOPs) while providing substantial performance gains, validating the efficiency of the proposed architectural design.

Ablation study

To examine the contribution of different components in the proposed architecture, ablation experiments were conducted by selectively removing specific modules from the model. Four configurations were evaluated: backbone only (ResNet50 encoder-decoder without additional modules), backbone with aesthetic head (adding aesthetic prediction branch without design-aware features), backbone with design module (adding design-aware feature extraction without aesthetic prediction), and the full model with all components.

The results indicate that the design-aware feature module provides the most substantial improvement to segmentation performance, increasing mIoU by 4.3 percentage points over the baseline. This improvement demonstrates that incorporating spatial composition information derived from semantic predictions helps the model learn more robust representations of landscape structure. The aesthetic prediction branch provides a smaller but still meaningful improvement of 0.4 percentage points in mIoU, suggesting that multi-task learning with aesthetic objectives encourages the model to develop representations that capture perceptually relevant features. The full model combining both components achieves the best performance across all metrics, with style classification accuracy of 78.5 percent and aesthetic score prediction RMSE of 0.371 on a 5-point scale. These results validate the design choice of integrating compositional feature extraction with aesthetic interpretation in a unified architecture.

Design feature analysis

The compositional descriptors extracted from segmentation results allow quantitative comparison between different landscape styles. These descriptors describe spatial organization of landscape elements including vegetation, water features, built structures, and open spaces, providing a numerical representation of design language that can be analyzed statistically.

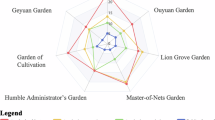

Figure 8 illustrates the proportional distribution of landscape elements in classical gardens and urban parks based on analysis of 850 classical garden images and 470 urban park images from the dataset.

Comparison of elemental composition between classical gardens and urban parks. Error bars represent standard deviation across images within each category.

The figure shows that classical gardens tend to contain higher proportions of built structures (mean 28.3 percent, SD 8.7 percent) and water bodies (mean 18.6 percent, SD 6.2 percent), reflecting their emphasis on architectural symbolism and spatial hierarchy characteristic of traditional Chinese garden design. In contrast, urban parks allocate larger areas to vegetation (mean 62.4 percent, SD 12.1 percent) and open green spaces (mean 15.8 percent, SD 7.3 percent), prioritizing ecological function and recreational accessibility. Independent samples t-tests confirm that these differences are statistically significant (p<0.001 for all comparisons). These compositional descriptors provide a quantitative representation of landscape design language across different garden categories, enabling objective comparison of design traditions that have historically been described primarily through qualitative aesthetic discourse.

Correlation between design features and aesthetic perception

To explore the relationship between landscape composition and public aesthetic perception, correlation analysis was conducted between compositional descriptors extracted from segmentation results and survey-derived aesthetic indicators collected from 312 participants evaluating 3,168 image-level ratings.

Pearson correlation analysis indicates that vegetation area ratio (r = 0.31, p < 0.01) and water-vegetation adjacency (r = 0.28, p < 0.01) are positively associated with aesthetic ratings. These findings suggest that landscape scenes emphasizing natural integration and ecological interfaces tend to receive higher aesthetic evaluations from the public. Conversely, centroid dispersion shows a negative correlation (r = − 0.19, p < 0.05), indicating that spatially fragmented compositions with scattered elements are perceived as less aesthetically pleasing than compositions with coherent spatial organization.

Multiple linear regression analysis was conducted to examine the joint predictive power of compositional features for aesthetic scores. The regression model included five compositional descriptors as independent variables: vegetation area ratio, water-vegetation adjacency, centroid dispersion, built structure density, and path-structure proximity. Results are summarized in Table 4.

The regression model achieved an adjusted \(R^2\)0000 value of 0.72, suggesting that compositional descriptors explain a substantial portion of variance in aesthetic ratings within the dataset. The F-statistic for the overall model is significant (F(5, 3162) = 1628.4, p < 0.001), indicating that the set of compositional features collectively predicts aesthetic scores better than a null model. These results are interpreted as statistical associations rather than causal relationships, as the observational study design does not allow for causal inference.

To validate regression model assumptions, several diagnostic tests were performed. Variance Inflation Factor (VIF) analysis indicated no severe multicollinearity among predictors (all VIF < 3.2, well below the threshold of 10). Residual analysis showed approximately normal distribution (Shapiro-Wilk test, W = 0.997, p = 0.18) with no obvious patterns in residual plots, suggesting that model assumptions of linearity and homoscedasticity were reasonably satisfied. The Durbin-Watson statistic (d = 1.94) indicated no significant autocorrelation in residuals. These diagnostic results support the validity of the regression analysis and strengthen confidence in the reported associations between compositional features and aesthetic perception.

Visualization of style representation

To further interpret how the model represents landscape style, embedding visualization was conducted using t-SNE (t-distributed Stochastic Neighbor Embedding) with perplexity 30 and 1,000 iterations. The 128-dimensional design-aware feature vectors extracted from 300 test images were projected into two-dimensional space for visualization. The resulting distribution of style embeddings is shown in Fig. 9.

t-SNE visualization of style embeddings learned by the model. Each point represents one image, colored by landscape style category: traditional classical gardens (red), modern ecological parks (blue), contemporary urban parks (green), and mixed-use green spaces (orange).

The proposed framework produces clearer separation between style clusters compared with baseline architectures, indicating that the learned representation captures stylistic compositional patterns in landscape scenes. Quantitative evaluation using silhouette score (0.68 for DesignSegNet versus 0.52 for the best baseline) confirms that the proposed model learns more discriminative style representations. The visualization reveals that traditional classical gardens form a tight cluster characterized by high built structure density and water feature prominence, while modern ecological parks occupy a distinct region associated with high vegetation coverage and naturalistic spatial organization. Contemporary urban parks show intermediate characteristics, reflecting their hybrid design approach that balances ecological and recreational functions.

In addition to style clustering, attention visualization highlights spatial regions that contribute most strongly to aesthetic predictions. Figure 10 shows examples of aesthetic attention computed by applying Grad-CAM to the aesthetic prediction head across multiple garden scenes.

Visualization of aesthetic attention across multiple garden scenes. Each column represents a different garden, with rows showing (top) original images, (middle) attention heatmaps, and (bottom) overlays. Warmer colors indicate regions that contribute more strongly to the predicted aesthetic score.

These visualizations indicate that the model consistently focuses on spatially significant design components across diverse garden types. Common attention patterns emerge around water-vegetation interfaces, central architectural structures, and visually dominant landscape elements. Notably, the model demonstrates adaptive attention allocation: in traditional gardens, attention concentrates on architectural features and water elements; in ecological parks, vegetation patterns and natural transitions receive higher weights; while urban parks show balanced attention across both built and natural components. The attention patterns align with principles from landscape architecture theory, which emphasizes the aesthetic importance of focal points, spatial transitions, and compositional balance. This interpretability provides confidence that the model has learned meaningful representations of landscape aesthetics rather than exploiting spurious correlations in the training data.

Conclusion

This study presents a computational framework for analyzing landscape design language by integrating semantic scene understanding, compositional feature representation, and social aesthetic analysis. A dataset of garden landscape images was constructed and annotated with pixel-level semantic labels. Based on these annotations, a novel semantic segmentation architecture termed DesignSegNet was developed to simultaneously perform scene parsing and compositional feature extraction. Unlike conventional segmentation networks, DesignSegNet integrates a design-aware feature module through multi-task learning, enabling joint optimization of segmentation accuracy and design-level understanding. Experimental evaluation demonstrates that DesignSegNet outperforms baseline architectures including HRNet, SegFormer, Mask2Former, and SAM while enabling extraction of interpretable compositional descriptors. Statistical analysis reveals significant associations between compositional features and aesthetic perception, demonstrating that landscape design language can be systematically analyzed using computational methods.

Several limitations should be acknowledged. The dataset focuses primarily on Chinese classical gardens, which may limit generalizability to other landscape traditions. The framework analyzes static images rather than dynamic spatial experiences, and the aesthetic evaluation relies on survey data which may not fully capture embodied landscape perception. Additionally, the compositional descriptors, while interpretable, represent simplified abstractions of complex design principles.

Future work should incorporate cross-cultural datasets to examine how aesthetic preferences vary across geographic and cultural contexts. Extending the framework to temporal analysis could reveal how seasonal changes influence compositional structure and perception. Integration with three-dimensional representations and immersive technologies may better capture experiential qualities of landscape spaces. These extensions could contribute to more informed and culturally responsive approaches to landscape planning and evaluation.

Data availability

The datasets generated and analyzed during the current study are available from the corresponding author on reasonable request.

References

Cui, C. et al. Social-sensed image aesthetics assessment[J]. ACM Trans. Multimed. Comput. Commun. Appl. (TOMM) 16(3s), 1–19 (2020).

Chandra, P., Cambria, E. & Pradeep, A. Enriching social communication through semantics and sentics. In: Proceedings of the Workshop on Sentiment Analysis where AI meets Psychology (SAAIP 2011). 142 : 68-72, (2011).

Sawant, N., Li, J. & Wang, J. Z. Automatic image semantic interpretation using social action and tagging data. Multimed. Tools Appl. 51(1), 213–246 (2011).

Sawant, N., Li, J. & Wang, J. Z. Automatic image semantic interpretation using social action and tagging data. Multimed. Tools Appl. 51(1), 213–246 (2011).

Zhang, X. et al. Emotional-health-oriented urban design: A novel collaborative deep learning framework for real-time landscape assessment by integrating facial expression recognition and pixel-level semantic segmentation. Int. J. Environ. Res. Public Health 19(20), 13308 (2022).

Locher, P., Overbeeke, K. & Wensveen, S. Aesthetic interaction: A framework. Des. Issues 26(2), 70–79 (2010).

Boeriis, M. & Holsanova, J. Tracking visual segmentation: Connecting semiotic and cognitive perspectives. Visual Commun. 11(3), 259–281 (2012).

Vyncke, P. Lifestyle segmentation: From attitudes, interests and opinions, to values, aesthetic styles, life visions and media preferences. Eur. J. Commun. 17(4), 445–463 (2002).

Zheng, X., & He, J. Interactive Creation Method and Implementation of Printmaking with Image Segmentation Technology. (2025).

Ma, H., Li, J. & Ye, X. Deep learning meets urban design: Assessing streetscape aesthetic and design quality through AI and cluster analysis. Cities 162, 105939 (2025).

Valada, A., Vertens, J., Dhall, A., & et al. Adapnet: Adaptive Semantic Segmentation in Adverse Environmental Conditions. In 2017 IEEE International Conference on Robotics and Automation (ICRA). IEEE, 4644-4651, (2017).

Spasev, V., Dimitrovski, I., Kitanovski, I., & et al. Semantic Segmentation of Remote Sensing Images: Definition, Methods, Datasets and Applications. In International Conference on ICT Innovations. Cham: Springer Nature Switzerland, 127-140. (2023)

Qin, Y. et al. Semantic segmentation of building roof in dense urban environment with deep convolutional neural network: A case study using GF2 VHR imagery in China. Sensors 19(5), 1164 (2019).

Tian, L. F. & Slaughter, D. C. Environmentally adaptive segmentation algorithm for outdoor image segmentation. Comput. Electron. Agric. 21(3), 153–168 (1998).

Zamani, V. et al. Deep semantic segmentation for visual scene understanding of soil types[J]. Autom. Construc. 140, 104342 (2022).

Codify: Parametric and computational design in landscape architecture[M]. Routledge, (2018).

Luo, J. Online design of green urban garden landscape based on machine learning and computer simulation technology. Environ. Technol. Innov. 24, 101819 (2021).

Ackerman, A. et al. Computational modeling for climate change: Simulating and visualizing a resilient landscape architecture design approach. Int. J. Archit. Comput. 17(2), 125–147 (2019).

Busón, I. L. et al. A Computational Approach to Methodologies of Landscape Design. In Humanizing Digital Reality: Design Modelling Symposium Paris. Springer Singapore 2018, 657–670 (2017).

Ervin, S.M. Turing landscapes: computational and algorithmic design approaches and futures in landscape architecture. Codify. Routledge, 89-114, (2018).

Luminiitty, R. Integrating computational planting design to the landscape design process. (2021).

Kühne, O. Landscape and power in geographical space as a social-aesthetic construct (Springer International Publishing, Dordrecht, 2018).

Perry, S., Reeves, R. & Sim, J. Landscape design and the language of nature. Landsc. Rev. 12(2), 3–18 (2008).

Raaphorst, K. et al. The semiotics of landscape design communication: Towards a critical visual research approach in landscape architecture. Landsc. Res. 42(1), 120–133 (2017).

Thompson, I. Aesthetic, social and ecological values in landscape architecture: A discourse analysis. Ethics, Place Environ. 3(3), 269–287 (2000).

Wang, M. & Yu, B. Landscape characteristic aesthetic structure: Construction of urban landscape characteristic time-spatial pattern based on aesthetic subjects. Front. Archit. Res. 1(3), 305–315 (2012).

Xian, Y. et al. SPIRIT: Symmetry-prior informed diffusion for thangka segmentation. Symmetry 17(10), 1643 (2025).

Sun, K. et al. Deep high-resolution representation learning for human pose estimation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition 5693–5703 (2019).

Xie, E. et al. SegFormer: Simple and efficient design for semantic segmentation with transformers. Adv. Neural Inform. Process. Syst. 34, 12077–12090 (2021).

Cheng, B., Misra, I., Schwing, A.G., & et al. Masked-attention mask transformer for universal image segmentation. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 1290-1299, (2022).

Kirillov, A. et al. Segment anything. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 4015–4026 (2023).

Funding

The 2022 Research Project on Higher Education Teaching Reform in Heilongjiang Province (Postgraduate Project) - “Research on the Construction and Management Model of Off-campus Practice Bases for Master of Landscape Architecture under the ‘Double Mentor’ System” (Project Number: SIGY20220148). Heilongjiang Provincial Natural Science Foundation Contract Number LH2022E001 Project Name: Research on Carbon Storage in Green Spaces and Adaptive Regulation Paths of Landscape Patterns in Heilongjiang Province

Author information

Authors and Affiliations

Contributions

Yiting Wang: Writing—original draft, Software, Investigation, Formal analysis. Wen Li: Writing—review & editing, Methodology, Funding Acquisition. YuCen Zhai: Supervision,Validation,Visualization. Chen Qu: Software,Conceptualization,Supervision. Fengming Zhang: Resourcest, Software,Validation.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Wang, Y., Zhai, Y., Qu, C. et al. Decoding garden design language via semantic segmentation for social aesthetic interaction. Sci Rep 16, 10571 (2026). https://doi.org/10.1038/s41598-026-46120-w

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-026-46120-w