Abstract

The foreign exchange markets, the largest financial markets in the world, are also among the most intricate because of their substantial volatility, nonlinearity, and irregular behavior. Owing to these attributes, many studies have sought to forecast future currency prices with precision. These studies have built models using statistical methods, with Monte Carlo algorithms being the most popular. In this study, we apply Auxiliary-Field Quantum Monte Carlo to increase the precision of FOREX market models across different sample sizes and to test simulations under different stress scenarios. Our findings show that Auxiliary-Field Quantum Monte Carlo significantly enhances the accuracy of these models, as evidenced by the minimal error and consistent estimations achieved for the FOREX market. This research has valuable implications for both the general public and financial institutions, helping them anticipate significant volatility in exchange rate trends and the associated risks. These insights provide guidance for future decision-making.

Introduction

Foreign exchange markets, commonly known as FOREX, hold the distinction of being the largest financial market globally. They are renowned for their inherent complexity and volatility, characterized by unpredictable behavior and significant fluctuations in exchange rates (Wei and Zhu, 2022). The ability to forecast currency pair movements is of great importance in the field of FOREX. Over the past years, researchers worldwide have devoted considerable attention to the FOREX market, driven by its vulnerable characteristics (Islam et al., 2020; Ayitey et al., 2023). As a result, various types of research have been conducted to accurately predict future prices of FOREX currencies.

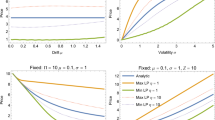

Models for estimating FOREX markets have been of great importance in international finance in recent decades, and academics and professionals have devoted considerable effort to forecasting future currency values, which is considered a difficult task (Cheung and Erlandsson, 2005; Kamal et al., 2012; Hauzenberger and Huber, 2019; Islam et al., 2020). These models are usually estimated with statistical methodologies, ranging from classic regression models such as ordinary least squares (OLS) and factor models to Monte Carlo models, which have delivered highly precise results and are the most commonly used (Giacomini and Rossi, 2010; Jaworski, 2018). Monte Carlo models have been used in different variants, with Metropolis-Hastings and sequential filtering being the designs most used in economics and finance. Such models are assessed on the standard deviation achieved, the sample size, and their complexity. The literature reports different fit results for these currency market models depending on the statistical technique or Monte Carlo algorithm applied. For example, models constructed with linear statistical techniques, such as ordinary least squares, linear regression methods, and factor models, have yielded a fit of 0.93–1.84 standard deviations (Rossi, 2013; Park and Park, 2013; Byrne et al., 2016; Ince et al., 2016; Serjam and Sakurai, 2018), which worsens to 1.26–2.11 when they are constructed with small samples (Rossi, 2013; Jacob and Uusküla, 2019). More advanced statistical models, especially non-linear models such as vector autoregression, time-varying parameter models, and error correction models, have obtained a precision of 0.58–0.83 with large samples (Clements and Lan, 2010; Kavtaradze and Mokhtari, 2018; Taveeapiradeecharoen et al., 2019), while with small samples the results have been 0.75–1.24 (Cheung et al., 2019; Colombo and Pelagatti, 2020; Rubaszek and Ca’ Zorzi, 2020). Hence, econometric methods show an accuracy in the range of 0.40–0.83 when simulations use samples of more than 100 observations. However, these methods do not yield a significantly low standard deviation when applied to small samples (Park and Park, 2013; Beckmann and Schuessler, 2016).

Giacomini and Rossi (2010) applied the Fluctuation test and the One-Time Reversal test to monthly US dollar/British pound exchange rate data for 1973–2008, showing a standard deviation higher than 0.49 for both econometric techniques. Park and Park (2013) conducted a comparative study of the Japanese yen, Swiss franc, Canadian dollar, and UK pound against the US dollar for the quarterly period 1973–2010, applying the time-varying cointegration coefficients method. They reported a mean square error above 0.62. For their part, Beckmann and Schuessler (2016) demonstrated in a Monte Carlo simulation how a time-varying parameter model, namely one that allows varying degrees of temporal variation in the coefficients, can be very suitable for retrieving samples in the database. Byrne et al. (2016) applied the Time-Varying Parameters method to a sample of several OECD currencies against the US dollar, obtaining an overall standard deviation of 0.72. Ince et al. (2016) used ordinary least squares (OLS) to estimate theoretical models of the FOREX market with data on the US dollar–Swiss franc and US dollar–Japanese yen exchange rates. They obtained an overall standard deviation above 1.06 in their estimates. Hauzenberger and Huber (2019) applied a Markov process with time-varying transition to forecast the FOREX market for Japan, Norway, Australia, Switzerland, Canada, Sweden, South Korea, and the UK relative to the US dollar. They reached an average deviation of 0.94. Finally, Tigani et al. (2022) developed a model using Gaussian kernel density and Monte Carlo simulation to forecast the volatility patterns of the EUR/USD currency pair on an hourly basis. The proposed model has potential applications in the financial market, particularly in algorithmic trading, where the Monte Carlo method is employed to estimate integrals within this framework. The authors suggest that future research should include a comparative analysis of the accuracy of various sampling methods, such as Metropolis-Hastings, Gibbs sampling, and Markov Chain Monte Carlo, to further enhance the understanding and effectiveness of the model.

Therefore, many researchers have studied the FOREX market using methods ranging from statistical techniques to deep learning. However, because volatility behavior in the foreign exchange market has important implications for modeling and calculating risk, future research will need innovative and improved approaches to analyze and forecast this volatility (Kamal et al., 2012; Islam et al., 2020; Chinthapalli, 2021). The Quantum Monte Carlo method emerges as a sophisticated quantum approach that offers a dependable way to capture this volatility. Our aim is to simulate speculative attack models in order to provide further insight into events that may occur within FOREX markets. Furthermore, Islam et al. (2020) conclude that future research on FOREX market prediction could use robust and accurate approaches such as GRU, Monte Carlo methods, Kohonen’s self-organizing neural network, modular neural networks, and numerous other algorithms that have not yet been fully studied in this domain.

To fill this gap in the literature and to address the accuracy problems of existing methods for estimating FOREX markets, our paper analyses USD/EUR and USD/JPY exchange rates over the period 2013–2021. This work compares three Monte Carlo techniques, Markov Chain Monte Carlo, Sequential Monte Carlo, and Auxiliary-Field Quantum Monte Carlo (AFQMC), with AFQMC being the best performer. This technique has already demonstrated its methodological superiority in other areas, carrying out accurate sampling with few observations and data distributions (Giacomini and Rossi, 2010; Rossi, 2013; Kolasa et al., 2017; Jaworski, 2018). Our results show a more robust estimation in terms of accuracy, both in-sample and out-of-sample, and better behavior with small and irregular samples than conventional regression, deep learning, and Monte Carlo methods. These Monte Carlo models also need less time to produce the estimates, especially the AFQMC method. Hence, these results also show that popular FOREX market models, which previous literature found difficult to estimate accurately, can be computed efficiently, reducing the instability reported in that literature (Jaworski, 2018; Dash, 2018; Cheung et al., 2019; Hauzenberger and Huber, 2019; Colombo and Pelagatti, 2020). These results can be very useful in FOREX market models and in other models in financial econometrics that address the valuation challenges of financial professionals and other related interest groups.

Our research has been motivated by the impact of recent economic events on FOREX markets, including the market collapse in 2020 following a decade of global economic growth and recovery from the 2007–2008 financial crisis. The onset of the COVID-19 pandemic in early 2020 further exacerbated the situation, resulting in significant economic downturns and increased uncertainty. As a result, traders now require a thorough understanding of market behavior before making trading decisions (Ilić and Digkoglou, 2022). Monte Carlo simulation is widely employed in business and finance to assess portfolios and investments by simulating the uncertain factors that influence their value, thereby establishing the distribution of their value across a range of possible outcomes. Extensive literature highlights the numerous applications of Monte Carlo methods in finance, encompassing security valuation, risk management, portfolio optimization, model calibration (Staum, 2009), derivatives valuation (Rebentrost et al., 2018), theoretical research (Creal, 2012), calculations (Asmussen, 2018), and stock market forecasting (Parungrojrat and Kidsom, 2019). Moreover, the Monte Carlo method is often combined with other techniques, such as Malliavin calculus (Fournié et al., 2001). In conclusion, as unexpected crises like the recent situation in Ukraine unfold, predictive methods gain significance for investors and researchers (Ilić and Digkoglou, 2022).

The present research differs from others in that it compares various Monte Carlo techniques for FOREX market prediction. Most of the models in previous studies have been dominated by statistical techniques such as ordinary least squares, quantile regression, and, more recently, neural network techniques. Monte Carlo simulation is one of the key algorithms in finance and numerical computational science, and it plays a crucial role in risk management because it can easily deal with high-dimensional problems such as volatility (Guo, 2022). Aşırım et al. (2023) concluded that the prediction of the foreign exchange market is quite difficult due to this volatility. However, the Monte Carlo method could help risk management in this prediction. In addition, Jarusek et al. (2022) recommend applying the Monte Carlo method to manage market chaos and volatility, since prediction in the foreign exchange market is difficult to perform in a complex dynamic system. Applying the Monte Carlo method to trading systems allows for better risk analysis and risk management. The Monte Carlo method also helps to estimate when a system has stopped working, to know the characteristics of the system, and therefore to understand what we can expect from its performance (Aşırım et al., 2023).

We provide at least two additional contributions to the literature. First, the application of the AFQMC methodology has increased the precision of the FOREX market model. Our findings indicate stronger accuracy than previous studies, in both in-sample and out-of-sample estimation, as well as enhanced efficiency with short and irregular samples compared with conventional regression, deep learning, and Monte Carlo methods. FOREX markets are considered highly complex and volatile, with important implications for modeling and calculating risk in these markets. The Quantum Monte Carlo approach delivers a sophisticated quantum analysis and is a credible way to measure such volatility; our aim is to formulate speculative attack models that provide further detail on potential developments in the FOREX market. Despite the complexity of the new quantum method, we offer a new possibility that other authors and practitioners can develop in the future. Our research therefore has significant implications for financial institutions and governments, given the increasing relevance of advanced risk management. According to Rebentrost et al. (2018), quantum computers hold the promise of a substantial improvement in the speed of these computations. Computations that take a day could be shortened to significantly smaller time scales, making real-time risk analysis possible. This near real-time assessment could enable an institution to respond more quickly to changes in the market and to exploit trading opportunities. We therefore apply the Quantum Monte Carlo method in our study because most of the scenarios that define the financial field involve a great deal of computational difficulty and thus lend themselves to quantum computation. It is a widely used technique in science, with applications in physics, chemistry, engineering, and finance (Orus et al., 2019). Monte Carlo simulation facilitates visualizing all possible outcomes of decisions, including the probabilities of each occurring. This allows the impact of risk to be quantitatively assessed, enabling more accurate forecasting and eventually better decision-making under uncertainty (Sikora et al., 2019).

Secondly, our model helps to identify possible currency movements in the foreign exchange market through Monte Carlo simulation, thus avoiding a speculative attack. In this way, we can determine how a financial crisis could affect the price of currencies. In the event that the current global virus situation worsens or similar crises emerge in the future, it becomes increasingly important to mitigate the adverse effects of currency price volatility. In this regard, the insights gained from the implementation of the Monte Carlo simulation hold great relevance. Consequently, our model serves as a valuable tool for the analysis of complex problems that are difficult to tackle analytically, as well as for their subsequent forecasting.

The paper is organized as follows: the section “Literature review” presents a literature review, discussing previous research in the field. Section “FOREX markets fundamentals” introduces the exchange rate dynamics models used in the analysis. In the section “Estimation methods”, the methodologies employed for the estimations are explained. Section “Empirical methods” presents the data utilized in the study and presents the results and findings. Finally, the section “Conclusion” concludes by summarizing the key insights and conclusions derived from the research.

Literature review

In recent years, there has been a strong focus on predicting the volatile FOREX market, leading researchers to explore various methods. Among these, statistical analysis techniques have gained popularity, with researchers employing algorithms such as regression, decision trees, trading rules, support vector regression (SVR), and fuzzy systems (Dymova et al., 2016; Achchab et al., 2017). Raimundo and Okamoto (2018) contributed to this area by proposing a hybrid model for FOREX rate prediction. Their approach involved combining wavelet models with support vector regression (SVR). They leveraged the discrete wavelet transform (DWT) to extract relevant information from the FOREX dataset, which was then used as input for SVR to forecast currency prices. To assess the accuracy of their hybrid model, they compared its performance to that of traditional autoregressive integrated moving average (ARIMA) and autoregressive fractionally integrated moving average (ARFIMA) models. Evaluation metrics such as root mean square error (RMSE) and mean absolute error (MAE) were employed. The results demonstrated the superiority of their hybrid system over the traditional models, underscoring its effectiveness in predicting FOREX rates.

In their respective studies, Serjam and Sakurai (2018), Taveeapiradeecharoen et al. (2019), and Thu and Xuan (2018) explored different approaches for predicting foreign exchange (FOREX) rates. Serjam and Sakurai utilized linear kernel support vector regression (SVR) on historical data from major currency pairs, employing previous time frames as features. They discovered a profitable rule, profitability inversion, applicable to systems with unique characteristics. Taveeapiradeecharoen, Chamnongthai, and Aunsri proposed a model based on compressed vector autoregression, reducing FOREX data using a random compression technique and employing Bayesian model averaging (BMA). Their model outperformed the existing benchmark for six currency pairs. Thu and Xuan introduced an SVM-based model for EUR/USD prediction, comparing different metrics and observing a significant disparity in performance between the Gaussian radial basis function (RBF) and polynomial models. Additionally, they demonstrated a threefold increase in profit rate using the SVM model compared to the conventional transaction method.

Das et al. (2019) proposed a hybrid system that combined an online sequential model of extreme learning machine (ELM) with the krill herd (KH) optimization technique. Their system was compared with a recurrent backpropagation neural network (RBPNN) and the ELM algorithm using four currency pairs (SGD/INR, YEN/INR, USD/EUR, and USD/INR). The evaluation based on RMSE, MAPE, and Theil’s U metrics showed that their system performed the best with error rates of 0.071646, 0.10375, and 0.00014516, respectively. However, in terms of MAE and ARV performance evaluations, their proposed model did not provide the best results. For MAE evaluation, the online sequential model of the extreme learning machine performed the best, and for ARV evaluation, the krill herd with the extreme learning machine (KH-ELM) achieved the best results. In another study, Das et al. (2020) introduced a forecasting model that combined the Jaya optimization technique with extreme learning machines for predicting currency exchange rates. They used two currency pairs (USD/EUR and USD/INR) and evaluated their model’s performance using MAPE, ARV, Theil’s U, and MAE. The performance comparison with other models based on ELM, NN, and FLANN showed that ELM exhibited the best optimization. Their evaluation data indicated that ELM DE provided the lowest error for MAPE evaluation, while ELM TLBO, ELM PSO, and ELM Jaya achieved the best results for MAE, ARV, and Theil’s U, respectively. Chou and Truong (2019) tested the effectiveness of optimization using a benchmark function. They examined daily CAN/USD rates and 4-h EUR/USD closing prices, which resulted in mean absolute percentage errors of 0.2532% and 0.169%, respectively. Their forecast system achieved an accuracy rate of 89.8–99.7%, demonstrating improved forecasting accuracy compared to previous models for the CAN/USD currency pair. The error rate of SMOF surpassed the baseline sliding window model, ranging from 20.8% to 29.9%.

Neural networks have been instrumental in time series prediction, particularly in the dynamic FOREX market, where hidden neurons are leveraged for accurate forecasting. De Almeida et al. (2018) proposed a FOREX trading model that combined support vector machines (SVM) with the genetic algorithm (GA) to optimize trading rules, resulting in a return on investment of 83% during their testing. Colombo and Pelagatti (2020) conducted simulations using regularized regression splines, random forest (RF), and SVM on data from advanced economies, with SVM exhibiting superior precision and a mean error of ~0.4. Sun et al. (2019) introduced a hybrid SVM method that incorporated a neural network and applied it to forecast US dollar exchange rates against major currencies, achieving mean error ranges of 0.31–1.7 for the period from 2011 to 2017. Ni et al. (2019) proposed a model for FOREX time series prediction using the CRNN method, which combined a convolutional neural network and a recurrent neural network, demonstrating superior performance compared to LSTM and CNN models based on RMSE evaluations. Cao et al. (2020) used deep learning, specifically deep long short-term memory (LSTM), to forecast the exchange rate between the US dollar and the Chinese yuan, achieving a precision level of up to 75%. Hajizadeh et al. (2019) introduced a hybrid model that combined the GARCH model with a neural network to forecast foreign exchange currency price volatility using the EUR/USD dataset, leading to improved prediction accuracy. Finally, Fan et al. (2021) investigated the correlation between Taiwan Weighted Stock and Google Trends, demonstrating the superiority of neural networks over support vector machines and decision trees in their machine learning and trend search experiments.

Recently, there has been a notable surge of interest in research focused on the FOREX markets, with researchers exploring diverse methodologies to enhance market prediction capabilities. From statistical learning to deep learning, different models and hybrid approaches have emerged. However, in the field of economics and finance, Monte Carlo techniques have been traditionally applied and are considered more reliable. Therefore, in our currency market prediction model, which focuses on data-intensive currency quotes, we have employed Monte Carlo simulation. This method allows us to consider all possible outcomes of our decisions and provides the actual probabilities of each outcome occurring. By quantitatively assessing the impact of risk, we can achieve more accurate forecasting and make better decisions under conditions of uncertainty (Sikora et al., 2019).

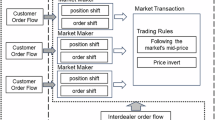

FOREX markets fundamentals

In this study, we estimated various exchange rate dynamics models, including uncovered interest rate parity, purchasing power parity, speculative pressure indexes (Cuthbertson and Nitzsche, 2004), sticky-price monetary, behavioral equilibrium exchange rate, and Taylor rule fundamentals models (Cheung et al., 2019). To evaluate these models, we drew a sample of data and constructed different-size designs. Uncovered interest rate parity and purchasing power parity are the most commonly used models for exchange rate dynamics estimation (Rossi, 2013). To estimate speculative attack scenarios, we used the model developed by Eichengreen et al. (1994), which has served as a basis for subsequent models of speculative attacks (Braga de Macedo and Lempinen, 2013; Wang et al., 2020; Valchev, 2020). Braga de Macedo and Lempinen (2013) developed a general equilibrium model to analyze and compare exchange rate adjustments to those presented in Dornbusch (1976) and Kouri (1978). They concluded that only the aggregation of holdings of assets susceptible to speculative expectations is relevant to their model, and they studied three different cases.

To evaluate the efficacy of these models, we conducted Monte Carlo simulations, generating 10,000 continuous-time trajectories spanning a 30-year period. We employed the 90% variance range as a metric to assess performance. Our findings revealed that the Dornbusch formulation exhibited a variance range of 200% around the average, which was reduced to 100% in the Kouri case and further narrowed down to 20% in the general equilibrium scenario. Valchev (2020) proposed a new exchange rate determination model that is robust to the evidence that the Uncovered Interest Parity reverses its direction at longer horizons. They showed that the feedback to the momentum of equilibrium output is not monotonic due to the interplay of fiscal and monetary policies, which fits well with the dynamics of exchange rates. In their study, Wang et al. (2020) developed an early warning system to forecast turbulence in the Shanghai Stock Exchange Composite Index. They employed a SWARCH model combined with a long short-term memory (LSTM) technique. The researchers found that LSTM demonstrated robustness, as evidenced by its high accuracy of 96.4% and an average forecast horizon of 2.8 days. Furthermore, LSTM outperformed all baseline models consistently throughout the evaluation process, indicating its stability and effectiveness in predicting turbulence. They also suggested future directions for investigation, such as expanding the number of explanatory variables and incorporating other deep-learning mechanisms.
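The trajectory-based exercise described at the start of this paragraph (10,000 paths over 30 years, summarized by a 90% range around the average) can be sketched as follows. This is only an illustration: geometric Brownian motion is used as a stand-in process, not the Dornbusch, Kouri, or general equilibrium formulations, the continuous-time paths are discretized monthly, and the parameter values (`mu`, `sigma`, `s0`) are hypothetical.

```python
import numpy as np

def simulate_paths(s0, mu, sigma, years, steps_per_year, n_paths, seed=0):
    """Simulate exchange-rate paths under geometric Brownian motion (assumed stand-in model)."""
    rng = np.random.default_rng(seed)
    dt = 1.0 / steps_per_year
    n_steps = years * steps_per_year
    # Log-increments of GBM: (mu - sigma^2 / 2) * dt + sigma * sqrt(dt) * Z
    shocks = rng.standard_normal((n_paths, n_steps))
    log_increments = (mu - 0.5 * sigma**2) * dt + sigma * np.sqrt(dt) * shocks
    log_paths = np.log(s0) + np.cumsum(log_increments, axis=1)
    return np.exp(log_paths)

def ninety_percent_range(terminal_values):
    """Width of the 5th-95th percentile band, expressed as a fraction of the mean."""
    low, high = np.percentile(terminal_values, [5, 95])
    return (high - low) / terminal_values.mean()

# Hypothetical parameters: 10,000 paths, 30 years, monthly steps
paths = simulate_paths(s0=1.0, mu=0.0, sigma=0.05, years=30,
                       steps_per_year=12, n_paths=10_000)
width = ninety_percent_range(paths[:, -1])
print(f"90% range relative to the mean: {width:.1%}")
```

A narrower band for one model than another, under the same shocks, is the kind of comparison the 200%, 100%, and 20% figures above summarize.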

We also estimated the second-generation speculative attack model of Flood and Marion (1997) using Monte Carlo estimation algorithms, including the Metropolis-Hastings and Sequential Monte Carlo (SMC) algorithms and the novel AFQMC. To assess the performance of our models, we used classification accuracy, measured as a percentage, on both in-sample and out-of-sample data. Additionally, we employed residual measurements such as root-mean-square error (RMSE) and mean absolute percentage error (MAPE) to further evaluate the quality of our predictions.
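The three evaluation measures named above can be written compactly. The sketch below uses their standard definitions; the function names and the sample numbers are hypothetical, and the text does not specify how observations are labeled for the classification accuracy, so the labels here are placeholders.

```python
import numpy as np

def rmse(actual, predicted):
    """Root-mean-square error."""
    actual = np.asarray(actual, dtype=float)
    predicted = np.asarray(predicted, dtype=float)
    return float(np.sqrt(np.mean((actual - predicted) ** 2)))

def mape(actual, predicted):
    """Mean absolute percentage error, in percent (assumes no actual value is zero)."""
    actual = np.asarray(actual, dtype=float)
    predicted = np.asarray(predicted, dtype=float)
    return float(np.mean(np.abs((actual - predicted) / actual)) * 100.0)

def accuracy_pct(actual_labels, predicted_labels):
    """Share of correctly classified observations, in percent."""
    actual_labels = np.asarray(actual_labels)
    predicted_labels = np.asarray(predicted_labels)
    return float(np.mean(actual_labels == predicted_labels) * 100.0)

# Hypothetical exchange-rate values and class labels
actual = [1.10, 1.12, 1.08, 1.15]
predicted = [1.11, 1.10, 1.09, 1.13]
print(rmse(actual, predicted), mape(actual, predicted))
print(accuracy_pct([1, 0, 1, 1], [1, 0, 0, 1]))
```

The same functions can be applied separately to the in-sample and out-of-sample portions of the data.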

This study extends the existing theoretical models in the literature by incorporating well-established concepts such as uncovered interest rate parity, purchasing power parity, behavioral equilibrium exchange rate, sticky price monetary, and Taylor rule fundamentals. These theoretical foundations have been previously discussed in works by Rossi (2013) and Ismailov and Rossi (2018), providing a strong theoretical basis for our research. Additionally, we evaluate various estimation techniques in the context of speculative attack models to simulate stress and volatility scenarios, such as sudden currency value drops.
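To give a sense of how a Metropolis-Hastings estimator works, the sketch below samples the posterior of a single slope coefficient in a linear model with Gaussian errors. It is a generic random-walk sampler on synthetic data, not the authors' estimation of the speculative attack model; the flat prior, the known noise level, the step size, and all variable names are assumptions made for the illustration.

```python
import numpy as np

def log_posterior(beta, x, y, noise_sd):
    """Log posterior of beta under a flat prior and Gaussian errors (up to a constant)."""
    resid = y - beta * x
    return -0.5 * np.sum(resid**2) / noise_sd**2

def metropolis_hastings(x, y, noise_sd, n_draws=5000, step=0.1, beta0=0.0, seed=0):
    """Random-walk Metropolis-Hastings sampler for a single coefficient."""
    rng = np.random.default_rng(seed)
    draws = np.empty(n_draws)
    beta = beta0
    current_lp = log_posterior(beta, x, y, noise_sd)
    for k in range(n_draws):
        proposal = beta + step * rng.standard_normal()
        proposal_lp = log_posterior(proposal, x, y, noise_sd)
        # Symmetric proposal: accept with probability min(1, posterior ratio)
        if np.log(rng.uniform()) < proposal_lp - current_lp:
            beta, current_lp = proposal, proposal_lp
        draws[k] = beta
    return draws

# Synthetic data with a true slope of 1.0 (hypothetical)
data_rng = np.random.default_rng(1)
x = data_rng.standard_normal(200)
y = 1.0 * x + 0.5 * data_rng.standard_normal(200)

draws = metropolis_hastings(x, y, noise_sd=0.5)
posterior_mean = draws[1000:].mean()  # discard the first 1000 draws as burn-in
print(f"posterior mean of beta: {posterior_mean:.3f}")
```

With a flat prior the posterior mean should sit close to the OLS slope, which offers a simple sanity check on the sampler.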

Uncovered interest rate parity (UIRP)

Fisher (1962) introduced the concept of uncovered interest rate parity (UIRP), which explains the relationship between interest rates and changes in the relative value of currencies. According to UIRP, in an ideal situation where the nominal exchange rate is $S_t$, investors can buy $1/S_t$ units of foreign bonds with one unit of their domestic currency. Here, $S_t$ denotes the price of the foreign currency in terms of the domestic currency. Assuming that a foreign bond yields one unit plus the foreign interest rate $i^*_{t+h}$ between time $t$ and $t+h$, the expected return on the foreign investment, converted to the home currency, should equal the return on the home bond, $1 + i_{t+h}$, assuming no transaction costs and no arbitrage. This can be expressed as $(1 + i^*_{t+h})\,E_t(S_{t+h}/S_t) = 1 + i_{t+h}$, where $E_t(\cdot)$ represents the expectation at time $t$. Taking logarithms, with $s_t = \ln S_t$ and the approximation $\ln(1 + i) \approx i$, gives the regression form $E_t(s_{t+h} - s_t) = \alpha + \beta\,(i_{t+h} - i^*_{t+h})$ (1). The parameters $\alpha$ and $\beta$ in this equation have theoretical values of 0 and 1, respectively (Ismailov and Rossi, 2018). Thus, Eq. (1) represents the uncovered interest rate parity (UIRP).
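As a small worked illustration of the parity condition, the sketch below computes the UIRP-implied expected spot rate and then checks the theoretical values of the two parameters by regressing log exchange rate changes on the interest differential. The data are synthetic and generated so that UIRP holds by construction; the function name, sample size, and noise levels are assumptions for the example.

```python
import numpy as np

def uirp_expected_spot(spot, i_home, i_foreign):
    """Expected future spot rate implied by (1 + i*) E[S_future / S_now] = 1 + i."""
    return spot * (1.0 + i_home) / (1.0 + i_foreign)

# Synthetic sample in which UIRP holds (alpha = 0, beta = 1) plus unforecastable noise
rng = np.random.default_rng(0)
n = 500
differential = 0.01 * rng.standard_normal(n)             # i - i*, hypothetical
log_change = differential + 0.02 * rng.standard_normal(n)  # s_future - s_now

# OLS regression of the log change on a constant and the interest differential
X = np.column_stack([np.ones(n), differential])
(alpha_hat, beta_hat), *_ = np.linalg.lstsq(X, log_change, rcond=None)

print(uirp_expected_spot(spot=1.10, i_home=0.03, i_foreign=0.01))
print(f"alpha_hat = {alpha_hat:.4f}, beta_hat = {beta_hat:.3f}")
```

On real data the estimated slope often departs from 1, which is why the comparison with a random walk matters.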

Using Eq. (1), Meese and Rogoff (1988) conducted a study to predict out-of-sample real exchange rates from real interest rate differentials. Their findings revealed that this approach outperformed a random walk model. Similarly, research by Cheung and Erlandsson (2005) and Alquist and Chinn (2008) supported the superiority of the uncovered interest rate parity (UIRP) over a random walk model for extended time horizons, although the improvement in performance was not statistically significant.

Purchasing power parity (PPP)

The concept of purchasing power parity (PPP) is based on the principle that the price of a basket of goods should be the same in different economies when expressed in a single currency. This implies that the purchasing power of a unit of money should be equivalent across countries. Various interpretations of the PPP principle exist, but a common approach involves comparing price levels in one country with those in a foreign country after converting the prices into a common currency. This concept has been discussed in prior works by Giacomini and Rossi (2010) and Rossi (2013). In the context of a commodity price (CP) index, the prices in the home country and the foreign country are denoted as $p_t$ and $p_t^*$, respectively. The PPP assumption can be represented by setting the parameters $\alpha$ and $\beta$ to 0 and 1, respectively, similar to the uncovered interest rate parity (UIRP) model (Ismailov and Rossi, 2018). Therefore, the PPP can be expressed as follows:
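With the symbols defined above, a standard way to write the PPP relation is sketched below. This is the textbook regression form rather than a confirmed copy of the authors' equation: $s_t$ (the logarithm of the nominal exchange rate) and the error term $\varepsilon_t$ are notation assumed here, and $p_t$ and $p_t^*$ are taken to be in logarithms.

$$
s_t = \alpha + \beta\,(p_t - p_t^*) + \varepsilon_t, \qquad \alpha = 0,\ \beta = 1 \ \text{under PPP}.
$$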

Previous studies have raised concerns about the predictive power of the purchasing power parity (PPP) model, as its performance is not significantly superior to that of the random walk model in short-term forecasts. The random walk model demonstrates stronger forecasting ability in the short term, while the PPP model tends to outperform the random walk model in longer time horizons. However, the gap between the two models diminishes considerably when it comes to short-term predictions (Cheung and Erlandsson, 2005; Alquist and Chinn, 2008).

Behavioral equilibrium exchange rate (BEER) model

The behavioral equilibrium exchange rate (BEER) model is a theoretical framework used to calculate the equilibrium exchange rate of a currency based on its economic fundamentals. The model operates on the principle that the exchange rate is determined by the supply and demand of the currency in the foreign exchange market. By taking into account various economic variables such as inflation, interest rates, productivity, and trade balance, the BEER model aims to estimate an exchange rate that aligns with a country’s economic fundamentals. The underlying assumption is that the exchange rate will adjust to rectify any imbalances in the economy and achieve long-term equilibrium (Barbosa et al., 2018; Demir and Razmi, 2022).

The BEER model is becoming increasingly popular among policymakers and economists as it offers a framework for assessing the misalignment of a currency’s exchange rate relative to its fundamentals. It has been utilized to identify cases of currency overvaluation or undervaluation and guide policymakers in implementing appropriate policy measures to restore the currency’s equilibrium exchange rate. A common standard definition of this model is

In this framework, $p$ is the logarithm of the price level (CPI), $\omega$ is the relative price of non-tradable goods, $r$ is the real interest rate, $gdebt$ is the ratio of public debt to GDP, $tot$ is the logarithm of the terms of trade, and $nfa$ is net foreign assets. The model incorporates various elements such as the Balassa–Samuelson effect, which takes into account the relative price of non-tradable goods, the real interest differential model, which considers the difference in real interest rates, a foreign exchange premium associated with the public debt stock, and additional portfolio balance effects resulting from the net foreign asset exposure of the economy (Barbosa et al., 2018).
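Using the variables just listed, one common way to write a BEER-type relation is sketched below. It is offered as an assumption based on the usual linear specification in this literature, not as the authors' confirmed equation: the coefficient names $\beta_0, \dots, \beta_5$, the error term $u_t$, and the convention that hats denote differences between the home and foreign country are all hypothetical choices made here.

$$
s_t = \beta_0 + \hat{p}_t + \beta_1\,\hat{\omega}_t + \beta_2\,\hat{r}_t + \beta_3\,\widehat{gdebt}_t + \beta_4\,tot_t + \beta_5\,nfa_t + u_t
$$

Each term maps to one of the channels described above: $\hat{\omega}_t$ to the Balassa–Samuelson effect, $\hat{r}_t$ to the real interest differential, $\widehat{gdebt}_t$ to the premium linked to public debt, and $nfa_t$ to portfolio balance effects.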

Models built upon this framework are frequently employed to ascertain the medium-term reference horizon at which foreign currencies are projected to stabilize, particularly in the context of analyzing monetary policies. Market professionals routinely utilize this technique to gauge the extent of divergence exhibited by currencies from their equilibrium values.
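The divergence from equilibrium mentioned above is usually reported as a percentage gap. A minimal sketch, assuming an equilibrium rate has already been estimated by some model (the function name and the numbers are hypothetical):

```python
def misalignment_pct(actual_rate, equilibrium_rate):
    """Percentage deviation of the observed exchange rate from its estimated equilibrium."""
    if equilibrium_rate <= 0:
        raise ValueError("equilibrium_rate must be positive")
    return 100.0 * (actual_rate - equilibrium_rate) / equilibrium_rate

# Hypothetical observed rate and model-implied equilibrium rate
gap = misalignment_pct(actual_rate=1.10, equilibrium_rate=1.00)
print(f"misalignment: {gap:.1f}%")
```

Whether a positive gap signals overvaluation or undervaluation depends on the quoting convention of the exchange rate.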

Sticky price monetary (SPM) model

The sticky price monetary (SPM) model is a theoretical framework employed in the field of FOREX to provide insights into the relationship between monetary policy changes and currency exchange rates. In this model, prices in the economy are characterized as “sticky,” meaning they adjust slowly to changes in supply and demand. According to the SPM model, adjustments in monetary policy impact the nominal interest rate, which, in turn, influences the demand for the domestic currency. When the nominal interest rate increases, there is upward pressure on the demand for the domestic currency as investors seek higher returns. This increased demand leads to an appreciation of the domestic currency’s exchange rate (Auray et al., 2019).

In the short run, the sticky price phenomenon implies that prices in the economy do not instantaneously respond to changes in supply and demand. Consequently, even when the exchange rate appreciates, the prices of goods and services do not immediately decrease to reflect the currency appreciation. This situation renders the country’s exports relatively more expensive and less competitive in the global market, resulting in a decline in demand for its goods and a reduced demand for domestic currency. As a consequence, the exchange rate experiences depreciation. However, in the long run, prices gradually adjust to the new exchange rate, restoring the economy to its equilibrium state (Chadwick et al., 2015).

Over time, prices will eventually adapt to the new exchange rate, allowing the economy to reach its equilibrium state. However, in the short run, according to the sticky price monetary (SPM) model, changes in monetary policy can cause fluctuations in the exchange rate. This is primarily due to the sluggish adjustment of prices in the economy, which do not immediately respond to changes in monetary conditions (Auray et al., 2019).

The sticky-price monetary model provides a fundamental understanding of how flexible exchange rates behave. The model can be described as follows:

where \(s_t\) is the exchange rate at time t, \(m_t\) is the logarithm of the money supply, \(y_t\) is the logarithm of real GDP, \(i_t\) and \(\pi_t\) are the interest rate and the inflation rate, respectively, and \(u_t\) is an error term.

Taylor rule fundamentals

The Taylor rule fundamentals model expands on the concept of the Taylor rule, which is employed by central banks to establish the target interest rate, by applying it to foreign exchange rates in the currency market. This model takes into account various economic factors, including inflation, output gap, and the exchange rate, to determine the equilibrium exchange rate. By incorporating these fundamental variables, the Taylor rule fundamentals model aims to provide insights into the relationship between interest rate changes and exchange rate movements (Chen et al., 2017).

The underlying assumption of the model is that the central bank modifies interest rates based on variations in economic fundamentals in order to uphold price stability and achieve optimal employment levels. The target interest rate is established through a rule that takes into account the difference between the actual inflation rate and the target rate, as well as the output gap. Furthermore, the model incorporates a component that captures the divergence of the exchange rate from its equilibrium value, which is determined by the fundamental variables (Molodtsova and Papell, 2009).

The Taylor rule fundamentals model is useful for predicting future exchange rate movements based on changes in economic fundamentals. If economic fundamentals suggest that the exchange rate is overvalued, the model predicts that the central bank will raise interest rates to bring the exchange rate back to its equilibrium value. Conversely, if economic fundamentals suggest that the exchange rate is undervalued, the model predicts that the central bank will lower interest rates to stimulate economic growth and raise the exchange rate (Rossi, 2013).

The Taylor rule fundamentals model offers insights into how economic fundamentals, interest rates, and exchange rates are interrelated in the foreign exchange market. It suggests that central banks adjust their policy rates based on deviations in inflation and output from their target levels. Moreover, the model takes into account the deviation of the exchange rate from its equilibrium value, which is influenced by underlying fundamental variables. Mathematically, the Taylor rule fundamentals model can be represented as follows:

where \(\widetilde{y_t}\) is the output gap, \(s_{t+k}\) is the expected exchange rate at time t + k, \(s_t\) is the spot exchange rate at time t, and β0 is the intercept of the model. β1 is the coefficient of the output gap and measures the sensitivity of the exchange rate to changes in the output gap; β2 is the coefficient of inflation and measures the sensitivity of the exchange rate to changes in inflation; and \(u_t\) is an error term.

Speculative attacks model

The identification of currency crises should not be limited to the exchange rate regime or changes in the nominal exchange rate alone. It is possible that a regime change does not reflect the true reasons behind a country’s decision to maintain the current level of its currency’s exchange rate. Economic development or political institutions’ decisions to enter a monetary union may increase currency price volatility. Additionally, frustrated speculative attacks may occur, where an excessive increase in demand for foreign exchange does not yield the expected benefit. Monetary authorities have different options to cover this demand, including adjusting the exchange rate, interest rates, and foreign exchange reserves (Alaminos et al., 2022a).

Furthermore, speculative attacks that fail to achieve their intended outcome can also contribute to currency crises. When there is an excessive increase in the demand for foreign exchange, monetary authorities may use a variety of options to cover this demand, including adjusting the exchange rate, interest rates, and foreign exchange reserves.

The speculative attacks model of Eichengreen et al. (1994) is a framework used to identify currency crises by taking into account more than just the exchange rate regime or nominal exchange rate changes. While these factors are important, they may not always accurately reflect the underlying reasons for a country to maintain its current exchange rate level. Instead, there are other factors such as changes in economic development or a country’s decision to enter a monetary union that can also impact the volatility of a currency’s price.

Therefore, Eichengreen et al. (1994) used three variables to construct an index to capture currency crisis episodes: the series of currency exchange rates, interest rate differentials between national and foreign countries, and national reserve differentials between the two countries. The formula used to construct the speculative pressure index on the currency is as follows:

In this model, \(e_{i,t}\) is the exchange rate, that is, the price of a foreign currency in terms of country i's currency at time t. The variables \(i_i\) and \(i_r\) are the short-term interest rates of country i and of the reference country r, so their difference is the interest rate differential. The terms \(r_i\) and \(r_r\) are the corresponding ratios of international reserves to narrow money (M1), which enter the index through the difference between their percentage changes. The weights α, β, and γ assign importance to each of these factors. A crisis episode is identified when the exchange market pressure (EMP) index takes an outlier value that exceeds its sample mean by a significant number of standard deviations.

The meaning and effect of each term of the model are as follows:

α%Δei,t: This term represents the impact of exchange rate fluctuations on the EMP. The coefficient α determines the degree of sensitivity of the EMP to changes in the exchange rate. A higher value of α indicates greater responsiveness of the EMP to exchange rate movements.

βΔ(ii,t−ir,t): This component reflects the influence of interest rate differentials on the EMP. The coefficient β represents the level of sensitivity of the EMP to variations in interest rate differentials. A higher β value indicates greater responsiveness of the EMP to changes in interest rate differentials.

γ(%Δri,t − %Δrr,t): This term accounts for the impact of changes in reserves on the EMP. The coefficient γ quantifies the degree of sensitivity of the EMP to fluctuations in the reserves differential. A higher γ value signifies a greater sensitivity of the EMP to changes in the reserves differential.
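The three terms above can be combined into a small numerical sketch. The example below is illustrative only: it assumes that the reserves term enters with a negative sign (a loss of reserves raises pressure), uses equal placeholder weights, and flags a crisis when the index exceeds its sample mean by k standard deviations. The function names, the threshold k, and the data are hypothetical and are not taken from the study.

```python
from statistics import mean, pstdev

def emp_index(pct_d_e, d_int_diff, pct_d_res_diff, alpha=1.0, beta=1.0, gamma=1.0):
    """EMP_t = alpha*%Δe_t + beta*Δ(i_i - i_r)_t - gamma*(%Δr_i - %Δr_r)_t.

    The minus sign on the reserves term is an assumption of this sketch.
    """
    return [alpha * e + beta * i - gamma * r
            for e, i, r in zip(pct_d_e, d_int_diff, pct_d_res_diff)]

def crisis_flags(emp, k=2.0):
    """Flag periods where the EMP exceeds its sample mean by more than k std devs."""
    mu, sigma = mean(emp), pstdev(emp)
    return [x > mu + k * sigma for x in emp]

# Made-up series: period 4 combines a depreciation, a rate hike and a reserve loss.
pct_d_e = [0.1, -0.2, 0.0, 0.3, 8.0, 0.1, -0.1, 0.2]
d_int_diff = [0.0, 0.1, 0.0, -0.1, 3.0, 0.0, 0.1, 0.0]
pct_d_res_diff = [0.2, 0.0, -0.1, 0.1, -6.0, 0.0, 0.1, -0.2]

emp = emp_index(pct_d_e, d_int_diff, pct_d_res_diff)
flags = crisis_flags(emp, k=2.0)
```

In practice the weights are often chosen to equalize the volatility of the three components, so the equal weights used here should be read as a placeholder.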

Speculative attacks’ second-generation model

The speculative attacks model posits that the government’s choice to devalue its currency is contingent upon weighing the costs linked to abandoning the fixed exchange rate system against the loss of credibility that ensues. If the cost of devaluation is deemed lower than the cost of maintaining the fixed exchange rate, the government may opt to devalue its currency. This decision-making process is shaped by the anticipation of forthcoming economic policies, which are depicted by different equilibrium levels.

The second-generation models of currency crises diverge from the first-generation models by incorporating multiple equilibria that consider the interplay between the private sector and the government. These models capture the interaction between the private and public sectors, resulting in various potential outcomes (Alaminos et al., 2022b). When international financial actors foresee a potential currency devaluation, it can trigger a financial crisis as interest rates rise to incentivize domestic currency over foreign currencies. This situation may prompt the government to devalue its currency due to the high costs associated with servicing its debt. Conversely, when private agents do not anticipate an exchange rate change, interest rates remain low, reducing the likelihood of devaluation.

Flood and Marion (1997) introduced second-generation models to elucidate the self-fulfilling nature of shocks. According to this framework, if economic agents anticipate a potential currency devaluation, their expectations are factored into wage negotiations, leading to economic imbalances. These imbalances subsequently result in an increase in the country’s price level. To rectify such disequilibrium, the government may choose to adjust the exchange rate, which is fixed based on wage agreements. When the government decides against devaluation, it addresses the economic imbalances and prevents an inflationary surge by reducing its influence on the variables that determine production levels. Alternatively, if the government opts for a flexible exchange rate regime, it contributes to a situation where both wage levels and price levels in the country rise. Equation (7) illustrates the costs associated with the exchange rate regime in both scenarios.

where \(p_t\) is the national price level, \(y_t\) is the country's output at time t, y* is the target output set by economic policy, and θ is the weight assigned to deviations of inflation from the policy goal.

As with the previous model, each component of the present model is broken down below:

At time t, Lt represents the speculative pressure index, indicating the level of pressure exerted by market participants on the country's currency to devalue. The constant 0.5 is a weighting factor that treats the two components of the index equally. The parameter θ represents the sensitivity of the index to changes in the price level, so a higher θ value makes the index more responsive to inflation. The variable pt is the price level in the current period and pt−1 the price level in the previous period, so the term 0.5θ(pt − pt−1) is the first component of the index and captures the change in prices. The difference yt − y* is the gap between actual output (yt) and the target, or potential, output (y*) of the economy. It forms the second component of the index and reflects the level of economic activity and growth in the country. When the economy is underperforming relative to its potential, market participants may perceive a need for a currency devaluation to stimulate growth and enhance economic activity. Consequently, this leads to increased pressure on the currency to devalue.

In summary, the model indicates that the speculative pressure index is influenced by expectations of exchange rate devaluation and the deviation of the economy’s performance from its potential. The index tends to increase when there are anticipations of devaluation or when the economy is underperforming. This creates a self-fulfilling cycle, as heightened pressure can trigger a devaluation, which in turn intensifies the pressure for further devaluation.

Estimation methods

Markov Chain Monte Carlo (Metropolis–Hastings Algorithm)

The Metropolis–Hastings (MH) algorithm is a well-known sampling technique used in statistical analysis (Heratha and Herath, 2018; Haario et al., 2006). The algorithm is based on Markov chains and is related to rejection methods: it requires a proposal distribution, and the distribution function being sampled does not need to be normalized. The MH algorithm is inspired by the behavior of systems near equilibrium in statistical mechanics, where the transition probabilities between states X and Y describe the evolution of the system. Equilibrium is reached when, on average, the flow of probability from state X to state Y equals the flow from state Y to state X. This equilibrium condition is expressed mathematically through the detailed balance equation:

$$f(X)P(Y|X) = f(Y)P(X|Y)$$

where f(X)P(Y|X) is the probability of finding the system in the vicinity of state X, denoted by f(X), multiplied by the conditional probability of the system transitioning from state X to state Y, denoted by P(Y|X) (Ayekple et al., 2018). The detailed balance condition plays a crucial role in maintaining the correct distribution in the algorithm and is used to determine the acceptance probability of proposed moves. The acceptance probability is determined by comparing the probabilities on the left and right-hand sides of the detailed balance equation. By adhering to this condition, the algorithm ensures that the samples obtained follow the desired distribution. In the usual Markov chain problem, P(Y|X) is known and the task is to find f(X). The MH algorithm poses the inverse problem: given f(X), find a transition probability that brings the system to equilibrium. Transitions from X to Y are proposed according to a trial distribution T(Y|X). The algorithm then compares f(Y) with f(X) and accepts Y with probability A(Y|X).

The acceptance probability determines whether a proposed state is accepted or rejected by comparing the probability densities of the current state X and the proposed state Y. If the probability density of Y is higher than that of X, the acceptance probability A(Y|X) is set to 1 and the move to Y is accepted. If the probability density of Y is lower than that of X, A(Y|X) is <1. In this case, the move to Y is accepted with probability A(Y|X) and rejected with probability 1−A(Y|X). The proposed state Y is drawn at random from the proposal distribution T(Y|X). For a symmetric proposal, the detailed balance condition requires the ratio of transition probabilities, P(Y|X) to P(X|Y), to equal the ratio of acceptance probabilities, A(Y|X) to A(X|Y). Satisfying this condition facilitates the convergence of the Markov chain to the desired equilibrium distribution (Heratha and Herath, 2018). Therefore,

$$P(Y|X) = T(Y|X)A(Y|X)$$

A Markov chain is constructed from the states X0, X1, X2, …, XN, one reached at each transition, starting from an initial state X0. Each Xn is a random variable with distribution \(\phi_n(X)\) that satisfies the following condition:

$$\lim_{n \to \infty} \phi_n(X) = f(X)$$

This condition implies that, as the number of iterations increases indefinitely, the distribution of the states Xn, represented by \(\phi_n(X)\), approaches the target distribution f(X). In other words, the algorithm generates a sequence of states X0, X1, X2, …, XN and, as the number of iterations (N) grows, the distribution of the final state XN converges to the desired target distribution f(X) (Haario et al., 2006). At each step of the random walk, the proposal probability T(Y|X) is properly normalized and gives the likelihood of proposing a move from one state to another.

Assuming that a move from Y to X is possible whenever a move from X to Y is possible, and vice versa, we define

$$q(Y|X) = \frac{T(X|Y)\,f(Y)}{T(Y|X)\,f(X)} \ge 0$$

where T(Y|X) is the probability of proposing state Y given the current state X, known as the proposal distribution, and f(X) and f(Y) are the values of the target distribution at the current state X and the proposed state Y. The ratio q(Y|X) is used to calculate the acceptance probability of the proposed state Y given the current state X, A(Y|X) = min{1, q(Y|X)}, which determines whether the proposed state is accepted.

The acceptance of the proposed state Y therefore depends on the value of q(Y|X). If q(Y|X) is greater than or equal to 1, the state Y is always accepted. If q(Y|X) is less than 1, Y is accepted with probability q(Y|X). The condition q(Y|X) ≥ 0 ensures that the ratio is non-negative, which is necessary for the acceptance probability to be well-defined (Betancourt, 2019).

The summary of the procedure explained so far to generate Markov chains from the Metropolis–Hastings algorithm has the following general outline:

Algorithm: Metropolis–Hastings

Step 1: Initialize X0 and set t = 0.

Step 2: Repeat {

Generate a candidate Y ∼ q(·|Xt)

Generate U ∼ U(0, 1)

If U ≤ α(Xt, Y), take Xt+1 = Y

otherwise, take Xt+1 = Xt

Increase t

}

A special case of this algorithm is the random-walk Metropolis sampler, in which the proposal is symmetric, q(Y|X) = q(|X−Y|). In all cases, the key quantity is the acceptance probability α(X,Y), which determines whether the proposed state Y is accepted or rejected and is fundamental to constructing Markov chains with a desired stationary distribution. The form of α(X,Y) is typically very simple and is chosen so that π(·) satisfies the balance condition and is therefore the stationary distribution of the Markov chain (Neureiter et al., 2022). In other words, as the Markov chain converges, the distribution of states approaches the desired distribution π(·) (Ayekple et al., 2018). For a symmetric proposal, the balance condition is satisfied when the acceptance probability is defined as α(X,Y) = min{1, π(Y)/π(X)}.

In this context, π(X) and π(Y) represent the probability densities of states X and Y, respectively. The acceptance probability is determined by comparing the relative likelihoods of transitioning from state X to state Y. If the probability density of the new state Y is higher than that of the current state X (π(Y) > π(X)), the transition is always accepted with probability 1 (Heratha and Herath, 2018). If the new state Y has a lower probability density (π(Y) < π(X)), the transition is accepted with probability π(Y)/π(X). By incorporating this acceptance probability, the Markov chain tends to explore the state space in a way that is consistent with the desired distribution π(·).
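The procedure described above can be illustrated with a minimal random-walk Metropolis sketch. It assumes a symmetric Gaussian proposal, so the acceptance probability reduces to min{1, π(Y)/π(X)}, and it works with the logarithm of an unnormalized target for numerical stability. The function names, the step size, and the standard normal target are illustrative choices and do not reproduce the models estimated in this study.

```python
import math
import random

def metropolis_hastings(log_target, x0, n_steps, step=1.0, seed=0):
    """Random-walk Metropolis sampler for a one-dimensional target.

    log_target: log of the (possibly unnormalized) target density pi(.)
    Returns the chain (including x0) and the fraction of accepted moves.
    """
    rng = random.Random(seed)
    x = x0
    chain = [x]
    accepted = 0
    for _ in range(n_steps):
        y = x + rng.gauss(0.0, step)                    # symmetric proposal
        log_alpha = min(0.0, log_target(y) - log_target(x))
        if rng.random() <= math.exp(log_alpha):         # accept if U <= alpha(X, Y)
            x = y
            accepted += 1
        chain.append(x)                                 # on rejection, X_{t+1} = X_t
    return chain, accepted / n_steps

def log_std_normal(x):
    """Unnormalized log-density of a standard normal (illustrative target)."""
    return -0.5 * x * x

chain, acc_rate = metropolis_hastings(log_std_normal, x0=3.0, n_steps=20000)
sample = chain[5000:]                                   # drop burn-in
sample_mean = sum(sample) / len(sample)
sample_var = sum((v - sample_mean) ** 2 for v in sample) / len(sample)
```

Because only the ratio π(Y)/π(X) enters the acceptance step, the normalizing constant of the target never has to be computed.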

Following the implementation, this study used the Gelman–Rubin Test as a criterion to analyze the convergence of Markov chains (Nguyen and Jones, 2022), which consists of the following steps:

1. Generate M ≥ 2 chains, each with 2N iterations, starting from different starting points.

2. Discard the first N iterations of each chain to allow for burn-in.

3. Calculate the within-chain variance of each chain, \(s_j^2\), after discarding the first N iterations. Then, calculate the average of the within-chain variances:

$$W = \frac{1}{M}\sum_{j = 1}^{M} s_j^2$$ (14)

where \(s_j^2\) is the variance of chain j, calculated after discarding the first N iterations. Calculate the between-chain variance B from the variance of the means of the M chains:

$$B = \frac{N}{M - 1}\sum_{j = 1}^{M} \left( \overline{\theta_j} - \overline{\overline{\theta}} \right)^2$$ (15)

where \(\overline{\theta_j}\) is the mean of chain j and \(\overline{\overline{\theta}}\) is the mean of the M chain means.

4. Estimate the variance of the target parameter θ as follows:

$$\mathrm{var}\left( \theta \right) = \left( 1 - \frac{1}{N} \right)W + \frac{1}{N}B$$ (16)

This term is a weighted average of the within-chain variance and the between-chain variance.

5. Compute the potential scale reduction factor, R:

$$R = \sqrt{\frac{\mathrm{var}\left( \theta \right)}{W}}$$

The chains are typically considered to have converged when 0.97 < R < 1.03 (Neureiter et al., 2022). The Gelman–Rubin test evaluates convergence by comparing the within-chain variance (W) with the between-chain variance (B). Convergence is indicated when the two are similar, suggesting that the chains have converged to the same distribution. The statistic R is computed from the ratio of the variance of the target parameter estimated from all chains to the average within-chain variance.
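The steps of the test can be sketched in a few lines. The example below assumes that R is the square root of var(θ)/W and that the chains passed in have already had their burn-in removed. The function name and the synthetic chains are illustrative.

```python
import math
import random
from statistics import mean, variance

def gelman_rubin(chains):
    """Potential scale reduction factor for M post-burn-in chains of length N."""
    m = len(chains)
    n = len(chains[0])
    chain_means = [mean(c) for c in chains]
    grand_mean = mean(chain_means)
    w = mean([variance(c) for c in chains])            # within-chain variance W
    b = n / (m - 1) * sum((cm - grand_mean) ** 2 for cm in chain_means)  # between-chain B
    var_hat = (1 - 1 / n) * w + b / n                  # pooled variance estimate
    return math.sqrt(var_hat / w)

rng = random.Random(1)
# Four chains sampling the same distribution: R should be close to 1.
mixed = [[rng.gauss(0.0, 1.0) for _ in range(1000)] for _ in range(4)]
# Four chains stuck around two different levels: R should be well above 1.
stuck = [[rng.gauss(mu, 1.0) for _ in range(1000)] for mu in (0.0, 0.0, 5.0, 5.0)]

r_mixed = gelman_rubin(mixed)
r_stuck = gelman_rubin(stuck)
```

A value of R far above 1, as in the second case, indicates that the chains have not yet explored the same region and that more iterations or better starting points are needed.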

Sequential Monte Carlo (SMC)

Particle filtering is a powerful framework for inference in state-space models (Bloem-Reddy and Orbanz, 2018) and has been widely used in financial and macroeconomic applications (Lux, 2018). The idea is to approximate the distribution of the state variable with a Monte Carlo approach built from a large number of random samples (particles) that evolve according to a simulation-based updating scheme. New observations are processed by the filter as they become available. Every particle is given a weight, which is updated recursively.

The filtering problem consists of determining \(p\left( {\left. {x_t} \right|y_{1:t},\,\emptyset } \right)\). This can be done in the prediction (Eq. (18)) and update (Eqs. (19) and (20)) steps:

In Eq. (18), the prediction step is performed, which estimates the probability of the next state by integrating over the current state using the transition function and the current state probability. Equation (19) is the update step, where the probability of the next state given the observations is estimated using Bayes' rule and the likelihood function. Finally, Eq. (20) is the likelihood computation step, where the likelihood of the next observation is obtained by integrating over the next state using the observation density and the predicted state probability (Bloem-Reddy and Orbanz, 2018). In summary, these equations outline the recursive estimation process employed in sequential Monte Carlo (SMC) to estimate the probability distribution of the system state at each time step.
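As a sketch in standard Bayesian filtering notation, which is an assumption and not necessarily the exact form used here, the prediction, update, and likelihood steps described above can be written as

$$p\left( x_t | y_{1:t-1}, \emptyset \right) = \int p\left( x_t | x_{t-1}, \emptyset \right)\, p\left( x_{t-1} | y_{1:t-1}, \emptyset \right)\, dx_{t-1}$$

$$p\left( x_t | y_{1:t}, \emptyset \right) = \frac{p\left( y_t | x_t, \emptyset \right)\, p\left( x_t | y_{1:t-1}, \emptyset \right)}{p\left( y_t | y_{1:t-1}, \emptyset \right)}$$

$$p\left( y_t | y_{1:t-1}, \emptyset \right) = \int p\left( y_t | x_t, \emptyset \right)\, p\left( x_t | y_{1:t-1}, \emptyset \right)\, dx_t$$

The third expression is the normalizing constant of the second, which is why the update step needs only the observation density and the predicted state distribution.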

The sequential Monte Carlo filter represents the distributions of interest by Monte Carlo approximations, that is, by a set of M particles. Suppose that we are at time t−1 and draw M samples from \(p\left( {\left. {x_t} \right|x_{t - 1},\emptyset } \right)\), that is, we simulate them from the state equations. Each particle m = 1, …, M is then assigned a weight proportional to its likelihood:

$$w_{t,m} \propto p\left( \left. y_t \right| x_{t,m},\emptyset \right)$$

The weights can be normalized as

$$\widetilde{w}_{t,m} = \frac{w_{t,m}}{\sum_{k = 1}^{M} w_{t,k}}$$

To generate the particles, the SMC filter uses Monte Carlo approximations. Starting from the previous time step (t−1), the filter draws M samples from \(p\left( {\left. {x_t} \right|x_{t - 1},\emptyset } \right)\) by simulating the state equations. Each particle is then assigned a weight \(w_{t,m}\) that is proportional to the likelihood of the observation \(y_t\) given the state value \(x_t\) associated with that particle. This weight represents the importance of that particle in approximating the target distribution (Bloem-Reddy and Orbanz, 2018). Particles under which the observations are more likely are assigned higher weights.

Therefore, the weights can be used to calculate Monte Carlo integrals using importance sampling (Lux, 2018). Resampling assigns each particle a number of offspring in proportion to its importance weight. The offspring counts are generated by simulating a set U of M random variables uniformly distributed in [0, 1] and using the cumulative sum of the normalized weights

$$q_m = \sum_{k = 1}^{m} \widetilde{w}_{t,k}$$

and then setting \(O_m\) equal to the number of points in U that lie between \(q_{m-1}\) and \(q_m\). When ∅ is fixed, the posterior distribution p(∅|y1:t) can be summarized by its Monte Carlo mean \(\overline {\emptyset}_t\) and variance \(s_t^2\), where \(s_t^2\) denotes the vector of empirical variances of each element of ∅ (Bloem‐Reddy and Orbanz, 2018). It follows immediately that, under artificial evolution of the parameters, the Monte Carlo variance rises to \(s_t^2 + \xi _t\). The Monte Carlo approximation can be stated as a smoothed kernel density of the particles:

while the target variance \(s_t^2\) can be expressed as

where \({\rm {Cov}}\left( {{\emptyset}_{t - 1},k_t} \right) = - \frac{{\xi _t}}{2}\).

Equation (24) approximates the distribution of the unknown parameters (∅) given the observed data (y1:t) using a set of M particles. At every time step, the particles are advanced from the previous state \(\left( {{\emptyset}_t^{(j)}} \right)\) to the current state (∅t+1) using a transition kernel \(k_t^{(j)}\) with a tuning parameter ξt. The importance weights of the particles are then calculated based on their ability to explain the observed data at the current time step, as described by Eq. (21) (Lux, 2018).

Equation (25) provides the estimation of the target variance at time t, taking into account the variance at the previous time step \(\left( {s_{t - 1}^2} \right)\), the tuning parameter ξt, and the covariance between the particles at the previous time step (∅t−1) and the transition kernel at the current time step (kt). This equation allows for the adaptation of the target variance, which helps in maintaining an appropriate balance between exploration and exploitation in the Sequential Monte Carlo algorithm.

Finally, the bootstrap filter includes a resampling step to address particle degeneracy by eliminating particles with low importance weights. Earlier work often used the importance-weighted empirical distribution. Here we instead use a uniformly weighted distribution, as in several recent papers (Martino and Elvira, 2021).

where \(N_t^{(i)}\) is the number of offspring of the particle \(x_{0:t}^{(i)}\), determined by the branching process during sampling. The most common mechanism is to resample N times from \(\hat P_N\) (Gordon et al., 1993). During sampling, the branching mechanism duplicates or eliminates each particle according to its importance weight, so that particles with higher weights are more likely to be duplicated, while particles with lower weights may be eliminated. Eliminating some particles and duplicating others helps to prevent particle depletion and ensures that the particles adequately represent the posterior distribution. We require \(\sum_i N_t^{(i)} = N\) for all t. If \(N_t^{(j)} = 0\), the particle \(x_{0:t}^{(j)}\) dies. We try to choose \(N_t^{(i)}\) such that

The surviving particles, that is, those with \(N_t^{(i)} > 0\), approximate the distribution \(p(x_{0:t}|y_{1:t})\). Importance weights are employed to adjust the sample density and approximate the target distribution. Resampling is subsequently employed to eliminate particles with low weights and duplicate particles with high weights. This process generates a new set of samples that closely resemble the target distribution. The resampling step ensures that the samples remain diverse, avoiding the degeneracy of the particle filter.
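The propagate, weight, and resample cycle can be illustrated with a minimal bootstrap particle filter. The sketch assumes a simple local-level model, in which the state follows a Gaussian random walk and is observed with Gaussian noise, together with multinomial resampling at every step. The model, the parameter values, and the function name are illustrative assumptions and are not the specification estimated in this study.

```python
import math
import random

def bootstrap_filter(ys, n_particles=500, sigma_x=0.5, sigma_y=1.0, seed=0):
    """Bootstrap particle filter for x_t = x_{t-1} + e_t, y_t = x_t + v_t."""
    rng = random.Random(seed)
    particles = [rng.gauss(0.0, 1.0) for _ in range(n_particles)]
    filtered_means = []
    for y in ys:
        # Prediction: simulate each particle through the state equation.
        particles = [x + rng.gauss(0.0, sigma_x) for x in particles]
        # Weights proportional to the likelihood p(y_t | x_t), computed in logs.
        log_w = [-0.5 * ((y - x) / sigma_y) ** 2 for x in particles]
        max_lw = max(log_w)
        weights = [math.exp(lw - max_lw) for lw in log_w]
        total = sum(weights)
        weights = [w / total for w in weights]          # normalized weights
        filtered_means.append(sum(w * x for w, x in zip(weights, particles)))
        # Resampling: offspring in proportion to weight, then uniform weights.
        particles = rng.choices(particles, weights=weights, k=n_particles)
    return filtered_means

# Simulated data from the same model (made-up example).
sim = random.Random(42)
true_x, ys, x = [], [], 0.0
for _ in range(200):
    x += sim.gauss(0.0, 0.5)
    true_x.append(x)
    ys.append(x + sim.gauss(0.0, 1.0))

est = bootstrap_filter(ys)
rmse_filter = math.sqrt(sum((e - t) ** 2 for e, t in zip(est, true_x)) / len(ys))
rmse_obs = math.sqrt(sum((y - t) ** 2 for y, t in zip(ys, true_x)) / len(ys))
```

After resampling, every surviving particle carries the same weight, which corresponds to the uniformly weighted distribution mentioned above.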

Auxiliary-field quantum Monte Carlo (AFQMC)

The constrained-path Monte Carlo (CPMC) algorithm consists of two main components. The first formulates the projection of the ground state as an open-ended, importance-sampled random walk in the space of Slater determinants. This random walk utilizes an accurate restricted representation, which allows CPMC to scale polynomially. The algorithm also incorporates importance sampling, enhancing its applicability in various scenarios. The second component constrains the paths of the random walk so that each Slater determinant generated maintains a positive overlap with a trial wave function, represented as |ψT〉. This constraint eliminates the sign problem, so that CPMC scales algebraically rather than exponentially. However, it introduces a systematic error in the algorithm.

In the CPMC method, particular attention is given to a particle basis that is specific to the problem at hand. The Hamiltonian employed in this method follows the Born–Oppenheimer approximation and does not involve the mixing of spin states. Throughout the discussion, it is assumed that the Hamiltonian conserves the total spin projection, \(\hat S_z\), and that the electron number remains fixed for each spin component. However, even if the Hamiltonian does mix spin states, it can still be effectively handled using the CPMC method, as demonstrated in a study by Nguyen et al. (2014). For clarity, the notation used in the subsequent discussion will be explained in the following paragraph.

In the context of the Hubbard model lattice, M is the number of basis states available for a single electron. The state \(|\chi_i\rangle\) is the ith single-particle basis state, with i ranging from 1 to M. The operators \(c_i^{\dagger}\) and \(c_i\) are the creation and annihilation operators, respectively, for an electron in the state \(|\chi_i\rangle\), and \(n_i\) is the corresponding number operator. N is the total number of electrons, while \(N_\sigma\) is the number of electrons with spin σ (where σ can be either up or down). The symbol φ denotes a single-particle orbital. Its expansion in the single-particle basis states \(\{|\chi_i\rangle\}\), \(|\varphi\rangle = \sum_i \varphi_i |\chi_i\rangle = \sum_i c_i^{\dagger} \varphi_i |0\rangle\), can be represented as a vector of dimension M: \(\begin{pmatrix} \varphi_1 \\ \varphi_2 \\ \vdots \\ \varphi_M \end{pmatrix}\). The state \(|\phi\rangle\) is a many-body wave function that can be expressed as a Slater determinant. Given N different single-particle orbitals, a many-body wave function is constructed from their antisymmetric product, \(|\phi\rangle \equiv \hat\varphi_1^{\dagger} \hat\varphi_2^{\dagger} \ldots \hat\varphi_N^{\dagger} |0\rangle\), where the operator \(\hat\varphi_m^{\dagger}\) is defined as the sum \(\hat\varphi_m^{\dagger} \equiv \sum_i c_i^{\dagger} \varphi_{i,m}\). The matrix Φ has dimension M × N, where M is the number of basis states and N is the number of orbitals, and contains the orbital coefficients required to form the Slater determinant \(|\phi\rangle\): \(\Phi \equiv \begin{pmatrix} \varphi_{1,1} & \varphi_{1,2} & \cdots & \varphi_{1,N} \\ \varphi_{2,1} & \varphi_{2,2} & \cdots & \varphi_{2,N} \\ \vdots & \vdots & & \vdots \\ \varphi_{M,1} & \varphi_{M,2} & \cdots & \varphi_{M,N} \end{pmatrix}\). The state |Ψ〉 is a many-body wave function that is not necessarily a single Slater determinant, with the initial state denoted as |Ψ0〉.

The overlap integral between two non-orthogonal Slater determinants |φ〉 and |φ′〉, which is a number, is given by:

\(\left\langle \phi \right|\left. {\phi ^\prime } \right\rangle = \det \left( {{\Phi}^{\dagger} {\Phi}^\prime } \right)\)

where Φ† is the conjugate transpose of the matrix Φ. The notation \(\det \left( {{\Phi}^{\dagger} {\Phi}^\prime } \right)\) denotes the determinant of the N × N product of Φ† with Φ′, the orbital-coefficient matrices of |φ〉 and |φ′〉. The overlap integral is a numerical measure of the overlap between two Slater determinants and plays a vital role in evaluating transition probabilities and computing expectation values (Ceperley, 2010). Furthermore, the action on any Slater determinant |φ〉 of the exponential of a one-body operator, \(\hat B = \exp \left( {\sum\nolimits_{ij} c_i^{\dagger} U_{ij}c_j} \right)\),

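leads to another Slater determinant:

\(\hat B\left| \phi \right\rangle = \hat \phi _1^{\prime {\dagger} }\hat \phi _2^{\prime {\dagger} } \ldots \hat \phi _N^{\prime {\dagger} }\left| 0 \right\rangle \equiv \left| {\phi ^\prime } \right\rangle\)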

with \(\hat \phi _m^{\prime {\dagger} } = \sum\nolimits_j c_j^{\dagger} \phi _{jm}^\prime\) and \(\phi ^\prime \equiv e^U\phi\), where the matrix U is formed from the elements Uij. This second property of a Slater determinant describes how it is affected by the exponential of a one-body operator. Consider an operator \(\hat B\) that is the exponential of a sum of products of creation and annihilation operators, as shown in Eq. (29), where \(c_i^{\dagger}\) and \(c_j\) are the creation and annihilation operators, respectively, and Uij are the elements of a matrix U. When this operator acts on a Slater determinant |ϕ〉, it results in another Slater determinant |ϕ′〉, as shown in Eq. (30) (Nguyen et al., 2014). Here, the operators \(\hat \phi _1^{\prime {\dagger} }, \hat \phi _2^{\prime {\dagger} }, \ldots, \hat \phi _N^{\prime {\dagger} }\) create electrons in the orbitals given by the columns of the new M × N matrix ϕ′, which is obtained by multiplying the original matrix ϕ by the matrix exponential of U.
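The two properties above can be illustrated numerically. The following is a minimal sketch, assuming NumPy, real-valued orbitals, and a Hermitian matrix U (so that the exponential can be built from an eigendecomposition); the function names and the sizes M = 4, N = 2 are illustrative choices and do not come from the original study.

```python
import numpy as np

def overlap(phi, phi_prime):
    """Overlap <phi|phi'> = det(Phi^dagger Phi') of two Slater determinants."""
    return np.linalg.det(phi.conj().T @ phi_prime)

def expm_hermitian(u):
    """Matrix exponential e^U for a Hermitian U (assumption of this sketch)."""
    evals, evecs = np.linalg.eigh(u)
    # V diag(exp(lambda)) V^dagger
    return (evecs * np.exp(evals)) @ evecs.conj().T

def propagate(u, phi):
    """Apply B = e^U (M x M) to the orbital matrix Phi (M x N)."""
    return expm_hermitian(u) @ phi

rng = np.random.default_rng(0)
M, N = 4, 2  # illustrative numbers of basis states and orbitals

# Orthonormal orbitals as the columns of an M x N matrix.
phi = np.linalg.qr(rng.normal(size=(M, N)))[0]

# A small Hermitian one-body matrix U, chosen at random for illustration.
u = rng.normal(size=(M, M))
u = 0.1 * (u + u.T)

phi_new = propagate(u, phi)  # still M x N: another Slater determinant
print("norm <phi|phi>   :", overlap(phi, phi))
print("overlap <phi|phi'>:", overlap(phi, phi_new))
```

The propagated object keeps the M × N shape, which is the matrix counterpart of the statement that the exponential of a one-body operator maps one Slater determinant into another.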

Since B ≡ eU is a square M × M matrix, the action of \(\hat B\) on |φ〉 simply involves multiplying eU, an M × M matrix, by Φ, an M × N matrix (Ceperley, 2010). It is, therefore, convenient to regard every Slater determinant as consisting of two separate spin parts:

The appropriate matrix expression would be the following:

where ϕ↑ and ϕ↓ have dimensions M × N↑ and M × N↓, respectively. The overlap between two Slater determinants is simply the product of the overlaps of the individual spin determinants (Ceperley, 2010):

Any operator \(\hat B\) defined by Eq. (30) operates separately on the two spin parts:

Equation (32) expresses a Slater determinant as a product of two determinants, one for the spin-up and one for the spin-down electrons. The notations ϕ↑ and ϕ↓ denote the corresponding submatrices, of dimensions M × N↑ and M × N↓, respectively, where M is the number of spatial orbitals, and N↑ and N↓ are the numbers of spin-up and spin-down electrons. Equation (33) shows that the overlap between two Slater determinants is simply the product of the overlaps of their spin-up and spin-down determinants, each of which is the determinant of the product of the corresponding submatrices. Finally, Eq. (34) states that any operator \(\hat B\) defined by Eq. (30) acts separately on the spin-up and spin-down parts of the Slater determinant, that is, on each submatrix independently.
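The spin factorization can be checked on a small example. The sketch below assumes NumPy and real random orbital matrices; `spin_overlap`, `apply_spin_operator`, and `stack_spins` are hypothetical helper names introduced only for this illustration. It compares the product of the two spin overlaps with the determinant obtained from the full block-diagonal orbital matrix.

```python
import numpy as np

def spin_overlap(phi_up, phi_dn, phip_up, phip_dn):
    """Overlap as the product of the spin-up and spin-down determinants."""
    up = np.linalg.det(phi_up.conj().T @ phip_up)
    dn = np.linalg.det(phi_dn.conj().T @ phip_dn)
    return up * dn

def apply_spin_operator(b_up, b_dn, phi_up, phi_dn):
    """An operator of the exponential one-body form acts on each spin block separately."""
    return b_up @ phi_up, b_dn @ phi_dn

def stack_spins(up, dn):
    """Block-diagonal 2M x (N_up + N_dn) orbital matrix of the full determinant."""
    m = up.shape[0]
    return np.block([[up, np.zeros((m, dn.shape[1]))],
                     [np.zeros((m, up.shape[1])), dn]])

rng = np.random.default_rng(0)
M, N_UP, N_DN = 4, 2, 1  # illustrative sizes
phi_up, phip_up = rng.normal(size=(2, M, N_UP))
phi_dn, phip_dn = rng.normal(size=(2, M, N_DN))

factored = spin_overlap(phi_up, phi_dn, phip_up, phip_dn)
full = np.linalg.det(stack_spins(phi_up, phi_dn).T @ stack_spins(phip_up, phip_dn))
print(factored, full)  # the two numbers agree up to rounding
```

Working with the two M × Nσ blocks avoids ever forming the larger block-diagonal matrix, which is why the spin parts are usually stored separately.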

The Hubbard model is a simple paradigm of an interacting electron system (Ceperley, 2010). Its Hamiltonian is given by the following equation:

where t is the hopping matrix element, and \(c_{i\sigma }^{\dagger}\) and ciσ are the creation and annihilation operators for an electron with spin σ at site i (Nguyen et al., 2014). The Hamiltonian is defined on a lattice of \(M = \mathop {\prod}\nolimits_d {L_d}\) sites. The exchange rate calculations are performed as an approximation to the ground state, as in particle physics. The ground-state wave function |Ψ0〉 can be obtained by repeatedly applying the ground-state projection operator to any trial wave function |ΨT〉 that is not orthogonal to |Ψ0〉.

where ET is the best estimate of the exchange rate of the currency. If the wave function at the nth time step is \(\left| {{\Psi}^{(n)}} \right\rangle\), the wave function at the next time step can be expressed as:

The objective of the algorithm is to determine the ground-state wave function |Ψ0〉, which represents the most stable state of the currency exchange rate system. Equation (36) outlines the iterative process of applying the ground-state projection operator to a trial wave function |ΨT〉 in order to converge toward |Ψ0〉. The parameter Δτ controls the time step, while \(\hat H\) denotes the Hamiltonian operator and ET represents the estimated exchange rate. Equation (37) gives the wave function at the (n + 1)th time step, obtained by applying the projection operator to the wave function at the previous time step. Through repeated iterations, the algorithm evolves the wave function from the initial state |ΨT〉 toward the stable ground state |Ψ0〉, which provides an estimation of the currency exchange rate.
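The projection can be illustrated deterministically on a small matrix, without any Monte Carlo sampling. The sketch below assumes NumPy and a real symmetric Hamiltonian matrix; the propagator exp(−Δτ(H − E_T)) is built from an eigendecomposition purely for convenience, and the four-site hopping matrix, the step size, and the number of steps are illustrative assumptions rather than values from the study.

```python
import numpy as np

def project_ground_state(h, psi_trial, dtau=0.05, n_steps=500):
    """Repeatedly apply exp(-dtau * (H - E_T)) to a trial vector.

    h         : real symmetric Hamiltonian matrix (assumption of this sketch)
    psi_trial : trial vector, not orthogonal to the ground state
    Returns the final energy estimate E_T and the normalized projected vector.
    """
    evals, evecs = np.linalg.eigh(h)  # used only to build the exponential
    psi = psi_trial / np.linalg.norm(psi_trial)
    e_t = psi @ h @ psi               # initial estimate of E_T
    for _ in range(n_steps):
        propagator = (evecs * np.exp(-dtau * (evals - e_t))) @ evecs.T
        psi = propagator @ psi        # one projection step
        psi /= np.linalg.norm(psi)    # keep the vector normalized
        e_t = psi @ h @ psi           # update the estimate E_T
    return e_t, psi

# Illustrative Hamiltonian: nearest-neighbor hopping on an open 4-site chain.
hop = -(np.eye(4, k=1) + np.eye(4, k=-1))
energy, psi0 = project_ground_state(hop, np.ones(4))
print("projected energy:", energy)
print("exact lowest eigenvalue:", np.linalg.eigvalsh(hop)[0])
```

Components along excited states decay by a factor exp(−Δτ × gap) per step, so the projected energy approaches the lowest eigenvalue as long as the trial vector has a nonzero overlap with the ground state.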

In the Hubbard model, the Hubbard–Stratonovich (HS) transform is employed to convert the two-body exponential term \({\rm {e}}^{ - {\Delta}\tau \hat V}\) into a sum of exponentials of one-body operators, each of which maps a Slater determinant into another Slater determinant:

where γ is given by cosh(γ) = exp(ΔτU/2). The sampling of \(\vec x\) in the above procedure contains no information about the importance of the resulting determinant in the representation of |Ψ0〉. The following equation expresses the mixed estimator used in the ground-state approximation:

where the denominator is estimated by \(\mathop {\sum }\limits_k \left\langle {{\emptyset}_{\rm {T}}} \right|\left. {{\emptyset}_k} \right\rangle\), with |∅k〉 denoting the random walkers after equilibration (Nguyen et al., 2014; Ceperley, 2010). We define the importance function OT, which estimates the overlap of a Slater determinant |∅k〉 with the ground-state wave function:

The mixed estimator (Eq. (39)) can be expressed as the expectation value of the Hamiltonian operator, Ĥ, in the ground state wave function, |ψ0〉, projected onto a trial wave function, ∅T. The numerator represents this expectation value, while the denominator corresponds to the overlap between the trial wave function and the ground state wave function.
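The Hubbard–Stratonovich transform introduced above can be verified for a single lattice site. The sketch below assumes the standard discrete form for a repulsive interaction (U > 0), exp(−ΔτU n↑n↓) = exp(−ΔτU(n↑ + n↓)/2) · Σ over x = ±1 of ½ exp(γx(n↑ − n↓)), together with the relation cosh(γ) = exp(ΔτU/2) stated in the text. The explicit form is an assumption of this illustration, since the equation itself is not reproduced here, and the values Δτ = 0.05 and U = 4 are arbitrary.

```python
import math

def hs_check(dtau, u):
    """Compare both sides of the discrete HS identity for one site.

    Returns a dict mapping each occupation (n_up, n_dn) to (lhs, rhs).
    Assumes u > 0 so that gamma = acosh(exp(dtau * u / 2)) is real.
    """
    gamma = math.acosh(math.exp(dtau * u / 2.0))
    results = {}
    for n_up in (0, 1):
        for n_dn in (0, 1):
            # Left-hand side: two-body interaction term.
            lhs = math.exp(-dtau * u * n_up * n_dn)
            # Right-hand side: one-body terms averaged over the Ising field x.
            prefactor = math.exp(-dtau * u * (n_up + n_dn) / 2.0)
            field_sum = sum(0.5 * math.exp(gamma * x * (n_up - n_dn))
                            for x in (+1, -1))
            results[(n_up, n_dn)] = (lhs, prefactor * field_sum)
    return results

for occupation, (lhs, rhs) in hs_check(dtau=0.05, u=4.0).items():
    print(occupation, lhs, rhs)
```

For each of the four possible occupations the two sides coincide, which is why the interaction can be replaced by a sum over one auxiliary field x = ±1 per site.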

We assign a weight wk = OT (∅k) to every random walker (Ceperley, 2010; Nguyen et al., 2014). The walkers are initialized to the trial wave function, \(\left| {{\emptyset}_k^{\left( 0 \right)}} \right\rangle = \left| {{\emptyset}_{\rm {T}}} \right\rangle\) for all k, and the weight of each walker in the population is set to one, so that all walkers start with equal importance. The population is then iterated as follows:

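where \(\hat B\left( {\vec x} \right) = \hat B_{K/2}\hat B_{\rm {V}}\left( {\vec x} \right)\hat B_{K/2}\), with \(\hat B_{K/2}\) the kinetic propagator over half a time step and \(\hat B_{\rm {V}}\left( {\vec x} \right)\) the potential propagator for the auxiliary-field configuration \(\vec x\).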

The walkers, represented as \(\left| {\widetilde {\emptyset}^{\left( n \right)}} \right\rangle\), are now sampled from a revised distribution. They schematically represent the ground-state wave function as follows:

Equation (42) shows how the wave function at iteration n, denoted by \(\left| {\psi ^{\left( n \right)}} \right\rangle\), is approximated by a sum over walker configurations \(\left| {{\emptyset}_k^{\left( n \right)}} \right\rangle\), each weighted by its weight \(\omega _k^{\left( n \right)}\). These configurations are sampled from a new distribution, which is determined by the previous set of weights and the importance function OT. The denominator contains the overlap between the trial state and each individual configuration, which normalizes the contribution of each walker (Ceperley, 2010). This iterative approach improves on the trial wave function by means of a set of walkers that represent the system. The walkers are sampled from a distribution that is updated at each iteration, taking into account the previous set of weights, which allows gradual convergence toward the true ground state of the system.

The modified function \(\widetilde P\left( {\vec x} \right)\) is defined as the product of the individual probabilities \(\tilde p\left( {x_i} \right)\) for sampling the auxiliary field at each lattice site: \(\widetilde P\left( {\vec x} \right) = \mathop {\prod}\nolimits_i^M {\tilde p\left( {x_i} \right)}\), where M represents the total number of lattice sites.

where \(\left| {{\emptyset}_{k,i - 1}^{\left( n \right)}} \right\rangle = \hat b_{\rm {V}}\left( {x_{i - 1}} \right)\hat b_{\rm {V}}\left( {x_{i - 2}} \right) \ldots \hat b_{\rm {V}}\left( {x_1} \right)\left| {{\emptyset}_k^{\left( n \right)}} \right\rangle\) is the current state of the kth walker, \(\left| {{\emptyset}_k^{\left( n \right)}} \right\rangle\), after its first (i − 1) fields have been sampled and updated, and \(\left| {{\emptyset}_{k,i}^{\left( n \right)}} \right\rangle = \hat b_{\rm {V}}\left( {x_i} \right)\left| {{\emptyset}_{k,i - 1}^{\left( n \right)}} \right\rangle\) is the next sub-step after selecting the ith field (Ceperley, 2010; Nguyen et al., 2014). In each \(\widetilde p\left( {x_i} \right)\), xi can only take the value +1 or −1 and is sampled by choosing xi from the probability density function \(\widetilde p\left( {x_i} \right)/{{{\mathcal{N}}}}_i\), where the normalization factor is \({{{\mathcal{N}}}}_i \equiv \tilde p\left( {x_i = + 1} \right) + \tilde p\left( {x_i = - 1} \right)\), and the walker carries the weight \(w_{k,i}^{(n)} = {{{\mathcal{N}}}}_iw_{k,i - 1}^{(n)}\). The inverse of the overlap matrix \(\left[ {\left( {{\emptyset}_{\rm {T}}} \right)^{\dagger} {\emptyset}_k^{(n)}} \right]^{ - 1}\) is maintained and updated after each xi is selected.

For every walker \(\left| {{\emptyset}_k^{(n)}} \right\rangle\), a random walk step thus involves:

6. Sample an auxiliary-field configuration x from the probability density function \(\widetilde P\left( x \right)/N\left( {{\emptyset}_k^{\left( n \right)}} \right)\). With discrete, Ising-like auxiliary fields for a Hubbard interaction, the sampling is achieved by a heat-bath-like algorithm, sweeping through each field xi.

7. Construct the corresponding B(x) and propagate the walker \({\emptyset}_k^{\left( n \right)}\) with it to produce a new walker.

8. Assign a weight \(\omega _k^{\left( {n + 1} \right)} = \omega _k^{\left( n \right)}N\left( {{\emptyset}_k^{\left( n \right)}} \right)\) to the new walker.
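The steps above can be sketched for the auxiliary-field part of a single walker update. This is a simplified illustration under stated assumptions, not the implementation used in the study: it assumes NumPy, real orbitals, the discrete field factors exp(−ΔτU/2)·exp(±γx) acting on row i of the spin-up and spin-down matrices, and a sampling probability proportional to ½ times the ratio of new to old trial overlaps. It recomputes determinants directly instead of updating the inverse overlap matrix, and it omits the kinetic half-steps. All function and variable names are hypothetical.

```python
import numpy as np

def trial_overlap(trial, walker):
    """O_T = max(<trial|walker>, 0) as a product of the two spin determinants."""
    ovlp = 1.0
    for spin in ("up", "dn"):
        ovlp *= np.linalg.det(trial[spin].conj().T @ walker[spin])
    return max(float(np.real(ovlp)), 0.0)

def sweep_fields(walker, weight, trial, dtau, u, rng):
    """Heat-bath-like sweep over the Ising fields x_i of one walker."""
    gamma = np.arccosh(np.exp(dtau * u / 2.0))
    pref = np.exp(-dtau * u / 2.0)
    walker = {spin: mat.copy() for spin, mat in walker.items()}
    n_sites = walker["up"].shape[0]
    fields = np.zeros(n_sites, dtype=int)
    o_old = trial_overlap(trial, walker)
    for i in range(n_sites):
        if weight <= 0.0 or o_old <= 0.0:
            return walker, 0.0, fields  # walker is discarded
        candidates, p_tilde = [], []
        for x in (+1, -1):
            cand = {spin: mat.copy() for spin, mat in walker.items()}
            cand["up"][i, :] *= pref * np.exp(+gamma * x)
            cand["dn"][i, :] *= pref * np.exp(-gamma * x)
            candidates.append(cand)
            p_tilde.append(0.5 * trial_overlap(trial, cand) / o_old)
        norm = p_tilde[0] + p_tilde[1]  # normalization factor N_i
        if norm <= 0.0:
            return walker, 0.0, fields
        pick = 0 if rng.random() < p_tilde[0] / norm else 1
        fields[i] = (+1, -1)[pick]
        walker = candidates[pick]       # propagate with the chosen field
        weight *= norm                  # the weight carries N_i
        o_old = trial_overlap(trial, walker)
    return walker, weight, fields

# Illustrative setup: two electrons per spin on an open 4-site chain.
rng = np.random.default_rng(1)
hop = -(np.eye(4, k=1) + np.eye(4, k=-1))
orbitals = np.linalg.eigh(hop)[1][:, :2]
trial = {"up": orbitals, "dn": orbitals}
new_walker, new_weight, x = sweep_fields(trial, 1.0, trial, dtau=0.01, u=4.0, rng=rng)
print("sampled fields:", x, "new weight:", new_weight)
```

Recomputing determinants costs more than the inverse-matrix updates described in the text, but it keeps the sketch short and makes each sub-step easy to check by hand.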

In the reorthogonalization procedure, no modification of the weight of a walker is required. This is because the upper triangular matrix R, which arises during reorthogonalization, only contributes to the overlap OT, and the weight of the walker already accounts for this overlap. Therefore, after each reorthogonalization step, R can be discarded, as explained by Motta et al. (2019).

To estimate the expectation value of an observable that does not commute with the Hamiltonian, the back-propagation approach proposed by Zhang (2004) is employed. Because the population in the random walk is importance-sampled, two mutually independent populations would produce large fluctuations in the estimator once the importance functions are undone. In the back-propagation method, an iteration n is selected, and the entire population \(\left\{ {\left| {{\emptyset}_k^{\left( n \right)}} \right\rangle } \right\}\) is stored. As the random walk proceeds from n, two pieces of information are recorded for each new walker: the auxiliary-field configuration that led to the new walker from its parent walker, and an integer tag identifying the parent. After m additional iterations, back-propagation is carried out. For each walker l in the (n + m)th population, a determinant \(\left\langle {\psi _{\rm {T}}} \right|\) is initialized, and the propagators are applied to it in reverse order. The m successive propagators are constructed from the items stored between steps n and n + m, with \(\exp \left( { - \frac{{{\Delta}\tau \hat H_1}}{2}} \right)\) inserted where necessary, following Zhang (2004). The resulting determinants \(\left\langle {\overline {\emptyset}_l^{(m)}} \right|\) are combined with their parents from iteration n to calculate the expectation value 〈O〉BP, in a way similar to the mixed estimator. The weights are correctly given by \(\omega _l^{\left( {n + m} \right)}\), owing to importance sampling in the regular walk, as discussed by Motta et al. (2019).
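The statement about reorthogonalization made above can be illustrated with a QR factorization. The sketch below assumes NumPy and real random matrices; `reorthogonalize` is a hypothetical helper written only for this illustration. It shows that replacing Φ by the orthonormal factor Q changes the overlap with a trial determinant only by the scalar det(R).

```python
import numpy as np

def reorthogonalize(phi):
    """Factor Phi = Q R and return the orthonormal orbitals Q and det(R)."""
    q, r = np.linalg.qr(phi)  # reduced QR: q is M x N, r is N x N
    return q, np.linalg.det(r)

rng = np.random.default_rng(0)
M, N = 5, 2  # illustrative sizes
trial = np.linalg.qr(rng.normal(size=(M, N)))[0]
phi = rng.normal(size=(M, N))  # a walker whose columns are not orthonormal

q, det_r = reorthogonalize(phi)
before = np.linalg.det(trial.T @ phi)
after = np.linalg.det(trial.T @ q)
print(before, after * det_r)  # equal up to rounding
```

Because det(R) is a single number multiplying the overlap, it can be dropped once the walker weight already accounts for that overlap, which is the reason given in the text for discarding R.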

Empirical results