Abstract

In this article, we present the TechEthos Game: Ages of Technology Impacts, a serious game rooted in the EU-funded Horizon 2020 project TechEthos. This game is built to support public reflection and perspectives on the social and ethical issues of new and emerging technologies, and to surface and collect participants’ awareness, attitudes, and values towards specific technology families. The TechEthos Game offers an immersive exploration of the interplay between emerging technologies and their societal implications by inviting players to discuss and reflect on ethically aligned technological futures. Within the game, participants are invited to take on the role of the “Citizen World Council,” tasked with the duty of guiding the trajectory of global technology. Through iterative decision-making processes, players grapple with the dual objectives of technological progression and societal well-being, ensuring that the balance does not tilt perilously towards either side, and expressing preferable solutions for mitigating risks. On the one hand, the game’s design demands continuous assessment and proactive intervention from players; on the other hand, it asks them to articulate their excitement and concerns regarding the four emerging technologies: climate engineering, digital extended reality, natural language processing, and neurotechnologies. In this paper, we discuss the process of designing a serious game for qualitative and participatory research, present the results from the game, and reflect on lessons learned from using a serious game as a citizen engagement activity.

Introduction

European research and innovation policy has, over recent decades, made several marked shifts to be more responsive to social and ethical concerns accompanying technological development (Weber et al. 2012; Schot and Steinmueller 2018). This can be seen, for example, in the “grand challenges” turn of research and innovation framework programs toward thematically aligned areas such as energy, climate, health, and security (Kuhlmann and Rip 2014). It is also visible in transformative (Weber and Rohracher 2012) and mission-oriented initiatives (Mazzucato 2018). Such turns correspond to broader policy shifts in research and innovation (R&I) governance from the asymmetrical “deficit” model of the public (Wynne 1994) to a more democratic structure that works to include the public and its legitimate values and concerns (Felt and Wynne 2007). Such R&I governance efforts grapple directly with the question of how governments can advance economic progress through innovation with a greater connection to social, ethical, and environmental goals (Pfotenhauer and Juhl 2017; Foley et al. 2016).

In Europe, R&I in the decade since 2013 has been marked by one such push in particular: a push for “responsible research and innovation” (Stilgoe et al. 2013; Owen et al. 2021). Responsible research and innovation (RRI; as known in Europe) and responsible innovation (RI; as known in the UK) (Brundage and Guston 2019) break out six key outcome-oriented dimensions and four process-oriented dimensions to practically enhance the societal focus of research and innovation across all stages (e.g., from project inception to commercialization) (Bernstein et al. 2022). The outcome-oriented dimensions include gender equality, ethics, science literacy and science education, open access, governance, and public engagement. The process dimensions cover ambitions like reflection on the goals of research; inclusion of diverse and marginalized perspectives; anticipation of longer-term consequences of research; and responsive adaptation of research plans and policies based on the insights arising from these activities (von Schomberg 2013; Stilgoe et al. 2013). In Horizon 2020, the European Commission’s 8th framework program for research and innovation, responsible research and innovation was rolled out as a policy intervention to better align, by design, R&I processes and outcomes with societal values, needs, and expectations (de Saille 2015; Novitzky et al. 2020; European Commission 2020).

This approach to technological development views technologies as inherently value-laden because their design, implementation, and societal impact embody normative choices. Three philosophical strands illustrate this: first, the recognition that technologies embody values in their material design and affordances; second, Winner’s (1980) claim that artifacts have politics, emphasizing that technologies shape social arrangements and power relations; and third, van den Hoven’s (2013) account of technology as moral mediation, where design choices influence ethical and behavioral outcomes. Taken together, these perspectives highlight why ethical reflection cannot treat technologies as neutral tools, but as active participants in shaping social life, including the spread of phenomena such as misinformation.

The framework of Value Sensitive Design (VSD), introduced by Friedman and Hendry (2019), further illustrates how implicit values guide technical decision-making, whether in privacy settings, algorithmic biases, or accessibility features. The potential for unintended consequences, as discussed by Owen et al. (2013), underscores the ethical responsibility of developers to anticipate long-term societal effects, as seen in cases such as social media’s contribution to misinformation. Additionally, the governance perspective of Stilgoe, Owen, and Macnaghten (2013) argues that transparency and democratic engagement are essential precisely because technology influences societal norms in non-neutral ways. Following these insights, technology should be consciously designed to reflect and promote ethical and social values that enhance collective well-being. This requires moving beyond abstract ethical principles to articulating concrete, actionable values that can be integrated into technological design and governance (von Schomberg 2013). Without such specificity, ethical considerations risk being reduced to vague aspirations rather than shaping technology in meaningful ways (Floridi 2019). Ethical reflection necessitates inclusive deliberation, where diverse stakeholders engage in anticipatory governance to define desirable futures (Guston 2014). Responsible innovation aims to align technologies with societal values by addressing ethical concerns proactively rather than reactively, making reflecting on the future worlds and the impacts of technologies essential.

Efforts to integrate social and ethical concerns “by design” face particular challenges related to the classical dilemma of ascertaining social and ethical consequences of science and technology where knowledge is vastly incomplete (Collingridge 1980). Yet as Buckley et al. (2017) observed, this dour assessment significantly underappreciates the potential of imaginative, participatory approaches to anticipate and integrate more societally responsive approaches to technology development. Traditional framings of such dilemmas also often ignore, on grounds of legitimacy in democratic societies, the importance of “wider and deeper democratic deliberation, including by vulnerable marginalized communities” (Genus and Stirling 2018, p 67).

In this article, we present the TechEthos Game: Ages of Technology Impacts (Footnote 1), a serious game built to support public reflection and perspectives on the social and ethical issues of new and emerging technologies. The game was developed in the EU-funded Horizon 2020 project, TechEthos, to surface and collect participants’ awareness, attitudes, and values towards four technology families and the emerging technologies within them: climate engineering, digital extended reality, natural language processing, and neurotechnologies. The game advances resources for “ethics by design” (d’Aquin et al. 2018) in service of research and innovation that is more inclusive of and responsive to the concerns of the public whose money is invested by governments into R&I funding. Beyond being an entertaining and informative activity, the game is a method of qualitative social research and is embedded in a larger citizen engagement activity. When embedded in research settings, the activity aims to answer the following research questions:

- How aware are participants of the technologies presented?

- What is their attitude towards the presented technologies?

- Which values do citizens reveal when deliberating about these technologies, their applications, and potential implications?

The game and workshops in which it was deployed were constructed to enable data collection to answer these research questions. To be precise, the aim of the game was to elicit the players’ awareness, attitudes, and values towards the specific technologies by stimulating discussion.

The specific contribution of the game lies in its integration of participatory gameplay with systematic qualitative research and ethics-by-design processes. Where other serious games in this context are mainly presented as educational tools, we designed and used this game specifically as a methodological instrument to elicit empirically grounded societal values that inform the development of ethical guidelines and governance frameworks for emerging technologies. By deploying the game across multiple countries and different technology domains, this work demonstrates a replicable approach for embedding citizen perspectives into responsible innovation processes. Our contribution is therefore both methodological and practical: it advances serious games as reusable research instruments for technology ethics and offers reflexive design insights for future game developers and participatory practitioners.

Below, after exploring the use of serious games in participatory research, we introduce the Horizon 2020 project TechEthos and describe the aims and the creation of the TechEthos Game using the Triadic Game Design methodology (Harteveld 2011). Then we present the TechEthos Game itself in terms of its key steps and rules of play. Next, we review the results of gameplay in 20 events across 6 countries in Europe with 321 participants. We discuss the social and ethical concerns surfaced at multiple levels, with attention to desirable and undesirable consequences for users and non-users alike (cf. Table 3 in Author et al. 2023). In closing, we emphasize the potential of serious games to advance an inclusive and low-threshold approach to informing RRI for ethics-by-design.

Using games for participatory research

The transformative power of games extends far beyond the realm of mere entertainment. In the context of participatory research, games offer a unique medium for engaging players, stimulating discussions, and enabling exploration of complex subjects. The employment of games has garnered increasing recognition due to their unique attributes that facilitate deeper engagement and learning (Gugerell 2023; Pueyo-Ros et al. 2023). Interactive engagement is crucial in participatory research, making the active involvement of players in serious games a useful avenue for supporting their understanding and retention of subject matter (Khaled and Vasalou 2014).

The first steps in using games in academic settings are commonly attributed to Richard Duke, who pioneered the field in the 1960s and 1970s (Duke 2014). His work laid the groundwork for the current understanding of serious games as a tool for engagement and learning. According to psychologists and educators, two elements structure player engagement in serious games: the subjective enjoyment of the game experience and the motivations to play the game. By involving cognitive skills and multiple senses, games contribute to learning processes where substantive, experiential, and social learning all play a role. Games provide an environment where participants can freely make decisions, experience the outcomes, and reflect on these in a risk-free setting. This safety net is critical for encouraging open exchange and experimentation without the fear of real-world repercussions. Likewise, these types of games stimulate rich discussions and reflections post-gameplay. Players, having engaged actively with the game’s content, are often more prepared to share their strategies, justifications, and reflections. This exchange of ideas not only enriches the data collected for research but also provides valuable insights into the players’ thought processes and learning outcomes (Bayeck 2020; Freese et al. 2020; Monteiro-Krebs et al. 2024).

Game-based approaches are also explored in support of research and innovation with and for society (Speelman et al. 2023). Card game formats are often used to integrate interdisciplinary perspectives, facilitate discussions of complex ethical issues, and make action plans in areas. In IT development, the Moral-IT Deck and Impact Assessment Board were used as an “ethics by design” tool to prompt technologists and designers to reflect on ethical issues such as public data privacy and plan appropriate safeguards (Urquhart and Craigo 2021). Felt et al. (2018) developed a card game called “IMAGINE RRI” that facilitates understanding of RRI through discussions of life science research. A further example can be found in Dayé et al. (2023), who identified the effectiveness of a game method in techno-political controversy mapping and future exploration.

Beyond research, public sectors have also employed game methods for policymaking practice. The European Commission Joint Research Centre has developed a serious game system, called the Scenario Exploration System (SES), designed to help diverse participants, in less than 3 hours, to explore options for policymaking in a variety of topical areas from migration to nanotechnology (Bontoux et al. 2020). The authors identify the applicability of the game in three domains: forward-looking strategic and systemic reflection, engagement, and education. As Vervoort (2019) observed, such games, whether in research or connected to policy and practice, not only bring substantial opportunities for broader societal engagement, but also for advancing inquiry and analysis into people’s perceptions, values, and concerns with possible future developments of R&I (Umbrello et al. 2023).

The TechEthos project

Inspired by the adaptability and utility of game-based approaches, the TechEthos project adopted this method for its aims. TechEthos was an EU-funded project that dealt with the ethics of the new and emerging technologies anticipated to have high socio-economic impact. The project involved ten scientific partners and six science engagement organizations and ran from January 2021 to the end of 2023.

TechEthos aimed to facilitate “ethics by design”, that is, to bring ethical and societal values into the design and development of new and emerging technologies from the very beginning. The technological families covered in the project were climate engineering (Buchinger et al. 2022a), digital extended reality and natural language processing (Buchinger et al. 2022b), as well as neurotechnologies (Buchinger et al. 2022c). Combining philosophical perspectives with legal studies and social sciences, the interdisciplinary project followed a mixed-methods approach to produce operational ethics guidelines for researchers, developers, research ethics committees and policy makers (Footnote 2). One key focus of the project was the engagement of different stakeholders to reconcile the needs of research and innovation and to elicit excitements and concerns (Cannizzaro et al. 2023; Hiney and Vleugel 2023; Seedall et al. 2023).

Given the uncertainty accompanying the development path of emerging technologies and the challenge of dealing with diverse stakeholder groups involving different levels of understanding and perspectives on the ethics of such technologies, TechEthos applied a multi-stage and multi-stakeholder methodology. This methodology used scenarios as a backbone, whereby different societal groups’ reflections about ethical issues were explored through scenario narratives. Scenarios were further enriched through engagement with expert stakeholders before being adapted and integrated into the TechEthos Game.

The purpose of the TechEthos Game was to better understand citizens’ awareness, attitudes, and values toward new and emerging technologies. Three main steps were pursued: the development of the game-based participatory methodology, the recruitment of citizens, and the engagement of citizens in a series of 20 workshops across six different countries (Austria, Czech Republic, Romania, Serbia, Spain, Sweden). Findings were used to support the development of ethical guidelines (Hiney and Vleugel 2023) for each technology family, as well as a series of policy briefs to the European Commission.

Creating a serious game: the Triadic Game Design

Our decision to develop a game as part of the TechEthos project was rooted in the aim of eliciting participants’ perspectives towards the trio of technologies covered by the project—climate engineering, digital extended reality, and neurotechnology. Traditional methodologies in participatory research often rely on surveys, interviews, and focus groups, which, while informative, can at times lack the immersive quality needed to spur reflection and engagement. Games, with their dynamic nature as explored above, can bridge this gap, helping participants to internalize and understand the intricacies of a subject in an experiential manner.

Despite the advantages of using games in social science research, a challenge lies in developing a suitable approach. Existing games are often unsuitable, as different games in different research contexts seek different outcomes and address different stakeholder groups. For example, existing methods like the Envisioning Cards (Friedman and Hendry 2012), the Implication Fan as part of the Ethics Toolbox of the Berlin Ethics Lab (Fischer et al. 2024) or the Digital Ethics Compass (Danish Design Centre 2021) focus on ethical reflection with developers. For our purpose, however, we needed an approach that fostered discussion among laypersons and non-experts.

The game thus had to overcome two challenges at once: (1) create a low entry barrier and (2) convey as much information as needed while reducing complexity. The game mechanic therefore needed to be easy to understand yet suitable to the specifics of exploring a technology and its potential impacts on society. In the specific context of TechEthos, the goal of the game was to elicit the players’ awareness, attitudes, and values towards the technologies.

As these specific criteria could not be met across all three technology families through existing methods, we decided to develop a new game using the Triadic Game Design (TGD) method (Harteveld 2011). The TGD method entails three distinct phases, fusing the realities of emerging technologies with the meanings ascribed to them and the playful elements that make games engaging (Harteveld 2011).

- Reality: This phase covered the understanding of the core issues of the game: the context and intricacies surrounding the emerging technologies. To cover this, we drew from the background research conducted by the TechEthos project to set the foundation for our core content.

- Meaning: This phase refers to understanding the motivations, intended outcomes, and the larger purpose behind the game. Our ambition was to elicit participant attitudes towards the emerging technologies and their ethical and social implications. This also animated the meaning component of the game design.

- Play: Lastly, the play phase aims at developing an actionable gameplay. In this phase, elements of reality and meaning are fused with the tangible steps of play to yield a game aligned with our design purpose.

Following the TGD method, we ran several workshops with game designers, incorporating the feedback and insights from three co-creation workshops in which several important decisions regarding the direction of the game were made (Umbrello et al. 2022). For example, collaboration was considered highly important by the participants of the co-creation workshops. In terms of game design, this meant that the players work together against the game mechanics. Moreover, to gather meaningful data on people’s attitudes and values, we decided to have players represent themselves in the role of citizens called upon to make decisions that may impact the future of the world. The game needed to allow the players to make choices that resonated with their ethical standpoints towards the emerging technologies and to stimulate them to express those standpoints. To avoid remaining at an abstract level, we sought to embed players’ discussions in concrete socio-technical tensions. The game stages unresolved value conflicts such as individual benefit versus collective risk, or rapid innovation versus long-term precaution. By asking players to negotiate such trade-offs, the game prompts them to discuss how they feel and how they deliberate when confronted with competing social goals or ethical claims.

Regarding the game mechanics, the workshop participants pointed out that players must make trade-offs, such as taking one course of action and abandoning another, and that these choices have consequences. The decisions players take at one stage also impact the next and in turn shape the technology development through the ages. For example, discarding a technology card early in the game triggers the removal of dependent cards later in play, reinforcing the perception that choices have consequences and leave an impact on the social factors relevant to each technology family. Lastly, the game needed to address different technology families with their own timescales and social impacts. Some of them could be more focused on health or education, others on work, research, or social connections. In terms of game design, this meant that the rules of the game stayed the same for every technology, but each version was made with a different set of cards. This allows for easy adoption and the creation of specific materials tailored to the needs of each technology family. The game is thus set in two parts: a generic set of cards that is used for all versions of the game, and a specific deck of cards dedicated to each technology family.
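
The cascading effect of an early discard can be illustrated with a minimal sketch. The card names and the dependency map are hypothetical and are not taken from the actual TechEthos decks. The sketch also assumes, for illustration only, that a later card leaves play once every card it depends on has been removed; the rulebook may define dependency differently.

```python
# Hypothetical dependency map: card -> set of earlier cards it depends on.
# None of these names come from the real TechEthos card decks.
DEPENDS_ON = {
    "app_virtual_classroom": {"tech_virtual_reality"},
    "app_factory_twin": {"tech_digital_twins"},
    "issue_data_privacy": {"app_virtual_classroom", "app_factory_twin"},
    "issue_worker_monitoring": {"app_factory_twin"},
}


def cascade_discard(discarded, depends_on):
    """Return every card that leaves play when `discarded` is removed.

    Assumption: a card is removed once all cards it depends on are gone.
    """
    removed = {discarded}
    changed = True
    while changed:  # repeat until no further card becomes orphaned
        changed = False
        for card, parents in depends_on.items():
            if card not in removed and parents <= removed:
                removed.add(card)
                changed = True
    return removed


removed_cards = cascade_discard("tech_digital_twins", DEPENDS_ON)
print(sorted(removed_cards))
```

In this toy deck, discarding the digital twins card also removes the application and the ethical issue that rest on it alone, while the card that still has a surviving parent stays in play.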

The game’s novelty lies in integrating narrative immersion with value articulation: participants not only reflect abstractly on ethical dilemmas but also enact trade-offs through collaborative gameplay. This enables the game to serve both as a citizen engagement method and as a research instrument for capturing nuanced public perspectives, thereby extending the scope of existing tools. The game advances the field of participatory tools by combining experiential gameplay with structured qualitative data collection, with a focus on the lay public. Finally, its lightweight design means there is a low threshold for other developers to modify the game cards for play with different technology areas where the exploration of public values might be important.

In the following, we describe the gameplay that resulted from these game design decisions.

About the TechEthos Game

At the beginning of the game, the host introduces the players to the activity by reading out the first page of the rulebook:

“You have been chosen, from a wide array of applicants, to sit on your regional delegation to the Citizen World Council (CWC) and decide in good conscience what may be best for future generations of people and the Planet.

The CWC has to forge the future starting with a specific set of technologies that we see emerging and whose potential is not yet realized. It will be your duty to decide which technological developments you personally value the most for a better future.

Be careful: each of your decisions will have unforeseeable consequences on three aspects of society. At each step in the game, you will learn the impact of your choices. Your mission is to avoid that any of the three social factors reach their limit. Because if the impacts are too significant, the world as we know it will change beyond our recognition.

But do not despair! There is hope: if at some point the impact on a social factor during the game reaches its limits, the Citizen World Council has the power to respond with global actions that set ethical boundaries to technological developments. This will help cancel a card’s impact on social factors and make the world safe – at least for another round.

Let’s play!”

In the TechEthos Game, players take the role of members of the Citizen World Council (CWC), a fictional institution that regulates the development path of one of the technologies while maintaining a number of social factors (e.g., inequality, fairness) and trying to keep the world stable. As part of the CWC, they grapple with the profound responsibility of shaping the world’s technological trajectory. With each game decision, players navigate the intricate balance between advancing technology and its social ramifications. Unintended consequences on three distinct social factors evolve with each game move, and players must continuously assess and manage these repercussions. This means the players move through different stages and choose at each stage to keep or discard certain cards. In other words, players make choices between different technologies (Tech Age I) and their applications in everyday life (Tech Age II), and evaluate the potential ethical impacts of those technologies (Tech Age III).

Game components

For the game to be played, it needs a game host and three to seven additional players. The game materials consist of the following elements (see Fig. 1).

- Boardgame (1): The board game structures the discussion and offers a predefined layout for the cards.

- Tech Age Cards (21): The game divides into three phases called Ages, each with its own deck of cards detailing three technologies (Age 1), potential applications (Age 2), and anticipated ethical challenges (Age 3).

- World Cards (3): Each World Card describes three social factors that will be impacted by the players’ decisions and the consequences of the technologies they support. There are three World Cards, which set the level of difficulty for the game. In combination with the three Impact Tokens, the card tracks the evolution of those factors as participants play the game.

- Council Response Cards (10): Critical for course correction, these cards allow the council (players) to articulate and unanimously agree upon responses to technology-induced issues.

- Impact Cards (3): Each technology kept in the game will leave an impact on the world. This card holds all Tech Cards and lists their impact on one or more social factors.

- Vote Cards (14): Players receive one each of +1 and +2 Vote Cards, pivotal for game progression.

- Credits Card & Turn Cards (2): These succinctly encapsulate game rules and offer insights into the overarching TechEthos project.

- Rulebook (1): Explains the rules of the game and helps the facilitator to steer the conversations (Footnote 3).

Fig. 1 Game components of the TechEthos Game: Ages of Impact.

Game set-up

Players begin by positioning the gameboard and selecting one out of four technology families (climate engineering, digital extended reality, natural language processing, or neurotechnologies) for the session. The game components are arranged according to the rulebook and the gameboard. The difficulty level, ranging from Easy to Expert, determines the chosen World Card, setting the stage for the impending strategic maneuvering.

Gameplay

The game progresses through three different ages, and in each age the players are presented with different Tech Age Cards. In the first age, players are presented with three technologies that are part of the larger technology family. For example, when playing the card deck representing the technology family “digital extended reality”, the first three cards revealed introduce the technologies metaverse, virtual reality and digital twins. After the cards are revealed, each player shares their opinion on the technologies and explains to the others why, for example, the metaverse would be beneficial in the future, or why digital twins should not be developed further. The facilitator navigates the discussion and encourages each player to share what they are excited and concerned about. As soon as the discussion comes to a halt, the facilitator asks each player to cast their votes and to decide which technology to support further. The technology with the fewest votes is discarded.
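
The voting step of the first age can be sketched as follows. The three technology names come from the example above; the ballots are invented for illustration. The sketch assumes that each player places the +2 card on one technology and the +1 card on another, and it reports all lowest-scoring cards because the text does not state how ties are resolved.

```python
from collections import Counter


def tally_votes(cards, ballots):
    """Sum the vote weights per card; cards without any votes score 0."""
    totals = Counter({card: 0 for card in cards})
    for ballot in ballots:
        for card, weight in ballot.items():
            totals[card] += weight
    return dict(totals)


def least_supported(totals):
    """Return the card(s) with the lowest total (several if tied)."""
    lowest = min(totals.values())
    return sorted(card for card, score in totals.items() if score == lowest)


cards = ["metaverse", "virtual reality", "digital twins"]

# Hypothetical ballots: one dict per player, mapping card -> vote weight.
ballots = [
    {"metaverse": 2, "virtual reality": 1},
    {"virtual reality": 2, "digital twins": 1},
    {"virtual reality": 2, "metaverse": 1},
]

totals = tally_votes(cards, ballots)
print(totals)
print(least_supported(totals))
```

Returning a list rather than a single card leaves a tie for the group to settle, for instance through further discussion.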

In the second age, four cards are revealed explaining certain fields of application (see Fig. 2). After the players have read and understood the cards, the facilitator encourages the players to discuss the presented examples and share their excitement and concerns. Players again vote on which applications to support further, with the least supported applications discarded.

Fig. 2 Anatomy of a Tech Age Card.

The third and last age introduces certain principles and values that may be impacted by the previous choices taken. Here, players discuss the implications and again vote on which ethical issues to focus on as a World Council. After the final impacts of their decisions are revealed, players have a last opportunity to offer Council Responses to impacts generated by their choices.

Each decision taken by players impacts one or more social factors depicted on the World Card; these impacts are revealed at the end of each phase by consulting the Impact Card. Depending on the choices made, the Impact Token for one of the three social factors is moved forward. As soon as an Impact Token reaches the predefined limit of steps for its social factor, the societal structure becomes endangered and players might have to intervene by introducing solutions to safeguard societal wellbeing. The solution is written on a Council Response Card. Once all players reach an agreement, the Impact Token is set back one step and the game proceeds.
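
The impact-tracking loop described above can be sketched in a few lines. The class name, the third social factor, and all limits and impact values are hypothetical; only the rules that tokens advance with each impact and that an agreed Council Response sets a token back one step are taken from the text.

```python
from dataclasses import dataclass, field


@dataclass
class WorldCard:
    """Tracks one Impact Token per social factor (illustrative model)."""

    limits: dict  # social factor -> limit in steps (hypothetical values)
    tokens: dict = field(init=False)

    def __post_init__(self):
        # Every Impact Token starts at step 0.
        self.tokens = {factor: 0 for factor in self.limits}

    def apply_impact(self, impact):
        """Advance tokens and return the factors that reached their limit."""
        for factor, steps in impact.items():
            self.tokens[factor] += steps
        return [f for f in self.limits if self.tokens[f] >= self.limits[f]]

    def council_response(self, factor):
        """An agreed Council Response sets the token back one step."""
        self.tokens[factor] = max(0, self.tokens[factor] - 1)


world = WorldCard(limits={"inequality": 3, "fairness": 3, "privacy": 4})

endangered = world.apply_impact({"inequality": 2})
endangered = world.apply_impact({"inequality": 1, "fairness": 1})

# The council agrees on a response for every endangered factor.
for factor in endangered:
    world.council_response(factor)

print(world.tokens)
```

Keeping the limits in the World Card mirrors the idea that the chosen card sets the difficulty: a stricter card would simply carry lower limits.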

Winning or losing

The game’s underlying objective is to avert a societal breakdown across the three tech ages. The players’ ability to collaboratively steer clear of social factor thresholds determines the game’s outcome. Winning indicates a resilient world sustained through collaborative and wise Council action across all three tech ages, though it might not be the ideal world for every player. Post-game reflections allow players to deliberate on different paths and prospective solutions.

Discussion of gameplay results

The main intention behind the game was to involve citizens in the process of developing ethical guidelines for the respective emerging technologies—climate engineering (CE), digital extended reality (XR), natural language processing (NLP), and neurotechnologies (NT). Therefore, beyond being an immersive approach for science communication or an entertaining activity to spark the deliberate exchange of ideas, the main aim of the game is to elicit players’ attitudes towards technologies and invite them to share their concerns and excitement. To do this, we hosted several workshops all over Europe and collected a multitude of perspectives on the emerging technologies.

Participants and recruiting

To reach a broader audience and different voices, we engaged participants from six different European countries: Austria, Czech Republic, Romania, Serbia, Spain, and Sweden. With the support of local centers for science communication and museums (Footnote 4), we were able to conduct 20 workshops and engage 321 participants in total. In each country we involved participants with diverse backgrounds, with 30 per cent belonging to at least one vulnerable group (Footnote 5). Each participant engaged with one particular technology family: a total of 113 people participated in the Climate Engineering gameplay workshops, 65 in Digital Extended Reality, 26 in Natural Language Processing, and 117 in Neurotechnology.
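
As a quick consistency check, the participant counts reported above can be summed and expressed as shares of the total. The short sketch below uses only the figures given in the text; the variable names are illustrative.

```python
# Participant counts per technology family, as reported in the text.
participants = {
    "Climate Engineering": 113,
    "Digital Extended Reality": 65,
    "Natural Language Processing": 26,
    "Neurotechnology": 117,
}

total = sum(participants.values())

# Share of all participants per technology family, in percent (one decimal).
shares = {
    family: round(100 * count / total, 1)
    for family, count in participants.items()
}

for family, share in shares.items():
    print(f"{family}: {participants[family]} participants ({share}%)")
print(f"Total: {total}")
```

The four counts add up to the 321 participants stated, and the shares make visible that the Natural Language Processing workshops involved a considerably smaller group than the other three.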

The workshops were conducted between December 2022 and March 2023 and accompanied by a thorough collection of different data points. This research process was guided by three research questions:

- How aware are participants of the technologies presented?

- What is their attitude towards the presented technologies?

- Which values do citizens reveal when deliberating about these technologies, their applications, and potential implications?

The workshops, and in particular the game, were designed so that they yielded the data needed to answer these research questions.

Data Collection

To elicit citizens’ awareness, attitudes, and relevant values, we collected data in several ways during the gameplay workshop. We handed out a pre- and a post-survey to collect quantitative data on participants’ general awareness of the technologies and on how excited or concerned the participants were about the technology on a scale of 1-5. As stated above, the game was designed in a way that stimulated a discussion about the potential risks of the respective technology. These discussions were transcribed by the local partners and served as qualitative data on the reasons for excitement and concerns as well as the relevant values addressed by the participants. Each workshop followed the time plan shown in Table 1.

Awareness

In the TechEthos project, we sought to understand whether individuals had heard of the technology family or its respective technologies before (awareness). During the citizen engagement activities, participants were asked about their level of awareness at the beginning of the game exercise, after hearing a general background presentation on the workshop’s main technology family. Participants indicated their awareness with sticky dots on a two-dimensional scale ranging from “not really aware” to “somewhat aware” to “very aware.”

Across all participants from the six countries, the level of awareness is evenly distributed among the technology families. Overall, 70% of participants had heard of the technology families discussed, with slight differences between natural language processing and climate engineering. While the former seems to be more familiar to participants (one in three said they knew the technology very well), only one in five of the participants said they knew the latter technology family very well (see Fig. 3).

Fig. 3 Awareness of the technology families.

When looking more deeply into the technology families, we see specific standouts. The technology of which most participants indicated awareness was chatbots. As mentioned during the game phase of the workshop, one reason for their prominence is their use in current applications, for example voice assistants like Apple’s Siri or Alexa by Amazon (Comment 663, NLP). The other reason was the use of chatbots on websites, for example those of fashion retailers (Comment 435, NLP). Besides current applications, the participants also mentioned the latest developments in this field, like ChatGPT, which was first released (and covered by global news media) shortly before the project’s first workshop was conducted.

The second and third most prominent technologies after chatbots were Virtual Reality (89% are somewhat or very aware) and Brain-Computer Interfaces (74% are somewhat or very aware). One reason for their prominence is that both technologies are established tropes within science fiction and have thus been part of popular culture for more than forty years. In table discussions, participants regularly used science fiction (SF) films as a frame of reference to discuss the technologies. A facilitator from Spain, who hosted a session on neurotechnologies, noted that in the discussion, participants brought up “examples from movies like Minority Report” (Comment 526, NLP) and shared fears “of becoming cyborgs” (ibid.) when discussing Brain-Computer Interfaces. One participant of the XR session in Serbia stated that they feared “that we will stop communicating verbally and start living like in science fiction movies” (Comment 91, XR), while another participant from the Czech Republic expressed their belief that the fictional narratives have already become, or will in the near future become, reality, illustrating how SF shapes the realm of imagination: “It’s like the matrix. We’re already in the matrix. Every Science Fiction will become reality one day” (Comment 332, XR).

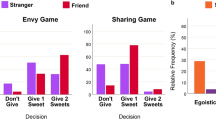

Attitudes

To investigate attitudes towards the technologies covered, we elicited participants’ degree of excitement or concern twice, through pre- and post-surveys connected to the gameplay workshops. In general, across the technologies covered, participant excitement prevailed over concern. However, we offer this observation noting that participants’ attitudes towards the emerging technologies were not an “either-or” affair. Most of the time, participants expressed combinations of excitement and concern over different facets of technologies and their implications. To give one example in the area of neurotechnologies, participants expressed excitement at prospects of improved health outcomes for patients, while also voicing concern that the technology might be used to manipulate users or that data collected might be misused.

A key result is therefore the importance of acknowledging participants’ conditional attitudes toward technologies. Participants might accept a technology if [X] is assured or if [Y] is prevented, where the two variables X and Y represent societal values that are important to participants and threatened by heedless deployment or application of a technology. To better understand this conditionality, it is necessary to explore the values participants share when they discuss the technologies, applications, implications, and dimensions of excitement and concern.

Values

Each of the workshops was documented and transcribed by the local partners, focusing on the comments and the arguments that participants exchanged during the gameplay. This resulted in a total of 782 comments. To elicit the citizen values, the project team pursued a grounded theory approach to qualitative coding and analysis of the comments (Bryant and Charmaz 2007). Four members of the author team, all social scientists with different backgrounds, ages and nationalities, were responsible for coding. Each researcher began by independently open coding a subset of the data. Coders worked in bursts of approximately 20–30 minutes, after which they took a short break before reconvening to discuss. To ensure consistency across the coding team, each coder then presented his or her subset of codes for each item, followed by a group discussion. The open codes assigned reflected the consensus of the group.

As the general set of open codes stabilized from round to round of coding, the team moved from discussing all open codes of their subset during a coding round to focusing only on those codes for which they felt there was a degree of ambiguity requiring group discussion. In total, all 782 statements were coded with 475 open codes. Next, the researchers axially coded the open codes to construct higher-level categories. A preliminary round of axial coding led to the construction of 84 axial codes from the 475 open codes. In a final consensus coding round, involving iterative discussion referencing the original statements, the first-round axial codes were re-coded into a final set of 25 citizen value categories. Definitions and exemplary quotations from each category are described below. This analysis affords a comparison of abstracted values for each technology surfaced through the workshop engagement with the public and other stakeholders from across six countries (see Fig. 4).

Fig. 4 Values mentioned by participants, sorted by frequency of mention.

In the following, we present the most prominent value categories that participants mentioned across technology families (Footnote 6).

Overarching results

The workshops, and particularly the game exercise, stimulated discussions about ways that emerging technologies should be designed, from participants’ perspectives. Following the “ethics by design” (d’Aquin et al. 2018) approach, which the TechEthos project subscribed to, the results reflect the values that are important to the participants when developing these technologies further. Across technology families and workshops, we observed three prominent value categories shared by participants.

First, safety and reliability, occurring in all four technology areas, manifested differently in each. In Climate Engineering (CE), participants were worried about the unknown effects and potential dangers (both to physical safety and health impacts, as well as the dangers to ecosystems) of the technology and questioned whether applications have been tested well enough to utilize them safely. In Digital Extended Reality (XR) and Natural Language Processing (NLP) participants valued potential contributions to a safer life, especially in the context of dangerous jobs, but also expressed worry about the addictiveness of the technology and its safe use, especially among younger generations. In Neurotechnologies (NT) safety mostly referred to the health impacts of the technology and a differentiation was made between invasive and non-invasive applications, with the latter causing more concern related to potential harms.

Second, equity, diversity and inclusion also recurred across technology areas, again with different manifestations in each. In CE, this value category considered individuals, but was more prominently concerned with countries and was closely related to issues of justice. Of particular concern were global distributive justice and intergenerational justice, as well as fairness in decision making regarding the use of the technologies. Following from these considerations, participants emphasized the importance of making these technologies available globally and making sure all can benefit from them regardless of socioeconomic status or location. In XR and NLP, the discussions more often focused on ensuring equal access to the technologies for all social groups. Participants raised concerns about the high costs of the technology and therefore the potential for exacerbating existing inequalities between social groups. In NT, the value category equity, diversity and inclusion was also linked to concerns regarding equal access to the technology. Participants were concerned that diverging forms of thinking might be normalized and eradicated instead of neurodivergence being respected and accepted, but also that any form of cognitive enhancement (if it works) would become available only to those who can afford it, resulting in a cognitive gap in our society. Furthermore, participants also expressed values around respect for neurodiversity in application areas, whether in the construction of models based on data or in application across medical or legal use cases.

Third and finally, participants expressed values related to responsible use and accountability. In CE, responsible use and accountability referred to clarity about which parties would be held responsible for potential disasters and to making sure that the applications of the technology are wise, thoughtful and well-planned. In XR and NLP, concerns in this value category centered on the question of who is responsible and accountable for the results of the technology and its consequences, given how many different actors are involved from the creation of the technology through to its utilization. Furthermore, citizens were worried about the misuse of the technology. In NT, the discussions focused more on the question of which sectors should use the technology. Citizens mentioned that well-planned use of the technology is crucial and that societies should prioritize its application in more important areas like health care, and not in entertainment. Furthermore, having clear accountability with regard to the collected data and for unintended consequences of the technology was a critical issue.

These results informed the TechEthos project’s development of guidelines to support ethics-by-design of the emerging technologies studied. Through the playful scenario of a Citizen World Council reflecting on exciting and concerning aspects of emerging technologies, participant feedback now has the potential to shape the actual development of these technologies. Consequently, the game contributes another approach to surfacing lay public values and concerns for enhancing democratic input to technological development (Felt and Wynne 2007).

Limitations of the approach

We would like to pause here to observe a few points regarding the collection and analysis of the results presented above. These points, we believe, accompany much qualitative social science research, but bear repeating in the interest of transparency. While the TechEthos Game was designed to elicit citizens’ values regarding emerging technologies, it is important to acknowledge that values are never elicited in a vacuum: they are shaped by cultural context, discursive framing, facilitation practices, and the design assumptions embedded in the game mechanics themselves. Therefore, the game itself is an artifact that participates in articulating values rather than merely observing them.

First, the game necessarily operationalises values through predefined cards, categories, and social factors. Although these elements were grounded in extensive interdisciplinary research and co-creation workshops, the cards, as well as the game mechanics, structure what can be articulated and how ethical concerns can be expressed. Some moral perspectives may be amplified by the framing of trade-offs and voting mechanisms, while others may be harder to express within a decision-oriented format. In this sense, the game privileges deliberative and discursive modes of ethical reasoning that align with certain democratic and European governance traditions.

Second, the Citizen World Council narrative and collaborative gameplay model embed implicit assumptions about how ethical decisions ought to be made: through collective discussion, negotiation, and compromise under conditions of shared responsibility. The game aims to create a structured space in which some forms of ethical reasoning become visible and discussable. While this framing proved effective for stimulating dialogue and reflection, it may resonate differently across cultural contexts or moral frameworks that place greater emphasis on authority, moral absolutes, or non-negotiable values.

Third, cultural sensitivity remains a central challenge when eliciting values across countries and languages. Although participants were able to deliberate in their local languages and contexts, the subsequent translation, coding, and abstraction into value categories inevitably involved interpretive choices by the research team. These choices reflect scholarly judgement and are thus not neutral in their approach, i.e., alternative categorizations are plausible. The value categories presented in this paper should therefore be understood as analytically constructed orientations emerging from the culturally situated engagements.

Fourth, as readers will observe, the created categories reflect the subjective choices of the coders in terms of titling and description. Every attempt was made to impart clarity, as well as to ensure the demarcations between categories were as discrete as possible. Nevertheless, it is plausible that another group might have abstracted a slightly different set of 25 values from the list of 84 axial codes. We believe that, although all coders worked together on the same team and had relatively similar academic backgrounds, their range of ages, expertise, genders, nationalities, and cultures lends a degree of robustness to the agreed-upon codes sufficient for reporting with confidence.

Recognizing these limitations is essential, particularly given the potential influence of this work on future serious game designers and participatory ethics practitioners. Beyond attention to the ethical content discussed during gameplay, ethical game design also requires reflexivity regarding the normative assumptions embedded in mechanics, narratives, and facilitation formats. Thus, rather than positioning the presented game as a value-neutral tool, we propose understanding it as an intervention that invites certain kinds of ethical reflection.

Beyond methodological considerations, systemic challenges that are inherent to the development of emerging technologies also constrain the real-world application of this game (and of similar approaches). These include, among others, the willingness and capacity of stakeholders to participate in such workshops. Furthermore, due to the qualitative nature of the approach, scaling to larger audiences can become challenging. Although the game itself is available to be played and adapted for different settings and technologies, analysis will inevitably remain context specific. When it comes to the adoption of results into guidelines, recommendations, policy, or design processes, corporate dominance in lobbying and profit-driven innovation remain, by and large, overwhelming forces countervailing calls for more responsible innovation. While our game cannot resolve these systemic barriers directly, it contributes by providing a low-threshold entry point for citizen voices to be collected, aggregated and activated to complement or advance other governance processes (e.g., elections, policy or awareness campaigns) seeking more responsible innovation.

Looking ahead, the TechEthos Game is ready to be adapted to other technological domains, scaled for different formats (e.g., online, classroom, or policymaking workshops), and integrated into ongoing governance arenas. Since its release, the game has already been adopted by the Austrian research project “MemorAI Styria” (MemorAI 2025). While its immediate impact is to enrich citizen engagement within a specific project, its design suggests pathways for institutionalization or for engaging with different stakeholders: embedding gameplay in ethics review, funding deliberations, and policy consultations, or using it for reflection with engineers, as will be tested in the European project “RobustifAI” (RobustifAI 2025). Such steps enhance the long-term relevance of serious games as tools for anticipatory and responsible innovation.

Conclusion

European research and innovation investments increasingly call for the “societal acceptance” of technologies. This implies that people can take or leave a technology being developed but have little effect in shaping its development. Yet as illustrated in the results presented above on participant awareness, attitudes and values, people do indeed have a voice and preferences that could, if considered directly, condition the direction of research and innovation, and its eventual “acceptance” by society. It is rather, perhaps, that such voices are heard too little or too late or given less weight in the context of research and innovation investments and technology development. As European R&I frameworks continue to strive toward reflecting European values, a recognition of the conditional nature of “acceptance” in “ethics by design” processes could improve technological development by directly orienting research and innovation toward prioritized values and addressing concerns associated with said values.

However, eliciting and integrating societal perspectives and their conditional acceptance remains a challenge. Although reflecting on the values that might be supported or threatened by a technology is a task that is often asked for in the area of responsible research and innovation, the method to capture these values often remains unclear and methodologically vague (Boenink and Kudina 2020). In this article, we presented an approach that offers a low threshold for engaging with citizens and discussing their perspectives towards emerging technologies. The TechEthos Game: Ages of Technology Impacts allows participants to immerse themselves in a profound discussion of their own attitudes, excitements, and concerns. As showcased, these discussions become a rich source for qualitative analyses of the underlying values that build the foundation on which the attitudes towards new and emerging technologies rest.

The “ethics by design” approach championed by TechEthos sought to identify guidelines to support inclusion of social and ethical concerns in the earliest possible stages of technology development. In presenting the prioritized values of safety and reliability, equity, diversity and inclusion, and responsible use and accountability, this project presents a clear opportunity for policymakers, businesses, and researchers interested in pursuing climate engineering, digital extended reality technologies, natural language processing or neurotechnologies to have a better chance of addressing the core values and concerns of people directly and indirectly affected by these technologies. Indeed, the above values illustrate that there is a range of potential benefits and potential burdens associated with the development of these technologies, often as two sides of the same coin. For example, the ultimate aims of climate engineering are vital: addressing climate change is more urgent now than ever before. However, the cure must not be worse than the disease, which is where conditional acceptance priorities of equity and accountability, for example, come into play.

Whether or not “society” “accepts” a technology is an unhelpful way to consider technology development. It inherently diminishes the legitimate values and concerns of millions of people and, further, does not recognize that different groups of people may have different values and concerns (rather than being one homogenous “society”). Our experiences using the TechEthos Game, with more than 300 participants across six countries, speak to how “societal acceptance” is contingent. To elaborate: “acceptance” is contingent on who is being asked about the technological features or goals. “Acceptance” is also contingent on whether researchers, policy makers, and business interests genuinely, “by design,” work to address societal priorities in the process of technology development. As one table facilitator reported on a discussion during one of the workshops:

“They concluded that they had arrived at the desired world because everyone had had a say in decisions. The group was heterogeneous in terms of gender and age and this made it possible to approach problems from different perspectives. The young people from vulnerable groups at the table highlighted the fact that they had felt important during the game because it was the first time someone had listened to them” (Comment 43, XR).

To advance responsible innovation, continued R&I investments in climate engineering, digital extended reality, natural language processing and neurotechnology would do well to foster equity, reliability, and regard for healthy people and planet by design, and to avoid undermining these values, whether explicitly or by not attending to them.

Data availability

The datasets generated by the survey research and analyzed during the current study are available in the Dataverse repository: https://dataverse.harvard.edu/dataset.xhtml?persistentId=doi:10.7910/DVN/BDKMOQ.

Notes

All the materials are available as CC-BY-NC at the following website: https://doi.org/10.5281/zenodo.12206042

We collaborated with ScienceCenter-Netzwerk (Austria), iQLANDIA (Czech Republic), Bucharest Science Festival (Romania), Center for the Promotion of Science (Serbia), Parque de las Ciencias (Spain), and VA – Vetenskap & Allmänhet (Sweden).

This would cover participants belonging to the following groups: socio-economic disadvantage (e.g., youth over 18 years old not in education, employment or training; homelessness); social and physical isolation (e.g., people living in rural, remote and regional areas, isolated elderly); gender and LGBTQ+; minority status, including Roma communities, migrants, refugees, and asylum seekers; learning difficulties and physical difficulties and disabilities; and mental and physical health, including patients with chronic or incurable diseases. When recruiting participants, the local partners collaborated with trusted associations providing relevant services. No questions regarding vulnerability were to be asked directly to participants. The recruitment by the associations was a sufficient indicator of belonging to this societal group. Furthermore, the local partners could decide to hold the event on their premises or at an external venue where populations belonging to groups that have been identified as vulnerable could more easily access and participate (e.g., community centers, the venues of local associations, temporary/pop-up activity spaces, etc.). For example, our local partner from Sweden hosted a game workshop on Neurotechnologies at an elderly care home.

For an in-depth analysis of the results, please refer to the official report (Buchinger et al., 2023a).

References

Adomaitis L, Grinbaum A (2023) XR and General Purpose AI: from values and principles to norms and standards. Deliverable to the European Commission. TechEthos. https://www.techethos.eu/xr-from-values-to-norms-and-standards/

Bayeck R (2020) Examining Board Gameplay and Learning: A Multidisciplinary Review of Recent Research. Simulation & Gaming, 51. https://doi.org/10.1177/1046878119901286

Bernstein MJ, Nielsen MW, Alnor E, Brasil A, Birkving AL, Chan TT, Griessler E, de Jong S, van de Klippe W, Meijer I, Yaghmaei E, Nicolaisen PB, Nieminen M, Novitzky P, Mejlgaard N (2022) The societal readiness thinking tool: a practical resource for maturing the societal readiness of research projects. Sci Eng Ethics 28(1):6. https://doi.org/10.1007/s11948-021-00360-3

Bernstein MJ, Mehnert W, Nishi M, Mandzhieva R, Csabi A, Buchinger E (2023) Key messages on ethical values and principles for neurotechnology development and use. Deliverable to the European Commission. TechEthos. https://www.techethos.eu/key-messages-ethical-governance-neurotechnologies/

Boenink M, Kudina O (2020) Values in responsible research and innovation: From entities to practices. J Responsible Innov 7(3):450–470. https://doi.org/10.1080/23299460.2020.1806451

Bontoux L, Sweeney JA, Rosa AB, Bauer A, Bengtsson D, Bock A-K, Caspar B, Charter M, Christophilopoulos E, Kupper F, Macharis C, Matti C, Matrisciano M, Schuijer J, Szczepanikova A, van Criekinge T, Watson R (2020) A game for all seasons: lessons and learnings from the JRC’s scenario exploration system. World Futures Rev 12(1):81–103. https://doi.org/10.1177/1946756719890524

Brundage M, Guston DH (2019) Understanding the movement(s) for responsible innovation. In International handbook on responsible innovation. Edward Elgar Publishing, p 102–121. https://www.elgaronline.com/display/edcoll/9781784718855/9781784718855.00014.xml

Bryant A, Charmaz K (2007) The SAGE handbook of grounded theory. SAGE Publications Ltd, https://doi.org/10.4135/9781848607941

Buchinger E, Kinegger M, Zahradnik G, Bernstein MJ, Porcari A, Gonzalez G, Pimponi D, Buceti G (2022a) Climate Engineering. TechEthos Project Factsheet based on TechEthos technology portfolio: assessment and final selection of economically and ethically high impact technologies, Deliverable 1.2 to the European Commission. TechEthos. https://www.techethos.eu/in-short-climate-engineering/

Buchinger E, Kinegger M, Zahradnik G, Bernstein MJ, Porcari A, Gonzalez G, Pimponi D, Buceti G (2022b) Digital Extended Reality. TechEthos Project Factsheet based on TechEthos technology portfolio: Assessment and final selection of economically and ethically high impact technologies, Deliverable 1.2 to the European Commission. TechEthos. https://www.techethos.eu/in-short-digital-extended-reality/

Buchinger E, Kinegger M, Zahradnik G, Bernstein MJ, Porcari A, Gonzalez G, Pimponi D, Buceti G (2022c) Neurotechnologies. TechEthos Project Factsheet based on TechEthos technology portfolio: assessment and final selection of economically and ethically high impact technologies, Deliverable 1.2 to the European Commission. TechEthos. https://www.techethos.eu/in-short-neurotechnologies/

Buchinger E, Mehnert W, Csabi A, Nishi M, Bernstein MJ, Gonzales G, Porcari A, Grinbaum A, Adomaitis L, Lenzi D, Rainey S, Umbrello S, Vermaas P, Paca C, Alliaj G, Whittington-Davis A (2023a) D3.1 Evolution of advanced TechEthos scenarios. TechEthos Project Deliverable to the European Commission. TechEthos. https://www.techethos.eu/multi-stakeholder-evolution-of-techethos-scenarios-on-ethical-issues-in-climate-engineering-digital-extended-reality-and-neurotechnologies/

Buckley JA, Thompson PB, Whyte KP (2017) Collingridge’s dilemma and the early ethical assessment of emerging technology: The case of nanotechnology enabled biosensors. Technol Soc 48:54–63. https://doi.org/10.1016/j.techsoc.2016.12.003

Cannizzaro S, Bhalla N, Brooks L, Richardson K, Francis B, Lenzi D (2023) TechEthos Deliverable D5.3: Suggestions for the revision of existing operational guidelines for climate engineering, neurotechnologies and digital XR technologies. TechEthos. https://www.techethos.eu/suggestions-for-the-revision-of-existing-operational-guidelines-for-climate-engineering-neurotechnologies-and-digital-xr-technologies/

Collingridge D (1980) The social control of technology. Pinter London

d’Aquin M, Troullinou P, O’Connor NE, Cullen A, Faller G, Holden L (2018) Towards an “Ethics by design” methodology for AI research projects. In Proceedings of the 2018 AAAI/ACM conference on AI, ethics, and society, p 54–59. https://doi.org/10.1145/3278721.3278765

de Saille S (2015) Innovating innovation policy: The emergence of ‘Responsible Research and Innovation’. J Responsible Innov 2(2):152–168. https://doi.org/10.1080/23299460.2015.1045280

Danish Design Centre (2021) The Digital Ethics Compass. DDC – Dansk Design Center. https://ddc.dk/vaerktoejer/toolkit-the-digital-ethics-compass/

Dayé C, Prunč RL, Hofmann-Wellenhof M (2023) The Mammoth prophecies: a role-playing game on controversies around a socio-technical innovation and its effects on students’ capacities to think about the future. Eur J Futures Res 11(1):7. https://doi.org/10.1186/s40309-023-00219-9

Duke RD (2014) Gaming: the future’s language. wbv Media GmbH & Company KG, Bielefeld

European Commission (2020) Institutional changes towards responsible research and innovation: Achievements in Horizon 2020 and recommendations on the way forward. Directorate General for Research and Innovation. https://data.europa.eu/doi/10.2777/682661

Felt U, Wynne B (eds) (2007) Taking European knowledge society seriously: report of the Expert Group on Science and Governance to the Science, Economy and Society Directorate, Directorate-General for Research, European Commission. Office for Official Publ. of the Europ. Communities. https://op.europa.eu/en/publication-detail/-/publication/5d0e77c7-2948-4ef5-aec7-bd18efe3c442#

Felt U, Fochler M, Sigl L (2018) IMAGINE RRI. A card-based method for reflecting on responsibility in life science research. J Responsible Innov 5(2):201–224. https://doi.org/10.1080/23299460.2018.1457402

Floridi L (2019) The logic of information: a theory of philosophy as conceptual design. Oxford University Press

Fischer N, Mehnert W, Ammon S (2024) Ethics Toolbox. Berlin Ethics Lab—Toolbox. https://www.tu.berlin/en/philtech/berlin-ethics-lab/ethics-toolbox

Foley RW, Bernstein MJ, Wiek A (2016) Towards an alignment of activities, aspirations and stakeholders for responsible innovation. J Responsible Innov 3(3):209–232. https://doi.org/10.1080/23299460.2016.1257380

Francis B, Lenzi D, Bourban M (2023a) Key messages for the ethical governance of Carbon Dioxide Removal (CDR). TechEthos. https://www.techethos.eu/key-messages-ethical-governance-cdr/

Francis B, Lenzi D, Bourban M (2023b) Key messages for the ethical governance of Solar Radiation Modification (SRM) research. TechEthos. https://www.techethos.eu/key-messages-ethical-governance-srm/

Freese M, Lukosch H, Wegener J, König A (2020) Serious games as research instruments—do’s and don’ts from a cross-case-analysis in transportation. Eur J Transp Infrastruct Res 20(4). https://doi.org/10.18757/ejtir.2020.20.4.4205

Friedman B, Hendry D (2012) The envisioning cards: a toolkit for catalyzing humanistic and technical imaginations. In Proceedings of the SIGCHI conference on human factors in computing systems, p 1145–1148. https://doi.org/10.1145/2207676.2208562

Friedman B, Hendry DG (2019) Value sensitive design: shaping technology with moral imagination (Illustrated Edition). The MIT Press

Genus A, Stirling A (2018) Collingridge and the dilemma of control: Towards responsible and accountable innovation. Res Policy 47(1):61–69. https://doi.org/10.1016/j.respol.2017.09.012

Gugerell K (2023) Serious games for sustainability transformations: Participatory research methods for sustainability - toolkit #7. GAIA - Ecol Perspect Sci Soc 32(4):292–295. https://doi.org/10.14512/gaia.32.3.5

Guston DH (2014) Understanding ‘anticipatory governance’. Soc Stud Sci 44(2):218–242. https://doi.org/10.1177/0306312713508669

Harteveld C (2011) Triadic game design: balancing reality, meaning and play. Springer, London

Hiney M, Vleugel M (2023) TechEthos Deliverable D5.1: Complementing the ALLEA European Code of Conduct for Research Integrity. TechEthos Project Deliverable. TechEthos. https://www.techethos.eu/complementing-the-allea-european-code-of-conduct-for-research-integrity/

Khaled R, Vasalou A (2014) Bridging serious games and participatory design. Int J Child-Computer Interaction. https://doi.org/10.1016/j.ijcci.2014.03.001

Kuhlmann S, Rip A (2014) Research policy must rise to a grand challenge. Research Europe 2013;1–11. https://doi.org/10.1016/j.respol.2012.07.011

Mazzucato M (2018) Mission-oriented innovation policies: Challenges and opportunities. Ind Corp Change 27(5):803–815. https://doi.org/10.1093/icc/dty034

MemorAI (2025) MemorAI Styria. https://memorai.uni-graz.at/de/

Monteiro-Krebs L, Geerts D, Sanders K, Caregnato SE, Zaman B (2024) Board games as a research method: a case study on research game design and use in studying algorithmic mediation. In Extended Abstracts of the 2024 CHI conference on human factors in computing systems, p 1–8. https://doi.org/10.1145/3613905.3637116

Novitzky P, Bernstein MJ, Blok V, Braun R, Chan T-TT, Lamers W, Loeber A, Meijer I, Lindner R, Griessler E (2020) Improve alignment of research policy and societal values. Science 369(6499):39–42. https://doi.org/10.1126/science.abb3415

Owen R, Bessant J, Heintz M (eds.) (2013) Responsible innovation: managing the responsible emergence of science and innovation in society, 1st edn. Wiley. https://doi.org/10.1002/9781118551424

Owen R, Von Schomberg R, Macnaghten P (2021) An unfinished journey? Reflections on a decade of responsible research and innovation. J Responsible Innov 8(2):217–233. https://doi.org/10.1080/23299460.2021.1948789

Pueyo-Ros J, Comas J, Säumel I, Castellar JAC, Popartan LA, Acuña V, Corominas L (2023) Design of a serious game for participatory planning of nature-based solutions: the experience of the Edible City Game. Nat-Based Solut 3: 100059. https://doi.org/10.1016/j.nbsj.2023.100059

Pfotenhauer SM, Juhl J (2017) Innovation and the political state: beyond the myth of technologies and markets. In Godin B, Vinck D (eds), Critical studies of innovation: alternative approaches to the pro-innovation bias. Edward Elgar Publishing

RobustifAI (2025) RobustifAI|Generative AI through human-centric integration of neural and symbolic methods. https://robustifai.eu/

Schot J, Steinmueller WE (2018) Three frames for innovation policy: R&D, systems of innovation and transformative change. Res Policy 47(9):1554–1567. https://doi.org/10.1016/j.respol.2018.08.011

Seedall C, Lindemann T, Klar R, Tambornino L (2023) Criteria for ethical review by RECs in emerging technology research. TechEthos Project Deliverable. TechEthos. https://www.techethos.eu/criteria-for-ethical-review-by-recs/

Speelman EN, Escano E, Marcos D, Becu N (2023) Serious games and citizen science; from parallel pathways to greater synergies. Curr Opin Environ Sustainability 64: 101320. https://doi.org/10.1016/j.cosust.2023.101320

Stilgoe J, Owen R, Macnaghten P (2013) Developing a framework for responsible innovation. Res Policy 42(9):1568–1580. https://doi.org/10.1016/j.respol.2013.05.008

Umbrello S, Vermaas P, Paca C, Alliaj G, Nishi M, Whittington A, Jouvenot F, Bernstein MJ, Mehnert W, Buchinger E (2022) D3.2 Tools to develop and advance scenarios dealing with the ethics of new technologies. TechEthos Project Deliverable. TechEthos. https://www.techethos.eu/the-techethos-game-ages-of-technology-impacts/

Umbrello S, Bernstein MJ, Vermaas PE, Resseguier A, Gonzalez G, Porcari A, Grinbaum A, Adomaitis L (2023) From speculation to reality: enhancing anticipatory ethics for emerging technologies (ATE) in practice. Technol Soc 74: 102325. https://doi.org/10.1016/j.techsoc.2023.102325

Urquhart LD, Craigon PJ (2021) The Moral-IT Deck: a tool for ethics by design. J Responsible Innov 8(1):94–126. https://doi.org/10.1080/23299460.2021.1880112

van den Hoven J (2013) Value sensitive design and responsible innovation. In Owen R, Bessant J (eds.), Responsible innovation. John Wiley & Sons, Ltd, p 75–83. https://doi.org/10.1002/9781118551424.ch4

Vervoort JM (2019) New frontiers in futures games: Leveraging game sector developments. Futures 105:174–186. https://doi.org/10.1016/j.futures.2018.10.005

von Schomberg R (2013) A Vision of Responsible Research and Innovation. In Owen R, Bessant JR, Heintz M (eds.), Responsible innovation: managing the responsible emergence of science and innovation in society. Wiley & Sons Ltd, p 51–74

Weber KM, Rohracher H (2012) Legitimizing research, technology and innovation policies for transformative change. Res Policy 41(6):1037–1047. https://doi.org/10.1016/j.respol.2011.10.015

Winner L (1980) Do artifacts have politics? Daedalus 109(1):121–136

Wynne B (1994) Public understanding of science. In Jasanoff S, Markle G, Peterson J, Pinch T (eds.) Handbook of science and technology studies. Sage, Thousand Oaks, p 361–388

Funding

Open Access funding enabled and organized by Projekt DEAL.

Author information

Contributions

Wenzel Mehnert: Conceptualization, Methodology, Investigation, Data Curation, Writing—Original Draft, Writing—Review & Editing, Visualization. Michael J. Bernstein: Conceptualization, Methodology, Investigation, Data Curation, Writing—Original Draft, Writing—Review & Editing, Supervision. Steven Umbrello: Conceptualization, Methodology, Writing—Original Draft. Alexandra Csabi: Data Curation, Writing—Original Draft. Masafumi Nishi: Data Curation, Writing—Original Draft. Renata Mandzhieva: Data Curation, Writing—Original Draft. Greta Alliaj: Methodology, Investigation, Writing—Original Draft, Project administration. Pieter E. Vermaas: Conceptualization, Methodology, Writing—Review & Editing, Supervision.

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethical approval

Name of the approval body: De Montfort University, Leicester, UK. Approval number: 508517. Date of approval: 24 Oct 2022. Scope of approval: face-to-face research interactions, including interviews, focus groups, and workshops; travel for research purposes; any activity that could be considered ‘fieldwork’.

Informed consent

How: All informed consent was obtained in written form (see below).

When: Informed consent was obtained on the day of each workshop. In total, 20 workshops were conducted in six countries between December 2022 and March 2023. During the introduction to each workshop, participants were briefed on research ethics and data collection, and on the context and planning (timeline, schedule, etc.) of the workshop. Participants also received a packet containing a unique, randomized ID and an informed consent sheet, which covered information on data use and consent to publish the results.

By whom: Informed consent was obtained by the partner institutions that facilitated the workshops: (1) ScienceCenter-Netzwerk (Austria), (2) iQLANDIA (Czech Republic), (3) Bucharest Science Festival (Romania), (4) Center for the Promotion of Science (Serbia), (5) Parque de las Ciencias (Spain), and (6) VA – Vetenskap & Allmänhet (Sweden).

From whom: In total, 331 participants took part in the 20 workshops. They included members of vulnerable groups: socio-economic disadvantage (e.g., youth over 18 years old not in education, employment or training, or homelessness); social and physical isolation (e.g., people living in rural, remote and regional areas, isolated elderly); gender and LGBTQ+; minority status, including Roma communities, migrants, refugees, and asylum seekers; learning difficulties and physical difficulties and disabilities; mental and physical health, including patients with chronic or incurable diseases. It was explicitly stated that there were no minors (or persons under 16, as per legislation) among the participants and that every participant was capable of giving consent. For more information on the participants, see chapter 5.2 in D3.1_Evolution_of_advanced_TechEthos_scenarios_draft-1.pdf.

Scope of the consent: The Informed Consent Form handed out to each participant is reproduced below.
Informed Consent Form

I, the undersigned, confirm that I have read and understood the information about the project, as described in the information sheet. I have been given the opportunity to ask questions about the activity in which I am participating and the survey. I am participating in this research voluntarily, meaning that I decide whether I participate or not. I am free to stop participating at any time. I understand that I will be asked to provide my professional or personal views. I may refuse to answer any questions I do not wish to discuss, without any consequences.

I understand that my answers to the survey will be separated from any information that can identify me and stored separately. I understand that the workshop will not be recorded but that facilitators will write down notes from the discussion, without attributing them to a specific person. I understand that the information gathered during this activity (non-identifiable to specific persons) will be used for a report of the project which will be submitted to the European Commission and made available publicly on the project website and in a public repository. The data may also be used for other related research outputs such as publications in scientific journals.

Under the General Data Protection Regulation 2016/679 (art. 13 and 14), the project has an obligation to inform me of the purpose of the collection, use, storage, and retention of the information I have provided. I understand that the project will only collect information that is relevant to its activities. Any personal information will be stored on the organiser’s internal servers and will be accessible only to those involved in the TechEthos project. The project will not transfer my personal information to third parties outside the project. I have the right to access data about myself and correct any errors. I have the right to obtain the restriction of processing or the right to transmit my data to another controller under the specific terms of the General Data Protection Regulation. In addition, I have the right to object to the processing of my personal data. If I am of the opinion that my data is processed unlawfully, I have the right to file a complaint with a supervisory authority. I understand that this activity conforms to the guidelines of the European Commission.

I can contact person X, leader of the activities of the project in country X, with any queries relating to my data or the project itself, by email (email X) or by phone (phone no X).

Data protection officer at the level of the overall TechEthos project: Beatrice Kornelis (beatrice.kornelis@ait.ac.at). Data protection officer within the organization X: to be added.

Participant’s information: Name______ (Organisation)_______ Date and place_________ Signature________

Consent form: All participants have been fully informed that their anonymity is assured, why the research is being conducted, and how their data will be utilised. There was no risk implied in participating in the study. For more, see the attached Consent form that was handed out at the workshops.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Mehnert, W., Bernstein, M.J., Umbrello, S. et al. Ethical playgrounds: unveiling a serious game for technology ethics within the TechEthos project. Humanit Soc Sci Commun 13, 484 (2026). https://doi.org/10.1057/s41599-026-06645-x

DOI: https://doi.org/10.1057/s41599-026-06645-x