Abstract

Co-design has been suggested to improve intervention effectiveness and sustainability. However, co-design of digital health interventions is inconsistently reported. This umbrella review aims to synthesize what is known about co-design of digital health interventions. We searched five databases from inception. Reviews that reported on co-design methodologies used in digital health were eligible. Information on review type, health conditions, and reported specifics of co-design was extracted and synthesized. Methodological quality was assessed using the AMSTAR 2 tool. We included 21 reviews published between 2015 and 2023. Co-design participants included patients, caregivers and healthcare professionals. The frequency and breadth of participant involvement in co-design activities were reported in fewer than half of reviews. Participants evaluated intervention co-design as a positive process. All reviews were rated as critically low quality. This umbrella review highlights the inconsistent reporting of co-design in digital health. Here, we emphasize the importance of creating guidelines to direct co-design activities.


Introduction

Digital health interventions are health interventions delivered through digital tools or communication technologies which collect, store, share and analyze health information for purposes of improving patient health and health care delivery1. This umbrella term includes health interventions delivered through a broad range of digital tools including but not limited to wearable devices, mobile apps, texting through smartphones, and telehealth1,2,3. The number and popularity of digital health interventions have grown rapidly over the past decade as part of increased interest in healthcare digitalization and its potential to improve access to (personalized) care at lower costs3,4. Digital health interventions have been associated with overall positive changes in disease self-management, clinical outcomes, and quality of life in patient populations including young adults, pediatric patients, and older adults5,6,7. In addition, many studies have reported these interventions to be highly acceptable to patients, family members, and clinicians8,9. Clinically implemented digital health interventions can provide actionable data to support policy making and fiscally responsible allocation of public funding. Digital health data generated in a structured way may also provide academic and industry partners with evidence of real-world innovation utility. Despite these successes, there is increasing awareness of field-related challenges, including the rarity with which these interventions are subjected to rigorous scientific evaluation, lack of adoption by end-users, and poor integration into routine clinical care3.

Design and development of digital health interventions are complex, and the lack of end-user involvement in the process is a key contributor to limited intervention use by end-users, adversely affecting potential impact on health outcomes and sustained integration into practice10,11,12. Co-design, a collective creative approach in which varied stakeholders including patients, clinicians and policy makers are actively involved in the development, design and implementation of interventions, has been suggested to address this pitfall13. Co-design enables researchers and designers to embed the specific needs, attitudes, and values of end-users and key contributors early in intervention development while pre-emptively identifying and addressing potential barriers to adoption10,11,12,13. Co-design also addresses, in part, the ethical imperative to engage patients, clients, and families meaningfully in research related to them.

Despite these potential positive impacts, co-design strategies are inconsistently implemented in digital health intervention development and often involve only limited end-user involvement, particularly of vulnerable populations14. Further, a clear understanding of how best to conduct co-design of digital health interventions from a practical point of view, especially when considering how to engage the diversity of health system users, remains elusive. This knowledge is critical to help key audiences, including health funders, policy makers and practitioners, identify which co-design approaches and activities may be most useful for developing effective interventions. This review aims to address this gap by answering the question: what is known about the practical methods used to conduct co-design of digital health interventions (including setting, population, intervention details, session length, and co-design strategy) and about the breadth and depth of end-user involvement in the process?

Results

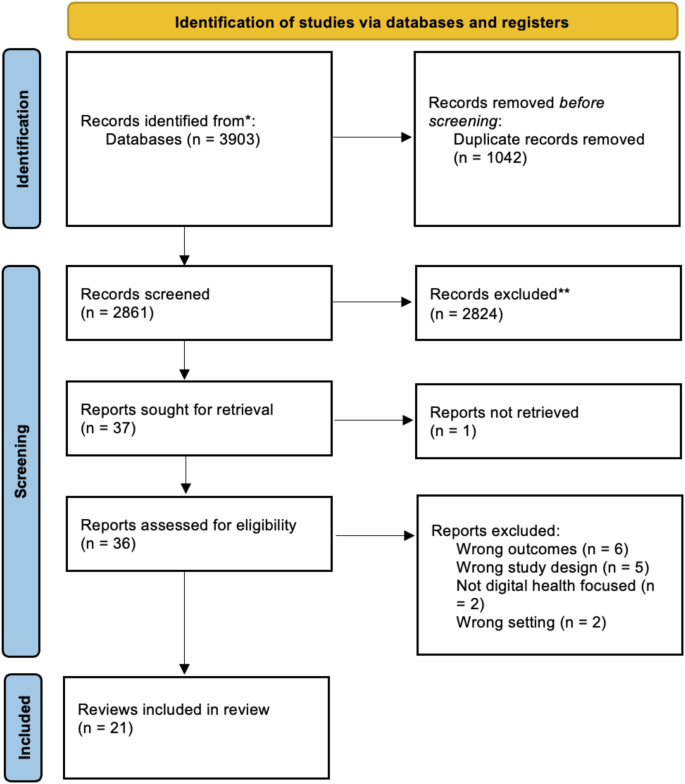

Our search identified 3903 titles and abstracts. After excluding duplicates, we screened 2861 for inclusion and then 37 full-text articles. Twenty-one were included in the final analysis (Fig. 1). Reasons for exclusion included outcomes not matching inclusion criteria (n = 6), wrong study design (n = 5), not digital health focused (n = 2) and wrong setting (n = 2).

This flowchart, adapted from the PRISMA 2020 Flow Diagram, shows the number of records identified from the search (2861 non-duplicate records), the number of records excluded based on title and abstract (2824), the number of studies excluded based on full-text review (15), and the reasons for exclusion. Twenty-one reviews were included in this analysis.

Study characteristics

Table 1 presents the characteristics of included reviews. Reviews were published between 2015 and 2023 and most often originated from Australia (n = 4)15,16,17,18, the United Kingdom (n = 2)19,20, Canada (n = 2)21,22, the United States (n = 2)23,24, the Netherlands (n = 2)25,26 and Denmark (n = 2)27,28. As shown in Fig. 2, papers were recent, most often published in 2022 (n = 6)19,23,25,29,30,31, 2021 (n = 3)17,32,33, 2020 (n = 3)26,27,20 and 2019 (n = 3)18,21,24. Most reviews were identified as systematic reviews (n = 11)16,17,19,23,24,26,30,31,32,34,35 or scoping reviews (n = 6)18,21,25,27,29,28. Other review types included literature reviews (n = 2)15,32, rapid reviews (n = 1)22, and practitioner reviews (n = 1)20. Reviews identified between 9 studies35 and 433 studies19. Authors referred to interventions in their reviews as digital health (n = 6)19,21,28,31,32,20, mobile health (n = 6)17,18,22,30,31,35 or electronic health (n = 3)23,25,26. Other classifications included serious digital gaming interventions (n = 1)34, information communication technologies (n = 1)24, assistive technology (n = 1)27, technology-based interventions (n = 1)16, health-related technology (n = 1)33 and a combination of digital and mobile health (n = 1)31.

This bar graph shows the number of reviews (y-axis) published per year (x-axis). The cumulative number of reviews is also depicted by the line through the bar graph.

Most commonly, reviews focused on co-design of digital interventions targeted towards individuals with chronic conditions (n = 8)18,29,21,27,28,30,32,20 or towards a combination of individuals with chronic conditions and health promotion in healthy individuals (n = 8)15,16,17,23,24,26,33,35. Further, reviews identified studies focused broadly on acute and chronic conditions and health promotion in healthy individuals (n = 1)19, on health promotion in healthy individuals only (n = 1)34, and on a combination of acute and chronic conditions (n = 2)22,31. Finally, one review did not report on the specific conditions included25.

A variety of terms were used to refer to co-design including co-design (n = 3)17,22,33, human factor approaches (n = 1)15, participatory design (n = 3)26,29,34, community-based participatory research (n = 1)35, human-centered development (n = 2)25,30, patient-centered methods for design and development (n = 1)24, involvement of end-users in design and/or test phases (n = 1)27. The remaining studies used multiple terms to describe co-design throughout their reviews (n = 9)16,18,19,21,23,28,31,32,20.

Reviews most frequently (n = 17)15,16,17,18,22,23,24,25,26,27,28,30,31,32,33,20,35 aimed to summarize or synthesize the current state of co-design approaches used in their identified interventions and health conditions. In addition to synthesizing the current state of co-design activities, four reviews had more specific goals; for example, Henni et al. aimed to investigate the needs and perceived barriers of people with impairments as they pertained to user engagement with digital health interventions29.

Co-design participants

Most reviews (n = 19) included a range of co-design participants such as patients, caregivers, healthcare professionals, policy makers, teachers, and behavior specialists. The remaining two reviews24,33 reported on studies which involved only patients in co-design processes. Ten of 21 reviews18,29,23,26,27,28,30,32,34,35 discussed co-design session sample sizes; when reported by included studies, these ranged from 2 participants32 to 1000 participants35.

Reviews reported on engaging patients ranging from children to older adults (n = 7)15,29,22,24,25,31,34, older adults (≥60 years) (n = 6)18,23,28,30,32,33, adolescents to adults (n = 2)17,35, children and young people (n = 2)16,20, children (≤18 years) (n = 1)21, or adults (n = 1)27; two reviews did not provide data on participant ages19,26. Specific participant age ranges, or definitions of terms such as ‘children’, were often not reported. A single review presented data on study participant race and ethnicity35 and three reported on gender or sex16,29,35. The review by Eyles et al., which reported on race and ethnicity as well as gender or sex, emphasized that in their identified studies, information on the age, gender and socioeconomic position of participants and stakeholders was generally poorly reported35.

Co-design activities

All reviews described the co-design activities used by included studies, with wide variation in the level of detail provided. Surveys were the most frequently used quantitative approach to enable co-design, reported in 11 reviews15,17,21,23,24,25,28,32,20,34,35. Focus groups and interviews were also frequent, reported in 17 reviews15,17,18,19,29,22,23,24,25,27,28,30,20,32,33,34,35 and 14 reviews15,17,19,29,22,23,24,25,27,28,30,20,32,34, respectively. Other qualitative approaches reported in the reviews were observation (n = 10)15,17,21,23,27,28,30,31,33,35 and think-aloud strategies (n = 6)18,19,23,31,33,20. Various creative co-design activities were also reported, including storyboarding (n = 6)16,17,26,30,20,35, persona/scenario building (n = 6)16,17,25,26,30,31, drawing (n = 2)33,20, photo/video elicitation (n = 4)18,29,33,35, storytelling (n = 2)31,20, and role-playing (n = 1)26. Where prototypes of the digital intervention were included in co-design, they were most frequently 2D or paper-based models (n = 6)15,17,18,26,30,33, wireframes (n = 3)17,30,20, or web-based software (n = 2)33,20. Reported intervention evaluation methods included iterative usability testing (n = 8)15,17,18,29,22,25,28,30, engagement metrics embedded in the digital health intervention, such as app tracking (n = 2)15,20, pilot testing (n = 4)17,27,30,33, and living laboratories (n = 3)15,27,33.

The locations where co-design activities were conducted were discussed in seven reviews18,23,27,30,32,20,35. Of these, one found that only 11 of its 25 identified studies reported a specific setting. These settings were laboratories, clinics, homes, community settings, senior centers, and virtual environments; however, the number of studies reporting each location was not provided23. The remaining reviews provided scant detail on locations.

Reporting on co-design frequency, duration and degree of participation

The duration and frequency of co-design sessions were reported by fewer than half of the reviews, with only eight18,24,27,30,32,33,34,35 discussing session duration and seven18,27,28,32,33,34,35 reporting session frequency. Within those reviews, frequency and duration were reported in varying detail. Most reviews reported a handful of examples from the studies they identified, such as a 2-h collaborative design workshop or a half-day co-design workshop33. Reviews by Eyles et al.35 and Woods et al.18 reported that the studies they identified provided inadequate descriptions of both session duration and frequency.

Seventeen of the reviews15,16,17,19,21,22,23,24,25,26,27,28,30,31,20,33,35 attempted to distinguish the phase of the intervention (pre-design, development, evaluation or post-design) in which participants were involved. The review by Cole et al.23 rated the level of co-design participation using a framework36 with the ratings “informed”, “consulted”, “involved”, “in collaboration as a co-leader” and “empowering oneself and others”. Of their 25 studies, Cole et al. state that most involved the first three levels of participation. The review by Orlowski et al.16 also attempted to classify participant involvement in co-design by categorizing studies using several concepts drawn from participatory research16. Overall, they found that 70% of projects reported predominantly consultative consumer involvement16.

Twelve of the reviews15,17,18,21,24,25,26,28,30,32,20,35 provided details, to varying degrees, on the aspects of the intervention that end-users helped co-design. For example, the review by Bevan Jones et al. highlighted a study in which discussions with youth patient partners focused on illustrations, characters, scripts and animation for the digital health intervention20. The review by Wegener et al. provided a detailed list of specific contributions older participants made to intervention development, including application content and how sensors should be worn28.

Frameworks used to guide co-design and review conduct

Thirteen reviews16,17,18,21,22,23,24,25,26,28,30,31,35 aimed to identify frameworks or theories which underpinned intervention development, including behavior change, intervention development and co-design frameworks. Of these thirteen reviews, all except one identified frameworks used in their included studies21. In total, thirty-two frameworks, models or theories related to co-design were described by reviews. The most used were variations of the participatory design (PD) method, user-centered design and human-centered design frameworks. Review authors also used co-design frameworks or models to synthesize results, with ten of 21 reviews17,18,21,23,25,26,32,33,34 reporting such use.

Evaluation of co-design

Co-design was evaluated in terms of (1) overall effectiveness and (2) participants’ views of the process. Reviews reported that quantitative evaluation of co-design effectiveness was challenging overall, and only one review provided a meta-analysis34. This meta-analysis did not support the notion that digital games developed with participatory design improve health outcomes more than those not co-designed34. The review by Vandekerckhove et al.26 reported a series of outcomes from its identified studies, including eHealth development (number of ideas for development), eHealth quality (usability, feasibility) and user outcomes (effectiveness)26. Qualitative reports on the potential for co-design to improve digital health intervention utility were provided by three reviews19,21,24. These reviews stated that an end-user advisory group can lend valuable insights into intervention content and structure, making interventions more user-friendly and feasible to implement21,24, and that adopting participatory approaches to the design of eHealth interventions and using personalized content enhances overall system effectiveness19. Five reviews16,22,26,32,20 reported on participants’ views of participating in co-design and overall reported high levels of satisfaction; however, most of these reviews emphasized that this quality metric was infrequently assessed in their identified studies.

Co-design barriers and challenges

Nine reviews16,18,19,22,25,28,30,33,20 reported on challenges to co-design of digital health interventions. Power imbalances between researchers and participants were amongst the most cited barriers to conducting co-design. Additional barriers included time and financial constraints, difficulty recruiting participants (particularly participants from minority or vulnerable groups), participant “groupthink” at co-design sessions, and the views of medical and health professionals being privileged over those of patients. Two reviews reported barriers to specific co-design strategies25,20, including the perceived inadequacy of surveys and questionnaires for exploring complex issues and the difficulty participants faced in talking freely to strangers in new settings, such as focus groups20. The review by Sumner et al.33 listed barriers to successful co-design of digital health interventions and proposed strategies to address them. These strategies included building relationships and trust, empowering the end-user, building end-user knowledge, and establishing value and interest33. It was suggested that a lack of buy-in from researchers and participants, as well as issues with recruitment, could be addressed by conducting co-design in environments familiar to participants33.

Accessibility and equity

Eight reviews reported on accessibility and equity29,21,22,26,28,30,20,35. Bevan Jones et al. identified a study which discussed the inclusion of cultural advisors and the hosting of formal design opening and closing sessions with community elders in Māori and Pacific Islander populations20. Strategies for recruiting vulnerable populations discussed in reviews included a proactive outreach approach that combined directly approaching potential participants with other recruitment strategies. Other reviews highlighted that not embedding equity and accessibility principles in co-design of digital health interventions risked worsening the digital divide21, and risked design failures if developer biases and stereotypes related to certain groups, such as older adults, were embedded in products30. A handful of the identified reviews focused specifically on improving co-design in vulnerable populations, including children with special health care needs and their families21, people with impairments29, people with dementia27 and older people with frailty or impairment28.

Review quality appraisal

All twenty-one reviews were classified as critically low quality. Very few reviews met the requirements for questions 1 (n = 2)33,34, 7 (n = 2)22,26, 9 (n = 1)33, 10 (n = 1)16, 11 (n = 1)34, and 13 (n = 1)30. No reviews met the requirements for questions 12 and 15. For additional details and full questions, see Table 2.

Discussion

This umbrella review provides insight into what is known about the practical methods used in the co-design of digital health interventions, the breadth and depth of end-user involvement in the process, and the characteristics of included end-users. Overall, we highlighted the inconsistent and poor reporting of co-design activities, both in digital health intervention design and in the reviews. Most reviews reported the inclusion of a broad range of co-design participants, including patients, caregivers, healthcare professionals, policymakers, teachers, and behavior specialists; however, the demographic profile of participants known to be engaged in designing digital health interventions is inconsistently reported. All reviews reported on the co-design activities used in studies, including interviews and surveys; however, very few described specifics of the sessions, including underpinning methodological frameworks, frequency, intensity, and location. Evaluation of co-design effectiveness, in terms of impact on intervention functionality and participant views on participating in the process, was infrequently reported. Reported barriers to co-design included power imbalances and lack of buy-in by researchers, while relationship building and establishing participant value and interest were considered mitigating factors. Accessibility and cultural sensitivity were discussed in fewer than half of the reviews but, when present, centered on recruiting diverse populations to improve representation and on including cultural advisors to create more welcoming environments and cultural respect.

Reviews were most frequently published in recent years, a finding likely reflective of the major growth in the field of digital health and the growing appreciation of the need to involve end-users in product design37. Interest in the principle of co-designing interventions has increased across fields, including artificial intelligence38, non-digital health interventions and educational interventions39,40, as involving end-users is considered to reduce biases41 and to increase engagement and intervention effectiveness42. Reviews were also conducted only in high-income countries. Given the broad potential for digital technologies and artificial intelligence to improve access to and acceptability of healthcare, reporting on co-design processes from the perspectives of users, particularly those in low- and middle-income countries, is required43.

Terminology used in reviews to describe the principle of co-design varied and included “patient and public involvement”, “user-centered design”, “co-creation” and “human factors approaches”. These terms were used interchangeably by authors and involved very similar methodologies. This likely reflects how the concept of end-user involvement in design and development has evolved over time44,45,46 and across fields. Standardizing terminology may minimize confusion, create a sense of cohesion across healthcare, engineering, and software development, and help ensure that methodologies are implemented rigorously and consistently.

All reviews reported on the specific co-design activities used in their identified studies. Strategies included surveys, interviews, and focus groups, among others; however, very little detail was provided about the practical methods employed, including the intensity and frequency of co-design sessions or when best to implement these strategies during the design, development, and evaluation processes. Further, although several reviews did endeavor to describe the degree of participant involvement in the design, development, and evaluation process, only two reviews qualified this using a participatory framework16,23. Such information is critical to an understanding of the breadth and depth of meaningful end-user participation in digital health intervention development and has been recognized as such in healthcare research more broadly39,40. Future research reports should aim to address these gaps through detailed description of the specific co-design activities and processes used. In addition, only approximately half of reviews reported on the specific features of the intervention which participants co-designed, including technical requirements and content. Inclusion of this detailed information in reviews is critical so that future researchers can use it as a guide for their own studies.

Reporting on the details of participants involved in digital health co-design, including their age, sex, gender, race, ethnicity, socio-economic status, and health status, was scant. Such reporting is required to provide evidence regarding health impacts, satisfaction with care, and disparities and inequities experienced across demographic groups, and is recommended as best practice in research47,48,49. Historically, design work has engaged so-called “super-users” (users who frequently contribute to research projects) due to their comfort with research and ability to contribute50,51. Such participants are often not representative of the population for whom a digital health intervention is designed, potentially exacerbating digital divides and reducing intervention effectiveness43,52 for groups such as older adults, young children, and those with low health or digital literacy43,52,53. Research is needed to understand how best to recruit such groups into co-design work, which co-design methods may be most appropriate for different groups, and how to support meaningful engagement throughout the process. One way to accomplish this is to ensure that the locations and settings of co-design sessions are accessible to participants, whether in their own community rather than an academic setting or through virtual means43,52,53.

Utilizing a framework or theory to underpin methodological choices is one way to increase research quality, including in co-design54. As co-design is a form of participatory research, it is critical that researchers maintain congruency between their epistemological, theoretical, and methodological decisions55 to increase the rigor of their co-design research. Despite this, only about half of reviews either utilized a framework to guide their review or highlighted the frameworks identified in their studies. Framework-, model- or principles-based digital health co-design, underpinned by empirical evidence, should be a focus of future research.

Finally, our review indicates a need for improved reporting on the impact of co-design, in terms of both the effectiveness of the resulting digital health products and participant experiences. This gap could reflect inconsistent reporting at the study level. Inconsistent evaluation has previously been identified as problematic within the co-design literature56, and efforts have been made to develop robust tools and frameworks to evaluate patient and public engagement56,57. Rigorous and ongoing evaluations of co-design activities are required to determine the degree of end-user engagement and to learn how to improve practices in the future56. Participant involvement in intervention design and development also has the potential to create a sense of empowerment by building knowledge bases and advocacy skills58.

Regarding the quality of included reviews, all were rated critically low using the AMSTAR 2 tool. AMSTAR 2 items on which reviews scored particularly low included those related to the description of included study designs. Reviews also provided sparse detail on their constituent studies, making it difficult to understand who was included in co-design, how co-design was conducted, and how it was evaluated. We focused only on the data presented in reviews and therefore cannot report on whether this lack of detail extends to the primary studies included in each review.

This review of reviews is not without its own limitations. We included a broad range of review types, including scoping reviews, systematic reviews and narrative syntheses, which challenged our ability to compare review findings. We also included only reviews published in English, potentially limiting our understanding of co-design practices in other languages and cultural contexts.

Our umbrella review has synthesized data reported by reviews focused on co-designing digital health interventions and summarized what is known about co-design methods, the breadth and depth of end-user involvement and the characteristics of included end-users. Although we identified the co-design activities most frequently used, due to underreporting of information in reviews, we were limited in our ability to determine the details of these activities, including who participated. Few reviews reported on the evaluation of co-design activities and we found little consensus on the most appropriate framework or methodology to guide co-design. This reflects a lack of standardization and consistency across the field of digital health co-design. Currently available guidelines for patient and public involvement in intervention development include the Guidance for Reporting Involvement of Patients and the Public (GRIPP)59 and the GRIPP260. However, these guidelines are generic and not specific to co-design in digital health61. Recommendations for governance and innovation in responsible digital health development highlight inclusive co-creation as best practice, with co-design capable of supporting digital healthcare that is clinically, ethically, and fiscally responsible62,63. Instruments to rate the quality of end-user involvement and associated reporting are required to create investigator accountability as it pertains to digital co-design.

In Table 3 we overlay the major findings of this review with practical suggestions for digital health co-design practitioners and scientists in the field. In doing so, we suggest efforts are needed to develop standardized guidelines for reporting co-design methodologies and to direct specific co-design methods and processes, with emphasis on guidance around the strategies that may be most engaging and effective in particular populations and health conditions. Ultimately, to be truly emblematic of co-design principles, these guidelines should be co-created with patients and caregivers and include meaningful involvement of healthcare professionals to enhance capacity to create clinically relevant health tools64. Such work has potential to ensure co-design as a principle in digital health development continues to evolve and leads to effective and sustainable interventions.

Material and methods

Study design, literature search and study selection

A systematic review of reviews was undertaken, which is the recommended approach when the amount of research in an area is expected to be large65. Our reporting follows the reporting guideline for overviews of reviews of healthcare interventions, the PRIOR statement (Supplementary Table 1)66. No ethics approval was needed due to the nature of the manuscript. With the assistance of three research librarians (one specialized in nursing research, one in engineering and one pediatric hospital librarian), we searched PubMed/Medline, Embase, PsycInfo, Cochrane Reviews and the Association for Computing Machinery (ACM) Digital Library from inception to March 8, 2023. Our search strategy (Supplementary Table 2) was developed using combinations of keywords for digital health, telemedicine, and co-design. It was created following an initial consultation with a university-based librarian specializing in healthcare literature. A subsequent consultation meeting was held with a university-based librarian specializing in engineering, who adapted our search for the ACM Digital Library. The protocol for this umbrella review was not registered; however, a detailed protocol was prepared through group discussion and is available upon request.

Included reviews reported on co-design methodologies used in digital health interventions. We defined co-design as the active involvement of end-users in the design and development of digital health interventions12,13,14. Further, we also included reviews which assessed end-user involvement in the implementation and evaluation of digital health interventions.

We defined digital health interventions as the use of information and communication technologies in medicine and other health professions to manage illness and health risks or promote wellness67. All types of digital modalities were included. Categories which fall under digital health included, but were not limited to, telemedicine, electronic health, mobile health, virtual gaming, virtual reality, chat bots, remote monitoring, and wearable digital devices. We included reviews focused on co-design of an intervention aimed at managing an acute (sudden onset, lasting <3 months, with a return to the patient’s baseline likely68) or chronic (lasting >3 months or occurring three or more times in 1 year, and requiring ongoing medical attention or limiting activities of daily living69) health condition, or at promoting healthy lifestyle habits. To be included, end-users of digital health interventions must have been patients or the public. However, co-design activities could involve a wide range of participants, including patients, caregivers, policy makers and software engineers, as long as patients or the public were involved in some capacity.

To be included, reviews could focus on co-design of the health intervention or of the digital tool itself. Reviews must have addressed at least one of co-design methods, co-design setting, or degree of end-user involvement; been written in English; and searched one or more databases using a systematic approach to identify studies. Two authors (A.K. and T.C.C.H.) piloted our application of the inclusion and exclusion criteria on 25 randomly selected abstracts, with 100% decision agreement. All title and abstract screening, followed by full-text screening, was conducted in duplicate (A.K. and T.C.C.H.) in Covidence. Conflicts were resolved through discussion, and any that remained were resolved by an independent third reviewer (L.J.).

Data extraction and data synthesis

Two authors (A.K. and T.C.C.H.) extracted study data into a codebook that the authors developed and piloted. Agreement on data extraction was 90%, and frequent process meetings were used to resolve disagreements and reach 100% agreement. Data abstraction fields were grouped according to key data features to enable synthesis. These fields included study characteristics (publication year, country of origin, type of review, digital health intervention, etc.), co-design participants, and co-design activities. To understand the breadth and depth of end-user involvement in co-design, we extracted the frequency (how often activities took place) and duration of co-design activities, the degree of end-user participation throughout the co-design or evaluation process, and the aspects of the intervention (e.g., clinical content, tool appearance, hardware function) that end-users helped co-design. Quantitative data were analyzed using descriptive statistics and presented narratively.
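The screening pilot (25 abstracts, 100% agreement) and the data-extraction check (90% agreement) both report reviewer agreement as a percentage, but the methods do not state how these figures were computed. The sketch below assumes simple percent agreement, that is, the share of items on which both reviewers recorded the same decision. The function name `percent_agreement` and all example decisions are hypothetical and are not taken from the review's data.

```python
from typing import Hashable, Sequence


def percent_agreement(reviewer_a: Sequence[Hashable], reviewer_b: Sequence[Hashable]) -> float:
    """Return the percentage of items on which two reviewers made the same decision.

    Assumption (not stated in the review): simple, unweighted percent agreement
    with no correction for chance.
    """
    if len(reviewer_a) != len(reviewer_b):
        raise ValueError("both reviewers must rate the same items")
    if not reviewer_a:
        raise ValueError("no items to compare")
    # Count items where both reviewers recorded an identical decision.
    matches = sum(a == b for a, b in zip(reviewer_a, reviewer_b))
    return 100.0 * matches / len(reviewer_a)


if __name__ == "__main__":
    # Hypothetical pilot: 25 abstracts screened identically by both reviewers.
    pilot_a = ["include"] * 5 + ["exclude"] * 20
    pilot_b = list(pilot_a)
    print(percent_agreement(pilot_a, pilot_b))  # 100.0

    # Hypothetical extraction check: 10 coded fields, reviewers differ on one.
    extract_a = ["RCT", "app", "patients", "workshop", "UK",
                 "2019", "mHealth", "caregivers", "survey", "12"]
    extract_b = list(extract_a)
    extract_b[3] = "interview"
    print(percent_agreement(extract_a, extract_b))  # 90.0
```

Simple percent agreement is easy to report and interpret, but it does not account for agreement expected by chance, so it can look high when most decisions fall into one category (for example, most abstracts being excluded).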

Quality appraisal

The quality of each included review was assessed in duplicate (A.K. and T.C.C.H.) using A MeaSurement Tool to Assess systematic Reviews 2 (AMSTAR 2)70. Rating discrepancies were discussed and resolved within the author group.

Data availability

This study is an umbrella review, and it does not generate any new data. Questions regarding data access should be addressed to the corresponding author.

Change history

01 July 2025

This article has been updated to amend the license information.

References

Sharma, A. et al. Using digital health technology to better generate evidence and deliver evidence-based care. J. Am. Coll. Cardiol. 71, 2680–2690 (2018).

Borges Do Nascimento, I. J. et al. Barriers and facilitators to utilizing digital health technologies by healthcare professionals. npj Digital Med. 6, 161 (2023).

Murray, E. et al. Evaluating digital health interventions. Am. J. Prev. Med. 51, 843–851 (2016).

Abernethy, A. et al. The promise of digital health: then, now, and the future. NAM Perspect. 6, 1–16 (2022).

Fedele, D. A., Cushing, C. C., Fritz, A., Amaro, C. M. & Ortega, A. Mobile health interventions for improving health outcomes in youth: a meta-analysis. JAMA Pediatr. 171, 461 (2017).

Garnett, A., Northwood, M., Ting, J. & Sangrar, R. mHealth interventions to support caregivers of older adults: equity-focused systematic review. JMIR Aging 5, e33085 (2022).

Samadbeik, M., Garavand, A., Aslani, N., Sajedimehr, N. & Fatehi, F. Mobile health interventions for cancer patient education: a scoping review. Int. J. Med. Inform. 179, 105214 (2023).

Jibb, L. A. et al. Development of a mHealth real-time pain self-management app for adolescents with cancer: an iterative usability testing study. J. Pediatr. Oncol. Nurs. 34, 283–294 (2017).

Hunter, J. F. et al. A pilot study of the preliminary efficacy of Pain Buddy: a novel intervention for the management of children’s cancer‐related pain. Pediatr. Blood Cancer 67, e28278 (2020).

Burke, L. E. et al. Current science on consumer use of mobile health for cardiovascular disease prevention: a scientific statement from the American Heart Association. Circulation 132, 1157–1213 (2015).

Winters, N., Oliver, M. & Langer, L. Can mobile health training meet the challenge of ‘measuring better’? In Measuring the Unmeasurable in Education (ed. Unterhalter, E.) 115–131 (Routledge, 2020).

Malloy, J. A., Partridge, S. R., Kemper, J. A., Braakhuis, A. & Roy, R. Co-design of digital health interventions for young adults: protocol for a scoping review. JMIR Res. Protoc. 11, e38635 (2022).

Noorbergen, T. J., Adam, M. T. P., Teubner, T. & Collins, C. E. Using co-design in mobile health system development: a qualitative study with experts in co-design and mobile health system development. JMIR Mhealth Uhealth 9, e27896 (2021).

Moll, S. et al. Are you really doing ‘codesign’? Critical reflections when working with vulnerable populations. BMJ Open 10, e038339 (2020).

Baysari, M. T. & Westbrook, J. I. Mobile applications for patient-centered care coordination: a review of human factors methods applied to their design, development, and evaluation. Yearb. Med. Inf. 24, 47–54 (2015).

Orlowski, S. K. et al. Participatory research as one piece of the puzzle: a systematic review of consumer involvement in design of technology-based youth mental health and well-being interventions. JMIR Hum. Factors 2, e12 (2015).

The University of Newcastle et al. Co-design in mHealth systems development: insights from a systematic literature review. THCI 13, 175–205 (2021).

Woods, L., Duff, J., Cummings, E. & Walker, K. Evaluating the development processes of consumer mHealth interventions for chronic condition self-management: a scoping review. Comput. Inform. Nurs. 37, 373–385 (2019).

Baines, R. et al. Meaningful patient and public involvement in digital health innovation, implementation and evaluation: a systematic review. Health Expect. 25, 1232–1245 (2022).

Bevan Jones, R. et al. Practitioner review: Co‐design of digital mental health technologies with children and young people. J. Child Psychol. Psychiatr. 61, 928–940 (2020).

Bird, M. et al. Use of synchronous digital health technologies for the care of children with special health care needs and their families: scoping review. JMIR Pediatr. Parent 2, e15106 (2019).

Cwintal, M. et al. A rapid review for developing a co-design framework for a pediatric surgical communication application. J. Pediatr. Surg. 58, 879–890 (2023).

Cole, A., Adapa, K., Richardson, D. R. & Mazur, L. M. Co-design approaches involving older adults in the development of electronic healthcare tools: a systematic review. In Studies in Health Technology and Informatics (eds Otero, P., Scott, P., Martin, S. Z. & Huesing, E.) (IOS Press, 2022).

Mitchell, K. M., Holtz, B. E. & McCarroll, A. Patient-centered methods for designing and developing health information communication technologies: a systematic review. Telemed. J. E Health 25, 1012–1021 (2019).

Kip, H. et al. Methods for human-centered eHealth development: narrative scoping review. J. Med. Internet Res. 24, e31858 (2022).

Vandekerckhove, P., De Mul, M., Bramer, W. M. & De Bont, A. A. Generative participatory design methodology to develop electronic health interventions: systematic literature review. J. Med. Internet Res. 22, e13780 (2020).

Øksnebjerg, L., Janbek, J., Woods, B. & Waldemar, G. Assistive technology designed to support self-management of people with dementia: user involvement, dissemination, and adoption. A scoping review. Int. Psychogeriatr. 32, 937–953 (2020).

Wegener, E. K., Bergschöld, J. M., Whitmore, C., Winters, M. & Kayser, L. Involving older people with frailty or impairment in the design process of digital health technologies to enable aging in place: scoping review. JMIR Hum. Factors 10, e37785 (2023).

Henni, S. H., Maurud, S., Fuglerud, K. S. & Moen, A. The experiences, needs and barriers of people with impairments related to usability and accessibility of digital health solutions, levels of involvement in the design process and strategies for participatory and universal design: a scoping review. BMC Public Health 22, 35 (2022).

Nimmanterdwong, Z., Boonviriya, S. & Tangkijvanich, P. Human-centered design of mobile health apps for older adults: systematic review and narrative synthesis. JMIR Mhealth Uhealth 10, e29512 (2022).

Nusir, M. & Rekik, M. Systematic review of co-design in digital health for COVID-19 research. Univ. Access Inf. Soc. https://doi.org/10.1007/s10209-022-00964-x (2022).

Sanz, M. F., Acha, B. V. & García, M. F. Co-design for people-centred care digital solutions: a literature review. Int. J. Integr. Care 21, 16 (2021).

Sumner, J., Chong, L. S., Bundele, A. & Wei Lim, Y. Co-designing technology for aging in place: a systematic review. Gerontologist 61, e395–e409 (2021).

DeSmet, A. et al. Is participatory design associated with the effectiveness of serious digital games for healthy lifestyle promotion? A meta-analysis. J. Med. Internet Res. 18, e94 (2016).

Eyles, H. et al. Co-design of mHealth delivered interventions: a systematic review to assess key methods and processes. Curr. Nutr. Rep. 5, 160–167 (2016).

Vaughn, L. M. & Jacquez, F. Participatory research methods—choice points in the research process. J. Particip. Res. Methods 1, 1–9 (2020).

Lupton, D. Digital health now and in the future: findings from a participatory design stakeholder workshop. Digital Health 3, 205520761774001 (2017).

Sadek, M., Calvo, R. A. & Mougenot, C. Co-designing conversational agents: a comprehensive review and recommendations for best practices. Des. Stud. 89, 101230 (2023).

Slattery, P., Saeri, A. K. & Bragge, P. Research co-design in health: a rapid overview of reviews. Health Res. Policy Syst. 18, 17 (2020).

Iniesto, F., Charitonos, K. & Littlejohn, A. A review of research with co-design methods in health education. Open Educ. Stud. 4, 273–295 (2022).

Chu, C. H. et al. Digital ageism: challenges and opportunities in artificial intelligence for older adults. Gerontologist 62, 947–955 (2022).

Voorheis, P., Petch, J., Pham, Q. & Kuluski, K. Maximizing the value of patient and public involvement in the digital health co-design process: a qualitative descriptive study with design leaders and patient-public partners. PLoS Digit. Health 2, e0000213 (2023).

Singh, D. R., Sah, R. K., Simkhada, B. & Darwin, Z. Potentials and challenges of using co-design in health services research in low- and middle-income countries. Glob. Health Res. Policy 8, 5 (2023).

Sánchez De La Guía, L., Puyuelo Cazorla, M. & de-Miguel-Molina, B. Terms and meanings of “participation” in product design: from “user involvement” to “co-design”. Des. J. 20, S4539–S4551 (2017).

Sanders, E. B.-N. & Stappers, P. J. Co-creation and the new landscapes of design. CoDesign 4, 5–18 (2008).

Sanders, L. & Stappers, P. J. From designing to co-designing to collective dreaming: three slices in time. Interactions 21, 24–33 (2014).

Diaz, T. et al. A call for standardised age-disaggregated health data. Lancet Healthy Longev. 2, e436–e443 (2021).

Flanagin, A., Frey, T., Christiansen, S. L. & AMA Manual of Style Committee. Updated guidance on the reporting of race and ethnicity in medical and science journals. JAMA 326, 621–627 (2021).

Stanbrook, M. B. & Salami, B. CMAJ’s new guidance on the reporting of race and ethnicity in research articles. CMAJ 195, E236–E238 (2023).

Chauhan, A., Leefe, J., Shé, É. N. & Harrison, R. Optimising co-design with ethnic minority consumers. Int. J. Equity Health 20, 240 (2021).

O’Brien, J., Fossey, E. & Palmer, V. J. A scoping review of the use of co‐design methods with culturally and linguistically diverse communities to improve or adapt mental health services. Health Soc. Care Community 29, 1–17 (2021).

Kemp, E. et al. Health literacy, digital health literacy and the implementation of digital health technologies in cancer care: the need for a strategic approach. Health Prom. J. Aust. 32, 104–114 (2021).

Ní Shé, É. et al. Clarifying the mechanisms and resources that enable the reciprocal involvement of seldom heard groups in health and social care research: a collaborative rapid realist review process. Health Expect. 22, 298–306 (2019).

Greenhalgh, T. et al. Frameworks for supporting patient and public involvement in research: systematic review and co‐design pilot. Health Expect. 22, 785–801 (2019).

Cornish, F. et al. Participatory action research. Nat. Rev. Methods Prim. 3, 34 (2023).

Boivin, A. et al. Patient and public engagement in research and health system decision making: a systematic review of evaluation tools. Health Expect. 21, 1075–1084 (2018).

Abelson, J. et al. Development of the engage with impact toolkit: a comprehensive resource to support the evaluation of patient, family and caregiver engagement in health systems. Health Expect. 26, 1255–1265 (2023).

Bird, M. et al. Preparing for patient partnership: a scoping review of patient partner engagement and evaluation in research. Health Expect. 23, 523–539 (2020).

Staniszewska, S., Brett, J., Mockford, C. & Barber, R. The GRIPP checklist: strengthening the quality of patient and public involvement reporting in research. Int. J. Technol. Assess. Health Care 27, 391–399 (2011).

Staniszewska, S. et al. GRIPP2 reporting checklists: tools to improve reporting of patient and public involvement in research. BMJ 358, 1–6 (2017).

Munce, S. E. et al. Development of the preferred components for co-design in research guideline and checklist: protocol for a scoping review and a modified Delphi process. JMIR Res. Protoc. 12, e50463 (2023).

Landers, C., Blasimme, A. & Vayena, E. Sync fast and solve things—best practices for responsible digital health. npj Digital Med. 7, 113 (2024).

Skivington, K. et al. A new framework for developing and evaluating complex interventions: update of Medical Research Council guidance. BMJ 374, 1–9 (2021).

Nickel, G. C., Wang, S., Kwong, J. C. C. & Kvedar, J. C. The case for inclusive co-creation in digital health innovation. npj Digital Med. 7, 251 (2024).

Choi, G. J. & Kang, H. Introduction to umbrella reviews as a useful evidence-based practice. J. Lipid Atheroscler. 12, 3 (2023).

Gates, M. et al. Reporting guideline for overviews of reviews of healthcare interventions: development of the PRIOR statement. BMJ 378, 1–11 (2022).

Jandoo, T. WHO guidance for digital health: what it means for researchers. Digital Health 6, 2055207619898984 (2020).

Holman, H. R. The relation of the chronic disease epidemic to the health care crisis. ACR Open Rheumatol. 2, 167–173 (2020).

The Dutch National Consensus Committee “Chronic Diseases and Health Conditions in Childhood” et al. Defining chronic diseases and health conditions in childhood (0–18 years of age): national consensus in the Netherlands. Eur. J. Pediatr. 167, 1441–1447 (2008).

Shea, B. J. et al. AMSTAR 2: a critical appraisal tool for systematic reviews that include randomised or non-randomised studies of healthcare interventions, or both. BMJ 358, j4008 (2017).

Acknowledgements

This study was funded by the Manchester Melbourne Toronto Joint Research Fund provided by the University of Manchester and the University of Toronto. L.J. and C.H.C. are funded by a Centre for Aging and Brain Health Innovation (CABHI) grant. The funders played no role in study design, data collection, analysis and interpretation of data, or the writing of this manuscript.

Author information


Contributions

L.J., C.H.C., P.W., and C.S.P. conceptualized the review idea and design and provided supervision. All authors conceptualized the search strategy. A.K. and T.C.C.H. conducted the investigation (data collection) and validation. A.K. was responsible for project administration and data visualization. The initial manuscript was written by A.K. and L.J. All authors critically reviewed the first draft and approved the final manuscript for publication.


Ethics declarations

Competing interests

P.W. is a director and shareholder of CareLoop Health Ltd, a digital mental health company; CareLoop Health Ltd had no role in this project. L.J., C.H.C., C.S.P., A.K. and T.C.C.H. have no financial or non-financial competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.


Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Kilfoy, A., Hsu, TC.C., Stockton-Powdrell, C. et al. An umbrella review on how digital health intervention co-design is conducted and described. npj Digit. Med. 7, 374 (2024). https://doi.org/10.1038/s41746-024-01385-1


DOI: https://doi.org/10.1038/s41746-024-01385-1
