Abstract

Ophthalmology, an early adopter of medical artificial intelligence (AI), has made significant advancements, but ethical discussion of the field remains limited. This bibliometric analysis reviewed 498 publications from Web of Science and Scopus (2000–2023) to explore the evolution, status, thematic trends, data modalities and their ethical focus, and solutions in ophthalmic AI ethics. Ophthalmology ranks second among medical sub-disciplines in AI ethics publication volume, with major contributors from the United States (US), China, the United Kingdom (UK), Singapore and India. Ethical concerns focus on privacy, fairness, and transparency. Ethical priorities differ by modality, with fundus imaging (59.4%) and Optical Coherence Tomography (OCT) (30.9%) as the most common. Most studies (78.3%) address ethics in diagnostic algorithm development, while only 11.5% directly target ethical concerns, though this focus is increasing. This study highlights the need for AI technologies and guidelines to address ethical challenges in ophthalmology, offering a future direction for research and innovation in medical AI governance.

Introduction

In recent years, the widespread application of artificial intelligence (AI) in the medical field has significantly enhanced the efficiency and convenience of diagnosis and treatment1,2. AI has broken geographic barriers, expanding the boundaries of medical research and clinical practice, particularly in cross-border, multi-center randomised controlled trials (RCTs) and real-world studies3,4,5,6,7. However, as AI and big data technologies are increasingly being applied, they also bring new ethical challenges, particularly in areas such as data privacy, algorithm transparency, and accountability for decision-making1,2,8,9,10,11,12,13,14. These challenges have drawn the attention of the public, academics and policymakers, prompting a re-examination and response to the complex ethical issues that arise. For instance, the European Union’s General Data Protection Regulation (GDPR) and “Ethics Guidelines for Trustworthy AI” emphasize data privacy and accountability15; the United States of America (US) enforces the Health Insurance Portability and Accountability Act (HIPAA)16 and AI ethical principles from the Department of Defence17; China promotes human-centered AI ethics with international cooperation18; and the World Health Organization (WHO) has issued global guidelines to ensure ethical AI use in healthcare (https://www.who.int/publications-detail-redirect/9789240029200).

Ophthalmology, with its complex anatomical structures and broad range of non-invasive examination methods, generates large volumes of data, providing a solid foundation for the application of AI in this field19,20. The integration of image-based, numerical, and textual ophthalmic data has enabled AI to demonstrate significant advantages and potential in disease screening, diagnosis, and treatment within ophthalmology21,22,23,24,25,26,27,28. However, this raises prominent ethical issues, with privacy being a prime example. Ophthalmic AI uses data from various sources, including images and electronic health records, to improve diagnostic accuracy, but also increases the risk of re-identification29,30. These data inherently contain sensitive personal information, such as eye appearance, iris and retinal images, further amplifying the potential risk of privacy breaches during AI processing31. Therefore, it is necessary to strengthen research and discussion on the ethical issues of ophthalmic AI, and to establish clear guidelines and regulatory frameworks.

Although several studies have preliminarily explored the current ethical issues and related technical research in ophthalmic AI (Supplementary Table 1), there has been insufficient focus on AI ethics specifically within ophthalmology, as well as a lack of quantitative research offering an objective and systematic perspective32,33,34,35. These limitations restrict our understanding of the current stage of development and collaboration patterns in ophthalmic AI ethics, as well as our comprehension of specific ethical issues, such as: How do ethical concerns in ophthalmic AI evolve with technology and time? What is the priority of different ethical principles across various data modalities? What are the main strategies currently used to address ethical issues in ophthalmic AI? Can a deeper understanding of these questions help future developers design safe and ethically compliant algorithms, enhance user confidence in clinical applications, and provide important guidance for scientific collaboration, effective resource allocation, and policy-making?

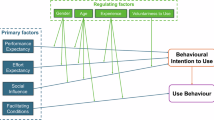

To systematically address these challenges, this study utilizes bibliometric methods to comprehensively analyse the current state of research and resolution strategies in ophthalmic AI ethics (Fig. 1). Our research focuses on four key areas: (1) clarifying the role of ophthalmology in the broader field of medical AI ethics; (2) exploring the development trends and research priorities of ethical issues in ophthalmic AI; (3) analysing the differences in ethical concerns and their prioritization across various data modalities in ophthalmic AI; and (4) evaluating the current strategies for resolving ethical issues in ophthalmic AI. Through these analyses, we aim to provide more targeted recommendations for the ethical framework of ophthalmic AI, ensuring that specific ethical challenges can be effectively addressed within particular data modalities, thereby reducing ethical risks and promoting the sustainable development of ophthalmic AI technology. Looking more broadly, we hope this work can offer other disciplines a novel quantitative approach and valuable insights from ophthalmology’s experience to enhance their understanding and management of discipline-specific ethical challenges.

Common bibliometric analysis (Performance Analysis, Co-authorship Analysis, and Co-occurrence Analysis) was used to assess the disciplinary positioning and current status of ophthalmic AI ethics, including its development stage, key contributors, and identification of research hotspots and trends. Based on a comprehensive review of the selected literature, comprehensive bibliometric analysis (Frequencies Analysis and Trend Analysis) was conducted to explore the evolution of hot ethical themes, the prioritization of data modalities and their ethical focus, as well as the distribution and trends of strategies addressing ethical challenges.

Results

Dataset overview

We identified and conducted bibliometric analysis of 13,819 publications on AI ethics across various medical disciplines from the Web of Science Core Collection (WOSCC) and Scopus using the search terms from Supplementary Table 2, with annual outputs detailed in Supplementary Table 3.

Based on the retrieval and screening strategy shown in Supplementary Fig. 1, 498 articles were included for bibliometric analysis of ophthalmic AI ethics, comprising 429 (86.1%) research articles and 69 (13.9%) reviews. Supplementary Table 4 lists the top ten most cited papers in this area, primarily published post-2018, each with over 240 citations and an average citation count of ~465.

Disciplinary positioning, development stage and key contributors

To identify ophthalmic AI ethics’ position within the broader medical field, we compared publication volumes in ophthalmology with other medical sub-disciplines using original records retrieved from the WOSCC database. The literature on AI ethics in “medicine and health” comprises 8345 publications, including 6773 articles and 1572 reviews. Among all medical sub-disciplines, oncology (1143 total; 872 articles, 271 reviews) and ophthalmology (1082 total; 920 articles, 162 reviews) ranked as the top two among fifteen sub-disciplines, with all others having fewer than 1000 publications related to AI ethics (Fig. 2a). Annual publications (Supplementary Table 3) showed earlier emergence of AI ethics literature in ophthalmology compared to oncology. Over the recent five-year period (Fig. 2b), both fields have experienced robust growth, with ophthalmology publications exceeding those in oncology in 2022.

a Bar Chart of AI Ethics Publications in Medicine and Sub-disciplines. b Trends in Publication Numbers by Medical Sub-disciplines Over the Past Five Years (2018–2022, same below).

Performance analysis was used to evaluate the growth and maturity of ophthalmic AI ethics. An exponential growth model, $Y = 20.06\,e^{0.4039x}$ ($R_{\mathrm{ad}}^{2} = 0.97$), was developed, mapping normalized years to annual publication volumes. In addition, Supplementary Fig. 2 charts the quantity and citation metrics of ophthalmic AI ethics publications from 2000 to June 15, 2023. From 2000 to 2017, discussions on ethical issues in ophthalmic AI were sparse, not exceeding 20 publications annually. Between 2018 and 2022, the number of publications rose steadily, averaging an annual growth of 27.1%, and surged notably from 2020 to 2022, with an average annual increase of 71.2% and nearly 66% of publications (328 total) released in the last three years of the analysis.
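To make the reported growth curve more tangible, the following minimal sketch evaluates it and derives two quantities implied by its exponent. It assumes that x is a normalized year index; the text does not state which calendar year corresponds to x = 0. The helper names `predicted_publications`, `doubling_time`, and `annual_growth_rate` are hypothetical and not taken from the study.

```python
import math

# Coefficients reported in the text for the fitted model Y = A * exp(B * x).
A = 20.06
B = 0.4039


def predicted_publications(x: float) -> float:
    """Predicted annual publication volume at normalized year x (assumed index)."""
    return A * math.exp(B * x)


def doubling_time(b: float = B) -> float:
    """Number of normalized years for the predicted volume to double: ln(2) / b."""
    return math.log(2) / b


def annual_growth_rate(b: float = B) -> float:
    """Year-on-year relative growth implied by the exponent: exp(b) - 1."""
    return math.exp(b) - 1


# Values implied by the fitted curve alone (not observed data).
implied_doubling = doubling_time()
implied_growth = annual_growth_rate()
```

Under these coefficients the predicted volume doubles roughly every 1.7 normalized years. This is a property of the smoothed curve and need not match the period averages reported in the text.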

With the aim of recognizing key contributors, we conducted performance analysis and co-authorship analysis across countries, institutions, and authors. Research contributions span 70 countries, with the US, China, the UK, India, and Singapore being the most active (Supplementary Table 5). Co-authorship analysis highlights strong academic collaborations, particularly among these leading countries (Supplementary Fig. 3a). Similarly, institutional and authors’ contributions have been widespread, with major academic centers in the US, China, the UK, and Singapore playing key roles in advancing AI ethics research in ophthalmology (Supplementary Tables 6, 7 and Supplementary Fig. 3).

Research hotspots and ethical themes

To uncover research hotspots in ophthalmic AI, we applied co-occurrence analysis to terms from the titles, abstracts, and keywords of the included literature, which grouped into three clusters (Fig. 3a). The red cluster focused on ethical issues commonly addressed in the clinical practice and application of ophthalmic AI technologies, alongside the progress of these technologies. The green cluster primarily examined the performance of ophthalmic AI in image processing, with a special emphasis on robustness, high accuracy, and F1 scores in feature extraction and vessel segmentation in retinal and OCT images. The blue cluster addressed AI ethics research related to diabetic retinopathy (DR).
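The counting step that underlies a co-occurrence network can be sketched as follows. This is an illustrative example only: the software used by the authors is not named in this section, the function name `cooccurrence_counts` is hypothetical, and the term lists are invented rather than drawn from the 498 publications.

```python
from collections import Counter
from itertools import combinations


def cooccurrence_counts(documents):
    """Count how often each unordered pair of terms appears in the same document."""
    pair_counts = Counter()
    for terms in documents:
        # Deduplicate terms within a document and sort them so that
        # (a, b) and (b, a) are stored under a single key.
        for pair in combinations(sorted(set(terms)), 2):
            pair_counts[pair] += 1
    return pair_counts


# Hypothetical term lists, one per publication (not data from the study).
docs = [
    ["privacy", "fundus", "deep learning"],
    ["privacy", "security", "fundus"],
    ["transparency", "deep learning", "fundus"],
]

counts = cooccurrence_counts(docs)
# Pairs with the highest counts correspond to the thickest links in a network map.
strongest_links = counts.most_common(2)
```

In a network map built from such counts, each term becomes a node sized by its frequency and each pair count becomes a link weight; clustering is a separate step that this sketch does not cover.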

Terms were color-coded by average publication year in Fig. 3b to show shifts in research hotspots: blue for earlier terms, orange/red for recent ones, producing a time overlay visualization. From 2019 to 2020, “respect”, “benefit” and “robustness” dominated ethical discussions; 2020–2021 saw rising interest in “safety”, “reliability”, “bias” and “transparency”; and from 2021 to 2022, the focus shifted to “privacy”, “security” and “trust”.

a Co-occurrence Network Map of Terms. Research Hotspots are divided into three directions: “Progress and Ethics of Artificial Intelligence in Ophthalmology” (red), “Image Analysis Improvements” (green), and “Multi-demand AI for DR” (blue). Each circle represents a term, the size of each node indicates its frequency of appearance, and each line represents a co-occurrence relationship, with thickness indicating relative strength, same below. b Overlay visualization (time trend) map. Colors orange/red indicate recent occurrences, blue indicates earlier ones. c Proportion of Ethical Themes. Frequency of each ethical theme in 498 articles, expressed as a percentage. d Evolution of Ethical Themes. Frequency change of each ethical theme over the past five years. AI, artificial intelligence; DR, diabetic retinopathy.

For further exploration of ethical hotspots, a comprehensive analysis was conducted, encompassing the extraction of ethical themes from the full text of included literature and the analysis of their frequency and trends. Comprehensive analysis yielded results similar to the co-occurrence analysis of terms (Fig. 3c), with the top five ethical themes by frequency being “Trust, Reliability & Robustness” (60.0%), “Transparency & Interpretability” (44.8%), “Fairness & Equality (Bias)” (32.7%), “Non-maleficence (Safety) & Beneficence” (19.3%) and “Privacy & Data Security” (14.5%).

Similarly, the evolution of these ethical hotspots (Fig. 3d) mirrored the results from overlay visualization map of terms, with consistent interest in “Trust, Reliability & Robustness” and “Non-maleficence (Safety) & Beneficence” from 2019–2022, increasing attention to “Transparency & Interpretability” and “Fairness & Equality (Bias)” around 2019–2020, and a growing focus on “Privacy & Data Security” starting in 2021.

Common data modalities and their ethical focus

Comprehensive analysis ranked the 10 most frequently mentioned modalities in the included literature (Fig. 4a). “Fundus imaging (colorful/colorless)” was the most frequently mentioned (n = 296, 59.4%), consistent with the prominence of retinal diseases, followed by “OCT” (n = 154, 30.9%), which covers anterior and posterior segment imaging. Other notable mentions included “Text and numeric data” (n = 57, 11.4%), “Eye/Facial appearance photography” (n = 44, 8.8%), and “Surgery imaging” (n = 32, 6.4%).
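The reported percentages can be reproduced from the counts with a short frequency calculation. The sketch below uses only the counts and the denominator of 498 articles given in the text; the names `modality_counts` and `share` are hypothetical.

```python
TOTAL_ARTICLES = 498  # number of included publications reported in the text

# Counts for the five modalities quoted in the text (top 5 of the 10 ranked).
modality_counts = {
    "Fundus imaging": 296,
    "OCT": 154,
    "Text and numeric data": 57,
    "Eye/Facial appearance photography": 44,
    "Surgery imaging": 32,
}


def share(count: int, total: int = TOTAL_ARTICLES) -> float:
    """Percentage of included articles that mention a modality, to one decimal."""
    return round(100 * count / total, 1)


shares = {name: share(n) for name, n in modality_counts.items()}

# The counts alone add up to more than the number of articles.
total_mentions = sum(modality_counts.values())
```

Because the five counts sum to more than 498, a single article must be able to mention more than one modality, so the shares are not expected to add up to 100%.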

a Proportion of Ophthalmic Data. Occurrence frequency of each type of ophthalmic data in 498 articles. b Bar Chart of Ethics Themes by Ophthalmic Data. Ranking of ethical themes and their frequencies associated with each type of ophthalmic data.

Critically, to identify and mitigate ethical disputes in their usage, the study catalogs the primary ethical themes associated with each data modality (Fig. 4b). Themes such as “Trust, Reliability & Robustness”, “Transparency & Interpretability”, “Fairness & Equality (Bias)” and “Privacy & Data Security” were prevalent, though their frequency and emphasis varied by data modality. Fundus imaging prioritized interpretability under principles of trust and robustness, Eye/Facial appearance photography emphasized privacy, while OCT and text-numeric data were more concerned with “Interpretability” and “Fairness”. Notably, Surgery imaging placed high importance on “Non-maleficence (Safety) & Beneficence”.

Resolution strategies and trends

Lastly, our investigation used comprehensive analysis to assess the strategies frequently employed to tackle ethical issues in ophthalmic AI and their trends. Most referenced studies (89.8%) employed technological interventions to address ethics, with 78.3% integrating ethical solutions, like enhanced interpretability heatmaps, into AI development for eye disease screening. However, only 11.5% of studies focused primarily on resolving ethical dilemmas through technological advancements. Moreover, 10.2% of studies highlighted the equal significance of technology and normative documents in ethical management, yet none suggested that normative documents alone can resolve AI’s ethical challenges (Fig. 5a). Research on “Technology-involving ethics” has significantly increased over the past five years, alongside a rising trend in strategies that combine “Technology-ethics domain” and “Both technology & normative documents domain” (Fig. 5b).

a Proportion of Solution Strategies. Frequency of each solution strategy in 498 articles, expressed as a percentage. b Trends in Solution Strategies Over the Last Five Years.

Discussion

Using various bibliometric and quantitative methods, this study highlights that ophthalmology is a leading discipline in medical AI ethics, with the field now shifting from developmental stages to real-world application. Collaborations between institutions and scholars from the US, China, the UK, Singapore and India have significantly advanced the field. Current research hotspots encompass diseases, technologies, and ethical considerations, with recent ethical focal points centering on privacy and security, trust and reliability, fairness and discrimination, and transparency. Data modalities like fundus imaging, OCT and text data are frequently referenced, with ethical priorities determined by the specific nature and application of the data. Efforts to resolve these issues were primarily integrated into the development of diagnostic AI for ophthalmology, underlining the urgent need for further technological development aimed at addressing ethical concerns and for enhanced normative guidance.

The quantity and trends of academic publications are key metrics for evaluating the evolution and development stage of a field. According to Price’s curve, knowledge domains expand through three phases: an initial stage, a period of exponential growth, and a stable development phase36. From 2000 to 2017, the field of ophthalmic AI ethics was in its infancy (Supplementary Fig. 2), marked by basic AI technology and minimal applications in ophthalmology, leading to limited scholarly attention to ethical concerns. Nevertheless, ophthalmic AI ethics emerged earlier and in greater volume compared to other medical sub-disciplines, trailing only oncology (Supplementary Table 3). From 2018 to 2022, the field entered a phase of exponential growth (Supplementary Fig. 2), surpassing oncology in publication volume by 2022 (Supplementary Table 3), driven by the rapid adoption of AI technologies in ophthalmology and the emergence of new ethical challenges. Various reviews37,38,39 and the most cited articles40,41,42,43,44 (Supplementary Table 4) addressed key ethical challenges such as patient-centric approaches, informed consent, privacy, interpretability, data bias, equity in healthcare access, and robustness. The ethical issues outlined above are widely recognized across medical AI disciplines, though their prioritization varies with clinical and data contexts. In ophthalmology, privacy has been especially emphasized due to the biometric sensitivity of retinal imaging and the field’s early use of image-based AI. Oncology places greater weight on equity and access in light of disease heterogeneity and treatment disparities45,46,47, while dermatology focuses on algorithmic bias stemming from underrepresented skin tones48,49,50. These differences highlight ophthalmology’s distinct ethical orientation, especially in privacy protection, and its important role in shaping AI ethics in healthcare.
Given its early development and sustained ethical engagement, ophthalmic AI ethics is expected to experience significant growth and increased relevance over the next three decades, as suggested by growth model-based projections of publication trends. Therefore, the following sections will delve into the field of ophthalmic AI ethics to understand its current state and address specific ethical issues that have previously remained unexplored.

In order to understand why ophthalmology holds a leading role and has achieved rapid progress in AI ethics, our study identifies the key countries and their supporting factors, as well as outlines the contributions of core collaborative clusters. Supported by substantial funding for related scientific research (Supplementary Table 8) and foundational ethical frameworks established by their governments17,18,51,52,53, the US, China, the UK, Singapore, and India, along with their institutions, lead in publications, citations, and H-index within the field of ophthalmic AI ethics. Despite these advances, collaboration remains limited in regions like Africa and the Middle East. This disparity may be attributed to factors such as limited research funding54, insufficient computational infrastructure54,55, regulatory uncertainty54,55, and lower availability of digitized ophthalmic data19 in these regions. To promote more inclusive collaboration, future efforts should focus on capacity-building initiatives, open-access data sharing platforms, and targeted international funding programs that actively support underrepresented regions in ophthalmic AI research.

Next, our work quantitatively reveals the research hotspots within the field of ophthalmic AI ethics, with a particular focus on ethical challenges and their evolution, to help scholars identify current and future focal points. Research hotspots span disease-specific, technological, and ethical dimensions. The “Multi-demand AI for DR” cluster underscores the attention on prevalent eye diseases like DR, requiring AI for its expert validation56,57,58,59,60,61, improved screening27,40,57,62,63,64, interpretability41,65,66,67, and timely referrals40,56,68,69. It also highlights the need to expand research to cover a broader range of ocular diseases, particularly those affecting the ocular surface and orbit. Technologically, the green cluster highlights ongoing efforts in ophthalmic image analysis, in which AI technologies like U-Net27,70,71,72 and GANs35,73,74,75,76 enhance feature extraction and segmentation, with techniques such as transfer learning42,77,78,79,80,81, attention mechanisms82,83,84,85, and data augmentation86,87 contributing to improvements in model interpretability, generalizability, and privacy safeguards.

The red cluster, “Progress and Ethics of Artificial Intelligence in Ophthalmology”, highlighted key ethical concerns in ophthalmic AI: “Transparency & Interpretability”, “Fairness & Equality (Bias)”, “Privacy & Data Security” and “Trust, Reliability & Robustness.” First, the “black box” nature of deep learning88 complicates ophthalmic AI decision-making38, underscoring the need for transparency through disclosures in data management and model development. For example, a deep learning system developed by DeepMind in collaboration with Moorfields Eye Hospital for OCT-based retinal disease triage provided visual outputs and confidence scores, which supported clinician interpretation and referral decisions, illustrating how transparency enables clinical adoption89. Second, the fairness of ophthalmic AI is closely tied to ophthalmic data. Population-specific anatomical differences90,91, imaging device variability92, and dataset disparities mean that AI trained on single-center or homogeneous data may exacerbate inequities in ophthalmic care. For instance, a diabetic retinopathy classifier trained on Kaggle-EyePACS images performed significantly worse in individuals with darker skin tones (60.5%) compared to those with lighter skin tones (73.0%), raising concerns about inequitable ophthalmic disease detection and widening diagnostic disparities in real-world practice93. Third, the rapid advancement of AI in ophthalmology was driven by extensive data modalities94, including protected health information (PHI)95. Despite anonymization efforts, algorithms can still extract personal details, such as sex27,96 and age27,96, from ophthalmic data. A recent study from the University of Western Brittany showed that some diabetic retinopathy classification models are vulnerable to white-box membership inference attacks, allowing third-party users to detect patient inclusion in training data97.
These vulnerabilities challenge the presumed anonymity of ophthalmic data, potentially discouraging data sharing while undermining reliance on ophthalmic AI. Last but not least, the imperative for “Trust, Reliability & Robustness” in ophthalmic AI is critical, as diagnostic errors can lead to irreversible visual impairment, profoundly affecting patients’ quality of life. However, evidence from real-world studies suggests that ophthalmic stakeholders’ lack of trust in AI decisions, shaped by algorithmic opacity, diagnostic uncertainty, and disruptions to clinician–patient dynamics, has limited adoption and hindered the reliable integration of AI into ophthalmic care pathways98.

As AI becomes more deeply integrated into ophthalmology, there has been a noticeable shift from traditional ethical themes like beneficence and respect toward addressing AI-specific ethical challenges. Since 2018, attention to robustness as a foundation for trust and reliability has intensified. From 2019 to 2020, the rapid deployment of ophthalmic AI raised initial ethical concerns, with a focus on patient-centric design and autonomy99,100. Between 2020 and 2021, ethics discussions deepened, tackling the ‘black box’ nature of AI by improving transparency and interpretability67,101,102,103, and aiming to reduce biases to boost generalizability12,104,105. The need for trust and safety25,59,104,106,107 became more urgent. From 2021 to 2022, rapid AI advancements driven by big data presented new ethical dilemmas around privacy, security, and public trust. Researchers responded by enhancing privacy protections32,35,87,108,109,110,111, fortifying algorithms against attacks112,113, proactively identifying vulnerabilities, and striving to build societal trust in ophthalmic AI92,114,115.

To better guide the ethical application of multimodal data in ophthalmic AI, our study demonstrates that different data modalities in ophthalmic AI present unique ethical concerns based on their inherent properties and clinical uses. Given the essential role of fundus imaging and slit-lamp photography in screening posterior and anterior segment eye diseases116,117,118, and considering the diverse eye appearances and fundus structures across different populations91, it is imperative for ophthalmic AI to ensure unbiased lesion detection to support robust and reliable clinical decisions. OCT, corneal imaging, and visual field perimetry diagnose eye diseases by capturing subtle abnormalities, highlighting AI’s importance in interpreting minor lesions and ensuring equitable detection across diverse populations. Advancements in large language models (LLMs) and multimodal models have led to increased use of text and numeric data, such as electronic health records119. Their application and management demand transparency and equity, including rigorous reduction of bias in data processing, to uphold each patient’s right to equitable eye health. However, conventional LLMs have limited proficiency in ophthalmic domain knowledge and are prone to hallucinations, leading to inconsistent responses to the same text and numeric data, which underscores the need for reliability when using these data in ophthalmic AI120,121. Eye/Facial appearance photography, ophthalmic radiology, and ultrasound, which involve personal identifiers and deep tissue structures, prioritize privacy and data security to avert identification and breaches. Lastly, AI in surgical imaging, primarily related to robot-assisted retinal surgeries122,123,124,125,126,127,128,129, focuses on preventing severe outcomes like blindness, underscoring the necessity for operational safety and harm avoidance.
These insights are crucial for refining ethical priorities across different data modalities in the development and application of ophthalmic AI, ensuring adherence to ethical standards in data management and usage to mitigate disputes and guarantee the regulated use of ophthalmic data.

Building on previous insights, our research further clarifies the prevailing strategies for addressing ethical challenges in ophthalmic AI and explores how these strategies are influenced by evolving technological and societal demands. Currently, the resolution of ethical issues in ophthalmic AI is collaterally addressed during the process of enhancing technical performance, accounting for 78.3% of strategies. Prioritizing technical performance, researchers often address ethical issues (e.g., Transparency & Interpretability) in a cost-effective way, typically by refining existing visualization technologies like Grad-CAM in AI. However, developing new technologies in ophthalmic AI that address specific ethical challenges requires interdisciplinary collaboration across ophthalmology, engineering, and ethics, necessitating long-term and systematic research efforts. Consequently, only 11.5% of publications are dedicated primarily to tackling ethical dilemmas, such as creating technologies like the digital mask130, or using self-supervised learning with minimal samples to reduce biases in retinal data131. Moreover, normative documents in ophthalmic AI provide a framework guiding technological implementation, but they cannot fully resolve practical issues, as they offer general guidelines rather than specific solutions. Nonetheless, they play a key role in establishing ethical standards. The field of ophthalmic AI, however, lacks consensus on data sharing and on cross-border and multi-center collaboration, which are crucial for privacy protection and ethical cooperation. Reflecting this growing recognition, recent trends highlight increasing awareness of single-strategy limitations, emphasizing the need to integrate ethical technologies with normative guidelines to address the complex challenges in ophthalmic AI effectively.

This study uses a quantitative bibliometric approach to offer an objective and comprehensive analysis of ophthalmic AI ethics. It highlights ophthalmology’s leading role in medical AI ethics while systematically exploring development trends, collaboration patterns, and specific ethical challenges that previous qualitative studies could not address. Additionally, this study provides actionable insights and methodological frameworks transferable to other medical disciplines, guiding both current and future ethical analyses and interventions across these fields. However, it has several limitations. Our analysis mainly relies on data from the WOSCC and Scopus databases retrieved as of June 15, 2023, potentially missing publications from other sources or grey literature, and studies published thereafter. Despite using the most common databases for such studies and including literature spanning more than two decades, rapid developments in ophthalmic AI ethics and continual database updates could affect the timeliness of our findings. Additionally, our review focused only on English-language articles and reviews, possibly excluding relevant non-English publications. Future work should expand to include diverse databases and multilingual studies to ensure a comprehensive and globally inclusive perspective on ophthalmic AI ethics. Regular updates, combined with longitudinal studies, are essential to track the evolution of ethical challenges over time and maintain the relevance of the findings. Cross-disciplinary research will further broaden the applicability of these insights to other medical fields. By integrating these directions, future research can enhance the relevance and practical application of ophthalmic AI ethics, ultimately contributing to more robust and globally applicable ethical standards in medical AI.

In conclusion, ophthalmology plays a leading role in medical AI ethics, spearheaded by institutions and scholars in the US, China, the UK, Singapore and India. Current ethical concerns focus on privacy, security, fairness, and transparency, with distinct ethical priorities arising from the nature and use of various data modalities. Most research studies address ethical issues during diagnostic algorithm development. Moving forward, there is both a need and a trend toward AI technologies and guidelines tailored to specific ethical challenges. Using ophthalmology as an exemplar, our work provides other disciplines with a robust quantitative analytical method. Together with the experience gained from ophthalmology’s rapid development on AI-related ethical issues, other fields of biomedical research can use these insights to better understand their own challenges and guide future ethical advancements within their specific fields.

Methods

Document retrieval

A comprehensive search strategy was formulated incorporating terms related to “Medicine and its Sub-disciplines,” “Artificial Intelligence,” and “Ethics”. Discipline-specific searches covered 16 themes including “Medicine and Health”, “Cardiovasology”, “Respirology”, “Urology and Male reproductive system”, “Gastroenterology”, “Neurology”, “Psychiatry and Mental health”, “Musculoskeletal and Connective tissue diseases”, “Gynecology and Obstetrics”, “Pediatrics”, “Endocrinology”, “Hematology and Lymphatic system”, “Oncology”, “Ophthalmology”, “Dermatology” and “Otolaryngology”. Ethical terms were derived from commonly used vocabularies in medicine132 and AI133, and AI-related keywords encompassed broad concepts of AI134. The specific search terms are listed in Supplementary Table 2.

Given the interdisciplinary nature of the topic, which spans engineering, medicine, and social sciences, the WOSCC and Scopus databases were selected to ensure data quality. The search was restricted to English-language publications dated between January 1, 2000, and June 15, 2023, and limited to the document types “Articles” and “Reviews.”

Inclusion and exclusion criteria

Search results for ophthalmic AI ethics from WOSCC (n = 1082) and Scopus (n = 1084) were downloaded and consolidated in EndNote. After 67 duplicates were removed, 2099 publications remained for screening. The criteria for inclusion and exclusion were as follows:

Inclusion criteria: (1) Time Frame, January 1, 2000 - June 15, 2023; (2) Themes must relate to all three of the following: ethics or morality; ophthalmology or eye diseases or eye and vision health; AI; (3) Contents of the publications must address one or more of the following: ethical challenges specific to ophthalmic AI; standards, norms, guidelines, consensus or legal documents for ethics of ophthalmic AI; technologies that address ethical issues133, which include, but are not limited to: computer vision, machine learning (including deep learning), natural language processing, and robotics, amongst others; cases or real-world applications that address ethical issues in ophthalmic AI; (4) Language has been limited to English; (5) Document types include articles or reviews.

Exclusion criteria: (1) No full text available or publications with duplicated content; (2) Publications only related to one or two of the required themes; (3) Non-human research; (4) Language that is not English; (5) Documents that are not articles or reviews.

Two authors (XC and YY) independently reviewed 2099 publications, with any discrepancies resolved through consultation with a third reviewer (DY). Following an in-depth manual screening process, 1,601 publications were excluded, leaving 498 publications (429 articles and 69 reviews) for detailed bibliometric analysis. The detailed methodology is illustrated in Supplementary Fig. 1.
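The screening flow described above can be checked with simple arithmetic. The following is a minimal sketch that uses only the counts reported in the text; the variable names are illustrative and are not taken from the study.

```python
# Record counts as reported in the text above.
wos_records = 1082               # WOSCC search results
scopus_records = 1084            # Scopus search results
duplicates_removed = 67          # duplicates removed in EndNote
excluded_after_screening = 1601  # excluded during manual screening
articles, reviews = 429, 69      # composition of the final set

# Records left for independent review after de-duplication.
screened = wos_records + scopus_records - duplicates_removed

# Records retained for bibliometric analysis.
included = screened - excluded_after_screening

print(screened)   # 2099
print(included)   # 498
assert included == articles + reviews
```

The totals agree with the 2099 publications reviewed and the 498 publications included.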

Disciplinary positioning, development stage, and key contributors

We applied bibliometric methods to all literature sourced from the WOSCC and Scopus databases, following the quality control guidelines by Donthu and colleagues135.

To delineate the role of ophthalmic AI ethics within the broader medical AI ethics landscape, we assessed both total and annual publication volumes relative to other medical disciplines. This analysis considered overall publication volume, trends over the past five years, and initial publication frequencies. Because WOSCC offers interdisciplinary coverage and selective journal indexing (e.g., Science Citation Index), this evaluation used only the original records retrieved from that database.

For the development stage, performance analysis was used to quantify research contributions through publication volume and citation frequency. Because the search cutoff was June 2023, all analyses covered publications up to the end of 2022 to mitigate truncation effects. An exponential function was fitted to data from the past five years, the adjusted coefficient of determination (R²adj) was calculated, and the growth rate of publications was determined using the following formula:

The performance analysis described above was designed to demonstrate the change in the annual number of publications in the literature over a period of time13, to determine the developmental stage of the research field, and to predict the future trend of publications. Additionally, annual citation trends were analyzed to evaluate the impact and maturity of the field. All statistical analyses were performed using Microsoft Excel 2016 and visualized using GraphPad Prism (version 9.5.1).
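As an illustration of this kind of trend analysis, the sketch below fits an exponential curve to five annual publication counts and reports an adjusted R². It rests on several assumptions that are not stated in the text: the fit is done by least squares on log-transformed counts, the adjusted R² is computed on the log scale with one predictor, and the annual growth rate is taken as exp(b) − 1. The counts are hypothetical, and this is not the growth-rate formula used in the study.

```python
import numpy as np

def fit_exponential(years, counts):
    """Fit counts ~ a * exp(b * (year - first_year)) via least squares on log(counts)."""
    t = np.asarray(years, dtype=float) - years[0]
    log_y = np.log(np.asarray(counts, dtype=float))

    # np.polyfit returns coefficients from highest degree down: slope, intercept.
    b, log_a = np.polyfit(t, log_y, 1)

    # Coefficient of determination on the log scale (assumption).
    fitted = log_a + b * t
    ss_res = np.sum((log_y - fitted) ** 2)
    ss_tot = np.sum((log_y - log_y.mean()) ** 2)
    r2 = 1.0 - ss_res / ss_tot

    # Adjusted R^2 for n observations and p = 1 predictor.
    n, p = len(t), 1
    r2_adj = 1.0 - (1.0 - r2) * (n - 1) / (n - p - 1)

    # Illustrative growth-rate definition (assumption): relative yearly increase.
    annual_growth = np.exp(b) - 1.0
    return np.exp(log_a), b, r2_adj, annual_growth

# Hypothetical annual publication counts, for illustration only.
years = [2018, 2019, 2020, 2021, 2022]
counts = [20, 33, 52, 88, 140]

a, b, r2_adj, growth = fit_exponential(years, counts)
print(f"a={a:.2f}, b={b:.3f}, adjusted R^2={r2_adj:.4f}, growth={growth:.1%}")
```

An adjusted R² close to 1 would indicate that the exponential model describes the five-year window well; with only five points, the estimate is sensitive to any single year.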

To identify key contributing countries, institutions, and authors in ophthalmic AI ethics, performance analysis was used to determine the top ten countries, institutions, and authors based on the number of publications. In addition, co-authorship analysis evaluated social interactions among entities (countries/institutions/authors) and their contribution to the research field. This study employed VOSviewer (version 1.6.19)136 and Bibliometrix137 to perform co-authorship network analysis after consolidating synonyms for countries/institutions/authors (Supplementary Note 1), with annotations for node meanings and scale.
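The idea behind a co-authorship network can be sketched in a few lines: synonyms are merged, each entity's publications are counted, and every pair of entities appearing on the same publication adds one link. The example below is a simplified illustration and not the VOSviewer or Bibliometrix implementation; the synonym map and the records are hypothetical.

```python
from collections import Counter
from itertools import combinations

# Hypothetical synonym map; the study's own list is in its Supplementary Note 1.
COUNTRY_SYNONYMS = {
    "USA": "United States",
    "US": "United States",
    "UK": "United Kingdom",
    "England": "United Kingdom",
}

def coauthorship_links(records, synonyms):
    """Return per-entity publication counts and pairwise co-authorship link counts."""
    productivity = Counter()
    links = Counter()
    for countries in records:
        # Merge synonyms and count each entity once per publication.
        unified = sorted({synonyms.get(c, c) for c in countries})
        productivity.update(unified)
        # Each unordered pair on the same publication adds one link.
        links.update(combinations(unified, 2))
    return productivity, links

# Hypothetical publications, each listed by its contributing countries.
records = [
    ["USA", "China"],
    ["US", "England", "Singapore"],
    ["China", "UK"],
    ["India"],
]

productivity, links = coauthorship_links(records, COUNTRY_SYNONYMS)
print(productivity.most_common())
print(links.most_common())
```

In a network map, the publication count would typically set node size and the link count would set edge thickness.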

Research hotspots and ethical themes

Using VOSviewer (version 1.6.19), this study merged synonymous terms from titles and abstracts (e.g., “AI” and “artificial intelligence”, details in Supplementary Note 1) and removed irrelevant nodes to extract keywords appearing at least 10 times (110 in total), creating a co-occurrence network and an overlay visualization (time trend) map for terms.
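The keyword step can be illustrated in the same spirit: terms are normalized through a synonym map, terms below a minimum frequency are dropped, and co-occurrence is counted for the remaining pairs. This is a minimal sketch under stated assumptions (one count per document, lower-cased terms); it does not reproduce VOSviewer's counting or layout options. Only the "AI" synonym and the threshold of 10 come from the text, and the toy documents are hypothetical.

```python
from collections import Counter
from itertools import combinations

SYNONYMS = {"ai": "artificial intelligence"}  # example pair given in the text
MIN_OCCURRENCES = 10                           # threshold given in the text

def cooccurrence(documents, synonyms, min_occurrences):
    """Return term frequencies and co-occurrence counts for sufficiently frequent terms."""
    # Normalize case, merge synonyms, and count each term once per document.
    normalized = [
        {synonyms.get(term.lower(), term.lower()) for term in doc}
        for doc in documents
    ]
    frequency = Counter(term for doc in normalized for term in doc)
    kept = {term for term, n in frequency.items() if n >= min_occurrences}

    edges = Counter()
    for doc in normalized:
        edges.update(combinations(sorted(doc & kept), 2))
    return frequency, edges

# Hypothetical keyword sets; a lower threshold is used so the toy data yields edges.
documents = [
    ["AI", "privacy"],
    ["artificial intelligence", "privacy", "fairness"],
    ["AI", "fairness"],
]
frequency, edges = cooccurrence(documents, SYNONYMS, min_occurrences=2)
print(frequency.most_common())
print(edges.most_common())
```

With real data, `MIN_OCCURRENCES` would replace the toy threshold of 2.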

For a deeper examination of ethical themes in ophthalmic AI literature, we identified nine key ethical themes based on established reviews32,33,34,35 and prevalent discussions in AI and medicine132,133. These include “Transparency & Interpretability”, “Fairness & Equity”, “Responsibility & Accountability”, “Privacy & Data Security”, “Non-maleficence & Beneficence”, “Autonomy & Respect”, “Trust, Reliability & Robustness”, “Solidarity” and “Sustainability”. The vocabulary and content of each ethical theme are detailed in Supplementary Table 9. Two authors (XC and YY) manually reviewed the full content of the included literature to assess the frequencies and trends of these themes from 2018 to 2022, with discrepancies adjudicated by a third reviewer (DY). The results were visualized using Microsoft Excel 2016.

Common data modalities and their ethical focus

After reviewing ophthalmic AI literature, we categorized 10 common ophthalmic data modalities116,138 as variables of interest, including: “Text and numeric data”, “Eye/Facial appearance photography”, “Slit-lamp photography”, “Corneal imaging”, “Optical coherence tomography”, “Ophthalmic radiology and Ultrasound”, “Fundus imaging”, “Perimetry”, “Surgery Imaging” and “Others”. Frequencies and trends for each data modality and associated ethical themes were analyzed through comprehensive bibliometric analysis to determine their ethical focus.
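One way to tabulate modality frequencies together with their associated ethical themes is shown below. This is a sketch, assuming each publication has been manually coded with a list of modalities and a list of themes; the record layout, the function name, and the coded examples are hypothetical.

```python
from collections import Counter, defaultdict

def modality_theme_table(publications):
    """Return each modality's share of publications (%) and theme counts per modality."""
    modality_counts = Counter()
    themes_by_modality = defaultdict(Counter)
    for pub in publications:
        # A publication may cover several modalities and several themes;
        # each is counted once per publication.
        for modality in set(pub["modalities"]):
            modality_counts[modality] += 1
            themes_by_modality[modality].update(set(pub["themes"]))
    total = len(publications)
    shares = {m: 100.0 * n / total for m, n in modality_counts.items()}
    return shares, themes_by_modality

# Hypothetical coded publications, for illustration only.
publications = [
    {"modalities": ["Fundus imaging"], "themes": ["Privacy & Data Security"]},
    {"modalities": ["Fundus imaging", "Optical coherence tomography"],
     "themes": ["Fairness & Equity", "Transparency & Interpretability"]},
    {"modalities": ["Optical coherence tomography"],
     "themes": ["Transparency & Interpretability"]},
    {"modalities": ["Fundus imaging"], "themes": ["Fairness & Equity"]},
]

shares, themes_by_modality = modality_theme_table(publications)
print(shares)
print(themes_by_modality["Fundus imaging"].most_common())
```

Because a publication can belong to more than one modality, the shares need not sum to 100%.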

Resolution strategies and trends

Four resolution strategy variables were identified. For technology-based strategies, we distinguished between “Technology-involving ethics”, which refers to methods where ethical issues are addressed as a secondary consideration during the development of ophthalmic AI, and “Technology-ethics domain”, which focuses on developing algorithms primarily to address ethical issues. Literature centered solely on normative documents such as guidelines was classified under the “Normative document domain”. Finally, publications that gave equal emphasis to technological development and adherence to normative documents were assigned to the “Both technology & normative documents domain”. Frequencies and trends of these strategies were analyzed to identify the primary strategies and evolving trends in ophthalmic AI ethics.

Data availability

The datasets analyzed in this study were obtained from the WOSCC and Scopus under institutional licenses and cannot be publicly shared due to licensing restrictions. Researchers with access to these databases can replicate the dataset using the search terms and screening strategy described in Supplementary Table 2 and Supplementary Fig. 1. H.L. accessed and verified all data reported in the manuscript.

Code availability

VOSviewer (v1.6.19) and the Bibliometrix R package were used for the bibliometric analysis. These tools are open-source and publicly accessible at www.vosviewer.com and www.bibliometrix.org, respectively. No custom code was generated or used.

References

He, J. et al. The practical implementation of artificial intelligence technologies in medicine. Nat. Med. 25, 30–36 (2019).

Rajpurkar, P., Chen, E., Banerjee, O. & Topol, E. J. AI in health and medicine. Nat. Med. 28, 31–38 (2022).

Ying, H. et al. A multicenter clinical AI system study for detection and diagnosis of focal liver lesions. Nat. Commun. 15, 1131 (2024).

Han, R. et al. Randomised controlled trials evaluating artificial intelligence in clinical practice: a scoping review. Lancet Digit. Health 6, e367–e373 (2024).

Wolf, R. M. et al. Autonomous artificial intelligence increases screening and follow-up for diabetic retinopathy in youth: the ACCESS randomized control trial. Nat. Commun. 15, 421 (2024).

Lin, D. et al. Application of Comprehensive Artificial intelligence Retinal Expert (CARE) system: a national real-world evidence study. Lancet Digit. Health 3, e486–e495 (2021).

Abramoff, M. D. et al. Autonomous artificial intelligence increases real-world specialist clinic productivity in a cluster-randomized trial. npj Digit. Med. 6, 1–8 (2023).

Womersley, K., Fulford, K., Fulford, K. B., Koralus, P. & Handa, A. Hearing the patient’s voice in AI-enhanced healthcare. BMJ 383, p2758 (2023).

Sorich, M. J., Menz, B. D. & Hopkins, A. M. Quality and safety of artificial intelligence generated health information. BMJ 384, q596 (2024).

Chan, S. C. C., Neves, A. L., Majeed, A. & Faisal, A. Bridging the equity gap towards inclusive artificial intelligence in healthcare diagnostics. BMJ 384, q490 (2024).

Stahl, B. C. Ethical Issues of AI. Artificial Intelligence for a Better Future 35–53 https://doi.org/10.1007/978-3-030-69978-9_4 (2021).

Rodrigues, R. Legal and human rights issues of AI: Gaps, challenges and vulnerabilities. J. Respons. Technol. 4, 100005 (2020).

Saheb, T., Saheb, T. & Carpenter, D. O. Mapping research strands of ethics of artificial intelligence in healthcare: a bibliometric and content analysis. Comput. Biol. Med. 135, 104660 (2021).

Li, F., Ruijs, N. & Lu, Y. Ethics & AI: a systematic review on ethical concerns and related strategies for designing with AI in healthcare. AI 4, 28–53 (2023).

European framework on ethical aspects of artificial intelligence, robotics and related technologies | Think Tank | European Parliament. https://www.europarl.europa.eu/thinktank/en/document/EPRS_STU(2020)654179.

Health Insurance Portability and Accountability Act of 1996 (HIPAA) | CDC. https://www.cdc.gov/phlp/publications/topic/hipaa.html (2022).

DOD Adopts Ethical Principles for Artificial Intelligence. U.S. Department of Defense https://www.defense.gov/News/Releases/Release/Article/2091996/dod-adopts-ethical-principles-for-artificial-intelligence/.

yizengcasia. The Ethical Norms for the New Generation Artificial Intelligence, China. International Research Center for AI Ethics and Governance https://ai-ethics-and-governance.institute/2021/09/27/the-ethical-norms-for-the-new-generation-artificial-intelligence-china/ (2021).

Khan, S. M. et al. A global review of publicly available datasets for ophthalmological imaging: barriers to access, usability, and generalisability. Lancet Digit. Health 3, e51–e66 (2021).

Lin, J. C., Ghauri, S. Y., Lee, M. J., Scott, I. U. & Greenberg, P. B. Big data in ophthalmology: a systematic review of public databases for ophthalmic research. Eye 37, 3044–3046 (2023).

Reid, J. E. & Eaton, E. Artificial intelligence for pediatric ophthalmology. Curr. Opin. Ophthalmol. 30, 337–346 (2019).

Wu, X. et al. Application of artificial intelligence in anterior segment ophthalmic diseases: diversity and standardization. Ann. Transl. Med 8, 714 (2020).

Li, T. et al. Applications of deep learning in fundus images: a review. Med Image Anal. 69, 101971 (2021).

Foo, L. L. et al. Artificial intelligence in myopia: current and future trends. Curr. Opin. Ophthalmol. 32, 413–424 (2021).

Mishra, K. & Leng, T. Artificial intelligence and ophthalmic surgery. Curr. Opin. Ophthalmol. 32, 425–430 (2021).

Li, J.-P. O. et al. Digital technology, tele-medicine and artificial intelligence in ophthalmology: A global perspective. Prog. Retin Eye Res 82, 100900 (2021).

Iqbal, S., Khan, T. M., Naveed, K., Naqvi, S. S. & Nawaz, S. J. Recent trends and advances in fundus image analysis: a review. Comput. Biol. Med. 151, 106277 (2022).

Liu, L. et al. DeepFundus: a flow-cytometry-like image quality classifier for boosting the whole life cycle of medical artificial intelligence. Cell Rep. Med. 100912 https://doi.org/10.1016/j.xcrm.2022.100912 (2023).

Shahriar, S., Allana, S., Hazratifard, S. M. & Dara, R. A survey of privacy risks and mitigation strategies in the artificial intelligence life cycle. IEEE Access 11, 61829–61854 (2023).

Huang, A., Liu, P., Nakada, R., Zhang, L. & Zhang, W. Safeguarding Data in Multimodal AI: A Differentially Private Approach to CLIP Training. arXiv.org https://arxiv.org/abs/2306.08173v2 (2023).

Hájek, J. & Drahanský, M. Recognition-Based on Eye Biometrics: Iris and Retina. in Biometric-Based Physical and Cybersecurity Systems (eds. Obaidat, M. S., Traore, I. & Woungang, I.) 37–102. https://doi.org/10.1007/978-3-319-98734-7_3 (Springer International Publishing, Cham, 2019).

Tom, E. et al. Protecting data privacy in the age of AI-enabled ophthalmology. Transl. Vis. Sci. Technol. 9, 36 (2020).

Abdullah, Y. I., Schuman, J. S., Shabsigh, R., Caplan, A. & Al-Aswad, L. A. Ethics of artificial intelligence in medicine and ophthalmology. Asia-Pac. J. Ophthalmol. 10, 289 (2021).

Liu, T. Y. A. & Wu, J.-H. The ethical and societal considerations for the rise of artificial intelligence and big data in ophthalmology. Front. Med. 9, (2022).

Lim, J. S. et al. Novel technical and privacy-preserving technology for artificial intelligence in ophthalmology. Curr. Opin. Ophthalmol. 33, 174–187 (2022).

Fernández-Cano, A., Torralbo, M. & Vallejo, M. Reconsidering Price’s model of scientific growth: an overview. Scientometrics 61, 301–321 (2004).

Schmidt-Erfurth, U., Sadeghipour, A., Gerendas, B. S., Waldstein, S. M. & Bogunović, H. Artificial intelligence in retina. Prog. Retinal Eye Res. 67, 1–29 (2018).

Ting, D. S. W. et al. Artificial intelligence and deep learning in ophthalmology. Br. J. Ophthalmol. 103, 167–175 (2019).

Burton, M. J. et al. The lancet global health commission on global eye health: vision beyond 2020. Lancet Glob. Health 9, e489–e551 (2021).

Gargeya, R. & Leng, T. Automated identification of diabetic retinopathy using deep learning. Ophthalmology 124, 962–969 (2017).

Quellec, G., Charrière, K., Boudi, Y., Cochener, B. & Lamard, M. Deep image mining for diabetic retinopathy screening. Med. Image Anal. 39, 178–193 (2017).

Kermany, D. S. et al. Identifying medical diagnoses and treatable diseases by image-based deep learning. Cell 172, 1122–1131.e9 (2018).

Li, Q. et al. A cross-modality learning approach for vessel segmentation in retinal images. IEEE Trans. Med. Imaging 35, 109–118 (2016).

Raghavendra, U. et al. Deep convolution neural network for accurate diagnosis of glaucoma using digital fundus images. Inf. Sci. 441, 41–49 (2018).

Istasy, P. et al. The impact of artificial intelligence on health equity in oncology: scoping review. J. Med Internet Res. 24, e39748 (2022).

Hantel, A. et al. A process framework for ethically deploying artificial intelligence in oncology. JCO 40, 3907–3911 (2022).

Taber, P. et al. Artificial intelligence and cancer control: toward prioritizing justice, equity, diversity, and inclusion (JEDI) in emerging decision support technologies. Curr. Oncol. Rep. 25, 387–424 (2023).

Gordon, E. R. et al. Ethical considerations for artificial intelligence in dermatology: a scoping review. Br. J. Dermatol. 190, 789–797 (2024).

Chen, M. et al. Ethics of artificial intelligence in dermatology. Clin. Dermatol. 42, 313–316 (2024).

Shah, S. F. H. et al. Ethical implications of artificial intelligence in skin cancer diagnostics: use-case analyses. Br. J. Dermatol. 192, 520–529 (2025).

National Artificial Intelligence Strategy Unveiled. https://www.smartnation.gov.sg/media-hub/press-releases/national-artificial-intelligence-strategy-unveiled/.

U.S. Food and Drug Administration. Proposed Regulatory Framework for Modifications to Artificial Intelligence/Machine Learning (AI/ML)-Based Software as a Medical Device (SaMD). https://www.fda.gov/media/122535/download.

House of Lords - AI in the UK: ready, willing and able? - Artificial Intelligence Committee. https://publications.parliament.uk/pa/ld201719/ldselect/ldai/100/10003.htm.

Hleihel, W. & Najjar, N. Impact of Artificial Intelligence on Healthcare and Its Challenges in the MENA Region. In AI in the Middle East for Growth and Business: A Transformative Force (eds Azoury, N. & Yahchouchi, G.) 131–144. https://doi.org/10.1007/978-3-031-75589-7_9 (Springer Nature Switzerland, Cham, 2025).

Ade-Ibijola, A. & Okonkwo, C. Artificial Intelligence in Africa: Emerging Challenges. in Responsible AI in Africa: Challenges and Opportunities (eds Eke, D. O., Wakunuma, K. & Akintoye, S.) 101–117. https://doi.org/10.1007/978-3-031-08215-3_5 (Springer International Publishing, Cham, 2023).

Gulshan, V. et al. Development and validation of a deep learning algorithm for detection of diabetic retinopathy in retinal fundus photographs. JAMA 316, 2402–2410 (2016).

Heydon, P. et al. Prospective evaluation of an artificial intelligence-enabled algorithm for automated diabetic retinopathy screening of 30 000 patients. Br. J. Ophthalmol. 105, 723–728 (2021).

Nielsen, K. B., Lautrup, M. L., Andersen, J. K. H., Savarimuthu, T. R. & Grauslund, J. Deep learning-based algorithms in screening of diabetic retinopathy: a systematic review of diagnostic performance. Ophthalmol. Retin. 3, 294–304 (2019).

Abramoff, M. D., Tobey, D. & Char, D. S. Lessons learned about autonomous AI: finding a safe, efficacious, and ethical path through the development process. Am. J. Ophthalmol. 214, 134–142 (2020).

Li, F. et al. Deep learning-based automated detection for diabetic retinopathy and diabetic macular oedema in retinal fundus photographs. Eye 36, 1433–1441 (2022).

Goldstein, J., Weitzman, D., Lemerond, M. & Jones, A. Determinants for scalable adoption of autonomous AI in the detection of diabetic eye disease in diverse practice types: key best practices learned through collection of real-world data. Front Digit Health 5, 1004130 (2023).

Saenz, A. D., Harned, Z., Banerjee, O., Abramoff, M. D. & Rajpurkar, P. Autonomous AI systems in the face of liability, regulations and costs. npj Digit. Med. 6, 185 (2023).

Wolf, R. M. et al. Potential reduction in healthcare carbon footprint by autonomous artificial intelligence. npj Digit. Med. 5, 62 (2022).

Wolf, R. M., Channa, R., Abramoff, M. D. & Lehmann, H. P. Cost-effectiveness of autonomous point-of-care diabetic retinopathy screening for pediatric patients with diabetes. JAMA Ophthalmol. 138, 1063–1069 (2020).

Hanif, A. M., Beqiri, S., Keane, P. A. & Campbell, J. P. Applications of interpretability in deep learning models for ophthalmology. Curr. Opin. Ophthalmol. 32, 452 (2021).

Lim, W. X., Chen, Z. & Ahmed, A. The adoption of deep learning interpretability techniques on diabetic retinopathy analysis: a review. Med. Biol. Eng. Comput. 60, 633–642 (2022).

Van Craenendonck, T., Elen, B., Gerrits, N. & De Boever, P. Systematic comparison of heatmapping techniques in deep learning in the context of diabetic retinopathy lesion detection. Transl. Vis. Sci. Technol. 9, 64 (2020).

Abràmoff, M. D., Lavin, P. T., Birch, M., Shah, N. & Folk, J. C. Pivotal trial of an autonomous AI-based diagnostic system for detection of diabetic retinopathy in primary care offices. npj Digit. Med 1, 1–8 (2018).

Bhaskaranand, M. et al. The value of automated diabetic retinopathy screening with the EyeArt system: a study of more than 100,000 consecutive encounters from people with diabetes. Diab. Technol. Ther. 21, 635–643 (2019).

Ronneberger, O., Fischer, P. & Brox, T. U-Net: Convolutional Networks for Biomedical Image Segmentation. in Medical Image Computing and Computer-Assisted Intervention – MICCAI 2015 (eds. Navab, N., Hornegger, J., Wells, W. M. & Frangi, A. F.) 234–241 (Springer International Publishing, Cham, 2015). https://doi.org/10.1007/978-3-319-24574-4_28.

Adithya, V. K. et al. EffUnet-SpaGen: an efficient and spatial generative approach to glaucoma detection. J. Imaging 7, 92 (2021).

Chen, C., Chuah, J. H., Ali, R. & Wang, Y. Retinal vessel segmentation using deep learning: a review. IEEE Access 9, 111985–112004 (2021).

Ng, W. Y. et al. Updates in deep learning research in ophthalmology. Clin. Sci. 135, 2357–2376 (2021).

Niu, Y., Gu, L., Zhao, Y. & Lu, F. Explainable diabetic retinopathy detection and retinal image generation. IEEE J. Biomed. Health Inform. 26, 44–55 (2022).

Salini, Y. & HariKiran, J. Deepfakes on retinal Images using GAN. Int. J. Adv. Comput. Sci. Appl. 13, 701–708 (2022).

Kugelman, J., Alonso-Caneiro, D., Read, S. A. & Collins, M. J. A review of generative adversarial network applications in optical coherence tomography image analysis. J. Optom. 15, S1–S11 (2022).

Jiang, Z., Zhang, H., Wang, Y. & Ko, S.-B. Retinal blood vessel segmentation using fully convolutional network with transfer learning. Comput. Med. Imaging Graph. 68, 1–15 (2018).

Hemelings, R. et al. Accurate prediction of glaucoma from colour fundus images with a convolutional neural network that relies on active and transfer learning. Acta Ophthalmol. 98, E94–E100 (2020).

Guo, C., Yu, M. & Li, J. Prediction of different eye diseases based on fundus photography via deep transfer learning. J. Clin. Med. 10, 5481 (2021).

Tariq, H. et al. Performance analysis of deep-neural-network-based automatic diagnosis of diabetic retinopathy. Sensors 22, 205 (2022).

Pin, K., Chang, J. H. & Nam, Y. Comparative study of transfer learning models for retinal disease diagnosis from fundus images. CMC-Comput. Mat. Contin. 70, 5821–5834 (2022).

Meng, Q., Hashimoto, Y. & Satoh, S. How to extract more information with less burden: fundus image classification and retinal disease localization with ophthalmologist intervention. IEEE J. Biomed. Health Inform. 24, 3351–3361 (2020).

Zhao, R., Li, Q., Wu, J. & You, J. A nested U-shape network with multi-scale upsample attention for robust retinal vascular segmentation. Pattern Recognit. 120, 107998 (2021).

Papadopoulos, A., Topouzis, F. & Delopoulos, A. An interpretable multiple-instance approach for the detection of referable diabetic retinopathy in fundus images. Sci. Rep. 11, 14326 (2021).

Yan, Y. et al. Attention-based deep learning system for automated diagnoses of age-related macular degeneration in optical coherence tomography images. Med. Phys. 48, 4926–4934 (2021).

Thakoor, K. A. et al. Strategies to improve convolutional neural network generalizability and reference standards for glaucoma detection from OCT scans. Transl. Vis. Sci. Technol. 10, 16 (2021).

Abbood, S. H., Hamed, H. N. A., Rahim, M. S. M., Alaidi, A. H. M. & ALRikabi, H. T. S. DR-LL Gan: diabetic retinopathy lesions synthesis using generative adversarial network. Int. J. Online Biomed. Eng. 18, 151–163 (2022).

Litjens, G. et al. A survey on deep learning in medical image analysis. Med. Image Anal. 42, 60–88 (2017).

De Fauw, J. et al. Clinically applicable deep learning for diagnosis and referral in retinal disease. Nat. Med. 24, 1342–1350 (2018).

Zetterberg, M. Age-related eye disease and gender. Maturitas 83, 19–26 (2016).

Bourne, R. R. A. Ethnicity and ocular imaging. Eye (Lond.) 25, 297–300 (2011).

González-Gonzalo, C. et al. Trustworthy AI: Closing the gap between development and integration of AI systems in ophthalmic practice. Prog. Retin Eye Res. 90, 101034 (2022).

Burlina, P., Joshi, N., Paul, W., Pacheco, K. D. & Bressler, N. M. Addressing artificial intelligence bias in retinal disease diagnostics. arXiv.org https://arxiv.org/abs/2004.13515v4 (2020).

Cheng, C.-Y. et al. Big data in ophthalmology. Asia-Pac. J. Ophthalmol. 9, 291 (2020).

Kayaalp, M. Patient privacy in the era of big data. Balk. Med J. 35, 8–17 (2018).

Alonso-Fernandez, F., Hernandez-Diaz, K., Ramis, S., Perales, F. J. & Bigun, J. Facial masks and soft-biometrics: Leveraging face recognition CNNs for age and gender prediction on mobile ocular images. IET Biometr. 10, 562–580 (2021).

Hamidouche, M., Bellafqira, R., Quellec, G. & Coatrieux, G. White-box Membership Attack Against Machine Learning Based Retinopathy Classification. Preprint at https://doi.org/10.48550/arXiv.2206.03584 (2022).

Saad, A., Turgut, F., Becker, M., DeBuc, D. & Somfai, G. M. Stakeholder attitudes on AI integration in ophthalmology. Klin. Monbl Augenheilkd. 242, 515–520 (2025).

Keskinbora, K. H. Medical ethics considerations on artificial intelligence. J. Clin. Neurosci. 64, 277–282 (2019).

Ethical standards in robotics and AI | Nature Electronics. https://www.nature.com/articles/s41928-019-0213-6.

Yoo, T. K. et al. Explainable machine learning approach as a tool to understand factors used to select the refractive surgery technique on the expert level. Transl. Vis. Sci. Technol. 9, 8 (2020).

Singh, A. et al. Evaluation of explainable deep learning methods for ophthalmic diagnosis. Clin. Ophthalmol. 15, 2573–2581 (2021).

Liu, T. Y. A. & Bressler, N. M. Controversies in artificial intelligence. Curr. Opin. Ophthalmol. 31, 324–328 (2020).

Raman, R. et al. Using artificial intelligence for diabetic retinopathy screening: Policy implications. Indian J. Ophthalmol. 69, 2993–2998 (2021).

He, M., Li, Z., Liu, C., Shi, D. & Tan, Z. Deployment of artificial intelligence in real-world practice: opportunity and challenge. Asia-Pac. J. Ophthalmol. 9, 299–307 (2020).

Xie, Y. et al. Health economic and safety considerations for artificial intelligence applications in diabetic retinopathy screening. Transl. Vis. Sci. Technol. 9, 22 (2020).

Ramessur, R. et al. Impact and challenges of integrating artificial intelligence and telemedicine into clinical ophthalmology. Asia-Pac. J. Ophthalmol. 10, 317 (2021).

Tan, T.-E. et al. Retinal photograph-based deep learning algorithms for myopia and a blockchain platform to facilitate artificial intelligence medical research: a retrospective multicohort study. Lancet Digit. Health 3, E317–E329 (2021).

Gutierrez, L. et al. Application of artificial intelligence in cataract management: current and future directions. Eye Vis. (Lond.) 9, 3 (2022).

Halfpenny, W. & Baxter, S. L. Towards effective data sharing in ophthalmology: data standardization and data privacy. Curr. Opin. Ophthalmol. 33, 418–424 (2022).

Nguyen, T. X. X. et al. Federated learning in ocular imaging: current progress and future direction. Diagnostics 12, 2835 (2022).

Lal, S. et al. Adversarial attack and defence through adversarial training and feature fusion for diabetic retinopathy recognition. Sensors 21, 3922 (2021).

Zbrzezny, A. M. & Grzybowski, A. E. Deceptive tricks in artificial intelligence: adversarial attacks in ophthalmology. J. Clin. Med. 12, 3266 (2023).

Taribagil, P., Hogg, H. D. J., Balaskas, K. & Keane, P. A. Integrating artificial intelligence into an ophthalmologist’s workflow: obstacles and opportunities. Expert Rev. Ophthalmol. 18, 45–56 (2023).

Kumar, P., Chauhan, S. & Awasthi, L. K. Artificial intelligence in healthcare: review, ethics, trust challenges & future research directions. Eng. Appl. Artif. Intell. 120, 105894 (2023).

Li, Z. et al. Artificial intelligence in ophthalmology: the path to the real-world clinic. Cell Rep. Med. 4, 101095 (2023).

Bhambra, N., Antaki, F., Malt, F. E., Xu, A. & Duval, R. Deep learning for ultra-widefield imaging: a scoping review. Graefes Arch. Clin. Exp. Ophthalmol. 260, 3737–3778 (2022).

Oganov, A. C. et al. Artificial intelligence in retinal image analysis: development, advances, and challenges. Surv. Ophthalmol. 68, 905–919 (2023).

Lin, W.-C., Chen, J. S., Chiang, M. F. & Hribar, M. R. Applications of artificial intelligence to electronic health record data in ophthalmology. Transl. Vis. Sci. Technol. 9, 13 (2020).

Chotcomwongse, P., Ruamviboonsuk, P. & Grzybowski, A. Utilizing large language models in ophthalmology: the current landscape and challenges. Ophthalmol. Ther. https://doi.org/10.1007/s40123-024-01018-6 (2024).

Betzler, B. K. et al. Large language models and their impact in ophthalmology. Lancet Digit. Health 5, e917–e924 (2023).

Channa, R., Iordachita, I. & Handa, J. T. Robotic vitreoretinal surgery. Retin. -J. Retin. Vitr. Dis. 37, 1220–1228 (2017).

Roizenblatt, M., Grupenmacher, A. T., Belfort Junior, R., Maia, M. & Gehlbach, P. L. Robot-assisted tremor control for performance enhancement of retinal microsurgeons. Br. J. Ophthalmol. 103, 1195–1199 (2019).

He, C. et al. Toward safe retinal microsurgery: development and evaluation of an RNN-based active interventional control framework. IEEE Trans. Biomed. Eng. 67, 966–977 (2020).

Ebrahimi, A. et al. Adaptive control improves sclera force safety in robot-assisted eye surgery: a clinical study. IEEE Trans. Biomed. Eng. 68, 3356–3365 (2021).

Ebrahimi, A., Sefati, S., Gehlbach, P., Taylor, R. H. & Iordachita, I. I. Simultaneous online registration-independent stiffness identification and tip localization of surgical instruments in robot-assisted eye surgery. IEEE Trans. Robot. 39, 1373–1387 (2023).

Edwards, T. L. et al. First-in-human study of the safety and viability of intraocular robotic surgery. Nat. Biomed. Eng. 2, 649–656 (2018).

Cehajic-Kapetanovic, J. et al. First-in-human robot-assisted subretinal drug delivery under local anesthesia. Am. J. Ophthalmol. 237, 104–113 (2022).

Jungo, A. et al. Unsupervised out-of-distribution detection for safer robotically guided retinal microsurgery. Int. J. Comput. Assist. Radiol. Surg. 18, 1085–1091 (2023).

Yang, Y. et al. A digital mask to safeguard patient privacy. Nat. Med. 28, 1883–1892 (2022).

Burlina, P. et al. Low-shot deep learning of diabetic retinopathy with potential applications to address artificial intelligence bias in retinal diagnostics and rare ophthalmic diseases. JAMA Ophthalmol. 138, 1070–1077 (2020).

Gillon, R. Medical ethics: four principles plus attention to scope. BMJ 309, 184–188 (1994).

Jobin, A., Ienca, M. & Vayena, E. The global landscape of AI ethics guidelines. Nat. Mach. Intell. 1, 389–399 (2019).

Chowdhary, K. R. Introducing Artificial Intelligence. In Fundamentals of Artificial Intelligence (ed Chowdhary, K. R.) 1–23. https://doi.org/10.1007/978-81-322-3972-7_1 (Springer India, New Delhi, 2020).

Donthu, N., Kumar, S., Mukherjee, D., Pandey, N. & Lim, W. M. How to conduct a bibliometric analysis: an overview and guidelines. J. Bus. Res. 133, 285–296 (2021).

van Eck, N. J. & Waltman, L. Software survey: VOSviewer, a computer program for bibliometric mapping. Scientometrics 84, 523–538 (2010).

Aria, M. & Cuccurullo, C. bibliometrix: an R-tool for comprehensive science mapping analysis. J. Informetr. 11, 959–975 (2017).

Abràmoff, M. D. et al. Foundational considerations for artificial intelligence using ophthalmic images. Ophthalmology 129, e14–e32 (2022).

Acknowledgements

This study was funded by the National Natural Science Foundation of China (NSFC, grant nos. 82441003, 82301265 and 82301256), the Science and Technology Planning Project of Guangdong Province (grant no. 2023A1111120011), the China Postdoctoral Science Foundation (grant no. 2023M734047) and the Young Elite Scientists Sponsorship Program by CAST (grant no. 2024QNRC001). The funders played no role in study design, data collection, analysis, and interpretation of data, or the writing of this manuscript.

Author information

Contributions

These authors contributed equally: X.C., Y.Y. and D.Y. These authors jointly supervised this work: P.Y.W.M., M.J.B., L.L. and H.L. H.L. conceived and designed the study. H.L. and Y.Y. managed project administration. X.C. and Y.Y. were responsible for data curation, formal analysis, investigation, software development, and visualization. X.C., Y.Y. and M.L. developed methodology. X.C., Y.Y., R.W. and Y.L. contributed to data visualization. D.Y., R.W. and H.L. provided supervision. D.Y., H.L., X.C. and Y.H. drafted the original manuscript. D.Y., R.W., Y.L., W.Z., P.Y.W.M., M.J.B., L.L. and H.L. conducted the review of the manuscript for important intellectual content. All authors have read and approved the final manuscript. H.L. is the study guarantor.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Chen, X., Yang, Y., Yun, D. et al. Current status and solutions for AI ethics in ophthalmology: a bibliometric analysis. npj Digit. Med. 8, 594 (2025). https://doi.org/10.1038/s41746-025-01976-6

DOI: https://doi.org/10.1038/s41746-025-01976-6