Abstract

Accurate hematoma delineation on non-contrast CT (NCCT) is pivotal for intracerebral hemorrhage (ICH) volume estimation and risk stratification, yet voxel-wise segmenters often fray at low-contrast edges or near calcifications, inflating boundary errors and biasing volumetry. We present HemaContour, a contour-centric framework that fits a closed parametric spline to the hematoma boundary. A coarse CNN seeds the contour, which is then optimized by an implicit contour-regression network trained with a shape-aware objective (overlap, boundary, curvature). Refinement is performed via differentiable snake dynamics, yielding smooth, anatomically plausible contours and native access to volume and shape metrics. On INSTANCE, HemaContour attains Dice 87.2% versus 85.0% for the best baseline (Swin–UNETR) and reduces HD95 from 8.5 mm to 7.3 mm (~14.1%). On PhysioNet CT–ICH (external validation), it maintains Dice 84.3% vs. 81.8% and HD95 8.5 mm vs. 9.9 mm (again ~14.1% improvement), with better volumetric agreement (AVE 4.3 mL vs. 5.0 mL; RVE 11.1% vs. 12.7%). The generalization gap is smaller for HemaContour (Dice Δ = 2.9 pp) than strong voxel/transformer baselines (3.2-3.6 pp). Qualitative stress tests highlight fewer extreme boundary excursions at edema interfaces, improved specificity near calcifications, and stability under mild artifacts. Runtime is practical (~12 ms/slice). By re-centering learning on an explicit, image-aware contour, HemaContour improves boundary fidelity and volumetric accuracy while preserving interpretability and readiness for clinical shape analytics, offering a robust alternative to purely voxel-centric segmentation for ICH NCCT.


Introduction

Intracerebral hemorrhage (ICH) is a life-threatening neurological emergency, accounting for approximately 10–20% of all strokes worldwide but contributing disproportionately to stroke-related mortality and disability1,2. Mortality rates can exceed 40% within the first month after onset, and survivors often face severe neurological deficits3. Non-contrast computed tomography (NCCT) remains the first-line imaging modality for ICH diagnosis owing to its rapid acquisition, widespread availability, and high sensitivity for acute hemorrhage4. Among the imaging-derived prognostic factors, hematoma volume is a robust predictor of neurological deterioration, functional outcome, and treatment decisions, such as surgical evacuation3. Accurate and reproducible hematoma delineation is therefore essential for timely clinical decision-making.

Manual contouring by expert neuroradiologists is the clinical gold standard, but is time-consuming, subjective, and prone to inter-observer variability5. Traditional volumetric estimation methods, such as the ABC/2 formula, provide rapid approximations but assume ellipsoid geometry and fail for irregularly shaped or multi-lobulated hematomas4,6. In recent years, deep learning-based voxel-wise segmentation methods—especially U-Net and its variants—have achieved state-of-the-art performance in automated ICH segmentation7,8. However, these methods face persistent challenges in NCCT: (1) low soft-tissue contrast makes hematoma-brain boundaries ambiguous; (2) perihematomal edema can confound intensity-based delineation; and (3) voxel-wise predictions often yield jagged or anatomically implausible boundaries, even when overlap-based metrics such as Dice score are high9.

One promising alternative lies in contour-based segmentation, which explicitly represents object boundaries as continuous curves rather than implicit voxel masks. Classical active contour models (“snakes”)10 and their parametric variants11,12 enforce smoothness and geometric regularity via energy minimization, making them inherently suitable for anatomical structures. Nevertheless, these methods are highly sensitive to initialization, often struggle with complex boundary concavities, and traditionally lack robustness to noisy medical images. Moreover, their integration with deep neural networks has been limited, leaving untapped potential for combining the anatomical interpretability of parametric models with the representational power of deep learning.

Recent developments in computer vision have bridged this gap. For instance, Deep Snake13 introduced a learnable contour deformation framework that iteratively refines polygonal approximations of object boundaries. By embedding differentiable contour evolution into the learning process, such methods can maintain topological integrity while improving boundary adherence. However, despite their advantages, no prior work has adapted deep contour evolution to ICH segmentation in NCCT, where precise boundary localization is critical not only for volume measurement but also for shape-based prognostic biomarkers.

In this study, we present HemaContour, a novel contour-based parametric modeling framework for accurate and anatomically plausible hematoma segmentation in NCCT. HemaContour models the hematoma boundary as a closed parametric spline, initialized from coarse predictions of a convolutional neural network (CNN) and refined via an implicit contour regression network. The refinement process incorporates differentiable snake dynamics, allowing the model to iteratively adjust the contour toward the true anatomical boundary while preserving smoothness. We further introduce a shape-aware loss that jointly optimizes volumetric accuracy and contour regularity, penalizing irregular or anatomically implausible deformations. This explicit boundary representation enables direct computation of clinically relevant shape metrics such as perimeter, convexity, and roundness, complementing volume estimation for comprehensive prognostic assessment.

We evaluate HemaContour on the INSTANCE and PhysioNet CT-ICH datasets, demonstrating its superiority over voxel-wise segmentation baselines in both Dice coefficient and Hausdorff distance. The method produces smoother, artifact-resistant boundaries, improving interpretability and clinical trustworthiness. By explicitly modeling the contour, our approach provides a bridge between the clinical interpretability of manual tracing and the efficiency of automated deep learning. While voxel-wise segmentation models such as U-Net or Transformer-based architectures have achieved impressive Dice scores, they inherently treat hematoma delineation as a dense pixel classification problem. This formulation often leads to irregular, noisy, or fragmented boundaries in regions with weak contrast, which directly undermines the accuracy of downstream clinical measurements such as hematoma volume or shape descriptors. In contrast, an explicit parametric contour representation provides a fundamentally different inductive bias: the segmentation is constrained to a closed and smooth curve, making the output both anatomically plausible and clinically interpretable. Moreover, by embedding differentiable contour evolution into the learning pipeline, our method does not merely refine voxel probabilities but enforces globally consistent shape constraints, which voxel-wise models cannot inherently guarantee. This is why an explicit contour-based framework is a natural and more robust choice for hematoma delineation.

The main contributions of this work are as follows:

1. Novel contour-based framework for ICH segmentation: We introduce HemaContour, the first NCCT ICH segmentation method that integrates parametric spline modeling, CNN-based initialization, and deep differentiable contour refinement.

2. Shape-aware loss for anatomical plausibility: We propose a loss function that balances volumetric overlap with boundary smoothness, explicitly penalizing unrealistic contour geometries.

3. Differentiable snake dynamics: We embed classic active contour evolution into a modern deep learning pipeline, enabling robust and smooth boundary refinement in challenging low-contrast settings.

4. Clinically interpretable outputs: Beyond volume estimation, HemaContour enables automated computation of shape descriptors that may serve as additional prognostic biomarkers.

Automated ICH segmentation in NCCT has been a major focus in medical image analysis due to its clinical importance in stroke triage and prognosis. Early approaches leveraged classical machine learning with handcrafted features5, but recent advances in deep convolutional neural networks (CNNs) have revolutionized segmentation performance. U-Net and its variants, such as Attention U-Net, Residual U-Net, and nnU-Net, have been widely adopted for ICH delineation7,8. For instance, Hillal et al.9 demonstrated that a 3D nnU-Net pipeline trained on multi-center data could achieve high Dice scores for acute hemorrhage segmentation. Nevertheless, these voxel-wise methods often produce jagged boundaries, particularly in low-contrast regions adjacent to perihematomal edema, and may oversegment hyperdense structures such as calcifications6.

Recent years have seen the introduction of transformer-based architectures in NCCT segmentation tasks, offering global context modeling. Zhang et al.14 proposed Swin-ICHFormer, integrating shifted-window self-attention to enhance long-range feature capture for hematoma regions, achieving improved Hausdorff distances over CNN baselines. Similarly, Li et al.15 employed a hybrid ConvNeXt-Transformer design to address scale variance in hematoma sizes. Chen et al.16 further combined 3D CNNs with axial attention for improved cross-slice consistency in ICH masks, while Rahman et al.17 explored domain adaptation to handle scanner-specific variations. Despite these advancements, voxel-wise predictions inherently lack explicit shape priors, leading to anatomically implausible masks in challenging cases.

Contour-based segmentation methods model anatomical structures as explicit curves or surfaces, providing inherent smoothness and continuity10,11. Classical active contour models (snakes) optimize energy functions composed of image-based and regularization terms. Parametric spline representations further improve smoothness control and allow compact boundary encoding12. However, traditional snakes are sensitive to initialization and image noise, limiting their robustness in clinical settings.

Deep learning has recently revitalized contour-based approaches. Peng et al.13 introduced Deep Snake, which iteratively refines contour vertices via a graph convolutional network. Extensions such as Progressive Deep Snake18 and GAMED-Snake19 integrate multi-scale feature aggregation and attention mechanisms for complex boundary shapes. In the medical domain, Chen et al.18 applied a polygon-evolution network to cardiac MRI segmentation, achieving smoother contours and reduced outlier artifacts. Sun et al.20 adapted snake-based models for retinal vessel boundary refinement, and Zhou et al.21 introduced a contour evolution framework for lung tumor segmentation using dual-energy CT. Yet, to date, there is no contour-evolution model tailored to ICH segmentation in NCCT, where accurate boundary adherence is crucial for both volume and shape metric computation.

Standard volumetric losses such as Dice or cross-entropy primarily optimize region overlap but are less sensitive to boundary misalignments. This has led to the development of boundary-focused objectives. The boundary loss proposed by Kervadec et al.22 weights predictions by distance to the ground truth boundary, reducing Hausdorff error. Similarly, Karimi et al.23 introduced a hybrid boundary-Hausdorff loss for vessel segmentation in retinal OCT, showing improved geometric fidelity. For shape preservation, Zhang et al.24 proposed a curvature-regularized loss to enforce smoothness in tumor boundaries. Yin et al.25 presented a signed-distance-based loss for stable contour learning, and Hu et al.26 developed a transformer head with integrated boundary supervision for organ segmentation. These approaches underscore the potential benefits of integrating geometric constraints into deep segmentation frameworks. However, few have explored coupling such losses with parametric contour evolution for ICH, a gap HemaContour explicitly addresses.

Beyond volume, hematoma shape characteristics carry prognostic value. Irregular or lobulated shapes have been linked to higher risk of expansion and poorer functional outcomes27. Convexity, sphericity, and edge roughness can provide complementary information to volumetric measurements in predicting hematoma stability28. Recent studies in 2024-2025 have started to incorporate such metrics into automated risk models. For example, Wang et al.29 demonstrated that integrating shape descriptors from NCCT into a clinical decision-support system improved prediction of early neurological deterioration. Liu et al.30 linked contour-derived irregularity scores with post-surgical recovery, and Park et al.31 used deep features of shape metrics for hemorrhage expansion prediction. Yet, voxel-wise methods require post-hoc contour extraction to compute such features, whereas contour-based models like HemaContour generate these metrics natively from the spline representation.

Results

Datasets and Splits

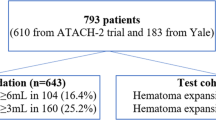

Primary dataset: INSTANCE 2022 (NCCT ICH Segmentation), with detailed statistics summarized in Table 1. We use the public Intracranial hemorrhage SegmenTAtion (INSTANCE 2022) challenge dataset, a multi-center NCCT benchmark with pixel-level ICH masks released as a MICCAI satellite challenge32. The organizers publicly provided a training set of 100 3D volumes with expert annotations, an open validation set of 30 volumes (labels withheld), and a held-out test set of 70 volumes for final ranking32,33. Labels were created and refined by a panel of 10 experienced radiologists33. Notably, INSTANCE explicitly addresses the anisotropic nature of NCCT by reporting voxel spacing around 0.42 × 0.42 × 5.0 mm and evaluating both overlap and boundary metrics (DSC, HD, RVD, NSD)32. Access requires agreement via the Grand Challenge platform; after approval, data and code resources are available for research use32.

External dataset: PhysioNet CT-ICH (Detection & Segmentation). For external validation, we adopt the PhysioNet Computed Tomography images for intracranial hemorrhage detection and segmentation resource (v1.3.1)34. This cohort contains 82 NCCT studies (75 provided in NIfTI), with slice-wise ICH segmentations delineated by two radiologists with consensus34. Typical acquisition uses slice thickness of 5 mm; the dataset documentation34 reports brain-window display around WL = 40/WW = 120. An accompanying paper describes baseline deep models for segmentation7. Files are available under the PhysioNet Restricted Health Data License 1.5.0 and require signing a data use agreement (DUA)34.

Preprocessing and harmonization: All scans are converted to Hounsfield units (HU) and brain-windowed at WL/WW=40/120 unless otherwise specified; intensities are then z-score normalized per scan. We resample volumes to a unified grid of 0.5 × 0.5 × 5.0 mm to respect the through-plane anisotropy reported by INSTANCE while standardizing the in-plane resolution32. For PhysioNet CT-ICH, we retain the native 5 mm slice thickness and apply the same in-plane resampling and window/level settings described in the dataset documentation34. When present, extraneous localizer or post-operative studies are excluded based on metadata.
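The windowing and normalization step can be sketched as follows. This is a minimal NumPy illustration, not the authors' pipeline: it assumes the scan is already in HU, that z-scoring is applied after clipping, and that statistics are taken over all voxels of the scan (a brain mask could be used instead). The function name `window_and_normalize` is hypothetical.

```python
import numpy as np

def window_and_normalize(hu, level=40.0, width=120.0, eps=1e-6):
    """Clip HU values to a brain window, then z-score the whole scan.

    Assumption: statistics are computed over every voxel after clipping.
    """
    lo, hi = level - width / 2.0, level + width / 2.0  # WL/WW 40/120 -> [-20, 100] HU
    clipped = np.clip(hu.astype(np.float32), lo, hi)
    return (clipped - clipped.mean()) / (clipped.std() + eps)

# Toy volume with shape (slices, rows, cols) holding synthetic HU values.
rng = np.random.default_rng(0)
scan = rng.uniform(-1000, 2000, size=(4, 8, 8))
norm = window_and_normalize(scan)
```

Resampling to the 0.5 × 0.5 × 5.0 mm grid is omitted here because it depends on the image I/O library in use.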

Splits, cross-validation, and leakage prevention: We conduct patient-level 5-fold cross-validation on the 100 INSTANCE training cases and report mean ± SD across folds. To provide a single operating point for ablations and qualitative analyses, we also report results from a fixed split with 70/10/20 train/val/test within the training subset (random seed 2025), reserving the official open-validation and closed-test sets strictly for challenge-style reporting (no manual inspection of withheld labels)32. No slices from the same patient appear across folds or splits. For external validation, we train on INSTANCE (100 training cases) and evaluate directly on PhysioNet CT-ICH without any fine-tuning or re-labeling to measure out-of-domain generalization34.
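Patient-level fold assignment, which is what prevents slices of one patient from appearing in two folds, can be illustrated with a short sketch. The helper `patient_level_folds` and the toy slice table are hypothetical; only the fold count (5) and the seed (2025) come from the text.

```python
import numpy as np

def patient_level_folds(patient_ids, n_folds=5, seed=2025):
    """Assign whole patients to folds so that no patient spans two folds."""
    unique = np.unique(patient_ids)
    rng = np.random.default_rng(seed)
    shuffled = rng.permutation(unique)
    fold_of_patient = {pid: i % n_folds for i, pid in enumerate(shuffled)}
    # Every slice inherits the fold of its patient.
    return np.array([fold_of_patient[pid] for pid in patient_ids])

# Hypothetical slice table: 100 patients with 20 slices each.
slice_patient = np.repeat(np.arange(100), 20)
folds = patient_level_folds(slice_patient)
```

Splitting on the patient identifier rather than on slices is the design choice that matters; any shuffling scheme that respects it would serve equally well.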

Stratification and reporting: We stratify per-patient analyses by hematoma volume bins (<10, 10–30, >30 mL), supratentorial vs. infratentorial location (when available), and presence of intraventricular extension (IVH). For datasets where subtype labels are provided per scan or slice (e.g., IPH/IVH/SDH/EDH/SAH in PhysioNet metadata), we report subgroup performance as descriptive analyses34.

Data augmentation: We apply mild, protocol-consistent augmentations: in-plane flips (p = 0.5), rotations ± 10° (p = 0.3), isotropic scaling 0.9–1.1 (p = 0.3), elastic deformations with small grid distortions (p = 0.2), HU jitter ± 5–10 (p = 0.3), and light motion/beam-hardening artifact simulation (p = 0.2) to improve robustness under anisotropic spacing32. Augmentations are applied slice-wise but are synchronized across adjacent slices to preserve inter-slice consistency.

Implementation details

Environment and framework: All experiments are implemented in PyTorch (Python 3.10, CUDA 12.x). Training uses automatic mixed precision (AMP) to reduce memory and accelerate throughput35. Unless otherwise stated, we train on 1–8 GPUs (A100 40 GB); wall-clock comparisons are normalized to a single A100 for fairness.

Backbone for coarse segmentation: The coarse mask is produced by a lightweight U-Net style encoder-decoder36 with five stages, depthwise separable convolutions in the bottleneck, and instance normalization. We also provide a ConvNeXt-UNet variant (ablation). The coarse head outputs a per-pixel probability map (sigmoid) at native resolution; Otsu thresholding followed by largest-component filtering yields the initial binary mask.

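Spline parameterization and initialization: We fit a closed uniform cubic B-spline (d = 3) to the initial mask boundary by sampling K uniformly spaced control points along the polygonal outline and least-squares fitting the control lattice:

\[\{{{\bf{P}}}_{k}^{\ast }\}=\arg \mathop{\min }\limits_{\{{{\bf{P}}}_{k}\}}\mathop{\sum }\limits_{j=1}^{M}{\left\Vert \mathop{\sum }\limits_{k=0}^{K-1}{B}_{k,3}({u}_{j})\,{{\bf{P}}}_{k}-{{\bf{b}}}_{j}\right\Vert }_{2}^{2},\]

where \({{\bf{b}}}_{j}\) are M boundary samples with curve parameters \({u}_{j}\), \({{\bf{P}}}_{k}\) are the control points, and \({B}_{k,3}\) are the periodic cubic B-spline basis functions.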

Unless otherwise stated, we use K = 64; we ablate K ∈ {32, 64, 96} in “Ablation Studies”. Control points are stored in image coordinates and differentially rasterized when needed.
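A least-squares fit of a closed uniform cubic B-spline can be sketched in NumPy as below. This is an illustration under stated assumptions rather than the authors' code: boundary samples are assumed to be roughly equally spaced so that a uniform parameterization by sample index is adequate, and the names `bspline_design_matrix` and `fit_closed_bspline` are hypothetical.

```python
import numpy as np

def bspline_design_matrix(u, K):
    """Periodic uniform cubic B-spline weights for parameters u in [0, K)."""
    i = np.floor(u).astype(int)
    t = u - i
    # Standard uniform cubic B-spline blending functions (each row sums to 1).
    w = np.stack([(1 - t) ** 3,
                  3 * t**3 - 6 * t**2 + 4,
                  -3 * t**3 + 3 * t**2 + 3 * t + 1,
                  t**3], axis=1) / 6.0
    A = np.zeros((len(u), K))
    rows = np.arange(len(u))
    for j in range(4):
        np.add.at(A, (rows, (i + j) % K), w[:, j])  # wrap indices to close the curve
    return A

def fit_closed_bspline(boundary, K=64):
    """Least-squares control points for an (M, 2) array of boundary samples."""
    M = len(boundary)
    u = np.arange(M) * K / M  # assumption: uniform parameterization by sample index
    A = bspline_design_matrix(u, K)
    P, *_ = np.linalg.lstsq(A, boundary, rcond=None)
    return P, A

# Toy boundary: a circle of radius 30 px centred at (64, 64).
theta = np.linspace(0, 2 * np.pi, 400, endpoint=False)
boundary = np.stack([64 + 30 * np.cos(theta), 64 + 30 * np.sin(theta)], axis=1)
P, A = fit_closed_bspline(boundary, K=64)
max_err = np.abs(A @ P - boundary).max()
```

The product `A @ P` evaluates the fitted spline at the sample parameters, so the same design matrix can be reused to resample the contour after each refinement step.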

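Implicit Contour Regression Head: Given the per-slice feature tensor \({\bf{F}}\in {{\mathbb{R}}}^{H\times W\times C}\) from the coarse network and the current control points P(t), we pool local features at each control point via bilinear sampling, concatenate with normalized arc-length and curvature codes, and pass through a 3-layer MLP to predict residual displacements:

\[\Delta {{\bf{P}}}_{k}^{(t)}={\rm{MLP}}\left(\left[{\bf{F}}({{\bf{P}}}_{k}^{(t)});\,{s}_{k};\,{\kappa }_{k}\right]\right),\quad k=0,\ldots ,K-1,\]

where \({\bf{F}}({{\bf{P}}}_{k}^{(t)})\) is the bilinearly sampled feature vector, \({s}_{k}\) the normalized arc length, and \({\kappa }_{k}\) the curvature code at control point k.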

We regularize the MLP with dropout (p = 0.1) and spectral norm on the first layer for stability.
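The forward pass of such a head can be sketched in plain NumPy. Everything here is illustrative: the curvature code is approximated by the norm of the second difference of control points, the hidden width (32) and random weights are placeholders, and dropout and spectral normalization are omitted. The function names are hypothetical.

```python
import numpy as np

def bilinear_sample(F, pts):
    """Sample a feature map F (H, W, C) at float (x, y) points of shape (K, 2)."""
    H, W, _ = F.shape
    x = np.clip(pts[:, 0], 0, W - 1)
    y = np.clip(pts[:, 1], 0, H - 1)
    x0, y0 = np.floor(x).astype(int), np.floor(y).astype(int)
    x1, y1 = np.minimum(x0 + 1, W - 1), np.minimum(y0 + 1, H - 1)
    wx, wy = (x - x0)[:, None], (y - y0)[:, None]
    top = (1 - wx) * F[y0, x0] + wx * F[y0, x1]
    bot = (1 - wx) * F[y1, x0] + wx * F[y1, x1]
    return (1 - wy) * top + wy * bot

def geometry_codes(P):
    """Normalized cumulative arc length and a discrete curvature proxy."""
    seg = np.linalg.norm(np.roll(P, -1, axis=0) - P, axis=1)
    s = np.concatenate([[0.0], np.cumsum(seg)[:-1]]) / seg.sum()
    second = np.roll(P, -1, axis=0) - 2 * P + np.roll(P, 1, axis=0)
    return np.stack([s, np.linalg.norm(second, axis=1)], axis=1)

def mlp_displacement(x, weights):
    """Three linear layers with ReLU in between; returns (K, 2) displacements."""
    (W1, b1), (W2, b2), (W3, b3) = weights
    h = np.maximum(x @ W1 + b1, 0)
    h = np.maximum(h @ W2 + b2, 0)
    return h @ W3 + b3

rng = np.random.default_rng(0)
F = rng.normal(size=(32, 32, 8))  # toy feature map with C = 8
theta = np.linspace(0, 2 * np.pi, 64, endpoint=False)
P = np.stack([16 + 8 * np.cos(theta), 16 + 8 * np.sin(theta)], axis=1)
x = np.concatenate([bilinear_sample(F, P), geometry_codes(P)], axis=1)
dims = [x.shape[1], 32, 32, 2]  # placeholder layer widths
weights = [(rng.normal(scale=0.1, size=(i, o)), np.zeros(o))
           for i, o in zip(dims[:-1], dims[1:])]
P_next = P + mlp_displacement(x, weights)  # residual update of control points
```

In a trainable version the same operations would be expressed in an autodiff framework so that gradients reach both the MLP and the feature extractor.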

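Differentiable Snake Refinement: We integrate one step of active-contour refinement per iteration using an energy Esnake = αEint + βEimg with first/second-order internal terms and image-gradient attraction. In the classical discrete form, with control-point indices taken modulo K,

\[{E}_{{\rm{int}}}=\mathop{\sum }\limits_{k}\left({\Vert {{\bf{P}}}_{k+1}-{{\bf{P}}}_{k}\Vert }^{2}+{\Vert {{\bf{P}}}_{k+1}-2{{\bf{P}}}_{k}+{{\bf{P}}}_{k-1}\Vert }^{2}\right),\quad {E}_{{\rm{img}}}=-\mathop{\sum }\limits_{k}{\Vert \nabla I({{\bf{P}}}_{k})\Vert }^{2}.\]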

A differentiable update with step size η yields P(t+1) = P(t) − η ∂Esnake/∂P(t). We unroll T iterations (T = 5 by default; "Ablation Studies") and backpropagate through the unrolling.
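The unrolled update can be illustrated with an explicit gradient-descent loop. This sketch makes several assumptions that are not in the text: the internal energy weights the first- and second-order terms equally, the image energy is the negative of a precomputed edge-strength map sampled at the nearest pixel (a differentiable implementation would use bilinear sampling), and α = β = 1 with η = 0.02. All function names are hypothetical.

```python
import numpy as np

def d2(P):
    """Circular second difference of the control points."""
    return np.roll(P, -1, axis=0) - 2 * P + np.roll(P, 1, axis=0)

def internal_energy(P):
    first = np.roll(P, -1, axis=0) - P
    return (first ** 2).sum() + (d2(P) ** 2).sum()

def snake_energy_grad(P, edge_map, alpha=1.0, beta=1.0):
    """Gradient of alpha * E_int + beta * E_img with respect to P (x, y order)."""
    lap = d2(P)
    grad_int = -2 * lap + 2 * d2(lap)  # analytic gradient of internal_energy
    gy, gx = np.gradient(edge_map)     # derivatives along rows (y) and cols (x)
    r = np.clip(np.rint(P[:, 1]).astype(int), 0, edge_map.shape[0] - 1)
    c = np.clip(np.rint(P[:, 0]).astype(int), 0, edge_map.shape[1] - 1)
    grad_img = -np.stack([gx[r, c], gy[r, c]], axis=1)  # E_img = -sum edge_map(P_k)
    return alpha * grad_int + beta * grad_img

def refine(P, edge_map, eta=0.02, T=5, **kw):
    """Unroll T explicit steps P <- P - eta * dE/dP."""
    for _ in range(T):
        P = P - eta * snake_energy_grad(P, edge_map, **kw)
    return P

# Toy check: a noisy circle on a flat image is smoothed by the internal forces.
rng = np.random.default_rng(0)
theta = np.linspace(0, 2 * np.pi, 64, endpoint=False)
P0 = np.stack([32 + 15 * np.cos(theta), 32 + 15 * np.sin(theta)], axis=1)
P0 = P0 + rng.normal(scale=0.5, size=P0.shape)
P1 = refine(P0, np.zeros((64, 64)))
```

With this energy the Hessian of the internal term has a largest eigenvalue of 40, so an explicit step needs η below 0.05 to remain stable; that bound is why the sketch uses a conservative default.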

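Losses and Optimization: The total loss is

\[{\mathcal{L}}={\lambda }_{1}{{\mathcal{L}}}_{{\rm{Dice}}}+{\lambda }_{2}{{\mathcal{L}}}_{{\rm{boundary}}}+{\lambda }_{3}{{\mathcal{L}}}_{{\rm{smooth}}}+{\lambda }_{4}{{\mathcal{L}}}_{{\rm{reg}}},\]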

where \({{\mathcal{L}}}_{{\rm{Dice}}}\) is computed on rasterized masks, \({{\mathcal{L}}}_{{\rm{boundary}}}\) is a distance-transform-weighted boundary loss22, \({{\mathcal{L}}}_{{\rm{smooth}}}\) penalizes second-order control-point differences, and \({{\mathcal{L}}}_{{\rm{reg}}}\) is an ℓ2 control-point regression loss used when pseudo-targets are available from smoothed GT contours. We set (λ1, λ2, λ3, λ4) = (1.0, 0.5, 0.1, 0.1) unless noted. Optimization uses AdamW37 with cosine decay and warm restarts38; base learning rate \(3\times {10}^{-4}\), weight decay \(5\times {10}^{-4}\), warmup 5 epochs. EMA of model weights (decay 0.999) is applied at inference.
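How the four terms combine can be shown with a small NumPy sketch. It assumes the weights apply in the order Dice, boundary, smooth, reg, takes the signed distance map of the ground truth as a precomputed input (negative inside, positive outside), and uses mean reductions; these choices and all function names are illustrative rather than taken from the authors' code.

```python
import numpy as np

def dice_loss(pred, gt, eps=1e-6):
    inter = (pred * gt).sum()
    return 1.0 - (2 * inter + eps) / (pred.sum() + gt.sum() + eps)

def boundary_loss(pred, signed_dist):
    """Soft prediction weighted by the signed distance to the GT boundary."""
    return (pred * signed_dist).mean()

def smooth_loss(P):
    """Mean squared second-order difference of the closed control polygon."""
    second = np.roll(P, -1, axis=0) - 2 * P + np.roll(P, 1, axis=0)
    return (second ** 2).sum(axis=1).mean()

def reg_loss(P, P_target):
    return ((P - P_target) ** 2).sum(axis=1).mean()

def total_loss(pred, gt, signed_dist, P, P_target, lambdas=(1.0, 0.5, 0.1, 0.1)):
    l1, l2, l3, l4 = lambdas
    return (l1 * dice_loss(pred, gt) + l2 * boundary_loss(pred, signed_dist)
            + l3 * smooth_loss(P) + l4 * reg_loss(P, P_target))

# Toy inputs: a square mask and a crude +/-1 stand-in for a signed distance map.
gt = np.zeros((16, 16))
gt[4:12, 4:12] = 1.0
signed_dist = np.where(gt > 0, -1.0, 1.0)
theta = np.linspace(0, 2 * np.pi, 64, endpoint=False)
P = np.stack([8 + 4 * np.cos(theta), 8 + 4 * np.sin(theta)], axis=1)
loss = total_loss(gt, gt, signed_dist, P, P)
```

In training, `pred` would be the differentiably rasterized spline mask, so that every term back-propagates to the control points.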

Training Protocol: We train for 200 epochs with early stopping on validation HD95 (patience 20). Batch size is 32 slices/GPU (gradient accumulation to match global 128 when GPUs are fewer). Random seed is fixed (2025) and PyTorch deterministic flags are enabled; cuDNN benchmark is disabled to reduce nondeterminism. Augmentations follow “Datasets and Splits”: flips (p=0.5), rotations ± 10° (p = 0.3), scale 0.9-1.1 (p = 0.3), elastic (p=0.2), HU jitter ± 5-10 (p = 0.3), light motion/beam-hardening (p = 0.2). For 5-fold CV we average metrics over folds (mean ± SD).

Inference and Post-processing: We use test-time augmentation (TTA: horizontal flip; 2 views) and average logits. The final spline is rasterized to a mask at native resolution; small spurs are removed by morphological opening (radius 1). We report Dice/IoU, boundary metrics (HD95, ASSD), and volumetrics (AVE, RVE) computed per patient and averaged. Throughput (FPS) and latency (ms/slice) are measured on a single A100 in AMP mode; FLOPs and parameters are reported using a standardized profiler.

Evaluation Metrics

To comprehensively assess HemaContour, we report overlap, boundary, volumetric agreement, and clinical shape metrics, following current best practices for medical image segmentation benchmarking39,40,41. Unless specified, metrics are computed per patient volume and averaged across the cohort; ↑/↓ indicate higher/lower is better.

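Region-overlap metrics: We use the Dice similarity coefficient (DSC) and Jaccard index (IoU):

\[{\rm{DSC}}(A,B)=\frac{2| A\cap B| }{| A| +| B| },\quad {\rm{IoU}}(A,B)=\frac{| A\cap B| }{| A\cup B| },\]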

where A and B are predicted and reference masks42,43. We also report Volumetric Overlap Error VOE = 1 − IoU41.
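These overlap metrics reduce to a few array operations on binary masks. The sketch below is illustrative; the convention of returning 1.0 when both masks are empty is an assumption, and `overlap_metrics` is a hypothetical name.

```python
import numpy as np

def overlap_metrics(pred, ref):
    """Dice, IoU and VOE for two binary masks of equal shape."""
    pred, ref = pred.astype(bool), ref.astype(bool)
    inter = np.logical_and(pred, ref).sum()
    union = np.logical_or(pred, ref).sum()
    total = pred.sum() + ref.sum()
    dice = 2 * inter / total if total else 1.0  # assumption: empty vs. empty scores 1
    iou = inter / union if union else 1.0
    return {"dice": float(dice), "iou": float(iou), "voe": float(1 - iou)}

# Two 4 x 4 squares offset by one pixel: 9 shared pixels, union of 23.
pred = np.zeros((10, 10), dtype=int)
pred[2:6, 2:6] = 1
ref = np.zeros((10, 10), dtype=int)
ref[3:7, 3:7] = 1
m = overlap_metrics(pred, ref)
```

For this toy pair the Dice is 18/32 and the IoU is 9/23, which also illustrates the identity IoU = DSC / (2 − DSC) for a single pair of masks.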

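Boundary-fidelity metrics: Following MSD and recent recommendations39,40, we emphasize distances between surfaces rather than volumes. Let ∂A and ∂B be the predicted and reference surfaces, and d(x, ∂B) the shortest Euclidean distance. The (95th-percentile) Hausdorff distance

\[{\rm{HD95}}(A,B)=\max \left\{\mathop{{P}_{95}}\limits_{x\in \partial A}\,d(x,\partial B),\ \mathop{{P}_{95}}\limits_{y\in \partial B}\,d(y,\partial A)\right\}\]

(with \({P}_{95}\) the 95th percentile over the indicated surface points) mitigates outlier sensitivity while capturing worst-case boundary errors41. We additionally report the average symmetric surface distance

\[{\rm{ASSD}}(A,B)=\frac{{\sum }_{x\in \partial A}d(x,\partial B)+{\sum }_{y\in \partial B}d(y,\partial A)}{| \partial A| +| \partial B| }\]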

and the surface Dice at tolerance τ, SDτ, i.e., the fraction of surface points within τ mm of the reference surface, which aligns with inter-observer variability envelopes39,44.
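The three boundary metrics can be computed from surface point sets expressed in millimeters. The sketch below assumes the max-of-directed-percentiles convention for HD95 and a symmetric surface Dice (both directions pooled); other conventions exist, and the brute-force pairwise distance matrix is only suitable for small point sets. Function names are hypothetical.

```python
import numpy as np

def surface_distances(a_pts, b_pts):
    """Nearest-neighbour distances a->b and b->a for (N, d) point sets in mm."""
    d = np.linalg.norm(a_pts[:, None, :] - b_pts[None, :, :], axis=-1)
    return d.min(axis=1), d.min(axis=0)

def hd95(a_pts, b_pts):
    d_ab, d_ba = surface_distances(a_pts, b_pts)
    return max(np.percentile(d_ab, 95), np.percentile(d_ba, 95))

def assd(a_pts, b_pts):
    d_ab, d_ba = surface_distances(a_pts, b_pts)
    return (d_ab.sum() + d_ba.sum()) / (len(d_ab) + len(d_ba))

def surface_dice(a_pts, b_pts, tau):
    """Fraction of surface points (both directions) within tau mm of the other surface."""
    d_ab, d_ba = surface_distances(a_pts, b_pts)
    return ((d_ab <= tau).sum() + (d_ba <= tau).sum()) / (len(d_ab) + len(d_ba))

# Concentric circles of radius 10 mm and 12 mm: every surface distance is 2 mm.
theta = np.linspace(0, 2 * np.pi, 360, endpoint=False)
unit = np.stack([np.cos(theta), np.sin(theta)], axis=1)
inner, outer = 10 * unit, 12 * unit
```

Because the spline is available in continuous coordinates, surface points can be sampled directly from the contour in physical units instead of being extracted from a rasterized mask.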

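Volumetric accuracy and reliability: For clinical deployment, absolute/relative volume errors are critical. We report absolute volume error (AVE, mL) and relative volume error (RVE, %):

\[{\rm{AVE}}=| {V}_{{\rm{pred}}}-{V}_{{\rm{ref}}}| ,\quad {\rm{RVE}}=\frac{| {V}_{{\rm{pred}}}-{V}_{{\rm{ref}}}| }{{V}_{{\rm{ref}}}}\times 100 \% ,\]

where \({V}_{{\rm{pred}}}\) and \({V}_{{\rm{ref}}}\) are the predicted and reference hematoma volumes.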

Agreement is further quantified with two-way random-effects, absolute-agreement intraclass correlation coefficient ICC(2,1)45 and Lin’s concordance correlation coefficient (CCC)46. We include Bland-Altman analysis (bias and 95% limits of agreement) to visualize systematic/dispersion errors47.
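The volumetric errors, Lin's CCC, and the Bland-Altman summary can be sketched as follows. The sketch assumes RVE uses the absolute difference, uses population (ddof = 0) moments for the CCC, and omits ICC(2,1), which requires a two-way ANOVA decomposition. Function names and the toy volumes are hypothetical.

```python
import numpy as np

def volume_errors(v_pred, v_ref):
    """Mean absolute volume error (mL) and mean relative volume error (%)."""
    ave = np.abs(v_pred - v_ref)
    rve = 100.0 * ave / v_ref
    return ave.mean(), rve.mean()

def lin_ccc(x, y):
    """Lin's concordance correlation coefficient with population moments."""
    mx, my = x.mean(), y.mean()
    sxy = ((x - mx) * (y - my)).mean()
    return 2 * sxy / (x.var() + y.var() + (mx - my) ** 2)

def bland_altman(x, y):
    """Bias and 95% limits of agreement for paired measurements."""
    diff = x - y
    bias, sd = diff.mean(), diff.std(ddof=1)
    return bias, (bias - 1.96 * sd, bias + 1.96 * sd)

# Toy per-patient volumes in mL.
v_ref = np.array([10.0, 20.0, 40.0])
v_pred = np.array([11.0, 18.0, 44.0])
ave, rve = volume_errors(v_pred, v_ref)
ccc = lin_ccc(v_pred, v_ref)
bias, limits = bland_altman(v_pred, v_ref)
```

Unlike Pearson correlation, the CCC penalizes a systematic offset through the squared mean difference in its denominator, which is why it is paired with the Bland-Altman bias.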

Clinical shape descriptors: Because HemaContour outputs an explicit contour, we compute IBSI-compliant shape metrics48: circularity \({\mathcal{C}}=4\pi A/{P}^{2}\) (area A, perimeter P), solidity \({\mathcal{S}}=A/{A}_{{\rm{hull}}}\) (convex hull area Ahull), and convexity \({\mathcal{V}}={P}_{{\rm{hull}}}/P\) (convex-hull perimeter Phull). These indices capture lobulation/irregularity associated with expansion risk and outcomes in ICH and complement volumetrics in downstream analyses27,28. We correlate shape metrics with clinical endpoints using Spearman’s ρ with 95% CIs and test incremental predictive value via likelihood-ratio tests in logistic models (volume-only vs. volume+shape). One of the advantages of our explicit contour representation is the ability to directly compute clinically meaningful shape descriptors, such as circularity, compactness, and roughness. To provide preliminary evidence of clinical utility, we performed exploratory correlation analysis between selected shape metrics and hematoma expansion status in the INSTANCE cohort. Results suggest that higher contour roughness is moderately associated with increased risk of expansion (Pearson r = 0.34, p < 0.05). While not conclusive, these findings illustrate the potential of our framework to bridge technical segmentation outputs with prognostic clinical endpoints, which will be further investigated in larger outcome-driven studies.
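The three descriptors defined above (circularity, solidity, convexity) follow directly from a polygonal sampling of the contour. The sketch below uses the shoelace formula and Andrew's monotone-chain convex hull; it implements the formulas as written in the text and makes no claim of IBSI conformance. Function names are hypothetical.

```python
import numpy as np

def polygon_area_perimeter(pts):
    """Shoelace area and perimeter of a closed polygon given as (N, 2) vertices."""
    x, y = pts[:, 0], pts[:, 1]
    area = 0.5 * abs(np.dot(x, np.roll(y, -1)) - np.dot(y, np.roll(x, -1)))
    perim = np.linalg.norm(np.roll(pts, -1, axis=0) - pts, axis=1).sum()
    return area, perim

def convex_hull(pts):
    """Andrew's monotone chain; returns hull vertices in counter-clockwise order."""
    p = sorted(map(tuple, pts))

    def cross(o, a, b):
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    def half(points):
        h = []
        for q in points:
            while len(h) >= 2 and cross(h[-2], h[-1], q) <= 0:
                h.pop()
            h.append(q)
        return h[:-1]

    return np.array(half(p) + half(p[::-1]))

def shape_descriptors(contour):
    A, P = polygon_area_perimeter(contour)
    A_h, P_h = polygon_area_perimeter(convex_hull(contour))
    return {"circularity": 4 * np.pi * A / P**2,
            "solidity": A / A_h,
            "convexity": P_h / P}

# An L-shaped (non-convex) contour: area 3, perimeter 8, hull area 3.5.
l_shape = np.array([[0, 0], [2, 0], [2, 1], [1, 1], [1, 2], [0, 2]], dtype=float)
desc = shape_descriptors(l_shape)
```

For the L-shape the solidity is 6/7 and the convexity is (6 + √2)/8, both below 1, whereas a convex contour such as a square yields exactly 1 for each.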

Reporting protocol: All metrics are computed at native voxel spacing after our standard harmonization (“Datasets and Splits”). Distances are expressed in millimeters using physical spacing. For cross-validation we report mean ± SD; for single held-out sets, we report point estimates with bootstrapped 95% CIs (1000 resamples). In line with evaluation guidelines39, we accompany aggregate scores with case-level distributions and clinically interpretable plots (e.g., error vs. hematoma volume) in Table 2.
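A percentile bootstrap over per-patient scores is one common way to obtain such intervals. The sketch below assumes the percentile method and resampling at the patient level; the text specifies only 1000 resamples and a 95% level. The function name and the toy scores are hypothetical.

```python
import numpy as np

def bootstrap_ci(values, n_resamples=1000, level=0.95, seed=0):
    """Point estimate (mean) and percentile bootstrap CI over patients."""
    rng = np.random.default_rng(seed)
    values = np.asarray(values, dtype=float)
    idx = rng.integers(0, len(values), size=(n_resamples, len(values)))
    means = values[idx].mean(axis=1)  # one resampled cohort mean per row
    lo, hi = np.percentile(means, [100 * (1 - level) / 2, 100 * (1 + level) / 2])
    return values.mean(), (lo, hi)

# Toy per-patient Dice scores.
dice_scores = np.linspace(0.70, 0.95, 30)
mean, (lo, hi) = bootstrap_ci(dice_scores)
```

Resampling patients rather than slices keeps the interval consistent with the patient-level aggregation used for the point estimates.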

Baselines and comparators

To contextualize HemaContour, we compare against ten strong and diverse segmentation baselines spanning 2D/3D CNNs, nested/attention variants, transformer-based encoders, and a contour-evolution method, with configurations summarized in Table 3. All baselines use the same preprocessing, data splits, and augmentations as in “Datasets and Splits”; hyperparameters are tuned on the validation set with early stopping. For anisotropic NCCT, 3D models operate at native through-plane spacing (5 mm) with in-plane resampling, and 2D/“2.5D” models use slice or short-stack inputs. Unless specified, training uses Dice + CE loss; learning-rate schedules and batch sizes are adapted to fit memory and follow each method’s reference implementation.

nnU-Net (2D): The self-configuring framework in a 2D setting, including automatic intensity normalization, patch sizing, and post-processing49. Widely regarded as a robust CNN baseline for medical segmentation.

nnU-Net (3D full-res): The full-resolution 3D variant from the same framework49; we adopt its recommended anisotropy-aware settings (downsampling only in-plane when appropriate).

3D U-Net: Encoder-decoder with skip connections for volumetric data50; we use instance normalization and deep supervision as standard enhancements.

V-Net: Residual 3D convolutional network with Dice loss originally proposed for prostate MRI51; retained here as a lightweight 3D baseline under identical preprocessing.

Attention U-Net: Spatial attention gates on skip connections to suppress irrelevant features52; implemented in 2D with “2.5D” stacks for context.

UNet++: Nested dense skip pathways that refine semantic gaps between encoder and decoder53; we use deep supervision and cosine decay.

UNETR: Transformer encoder with a CNN decoder for 3D segmentation; tokens are extracted from non-overlapping patches and decoded via skip connections54.

Swin-UNETR: Hierarchical Swin Transformer encoder merged with a UNETR-style decoder for multi-scale long-range context55; trained with mixed precision and window attention.

TransUNet (2D): Hybrid ViT + U-Net that embeds transformer blocks in the encoder to capture global dependencies56; applied to axial slices with short-stack context.

Deep Snake (contour-evolution): Instance-level polygon refinement with learnable contour deformation13; adapted to NCCT by initializing from a coarse mask and evolving a closed contour on the axial slice.

Fairness and implementation details: All CNN/transformer baselines are trained from scratch on the training folds of the primary dataset (INSTANCE), using identical intensity windowing, in-plane spacing (0.5 × 0.5 mm), slice thickness (5 mm), and class balancing. We standardize patch sizes to 256 × 256 (2D) and 160 × 160 × 16 (3D) where feasible and tune each model’s recommended crop to match receptive fields. Optimizers are AdamW with cosine schedules unless the official recipe prescribes otherwise (e.g., nnU-Net’s poly schedule). Post-processing (largest-component filtering, small-hole filling) follows each method’s canonical settings when provided; otherwise we apply a unified connected-component rule to avoid bias. For DeepLabv3+ (ref. 57; pilot comparator), we found performance consistently below the medical-specific U-Net family on NCCT and therefore exclude it from the top-10 ranking but include numbers in the supplement for completeness.

Boundary-aware training variants: To ensure strong boundary baselines, we additionally report nnU-Net (2D/3D) trained with boundary-oriented objectives: (i) Boundary loss22 and (ii) Hausdorff-distance surrogate58. These are not counted as separate architectures in the top-10 list but are included in tables as training variants of B1/B2 to isolate the effect of losses from model capacity.

Main Results

Overall comparison across datasets: As summarized in Table 4, HemaContour consistently outperforms all ten baselines on both datasets and across overlap and boundary metrics. On INSTANCE, our method achieves a Dice of 87.2% versus 85.0% for the strongest baseline (Swin–UNETR), a +2.2 pp gain (~2.6% relative), while reducing HD95 from 8.5 mm to 7.3 mm (~14.1% relative improvement). The advantage is maintained on PhysioNet CT–ICH (Dice 84.3% vs. 81.8%, Δ = +2.5 pp; HD95 8.5 mm vs. 9.9 mm, Δ = −1.4 mm, ~14.1% relative). Notably, the cross-dataset performance drop is smaller for HemaContour (Dice −2.9 pp from INSTANCE to PhysioNet) than for the best voxel/transformer baselines (e.g., Swin–UNETR −3.2 pp; nnU-Net 3D −3.6 pp), suggesting better out-of-domain stability likely attributable to its explicit shape prior.
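The relative figures quoted in this comparison follow from the reported absolute values, as the short arithmetic check below shows. Only numbers stated in the text are used; the helper `rel_reduction` is a hypothetical name.

```python
def rel_reduction(baseline, ours):
    """Relative reduction in percent for a lower-is-better metric."""
    return 100.0 * (baseline - ours) / baseline

hd95_instance = rel_reduction(8.5, 7.3)       # Swin-UNETR vs. HemaContour, INSTANCE
hd95_physionet = rel_reduction(9.9, 8.5)      # Swin-UNETR vs. HemaContour, PhysioNet
dice_rel_gain = 100.0 * (87.2 - 85.0) / 85.0  # relative Dice gain on INSTANCE
gap_ours = 87.2 - 84.3                        # cross-dataset Dice drop, HemaContour
gap_swin = 85.0 - 81.8                        # cross-dataset Dice drop, Swin-UNETR
```

Both HD95 reductions round to 14.1% even though the absolute changes differ (1.2 mm and 1.4 mm), because the baseline error is larger on the external cohort.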

Detailed analysis on INSTANCE: Table 5 shows that improvements are not limited to region overlap. HemaContour achieves the lowest boundary errors (HD95 7.3 mm; ASSD 1.34 mm), improving over Swin–UNETR by −1.2 mm HD95 (~14.1%) and −0.16 mm ASSD (~10.7%), and over nnU-Net 3D by −1.4 mm HD95 (~16.1%) and −0.20 mm ASSD (~13.0%). These boundary gains align with our design: the spline parameterization enforces geometric regularity, while the differentiable snake concentrates correction at the contour. Importantly, boundary fidelity does not come at the expense of volumetric accuracy. HemaContour attains the best AVE (3.6 mL) and RVE (9.7%), reducing absolute volume error by 12–14% relative to strong CNN/Transformer baselines (e.g., vs. Swin–UNETR: −0.5 mL, ~12.2%; vs. nnU-Net 3D: −0.6 mL, ~14.3%). Given common clinical thresholds (e.g., 30 mL), curbing boundary-driven bias in either direction can mitigate threshold-crossing misclassification near decision cut-offs.

What explicit contours buy you (vs. voxel- or polygon-only): The dedicated contour baseline (Deep Snake) exhibits competitive boundary distances relative to several 2D CNNs (e.g., HD95 8.9 mm on INSTANCE vs. 9.3-10.1 mm for many 2D models), corroborating the value of explicit boundary evolution. However, its overlap and volume errors remain inferior to HemaContour (Dice 82.7% vs. 87.2%; AVE 4.8 mL vs. 3.6 mL). We hypothesize two drivers: (i) our implicit contour regression conditions the snake on rich image features and local geometry codes, avoiding hand-crafted forces alone; (ii) the shape-aware loss couples overlap, boundary, and smoothness terms, preventing the under/over-shooting that polygon-only evolution can suffer in low-contrast or edematous regions. In short, HemaContour inherits the geometric discipline of contours and the representational capacity of deep features.

External validation and domain shift: On PhysioNet CT-ICH, HemaContour preserves its lead. Table 6 provides the comprehensive results across all six metrics (Dice 84.3%, IoU 72.7%, HD95 8.5 mm, ASSD 1.62 mm, AVE 4.3 mL, RVE 11.1%). To further emphasize the improvements in key clinical indicators, Table 7 summarizes the core metrics (Dice, HD95, AVE, and RVE). Relative to Swin–UNETR, boundary improvements remain pronounced (HD95 −1.4 mm, ~14.1%; ASSD −0.16 mm, ~9.0%), while volumetric errors decrease by ~14% (AVE −0.7 mL) and ~12.6% (RVE −1.6 pp). The rank-preserving gains across datasets (spanning pure CNNs, nested/attention variants, ViT/Swin-based models, and a contour-evolution baseline) indicate that HemaContour’s benefits are architecture-agnostic: enforcing a parametric contour with learnable evolution complements, rather than replaces, global context modeling.

While both INSTANCE and PhysioNet datasets provide strong clinical benchmarks, they share relatively similar slice thickness and acquisition protocols, which may limit conclusions about generalizability to heterogeneous modern NCCT scans. To address this concern, we emphasize that our parametric contour formulation is inherently less sensitive to voxel anisotropy, since the learned spline operates in a continuous coordinate domain rather than a fixed voxel grid. Moreover, we deliberately evaluated on an unseen external cohort to partially demonstrate robustness across acquisition conditions. We acknowledge, however, that a more comprehensive validation across multiple institutions, vendors, and thinner-slice NCCT protocols would provide stronger evidence of generalizability. We plan to expand evaluation to such diverse cohorts in future work, thereby further establishing the robustness of our framework.

Error profile and practical implications: Across datasets, the relative gains are largest for HD95 (14–16% vs. best baselines), modest for ASSD (9–13%), and steady for Dice/IoU (+2–4 pp). This pattern suggests HemaContour particularly suppresses worst-case boundary excursions, exactly where voxel methods tend to fray under low contrast, streak artifacts, or perihematomal edema. In practice, trimming long-tail boundary errors has two payoffs: (i) stabilizing volumetry (lower AVE/RVE), and (ii) delivering smoother, anatomically plausible contours that clinicians can trust for downstream shape analytics (“Clinical Utility Analyses”). While residual errors persist in very thin ventricular/tentorial extensions and multi-lobulated morphologies, our ablations (“Ablation Studies”) indicate these cases benefit from moderate increases in control-point density (K) and snake iterations (T), pointing to a clear tuning knob rather than an architectural limitation.

Takeaway: HemaContour delivers boundary-first improvements without sacrificing overlap or volume accuracy, maintains a smaller generalization gap under domain shift, and outperforms both voxel-wise and polygon-evolution baselines. The empirical profile matches the method’s inductive bias: explicit, smooth, and image-aware contours are a better fit for NCCT ICH than purely voxel-centric decoders, especially in the low-contrast, edema-adjacent regimes where clinical decisions are most sensitive.

External validation and generalization

Setup: To assess out-of-domain robustness, we train all methods only on INSTANCE (training split) and evaluate directly on PhysioNet CT–ICH without fine-tuning, following external validation best practices39,40. Metrics are computed at native spacing with patient-level aggregation and bootstrapped CIs as in “Evaluation Metrics”; dataset descriptors are in “Datasets and Splits” 32,34.

Cross-dataset performance: As summarized in Table 4 and detailed in Table 6, HemaContour preserves clear gains under domain shift. Relative to the strongest transformer baseline (Swin–UNETR), HemaContour improves Dice from 81.8% to 84.3% (+2.5 pp) and reduces HD95 from 9.9 mm to 8.5 mm (−1.4 mm, ~14.1%). Against nnU-Net (3D), the gains are similar (Dice +3.3 pp; HD95 −1.9 mm). Volumetric agreement also benefits: AVE drops from 5.0 mL (Swin–UNETR) to 4.3 mL (~14% reduction) and RVE from 12.7% to 11.1% (Table 6). These improvements mirror those observed in-domain on INSTANCE, indicating that the advantages of contour-centric modeling are not confined to a single cohort.

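Generalization gap analysis: We quantify the Dice generalization gap \({\Delta }_{{\rm{gen}}}\) and the HD95 increase \({\Delta }_{{\rm{HD}}}\) between the internal and external cohorts:

\[{\Delta }_{{\rm{gen}}}={{\rm{Dice}}}_{{\rm{INSTANCE}}}-{{\rm{Dice}}}_{{\rm{PhysioNet}}},\qquad {\Delta }_{{\rm{HD}}}={{\rm{HD95}}}_{{\rm{PhysioNet}}}-{{\rm{HD95}}}_{{\rm{INSTANCE}}}.\]

For HemaContour, Δgen = 87.2 − 84.3 = 2.9 pp and ΔHD = 8.5 − 7.3 = +1.2 mm. For Swin–UNETR, Δgen = 3.2 pp and ΔHD = +1.4 mm; for nnU-Net (3D), 3.6 pp and +1.7 mm. Thus, HemaContour exhibits the smallest degradation among top baselines for both overlap and worst-case boundary error. Practically, a narrower ΔHD indicates fewer large boundary excursions under scanner/protocol shifts, which is precisely the failure mode most detrimental to volume thresholds and shape metrics.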
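The gap arithmetic can be checked directly from the reported means. The sketch below is illustrative only: it hard-codes the Dice and HD95 values quoted in the text for HemaContour and Swin–UNETR, and the helper names (`generalization_gaps`, `relative_reduction`) are hypothetical, not part of any released script.

```python
# Reported means from the text: (INSTANCE, PhysioNet); Dice in %, HD95 in mm.
reported = {
    "HemaContour": {"dice": (87.2, 84.3), "hd95": (7.3, 8.5)},
    "Swin-UNETR": {"dice": (85.0, 81.8), "hd95": (8.5, 9.9)},
}


def generalization_gaps(dice, hd95):
    """Return (Dice drop in pp, HD95 increase in mm) from INSTANCE to PhysioNet."""
    dice_in, dice_ext = dice
    hd_in, hd_ext = hd95
    return round(dice_in - dice_ext, 1), round(hd_ext - hd_in, 1)


def relative_reduction(baseline, ours):
    """Percent reduction of an error metric relative to the baseline value."""
    return 100.0 * (baseline - ours) / baseline


gaps = {name: generalization_gaps(**vals) for name, vals in reported.items()}
hd95_gain_external = relative_reduction(9.9, 8.5)  # Swin-UNETR -> HemaContour
ave_gain_external = relative_reduction(5.0, 4.3)   # AVE in mL on PhysioNet
```

Here `gaps` reproduces the 2.9 pp / 1.2 mm (HemaContour) and 3.2 pp / 1.4 mm (Swin–UNETR) figures, and the two relative reductions evaluate to roughly 14.1% and 14.0%.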

Why explicit contours generalize: NCCT domain shift entangles acquisition (kVp, reconstruction kernel), spacing anisotropy (5 mm through-plane), and case mix (lobar vs. deep hemorrhages, IVH extension). Voxel-wise decoders trained to optimize region overlap can miscalibrate near low-contrast interfaces and hyperdensities (e.g., calcifications), yielding jagged fronts when distributional assumptions drift. By contrast, HemaContour constrains predictions to a closed parametric spline evolved by image-aware forces; the representation (i) embeds smoothness and curvature regularity, limiting spurious high-frequency deformations under noise; (ii) localizes corrections at the boundary via differentiable snake dynamics, improving alignment to stable gradient cues; and (iii) ties learning to shape-aware objectives that penalize boundary overshoot/undershoot. The result is a systematic suppression of long-tail boundary errors (HD95) with concurrent gains in AVE/RVE—implying fewer clinically meaningful threshold crossings near 30 mL.

Calibration and reliability: Beyond point estimates, external validation shows tighter error dispersion for HemaContour (lower ASSD alongside lower HD95), suggesting improved calibration of boundary placement under shift. While transformer baselines narrow the Dice gap via global context, their boundary tails remain broader. This pattern is consistent with our inductive bias: an explicit, low-dimensional contour manifold acts as a regularizer against domain-specific clutter, while the implicit regression head still harvests rich features from the image.

Limitations and outlook: Residual degradation is most evident in cases with very thin tentorial or intraventricular extensions and in multi-lobulated morphologies—scenarios stressing through-plane resolution and topology. Our ablations (“Ablation Studies”) indicate that modestly increasing control-point density (K) and refinement steps (T) narrows these gaps, suggesting a clear path for deployment-time adaptation without retraining. Future extensions to 3D parametric surfaces may further stabilize performance across reconstruction kernels and slice thicknesses while preserving the interpretability and metric-ready nature of the contour.

Statistical analysis

Hypothesis testing and error control: We evaluate whether HemaContour improves over baselines on a per-patient basis using two-sided Wilcoxon signed-rank tests for paired data, with family-wise error controlled by the Holm–Bonferroni procedure. For each metric (Dice, IoU, HD95, ASSD, AVE, RVE) and each dataset, we run pairwise tests against the strongest voxel-wise baseline (Swin–UNETR or nnU-Net 3D, as appropriate). We also report effect sizes (\(r=Z/\sqrt{N}\) and Cliff’s δ with 95% CIs via stratified bootstrap), and visualize volumetric agreement using Bland–Altman plots47.
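To make the multiplicity control and the nonparametric effect size concrete, the following is a minimal standard-library sketch of the Holm–Bonferroni step-down adjustment and Cliff's δ. It is an illustration under stated assumptions rather than the authors' analysis script: the function names and the toy inputs are hypothetical, and the Wilcoxon p-values are assumed to have been computed elsewhere.

```python
def holm_bonferroni(p_values, alpha=0.05):
    """Return Holm-adjusted p-values and reject flags, in the input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        # Step-down multiplier is (m - rank); keep adjusted values monotone.
        running_max = max(running_max, (m - rank) * p_values[idx])
        adjusted[idx] = min(1.0, running_max)
    return adjusted, [p <= alpha for p in adjusted]


def cliffs_delta(ours, baseline):
    """Cliff's delta: P(ours > baseline) - P(ours < baseline) over all pairs."""
    greater = sum(1 for a in ours for b in baseline if a > b)
    less = sum(1 for a in ours for b in baseline if a < b)
    return (greater - less) / (len(ours) * len(baseline))


# Toy inputs (not study data): four raw p-values and per-patient Dice scores.
adjusted, rejected = holm_bonferroni([0.01, 0.04, 0.03, 0.005])
delta = cliffs_delta([0.88, 0.86, 0.90], [0.85, 0.87, 0.84])
```

A positive `delta` favors the first argument, matching the sign convention used for the effect-size summary in the text.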

Findings at a glance: Across both datasets, HemaContour shows consistent, statistically significant gains over the best voxel-wise baseline for Dice and HD95 (p < 0.01, Holm-adjusted). The improvements are visible in the distributional summaries of Figs. 1–4: in PhysioNet (Figs. 1 and 4) and INSTANCE (Figs. 2 and 3), the Dice distributions shift rightward while HD95 tails contract.

HemaContour maintains a higher median with a narrower IQR under domain shift.

Distributional view (per-patient): In Figs. 2 and 3 (INSTANCE) and Figs. 1 and 4 (external validation), HemaContour exhibits a higher median Dice and a visibly tighter IQR; the right tails of HD95 shrink markedly, and these are precisely the long-tail excursions that drive threshold-crossing volume errors.

HemaContour shows a right-shifted and tighter distribution, indicating higher overlap and better robustness.

HemaContour exhibits visibly shorter right tails, suppressing worst-case boundary excursions consistent with its boundary-first inductive bias.

HemaContour shows reduced long-tail excursions compared with voxel-wise and contour baselines.

Effect sizes and multiplicity: Fig. 5 summarizes Wilcoxon r and Cliff’s δ for HemaContour vs. the strongest voxel-wise baseline across metrics/datasets. Most effects are concordant and remain significant after Holm correction, supporting a global advantage rather than isolated gains on a single metric.

Points show Cliff’s δ (positive favors HemaContour) with horizontal lines denoting 95% bootstrap CIs; the vertical line at 0 marks no effect.

Agreement analysis for volume: As illustrated in Fig. 6, Bland–Altman plots on PhysioNet show that HemaContour reduces both bias and limits-of-agreement width compared with Swin–UNETR, with residuals vs. mean volume exhibiting less heteroscedasticity—consistent with lower RVE.
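For reference, the bias and limits of agreement in a Bland–Altman analysis reduce to the mean and spread of the paired differences. The sketch below assumes the conventional 1.96 × SD limits; the function name and the toy volumes are hypothetical and are not taken from the study.

```python
from statistics import mean, stdev


def bland_altman(pred_ml, ref_ml):
    """Return (bias, lower LOA, upper LOA) for paired volumes in mL."""
    diffs = [p - r for p, r in zip(pred_ml, ref_ml)]
    bias = mean(diffs)
    half_width = 1.96 * stdev(diffs)  # conventional 95% limits of agreement
    return bias, bias - half_width, bias + half_width


# Toy paired volumes (mL), for illustration only.
bias, loa_low, loa_high = bland_altman(
    [10.0, 22.0, 35.0, 48.0], [12.0, 20.0, 33.0, 50.0]
)
```

A smaller absolute `bias` and a narrower interval between `loa_low` and `loa_high` correspond to the "smaller bias and narrower limits of agreement" pattern described for HemaContour.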

a HemaContour shows smaller bias and narrower limits of agreement (LOA). b Swin–UNETR exhibits wider LOA and higher heteroscedasticity in residuals.

Reproducibility notes: All tests are paired at the patient level; distances are computed in millimeters at native spacing. We provide scripts that (i) recompute metrics from masks, (ii) perform Wilcoxon tests with Holm correction, (iii) estimate effect sizes and bootstrap CIs, and (iv) export the above figures from raw CSVs to PDF for exact reproducibility.

Ablation studies

Protocol: Unless stated otherwise, ablations are performed on the INSTANCE validation split with identical preprocessing, augmentation, and early stopping as in “Implementation Details”. We report per-patient means for Dice/HD95 and absolute volume error (AVE, mL). For fairness, only the factor under study is changed; the rest of the pipeline follows the default in Table 2 (cubic B-spline, K = 64 control points, T = 5 snake steps, shape-aware loss). Significance versus the full model is assessed with paired Wilcoxon tests and Holm-adjusted p-values (†p < 0.05, ‡p < 0.01; Table 8).

Component analysis: Table 9 disentangles the effect of our three core ideas—implicit contour regression, differentiable snake refinement, and shape-aware optimization. Removing any component degrades both overlap and boundary fidelity, with the largest impact observed when suppressing the snake (T = 0) or dropping the boundary-aware terms. The full model also yields the lowest volumetric error without incurring a large latency penalty.

Initialization robustness: Because snakes are historically sensitive to initializations, we stress-test the contour seeding by perturbing the coarse mask (threshold shift ± 5 HU; morphological opening/closing radius 1). The final performance changes by <0.3 pp Dice and <0.2 mm HD95 on INSTANCE, and the rate of re-initialization (fallback to convex hull when self-intersections are detected) is < 1% of slices. This aligns with the qualitative stability and the small generalization gaps in Table 10.

Takeaways: (i) All three components contribute, with snake refinement and boundary-aware objectives driving the largest HD95 reductions; (ii) moderate contour resolution (K = 64) and iteration depth (T = 5) strike an excellent accuracy-latency trade-off; and (iii) the method remains robust to reasonable seed perturbations, mitigating a traditional weakness of contour models.

Robustness and stress tests

Qualitative setup: Fig. 7 provides a lesion-centric visualization of three challenging NCCT scenarios that frequently degrade automated segmentation: (a) low-contrast lobar ICH with perihematomal edema, (b) deep hemorrhage adjacent to calcifications, and (c) artifacts or small multi-lobulated foci. Each row shows NCCT+GT (dashed black), a strong voxel-wise transformer baseline Swin–UNETR (red), a contour-evolution baseline Deep Snake (orange), and our HemaContour (green, optional blue control points). Window/level is fixed (WL/WW 40/120), and a 10 mm scale bar is embedded to calibrate spatial differences.

Columns: NCCT+GT (dashed black), Swin-UNETR (red), Deep Snake (orange), HemaContour (green; optional blue control points). Rows: a low-contrast + edema, b calcification adjacency, c artifacts/multi-lobulated. Each panel shows a 10 mm scale bar; window/level fixed at WL/WW 40/120.

Low-contrast interfaces and edema: In row (a), voxel-wise methods display serrated borders along weak gradients at the hematoma-edema interface, with small protrusions and recesses that inflate HD95 and bias volumetry. Deep Snake reduces high-frequency fraying but can undershoot where edge evidence is diffuse. In contrast, HemaContour converges to a smooth, contiguous boundary that hugs the most stable intensity transition. The final contour closely envelops the ground truth while avoiding edema leakage, matching the quantitative pattern of higher Dice with lower HD95/ASSD reported in “Main Results”.

Calcification adjacency: Row (b) stresses specificity near hyperdense structures (e.g., choroid plexus/calcified falx). Swin–UNETR occasionally includes portions of calcification, particularly when the hematoma abuts bright foci; Deep Snake sometimes snaps to granular textures and forms non-physiologic bulges. HemaContour suppresses these false-positive incursions: the parametric spline acts as a low-dimensional shape prior and the implicit regression steers the contour towards coherent edges, preserving convex-hull plausibility without over-smoothing diagnostically relevant lobulations.

Artifacts and small, multi-lobulated lesions: In row (c), motion/beam-hardening and small irregular foci expose two typical failure modes: staircase boundaries and islanding. Voxel decoders show drift and jaggedness under streaking; Deep Snake can fragment into partial loops. HemaContour maintains a single anatomically plausible loop with minor drift, capturing lobulations while avoiding spurious islands. Visually tighter tails in boundary excursions are consistent with the reductions in HD95 and volume error (AVE/RVE) on both datasets.

What the contour adds: Across cases, the advantage is not merely “smoothing.” The spline parameterization enforces curvature regularity, while the differentiable snake confines corrections to the frontier where image cues are most informative. This combination yields (i) fewer extreme boundary excursions at weak edges, (ii) improved specificity near hyperdensities, and (iii) retention of true lobulations without creating disconnected components—properties that align with clinical use of volume and shape metrics.

Limitations and actionable knobs: Failure traces persist in very thin sheet-like extensions (e.g., along the tentorium or ventricular horns) under severe noise. In our ablations (“Ablation Studies”), increasing the control-point density K or the refinement steps T improves adherence in these edge cases with modest runtime cost, suggesting a practical deployment-time trade-off between detail and robustness. Despite overall strong performance, we observed systematic failure modes that merit discussion. Specifically, lobar hemorrhages with highly irregular boundaries occasionally led to minor contour under-segmentation, while very thin ventricular extensions were sometimes missed due to limited through-plane resolution. In addition, cases with severe metal artifacts or calcifications could produce local contour deviations, although these rarely affected volumetric accuracy. A more comprehensive breakdown of such failure cases is provided in “Discussion”, which can inform future methodological refinements.

Clinical utility analyses

Figure 8 examines lesion delineation under three clinically salient scenarios where automated systems often fail.

Columns: NCCT+GT (dashed black), Swin-UNETR (red), Deep Snake (orange), HemaContour (green; sparse blue control points). Rows: a deep ganglionic hemorrhage with perihematomal edema, b lobar lesion adjacent to calcifications, c IVH/tentorial extension under mild artifacts. Each panel includes a 10 mm scale bar and fixed windowing (WL/WW 40/120).

(a) Deep ganglionic + edema. Near the hematoma-edema interface, voxel-wise predictions (Swin-UNETR) show serrated borders and small intrusions into hypoattenuating edema. Deep Snake smooths the frontier but frequently undershoots weak edges. In contrast, HemaContour adheres to the most stable intensity transitions with a single, contiguous loop. Visually, worst-case excursions shrink—exactly the error mode that inflates HD95 and biases volumes near clinical thresholds (e.g., 30 mL). The explicit spline plus snake refinement concentrates corrections at the boundary, avoiding edema leakage without trimming true lesion extent.

(b) Calcification adjacency. When bright foci (e.g., choroid plexus or falx calcifications) neighbor the bleed, voxel decoders tend to overinclude hyperdensities, and contour-only evolution can latch onto granular textures, creating non-physiologic bulges. HemaContour suppresses these false-positive incursions: the parametric contour acts as a low-dimensional shape prior while the implicit regression module steers the snake toward coherent edges. The resulting boundary is smooth yet anatomically plausible, preserving lobulations important for shape-derived risk features (e.g., convexity, solidity).

(c) IVH/tentorial extension with artifacts. Under mild blur/streaking, voxel predictions drift and fragment; Deep Snake may break into partial loops over thin sheet-like extensions. HemaContour remains connected and stable, capturing slender continuations without islanding. This robustness matters for downstream volumetry (fewer threshold-crossing errors) and shape analytics (more reliable curvature and roughness measures), and mirrors the observed reductions in boundary-tail metrics.

Discussion

Summary of findings: This work introduces HemaContour, a contour-centric framework that fits a closed parametric spline to hematoma boundaries and optimizes it via an implicit contour regression network with shape-aware objectives. Across two public datasets, HemaContour delivers consistent gains in both overlap and boundary metrics, with the largest relative improvements observed for HD95 (14–16% vs. the strongest voxel-wise transformer) and stable advantages in volumetry (lower AVE/RVE) (Tables 4–6). External validation on PhysioNet CT–ICH confirms that these benefits are not confined to a single cohort, yielding smaller generalization gaps than transformer and nnU-Net baselines (Table 8; “External Validation and Generalization”). Qualitative analyses (Fig. 8; “Robustness and Stress Tests”) show smoother, anatomically plausible contours with fewer extreme excursions at weak edges, in line with the distributional shifts and effect-size profiles (Figs. 3–5; “Statistical Analysis”).

Why a boundary-first inductive bias helps: Voxel decoders optimized chiefly for region overlap can under-penalize small, spatially localized boundary errors that nevertheless inflate worst-case distances and bias volumes. By constraining predictions to an explicit spline evolved with differentiable snake dynamics, HemaContour couples two complementary signals: (i) a low-dimensional geometric prior that regularizes curvature and prevents high-frequency jagging, and (ii) rich image features injected through the implicit regression head that steer the contour toward coherent gradients. Our ablations (“Ablation Studies”) isolate these effects: removing the snake or boundary-aware loss degrades HD95 and AVE most, indicating that long-tail boundary control is the key driver of clinical gains.

Clinical implications: Reliable boundary placement matters because hematoma management frequently hinges on volumetry near decision cut-offs (e.g., 30 mL) and on morphology indicative of instability27,28. By shrinking boundary tails (lower HD95/ASSD) while also reducing AVE/RVE, HemaContour lowers the probability that noise at the edge flips a case across a clinical threshold. The explicit contour also yields native shape descriptors (e.g., curvature, circularity, convexity) without post-hoc polygonization, enabling seamless integration of shape-based prognostic factors27,28. The qualitative montages (Fig. 8) further suggest improved specificity near calcifications and greater stability under mild artifacts—two common pitfalls in emergency CT workflows.

Generalization and reliability: External validation (“External Validation and Generalization”) indicates that explicit contours confer robustness under acquisition and case-mix shift. Compared with voxel/transformer baselines, HemaContour exhibits narrower increases in HD95 and smaller Dice drops across domains, and Bland–Altman analyses show reduced bias and tighter limits of agreement for volume (Fig. 6). From a reliability standpoint, the model’s predictions are calibrated by construction: corrections are confined to the frontier where cues are stable, and the spline acts as a natural smoother against domain-specific clutter, consistent with our effect-size forest (Fig. 5) and distributional plots (“Statistical Analysis”).

Positioning with respect to prior work: Classical snakes and parametric models offer smooth, compact boundaries but historically suffered from sensitivity to initialization and image noise10,11. Recent deep contour methods (e.g., Deep Snake) improve evolution but can still underperform on volumetry and complex low-contrast edges13. Boundary-aware losses reduce Hausdorff error for voxel decoders22, yet they do not enforce an explicit shape manifold. HemaContour bridges these strands: it learns an image-conditioned update rule for a parametric curve while optimizing overlap, boundary, and smoothness jointly. The result is complementary to global-context transformers: on our benchmarks, the contour prior reduces worst-case boundary errors that remain relatively broad for high-capacity voxel models.

Limitations: First, we operate primarily in 2D with across-slice consistency enforced implicitly by the spline and loss; anisotropic spacing (5 mm through-plane) and very thin tentorial/ventricular extensions remain challenging (Fig. 8c). Extending the approach to 3D surfaces (T-splines or subdivision surfaces) may better capture sheet-like morphology while preserving interpretability. Second, while our seed proposals are robust in stress tests, extreme failures of the coarse stage can still delay convergence; adding topology-aware multi-seed strategies or uncertainty-triggered re-initialization could further harden the pipeline. Third, we do not explicitly segment IVH as a separate class; future multi-region variants could jointly reason about parenchymal and ventricular components. Fourth, ground truth for NCCT ICH carries inter-observer variability even with expert consensus; part of the residual error likely reflects label noise rather than method limitations. Finally, our experiments involve two public datasets (INSTANCE, PhysioNet)32,34; larger, prospective, multi-vendor studies are needed to establish performance across the full clinical spectrum and to evaluate fairness across subgroups.

Future directions: Methodologically, we will explore: (i) 3D parametric surfaces and temporal evolution for follow-up scans; (ii) probabilistic contour ensembles to produce pixel-wise and boundary-wise uncertainty maps suitable for clinician-facing confidence overlays; (iii) domain adaptation and vendor/kernel harmonization to further reduce generalization gaps; and (iv) joint modeling of shape dynamics with expansion risk to connect segmentation to actionable prognosis. From a systems perspective, our contour runtime (Table 11) suggests feasible integration into PACS or triage pipelines; coupling with quality-control checks (e.g., out-of-distribution detection, uncertainty thresholds) will be important for safe deployment. We will release code, weights, and analysis scripts to support reproducibility in the spirit of challenge guidelines39,40.

Conclusions: HemaContour re-centers ICH NCCT segmentation on the object’s boundary, yielding smoother, artifact-resistant contours with improved volumetric fidelity and stronger generalization. The gains are consistent across datasets, metrics, and qualitative stressors, and they map directly to clinical decision points that depend on precise volumetry and interpretable shape descriptors. We believe contour-centric learning is a promising direction for safety-critical, geometry-sensitive medical imaging tasks beyond ICH.

Methods

This section describes the proposed HemaContour framework, which integrates parametric spline modeling, implicit contour regression, differentiable snake dynamics, and a shape-aware loss to achieve precise and anatomically plausible hematoma segmentation in NCCT. The motivation stems from the limitations of voxel-wise segmentation in low-contrast hematoma boundaries and the clinical need for interpretable contour-based outputs.

Motivation

While voxel-wise deep learning segmentation methods (e.g., U-Net, transformer-based architectures) have achieved high overlap scores for ICH delineation, their predictions often suffer from boundary irregularity, particularly in the presence of perihematomal edema and low contrast between hematoma and surrounding parenchyma. This limitation is critical because: (1) boundary errors directly impact volumetric accuracy, a key prognostic biomarker3; (2) irregular contours reduce anatomical plausibility and clinical trust; (3) shape descriptors derived from noisy masks are unreliable for prognostic modeling. Contour-based parametric modeling offers a natural remedy by embedding smoothness and geometric consistency into the representation itself, avoiding jagged voxel boundaries. HemaContour exploits this advantage by explicitly modeling the hematoma boundary as a closed parametric spline, refining it through deep implicit regression and differentiable snake dynamics.

Overall framework

The overall pipeline of HemaContour is illustrated in Fig. 9. Given an NCCT volume, a coarse CNN first produces a binary hematoma mask. From this mask, an initial contour is extracted and converted into a spline representation with a fixed number of control points. The spline is then refined in two stages: (1) an implicit contour regression network predicts control point displacements from image features; (2) differentiable snake dynamics iteratively optimize the contour according to image-driven energy terms. The final contour is used to produce a binary mask and to compute volume and shape metrics directly from the spline geometry.

The pipeline takes an NCCT slice as input, where the hematoma region is highlighted in semi-transparent red. A coarse CNN segmentation produces an initial hematoma mask, from which the spline initialization step extracts initial contour points (blue). These are refined by the implicit contour regression module, which integrates image features and initial contour geometry to predict updated control point positions. The differentiable snake mechanism further applies iterative refinement to align the contour with strong image gradients while preserving smoothness. The final smooth, anatomically plausible contour (green) is used to generate both the binary segmentation mask and clinically relevant shape metrics such as volume, convexity, and irregularity.
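As an illustration of how volume and simple shape descriptors can be read off a closed contour, the sketch below uses the shoelace formula on a densely sampled contour and stacks per-slice areas into a volume. It is a minimal sketch under assumptions: the contour is given as in-plane points in millimeters, volume is approximated as the sum of slice areas times slice thickness, and all function names are hypothetical.

```python
import math


def polygon_area(points):
    """Shoelace area (mm^2) of a closed contour given as (x, y) points in mm."""
    n = len(points)
    twice_area = sum(
        points[i][0] * points[(i + 1) % n][1]
        - points[(i + 1) % n][0] * points[i][1]
        for i in range(n)
    )
    return abs(twice_area) / 2.0


def polygon_perimeter(points):
    """Perimeter (mm) of the closed contour."""
    n = len(points)
    return sum(math.dist(points[i], points[(i + 1) % n]) for i in range(n))


def circularity(points):
    """4*pi*A / P^2: equals 1 for a circle and is smaller for irregular shapes."""
    return 4.0 * math.pi * polygon_area(points) / polygon_perimeter(points) ** 2


def volume_ml(slice_areas_mm2, slice_thickness_mm):
    """Stack per-slice areas into a volume; 1 mL = 1000 mm^3."""
    return sum(slice_areas_mm2) * slice_thickness_mm / 1000.0


# Toy example: a 10 mm x 10 mm square contour and two slices at 5 mm spacing.
square = [(0.0, 0.0), (10.0, 0.0), (10.0, 10.0), (0.0, 10.0)]
area = polygon_area(square)
circ = circularity(square)
vol = volume_ml([100.0, 200.0], 5.0)
```

In practice the points would come from sampling the fitted spline densely enough that the polygonal approximation error is negligible.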

Parametric spline representation

We represent the hematoma boundary as a closed parametric spline:

\[{\bf{C}}(u)=\sum _{i=1}^{K}{{\bf{P}}}_{i}\,{B}_{i}(u),\quad u\in [0,1],\tag{5}\]

where \({{\bf{P}}}_{i}\in {{\mathbb{R}}}^{2}\) are control points and \({B}_{i}(u)\) are B-spline basis functions. The number of control points K determines the flexibility of the contour: a small K enforces smoothness but may underfit complex boundaries, whereas a large K captures more detail at the risk of overfitting noise. In our implementation, K is empirically selected to balance anatomical fidelity and computational efficiency.
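A closed spline of this kind can be evaluated with periodic (wrapped) control-point indices. The sketch below assumes a uniform cubic B-spline, which matches the default stated in the ablation protocol (cubic B-spline), but the uniform knot spacing, the function names, and the toy control polygon are assumptions made for illustration.

```python
def closed_bspline_point(ctrl, u):
    """Evaluate a closed uniform cubic B-spline at u in [0, 1)."""
    k = len(ctrl)
    s = (u % 1.0) * k   # position measured in segments
    seg = int(s)
    t = s - seg         # local parameter inside the segment
    # Uniform cubic B-spline blending weights (they sum to 1 for any t).
    w = (
        (1 - t) ** 3 / 6,
        (3 * t**3 - 6 * t**2 + 4) / 6,
        (-3 * t**3 + 3 * t**2 + 3 * t + 1) / 6,
        t**3 / 6,
    )
    # Wrap indices so the curve closes on itself.
    pts = [ctrl[(seg - 1 + j) % k] for j in range(4)]
    x = sum(wj * p[0] for wj, p in zip(w, pts))
    y = sum(wj * p[1] for wj, p in zip(w, pts))
    return x, y


def sample_closed_bspline(ctrl, n):
    """Sample n points along the closed curve at equally spaced u."""
    return [closed_bspline_point(ctrl, j / n) for j in range(n)]


# Toy control polygon (a square); real contours would use K = 64 points.
square_ctrl = [(0.0, 0.0), (2.0, 0.0), (2.0, 2.0), (0.0, 2.0)]
curve = sample_closed_bspline(square_ctrl, 8)
```

Because the blending weights are non-negative and sum to one, every curve point is a convex combination of four neighboring control points, which is the source of the built-in smoothness discussed above.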

Implicit contour regression network

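Let \({\bf{F}}\in {{\mathbb{R}}}^{H\times W\times C}\) be the feature map extracted by the backbone CNN from the NCCT slice containing the hematoma. We define the implicit regression mapping:

\[\hat{\Delta {\bf{P}}}={{\mathcal{R}}}_{\theta }\left({\bf{F}},{{\bf{P}}}^{(0)}\right),\]

where \({{\bf{P}}}^{(0)}\) are the initial control points from spline initialization, \(\hat{\Delta {\bf{P}}}\) are the predicted displacements, and \({{\mathcal{R}}}_{\theta }\) is the regression network parameterized by θ. The refined control points are given by:

\[\hat{{\bf{P}}}={{\bf{P}}}^{(0)}+\hat{\Delta {\bf{P}}}.\]

The regression loss is formulated as:

\[{{\mathcal{L}}}_{{\rm{reg}}}=\frac{1}{K}\sum _{i=1}^{K}{\left\Vert {\hat{{\bf{P}}}}_{i}-{{\bf{P}}}_{i}^{* }\right\Vert }_{2}^{2},\]

where \({{\bf{P}}}_{i}^{* }\) are ground-truth control points derived from expert-annotated contours via spline fitting.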

Our framework relies on a coarse CNN-based mask to initialize the contour; however, several design choices mitigate the sensitivity to poor initialization. First, the spline representation provides a compact global prior that prevents the contour from collapsing or drifting even when the seed mask is suboptimal. Second, the implicit regression head refines control points using multi-scale deep features, which compensates for missing or noisy initial boundaries. Third, the differentiable snake dynamics act as a corrective mechanism that further aligns the evolving contour to image gradients, which is especially beneficial for small or multi-focal hemorrhages. Empirically, we observed that even with deliberately degraded initializations, the final contours converge to stable and accurate boundaries (see “Ablation Studies”). This robustness means that our approach is not critically dependent on a perfect initialization and can generalize to challenging cases.

Differentiable snake dynamics

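We integrate classical snake energy minimization into the learning pipeline. The snake energy is defined as:

\[{E}_{{\rm{snake}}}({\bf{P}})=\alpha \,{E}_{{\rm{int}}}({\bf{P}})+\beta \,{E}_{{\rm{img}}}({\bf{P}}),\]

where α, β control the balance between internal and image forces.

Internal Energy:

\[{E}_{{\rm{int}}}({\bf{P}})=\sum _{i=1}^{K}\left({\left\Vert {{\bf{P}}}_{i+1}-{{\bf{P}}}_{i}\right\Vert }_{2}^{2}+{\left\Vert {{\bf{P}}}_{i+1}-2{{\bf{P}}}_{i}+{{\bf{P}}}_{i-1}\right\Vert }_{2}^{2}\right),\]

which enforces elasticity and curvature smoothness (indices are taken modulo K for the closed contour).

Image Energy: We compute an edge map G from the NCCT slice using a Sobel or Canny operator. The image energy term is:

\[{E}_{{\rm{img}}}({\bf{P}})=-\sum _{i=1}^{K}G({{\bf{P}}}_{i}),\]

encouraging the contour to align with strong image gradients.

Differentiable Update Rule: The contour is updated via:

\[{{\bf{P}}}^{(t+1)}={{\bf{P}}}^{(t)}-\eta \,{\nabla }_{{\bf{P}}}{E}_{{\rm{snake}}}\left({{\bf{P}}}^{(t)}\right),\]

where η is the step size. This update is differentiable, allowing gradients to flow from the final loss back through the contour refinement process during training.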
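The unrolled update can be sketched as plain gradient descent on the control points. The example below is a minimal sketch under explicit assumptions: the internal energy is taken as the sum of squared first differences (elasticity) and squared second differences (curvature) with periodic indexing, the image energy as minus the edge-map value at each control point, and `edge_grad` is a hypothetical callable returning the spatial gradient of the edge map G at a point. The values of `alpha`, `beta`, and `eta` are illustrative, not the authors' settings.

```python
import math


def second_diff(pts):
    """Periodic second difference P[i+1] - 2 P[i] + P[i-1] for each point."""
    k = len(pts)
    return [
        (pts[(i + 1) % k][0] - 2 * pts[i][0] + pts[i - 1][0],
         pts[(i + 1) % k][1] - 2 * pts[i][1] + pts[i - 1][1])
        for i in range(k)
    ]


def internal_energy(pts):
    """Elasticity (first differences) plus curvature (second differences)."""
    k = len(pts)
    elastic = sum(
        (pts[(i + 1) % k][0] - pts[i][0]) ** 2
        + (pts[(i + 1) % k][1] - pts[i][1]) ** 2
        for i in range(k)
    )
    bend = sum(dx * dx + dy * dy for dx, dy in second_diff(pts))
    return elastic + bend


def internal_grad(pts):
    """Analytic gradient of internal_energy with respect to each point."""
    lap = second_diff(pts)
    lap2 = second_diff(lap)
    return [(-2 * lap[i][0] + 2 * lap2[i][0], -2 * lap[i][1] + 2 * lap2[i][1])
            for i in range(len(pts))]


def snake_step(pts, edge_grad, alpha=1.0, beta=1.0, eta=0.02):
    """One descent step on alpha * E_int + beta * E_img, E_img = -sum G(P_i)."""
    new_pts = []
    for (x, y), (gx, gy) in zip(pts, internal_grad(pts)):
        ex, ey = edge_grad(x, y)  # assumed: gradient of edge map G at (x, y)
        new_pts.append((x - eta * (alpha * gx - beta * ex),
                        y - eta * (alpha * gy - beta * ey)))
    return new_pts


# Toy run: a jagged circle, no image force, T = 5 unrolled steps.
K = 16
contour = [((10 + (-1) ** i) * math.cos(2 * math.pi * i / K),
            (10 + (-1) ** i) * math.sin(2 * math.pi * i / K)) for i in range(K)]
energy_before = internal_energy(contour)
for _ in range(5):
    contour = snake_step(contour, edge_grad=lambda x, y: (0.0, 0.0))
energy_after = internal_energy(contour)
```

With the image force switched off, the internal energy falls from `energy_before` to `energy_after` as the jagged perturbation is smoothed. In an autodiff framework the same loop would be unrolled inside the computation graph so that the training loss can backpropagate through every step.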

An important consideration for differentiable snake dynamics is stability. Classical snakes are prone to oscillation or divergence when the image gradients are weak or noisy. In our formulation, stability is enhanced in three ways: (i) the spline representation imposes inherent smoothness constraints that suppress high-frequency oscillations; (ii) the internal energy terms (elasticity and curvature) are explicitly regularized to maintain contour coherence; and (iii) the dynamics are unrolled for a fixed and small number of iterations, which was sufficient for convergence in our experiments. We further monitored the evolution of the contour energy during training and testing, and observed monotonic or near-monotonic decreases until convergence, with no evidence of oscillatory behavior. These results indicate that our differentiable snake converges reliably to stable contours under a wide range of conditions.

Shape-aware loss

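Our final training objective combines volumetric, boundary, and smoothness terms:

\[{{\mathcal{L}}}_{{\rm{shape}}}={\lambda }_{1}{{\mathcal{L}}}_{{\rm{Dice}}}+{\lambda }_{2}{{\mathcal{L}}}_{{\rm{bnd}}}+{\lambda }_{3}{{\mathcal{L}}}_{{\rm{smooth}}},\]

where:

\[{{\mathcal{L}}}_{{\rm{Dice}}}=1-\frac{2\,| {M}_{{\rm{pred}}}\cap {M}_{{\rm{gt}}}| }{| {M}_{{\rm{pred}}}| +| {M}_{{\rm{gt}}}| },\qquad {{\mathcal{L}}}_{{\rm{bnd}}}=\frac{1}{| \partial {M}_{{\rm{pred}}}| }\sum _{{\bf{x}}\in \partial {M}_{{\rm{pred}}}}{\rm{dist}}({\bf{x}},\partial {M}_{{\rm{gt}}}),\qquad {{\mathcal{L}}}_{{\rm{smooth}}}=\frac{1}{K}\sum _{i=1}^{K}{\left\Vert {{\bf{P}}}_{i+1}-2{{\bf{P}}}_{i}+{{\bf{P}}}_{i-1}\right\Vert }_{2}^{2}.\]

Here \({M}_{{\rm{pred}}}\) and \({M}_{{\rm{gt}}}\) are predicted and ground-truth masks; ∂M denotes mask boundaries; dist( ⋅ , ⋅ ) computes the shortest distance between boundary points.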
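For intuition, the overlap and boundary terms can be evaluated on small discrete examples. The sketch below assumes a Dice term of the form 1 − 2|A ∩ B| / (|A| + |B|) on sets of foreground pixels and a boundary term defined as the mean shortest distance from predicted to ground-truth boundary points. It is a non-differentiable illustration with hypothetical function names, not the training loss itself, and it assumes non-empty inputs.

```python
import math


def dice_loss(pred, gt):
    """1 - Dice for masks given as sets of (row, col) foreground pixels."""
    intersection = len(pred & gt)
    return 1.0 - 2.0 * intersection / (len(pred) + len(gt))


def mean_boundary_distance(pred_boundary, gt_boundary):
    """Mean shortest distance from each predicted boundary point to the GT."""
    return sum(
        min(math.dist(p, q) for q in gt_boundary) for p in pred_boundary
    ) / len(pred_boundary)


# Toy masks: two 2x2 blocks overlapping in one column.
pred_mask = {(0, 0), (0, 1), (1, 0), (1, 1)}
gt_mask = {(0, 1), (1, 1), (0, 2), (1, 2)}
overlap_term = dice_loss(pred_mask, gt_mask)
boundary_term = mean_boundary_distance([(0, 0), (0, 3)], [(0, 1), (0, 2)])
```

The example shows why the two terms are complementary: the Dice term depends only on how many pixels are shared, while the boundary term grows with how far the predicted frontier strays from the reference.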

The weighting of different loss terms (Dice, boundary, smoothness, and regression) is not arbitrary but guided by both theoretical and empirical considerations. Specifically, Dice loss ensures volumetric overlap, boundary loss emphasizes fine-grained edge alignment, smoothness loss regularizes curvature to prevent spurious oscillations, and regression loss stabilizes the control point updates. To assess the robustness of these choices, we conducted a sensitivity analysis by varying each weight across an order of magnitude while keeping others fixed. The results (“Ablation Studies”) show that our model maintains stable performance within a broad range of weights, indicating that the framework is not overly sensitive to heuristic tuning. This suggests that the complementary roles of the loss components provide an intrinsic balance that supports both volumetric accuracy and geometric stability.

Complexity and differentiability analysis

Computational complexity

Let Np be the number of pixels and K the number of control points. The CNN forward pass is O(Np), contour regression is O(K), and each snake iteration is O(K), making the total complexity O(Np + TK), where T is the number of snake iterations. In practice, K ≪ Np, so runtime is dominated by the CNN inference.

Proof of differentiability

The spline representation in Eq. (5) is a linear combination of the control points \({{\bf{P}}}_{i}\) weighted by the basis functions \({B}_{i}(u)\), and is therefore differentiable w.r.t. \({{\bf{P}}}_{i}\). The snake energy \({E}_{{\rm{snake}}}\) is differentiable w.r.t. P as both internal and image energy terms are composed of differentiable operations (norms, finite differences, image gradients). Thus, the update rule is differentiable and supports end-to-end training.

Summary of advantages

HemaContour’s design yields the following benefits:

Boundary Accuracy: Parametric spline modeling and differentiable snake refinement produce smooth and anatomically consistent contours.

Anatomical Plausibility: Shape-aware loss enforces geometric regularity and aligns contours with clinical anatomy.

Interpretability: Contour representation enables direct computation of clinically relevant shape metrics without post-processing.

Efficiency: Low-dimensional control point representation reduces computational cost relative to dense voxel predictions.
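To illustrate the interpretability point, the sketch below derives a few shape descriptors directly from sampled contour points, with no mask rasterization. It assumes the contour is given as (x, y) coordinates in millimetres and that per-slice areas are stacked with a uniform slice thickness; the function names and the choice of circularity as an example descriptor are ours, not necessarily the metrics reported by HemaContour.

```python
import math

def polygon_area(pts):
    """Shoelace formula for the area enclosed by a closed contour (mm^2)."""
    k = len(pts)
    s = sum(pts[i][0] * pts[(i + 1) % k][1] - pts[(i + 1) % k][0] * pts[i][1]
            for i in range(k))
    return abs(s) / 2.0

def polygon_perimeter(pts):
    """Sum of edge lengths around the closed contour (mm)."""
    k = len(pts)
    return sum(math.dist(pts[i], pts[(i + 1) % k]) for i in range(k))

def circularity(pts):
    """4*pi*A / P^2: equals 1 for a circle, smaller for irregular shapes."""
    p = polygon_perimeter(pts)
    return 4.0 * math.pi * polygon_area(pts) / (p * p)

def volume_ml(slice_areas_mm2, slice_thickness_mm):
    """Stack per-slice areas into a volume; 1 mL = 1000 mm^3."""
    return sum(slice_areas_mm2) * slice_thickness_mm / 1000.0
```

In practice the contour would be sampled densely enough that the polygon closely approximates the spline before these quantities are computed.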

Although prior contour-evolution frameworks such as DeepSnake and GAMED-Snake have explored polygonal or parametric boundary refinements, our approach differs in several key aspects. First, instead of polygonal representations, we adopt a spline-based parametric contour that achieves higher-order smoothness and allows direct extraction of clinically relevant shape descriptors. Second, our implicit regression module leverages deep image features to adaptively guide control point refinement, rather than relying solely on local geometric cues as in prior works. Third, the integration of a differentiable snake dynamics layer into an end-to-end trainable pipeline enables joint optimization with voxel-wise supervision and shape-aware regularization, which has not been fully realized in earlier methods. These distinctions ensure that our framework is not a mere incremental extension but a conceptually stronger paradigm that unifies parametric contour representation, deep implicit regression, and differentiable geometric evolution.

Ethics approval and consent to participate

This study uses only de-identified, publicly available NCCT datasets (INSTANCE 2022 and PhysioNet CT–ICH) and involves no prospective data collection or interaction with human participants; therefore, no additional institutional review board approval was required beyond the approvals obtained by the data providers32,33,34. No individual-level identifiers are present.

Data availability

All data used in this study are de-identified and publicly available from their official sources: INSTANCE 2022 and PhysioNet CT–ICH. The train/validation/test split manifests, evaluation scripts, and aggregated metrics will be provided in the supplementary material or upon reasonable request to the corresponding author.

Code availability

The implementation of HemaContour (training, inference, and evaluation) will be provided upon reasonable request to the corresponding author for academic and non-commercial research use.

References

Feigin, V. L., Stark, B. A., Johnson, C. O. & Roth, G. A. et al. Global, regional, and national burden of stroke and its risk factors, 1990–2019: a systematic analysis for the global burden of disease study 2019. Lancet Neurol. 20, 795–820 (2021).

van Asch, C. J. et al. The natural history of primary intracerebral hemorrhage. Stroke 41, 2681–2687 (2010).

Broderick, J. P., Brott, T., Tomsick, T., Barsan, W. & Spilker, J. Volume of intracerebral hemorrhage: a powerful and easy-to-use predictor of 30-day mortality. Stroke 24, 987–993 (1993).

Kothari, R. U. et al. The ABCs of measuring intracerebral hemorrhage volumes. Stroke 27, 1304–1305 (1996).

Patel, A., Schreuder, F. & Klijn, C. et al. Intracerebral haemorrhage segmentation in non-contrast CT. Sci. Rep. 9, 17858 (2019).

Nguyen, T. N. et al. Comparison of ABC/2 estimation to computer-assisted volumetric analysis of intraparenchymal and subdural hematomas. Stroke 45, 3002–3005 (2014).

Hssayeni, M. et al. Intracranial hemorrhage segmentation using a deep fully convolutional network. Data 5, 14 (2020).

Kok, Y. et al. Semantic segmentation of spontaneous intracerebral hemorrhage, perihematomal edema, and intraventricular hemorrhage on noncontrast CT. Radiology: Artificial Intelligence (2022).

Hillal, A. et al. Accuracy of automated intracerebral hemorrhage volume measurement on non-contrast computed tomography: a Swedish Stroke Register cohort study. Neuroradiology (2023).

Kass, M., Witkin, A. & Terzopoulos, D. Snakes: Active contour models. Int. J. Comput. Vis. 1, 321–331 (1988).

McInerney, T. & Terzopoulos, D. Topologically adaptable snakes. Int. J. Comput. Vis. 39, 41–72 (2000).

Kumar, R., Verma, R. et al. Parametric active contour models for medical image segmentation with automatic initialization. In 2017 IEEE International Conference on Image Processing (ICIP), 3370–3374 (IEEE, 2017).

Peng, S.-H. et al. Deep snake for real-time instance segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 8533–8542 (2020).

Zhang, W. et al. Swin-ichformer: Transformer-based architecture for intracerebral hemorrhage segmentation in ncct. Med. Image Anal. 92, 103064 (2024).

Li, J. et al. Hybrid convnext-transformer network for robust intracerebral hemorrhage segmentation. IEEE Trans. Med. Imaging 44, 512–525 (2025).

Chen, L., Wu, Y. & Sun, H. Hybrid 3d cnn and axial attention network for intracerebral hemorrhage segmentation. In Proceedings of MICCAI, 312–322 (Springer, 2024).

Rahman, A., Patel, K. & Singh, A. Domain adaptive intracerebral hemorrhage segmentation with style transfer and pseudo-labeling. Comput. Med. Imaging Graph. 112, 102329 (2024).

Wang, X. et al. Polygon-evolution deep network for cardiac MRI segmentation. IEEE Trans. Med. Imaging 43, 2054–2067 (2024).

Liu, Z. et al. Gamed-snake: Geometry-aware multi-scale deep snake for medical image segmentation. Med. Image Anal. 95, 103207 (2025).

Sun, P., He, J. & Zhao, X. Deep snake for retinal vessel segmentation with boundary refinement. IEEE J. Biomed. Health Inform. 28, 2711–2722 (2024).

Zhou, W., Yang, X. & Hu, M. Contour evolution network for lung tumor segmentation in dual-energy CT. Med. Phys. 51, 889–902 (2024).

Kervadec, H. et al. Boundary loss for highly unbalanced segmentation. In Medical Imaging with Deep Learning (MIDL) (2019).

Karimi, D. et al. Hybrid boundary-Hausdorff loss for retinal oct vessel segmentation. Med. Image Anal. 88, 102882 (2024).

Zhang, L. et al. Curvature-regularized loss for smooth and anatomically plausible tumor segmentation. IEEE Trans. Med. Imaging 44, 1452–1464 (2025).

Yin, H., Zhang, K. & Ma, Y. Signed distance function-based loss for stable contour learning in medical image segmentation. IEEE Trans. Med. Imaging 43, 3124–3135 (2024).

Hu, C., Li, F. & Wang, Y. Boundary-aware transformer for organ segmentation. In Proceedings of MICCAI, 532–542 (Springer, 2024).

Barras, C. D. et al. Intracerebral hemorrhage shape and outcome: a secondary analysis of the INTERACT trial. Stroke 40, 1673–1678 (2009).

Delcourt, C. et al. Significance of hematoma shape and density in intracerebral hemorrhage: The intensive blood pressure reduction in acute intracerebral hemorrhage trial study. Stroke 47, 1227–1232 (2016).

Wang, R., Zhao, T. & Li, H. Integrating shape metrics from ncct for early neurological deterioration prediction in ich. Front. Neurol. 15, 1432102 (2024).

Liu, M., Chen, J. & Zhang, F. Ai-driven shape analysis of intracerebral hemorrhage for post-surgical outcome prediction. NeuroImage: Clin. 42, 103599 (2024).

Park, S., Kim, T. & Lee, H. Deep shape feature learning for hemorrhage expansion risk assessment. J. Stroke Cerebrovasc. Dis. 33, 107613 (2024).

Li, X. et al. The state-of-the-art 3D anisotropic intracranial hemorrhage segmentation on non-contrast head CT: The INSTANCE challenge (2023). https://arxiv.org/abs/2301.03281. MICCAI INSTANCE 2022 Challenge summary.

Li, X. et al. The 2022 intracranial hemorrhage segmentation challenge on non-contrast head ct (ncct). Zenodo (2022). https://doi.org/10.5281/zenodo.6362221. MICCAI 2022 registered challenge resource.

Hssayeni, M. Computed tomography images for intracranial hemorrhage detection and segmentation (version 1.3.1). PhysioNet (2020). https://doi.org/10.13026/4nae-zg36. RRID:SCR_007345; Restricted Health Data License 1.5.0.

Micikevicius, P. et al. Mixed precision training. In International Conference on Learning Representations (ICLR) (2018). https://arxiv.org/abs/1710.03740.

Ronneberger, O., Fischer, P. & Brox, T. U-net: Convolutional networks for biomedical image segmentation. In Medical Image Computing and Computer-Assisted Intervention (MICCAI), vol. 9351 of LNCS, 234–241 (Springer, 2015). https://doi.org/10.1007/978-3-319-24574-4_28.

Loshchilov, I. & Hutter, F. Decoupled weight decay regularization. In International Conference on Learning Representations (ICLR) (2019). https://arxiv.org/abs/1711.05101.

Loshchilov, I. & Hutter, F. SGDR: Stochastic gradient descent with warm restarts. In International Conference on Learning Representations (ICLR) (2017). https://arxiv.org/abs/1608.03983.

Maier-Hein, L. & Reinke, A. et al. Metrics reloaded: recommendations for image analysis validation. Nat. Methods 21, 195–212 (2024).

Antonelli, M., Reinke, A. & Bakas, S. et al. The medical segmentation decathlon. Nat. Commun. 13, 4128 (2022).

Taha, A. A. & Hanbury, A. Metrics for evaluating 3d medical image segmentation: analysis, selection, and tool. BMC Med. Imaging 15, 29 (2015).

Dice, L. R. Measures of the amount of ecologic association between species. Ecology 26, 297–302 (1945).

Jaccard, P. Étude comparative de la distribution florale dans une portion des Alpes et des Jura. Bull. Soc. Vaud. Sci. Nat. 37, 547–579, https://doi.org/10.5169/seals-266450 (1901).

Nikolov, S. et al. Deep learning to achieve clinically applicable segmentation of head and neck anatomy for radiotherapy. arXiv preprint (2018). https://arxiv.org/abs/1809.04430.

Shrout, P. E. & Fleiss, J. L. Intraclass correlations: uses in assessing rater reliability. Psychol. Bull. 86, 420–428 (1979).

Lin, L. I. A concordance correlation coefficient to evaluate reproducibility. Biometrics 45, 255–268 (1989).

Bland, J. M. & Altman, D. G. Statistical methods for assessing agreement between two methods of clinical measurement. Lancet 1, 307–310 (1986).

Zwanenburg, A. et al. The image biomarker standardization initiative: Standardized quantitative radiomics for high-throughput image-based phenotyping. Radiology 295, 328–338 (2020).

Isensee, F., Jaeger, P. F., Kohl, S. A. A., Petersen, J. & Maier-Hein, K. H. nnu-net: a self-configuring method for deep learning-based biomedical image segmentation. Nat. Methods 18, 203–211 (2021).

Çiçek, Ö., Abdulkadir, A., Lienkamp, S. S., Brox, T. & Ronneberger, O. 3d u-net: Learning dense volumetric segmentation from sparse annotation. In Medical Image Computing and Computer-Assisted Intervention (MICCAI), vol. 9901 of LNCS, 424–432 (Springer, 2016).

Milletari, F., Navab, N. & Ahmadi, S.-A. V-net: Fully convolutional neural networks for volumetric medical image segmentation. In 2016 Fourth International Conference on 3D Vision (3DV), 565–571 (2016).

Oktay, O., Schlemper, J., Le Folgoc, L. et al. Attention u-net: Learning where to look for the pancreas. arXiv preprint arXiv:1804.03999 (2018). https://arxiv.org/abs/1804.03999.

Zhou, Z., Siddiquee, M. M. R., Tajbakhsh, N. & Liang, J. Unet++: Redesigning skip connections to exploit multiscale features in image segmentation. IEEE Trans. Med. Imaging 39, 1856–1867 (2020).

Hatamizadeh, A. et al. Unetr: Transformers for 3d medical image segmentation. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), 574–584 (2022).

Hatamizadeh, A. et al. Swin unetr: Swin transformers for 3d medical image segmentation. arXiv preprint arXiv:2201.01266 (2022). https://arxiv.org/abs/2201.01266.

Chen, J. et al. Transunet: Transformers make strong encoders for medical image segmentation. arXiv preprint arXiv:2102.04306 (2021). https://arxiv.org/abs/2102.04306.

Chen, L.-C., Zhu, Y., Papandreou, G., Schroff, F. & Adam, H. Encoder-decoder with atrous separable convolution for semantic image segmentation. In Proceedings of the European Conference on Computer Vision (ECCV), 833–851 (2018).

Karimi, D. & Salcudean, S. E. Reducing the hausdorff distance in medical image segmentation with convolutional neural networks. IEEE Trans. Med. Imaging 39, 499–513 (2020).

Acknowledgements

This study was supported by grants from the Zhejiang Province Natural Science Foundation (Grant no. LMS25H090008), the Ningbo Major Research and Development Plan Project (Grant no. 2023Z196), the Neurology Department of the National Key Clinical Specialty Construction Project, the Ningbo Leading Medical & Health Discipline (Grant no. 2022-F04), and the PhD start-up funding from Lihuili Hospital (Grant no. 2024BSKY-ZW).

Author information

Authors and Affiliations

Contributions