Abstract

Apple Watch provides continuous monitoring of physiological and behavioural health metrics, increasingly used to support health-care delivery. Yet, evidence regarding its measurement accuracy remains limited. We aimed to assess the accuracy of measurements from Apple Watch. We searched nine databases from inception to September 24, 2025, with no restrictions on language or publication type. Eligible studies validated any Apple Watch health metric against a criterion method. The primary outcome was the agreement between Apple Watch and the criterion. We included 82 studies, which assessed 14 health metrics (430,052 participants; pooled mean age 41.3 years [SD 13.3]). Bland-Altman meta-analysis showed a small underestimation of heart rate, although limits of agreement (LoA) indicated moderate measurement variability (mean bias -0.27 bpm [95% CI -0.72–0.17]; LoA -7.19 to 6.64). For atrial fibrillation detection, Apple Watch was more specific than sensitive (specificity 0.91 [95% CI 0.81–0.96]; sensitivity 0.79 [95% CI 0.61–0.90]). For blood oxygen saturation, there was low mean bias (-0.04% [95% CI -0.42–0.35]) but wide limits of agreement (-4.01 to 3.94). Accuracy for sleep and step count was moderate, whereas error for energy expenditure was inconsistent and frequently large. Measurement accuracy varied by metric, measurement conditions, and individual physiology. Longitudinal validation of key clinical metrics, including vital signs, is needed to inform clinical practice and policy. This study was registered with PROSPERO, CRD42023481841.


Introduction

Wearable devices provide personal health monitoring and their clinical role in supporting health-care delivery is growing swiftly1. They have enabled longitudinal assessment of physiology at scale due to their measurement of health metrics such as heart rate, blood oxygen saturation, and cardiorespiratory fitness2,3. This has allowed early detection of respiratory illness, prediction of cardiovascular risk, and population-level assessment of physical activity2,4,5. Given the current emphasis on personalised medicine and digital phenotyping, there is a growing need for accurate consumer devices that enable the remote capture of digital biomarkers and biometrics6.

Compared with traditional methods, wearable devices offer continuous measurement that may facilitate identification of trends in health status and preventative care7,8. Yet, without validation, wearable device measurements may misguide assessment and treatment, potentially resulting in misrepresentations of health or delayed interventions.

Apple Watch (Apple Inc., California) is the most widely owned wearable device worldwide, with over 100 million users6, and measures several health metrics that have been associated with cardiovascular and all-cause mortality when assessed using criterion methods8,9,10,11,12,13,14,15,16,17. However, its measurement accuracy is not well-established. Existing literature indicates that accuracy is dependent on the individual metric, as well as the measurement conditions18. Previously, Apple Watch heart rate measurements have shown strong agreement with criterion measures, but factors such as exercise intensity, movement pattern, and skin contact affect accuracy19,20,21,22. Conversely, energy expenditure estimates have demonstrated low levels of agreement22,23,24, and sensitivity and specificity for atrial fibrillation detection range widely between studies25.

This heterogeneity permeates the current literature. Variation in study protocols and criterion methods renders comparative analysis of validation studies challenging. Prior systematic reviews and meta-analyses have included a small number of studies, many of which validated Apple Watch software and hardware that have since been discontinued23,24,26. Over the past five years, there has been a substantial increase in validation research; however, a contemporary literature synthesis including all health metrics has not been conducted. The yearly update cycle of Apple Watch, and swift advances in the machine learning algorithms that underpin measurements, accentuate this issue27.

A continuously updated synthesis of Apple Watch metrics is required. To address this, our review was designed as a living study to provide an up-to-date evaluation of the device’s measurement accuracy, in accordance with the analytical validation component of the V3 framework28. We defined health metrics as any health-related physiological, behavioural, or environmental metric measured natively by Apple Watch. Our aim was to better understand the competencies and boundaries of Apple Watch in clinical and personal health contexts. Our objectives in this systematic review and meta-analysis were to: (1) identify all Apple Watch health metrics that have been validated in primary research studies, (2) evaluate the measurement accuracy of each metric, and (3) identify gaps in the current research.

Results

Following the removal of duplicates, 1202 records were identified. After title and abstract screening, 221 full texts were assessed for eligibility (PRISMA flow diagram, Fig. 1). Articles excluded following full-text review are listed in the Supplementary Information (pp. 18–32). Overall, 82 studies (430,052 participants) were included in this systematic review. Additional results, which include synthesis of hypertension notification, heart rate variability, sound exposure, and Six-Minute Walk Test distance estimation, along with funnel plots, are provided in Supplementary Note 1.

PRISMA flow diagram.

Fourteen health metrics from all Apple Watch models through to Series 9 and Ultra 2 were validated. Fifty-seven percent of all participants were male, and the median sample size was 44. Information on total sample size was available for 81 of the 82 studies, and male–female split was available for 75 studies. Heart rate was the most frequently validated metric (38 studies), whereas hypertension notification, sound exposure, and heart rate variability were each assessed by only one study. Study characteristics, including criterion methods and sample demographics, are listed in Table 1.

Risk of bias

Overall, 13 (14%) studies were classified as ‘low’ risk of bias, 29 (30%) as ‘some concerns’, and 53 (56%) as ‘high’. Domain 1 (participants) and Domain 4 (statistical analysis) were most frequently rated as high risk. Twenty-six (27%) studies did not appropriately select participants to represent the target population (Domain 1), and 20 (21%) used inappropriate statistical analysis. This included complete exclusion of unsuccessful measurements, use of unsuitable statistical measures of agreement (e.g., t-tests), inadequate reporting of missing data, or failure to account for repeated measures. By contrast, Domain 3 (reference standard) was predominantly rated as low risk (85/95 [89%]). Validation protocols, criterion methods, and time intervals between assessments were mostly appropriate. Detailed risk of bias assessment for each metric is provided as a supplementary file, with narrative synthesis in the Supplementary Information (pp. 2–3).

Heart rate

Thirty-eight studies (1855 participants; 66% male) validated heart rate measurements from all Apple Watch models through Series 9 and Ultra 2. Agreement with criterion measures was strongest at rest, whereas it was lower during exercise involving irregular movement patterns and among individuals with arrhythmia29. Mean difference for resting heart rate ranged from -2.47 bpm to 3.61 bpm, and MAPE ranged from 1.69% to 7.2%30,31. During exercise, 10/11 (91%) studies reported MAPE lower than 10%21,30,32,33,34,35,36,37,38,39. MAPE tended to rise as intensity increased, although a decrease was noted in three studies32,34,38.

Meta-analysis of heart rate, with resting and exercise conditions combined, included 22 studies (n = 1247)29,30,31,32,33,34,37,38,40,41,42,43,44,45,46,47,48,49,50. The pooled mean bias (MB) was low, although limits of agreement (LoA) indicated measurement variability (-0.27 bpm [95% CI -0.72–0.17]; LoA -7.19 to 6.64; τ2 0.53; Fig. 2). For resting heart rate, we found that Apple Watch measurements were higher than criterion measures (MB 0.21 bpm [95% CI -0.65–1.07]; LoA -8.14 to 8.56; τ2 0.67; Fig. 3A). During exercise, Apple Watch underestimated heart rate (MB -0.63 bpm [95% CI -1.37–0.12]; LoA -6.86 to 5.60; τ2 0.93; Fig. 3B).
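For reference, the quantities reported above can be illustrated for a single set of paired readings. The following is a minimal Python sketch, not the pooling procedure used in this review: the function name and the sample values are hypothetical, and the pooled estimates additionally incorporate between-study variance (τ2), which a single-sample calculation does not.

```python
import statistics

def bland_altman(device, criterion):
    """Return (mean bias, lower LoA, upper LoA) for paired measurements.

    Bias is device minus criterion, so a negative value means the
    device reads lower than the criterion on average.
    """
    if len(device) != len(criterion) or len(device) < 2:
        raise ValueError("need two equal-length samples with at least 2 pairs")
    diffs = [d - c for d, c in zip(device, criterion)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)  # sample SD of the paired differences
    # 95% limits of agreement: bias +/- 1.96 SD of the differences
    return bias, bias - 1.96 * sd, bias + 1.96 * sd

# Hypothetical heart rate readings in bpm (illustrative only, not study data).
watch = [62, 75, 88, 101, 120]
ecg = [63, 74, 90, 100, 122]
bias, lower, upper = bland_altman(watch, ecg)
```

A mean bias close to zero can coexist with wide limits of agreement, which is why both are reported for each metric.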

The red dashed line represents the pooled mean bias; the blue dashed lines represent the pooled limits of agreement (-7.19 to 6.64).

A Forest plot for heart rate at rest. The pooled mean bias (0.21 bpm) and limits of agreement (-8.14 to 8.56) are represented by the dashed red and blue lines, respectively. B Forest plot for heart rate during exercise (mean bias -0.63; limits of agreement -6.86 to 5.60).

Six studies (16%) were rated as ‘low’ risk of bias, 11 (29%) as ‘some concerns’, and 21 (55%) as ‘high’. To examine the robustness of our findings, we conducted sensitivity analysis excluding studies at high risk of bias. The pooled mean bias and limits of agreement were comparable to our primary analysis (MB -0.50 bpm [95% CI -1.47–0.47]; LoA -7.54 to 6.53; 13 studies; Fig. S1).

To compare findings across Apple Watch models, we performed exploratory subgroup analysis according to the generation of optical heart rate sensor: first-generation (Apple Watch models up to Series 3), second-generation (Series 4–5 and all SE models), and third-generation (Series 6 onwards, including Ultra models). Compared to our primary analysis, we found narrower limits of agreement for the third-generation sensor (LoA -3.68 to 2.59; 8 studies; Fig. S2), but wider limits of agreement for the first- and second-generation sensors. Mean bias was comparable across all analyses. Further detail is provided in Supplementary Note 1.

Atrial fibrillation detection

Seventeen studies validated atrial fibrillation detection (n = 422,654; 57% male): two evaluated PPG-based detection from tachograms (Irregular Rhythm Notification)25,50, and the remainder assessed the ECG app. Sensitivity and specificity ranged widely between studies (19%–100% and 66%–100%, respectively). Six of the 15 studies that calculated sensitivity reported values higher than 80%51,52,53,54,55,56, and six fell in the range of 65% to 90%50,57,58,59,60,61. Sensitivity and specificity substantially improved when inconclusive ECG tracings were excluded51,53,56,59,60,62. The rate of inconclusive tracings was between 15 and 25% in several studies52,53,54,55,60,63. Thirteen studies were rated as ‘high’ risk of bias and four as ‘some concerns’.
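The effect of excluding inconclusive tracings can be illustrated with a small Python sketch. It assumes one possible convention, in which an inconclusive tracing is counted as a misclassification; the included studies may have handled such tracings differently, and the function name and counts are hypothetical.

```python
def sens_spec(tp, fn, tn, fp, inconclusive_pos=0, inconclusive_neg=0):
    """Sensitivity and specificity, counting inconclusive tracings as errors.

    inconclusive_pos / inconclusive_neg are inconclusive recordings from
    participants with / without atrial fibrillation on the criterion ECG.
    Leaving them at 0 reproduces an analysis that excludes such tracings.
    """
    sensitivity = tp / (tp + fn + inconclusive_pos)
    specificity = tn / (tn + fp + inconclusive_neg)
    return sensitivity, specificity

# Hypothetical counts (illustrative only, not study data).
excluded = sens_spec(tp=45, fn=5, tn=90, fp=10)
included = sens_spec(tp=45, fn=5, tn=90, fp=10,
                     inconclusive_pos=10, inconclusive_neg=20)
```

With these made-up counts, both measures fall from 0.90 to 0.75 once inconclusive tracings are retained in the denominator.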

Eleven studies (n = 3144) were included in meta-analysis of atrial fibrillation detection, all of which validated the ECG app51,52,53,55,56,57,59,60,62,63,64. Pooled sensitivity was 0.79 (95% CI 0.61–0.90), and pooled specificity was 0.91 (95% CI 0.81–0.96). The overall Zhou and Dendukuri I2 indicated moderate heterogeneity (55%). The area under the curve suggested strong discriminative ability (0.93; Fig. 4). Exploratory subgroup analysis examining the influence of hardware and software version is presented in the Supplementary Information (p. 6).

Summary Receiver Operating Characteristic Curve for atrial fibrillation detection.

ECG waveform morphology

Seven studies (n = 535, 68% male) compared the amplitude and duration of Apple Watch ECG recordings to 12-lead ECG46,49,65,66,67,68,69. QT interval was the most frequently assessed segment (five studies)42,65,67,68,69. Four studies reported that Apple Watch underestimated QT interval duration, although limits of agreement were relatively wide42,65,67,68. Many of these studies evaluated different segments of the ECG waveform, restricting comparison.

Blood oxygen saturation

Blood oxygen saturation (SpO2) measurements were validated in Series 6 through Series 8, and six studies included patient cohorts42,45,70,71,72,73. Seven studies reported overall mean difference <1% SpO2, indicating good measurement accuracy, particularly in normoxic ranges42,70,72,73,74,75,76. However, limits of agreement approximating ±5% SpO2 were reported in multiple studies, indicating variability in measurements45,70,72,74,75,76,77. Measurement error tended to increase as SpO2 decreased. All five studies that assessed SpO2 in both hypoxic and normoxic ranges found stronger agreement with criterion measures in normoxic ranges72,74,75,76,77. Apple’s white paper reported accuracy root mean square (Arms) within the limits (<3.5%) defined by the US Food and Drug Administration (FDA) for medical pulse oximeters across the entire range of 70–100% SpO2. Two additional studies also reported Arms within these limits across the range of 80–100%75,76. In contrast, two studies reported wide limits of agreement for hypoxic ranges, reflecting variability in accuracy72,77.
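Arms is conventionally computed as the square root of the mean squared difference between device and reference readings. A minimal Python sketch of that calculation follows; the function name and readings are hypothetical, and the 3.5% threshold is simply the limit quoted in the text above.

```python
import math

ARMS_LIMIT = 3.5  # percentage points of SpO2, as quoted in the text above

def arms(device, reference):
    """Accuracy root mean square: sqrt(mean((device - reference)^2))."""
    if len(device) != len(reference) or not device:
        raise ValueError("need two non-empty samples of equal length")
    squared = [(d - r) ** 2 for d, r in zip(device, reference)]
    return math.sqrt(sum(squared) / len(squared))

# Hypothetical SpO2 readings in % (illustrative only, not study data).
watch_spo2 = [97, 95, 90, 84]
reference_spo2 = [98, 94, 92, 88]
value = arms(watch_spo2, reference_spo2)
within_limit = value < ARMS_LIMIT
```

Because the differences are squared, Arms reflects both systematic bias and random scatter, so a device with near-zero mean bias can still exceed the limit.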

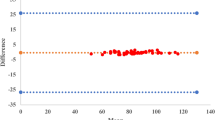

Nine studies (n = 969) were included in meta-analysis of blood oxygen saturation. Pooled mean bias indicated that Apple Watch underestimated SpO2, although limits of agreement demonstrated variability (MB -0.04% [95% CI -0.42–0.35], LoA -4.01 to 3.94; τ2 0.13; Fig. 5). Our exploratory subgroup analysis found overestimation and wider limits of agreement for measurements obtained in hypoxic ranges (MB 0.43% [95% CI -3.85–4.71]; LoA -8.35 to 9.21; Supplementary Information p. 7).

The pooled mean bias (-0.04% SpO2) and limits of agreement (-4.01 to 3.94) are represented by the dashed red and blue lines, respectively.

Energy expenditure

Margins of error for energy expenditure estimates were often large, both during exercise and at rest (8 studies; n = 270; 63% male). There was considerable variation between and within individual studies. Participants were predominantly young physically active adults, and five of the eight studies assessed Apple Watch Series 2 or older. All six studies that calculated MAPE reported values of 20% or higher in at least one test condition31,32,36,39,78,79. Overall, MAPE ranged from 9.71% (running) to 151.66% (walking). No distinct trend in measurement error by exercise intensity could be observed.

Step count and wheelchair push count

Three studies validated step count from Apple Watch First Generation and Series 1. In the largest study (n = 71), a small underestimation and strong correlation were found; however, moderate correlation and wide limits of agreement were reported in each of the other studies80. There was no distinct trend in accuracy based on walking or running speed80,81. Notably, no study included sedentary periods or seated activities that involved arm movements in their validation.

Fig. 6: Graphical overview of the review's results, showing included metrics, inclusion requirement for device wear, risk of bias ratings, and meta-analysis results. bpm beats per minute, LoA limits of agreement. Icons adapted from Phosphor Icons, used under the MIT License.

Three studies evaluated wheelchair push count. Apple Watch overestimated overall wheelchair push count in two studies82,83, and underestimated in the other84. However, margins of error varied substantially, even within studies. MAPE ranged from 1% to 21% for Series 183,84, and was 9.2% for Series 482.

VO2 max estimation

One study (n = 30) compared VO2 max estimates to indirect calorimetry and found that Apple Watch underestimated VO2 max, noting a clinically significant mean difference (-6.07 mL/kg/min) and wide limits of agreement85.

Sleep stage classification and sleep apnoea detection

Three studies validated sleep stage classification (n = 221)86,87,88. Overall, they found good differentiation between sleep and wake states, but moderate-to-poor differentiation between physiologically similar sleep stages. Two studies reported sensitivity for binary sleep-wake classification ≥97%; however, they also reported low accuracy for classification of deep sleep, with a tendency to misclassify it as light sleep86,87. Robbins and colleagues (n = 29, Series 8) found that Apple Watch significantly underestimated deep sleep and overestimated light sleep86. For sleep apnoea detection, Apple’s clinical validation study found higher specificity (98.5% [95% CI 98.0–99.0]) than sensitivity (66.3% [95% CI 62.2–70.3]). Fig. 6 provides a graphical overview of this review's results.

Discussion

This systematic review and meta-analysis evaluated the accuracy of 14 health metrics from Apple Watch to inform its use in personal health monitoring and clinical settings. We found that accuracy varied by metric, measurement conditions, and physiological characteristics, highlighting the need to interpret accuracy in the context of each metric’s intended use.

The pooled mean bias for heart rate was low (-0.27 bpm [95% CI -0.72–0.17]), although limits of agreement were moderately wide (-7.19 to 6.64 bpm). The pooled limits of agreement demonstrated measurement variability of ~±7 bpm and reflected agreement across a broad population by incorporating both within- and between-study variability, as described by Tipton & Shuster. In line with Bland and Altman’s recommendations, the limits of agreement are the key measure for determining whether Apple Watch is a suitable alternative to current measurement methods. We observed sufficient accuracy to quantify exercise intensity among healthy adults, although moderate misestimation may occur in some cases, particularly among individuals with cardiac disease. Our subgroup analyses showed substantially lower variability for measurements obtained with the third-generation optical sensor (LoA -3.68 to 2.59) compared to older generations. This indicated that accuracy was both population- and condition-dependent.

For blood oxygen saturation, we also found low mean bias (-0.04% [95% CI -0.42–0.35]), but the pooled limits of agreement (-4.01 to 3.94) suggested that Apple Watch may, in certain instances, misclassify individuals in hypoxic ranges as being in normoxic ranges. Across individual studies and in our subgroup analysis, we identified greater variability and lower agreement among patients in hypoxaemia. However, two studies found that, in healthy adults, Apple Watch met the standards set by the FDA and International Organization for Standardization (ISO) for medical grade pulse oximetry when hypoxaemia was induced. These findings indicate that Apple Watch may serve as a useful adjunct to traditional pulse oximetry, although its accuracy is limited in hypoxic ranges.

For atrial fibrillation detection, Apple Watch was more specific than sensitive (pooled sensitivity 0.79 [95% CI 0.61–0.90]). The pooled specificity (0.91 [95% CI 0.81–0.96]) indicated that notification of atrial fibrillation likely reflects true presence, suggesting notification warrants further clinical investigation. Both sensitivity and specificity ranged widely between studies, however, and in several, 15–25% of tracings were inconclusive, representing a notable rate of unsuccessful assessment.

The error of energy expenditure estimates was often large and varied considerably, both within and between studies, and the mean difference for VO2 max (-6.07 mL/kg/min) was clinically significant, as a 3.5 mL/kg/min increase has been associated with a risk ratio of 0.89 for all-cause mortality89. We observed moderate accuracy for sleep overall, with good classification between sleep and wake states — sufficient for personal health monitoring — but differentiation between physiologically similar sleep stages was poor. There was also moderate accuracy for step count, wheelchair push count and hypertension notification, although there were fewer than four studies included for each metric. A number of metrics are yet to be validated, including respiratory rate, wrist temperature and measures of sedentary behaviour.

There are important distinctions between our findings and previous systematic reviews and meta-analyses, although we report similar results for certain metrics22,23,24,90,91,92,93,94,95,96. A prior meta-analysis, which pooled multiple effect estimates from single studies — a method that is not recommended97 — found a similar mean bias but wider limits of agreement for heart rate (-0.12 bpm; LoA −11.06 to 10.81)22. Notably, the authors included several studies that we deemed ineligible for our review, primarily due to the validity of criterion methods and lack of adherence to manufacturer guidelines for device wear. Elsewhere, low and moderate agreement have been identified for energy expenditure and step count, respectively22,23,24. Many of these previous systematic reviews, however, included fewer than five studies and exclusively assessed old Apple Watch software and hardware23,24. Only two prior meta-analyses have evaluated atrial fibrillation detection. The first pooled just three studies using a fixed-effects model, which does not appropriately account for heterogeneity93, while the second meta-analysis pooled results from multiple manufacturers’ devices92.

We found that Apple Watch’s measurement accuracy broadly aligns with that of other wearable devices. Across manufacturers, error margins for energy expenditure estimates are often large98, whereas heart rate measurements typically exhibit stronger agreement with criterion measures26. For heart rate and blood oxygen saturation, Apple Watch showed stronger agreement with criterion measures than Garmin, Fitbit, and Withings devices23,24,71,99. For sleep, however, agreement with polysomnography was lower for Apple Watch than for Whoop, Fitbit, and Garmin88,100.

Three factors particularly impact measurement accuracy. The first is the metric’s measurement method. Metrics such as step count, VO2 max, and energy expenditure require inputs from multiple sensors, combined through sensor fusion27. When they are combined, error from individual inputs may compound101,102. In contrast, metrics like heart rate and SpO2 are obtained directly from photoplethysmography (PPG), requiring less derivation. Secondly, factors such as movement, moisture, and skin contact impact motion sensor measurements and the clarity of PPG waveforms27,103,104. This is one source of inaccurate heart rate measurements during high-intensity exercise with irregular movement patterns29. Thirdly, physiological factors, including blood perfusion and individual variation in heart rate response to exercise, affect measurements18. Low blood perfusion, due to low body temperature or physiological traits, can lead to inaccuracy, especially given the PPG sensor’s reliance on pulsatile arterial blood, which accounts for a minority of blood in the tissue at the wrist27. Algorithms that are ill-suited to an individual’s physiology may also lead to inaccuracy. Given the sensitivity of PPG waveforms and sensor measurements to these factors, the machine learning algorithms that interpret them are increasingly important, and recent literature has shown improved accuracy due to algorithmic developments alone105.

To determine whether accuracy is adequate, the measurement’s intended use must be considered. For clinical use, thresholds corresponding to clinically important change may guide interpretation. For instance, a 10 bpm increase in resting heart rate has been associated with a 9% increase in all-cause mortality risk16, whereas a 3.5 mL/kg/min increase in VO2 max and a 1000-step increase in daily step count have both been associated with decreased all-cause mortality risk89,106. Accuracy that permits detection of clinically meaningful change — within thresholds identified by large epidemiological studies and meta-analyses, or those stipulated by regulatory bodies such as the FDA, ISO, and European Union107,108,109 — may be deemed adequate. For personal health and fitness monitoring, however, wider margins of error may suffice to provide high-level trends over time in physiological and behavioural health metrics. In population-level research trials, where scale may attenuate individual error, such measurements could provide researchers with insight into associations and risk stratification across groups. The required accuracy, therefore, should be guided by the measurement’s use and by validation among the intended measurement population.

We recognise that our results are contingent on the characteristics of our included studies, particularly given the variability in accuracy across participant cohorts and measurement conditions. A greater proportion of trials involving cardiac populations or exercise involving erratic movement patterns, for instance, may have produced different results. Methodological rigour was also inconsistent: adherence to validation guidelines, such as INTERLIVE’s expert statements, was low110,111,112,113, statistical procedures were sometimes inadequately described, and inconclusive measurements were excluded from certain analyses. In addition, few studies conducted free-living validation, which best reflects typical use, likely due to challenges obtaining criterion measures.

Consequently, our study has several limitations. First, statistical and methodological heterogeneity prevented meta-analysis of energy expenditure, and restricted subgroup analyses. We were unable to conduct subgroup analysis by body mass index or skin tone as it was infrequently reported. Additionally, we could not precisely differentiate between the impact of hardware and software on accuracy due to the proprietary nature of updates to the foreground heart rate and SpO2 algorithms, as well as the limited number of studies evaluating each Apple Watch model. Second, the generalisability of our findings was restricted due to the bias towards physically active individuals and males among participants. The variation in sex balance between metrics, coupled with limited validation among older adults and those with comorbidities, accentuates this restriction. Third, many studies were at high risk of bias. While we conducted sensitivity analyses excluding these studies for heart rate, this was not feasible for blood oxygen saturation and atrial fibrillation; fewer than five studies were rated as ‘low’ or ‘some concerns’ for these metrics, and the marked imbalance between groups would have limited the validity and interpretability of any formal analysis. Fourth, few studies were included for metrics such as step count and sleep. This was due to our stringent approach to criterion method validity and adherence to manufacturer guidelines for device wear. Fifth, many studies assessed Apple Watch models that have since been discontinued. Nevertheless, several studies validated measurements from the most recent optical heart rate sensor and algorithms, as they are not updated with each new Apple Watch model.

The main strengths of this study are its breadth and its meta-analyses. It is the first to synthesise all Apple Watch health metrics validated so far, and it provides the most comprehensive meta-analyses to date of heart rate, atrial fibrillation detection, and blood oxygen saturation. We gave ample consideration to the validity of criterion methods and ensured that Apple Watch was validated in the manner it was designed to be worn. We did not consider research-grade wearables as valid criterion methods for step count or energy expenditure due to the conflicting evidence on their validity98,114,115. A rigorous search and screening process was implemented, comprising nine databases and four reviewers, and grey literature was included to reduce publication bias. This study is designed as a living systematic review and meta-analysis to ensure that the evidence synthesis does not become outdated quickly as Apple Watch evolves. An updated search will be conducted yearly to integrate new studies and new metrics, and data will be published in an open-access format.

The clinical applications of wearable devices are expanding. There is growing recognition that wearable devices may improve preventative care and management of chronic disease2,102. Major organisations, including the American Heart Association and the British Heart Foundation, are conducting large research trials to inform the integration of wearable data in cardiovascular care2,116,117,118. Moreover, the development of digital biomarkers, together with emerging metrics such as hypertension notification, aims to translate wearable measurements into clinically actionable data that support disease management and assessment. Clear interpretation of these data may provide agency to patients, allowing them to better manage their condition in partnership with their healthcare professional, ultimately reducing health-care cost and burden102,119,120,121,122.

Future research should examine the longitudinal relationships of Apple Watch metrics with markers of health and disease, as well as validating measurements taken at single time-points. Clearer understanding of measurement precision and reliability will enable more accurate interpretation of trends in health metrics over time. Validation studies that include older adults, patient populations, and metrics related to vital signs — such as respiratory rate and wrist temperature — are needed. As software and hardware advance, and new metrics are developed, continued validation across diverse cohorts and conditions is required to inform the capabilities and limitations of Apple Watch.

This systematic review and meta-analysis demonstrated the variation in measurement accuracy between Apple Watch health metrics, as well as the influence of measurement condition and individual physiology. We identified good agreement for heart rate overall, whereas error for energy expenditure estimates was often inconsistent and large. Wide limits of agreement for SpO2 indicated measurement variability, and we found moderate accuracy for sleep and step count. As a ubiquitous consumer device, Apple Watch provides the general population with assessment of activity, physiology, and cardiovascular function that may otherwise be inaccessible. Despite inaccuracies, the continuous nature of these measurements may offer unique health insights, and further research exploring their use in public health is warranted.

Methods

This systematic review and meta-analysis was conducted and reported as per PRISMA guidelines123. The protocol was prospectively registered in PROSPERO (CRD42023481841; www.crd.york.ac.uk/PROSPERO/view/CRD42023481841).

Search strategy and selection criteria

We searched PubMed, SPORTDiscus, Embase, IEEE Xplore, Web of Science, Scopus, CINAHL and the Cochrane Library from inception to September 24, 2025. Keywords, Medical Subject Headings (MeSH), and synonyms related to Apple Watch and its measurement accuracy were included. To identify additional studies and grey literature, a hand search was undertaken across Google Scholar, the Apple Health website, and the US Food and Drug Administration 510(k) database. The university’s Research Engagement Librarian was involved throughout the development of the search strategy, which was peer-reviewed prior to implementation. Details of the tailored search strategy for each database are reported in Supplementary Note 2.

We included primary research studies that compared any health metric from Apple Watch to a validated criterion measure. Descriptions of valid criterion measures are available in the Supplementary Information (pp. 11–12). Studies investigating metrics not intended to be measured by Apple Watch, or in populations in which they were not intended for use, were excluded; for example, recording ECG with Apple Watch placed at the ankle, or blood oxygen saturation assessment in neonates. Measurements were required to be taken in accordance with manufacturer guidelines. Studies in which multiple devices were worn on one wrist were excluded due to potential measurement interference caused by improper device placement, photoplethysmographic light impedance from adjacent devices, and motion sensor disruption, among other factors. Grey literature, including conference abstracts and unpublished white papers, was also included. There were no restrictions placed on demographic or language.

Three authors (R.L., B.O’G., M.B.) independently screened titles, abstracts, and full texts, with two authors per citation. Disagreements were resolved by consensus. The study selection process was carried out using Covidence (Veritas Health Innovation Ltd). This study was designed as a living systematic review. Searches will be updated every 12 months, or earlier if major Apple Watch hardware or software updates occur. Newly identified studies will be screened and incorporated using the same methodology. Updates will be disseminated via the Open Science Framework (osf.io/v5d3k).

Outcomes

The primary outcome was the agreement between measurements from Apple Watch and the criterion method for each health metric. This included pooled mean bias, Bland-Altman limits of agreement, sensitivity, and specificity for metrics that were meta-analysed. We extracted measures of agreement across all populations and conditions, including varied exercise intensities and clinical cohorts (e.g., cardiovascular disease). Measures of effect included mean difference, sensitivity and specificity, mean absolute percentage error (MAPE), Bland-Altman limits of agreement, and correlation coefficients.
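As an illustration of the agreement measures listed above, the following Python sketch computes mean bias, 95% Bland-Altman limits of agreement, and MAPE for a set of paired readings. The function name and the sample values are hypothetical; they are not taken from the included studies or from the review's own code.

```python
import math


def agreement_summary(device, criterion):
    """Mean bias, 95% limits of agreement and MAPE for paired measurements."""
    if len(device) != len(criterion) or len(device) < 2:
        raise ValueError("need at least two paired measurements")
    diffs = [d - c for d, c in zip(device, criterion)]
    n = len(diffs)
    bias = sum(diffs) / n
    # Sample standard deviation of the differences (n - 1 denominator).
    sd = math.sqrt(sum((x - bias) ** 2 for x in diffs) / (n - 1))
    # 95% limits of agreement: bias +/- 1.96 * SD of the differences.
    loa = (bias - 1.96 * sd, bias + 1.96 * sd)
    # MAPE: mean absolute error relative to the criterion, in percent.
    mape = 100 * sum(abs(d - c) / abs(c) for d, c in zip(device, criterion)) / n
    return {"bias": bias, "sd": sd, "loa": loa, "mape": mape}


# Hypothetical heart rate readings in bpm (watch vs. ECG), for illustration only.
watch = [60.0, 72.0, 88.0, 101.0]
ecg = [61.0, 70.0, 90.0, 100.0]
summary = agreement_summary(watch, ecg)
```

A negative bias in this convention means the device reads lower than the criterion, which matches the sign convention implied by the reported underestimation of heart rate.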

Data extraction

Two reviewers (R.L., M.B.) independently extracted data in duplicate using a pilot-tested extraction form in Microsoft Excel. Extracted data were then compared and merged following consensus. This included data about participant demographics, criterion method, validation protocol, and statistical analysis. In the case of missing or unclear information, authors were contacted via email, and one follow-up was sent to those who did not respond. Where required, we back-calculated statistics necessary for meta-analysis, if sufficient data were available124.

Risk of bias assessment

An adapted version of the COSMIN checklist (COnsensus-based Standards for the selection of health Measurement INstruments) was used to assess risk of bias. COSMIN defines standards for evaluating the methodological quality of studies validating health measurement instruments and is implemented by the expert-led ‘Towards Intelligent Health and Well-Being Network of Physical Activity Assessment’ (INTERLIVE) consortium110,125. The modified tool includes four domains: participants, index measure, reference standard, and statistical analysis. Each domain includes multiple items with three possible answers (‘yes’, ‘unclear’, or ‘no’), and ratings were assigned in accordance with the checklist’s recommendations. Studies with at least one ‘no’, or more than two ‘unclear’ ratings were categorised as ‘high’ risk, while those with one ‘unclear’ item were designated as ‘some concerns’. Studies with ‘yes’ in all domains were classified as ‘low risk’. Where studies validated more than one metric, risk of bias was assessed individually for each. Three authors (R.L., B.O’G., M.B.) independently assessed risk of bias and disagreements were resolved by consensus.
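The categorisation rule described above can be sketched as a small Python function. This is an illustrative reading of the rule, not the authors' tool: the function name is hypothetical, and because the text does not say how a study with exactly two ‘unclear’ ratings is classified, the sketch assumes such studies fall under ‘some concerns’.

```python
def classify_risk_of_bias(ratings):
    """Classify one study-metric pair from its item ratings.

    ratings: list of 'yes', 'unclear' or 'no' strings, one per checklist item.
    """
    allowed = {"yes", "unclear", "no"}
    if not ratings or any(r not in allowed for r in ratings):
        raise ValueError("ratings must be a non-empty list of yes/unclear/no")
    n_no = ratings.count("no")
    n_unclear = ratings.count("unclear")
    # At least one 'no', or more than two 'unclear' ratings: high risk.
    if n_no >= 1 or n_unclear > 2:
        return "high"
    # One 'unclear' rating: some concerns.
    # ASSUMPTION: exactly two 'unclear' ratings are also treated as
    # 'some concerns'; the source text does not specify this case.
    if n_unclear >= 1:
        return "some concerns"
    # All items rated 'yes': low risk.
    return "low"
```

Because risk of bias was assessed separately for each metric, such a function would be applied once per study-metric pair rather than once per study.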

Statistical analysis

Meta-analysis of heart rate and blood oxygen saturation was conducted in accordance with the framework developed by Tipton & Shuster126. A random-effects model with inverse variance weighting was used to account for heterogeneity between trials127. Pooled Bland-Altman limits of agreement and mean bias were calculated. Subgroup meta-analyses were conducted for heart rate measured at rest and during exercise. To prevent unit-of-analysis errors, only one estimate per study per condition was included in meta-analyses, in line with the approach described by Borenstein and colleagues97. Where studies reported multiple mean difference values, they were pooled prior to meta-analysis, accounting for variance. If the standard deviation of the differences was not reported, it was back-calculated by rearranging the formula used to compute 95% limits of agreement128. Details of the formulae for back-calculation and the methods for pooling mean differences are provided in the Supplementary Information (p. 15).
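Two of the steps described above can be sketched in Python under stated assumptions. The first function rearranges the standard 95% limits of agreement formula (bias ± 1.96 × SD) to recover the standard deviation of the differences. The second is a generic DerSimonian-Laird random-effects pooling of study-level mean bias with inverse-variance weights; it is a simpler stand-in used here for illustration and is not the Tipton & Shuster framework itself, nor the code in the authors' repository. Function names and study values are hypothetical.

```python
import math

Z_95 = 1.96  # normal quantile used for 95% limits of agreement


def sd_from_loa(lower, upper):
    """SD of the differences, back-calculated from 95% limits of agreement."""
    if upper <= lower:
        raise ValueError("upper limit must exceed lower limit")
    return (upper - lower) / (2 * Z_95)


def pool_random_effects(estimates, variances):
    """DerSimonian-Laird random-effects pooling (inverse-variance weights).

    Returns (pooled estimate, standard error, tau squared).
    """
    k = len(estimates)
    if k == 0 or k != len(variances):
        raise ValueError("estimates and variances must be non-empty and paired")
    w = [1.0 / v for v in variances]
    sw = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, estimates)) / sw
    # Cochran's Q and the method-of-moments estimate of tau squared.
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, estimates))
    c = sw - sum(wi ** 2 for wi in w) / sw
    tau2 = max(0.0, (q - (k - 1)) / c) if c > 0 else 0.0
    # Random-effects weights add tau squared to each within-study variance.
    w_re = [1.0 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_re, estimates)) / sum(w_re)
    se = math.sqrt(1.0 / sum(w_re))
    return pooled, se, tau2


# Hypothetical studies: (mean bias, lower LoA, upper LoA, sample size).
studies = [(-0.5, -6.0, 5.0, 40), (0.2, -4.1, 4.5, 25), (-1.0, -9.0, 7.0, 60)]
estimates = [bias for bias, _, _, _ in studies]
# Variance of each study's mean bias: SD^2 / n.
variances = [sd_from_loa(lo, hi) ** 2 / n for _, lo, hi, n in studies]
pooled_bias, pooled_se, tau2 = pool_random_effects(estimates, variances)
```

In the limits of agreement framework, the between-study variance also widens the pooled limits, which is one reason pooled limits can be wide even when the pooled mean bias is close to zero.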

Pooled sensitivity and specificity for atrial fibrillation detection were calculated using the bivariate Reitsma model (mada package)129. Diagnostic accuracy contingency tables were back-calculated when not reported, in accordance with previously described methods (Supplementary Information, p. 14)124. We evaluated statistical heterogeneity by estimating the degree of between-study variability using the Tau² statistic130,131. Analyses were conducted in R version 4.5.1 (The R Foundation for Statistical Computing, Vienna) with RStudio (version 2025.09.0+387) and in Python 3.13.
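The back-calculation of a contingency table can be illustrated with a minimal Python sketch. It assumes that a study reports sensitivity, specificity, and the number of participants with and without atrial fibrillation according to the criterion; this is one common case and not necessarily the exact procedure the authors followed. Function names and values are hypothetical, and the bivariate model itself is not reproduced here.

```python
def back_calculate_2x2(sensitivity, specificity, n_with_af, n_without_af):
    """Reconstruct a 2x2 table from reported accuracy and group sizes."""
    for value in (sensitivity, specificity):
        if not 0.0 <= value <= 1.0:
            raise ValueError("sensitivity and specificity must lie in [0, 1]")
    # Counts are rounded to whole participants, so the reconstructed table
    # may differ slightly from the true one when inputs were rounded.
    tp = round(sensitivity * n_with_af)
    fn = n_with_af - tp
    tn = round(specificity * n_without_af)
    fp = n_without_af - tn
    return {"tp": tp, "fn": fn, "fp": fp, "tn": tn}


def sensitivity_specificity(table):
    """Sensitivity and specificity recomputed from a 2x2 table."""
    sens = table["tp"] / (table["tp"] + table["fn"])
    spec = table["tn"] / (table["tn"] + table["fp"])
    return sens, spec


# Hypothetical study: 50 participants with AF, 100 without.
table = back_calculate_2x2(0.80, 0.90, 50, 100)
sens, spec = sensitivity_specificity(table)
```

Recomputing sensitivity and specificity from the reconstructed table is a simple consistency check before the counts are passed to a diagnostic meta-analysis routine.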

Data availability

Synthesised results data, risk of bias assessment, and study protocol are available via the Open Science Framework, at osf.io/v5d3k. Raw datasets generated as part of this review are available from the corresponding author upon request.

Code availability

The code used in this study is publicly available via GitHub, at github.com/rorylambe/applewatch-systematicreview. This repository includes R code used to conduct meta-analyses of heart rate, atrial fibrillation, and blood oxygen saturation.

References

Smuck, M., Odonkor, C. A., Wilt, J. K., Schmidt, N. & Swiernik, M. A. The emerging clinical role of wearables: factors for successful implementation in healthcare. NPJ Digit. Med. 4, 45 (2021).

Truslow, J. et al. Understanding activity and physiology at scale: the Apple heart & movement study. npj Digit. Med. 7, 242 (2024).

Shapiro, I., Stein, J., MacRae, C. & O’Reilly, M. Pulse oximetry values from 33,080 participants in the Apple Heart & Movement Study. NPJ Digit. Med. 6, 134 (2023).

Bent, B. et al. Engineering digital biomarkers of interstitial glucose from noninvasive smartwatches. NPJ Digit. Med. 4, 89 (2021).

Ballinger, B. et al. DeepHeart: Semi-supervised sequence learning for cardiovascular risk prediction. In Proc. AAAI Conference on Artificial Intelligence, 32 (AAAI, 2018).

Pammi, M. et al. Digital twins, synthetic patient data, and in-silico trials: can they empower paediatric clinical trials? Lancet Digit. Health 7, 100851 (2025).

Gibson, C. M. et al. Does early detection of atrial fibrillation reduce the risk of thromboembolic events? rationale and design of the Heartline study. Am. Heart J. 259, 30–41 (2023).

Mandsager, K. et al. Association of cardiorespiratory fitness with long-term mortality among adults undergoing exercise treadmill testing. JAMA Netw. Open 1, e183605 (2018).

Jayedi, A., Gohari, A. & Shab-Bidar, S. Daily step count and all-cause mortality: a dose–response meta-analysis of prospective cohort studies. Sports Med. 52, 89–99 (2022).

Kokkinos, P. et al. Cardiorespiratory fitness and mortality risk across the spectra of age, race, and sex. J. Am. Coll. Cardiol. 80, 598–609 (2022).

Clausen, J. S., Marott, J. L., Holtermann, A., Gyntelberg, F. & Jensen, M. T. Midlife cardiorespiratory fitness and the long-term risk of mortality: 46 years of follow-up. J. Am. Coll. Cardiol. 72, 987–995 (2018).

Tsuji, H. et al. Reduced heart rate variability and mortality risk in an elderly cohort. The Framingham Heart Study. Circulation 90, 878–883 (1994).

Jarczok, M. N. et al. Heart rate variability in the prediction of mortality: a systematic review and meta-analysis of healthy and patient populations. Neurosci. Biobehav. Rev. 143, 104907 (2022).

Gallicchio, L. & Kalesan, B. Sleep duration and mortality: a systematic review and meta-analysis. J. Sleep Res. 18, 148–158 (2009).

Kwok, C. S. et al. Self-reported sleep duration and quality and cardiovascular disease and mortality: a dose-response meta-analysis. J. Am. Heart Assoc. 7, e008552 (2018).

Zhang, D., Shen, X. & Qi, X. Resting heart rate and all-cause and cardiovascular mortality in the general population: a meta-analysis. CMAJ 188, E53–E63 (2016).

Dohrn, M., Sjöström, M., Kwak, L., Oja, P. & Hagströmer, M. Accelerometer-measured sedentary time and physical activity—a 15 year follow-up of mortality in a Swedish population-based cohort. J. Sci. Med. Sport 21, 702–707 (2018).

Bent, B., Goldstein, B. A., Kibbe, W. A. & Dunn, J. P. Investigating sources of inaccuracy in wearable optical heart rate sensors. NPJ Digit. Med. 3, 18 (2020).

Alugubelli, N., Abuissa, H. & Roka, A. Wearable devices for remote monitoring of heart rate and heart rate variability-what we know and what is coming. Sensors (Basel) 22, 8903 (2022).

Hajj-Boutros, G., Landry-Duval, M.-A., Comtois, A. S., Gouspillou, G. & Karelis, A. D. Wrist-worn devices for the measurement of heart rate and energy expenditure: a validation study for the Apple Watch 6, Polar Vantage V and Fitbit Sense. Eur. J. Sport Sci. 23, 165–177 (2023).

Kim, C., Song, J. H. & Kim, S. H. Validation of wearable digital devices for heart rate measurement during exercise test in patients with coronary artery disease. Ann. Rehabil. Med. 47, 261–271 (2023).

Choe, J. P. & Kang, M. Apple Watch accuracy in monitoring health metrics: a systematic review and meta-analysis. Physiol. Meas. https://doi.org/10.1088/1361-6579/adca82 (2025).

Fuller, D. et al. Reliability and validity of commercially available wearable devices for measuring steps, energy expenditure, and heart rate: systematic review. JMIR Mhealth Uhealth 8, e18694 (2020).

Germini, F. et al. Accuracy and acceptability of wrist-wearable activity-tracking devices: systematic review of the literature. J. Med. Internet Res. 24, e30791 (2022).

Perez, M. V. et al. Large-scale assessment of a smartwatch to identify atrial fibrillation. N. Engl. J. Med. 381, 1909–1917 (2019).

Doherty, C., Baldwin, M., Keogh, A., Caulfield, B. & Argent, R. Keeping pace with wearables: a living umbrella review of systematic reviews evaluating the accuracy of consumer wearable technologies in health measurement. Sports Med. 54, 1–20 (2024).

Apple. Using Apple Watch To Measure Heart Rate, Calorimetry, And Activity. https://www.apple.com (2024).

Goldsack, J. C. et al. Verification, analytical validation, and clinical validation (V3): the foundation of determining fit-for-purpose for Biometric Monitoring Technologies (BioMeTs). npj Digit. Med. 3, 55 (2020).

Støve, M. P. & Hansen, E. C. K. Accuracy of the Apple Watch Series 6 and the Whoop Band 3.0 for assessing heart rate during resistance exercises. J. Sports Sci. 40, 2639–2644 (2022).

Reece, J. D., Bunn, J. A., Choi, M. & Navalta, J. W. Assessing heart rate using consumer technology association standards. Technologies 9, 46 (2021).

Bai, Y. et al. Comprehensive comparison of Apple Watch and Fitbit monitors in a free-living setting. PLoS ONE 16, e0251975 (2021).

Falter, M., Budts, W., Goetschalckx, K., Cornelissen, V. & Buys, R. Accuracy of Apple Watch measurements for heart rate and energy expenditure in patients with cardiovascular disease: cross-sectional study. JMIR mHealth uHealth 7, e11889 (2019).

Giggins, O. M. et al. In 43rd Annual International Conference of the IEEE Engineering in Medicine and Biology Society EMBC, 6970-6973 (IEEE, 2021).

Ho, W.-T., Yang, Y.-J. & Li, T.-C. Accuracy of wrist-worn wearable devices for determining exercise intensity. Digit. Health 8, 20552076221124393 (2022).

Nelson, B. W. & Allen, N. B. Accuracy of consumer wearable heart rate measurement during an ecologically valid 24 hour period: intraindividual validation study. JMIR mHealth uHealth 7, e10828 (2019).

Nuss, K. J. et al. Accuracy of heart rate and energy expenditure estimations of wrist-worn and arm-worn Apple watches. J. Meas. Phys. Behav. 2, 166–175 (2019).

Støve, M. P. et al. Measurement latency significantly contributes to reduced heart rate measurement accuracy in wearable devices. J. Med. Eng. Technol. 44, 125–132 (2020).

Thomas, J., Doyle, P. & Doyle, J. A. Validity of optical heart rate measurement in commercially available wearable fitness tracking devices. bioRxiv 9, e77911 (2022).

Uphill, A. et al. Validity of Apple Watch, Garmin Forerunner® 935 and GENEActiv for estimating energy expenditure during close quarter battle training in Special Forces soldiers. Eur. J. Sport Sci. 24, 614–622 (2024).

Abt, G., Bray, J. & Benson, A. C. The validity and inter-device variability of the Apple Watch™ for measuring maximal heart rate. J. Sports Sci. 36, 1447–1452 (2018).

Etiwy, M. et al. Accuracy of wearable heart rate monitors in cardiac rehabilitation. Cardiovasc. Diagnosis Ther. 9, 262 (2019).

Khushhal, A. A., Mohamed, A. A. & Elsayed, M. E. Accuracy of apple watch to measure cardiovascular indices in patients with cardiac diseases: observational study. Glob. Heart 20, 74 (2025).

Mulholland, A. M., MacDonald, H. V., Aguiar, E. J. & Wingo, J. E. Influence of skin pigmentation on the accuracy and data quality of photoplethysmographic heart rate measurement during exercise. Eur. J. Appl. Physiol. https://doi.org/10.1007/s00421-025-05977-x (2025).

Wallen, M. P., Gomersall, S. R., Keating, S. E., Wisløff, U. & Coombes, J. S. Accuracy of heart rate watches: implications for weight management. PLoS ONE 11, e0154420 (2016).

Helmer, P. et al. Reliability of continuous vital sign monitoring in post-operative patients employing consumer-grade fitness trackers: A randomised pilot trial. Digit. Health 10, 20552076241254026 (2024).

Khushhal, A. A., Mohamed, A. A., Alsegame, M. M. & Alsaedi, A. M. Accuracy of Apple Watch in measuring 30-second resting electrocardiography in patients with cardiac diseases and comorbidity: an observational cross-sectional study. J. Multidiscip. Healthcare 18, 493−504 (2025).

Koshy, A. N. et al. Smart watches for heart rate assessment in atrial arrhythmias. Int. J. Cardiol. 266, 124–127 (2018).

O'Grady, B., Lambe, R., Baldwin, M., Acheson, T. & Doherty, C. The validity of apple watch series 9 and ultra 2 for serial measurements of heart rate variability and resting heart rate. Sensors https://doi.org/10.3390/s24196220 (2024).

Saghir, N. et al. A comparison of manual electrocardiographic interval and waveform analysis in lead 1 of 12-lead ECG and apple watch ECG: A validation study. Cardiovasc. Digit Health J. 1, 30–36 (2020).

Wasserlauf, J. et al. Accuracy of the Apple watch for detection of AF: A multicenter experience. J. Cardiovasc. Electrophysiol. 34, 1103–1107 (2023).

Abu-Alrub, S. et al. Smartwatch electrocardiograms for automated and manual diagnosis of atrial fibrillation: a comparative analysis of three models. Front. Cardiovasc. Med. 9, 836375 (2022).

Mannhart, D. et al. Clinical validation of an artificial intelligence algorithm offering cross-platform detection of atrial fibrillation using smart device electrocardiograms. Arch. Cardiovasc. Dis. 116, 249–257 (2023).

Mannhart, D. et al. Clinical validation of 5 direct-to-consumer wearable smart devices to detect atrial fibrillation: BASEL wearable study. Clin. Electrophysiol. 9, 232–242 (2023).

Scholten, J. et al. A comparison of over-the-counter available smartwatches and devices for electrocardiogram based detection of atrial fibrillation. Eur. Heart J. Digit. Health 2, ztab104.3047 (2021).

Velraeds, A. et al. Improving automatic smartwatch electrocardiogram diagnosis of atrial fibrillation by identifying regularity within irregularity. Sensors 23, 9283 (2023).

Apple. Using Apple Watch for Arrhythmia Detection. https://www.apple.com/healthcare/docs/site/Apple_Watch_Arrhythmia_Detection.pdf (2020).

Briosa, E. G. A. et al. Diagnostic performance of single-lead electrocardiograms from a smartwatch and a smartring for cardiac arrhythmia detection. Heart Rhythm 6, 808–817 (2025).

Müller, M. et al. Validity of a smartwatch for detecting atrial fibrillation in patients after heart valve surgery: a prospective observational study. Scand. Cardiovasc. J. 58, 2353069 (2024).

Pepplinkhuizen, S. et al. Accuracy and clinical relevance of the single-lead Apple Watch electrocardiogram to identify atrial fibrillation. Cardiovasc. Digit. Health J. 3, S17–S22 (2022).

Racine, H.-P. et al. Role of coexisting ECG anomalies in the accuracy of smartwatch ECG detection of atrial fibrillation. Can. J. Cardiol. 38, 1709–1712 (2022).

Saghir, N. S. et al. Correlation of atrial fibrillation detection using oura ring with photoplethysmography in comparison to the apple watch electrocardiography algorithm (DH-576-04). Heart Rhythm 19, S61–S62 (2022).

Ford, C., Xie, C. X., Low, A., Roberts, L. & Teh, A. W. Smart wars-comparison of the apple watch series 4 and kardiaband smart watch technology for the diagnosis of atrial fibrillation. J. Am. Coll. Cardiol. 77, 3226–3226 (2021).

Seshadri, D. R. et al. Accuracy of Apple Watch for detection of atrial fibrillation. Circulation 141, 702–703 (2020).

Wouters, F. et al. Comparative evaluation of consumer wearable devices for atrial fibrillation detection: validation study. JMIR Formative Res. 9, e65139 (2025).

Behzadi, A. et al. Feasibility and reliability of smartwatch to obtain 3-lead electrocardiogram recordings. Sensors 20, 5074 (2020).

Buelga Suárez, M. et al. Smartwatch ECG tracing and ischemic heart disease: ACS watch study. Cardiology 148, 78–82 (2023).

Harmon, D. et al. Performance and accuracy of a smart watch single-lead ecg: a pilot study (Po-626-01). Heart Rhythm 19, S150 (2022).

Klier, K., Koch, L., Graf, L., Schinköthe, T. & Schmidt, A. Diagnostic accuracy of single-lead electrocardiograms using the Kardia Mobile App and the Apple Watch 4: validation study. JMIR Cardio 7, e50701 (2023).

Strik, M. et al. Validating QT-interval measurement using the Apple Watch ECG to enable remote monitoring during the COVID-19 pandemic. Circulation 142, 416–418 (2020).

Arslan, B. et al. Accuracy of the apple watch in measuring oxygen saturation: comparison with pulse oximetry and ABG. Ir. J. Med. Sci. 193, 477–483 (2024).

Jiang, Y. et al. Investigating the accuracy of blood oxygen saturation measurements in common consumer smartwatches. PLOS Digit. Health 2, e0000296 (2023).

Rajakariar, K. et al. Accuracy of smartwatch pulse oximetry measurements in hospitalized patients with coronavirus disease 2019. Mayo Clin. Proc. Digit. Health 2, 152–158 (2024).

Spaccarotella, C. et al. Assessment of non-invasive measurements of oxygen saturation and heart rate with an apple smartwatch: comparison with a standard pulse oximeter. J. Clin. Med. 11, 1467 (2022).

Apple. Blood Oxygen app on Apple Watch. https://apps.apple.com (2022).

Rafl, J., Bachman, T. E., Rafl-Huttova, V., Walzel, S. & Rozanek, M. Commercial smartwatch with pulse oximeter detects short-time hypoxemia as well as standard medical-grade device: Validation study. Digit. Health 8, 20552076221132127 (2022).

Walzel, S. et al. Evaluation of leading smartwatches for the detection of hypoxemia: comparison to reference oximeter. Sensors 23, 9164 (2023).

Jiang, Y. et al. Performance of wearable pulse oximetry during controlled hypoxia induction. medRxiv https://doi.org/10.1101/2024.07.16.24310506 (2024).

Lee, M., Lee, H. & Park, S. Accuracy of swimming wearable watches for estimating energy expenditure. Int. J. Appl. Sports Sci. 30, 80−90 (2018).

Sun, X. et al. Validity of apple watch 6 and Polar A370 for monitoring energy expenditure while resting or performing light to vigorous physical activity. J. Sci. Med. Sport 26, 482–486 (2023).

Veerabhadrappa, P. et al. Tracking steps on an Apple Watch at different walking speeds. J. Gen. Intern. Med. 33, 795–796 (2018).

Bunn, J. A., Jones, C., Oliviera, A. & Webster, M. J. Assessment of step accuracy using the consumer technology association standard. J. Sports Sci. 37, 244–248 (2019).

Benning, N.-H., Knaup, P. & Rupp, R. Measurement performance of activity measurements with newer generation of Apple Watch in wheelchair users with spinal cord injury. Methods Inf. Med. 60, e103–e110 (2021).

Glasheen, E., Domingo, A. & Kressler, J. Accuracy of Apple Watch fitness tracker for wheelchair use varies according to movement frequency and task. Ann. Phys. Rehabil. Med. 64, 101382 (2021).

Karinharju, K. S. et al. Validity of the Apple Watch® for monitoring push counts in people using manual wheelchairs. J. Spinal Cord. Med. 44, 212–220 (2021).

Lambe, R., O’Grady, B., Baldwin, M. & Doherty, C. Investigating the accuracy of apple watch VO2 max measurements: a validation study. PLoS ONE 20, e0323741 (2025).

Robbins, R. et al. Accuracy of three commercial wearable devices for sleep tracking in healthy adults. Sensors (Basel) 24, 6532 (2024).

Apple. Estimating sleep stages from Apple Watch. https://www.apple.com/health/pdf/Estimating_Sleep_Stages_from_Apple_Watch_Oct_2025.pdf (2023).

Lee, T. et al. Accuracy of 11 wearable, nearable, and airable consumer sleep trackers: prospective multicenter validation study. JMIR Mhealth Uhealth 11, e50983 (2023).

Laukkanen, J. A., Isiozor, N. M. & Kunutsor, S. K. Objectively assessed cardiorespiratory fitness and all-cause mortality risk: an updated meta-analysis of 37 cohort studies involving 2,258,029 participants. Mayo Clin. Proc. 97, 1054–1073 (2022).

Koerber, D., Khan, S., Shamsheri, T., Kirubarajan, A. & Mehta, S. Accuracy of heart rate measurement with wrist-worn wearable devices in various skin tones: a systematic review. J. Racial Ethn. Health Disparities 10, 2676–2684 (2023).

Byrne, J. et al. Investigating the accuracy of wheelchair push counts measured by fitness watches: a systematic review. Cureus 15, e45322 (2023).

Singh, B. et al. Real-world accuracy of wearable activity trackers for detecting medical conditions: systematic review and meta-analysis. JMIR Mhealth Uhealth 12, e56972 (2024).

Belani, S., Wahood, W., Hardigan, P., Placzek, A. N. & Ely, S. Accuracy of detecting atrial fibrillation: a systematic review and meta-analysis of wrist-worn wearable technology. Cureus 13, e20362 (2021).

Giebel, G. D. & Gissel, C. Accuracy of mhealth devices for atrial fibrillation screening: systematic review. JMIR Mhealth Uhealth 7, e13641 (2019).

Nazarian, S., Lam, K., Darzi, A. & Ashrafian, H. Diagnostic accuracy of smartwatches for the detection of cardiac arrhythmia: systematic review and meta-analysis. J. Med. Internet. Res. 23, e28974 (2021).

Windisch, P., Schröder, C., Förster, R., Cihoric, N. & Zwahlen, D. R. Accuracy of the apple watch oxygen saturation measurement in adults: a systematic review. Cureus 15, e35355 (2023).

Borenstein, M., Hedges, L. V., Higgins, J. P. & Rothstein, H. R. Introduction to Meta-Analysis 1st edn, Vol. 452 (John Wiley & Sons, 2021).

O'Driscoll, R. et al. How well do activity monitors estimate energy expenditure? A systematic review and meta-analysis of the validity of current technologies. Br. J. Sports Med. 54, 332–340 (2020).

Chevance, G. et al. Accuracy and precision of energy expenditure, heart rate, and steps measured by combined-sensing Fitbits against reference measures: systematic review and meta-analysis. JMIR mHealth uHealth 10, e35626 (2022).

Miller, D. J., Sargent, C. & Roach, G. D. A validation of six wearable devices for estimating sleep, heart rate and heart rate variability in healthy adults. Sensors (Basel) 22, 6317 (2022).

Doherty, C., Baldwin, M., Lambe, R., Burke, D. & Altini, M. Readiness, recovery, and strain: an evaluation of composite health scores in consumer wearables. Transl. Exercise Biomed. 2, 2 (2025).

Dunn, J., Coravos, A., Fanarjian, M., Ginsburg, G. S. & Steinhubl, S. R. Remote digital health technologies for improving the care of people with respiratory disorders. Lancet Digit. Health 6, e291–e298 (2024).

Kim, H.-G., Cheon, E.-J., Bai, D.-S., Lee, Y. H. & Koo, B.-H. Stress and heart rate variability: a meta-analysis and review of the literature. Psychiatry Investig. 15, 235 (2018).

Nayak, S. K. et al. A review of methods and applications for a heart rate variability analysis. Algorithms 16, 433 (2023).

Behrmann, J. et al. Inferring optical tissue properties from photoplethysmography using hybrid amortized inference. arXiv https://doi.org/10.48550/arXiv.2510.02073 (2025).

Banach, M. et al. The association between daily step count and all-cause and cardiovascular mortality: a meta-analysis. Eur. J. Prevent. Cardiol. 30, 1975–1985 (2023).

US Food and Drug Administration. Pulse Oximeters for Medical Purposes – Non-Clinical and Clinical Performance Testing, Labeling, and Premarket Submission Recommendations. https://www.fda.gov/media/184896/download (2025).

International Organization for Standardization. Particular Requirements For Basic Safety And Essential Performance Of Pulse Oximeter Equipment. https://cdn.standards.iteh.ai (2025).

European Union. Regulation (EU) 2017/745 of the European Parliament and of the Council of 5 April 2017 on Medical Devices. https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX%3A32017R0745 (2025).

Molina-Garcia, P. et al. Validity of estimating the maximal oxygen consumption by consumer wearables: a systematic review with meta-analysis and expert statement of the interlive network. Sports Med. 52, 1577–1597 (2022).

Argent, R. et al. Recommendations for determining the validity of consumer wearables and smartphones for the estimation of energy expenditure: expert statement and checklist of the interlive network. Sports Med. 52, 1817–1832 (2022).

Mühlen, J. M. et al. Recommendations for determining the validity of consumer wearable heart rate devices: expert statement and checklist of the INTERLIVE Network. Br. J. Sports Med. 55, 767–779 (2021).

Johnston, W. et al. Recommendations for determining the validity of consumer wearable and smartphone step count: expert statement and checklist of the INTERLIVE network. Br. J. Sports Med. 55, 780–793 (2021).

Suau, Q. et al. Current knowledge about actigraph GT9X link activity monitor accuracy and validity in measuring steps and energy expenditure: a systematic review. Sensors (Basel) 24, 825 (2024).

Dreisbach, S., Rhudy, M., Moran, M., Henriquez, B. & Veerabhadrappa, P. Accuracy of apple watch and actigraphs during overground and treadmill walking. Hum. Mov. 26, 83–90 (2025).

Dixon, W. G. et al. Charting a course for smartphones and wearables to transform population health research. J. Med. Internet Res. 25, e42449 (2023).

Centre, B. H. F. D. S. Smartphone And Wearable Data In Cardiovascular Research: Understanding The Views Of The Public And Professionals. https://zenodo.org/records/10894877 (2024).

Hughes, A., Shandhi, M. M. H., Master, H., Dunn, J. & Brittain, E. Wearable devices in cardiovascular medicine. Circ. Res. 132, 652–670 (2023).

Weiss, A. J. & Jiang, H. J. Overview of clinical conditions with frequent and costly hospital readmissions by payer. Agency Healthcare Res. Quality (2021).

Burke, R. E. & Coleman, E. A. Interventions to Decrease Hospital Readmissions: Keys for Cost-effectiveness. JAMA Intern. Med. 173, 695–698 (2013).

Herrera, C. A. et al. The World Bank–PAHO Lancet regional health Americas commission on primary health care and resilience in Latin America and the Caribbean. Lancet Reg. Health Am. 28, 100643 (2023).

Gonçalves-Bradley, D. C. et al. Mobile technologies to support healthcare provider to healthcare provider communication and management of care. Cochrane Database Syst. Rev. 8, CD012927 (2020).

Page, M. J. et al. The PRISMA 2020 statement: an updated guideline for reporting systematic reviews. BMJ 372, n71 (2021).

Taylor, K. S., Mahtani, K. R. & Aronson, J. K. Extracting data from diagnostic test accuracy studies for meta-analysis. BMJ Evid. Based Med. 26, 19–21 (2021).

Mokkink, L. B. et al. COSMIN Risk of Bias tool to assess the quality of studies on reliability or measurement error of outcome measurement instruments: a Delphi study. BMC Med. Res. Methodol. 20, 293 (2020).

Tipton, E. & Shuster, J. A framework for the meta-analysis of Bland–Altman studies based on a limits of agreement approach. Stat. Med. 36, 3621–3635 (2017).

Higgins, J. P., Thompson, S. G. & Spiegelhalter, D. J. A re-evaluation of random-effects meta-analysis. J. R. Stat. Soc. Ser. A Stat. Soc. 172, 137–159 (2009).

Bland, J. M. & Altman, D. Statistical methods for assessing agreement between two methods of clinical measurement. Lancet 327, 307–310 (1986).

Doebler, P., Holling, H. & Sousa-Pinto, B. Meta-Analysis of Diagnostic Accuracy with mada. https://cran.r-project.org/web/packages/mada/vignettes/mada.pdf (2023).

Higgins, J. P., Thompson, S. G., Deeks, J. J. & Altman, D. G. Measuring inconsistency in meta-analyses. BMJ 327, 557–560 (2003).

Deeks, J. J., Higgins, J. P., Altman, D. G. & Group, C. S. M. Analysing Data and Undertaking Meta-Analyses. https://www.cochrane.org/authors/handbooks-and-manuals/handbook/current/chapter-10 (2019).

Montalvo, S. et al. Commercial smart watches and heart rate monitors: A concurrent validity analysis. J. Strength Cond. Res. 37, 1802–1808 (2023).

Alfonso, C. et al. Agreement between two photoplethysmography-based wearable devices for monitoring heart rate during different physical activity situations: a new analysis methodology. Sci. Rep. 12, 15448 (2022).

Düking, P. et al. Wrist-worn wearables for monitoring heart rate and energy expenditure while sitting or performing light-to-vigorous physical activity: validation study. JMIR mHealth uHealth 8, e16716 (2020).

Lee, C. & Chow, C. Comparison of Apple watch series 4 vs. Kardiamobile: A tale of two devices. Can. J. Cardiol. 37, S43–S44 (2021).

Al-Kaisey, A. M. et al. Accuracy of wrist-worn heart rate monitors for rate control assessment in atrial fibrillation. Int. J. Cardiol. 300, 161–164 (2020).

Pasadyn, S. R. et al. Accuracy of commercially available heart rate monitors in athletes: a prospective study. Cardiovasc. Diagnosis Ther. 9, 379 (2019).

Hwang, J. et al. Assessing accuracy of wrist-worn wearable devices in measurement of paroxysmal supraventricular tachycardia heart rate. Korean Circ. J. 49, 437–445 (2019).

Heyken, M. et al. Comparison of wearables for self-monitoring of heart rate in coronary rehabilitation patients. Georgian Med. News 315, 78–85 (2021).

Huynh, P. et al. Heart rate measurements in patients with obstructive sleep apnea and atrial fibrillation: Prospective pilot study assessing apple watch’s agreement with telemetry data. JMIR Cardio 5, e18050 (2021).

Khushhal, A. et al. Validity and reliability of the Apple Watch for measuring heart rate during exercise. Sports Med. Int. Open 1, E206–E211 (2017).

Thomson, E. A. et al. Heart rate measures from the Apple Watch, Fitbit Charge HR 2, and electrocardiogram across different exercise intensities. J. Sports Sci. 37, 1411–1419 (2019).

Gillinov, S. et al. Variable accuracy of wearable heart rate monitors during aerobic exercise. Med. Sci. Sports Exerc. 49, 1697–1703 (2017).

Sequeira, N. et al. Common wearable devices demonstrate variable accuracy in measuring heart rate during supraventricular tachycardia. Heart Rhythm 17, 854–859 (2020).

Apple. Blood Oxygen app on Apple Watch. https://www.apple.com/healthcare/docs/site/Blood_Oxygen_app_on_Apple_Watch_October_2022.pdf (2022).

Apple. Estimating sleep stages from Apple Watch. https://www.apple.com/healthcare/docs/site/Estimating_Sleep_Stages_from_Apple_Watch_Sept_2023.pdf (2023).

Apple. Hypertension Notification Feature on Apple Watch. https://apple.com/health (2025).

Apple. Using Apple Watch to Estimate Six-Minute Walk Distance. https://www.apple.com/healthcare/docs/site/Using_Apple_Watch_to_Estimate_Six_Minute_Walk_Distance.pdf.

Fischer, T. et al. Are smartwatches a suitable tool to monitor noise exposure for public health awareness and otoprotection? Front. Neurol. 13, 856219 (2022).

Acknowledgements

This research was supported by The Science Foundation Ireland National Challenge Fund (grant ID: 22/NCF/FD/10949). The funders had no role in study design, data collection and analysis, decision to publish, or preparation of the manuscript.

Author information

Contributions

R.L., C.D., and B.O’G. conceived and designed the study. R.L., C.D., B.O’G., M.S., M.B., and B.C. contributed to the methods of the study. R.L. and B.O’G. conducted the searches of all databases. R.L., M.B., and B.O’G. selected the articles and extracted the data. R.L., M.B., and B.O’G. analysed the data. R.L., M.B., B.O’G., and C.D. accessed and verified the data. R.L. and M.B. assessed risk of bias and R.L. conducted meta-analyses. R.L., M.B., and C.D. wrote the first draft of the manuscript. All authors contributed to data interpretation, revision, and writing of the final version of the manuscript. All authors critically reviewed and approved the content of the manuscript. All authors had full access to all the data in the study and had final responsibility for the decision to submit for publication.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Lambe, R., Baldwin, M., O’Grady, B. et al. The accuracy of Apple Watch measurements: a living systematic review and meta-analysis. npj Digit. Med. 9, 63 (2026). https://doi.org/10.1038/s41746-025-02238-1
