Abstract

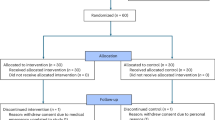

Early studies of large language models (LLMs) in clinical settings have largely treated artificial intelligence (AI) as a tool rather than an active collaborator. As LLMs demonstrate expert-level diagnostic performance, the focus shifts from whether AI can offer valuable suggestions to how it integrates into physicians’ diagnostic workflows. We conducted a randomized controlled trial (n = 70 clinicians) to assess a custom system designed for collaborative diagnostic reasoning. In this design, the clinician and the AI each made an independent diagnostic assessment, after which the AI generated a synthesis that integrated both perspectives, highlighted agreements and disagreements, and offered commentary. We evaluated two collaborative workflows: AI as first opinion (preceding the clinician) and AI as second opinion (following the clinician). Both improved clinician diagnostic accuracy over conventional resources (85% and 82% vs. 75%). Performance was comparable across the two workflows and not statistically different from AI-alone accuracy (90%), highlighting the potential of collaborative AI to complement clinician expertise. Qualitative analyses illustrate how workflow design shapes human-AI interaction. ClinicalTrials.gov identifier: NCT06911645.
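The synthesis step described above can be illustrated with a small sketch. This is not the study's system, which used an LLM to generate the synthesis. It is a simplified stand-in that assumes each party submits a plain list of diagnosis names and compares them by normalized string matching. The names `Synthesis`, `normalize`, and `synthesize` are hypothetical and chosen only for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Synthesis:
    """Hypothetical container for the comparison of two differentials."""
    agreements: list[str]      # diagnoses named by both clinician and AI
    clinician_only: list[str]  # diagnoses named only by the clinician
    ai_only: list[str]         # diagnoses named only by the AI


def normalize(name: str) -> str:
    """Lowercase and collapse whitespace so trivial spelling variants match."""
    return " ".join(name.lower().split())


def synthesize(clinician_dx: list[str], ai_dx: list[str]) -> Synthesis:
    """Compare two independently produced differential diagnoses.

    Assumption: exact match after normalization counts as agreement.
    A real system would need clinical synonym handling, which is omitted here.
    """
    clinician = {normalize(d): d for d in clinician_dx}
    ai = {normalize(d): d for d in ai_dx}
    return Synthesis(
        agreements=[d for k, d in clinician.items() if k in ai],
        clinician_only=[d for k, d in clinician.items() if k not in ai],
        ai_only=[d for k, d in ai.items() if k not in clinician],
    )


result = synthesize(
    ["Pulmonary embolism", "Pneumonia"],
    ["pneumonia", "Pericarditis"],
)
print(result.agreements, result.clinician_only, result.ai_only)
```

Because both lists are collected before any comparison, the same function serves either workflow: only the order in which the clinician sees the AI's list changes, not the comparison itself.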

Data availability

The diagnostic challenge problems and datasets generated and analyzed during the study are not publicly available as their disclosure would risk their inclusion in training datasets of future models. The data can be made available on reasonable request to the corresponding author.

Code availability

The system prompt for the custom GPT is available in the supplemental information. Additional information can be made available to qualified researchers on reasonable request to the corresponding author.

Acknowledgements

We are grateful to Jason Hom, MD, Curtis Langlotz, MD, PhD, Natalie Pageler, MD, Mihaela Vorvoreanu, PhD, and Daniel Yang, MD, for their insightful feedback. We thank Isabel Weng, MHS, for guidance on the statistical analyses. This work was supported by the Stanford Institute for Human-Centered Artificial Intelligence (HAI), Stanford Medical Scholars Research Program, Stanford Bio-X Interdisciplinary Initiatives Seed Grants Program, the Gordon and Betty Moore Foundation [Grant #12409], and the National Library of Medicine [2T15LM007033].

Author information

Contributions

S.E.: Conceptualization, Data curation, Formal analysis, Investigation, Methodology, Validation, Project administration, Writing – original draft, Writing – review & editing. B.B.: Conceptualization, Data curation, Formal analysis, Investigation, Methodology, Validation, Visualization, Writing – original draft, Writing – review & editing. P.J.: Conceptualization, Data curation, Investigation, Methodology, Project administration, Validation, Writing – review & editing. I.L.: Data curation, Formal analysis, Investigation, Methodology, Visualization, Writing – review & editing. A.A.: Data curation, Writing – review & editing. M.D.: Methodology, Formal analysis, Writing – review & editing. R.G.: Writing – review & editing. E.G.: Methodology, Writing – review & editing. V.K.: Data curation, Writing – review & editing. Z.K.: Writing – review & editing. J.K.: Data curation, Writing – review & editing. A.O.: Writing – review & editing. A.R.: Writing – review & editing. K.S.: Writing – review & editing. E.S.: Writing – review & editing. J.C.: Supervision, Methodology, Funding acquisition, Writing – review & editing. E.H.: Conceptualization, Formal analysis, Investigation, Software, Methodology, Project administration, Supervision, Validation, Writing – original draft, Writing – review & editing.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Everett, S.S., Bunning, B.J., Jain, P. et al. From tool to teammate in a randomized controlled trial of clinician-AI collaborative workflows for diagnosis. npj Digit. Med. (2026). https://doi.org/10.1038/s41746-026-02545-1

DOI: https://doi.org/10.1038/s41746-026-02545-1