Abstract

Scalable methods for solving constrained combinatorial problems in high dimensions can address many challenges encountered in scientific and engineering disciplines. Inspired by the use of graph neural networks for quadratic-cost combinatorial optimization problems, Heydaribeni and colleagues proposed HypOp, which leverages hypergraph neural networks to extend previous algorithms to arbitrary cost functions and thereby solve general problems with higher-order constraints efficiently. HypOp also incorporates a distributed training architecture to handle larger-scale tasks. Here we reproduce the primary experiments of HypOp and examine its robustness with respect to the number of graphics processing units, distributed partitioning strategies and fine-tuning methods. We also assess its transferability by applying it to the maximum clique problem and the quadratic assignment problem. The results validate the reusability of HypOp across diverse application scenarios. Furthermore, we provide practical guidelines for applying it effectively to multiple combinatorial optimization problems.

Data availability

All data used in the reproduction and robustness evaluation experiments were generated following the methodology outlined in ref. 7. The datasets, including hypergraph data, MaxCut data, MIS data, MaxClique data and QAPLIB data, are available via GitHub at https://github.com/AhauBioinformatics/HypOp_Reuse/tree/main/data (ref. 31). Source data are provided with this paper.

Code availability

The original HypOp code is available via Code Ocean at https://doi.org/10.24433/CO.4804643.v1 (ref. 8). Our modified version of HypOp is available via GitHub at https://github.com/AhauBioinformatics/HypOp_Reuse (ref. 31).

References

Peres, F. & Castelli, M. Combinatorial optimization problems and metaheuristics: review, challenges, design, and development. Appl. Sci. 11, 6449 (2021).

Mohseni, N., McMahon, P. L. & Byrnes, T. Ising machines as hardware solvers of combinatorial optimization problems. Nat. Rev. Phys. 4, 363–379 (2022).

Schuetz, M. J. A., Brubaker, J. K. & Katzgraber, H. G. Combinatorial optimization with physics-inspired graph neural networks. Nat. Mach. Intell. 4, 367–377 (2022).

Karalias, N. & Loukas, A. Erdos goes neural: an unsupervised learning framework for combinatorial optimization on graphs. Adv. Neural Inf. Process. Syst. 33, 6659–6672 (2020).

Tönshoff, J., Ritzert, M., Wolf, H. & Grohe, M. Graph neural networks for maximum constraint satisfaction. Front. Artif. Intell. 3, 580607 (2021).

Feng, Y., You, H., Zhang, Z., Ji, R. & Gao, Y. Hypergraph neural networks. In Proc. AAAI Conference Artificial Intelligence Vol. 33, 3558–3565 (AAAI, 2019).

Heydaribeni, N., Zhan, X., Zhang, R., Eliassi-Rad, T. & Koushanfar, F. Distributed constrained combinatorial optimization leveraging hypergraph neural networks. Nat. Mach. Intell. 6, 664–672 (2024).

Heydaribeni, N. et al. HypOp: distributed constrained combinatorial optimization leveraging hypergraph neural networks. Code Ocean https://doi.org/10.24433/CO.4804643.v1 (2024).

Meng, L. et al. A survey of distributed graph algorithms on massive graphs. ACM Comput. Surv. 57, 27:1–27:39 (2024).

Vatter, J., Mayer, R. & Jacobsen, H.-A. The evolution of distributed systems for graph neural networks and their origin in graph processing and deep learning: a survey. ACM Comput. Surv. 56, 1–37 (2024).

Gad, A. G. Particle swarm optimization algorithm and its applications: a systematic review. Arch. Comput. Methods Eng. 29, 2531–2561 (2022).

Dorigo, M. & Socha, K. in Handbook of Approximation Algorithms and Metaheuristics (ed. Gonzalez, T. F.) Ch. 26 (Chapman and Hall/CRC, 2018).

Mirjalili, S. in Evolutionary Algorithms and Neural Networks: Theory and Applications Ch. 4 (Springer, 2019).

Sun, H., Guha, E. K. & Dai, H. Annealed training for combinatorial optimization on graphs. Preprint at http://arxiv.org/abs/2207.11542 (2022).

Nau, C., Sankaran, P. & McConky, K. Comparison of parameter tuning strategies for team orienteering problem (TOP) solved with Gurobi. In Proc. IISE Annual Conference and Expo 393–398 (IISE, 2022).

Lamm, S., Sanders, P., Schulz, C., Strash, D. & Werneck, R. F. Finding near-optimal independent sets at scale. In Proc. 18th Workshop on Algorithm Engineering and Experiments (eds Goodrich, M. & Mitzenmacher, M.) 138–150 (SIAM, 2016).

Hespe, D., Schulz, C. & Strash, D. Scalable kernelization for maximum independent sets. J. Exp. Algorithmics 24, 1–22 (2019).

Lamm, S., Schulz, C., Strash, D., Williger, R. & Zhang, H. Exactly solving the maximum weight independent set problem on large real-world graphs. In Proc. 21st Workshop on Algorithm Engineering and Experiments (eds Kobourov, S. & Meyerhenke, H.) 144–158 (SIAM, 2019).

Erdős, P. & Rényi, A. On the evolution of random graphs. Publ. Math. Inst. Hung. Acad. Sci. 5, 17–61 (1960).

Barabási, A.-L. & Albert, R. Emergence of scaling in random networks. Science 286, 509–512 (1999).

Watts, D. J. & Strogatz, S. H. Collective dynamics of ‘small-world’ networks. Nature 393, 440–442 (1998).

Pelofske, E., Hahn, G. & Djidjev, H. N. Solving larger maximum clique problems using parallel quantum annealing. Quantum Inf. Process. 22, 219 (2023).

Ling, S. On the exactness of SDP relaxation for quadratic assignment problem. Preprint at https://arxiv.org/abs/2408.05942 (2024).

Wang, R., Yan, J. & Yang, X. Neural graph matching network: learning Lawler’s quadratic assignment problem with extension to hypergraph and multiple-graph matching. IEEE Trans. Pattern Anal. Mach. Intell. 44, 5261–5279 (2022).

Loiola, E. M., De Abreu, N. M. M., Boaventura-Netto, P. O., Hahn, P. & Querido, T. A survey for the quadratic assignment problem. Eur. J. Oper. Res. 176, 657–690 (2007).

Burkard, R. E., Karisch, S. E. & Rendl, F. QAPLIB – a quadratic assignment problem library. J. Glob. Optim. 10, 391–403 (1997).

Kang, H., Lee, Y. & Yoe, H. A design of a GPU based solver for quadratic assignment problems. In Proc. 15th International Conference on Information and Communication Technology Convergence (eds Jeong, S. H. et al.) 635–640 (IEEE, 2024).

Burkard, R. E. in Discrete Location Theory (eds Mirchandani, P. B. & Francis, R. L.) Ch. 9 (Wiley, 1991).

Li, Y. Heuristic and Exact Algorithms for the Quadratic Assignment Problem (Pennsylvania State Univ., 1992).

Cuturi, M. Sinkhorn distances: lightspeed computation of optimal transport. In Proc. 27th International Conference on Neural Information Processing Systems, Vol. 2 (eds Burges, C. J. C. et al.) 2292–2300 (ACM, 2013).

Li, X. et al. AhauBioinformatics/HypOp_Reuse. Zenodo https://doi.org/10.5281/zenodo.17168913 (2025).

Acknowledgements

This work was supported by the National Natural Science Foundation of China (grant nos. 62472005, 62102004) (Z.Y.), the Anhui Province Excellent Young Teacher Training Project (grant no. YQYB2024007) (Z.Y.) and the National Undergraduate Innovation and Entrepreneurship Training Programme Project (grant no. 202510364005) (J.G.).

Author information

Contributions

Z.Y. and J.X. conceived of and supervised the project. X.L., J.G. and Z.Y. designed the computational experiments and data analyses. X.L., W.X., B.W. and K.C. prepared the data. X.L., J.G., P.W. and W.X. implemented the methods, conducted the experiments and performed the data analyses. X.L., J.G. and Z.Y. wrote the paper.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Machine Intelligence thanks Martin Schuetz, Petar Veličković and Haoyu Wang for their contribution to the peer review of this work.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Extended data

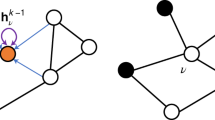

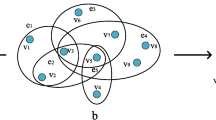

Extended Data Fig. 1 Transfer Learning.

a, b: Transfer learning using HypOp from Graph MaxCut to MIS on synthetic random regular graphs. c, d: Transfer learning using HypOp from Hypergraph MaxCut to Hypergraph MinCut on synthetic random hypergraphs.

Supplementary information

Supplementary Information

Supplementary Fig. 1 and Discussion.

Source data

Source Data Fig. 1

Statistical source data for Fig. 1.

Source Data Fig. 2

Statistical source data for Fig. 2.

Source Data Fig. 3

Statistical source data for Fig. 3.

Source Data Fig. 4

Statistical source data for Fig. 4.

Source Data Fig. 5

Statistical source data for Fig. 5.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Li, X., Gui, J., Xue, W. et al. Reusability report: A distributed strategy for solving combinatorial optimization problems with hypergraph neural networks. Nat Mach Intell 7, 1870–1878 (2025). https://doi.org/10.1038/s42256-025-01141-4

DOI: https://doi.org/10.1038/s42256-025-01141-4