Abstract

Background

Understanding how symptoms affect daily functioning is central to improving care for childhood cancer survivors. As narrative symptom reporting becomes increasingly common in survivorship care, scalable automated tools are needed to interpret these descriptions and identify their functional impact. This study evaluates how two large language models (ChatGPT-4o, Llama-3.1) perform this task across different prompt engineering strategies.

Methods

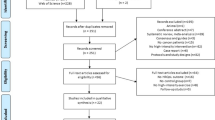

We analyzed semi-structured interviews from 30 childhood cancer survivors and their caregivers, yielding 819 pain- and fatigue-related symptom narratives. Each narrative was expert-annotated for physical, social, or cognitive functional impact, serving as the reference standard. ChatGPT-4o and Llama-3.1 were evaluated using four prompting strategies: zero-shot, few-shot, step-by-step reasoning (Chain-of-Thought), and generated knowledge. Model outputs were compared with expert annotations, and performance was quantified using standard classification and discrimination metrics with resampling-based confidence intervals.
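As a rough illustration of how the four prompting strategies differ, the sketch below assembles one prompt per strategy for a single narrative. The task wording, the `build_prompt` helper, and its parameters are hypothetical and are not the prompts used in the study; in particular, the generated-knowledge text is assumed to come from a separate, earlier model call and is simply passed in here.

```python
# Hypothetical task instruction; the study's actual prompt wording is not reproduced here.
TASK = (
    "Read the symptom narrative and state, for each of physical, social, and "
    "cognitive functioning, whether the symptom affects it (yes/no)."
)


def build_prompt(narrative, strategy, examples=(), knowledge=""):
    """Assemble a prompt for one of the four strategies named in the text."""
    parts = [TASK]
    if strategy == "zero_shot":
        pass  # task instruction only, no examples or extra guidance
    elif strategy == "few_shot":
        # Labeled example narratives precede the target narrative.
        for text, labels in examples:
            parts.append(f"Narrative: {text}\nAnswer: {labels}")
    elif strategy == "chain_of_thought":
        # Ask the model to reason step by step before answering.
        parts.append("Let's think step by step before giving the final answer.")
    elif strategy == "generated_knowledge":
        # Background knowledge produced beforehand is placed ahead of the narrative.
        parts.append(f"Background knowledge: {knowledge}")
    else:
        raise ValueError(f"unknown strategy: {strategy}")
    parts.append(f"Narrative: {narrative}\nAnswer:")
    return "\n\n".join(parts)


if __name__ == "__main__":
    demo = "My legs hurt so much that I skip soccer practice."
    print(build_prompt(demo, "chain_of_thought"))
```

Keeping the task instruction fixed and varying only the added context makes it easier to attribute performance differences to the strategy rather than to incidental wording changes.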

Results

Here, we show that prompting strategies based on generated knowledge and step-by-step reasoning consistently outperform zero-shot and few-shot prompting across both models. Overall, these strategies produce the most accurate and stable classification of physical, social, and cognitive functional impact. Specifically, ChatGPT-4o achieves more balanced precision and discrimination across the three functional domains, whereas Llama-3.1 demonstrates higher sensitivity but substantially lower precision, particularly for physical and social functioning.
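To make the precision versus sensitivity contrast concrete, the sketch below computes both metrics for one functional domain and attaches a percentile bootstrap interval. The toy labels are invented for illustration, and the percentile bootstrap is an assumption: the text says only that confidence intervals were resampling-based, without naming the procedure.

```python
import random


def precision_sensitivity(y_true, y_pred):
    """Return (precision, sensitivity) for binary labels coded 0/1."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else float("nan")
    sensitivity = tp / (tp + fn) if tp + fn else float("nan")
    return precision, sensitivity


def bootstrap_ci(y_true, y_pred, metric_index, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap interval (an assumed method) for one metric.

    metric_index: 0 for precision, 1 for sensitivity.
    """
    rng = random.Random(seed)
    n = len(y_true)
    stats = []
    for _ in range(n_boot):
        # Resample narratives with replacement, keeping label pairs together.
        idx = [rng.randrange(n) for _ in range(n)]
        value = precision_sensitivity(
            [y_true[i] for i in idx], [y_pred[i] for i in idx]
        )[metric_index]
        if value == value:  # drop resamples where the metric is undefined (NaN)
            stats.append(value)
    stats.sort()
    lower = stats[int((alpha / 2) * len(stats))]
    upper = stats[min(len(stats) - 1, int((1 - alpha / 2) * len(stats)))]
    return lower, upper


if __name__ == "__main__":
    # Invented toy labels: every true positive is found, but two negatives are over-called.
    expert = [1, 1, 1, 0, 0, 0, 0, 0]
    model = [1, 1, 1, 1, 1, 0, 0, 0]
    print(precision_sensitivity(expert, model))
    print(bootstrap_ci(expert, model, metric_index=0))
```

In the toy data the model flags every narrative the expert flagged (sensitivity 1.0) but also flags two that the expert did not (precision 0.6), which is the pattern of high sensitivity with lower precision described above.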

Conclusions

Prompt engineering improves how large language models interpret survivor-reported pain and fatigue. These findings support the use of carefully designed prompts to enable automated, context-aware analysis of symptom narratives, providing a scalable approach to support symptom monitoring and survivor-centered care.

Plain language summary

Many children who survive cancer continue to have pain and tiredness that affect how they move, think, and take part in daily life. These experiences are often recorded in interviews or written notes, which are hard for doctors to review quickly. This study tested whether two computer programs could aid in interpreting these symptom narratives. We provided the programs with different types of instructions and asked them to determine how pain and fatigue affected physical, social, and thinking activities. We found that instructions that included background information or step-by-step thinking led to more accurate results. These tools could help doctors better track symptoms, identify problems earlier, and provide more personalized care for childhood cancer survivors.

Data availability

Source data underlying Table 1 are in Supplementary Table 1; source data underlying Tables 2–3 are in Supplementary Data parts 3 and 4. These source data are available on Zenodo (https://zenodo.org/records/18526848; ref. 53). These public datasets enable reproduction of the reported tables but do not include unstructured symptom narratives. The full analytic datasets used for model training and statistical analyses, including unstructured symptom narratives, may contain protected health information (PHI) and therefore cannot be made publicly available. These data are stored within secure St. Jude data systems and are available to qualified researchers upon reasonable request, subject to institutional review and execution of a data use agreement (DUA). Requests for access to restricted analytic data should be directed to the corresponding author, who will coordinate access in accordance with St. Jude data-governance policies.

References

Ohlsen, T., Martos, M. & Hawkins, D. Recent advances in the treatment of childhood cancers. Curr. Opin. Pediatr. 36, 57–63 (2023).

Siegel, R. L., Miller, K. D., Wagle, N. S. & Jemal, A. Cancer statistics, 2023. CA Cancer J. Clin. 73, 17–48 (2023).

Miller, K. D. et al. Cancer treatment and survivorship statistics, 2022. CA Cancer J. Clin. 72, 409–436 (2022).

Armstrong, G. T. et al. Reduction in late mortality among 5-year survivors of childhood cancer. N. Engl. J. Med. 374, 833–842 (2016).

Hudson, M. M. et al. Clinical ascertainment of health outcomes among adults treated for childhood cancer. JAMA 309, 2371–2381 (2013).

Bhakta, N. et al. The cumulative burden of surviving childhood cancer: an initial report from the St Jude Lifetime Cohort Study (SJLIFE). Lancet 390, 2569–2582 (2017).

Shin, H. et al. Associations of symptom clusters and health outcomes in adult survivors of childhood cancer: a report from the St Jude Lifetime Cohort Study. J. Clin. Oncol. 41, 497–507 (2023).

Horan, M. R. et al. Multilevel characteristics of cumulative symptom burden in young survivors of childhood cancer. JAMA Netw. Open 7, e2410145 (2024).

Molcho, M., D’Eath, M., Alforque Thomas, A. & Sharp, L. Educational attainment of childhood cancer survivors: a systematic review. Cancer Med. 8, 3182–3195 (2019).

Leahy, A. B. & Steineck, A. Patient-reported outcomes in pediatric oncology: the patient voice as a gold standard. JAMA Pediatr. 174, e202868 (2020).

Basch, E., Leahy, A. B. & Reeve, B. B. Symptom monitoring with patient-reported outcomes during pediatric cancer care. JAMA 332, 1979–1980 (2024).

Aiyegbusi, O. L. et al. Recommendations to address respondent burden associated with patient-reported outcome assessment. Nat. Med. 30, 650–659 (2024).

Minvielle, E., di Palma, M., Mir, O. & Scotté, F. The use of patient-reported outcomes (PROs) in cancer care: a realistic strategy. Ann. Oncol. 33, 357–359 (2022).

Lu, Z. et al. Natural language processing and machine learning methods to characterize unstructured patient-reported outcomes: validation study. J. Med. Internet Res. 23, e26777 (2021).

Sezgin, E., Hussain, S. A., Rust, S. & Huang, Y. Extracting medical information from free-text and unstructured patient-generated health data using natural language processing methods: feasibility study with real-world data. JMIR Form. Res. 7, e43014 (2023).

Castro, A., Pinto, J., Reino, L., Pipek, P. & Capinha, C. Large language models overcome the challenges of unstructured text data in ecology. Ecol. Inf. 82, 102742 (2024).

Devlin, J., Chang, M.-W., Lee, K. & Toutanova, K. BERT: Pre-training of deep bidirectional transformers for language understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies 4171–4186 (Association for Computational Linguistics, 2019).

Yang, X. et al. Multiple large language models versus experienced physicians in diagnosing challenging cases with gastrointestinal symptoms. NPJ Digit. Med. 8, 85 (2025).

Singhal, K. et al. Large language models encode clinical knowledge. Nature 620, 172–180 (2023).

Hadi, A., Tran, E., Nagarajan, B. & Kirpalani, A. Evaluation of ChatGPT as a diagnostic tool for medical learners and clinicians. PLoS ONE 19, e0307383 (2024).

Liévin, V., Hother, C. E., Motzfeldt, A. G. & Winther, O. Can large language models reason about medical questions? Patterns 5, 100943 (2024).

Wei, W. I. et al. Extracting symptoms from free-text responses using ChatGPT among COVID-19 cases in Hong Kong. Clin. Microbiol. Infect. 30, 142.e141–142.e143 (2024).

Nazi, Z. A., Hossain, M. R. & Mamun, F. A. Evaluation of open and closed-source LLMs for low-resource language with zero-shot, few-shot, and chain-of-thought prompting. Nat. Lang. Proc. J. 10, 100124 (2025).

Sim, J. A. et al. Natural language processing with machine learning methods to analyze unstructured patient-reported outcomes derived from electronic health records: a systematic review. Artif. Intell. Med. 146, 102701 (2023).

Sim, J. A., Huang, X., Horan, M. R., Baker, J. N. & Huang, I. C. Using natural language processing to analyze unstructured patient-reported outcomes data derived from electronic health records for cancer populations: a systematic review. Expert Rev. Pharmacoecon. Outcomes Res. 24, 467–475 (2024).

Cho, S. et al. Leveraging large language models for improved understanding of communications with patients with cancer in a call center setting: proof-of-concept study. J. Med. Internet Res. 26, e63892 (2024).

Gupta, G. K., Singh, A., Manikandan, S. V. & Ehtesham, A. Digital diagnostics: the potential of large language models in recognizing symptoms of common illnesses. AI 6, 13 (2025).

Huang, X. et al. Evaluating the performance of ChatGPT in clinical pharmacy: a comparative study of ChatGPT and clinical pharmacists. Br. J. Clin. Pharm. 90, 232–238 (2024).

Han, C. et al. Evaluation of GPT-4 for 10-year cardiovascular risk prediction: insights from the UK Biobank and KoGES data. iScience 27, 109022 (2024).

Goh, E. et al. Large language model influence on diagnostic reasoning: a randomized clinical trial. JAMA Netw. Open 7, e2440969 (2024).

Rydzewski, N. R. et al. Comparative evaluation of LLMs in clinical oncology. NEJM AI 1, 1–14 (2024).

Hirosawa, T. et al. ChatGPT-generated differential diagnosis lists for complex case-derived clinical vignettes: diagnostic accuracy evaluation. JMIR Med. Inf. 11, e48808 (2023).

Chen, A., Chen, D. O. & Tian, L. Benchmarking the symptom-checking capabilities of ChatGPT for a broad range of diseases. J. Am. Med. Inf. Assoc. 31, 2084–2088 (2024).

Forrest, C. B. et al. Self-reported health outcomes of children and youth with 10 chronic diseases. J. Pediatr. 246, 207–212 (2022).

Pierzynski, J. A. et al. Patient-reported outcomes in paediatric cancer survivorship: a qualitative study to elicit the content from cancer survivors and caregivers. BMJ Open 10, e032414 (2020).

Forrest, C. B. et al. Establishing the content validity of PROMIS Pediatric pain interference, fatigue, sleep disturbance, and sleep-related impairment measures in children with chronic kidney disease and Crohn’s disease. J. Patient Rep. Outcomes 4, 11 (2020).

Varni, J. W. et al. PROMIS Pediatric Pain Interference Scale: an item response theory analysis of the pediatric pain item bank. J. Pain 11, 1109–1119 (2010).

Lai, J. S. et al. Development and psychometric properties of the PROMIS® pediatric fatigue item banks. Qual. Life Res. 22, 2417–2427 (2013).

Hahn, E. A. et al. Precision of health-related quality-of-life data compared with other clinical measures. Mayo Clin. Proc. 82, 1244–1254 (2007).

Kojima, T., Gu, S. S., Reid, M., Matsuo, Y. & Iwasawa, Y. Large language models are zero-shot reasoners. In Proceedings of the 36th International Conference on Neural Information Processing Systems 22199–22213 (NeurIPS, 2022).

Wei, J. et al. Chain-of-thought prompting elicits reasoning in large language models. In Proceedings of the 36th International Conference on Neural Information Processing Systems 24824–24837 (NeurIPS, 2022).

Liu, J. et al. Generated knowledge prompting for commonsense reasoning. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics 3154–3169 (Association for Computational Linguistics, 2022).

Zelikman, E., Wu, Y., Mu, J. & Goodman, N. Shape of thought: When distribution matters more than correctness in reasoning tasks. In Proceedings of the 36th International Conference on Neural Information Processing Systems 15476–15488 (NeurIPS, 2022).

Kakaday, R., Herrera, E. Z., Coskey, O., Hertel, A. W. & Kaiser, P. The STREAMLINE Pilot—study on time reduction and efficiency in AI-mediated logging for improved note-taking experience. Appl. Clin. Inf. 16, 614–621 (2025).

Wornow, M. et al. The shaky foundations of large language models and foundation models for electronic health records. NPJ Digit. Med. 6, 135 (2023).

Ji, Z. et al. Survey of hallucination in natural language generation. ACM Comput. Surv. 55, 1–38 (2023).

Lewis, P. et al. Retrieval-augmented generation for knowledge-intensive NLP tasks. In Proceedings of the 34th International Conference on Neural Information Processing Systems 9459–9474 (NeurIPS, 2020).

Li, M., Kilicoglu, H., Xu, H. & Zhang, R. BiomedRAG: a retrieval augmented large language model for biomedicine. J. Biomed. Inf. 162, 104769 (2025).

Liu, P. et al. Pre-train, prompt, and predict: a systematic survey of prompting methods in natural language processing. ACM Comput. Surv. 55, 1–35 (2023).

Xu, D. et al. Editing factual knowledge and explanatory ability of medical large language models. In Proceedings of the 33rd ACM International Conference on Information and Knowledge Management 2660–2670 (Association for Computing Machinery, 2024).

Rajkomar, A., Hardt, M., Howell, M. D., Corrado, G. & Chin, M. H. Ensuring fairness in machine learning to advance health equity. Ann. Intern. Med. 169, 866–872 (2018).

Mehrabi, N., Morstatter, F., Saxena, N., Lerman, K. & Galstyan, A. A survey on bias and fairness in machine learning. ACM Comput. Surv. 54, 1–35 (2021).

Source data from: Optimizing prompting strategies improves large language model classification of pain- and fatigue-related functional impact in childhood cancer survivors. Zenodo https://doi.org/10.5281/zenodo.18526848 (2026).

Acknowledgements

The research reported in this manuscript was supported by the U.S. National Cancer Institute (NCI) under award numbers NCI U01CA195547, NCI R21CA202210, NCI R01CA238368, and NCI R01CA258193. This research was also supported by a grant from the National Research Foundation of Korea (NRF), funded by the Korean government (no. 2022R1C1C1009902). The content is solely the responsibility of the authors and does not represent the official views of the funding agencies. Support for St. Jude Children’s Research Hospital was also provided by the Cancer Center Support (CORE) grant (P30 CA21765, C. Roberts, Principal Investigator) and the American Lebanese-Syrian Associated Charities (ALSAC). In addition, the authors thank Rachel M. Keesey and Ruth J. Eliason for conducting in-depth interviews with study participants; Jennifer L. Clegg and Conor M. Jones, MD, for annotating symptom data from the interviews; and Christopher B. Forrest, MD, PhD, for adjudicating discrepancies in the annotation of symptom data.

Author information

Contributions

Conceptualization: J.A.S. and I.C.H.; Funding acquisition: J.A.S., K.K.N., M.M.H., and I.C.H.; Methodology: J.A.S., X.H., and I.C.H.; Data analysis: J.A.S. and M.K.; Interpretation of data: all co-authors; Project administration: I.C.H.; Resources: K.K.N., M.M.H., J.N.B., and I.C.H.; Supervision: I.C.H.; Writing: J.A.S., M.R.H., and I.C.H.; Review and editing: all co-authors. All authors have read and agreed to the submitted version of the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Communications Medicine thanks the anonymous reviewers for their contribution to the peer review of this work. A peer review file is available.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Sim, Ja., Horan, M.R., Huang, X. et al. Optimizing prompting strategies improves large language model classification of pain- and fatigue-related functional impact in childhood cancer survivors. Commun Med (2026). https://doi.org/10.1038/s43856-026-01499-5

DOI: https://doi.org/10.1038/s43856-026-01499-5