Abstract

The proliferation of distributed energy resources introduces multi-source uncertainties, including implicit uncertainties arising from third-party operators’ partial observability of security constraints. These uncertainties challenge traditional distribution network planning methods, which depend on model simplification and predefined scenarios. We address this gap with an adaptive hierarchical learning architecture that co-optimizes the location, capacity, and operational strategies of distributed energy resources in a data-driven manner, enabling implicit constraints to be learned autonomously without full model knowledge. Our framework embeds a bi-level Stackelberg structure in which Monte Carlo Tree Search autonomously generates planning schemes at the upper level, while multi-agent reinforcement learning learns operational policies directly from real-time data under partial observability at the lower level. Validation on both a benchmark and a large-scale practical distribution system shows lower investment costs and faster solutions while maintaining voltage stability, demonstrating superior scalability and adaptiveness to implicit uncertainties compared with scenario-based methods.


Introduction

The growing integration of distributed energy resources (DERs), such as renewable sources (e.g., photovoltaic (PV) generation), energy storage systems (ESSs), and reactive power sources (e.g., static var compensators (SVCs) and capacitor banks (CBs)), enhances the flexibility and sustainability of the active distribution network (ADN)1. However, the compounded variability and unpredictability of multiple DERs across geographically dispersed nodes can accumulate into multi-source uncertainties2. Moreover, from the perspective of third-party operators (TPOs), such as DER owners or virtual power plants, these uncertainties are exacerbated by limited visibility of the network: information such as the topology and security constraints is often inaccessible due to privacy or regulatory barriers3. This introduces an implicit uncertainty: the relationship between operational strategies and security constraints must be learned from data, without relying on predefined models or scenarios4. Existing scenario-based methods fail to capture this implicit uncertainty because they assume complete model knowledge3,5. These uncertainties critically challenge cooperative planning6 and operation7 because they accumulate spatially and temporally, complicating system-wide decision-making. Moreover, the tight coupling between planning and operation calls for the strategic co-optimization of DER type, location, capacity and scheduling to achieve holistic performance8.

Existing studies on DER planning in ADNs face three key limitations: (1) expansion models incorporating DERs, e.g., EV charging stations9, rely on a limited number of stochastic scenarios (e.g., five scenarios); (2) although forecasting charging demand with diffusion models improves realism10, hourly simulation timesteps inadequately capture dynamic power system behavior9,10,11,12, which is important for describing operating conditions in DER planning; and (3) bi-level cooperative models13 address uncertainties through probabilistic scenarios but require conversion to single-level problems14 or mixed-integer linear programs15, imposing computational burdens.

The reviewed literature reveals two fundamental limitations of current solutions. (1) Model dependency. The planning problem is generally formulated as a bi-level model coupling investment and scheduling strategies16. To solve it, the model is either transformed or simplified into a single-level model that mathematical optimizers can solve directly, or optimized iteratively with heuristic methods. However, these model-based approaches depend heavily on accurate forecasts of input data17, making it difficult to address multi-source uncertainties without excessive simplification. (2) Scenario rigidity. Optimization at the operating level requires a predefined scenario set, which is problematic for long-term planning. In practice, DER planning in ADNs spans extended time horizons: a limited number of predefined scenarios may fail to represent the range of possible conditions, while enlarging the scenario set to improve representativeness imposes substantial computational burdens. This trade-off between computational efficiency and reliability is unavoidable, particularly for complicated combinatorial optimization problems18. Moreover, while prior works address uncertainties via probabilistic scenarios or robust optimization, they presuppose that the full distribution network model is accessible. In contrast, our work tackles implicit uncertainties in which operators must learn security constraints from limited observations, enabling decision-making under partial observability.

As a milestone in the development of artificial intelligence, reinforcement learning (RL) can parameterize the latent relationships underlying uncertainty through interactive learning19. In recent years, RL applications to planning and operation problems, such as voltage regulation20, optimal operation21 and maintenance scheduling22, have received increasing attention. In some planning studies, however, the interaction between planning and operation is decoupled23: although planning-level performance is satisfactory, the operating-level optimization model is still transformed, e.g., by second-order cone relaxation11, which increases the number of variables. In short, although RL methods parameterize uncertainty through interactive learning, existing implementations either decouple planning-operation interactions, retaining operation-level optimizers that increase the number of variables, or fix DER locations and capacities during RL training, which restricts adaptability.

While progress has been made in DER planning under uncertainty through model-based optimization, coupling planning with operation under partial observability and implicit uncertainties remains underexplored. Three gaps stand out. First, multi-source uncertainties are handled inadequately because only limited scenarios are generated when sizing and deploying DERs at scale in ADNs. Second, bi-level optimization still relies on model transformation, even though RL has advanced at the single planning or operation level. Third, from a TPO perspective, where the topology of the distribution network is inaccessible, the operator cannot determine whether the security constraints of the distribution network are satisfied when formulating operational strategies with incomplete information.

Although existing optimization-based approaches handle uncertainties effectively under full model knowledge, they assume that operators have complete access to the topology and security constraints of the distribution network. For example, an adaptive robust expansion planning model employs a tri-level decomposition algorithm to address uncertainties in loads and wind power outputs, using polyhedral uncertainty sets and dual cutting planes24. Among bi-level models that coordinate planning and operation in distribution networks, a robust coordinated expansion planning framework has been introduced for deregulated retail markets, incorporating demand response and dynamic pricing through a double-nested game model25, and a bi-level expansion planning framework coordinates network and DER owners’ assets using Karush–Kuhn–Tucker conditions and strong duality theory15. In addition, distribution system expansion has been explored considering non-utility-owned distributed generation (DG) and an independent system operator, using stochastic programming with scenario generation26. These methods rely on mathematical optimization techniques, such as scenario-based stochastic programming or robust optimization, which often require predefined uncertainty scenarios and model simplifications and can be computationally intensive for large-scale systems. While effective in idealized settings, they may struggle in practice when TPOs lack complete grid information or face implicit uncertainties, such as the correlation between operational strategies and security constraints.

However, TPOs such as DER owners often face partial observability due to privacy or regulatory barriers, which leads to implicit uncertainties: the relationship between operational strategies and security constraints must be learned from data without predefined models. Traditional methods that rely on scenario generation or model simplification do not address this gap.

We bridge these gaps with a learning-based cooperative planning approach that simultaneously determines the location, capacity, and optimal operational strategy of DERs and operates under partial observability, enabling adaptive decision-making without model assumptions.

In the proposed bi-level decision-making framework, the distribution system operator (DSO) makes investment decisions at each investment step and communicates the resulting planning state to the lower level. The DER owners under that planning state independently make operational decisions under the supervision of the DSO and relay their operating strategies back to the upper level. The Monte Carlo tree search (MCTS) algorithm is embedded with multi-agent deep reinforcement learning (MADRL) to address the multi-source uncertainties in the planning and operating decision-making process.

The contributions of this paper are summarized as follows.

1) A learning-based bi-level Stackelberg planning framework is proposed for the simultaneous planning and operation of DERs in ADNs. The conventional planning model is reformulated into a learnable structure without simplification or transformation, ensuring real-time adaptability in both planning and operational decision-making under a multi-source uncertainty environment.

2) A tree-search-based method for learning and generating optimal planning strategies is proposed to address the combinatorial explosion of the planning solution space. The solution space is explored and compressed adaptively, enabling efficient and scalable optimization without predefined or fixed solution paths, thereby reducing computational complexity while maintaining performance.

3) This method eliminates the need for predefined scenario-based models, allowing the TPOs to dynamically adapt to changes in the network and providing more accurate operational guidance for the optimal planning.

Results

A bi-level planning-operation framework

A bi-level planning-operation framework is proposed for the coordinated, simultaneous siting and sizing of DERs in the ADN. A model-free solution method combining MCTS with the multi-agent twin delayed deep deterministic policy gradient (MATD3) algorithm copes with multi-source uncertainty and the curse of dimensionality through adaptive learning.

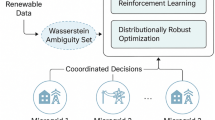

Our framework adopts a Stackelberg game structure (Fig. 1), in which the upper planning layer acts as the leader and determines investment strategies at each timescale, while the lower operating layer acts as the follower, generating optimal scheduling policies and feeding the corresponding operational costs back to the upper level. At the upper level, the DSO optimizes DER locations and capacities to minimize total costs, comprising investment, maintenance, and operational expenditures. Because the performance of an investment strategy varies across schemes, the lower level performs two core functions: (1) cooperative operational optimization under a given investment strategy, and (2) evaluation of that strategy via operational cost feedback. This establishes a persistent bidirectional interplay: upper-level planning dictates lower-level operational scenarios, while lower-level optimization results directly guide the generation of planning schemes. Within this architecture, the integration of MCTS and MATD3 enables autonomous generation of optimal planning schemes aligned with grid supply-demand dynamics. The framework also scales well: boundary values for planned DERs can be adjusted flexibly without full retraining, an advantage over traditional methods. The hierarchy serves two critical functions:

1) Bidirectional feedback: the upper level dictates investment strategies, which define the operational scenarios for the lower level. In return, the lower level provides operational cost feedback to evaluate and guide planning decisions. This closed-loop interaction ensures that planning schemes are dynamically adjusted based on real-time grid conditions, overcoming the scalability limits of scenario-based methods.

2) Uncertainty adaptation: by decoupling planning and operation into distinct tiers, the hierarchy allows each level to focus on its timescale-specific uncertainties. For instance, MCTS compresses the solution space combinatorially, while MATD3 handles stochastic demand and generation variations. This separation reduces computational complexity while maintaining voltage stability.

Fig. 1 depicts the adaptive hierarchical learning framework, featuring a bi-level Stackelberg structure in which the upper level employs MCTS for planning and the lower level uses multi-agent reinforcement learning for operational optimization under partial observability.
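The leader-follower loop described above can be illustrated with a toy sketch. It is not the authors' implementation and rests on loudly stated assumptions: a one-step greedy search stands in for MCTS at the upper level, and a hypothetical closed-form cost model (`follower_cost`, with made-up `UNIT_COST` and `BENEFIT` tables) stands in for the operating cost that trained MATD3 agents would return. It only shows the bidirectional feedback, in which every candidate plan is scored by querying the follower.

```python
"""Toy leader-follower (Stackelberg) planning loop; all numbers are hypothetical."""
from typing import Dict, FrozenSet, List, Tuple

Action = Tuple[int, str]          # (candidate node, DER type)
Plan = FrozenSet[Action]

UNIT_COST: Dict[str, float] = {"ESS": 4.0, "CB": 1.0, "SVC": 3.0}  # investment + maintenance
BENEFIT: Dict[Action, float] = {   # hypothetical operating-cost saving per (node, type)
    (22, "ESS"): 9.0, (27, "CB"): 6.5, (27, "SVC"): 5.0, (10, "CB"): 0.5,
}
BASE_OPERATING_COST = 50.0


def follower_cost(plan: Plan) -> float:
    """Follower: operating cost under a plan (stand-in for trained MATD3 agents)."""
    return BASE_OPERATING_COST - sum(BENEFIT.get(a, 0.0) for a in plan)


def leader_cost(plan: Plan) -> float:
    """Leader objective: its own investment/maintenance cost plus the follower's feedback."""
    return sum(UNIT_COST[y] for _, y in plan) + follower_cost(plan)


def plan_greedily(max_steps: int) -> Tuple[Plan, float]:
    """Add one DER per planning step while the leader's total cost keeps falling."""
    plan: Plan = frozenset()
    best = leader_cost(plan)
    for _ in range(max_steps):
        options: List[Plan] = [plan | {a} for a in BENEFIT if a not in plan]
        if not options:
            break
        candidate = min(options, key=leader_cost)  # each option is scored via the follower
        cost = leader_cost(candidate)
        if cost >= best:                           # stop when no addition lowers total cost
            break
        plan, best = candidate, cost
    return plan, best
```

With these toy numbers, `plan_greedily(4)` adds the CB at node 27, then the ESS at node 22, then the SVC at node 27, and stops at a total cost of 37.5 because adding the CB at node 10 would raise the total. MCTS differs from this greedy stand-in by also exploring actions that look worse in the short term.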

Benchmark-scale distribution system

We validate our framework on a 12.66 kV, 33-node benchmark distribution network (Supplementary Fig. 1) comprising 32 load points supplied by a main substation (node #1) and 6 PV farms (nodes #9, #14, #19, #21, #23, #29). Based on the geographic distribution of nodes, the system is partitioned into 6 control zones, enabling localized resource management. The load demand data27 and PV generation profiles28 provide real-time consumption (32 users) and solar outputs (6 regions). We partition the datasets into a two-year training set and a one-year testing set, normalized to system capacity at 15-min resolution. Figure 2 illustrates the median and interquartile range (25–75%) of the active power profiles. Reactive demand is modeled by applying ±10% random perturbations to default power factors.
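The preprocessing in this paragraph can be sketched as follows. The paper does not state exactly how the ±10% perturbation is applied, so this sketch assumes it scales the default power factor (capped at unity) and derives reactive demand as \(Q = P\tan(\arccos \mathrm{pf})\); the function names and the capacity value are hypothetical.

```python
import math
import random
from typing import List, Sequence

STEPS_PER_DAY = 24 * 60 // 15  # 15-min resolution gives 96 samples per day


def normalize(profile: Sequence[float], capacity: float) -> List[float]:
    """Scale a power profile to per-unit of an (assumed) system capacity."""
    return [p / capacity for p in profile]


def reactive_demand(p_active: Sequence[float], base_pf: float,
                    rng: random.Random, spread: float = 0.10) -> List[float]:
    """Reactive demand from active demand with a randomly perturbed power factor.

    Assumption: the +/-10% perturbation scales the default power factor,
    and the result is capped at 1.0 because a power factor cannot exceed unity.
    """
    q = []
    for p in p_active:
        pf = min(1.0, base_pf * rng.uniform(1 - spread, 1 + spread))
        q.append(p * math.tan(math.acos(pf)))  # Q = P * tan(arccos(pf))
    return q


# Usage with hypothetical values: two 15-min samples of a 0.9-pf load.
rng = random.Random(42)
p_pu = normalize([1.2, 0.8], capacity=4.0)
q_pu = reactive_demand(p_pu, base_pf=0.9, rng=rng)
```

Seeding a dedicated `random.Random` instance keeps the perturbed reactive profiles reproducible across training runs.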

The solid lines represent the expected mean profiles of active power for load demand and PV generation over a 24-h period. The translucent shaded bands surrounding each line depict the associated uncertainty intervals.

Candidate DERs, namely ESSs, gas turbines (Gas), SVCs, and CBs, are deployable at all load nodes. Technical specifications, cost parameters, and algorithm hyperparameters for MCTS and MATD3 (following machine learning best practices29,30) are listed together in Supplementary Table 1 to support reproducibility.

The upper-level planning runs for at most 9 episodes, selecting one strategy per episode until convergence or until the episode limit is reached. For lower-level training, we generate 360 initial planning schemes via MCTS, which are updated every 192 timesteps (two operating episodes) to adapt to evolving grid conditions. The actor and critic networks share identical architectures: one input layer, three hidden layers (256 neurons each), and one output layer. Parameter sharing across agents accelerates training convergence, and gated recurrent units in the actor networks improve the handling of partial observability. We further stabilize training by updating the actor networks half as frequently as the critic networks.
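The lower-level update schedule can be illustrated with a counter-only sketch. It assumes 96 timesteps per operating episode (one day at 15-min resolution, consistent with 192 timesteps being two episodes) and one critic update per environment step; updating the actor once per two critic updates is the delayed policy update used by TD3 and hence MATD3. The constant names are hypothetical and no networks are trained here.

```python
from dataclasses import dataclass

STEPS_PER_EPISODE = 96                     # assumed: one day at 15-min resolution
SCHEME_REFRESH = 2 * STEPS_PER_EPISODE     # 192 timesteps = two operating episodes
POLICY_DELAY = 2                           # actor updated once per two critic updates


@dataclass
class UpdateCounts:
    critic: int = 0
    actor: int = 0
    scheme_refreshes: int = 0


def run_schedule(total_steps: int) -> UpdateCounts:
    """Count critic, delayed actor, and planning-scheme updates over a run."""
    counts = UpdateCounts()
    for step in range(1, total_steps + 1):
        counts.critic += 1                 # twin critics updated every step (assumed)
        if step % POLICY_DELAY == 0:
            counts.actor += 1              # delayed actor and target-network update
        if step % SCHEME_REFRESH == 0:
            counts.scheme_refreshes += 1   # refresh the MCTS-generated scheme pool
    return counts
```

Over four operating episodes (384 steps), this schedule yields 384 critic updates, 192 actor updates and 2 refreshes of the planning-scheme pool.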

Planning scheme formulation

In the upper level, the priority of each planning state in the search tree is determined by the value obtained through simulation, guiding the investment strategy toward better performance. Figure 3 shows the probability distribution over planning strategies in three training episodes; the evolving shading of the squares visualizes the knowledge accumulation process. In training episode #1 (Fig. 3a), the selection probabilities range only from 0.004 to 0.007, i.e., they are approximately uniform, indicating a pure exploration phase in which the algorithm assesses all possible actions without prior knowledge or bias. By training episode #5 (Fig. 3b), a distinct preference for specific strategies (e.g., investing in a CB at node 27) gradually emerges, marking the transition from exploration to exploitation: the search begins to focus on more promising strategies based on the knowledge accumulated during the exploration stage. This trend is further accentuated in Fig. 3c, where the bias toward investing in a CB at node 27 is more pronounced (its probability reaches 0.33) and a nascent preference for an ESS at node 22 is also observable. This evolution underscores the adaptive learning capability of the proposed method, which converges on optimal strategies through iterative learning.
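The text states that planning-state priority comes from simulated values but does not give the selection rule. A common choice, assumed here, is the UCT score (UCB1 applied to trees), with the selection probabilities plotted in Fig. 3 approximated by normalized visit counts; the sketch below shows both. Values should be rewards (e.g., negative costs), and the exploration constant `c` and the `temperature` parameter are illustrative.

```python
import math
from typing import Dict, Hashable, Tuple

Stats = Dict[Hashable, Tuple[float, int]]  # action -> (total value, visit count)


def uct_score(total_value: float, visits: int, parent_visits: int, c: float = 1.41) -> float:
    """UCB1 applied to trees: mean value plus an exploration bonus."""
    if visits == 0:
        return math.inf                    # try every action at least once
    exploit = total_value / visits
    explore = c * math.sqrt(math.log(parent_visits) / visits)
    return exploit + explore


def select_action(stats: Stats) -> Hashable:
    """Pick the (node, resource) action with the highest UCT score."""
    parent = max(1, sum(n for _, n in stats.values()))
    return max(stats, key=lambda a: uct_score(stats[a][0], stats[a][1], parent))


def visit_distribution(stats: Stats, temperature: float = 1.0) -> Dict[Hashable, float]:
    """Selection probabilities proportional to visits**(1/temperature)."""
    weights = {a: n ** (1.0 / temperature) for a, (_, n) in stats.items()}
    z = sum(weights.values())
    return {a: w / z for a, w in weights.items()}
```

Uniform visit counts give a uniform distribution, as in Fig. 3a; as visits concentrate on a pair such as `(27, "CB")`, its probability grows, mirroring Fig. 3b,c.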

The x-axis represents the candidate nodes, and the y-axis represents the candidate resources. a Probability distribution in episode #1. b Probability distribution in episode #5. c Probability distribution in episode #9.

In the lower level, the convergence of the reward obtained by the agents is an important metric for evaluating the training performance of the MADRL algorithm. Figure 4 shows the average reward over each block of 40 episodes across 3 independent runs with different random seeds. Training exhibits two stages, learning and convergence. Before episode 1200, the curve is volatile and the reward relatively low, reflecting the exploratory nature of the learning stage. As training progresses, the average reward increases notably and finally converges to a stable value, suggesting that the agents learn an optimal operating strategy under multi-source uncertainty through interaction with the environment.
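The smoothing behind Fig. 4 (means over consecutive 40-episode blocks, aggregated across seeds) can be sketched with the standard library. The caption leaves open whether the band is a standard deviation or a confidence interval; this sketch assumes the population standard deviation across runs and drops incomplete trailing blocks.

```python
from statistics import mean, pstdev
from typing import List, Sequence, Tuple


def block_means(rewards: Sequence[float], window: int = 40) -> List[float]:
    """Mean of each complete, non-overlapping block of `window` episodes."""
    return [mean(rewards[i:i + window])
            for i in range(0, len(rewards) - window + 1, window)]


def aggregate_runs(runs: Sequence[Sequence[float]], window: int = 40) -> List[Tuple[float, float]]:
    """(mean, population std) across seeds for each block of episodes."""
    per_run = [block_means(r, window) for r in runs]
    return [(mean(col), pstdev(col)) for col in zip(*per_run)]
```

Each returned tuple gives one point of the solid line (the mean) and the half-width of the shaded band (the spread across seeds).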

The solid blue line signifies the mean average reward achieved during training, while the surrounding light blue band denotes the standard deviation or confidence interval, reflecting the variability and stability of the learning process across multiple trials.

Cost-benefit and operation performance

To highlight the advantages of the proposed learning-based approach (case I) over previous optimization-based works, two additional test cases are designed for comparison. In case II, the bi-level model is converted into a single-level model through parameter correlation11 and, after linearization, solved with a mathematical optimizer; as in several previous works, the iteration between the planning and operating levels is disregarded, and the problem is modeled as a single linear program. In case III, the planning level is similar to that of the proposed approach, but the optimization and evaluation of each planning state still rely on the optimizer. The same training dataset is used for all test cases, and the K-Medoids31 method is applied to generate typical scenario sets that reduce the complexity of the optimization-based models.
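Typical-scenario selection with K-Medoids can be sketched as below. This is the simple alternating (Voronoi-iteration) variant with deterministic farthest-point initialization, not necessarily the algorithm of ref. 31; it assumes daily profiles are equal-length numeric sequences compared by Euclidean distance and that at least `k` profiles are distinct. The returned weights (cluster shares) are the usual way to assign probabilities to the typical scenarios.

```python
import math
from typing import Dict, List, Sequence, Tuple

Profile = Sequence[float]


def _assign(profiles: List[Profile], medoids: List[int]) -> Dict[int, List[int]]:
    """Assign every profile to its nearest medoid (Euclidean distance)."""
    clusters: Dict[int, List[int]] = {m: [] for m in medoids}
    for i, p in enumerate(profiles):
        nearest = min(medoids, key=lambda m: math.dist(p, profiles[m]))
        clusters[nearest].append(i)
    return clusters


def k_medoids(profiles: List[Profile], k: int, max_iter: int = 100) -> Tuple[List[int], List[float]]:
    """Alternating k-medoids; returns medoid indices and scenario weights."""
    n = len(profiles)
    medoids = [0]                                  # deterministic farthest-point init
    while len(medoids) < k:
        medoids.append(max(range(n), key=lambda i: min(
            math.dist(profiles[i], profiles[m]) for m in medoids)))
    for _ in range(max_iter):
        clusters = _assign(profiles, medoids)
        # Each new medoid minimises the summed distance to its cluster members.
        new = [min(members, key=lambda c: sum(math.dist(profiles[c], profiles[j])
                                              for j in members))
               for members in clusters.values()]
        if sorted(new) == sorted(medoids):
            break
        medoids = new
    clusters = _assign(profiles, medoids)
    weights = [len(clusters[m]) / n for m in medoids]
    return medoids, weights
```

On two well-separated groups of three profiles, `k_medoids(profiles, 2)` returns one medoid per group, each with weight 0.5. Unlike k-means centroids, medoids are actual historical days, which keeps the typical scenarios physically realizable.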

Table 1 compares the indexes evaluating planning performance: the optimal planning scheme of each method, the total investment cost, the load curtailment (LC) and PV curtailment (PVC) over 30 days of consecutive trial operation, and the computation time needed to generate the planning scheme. The load and PV profiles used for trial operation are randomly sampled from the test set and therefore include conditions unseen during training. The investment cost in case I is about 54.14% lower than in case II and 24.69% lower than in case III. In case II, excessive investment in expensive SVCs increases the cost; in case III, all three types of resources are placed at the same node, which may exacerbate power flow imbalance. Among the three cases, the proposed method achieves the lowest LC and PVC, with an average daily LC of 0.123 MWh and PVC of 0.138 MWh over the 30 test days, owing to planning and operating strategies learned autonomously from continuous interaction over the state-action space. By contrast, optimization-based methods rely on a few representative scenarios to capture environmental variability, which limits their adaptability to multi-source uncertainty; consequently, cases II and III fall short of their expected performance when the environment deviates from the generated scenarios. Furthermore, after training is complete, the proposed method generates optimal planning and operating decisions in only 68 min, indicating high efficiency and practical feasibility for near-real-time applications.
It is worth noting that the 28.6 h of training time is deemed acceptable as the entire training process is conducted offline, ensuring the testing application is not hindered by computational demands. In summary, the effectiveness and superiority of the proposed method in obtaining cost-effective planning schemes can be demonstrated based on the numerical results.
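The curtailment and cost-reduction figures in this comparison rest on simple arithmetic at 15-min resolution, sketched below. Table 1 is not reproduced here, so any inputs are hypothetical; the sketch only shows that a daily energy is the sum of power samples times 0.25 h and that a percentage reduction is, presumably, taken relative to the baseline case.

```python
from typing import Sequence

STEP_HOURS = 0.25  # 15-min resolution


def daily_energy_mwh(power_mw: Sequence[float]) -> float:
    """Energy (MWh) of one day of 15-min power samples (MW)."""
    return sum(power_mw) * STEP_HOURS


def average_daily_energy(days: Sequence[Sequence[float]]) -> float:
    """Average daily energy over several test days, e.g. 30 days of LC or PVC."""
    return sum(daily_energy_mwh(d) for d in days) / len(days)


def relative_reduction_pct(baseline: float, proposed: float) -> float:
    """How much lower `proposed` is than `baseline`, in percent of the baseline."""
    return 100.0 * (baseline - proposed) / baseline
```

For example, a hypothetical baseline cost of 100 and a proposed cost of 45.86 give a reduction of 54.14%.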

Figure 5 illustrates the network operating cost and investment cost at each planning timescale. Figure 5a shows that although case I incurs higher investment costs than case III in the first six steps, its overall investment cost is still the lowest. In Fig. 5b, both case I and case III reduce network operating costs relative to case II, but case I achieves the lowest cost overall. This analysis highlights that the proposed method achieves optimal overall performance without requiring optimality at each individual time step, further demonstrating its effectiveness in learning cost-effective planning and operating strategies.

a The investment cost. b The operation cost.

The node voltage is selected as a key index for evaluating operational performance. The evaluation covers 30 consecutive days of trial operation, with statistical comparisons provided in Table 2. Case I performs best: it has the lowest average voltage deviation (0.16%), the smallest maximum voltage rise and drop (0.82% and 0.67%, respectively), and an out-of-control ratio of only 3.03%, with only one voltage exceedance observed over the 30-day period. This indicates that, when evaluated with a standard power flow calculator, the learning-based strategies keep voltages within the safe range (0.95–1.05 p.u.) in all but one instance, so security constraints are largely satisfied without full model knowledge. The close alignment between the learned policies and the optimizer outputs indicates the method’s robustness: it handles implicit uncertainties through data-driven adaptation and mitigates errors despite partial observability.
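The voltage indices in Table 2 are not formally defined in this section. The sketch below assumes common definitions (mean absolute deviation from 1.0 p.u., the largest rise above and drop below 1.0 p.u., and the share of node-time samples outside 0.95–1.05 p.u.), so it may not reproduce the paper's exact numbers.

```python
from typing import Dict, List

V_MIN, V_MAX = 0.95, 1.05  # safe band in p.u.


def voltage_indices(v: List[List[float]]) -> Dict[str, float]:
    """Voltage indices from v[t][n], the p.u. voltage of node n at step t.

    Assumed definitions (not taken from the paper): deviation, rise and drop
    are measured from 1.0 p.u.; the out-of-limit ratio is the share of
    node-time samples outside [V_MIN, V_MAX].
    """
    flat = [x for row in v for x in row]
    outside = [x for x in flat if not V_MIN <= x <= V_MAX]
    return {
        "avg_deviation_pct": 100 * sum(abs(x - 1.0) for x in flat) / len(flat),
        "max_rise_pct": 100 * max(0.0, max(flat) - 1.0),
        "max_drop_pct": 100 * max(0.0, 1.0 - min(flat)),
        "out_of_limit_ratio_pct": 100 * len(outside) / len(flat),
        "violations": float(len(outside)),
    }
```

In practice, `v` would be filled by running a power flow on each 15-min snapshot with the learned DER set-points applied.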

To analyze the cooperation of DERs under the optimal planning scheme, Fig. 6 shows the operating results of the lower level on a single test day; for simplicity, only hourly values are shown. The ESSs, Gas units, and CBs are all scheduled efficiently and cost-effectively. For instance, all the ESSs in Fig. 6c charge to absorb surplus power during peak PV hours (e.g., 11:00–13:00), increasing the utilization of clean energy, and discharge during peak load periods (e.g., 20:00–22:00) to supplement power shortages. In Fig. 6d, the output of the Gas units is flexibly adjusted according to the load demand to reduce load curtailment. In Fig. 6e, the CBs are operated to meet the reactive power demand as the load fluctuates. Moreover, reactive power demand beyond the capacity of the main substation can only be provided by the CBs, so the CB outputs are higher than those of the Gas units. These results further demonstrate the effectiveness of the proposed method in learning cost-effective operating strategies.
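The charge-discharge behavior in Fig. 6c relies on a storage model that is not given in this section. As an assumption, the sketch below uses a standard state-of-charge (SoC) update with charging and discharging efficiencies and projects each agent's set-point onto the feasible power and SoC limits; the `Storage` class, `step_soc` function and all parameter values are hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Storage:
    capacity_mwh: float
    p_max_mw: float
    eta_ch: float = 0.95   # charging efficiency (assumed)
    eta_dis: float = 0.95  # discharging efficiency (assumed)
    soc_min: float = 0.1
    soc_max: float = 0.9


def step_soc(ess: Storage, soc: float, p_mw: float, dt_h: float = 0.25) -> Tuple[float, float]:
    """Apply a charge (p > 0) or discharge (p < 0) set-point for one 15-min step.

    Returns the feasible power actually applied and the new SoC.
    """
    p = max(-ess.p_max_mw, min(ess.p_max_mw, p_mw))     # power rating limit
    if p >= 0:
        room = (ess.soc_max - soc) * ess.capacity_mwh    # energy that can still be stored
        p = min(p, room / (ess.eta_ch * dt_h))
        soc += ess.eta_ch * p * dt_h / ess.capacity_mwh  # losses reduce stored energy
    else:
        avail = (soc - ess.soc_min) * ess.capacity_mwh   # energy that can still be drawn
        p = max(p, -avail * ess.eta_dis / dt_h)
        soc += p * dt_h / (ess.eta_dis * ess.capacity_mwh)  # more leaves than is delivered
    return p, soc
```

Projecting infeasible set-points inside the environment, rather than penalizing them only through the reward, is one common way to keep RL actions physically valid.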

a The active power of PVs. b The active and reactive power of load. c The operating strategy of ESSs. d The operating strategy of Gas units. e The operating strategy of CBs.

Large-scale practical distribution system

In this subsection, a larger 152-node test ADN is used to further evaluate the scalability of the proposed method. The topology of the test system is shown in Supplementary Fig. 2. The system supplies an area of about 80 km² from a 110 kV substation. The network parameters, including 3 voltage levels, 10 PV farms and 6 control regions, are provided in Supplementary Table 2. The training and testing datasets are the same as those used for the benchmark system, scaled according to the actual load level. As before, ESSs, Gas units, SVCs and CBs are the candidate DERs and all load points are candidate nodes; their detailed properties32 are presented in Supplementary Table 2.

The maximum number of planning episodes in the upper level is set to 16. In the lower level, the training set consists of 720 planning schemes generated randomly with the MCTS algorithm. In addition, a fourth hidden layer is added to the actor and critic networks, with 256 neurons in each hidden layer. The remaining parameters are consistent with those of the benchmark system.

Figure 7 shows the probability distributions over planning schemes in training episodes #1, #8, and #15. The training process can be divided into three stages: initial exploration, directed exploration, and convergence. In the initial exploration stage, the probability distribution is uniform across all nodes and resources, indicating that every potential planning action is considered equally. As training progresses, the exploration is refined toward promising planning strategies, demonstrating the learning ability of the proposed method in a more complex, large distribution system. In the convergence stage, a clear preference for specific nodes and resources emerges, indicating that the planning strategy has converged efficiently.

The x-axis represents the candidate nodes, and the y-axis represents the candidate resources. a Probability distribution in episode #1. b Probability distribution in episode #8. c Probability distribution in episode #15.

The average reward over each block of 40 episodes across 5 independent runs with different random seeds is shown in Supplementary Fig. 3. As in the 33-node system, the reward gradually increases and stabilizes, indicating that optimal operational strategies are learned during training. In summary, these results demonstrate the effectiveness and scalability of the method in larger, more uncertain environments where exhaustive exploration is nearly impossible.

Table 3 shows the planning schemes and corresponding statistics of the three compared methods. To capture fluctuations in the load and PV data, 20 typical scenarios are generated through clustering for cases II and III. The proposed method retains its advantages in the 152-node system: it achieves the best planning and operation performance at the lowest investment cost. On the one hand, case I has the lowest LC and PVC, underscoring the adaptability of the proposed method to multi-source uncertainties compared with optimization-based methods, especially in large-scale systems. On the other hand, while the number of nodes increases 4.6-fold (from 33 to 152), the training time of case I increases only 2.7-fold and the testing time 2.9-fold. By contrast, the computational complexity of optimization-based methods grows exponentially with the number of scenarios, making it difficult for them to find a solution within the specified time. These results indicate that, over the tested range, the computation time of our framework grows no faster than linearly with system size, and that the framework remains robust to uncertainty in large-scale ADNs.
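The reported ratios can be turned into an empirical scaling exponent \(k\), assuming time grows as (node count)\(^k\) between the two systems. The inputs come from the text (152/33 nodes, 2.7-fold training time, 2.9-fold testing time); two data points cannot establish an asymptotic complexity class, so \(k\) only describes growth over the tested range.

```python
import math


def empirical_exponent(size_ratio: float, time_ratio: float) -> float:
    """Exponent k such that time_ratio == size_ratio ** k (two-point estimate)."""
    return math.log(time_ratio) / math.log(size_ratio)


NODE_RATIO = 152 / 33                           # about 4.6-fold more nodes
k_train = empirical_exponent(NODE_RATIO, 2.7)   # training time grew 2.7-fold
k_test = empirical_exponent(NODE_RATIO, 2.9)    # testing time grew 2.9-fold
```

Both exponents are below 1 (about 0.65 for training and 0.70 for testing), i.e., growth over this range is slower than linear.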

Discussion

The adaptive hierarchical learning framework presented in this study offers a new approach to uncertainty-aware DER planning. Unlike traditional methods that require full model knowledge, our approach enables TPOs to learn security constraints autonomously, reducing reliance on predefined scenarios and model simplifications. This is particularly valuable in real-world settings where the distribution network topology is inaccessible. By integrating MCTS at the planning level with MATD3 at the operational level, we establish a paradigm that addresses two longstanding limitations in energy system optimization: dependence on predefined scenarios that oversimplify multi-source uncertainties, and the computational intractability of combinatorial optimization in large-scale networks. The architecture learns directly from real-time grid dynamics through bidirectional planning-operation coordination, replacing scenario-based approximations with adaptive responses. Crucially, the convergence of investment strategies, visible in probability distributions whose node-resource pairing probabilities shift from uniform exploration to targeted exploitation, demonstrates the capacity of MCTS to prune suboptimal solutions autonomously while navigating high-dimensional decision spaces.

Validation on the 33-node and 152-node distribution systems reveals important operational implications. In the 33-node case, MATD3-driven coordination reduced voltage violations by 95%, primarily through dynamic resource coordination: ESS units consistently absorbed surplus PV during peak generation and discharged during evening demand peaks, while CBs provided reactive power support aligned with load fluctuations. Together with the planning results, this coordination translated into investment cost reductions of 24.69% and 54.14% relative to cases III and II, respectively, underscoring how adaptive learning overcomes the representational limitations of scenario-based optimization. According to Table 1, case I achieves lower investment costs and load curtailment because it learns implicit constraints adaptively, avoiding the bias of the predefined scenarios used in cases II and III. The superior voltage control of case I in Table 2 stems from its ability to adapt dynamically to implicit security constraints through learning, whereas traditional methods degrade under model uncertainty. A key feature of the framework is its computational scalability. When scaling to the 152-node system, training time grew sublinearly with node count (2.7-fold for a 4.6-fold increase in nodes), whereas scenario-based methods suffered from exponential complexity growth. This efficiency stems from the selective search tree expansion of MCTS and the decentralized policy updates of MATD3, which together avoid the curse of dimensionality that plagues monolithic optimization models.

These advances carry important theoretical and industrial implications. Unlike bi-level conversion methods that introduce optimality gaps through model simplification, our approach preserves planning-operation interdependencies via closed-loop feedback. Furthermore, the cost trajectories show that the proposed method attains the lowest overall investment and operating costs among the compared methods despite transient step-wise fluctuations. For grid operators, the 68-min testing time enables timely replanning in response to extreme weather or demand shocks, directly supporting renewable integration targets in carbon-neutral transitions.

The convergence of the probabilistic planning strategies and cost trajectories further validates the framework’s adaptability to complex uncertainty landscapes. This work provides a foundational, learning-centric alternative to traditional optimization, enabling large-scale renewable integration in ADNs. While this study focuses on DER planning under TPO constraints, future work will integrate network asset expansion (e.g., feeder and transformer upgrades) into the hierarchical learning process, which could be achieved by augmenting the state-action space of the MCTS-MATD3 framework to jointly optimize DER and infrastructure investments.

Methods

Mathematical formulation

The problem depicted in Fig. 1 can be modeled as the bi-level planning-operation model in Eq. (1). The objective function minimizes the total cost of investment, maintenance and operation of the resources to be planned, and the constraints capture the rules that must be followed throughout the process.

where \({x}^{{{\rm{up}}}}\) and \({x}^{{{\rm{low}}}}\) are the decision variables of the upper and lower levels, described in detail in the following subsections, and the remaining constraint functions in Eq. (1) denote the equality and inequality constraints, respectively.
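For orientation, a bi-level model of this kind typically has the generic structure sketched below. The objectives F and f and the constraint functions G, H, g and h are placeholder symbols assumed for illustration, not the paper's own notation: F would collect the investment, maintenance and operating costs, and f the lower-level operating cost.

```latex
\begin{aligned}
\min_{x^{\mathrm{up}}}\quad & F\left(x^{\mathrm{up}}, x^{\mathrm{low}}\right) \\
\text{s.t.}\quad & G\left(x^{\mathrm{up}}\right) = 0,\qquad H\left(x^{\mathrm{up}}\right) \le 0, \\
& x^{\mathrm{low}} \in \arg\min_{x^{\mathrm{low}}} \left\{ f\left(x^{\mathrm{up}}, x^{\mathrm{low}}\right) :\ g\left(x^{\mathrm{up}}, x^{\mathrm{low}}\right) = 0,\ h\left(x^{\mathrm{up}}, x^{\mathrm{low}}\right) \le 0 \right\}
\end{aligned}
```

The nested arg min is what makes the problem bi-level: the upper level can only evaluate a candidate plan after the lower level has optimized operation for it.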

The upper-level formulation

The upper-level objective function is formulated in Eq. (2) and comprises three parts, namely the investment cost \({c}^{{\mathrm{inve}}}\), the maintenance cost \({c}^{{{\rm{main}}}}\) and the operating cost \({c}^{{\mathrm{oper}}}\); the hosting capacity of RES and the total amount of unserved load (AUL) enter through the operating cost.

where \({x}^{{{\rm{up}}}}\) includes the decision variables \({\varOmega }_{n}\), \({\varOmega }_{y}\), \({\delta }_{i}^{y}\) and \({E}_{i}^{y}\). \({\varOmega }_{n}\) and \({\varOmega }_{y}\) are the set of nodes and the set of candidate DER types to be planned, \({\delta }_{i}^{y}\) is a binary variable indicating whether a type-y DER is installed at node \(i\), \({\varsigma }^{y,{\mathrm{inve}}}\) and \({\varsigma }^{y,{\mathrm{main}}}\) are the unit investment and maintenance costs of a type-y resource, respectively, and \({E}_{i}^{y}\) is the installed capacity of the type-y resource at node \(i\). Note that the operating cost is obtained from the lower level.
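As an illustration of how the investment and maintenance terms combine the variables just defined, the sketch below assumes both costs are linear in installed capacity, i.e. the sum over nodes i and types y of δ_i^y ς^{y,inve} E_i^y (and likewise with ς^{y,main}). Annualization or discounting, which the paper's component equations may include, is ignored, and the dictionary layout is a hypothetical choice.

```python
from typing import Dict, Tuple

# Hypothetical layout: (node i, DER type y) -> (delta_i^y, E_i^y)
Plan = Dict[Tuple[int, str], Tuple[int, float]]


def upper_level_costs(plan: Plan,
                      unit_inve: Dict[str, float],
                      unit_main: Dict[str, float]) -> Tuple[float, float]:
    """Return (c_inve, c_main), assuming both are linear in installed capacity."""
    c_inve = 0.0
    c_main = 0.0
    for (_node, der_type), (delta, capacity) in plan.items():
        # delta is the binary installation decision delta_i^y
        c_inve += delta * unit_inve[der_type] * capacity
        c_main += delta * unit_main[der_type] * capacity
    return c_inve, c_main


plan = {(5, "ess"): (1, 2.0), (12, "ess"): (1, 1.0), (18, "pv"): (1, 3.0)}
print(upper_level_costs(plan, {"ess": 100.0, "pv": 50.0}, {"ess": 5.0, "pv": 2.0}))
# (450.0, 21.0)
```

The operating cost \({c}^{{\mathrm{oper}}}\) is deliberately absent here, since it is returned by the lower level.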

The upper-level constraints mainly comprise the investment-number and node constraints of the resources, given in Eqs. (6) and (7). Equation (6) limits the number of installations of each resource type to its upper bound, and Eq. (7) encodes the assumption that multiple resources cannot be installed at the same node.

where \({n}^{y,\,\max }\) is the maximum investment number of type-y resources.
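A feasibility check for these two rules can be sketched as follows. It assumes that Eq. (6) caps the count of each type at \({n}^{y,\,\max }\) and that Eq. (7) allows at most one resource per node; the function name and the list-of-pairs input format are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def is_feasible_plan(installs: Iterable[Tuple[int, str]],
                     n_max: Dict[str, int]) -> bool:
    """Check the upper-level rules under the stated assumptions.

    installs: hypothetical list of (node, der_type) pairs with delta_i^y = 1.
    n_max: maximum investment number n^{y,max} per DER type.
    """
    installs = list(installs)
    per_type = Counter(der_type for _, der_type in installs)
    per_node = Counter(node for node, _ in installs)
    # Eq. (6): the number of type-y installations must not exceed n^{y,max}
    if any(count > n_max.get(der_type, 0) for der_type, count in per_type.items()):
        return False
    # Eq. (7), as interpreted here: at most one resource per node
    return all(count <= 1 for count in per_node.values())


print(is_feasible_plan([(5, "ess"), (12, "ess")], {"ess": 4}))             # True
print(is_feasible_plan([(5, "ess"), (5, "cb")], {"ess": 4, "cb": 2}))      # False
```

In the MCTS search, such a check would typically prune actions before they are added to the tree rather than penalize infeasible schemes afterwards.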

The lower-level formulation

The operating objective in the planning process is to maximize the hosting capacity of RES while minimizing the total AUL and the network loss, as formulated in Eq. (8).

where \({\varsigma }^{{{\rm{res}}}}\), \({\varsigma }^{{{\rm{aul}}}}\) and \({\varsigma }^{{{\rm{loss}}}}\) are the unit costs of RES curtailment, load curtailment and network loss, respectively; \({\delta }_{i}^{y}\) is the binary variable indicating whether the type-y resource is installed at node \(i\), with \(y\in \{{\mathrm{pv}},{\mathrm{sub}},{\mathrm{gas}},{\mathrm{ess}}\}\); and \(\Delta {P}_{i,t}^{{\mathrm{res}}}\) and \(\Delta {P}_{i,t}^{{\mathrm{aul}}}\) are the curtailed RES output and the unserved load at node \(i\) at time \(t\).
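The lower-level operating cost can be sketched as a weighted sum of RES curtailment, unserved load and network loss over all nodes and time steps. The exact form of Eq. (8) is not restated here, so this linear form, the hourly time step and the argument layout are assumptions; the reward used later is simply the negative of this cost.

```python
from typing import Sequence


def operating_cost(curtail_res: Sequence[Sequence[float]],
                   unserved_load: Sequence[Sequence[float]],
                   branch_loss: Sequence[Sequence[float]],
                   c_res: float, c_aul: float, c_loss: float,
                   dt_hours: float = 1.0) -> float:
    """Assumed linear operating cost: RES curtailment + unserved load + losses.

    curtail_res[t][i], unserved_load[t][i]: Delta P^res_{i,t}, Delta P^aul_{i,t} (MW)
    branch_loss[t][b]: active power loss on branch b at time t (MW)
    """
    cost = 0.0
    for t in range(len(curtail_res)):
        cost += c_res * sum(curtail_res[t]) * dt_hours
        cost += c_aul * sum(unserved_load[t]) * dt_hours
        cost += c_loss * sum(branch_loss[t]) * dt_hours
    return cost


# Two periods, two nodes, one branch (hypothetical numbers)
cost = operating_cost(curtail_res=[[0.5, 0.0], [0.0, 0.25]],
                      unserved_load=[[0.0, 0.0], [0.125, 0.0]],
                      branch_loss=[[0.0625], [0.0625]],
                      c_res=40.0, c_aul=800.0, c_loss=32.0)
print(cost)  # 134.0
```

Setting the unit cost of unserved load much higher than that of curtailment, as in the example, makes the agents prefer curtailing RES over shedding load.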

The lower-level constraints mainly cover the network and the DERs. The convexified power flow based on DistFlow is presented in Eq. (10). The complex power exchanged between the substation and the main grid is constrained by Eq. (11), and Eq. (12) restricts the nodal voltages and branch currents.

where \({\varOmega }_{e\in (ij,)}\) is the set of branches connected to branch \(ij\); \({P}_{ij,t}\), \({Q}_{ij,t}\), \({i}_{ij,t}\), \({r}_{ij,t}\) and \({x}_{ij,t}\) denote the active power, reactive power, current, resistance and reactance of branch \(ij\) at time \(t\), respectively; and \({S}^{{{\rm{sub}}}}\) is the apparent power of the substation.
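For reference, a commonly used convexified DistFlow (branch-flow) model with a second-order cone relaxation reads as follows. The squared voltage \(v_{j,t}\), the squared branch current \(\ell_{ij,t}\) (the square of \({i}_{ij,t}\)) and the net nodal injections \(p_{j,t}\), \(q_{j,t}\) (generation minus load) are notation assumed here, and the paper's Eq. (10) may differ in sign convention or in the treatment of shunt elements.

```latex
\begin{aligned}
\sum_{k:\,j\to k} P_{jk,t} &= \sum_{i:\,i\to j}\left(P_{ij,t} - r_{ij}\,\ell_{ij,t}\right) + p_{j,t},\\
\sum_{k:\,j\to k} Q_{jk,t} &= \sum_{i:\,i\to j}\left(Q_{ij,t} - x_{ij}\,\ell_{ij,t}\right) + q_{j,t},\\
v_{j,t} &= v_{i,t} - 2\left(r_{ij}P_{ij,t} + x_{ij}Q_{ij,t}\right) + \left(r_{ij}^{2} + x_{ij}^{2}\right)\ell_{ij,t},\\
\ell_{ij,t}\, v_{i,t} &\ge P_{ij,t}^{2} + Q_{ij,t}^{2}.
\end{aligned}
```

The last line relaxes the non-convex equality \(\ell_{ij,t} v_{i,t} = P_{ij,t}^{2} + Q_{ij,t}^{2}\) into a convex cone constraint, which is what makes the power flow "convexified".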

The operating constraints of the DERs cover four device types, namely the ESS, gas generator (Gas), SVC and CB, and are formulated in Eq. (13).

where \({E}_{i,t}^{{{\rm{ess}}}}\) is the energy stored in the ESS at node \(i\) at time \(t\), \({\eta }_{{\mathrm{sdr}}}\) is the self-discharge rate of the ESS, and \({\eta }_{c}\) and \({\eta }_{dc}\) are the charging and discharging efficiencies. Note that \({x}^{{{\rm{low}}}}\) comprises the operational decisions of the DERs.
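The ESS energy balance implied by these parameters can be sketched as one time step of a standard state-of-energy update, \(E_{t+1} = (1-\eta_{\mathrm{sdr}})E_t + \eta_c P^{\mathrm{ch}}_t\Delta t - P^{\mathrm{dis}}_t\Delta t/\eta_{dc}\). This common form is an assumption here, as are the function name and the non-negative split into charging and discharging power.

```python
def ess_next_energy(e_t: float, p_ch: float, p_dis: float,
                    eta_sdr: float, eta_c: float, eta_dc: float,
                    dt: float = 1.0) -> float:
    """One-step ESS energy balance (assumed standard form).

    e_t: stored energy E^ess_{i,t} (MWh)
    p_ch, p_dis: charging and discharging power (MW), both non-negative
    and not simultaneously positive.
    """
    if p_ch < 0 or p_dis < 0:
        raise ValueError("charging and discharging powers must be non-negative")
    if p_ch > 0 and p_dis > 0:
        raise ValueError("simultaneous charging and discharging is not allowed")
    # self-discharge, then charging losses (x eta_c) and discharging losses (/ eta_dc)
    return (1.0 - eta_sdr) * e_t + eta_c * p_ch * dt - p_dis * dt / eta_dc


print(ess_next_energy(2.0, 1.0, 0.0, eta_sdr=0.0, eta_c=0.5, eta_dc=0.8))  # 2.5
```

Energy limits such as \(E^{\min} \le E_{i,t}^{{{\rm{ess}}}} \le E^{\max}\) would be checked on the returned value; in the learning setting, violations are usually penalized in the reward rather than hard-coded.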

Monte Carlo tree search algorithm for optimal planning

The upper-level planning problem is solved with the MCTS algorithm. As a state-of-the-art decision-making algorithm that combines the precision of tree search with the generality of random sampling, MCTS adaptively explores and narrows the solution space by assigning different priorities to the nodes of the search tree. In contrast to conventional exhaustive search methods, a major advantage of MCTS is that only the value of the terminal state of each episode is required, rather than evaluations of the intermediate states, which greatly enhances the robustness and generality of the search process33.

The evolution of the investment scheme over the investment timescale in the upper level can be represented by a two-dimensional array whose x-axis denotes the candidate nodes and whose y-axis denotes the candidate DER types. Each two-dimensional array represents a state S, i.e., a particular investment scheme, and the state is updated by an action \(a=(x,y)\), which denotes investing in a type-y resource at node x (no investment is made if x = 1). Each episode starts at state S0 and constructs and expands the search tree over the investment timescale until it reaches a terminal state (i.e., the desired performance or the upper limit of the timescale).
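The array-based state and the action \(a=(x,y)\) can be sketched as below. Nodes are 1-indexed as in the text and x = 1 is treated as "no investment", following the text's convention; DER types are indexed from 0, and the function names are hypothetical.

```python
from typing import List, Tuple

State = List[List[int]]  # state[y][x - 1] == 1 if a type-y DER is invested at node x


def initial_state(n_nodes: int, n_types: int) -> State:
    """Empty investment scheme S0: no DER installed anywhere."""
    return [[0] * n_nodes for _ in range(n_types)]


def apply_action(state: State, action: Tuple[int, int]) -> State:
    """Return the successor state for action a = (x, y).

    x = 1 leaves the scheme unchanged (no investment). The state is copied so
    that every tree node keeps its own scheme.
    """
    x, y = action
    new_state = [row[:] for row in state]
    if x != 1:
        new_state[y][x - 1] = 1
    return new_state


s0 = initial_state(n_nodes=4, n_types=2)
s1 = apply_action(s0, (3, 1))  # invest a type-1 DER at node 3
print(s1)  # [[0, 0, 0, 0], [0, 0, 1, 0]]
```

Copying on every transition keeps parent and child states independent, which matters because MCTS revisits and branches from the same parent many times.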

The structure of the search tree constructed during planning is shown in Supplementary Fig. 4. It consists of nodes (states S) and directed edges (actions a). Each node stores \(N(S)\), the total number of times state S has been visited, and each edge stores the statistics \(N(S,a)\) and \(\bar{Q}(S,a)\), where \(N(S,a)\) is the number of times a has been selected in state S and \(\bar{Q}(S,a)\) is the average value of selecting a in state S, which is derived from Eq. (15) in our planning problem.

The search tree is built and expanded iteratively in each episode, with the aim of maximizing the cumulative value of the chosen sequence of actions. When the terminal state is reached, the search halts and outputs the best-performing root action, i.e., the optimal investment strategy of the current episode. Each iteration applies four stages34, namely selection, expansion, simulation and back-propagation, which are explained in detail in Supplementary Fig. 5.

In the selection stage, starting from the root state, actions are chosen by a designed search policy to descend through the tree until the first expandable state is reached. A state is expandable if further resources can still be planned from it (i.e., it has unexpanded child states). The upper confidence bounds for trees (UCT), a common search policy, addresses the exploitation-exploration dilemma effectively. As shown in Eq. (16), each visit to a child increases \(N(S,a)\) in the denominator of the exploration term and thus lowers that child's exploration bonus, whereas the growth of \({\mathrm{ln}}N(S)\) in the numerator raises the bonus of the less-visited siblings of the same parent state. As a result, every child state retains a nonzero chance of being selected, which ensures that diverse planning schemes are explored.

where \({c}_{p} > 0\) is a constant that regulates the degree of exploration.
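The UCT rule can be sketched as follows, assuming Eq. (16) takes the common form \(\bar{Q}(S,a) + c_p\sqrt{\ln N(S)/N(S,a)}\). Other variants scale the exploration term differently (for example with factors of 2 inside or outside the square root), so the constant may not match the paper exactly. Unvisited edges receive an infinite score so that every child is tried at least once; the function names are hypothetical.

```python
import math
from typing import Dict, Hashable, Tuple


def uct_score(q_bar: float, n_parent: int, n_edge: int, c_p: float) -> float:
    """UCT value of one edge (S, a): exploitation term plus exploration bonus.

    q_bar: average value Q_bar(S, a); n_parent: N(S); n_edge: N(S, a).
    """
    if n_edge == 0:
        return math.inf
    return q_bar + c_p * math.sqrt(math.log(n_parent) / n_edge)


def select_action(edges: Dict[Hashable, Tuple[float, int]], n_parent: int, c_p: float):
    """Pick the action with the largest UCT score; edges maps a -> (Q_bar, N(S, a))."""
    return max(edges, key=lambda a: uct_score(edges[a][0], n_parent, edges[a][1], c_p))


edges = {"a1": (0.6, 10), "a2": (0.5, 2)}
print(select_action(edges, n_parent=12, c_p=1.0))  # a2: rarely visited, large bonus
print(select_action(edges, n_parent=12, c_p=0.0))  # a1: pure exploitation
```

The example shows the trade-off described above: with \({c}_{p}=1\) the less-visited edge wins despite its lower average value, while \({c}_{p}=0\) reduces UCT to greedy selection.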

In the expansion stage, the action obtained through the UCT policy is executed, and the corresponding next state is added to the search tree.

In the simulation stage, starting from the expanded state, actions are selected by the designed search policy until a terminal state is reached. Note that the states and edges generated during simulation are not added to the search tree; only the simulation value of the terminal state is retained.

In the back-propagation stage, starting from the expanded state, the simulation result is propagated back to all states selected along the search trajectory, and the statistics of the states and edges are updated by Eq. (17).
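The edge-statistics update can be sketched as an incremental running mean, so that \(\bar{Q}(S,a)\) stays the average of all simulation values that passed through the edge. Treating Eq. (17) as this standard update is an assumption; the dataclass and function names are hypothetical, and \(N(S)\) for the states would be incremented in the same pass.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class EdgeStats:
    visits: int = 0          # N(S, a)
    mean_value: float = 0.0  # Q_bar(S, a)


def backpropagate(path: List[EdgeStats], value: float) -> None:
    """Add the terminal-state value to every edge on the selected trajectory."""
    for edge in path:
        edge.visits += 1
        # incremental running mean: Q <- Q + (value - Q) / N
        edge.mean_value += (value - edge.mean_value) / edge.visits


root_edge, child_edge = EdgeStats(), EdgeStats()
backpropagate([root_edge, child_edge], 1.0)
backpropagate([root_edge], 0.0)
print(root_edge)  # EdgeStats(visits=2, mean_value=0.5)
```

The incremental form avoids storing every past simulation value while giving the same average as recomputing it from scratch.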

The pseudocode of applying the MCTS algorithm to solve the upper-level planning problem is summarized in Algorithm I.

Algorithm I

MCTS for the upper planning level

Input: the boundary of planning objective, network, and resources

for episode = 1 to M do
    Initialize the root state \({S}_{0}\)
    for planning timescale t = 1 to N do
        while \({S}_{t}\) is fully expanded and non-terminal do
            \({S}_{t}\leftarrow {{\rm{select}}}({S}_{t})\) // Using UCT (Eq. (16))
        if \({S}_{t}\) is non-terminal then
            \({S}_{t+1}\leftarrow {{\rm{expand}}}({S}_{t})\)
        \(\Delta \leftarrow {\mathrm{simulation}}({S}_{t+1})\) // Value of the terminal state
        \({\mathrm{backpropagate}}({S}_{t+1},\Delta )\) // Update statistics (Eq. (17))
    \({a}^{\ast }\leftarrow {{\rm{bestchild}}}({S}_{0},{c}_{p})\)

Output: the optimal planning scheme
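To make Algorithm I concrete, the following is a minimal runnable sketch of UCT-based MCTS on a deliberately simplified planning problem: a single DER type is placed at exactly `budget` candidate nodes (tree depth plays the role of the investment timescale), the no-investment action is omitted, and a hypothetical additive value function (`benefit`) stands in for the lower-level MATD3 evaluation of each terminal scheme. The class and function names, the random rollout policy and the read-out rule (follow the most visited child) are assumptions for illustration, not the authors' implementation.

```python
import math
import random
from typing import AbstractSet, Callable, Dict, List, Optional, Sequence


class TreeNode:
    """Search-tree node: a (partial) investment scheme plus visit statistics."""

    def __init__(self, state: AbstractSet[int], parent: Optional["TreeNode"] = None):
        self.state = frozenset(state)              # nodes that already host a DER
        self.parent = parent
        self.children: Dict[int, "TreeNode"] = {}  # action (candidate node) -> child
        self.visits = 0                            # N(S)
        self.value_sum = 0.0                       # Q_bar = value_sum / visits


def legal_actions(state: AbstractSet[int], candidates: Sequence[int], budget: int) -> List[int]:
    """Candidate nodes still available; empty once the budget is used (terminal)."""
    if len(state) >= budget:
        return []
    return [c for c in candidates if c not in state]


def mcts_plan(candidates: Sequence[int], budget: int,
              value_fn: Callable[[AbstractSet[int]], float],
              iterations: int = 2000, c_p: float = 1.0, seed: int = 0) -> List[int]:
    rng = random.Random(seed)
    root = TreeNode(frozenset())
    for _ in range(iterations):
        node = root
        # 1) Selection: descend with UCT while the node is fully expanded and non-terminal
        while node.children and len(node.children) == len(legal_actions(node.state, candidates, budget)):
            parent = node
            node = max(parent.children.values(),
                       key=lambda ch: ch.value_sum / ch.visits
                       + c_p * math.sqrt(math.log(parent.visits) / ch.visits))
        # 2) Expansion: add one untried child if the node is non-terminal
        untried = [a for a in legal_actions(node.state, candidates, budget) if a not in node.children]
        if untried:
            action = rng.choice(untried)
            child = TreeNode(node.state | {action}, parent=node)
            node.children[action] = child
            node = child
        # 3) Simulation: random rollout to a terminal state (not stored in the tree)
        rollout = set(node.state)
        actions = legal_actions(rollout, candidates, budget)
        while actions:
            rollout.add(rng.choice(actions))
            actions = legal_actions(rollout, candidates, budget)
        value = value_fn(frozenset(rollout))
        # 4) Back-propagation: update N and the value sum along the path to the root
        while node is not None:
            node.visits += 1
            node.value_sum += value
            node = node.parent
    # Read out the scheme by following the most visited child from the root
    plan, node = [], root
    while node.children:
        action, node = max(node.children.items(), key=lambda kv: kv[1].visits)
        plan.append(action)
    return sorted(plan)


# Hypothetical per-node benefit standing in for the lower-level evaluation
benefit = {3: 0.2, 5: 0.1, 7: 0.9, 9: 0.8}
best = mcts_plan(list(benefit), budget=2,
                 value_fn=lambda scheme: sum(benefit[n] for n in scheme))
print(best)
```

In the full framework, `value_fn` would be the (negated) total cost returned after the lower level optimizes operation for the terminal scheme, which is why only terminal states need to be evaluated.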

MATD3 algorithm for optimal operation

Each MCTS iteration in the upper planning level requires an accurate value for every planning state, and this value can only be obtained from the optimal operation of the distributed resources under that planning state. However, besides the types, nodes and capacities of the distributed resources to be planned, the operation also depends heavily on the power demand of the local network, all of which are highly uncertain. A suitable solution algorithm should therefore autonomously achieve the optimal operation of the distributed resources for a given planning scheme under uncertain inputs, within the required computation time.

In this section, MATD3, a multi-agent DRL algorithm that combines the advantages of off-policy learning and the actor-critic architecture, is applied to the lower operating level. Before deriving the core algorithm, we briefly introduce the partially observable Markov decision process (POMDP) framework that incorporates the uncertainty of the planning schemes.

POMDP formulation

The POMDP framework is crucial for modeling the implicit uncertainty faced by TPOs: the state (such as the security constraints) is partially observable, and agents must learn from historical interactions to infer hidden relationships. As a core mathematical framework for the interaction between agents (the DERs) and the environment (the network) in RL, the POMDP provides a clear way to define and optimize policies. Unlike the traditional Markov decision process (MDP), which assumes that the state of the environment is fully observable, the POMDP enables decision-making under partial observability, i.e., the agents can optimize their strategies based on incomplete or locally available information. As a consequence, the POMDP is particularly well suited to addressing the challenges posed by multi-source uncertainties in ADNs.

The basic elements of the proposed POMDP formulation include the planning scheme set, the agent set, the state set, and the reward function, elaborated in the following.

The planning scheme set contains the number \({n}^{y}\) and the installed nodes \({i}^{y}\) of each type of distributed resource planned at the current investment timescale t, as shown in Eq. (18). For instance, \(P{s}_{t}^{{\mathrm{ess}}}=[2,(5,12,0,0)]\) denotes that 2 ESSs are installed, at nodes #5 and #12, with \({n}^{{\mathrm{ess}},\max }=4\). To enhance training stability and convergence, we incorporate a curriculum learning strategy when generating the planning scheme set with MCTS.

This strategy structures the learning process by introducing planning schemes in progressively challenging stages. Initially, agents are exposed to simpler planning schemes, which serve as foundational learning stages with lower complexity. Over subsequent rounds, the complexity of planning schemes is gradually increased, presenting agents with more intricate and realistic configurations that align with actual operational conditions.

where a distributed resource is not connected to the network if \({i}_{j}=0\).
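The encoding of a planning scheme and the simple-to-complex ordering used by the curriculum can be sketched as follows. The tuple layout mirrors the example \(P{s}_{t}^{{\mathrm{ess}}}=[2,(5,12,0,0)]\), with unused slots set to 0; measuring scheme complexity by the total number of installed DERs is an assumption, and the function names are hypothetical.

```python
from typing import Dict, List, Sequence, Tuple

Scheme = Tuple[int, Tuple[int, ...]]  # (n^y, (i_1, ..., i_{n^{y,max}}))


def encode_scheme(nodes: Sequence[int], n_max: int) -> Scheme:
    """Encode the installed nodes of one DER type as (n^y, padded node tuple).

    Unused slots are 0, meaning the resource is not connected (i_j = 0).
    """
    if len(nodes) > n_max:
        raise ValueError("more installations than n^{y,max}")
    padded = tuple(nodes) + (0,) * (n_max - len(nodes))
    return len(nodes), padded


def curriculum_order(schemes: List[Dict[str, Scheme]]) -> List[Dict[str, Scheme]]:
    """Order planning schemes from simple to complex.

    Complexity is taken here (an assumption) as the total number of installed DERs.
    """
    return sorted(schemes, key=lambda s: sum(n for n, _ in s.values()))


print(encode_scheme([5, 12], n_max=4))  # (2, (5, 12, 0, 0))
```

A fixed-length, zero-padded tuple keeps the agents' input dimension constant across planning schemes, which is what lets one set of networks be trained on schemes of varying size.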

The agent set \({\mathcal I}\) includes four agents, corresponding to the four types of resources to be planned, as shown in Eq. (20). The action set \({{\mathscr{A}}}\) contains the operating variables of the corresponding agents, i.e., the charging/discharging power of the ESSs, the active power of the gas generators, and the reactive power of the SVCs and CBs, as shown in Eq. (21).

The state set \({{\mathscr{S}}}\) includes the environment information in the previous time step, which could be defined as Eq. (22).

The reward \({r}_{t}\) is a function of \({{\mathscr{S}}}\) and \({{\mathscr{A}}}\) and is defined as the negative of the operating cost in Eq. (8).

The objective of the POMDP is to find an optimal joint policy \({\pi }^{\ast }\) that maximizes the expected infinite-horizon discounted return \(J(\pi )\).

where \(\gamma\) is the discount factor and the initial state \({{{\mathscr{S}}}}_{0}\) follows the initial state distribution \({\mu }_{0}\).
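In standard notation, the discounted-return objective described here can be written as follows, assuming a deterministic joint policy (exploration noise omitted) and \(0 \le \gamma < 1\); the paper's own equation may present it differently.

```latex
J(\pi) = \mathbb{E}\left[\sum_{t=0}^{\infty} \gamma^{t}\, r_t\left(\mathscr{S}_t, \mathscr{A}_t\right) \;\middle|\; \mathscr{S}_0 \sim \mu_0,\ \mathscr{A}_t = \pi\left(\mathscr{S}_t\right)\right],
\qquad
\pi^{\ast} = \arg\max_{\pi} J(\pi).
```

Because \(r_t\) is the negative operating cost, maximizing \(J(\pi)\) is equivalent to minimizing the discounted operating cost over the horizon.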

Solutions via MATD3 algorithm

In RL, each agent's strategy update relies on its policy function and Q-function. For agent \({ {\mathcal I} }_{i}\), the policy function \({\pi }_{{ {\mathcal I} }_{i}}({{{\mathscr{A}}}}_{{ {\mathcal I} }_{i}}|{{{\mathscr{S}}}}_{{ {\mathcal I} }_{i}};{\theta }_{{ {\mathcal I} }_{i}}^{\pi })\) maps the state to a deterministic action, and the action-value function \(Q({{{\mathscr{A}}}}_{{ {\mathcal I} }_{i}}|{{{\mathscr{S}}}}_{{ {\mathcal I} }_{i}};{\theta }_{{ {\mathcal I} }_{i}}^{Q})\) takes the global state \({{\mathscr{S}}}\) and the joint action \({{\mathscr{A}}}\) as input and outputs the corresponding action value Q, where \({\theta }^{\pi }\) and \({\theta }^{Q}\) are the parameters of the corresponding functions. However, hand-crafting a suitable approximating function is impractical for problems with high-dimensional, continuous action spaces. Introducing deep neural networks to approximate the policy and the Q-function addresses this problem effectively.

The policy gradient theorem35 shows that the policy gradient \({\nabla }_{{\theta }_{{ {\mathcal I} }_{i}}^{\pi }}J({\theta }_{{ {\mathcal I} }_{i}}^{\pi })\) can be expressed as Eq. (26); the chain rule then decomposes this gradient into two parts, as shown in Eq. (27). The actor-network parameters \({\theta }^{\pi }\) can thus be updated by gradient ascent, as shown in Eq. (28).

where \({{\mathscr{D}}}\) is the replay buffer that stores the transition experiences \(({{{\mathscr{S}}}}_{t},{{{\mathscr{A}}}}_{t},{r}_{t},{{{\mathscr{S}}}}_{t+1})\); minibatches are randomly sampled from it during training to optimize the value function, so that previous experiences can be reused. \(-{ {\mathcal I} }_{i}\) denotes all agents except agent \({ {\mathcal I} }_{i}\), and \(\eta\) is the step size for updating the network parameters.
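The chain-rule decomposition behind the deterministic actor update can be illustrated on a one-dimensional toy problem: the gradient with respect to the actor parameter is the critic's gradient with respect to the action times the actor's gradient with respect to its parameter. In this sketch the critic \(Q(s,a) = -(a-2s)^2\) is assumed known rather than learned, the actor is the linear map \(a = \theta s\), and fresh uniform samples replace draws from the replay buffer; all of these are simplifying assumptions, not the paper's networks.

```python
import random


def dpg_toy(steps: int = 2000, lr: float = 0.05, seed: int = 0) -> float:
    """Deterministic policy gradient ascent on a 1-D toy problem (illustrative only).

    Actor: a = theta * s. Critic (assumed known): Q(s, a) = -(a - 2 s)^2.
    Chain rule: dJ/dtheta = E[ dQ/da * da/dtheta ] = E[ -2 (a - 2 s) * s ].
    """
    rng = random.Random(seed)
    theta = 0.0
    for _ in range(steps):
        batch = [rng.uniform(-1.0, 1.0) for _ in range(32)]  # sampled states
        grad = 0.0
        for s in batch:
            a = theta * s
            dq_da = -2.0 * (a - 2.0 * s)  # gradient of the critic w.r.t. the action
            da_dtheta = s                 # gradient of the actor w.r.t. its parameter
            grad += dq_da * da_dtheta
        theta += lr * grad / len(batch)   # gradient ascent; lr plays the role of eta
    return theta


print(round(dpg_toy(), 3))
```

The parameter converges to 2, the action that maximizes the toy critic for every state; in MATD3 the same product of gradients is computed by backpropagating through the critic network into each agent's actor.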

The optimization of the action-value function \(Q({{\mathscr{A}}}|{{\mathscr{S}}};{\theta }^{Q})\) in the critic network is called policy evaluation. Generally, the parameters \({\theta }^{Q}\) are updated by minimizing the loss, i.e., the mean squared error between the action value predicted by the critic network and the target value \(y\).

where \(Q{\prime} ({\theta }^{Q{\prime} })\) is the target critic network, introduced to alleviate the update oscillation caused by frequent updates of the action values, as shown in Eq. (31). When the expected reward is calculated according to Eq. (25), the maximization involved in each step generally leads to an overestimation bias in the action value. Therefore, two independent critic networks (a double critic network) are set up in our MATD3 algorithm, and the smaller of their two estimates is used when updating the value, which mitigates the overestimation; the resulting \({y}_{t}\) is given in Eq. (33).
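The double-critic target and the critic loss can be sketched as below: the target is the reward plus the discounted minimum of the two target critics, evaluated at the target actor's action for the next state. The networks are abstracted as plain callables, and the target-policy smoothing noise used in the original TD3 algorithm is not included because it is not described here; the function names are hypothetical.

```python
from typing import Callable, Sequence


def double_critic_target(reward: float, next_state: Sequence[float], done: bool, gamma: float,
                         target_actor: Callable[[Sequence[float]], float],
                         target_q1: Callable[[Sequence[float], float], float],
                         target_q2: Callable[[Sequence[float], float], float]) -> float:
    """Clipped double-Q target y = r + gamma * min(Q1', Q2')(S', pi'(S'))."""
    next_action = target_actor(next_state)
    q_min = min(target_q1(next_state, next_action), target_q2(next_state, next_action))
    return reward + (0.0 if done else gamma * q_min)


def critic_loss(predicted: Sequence[float], targets: Sequence[float]) -> float:
    """Mean squared error between critic predictions and targets y."""
    return sum((p - y) ** 2 for p, y in zip(predicted, targets)) / len(predicted)


y = double_critic_target(reward=1.0, next_state=[0.0], done=False, gamma=0.5,
                         target_actor=lambda s: 0.0,
                         target_q1=lambda s, a: 4.0,
                         target_q2=lambda s, a: 2.0)
print(y)  # 2.0: the smaller critic estimate (2.0) is used
```

For MATD3, the critic inputs would be the global state and the joint action of all agents, while each actor still sees only its own observation.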

The training procedure of applying the MATD3 algorithm to solve the lower-level operating problem is summarized in Algorithm II.

Algorithm II

MATD3 for the lower operating level

Input: the boundary of operating objective, planning scheme and generation & load level of the network

Randomly initialize the actor network parameter \({\theta }^{\pi }\), the critic network parameters \({\theta }_{1}^{Q}\), \({\theta }_{2}^{Q}\)

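Initialize the target network parameters as copies of the online networks: \({\theta }^{\pi {\prime} }\leftarrow {\theta }^{\pi }\), \({\theta }_{1}^{Q{\prime} }\leftarrow {\theta }_{1}^{Q}\), \({\theta }_{2}^{Q{\prime} }\leftarrow {\theta }_{2}^{Q}\)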

for episode = 1 to M do
    Obtain the initial state set \({{{\mathscr{S}}}}_{0}\)
    for t = 1 to N do
        For each agent, select the action \({{{\mathscr{A}}}}_{{ {\mathcal I} }_{i},t}={\pi }_{{ {\mathcal I} }_{i}}+{{\mathscr{N}}}\) from its policy plus exploration noise \({{\mathscr{N}}}\); the individual actions form \({{{\mathscr{A}}}}_{t}\)
        Execute \({{{\mathscr{A}}}}_{t}\), calculate the reward \({r}_{t}\) based on Eq. (24), and observe the next state \({{{\mathscr{S}}}}_{t+1}\)
        Store the transition experience in \({{\mathscr{D}}}\)
        for agent \({\mathcal I}\) = \({ {\mathcal I} }^{{\mathrm{ess}}}\) to \({ {\mathcal I} }^{cb}\) do
            Sample a minibatch from \({{\mathscr{D}}}\)
            Update the actor network by Eqs. (27) to (29)
            Update the critic networks by Eqs. (30), (31) and (33)
            Update the target networks by Eq. (32)

Output: the optimal operating strategy of resources given the planning scheme
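Algorithm II relies on a replay buffer and on target networks updated by Eq. (32). Assuming Eq. (32) is the usual Polyak (soft) update used in TD3, these two mechanics can be sketched in plain Python as below. Network parameters are represented as flat lists of floats purely for illustration, and the class and function names are hypothetical.

```python
import random
from collections import deque
from typing import Any, Deque, List, Sequence, Tuple

Transition = Tuple[Any, Any, float, Any]  # (S_t, A_t, r_t, S_{t+1})


class ReplayBuffer:
    """Fixed-capacity experience replay D; the oldest transitions are discarded first."""

    def __init__(self, capacity: int, seed: int = 0):
        self.buffer: Deque[Transition] = deque(maxlen=capacity)
        self.rng = random.Random(seed)

    def store(self, transition: Transition) -> None:
        self.buffer.append(transition)

    def sample(self, batch_size: int) -> List[Transition]:
        # uniform sampling without replacement within one minibatch
        return self.rng.sample(list(self.buffer), batch_size)

    def __len__(self) -> int:
        return len(self.buffer)


def soft_update(target: List[float], online: Sequence[float], tau: float) -> None:
    """Polyak averaging theta' <- tau * theta + (1 - tau) * theta' (in place)."""
    for k, w in enumerate(online):
        target[k] = tau * w + (1.0 - tau) * target[k]


buffer = ReplayBuffer(capacity=10_000)
buffer.store(([0.1], [0.0], -1.2, [0.2]))  # hypothetical (S_t, A_t, r_t, S_{t+1})
target_params = [0.0, 0.0]
soft_update(target_params, online=[1.0, 2.0], tau=0.005)
```

A small \(\tau\) makes the target networks trail the online networks slowly, which is what damps the oscillation in the critic targets mentioned for Eq. (31).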

Data availability

All data needed to evaluate the conclusions in this paper are present in the paper and/or the Supplementary Information. Additional data and raw data are available upon request from the corresponding author.

Code availability

The code that supports the findings within this manuscript is available from the corresponding authors upon reasonable request.

References

Xiang, Y. et al. Optimal active distribution network planning: a review. Electr. Power Compon. Syst. 44, 1075–1094 (2016).

Wang, R. et al. Multi-resource dynamic coordinated planning of flexible distribution network. Nat. Commun. 15, 4576 (2024).

Yu, Q. et al. China’s urban EV ultra-fast charging distorts regulated price signals and elevates risk to grid stability. Nat. Commun. 16, 8451 (2025).

Feng, S. et al. Dense reinforcement learning for safety validation of autonomous vehicles. Nature 615, 620–627 (2023).

Singh, V., Moger, T. & Jena, D. Uncertainty handling techniques in power systems: a critical review. Electr. Power Syst. Res. 203, 107633 (2022).

Guo, X. et al. Grid integration feasibility and investment planning of offshore wind power under carbon-neutral transition in China. Nat. Commun. 14, 2447 (2023).

Xiang, Y., Fang, M., Liu, J., Zeng, P. & Wu, G. Distributed dispatch of multiple energy systems considering carbon trading. CSEE J. Power Energy Syst. 9, 459–469 (2023).

Yang, J. & Xiang, Y. Deep transfer learning based surrogate modeling for optimal investment decision of distribution networks. IEEE Trans. Power Syst. 39, 2506–2516 (2024).

Boubaker, S. et al. Electric vehicles charging station allocation based on load profile forecasting and Dijkstra’s algorithm for optimal path planning. Sci. Rep. 15, 23844 (2025).

Tao, Y., Qiu, J., Lai, S., Sun, X. & Zhao, J. Adaptive integrated planning of electricity networks and fast charging stations under electric vehicle diffusion. IEEE Trans. Power Syst. 38, 499–513 (2023).

Xiang, Y., Han, W., Zhang, J., Liu, J. & Liu, Y. Optimal sizing of energy storage system in active distribution networks using Fourier-Legendre series based state of energy function. IEEE Trans. Power Syst. 33, 2313–2315 (2018).

Kou, P., Liang, D., Gao, R., Yang, C. & Gao, L. Optimal placement and sizing of reactive power sources in active distribution networks: a model predictive control approach. IEEE Trans. Sustain. Energy 12, 966–977 (2021).

Li, R., Wang, W., Wu, X., Tang, F. & Chen, Z. Cooperative planning model of renewable energy sources and energy storage units in active distribution systems: a bi-level model and Pareto analysis. Energy 168, 30–42 (2019).

Wang, C., Liu, C., Chen, J. & Zhang, G. Cooperative planning of renewable energy generation and multi-timescale flexible resources in active distribution networks. Appl. Energy 356, 122429 (2024).

Kabirifar, M., Fotuhi-Firuzabad, M., Moeini-Aghtaie, M., Pourghaderi, N. & Dehghanian, P. A bi-level framework for expansion planning in active power distribution networks. IEEE Trans. Power Syst. 37, 2639–2654 (2022).

Almutairi, S. Z., Alharbi, A. M., Ali, Z. M., Refaat, M. M. & Aleem, S. A. A hierarchical optimization approach to maximize hosting capacity for electric vehicles and renewable energy sources through demand response and transmission expansion planning. Sci. Rep. 14, 15765 (2024).

Shen, X., Shahidehpour, M., Zhu, S., Han, Y. & Zheng, J. Multi-stage planning of active distribution networks considering the co-optimization of operation strategies. IEEE Trans. Smart Grid 9, 1425–1433 (2018).

Wang, F., He, Q. & Li, S. Solving combinatorial optimization problems with deep neural network: a survey. Tsinghua Sci. Technol. 29, 1266–1282 (2024).

Wei, X. et al. Real-time resilient power system operation with defender–attacker soft actor-critic reinforcement learning. IEEE Trans. Ind. Inform. https://doi.org/10.1109/TII.2025.3609071 (2025).

Xiang, Y., Lu, Y. & Liu, J. Deep reinforcement learning based topology-aware voltage regulation of distribution networks with distributed energy storage. Appl. Energy 332, 120510 (2023).

Li, H. & He, H. Learning to operate distribution networks with safe deep reinforcement learning. IEEE Trans. Smart Grid 13, 1860–1872 (2022).

Shang, Y., Wu, W., Liao, J., Guo, J. & Su, J. Stochastic maintenance schedules of active distribution networks based on Monte-Carlo tree search. IEEE Trans. Power Syst. 35, 3940–3952 (2020).

Xiang, Y., Dai, J., Xue, P. & Liu, J. Autonomous topology planning for distribution network expansion: a learning-based decoupled optimization method. Appl. Energy 348, 121522 (2023).

Amjady, N., Attarha, A., Dehghan, S. & Conejo, A. J. Adaptive robust expansion planning for a distribution network with DERs. IEEE Trans. Power Syst. 33, 1698–1715 (2018).

Huang, C., Wang, C., Xie, N. & Wang, Y. Robust coordination expansion planning for active distribution network in deregulated retail power market. IEEE Trans. Smart Grid 11, 1476–1488 (2020).

Munoz-Delgado, G., Contreras, J. & Arroyo, J. M. Distribution system expansion planning considering non-utility-owned DG and an independent distribution system operator. IEEE Trans. Power Syst. 34, 2588–2597 (2019).

Dua, D. & Graff, C. UCI machine learning repository. Available: https://archive.ics.uci.edu/dataset/321/electricityloaddiagrams20112014 (University of California, 2023).

Elia Group. Photovoltaic power production estimation and forecast on Belgian grid (Near real-time) [Data set] (Elia Open Data Portal, 2024).

Hinton, G. Neural networks for machine learning: lecture 6a: overview of mini-batch gradient descent. https://www.cs.toronto.edu/~tijmen/csc321/slides/lecture_slides_lec6 (Coursera, 2024).

The machine learning community: r/learnmachinelearning. https://www.reddit.com/r/learnmachinelearning/ (Reddit, 2024).

Moradijoz, M., Parsa Moghaddam, M. & Haghifam, M.-R. A flexible distribution system expansion planning model: a dynamic bi-level approach. IEEE Trans. Smart Grid 9, 5867–5877 (2018).

Lu, Y., Xiang, Y., Huang, Y. & Liu, J. Deep reinforcement learning based optimal scheduling of active distribution system considering distributed generation, energy storage and flexible load. Energy 271, 127087 (2023).

Shang, Y. et al. Stochastic dispatch of energy storage in microgrids: an augmented reinforcement learning approach. Appl. Energy 261, 114423 (2020).

Browne, C. B., Powley, E., Whitehouse, D., Lucas, S. M. & Cowling, P. I. A survey of Monte Carlo tree search methods. IEEE Trans. Comput. Intell. AI Games 4, 1–43 (2012).

Sutton, R. S., McAllester, D. A., Singh, S. P. & Mansour, Y. Policy gradient methods for reinforcement learning with function approximation. Adv. Neural Inf. Process. Syst. 12, 1057–1063 (2000).

Acknowledgements

This work was supported by the Sichuan Natural Science Foundation Project (2026NSFSCZY0091) and the National Natural Science Foundation of China (52177103).

Author information


Contributions

Y. Xiang and Y. Lu conceived the study and wrote the original manuscript. L. Li, A. P. Zhao, Y. Liu, X. Wang and C. Gu reviewed and revised the manuscript. T. Pu and J. Liu discussed the manuscript before submission.


Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Communications Engineering thanks Payman Dehghanian, Niloofar Pourghaderi, Ke Jia and the other anonymous reviewer(s) for their contribution to the peer review of this work. Primary handling editor: Wenjie Wang.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Xiang, Y., Li, L., Lu, Y. et al. Adaptive hierarchical learning for uncertainty-aware distributed energy resource planning. Commun Eng 5, 40 (2026). https://doi.org/10.1038/s44172-026-00591-x


DOI: https://doi.org/10.1038/s44172-026-00591-x