Abstract

Whether psychosocial interventions containing active psychological components prolong survival in cancer patients has been studied for decades, yet findings from primary (RCTs) and secondary research (meta-analyses) remain inconclusive. Our preregistered systematic review, meta-analysis, and multiverse meta-analysis aimed to clarify this research question using contemporary methods of research synthesis. We searched Web of Science, Scopus, MEDLINE, PsycINFO, Embase, and Google Scholar for RCTs of structured psychosocial interventions for cancer patients published until October 17, 2025. We calculated the overall effect; assessed its robustness; estimated a median survival benefit; characterized the psychological components included in the interventions; examined risk of bias, study quality, meta-analytic post hoc power, and sponsorship bias; explored 25 substantive and methodological moderators; and considered publication bias as well as p-hacking. Using multiverse meta-analysis, we calculated multiple overall effects based on reasonable specifications employed in prior meta-analyses (descriptive analysis) and compared them with the 95% CI band of 1000 simulated overall effects assuming no true effect (inferential analysis). Psychosocial interventions, provided alongside medical treatment, demonstrated a small, positive, and robust overall effect on survival in cancer patients, with an HR of 0.80, 95% CI [0.71, 0.90] across 32 RCTs comprising 5704 participants. Heterogeneity was moderate to substantial, with I² = 48% and a wide 95% PI (HR 0.49–1.29). Median survival time benefit was estimated at 3.9 months, 95% CI [−0.7, 8.5], based on data from 16 trials. The psychological components most frequently applied were educational, cognitive–behavioral, emotionally expressive, and group-based social support. Low average meta-analytic post hoc power (17%) likely contributed to inconsistent findings among trials.
Multiverse meta-analysis confirmed the presence of a general overall survival effect and indicated that previously conflicting meta-analytic conclusions primarily stemmed from differences in effect size metrics and analytic decisions. Psychosocial (psychological) interventions appear to improve survival in cancer patients, with effect sizes comparable in magnitude to effects previously reported in the literature for medical cancer treatments such as chemo-, radio-, and hormone therapy. The certainty of evidence was rated moderate, primarily due to statistical heterogeneity; hence, effects might not generalize equally to all populations. Considering survival impact, established psychological benefits, favorable safety profile, and comparatively low cost, the findings support a paradigm shift toward establishing psychosocial interventions alongside medical therapy as a standard component of comprehensive cancer care, potentially guiding future research and clinical practice.

Introduction

Each year, ~19.3 million individuals develop cancer, and about 10 million patients die from the disease1. Psychological risk factors for cancer development and progression, such as stress, anxiety, depression, anger, bereavement, repression of emotions, helplessness, and hopelessness, are already well known2,3,4,5,6,7.

Psychosocial interventions are provided to help cancer patients overcome the initial shock of diagnosis, difficulties in coping with the illness, associated mental health problems, and irritating side effects of medical treatment through cognitive–behavioral, supportive-expressive, or mindfulness-based techniques8,9.

Additionally, they appear to alleviate psychological and physiological risk factors for cancer progression10 and have been shown to reduce stress in cancer patients11, a major factor for the onset, development, and recurrence of cancer5,6,12,13,14,15, which extends far beyond the benefits of palliative care alone16.

Whether psychosocial interventions are similarly able to prolong the survival of cancer patients has been hotly debated since the landmark study by Spiegel et al.17, which sparked much research in the field of psycho-oncology. To date, almost half of the respective randomized controlled trials (RCTs) have found a life-extending effect, whereas the remainder have not (see Fig. 1A, B for a timeline with published RCTs and meta-analyses)—including three failed replication attempts of the initial positive finding18,19,20.

Fig. 1: Timeline of published RCTs and meta-analyses. A Timeline covering studies published from 1980 to 2002. B Timeline covering studies published from 2002 to 2025. Note that Fawzy et al., 2003 and Kuchler et al., 2007 were follow-up publications.

Likewise, ten prior meta-analyses (see Table 1) have resulted in similar contradictory conclusions. Four meta-analyses yielded a significant overall effect (at least at one of the examined follow-ups), whereas six did not (see Fig. 1A, B).

Heterogeneity in the results of primary (RCTs) as well as secondary research (reviews and meta-analyses) has fueled controversial debates and led to the formation of two opposing camps. One group argues that the evidence for psychosocial interventions enhancing the survival of cancer patients is convincing9,21,22,23,24,25, whereas the other argues it is not26,27,28,29,30,31,32,33,34,35,36.

In an attempt to investigate possible causes of the ongoing controversy, we have identified a number of factors that could explain the ambiguous findings of prior meta-analytic research and believe that a modern and comprehensive synthesis of the existing evidence remains necessary.

An overview of the most relevant issues identified in prior meta-analytic research is provided in the following paragraphs addressing the number of included trials, the choice of effect size measures, the investigation of risk of bias factors within studies, the handling of clinical and statistical heterogeneity, the examination of reporting bias across studies, the need for a comprehensible effect size measure for practitioners and patients, and concerns regarding data-analytic decisions in prior meta-analyses (see Table 1).

Possible causes for the contradictory findings in prior meta-analyses

Number and selection of included trials

A meta-analysis is considered to produce the highest form of scientific evidence37 and its execution therefore requires quite delicate handling38. Prior meta-analyses on the topic (see Table 1) followed the current gold standard in evidence-based research and analyzed RCTs only39, whereas the most recent attempt40 applied even stricter criteria and focused exclusively on RCTs that used passive controls and an intention-to-treat (ITT) analysis.

The application of selective inclusion criteria might serve the aim of increasing the validity of the meta-analytic overall effect41. However, in our view, the feasibility of such an approach depends on the amount of available evidence. Moreover, it addresses only indirectly some of the risks of bias within studies (e.g., study quality) and reporting bias across studies (e.g., publication bias) that could affect the meta-analytic result. Further, selective inclusion criteria might even allow investigators to exploit researcher degrees of freedom, resulting in the mass production of redundant meta-analyses that often contain only fragments of the totality of evidence42.

Moreover, the first meta-analysis on this topic was published almost two decades ago43, when only a few RCTs were available. As a consequence, prior meta-analyses (see Table 1) frequently involved only a handful of trials and none of them more than 15 RCTs.

Hence, the statistical power of the prior meta-analyses to identify small-to-moderate overall effects might have been insufficient38. In addition, a trend can be observed that overall effects tended to be not significant in earlier meta-analyses, but significant in more recent meta-analyses (see Fig. 1A, B). Thus, updating this line of meta-analytic research, without additional restrictions, still appears to be required to adequately address the pending research question of whether psychosocial interventions may prolong the survival of cancer patients or not.

Choice of the effect size measure

Prior meta-analyses differed in their use of effect size measures: odds ratio (OR) vs. risk ratio (RR) vs. hazard ratio (HR). Yet, in the context of survival data, the use of the HR is preferable over the OR and the RR, as it considers information of time-to-event data from all participants, including those who were lost during the trial44. Moreover, the HR considers the whole study period and does not allow study authors or meta-analysts to selectively pick one (or multiple) of many possible follow-up intervals as endpoints (e.g., at 1, 5, or 10 years), which can be readily done with the OR and the RR (see Table 1).

Hence, the HR helps to measure survival more precisely and safeguards against possible questionable research practices (i.e., the exploitation of researcher degrees of freedom to achieve a desired result45). However, only four of the prior meta-analyses have used the HR, whereas the other six have used either the OR or RR (see Table 1).
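To illustrate why fixed-time-point measures leave room for selective choices, the following minimal Python sketch computes the OR and RR of death for one trial at two different follow-up times. The counts and function names are hypothetical and chosen only for illustration; they are not taken from any included trial.

```python
def odds_ratio(deaths_trt, n_trt, deaths_ctl, n_ctl):
    """Odds ratio of death (intervention vs. control) at one fixed follow-up."""
    odds_trt = deaths_trt / (n_trt - deaths_trt)
    odds_ctl = deaths_ctl / (n_ctl - deaths_ctl)
    return odds_trt / odds_ctl


def risk_ratio(deaths_trt, n_trt, deaths_ctl, n_ctl):
    """Risk ratio of death (intervention vs. control) at one fixed follow-up."""
    return (deaths_trt / n_trt) / (deaths_ctl / n_ctl)


# Hypothetical cumulative deaths in the same trial (100 patients per arm):
# (deaths intervention, n intervention, deaths control, n control)
followups = {
    "1 year": (10, 100, 20, 100),
    "5 years": (60, 100, 65, 100),
}

for label, counts in followups.items():
    print(label, "OR =", round(odds_ratio(*counts), 2),
          "RR =", round(risk_ratio(*counts), 2))
```

In this invented example, the same trial looks clearly beneficial at 1 year and close to null at 5 years, so the choice of follow-up interval alone can change the conclusion. An HR summarizes the whole follow-up period and is not subject to this choice.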

Risk of bias within studies

Risk of bias in primary research represents a threat to the validity of science. It includes selection bias (sample not representative of the target population, due to non-randomization or high dropout rates), design bias (inherent flaws in the study design, such as additional interventions or inadequate control groups), data analysis bias (manipulation of data analysis until statistically significant results are found), and financial or other conflicts of interest (researchers’ personal interests influencing the outcome)46.

Common threats to the validity of meta-analytic research that might have a profound influence on the overall effect are: (1) the methodological and statistical quality of the included studies, which is a crucial factor for the overall quality of a meta-analysis47; (2) the meta-analytic post hoc power of included trials (i.e., the probability that a study can detect the observed overall effect of a meta-analysis), which is critical for the validity of meta-analytic hypothesis testing46,48,49; (3) the risk of sponsorship bias (i.e., the distortion of study effects by financial interests), which is important for a precise and unbiased overall effect estimate50,51; as well as (4) the multiplicity of analyses (i.e., conducting numerous analyses) and (5) the flexibility in analyses (i.e., adjusting analytical methods based on results), which both increase the risk of finding significant results by chance52,53.

Five of the ten prior meta-analyses collected data with the Risk-of-Bias Tool 154, and seven presented descriptive data on study quality, but only two evaluated its impact on survival by excluding low-quality studies in a sensitivity analysis (see Table 1). Moreover, none of the meta-analyses reviewed here investigated (meta-analytic) post hoc power or risk of sponsorship bias. It follows that these risk of bias factors still need to be examined systematically in this field of research.
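The notion of meta-analytic post hoc power can be made concrete with a short Python sketch. It rests on assumptions that are not stated in the text: a two-sided z-test at α = 0.05, 1:1 allocation, and the common approximation that the standard error of ln(HR) is about 2/√d for d observed deaths. The function name is hypothetical, and the procedure used in the cited literature may differ.

```python
from math import log, sqrt
from statistics import NormalDist


def posthoc_power(overall_hr, deaths, alpha=0.05):
    """Approximate power of a single trial to detect the pooled HR.

    Assumption: 1:1 allocation, so SE(ln HR) is roughly 2 / sqrt(deaths).
    """
    z = NormalDist()
    se = 2.0 / sqrt(deaths)
    z_crit = z.inv_cdf(1 - alpha / 2)
    shift = abs(log(overall_hr)) / se
    # Two-sided power: probability of landing in either rejection region
    return z.cdf(shift - z_crit) + z.cdf(-shift - z_crit)


# Hypothetical trials with different numbers of deaths, pooled HR of 0.80
for deaths in (50, 200, 800):
    print(deaths, "deaths -> power", round(posthoc_power(0.80, deaths), 2))
```

Under these assumptions, power depends only on the pooled effect and the number of deaths, which is why small trials can easily miss a small overall effect even if it is real.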

Clinical and statistical heterogeneity

The meta-analytic overall effect is meaningful if the included studies are sufficiently homogeneous, whereas it can be misleading in the face of high clinical (substantive and methodological) or high statistical heterogeneity55,56. In this sense, substantive moderators (e.g., age, sex, cancer type, tumor stage, or hormone receptor status20,57,58) and methodological moderators (e.g., intervention type, nature of control group, type of statistical analysis, or length of follow-up interval57,58) might exert an effect on overall survival time and should therefore be investigated in a thorough moderator analysis.
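Statistical heterogeneity is commonly quantified with Cochran's Q, the between-trial variance τ², I², and a prediction interval. The Python sketch below shows one way to obtain these quantities with a DerSimonian-Laird random-effects model. The estimator choice, the function name, and the input values are assumptions for illustration; the prediction interval uses a normal quantile for simplicity, whereas the usual formula relies on a t-distribution with k − 2 degrees of freedom.

```python
from math import exp, log, sqrt
from statistics import NormalDist


def random_effects_summary(hrs, ses):
    """DerSimonian-Laird pooling of hazard ratios given SEs of the log HRs."""
    y = [log(hr) for hr in hrs]
    k = len(y)
    w = [1 / se ** 2 for se in ses]
    fixed = sum(wi * yi for wi, yi in zip(w, y)) / sum(w)
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, y))  # Cochran's Q
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - (k - 1)) / c)  # between-trial variance
    i2 = 100 * max(0.0, (q - (k - 1)) / q) if q > 0 else 0.0  # I² in percent
    w_re = [1 / (se ** 2 + tau2) for se in ses]
    pooled = sum(wi * yi for wi, yi in zip(w_re, y)) / sum(w_re)
    se_pooled = sqrt(1 / sum(w_re))
    z = NormalDist().inv_cdf(0.975)
    ci = (exp(pooled - z * se_pooled), exp(pooled + z * se_pooled))
    # Simplification: normal quantile instead of t with k - 2 df
    half = z * sqrt(tau2 + se_pooled ** 2)
    pi = (exp(pooled - half), exp(pooled + half))
    return {"hr": exp(pooled), "ci": ci, "pi": pi, "tau2": tau2, "i2": i2}


# Hypothetical trial-level HRs and standard errors of ln(HR)
summary = random_effects_summary([0.60, 0.95, 0.80, 1.10], [0.15, 0.12, 0.20, 0.25])
print({key: summary[key] for key in ("hr", "tau2", "i2")})
```

The confidence interval describes uncertainty about the average effect, whereas the prediction interval describes the range of effects expected in a new, comparable trial. The two coincide only when τ² is zero.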

Note that for a long time the term “psychosocial intervention” was rather vaguely defined, but finally has been described and grouped into six distinct categories by Teo et al.59 with respect to cancer patients: (1) cognitive-behavioral; (2) meaning-enhancing; (3) dignity therapy, life review, and narrative-driven; (4) other counseling; (5) education-only; and (6) musical therapy, writing therapy, and others (see Table 2 for a detailed description of these categories). Examining the previous literature regarding the topic, the impression may arise that the goal of the ongoing debate was to (generally) declare all types of psychosocial interventions as either effective or ineffective in prolonging cancer survival. However, one should consider that specific types of interventions may work well in certain populations, whereas in others they may not21. Therefore, further investigation in this area could prove beneficial.

Possible causes of heterogeneity should be investigated not only carefully but also in a systematic manner that examines all potentially relevant moderators, along with those interactions between moderators that are deemed important and make the most sense60.

In addition, machine-learning and bootstrap-based approaches may help to identify the most relevant moderators in an exploratory fashion, especially when the number of moderators is larger than the number of included studies, thus providing results which then can be matched to (or contrasted against) the results of conventional moderator analysis61.

Clinical heterogeneity was clearly evident in the corpus of primary studies (RCTs), and statistical heterogeneity was identified in eight of the 10 prior meta-analyses (see Table 1). Yet, prior meta-analyses often did not sufficiently investigate sources of heterogeneity. Six meta-analyses investigated categorical moderators in subgroup analyses. However, only two meta-analyses inspected more than two moderators and only two applied statistical tests to explore actual subgroup differences (see Table 1).

In some cases, continuous moderators were transformed into categories by dividing studies into groups with low, middle, and high values of a given characteristic. While this approach may aid interpretability, it can also introduce issues concerning the justification of cutoff values and may increase false-positive rates62,63. Furthermore, none of the prior meta-analyses controlled for possible type-1 errors in the moderator analysis. In sum, this casts doubt on the evidentiary value of some of the reported moderators regarding survival. Consequently, a broad range of possible moderators still needs to be investigated systematically.

Reporting bias across studies

The ten prior meta-analyses did not apply proper (or any) contemporary methods to examine publication bias and p-hacking (see Table 1). Publication bias may result in the distortion of the meta-analytic overall effect, due to a higher likelihood of primary studies being published if they report significant effects64,65, whereas p-hacking denotes the manipulation of data analysis with the aim to produce a significant result66,67.

Publication bias was investigated in five of the 10 prior meta-analyses. Four of them used graphical methods (funnel plots), while only one68 applied statistical tests to search for evidence of publication bias (see Table 1). However, that meta-analysis relied on a method (Egger’s regression test69) for which an enhanced extension (FAT-PET-PEESE70) is now available and on a procedure (Rosenthal’s fail-safe N71) that is no longer considered valid64,65. It was also the only one that tried to adjust the overall effect for publication bias, but again with a method (Trim-and-Fill72) that is no longer deemed appropriate64,66. None of the prior meta-analyses checked for evidence of p-hacking (see Table 1). It follows that the totality of evidence still needs to be tested for the presence of reporting bias with contemporary methods.

Data-analytic decisions

The visualization of study overlap for all meta-analyses published so far (including the present meta-analysis) with the GROOVE Tool73 resulted in a high overlap of evidence (see Fig. 2 for a matrix of evidence of GROOVE), as the overall corrected covered area adjusted by structural zeros was 43% (see Supplementary Fig. A1, A2 for overall results of GROOVE). From these figures, it can be concluded that some meta-analyses are redundant, whereas others are very selective. Hence, it seemed self-evident to examine the complexity of prior meta-analytic decisions and their effects on the outcomes of these meta-analyses.

Fig. 2: Matrix of evidence of GROOVE. The green cells marked with 1 indicate a primary study which was included in the respective meta-analysis, the white cells indicate a primary study which was not included, and the black cells marked with X indicate a structural zero (a study that could not have been included either because it was published after the meta-analysis or because a follow-up publication has been incorporated instead).
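The overlap measure can be illustrated with a small Python sketch. It uses the commonly cited formula for the corrected covered area, CCA = (N − r) / (r·c − r), and subtracts the number of structural zeros X from the denominator for the adjusted version. Here N is the number of marked inclusions, r the number of primary studies (rows), and c the number of meta-analyses (columns). The toy matrix and function name are hypothetical, and the GROOVE tool itself may handle edge cases differently.

```python
def corrected_covered_area(matrix):
    """Return (CCA, CCA adjusted for structural zeros) for an evidence matrix.

    Rows are primary studies, columns are meta-analyses.
    Cell values: 1 = included, 0 = not included, None = structural zero.
    Assumes every primary study is included in at least one meta-analysis.
    """
    r = len(matrix)
    c = len(matrix[0])
    n_included = sum(cell == 1 for row in matrix for cell in row)
    structural_zeros = sum(cell is None for row in matrix for cell in row)
    cca = (n_included - r) / (r * c - r)
    cca_adjusted = (n_included - r) / (r * c - r - structural_zeros)
    return cca, cca_adjusted


# Hypothetical matrix: 4 primary studies, 3 meta-analyses (oldest on the left)
toy_matrix = [
    [1, 1, 1],
    [1, 0, 1],
    [None, 1, 1],     # published after the first meta-analysis
    [None, None, 1],  # published after the first two meta-analyses
]

cca, cca_adjusted = corrected_covered_area(toy_matrix)
print(f"CCA = {cca:.0%}, adjusted CCA = {cca_adjusted:.0%}")
```

Ignoring structural zeros understates overlap, because cells that could never have been filled are counted as missed opportunities for inclusion.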

In general, there are many ways to analyze data (various researcher degrees of freedom), which in some cases may yield broadly similar results, whereas in other cases vastly different ones. Accordingly, the questions of which data should be analyzed (the data universe) and how these data should be analyzed (the model universe) have become more and more relevant in recent years, for primary studies41,74,75 as well as meta-analyses76. Four of the ten prior meta-analyses reported a significant overall effect, whereas six did not (see Table 1). These meta-analyses differed in numerous ways concerning the data that were analyzed (e.g., at times a focus on metastatic or non-metastatic female breast cancer patients) and how these data were analyzed (e.g., survival analysis after 1, 2, 4, 5, 6, or 10 years of follow-up).

The specification curve and multiverse-analysis approach to meta-analysis76,77 can provide insights into the causes of heterogeneity in the results of past meta-analyses and specifically enables evaluation of the generalizability (vs. narrowness) and robustness (vs. fragility) of findings across all different meta-analytic specifications. It does so by: (1) identifying reasonable specifications of (past) meta-analyses concerning which data were meta-analyzed (WHICH factors; data universe) and how these data were meta-analyzed (HOW factors; model universe); (2) calculating the overall effects of all of these specifications and their combinations; (3) presenting the results graphically with descriptive (meta-analytic) specification curve plots (which display the meta-analytic overall effect of each specification, in an ascending order, together with the number of included trials) and histograms with the number of corresponding p values; and (4) applying parametric bootstrap techniques and presenting inferential (meta-analytic) specification curve plots, which allow one to infer whether observed overall effects from all of these specifications deviate from the 95% confidence interval (CI) bands of simulated true overall effects of zero. Therefore, conducting a specification curve and multiverse meta-analysis is deemed necessary to resolve the existing ambiguities regarding this research question.
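The four steps above can be sketched in Python as follows. This is a simplified illustration under stated assumptions, not the procedure of the cited approach: the toy trials, the WHICH and HOW factors, and all function names are hypothetical; specifications with fewer than two trials are skipped; and the null curves are simulated with a parametric bootstrap that assumes a true HR of 1 and no between-trial variance.

```python
import random
from itertools import product
from math import log

# Hypothetical toy trials: (log HR, standard error, cancer type, ITT analysis?)
TRIALS = [
    (log(0.70), 0.20, "breast", True),
    (log(0.95), 0.15, "breast", False),
    (log(0.80), 0.25, "lung", True),
    (log(1.05), 0.30, "mixed", False),
    (log(0.75), 0.18, "mixed", True),
]

# WHICH factors (data universe) and HOW factors (model universe); illustrative
WHICH_CANCER = ["all", "breast", "not_breast"]
WHICH_ANALYSIS = ["all", "itt_only"]
HOW_MODEL = ["fixed", "random"]


def select(trials, cancer, analysis):
    """Apply the WHICH factors and return the matching subset of trials."""
    keep = []
    for trial in trials:
        _, _, ctype, itt = trial
        if cancer == "breast" and ctype != "breast":
            continue
        if cancer == "not_breast" and ctype == "breast":
            continue
        if analysis == "itt_only" and not itt:
            continue
        keep.append(trial)
    return keep


def pool(trials, model):
    """Inverse-variance pooled log HR (fixed effect or DerSimonian-Laird)."""
    y = [t[0] for t in trials]
    v = [t[1] ** 2 for t in trials]
    w = [1 / vi for vi in v]
    fixed = sum(wi * yi for wi, yi in zip(w, y)) / sum(w)
    if model == "fixed":
        return fixed
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, y))
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - (len(y) - 1)) / c)
    w_re = [1 / (vi + tau2) for vi in v]
    return sum(wi * yi for wi, yi in zip(w_re, y)) / sum(w_re)


def specification_curve(trials):
    """Pooled log HRs of all specifications with >= 2 trials, ascending."""
    effects = []
    for cancer, analysis, model in product(WHICH_CANCER, WHICH_ANALYSIS, HOW_MODEL):
        subset = select(trials, cancer, analysis)
        if len(subset) >= 2:
            effects.append(pool(subset, model))
    return sorted(effects)


def null_band(trials, n_sim=1000, seed=1):
    """Rank-wise 95% band of specification curves simulated under HR = 1."""
    rng = random.Random(seed)
    curves = []
    for _ in range(n_sim):
        fake = [(rng.gauss(0.0, se), se, ctype, itt) for _, se, ctype, itt in trials]
        curves.append(specification_curve(fake))
    band = []
    for rank in range(len(curves[0])):
        column = sorted(curve[rank] for curve in curves)
        band.append((column[int(0.025 * n_sim)], column[int(0.975 * n_sim) - 1]))
    return band


observed = specification_curve(TRIALS)
band = null_band(TRIALS)
below = sum(obs < low for obs, (low, _) in zip(observed, band))
print(f"{below} of {len(observed)} specifications fall below the null band")
```

The sorted list corresponds to the descriptive specification curve, and the rank-wise band corresponds to the inferential comparison against simulated overall effects of zero. Plotting and p value histograms are omitted for brevity.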

A tangible effect size for practitioners, patients, and policymakers

Practitioners, patients, and policymakers may have difficulties interpreting effect sizes such as the HR, OR, or RR, which are frequently used in meta-analyses about survival. Interpreting them requires some statistical knowledge of the cutoff values that classify an overall effect ratio as small, medium, or large, and even then the question remains what a small, medium, or large effect actually means. Therefore, we also provide a more tangible effect size, the overall median survival (time) difference78,79. This is a weighted average of the difference in median survival time between the intervention and control group.

Objectives of the present analysis

The purpose of our preregistered systematic review, meta-analysis, and multiverse meta-analysis was to investigate whether psychosocial interventions prolong the survival of cancer patients, addressing all of the above issues. Therefore, we (1) systematically searched for eligible RCTs, calculated HRs where necessary, and estimated an overall effect HR based on the currently available corpus of primary studies; (2) estimated the overall median survival difference between the intervention and control groups to provide a more tangible effect size; (3) applied sensitivity analyses for both effect size measures; (4) investigated risk of bias, study quality, meta-analytic post hoc power, and risk of sponsorship bias within primary studies to assess the validity of included RCTs; (5) inspected clinical and statistical heterogeneity with a systematic approach to moderator analysis that examined all previously explored moderators (identified from included trials, prior meta-analyses, and other relevant literature), while controlling for type-1 errors and also drawing on a machine-learning approach; (6) examined publication bias and p-hacking across primary studies in order to assess the evidential value of the meta-analytic findings; (7) planned to estimate a meta-analytic overall effect adjusted for publication bias (in case the appropriate conditions would be met); (8) explored possible causes for heterogeneity in the results of prior meta-analyses; (9) checked for the existence of true (nonzero) overall effects across reasonable specifications by specification curve and multiverse meta-analysis, and (10) evaluated the certainty of all the gathered evidence.

Consequently, we present a comprehensive analysis of the effects of psychosocial interventions on survival in cancer patients, designed to clarify sources of ambiguity in both primary and prior secondary research findings and contribute to a more coherent scientific understanding.

Methods

Study eligibility criteria

General eligibility criteria of primary studies are listed according to the patient, intervention, comparison, outcome, timing, and study type (PICOTS80) framework (see Table 3 for a list of general eligibility criteria).

Specific inclusion criteria

Studies were required to focus on psychosocial interventions designed to improve the mental, emotional, and social well-being of cancer patients. Eligible interventions comprised structured approaches containing active psychological components, such as psychoeducation, mindfulness-based interventions, cognitive-behavioral therapy (CBT), emotional-expressive therapy, or peer support. These interventions primarily targeted mental health outcomes, including anxiety, depression, and distress. Included trials could involve palliative care, provided that psychosocial interventions constituted a central element of the study and were administered to both the intervention and the control group. General recommendations on lifestyle factors (e.g., nutrition and exercise) were acceptable, but systematic lifestyle interventions were not allowed to be a core component, particularly when they were provided exclusively to the intervention group.

Specific exclusion criteria

Trials were excluded if they focused primarily on palliative care, symptom control (e.g., pain or nausea management), or end-of-life care without a structured psychosocial intervention. Studies that lacked defined psychological components (e.g., general discussions without therapeutic techniques or unstructured emotional support) were also excluded. Research integrating systematic lifestyle interventions (e.g., structured dietary changes or exercise programs) was excluded to ensure that psychosocial effects could be isolated from other factors, especially when administration differed between the intervention and the control groups.

Registration and protocol

This meta-analysis and multiverse meta-analysis was preregistered on October 18, 2020 at the Open Science Framework (https://osf.io/4knzu) after an initial literature search but before any analysis of the current dataset took place (registration number 10.17605/OSF.IO/4KNZU). The article follows the PRISMA 2020 reporting guidelines81. The methodology for our analysis was refined throughout the course of the project as a more suitable approach was identified in line with the guidelines of good scientific practice. Hence, the final form deviates from the preregistration in the following ways:

First, exporting citations from the database CENTRAL is no longer free (since 2019), so instead, Web of Science, Scopus, and Embase were added to the literature search. Second, the database OpenGrey closed in 2020, so we used ProQuest Dissertations & Theses Global as an alternative. Third, effect sizes (HRs) were calculated using the approach of ref. 82, which is a more precise method than that of ref. 83, especially when some information on censoring is available. Fourth, standard forest plots were used instead of rainforest plots to present the results, as the R package Metaviz84 did not allow the presentation of subgroup summary effects calculated with pooled τ² estimates.

Fifth, the R package MetaForest was used to explore moderators instead of Meta-CART85, as it had a higher power to detect effects, offered better protection against overfitting, and captured interactions more effectively. Sixth, the additional FE moderator analysis was dropped, as MetaForest proved to be a far more powerful tool to identify possible moderators in an exploratory manner. Seventh, FAT-PET-PEESE was added to test for publication bias in an exploratory manner. Eighth, the method for adjusting the overall effect was to be chosen as the most appropriate one according to the Meta Showdown Explorer rather than being fixed in advance.

Ethical considerations

Ethics approval was not required, as this study analyzed only previously published trials with existing ethics approval and informed consent, in accordance with the University of Vienna Ethics Committee policy. No individual patient data were collected.

Information sources

The electronic databases Web of Science, Scopus, MEDLINE (via PubMed), PsycINFO (via EBSCO), Embase, and Google Scholar (first 500 results for RCTs, first 100 results for meta-analyses) were searched for eligible RCTs and prior meta-analyses on October 18, 2020, and the searches were regularly updated until October 17, 2025. A similar combination of these databases has been proposed to be the most promising for systematic literature searches86. In addition, the reference lists of all eligible RCTs and prior meta-analyses that dealt with the same topic were hand-searched. Finally, the databases ClinicalTrials.gov and ProQuest Dissertations & Theses Global were searched for gray literature.

Literature search

The main search terms were (psychotherapy OR psychosocial) AND (cancer OR neoplasm) AND (survival OR mortality) AND “randomized controlled trial” (for the search of RCTs) or meta-analysis (for the search of meta-analyses; see Supplementary Tables A1–A3 for full lists of search strings).

Selection process of primary studies

The literature search and study screening were conducted independently by two authors (KDAH, LB), with disagreements resolved through discussion and consensus. At first, all identified articles were imported into a reference management program (EndNote 21), and duplicates were removed. Next, titles and abstracts were screened for eligibility. Finally, full-text versions of seemingly eligible trials were obtained and evaluated for final inclusion.

Data collection process

A data extraction sheet was created that contained all variables of interest. It was pilot-tested on ten randomly selected RCTs and refined accordingly. Data were collected independently by two authors (KDAH, LB) and subsequently checked for consistency. Any disagreements were again resolved by discussion and consensus. Seven of 18 contacted authors of eligible RCTs provided more detailed information about the articles of Guo, Z. et al.87, Julião et al.88, Kirkegaard et al.89, Kissane et al.19,90, Ross et al.91, Spiegel et al.17,20, and Temel et al.92.

Data items

Data extracted from eligible RCTs included (1) general study information: first author, year of publication, sample size, number of deaths, name of interventions, and intervention components (e.g., psychoeducation, cognitive restructuring, and expression of emotions); (2) effect sizes: HR (plus 95% CIs) and median survival time (plus minimum, maximum, first and third quartile of survival time in months); (3) moderator variables: age (years), sex (% male), marital status (% married), cancer type (breast, colorectal, lung, melanoma, other, and mixed samples), tumor stage (stage I–IV, mixed samples), tumor phase (early [stage I & II], late [stage III & IV], mixed samples), (distant) metastasis (metastatic [stage IV], non-metastatic [stage I–III], mixed samples), (baseline) estrogen receptor status positive (%), (baseline) progesterone receptor status positive (%), (baseline) lymph node status positive (%), (baseline) surgery before study entry (%), (baseline) chemotherapy before study entry (%), (baseline) radiotherapy before study entry (%), (baseline) hormone therapy before study entry (%), intervention type (CBT-based, meaning-enhancing, narrative-driven, other counseling, education-only, others), intervention format (individual, group), intervention dose (hours), intervention period (weeks), intervention onset (during hospital stay, after hospital stay), background of intervention provider (medical, psychological, social, mixed teams), (significant) differences between groups in attendance of psychosocial interventions outside of study (yes, no), control group type (active: minimal psychosocial input such as health education, relaxation training, or guidance material; passive: treatment as usual without any structured psychosocial input), length of follow-up (months), analysis type (ITT, not ITT), patients lost to intervention (%), and continent of origin (Africa, Asia, Australia, Europe, North America, South America); and (4) risk of bias assessment: risk of bias (low, some concerns, high), study quality (score), meta-analytic post hoc power (%), funding sources (no funding, government funding only, [also] public charity funding involved, [also] private foundation funding involved, [also] for-profit organization funding involved, for-profit organization funding only), and risk of sponsorship bias (low, moderate, high). Note that some categories could not be analyzed due to an insufficient number of studies.

In addition, intervention components were systematically extracted from each included study based on intervention descriptions in the main article and supplementary materials. Each intervention was coded for the presence of psychological and supportive components reported in the articles. Multiple components could be coded per study, reflecting the multicomponent nature of the interventions. Component frequencies were summarized descriptively by absolute and relative occurrence (in significant and non-significant studies). This analysis was exploratory and descriptive in nature, and did not assess causal effects.

Effect size measures

The proportional hazards assumption in survival analysis posits that the ratio of hazard rates between two groups remains constant over time, which is important for the calculation of the Cox proportional hazards regression model. Both primary studies (see Table 4) and prior meta-analyses (see Table 1) have adopted this assumption. According to established guidelines, the assumption is likely met if curves do not cross and the distance between them remains relatively constant over time93. We assessed its validity by visually inspecting published Kaplan–Meier survival curves from all incorporated RCTs, as individual patient data were unavailable. Our inspection revealed no major violations, and the minor deviations observed in a few cases were not considered sufficient to bias effect size (HR) estimates or the overall conclusions.

Survival in RCTs was measured from trial entry or randomization until death, censoring, or the end of the study. Effect sizes such as HRs (preferably univariate; i.e., without any control for covariates) and median survival time were taken from published articles, communication with the authors, or prior meta-analyses.

When these effect sizes were unavailable, survival probabilities were extracted from published Kaplan–Meier survival curves with GetData Graph Digitizer software 2.26 (ref. 94). Data for the HR calculations were reconstructed by dividing published Kaplan–Meier survival curves into appropriate time intervals (e.g., 1-, 2-, or 3-month segments) and extracting information regarding the number of events (i.e., deaths) from cumulative survival probability as well as the number of censored patients (i.e., dropouts) from tick marks in the survival curves and from information in text, figures, or tables in the articles.

The numbers at risk for each time interval were then calculated using Excel sheets, following a method developed by ref. 82, which makes it possible to incorporate data on censoring while implementing the approach of ref. 83—a widely used method for reconstructing data from Kaplan–Meier survival curves. For transparency and reproducibility, all calculation spreadsheets are publicly accessible (see Supplemental File S1 for HR and death number calculation sheets at https://osf.io/wnxdb/files).
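The logic of reconstructing events from a digitized curve can be illustrated with a minimal Python sketch. It uses a generic actuarial (life-table) approximation and is not the spreadsheet procedure of refs. 82 and 83; the function name, the assumption that censoring is spread evenly within each interval, and the toy numbers are all illustrative.

```python
def events_per_interval(n_start, surv, censored):
    """Estimate deaths per interval from digitized survival probabilities.

    n_start  -- patients at risk at time zero
    surv     -- survival probabilities at the interval boundaries (starts at 1.0)
    censored -- number of patients censored within each interval
    """
    at_risk = float(n_start)
    events = []
    for i, c in enumerate(censored):
        # Assumption of this sketch: censoring is spread evenly over the
        # interval, so half of the censored patients count as at risk.
        effective = at_risk - c / 2.0
        # Conditional probability of dying within the interval.
        q = 1.0 - surv[i + 1] / surv[i]
        d = effective * q
        events.append(d)
        # Patients entering the next interval.
        at_risk = at_risk - d - c
    return events


# Hypothetical curve: 100 patients, two intervals, 10 censored in the second.
deaths = events_per_interval(100, [1.0, 0.9, 0.72], [0, 10])
```

The per-interval events and numbers at risk obtained this way are the quantities that feed into the subsequent HR calculation.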

The HR for each trial in question was then computed from the number of events and the numbers at risk for each time interval with an online HR calculator95. HRs of 0.77, 0.53, and 0.36 represented small, medium, and large effects, respectively (the inverses of HR 1.3, 1.9, and 2.8; ref. 96). HR <1 indicated a survival benefit for the intervention group, HR >1 a survival benefit for the control group, and HR = 1 no effect.
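As a small worked check of these benchmarks, the following Python sketch inverts the harmful-direction thresholds and classifies the direction of an HR. The dictionary keys and the helper name `direction` are illustrative choices, not part of the original analysis.

```python
# Benchmarks for HR > 1 as given in the text; their reciprocals give the
# corresponding thresholds for protective effects (HR < 1).
benchmarks = {"small": 1.3, "medium": 1.9, "large": 2.8}
protective = {label: round(1.0 / hr, 2) for label, hr in benchmarks.items()}


def direction(hr):
    """Return which group an HR favors."""
    if hr < 1.0:
        return "intervention"
    if hr > 1.0:
        return "control"
    return "no effect"
```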

Data extraction and calculation of effect sizes were executed by one author (KDAH) and subsequently checked by another author (UST), who is an active researcher in the fields of quantitative methods and statistics. This independent verification ensured methodological rigor and minimized error risk.

As the analysis is based on reconstructed individual patient data from Kaplan–Meier curves, the estimates are subject to the usual limitations of this approach; however, all steps were performed using validated, peer-reviewed methods to ensure the highest possible accuracy.

Meta-analytic synthesis methods

All eligible RCTs were included in a single synthesis without further grouping. Statistical analysis was carried out using R 4.3.0 and the R package Metafor 4.0.0 (ref. 97). The R code of the meta-analysis and multiverse meta-analysis (see Supplemental File S2 for the data sheet and the R script of the meta-analysis at https://osf.io/wnxdb/files) was written and validated by the first author (KDAH) and subsequently cross-checked by a colleague, Johannes K. Vilsmeier, at our institution. HRs were transformed to a log scale (logHR) for calculations and retransformed for presentation38.
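The log transformation and the recovery of a standard error from a reported 95% CI can be sketched in Python as follows. This is an illustration of the standard conversion, not the authors' R script; the function names are hypothetical, and the example call uses the overall HR and CI reported in this article.

```python
import math
from statistics import NormalDist


def log_hr_and_se(hr, ci_low, ci_high, level=0.95):
    """Convert an HR with its CI to logHR and the SE of logHR."""
    z = NormalDist().inv_cdf(0.5 + level / 2.0)  # about 1.96 for 95%
    log_hr = math.log(hr)
    # The CI is symmetric on the log scale, so its width equals 2 * z * SE.
    se = (math.log(ci_high) - math.log(ci_low)) / (2.0 * z)
    return log_hr, se


def back_transform(log_hr, se, level=0.95):
    """Retransform logHR and SE to an HR with its CI for presentation."""
    z = NormalDist().inv_cdf(0.5 + level / 2.0)
    return (math.exp(log_hr),
            math.exp(log_hr - z * se),
            math.exp(log_hr + z * se))


log_hr, se = log_hr_and_se(0.80, 0.71, 0.90)
hr, lo, hi = back_transform(log_hr, se)
```

Because published CIs are rounded, a round trip through SEs can shift the back-transformed limits in the last decimal place.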

Clinical (substantive and methodological) heterogeneity among primary studies was accounted for with a random-effects (RE) meta-analytic model using the restricted maximum-likelihood (REML) estimator, as it has the most reasonable properties to estimate the overall effect38,98,99.
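For readers unfamiliar with random-effects pooling, a minimal Python sketch follows. It deliberately swaps in the closed-form DerSimonian–Laird estimator of τ² instead of REML, because REML requires iterative fitting; estimates from the two estimators can differ. The function name and the toy data are illustrative.

```python
import math


def random_effects_dl(yi, vi):
    """Random-effects pooling with the DerSimonian-Laird tau^2 estimator.

    yi -- observed effects (e.g., logHRs); vi -- their sampling variances.
    """
    w = [1.0 / v for v in vi]
    sw = sum(w)
    fixed = sum(wi * y for wi, y in zip(w, yi)) / sw
    # Cochran's Q: weighted squared deviations from the fixed-effect mean.
    q = sum(wi * (y - fixed) ** 2 for wi, y in zip(w, yi))
    df = len(yi) - 1
    c = sw - sum(wi ** 2 for wi in w) / sw
    tau2 = max(0.0, (q - df) / c)
    # Random-effects weights add tau^2 to each sampling variance.
    w_re = [1.0 / (v + tau2) for v in vi]
    mu = sum(wi * y for wi, y in zip(w_re, yi)) / sum(w_re)
    se = math.sqrt(1.0 / sum(w_re))
    return {"mu": mu, "se": se, "tau2": tau2, "q": q}


# Hypothetical logHRs with equal sampling variances.
result = random_effects_dl([-0.5, -0.1, 0.2], [0.04, 0.04, 0.04])
```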

Statistical heterogeneity (i.e., variation in true population effects) was analyzed with the Q test. The relative amount of true (between-study) variance to total (observed) variance I², the absolute amount of true variance τ² (log scale), and the true standard deviation τ (log scale) are reported (a simple retransformation on the original scale was not possible with the latter two parameters)100. For the purpose of describing the likely range in which effect sizes of future studies could fall55, we present a 95% prediction interval (PI) estimated according to ref. 101.
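The following Python sketch shows how these heterogeneity summaries relate to one another. It uses the Q-based formula for I² and a prediction interval of the form estimate ± critical value × √(τ² + SE²). Both are common textbook forms and may differ in detail from what Metafor computes under REML or from ref. 101. The critical value 2.042 (t distribution with k − 2 = 30 degrees of freedom) is an assumption of this sketch, and the inputs are the rounded values reported in this article.

```python
import math


def i_squared(q, df):
    """Share of total variance attributed to true heterogeneity, in percent."""
    return max(0.0, (q - df) / q) * 100.0


def prediction_interval(mu, se, tau2, crit):
    """Prediction interval on the HR scale from log-scale inputs."""
    half_width = crit * math.sqrt(tau2 + se ** 2)
    return math.exp(mu - half_width), math.exp(mu + half_width)


i2 = i_squared(59.27, 31)
pi_low, pi_high = prediction_interval(-0.23, 0.06, 0.05, crit=2.042)
```

With rounded inputs, the resulting interval should land close to, but not exactly on, the reported 95% PI.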

For the presentation of the meta-analytic results, three forest plots with studies ordered according to effect size (for an overview), precision (to search for small-study effects), and publication year (to visualize time trends) were produced. The robustness of the overall effect was evaluated with a sensitivity analysis (leave-one-out method). The development of the overall effect over time was assessed using a cumulative meta-analysis102, starting with the effect of the first published study and adding the other effects one at a time in their order of publication.
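Both procedures amount to re-pooling subsets of the data, as the Python sketch below illustrates. For brevity it pools with simple inverse-variance (fixed-effect) weights instead of the RE model used in the analysis; all names and numbers are illustrative.

```python
def pooled(yi, vi):
    """Inverse-variance weighted mean (fixed-effect simplification)."""
    w = [1.0 / v for v in vi]
    return sum(wi * y for wi, y in zip(w, yi)) / sum(w)


def leave_one_out(yi, vi):
    """Pooled effect after omitting each study in turn."""
    return [pooled(yi[:i] + yi[i + 1:], vi[:i] + vi[i + 1:])
            for i in range(len(yi))]


def cumulative(yi, vi, years):
    """Pooled effect after adding studies one at a time by publication year."""
    order = sorted(range(len(yi)), key=lambda i: years[i])
    ys = [yi[i] for i in order]
    vs = [vi[i] for i in order]
    return [pooled(ys[:k], vs[:k]) for k in range(1, len(ys) + 1)]


# Hypothetical logHRs, variances, and publication years.
effects = [-0.4, -0.2, 0.0]
variances = [0.01, 0.01, 0.01]
loo = leave_one_out(effects, variances)
cum = cumulative(effects, variances, [1990, 2005, 2020])
```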

In order to provide a more easily grasped effect size for clinicians and patients, a weighted overall median survival (time) difference between intervention and control groups (in months) was calculated, again using an RE model and REML estimator, with the R package Metamedian 1.0.0 (refs. 78,79). This method estimates study weights with the help of the first as well as third quartiles (if available) and/or the minimum and maximum values of survival time, which may provide good approximations, but not necessarily perfectly accurate weights. Therefore, the robustness of this estimate was also analyzed with sensitivity analysis (leave-one-out method). We emphasize that the weighted median survival difference only provides a rough overall statistic, as it can only be based on trials that actually provide median survival values (those with survival rates <50% in the intervention and control groups) and, hence, this metric was not used for any hypothesis testing.

Risk of bias within studies

Overall risk of bias

The risk-of-bias tool 2 (RoB 2), developed by ref. 103, systematically assesses RCTs (primary studies) for biases in five domains: (1) the randomization process, (2) deviations from intended interventions, (3) missing outcome data, (4) outcome measurement, and (5) selection of reported results. Risk of bias was assessed independently by two authors (KDAH, KS), and differences in opinion were settled by discussion and consensus. Interrater reliability was calculated using Cohen’s Kappa (κ), based on the initial independent ratings prior to consensus, and using interpretation guidelines by ref. 104.
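Cohen's κ corrects the observed agreement between two raters for the agreement expected by chance. A minimal Python sketch, with hypothetical ratings and an illustrative function name:

```python
from collections import Counter


def cohens_kappa(ratings_a, ratings_b):
    """Cohen's kappa for two raters judging the same items."""
    n = len(ratings_a)
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    counts_a, counts_b = Counter(ratings_a), Counter(ratings_b)
    categories = set(ratings_a) | set(ratings_b)
    # Chance agreement: product of the raters' marginal proportions.
    expected = sum(counts_a[c] * counts_b[c] for c in categories) / (n * n)
    return (observed - expected) / (1.0 - expected)


# Hypothetical overall risk-of-bias judgments for four trials.
rater_1 = ["some concerns", "some concerns", "high", "low"]
rater_2 = ["some concerns", "some concerns", "high", "some concerns"]
kappa = cohens_kappa(rater_1, rater_2)
```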

Study quality

Study quality was assessed with reference to the outcome of interest (survival) by a combination of checklists (see Supplementary Table A4 for a list of study quality items) consisting of the Quality Assessment of Controlled Intervention Studies (Items 1–14; ref. 105), the DELPHI list (Item 15; ref. 106), and the CONSORT checklist (Items 16–18; ref. 107). A score of +1 was given to each item if the respective criterion was met, −1 if it was not met, and 0 if unknown (range: +18 to −18 points). Literature that was explicitly referred to in the articles of primary studies to contain further information was also checked for assessing the study quality of each trial (see Supplementary Table A5 for a list of additional literature used). Study quality was evaluated independently by two authors (KDAH and AG), and any differences were resolved by discussion and consensus.

Post hoc study power

To date, there is no approved method to estimate study-specific post hoc power, as every study may represent a slightly different population. Each population effect is required to be known to calculate study-specific post hoc power, but specific population effects cannot be reliably estimated from single studies108,109. However, it can be argued that meta-analytic post hoc power follows a more plausible rationale, as its assessment is not based on study-specific effects, but the assumed common population effect estimated by the meta-analysis.

Hence, we calculated the meta-analytic post hoc power of each RCT using (adjusted) R code from ref. 110, taking into account each sample size (n), each proportion of patients in the intervention group (nint./n), the estimated overall effect HR of our meta-analysis, and the probability of events (deaths/n) within each trial.
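One common way to approximate power from these four inputs is Schoenfeld's formula for the log-rank test, sketched below in Python. Whether the R code from ref. 110 uses exactly this formula is an assumption of this sketch. The example takes the mean sample size and overall HR reported in this article, while the allocation proportion and event probability of 0.5 are hypothetical.

```python
import math
from statistics import NormalDist


def posthoc_power(n, prop_intervention, hr, event_prob, alpha=0.05):
    """Approximate two-sided power to detect a given HR (Schoenfeld)."""
    events = n * event_prob  # expected number of deaths
    z_alpha = NormalDist().inv_cdf(1.0 - alpha / 2.0)
    # Expected z statistic under the assumed HR.
    signal = abs(math.log(hr)) * math.sqrt(
        events * prop_intervention * (1.0 - prop_intervention)
    )
    # The negligible rejection probability in the opposite tail is ignored.
    return NormalDist().cdf(signal - z_alpha)


power = posthoc_power(n=178, prop_intervention=0.5, hr=0.80, event_prob=0.5)
```

Under these assumptions, a trial of average size has low power to detect an effect of the size estimated by the meta-analysis, which is in line with the low average power reported in the Results.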

Sponsorship bias

Studies were grouped according to similar categories proposed by ref. 111, although in a more refined manner: no funding involved (coded 0), government funding only (coded 1), (also) public charity funding involved (coded 2), (also) private foundation funding involved (coded 3), (also) for-profit organization funding involved (coded 4), and for-profit organization funding only (coded 5). The analysis of funding sources compared the subgroup summary effects of all groups with each other (codes 0–5), whereas the analysis of risk of sponsorship bias compared the subgroup summary effects of groups with either low (coded 0–3), moderate (coded 4), or high risk (coded 5) of sponsorship bias.

Moderator analysis

Potential causes of heterogeneity were investigated with a systematic approach to effect-moderator analysis that involved: (1) all moderator variables explored by the ten prior meta-analyses; (2) factors that (significantly) affected survival in the included RCTs; (3) factors identified in additional literature (e.g., on important prognostic factors); and (4) the risk of bias factors study quality, post hoc power, and sponsorship bias (see Supplementary Table A6 for a moderator disclosure table).

A mixed-effects (ME) meta-analytic model was used in the subgroup analysis to identify moderators that may generalize to other populations. It is a standard approach in subgroup analysis to model the variation within subgroups as a random effect and the variation between subgroups as a fixed effect38.

For categorical data, we formed subgroups containing at least two studies (k ≥ 2), calculated summary effects for each subgroup, and subsequently tested whether any of these differed significantly from zero (test for subgroup effects; TfSE). Next, we tested whether subgroup summary effects differed significantly from each other (test for subgroup differences; TfSD). A categorical moderator was identified only when both tests delivered significant results.
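With only two subgroups, the test for subgroup differences reduces to a z test on the difference between the two subgroup summary effects on the log scale. The Python sketch below applies this simplification to the ITT and non-ITT subgroup estimates reported in the Results, recovering the SEs from the published CIs. It is an approximation based on rounded summary values, not a re-run of the mixed-effects model, and the function names are illustrative.

```python
import math
from statistics import NormalDist


def log_effect(hr, ci_low, ci_high):
    """logHR and its SE recovered from an HR with a 95% CI."""
    se = (math.log(ci_high) - math.log(ci_low)) / (2.0 * 1.959964)
    return math.log(hr), se


def subgroup_difference_p(est_a, se_a, est_b, se_b):
    """Two-sided p value for the difference between two subgroup effects."""
    z = (est_a - est_b) / math.sqrt(se_a ** 2 + se_b ** 2)
    return 2.0 * (1.0 - NormalDist().cdf(abs(z)))


itt = log_effect(0.74, 0.65, 0.85)
non_itt = log_effect(0.96, 0.78, 1.18)
p_diff = subgroup_difference_p(*itt, *non_itt)
```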

For continuous data, we tested each predictor (moderator) and the outcome (survival) for a significant correlation (test of moderator; ToM) in individual meta-regression analyses if at least three studies were available (k ≥ 3). Note that results of subgroup analyses (due to lack of statistical power) and meta-regressions (due to risk of overfitting) that include fewer than ten studies (k < 10) should be interpreted with caution93. Additionally, studies should be evenly distributed among subgroups in subgroup analysis.

Residual heterogeneity was assessed with the Q test, and the relative amount of variance is reported by I². A Benjamini–Hochberg procedure112, with a false discovery rate (FDR) of 10%, was applied to all subgroups (p values of TfSD) and meta-regression analyses (p values of ToM) in order to control for the accumulation of type-1 errors from multiple testing.
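The Benjamini–Hochberg step-up rule compares the p value at rank i (sorted in ascending order) with the threshold (i/m) × FDR and declares significant every test up to the largest rank that passes. A minimal Python sketch with an illustrative function name follows; the value m = 29 in the last line is an assumption (25 moderators plus four risk of bias factors).

```python
def benjamini_hochberg(pvals, fdr=0.10):
    """Return a list of booleans marking which tests are declared significant."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    cutoff_rank = 0
    for rank, idx in enumerate(order, start=1):
        # Step-up rule: remember the largest rank whose p value passes.
        if pvals[idx] <= rank / m * fdr:
            cutoff_rank = rank
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= cutoff_rank:
            rejected[idx] = True
    return rejected


# Critical threshold for the smallest p value among 29 tests (assumed m).
first_threshold = 1 / 29 * 0.10
```

Under this assumed m, the rank-1 threshold rounds to the critical value of 0.003 reported in the moderator analysis.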

Exploratory machine-learning-based search for moderators

An exploratory search for potential moderators was performed with a recently developed machine-learning and weighted bootstrap sampling approach called MetaForest61,113, which derives from an adaptation of the random forest algorithm114,115. The method is robust against overfitting, captures linear and non-linear relationships, and can also be used to detect higher-order interactions. Importantly, this procedure allows reliable identification of relevant moderators from a larger set of potential moderators in meta-analyses, even when the number of moderators is larger than the actual number of included studies, due to higher power than conventional moderator analyses61,113. We followed the approach for small-sample meta-analyses as outlined by ref. 113.

First, a data file was created in R containing only the moderators of interest with ≤3 missing values (≤10%) replaced by their mode (for categorical variables) or median values (for continuous variables), applying simple imputation, in order for MetaForest to work properly. Moderators with >3 missing values (>10%) were excluded to avoid bias. We refrained from using multivariate imputation techniques due to the low intercorrelations between many of the moderators, which would have compromised the quality of the imputed values. Second, the optimal number of trees was identified by running a test model with a high tree number (20,000), which allowed us to determine the number of trees at which the model’s out-of-bag (OOB) cumulative mean squared (prediction) error (MSEoob, a measure of predictive accuracy61) converged. Third, a final model with the optimal number of trees (the value at which the test model converged) was run, in order to avoid unnecessary model complexity.
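The first step (simple imputation with an exclusion threshold) can be sketched in Python as follows. The column names and values are hypothetical, and missing entries are represented by `None`.

```python
from statistics import median, mode


def impute_or_drop(columns, max_missing=3):
    """Impute sparse gaps by median/mode; drop moderators with too many gaps."""
    kept = {}
    for name, values in columns.items():
        observed = [v for v in values if v is not None]
        if len(values) - len(observed) > max_missing:
            continue  # too many missing values: exclude this moderator
        numeric = all(isinstance(v, (int, float)) for v in observed)
        # Median for continuous moderators, mode for categorical ones.
        fill = median(observed) if numeric else mode(observed)
        kept[name] = [fill if v is None else v for v in values]
    return kept


# Hypothetical moderator columns for five trials.
data = {
    "dose_hours": [10, None, 30, 50, 20],
    "format": ["group", "group", None, "individual", "group"],
    "er_positive_pct": [None, None, None, None, 60],
}
cleaned = impute_or_drop(data)
```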

Fourth, in order to eliminate irrelevant moderators, a 100-fold recursive preselection algorithm was applied, which drops the moderator with the most negative variable importance from the model before rerunning the analysis until only moderators with positive variable importance (in >10% of replications) remain. Fifth, only the moderators for which the 50th percentile interval (range between the 25th and 75th percentiles) from the variable importance metrics did not include zero were kept in the model. Sixth, optimal tuning parameters (type of weights, number of candidate variables per split, and minimum terminal node size) were determined via machine-learning techniques implemented in the R package caret116, which uses tenfold clustered cross-validation to find the optimal model with the smallest (cross-validation) root mean squared error (R2cv).

Seventh, for the final model with optimal tuning parameters, a convergence plot (which visualizes at what number of trees the cumulative MSEoob stabilized113), a variable importance plot (which shows the relative importance of each relevant moderator in predicting the effect size113), and a partial dependence plot (which displays the relationship between a relevant moderator and the effect size, whilst averaging over all other moderators61) were created for the presentation of results. Additionally, first-order interactions between identified moderators were explored by generating partial dependence plots of important bivariate interactions.

Reporting bias across studies

Reporting bias across trials was investigated with several contemporary methods, as each of these addressed different features of the data and their combination was thus considered more informative than each method alone.

First, a contour-enhanced funnel plot117,118 was visually inspected to search for hints of publication bias (i.e., if studies with high standard errors were selectively missing at one side of the funnel plot).

Second, we tested for the presence of publication bias with p-uniform119, which examines the distribution of significant p values, and with FAT-PET-PEESE (exploratory), which regresses observed effect sizes either on their standard errors (PET) or on their sampling variances (PEESE) using weighted least squares and tests for a significant slope (FAT; test for publication bias).

Third, two methods were planned for adjusting the overall effect estimate: p-uniform* (an advanced version of p-uniform120), which estimates the true overall effect size adjusted for publication bias (if present) by modeling the distribution of both significant and non-significant p values; and PET-PEESE, which models the relationship between effect size and precision (standard errors) by fitting either a linear regression (PET; used when the overall effect is estimated to be zero) or a curvilinear regression (PEESE; used when the overall effect is estimated to be non-zero65,121). An adjustment was to be made only when two conditions were met: (1) publication bias could be clearly identified; and (2) the Meta Showdown Explorer66 attested a higher likelihood of accurately and precisely estimating the adjusted overall effect for one of the two methods compared to the simple RE model, based on pre-computed simulation data under conditions similar to the given dataset.
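The regression idea behind PET and PEESE can be illustrated with a minimal Python sketch. It fits a weighted least-squares line with inverse-variance weights; the intercept is the bias-adjusted effect estimate and the slope is the quantity tested by FAT. The function names and toy data are illustrative, and standard errors and significance tests for the coefficients are omitted.

```python
def wls_line(x, y, w):
    """Weighted least-squares fit of y = intercept + slope * x."""
    sw = sum(w)
    x_bar = sum(wi * xi for wi, xi in zip(w, x)) / sw
    y_bar = sum(wi * yi for wi, yi in zip(w, y)) / sw
    sxx = sum(wi * (xi - x_bar) ** 2 for wi, xi in zip(w, x))
    sxy = sum(wi * (xi - x_bar) * (yi - y_bar) for wi, xi, yi in zip(w, x, y))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def pet(yi, sei):
    """PET: regress effects on their standard errors."""
    weights = [1.0 / s ** 2 for s in sei]
    return wls_line(sei, yi, weights)


def peese(yi, sei):
    """PEESE: regress effects on their sampling variances."""
    weights = [1.0 / s ** 2 for s in sei]
    return wls_line([s ** 2 for s in sei], yi, weights)


# Hypothetical logHRs that grow more negative as precision decreases.
se_values = [0.1, 0.2, 0.4]
log_hrs = [-0.2, -0.3, -0.5]
pet_intercept, pet_slope = pet(log_hrs, se_values)
peese_intercept, peese_slope = peese(log_hrs, se_values)
```

In this toy example the effects lie exactly on a line in the standard error, so PET attributes most of the apparent effect to small-study bias and returns an intercept closer to zero than any observed effect.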

Fourth, p-curve122,123, which, like p-uniform, explores the distribution of significant p values, was used to examine the data for evidence of p-hacking (testing for left-skewness of the p-curve).

Fifth, it was tested whether the studies contained (any) evidential value (testing for right-skewness of the p-curve), for which either the test for half the curve (p values of primary studies <0.025) has to be significant (p < 0.05), or both the test for half the curve (p values of primary studies <0.025) and the test for the full curve (p values of primary studies <0.05) have to yield p < 0.10.

Sixth, it was tested whether the studies' evidential value was inadequately low (testing for flatness of the p-curve; power <33%), for which either the test for the full curve (p values of primary studies <0.05) has to be significant (p < 0.05), or both the test for half the curve (p values of primary studies <0.025) and the binomial test (share of results <0.025) have to yield p < 0.10 (refs. 122,123).

Specification curve and multiverse meta-analysis

The ambiguity in the results of prior meta-analyses was investigated via specification curve and multiverse meta-analysis76.

First, the WHICH (data universe) and HOW factors (model universe) of the prior meta-analyses were identified (see Supplementary Table A7 for a WHICH and HOW factor disclosure table). Some prior meta-analyses focused on specific (sub-)populations, such as (non-)metastatic female breast cancer patients, whereas others had no such restrictions. Some analyses based the decision of the utilized meta-analytic model (FE or RE model) on the presence of statistical heterogeneity (the result of the Q test), whereas others decided to use the RE model on a priori theoretical grounds; the latter is nowadays considered appropriate38. Accordingly, the factors sex of patients (female only vs. all patients), cancer type (breast cancer only vs. all cancer types) and presence of metastasis (metastatic vs. non-metastatic vs. all stages) were defined as WHICH factors, whereas the meta-analytic model (FE vs. RE) was defined as a HOW factor. Note that other theoretically possible categories (e.g., male only or other cancer types) were not included, as no prior meta-analyses had examined them, and available RCTs were also limited in this regard.

Additionally, prior meta-analyses using ORs or RRs calculated multiple overall effects at various follow-up endpoints (at 1, 2, 4, 5, 6, or 10 years), unlike meta-analyses using HRs, which always made use of the full study periods (see Table 1). However, different follow-up endpoints were not used as a WHICH factor, because (1) with six different follow-up endpoints and k = 32 trials, each level would have included only a handful of studies (at best) and (2) this approach would not have adequately reflected what prior meta-analyses (using ORs or RRs) had actually done (i.e., coding multiple effect sizes at various follow-up endpoints for each and every trial, where data were available).

Hence, the follow-up endpoint was coded as a HOW factor for ORs and RRs by calculating multiple overall effects at 1, 2, 4, 5, 6, and 10 years for each study, where data were available, but not for HRs (as the latter always makes use of the full study period available). This entailed that the number of HOW factors differed between ORs, RRs, and HRs, which is why separate descriptive specification curves had to be plotted for ORs, RRs, and HRs, respectively.

Although the full dataset underlying the multiverse meta-analysis included multiple effect sizes from some studies across different follow-up intervals, these were never analyzed together within the same model. Thus, while some dependencies might have existed in the broader context, they did not violate the assumption of independence within any single meta-analysis reported in the multiverse. Each analysis was conducted using standard random-effects models, which are appropriate under these conditions.

The three WHICH factors sex of patients (2 levels), cancer type (2 levels), and presence of metastasis (3 levels) as well as the HOW factor meta-analytical model (2 levels) and the HOW factor follow-up endpoint (6 levels; for OR and RR) resulted in 24 (2 × 2 × 3 × 2) possible specifications for the HR and 144 (2 × 2 × 3 × 2 × 6) possible specifications for the OR and RR. Note that the algorithm of the R code only kept unique specifications with k ≥ 2 studies; this means that the actual number of specifications shown in the resulting plots might be lower than the maximum number possible.
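The specification counts follow directly from crossing the factor levels, as the short Python sketch below shows. The level labels are taken from the text; the variable names are illustrative, and the subsequent filter for unique specifications with k ≥ 2 studies is not reproduced here because it depends on the dataset.

```python
from itertools import product

# WHICH factors (data universe) and their levels.
which_factors = {
    "sex": ["female only", "all patients"],
    "cancer_type": ["breast cancer only", "all cancer types"],
    "metastasis": ["metastatic", "non-metastatic", "all stages"],
}
# HOW factors (model universe).
how_model = ["FE", "RE"]
followup_years = [1, 2, 4, 5, 6, 10]  # applies to ORs and RRs only

hr_specs = list(product(*which_factors.values(), how_model))
or_rr_specs = list(product(*which_factors.values(), how_model, followup_years))
```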

Second, the original code for the specification curve and multiverse meta-analysis76 was adjusted to fit the conditions of the current dataset (see Supplemental File S3 for the data sheets and the R scripts of the multiverse meta-analysis at https://osf.io/wnxdb/files).

Third, descriptive meta-analytic specification curve plots for ORs, RRs, and HRs were created that displayed the observed overall effect sizes, their 95% CIs, and the included number of studies for each specification in ascending order.

Fourth, separate p value histograms were generated for ORs, RRs, and HRs that allowed us to examine whether or not the overall distribution of p values diverged from what would be expected from true zero overall effects (i.e., whether p values were evenly distributed or whether significant p values accumulated beyond the 5% expected by chance).

Fifth, inferential meta-analytic specification curve plots for ORs, RRs, and HRs with overall effects and their 95% CI bands from 1000 simulated specifications with true overall effects of zero were constructed (via bootstrapping) and contrasted against the actually observed overall effects from the descriptive specification curve plots; all based on the corpus of available studies. Regions where the curve of observed overall effects lay outside the 95% CI band of simulated true zero overall effects were interpreted as evidence for the existence of a true (nonzero) overall effect for the respective specifications.
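The logic of the inferential comparison can be illustrated for a single specification with the Python sketch below. It draws study effects from a normal distribution centered on zero with each study's standard error, pools them, and takes the 2.5th and 97.5th percentiles of the simulated overall effects as the null band. The fixed-effect pooling, the standard errors, and the seed are assumptions of this sketch; the actual analysis repeats this across all specifications using the adapted code of ref. 76.

```python
import random


def pooled_fe(yi, sei):
    """Inverse-variance weighted mean of the effects."""
    w = [1.0 / s ** 2 for s in sei]
    return sum(wi * y for wi, y in zip(w, yi)) / sum(w)


def null_band(sei, n_sim=1000, seed=1):
    """95% band of pooled effects simulated under a true effect of zero."""
    rng = random.Random(seed)
    sims = sorted(
        pooled_fe([rng.gauss(0.0, s) for s in sei], sei)
        for _ in range(n_sim)
    )
    lower = sims[int(0.025 * n_sim)]
    upper = sims[int(0.975 * n_sim) - 1]
    return lower, upper


# Hypothetical specification with 16 equally precise studies.
standard_errors = [0.2] * 16
band_low, band_high = null_band(standard_errors)
observed_log_hr = -0.23
outside_band = observed_log_hr < band_low or observed_log_hr > band_high
```

An observed overall effect falling outside the simulated band is what the inferential plot treats as evidence for a nonzero effect in that specification.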

Note that separate data files and separate inferential specification curve plots for follow-up intervals at 1, 2, 4, 5, 6, and 10 years (regarding ORs and RRs) were created, as otherwise the data contained missing values for certain trials and specific follow-up intervals (e.g., not every trial had a 10-year follow-up), which could not be handled otherwise by the multiverse meta-analysis R code.

Certainty in the evidence (GRADE rating)

The certainty of evidence was evaluated using the GRADE framework, which assesses the confidence in the estimated overall effect across five key domains: risk of bias, inconsistency, indirectness, imprecision, and publication bias. Given that the present analysis was based exclusively on RCTs, the starting level of certainty was high, as recommended by the GRADE Working Group124. We then considered each potential reason for downgrading:

I. Risk of bias was evaluated using the RoB 2 tool103 across all five domains: (1) bias due to the process of randomization, (2) deviation from the intended intervention, (3) missingness of the outcome data, (4) measurement of the outcomes, (5) selection of the reported result, and an “overall risk of bias” judgment. Downgrading was considered if a substantial proportion of studies had either “some concerns” or “high risk” ratings.

II. Inconsistency was evaluated based on statistical heterogeneity indicators, particularly I², τ², τ, and the 95% PI. Inconsistency was judged serious if variation in effect estimates could not be fully explained by moderators.

III. Indirectness was considered if the included studies deviated from the population, intervention, comparator, or outcome of interest. No downgrading was planned unless systematic differences were evident.

IV. Imprecision was assessed primarily based on whether the 95% CI of the overall effect HR included the null. A secondary measure, such as the overall median survival (time) difference, was not part of hypothesis testing and was therefore not considered.

V. Reporting bias (such as publication bias and p-hacking) was evaluated using multiple approaches (contour-enhanced funnel plot, p-uniform, FAT-PET-PEESE, and p-curve); downgrading was applied only in case of consistent evidence for a reporting bias.

Additionally, upgrading factors such as (1) a large magnitude of effect, (2) a dose-response relationship, or (3) plausible confounding reducing the effect size were considered.

Reporting summary

Further information on research design is available in the Nature Portfolio Reporting Summary linked to this article.

Results

Study selection

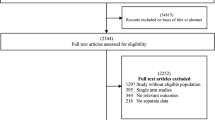

Literature search for RCTs in electronic databases yielded a total of 6848 publications (see Fig. 3 for a PRISMA flow chart of the search for trials). Following the removal of 1427 duplicate entries, a total of 5421 articles were retained for further analysis. Subsequently, titles and abstracts were screened, and 5312 articles were removed because they did not fit the inclusion criteria (three of which were retracted papers). A total of 109 articles were retrieved for full inspection, of which 77 articles were omitted for meeting exclusion criteria (see Supplementary Table A8 and Note 1 for details on excluded articles). Finally, 32 RCTs remained and were included for analysis. Literature search for meta-analyses identified ten prior meta-analyses (see Supplementary Fig. A3 for a PRISMA flow chart of the search for meta-analyses), and search for gray literature resulted in 0 articles (see Supplementary Fig. A4 for a PRISMA flow chart of the search for gray literature).

PRISMA 2020 flow chart displaying the selection process of the systematic literature search for RCTs.

Study characteristics

In total, 32 RCTs involving 5704 patients were included in the meta-analysis, and the HRs of 14 of these trials had not been included in any of the prior meta-analyses reviewed (see Table 4 for a list of study characteristics for all RCTs). Sample sizes varied from 55 to 375 patients (M = 178). Mean ages ranged from 43 to 68 years (M = 56). Cancer types were brain (k = 1), breast (k = 11), colorectal (k = 2), esophageal (k = 1), gastric (k = 1), leukemia (k = 1), liver (k = 1), lung (k = 2), melanoma (k = 2), prostate (k = 1), thyroid (k = 1), and mixed cancer type samples (k = 8). Intervention types were CBT-based (k = 11), meaning-enhancing (k = 5), narrative-driven (k = 8), and other counseling (k = 8). Interventions were delivered either individually (k = 11) or in groups (k = 21), and dosages ranged from 3 to 96 h (M = 33). Control groups were either active (k = 14) or passive (k = 18). Follow-ups ranged from 0.1 to 18 years (M = 6).

Individual studies

HRs of the intervention group (see Supplementary Table A9 for an effect size and p value disclosure table) were available for 16 RCTs, calculated from the inverse HRs of the control group for two RCTs, taken from prior meta-analyses for four RCTs, and calculated from Kaplan–Meier survival curves for ten RCTs. The observed effect sizes ranged from logHR = −1.56 to 0.35 (HR = 0.21 to 1.42) with 72% of them below zero (HRs below 1; see Fig. 4 for a forest plot sorted according to effect size).

HR <1 indicates a survival benefit for the intervention group; HR >1 indicates a survival benefit for the control group; HR = 1 indicates no effect. The 95% CIs in the forest plot may differ in the decimal places from those stated in the articles (see Table 4), as they had to be transformed to SEs for the meta-analysis and were then back-transformed for the forest plot by Metafor. RE model = random-effects model, Q = Q test for heterogeneity, Qp = Q test p value, I² = proportion of true variance to total variance, 95% PI = 95% prediction interval, k = 32 studies.

Meta-analytic synthesis

There was a small overall effect favoring longer survival in the intervention group, with logHR = −0.23, 95% CI [−0.35, −0.11], SE = 0.06, z = −3.68, p < 0.001, which translates into HR = 0.80, 95% CI [0.71, 0.90]. The Q test for the presence of true effect size heterogeneity was significant with Q(31) = 59.27, p = 0.002. The proportion of true variance to total variance was I² = 48%, 95% CI [19, 77]; the variance of true effects (log scale) was τ² = 0.05, 95% CI [0.01, 0.18]; and the standard deviation (log scale) was τ = 0.23, 95% CI [0.11, 0.43]. The 95% PI ranged from HR 0.49 to 1.29. Consequently, although there was evidence of a small overall effect across all studies, effects in future RCTs could (potentially) range from substantially positive to slightly unfavorable; however, none of the included trials reported a significant negative effect on survival. Sensitivity analysis showed that the estimated overall effect was robust, with effect sizes ranging from HR = 0.78, 95% CI [0.69, 0.88] to HR = 0.81, 95% CI [0.72, 0.92] when refs. 125,126 were removed, respectively.

Small-study effects

The pattern in the forest plot sorted by precision did not fully align with a classic small-study effect or publication bias. Although the smallest, least precise studies tended to report the largest effects, a stable trend appeared in the middle section, where studies with good to moderate precision also consistently showed positive effects (see Supplementary Fig. B1 for a forest plot sorted according to precision).

Time trend

A small time trend appeared to be present in the forest plot sorted by publication year. In earlier studies (before 2015), the HRs were relatively mixed, with values both smaller and greater than 1 (see Supplementary Fig. B2 for a forest plot sorted according to publication year). However, from 2015 onward, the HRs consistently fell below 1, suggesting a potential systematic shift in effect sizes over time.

Similarly, cumulative meta-analysis indicated that only from the year 2015 onwards were enough trials available to provide sufficient precision and statistical power for the small overall effect HR to become significant (see Supplementary Fig. B3 for a forest plot of the cumulative meta-analysis).

Overall median survival difference

The overall median survival difference for cancer patients treated with psychosocial interventions showed a trend toward a survival benefit of 3.9 months, 95% CI [−0.7, 8.5], SE = 2.34, z = 1.66, p = 0.10, based on articles that provided sufficient data (k = 16). Sensitivity analysis showed that the overall median survival difference ranged from 2.1 months, 95% CI [−0.6, 4.8] to 4.9 months, 95% CI [0.7, 9.2] when refs. 91,127 were removed, respectively.

Active psychological components

Psychosocial interventions in this meta-analysis were consistently multicomponent and delivered either in a group-based format (k = 21; four trials with significant/17 with non-significant effects), which typically entailed elements of group-based social support, or in an individual-based format (k = 11; 6/5), delivered on a one-to-one basis. With regard to other active psychological components, the most frequently included elements were medical education (k = 16; 6/10), coping skills/techniques (k = 13; 5/8), and expression of emotions (k = 12; 3/9). Components of moderate frequency comprised relaxation techniques (k = 8; 4/4), problem-solving (k = 8; 3/5), (non-specific) psychological support (k = 7; 4/3), enhancement of social support (k = 6; 3/3), cognitive reappraisal or restructuring (k = 6; 2/4), goal setting (k = 6; 2/4), reminiscence of past life events (k = 6; 0/6), and improvement of communication (k = 5; 2/3). Components of lower frequency were mindfulness-based components (k = 4; 2/2), psychoeducation (k = 4; 1/3), coping support (k = 3; 3/0), counseling (k = 3; 2/1), and stress management (k = 3; 1/2). Less frequently reported elements are listed elsewhere (see Table 5 for a full list of psychological components).

Risk of bias within studies

The overall risk of bias ranged from some concerns (k = 30) to high risk (k = 2), primarily due to insufficient information on allocation concealment and the absence of a preregistration protocol (see Supplementary Fig. B4 for a Risk-of-Bias Plot). Interrater reliability between the two raters for the initial risk of bias assessment was high, with Cohen’s κ = 0.94, indicating almost perfect agreement104. For included RCTs, average total score of study quality was moderate with M = 7 points (range 1 to 11; see Supplementary Tables B1–B3 for a list with study quality items), whereas average meta-analytic post hoc power was very low with M = 17% (range 6 to 40%; see Supplementary Table B4 for a meta-analytic post hoc power calculation sheet). The corpus of primary studies contained five unfunded and 21 funded trials, while no information was given in six trials. Risk of sponsorship bias was low for 21 studies and moderate for five, while no information was present for the same six trials (see Supplementary Table B5 for a funding source and risk of sponsorship bias rating sheet).

Moderator analysis

Analysis of 25 moderators (one already excluded beforehand due to no data) and four risk of bias factors (see Supplementary Table C1 for an overview) was conducted (see Supplementary Fig. C1–C29 for specific forest and bubble plots). For the moderator analysis type, studies using ITT analysis showed a significant subgroup effect (HR = 0.74, 95% CI [0.65, 0.85]), whereas studies that did not apply ITT analysis did not (HR = 0.96, 95% CI [0.78, 1.18]; see Supplementary Fig. C12 for a forest plot of the moderator analysis type). However, the p value for this moderator (TfSD, p = 0.038) exceeded the Benjamini–Hochberg critical threshold of p = 0.003 for this rank, and thus did not remain significant after correction for multiple comparisons.

Therefore, this finding should be interpreted with caution. Another moderator, surgery prior to study entry, indicated a trend (ToM p = 0.063): studies with a higher percentage of patients who had undergone surgery before study entry showed greater effects (see Supplementary Fig. C22 for a bubble plot of the moderator surgery prior to study entry).
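The logic of the subgroup comparison and the multiplicity correction can be illustrated with a short Python sketch. It assumes a Wald-type test on the log HR scale, with standard errors recovered from the reported 95% CIs, which may differ in detail from the test for subgroup differences used in the review. The number of tests and the rank passed to the Benjamini–Hochberg formula are assumptions for illustration, and all function names are hypothetical.

```python
from math import log, sqrt
from statistics import NormalDist

STD_NORMAL = NormalDist()
Z_975 = STD_NORMAL.inv_cdf(0.975)  # about 1.96


def log_hr_and_se(hr, ci_low, ci_high):
    """Recover log(HR) and its standard error from a reported 95% CI."""
    return log(hr), (log(ci_high) - log(ci_low)) / (2 * Z_975)


def subgroup_difference_p(group_a, group_b):
    """Two-sided Wald test for the difference between two pooled log HRs."""
    est_a, se_a = log_hr_and_se(*group_a)
    est_b, se_b = log_hr_and_se(*group_b)
    z = (est_b - est_a) / sqrt(se_a**2 + se_b**2)
    return 2 * (1 - STD_NORMAL.cdf(abs(z)))


def bh_critical_value(rank, n_tests, q=0.05):
    """Benjamini-Hochberg critical value for the p value at a given rank."""
    return rank / n_tests * q


# Subgroup estimates as reported in the text: ITT vs. non-ITT trials.
p_itt = subgroup_difference_p((0.74, 0.65, 0.85), (0.96, 0.78, 1.18))

# ASSUMPTION: 29 tests and rank 2 are illustrative, not taken from the review.
threshold = bh_critical_value(rank=2, n_tests=29)
survives_correction = p_itt <= threshold
print(f"p = {p_itt:.3f}, BH threshold = {threshold:.4f}, survives = {survives_correction}")
```

Under these assumptions the uncorrected p value falls below 0.05 but well above the rank-specific threshold, which is the pattern described above. A full Benjamini–Hochberg procedure would compare every ranked p value with its own threshold rather than a single one.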

Identifying relevant moderators via machine learning

The machine-learning-based moderator search using MetaForest included 15 moderators and two risk of bias factors (each with ≤3 missing values), whereas 11 moderators and two other risk of bias factors (with >3 missing values) were excluded (see Supplementary Table C2A, B for a list of included and excluded moderators). The final model was run with 5000 regression trees, showed a cumulative MSEoob of ~0.22, and converged at ~2000 trees (see Supplementary Fig. D1 for a convergence plot of the final model). The optimal tuning parameters were weights = uniform, candidate variables per split = 2, and minimum terminal node size = 3.

The model yielded a cross-validation prediction error of MSEoob = 0.19, indicating moderate error between the model’s predictions and the actual values. It had a positive but small predictive value in unseen data, with R²oob = 0.12, suggesting that only 12% of the variance in new data was explained. This weak predictive performance was expected given the high number of moderators and the limited number of studies.

In contrast, it achieved moderate predictive performance using cross-validation with R²cv = 0.28, suggesting that the model explained 28% of the variance within the resampling procedure. This suggests the model performed better in internal validation than on genuinely new data. The gap between R²oob and R²cv indicates mild overfitting to the training data.

Identified moderators sorted according to variable importance (VI) were analysis type (VI ≈ 0.038), tumor phase (VI ≈ 0.029), and study quality (VI ≈ 0.025; see Supplementary Fig. D2 for a variable importance plot). Note that clear cutoff values for MetaForest analysis do not exist, but a general rule of thumb in random forest analyses considers VI values of 0.01–0.05 as low, 0.05–0.1 as moderate, and >0.1 as high (ref. 128).

Partial dependence plots, displaying the relationships of each identified moderator with the effect size while averaging over the other moderators, were generated in combination with the raw data and the 95% percentile intervals of the predictions of individual trees from the model (see Supplementary Fig. D3 for partial dependence plots of the identified moderators). The plots for analysis type and study quality indicated slight tendencies that studies applying an intention-to-treat (ITT) analysis or achieving a higher quality score reported greater life-prolonging effects. Partial dependence plots displaying bivariate relationships between the identified moderators did not indicate any interactions (see Supplementary Fig. D4 for partial dependence plots of bivariate interactions).

Reporting bias across studies

Publication bias

There was some visual indication of publication bias in the contour-enhanced funnel plot (see Fig. 5 for a contour-enhanced funnel plot), as trials with larger standard errors (usually those with small sample sizes) seemed to be missing in the lower right area of the plot (i.e., effect sizes in the direction opposite to the favored outcome). However, the p-uniform test for publication bias (based on k = 10 significant effect sizes) was not significant (p = 0.266). Similarly, the FAT-PET-PEESE funnel plot asymmetry test (exploratory; based on all k = 32 effect sizes) did not confirm publication bias but only showed a trend (p = 0.091).

Fig. 5. HR <1 indicates a survival benefit for the intervention group; HR >1 indicates a survival benefit for the control group; HR = 1 indicates no effect. The reference line was drawn at HR = 1 (no effect) with significance contours at p < 0.10, 0.05, 0.01, k = 32 studies.

Correcting for publication bias

The Meta Showdown Explorer was tuned to the conditions of the current dataset: severity of publication bias = none (as indicated by p-uniform and FAT-PET-PEESE; the option small was not available, only none, medium, and high), heterogeneity τ = 0.2, number of included studies k = 30, true effect size SMD = 0.2 (a small effect), questionable research practices = none (as indicated by p-curve).

The outcome suggested that the (naive) RE meta-analysis would outperform p-uniform and FAT-PET-PEESE in estimating the overall effect with a lower false-positive rate (type-1 error probability) and a higher true-positive rate (indicative of statistical power) than both methods (see Supplementary Fig. E1, E2 for a Meta Showdown Explorer Plot of the false- and true-positive rate).

Regarding the precision in estimating a true zero effect (δ = 0) and a small true (nonzero) effect (δ = 0.2), the naive (RE) meta-analysis would again outperform p-uniform and be just as precise as FAT-PET-PEESE (see Supplementary Figs. E3, E4 for a Meta Showdown Explorer Plot of correctly estimating a zero and small overall effect).

For the sake of completeness, the results of FAT-PET-PEESE are reported. PET estimated an overall effect of 1.02, 95% CI [0.78, 1.35], p = 0.871, while PEESE estimated an overall effect of 0.89, 95% CI [0.77, 1.02], p = 0.096, corrected for an undetectable (and potentially distorted) reporting bias (see Supplementary Fig. E5 for a FAT-PET-PEESE funnel plot).
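The two regressions behind these estimates can be sketched as follows. This is a minimal illustration, assuming the common formulation in which log HRs are regressed on their standard errors (PET) or on their sampling variances (PEESE) with inverse-variance weights, and the intercept is read as the bias-adjusted effect. The data are synthetic and the function names are illustrative. The sketch omits standard errors, CIs, and the conditional choice between PET and PEESE, so it is not the review’s analysis code.

```python
import math


def wls_line(y, x, w):
    """Weighted least-squares fit of y = b0 + b1 * x; returns (b0, b1)."""
    sw = sum(w)
    x_bar = sum(wi * xi for wi, xi in zip(w, x)) / sw
    y_bar = sum(wi * yi for wi, yi in zip(w, y)) / sw
    sxx = sum(wi * (xi - x_bar) ** 2 for wi, xi in zip(w, x))
    sxy = sum(wi * (xi - x_bar) * (yi - y_bar) for wi, xi, yi in zip(w, x, y))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def pet_peese(log_hr, se):
    """Intercepts of the PET and PEESE regressions, back-transformed to HRs."""
    weights = [1 / s**2 for s in se]  # inverse-variance weights
    pet_b0, pet_b1 = wls_line(log_hr, se, weights)  # predictor: SE
    peese_b0, peese_b1 = wls_line(log_hr, [s**2 for s in se], weights)  # predictor: SE^2
    return {
        "pet_hr": math.exp(pet_b0),
        "pet_slope": pet_b1,
        "peese_hr": math.exp(peese_b0),
        "peese_slope": peese_b1,
    }


# Synthetic example: smaller trials (larger SE) report stronger benefits.
se = [0.10, 0.15, 0.20, 0.30, 0.40]
log_hr = [-0.10 - 0.50 * s for s in se]
result = pet_peese(log_hr, se)
print({name: round(value, 3) for name, value in result.items()})
```

In both models the intercept estimates the effect of a hypothetical trial with a standard error of zero, which is why it is interpreted as an estimate adjusted for small-study effects.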

p-Hacking and tests of evidentiality

The p-curve analysis (based on k = 10 significant p values) showed no visual indication of p-hacking (see Fig. 6 for the p-curve of all 10 significant p values). Two separate tests were conducted as part of the p-curve analysis.

Fig. 6. Right-skewness (indicating evidential value of the corpus of primary studies) was present, but the observed p-curve (blue line) proceeded close to the reference line of low power of 33% (green dashed line). Left-skewness (indicating p-hacking) was not evident, k = 10 significant p values.

First, the test for evidential value, which examines whether the p-curve is significantly right-skewed (indicating the presence of true effects rather than selective reporting or randomness), was not significant for the full curve (p values <0.05; p = 0.458), but significant for the half curve (p values <0.025; p = 0.029). According to ref. 123, evidential value is supported if either the full or the half-curve test is significant, indicating that the primary studies showed evidential value.

Second, the test for inadequate evidential value due to low statistical power (i.e., <33% power) was significant for the full curve (p = 0.043), but not for the half curve (p = 0.720) or the binomial test (proportion of p values < 0.025; p = 0.935). Following ref. 123, evidence is considered inadequately powered if either the full-curve test is p < 0.05, or if both the half-curve and binomial tests are p < 0.10, suggesting that the primary studies may be underpowered.
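The right-skewness test described above can be sketched in a few lines of Python. This is a simplified illustration, assuming that under a true null effect the significant p values are uniformly distributed below the significance threshold, so that each p value divided by the threshold can be converted to a z score and combined with Stouffer’s method. The decision rule follows the wording of the text. The p values are hypothetical, the function names are illustrative, and the test against 33% power is omitted because it requires noncentral distributions.

```python
from math import sqrt
from statistics import NormalDist

STD_NORMAL = NormalDist()


def right_skew_test(p_values, alpha=0.05):
    """Stouffer-type test of whether p values below alpha cluster near zero."""
    significant = [p for p in p_values if 0 < p < alpha]
    if not significant:
        raise ValueError("no p values below alpha")
    # Under the null, p / alpha is uniform on (0, 1) for significant results.
    z_scores = [STD_NORMAL.inv_cdf(p / alpha) for p in significant]
    z = sum(z_scores) / sqrt(len(significant))
    return z, STD_NORMAL.cdf(z)  # a small p value indicates right skew


def evidential_value(p_values):
    """Full-curve and half-curve tests, with the rule as stated in the text."""
    _, p_full = right_skew_test(p_values, alpha=0.05)
    half = [p for p in p_values if 0 < p < 0.025]
    p_half = right_skew_test(half, alpha=0.025)[1] if half else 1.0
    return {
        "p_full": p_full,
        "p_half": p_half,
        "evidential": p_full < 0.05 or p_half < 0.05,
    }


# Hypothetical significant p values (illustration only).
example = [0.001, 0.004, 0.012, 0.020, 0.031, 0.044]
print(evidential_value(example))
```

A curve can pass the half-curve test while failing the full-curve test when several p values lie just below 0.05, since those values pull the full curve toward flatness.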

Taken together, the studies analyzed thus appeared to provide some evidential value, but may suffer from low statistical power, meaning that exact replications could have a low probability of detecting similar effects. Importantly, the number of significant p values included in this analysis was at the minimum recommended for p-curve (k = 10), and the method is known to sometimes overestimate evidential value under such conditions (ref. 129), limiting the robustness and generalizability of the inferences. Consequently, these results should be interpreted with caution and considered preliminary rather than definitive.

Similarly, the power of the significant p values from the individual HRs to detect an overall effect, corrected for selective reporting (for possible publication bias and p-hacking; ref. 130), was very low at 5%, 90% CI = [5%, 31%]. Again, because this estimate is based on the minimum recommended number of significant p values (k = 10), it may not precisely reflect the true evidential power. Therefore, p-curve might provide a distorted power estimate (ref. 66).

Specification curve and multiverse meta-analysis

Descriptive specification curve plots (see Figs. 7–9 for descriptive specification curve plots separated into HRs, ORs, and RRs) showed that those meta-analytic specifications restricting the corpus of primary studies (k = 32) to a subset of trials (for logHRs: k < 12; for logORs and logRRs: k < 18)—for example, setting a focus on metastatic or non-metastatic female breast cancer patients (for all effect sizes) or on follow-up intervals longer than two years (only for logORs and logRRs)—frequently resulted in non-significant overall effects (with the 10-year follow-up being the exception).

Fig. 7. LogHR <0 indicates a survival benefit of the intervention group; logHR >0 indicates a survival benefit of the control group; logHR = 0 indicates no effect. Interpret the curve vertically. The top panel shows each specification’s overall effect (horizontal line) and 95% CI (vertical line) relative to the dashed no-effect line (logHR = 0). The middle panel shows the number of trials per specification. The bottom panel depicts the combination of “How” and “Which” factors for each specification. Colors indicate sample size, from larger (violet, blue, green) to smaller (yellow, orange, red). FE fixed effects, DL DerSimonian and Laird method, k = 32 studies.

Fig. 8. LogOR <0 indicates a survival benefit of the intervention group; logOR >0 indicates a survival benefit of the control group; logOR = 0 indicates no effect. Interpret the curve vertically. The top panel shows each specification’s overall effect (horizontal line) and 95% CI (vertical line) relative to the dashed no-effect line (logOR = 0). The middle panel shows the number of trials per specification. The bottom panel depicts the combination of “How” and “Which” factors for each specification. Colors indicate sample size, from larger (violet, blue, green) to smaller (yellow, orange, red). The “How” factor Follow-up was added here, as logORs in the prior analyses were calculated after 1-, 2-, 4-, 5-, 6-, and 10-year intervals for every trial that provided data (in contrast to logHRs). FE fixed effects, DL DerSimonian and Laird method, k = 32 studies.

Fig. 9. LogRR <0 indicates a survival benefit of the intervention group; logRR >0 indicates a survival benefit of the control group; logRR = 0 indicates no effect. Interpret the curve vertically. The top panel shows each specification’s overall effect (horizontal line) and 95% CI (vertical line) relative to the dashed no-effect line (logRR = 0). The middle panel shows the number of trials per specification. The bottom panel depicts the combination of “How” and “Which” factors for each specification. Colors indicate sample size, from larger (violet, blue, green) to smaller (yellow, orange, red). The “How” factor Follow-up was added here, as logRRs in the prior analyses were calculated after 1-, 2-, 4-, 5-, 6-, and 10-year intervals for every trial that provided data (in contrast to logHRs). FE fixed effects, DL DerSimonian and Laird method, k = 32 studies.

Moreover, the proportion of significant p values among the observed overall effects was ~42% (logHRs), ~13% (logORs), and ~22% (logRRs; see Fig. 10 for histograms of p value distributions), which is much higher than would be expected if a general zero overall effect held across all specifications (in that case, the expected proportion of significant p values would be ~5% for each effect size). This finding suggests the existence of a general true (nonzero) overall effect of psychosocial interventions on the survival time of cancer patients across the specifications examined in prior meta-analyses and the present review, based on the currently available corpus of primary studies.

Fig. 10. The proportion of significant p values (p < 0.05) is indicated by the red columns, while the proportion of non-significant p values (p > 0.05) is marked with the blue columns in each histogram, k = 32 studies.

Notably, significant p values were much more likely for logHRs than for logORs and logRRs. For logHRs, effect sizes of the corpus of primary studies were pooled over all follow-ups, so each specification incorporated a relatively high number of trials, leading to higher precision and therefore a higher likelihood of significant overall effects. For logORs and logRRs, in contrast, effect sizes were pooled from subsets of the corpus of primary studies at 1-, 2-, 4-, 5-, 6-, or 10-year follow-up intervals, so the number of trials decreased gradually with longer follow-up intervals. Note that almost all studies had at least a 1-year follow-up, but only half of all studies had a 5-year follow-up period.

Remarkably, the inferential (bootstrap) specification curve plot for logHRs (see Fig. 11 for an inferential specification curve plot of logHRs) confirmed that almost all specifications of observed overall effects deviated clearly (and significantly) from the 95% CI band of 1000 simulated overall true zero effects, which can be viewed as evidence for the existence of a general true (nonzero) overall effect across specifications for logHRs.
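The idea behind the inferential comparison can be sketched in Python as follows. This is a simplified illustration under stated assumptions, not the procedure used in the review: study effects are drawn from a normal distribution centered on zero with each study’s standard error, every specification is pooled with a fixed-effect inverse-variance model, and the 95% band is taken rank by rank across the sorted simulated curves. All data, specifications, and function names are hypothetical.

```python
import random


def pooled_fixed(effects, ses):
    """Fixed-effect (inverse-variance) pooled estimate."""
    weights = [1 / s**2 for s in ses]
    return sum(w * e for w, e in zip(weights, effects)) / sum(weights)


def specification_curve(effects, ses, specifications):
    """Pooled effect for each specification (a subset of studies), sorted."""
    return sorted(
        pooled_fixed([effects[i] for i in spec], [ses[i] for i in spec])
        for spec in specifications
    )


def null_band(ses, specifications, n_sim=1000, seed=1):
    """Rank-wise 95% band of specification curves under a true zero effect."""
    rng = random.Random(seed)
    curves = []
    for _ in range(n_sim):
        simulated = [rng.gauss(0.0, s) for s in ses]  # true effect fixed at 0
        curves.append(specification_curve(simulated, ses, specifications))
    lo_idx, hi_idx = round(0.025 * (n_sim - 1)), round(0.975 * (n_sim - 1))
    lower, upper = [], []
    for rank in range(len(specifications)):
        column = sorted(curve[rank] for curve in curves)
        lower.append(column[lo_idx])
        upper.append(column[hi_idx])
    return lower, upper


# Hypothetical log HRs and standard errors for six trials.
log_hrs = [-0.30, -0.10, -0.25, -0.40, 0.05, -0.35]
ses = [0.20, 0.25, 0.30, 0.35, 0.40, 0.50]
# Each specification is a tuple of study indices (a "Which" choice).
specifications = [(0, 1, 2, 3, 4, 5), (0, 1, 2), (3, 4, 5), (0, 2, 4), (1, 3, 5)]

observed = specification_curve(log_hrs, ses, specifications)
lower, upper = null_band(ses, specifications)
outside = [not (lo <= obs <= up) for obs, lo, up in zip(observed, lower, upper)]
print(f"{sum(outside)} of {len(outside)} specifications fall outside the null band")
```

Observed specifications that fall outside the band are those that would be unlikely if no specification reflected a true effect, which is the sense in which the comparison is inferential rather than descriptive.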