Abstract

AI-driven machine vision faces critical energy and latency challenges from exponential growth in visual data. This review proposes bio-inspired energy-efficient in-sensor computing utilizing emerging optoelectronic memristors, examining neural network architectures (fully connected/convolutional, recurrent, and spiking neural networks) for static, motion, and event-driven processing that directly emulates biological visual pathways. Critical integration challenges and strategic roadmaps are systematically analysed to achieve cortex-level energy efficiency in next-generation vision systems.

Similar content being viewed by others

Introduction

AI-driven machine vision has rapidly developed, enabling artificial systems to “see” and extract valuable information from images and videos1,2,3,4,5. In sectors like autonomous vehicles, these systems perform complex vision tasks6, such as driving on freeways, avoiding collisions, following lanes, and automatically overtaking slower vehicles. Current implementations, however, rely heavily on the deployment of numerous sensors, with image sensors (using conventional CMOS imagers or CCD cameras) for capturing visual data, infrared sensors for perimeter detection, and other sensors like radar or ultrasonic sensors for precise distance detection. Projections indicate that by 2032, the data generated from such sensors may escalate to approximately 1027 bytes per year, with visual systems contributing 80% of this volume7. And this surge of unprocessed data often contains redundancies, due to frame-based imaging modes, which poses critical challenges for data-intensive and delay-sensitive applications (e.g., robotic navigation)8. Furthermore, in current von Neumann architecture-based systems (e.g., software neural networks or CMOS synaptic circuits), the analog-to-digital conversion of massive visual data streams requires separate memory units for storage and processing. This physical separation of sensing, computing, and memory units results in energy inefficiency and communication latency9.

The development of energy-efficient and intelligent alternative technologies has become critical for overcoming the “von Neumann bottleneck”. Biological visual systems demonstrate superior efficiency (femtojoules per operation, fJ/operation) through four mechanisms: (1) hierarchical biostructure, which compresses data through edge detection and dynamic range adjustment; (2) task-specific parallel pathways for motion, color, and intensity processing; (3) event-driven encoding, and (4) retinal preprocessing that filters 80% of redundant data prior to neural transmission10,11. These principles have inspired “in-sensor computing”12,13,14,15, which shifts partial computational from centralized processors to sensory terminals16. Optoelectronic memristors (OEMs) exemplify this paradigm17, integrating optical sensing and computation to directly compress multidimensional data (intensity, wavelength, polarization) into machine-interpretable patterns18,19,20,21. Volatile OEMs further enable transient storage for spatiotemporal processing. To extract higher-dimensional optical features (spectral, polarization, phase), advanced strategies employ metasurfaces or specialized optoelectronic devices. In the spectral dimension, materials with varying transmission coefficients act as wavelength-selective bandpass filters to extract color features. For the polarization dimension, highly anisotropic materials or chiral plasmonic metasurfaces enhance linear/circular polarization detection, even reconstructing full Stokes parameters in extreme environments (e.g., underwater)22,23,24. In the phase dimension, metasurfaces with pre-engineered phase profiles act as modulators to extract the specific phase information25.

In this review, we will begin by exploring the pathways of the human visual system and their relevance to bio-inspired neural networks designed in in-sensor computing. The basic device structures of OEMs, including two-terminal and three-terminal configurations, will also be discussed, along with their operational mechanisms. Building on these memristors, we will provide a detailed summary of recent developments in bio-inspired neural architectures, focusing on three primary areas: (1) in-sensor fully connected networks (FCNs)/convolutional neural networks (CNNs) for static image recognition, (2) in-sensor recurrent neural networks (RNNs) for motion recognition, and (3) in-sensor spiking neural networks (SNNs) for event-based dynamic information. Furthermore, we will consider potential deployment strategies for these systems using advanced manufacturing technologies, and outline a strategic roadmap that highlights the key challenges facing the development of in-sensor computing.

Biological inspiration for in-sensor computing

The intricate interplay between the retina and the visual cortex forms the basis of the brain’s hierarchical, compact, and adaptable learning processes. Studies show that visual information is transmitted and processed through complex hierarchical pathways in the brain26,27,28,29. Visual signals are initially transduced by photoreceptors in the retina, where rods specialize in low-light vision and cones facilitate color and high-resolution visual tasks. These signals are conveyed via the optic nerve to the lateral geniculate nucleus (LGN), which acts as a crucial relay and integrative hub for visual information before it reaches the primary visual cortex (V1). In V1, spatial and temporal features such as edge orientation, contrast, and motion are further analyzed. From V1, the visual stream diverges into specialized regions, including V2 (secondary visual cortex), V3 (tertiary visual cortex, critical for form-motion integration), MT (middle temporal area), V4, and IT (inferotemporal cortex)30.

Different neural pathways within this system specialize in different aspects of visual processing (Fig. 1). The ventral stream (“what pathway”), involving V1, V2, V4, and IT, excels in object recognition by analyzing shape, color, and texture. In contrast, the dorsal stream (“how pathway”) processes motion and spatial relationships through V1, V2, MT, and the parietal cortex, enabling real-time navigation and action planning31. This dual-pathway mechanism serves as a biological blueprint for multi-task decision-making. For example, in robotic vision, ventral-like algorithms identify object features (e.g., shape), while dorsal-like models analyze the spatial position and posture, improving operational precision. Similarly, autonomous vehicles integrate ventral-like static recognition (e.g., traffic lights) with dorsal-like dynamic prediction (e.g., pedestrian trajectories) using hierarchical algorithms that fuse low-level edge detection and high-level semantic mapping. Inspired by these biological systems, researchers are developing hardware that mimics these neural hierarchies, spanning low-level sensory processing (e.g., object recognition, motion detection) to high-level cognitive integration (e.g., decision-making, trajectory prediction). This hierarchical design enables simultaneous static and dynamic visual processing across temporal sequences and multiple targets, achieving biological-level efficiency in complex environments30,32,33.

The dorsal pathway (in red) specializes in motion recognition and utilizes volatile devices to simulate and track object location and movement. In contrast, the ventral pathway (in blue) focuses on object recognition, employing non-volatile devices to identify and classify static features.

Optoelectronic device architectures for in-sensor computing

The emulation of biological vision systems in hardware requires physical models at two different levels. In this section, we will first discuss individual components such as artificial synapses and neurons.

Photoreceptors

The human retina is a complex biological image detector that integrates various specialized cell types, including photoreceptors (rods and cones), bipolar cells, amacrine cells, and ganglion cells. Photoreceptors serve as the primary phototransducers, converting light signals into neuro-electric pulses through biochemical reactions34. These light-sensitive cells form two functional systems: the cone-mediated photopic system for daylight vision and the rod-dominated scotopic system for low-light detection. The photopic system features approximately 6 million cone cells densely packed in the fovea, enabling color discrimination and high-resolution vision under bright illumination. Three spectral subtypes S/M/L cones collectively allow for the recognition of over 1 million color variations through trichromatic encoding. In contrast, the scotopic system employs 90 to 120 million rod cells distributed peripherally, which are optimized for detecting faint light and motion but lacking color sensitivity. Retinal signal processing involves a hierarchical refinement of visual information. In this process, bipolar cells integrate information from multiple photoreceptors and relay the outputs to ganglion cells, while amacrine cells modulate spatial and temporal features through lateral connections. This neural network extracts essential visual parameters, such as luminance, contrast, edge orientation, and motion direction. Once these parameters are processed, ganglion cells encode the information into action potentials. These compressed neural signals are then transmitted via the optic nerve to the visual cortex for higher-order interpretation.

To ensure photoreceptors to adapt to varying lighting conditions, the retina utilizes two types of biological visual adaptation: photopic adaptation and scotopic adaptation. When transitioning from bright to dark environments, the retina initially struggles to detect objects because cone cells require strong light to function. However, through the scotopic adaptation process, the rod cells gradually become activated and increase light sensitivity to low light, allowing for the recognition of dark objects. Conversely, in bright light conditions, the retina may be temporarily overwhelmed by bright objects, making it difficult to discern details. Through photopic adaptation, the visual system can gradually adjust by suppressing ambient light noise and enhancing the sensitivity of cone cells, enabling the recognition of bright objects over time. This dual adaptation optimizes dynamic range, allowing vision from starlight to daylight35,36. Recently, Chai et al. developed MoS2 phototransistors with an environment-adaptive image-sensing capability. Many charge trap sites in the channel/insulator interface region were intentionally introduced to enable the photopic and scotopic sensing operations. The visual adaptation mode could be activated by applying a constant gate bias to the gate terminal12. However, the major limitation of currently developed adaptive neuromorphic devices is that they cannot automatically switch their adaptive mode according to the ambient lighting environment. In addition to photoreceptor-like devices for adaptive light detection, integrating these photoreceptors with ganglion cells can enhance functionalities like contrast enhancement and various preprocessing tasks.

Optoelectronic neuron

The human retina operates as a distributed computing architecture, where heterogeneous neurons encode visual information through spatiotemporal spike patterns. Mimicking this paradigm, optoelectronic Leaky Integrate-and-Fire (LIF) neurons implement biomimetic spike-based processing, enabling energy-efficient neuromorphic vision systems37,38. The fundamental computation of LIF neurons involves the conversion of input signals into spike patterns, which is widely applied in SNNs. Spike-based processing allows for sparse and efficient information transmission and compression. The conventional LIF model incorporates four key components: threshold-driven firing, “all-or-nothing” property, refractory period, and frequency-dependent tunability. When the membrane potential exceeds a certain threshold, these neurons generate a single electrical signal called a “spike”, with the intensity and duration of the spike reflecting the temporal attributes of the input signal39. In optoelectronic LIF neurons, optical signals can be converted into electronic spikes, and the firing frequency of LIF neurons can be modulated in different optical dimensions, thereby promoting the nervous ability to encode visual information40.

The LIF neuron model can be implemented using a hybrid circuit composed of threshold-switching memristors (TSM), capacitors, and comparators40,41. This model is composed of simple units capable of replicating the spiking behavior of the neurons. A critical component of artificial optoelectronic LIF neurons is a threshold switching memory. The input current charges a parallel capacitance and determines whether a spike is generated by reaching the membrane potential threshold42. The TSM switches from high-resistance state (HRS) to low-resistance state (LRS) when the voltage exceeds the threshold (Vth)43, and reverts to HRS when the voltage drops below the holding voltage (Vhold). The transition generates a discharge spike. The integration of threshold switch (ITO/Ta2O5/Ag) and photosensitive indium–gallium–zinc-oxide (IGZO)-based photoresistor simulates output spike characteristics under light modulation. In a study by Wan et al., LIF neurons responding to optical signals were implemented using ITO/Ta2O5/Ag/IGZO/ITO device42. This device demonstrated the translation of external optical stimuli into spike potentials and achieved low energy consumption of ~400 picowatts per spike. These results reveal the great potential of such devices for constructing an artificial visual system with high energy efficiency and high biological plausibility.

It is important to emphasize that the LIF model replicates only the leaky integrate and fire characteristic of a neuron. Implementing the function of a neuron with a single ion channel requires a similar unit, but to mimic the various ion channels present in the membrane, multiple units must be coupled44. The H−H neuron system can emulate a more complex neuronal behavior. From a circuit theoretic standpoint, the Na and K ion channels of the Hodgkin–Huxley model are mathematically equivalent to two distinct memristors45.

Optoelectronic Synapses

Neurons transmit signals through synapses, which can either enhance or inhibit the signal depending on synaptic weights46. In optoelectronic neuromorphic computing, devices adjust their photoresponsivity and conductance in response to light and electric stimuli, facilitating updates to synaptic weights47,48. Current implementations include two-terminal and three-terminal optoelectronic devices, differing in their weight tuning and read paths.

Two-terminal optoelectronic synapses

Two-terminal devices, particularly the metal-semiconductor-metal (MSM) vertical sandwich structure, are widely used in electrical neuromorphic systems. Two-terminal vertical OEMs utilize the same terminals for weight adjustment and reading, making them suitable for high-density arrays due to their simplicity and compactness. We have categorized five main types of two-terminal devices based on their structures and mechanisms, analyzing their main behavior, pros, and cons in application:

Ferroelectric Control Mechanism: In this mechanism, the photoresponsivity of devices can be manipulated through ferroelectric polarization (Fig. 2a). The polarization alters the barrier height and the built-in electric field, further affecting the device’s photoresponsivity. This control ability allows for the regulation of both positive and negative photoresponsivity. Thus, the ferroelectric device array can perform an in-situ matrix-vector multiplication operation between an input image and a photoresponsivity matrix, enabling object recognition in FCNs. The primary advantage lies in the non-volatility of switched ferroelectric domains combined with rapid switching speeds and low energy consumption, making them suitable for in-sensor computing. For example, a Pt/Pb(Zr0.2Ti0.8)O3 (PZT)/SrRuO3 (SRO) heterostructure can achieve a linear multilevel photoresponse intensity by altering the polarization direction, making it suitable for CNNs49. However, the presence of numerous defects within and at the interfaces of ferroelectric materials challenges reliability, and most ferroelectric materials are difficult to integrate with CMOS technology for large-scale applications, except for hafnium-based ferroelectrics.

Two-terminal devices: Ferroelectric-optoelectronic devices (a) whose photoresponsivity can be modulated through ferroelectric pulse polarization. Interface trapping devices (b) capable of enhancing response current under light exposure51,111. Photo-induced phase-change devices (c) where conductivity can non-volatilely increase with light exposure112. CF optoelectronic devices (d) in which photo-generated carriers can control the charge of oxygen vacancies, thereby non-volatilely modulating their conductivity53,113. Planar two-terminal optoelectronic devices (e) utilizing ion migration mechanisms. Three-terminal devices: FeFETs (f) utilize partial polarization switching within the ferroelectric gate oxide to change conductance. FGTs (g) operate by trapping charge on a floating gate to control the threshold voltage. Conductance tuning of ECRAM (h) is based on the motion of Li+ ions between the electrolyte and channel. CTTs (i) enable precise modulation of electrical characteristics by trapping and releasing photo-generated carriers. Multi-Terminal FETs with a double-gate design (j) for reconfigurable photoresponsivity.

Interface Charge Capture Mechanism: This mechanism involves the capture of photo-generated carriers by the interface barrier and defect vacancies (Fig. 2b). When light is incident on the device, photo-generated carriers are trapped at the interface by the barrier and defects, leading to conductivity modulation. For example, oxide heterojunction structures such as ITO/CeO2-x/AlOy/Al50, ITO/ZnO1-x/AlOy/Al51 can modulate their conductance by altering the interface trap and de-trapping states through light modulation. However, due to the localized effect of the density of states, conductivity modulation tends to saturate. To overcome these limitations, researchers are exploring new materials and device structures to enhance the stability and response speed of the devices. Devices based on interface traps are easy to fabricate and have a wide response wavelength range and fast response rates. However, due to the difficult-to-control distribution of defect states, these devices suffer from poor uniformity and relaxation phenomena, making them unsuitable for nonvolatile storage but ideal for dynamic temporal tasks.

Photo-Induced Phase Change Mechanism (PCM): In this mechanism, the conductivity of the device can be controlled via photo-induced amorphous/crystalline phase changes (Fig. 2c). By utilizing light of different wavelengths, frequencies, and intensities, precise control over device conductivity can be achieved. For example, MoO3 can undergo phase changes under light stimulus, allowing for the modulation of its conductance17. However, this phase transition process typically requires high light energy consumption in practical applications. To reduce energy consumption, researchers are seeking more efficient photo-induced phase change materials and developing new device structures to lower energy consumptions. Despite demonstrating excellent multi-level storage capabilities, practical implementation faces energy efficiency challenges due to high photon energy requirements, particularly in visible spectrum applications.

Conductive Filamentary (CF) Optoelectronic Devices: These devices rely on oxygen vacancies in the conductive filament (Fig. 2d). When light stimulates the device, oxygen vacancies in the filament are ionized, generating more positive vacancies. These vacancies can assist in hopping conduction of electrons, thereby promoting control over the device’s conductivity. This mechanism provides a unique way to control electron transport via light stimulation, offering fresh insights for the development of novel optoelectronic devices. For example, ZnO-based conductive filament states can be modulated by light due to their resistive switching and photosensitive properties52,53. Researchers are investigating ways to optimize the structure and materials of the conductive filament to enhance the performance and stability of the devices. The advantage of CF devices is their compatibility with large-scale CMOS integration, wide wavelength response range, and fast response rates. However, the randomness in filament formation challenges the uniformity and reliability of the devices.

In addition, there are planar two-terminal optoelectronic devices that utilize an ion migration mechanism. Under optoelectronic modulation, ionic migration can dynamically form conductive channels, altering carrier distribution and interface barriers. This enables the device to exhibit programmable optoelectronic response characteristics, effectively emulating the plasticity of neural synapses (Fig. 2e). A typical example is the planar two-terminal MSM device constructed using two-dimensional (2D) metal sulfides. In this device, sulfur vacancy migration behavior is precisely controlled by an electric field, actively modulating the metal-semiconductor contact barrier. This ion migration-induced dynamic barrier adjustment mechanism drives non-volatile changes the photocurrent, successfully mimicking the synaptic weight update functionality in CNNs. The advantages of planar migration devices include rich modulation behavior, but they suffer from uneven defect state distribution, reliability issues, and larger device sizes, making them difficult to fabricate with CMOS BEOL54,55.

Three-terminal/multi-terminal optoelectronic synapses

Three-terminal field-effect transistors (FETs) introduce an additional gate terminal, which allows for independent control of the weight tuning process without interfering with the reading path56. Thus, three-terminal/multi-terminal OEMs simultaneously perform signal transmission and self-learning, faciliating their use in advanced neuromorphic engineering that mimics the brain, which is challenging for two-terminal devices. Their operation is primarily based on four mechanisms:

Ferroelectric FETs (FeFETs) and floating-gate transistors (FGTs) are advanced technologies for non-volatile photoresponse control. FeFETs integrate a ferroelectric gate dielectric (e.g., poly(vinylidene fuoride) (PVDF), Pb(Zr,Ti)O3 (PZT), LiNbO3, or zirconium-doped hafnia (HZO), whose intrinsic dipole polarization can be reversibly reoriented via gate pulses57,58. When subjected to a gate electric field, partial alignment of ferroelectric domains induces bound polarization charges at the dielectric-channel interface. These charges modulate the channel conductivity through electrostatic screening (Fig. 2f), inducing a non-volatile conductance state.

FGTs achieve non-volatile optical conductance updates through an embedded FG layer within the dielectric layer (Fig. 2g)59,60. This architecture vertically integrates a thick blocking dielectric, photoresponsive semiconducting FGs, an ultrathin tunneling dielectric (e.g., Al₂O₃, HfO₂), and a semiconducting channel with a wider bandgap than the FG material. When sub-bandgap light is absorbed by FG materials (e.g., perovskite quantum dots (QDs), black phosphorus (BP) QDs, optimized for visible-to-near-infrared sensitivity)61, photogenerated electron-hole pairs separate due to the aligned band structure: electrons inject into the channel to enhance conductivity, while holes remain trapped in the FG. The ultrathin tunneling dielectric, featuring a high energy barrier and low defect density, suppresses carrier recombination between the FG and channel, thereby ensuring stable retention of the injected charge. And applying a positive gate voltage recombines trapped holes, enabling reversible resetting of optical conductance states. Although gate voltage precisely controls stored charge density, high operating voltages raise energy consumption concerns due to increased leakage currents.

Furthermore, significant progress in emerging electrochemical random-access memory (ECRAM) technology has emerged. ECRAM utilizes gate voltage pulses to move ions (such as Li+) in and out of the electrochemical layer, altering the charge distribution in the semiconductor channel62. This mechanism allows for effective tuning of photoresponsivity (Fig. 2h). Charge trapping transistors (CTTs) can modulate the channel in either a volatile or non-volatile way by trapping photo-generated carriers, due to defects present at the semiconductor’s interface or in its bulk (Fig. 2i). Volatile modulation discharges quickly, leading to a rapid return of channel conductivity to its initial state. In contrast, non-volatile modulation retains charge effectively and is less susceptible to external disturbances.

In advanced configurations, current OEMs have been further designed with two or multiple gate terminals to enable brain-inspired functionalities. For example, applying dual gate voltages (+Vg and -Vg) can dynamically reconfigure p-type and n-type regions within a single channel, forming a reconfigurable p-n homojunction (Fig. 2j). This design effectively mimics synaptic weight updates in neural networks. Furthermore, multi-terminal FETs can transduce various environmental stimuli (e.g., light, pressure, temperature) into electrical signals, enabling co-modulation by multiple inputs and facilitating the artificial synapses that directly interface with external environments. A representative example involves using coplanar multi-gate structures as modulating inputs, with the channel layer acting as a postsynaptic terminal. The system can achieve spatiotemporal coordinate and visual orientation recognition, resembling the hierarchical processing observed in the human visual cortex63. Additionally, multi-terminal FETs support parallel learning and dendritic integration by leveraging independent gate inputs to simulate synaptic summation and nonlinear activation. This architecture reduces the hardware complexity of neural networks by integrating multiple functions into a single device. However, research on neuromorphic multi-terminal FETs is still at an early stage.

Bio-inspired neural networks

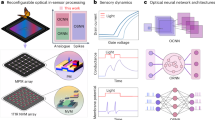

Integration of artificial synapses and neurons into artificial neural networks (ANNs) has been realized in various architectures including CNNs, RNNs, and SNNs. Particularly in the emerging field of in-sensor computing, researchers have developed novel implementations using optoelectronic devices: in-sensor FCNs/CNNs, in-sensor RNNs, and in-sensor SNNs (Fig. 3).

Three architectures: FCN/CNN (a) RNN (b) and SNN (c). Also shown are their building blocks, key features, and implementation examples.

In-Sensor FCN/CNN

Recent research has focused on the construction of FCNs, particularly emphasizing single-layer perceptron networks at sensor terminals64. A key example is the introduction of reconfigurable sensor arrays capable of efficiently performing multiply-and-accumulate (MAC) operations, which are central for in-sensor processing pipelines (Fig. 3a). Consider a sensor array arranged in a M × N configuration, where N indicates the number of pixels. Each pixel comprises M sensory elements (or subpixels), defined by the number of classification neurons. In this array, the photocurrent generated by each sensor, represented by Imn = Rmn × Pn, illustrates the analog multiplication of the incident light power (Pn) at the n-th pixel with the photoresponsivity (Rmn) of the subpixel. The resulting currents are then summed along m-th interconnected sensor elements utilizing Kirchhoff’s current law, yielding M types of output photocurrent, expressed as Im = ∑n=1NImn = ∑n=1NRmnPn. This operation can be succinctly articulated as the matrix–vector multiplication of I = RP. Here, P = (P1, P2, …, PN)T is the stimuli vector, and R = (Rmn) is the photoresponsivity matrix of the sensor array.

The matrix R is reconfigurable through methods like electrostatic doping. By applying dual gate voltages of +Vg and -Vg, real-time shifts of the channel’s energy bands are achieved (Fig. 4a). This facilitates the formation of p-type and n-type regions within a single channel, effectively forming a p-n homojunction65. By varying the magnitude and polarity of the gate voltages, each sensor’s photocurrent or photoresponsivity can be adjusted to specific positive or negative values for a given incident light power (Fig. 4b). This effectively emulates changes in synaptic weight during training in neural networks, where “negative” refers to the direction (polarity) of the resulting photocurrent. Mueller et al. configured a 3 × 3 imaging array (N = 9) with three photodiodes per pixel (M = 3) (Fig. 4c) to achieve ultrafast image recognition at the ns level (Fig. 4f). Each photodiode employed WSe2, a 2D ambipolar semiconductor, as the channel material, with its photoresponsivity adjusted with dual gates through electrostatic doping. Additionally, the short-circuit photocurrents (Isc) produced by each photodiode under optical illumination were summed according to Kirchhoff’s law by hard-wiring the devices in parallel (Fig. 4d).

a Schematic of a single WSe2 photodiode. b Current–voltage characteristic curve of a single WSe2 photodiode, along with the gate voltage tunability of the photoresponsivity (inset). c Microscope image of the photodiode array consisting of 3 × 3 pixels, where each pixel comprises three WSe2 photodiodes (subpixels). d Illustration of the photodiode array, with subpixels connected in parallel to generate output currents IM. e Schematic of the classifier. f Accuracy of the classifier during training for varying artificial noise levels. Reproduced with permission64. Copyright 2020, Springer Nature. g Measured photocurrent with pulsed light and stepped gate voltages (Vg1, Vg2). h Schematic and micrograph of a 3 × 3 photodiode network for analog MAC computation. The accumulated photocurrent is converted to voltage (Vout) by a transimpedance amplifier (top). Various filtered images obtained with in-sensor computing (bottom). i Measured Vout of the photodiode array configured as an edge detection filter kernel, with the light spot moving across the array. Reproduced with permission114. Copyright 2022, Springer Nature.

Following this, we further discuss the implementation of in-sensor CNNs, utilizing a 3 × 3 convolutional kernel. The 3 × 3 sensor network is designed as an optoelectronic convolutional processor that employs a 3 × 3 map of photoresponsivity as an image filter kernel. To process an image, a 3 × 3 patch defined by light spots of varying power is projected onto the sensor network. Each sensor generates photocurrent given by Ik = Rk × Pk (where k = 1–9), which illustrates the multiplication of incident light power by photoresponsivity. The total photocurrent is accumulated using Kirchhoff’s current law, providing an outcome of the optoelectronic convolution. This output can be succinctly expressed as a dot product between the 1 × 9 incident light power vector and the 9 × 1 photoresponsivity vector. By sliding the 3 × 3 patch through the n × n image and repeatedly performing the optoelectronic convolution, this generates a (n-2) × (n-2) matrix of accumulated photocurrents, totaling (n-2)2 values, which represents the image processed with the convolutional filter. Donhee Ham et al. developed a dual-gate silicon p–i–n photodiode array that adjusts photoresponsivity through electrostatic doping for in-sensor optoelectronic convolutional image processing (Fig. 4g). This array utilizes a 3×3 photodiode network to implement seven different convolutional filters (Fig. 4h, i). However, these optoelectronic devices still suffer from the volatile nature of their photoresponsivities, which require a sustained gate bias to maintain.

Non-volatile In-Sensor FCN/CNN

Integrating memory into in-sensor computing improves the versatility of visual systems, particularly for high-level computational tasks that require frequent data reading and writing. In this framework, “memory” refers to the system’s ability to store weights (photoresponsivity R), rather than the photocurrents (I). This is similar to that for in-memory computing (Ioutput = G × V), where weight conductance G is stored instead of input voltage (V). The ability for non-volatile weight storage, which maintains a stable and linear photoresponsivity, facilitates the analog matrix–vector multiplication of I = RP. Thus, images can be directly recognized through the integrated visual systems without requiring external memory or processing units, achieving energy efficiency.

In early implementations, the weights of visual systems are stored in external memory and supplied to each sensor via cabling, which inevitably consume additional energy. More importantly, extremely complex circuits are required for the individual control of each unit in neural networks, which is almost impossible and unacceptable for large-scale sensory networks. For scalability, it is essential to store these weights locally. Table 1 presents some typical works that utilizes various mechanisms for local weight storage. For example, Wang et al. have developed ferroelectric-defined reconfigurable 2D MoTe2 photodiode arrays for in-memory sensing and computing, using organic ferroelectric P(VDF-TrFE) films as gate dielectrics (Fig. 5b, d)57. The weight is stored and programmed by gradually switching the ferroelectric domains, enabling 51 distinguishable weight states with linear and symmetrical characteristics (Fig. 5f). Once set, each sensor retains its photoresponsivity value and outputs a photocurrent with adjustable sign/magnitude. However, the claimed “memory” ability of some current neuromorphic sensors primarily refers to the storage of photocurrent, which can be used for some signal pre-processing operations.

a Schematic of the M-MoS2-M device structure with back-to-back M–MoS2 Schottky diodes. The MoS2 under the source/drain electrodes is treated with O2 and Ar plasma54. Copyright 2023, Springer Nature. b Schematics of the device structure of the Pt/PZT/SRO ferroelectric photosensor. Reproduced with permission49. Copyright 2022, Springer Nature. c Schematic of the device structure of core-sheath SWNT@GDY nanotubes based photosensor. Reproduced with permission66. Copyright 2023, AAAS. d Schematic of the ferroelectric-defined reconfigurable 2D homojunctions. e Symmetric and reversible modulation of the photoresponsivity tuned by ferroelectric domains. f Long-term stability of positive states, negative states, and “zero” state. g Illustration of the hardware implementation process using a ferroelectric-defined photodiode array. The array recognizes the incoming pattern and sends a corresponding command to the robot dog via a wireless signal. h Patterns “L”, “T”, and “⅃” command the robot dog to move left, right and stop, respectively. Array 1 (I1), Array 2 (I2) and Array 3 (I3) are trained to identify patterns “L”, “T”, and “⅃”, respectively. i Robot dog navigation is determined by the corresponding real-time current profiles in (h). Reproduced with permission115. Copyright 2023, Springer Nature.

Ionic migration offers an alternative mechanism for achieving reconfigurable and non-volatile photoresponsivity. By inducing an unbalanced ion distribution, it creates variations in electric potential and internal electric fields. Hu et al. demonstrated this concept by introducing a high concentration of sulfur vacancies (positive ions) at the metal-semiconductor (M-S) junctions of a M-MoS₂-M structure (Fig. 5a)54. The migration of sulfur vacancies, driven by an in-plane electric field (voltage pulses), redistributes the local sulfur vacancy concentration in the MoS2 channel, thus adjusting the Schottky barrier at the junctions. This modulation breaks the symmetry of the electric potential in M-S-M devices, affecting both the amplitude and polarity of the short-circuit photocurrent. Furthermore, like memristors, these photocurrent states are non-volatile as the barrier and vacancy concentration remain stable without an electric field. As a complementary strategy, Zhang et al. proposed an ion-induced localized-field modulation using core-sheath single-walled carbon nanotube@graphdiyne (SWNT@GDY) (Fig. 5c). This GDY structure features large, evenly distributed pores, allowing for high storage capacity of Li+ ion. A small gate bias facilitates the intercalation of Li+ ions from the electrolyte into GDY, where they remain stored after the removal of the gate bias. Applying positive or negative gate pulses permits precise control over the captured Li+ ions in GDY, enabling linear modulation of photoresponsivity66.

In-Sensor RNN

In dynamic machine vision, accurately predicting future motion trajectories is crucial for applications like video analysis, robotic vision, and self-driving technology. This predictive capability relies on the effective integration of current and previous visual inputs, enabling systems to perform complex tasks like speed extraction, motion anticipation, and motion extrapolation67. RNNs are specially designed to process sequences of data, learning from both past and present inputs to better model temporal dependencies within the visual data (Fig. 3b). Recently, in-sensor reservoir computing (RC) has emerged as a promising paradigm within the RNN framework68, significantly lowering computational costs while maintaining high efficiency, as only the readout layer requires training. In RC, a dynamic reservoir (an untrained recurrent layer) nonlinearly projects input data into a high-dimensional feature space. This transformation converts complex inputs into linearly separable states, which are then combined through weighted summation during the training of the readout layer69,70.

Volatile optoelectronic devices exhibit intrinsic short-term memory capabilities, resulting in temporal evolution and summation of conductance under sequential light pulse stimulation (Fig. 6a). This behavior is ideal for dynamic processing and memory in hardware implementations of in-sensor RC71. Table 2 lists various volatile optoelectronic devices used in these applications. For example, the interaction between photo-generated carriers and charge trap centers slows the photocurrent response, allowing these devices to serve effectively as the reservoir layer of the RC system. As demonstrated by van Dijken et al., in ITO/ZnO/NSTO photomemristors, the trapping and de-trapping of photo-generated carriers by defect states (oxygen vacancies) at the ZnO/NSTO interface triggers a dynamic memristive response. Similarly, chai et al. showed that in MoS2 phototransistors, the photo-generated carriers trapped in the gap states of MoS2 result in a sublinear increase in conductance over time, contributing to the device’s volatile characteristics.

a Response characteristics of volatile MoS₂ phototransistors with nonlinear temporal summation characteristics when stimulated by light. b Processing four light stimulation pulses by MoS₂ phototransistors with time constants varying from 10 ms (Vg = + 3 V) to 106 ms (Vg = −3 V). c Encoding spatiotemporal information with MoS₂ phototransistor at different time scales, tracking motion at speeds ranging from 0.005 to 50 km h−1. d Mapping spatiotemporal information with MoS₂ phototransistor arrays, converting the temporal vision sequence s(t) into compressive states x(t). Reproduced with permission105. Copyright 2023, Springer Nature. Schematic (e) and photograph (f) of the fully-hardware photoelectronic RC system, integrating a photoelectronic reservoir layer for feature outputs, a memristor array for training, and an FPGA controller. Reproduced with permission103. Copyright 2022, Springer Nature.

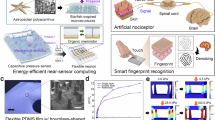

Current studies are exploring novel device structures and systems to improve in-sensor RC capabilities. Adjustable temporal summation at sensory terminals allows for effective processing of motion perception across various scenarios (Fig. 6d). However, RC systems utilizing two-terminal volatile devices often face limitations with non-adjustable timescales and relatively fixed nonlinear transformation functions. As previously mentioned, three-terminal phototransistors with an additional terminal for gate control offer a promising solution. They allow precise control of photo-generated carriers and a dynamic response to varying sensory stimuli. Research by Chai et al. has shown that MoS2 phototransistor arrays can directly perceive different types of motion, ranging from slow (e.g., snail) to fast (e.g., cheetah). By varying gate voltages, they achieved a temporal resolution of 10 to 106 ms (Fig. 6b, c), effectively modulating the charge dynamics of shallow trapping centers. Additionally, there is increasing interest in developing energy-efficient integrated systems. Long et al. constructed an integrated photoelectronic RC system using volatile photoelectronic devices (amorphous GaOx) as the reservoir layer, connected to a memristor array (TaOx/HfOy) for latent fingerprint recognition (Fig. 6e, f). Remarkably, the system achieves over 90% recognition accuracy even under a 15% stochastic noise level.

In-sensor SNN

SNNs enable information processing in a way that closely resembles biological processes, thereby enhancing efficiency in tasks such as motion recognition. This network utilizes spiking neurons, primarily of the “LIF” type, to communicate through discrete spikes72. Each neuron receives input spikes (“events”) from a small area of visual space, altering its internal state (membrane potential), and producing an output spike (action potentials) when its state surpasses a predetermined threshold73. The computation performed by SNNs using LIF neurons relies primarily on simple operations such as the integration of input spikes through addition and the comparison of the internal state against a threshold value74. As a result, SNNs have significantly lower computational demands compared to traditional ANNs, which often depend on complex matrix operations involving extensive multiplications and additions75,76.

The optimal scenario for SNNs is to receive spike trains containing timing information from the ever-changing real world, just like how our brains operate (Fig. 3c). Currently, the performance of SNN training algorithms is evaluated using static image datasets that are converted into spike trains through various encoding techniques (Fig. 7a), such as rate coding, rate/temporal fusion (RTF) coding, or time-to-first-spike (TTFS) coding42,77,78. However, it is crucial to move beyond static benchmark datasets. More research is necessary to compile appropriate benchmark datasets from dynamic vision sensors (DVS) that can capture only dynamic changes in a scene79,80. Such dynamic sensory data can be combined with the temporal processing capability of SNNs to enable extremely low-power computing81.

a Greyscale images are encoded into series of spike trains by the rate coding, TTFS coding, and RTF coding schemes, and fed into a trained SNN to predict the vehicle speed and steering angle. Reproduced with permission77. Copyright 2024, Springer Nature. b An in-sensor SNN created by combining event-driven characteristics and in-sensor computing. Reproduced with permission116. Copyright 2023, Springer Nature. c Output spike situation in each neuron when the three types of motions are performed sequentially. d Non-volatile and programmable photoresponsivity under a series of gate voltage pulses, proving its feasibility for use as the synaptic weights in neural networks. e Event-based sensors generate programmable spikes when light intensity changes. f Comparison between frame- and event-based vision sensors. The bottom panel shows the output signal after the pixel sensing process of event-based sensors, and only in the regions with the change of the light intensity changes generate positive or negative current spikes. Reproduced with permission82. Copyright 2023, Springer Nature.

Furthermore, researchers have introduced the concept of in-sensor SNN. Unlike for static image recognition, in in-sensor SNNs, the MAC for motion recognition operates between the input light intensity change (vector) and the synaptic weight in the photodiode array (matrix), according to the physical law: ΔIm =∑n=1NΔImn = ∑1N k × Rmn × ΔPn, where in which k is a constant, Rmn is the photoresponsivity of the m-th subpixel in the n-th pixel, and ΔPn is the local light intensity change in the n-th pixel. The summed ΔIm is input to the output neurons with a LIF neuron. Chai et al. developed a computational event-driven vision sensor utilizing the 2D2R1C architecture (Fig. 7b), which converts dynamic motion into programmable, sparse, and informative spiking signals (Fig. 7e, f)82. Each pixel features two branches with opposite polarity and different photoresponse times, to generate event-driven spiking signals. They employ WSe2 photodiodes with a floating dual-gate structure to maintain different non-volatile photoresponsivity (Fig. 7d). This allows for precise modulation of spiking signals amplitudes in each pixel, effectively emulating different synaptic weights in SNNs. The in-sensor SNNs can execute the motion recognition tasks with a temporal resolution of 5 μs (Fig. 7c). Also, they designed a 2T2R1C cell with 2D MoS2 phototransistors with an inherently high dynamic range of 140 dB83.

Benchmarking metrics

Emerging optoelectronic devices must follow standardized key metrics for effective performance evaluation. Device-level metrics focus on the properties of individual component (e.g., energy efficiency, response speed, array scale, endurance (cycles)/retention). Table 3 summarizes the current state-of-the-art key metrics for both non-volatile/volatile OEMs for optoelectronic computing.

Energy efficiency

The optimization of energy efficiency is critical for hardware implementations. As integration technology advances and computing density increases, the energy per operation becomes the defining metric for OEM performance. ECRAM devices based on ionic migration mechanisms demonstrate ultra-low programming energy (e.g., ~aJ/operation for ionic migration) and eliminate static power for state retention, while the reorientation of ferroelectric domains requires higher voltage and longer programming times compared to the drift of mobile cations in the electrolyte. In contrast, volatile OEMs (e.g., interfacial charge trapping) consume ~nJ/operation during dynamic operation, reflecting their reliance on continuous energy for temporal response. Remarkably, self-powered photovoltaic OEM architectures achieve zero-energy inference and energy use only during programming.

Speed

The response time of optoelectronic devices greatly impacts the working speed of vision sensors. It is determined by the materials and device structures. The charge-trapping-based devices inherently suffer from millisecond-scale latency due to the charge-trapping/de-trapping dynamics, creating a critical bottleneck for high-speed imaging. Remarkably, p–n junctions with an internal electric field can realize ultrafast photoresponse64. For volatile OEMs, their conductance dynamics are exploited for computing functions, such as short-term memory for motion recognition. Therefore, the temporal evolution of their conductance also determines the speed of processing the dynamic visual input.

Array scale

As computing tasks become more complex, integrating more processing units on a single chip is now critical. Oxide semiconductors (e.g., ZnO) offer distinct advantages for scalable fabrication, including low device-to-device variation, low-temperature processing, and CMOS compatibility52. However, these materials primarily respond to violet light, limiting their utility in environmental sensing and high-energy imaging. Emerging materials like perovskites84 demonstrate great potential for large-area fabrication and detection across visible to near-infrared wavelengths. Yet, they degrade rapidly when exposed to air, severely reducing their long-term stability. Recent advances in uniform large-area fabrication and material engineering aim to mitigate these limitations, paving the way for robust, high-performance optoelectronic systems.

Endurance (cycles)/retention

In non-volatile memory systems, endurance and retention are two critical operational metrics. ECRAM devices utilizing ionic migration mechanisms exhibit unstable properties in the programmed conductivity state, due to inherent material limitations. The migration of cations through liquid electrolytes induces spontaneous ion redistribution, leading to conductivity decay. In contrast, devices based on ferroelectric polarization mechanisms mainly exhibit much longer non-volatile retention performances of the programmed conductance state. Their stability derives from fundamental physical barriers that maintain polarization through coercive field thresholds. Reorienting the direction of polarized domains in ferroelectric materials is a passive process requiring an external bias programming signal. Therefore, the programmed conductance state can be maintained for a long time if there are no additional high-voltage programming attempts.

Perception range

The perception range of optoelectronic devices refers to their ability to sense light intensity, speed, and wavelength. Adaptation in these devices enables the adjustment of the detection range, thus expanding their perception ability in different dimensions. Spatial adaptations allow optoelectronic devices to accurately capture details in both shadows and highlights within a single frame. Temporal adaptations broaden the scope of motion perception, extending it from static objects to high-speed movements. Additionally, spectrum adaptation fine-tunes the device’s sensitivity to various wavelengths, improving color detection under varying lighting conditions.

Integration technologies

The practical use of OEMs relies not just on diverse material systems and device structures but also on effective circuit design and processing technologies85,86. Traditional integration methods, particularly those used in printed circuit boards, often result in significant distances between sensing and processing units, which adversely impact integration density and efficiency. Thus, advancing integration technologies is essential for achieving low-power and low-latency architectures87,88. In-sensor computing architecture performs information processing within sensor networks, effectively eliminating the physical separation between sensing and computing units. Additionally, various integration technologies, including planar System on Chip (SoC), monolithic three-dimensional (3D) integration, heterogeneous 3D integration, and so on, are being actively explored.

Planar SoC integrates both sensing and processing units on a single substrate, typically arranging components in a flat, 2D layout. However, this integration technology limits the density and complexity compared to 3D integration, and longer interconnects can lead to increased signal latency due to extended data pathways between components. Despite these limitations, this design simplifies manufacturing and enabling better thermal management (Fig. 8a). Various visual sensor chips have utilized planar SoC technology for tasks such as feature extraction and high-level processing89. Wu et al. demonstrated a fully integrated 1-kb array with 128 × 8 one-transistor-one-OEM cells integrated with Si CMOS logic circuits (Fig. 9a). These Pd/TiOx/ZnO/TiN OEMs exhibit multi-modal characteristics by integrating functionalities of electronic memristors, dynamic OEMs, and non-volatile OEMs52. A fully OEM-based in-sensor RC system was thus developed for human motion recognition, where an 18-dynamic OEM array implements the reservoir layer, while five identical 1k electronic memristor arrays (mapping the 720 × 5 weights using 90 × 8 devices on each array) implement the readout layer (Fig. 9b). This monolithic integration of the multi-functional OEM with Si CMOS provides a cost-effective platform for various in-sensor computing applications (Fig. 9c).

Three types of integration architectures: planar SoC (a) monolithic 3D integration (b) and heterogeneous 3D integration (c).

a Micrograph of the 128 × 8 crossbar array (top), and a cross-sectional high-resolution TEM image showing the 1T1OEM structure with a Pd/TiOx/ZnO/TiN stack on top of the drain terminal of the Si transistor (bottom). b Circuit diagram of a fully OEM-based in-sensor RC system. c Distributions of the (720 × 5) target conductance weights, the OEM hardware weights and the error values (left). A comparison of the recognition accuracy after training for the GPU full precision simulation and the fully OEM-based in-sensor RC hardware (right). Reproduced with permission52. Copyright 2024, Springer Nature. d Schematic of the fabricated M3D-SAIL chip. e Schematic of the 1T1R cell with electrical connections to the Si CMOS circuits underneath. f Schematic of video keyframe extraction task implemented by M3D-SAIL. g Energy consumption and latency benchmark of the M3D-SAIL chip. Reproduced with permission90. Copyright 2022, WILEY-VCH. h Schematic of stackable hetero-integrated chips with chip-to-chip communication in a sensor computing system. The first layer senses input and enables parallel processing over the 3D hetero-integrated chip stack. i Schematic of a hetero-integrated chip, mainly consisting of (left) an optoelectronic device stack with LED/PD arrays aligned with backside holes via deep reactive-ion etching, and (right) a neuromorphic computing core featuring a memristor array that performs three types of 3×3 kernel operations. Reproduced with permission91. Copyright 2022, Springer Nature.

The 3D integration enables the vertical stacking of different functional components, such as sensors, memory, computing, and communication units, into multiple tiers within a single system. This approach offers key advantages over planar geometry, including higher bandwidth for processing large amounts of data from sensor networks, a reduced footprint that saves space within the system, and shorter connection routing that minimizes delays for low-latency communication. Monolithic 3D integration adopts a microfabrication process with ultra-dense interlayer vias (ILVs) to build on-chip interconnects among functional layers. With a significantly smaller pitch than through-silicon vias (TSVs) used in heterogeneous 3D integration, ILVs enable higher integration density and ultrahigh on-chip data bandwidth (Fig. 8b). Wu et al. demonstrated the monolithic 3D integration of an IGZO-FET-based photosensor array for optical sensing, a one-transistor-one-resistor (1T1R) array using IGZO-FET and resistive random-access memory (RRAM) for analog computing-in-memory, and Si CMOS circuits for control logic (Fig. 9d, e), referred to as M3D-SAIL90. This approach exploits the high-bandwidth on-chip data transfer and parallel computing-in-memory cores for energy-efficient processing in video keyframe extraction (Fig. 9f and g), achieving 31.5× lower energy consumption and 1.91× faster computing speed compared to its 2D counterpart.

In heterogeneous 3D integration, copper microbumps and TSVs are commonly used to stack separately fabricated dies. While this technology increases area overhead, manufacturing complexities, and introduces parasitic capacitances that limit overall performance, it offers greater flexibility for specific applications. Unlike traditional monolithic 3D integration, which often relies on a single microfabrication process and may restrict design diversity, heterogeneous 3D integration allows for the combination of different semiconductor technologies within a single integrated package. To further enhance the capabilities of current heterogeneous 3D integration, Kim et al. introduced a reconfigurable integration technology that employs optoelectronic device stack including GaAs photodetectors (PDs) and InGaP light-emitting diodes (LEDs) for chip-to-chip communication in each heterogeneous chip layer, making it possible to stack and restack a variety of chip modules (Fig. 9h)91. Further in each stackable chip, neuromorphic computing processors embedded with a 32 × 32 Ag–Cu-alloy-based Si memristor crossbar array serves as deep learning hardware accelerators, efficiently processing analog data near photosensors (Fig. 9i). This system directly classifies information from a light-based image source. The initial data generated by the chip’s eye layer responds to external optical stimuli and is then processed through memristor-based hardware accelerators.

Roadmap

As illustrated in Fig. 10, it showcases the present research progress and future developments in the field of in-sensor computing. In the early stages, the primary focus was on the investigation of individual devices. These devices incorporated fundamental physical mechanisms such as trapping/detrapping92, photo-induced phase changes, p-n junctions, heterojunctions93, and chemical doping94, among others. The main goal of these foundational devices was to facilitate preliminary low-level pre-processing tasks such as analog storage50, image denoising17, and decoding. As the complexity and miniaturization of device structures advance, and with the maturation of array technology, the feasibility of validating more sophisticated visual processing tasks increases. For instance, CNN can be harnessed for cognitive functions, including but not limited to static object recognition and target tracking. Additionally, RC has the potential to execute motion recognition tasks. Looking forward, the development should focus on several key areas, including high-performance biomimetic devices, large-scale integration, multifunctional neural network algorithms, reducing system energy consumption, and expanding the application of intelligent terminal device chips:

The development of this system encompassed fundamental materials to chip system integration, involving material science, physical mechanism, device design, fabrication, algorithm, and system integration. For the hardware, the research focused on the combination of different physical properties of single devices, gradually advancing towards small-scale arrays and three-dimensional architectures. For the functionality, there has been a clear progression from low-level processing to high-level processing, such as storage, filtering, and denoising, and then moving on to more advanced tasks such as decoding, target tracking, and the capability to process complex visual scenes through a combination of multiple neural networks.

High-performance biomimetic devices

To achieve the characteristics of artificial visual systems, such as high sensitivity, wide spectral response, high dynamic range, and adaptive adjustment, it is essential to research the physical mechanisms underlying the dynamic response of devices. Such investigations will drive breakthroughs in response sensitivity, wavelength range, response time, and biomimetic functional behavior. For instance, doping fabrication can be used to increase the density of states, expanding the response from ultraviolet to infrared wavelengths. Additionally, interface heterojunction design can enhance photocarrier separation efficiency while reducing device operating energy consumption. Furthermore, addressing engineering challenges is crucial to improving device uniformity and reliability, thereby establishing a solid hardware foundation for high-performance in-sensor computing systems.

Large-scale, high-density integration

It is crucial to develop integration technologies that align with the CMOS backend process and to embrace a three-dimensional integration architecture to boost data processing efficiency. By strategically assigning sensing, computational processing, and logic control functions across different layers, and by amplifying the information transmission density through interlayer connections, we can optimize system functionality. Pre-processing can be carried out at the sensing layer, while a variety of neural network tasks can be managed at the computing-in-memory layer. Furthermore, the flow of signals can be controlled at the logic layer.

Addressing reliability challenges

OEMs face critical reliability challenges, including cycle-to-cycle variations and device-to-device discrepancies that impede practical deployment. Key concerns involve material and interface defects (e.g., polarization fatigue in ferroelectric devices and stochastic filament formation in CF devices) and material degradation under repeated operation (e.g., electrolyte decomposition in ECRAM). To enhance reliability, strategies include material optimization (e.g., defect-passivated high-quality ferroelectrics like HfZrO₂ and solid-state electrolytes like LiPON), structural innovations (3D array integration, nanoscale electrode designs for filament confinement). Advanced fabrication techniques (e.g., atomic layer deposition) and CMOS-compatible processes reduce variability. Collaborative co-design of materials, processes, and architectures is essential to overcome reliability bottlenecks in large-scale in-sensor computing systems.

Multifunctional neural network algorithms

We need to develop low-level to high-level processing algorithms and handle both static and dynamic visual tasks, simulating complex information processing, and thus mimicking the collaborative work of the dorsal and ventral pathways in the visual system to accomplish tasks across different time sequences, low illumination and multi-target tasks.

Improving system energy efficiency

Generally, the energy consumption of CMOS sensor processors consists of the combined energy usage of the sensor, memory, computing system, and peripheral transmission system. To further evaluate the energy efficiency of in-sensor computing systems, it is essential to consider the total energy consumption of the entire system required to complete the task. The total energy consumption includes the operational energy of the optoelectronic array and the energy consumption of peripheral circuits, which can be calculated as follows:

Total Energy Consumption = (Number of computational operations) × (Electrical operation energy per device + Optical operation energy per device) + Peripheral system energy consumption. To enhance the energy efficiency of the system, the following optimization strategies can be employed:

-

(1)

Optimization of algorithm architectural design: Reduce the number of computational operations required by the algorithm to lower the overall computation.

-

(2)

Optimization of device functionality: Improve device materials and structures to reduce the energy consumption of individual operations. For example, utilizing photovoltaic P-N junctions can reduce the external electric field required for photoelectric responses, thereby minimizing energy consumption.

-

(3)

Enhancement of system integration: Optimize the design of peripheral circuits to reduce the energy consumption of non-core components.

By implementing these multi-level optimization strategies, the energy efficiency of in-sensor computing systems can be significantly improved, providing robust hardware support for high-performance computing.

Application prospects for intelligent terminal chip

Optoelectronic multifunctional devices provide an efficient and flexible hardware foundation for intelligent terminal devices, demonstrating broad application prospects as shown in Fig. 11. In the field of embodied intelligence, highly adaptive optoelectronic devices can maintain exceptional performance even under extreme lighting conditions. By utilizing dynamically tunable optoelectronic response devices, 3D augmented reality processing can be achieved, enabling robots to efficiently handle complex real-world problems. In autonomous driving, hardware systems equipped with high-speed SNN support can enable rapid processing of visual information and motion control, significantly reducing latency and enhancing processing speed and system safety. In the medical field, intelligent vision systems can efficiently classify and extract critical pathological information, greatly shortening diagnosis time and improving accuracy, thereby effectively assisting doctors in evaluating and managing medical conditions. In space exploration, by extending the multispectral response capabilities of devices, functions such as cosmic ray detection and extraterrestrial material analysis can be realized, providing advanced technological support for deep-space exploration.

a Embodied intelligence. b autonomous driving. c Medical field. d Space exploration.

Throughout this process, it is vital to fully utilize emerging technologies and methodologies to build more efficient, reliable in-sensor computing systems. This may involve exploring new materials, adopting advanced manufacturing processes, and innovating in algorithms and architectures. Through these efforts, we aim to transition in-sensor computing technology from the lab to practical applications, thereby providing robust support for the development of intelligent systems.

Conclusions

This review provides an overview of bio-inspired OEMs for energy-efficient in-sensor computing. By integrating bio-inspired neural networks—particularly, in-sensor FCNs/CNNs for static recognition, RNNs for motion tracking, and SNNs for event-driven processing—these OEMs mimic retinal-cortical vision via innovative pathway processing. Looking ahead, it is crucial to further explore new materials, advanced manufacturing processes, and innovate algorithms and architectures to develop robust, large-scale in-sensor computing systems. Additionally, building standardized benchmarking metrics and reducing overall system energy consumption will be vital for progress. Broadening the application of intelligent terminal chips will also play a key role in advancing intelligent systems. we aim to build more efficient and reliable in-sensor computing systems, ultimately bridging the gap between lab and practical applications.

References

Wang, W. et al. Neuromorphic sensorimotor loop embodied by monolithically integrated, low-voltage, soft e-skin. Science 380, 735–742 (2023).

Chen, Y. et al. All-analog photoelectronic chip for high-speed vision tasks. Nature 623, 48–57 (2023).

Pei, J. et al. Towards artificial general intelligence with a hybrid Tianjic chip architecture. Nature 572, 106–111 (2019).

Schaller, R. R. Moore’s law: past, present and future. IEEE Spectr. 34, 52–59 (1997).

Yao, P. et al. Fully hardware-implemented memristor convolutional neural network. Nature 577, 641–646 (2020).

Zhou, F. & Chai, Y. Near-sensor and in-sensor computing. Nat. Electron. 3, 664–671 (2020).

Schuman, C. D. et al. Opportunities for neuromorphic computing algorithms and applications. Nat. Comput. Sci. 2, 10–19 (2022).

Indiveri, G. & Douglas, R. Neuromorphic vision sensors. Science 288, 1189–1190 (2000).

Marx, V. The big challenges of big data. Nature 498, 255–260 (2013).

Bays, P. M. & Husain, M. Dynamic shifts of limited working memory resources in human vision. Science 321, 851–854 (2008).

Ding, H., Smith, R. G., Poleg-Polsky, A., Diamond, J. S. & Briggman, K. L. Species-specific wiring for direction selectivity in the mammalian retina. Nature 535, 105–110 (2016).

Liao, F. et al. Bioinspired in-sensor visual adaptation for accurate perception. Nat. Electron. 5, 84–91 (2022).

Ouyang, B. et al. Bioinspired in-sensor spectral adaptation for perceiving spectrally distinctive features. Nat. Electron. 7, 705–713 (2024).

Li, L. et al. Adaptative machine vision with microsecond-level accurate perception beyond human retina. Nat. Commun. 15, 6261 (2024).

Jiang, X. et al. Multiframe-integrated, in-sensor computing using persistent photoconductivity. J. Semicond. 45, 092401 (2024).

Zhou, G. et al. Full hardware implementation of neuromorphic visual system based on multimodal optoelectronic resistive memory arrays for versatile image processing. Nat. Commun. 14, 8489 (2023).

Zhou, F. et al. Optoelectronic resistive random access memory for neuromorphic vision sensors. Nat. Nanotechnol. 14, 776–782 (2019).

Syed, G. S., Zhou, Y., Warner, J. & Bhaskaran, H. Atomically thin optomemristive feedback neurons. Nat. Nanotechnol. 18, 1036–1043 (2023).

He, Z. et al. An organic transistor with light intensity-dependent active photoadaptation. Nat. Electron. 4, 522–529 (2021).

Pang, X. et al. Non-volatile rippled-assisted optoelectronic array for all-day motion detection and recognition. Nat. Commun. 15, 1613 (2024).

Dang, Z. et al. Object motion detection enabled by reconfigurable neuromorphic vision sensor under ferroelectric modulation. ACS Nano 18, 27727–27737 (2024).

Gao, Q. et al. Physical origin of planar linear dichroism in van der Waals semiconductors using main group elements. Sci. China Inf. Sci. 67, 222402 (2024).

Zhang, F. et al. Bandgap engineering of BiIns Nanowire for wide‐spectrum, high‐responsivity, and polarimetric‐sensitive detection. Adv. Funct. Mater. 33, 2306077 (2023).

Wang, X. et al. Polarizer-free polarimetric image sensor through anisotropic two-dimensional GeSe. Sci. China Mater. 64, 1230–1237 (2021).

Wu, W.-Q., Wang, C.-F., Han, S.-T. & Pan, C.-F. Recent advances in imaging devices: Image sensors and neuromorphic vision sensors. Rare Met. 43, 5487–5515 (2024).

Van Essen, D. C., Anderson, C. H. & Felleman, D. J. Information processing in the primate visual system: an integrated systems perspective. Science 255, 419–423 (1992).

Priebe, N. J. & Ferster, D. Inhibition, spike threshold, and stimulus selectivity in primary visual cortex. Neuron 57, 072901 (2008).

Kravitz, D. J., Saleem, K. S., Baker, C. I., Ungerleider, L. G. & Mishkin, M. The ventral visual pathway: an expanded neural framework for the processing of object quality. Trends Cogn. Sci. 17, 26–49 (2013).

Tamietto, M. & Morrone, M. C. Visual plasticity: blindsight bridges anatomy and function in the visual system. Curr. Biol. 26, R70–R73 (2016).

Peng, Z. et al. Multifunctional human visual pathway-replicated hardware based on 2D materials. Nat. Commun. 15, 8650 (2024).

Baden, T., Euler, T. & Berens, P. Understanding the retinal basis of vision across species. Nat. Rev. Neurosci. 21, 5–20 (2020).

Solomon, S. G. & Lennie, P. The machinery of colour vision. Nat. Rev. Neurosci. 8, 276–286 (2007).

Chen, X., Wang, F., Fernandez, E. & Roelfsema, P. R. Shape perception via a high-channel-count neuroprosthesis in monkey visual cortex. Science 370, 1191–1196 (2020).

Zheng, Y., Jia, S., Yu, Z., Liu, J. K. & Huang, T. Unraveling neural coding of dynamic natural visual scenes via convolutional recurrent neural networks. Patterns 2, 100350 (2021).

Zhang, S. & Wang, Z. Artificial cat eye camera for objects detection against complex backgrounds and varied lighting. J. Semicond. 45, 120401–120403 (2024).

Ran, W., Wang, Z. & Shen, G. Artificial hawk-eye camera for foveated, tetrachromatic, and dynamic vision. J. Semicond. 45, 090401–090403 (2024).

Bhattacharya, K., Kumar, M. & Das, S. Spike-Afferent Visual Optoelectronic Nerve (SAVON) for Retinomorphic Sensing Applications. IEEE J. Electron Devices Soc. https://doi.org/10.1109/JEDS.2024.3492101 (2024).

Shao, J. et al. Artificial sensory neurons and their applications. J. Semicond. 46, 011606 (2025).

Kim, Y. et al. Two-terminal neuromorphic devices for spiking neural networks: neurons, synapses, and array integration. ACS Nano 18, 34531–34571 (2024).

Chen, Y. et al. Revolutionizing neuromorphic computing with memristor-based artificial neurons. J. Semicond. 46, 1–11 (2025).

Ren, Q. et al. Optoelectronic devices for in‐sensor computing. Adv. Mater. 2407476 (2024).

Wang, X. et al. Vertically integrated spiking cone photoreceptor arrays for color perception. Nat. Commun. 14, 3444 (2023).

Choi, H. et al. Spiking neural network integrated with impact ionization field‐effect transistor neuron and a ferroelectric field‐effect transistor synapse. Adv. Mater. 2406970 (2024).

Pickett, M. D., Medeiros-Ribeiro, G. & Williams, R. S. A scalable neuristor built with Mott memristors. Nat. Mater. 12, 114–117 (2013).

Beck, M. E. et al. Spiking neurons from tunable Gaussian heterojunction transistors. Nat. Commun. 11, 1565 (2020).

Xu, L. et al. Ultrasensitive dim-light neuromorphic vision sensing via momentum-conserved reconfigurable van der Waals heterostructure. Nat. Commun. 15, 9011 (2024).

Ielmini, D. & Wong, H.-S. P. In-memory computing with resistive switching devices. Nat. Electron. 1, 333–343 (2018).

Prezioso, M. et al. Training and operation of an integrated neuromorphic network based on metal-oxide memristors. Nature 521, 61–64 (2015).

Cui, B. et al. Ferroelectric photosensor network: an advanced hardware solution to real-time machine vision. Nat. Commun. 13, 1707 (2022).

Tan, H. et al. An optoelectronic resistive switching memory with integrated demodulating and arithmetic functions. Adv. Mater. 27, 2797–2803 (2015).

Hu, D.-C., Yang, R., Jiang, L. & Guo, X. Memristive synapses with photoelectric plasticity realized in ZnO1–x/AlOy heterojunction. ACS Appl. Mater. interfaces 10, 6463–6470 (2018).

Huang, H. et al. Fully integrated multi-mode optoelectronic memristor array for diversified in-sensor computing. Nat. Nanotechnol. 20, 93–103 (2025).

Wang, T.-Y. et al. Reconfigurable optoelectronic memristor for in-sensor computing applications. Nano Energy 89, 106291 (2021).

Li, T. et al. Reconfigurable, non-volatile neuromorphic photovoltaics. Nat. Nanotechnol. 18, 1303–1310 (2023).

Fu, X. et al. Graphene/MoS2-xOx/graphene photomemristor with tunable non-volatile responsivities for neuromorphic vision processing. Light Sci. Appl. 12, 39 (2023).

Song, Y. et al. Fatigue of ferroelectric field effect transistor: mechanisms and optimization strategies. J. Semicond. 46, 1–14 (2025).

Tong, L. et al. 2D materials–based homogeneous transistor-memory architecture for neuromorphic hardware. Science 373, 1353–1358 (2021).

Kumar, M. & Suri, M. Emerging non-volatile memory (NVM) technologies based nano-oscillators: Materials to applications. J. Appl. Phy. 136, 160701 (2024).

Uk Lee, D., Jun Lee, H., Kyu Kim, E., You, H.-W. & Cho, W.-J. Low operation voltage and high thermal stability of a WSi2 nanocrystal memory device using an Al2O3/HfO2/Al2O3 tunnel layer. Appl. Phys. Lett. 100, 072901 (2012).

Suh, D. C. et al. Improved thermal stability of Al2O3/HfO2/Al2O3 high-k gate dielectric stack on GaAs. Appl. Phys. Lett. 96, 142112 (2010).

Wang, Y. et al. Photonic synapses based on inorganic perovskite quantum dots for neuromorphic computing. Adv. Mater. 30, 1802883 (2018).

Huang, H. et al. Electrolyte-gated transistors for neuromorphic applications. J. Semicond. 42, 013103 (2021).

Xie, D. et al. Coplanar multigate MoS2 electric-double-layer transistors for neuromorphic visual recognition. ACS Appl. Mater. Interfaces 10, 25943–25948 (2018).

Mennel, L. et al. Ultrafast machine vision with 2D material neural network image sensors. Nature 579, 62–66 (2020).

Pan, C. et al. Reconfigurable logic and neuromorphic circuits based on electrically tunable two-dimensional homojunctions. Nat. Electron. 3, 383–390 (2020).

Zhang, G.-X. et al. Broadband sensory networks with locally stored responsivities for neuromorphic machine vision. Sci. Adv. 9, eadi5104 (2023).

Moon, J. et al. Temporal data classification and forecasting using a memristor-based reservoir computing system. Nat. Electron. 2, 480–487 (2019).

Lukoševičius, M. & Jaeger, H. Reservoir computing approaches to recurrent neural network training. Comput. Sci. Rev. 3, 127–149 (2009).

Liang, X. et al. Physical reservoir computing with emerging electronics. Nat. Electron. 7, 193–206 (2024).

Cucchi, M., Abreu, S., Ciccone, G., Brunner, D. & Kleemann, H. Hands-on reservoir computing: a tutorial for practical implementation. Neuromorph. Comput. Eng. 2, 032002 (2022).

Kim, J. et al. Analog reservoir computing via ferroelectric mixed phase boundary transistors. Nat. Commun. 15, 9147 (2024).

Gallego, G. et al. Event-based vision: A survey. IEEE Trans. Pattern Anal. Mach. Intell. 44, 154–180 (2020).

Roy, K., Jaiswal, A. & Panda, P. Towards spike-based machine intelligence with neuromorphic computing. Nature 575, 607–617 (2019).

Davies, M. et al. Advancing neuromorphic computing with Loihi: A survey of results and outlook. Proc. IEEE 109, 911–934 (2021).

Pfeiffer, M. & Pfeil, T. Deep learning with spiking neurons: opportunities and challenges. Front. Neurosci. 12, 774 (2018).

Zhang, W. et al. Neuro-inspired computing chips. Nat. Electron. 3, 371–382 (2020).

Li, F. et al. An artificial visual neuron with multiplexed rate and time-to-first-spike coding. Nat. Commun. 15, 3689 (2024).

Wang, F. et al. A two-dimensional mid-infrared optoelectronic retina enabling simultaneous perception and encoding. Nat. Commun. 14, 1938 (2023).

Wu, Y. et al. CMOS-compatible retinomorphic Si photodetector for motion detection. Sci. China Inf. Sci. 66, 162401 (2023).

Wu, S. E. et al. Retinomorphic motion detector fabricated with organic infrared semiconductors. Adv. Sci. 10, 2304688 (2023).

Liao, F., Zhou, F. & Chai, Y. Neuromorphic vision sensors: Principle, progress and perspectives. J. Semicond. 42, 013105 (2021).

Zhou, Y. et al. Computational event-driven vision sensors for in-sensor spiking neural networks. Nat. Electron. 6, 870–878 (2023).

Zhou, Y. et al. A 2T2R1C vision cell with 140 dB dynamic range and event-driven characteristics for in-sensor spiking neural network. In International Electron Devices Meeting 31–34 (IEEE, 2022).

He, Z. et al. Perovskite retinomorphic image sensor for embodied intelligent vision. Sci. Adv. 11, eads2834 (2025).

Lee, D. et al. In-sensor image memorization and encoding via optical neurons for bio-stimulus domain reduction toward visual cognitive processing. Nat. Commun. 13, 5223 (2022).

Dang, B. et al. One‐phototransistor–one‐memristor array with high‐linearity light‐tunable weight for optic neuromorphic computing. Adv. Mater. 35, 2204844 (2023).

Pendurthi, R. et al. Monolithic three-dimensional integration of complementary two-dimensional field-effect transistors. Nat. Nanotechnol. 19, 970–977 (2024).

Jayachandran, D., Sakib, N. U. & Das, S. 3D integration of 2D electronics. Nat. Rev. Electr. Eng. 1, 300–316 (2024).