Abstract

Generative artificial intelligence has brought disruptive innovations in health care but faces certain challenges. Retrieval-augmented generation (RAG) enables models to generate more reliable content by leveraging the retrieval of external knowledge. In this perspective, we analyze the possible contributions that RAG could bring to health care in equity, reliability, and personalization. Additionally, we discuss the current limitations and challenges of implementing RAG in medical scenarios.

Introduction

Generative artificial intelligence (AI) has recently attracted widespread attention across various fields, including the GPT1,2 and LLaMA3,4 series for text generation, DALL-E5 for image generation, as well as Sora6 for video generation. In health care systems, generative AI holds promise for applications in consulting, diagnosis, treatment, management, and education7,8. Additionally, the utilization of generative AI could enhance the quality of health services for patients while alleviating the workload for clinicians8,9,10.

Despite this, it is crucial to acknowledge the inherent limitations of generative AI models, which include susceptibility to biases from pre-training data11, lack of transparency, the potential to generate incorrect content, and difficulty in maintaining up-to-date knowledge, among others7. For instance, large language models (LLMs) were shown to generate biased responses by adopting outdated race-based equations to estimate renal function12. In the process of image generation, biases related to gender, skin tone, and geo-cultural factors have been observed13. Similarly, for downstream tasks such as question answering, LLM-generated content is often factually inconsistent and lacks evidence for verification14. Moreover, due to their static knowledge and inability to access external data, generative AI models are unable to provide up-to-date clinical advice for physicians or effective personalized health management for patients15.

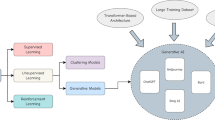

In tackling these challenges, retrieval-augmented generation (RAG) has been explored as a potential solution16,17. By providing models with externally retrieved data, RAG can enhance the reliability of generated content. A typical RAG framework consists of three parts (Fig. 1): indexing, retrieval, and generation. In the indexing stage, external data is split into chunks, encoded into vectors, and stored in a vector database. In the retrieval stage, the user’s query is encoded into a vector, and the most relevant information is retrieved by computing the similarity between the query vector and the vectors stored in the database. Retrieval is not limited to dense methods: sparse and hybrid approaches are also used, and reranking methods can further improve the relevance of the retrieved content. In the generation stage, the user’s query and the retrieved information are combined into a prompt from which the model generates its response. Compared with fine-tuning a model for a specific task, RAG is generally more computationally efficient and has been shown to improve accuracy on knowledge-intensive tasks18, offering a more flexible paradigm for updating models and integrating them with other AI techniques.

Fig. 1: In the indexing stage, external data is first encoded into vectors and stored in the vector database (vectors are mathematical representations of various types of data in a high-dimensional space). In the retrieval stage, when receiving a user query, the retriever searches the vector database for the most relevant information. In the generation stage, both the user’s query and the retrieved information are used to prompt the model to generate content.
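The three stages can be sketched in a few lines of Python. This is a minimal, self-contained sketch under stated assumptions rather than a production pipeline: a sparse term-frequency vector stands in for a neural embedding model, an in-memory list stands in for the vector database, and generation is represented only by the prompt that would be passed to a language model. The names `chunk_text`, `embed`, `VectorStore`, and `build_prompt`, and the example guideline texts, are hypothetical.

```python
import math
import re
from collections import Counter


def chunk_text(text: str, max_words: int = 40) -> list[str]:
    """Indexing: split a document into fixed-size windows of words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text: str) -> Counter:
    """Toy encoder (assumption): a sparse term-frequency vector.
    A real system would call a dense embedding model here."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class VectorStore:
    """In-memory stand-in for a vector database (hypothetical)."""

    def __init__(self) -> None:
        self.entries: list[tuple[str, Counter]] = []

    def index(self, documents: list[str]) -> None:
        # Indexing: chunk each document, encode each chunk, store (chunk, vector).
        for doc in documents:
            for chunk in chunk_text(doc):
                self.entries.append((chunk, embed(chunk)))

    def retrieve(self, query: str, k: int = 3) -> list[str]:
        # Retrieval: encode the query and rank stored chunks by similarity.
        q = embed(query)
        ranked = sorted(self.entries, key=lambda entry: cosine(q, entry[1]), reverse=True)
        return [chunk for chunk, _ in ranked[:k]]


def build_prompt(query: str, contexts: list[str]) -> str:
    """Generation: combine the query and retrieved chunks into the model prompt."""
    sources = "\n".join(f"[{i + 1}] {c}" for i, c in enumerate(contexts))
    return (
        "Answer using only the numbered sources and cite them.\n"
        f"Sources:\n{sources}\n\nQuestion: {query}\nAnswer:"
    )


if __name__ == "__main__":
    store = VectorStore()
    store.index([
        "Guideline A (hypothetical): blood pressure targets for adults with hypertension.",
        "Guideline B (hypothetical): vaccination schedules for children.",
    ])
    question = "What blood pressure target applies to adults?"
    print(build_prompt(question, store.retrieve(question, k=1)))
```

Replacing `embed` with a dense encoder, or fusing its scores with a sparse ranker, would give the dense and hybrid variants mentioned above; a reranker would reorder the candidates before truncation to `k`.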

In this perspective, we discuss the role of RAG in the context of generative AI, particularly its possible applications within health care. We examine RAG’s possible contributions from three aspects: equity, reliability, and personalization (Fig. 2). Additionally, we explore the limitations of RAG in medical application scenarios, emphasizing the need for further research to understand the impact of its implementation within health systems19.

Fig. 2: Generative AI has limitations such as bias reproduction, lack of transparency, inaccurate information, and static knowledge, which hinder its further application in health care. Retrieval-augmented generation has the potential to alleviate these issues and drive medical innovation from the perspectives of equity, reliability, and personalization.

Promoting health equity in generative AI applications

Bias reduction

The content generated by generative AI models could perpetuate biases inherent in the pre-training data, which are reflected in aspects including demographic characteristics, political ideologies, and sexual orientations12,13,20. Such biases can not only lead to unfair diagnoses and treatments but also exacerbate health inequalities for particular populations.

RAG is able to obtain information from external knowledge sources, including medical literature, clinical guidelines, and case reports, to optimize the output of generative AI models17. By retrieving information specific to certain subpopulations, the model could analyze a patient’s condition from multiple perspectives, potentially reducing the risk of bias contained in the generated content. For instance, when targeting different gender groups, RAG could retrieve research findings on their specific physiological patterns, common disease spectra, clinical manifestations, as well as related recommendations on clinical practice21,22,23. Similarly, for different ethnic groups, RAG enables access to research reports involving their genetic, environmental, and lifestyle factors, to understand differences in disease incidence rates and unique symptom presentations24. Furthermore, for other specific subpopulations (such as different age groups, socioeconomic statuses, etc.), RAG can retrieve tailored medical evidence to help comprehensively understand their unique health needs25. Although there remain challenges in ensuring access to high-quality data for underrepresented groups, RAG offers possible solutions to mitigate these issues.
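One way to prefer subpopulation-specific evidence is to tag indexed chunks with population metadata and use those tags at retrieval time. The sketch below assumes such tags exist; the `EvidenceChunk` type, the tag keys, and the scoring rule are hypothetical. Chunks whose tags contradict the patient are excluded, chunks matching more attributes rank higher, and untagged general evidence fills the remaining slots.

```python
from dataclasses import dataclass, field


@dataclass
class EvidenceChunk:
    text: str
    # Hypothetical population tags, e.g. {"sex": "female", "age_group": "65+"}.
    population: dict[str, str] = field(default_factory=dict)


def population_match(chunk: EvidenceChunk, patient: dict[str, str]) -> int:
    """Count tags that agree with the patient; -1 if any tag contradicts the patient.
    Tags for attributes the patient record does not contain are treated as neutral."""
    score = 0
    for key, value in chunk.population.items():
        if key in patient:
            if patient[key] != value:
                return -1
            score += 1
    return score


def retrieve_for_patient(chunks: list[EvidenceChunk], patient: dict[str, str], k: int = 3) -> list[EvidenceChunk]:
    """Rank subgroup-specific evidence first; general (untagged) evidence follows."""
    scored = [(population_match(c, patient), c) for c in chunks]
    eligible = [(s, c) for s, c in scored if s >= 0]
    eligible.sort(key=lambda sc: sc[0], reverse=True)
    return [c for _, c in eligible[:k]]
```

In a full system this filter would typically be combined with the similarity ranking from the vector search rather than replace it.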

Disparity mitigation

Health disparities present additional challenges for marginalized groups in accessing medical resources and health services, potentially hindering the achievement of health equity. Although generative AI models are trained on extensive data, the pre-training data itself represents different groups unevenly. For example, 92.64% of the pre-training corpus of GPT-3 is derived from English sources, resulting in limited coverage of communities that use other languages1. This skew could make it challenging to meet the medical needs of underrepresented groups.

Collecting data specific to these underrepresented populations and incorporating it into the RAG system holds the potential to mitigate disparities in health care. Specifically, in low-resource regions, the RAG system might leverage knowledge that integrates local medical research literature, clinical guidelines, and practical experience to provide more relevant diagnostic and treatment advice to local residents26. While some regional guidelines may not be digitized, audio and image recognition technologies could convert this information into digital format, creating region-specific contextual databases27. Similarly, by developing high-quality multilingual medical knowledge bases, RAG can play an important role in cross-language information retrieval and knowledge integration, with the potential to reduce barriers posed by language differences. However, even the most advanced LLMs currently support only a limited number of mainstream languages, which limits the effectiveness of RAG in multilingual environments, particularly for languages in low-resource settings28. Additionally, RAG systems can retrieve pre-collected materials and present them in various formats, such as text, images, and videos, to facilitate patient education. This helps explain complex medical concepts to patients with diverse educational and cultural backgrounds29.
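A region-specific knowledge base can sit alongside global sources, with retrieval preferring local material in the user’s language. The sketch below is one possible prioritization rule, assumed for illustration; the `Source` fields, the region codes, and the `"global"` marker are hypothetical. It also returns a flag showing whether any local evidence was found.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Source:
    text: str
    region: str    # hypothetical region code; "global" marks non-local sources
    language: str  # ISO 639-1 code, e.g. "en" or "sw"


def priority(source: Source, region: str, language: str) -> Optional[int]:
    """Lower is better; None excludes sources from other regions."""
    if source.region == region:
        return 0 if source.language == language else 1
    if source.region == "global":
        return 2
    return None


def select_sources(sources: list[Source], region: str, language: str, k: int = 3) -> tuple[list[Source], bool]:
    """Local sources in the user's language first, then other local sources, then global ones.
    The returned flag reports whether any local evidence was selected."""
    ranked = []
    for position, source in enumerate(sources):
        p = priority(source, region, language)
        if p is not None:
            ranked.append((p, position, source))
    ranked.sort(key=lambda item: (item[0], item[1]))
    chosen = [source for _, _, source in ranked[:k]]
    has_local = any(source.region == region for source in chosen)
    return chosen, has_local
```

When the flag is false, an application could tell the user that the answer rests on non-local evidence, which is the situation the Discussion describes for groups lacking high-quality data.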

Generating reliable content

Mistake alleviation

One significant challenge of generative AI models in health care is their potential to generate incorrect or unfaithful information7,8. Although some models, such as Med-PaLM 2 and Med-Gemini, have been specifically trained on large amounts of medical data, “hallucination” has not been eliminated29,30. This issue is particularly sensitive, since any false information related to disease diagnosis, treatment plans, or medication guidance is likely to cause serious harm to patients31.

For example, medication errors are a major category of medical mistakes, resulting in numerous patient fatalities each year32,33. When prescription instructions are converted into a standard format, pharmacy technicians may incorrectly record the dosage, frequency, or route of administration32. Additionally, when patients transfer medications from their original packaging to other containers, it becomes difficult for pharmacists to recognize the medications, which could lead to omission errors33. Given that electronic health record recommendations and alerts are often imprecise, and traditional natural language processing methods require extensive human annotation, generative AI offers an attractive solution. However, generative AI models sometimes generate incorrect drug information themselves, causing further harm. RAG might help to address some of these issues. By retrieving relevant drug information, a RAG system could automatically parse prescriptions at the data entry stage and generate more accurate medication guidance, thereby reducing errors introduced when information is transcribed. Moreover, for drug identification, a multimodal RAG system could recognize the appearance features of drugs, such as color, shape, and imprints. By matching these characteristics against database information, the RAG system could generate reliable drug information as a reference for pharmacists, thereby improving the efficiency of drug identification. However, it is crucial to emphasize that these applications are still at an early stage of development and require thorough validation before implementation.
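As a rough illustration of structuring prescription directions at data entry, the sketch below parses a narrow family of direction strings into dose, unit, route, and frequency, then checks them against a retrieved drug record. It deliberately swaps in regular expressions for the language model described above; the vocabularies, the record fields (`routes`, `max_units_per_day`), and the drug names are hypothetical placeholders, not clinical data.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical vocabularies; a deployed system would retrieve these from a curated source.
ROUTES = {"by mouth": "oral", "orally": "oral", "under the tongue": "sublingual"}
FREQUENCIES = {"once": 1, "twice": 2, "three times": 3, "four times": 4}
NUMBER_WORDS = {"one": 1, "two": 2, "three": 3}

PATTERN = re.compile(
    r"take (?P<dose>\d+|one|two|three) (?P<unit>tablet|capsule)s? "
    r"(?P<route>by mouth|orally|under the tongue) "
    r"(?P<freq>once|twice|three times|four times) daily",
    re.IGNORECASE,
)


@dataclass
class Direction:
    dose: int
    unit: str
    route: str
    times_per_day: int


def parse_direction(text: str) -> Optional[Direction]:
    """Map free-text directions to a standard structure; None means 'send to a pharmacist'."""
    m = PATTERN.search(text)
    if m is None:
        return None
    dose = m["dose"].lower()
    return Direction(
        dose=int(dose) if dose.isdigit() else NUMBER_WORDS[dose],
        unit=m["unit"].lower(),
        route=ROUTES[m["route"].lower()],
        times_per_day=FREQUENCIES[m["freq"].lower()],
    )


def check_against_record(direction: Direction, record: dict) -> list[str]:
    """Compare parsed directions with a retrieved drug record (hypothetical fields)."""
    issues = []
    if direction.route not in record["routes"]:
        issues.append(f"route '{direction.route}' not listed for {record['name']}")
    daily_units = direction.dose * direction.times_per_day
    if daily_units > record["max_units_per_day"]:
        issues.append(f"{daily_units} units/day exceeds listed maximum of {record['max_units_per_day']}")
    return issues
```

Returning `None` for anything outside the pattern, instead of guessing, keeps unfamiliar directions in the hands of a pharmacist.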

Transparency enhancement

The “black box” nature of generative AI models makes it difficult to explain how specific diagnoses or treatment recommendations are derived. This lack of transparency not only undermines the trust of physicians and patients in the generated content but, more importantly, it may pose serious medical risks and ethical concerns. Although some research has attempted to enhance models’ reasoning abilities and transparency through approaches like chain of thought34, multi-agent discussion35, and post-hoc attribution36, there are still limitations in medical applications37.

In comparison, RAG is able to retrieve traceable medical facts from external knowledge bases, promoting the generation of more transparent content, although this process still requires manual verification38. In assisting clinical decision-making, RAG may provide the sources of information on which its diagnoses are based, including clinical guidelines, medical evidence, and clinical cases. By categorizing queries into simple factual searches or multi-step reasoning processes, RAG can further clarify how different types of information contribute to a given recommendation, enhancing the transparency of its decision-making. Additionally, some studies use external medical knowledge graphs (such as the Unified Medical Language System) or self-constructed knowledge graphs to enhance the diagnostic capabilities of models14,39. Given a query, the RAG system first identifies relevant nodes in the knowledge graph, such as diseases, symptoms, or medications, and then retrieves both direct relations and multi-hop paths connecting these nodes. This allows the system to extract structured, relevant knowledge efficiently and use it to provide clear diagnostic explanations14.
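The node-then-path procedure can be illustrated with a breadth-first search over a toy graph. This is a generic sketch, not the specific method of the cited studies: entity linking is reduced to matching node labels against query words, and the triples, node names, and function names are hypothetical placeholders rather than curated medical knowledge.

```python
import re
from collections import deque

# Toy knowledge graph of (head, relation, tail) triples. Node names and edges are
# hypothetical placeholders, not curated medical facts.
TRIPLES = [
    ("symptom:S1", "is_symptom_of", "disease:D1"),
    ("disease:D1", "treated_by", "drug:M1"),
    ("drug:M1", "interacts_with", "drug:M2"),
    ("symptom:S2", "is_symptom_of", "disease:D2"),
]


def build_adjacency(triples: list[tuple[str, str, str]]) -> dict[str, list[tuple[str, str]]]:
    """Index edges in both directions so paths can be followed either way."""
    adj: dict[str, list[tuple[str, str]]] = {}
    for head, rel, tail in triples:
        adj.setdefault(head, []).append((rel, tail))
        adj.setdefault(tail, []).append((f"inverse_{rel}", head))
    return adj


def link_entities(query: str, adj: dict) -> list[str]:
    """Naive entity linking: a node matches if its label appears as a word in the query."""
    words = set(re.findall(r"\w+", query))
    return [node for node in adj if node.split(":", 1)[1] in words]


def find_paths(adj: dict, start: str, goal: str, max_hops: int = 3) -> list[list[tuple[str, str, str]]]:
    """Breadth-first search for simple paths of at most max_hops edges.
    A one-hop path is a direct relation; longer paths are multi-hop."""
    paths = []
    queue = deque([(start, [])])
    while queue:
        node, path = queue.popleft()
        if node == goal and path:
            paths.append(path)
            continue
        if len(path) == max_hops:
            continue
        visited = {start} | {tail for _, _, tail in path}
        for rel, nxt in adj.get(node, []):
            if nxt not in visited:
                queue.append((nxt, path + [(node, rel, nxt)]))
    return paths


def explain(path: list[tuple[str, str, str]]) -> str:
    """Render a path as a readable chain that can be shown alongside the answer."""
    text = path[0][0]
    for _, rel, tail in path:
        text += f" --{rel}--> {tail}"
    return text
```

For the query "Patient with S1 who takes M2", the linked nodes are `symptom:S1` and `drug:M2`, and the single three-hop path renders as `symptom:S1 --is_symptom_of--> disease:D1 --treated_by--> drug:M1 --interacts_with--> drug:M2`, the kind of explicit chain a clinician could inspect.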

Personalizing health care services

Health management

RAG also shows potential for personalized health care management. Generative AI models lack the ability to incorporate personal information, making it difficult to offer effective health services8. For example, they may not be aware of a user’s allergies and recommend allergenic foods. In contrast, the RAG system could integrate health data and lifestyle habits of individuals to build a comprehensive personal profile, which might enable more customized health guidance.
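The allergy example can be made concrete with a small guard that uses a retrieved personal profile twice: once to build prompt context and once to screen the model’s suggestions afterwards. This is a sketch under assumptions; the `PersonalProfile` fields and the `INGREDIENTS` lookup are hypothetical, and items with unknown ingredients are conservatively held back rather than passed through.

```python
from dataclasses import dataclass, field


@dataclass
class PersonalProfile:
    # Hypothetical fields; a real profile would be assembled from consented records.
    allergies: set[str] = field(default_factory=set)
    conditions: set[str] = field(default_factory=set)


# Illustrative ingredient lookup (hypothetical entries, not a nutrition database).
INGREDIENTS = {
    "peanut butter toast": {"peanut", "wheat"},
    "oatmeal with berries": {"oat", "berries"},
    "shrimp salad": {"shrimp", "lettuce"},
}


def profile_context(profile: PersonalProfile) -> str:
    """Serialize retrieved profile facts so they can be placed in the prompt."""
    allergies = ", ".join(sorted(profile.allergies)) or "none recorded"
    conditions = ", ".join(sorted(profile.conditions)) or "none recorded"
    return f"Known allergies: {allergies}. Conditions: {conditions}."


def filter_suggestions(suggestions: list[str], profile: PersonalProfile) -> tuple[list[str], list[str]]:
    """Split generated suggestions into (safe, held for review).
    Held: items containing a listed allergen, or items with unknown ingredients."""
    safe, held = [], []
    for item in suggestions:
        ingredients = INGREDIENTS.get(item)
        if ingredients is None or ingredients & profile.allergies:
            held.append(item)
        else:
            safe.append(item)
    return safe, held
```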

For patients, by connecting their medical records and clinical data while allowing for real-time updates, the RAG system could provide more precise health management guidance. For instance, for patients with chronic conditions who need to take multiple medications long-term, the system can generate medication reminders according to physicians’ prescriptions, helping patients take their medications correctly and on time and thereby improving medication adherence. For the public, the RAG system can analyze personal health data, lifestyle, environmental factors, and genetic information (if individual users grant access) to identify potential health risks. In this way, the RAG system provides personalized health recommendations, including diet, exercise, and stress management, promoting disease prevention. For example, for individuals with a high genetic risk of heart disease, the system could recommend specific dietary plans and appropriate exercise regimens to reduce the risk of eventually developing the disease.
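Reminder generation from a prescription can be sketched as a simple scheduling function. The even-spacing rule, the 08:00 first dose, and the 12-hour waking window below are assumptions chosen for illustration, and `Prescription` is a hypothetical type; real schedules must follow the prescriber’s instructions (for example, with food or at bedtime).

```python
from dataclasses import dataclass
from datetime import date, datetime, time, timedelta


@dataclass
class Prescription:
    drug: str
    times_per_day: int
    days: int


def reminder_times(rx: Prescription, start: date,
                   first_dose: time = time(8, 0), waking_hours: int = 12) -> list[datetime]:
    """Spread doses evenly from first_dose across an assumed waking window, for rx.days days."""
    if rx.times_per_day < 1:
        raise ValueError("times_per_day must be at least 1")
    gap = timedelta(hours=waking_hours / max(rx.times_per_day - 1, 1))
    schedule = []
    for day in range(rx.days):
        first = datetime.combine(start + timedelta(days=day), first_dose)
        schedule.extend(first + i * gap for i in range(rx.times_per_day))
    return schedule
```

With these defaults, a twice-daily prescription yields reminders at 08:00 and 20:00, and a three-times-daily one at 08:00, 14:00, and 20:00.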

Precision medicine

Precision medicine aims to maximize medical effectiveness and patient benefits by tailoring treatment strategies according to a patient’s genetic profile, environmental influences, lifestyle, and other individual factors40. Although current generative AI models have demonstrated potential to assist in clinical decision-making35,41, they still face challenges in precision medicine42, as they struggle to utilize highly individualized patient data to provide precise treatment recommendations.

RAG might offer unique advantages for advancing precision medicine. By retrieving a patient’s complex clinical and molecular data, a RAG system could help physicians develop more accurate and personalized treatment plans tailored to each patient43. For example, generative AI models typically provide similar general clinical advice to cancer patients exhibiting similar signs and symptoms. In reality, however, these patients often have different disease progression and prognoses due to differences in their biomarkers (e.g., DNA, RNA, proteins, metabolites, host cells, and microbiomes)44. Although collecting and protecting such sensitive data remains a challenge, RAG could better leverage this information for precision medicine practices. Specifically, the RAG system may be able to comprehensively analyze a patient’s biomarkers, classify the patient into a more granular subgroup, and recommend appropriate personalized treatment plans to physicians based on established clinical guidelines.
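The subgroup-then-guideline step can be sketched as set containment: a patient’s biomarker states define the subgroup, and a guideline entry applies when all of its required states are present. The marker names, guideline entries, and `GuidelineEntry` type are hypothetical placeholders, not real clinical recommendations, and the output is intended for physician review.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GuidelineEntry:
    # Hypothetical entry: the (biomarker, state) pairs required for it to apply.
    requires: frozenset[tuple[str, str]]
    recommendation: str
    source: str


def subgroup_key(biomarkers: dict[str, str]) -> frozenset[tuple[str, str]]:
    """Represent a patient's subgroup as the set of (biomarker, state) pairs."""
    return frozenset(biomarkers.items())


def matching_guidelines(biomarkers: dict[str, str], guidelines: list[GuidelineEntry]) -> list[GuidelineEntry]:
    """Entries whose required states are all present, most specific first."""
    key = subgroup_key(biomarkers)
    hits = [g for g in guidelines if g.requires <= key]
    return sorted(hits, key=lambda g: len(g.requires), reverse=True)
```

Ordering by the number of matched requirements surfaces the most granular subgroup first while keeping the broader recommendations, each with its `source`, visible for comparison.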

Discussion

RAG may enable better integration of generative AI into health systems and bring more innovative applications in consulting, diagnosis, treatment, management, and education. Despite the potential of RAG systems in health care, they also face significant limitations. First, the retrieval of external knowledge can introduce additional biases, since the sources themselves might contain biases. Second, due to the lack of sufficient high-quality information on underrepresented groups, RAG systems may become less effective in such cases, with the generated content relying more on the knowledge of the models themselves. As a result, minority groups are unlikely to benefit much from existing RAG systems. Third, although RAG systems can enhance transparency by providing evidence, determining which parts of a response are derived from which pieces of retrieved knowledge is difficult without human inspection. Meanwhile, possible knowledge conflicts between retrieved documents, or between retrieved documents and the model’s internal knowledge, highlight the importance of source validation, though effective implementation remains challenging45. Fourth, RAG systems face privacy risks, as sensitive information stored in retrieval databases can be extracted through specially crafted prompts. Implementing appropriate privacy protection mechanisms is crucial to mitigate the risk of information leakage in generated content, especially when handling sensitive medical information46. Therefore, we recommend multidisciplinary collaboration among clinicians, researchers, stakeholders, and regulators to explore how RAG can be used more equitably, reliably, and effectively to improve existing practices in health care. Such collaboration should focus on addressing practical challenges, including ensuring interoperability with EHR systems, building clinician trust, and providing adequate training for health care professionals to fully harness the potential of RAG47.
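One basic privacy mechanism is to redact identifiers before documents are chunked and indexed, so that they never enter the retrieval database and cannot be extracted by a crafted prompt. The sketch below assumes a handful of illustrative regular expressions (the `PATTERNS` table is hypothetical and far from exhaustive); production de-identification would require validated tools and human review.

```python
import re

# Illustrative patterns (assumptions): they cover only a few identifier formats.
# Order matters: dates and record numbers are replaced before the broad
# phone-number pattern can claim their digits.
PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "DATE": re.compile(r"\b\d{4}-\d{2}-\d{2}\b"),
    "MRN": re.compile(r"\bMRN[:\s]*\d+\b", re.IGNORECASE),
    "PHONE": re.compile(r"\+?\d[\d -]{7,}\d"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace identifiers with typed placeholders before chunking and indexing.
    Returns the redacted text and the number of replacements per category."""
    counts: dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        text, counts[label] = pattern.subn(f"[{label}]", text)
    return text, counts
```

Typed placeholders such as `[DATE]` keep the redacted text readable for retrieval while the per-category counts give an audit trail of what was removed.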

Data availability

No datasets were generated or analysed during the current study.

References

Brown, T. et al. Language models are few-shot learners. Adv. Neural Inf. Process. Syst. 33, 1877–1901 (2020).

OpenAI et al. GPT-4 Technical Report. Preprint at arXiv. https://doi.org/10.48550/ARXIV.2303.08774 (2023).

Touvron, H. et al. LLaMA: Open and Efficient Foundation Language Models. Preprint at arXiv. https://doi.org/10.48550/ARXIV.2302.13971 (2023).

Touvron, H. et al. Llama 2: Open Foundation and Fine-Tuned Chat Models. Preprint at arXiv. https://doi.org/10.48550/ARXIV.2307.09288 (2023).

OpenAI. DALL·E 3. https://openai.com/index/dall-e-3/.

OpenAI. Sora. https://openai.com/index/sora/.

Thirunavukarasu, A. J. et al. Large language models in medicine. Nat. Med. 29, 1930–1940 (2023).

Yang, R. et al. Large language models in health care: development, applications, and challenges. Health Care Science 2, 255–263 (2023).

Roberts, K. Large language models for reducing clinicians’ documentation burden. Nat. Med. 30, 942–943 (2024).

Chen, S. et al. The effect of using a large language model to respond to patient messages. The Lancet Digit. Health 6, e379–e381 (2024).

Chen, S. et al. Cross-Care: assessing the healthcare implications of pre-training data on language model bias. Preprint at arXiv. https://doi.org/10.48550/ARXIV.2405.05506 (2024).

Omiye, J. A., Lester, J. C., Spichak, S., Rotemberg, V. & Daneshjou, R. Large language models propagate race-based medicine. npj Digit. Med. 6, 1–4 (2023).

Wan, Y. et al. Survey of bias in Text-to-Image generation: definition, evaluation, and mitigation. Preprint at arXiv. https://doi.org/10.48550/ARXIV.2404.01030 (2024).

Yang, R. et al. KG-Rank: Enhancing large language models for medical QA with knowledge graphs and ranking techniques. Proceedings of the 23rd Workshop on Biomedical Natural Language Processing 155–166 (Association for Computational Linguistics, Stroudsburg, PA, USA, 2024).

Kirk, H. R., Vidgen, B., Röttger, P. & Hale, S. A. The benefits, risks and bounds of personalizing the alignment of large language models to individuals. Nat. Mach. Intell. 6, 383–392 (2024).

Gilbert, S., Kather, J. N. & Hogan, A. Augmented non-hallucinating large language models as medical information curators. npj Digit. Med. 7, 1–5 (2024).

Zakka, C. et al. Almanac—Retrieval-Augmented Language Models for Clinical Medicine. NEJM AI. https://doi.org/10.1056/AIoa2300068 (2024).

Ovadia, O., Brief, M., Mishaeli, M. & Elisha, O. Fine-tuning or retrieval? Comparing knowledge injection in LLMs. Preprint at arXiv. https://doi.org/10.48550/ARXIV.2312.05934 (2023).

Yang, R. et al. Disparities in clinical studies of AI enabled applications from a global perspective. npj Digit. Med. 7, 209 (2024).

Ayoub, N. F. et al. Inherent bias in large language models: a random sampling analysis. Mayo Clin. Proc. Digit. Health 2, 186–191 (2024).

Haupt, S., Carcel, C. & Norton, R. Neglecting sex and gender in research is a public-health risk. Nature. https://doi.org/10.1038/d41586-024-01372-2 (2024).

Narasimhan, M. et al. Self-care interventions for women’s health and well-being. Nat. Med. 30, 660–669 (2024).

Vieira Machado, C., Araripe Ferreira, C. & de Souza Mendes Gomes, M. A. Promoting gender equity in the scientific and health workforce is essential to improve women’s health. Nat. Med. 30, 937–939 (2024).

Rebbeck, T. R., Mahal, B., Maxwell, K. N., Garraway, I. P. & Yamoah, K. The distinct impacts of race and genetic ancestry on health. Nat. Med. 28, 890–893 (2022).

Lewis, C. V., Huebner, J., Hripcsak, G. & Sabatello, M. Underrepresentation of blind and deaf participants in the All of Us Research Program. Nat. Med. 29, 2742–2747 (2023).

Ferber, D. et al. GPT-4 for information retrieval and comparison of medical oncology guidelines. NEJM AI. https://doi.org/10.1056/AIcs2300235 (2024).

Acosta, J. N., Falcone, G. J., Rajpurkar, P. & Topol, E. J. Multimodal biomedical AI. Nat. Med. 28, 1773–1784 (2022).

Llama 3.2: Revolutionizing edge AI and vision with open, customizable models. Meta AI. https://ai.meta.com/blog/llama-3-2-connect-2024-vision-edge-mobile-devices/.

Saab, K. et al. Capabilities of Gemini models in medicine. Preprint at arXiv. https://doi.org/10.48550/ARXIV.2404.18416 (2024).

Singhal, K. et al. Towards expert-level medical question answering with large language models. Preprint at arXiv. https://doi.org/10.48550/ARXIV.2305.09617 (2023).

Yang, R. et al. Ascle—a Python natural language processing toolkit for medical text generation: development and evaluation study. J. Med. Internet Res. 26, e60601 (2024).

Pais, C. et al. Large language models for preventing medication direction errors in online pharmacies. Nat. Med. 30, 1574–1582 (2024).

Larios Delgado, N. et al. Fast and accurate medication identification. npj Digit. Med. 2, 1–9 (2019).

Liévin, V., Hother, C. E., Motzfeldt, A. G. & Winther, O. Can large language models reason about medical questions? Patterns 5, 100943 (2024).

Ke, Y. H. et al. Mitigating Cognitive Biases in Clinical Decision-Making Through Multi-Agent Conversations Using Large Language Models: Simulation Study. J. Med. Internet Res. 26, e59439 (2024).

Krishna, S. et al. Post hoc explanations of language models can improve language models. Adv. Neural Inf. Process. Syst. 36, 65468–65483 (2023).

Zhao, H. et al. Explainability for large language models: a survey. ACM Trans. Intell. Syst. Technol. 15, 1–38 (2024).

Kresevic, S. et al. Optimization of hepatological clinical guidelines interpretation by large language models: a retrieval augmented generation-based framework. npj Digit. Med. 7, 1–9 (2024).

Wu, J., Zhu, J. & Qi, Y. Medical graph RAG: towards safe medical Large Language Model via graph retrieval-augmented generation. Preprint at arXiv. https://doi.org/10.48550/ARXIV.2408.04187 (2024).

König, I. R., Fuchs, O., Hansen, G., von Mutius, E. & Kopp, M. V. What is precision medicine? Eur. Respir. J. 50, 1700391 (2017).

Liu, S. et al. Using AI-generated suggestions from ChatGPT to optimize clinical decision support. J. Am. Med. Inform. Assoc. 30, 1237–1245 (2023).

Truhn, D., Eckardt, J.-N., Ferber, D. & Kather, J. N. Large language models and multimodal foundation models for precision oncology. npj Precis. Oncol. 8, 1–4 (2024).

Benary, M. et al. Leveraging large language models for decision support in personalized oncology. JAMA Netw. Open 6, e2343689 (2023).

Vargas, A. J. & Harris, C. C. Biomarker development in the precision medicine era: lung cancer as a case study. Nat. Rev. Cancer 16, 525–537 (2016).

Yang, R. et al. Graphusion: a RAG framework for Knowledge Graph Construction with a global perspective. Preprint at arXiv. https://doi.org/10.48550/ARXIV.2410.17600 (2024).

Zeng, S. et al. The good and the bad: exploring privacy issues in retrieval-augmented generation (RAG). Preprint at arXiv. https://doi.org/10.48550/ARXIV.2402.16893 (2024).

Ning, Y. et al. Generative artificial intelligence and ethical considerations in health care: a scoping review and ethics checklist. Lancet Digit. Health. https://doi.org/10.1016/S2589-7500(24)00143-2 (2024).

Acknowledgements

This work was supported by the Duke-NUS Signature Research Program funded by the Ministry of Health, Singapore. Any opinions, findings and conclusions, or recommendations expressed in this material are those of the author(s) and do not reflect the views of the Ministry of Health.

Author information

Contributions

R.Y. and N.L. conceived the idea. R.Y. drafted the initial paper. N.L. supervised the work. All authors contributed to the interpretation of content, revisions, and final approval of the paper.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Yang, R., Ning, Y., Keppo, E. et al. Retrieval-augmented generation for generative artificial intelligence in health care. npj Health Syst. 2, 2 (2025). https://doi.org/10.1038/s44401-024-00004-1

DOI: https://doi.org/10.1038/s44401-024-00004-1
