Abstract

Large language model (LLM) chat tools have the potential to transform healthcare workflows by improving efficiency and reducing administrative burdens. While prior research has predominantly focused on clinicians, non-clinician healthcare staff constitute the majority of the workforce, and their real-world chat tool use remains uncharacterized. This retrospective, cross-sectional study analyzed de-identified chat logs from a secure, HIPAA-compliant LLM chat tool deployed at an academic medical center over an 11-month period. Among 30,503 chat threads analyzed, 98% originated from non-clinician users across 239 roles. Usage was dominated by administrative tasks including email and document writing (53.9%), text manipulation (9.1%), and brainstorming (6.7%). A notable proportion of interactions included off-label queries unrelated to work or organizational goals, including 5.9% involving clinical decision-making. These findings highlight the need for targeted training, tailored governance policies, and refined evaluation frameworks to optimize appropriate LLM use while mitigating risks in healthcare settings.

Introduction

Large language models (LLMs) are rapidly being integrated into healthcare1,2,3,4,5,6, with the potential to significantly transform clinical workflows, enhance decision support, and streamline administrative processes7. Recent studies highlight the promise of these advanced AI tools to improve efficiency8, reduce clinician burden9, and potentially enhance patient outcomes through more effective information management and communication support9,10,11,12. However, most existing research has focused on physicians and clinical personnel13, even though physicians represent only one in 25 employees in U.S. healthcare14. There remains limited empirical evidence regarding the specific LLM applications most commonly utilized by non-clinician healthcare staff, such as administrative assistants, case managers, and interpreters15.

Without clear insights into how non-clinicians interact with these tools, healthcare organizations face difficulties in optimizing implementations16, ensuring patient safety, and proactively managing emerging risks associated with AI-driven technologies15,17. To address this critical knowledge gap, our study provides a quantitative analysis of non-clinician usage logs from a secure LLM deployment within an academic medical center. This research aims to equip health system leaders, policymakers, and technology developers with actionable insights to maximize the benefits and mitigate the risks of integrating LLMs into health systems.

Results

Usage Trends Across Categories

A total of 30,503 chat threads were analyzed, with 26,691 threads originating from frequent users. Ten primary categories of LLM usage were identified: email and document writing, text manipulation, brainstorming, general information, medical questions, technical support, patient communication, coding, language translation, and image generation. These categories, along with their definitions and representative examples, are detailed in Table 1.

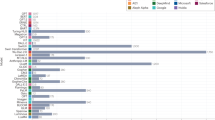

Analysis of usage patterns demonstrated that ‘Email & Document Writing’ tasks dominated, representing 53.9% of total activity. Other prominent categories included ‘Text Manipulation’ (9.1%), ‘Brainstorming’ (6.7%), ‘Handle Information Requests’ (6.1%), and ‘Answer Medical Knowledge Questions’ (5.9%). ‘Technical Support & IT Issues’ (4.4%), ‘Patient-Provider Messaging’ (3.5%), ‘Coding’ (2.6%), ‘Process Referrals/Documents’ (2.0%), and ‘Generate Visual Aids’ (0.8%) comprised the remainder of usage (Fig. 1).

Horizontal bars indicate the percentage of conversation threads assigned to each task category (x-axis, distribution of prompts [%]). Boxes on the left depict the corresponding MedHELM category and subcategory for each task, with connecting lines indicating the mapping.
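The category shares reported above are simple proportions of labeled threads. As a minimal sketch, assuming each thread carries exactly one category label, they could be computed as follows. The function name `category_shares` and the example labels are hypothetical and are not study data.

```python
from collections import Counter


def category_shares(labels):
    """Return {category: percent of threads}, ordered from most to least common."""
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {cat: round(100 * n / total, 1) for cat, n in counts.most_common()}


# Hypothetical labels for ten threads (illustrative only, not study data).
example_labels = (
    ["Email & Document Writing"] * 5
    + ["Text Manipulation"] * 2
    + ["Brainstorming"] * 2
    + ["Coding"]
)

shares = category_shares(example_labels)
for category, pct in shares.items():
    print(f"{category}: {pct}%")
```

Multi-intent threads would need either a primary-label rule or multi-label counting, which this sketch does not attempt.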

Summary of User Role Categories in the Study Sample

Of the users who signed in to their department and had a role available to our system (comprising 97.2% of total users), 98.0% were non-clinicians. In this study, the term ‘department’ referred to distinct clinical entities, typically different ambulatory clinic locations or clinical services, that require unique builds within the electronic health record. Please see the Supplementary Information (Supplementary Fig. 2) for details regarding the distribution of user role categories, which were manually mapped to high-level U.S. Standard Occupational Classification (SOC) Major Groups18. The complete taxonomy mapping of usage categories to the MedHELM framework is detailed in Supplementary Table 2 (see Supplementary Information C for advanced practice provider and allied health professional definitions).

Illustrative Examples from Quantitative Usage Categories

In addition to quantifying aggregate usage patterns, we reviewed examples of user queries that illustrate the potential value and risks of secure LLM deployment. One example is requests to automate frequently repeated administrative tasks, such as:

“user: please help me write a brief summary of what a spider angioma is for patients in my pediatric dermatology clinic”

“user: ask me questions to better generate a script for our schedulers to inform families of our wait times before scheduling.”

However, our analysis also surfaced conversations largely unrelated to work or organizational goals. These included:

“user: guess that baby shower game tagline”

“user: can i know the price of the stock tesla 2025?”

“user: what is a good joke for today?”

Despite the majority of user roles being non-clinical, a significant proportion of prompts were related to clinical decision making. Examples included:

“user: What are the symptoms for RSV?”

“user: Patient taking high dose oxcarbazepine, valproic acid medium dose valproic acid and cenobamate. Presents with constipation and abdominal pain as well as decreased appetite. What is the differential diagnosis?”

“user: Write a letter of medical necessity for patient to get Monogenic Hypertension Evaluation testing through Athena Diagnostics.”

“user: write letter for appeal to CVS Caremark to approve Retacrit for patient.”

Another use case was related to the generation of insurance prior authorization requests, detailing medical necessity of certain treatments.

Discussion

This study contributes an early quantitative analysis of real-world chat tool use among non‑clinician healthcare staff, addressing a gap in the existing literature that has focused almost exclusively on clinicians. Our analysis of 30,503 chat threads moves beyond prior survey-based or pre-categorized approaches5,6, revealing how non-clinicians adopt and integrate these LLM tools.

Usage was dominated by basic tasks, such as email composition and document preparation, suggesting considerable untapped potential for more sophisticated applications. Expanding staff awareness of the range of workflow-relevant functions, beyond traditional search or basic writing tasks, alongside training in prompt engineering16 and the development of customized templates for high‑frequency administrative tasks, such as prior authorization letters19, could improve efficiency and reduce administrative burden. However, the presence of prompts requiring nuanced clinical judgment underscores the need for role‑appropriate education and governance20 to prevent out‑of‑scope use.

Unexpected usage patterns included a notable proportion of non-work-related personal queries (e.g., trivia queries, creative writing), which at scale may generate unnecessary computational and environmental costs21. In addition, prompts requiring nuanced clinical judgment were observed. These likely reflect the small proportion of clinicians who engaged with the system despite role-targeted alternatives, or non-clinicians involved in insurance-related workflows. Yet governance and risk-management considerations necessitate further defining non-clinician scope with regard to seeking LLM-derived medical guidance. Several non-clinical uses were identified, including Email and Document Writing, Coding, Technical Support and IT Issues, Text Manipulation, and Brainstorming, that were absent from the MedHELM task taxonomy. This gap illustrates limits in existing frameworks’ ability to capture real‑world LLM uses among non‑clinician staff. We propose the addition of these tasks to MedHELM version 2 under the ‘Administration & Workflow’ category.

These findings highlight the importance of deployment strategies and monitoring tailored to departmental needs. Training priorities will differ, for example, between language translation teams focused on the LLM’s language strengths and information technology (IT) groups leveraging coding assistance features or internal IT workflow troubleshooting capabilities. Role‑based analyses are essential for determining appropriate user education that includes insights into job-specific workflow enhancements, such as prompting techniques for document insight generation or best practices regarding programming assistance.

Limitations include the single‑center design, the 11‑month study period, and potential classification challenges due to multi‑intent conversations. Inconsistent role designations also limited role‑specific analyses. Future research should link usage patterns to departmental context, refine occupational coding, and explore automating high‑volume administrative tasks, such as prior authorization or patient education document creation.

Based on these findings, we offer three targeted policy recommendations for future LLM deployments in healthcare, each linked to specific patterns that were observed:

1. Implement real-time dashboards for usage monitoring. Both directly valuable workflow applications (e.g., automating documentation) and unrelated or inappropriate use (e.g., personal queries, off‑task interactions) were observed. Continuous visibility into usage patterns would allow health systems to quickly identify emerging high‑impact applications worth expanding and to intervene early when unrelated, risky, or non‑compliant prompts are used. We recommend institutions deploy analytics tools within the secure LLM environment to display usage by category, department, and frequency, with automated alerts for unusual trends (e.g., spikes in clinical decision support queries from non‑clinical departments).

2. Develop role‑ and department‑specific guidance and educational resources. Despite 98% of users being non‑clinicians, a notable subset of prompts involved nuanced clinical decision‑making or insurer‑facing medical necessity statements. Tailoring guidance to user roles would help prevent out‑of‑scope activity, reduce risk, and control compute costs. We recommend organizations map common task categories by department and deliver targeted education that clarifies appropriate use of secure LLMs.

3. Automate validated use cases. Many users repeated specific tasks (such as patient education or insurance-related communications). Automating high-value use cases can enable further efficiency gains. We recommend institutions validate specific workflows by creating automations and templates that encourage use and simultaneously educate users on a broader range of chat tool capabilities.
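The automated alerts mentioned in the first recommendation could take many forms. The following is only a sketch of one simple option, a z-score check on weekly query counts for a single department and category. The function name `spike_alert`, the threshold of three standard deviations, and the example counts are all assumptions for illustration and were not part of the study.

```python
from statistics import mean, stdev


def spike_alert(history, current, z_threshold=3.0):
    """Flag `current` if it exceeds the historical mean by more than
    `z_threshold` sample standard deviations."""
    if len(history) < 2:
        return False  # not enough history to estimate variability
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return current > mu  # flat history: any increase counts as a spike
    return (current - mu) / sigma > z_threshold


# Hypothetical weekly counts of clinical decision support queries
# from one non-clinical department (illustrative only).
weekly_counts = [4, 5, 3, 6, 4, 5]
print(spike_alert(weekly_counts, 20))  # a sharp jump relative to history
print(spike_alert(weekly_counts, 6))   # within the usual range
```

A production dashboard would likely need seasonality handling and minimum-volume rules, but the same per-department, per-category comparison against a baseline applies.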

By systematically monitoring real-world usage patterns and proactively managing risks, health systems can maximize the benefits of LLM integration while safeguarding patient safety and operational efficiency.

Methods

Study Design and Setting

This retrospective, cross-sectional study was conducted at Stanford Medicine Children’s Hospital, leveraging de-identified usage logs of user prompts from a secure, HIPAA-compliant LLM chat tool (GPT-4o, OpenAI). The study period spanned from April 22, 2024 to February 28, 2025 and encompassed a total of 30,503 conversation threads across 239 clinical and administrative roles. For the purposes of this study, the clinician role was defined as one that provides direct patient care in the form of diagnosis, treatment, and prescribing (including physicians, advanced practice providers [APPs], certified nurse midwives, certified nurse practitioners, clinical nurse specialists, and certified registered nurse anesthetists)22. Roles of non-clinicians spanned functions in management, training, nursing, education, administrative support, IT services, and more. For a more detailed breakdown of non-clinician user roles by category, see Supplementary Fig. 2 in Supplementary Information. Of note, most physicians are employed by the affiliated university and are granted access to and encouraged to use a different secure LLM chatbot.

This study was reviewed and deemed exempt by the Stanford University Institutional Review Board (Protocol ID: 81541).

Sample Selection

To focus on users with greater familiarity and engagement with the platform, our primary analysis was restricted to threads generated by “frequent users,” defined as individuals with more than five recorded interactions with the chat tool. This threshold was chosen to ensure an adequate sample size, as only a minority of users had surpassed five interactions early in deployment (20.7% in September 2024), a proportion that increased to 43.7% by the end of the study period. Study size was determined by the number of chat threads available at the time of the data analysis.
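The frequent-user restriction can be illustrated with a short sketch. It assumes that one "interaction" corresponds to one chat thread and that each thread record carries a `user_id` field. Both are assumptions made for this example, as are the function and constant names.

```python
from collections import Counter

MIN_INTERACTIONS_EXCLUSIVE = 5  # "more than five recorded interactions"


def frequent_user_threads(threads):
    """Keep only threads whose user has more than five threads overall."""
    per_user = Counter(t["user_id"] for t in threads)
    return [t for t in threads if per_user[t["user_id"]] > MIN_INTERACTIONS_EXCLUSIVE]


# Hypothetical records: user "a" has six threads, user "b" has five.
threads = [{"user_id": "a", "thread_id": i} for i in range(6)]
threads += [{"user_id": "b", "thread_id": 100 + i} for i in range(5)]

kept = frequent_user_threads(threads)
print(len(kept), "of", len(threads), "threads retained")
```

Because the cutoff is strict, a user with exactly five threads is excluded, which matches the "more than five" wording.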

Categorization Scheme Development

The categorization scheme for user queries was developed through an iterative process, initially adapting categories from Bedi et al.15 to reflect healthcare-specific workflows. The preliminary list comprised 11 categories, including an “other” designation for uncaptured use cases. Each human reviewer independently applied these labels to a random sample of 100 messages, followed by consensus labeling sessions to resolve discrepancies and refine categories and their associated definitions.

To generate example messages for each category, a secondary LLM was prompted to produce five candidate messages per category. These were then classified by this LLM to ensure alignment, and independently reviewed by two project team members (WH and KB), who selected the three most representative examples for each category. When a conversation was labeled as “other,” the secondary LLM suggested a descriptive category name, which informed subsequent category list iterations. A curated set of examples was subsequently used to guide both manual and automated classification of conversation threads. The final category list, including definitions and representative examples, is detailed in Table 1 and the Supplementary Information.

Classification Process

Classification of user queries employed a hybrid human-AI approach. Three independent physician reviewers (WH, KB, SM) manually labeled random samples of conversation threads. Discrepancies were resolved by consensus or, when necessary, adjudication by a third reviewer. Automated classification was performed using the GPT-4o API. Model prompts and category definitions were iteratively refined based on error analysis and reviewer feedback (please see Supplementary Information A for detailed prompt methodology and categorization examples in Supplementary Table 1). After this process was completed, author KB manually mapped category results to the MedHELM task taxonomy23.

Validation and Reliability Assessment

Detailed validation methodologies and performance metrics are provided in Supplementary Information B, with categorization performance visualized in Supplementary Fig. 1.

Data Handling and Preprocessing

All conversations were preserved in their entirety as single input sequences to the LLM, and included only the user prompts. Threads exceeding 32,000 characters, approximately the maximum number of characters an Excel cell can hold, were truncated, which affected a small proportion (1.1%) of the dataset.
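A minimal sketch of this preprocessing step is shown below. The message structure (dictionaries with `role` and `text` keys), the newline separator, and all function names are assumptions for illustration. Only the 32,000-character limit and the restriction to user prompts come from the text.

```python
MAX_CHARS = 32_000  # truncation limit stated in the text


def join_user_prompts(messages):
    """Concatenate only the user-authored messages of one thread, in order."""
    return "\n".join(m["text"] for m in messages if m["role"] == "user")


def truncate(text, limit=MAX_CHARS):
    """Return the text cut to `limit` characters and a flag saying whether it was cut."""
    return text[:limit], len(text) > limit


def percent_truncated(texts, limit=MAX_CHARS):
    """Percentage of threads longer than `limit` characters."""
    if not texts:
        return 0.0
    return 100 * sum(len(t) > limit for t in texts) / len(texts)


# Hypothetical thread (not study data).
thread = [
    {"role": "user", "text": "Draft a reminder email."},
    {"role": "assistant", "text": "Sure, here is a draft..."},
    {"role": "user", "text": "Make it shorter."},
]
sequence = join_user_prompts(thread)
clipped, was_cut = truncate(sequence)
print(repr(clipped), was_cut)
```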

Data Availability

The datasets generated and/or analyzed during the current study are not publicly available due to the data being obtained from internal systems and containing patient, personal, and/or proprietary information that is not in their current form appropriate for public posting, but are available from the corresponding author on reasonable request.

Code availability

Classification was performed using the GPT-4o API with a temperature setting of zero. All model responses were returned in JSON format for reliability, with any errors adjudicated by a secondary model. Please see Supplementary Information A for the categorization prompt used, along with example instructions for formatting responses in JSON.
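The actual prompt and JSON instructions are in Supplementary Information A and are not reproduced here. As a rough sketch of the surrounding logic only, the example below validates a JSON reply against an allowed category list and falls back to a second model when the first reply is unusable. The category strings, the `category` key, the fallback to "other", and the stub model functions are all assumptions. In a real deployment the two callables would wrap API requests made with temperature set to zero.

```python
import json

# Illustrative label set based on the categories named in the Results;
# the exact strings used in the study prompt are not known here.
CATEGORIES = [
    "email and document writing", "text manipulation", "brainstorming",
    "general information", "medical questions", "technical support",
    "patient communication", "coding", "language translation",
    "image generation", "other",
]


def parse_label(raw, allowed=CATEGORIES):
    """Return the category from a JSON reply, or None if the reply is unusable."""
    try:
        payload = json.loads(raw)
    except (json.JSONDecodeError, TypeError):
        return None
    if not isinstance(payload, dict):
        return None
    label = payload.get("category")
    return label if label in allowed else None


def classify(thread_text, primary, secondary):
    """Try the primary model, then the secondary model if the reply is invalid."""
    for call_model in (primary, secondary):
        label = parse_label(call_model(thread_text))
        if label is not None:
            return label
    return "other"  # assumed default when neither reply can be used


# Stub models standing in for real API calls (hypothetical).
def broken_model(text):
    return "Sorry, I cannot answer in JSON."


def working_model(text):
    return '{"category": "coding"}'


print(classify("fix my SQL query", broken_model, working_model))
```

Injecting the model calls as plain functions keeps the validation logic testable without network access.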

References

Ng, M. Y., Helzer, J., Pfeffer, M. A., Seto, T. & Hernandez-Boussard, T. Development of secure infrastructure for advancing generative artificial intelligence research in healthcare at an academic medical center. J. Am. Med. Inform. Assoc. 32, 586–588 (2025).

UCSF. Versa Chat and Other General Purpose Chatbots https://ai.ucsf.edu/versa-chat-and-other-general-purpose-chatbots (UCSF, 2024).

Vanderbilt University. Amplify, Vanderbilt’s New Custom Generative AI Software, Is Now Open to All Faculty, Staff and Students (Vanderbilt University, 2024).

Korom, R. et al. AI-based clinical decision support for primary care: a real-world study. Preprint at https://doi.org/10.48550/arXiv.2507.16947 (2025).

Malhotra, K. et al. Health system-wide access to generative artificial intelligence: the New York University Langone Health experience. J. Am. Med. Inform. Assoc. 32, 268–274 (2025).

Small, W. R. et al. The first generative AI prompt-A-thon in healthcare: a novel approach to workforce engagement with a private instance of ChatGPT. PLOS Digit. Health 3, e0000394 (2024).

American Medical Association. Physician Sentiments around the Use of AI in Health Care: Motivations, Opportunities, Risks, and Use Cases. https://www.ama-assn.org/system/files/physician-ai-sentiment-report.pdf (American Medical Association, 2025).

Hickman, C., Pridgen, K., Hughes, D., Pair, L. & Holland, A. C. The role of artificial intelligence in increasing efficiency, reducing errors, and improving patient outcomes in clinical practice. Clin. J. Nurse Pract. Women’s. Health 2, 101–108 (2025).

Tripathi, S., Sukumaran, R. & Cook, T. S. Efficient healthcare with large language models: optimizing clinical workflow and enhancing patient care. J. Am. Med. Inform. Assoc. 31, 1436–1440 (2024).

Jiang, Y. et al. MedAgentBench: a virtual EHR environment to benchmark medical LLM agents. NEJM AI 2, AIdbp2500144 (2025).

Uptegraft, C., Black, K. C., Gale, J., Marshall, A. & He, S. The elastic electronic health record: a five-tiered framework for applying artificial intelligence to electronic health record maintenance, configuration, and use. JMIR AI 4, e66741 (2025).

Zou, S. & He, J. Large language models in healthcare: a review. In 2023 7th International Symposium on Computer Science and Intelligent Control (ISCSIC) 141–145 (IEEE, 2023). https://doi.org/10.1109/iscsic60498.2023.00038

Unell, A., Kashyap, M., Pfeffer, M. & Shah, N. Real-world usage patterns of large language models in healthcare. Preprint at https://doi.org/10.1101/2025.05.02.25326781 (2025).

Moses, H. et al. The anatomy of health care in the United States. JAMA 310, 1947 (2013).

Bedi, S. et al. Testing and evaluation of health care applications of large language models: a systematic review. JAMA 333, 319–328 (2025).

Vedak, S. & Yao, D. Generative AI for healthcare (Part 1): demystifying large language models. npj Digit. Med. 7, 62 (2024).

Mello, M. M. & Cohen, I. G. Regulation of health and health care artificial intelligence. JAMA 333, 1769 (2025).

U.S. Bureau of Labor Statistics. Standard Occupational Classification Manual (2018 Edition). https://www.bls.gov/soc/2018/soc_2018_manual.pdf (BLS, 2018).

Doximity. Clinical reference and admin support. Doximity GPT. https://www.doximity.com/doximity-gpt-info.

Ong, J. C. L. et al. International partnership for governing generative artificial intelligence models in medicine. Nat. Med. https://doi.org/10.1038/s41591-025-03787-4 (2025).

Osmanlliu, E. et al. The urgency of environmentally sustainable and socially just deployment of artificial intelligence in health care. NEJM Catal. 6, 0501 (2025).

Gonzalez, C. M. et al. (AUA Ad Hoc Work Group on Advanced Practice Providers). AUA consensus statement on advanced practice providers: executive summary. Urol. Pract. 2, 219–222 (2015).

Bedi, S. et al. Holistic evaluation of large language models for medical tasks with MedHELM. Nat. Med. (in press).

Acknowledgements

The authors wish to thank Michelle M. Mello, JD, PhD, MPhil, for her contribution to the critical review of this manuscript for important intellectual content. This research utilized data provided by an internal chatbot tool from Stanford Medicine Children's Hospital (SMCH). The tool is developed and maintained by SMCH and is designed to facilitate LLM interactions in a PHI-compliant environment. Dr. Chen has received research funding support in part by the Josiah Macy Jr. Foundation (AI in Medical Education), NIH/National Institute of Allergy and Infectious Diseases (1R01AI17812101), NIH-NCATS-Clinical & Translational Science Award (UM1TR004921), Stanford Bio-X Interdisciplinary Initiatives Seed Grants Program (IIP) [R12] [JHC], Stanford RAISE Health Seed Grant 2024, and NIH/Center for Undiagnosed Diseases at Stanford (U01 NS134358).

Author information

Contributions

W.J.H. had full access to all of the data in the study and takes responsibility for the accuracy and integrity of the data analysis. *Concept and design*: K.C.B., W.J.H., S.P.M., A.B., N.H.S., and K.M. *Acquisition, analysis, or interpretation of data*: K.C.B., W.J.H., S.P.M., H.K., and J.H.C. *Drafting of the manuscript*: K.C.B., S.P.M., H.K., N.H.S., and K.M. *Critical review of the manuscript for important intellectual content*: K.C.B., W.J.H., S.P.M., A.B., J.H.C., N.H.S., and K.M. *Statistical analysis*: W.J.H. *Obtained funding*: J.H.C. *Administrative, technical, or material support*: K.C.B., W.J.H., S.P.M., H.K., J.H.C., N.H.S., and K.M. *Supervision*: K.C.B., W.J.H., S.P.M., A.B., J.H.C., N.H.S., and K.M.

Ethics declarations

Competing interests

Dr. Chen reported being a co-founder of Reaction Explorer LLC that develops and licenses organic chemistry education software. Dr. Chen also reported receiving paid medical expert witness fees from Sutton Pierce, Younker Hyde MacFarlane, Sykes McAllister, Elite Experts as well as consulting fees from ISHI Health. Dr. Chen reported receiving paid honoraria or travel expenses for invited presentations by insitro, General Reinsurance Corporation, Cozeva, and other industry conferences, academic institutions, and health systems. Dr. Shah reported being a cofounder of Prealize Health (a predictive analytics company) as well as Atropos Health (an on-demand evidence generation company) and serving on the Board of the Coalition for Healthcare AI (CHAI), a consensus-building organization providing guidelines for the responsible use of artificial intelligence in health care. Dr. Shah serves as an advisor to Opala, Curai Health, JnJ Innovative Medicines and AbbVie pharmaceuticals. Dr. Morse serves as an advisor to Baxter. No other disclosures were reported.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Black, K.C., Haberkorn, W.J., Ma, S.P. et al. Uses of generative AI by non-clinician staff at an academic medical center. npj Health Syst. 3, 13 (2026). https://doi.org/10.1038/s44401-025-00063-y

DOI: https://doi.org/10.1038/s44401-025-00063-y