Abstract

Real-time wireless transmission of large-scale images is essential for remote-sensing applications, yet conventional architectures treat imaging, compression and wireless transmission as separate processes, resulting in substantial latency under constrained channel capacity. Here we present an in-sensor wireless computing architecture that integrates imaging, compression, signal modulation and wireless transmission into a single step. The system exploits the unique alternating-current photoresponse of two-dimensional-material device arrays to perform in-sensor computation, enabling images to be wirelessly transmitted as compressed spatial-frequency representations. The received signals can be directly recognized with accuracy comparable to that obtained using the original images, while reducing transmission latency by up to 96.8% relative to conventional approaches. This work establishes in-sensor wireless computing as a promising route towards low-latency remote imaging, with potential applications in next-generation satellite–terrestrial networks and edge–cloud collaborative computing.

Data availability

The data that support the findings of this study are available via Code Ocean at https://codeocean.com/capsule/8892839/tree/v1 (ref. 37).

Code availability

The code that supports the results shown in this article is available via Code Ocean at https://codeocean.com/capsule/8892839/tree/v1 (ref. 37).

Change history

20 March 2026

In the version of this article initially published online, the Code Ocean link listed in the Code availability section and ref. 37 was incorrect and has now been updated to https://codeocean.com/capsule/8892839/tree/v1 in the HTML and PDF versions of the article.

References

Zhou, D. et al. Aerospace integrated networks innovation for empowering 6G: a survey and future challenges. IEEE Commun. Surv. Tutor. 25, 975–1019 (2023).

Portillo, I. et al. A technical comparison of three low Earth orbit satellite constellation systems to provide global broadband. Acta Astron. 159, 123–135 (2019).

Qu, Z. et al. LEO satellite constellation for Internet of Things. IEEE Access 5, 18391–18401 (2017).

Zhu, H. et al. Orientation robust object detection in aerial images using deep convolutional neural network. In IEEE International Conference on Image Processing 3735–3739 (IEEE, 2015).

Cakaj, S. et al. The coverage analysis for low Earth orbiting satellites at low elevation. Int. J. Adv. Comput. Sci. Appl. 5, 6–10 (2014).

Chen, J. et al. A remote sensing data transmission strategy based on the combination of satellite-ground link and GEO relay under dynamic topology. Future Gener. Comput. Syst. 145, 337–353 (2023).

Guo, Y. et al. Direct observation of atmospheric turbulence with a video-rate wide-field wavefront sensor. Nat. Photon. 18, 935–943 (2024).

Zheng, H. et al. Multichannel meta-imagers for accelerating machine vision. Nat. Nanotechnol. 19, 471–478 (2024).

Huang, Z. et al. Pre-sensor computing with compact multilayer optical neural network. Sci. Adv. 10, eado8516 (2024).

Bian, L. et al. A broadband hyperspectral image sensor with high spatio-temporal resolution. Nature 635, 73 (2024).

Chen, Y. et al. All-analog photoelectronic chip for high-speed vision tasks. Nature 623, 48–57 (2023).

Chen, S. et al. Optical generative models. Nature 644, 903–911 (2025).

Kalinin, K. P. et al. Analog optical computer for AI inference and combinatorial optimization. Nature 645, 354–361 (2025).

Yao, Z. et al. Integrated lithium niobate photonics for sub-angstrom snapshot spectroscopy. Nature 646, 567–575 (2025).

Fan, D. et al. Two-dimensional semiconductor integrated circuits operating at gigahertz frequencies. Nat. Electron. 6, 879–887 (2023).

Zhang, X. et al. Two-dimensional MoS2-enabled flexible rectenna for Wi-Fi-band wireless energy harvesting. Nature 566, 368–372 (2019).

Xia, F. et al. Two-dimensional material nanophotonics. Nat. Photon. 8, 899–907 (2014).

Mennel, L. et al. Ultrafast machine vision with 2D material neural network image sensors. Nature 579, 62–66 (2020).

Yang, Y. et al. In-sensor dynamic computing for intelligent machine vision. Nat. Electron. 7, 225–233 (2024).

Zhou, Y. et al. Computational event-driven vision sensors for in-sensor spiking neural networks. Nat. Electron. 6, 870–878 (2023).

Zhang, Z. et al. All-in-one two-dimensional retinomorphic hardware device for motion detection and recognition. Nat. Nanotechnol. 17, 27–32 (2022).

Chen, J. et al. Optoelectronic graded neurons for bioinspired in-sensor motion perception. Nat. Nanotechnol. 18, 882–888 (2023).

Pi, L. et al. Broadband convolutional processing using band-alignment-tunable heterostructures. Nat. Electron. 5, 248–254 (2022).

Yang, Y. et al. Ferroelectric enhanced performance of a GeSn/Ge dual-nanowire photodetector. Nano Lett. 20, 3872–3879 (2020).

Wang, C. et al. Gate-tunable van der Waals heterostructure for reconfigurable neural network vision sensor. Sci. Adv. 6, eaba6173 (2020).

Li, D. et al. In-material physical computing based on reconfigurable microwire arrays via halide-ion segregation. Nat. Commun. 16, 5472 (2025).

Pan, X. et al. Parallel perception of visual motion using light-tunable memory matrix. Sci. Adv. 9, eadi4083 (2023).

Yang, Y. et al. High-performance broadband tungsten disulfide photodetector decorated with indium arsenide nanoislands. Phys. Status Solidi A 17, 217 (2020).

Li, Z. et al. In-pixel dual-band intercorrelated compressive sensing based on MoS2/h-BN/PdSe2 vertical heterostructure. ACS Nano 19, 6263 (2025).

Yang, Z. et al. Generalized Hartmann–Shack array of dielectric metalens sub-arrays for polarimetric beam profiling. Nat. Commun. 9, 4607 (2018).

Yang, J. et al. Large dynamic range Shack–Hartmann wavefront sensor based on adaptive spot matching. Light Adv. Manuf. 4, 42–51 (2024).

Darwish, T. et al. LEO satellites in 5G and beyond networks: a review from a standardization perspective. IEEE Access 10, 35040–35060 (2022).

Fastvideo. GPU camera sample. GitHub https://github.com/fastvideo/gpu-camera-sample (2023).

Park, C. et al. A 51-pJ/pixel 33.7-dB PSNR 4× compressive CMOS image sensor with column-parallel single-shot compressive sensing. IEEE J. Solid State Circuits 56, 2503–2515 (2021).

Wang, Z. et al. Image quality assessment: from error visibility to structural similarity. IEEE Trans. Image Process. 13, 600–612 (2004).

Zou, Q. et al. Deep learning based feature selection for remote sensing scene classification. IEEE Geosci. Remote Sens. Lett. 12, 2321–2325 (2015).

Wang, Y. et al. Data and code used in “In-sensor wireless computing for intelligent remote sensing”. Code Ocean https://codeocean.com/capsule/8892839/tree/v1 (2026).

Acknowledgements

This work was supported in part by the National Key R&D Program of China under grant 2023YFF1203600 (S.-J.L.), the National Natural Science Foundation of China (62304104 (Y.Y.), 62034004 (F.M.)), the Leading-edge Technology Program of Jiangsu Natural Science Foundation (BK20232004 (F.M.)), the Natural Science Foundation of Jiangsu Province (BK20233001), the AI & AI for Science Project of Nanjing University (14380240, 14380242 and 14380005), and the Fundamental Research Funds for the Central Universities (14380227, 14380247 and 14380250). F.M. and S.-J.L. acknowledge support from the AIQ Foundation and the e-Science Center of Collaborative Innovation Center of Advanced Microstructures. The microfabrication center of the National Laboratory of Solid State Microstructures (NLSSM) is acknowledged for its technical support. This work was also supported by the New Cornerstone Science Foundation through the XPLORER PRIZE, by the Open Fund of the State Key Laboratory of Infrared Physics (grant number SITP-SKLIP-ZD-2025-01) and by the Nanjing University International Collaboration Initiative.

Author information

Contributions

Y.Y., S.-J.L. and F.M. conceived of the concept and designed the experiments. S.-J.L. and F.M. supervised the whole project. Y. Wan, N.Y., C.L. and L.-J.L. prepared the materials and fabricated the phototransistor array. Y. Wang, N.Y. and Z.Z. performed device characterization and optoelectronic measurements. Y. Wang, Y.Y., X.-J.Y. and G.-J.R. performed in-sensor wireless computing with the phototransistor array. Y. Wang, T.J., W.L., Q.L. and Y.Y. carried out simulations. Y.Y., Y. Wang, Z.L., P.W. and C.P. analysed the experimental data. Y.Y., S.-J.L. and F.M. wrote the paper with input from all other authors.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Sensors thanks Su-Ting Han, Zhongrui Wang and Wentao Xu for their contribution to the peer review of this work. Peer reviewer reports are available.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Extended data

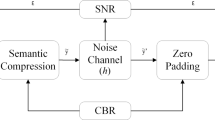

Extended Data Fig. 1 Flowchart of the in-sensor wireless computing scheme.

The entire scheme comprises a wireless transmitter with image compression functionality and a wireless receiver with image reconstruction and recognition capabilities. The parameters and their relationships are marked in red in the diagram for both hardware implementation and software simulation.

Extended Data Fig. 2 Visualization of high-resolution image reconstruction quality.

Comparisons of image reconstruction quality based on a 1000×1000 spatial-frequency pattern size for different original image sizes from 1000×1000 to 10000×10000. The left panel of each box is the original image and the right panel of each box is the corresponding reconstructed image. The reconstruction quality can be evaluated by the structural similarity index (SSIM) between the reconstructed and original images, as indicated in the right panels of each box.
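As a rough illustration of the metric named in this caption, the sketch below computes a simplified, single-window (global) SSIM with NumPy. It is not the evaluation code used in the article: the standard SSIM of ref. 35 averages the same expression over local windows, and the function name `global_ssim` and the `data_range` argument are assumptions introduced here for illustration.

```python
import numpy as np

def global_ssim(x, y, data_range=1.0):
    """Single-window SSIM over whole images (simplified; no local windows)."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    # Stabilizing constants with the commonly used factors 0.01 and 0.03.
    c1 = (0.01 * data_range) ** 2
    c2 = (0.03 * data_range) ** 2
    mean_x, mean_y = x.mean(), y.mean()
    var_x, var_y = x.var(), y.var()
    covariance = ((x - mean_x) * (y - mean_y)).mean()
    numerator = (2 * mean_x * mean_y + c1) * (2 * covariance + c2)
    denominator = (mean_x ** 2 + mean_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator
```

Identical inputs give a value of 1, and the value falls as the reconstruction departs from the original in mean, contrast or structure.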

Extended Data Fig. 3 Evaluation of high-resolution image reconstruction quality.

The structural similarity index (SSIM) between the reconstructed and original images as a function of the original image sizes and the corresponding compression ratios.
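The relationship between image size and compression ratio can be sketched with a few lines of arithmetic. The snippet assumes that the compression ratio is the number of original pixels divided by the number of retained spatial-frequency points, which is consistent with the sizes and ratios quoted in these captions but is not stated as a formula in the text; the helper name `compression_ratio` is hypothetical.

```python
def compression_ratio(image_shape, pattern_shape):
    """Ratio of original pixel count to retained spatial-frequency points."""
    image_points = image_shape[0] * image_shape[1]
    pattern_points = pattern_shape[0] * pattern_shape[1]
    return image_points / pattern_points

# End points of the image-size range quoted for a 1000x1000 pattern.
print(compression_ratio((1000, 1000), (1000, 1000)))    # 1.0
print(compression_ratio((10000, 10000), (1000, 1000)))  # 100.0
```

Under this assumption, a fixed 1000×1000 pattern spans ratios from 1:1 to 100:1 as the original image grows from 1000×1000 to 10000×10000.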

Extended Data Fig. 4 Evaluation of high-resolution image dataset reconstruction quality.

Recognition accuracy based on the reconstructed datasets by using 1000×1000 spatial frequency patterns with different original image sizes from 1000×1000 to 10000×10000 and the corresponding compression ratios. The dataset used for training is the remote sensing dataset RSSCN7, and the neural network model employed is MobileNetV3-Large-100. The model was trained for a total of 120 epochs.

Extended Data Fig. 5 Visualization of robustness and error tolerance of our scheme.

Comparisons of original images and the corresponding images reconstructed from spatial-frequency patterns corrupted by additive white Gaussian noise (AWGN). Each box presents a pair of images: the left panel shows the original image without noise, and the right panel shows the image reconstructed from the signal after transmission through an AWGN channel at a specified signal-to-noise ratio (SNR) ranging from 0 dB to 35 dB. The structural similarity index (SSIM), ranging from 0 (no similarity) to 1 (identical), indicates the reconstruction fidelity at each noise level and is annotated on the corresponding right panel.

Extended Data Fig. 6 Evaluation of noise tolerance of wireless transmission channel.

Reconstruction fidelity of images based on spatial-frequency information with different levels of additive white Gaussian noise (AWGN). The original image has a resolution of 703×509, and the size of the spatial-frequency pattern used for reconstruction is 101×101.
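A noise test of this kind can be sketched as follows. This is a minimal example under stated assumptions, not the authors' simulation: it assumes the SNR is the ratio of mean signal power to mean noise power over the whole complex spatial-frequency pattern, and that the noise is circular complex Gaussian. The function name `add_awgn` is hypothetical.

```python
import numpy as np

def add_awgn(signal, snr_db, rng=None):
    """Add complex white Gaussian noise to `signal` at the requested SNR in dB."""
    rng = np.random.default_rng() if rng is None else rng
    signal = np.asarray(signal, dtype=complex)
    # Mean power of the pattern sets the reference level for the noise.
    signal_power = np.mean(np.abs(signal) ** 2)
    noise_power = signal_power / (10 ** (snr_db / 10))
    # Split the noise power equally between the real and imaginary parts.
    scale = np.sqrt(noise_power / 2)
    noise = scale * (rng.standard_normal(signal.shape)
                     + 1j * rng.standard_normal(signal.shape))
    return signal + noise
```

Sweeping `snr_db` over 0 to 35 dB and reconstructing from each noisy pattern would give a fidelity-versus-SNR curve of the kind described in the caption.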

Extended Data Fig. 7 Visualization of high-resolution image dataset reconstruction under different compression ratios.

The reconstructed RSSCN7 remote sensing datasets at six different compression ratios from 10:1 to 100:1. These images are obtained by performing a two-dimensional Fourier transform on the original images, followed by low-pass filtering and phase discretization in the spatial-frequency domain, and then conducting an inverse Fourier transform for reconstruction. The original image size is fixed at 1000×1000, and the number of spatial-frequency points used for reconstruction varies, resulting in different compression ratios.
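The processing chain described in this caption can be sketched in NumPy as below. Several details are assumptions made for illustration and are not taken from the article: the low-pass filter is a centred square block of `keep`×`keep` frequencies, the phase is quantized uniformly into `phase_levels` steps, the amplitude is left continuous, and the function name `compress_reconstruct` is hypothetical.

```python
import numpy as np

def compress_reconstruct(image, keep, phase_levels=16):
    """Low-pass filter and phase-quantize the 2D spectrum, then invert it."""
    rows, cols = image.shape
    # Centred 2D spectrum: zero frequency sits at (rows // 2, cols // 2).
    spectrum = np.fft.fftshift(np.fft.fft2(image))
    r0 = rows // 2 - keep // 2
    c0 = cols // 2 - keep // 2
    # Low-pass filter: keep only a keep x keep block around zero frequency.
    block = spectrum[r0:r0 + keep, c0:c0 + keep]
    # Phase discretization: snap each phase to the nearest uniform step.
    step = 2 * np.pi / phase_levels
    phase = np.round(np.angle(block) / step) * step
    quantized = np.abs(block) * np.exp(1j * phase)
    # Zero-fill the discarded frequencies and transform back to the image.
    padded = np.zeros_like(spectrum)
    padded[r0:r0 + keep, c0:c0 + keep] = quantized
    reconstructed = np.fft.ifft2(np.fft.ifftshift(padded)).real
    ratio = (rows * cols) / (keep * keep)
    return reconstructed, ratio
```

With a 1000×1000 input and `keep=100`, the returned ratio is 100, matching the largest compression ratio mentioned in the caption under the pixel-count definition assumed here.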

Extended Data Fig. 8 Evaluation of high-resolution image dataset reconstruction with different compression ratios.

Recognition accuracy based on the reconstructed RSSCN7 remote sensing datasets with different compression ratios. The MobileNetV3-Large-100 neural network model is used for training and testing on the datasets reconstructed from the spatial-frequency patterns at the six different compression ratios. The original RGB images, with a size of 703×509, are all converted to grayscale and resized to 1000×1000 before training and testing.

Supplementary information

Supplementary Information

Supplementary Figs. 1–17, Notes 1 and 2 and Table 1.

Supplementary Video 1

Experimental process of image sensing, wireless transmission and wireless reception based on our set-up.

Supplementary Video 2

Experimental process of image sensing, wireless transmission and wireless reception based on our set-up.

Supplementary Video 3

Experimental process of image sensing, wireless transmission and wireless reception based on our set-up.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Wang, Y., Yang, Y., Yang, N. et al. In-sensor wireless computing for intelligent remote sensing. Nat. Sens. (2026). https://doi.org/10.1038/s44460-026-00043-1

DOI: https://doi.org/10.1038/s44460-026-00043-1