Abstract

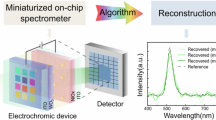

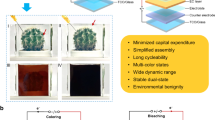

The rapid proliferation of edge-computing applications, including autonomous vehicles, wearable electronics and mobile robotics, is driving demand for compact vision systems capable of real-time intelligent processing under strict energy and latency constraints. Conventional imaging architectures, however, separate sensing from computation, producing large data streams that increase power consumption and system complexity. Here we report electrochromic hyperspectral embedding, an in-sensor computing framework that adaptively compresses spectral information at the pixel level. Our approach exploits electrically tunable photocurrent responses in electrochromic photodetectors, enabling each pixel to selectively encode its most task-relevant spectral components before readout. The resulting low-dimensional outputs interface directly with lightweight memristor-based analogue computing hardware for efficient inference. Compared with conventional artificial intelligence vision systems, electrochromic hyperspectral embedding reduces data transmission by more than an order of magnitude while maintaining high classification accuracy, offering a materials-to-system pathway towards compact, high-speed and energy-efficient intelligent vision for scalable edge applications.
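The pixel-level compression described above can be illustrated with a toy model. This is only a minimal sketch under stated assumptions, not the authors' implementation: it assumes each bias state of a photodetector yields a photocurrent that is a responsivity-weighted sum over spectral bands, and that a small number of bias states is read out per pixel. The names `embed_pixel`, `compression_ratio`, `NUM_BANDS` and `NUM_STATES`, and all numerical values, are hypothetical and chosen purely for illustration.

```python
import random


def embed_pixel(spectrum, responsivities):
    """Project one pixel's spectrum onto a few responsivity curves.

    Assumption: each output models a photocurrent, i.e. the sum over
    spectral bands of (responsivity x incident intensity) for one
    electrically selected state of the detector.
    """
    return [
        sum(r * s for r, s in zip(curve, spectrum))
        for curve in responsivities
    ]


def compression_ratio(num_bands, num_states):
    """Values per pixel before readout divided by values after readout."""
    return num_bands / num_states


# Hypothetical sizes, for illustration only (not taken from the paper).
NUM_BANDS = 25   # spectral bands in the incoming hyperspectral signal
NUM_STATES = 2   # bias states read out per pixel

rng = random.Random(0)

# Placeholder responsivity curves; in a task-driven system these would be
# chosen (for example by training) to keep the task-relevant components.
responsivities = [
    [rng.random() for _ in range(NUM_BANDS)] for _ in range(NUM_STATES)
]

# Placeholder spectrum for a single pixel.
spectrum = [rng.random() for _ in range(NUM_BANDS)]

embedding = embed_pixel(spectrum, responsivities)
print(len(embedding), compression_ratio(NUM_BANDS, NUM_STATES))
```

With these illustrative sizes, each pixel emits 2 values instead of 25, so the readout volume drops by a factor of 12.5. The design point of the sketch is that the weighted sum is performed by the detector response itself, so only the low-dimensional result needs to leave the sensor.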

Data availability

The data that support the findings of this study are available within this Article and its Supplementary Information. Source data are provided with this paper.

Code availability

The code used in this study is available via GitHub at https://github.com/Chaoyi-He1/HSI.

Acknowledgements

This work was partially supported by Texas A&M University. Y.C.L. and L.K. acknowledge partial support from the US Army Research Office under award no. W911NF2120059. L.K. acknowledges partial support from the Chancellor’s Research Initiative, State of Texas. Q.X. and Y.H. acknowledge the Dev and Linda Gupta Endowment, which partially supported this work. X.Z. acknowledges support from the National Science Foundation (grant nos. ECCS-2239822 and ECCS-2426252). V.K.S. and M.C.H. acknowledge support for data analysis from the DOE ASCR Microelectronics Science Research Center Project BIA, which is funded by the US Department of Energy Office of Science under contract no. DE-AC02-06CH11357.

Author information

Contributions

R.L. and C.H. contributed equally to this work. R.L., L.K. and Y.C.L. conceived the research. R.L. conducted the material preparation, device fabrication and characterization. C.H., R.L. and E.Z. worked on the modelling and training of the AI algorithms. J.Z., K.S.L., J.C., R.K., R.L., Z.W. and X.Z. contributed to training data processing. Y.H. and Q.X. contributed to the hardware simulation. R.L., C.H., V.K.S., X.L., X.Z., H.X., M.C.H., L.K. and Y.C.L. contributed to the data analysis. L.K. and Y.C.L. supervised the project. All authors contributed to the writing and revision of the paper.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Sensors thanks Ying-Chen Chen and the other, anonymous, reviewer(s) for their contribution to the peer review of this work.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Supplementary Information

Supplementary Notes 1–6, Figs. 1–23 and Tables 1–7.

Source data

Source Data Fig. 1

Source numerical data used to generate Fig. 1d, including channel number, model parameter counts and hardware complexity metrics.

Source Data Fig. 2

Raw and processed photocurrent, responsivity spectra, power-dependent measurements and impedance fitting parameters underlying Fig. 2c–f.

Source Data Fig. 3

Numerical RGB convolution kernel outputs used to generate Fig. 3b,c.

Source Data Fig. 4

Classification accuracy, IoU values, confusion matrix data and channel selection metrics used to generate Fig. 4c–f.

Source Data Fig. 5

Model parameter counts, memristor array usage, latency values and IoU results used to generate Fig. 5c,d.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Li, R., He, C., Huang, Y. et al. Electrochromic hyperspectral embedding for ultracompact intelligent vision. Nat. Sens. (2026). https://doi.org/10.1038/s44460-026-00065-9

DOI: https://doi.org/10.1038/s44460-026-00065-9