Abstract

Few forecasting models have been translated into digital prediction tools for prevention and control of climate-sensitive infectious diseases. We propose a 3-U (useful, usable, and used) research framework for advancing the adoptability and sustainability of these tools. We make recommendations for 1) developing a tool with a high level of accuracy and sufficient lead time to permit effective proactive interventions (useful); 2) conducting a needs assessment to ensure that a tool meets the needs of end-users (usable); and 3) demonstrating the efficacy and cost-effectiveness of a tool to secure its adoption into routine surveillance and response systems (used).


Introduction

Climate-sensitive infectious diseases

The Intergovernmental Panel on Climate Change has concluded with high confidence that climate change has caused and will continue to cause an increased incidence of climate-sensitive infectious diseases (CSIDs), including vector-borne, food-borne, water-borne, and respiratory diseases1. Increasing temperature can enhance the transmission of vector-borne diseases by increasing vector survival, feeding activity, and replication rate; increasing the rate of development of the pathogen within the vector; lengthening the transmission season; and expanding the geographic areas suitable for transmission2,3. In addition, changes in precipitation can increase vector abundance in context-specific ways2,3. A warming climate can also increase the incidence of water-borne and food-borne diseases by accelerating the proliferation of pathogens in their habitats and causing more frequent extreme weather events that facilitate the spread and outbreaks of these diseases4,5. Exposure to wildfire smoke, which is increasing due to climate change, may increase the risk of respiratory infections6,7. Furthermore, both unusually wet and unusually dry conditions are associated with increased risk of fungal and other respiratory infections in sometimes complex ways8,9,10, and meteorological factors, including temperature, humidity, and precipitation, influence the seasonality of viral respiratory infections11.

Opportunities and barriers to develop prediction tools for CSIDs

Digital technology could facilitate the incorporation of climatic big data into disease surveillance systems, enabling them to serve as early warning systems for CSIDs12. Climate-informed statistical models can be used to develop digital tools that forecast CSID outbreaks at different spatiotemporal resolutions13. Such tools, integrated into surveillance systems, could provide the lead time needed for public health professionals to thwart predicted outbreaks by proactively implementing preventive measures in partnership with communities12,13,14. Such early warning systems are especially important in low- and middle-income countries (LMICs)3,15, which suffer the worst impacts of climate change, have the highest incidence of CSIDs such as dengue and malaria, and, owing to limited resources, have the least adaptive capacity to address these impacts.

Numerous studies have developed climate-informed forecasting models for CSIDs. However, only a small proportion of these models have been translated into user-friendly digital tools for incorporation into routine surveillance systems13,16. Furthermore, the relatively few tools that have been developed have rarely been used in the field by public health practitioners for disease prevention. A comprehensive landscape mapping review published by the Inter-American Institute for Global Change Research identified 37 existing climate-informed CSID modelling tools that were transparently described and validated, named, and accessible13,16. Thirty of these tools focussed on vector-borne diseases. Twenty of the tools had either been presented as an accessible product or used in an implementation setting; however, only one-quarter of the tools had interfaces legible to decision-makers. The review did not attempt to determine whether the tools were being actively used to inform decision-making.

The Inter-American Institute for Global Change Research landscape mapping review included interviews with researchers and policy stakeholders that revealed several important barriers to the use of climate-informed CSID models as forecasting tools by public health practitioners13,16. One key barrier was inadequate collaboration among modellers, tool developers (i.e., software engineers), and decision-makers in translating models into practical tools that decision-makers would adopt. A second key barrier was insufficient training for the practitioners who would use the tools. These barriers pointed to the need for the co-creation of practical, user-friendly, sustainable tools through multi-sectoral collaboration, local training, and capacity building. Finally, there is a paucity of compelling evidence on the efficacy and cost-effectiveness of prediction tools for reducing the incidence of CSIDs; such evidence is needed to make the case to public health authorities for adopting these tools.

Goal of this perspective

Our multidisciplinary team brings expertise from medicine, public health, epidemiology, infectious disease modelling, climate science, sociology, economics, health services and management, and information and communication technology. Based on our experience in research and practice, we believe that for CSID prediction tools to be sustainably adopted into routine surveillance systems, they must first be shown, according to the concept of use17, to be useful, usable, and effectively used for disease prevention and control.

The concept of use, which we borrowed from the field of product design, has three contextual principles: useful, usable, and used17. A product is useful if it allows users to accomplish a specific purpose. However, a useful product may not be used if it is not usable, that is, readily understandable, user-friendly, and able to be used in a simple, convenient, efficient, engaging, and effective manner. Furthermore, a product may be both useful and usable but still not be used if, for example, it does not meet users' defined needs or is not cost-effective enough for potential users to adopt it. In this Perspective, we propose a comprehensive research framework for advancing the adoptability and sustainability of CSID prediction tools, based on the concept of use and the 3-U principles (Fig. 1).

Fig. 1: Each blue oval represents a stage in the development of an early warning system. Methods to ensure that the early warning system is useful, usable, and used are applied at specific stages of the development process. In addition, a needs assessment should be conducted for each stage of the project.

How to make the tool USEFUL

A useful tool should be based on a CSID-specific model that predicts the incidence or an outbreak of the CSID with a high level of accuracy and with enough lead time to permit effective proactive prevention efforts. Mechanistic models, based on first principles, simulate biological processes involved in transmission dynamics, whereas empirical models are based on observed statistical associations between predictors and the CSID outcome. Many prediction models incorporate both mechanistic and statistical components18.

Model development

A prerequisite for adequate model development is the availability in the target geographic location of high-quality, long-term datasets for the daily, weekly, or monthly incidence of the CSID of interest; climate data, such as daily mean air temperature and daily precipitation; and other key predictors, such as population density, land cover, and socioeconomic variables. An important limitation is that some or all of these data may be of insufficient quality (e.g., paper rather than electronic records) or not available at all, especially in LMICs and other low-resource settings, in which case development of a prediction model and subsequent tool may not be possible. Thus, before a project to develop a digital prediction tool for CSID prevention and control can be launched, consultations with data providers are essential to understand the existence, features, strengths, and limitations of these datasets. Furthermore, a systematic review could be conducted to identify previous modelling approaches for the CSID of interest, their strengths and limitations, and climate and non-climate predictors identified in previous studies19. Then, CSID outcome and predictor datasets at the appropriate spatiotemporal resolution need to be identified and access secured.
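As a minimal sketch of putting outcome and climate data on a common temporal resolution, the Python snippet below aggregates a daily climate series into weekly means and pairs each week's case count with the climate value observed `lag` weeks earlier. Choosing a lag at least as long as the desired lead time means a forecast would use only data already available when it is issued. The function names, the 7-day week, the single predictor, and the temperature values are illustrative assumptions, not part of any specific published tool.

```python
from typing import List, Sequence, Tuple


def weekly_means(daily: Sequence[float], days_per_week: int = 7) -> List[float]:
    """Aggregate a daily series into non-overlapping weekly means.

    Days left over after the last complete week are dropped.
    """
    n_weeks = len(daily) // days_per_week
    return [
        sum(daily[w * days_per_week:(w + 1) * days_per_week]) / days_per_week
        for w in range(n_weeks)
    ]


def lagged_pairs(cases: Sequence[float], predictor: Sequence[float],
                 lag: int) -> List[Tuple[float, float]]:
    """Pair the predictor at week t - lag with the case count at week t.

    With lag >= the desired lead time (in weeks), every predictor value is
    observed before the week being forecast.
    """
    if lag < 1:
        raise ValueError("lag must be at least one week")
    n = min(len(cases), len(predictor))
    return [(predictor[t - lag], cases[t]) for t in range(lag, n)]


# Usage: 14 days of made-up mean air temperatures -> 2 weekly means.
daily_temp = [26.0, 27.0, 28.0, 29.0, 30.0, 28.0, 28.0,
              25.0, 25.0, 26.0, 27.0, 24.0, 25.0, 23.0]
weekly_temp = weekly_means(daily_temp)
print(weekly_temp)  # [28.0, 25.0]
print(lagged_pairs([12, 18, 30], [27.5, 26.0, 25.0], lag=1))  # [(27.5, 18), (26.0, 30)]
```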

It would be valuable for public health authorities to incorporate forecasting uncertainties into their decision-making20,21. Therefore, approaches that yield probabilistic outcomes are most useful. For instance, spatiotemporal models fitted using a Bayesian framework enable quantification of the probability that an outbreak may occur at a specific time and location22. While a number of machine learning methods have recently been developed for forecasting, they often do not yield uncertainty estimates23. The ideal model should provide accurate disease forecasting at a small enough spatial scale and a long enough lead time to make it feasible for resource-constrained public health departments to proactively implement the measures needed to prevent a CSID outbreak in time to make a difference. Finally, previous studies have found that ensemble models, which aggregate multiple independent base models, generally outperform the individual models24,25, suggesting that ensemble models may be favoured for CSID forecasting.
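To illustrate how a probabilistic forecast yields an outbreak probability, the sketch below assumes that a fitted Bayesian model (not shown) has produced draws from the posterior predictive distribution of the next period's case count; the probability of an outbreak is then estimated as the share of draws above the epidemic threshold. It also shows one simple way of combining models, equal-weight pooling of predictive draws. This pooling rule is an illustrative assumption; the ensemble approaches cited above may weight their members differently. All names and numbers are hypothetical.

```python
import random
from typing import List, Sequence


def outbreak_probability(predictive_draws: Sequence[float], threshold: float) -> float:
    """Estimate P(cases > threshold) as the share of predictive draws above it."""
    if not predictive_draws:
        raise ValueError("need at least one predictive draw")
    exceed = sum(1 for draw in predictive_draws if draw > threshold)
    return exceed / len(predictive_draws)


def pool_ensemble(*member_draws: Sequence[float]) -> List[float]:
    """Equal-weight ensemble: concatenate equal-sized draw sets from each member model."""
    sizes = {len(draws) for draws in member_draws}
    if len(sizes) != 1:
        raise ValueError("each member must contribute the same number of draws")
    return [draw for draws in member_draws for draw in draws]


# Usage with simulated (made-up) predictive draws from two hypothetical models.
rng = random.Random(1)
model_a = [rng.gauss(120, 30) for _ in range(2000)]
model_b = [rng.gauss(90, 20) for _ in range(2000)]
ensemble = pool_ensemble(model_a, model_b)
print(round(outbreak_probability(ensemble, threshold=150), 3))
```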

Model validation

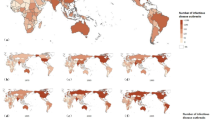

A validation process, which evaluates the accuracy of a model in predicting disease incidence and outbreaks, is essential for selecting the optimal model(s). Time series cross-validation is a general tool for assessing the predictive ability of a model26: using the leave-future-out cross-validation technique27, a model is trained on data up to a given time point, and its predictive performance is then tested by comparing predicted and observed values for subsequent time points. To validate the prediction of CSID incidence, three inter-related metrics can be used in concert28: (i) sharpness examines the variance of the predictive distribution at each time point; (ii) bias tests whether a model systematically over- or under-predicts; and (iii) the Continuous Ranked Probability Score (CRPS) is a summary measure that rewards sharp, unbiased forecasts. The CRPS is similar to a mean absolute error but uses the full probability distribution of the forecasts instead of a point estimate, making it relevant for Bayesian prediction models22. It is desirable for forecasts to have a low CRPS (CRPS has no upper bound, with zero indicating a perfect forecast) and to be sharp (i.e., for the predictive distribution to have low variance) and unbiased. Validation is also needed to assess the accuracy of a model for predicting CSID outbreaks (as opposed to incidence), which occur when the number of observed cases exceeds a location-specific epidemic threshold. The Brier score29, which measures the accuracy of probabilistic forecasts of events, is commonly used to validate the outbreak predictions of Bayesian models. An example of model development and validation for dengue forecasting is shown in Fig. 2. It is possible that no model will be found that predicts CSID incidence and outbreaks accurately and far enough in advance to be useful, in which case the project should be abandoned.
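The incidence-validation steps described above can be sketched in Python as follows, assuming each forecast is represented by a list of predictive draws. The splitter implements an expanding-window form of leave-future-out cross-validation; the CRPS uses the standard sample estimator E|X - y| - 0.5 E|X - X'|; sharpness is the variance of the draws, as in the text; and bias uses one definition for count forecasts found in the epidemic-forecasting literature, 1 - (F(y) + F(y - 1)), which is an assumption here rather than the specific metric used in the cited work. Function names are hypothetical.

```python
import statistics
from typing import Iterator, List, Sequence, Tuple


def leave_future_out_splits(n_obs: int, min_train: int,
                            horizon: int = 1) -> Iterator[Tuple[List[int], int]]:
    """Expanding-window splits: train on time points 0..t-1, test at t + horizon - 1."""
    for t in range(min_train, n_obs - horizon + 1):
        yield list(range(t)), t + horizon - 1


def crps_from_samples(samples: Sequence[float], observed: float) -> float:
    """Sample estimate of CRPS = E|X - y| - 0.5 * E|X - X'| (0 is a perfect forecast)."""
    m = len(samples)
    mean_abs_error = sum(abs(x - observed) for x in samples) / m
    # The pairwise term is O(m^2); adequate for a few thousand draws.
    mean_abs_spread = sum(abs(a - b) for a in samples for b in samples) / (m * m)
    return mean_abs_error - 0.5 * mean_abs_spread


def bias_score(samples: Sequence[int], observed: int) -> float:
    """Bias for count forecasts, 1 - (F(y) + F(y - 1)), with F the empirical CDF.

    +1: all draws above the observation (over-prediction);
    -1: all draws below it (under-prediction).
    """
    m = len(samples)
    cdf_y = sum(1 for x in samples if x <= observed) / m
    cdf_y_minus_1 = sum(1 for x in samples if x <= observed - 1) / m
    return 1 - (cdf_y + cdf_y_minus_1)


def sharpness(samples: Sequence[float]) -> float:
    """Variance of the predictive draws; lower means a sharper forecast."""
    return statistics.pvariance(samples)


# Usage: six weekly time points, at least four used for training, one-week horizon.
for train_idx, test_idx in leave_future_out_splits(n_obs=6, min_train=4):
    print(train_idx, "->", test_idx)  # [0, 1, 2, 3] -> 4, then [0, 1, 2, 3, 4] -> 5

draws = [0, 2]  # toy predictive draws for one test week
print(crps_from_samples(draws, 1), bias_score(draws, 1), sharpness(draws))
```

In a real evaluation, the model would be refitted on each training window and the three scores averaged over all test points for each candidate model.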

Fig. 2: Each box represents a step in the process of developing and validating a prediction model. The grey boxes provide details on the methods and data used for model development and evaluation. The figure in the “Prediction Tool” box represents a colour code for a warning message.
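The outbreak-validation step described in the text can be sketched as follows. Because the text does not prescribe how the location-specific epidemic threshold is set, the snippet assumes a hypothetical threshold equal to the historical mean plus two standard deviations, one common convention; practitioners' own outbreak definitions would take precedence. The Brier score is the mean squared difference between predicted outbreak probabilities and observed 0/1 outbreak indicators, so 0 is perfect and lower is better. Names and data are hypothetical.

```python
import statistics
from typing import Sequence


def epidemic_threshold(historical_counts: Sequence[float], n_sd: float = 2.0) -> float:
    """Hypothetical location-specific threshold: historical mean + n_sd sample SDs."""
    return statistics.mean(historical_counts) + n_sd * statistics.stdev(historical_counts)


def brier_score(forecast_probs: Sequence[float], outbreak_observed: Sequence[int]) -> float:
    """Mean squared difference between predicted outbreak probability and 0/1 outcome."""
    if len(forecast_probs) != len(outbreak_observed):
        raise ValueError("forecasts and outcomes must have the same length")
    squared_errors = ((p - o) ** 2 for p, o in zip(forecast_probs, outbreak_observed))
    return sum(squared_errors) / len(forecast_probs)


# Usage with made-up counts for one location.
threshold = epidemic_threshold([8, 10, 12])               # 10 + 2 * 2.0 = 14.0
observed_cases = [9, 16, 20, 11]
outbreaks = [int(c > threshold) for c in observed_cases]  # [0, 1, 1, 0]
probs = [0.1, 0.7, 0.9, 0.2]                              # model's outbreak probabilities
print(brier_score(probs, outbreaks))                      # approximately 0.0375
```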

How to make the tool USABLE

A usable tool is user-friendly and simple and meets the needs of end-users (e.g., public health practitioners) for CSID prevention and control. Such a tool could be a web-based software system with a mobile app interface built on the outputs of the prediction model. The tool should allow users to input data, receive alerts, and access real-time information conveniently from anywhere. Ideally, a usable tool would be integrated into the routine surveillance system for the CSID in question and would draw on datasets that existing systems update automatically and in real time, so that the data remain available to the tool for routine forecasting. It is important to recognise that in many settings (especially LMICs and other low-resource settings), routine surveillance systems are rudimentary or do not exist, and that existing systems (which vary widely in type, quality, human resources, and financial capacity) may not be automated, updated in real time, or available for future routine use. In many instances, careful assessment of these usability factors may lead to the conclusion that substantial capacity building is a prerequisite for tool development.
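The “Prediction Tool” box in Fig. 2 shows a colour-coded warning message. The text does not specify how predicted outbreak probabilities map to colours, so the sketch below uses hypothetical cut-offs and district names; in practice, the cut-offs would be among the items settled with practitioners during the needs assessment.

```python
from typing import List, Tuple

# Hypothetical cut-offs (upper bound, colour); agree real values with end-users.
ALERT_LEVELS: List[Tuple[float, str]] = [
    (0.2, "green"),   # p < 0.2
    (0.5, "yellow"),  # 0.2 <= p < 0.5
    (0.8, "orange"),  # 0.5 <= p < 0.8
]


def alert_colour(outbreak_prob: float) -> str:
    """Map a predicted outbreak probability to a colour-coded alert level."""
    if not 0.0 <= outbreak_prob <= 1.0:
        raise ValueError("probability must lie in [0, 1]")
    for upper, colour in ALERT_LEVELS:
        if outbreak_prob < upper:
            return colour
    return "red"  # p >= 0.8


# Usage: hypothetical district-level forecasts for the coming weeks.
for district, prob in {"District A": 0.05, "District B": 0.55, "District C": 0.92}.items():
    print(f"{district}: {alert_colour(prob)}")  # green, orange, red
```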

Needs assessment

Furthermore, to ensure that the tool meets the needs of end-users, once a decision is made to proceed with tool development, a needs assessment (NA) should be conducted for each stage of the project. The NA, which should incorporate assessments of community and public health system assets and technology acceptance of potential tool users, should engage a wide range of stakeholders, including decision-makers, public health practitioners, data providers, researchers, other technical experts, and community leaders.

For the early model development and validation stage of the project, the NA should identify what is needed for the tool to be appropriate and acceptable to relevant stakeholders. For example, public health practitioners may have a well-established definition of an outbreak of the CSID in question. If so, the model should ideally adhere to this definition. However, if the model developers find that another definition would be more useful for forecasting, the research team and the practitioners should negotiate an outbreak definition that both allows for optimal forecasting and is acceptable to the practitioners.

For all stages of tool development, the NA should assess the requirements for the tool to be effectively used in the surveillance system and routine prevention practices. For example, when the tool predicts an outbreak, what will the proactive intervention to prevent the outbreak look like? This will depend on factors such as the financial and human assets available to the public health system, as well as practitioners’ beliefs about the effectiveness of alternative intervention strategies. If the proactive intervention might involve community mobilisation, then assessing community assets that could aid in the mobilisation, such as faith-based organisations and media outlets, would be relevant. Again, a negotiation between the research team and the practitioners may be needed to settle upon the characteristics of the proactive intervention. For the project’s final stages and the post-project stage, the NA should evaluate the need for sustaining and expanding the use of the tool going forward. This often will be a question of assessing financial and human assets as well as public health system priorities and needs.

Bradshaw’s widely used concept of four types of need, as set out in his seminal 1972 “Taxonomy of Social Need”, can provide a foundational framework for conducting an NA and for categorising and understanding the complexity of needs30. It has been used to inform social policy design, service allocation, and resource distribution. Comparative needs are defined objectively and are assessed by comparing the health status of populations and the health services available to them. Normative needs, defined by experts, set a standard against which the tool and its use should be evaluated. Expressed needs are defined by what products or services a community uses. Felt needs are those that members of the target population state as their needs and desires. An NA process for tool development can be based on a literature review and consultations with other user groups (comparative needs), as well as in-depth interviews or focus group discussions with 1) policymakers, experts, and scientists (normative needs) and 2) practitioners, data and technical service providers, and community members (expressed needs and felt needs) (Fig. 3).

Fig. 3: This framework is used to identify needs related to integrating a tool into a routine surveillance system and preventive practices. The top row of the figure represents the needs assessment methods. The second row describes the data collection process and research participants included in each needs assessment activity. The third row shows the corresponding types of needs identified by each needs assessment method. The final box shows the findings of the needs assessment.

A usable tool is easy to use, compatible with existing systems and technologies, and aligns with the users’ skills and capabilities. Previous studies have shown that the “fit” between information technology (IT) and routine clinical practices “will lead intended end-users to accept or reject the IT, to use it or misuse it, to incorporate it into their routine or work around it”31. This is why the assessment of technology acceptance of potential users is a vital component of the NA. Various technology acceptance frameworks that consider determinants of technology use, such as perceived usefulness, perceived ease of use, social influence, and habit may be used to facilitate this assessment32,33,34.

NAs have limitations. First, felt needs vary among individuals and cultures, leading to potential biases depending on the perspectives of the key informants. Thus, involving diverse and representative stakeholders is crucial35. Second, not all expressed needs are communicated due to barriers such as lack of access. Triangulating multiple sources of information can help reveal these hidden needs within the community. Third, because needs may evolve over time, researchers should maintain stakeholder engagement throughout the project and establish feedback mechanisms to capture changing needs. Finally, needs are often complex and conflicting among different stakeholders, which may complicate identifying solutions that address all perspectives. Researchers must prioritise needs that are both actionable and aligned with the broader objectives of the project36.

How to advance the tool to be USED

Efficacy evaluation

Even if an early-warning tool is useful and usable, it still may not be used if there is limited or no evidence of its efficacy and cost-effectiveness for reducing CSID incidence. A randomised controlled trial is the gold standard study design for assessing the efficacy of a public health intervention. Because an early-warning tool is a community-level, geographically based intervention carried out by a public health department, rather than an intervention that targets individual people, a randomised controlled trial of its efficacy must randomise geographic units together with their associated public health departments. Such a trial is known as a cluster (i.e., group) randomised controlled trial (CRCT), in which the unit of randomisation is, by necessity, the cluster (in this case, a geographic unit) and not the individual person37. CRCTs have been widely used to assess the efficacy of infectious disease interventions38, including, for example, a CRCT of community mobilisation for dengue prevention in 150 census enumeration areas in Nicaragua and Mexico39, a CRCT of mass drug administration against malaria in 32 villages in The Gambia40, and a CRCT of a community-led total sanitation intervention with a focus on improved household toilets to reduce diarrhoea incidence in children in 48 villages in Ethiopia41.

A prerequisite for conducting a CRCT to assess an early warning tool would be a geographic region consisting of sub-regions (e.g., districts) served by one or more public health departments with the willingness and capacity to implement a proactive intervention when the early-warning tool predicts an outbreak. Clusters would be randomised to an intervention arm and a non-intervention (control) arm. To determine the number of clusters to be randomised, a target reduction in CSID incidence (e.g., 25%) in the intervention arm compared with the control arm needs to be set, and a sample size calculation then needs to be conducted to ensure sufficient statistical power. This calculation must take into account both the number of clusters and the number of individuals within clusters because of within-cluster correlation; i.e., individuals within a cluster tend to be more similar to each other than to individuals in other clusters with respect to the probability of the outcome42. The higher the within-cluster correlation, the lower the statistical power for a given number of clusters.
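To make the role of within-cluster correlation concrete, the sketch below uses one standard approach for a binary outcome (e.g., cumulative incidence over the trial period): compute the sample size per arm for an individually randomised trial comparing two proportions, inflate it by the design effect 1 + (m - 1)ρ, where m is the number of individuals per cluster and ρ is the intraclass correlation coefficient, and convert the result into clusters per arm. Equal cluster sizes and all inputs are hypothetical assumptions; for incidence-rate outcomes, formulas based on the between-cluster coefficient of variation (e.g., that of Hayes and Bennett) are a common alternative.

```python
import math
from statistics import NormalDist


def individuals_per_arm(p_control: float, p_intervention: float,
                        alpha: float = 0.05, power: float = 0.8) -> float:
    """Per-arm sample size for an individually randomised two-proportion comparison."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_beta = NormalDist().inv_cdf(power)
    variance_sum = p_control * (1 - p_control) + p_intervention * (1 - p_intervention)
    return (z_alpha + z_beta) ** 2 * variance_sum / (p_control - p_intervention) ** 2


def clusters_per_arm(p_control: float, relative_reduction: float, cluster_size: int,
                     icc: float, alpha: float = 0.05, power: float = 0.8) -> int:
    """Inflate by the design effect 1 + (m - 1) * ICC, then convert to whole clusters."""
    p_intervention = p_control * (1 - relative_reduction)
    n = individuals_per_arm(p_control, p_intervention, alpha, power)
    design_effect = 1 + (cluster_size - 1) * icc
    return math.ceil(n * design_effect / cluster_size)


# Hypothetical inputs: 2% baseline cumulative incidence, 25% target reduction,
# 1,000 people per cluster, 5% two-sided significance, 80% power.
for icc in (0.0, 0.001, 0.01):
    print(icc, clusters_per_arm(0.02, 0.25, 1000, icc))
```

With these inputs, the sketch gives about 11, 22, and 119 clusters per arm for ρ = 0, 0.001, and 0.01, illustrating how quickly even modest within-cluster correlation inflates the required number of clusters when clusters are large.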

An example of a CRCT for a vector-borne CSID is illustrated in Fig. 4. The non-intervention (control) clusters would conduct routine prevention practices, as promulgated by public health authorities, for the CSID in question. Intervention clusters also would conduct routine prevention practices, but, in addition, if the early-warning system predicts an outbreak, they would conduct a set of proactive preventive interventions prescribed in the study protocol. Primary outcome measures could be disease incidence and frequency of outbreaks, and secondary outcomes could be entomological indices as determined by vector monitoring.

Fig. 4: Example of a CRCT to evaluate the efficacy and cost-effectiveness of a prediction tool for a vector-borne CSID. Clusters are randomly assigned to the intervention arm and the control arm. The clusters in both arms follow routine disease control procedures. The clusters in the intervention arm also receive a proactive intervention prompted by the prediction of an outbreak by the early warning system. Disease incidence and outbreaks, vector indices, and costs are measured in all clusters before and during the intervention to compare outcomes, including cost-effectiveness, between the two arms.

Cost-effectiveness evaluation

In addition, a cost-effectiveness analysis that generates value-for-money evidence should be incorporated into the trial43. The key outcome would be the incremental cost-effectiveness ratio (ICER): the difference in costs between the intervention and control arms (e.g., for disease surveillance and response) divided by the difference in health outcomes (e.g., disability-adjusted life years [DALYs] averted or hospitalised CSID cases averted). The tool would be considered cost-effective if the estimated ICER fell below a pre-specified willingness-to-pay threshold, i.e., the maximum amount society is willing to pay per unit of health gain (such as per DALY averted).
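In standard practice, an ICER is compared with a willingness-to-pay threshold per unit of health gain. The sketch below assumes that health outcomes are expressed as DALYs averted and shows the usual decision logic, including the case in which the intervention is both no more costly and more effective (dominant), where no ICER is needed. The function name, threshold, and figures are hypothetical.

```python
from typing import Optional, Tuple


def assess_cost_effectiveness(incremental_cost: float, dalys_averted: float,
                              wtp_per_daly: float) -> Tuple[str, Optional[float]]:
    """Classify the intervention arm against the control arm.

    incremental_cost: intervention-arm cost minus control-arm cost.
    dalys_averted:    control-arm DALYs minus intervention-arm DALYs.
    wtp_per_daly:     willingness-to-pay threshold per DALY averted.
    """
    if dalys_averted <= 0:
        return "no health gain; ICER not informative", None
    if incremental_cost <= 0:
        return "dominant (no extra cost, more health)", None
    icer = incremental_cost / dalys_averted
    verdict = "cost-effective" if icer <= wtp_per_daly else "not cost-effective"
    return verdict, icer


# Hypothetical figures: extra cost 50,000; 100 DALYs averted; threshold 1,000 per DALY.
print(assess_cost_effectiveness(50_000, 100, 1_000))  # ('cost-effective', 500.0)
```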

Limitations of cluster-randomised controlled trials

Although, by necessity, the efficacy of a CSID early warning system needs to be assessed at the level of geographic units, CRCTs have limitations. First, if the within-cluster correlation is high, then a large number of clusters may be needed for sufficient statistical power, which could be expensive and difficult to implement. Second, despite randomisation, CRCTs may be subject to confounding when the number of randomised clusters is relatively small, such that the intervention and control groups may not be comparable with respect to confounders simply due to chance37. This limitation could be addressed by conducting stratified randomisation of clusters on important known confounders, such as baseline CSID incidence rate, and by adjusting for confounders in the analysis.
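Stratified randomisation on baseline incidence can be sketched as follows: clusters are ranked by baseline CSID incidence, adjacent clusters are grouped into strata (pairs by default), and arm labels are shuffled within each stratum so that the two arms have similar baseline incidence by design. The cluster identifiers and rates are hypothetical, and this pair-matching scheme is only one option; stratifying on several known confounders at once is another.

```python
import random
from typing import Dict, Optional


def stratified_cluster_randomisation(baseline_incidence: Dict[str, float],
                                     stratum_size: int = 2,
                                     seed: Optional[int] = None) -> Dict[str, str]:
    """Rank clusters by baseline incidence, form strata of adjacent clusters,
    and randomise arms within each stratum (pair-matching when stratum_size=2)."""
    rng = random.Random(seed)
    ordered = sorted(baseline_incidence, key=baseline_incidence.get)
    allocation: Dict[str, str] = {}
    for start in range(0, len(ordered), stratum_size):
        stratum = ordered[start:start + stratum_size]
        # Balanced arm labels for this stratum (off by one only if its size is odd).
        arms = (["intervention", "control"] * len(stratum))[:len(stratum)]
        rng.shuffle(arms)
        allocation.update(zip(stratum, arms))
    return allocation


# Hypothetical baseline incidence (cases per 10,000 population) for eight districts.
baseline = {"D1": 5.0, "D2": 40.0, "D3": 12.0, "D4": 33.0,
            "D5": 8.0, "D6": 21.0, "D7": 27.0, "D8": 15.0}
allocation = stratified_cluster_randomisation(baseline, seed=2024)
ranked = sorted(baseline, key=baseline.get)
for cluster in ranked:
    print(cluster, baseline[cluster], allocation[cluster])
```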

Third, there is the possibility of cross-contamination of intervention and control clusters due to factors such as the movement of people or vectors (in the case of a vector-borne CSID) across clusters, which would make intervention and control clusters effectively more alike and, therefore, bias efficacy estimates toward the null38,44. This limitation could be addressed by creating geographic buffer zones between intervention and control clusters, by only using the central part of each cluster in the data analysis (effectively creating geographic buffers), or by using clusters of large geographic size to reduce the impact of cross-cluster movement of people or vectors (in the case of a vector-borne CSID)38,44.

Furthermore, the possibility of cross-contamination could be avoided completely by using a quasi-experimental, non-randomised pre/post-intervention study design instead of a CRCT45. In this design, the intervention is implemented in a defined geographic area, and the incidence of the CSID being studied is compared before and after the intervention is implemented; without a control area, there is no contamination issue. However, abandoning randomisation opens up the possibility of severe confounding, because many factors apart from the intervention itself, both climatic and non-climatic, could affect the incidence of a CSID between the pre-intervention and post-intervention periods45. For example, for reasons that are not well understood, probable dengue cases in Brazil rose to an unprecedented degree between 2023 and 2024, from 1.65 million in the whole of 2023 to 6.56 million in just the first 45 epidemiological weeks of 202446. Thus, it is often preferable to risk bias toward the null in a CRCT than to risk severe confounding in a non-randomised pre/post-intervention study.

There are specific barriers to conducting trials in LMICs, including low levels of financial and human capacity, ethical and regulatory system obstacles, poor research environment, operational barriers, and competing demands on potential research, practice, or community partners47. Finally, it is possible that a CRCT will have a null result, which would indicate that the early warning tool should not be implemented.

How to make the tool SUSTAINABLE

The goal of sustainability of the prediction tool must be considered prior to, during, and after the project.

Pre-project

The project should engage stakeholders, including decision-makers, researchers, local, regional, and national public health practitioners, data providers, software developers, other technical experts, and community representatives in designing the project to ensure a sense of ownership and that its desired outcomes are consistent with the needs of the health sector and the community.

During the project

NAs should be conducted for all phases of the project to ensure the appropriateness and acceptability of the tool to the relevant stakeholders. The CRCT should be designed as a pragmatic trial that ensures alignment with routine practices of the public health departments at various administrative levels (e.g., local, regional, national), including integration of the tool into the existing surveillance and response systems without adversely interfering with current practices.

Post-project

The following activities would ensure post-project use of the tool:

- Adoption/endorsement of the tool by public health departments, ideally including at the national level (i.e., the Ministry of Health). Once the efficacy and cost-effectiveness of the prediction tool have been established, the project should implement communication activities (e.g., technical workshops) to promote such adoption.
- Capacity-building through training-of-trainers (public health practitioners) to advance implementation of the tool beyond the intervention districts in the trial. Once the tool has been adopted, the trainers would train practitioners in other districts of the country.
- Collaboration with public health departments to integrate monitoring and evaluation of the tool into the routine practices of the CSID surveillance system. Ongoing monitoring and evaluation of the tool's effectiveness in sustaining a reduction in CSID incidence should be funded through regular public health funding schemes.
- Open release of the study design, research outputs, and guidance documents, to maximise the usefulness, reach, and reproducibility of the project, particularly in LMICs. The modelling software could be released under an open-source licence and deposited on a version control platform such as GitHub. Guidance documents would describe the details of the research, including methods, instruments, procedures, statistical analysis, and computer code. In addition, sample datasets should be published along with a user's guide that enables researchers to use the developed software effectively. This would help researchers reproduce the project and develop early warning tools for CSIDs adapted to their particular settings.

Conclusion

Although there is a high level of interest in developing prediction tools for CSID prevention, how to ensure the actual use of these tools in routine surveillance systems is still an open question. In 2005, the World Health Organization published a conceptual framework for developing early warning systems for CSIDs48. The publication focused on how to develop a “useful” (our terminology, not theirs) early warning system and performed a detailed evaluation of candidate CSIDs. Although it advocated for including health policymakers in all stages of system design and for rigorous evaluation and cost-effectiveness analysis, it did not provide detailed guidance on developing a “usable” and “used” early warning system.

In this Perspective, we recommend a 3-U framework to promote the usefulness, usability, and actual use of prediction tools, as well as approaches to ensure that the tool is sustainable. We hope our recommendations will help policymakers, researchers, public health practitioners, and donors better formulate projects to advance the adoptability and sustainability of early warning tools for CSID prevention and control. As the incidence of CSIDs continues to increase due to climate change, integrating early warning tools into routine practice will represent a crucial climate change adaptation measure. This will be especially challenging in LMICs and other low-resource settings, where these measures are most needed.

References

IPCC. Summary for policymakers. In Climate Change 2023: Synthesis Report. Contribution of Working Groups I, II and III to the Sixth Assessment Report of the Intergovernmental Panel on Climate Change (eds Lee, H. & Romero, J.) 1–34 (IPCC, Geneva, Switzerland, 2023).

Mordecai, E. A. et al. Thermal biology of mosquito‐borne disease. Ecol. Lett. 22, 1690–1708 (2019).

Rocklöv, J. & Dubrow, R. Climate change: an enduring challenge for vector-borne disease prevention and control. Nat. Immunol. 21, 479–483 (2020).

Anas, M. et al. In Microbiomes and the Global Climate Change (eds Lone, S. A. & Malik, A.) 123–144 (Springer, Singapore, 2021).

Alcayna, T. et al. Climate-sensitive disease outbreaks in the aftermath of extreme climatic events: a scoping review. One Earth 5, 336–350 (2022).

Cascio, W. E. Wildland fire smoke and human health. Sci. Total Environ. 624, 586–595 (2018).

Reid, C. E. & Maestas, M. M. Wildfire smoke exposure under climate change: impact on respiratory health of affected communities. Curr. Opin. Pulm. Med. 25, 179–187 (2019).

Mirsaeidi, M. et al. Climate change and respiratory infections. Ann. Am. Thorac. Soc. 13, 1223–1230 (2016).

Gorris, M. E., Treseder, K. K., Zender, C. S. & Randerson, J. T. Expansion of coccidioidomycosis endemic regions in the United States in response to climate change. GeoHealth 3, 308–327 (2019).

Head, J. R. et al. Effects of precipitation, heat, and drought on incidence and expansion of coccidioidomycosis in western USA: a longitudinal surveillance study. Lancet Planet. Health 6, e793–e803 (2022).

He, Y., Liu, W. J., Jia, N., Richardson, S. & Huang, C. Viral respiratory infections in a rapidly changing climate: the need to prepare for the next pandemic. EBioMedicine 93, 104593 (2023).

Pley, C., Evans, M., Lowe, R., Montgomery, H. & Yacoub, S. Digital and technological innovation in vector-borne disease surveillance to predict, detect, and control climate-driven outbreaks. Lancet Planet. Health 5, e739–e745 (2021).

Ryan, S. J. et al. The current landscape of software tools for the climate-sensitive infectious disease modelling community. Lancet Planet. Health 7, e527–e536 (2023).

Neta, G. et al. Advancing climate change health adaptation through implementation science. Lancet Planet. Health 6, e909–e918 (2022).

Nsubuga, P. et al. Public health surveillance: a tool for targeting and monitoring interventions. In Disease Control Priorities in Developing Countries. 2nd ed. (eds Jamison, D. T. et al.) 997–1015 (Oxford University Press, 2006).

Stewart Ibarra, A. et al. Landscape mapping of software tools for climate-sensitive infectious disease modelling. (Inter-American Institute for Global Change Research: IAI and Wellcome Trust, 2022).

Interaction Design Foundation - IxDF. Useful, Usable, and Used: Why They Matter to Designers. https://www.interaction-design.org/literature/article/useful-usable-and-used-why-they-matter-to-designers (2020).

Lessler, J., Azman, A. S., Grabowski, M. K., Salje, H. & Rodriguez-Barraquer, I. Trends in the mechanistic and dynamic modeling of infectious diseases. Curr. Epidemiol. Rep. 3, 212–222 (2016).

Page, M. J. et al. The PRISMA 2020 statement: an updated guideline for reporting systematic reviews. Int. J. Surg. 88, 105906 (2021).

Lowe, R. et al. Dengue outlook for the World Cup in Brazil: an early warning model framework driven by real-time seasonal climate forecasts. Lancet Infect. Dis. 14, 619–626 (2014).

Colón-González, F. J. et al. A methodological framework for the evaluation of syndromic surveillance systems: a case study of England. BMC Public Health 18, 1–14 (2018).

Colón-González, F. J. et al. Probabilistic seasonal dengue forecasting in Vietnam: A modelling study using superensembles. PLoS Med. 18, e1003542 (2021).

Wikle, C. K. & Zammit-Mangion, A. Statistical deep learning for spatial and spatiotemporal data. Annu. Rev. Stat. Appl. 10, 247–270 (2023).

Yamana, T. K., Kandula, S. & Shaman, J. Superensemble forecasts of dengue outbreaks. J. R. Soc. Interface 13, 20160410 (2016).

Johansson, M. A. et al. An open challenge to advance probabilistic forecasting for dengue epidemics. Proc. Natl. Acad. Sci. USA 116, 24268–24274 (2019).

Hyndman, R. J. & Athanasopoulos, G. Forecasting: Principles and Practice 3rd edn (OTexts, 2021).

Bürkner, P.-C., Gabry, J. & Vehtari, A. Approximate leave-future-out cross-validation for Bayesian time series models. J. Stat. Comput. Simul. 90, 2499–2523 (2020).

Funk, S. et al. Assessing the performance of real-time epidemic forecasts: A case study of Ebola in the Western Area region of Sierra Leone, 2014-15. PLoS Comput. Biol. 15, e1006785 (2019).

Brier, G. W. Verification of forecasts expressed in terms of probability. Mon. Weather Rev. 78, 1–3 (1950).

Bradshaw, J. In Problems and Progress in Medical Care: Essays on Current Research, 7th series (ed. McLachlan, G.) 71–82 (Oxford University Press, Oxford, 1972).

Holden, R. J. & Karsh, B.-T. The technology acceptance model: its past and its future in health care. J. Biomed. Inform. 43, 159–172 (2010).

Davis, F. D., Bagozzi, R. P. & Warshaw, P. R. User acceptance of computer technology: A comparison of two theoretical models. Manag. Sci. 35, 982–1003 (1989).

Venkatesh, V. & Davis, F. D. A theoretical extension of the technology acceptance model: Four longitudinal field studies. Manag. Sci. 46, 186–204 (2000).

Tamilmani, K., Rana, N. P., Wamba, S. F. & Dwivedi, R. The extended Unified Theory of Acceptance and Use of Technology (UTAUT2): A systematic literature review and theory evaluation. Int. J. Inf. Manage. 57, 102269 (2021).

Royse, D., Staton-Tindall, M., Badger, K. & Webster, J. M. Needs Assessment (Oxford University Press, Oxford, 2009).

Bradshaw, J. The conceptualization and measurement of need: a social policy perspective. In Researching the People's Health (eds Popay, J. & Williams, G.) 45–57 (Routledge, London, 1994).

Hayes, R. J. & Moulton, L. H. Cluster Randomised Trials (Chapman and Hall/CRC, Boca Raton, FL, 2017).

Hayes, R., Alexander, N. D., Bennett, S. & Cousens, S. Design and analysis issues in cluster-randomized trials of interventions against infectious diseases. Stat. Methods Med. Res. 9, 95–116 (2000).

Andersson, N. et al. Evidence based community mobilization for dengue prevention in Nicaragua and Mexico (Camino Verde, the Green Way): cluster randomized controlled trial. BMJ 351, h3267 (2015).

Dabira, E. D. et al. Mass drug administration of ivermectin and dihydroartemisinin–piperaquine against malaria in settings with high coverage of standard control interventions: a cluster-randomised controlled trial in The Gambia. Lancet Infect. Dis. 22, 519–528 (2022).

Cha, S. et al. Effect of a community-led total sanitation intervention on the incidence and prevalence of diarrhea in children in rural Ethiopia: a cluster-randomized controlled trial. Am. J. Trop. Med. Hyg. 105, 532 (2021).

Hurley, J. C. How the cluster-randomized trial “works”. Clin. Infect. Dis. 70, 341–346 (2020).

Sanders, G. D. et al. Recommendations for conduct, methodological practices, and reporting of cost-effectiveness analyses: second panel on cost-effectiveness in health and medicine. JAMA 316, 1093–1103 (2016).

Cavany, S. et al. Does ignoring transmission dynamics lead to underestimation of the impact of interventions against mosquito-borne disease? BMJ Glob. Health 8, e012169 (2023).

Harris, A. D. et al. The use and interpretation of quasi-experimental studies in infectious diseases. Clin. Infect. Dis. 38, 1586–1591 (2004).

Brazil Ministério da Saúde. Atualização de Casos de Arboviroses. https://www.gov.br/saude/pt-br/assuntos/saude-de-a-a-z/a/aedes-aegypti/monitoramento-das-arboviroses (2024).

Alemayehu, C., Mitchell, G. & Nikles, J. Barriers for conducting clinical trials in developing countries: a systematic review. Int. J. Equity Health 17, 37 (2018).

Kuhn, K., Campbell-Lendrum, D., Haines, A. & Cox, J. Using Climate to Predict Infectious Disease Epidemics (World Health Organization, Geneva, 2005).

Acknowledgements

This work was supported by the Wellcome Trust [226474/Z/22/Z]. F.C.G., an employee of Wellcome Trust, contributed to methodology and reviewed and edited the manuscript as a co-author. C.L.L. was supported by an Australian National Health and Medical Research Council Fellowship (Grant number 1193826).

Author information

Contributions

D.P. and R.D. conceptualised the manuscript, outlined the research framework and methodology, led the manuscript writing, and were responsible for project administration. D.P. wrote the first draft of the manuscript and was responsible for the literature search. F.C.G. contributed to the methodology, reviewed, and edited. D.M.W. contributed modelling expertise, reviewed, and edited. V.B. contributed software development expertise, reviewed, and edited. N.S.V. and K.Q.D. contributed intervention method expertise, reviewed, and edited. S.N. contributed health economic evaluation methods expertise, reviewed, and edited. C.C., H.P., and C.T.P. contributed needs assessment expertise, reviewed, and edited. Q.V.D. contributed climatic modelling expertise, reviewed, and edited. M.H., C.L.L., S.R., L.T.P., and D.N.T. reviewed and edited. All authors read and approved the final manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Communications thanks Frédéric Piel and the other anonymous reviewer(s) for their contribution to the peer review of this work.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Phung, D., Colón-González, F.J., Weinberger, D.M. et al. Advancing adoptability and sustainability of digital prediction tools for climate-sensitive infectious disease prevention and control. Nat Commun 16, 1644 (2025). https://doi.org/10.1038/s41467-025-56826-6

DOI: https://doi.org/10.1038/s41467-025-56826-6
