Abstract

Working memory is essential for guiding our behaviors in daily life, where sensory information continuously flows from the external environment. While numerous studies have shown the involvement of sensory areas in maintaining working memory in a feature-specific manner, the challenge of utilizing retained sensory representations without interference from incoming stimuli of the same feature remains unresolved. To overcome this, essential information needs to be maintained dually in a form distinct from sensory representations. Here, using working memory tasks to retain braille patterns presented tactually or visually during fMRI scanning, we identified two distinct forms of high-level working memory representations in the parietal and prefrontal cortex, together with modality-dependent sensory representations. First, we found supramodal representations in the superior parietal cortex that encoded braille identity in a consistent form, regardless of the involved sensory modality. Second, we observed that the prefrontal cortex and inferior parietal cortex specifically encoded cross-modal representations, which emerged during tasks requiring the association of information across sensory modalities, indicating a different high-level representation for integrating a broad range of sensory information. These findings suggest a framework for working memory maintenance that incorporates two distinct types of high-level representations, supramodal and cross-modal, operating alongside sensory representations.


Introduction

Working memory plays a pivotal role in guiding our continual thoughts and behaviors in our everyday lives1,2,3,4,5,6,7,8. This cognitive faculty enables us to temporarily retain relevant information and utilize it to guide our moment-to-moment behaviors1,2. Furthermore, comprehending the neural mechanisms underlying working memory maintenance in the brain holds profound implications for practical domains such as mind reading or behavior prediction9. Nevertheless, the nature of working memory representations in the brain has been a subject of prolonged debate, particularly concerning the working memory representations in higher-order cortical areas such as the prefrontal cortex (PFC)5,10,11,12.

Although the traditional view of working memory suggests a critical role of the PFC in both the maintenance and executive control of working memory13,14,15,16, numerous neuroimaging studies consistently demonstrate that sensory details retained in working memory are localized within the corresponding sensory cortical regions in a modality-specific manner3,5,17,18,19,20,21,22,23,24,25. Thus, another view of working memory suggests that while the PFC is involved in executive control or attention encoding, maintenance occurs in posterior cortical regions, including sensory areas, in a feature-specific manner5,22,24,26. However, the maintenance of individual features in corresponding sensory areas, such as holding visual features in the visual cortex, may not be sufficient to guide ongoing behaviors. A critical issue arises when a sensory system already occupied by a specific sensory feature needs to process concurrent sensory input. The low-level memory trace held in the sensory system may interfere with incoming same-feature traces, resulting in an unsuccessful comparison between upcoming and existing information for guiding moment-to-moment behaviors12,27,28. To address this issue, one proposal posits duplicated maintenance of working memory in both the higher-order cortex and the sensory cortex11,12. Such duplicated representations may help retain working memory contents without distraction, even when new sensory information continuously flows into our sensory systems.

If high-level representations play a role in the duplicated retention process of working memory, they should consistently be observed during working memory processes regardless of sensory modality or task goals. Moreover, instead of replicating sensory information in its entirety, it is necessary to hold essential information in a form distinct from sensory representations. Based on prior research on frontal representations during working memory tasks12,20,29,30,31, these high-level representations may maintain abstract information that lacks sensory details. Consequently, the same high-level representation is expected for a given item across working memory tasks involving different sensory modalities, indicating the supramodal nature of high-level representation. This idea of high-level representation is also in line with the early view that information from the environment is first processed in a sensory register in a modality-specific way, and then some selected information is combined and transferred into an amodal form of working memory13,15. However, it remains elusive whether higher-order cortical areas possess such supramodal representations in working memory processing. While prior studies suggest the PFC as a major candidate region for holding such high-level representations10,11,12,14,16, numerous studies using visual working memory tasks have shown that the contents of working memory were decoded from the neural responses of visual cortical regions but not from those of the PFC19,26. Moreover, the involvement of PFC in memory maintenance has been reported to depend on behavioral goals or tasks rather than being consistently present29. Furthermore, whether representations in higher-order cortical areas, including the prefrontal and parietal cortices, are homogeneous remains unclear.

To determine the presence of a supramodal representation of working memory, it is essential to compare the representations across working memory tasks involving different sensory modalities. Moreover, since there is a possibility that a shared representation between modalities can occur only when there is a task goal that requires integration or association of two modalities, the representation during a working memory task that necessitates linking two modalities should also be compared.

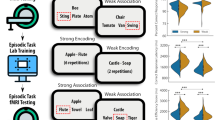

Here we designed an event-related fMRI experiment, consisting of visual working memory, tactile working memory, and two cross-modal working memory tasks (Fig. 1a and Supplementary Fig. 1a). We used braille patterns as tactile stimuli and corresponding images of the braille patterns as visual stimuli (Fig. 1b). The shape of the braille patterns can be recognized through both tactile perception and visual perception. Therefore, if a high-level abstract representation exists for individual braille stimuli during working memory, the representation of braille identity may be shared between visual and tactile working memory tasks regardless of the involved sensory modality (supramodal representation).

Fig. 1: a Experimental paradigm for a comparative approach between tactile and visual working memories. Participants performed two within-modal (tactile-to-tactile, TT, and visual-to-visual, VV) and two cross-modal (tactile-to-visual, TV, and visual-to-tactile, VT) delayed match-to-sample tasks. During within-modal tasks, participants were presented with a braille sample and a braille probe either visually (VV) or tactually (TT) with a 7-s delay period between them, and they indicated whether the probe matched the sample. The procedure for cross-modal tasks was the same as for the within-modal tasks, except that the sample and the probe were presented in different sensory modalities. b Tactile and visual stimuli. Five braille patterns were presented either tactually or visually. c Surface maps of brain activation during each task. The colored areas show the mean BOLD response magnitude (percent signal change) across participants (p < 0.05, FDR-corrected), mapped on the inflated brain for sample onset, delay period, and probe onset of each task. Black and white arrows pointing to specific brain regions highlight significant activation in the targeted areas. lh left hemisphere, rh right hemisphere, S superior, I inferior, A anterior, P posterior, L lateral, M medial.

During the visual or tactile working memory task, participants were presented with a braille sample and a braille probe visually (visual working memory task) or tactually (tactile working memory task) with a delay period between them and were required to indicate whether the probe matched the sample (Fig. 1a and Supplementary Fig. 1a). To test the hypothesis that supramodal representations of working memory exist in higher-order cortical areas, we examined the common neural substrates and shared representations of braille identity during the delay periods of visual and tactile working memory tasks, focusing on the parietal and prefrontal cortical areas. Furthermore, to compare these representations with those of working memory tasks that require linking tactile and visual modalities, participants performed two cross-modal working memory tasks. In these tasks, participants were presented with a sample visually and a probe tactually for the visual-to-tactile task, and vice versa for the tactile-to-visual task (Fig. 1a and Supplementary Fig. 1a). Comparison of the sample and the probe in these tasks necessitated the association or integration of visual and tactile information.

Through these comparative analyses of working memory tasks involving different sensory modalities, we provide direct evidence supporting the presence of supramodal representation of working memory in the superior parietal cortex, regardless of the processed sensory modality, alongside modality-dependent sensory representations. Furthermore, we also demonstrate a distinct type of high-level representation of working memory in the inferior parietal cortex and PFC, where the maintenance of working memory depends on behavioral goals that involve the association of features from different sensory modalities. Our findings suggest a framework for working memory maintenance that facilitates continuous behavioral engagement across diverse sensory environments.

Results

Participants were instructed to perform working memory tasks, wherein they retained specific sensory information (either tactile or visual) regarding the shape of a braille sample and compared it with a subsequently presented probe (Fig. 1a, b and Supplementary Fig. 1a). In all within-modal (tactile-to-tactile task, TT, and visual-to-visual task, VV) and cross-modal working memory tasks (tactile-to-visual task, TV, and visual-to-tactile task, VT), the participants exhibited robust performance levels (mean correct rate and SEM: 89.22% ± 1.72% for TT, 98.71% ± 0.39% for VV, 90.78% ± 1.57% for TV, 88.02% ± 1.71% for VT) (Supplementary Fig. 1b), indicating that the participants successfully recognized and maintained the individual shape of braille during the tasks.

According to the demands of each task, different sensory systems may be engaged17,19,23,32,33,34,35. We first compared the involvement of cortical regions across the four working memory tasks by examining the average response magnitudes across the whole brain during each task. As expected, we observed modality-specific neural responses in the respective sensory cortices at each sample or probe stimulus onset. Strong responses were found in the left (contralateral) somatosensory cortical areas at the sample onsets of the TT and TV tasks, while posterior visual areas were highly activated at the sample onsets of the VV and VT tasks (p < 0.05, FDR-corrected) (Fig. 1c). Moreover, significant responses were found in the somatosensory cortical areas at the probe onset of the TT and VT tasks, whereas at the probe onset of the VV and TV tasks, strong responses were found in the posterior visual areas (p < 0.05, FDR-corrected) (Fig. 1c). Notably, besides the modality-specific responses in the sensory cortices, significant responses were also observed in the parietal and frontal cortical areas during the delay periods as well as the sample and probe phases of all tasks (p < 0.05, FDR-corrected) (Fig. 1c), indicating common engagement of these higher-order cortical areas across the working memory tasks.
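The maps above, and the searchlight maps below, are thresholded after FDR correction. The text does not state which FDR procedure was used, so the following is only a sketch assuming the standard Benjamini-Hochberg step-up procedure; the function name `fdr_bh` is hypothetical. It returns which voxel- or vertex-wise p-values survive correction at level q.

```python
import numpy as np


def fdr_bh(p_values, q=0.05):
    """Boolean mask of p-values surviving Benjamini-Hochberg FDR at level q (assumed procedure)."""
    p = np.asarray(p_values, dtype=float)
    m = p.size
    order = np.argsort(p)                       # ranks p-values from smallest to largest
    ranked = p[order]
    thresholds = np.arange(1, m + 1) / m * q    # BH critical values (k / m) * q
    below = ranked <= thresholds
    mask = np.zeros(m, dtype=bool)
    if below.any():
        k_max = np.nonzero(below)[0].max()      # largest rank meeting its critical value
        mask[order[: k_max + 1]] = True         # all smaller p-values are also declared significant
    return mask


print(fdr_bh([0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205, 0.212, 0.216]))
```

Because the procedure is step-up, a p-value that misses its own critical value can still survive if a larger-ranked p-value meets its own threshold.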

Different types of neural substrates for the maintenance of braille pattern information during working memory

To determine neural substrates underlying the maintenance of individual braille pattern information during the delay period of each task, we used multi-voxel pattern analysis. To test the existence of specific neural representations for individual braille stimuli across the brain, we calculated within- and between-braille correlations based on the voxel pattern similarity, and derived discrimination indices for individual braille stimuli as the difference between the within- and between-braille stimuli correlations (Fig. 2a; see “Methods”).
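The discrimination index can be illustrated with a small sketch. It assumes that the data are split into two independent halves (for example, odd and even runs), that each half yields one delay-period pattern per braille stimulus, and that correlations are Fisher z-transformed; these choices and the name `split_half_di` are assumptions, not the authors' exact implementation (see "Methods").

```python
import numpy as np


def split_half_di(half_a, half_b, fisher_z=True):
    """Within- minus between-braille correlation from two independent data halves.

    half_a, half_b: (n_stimuli, n_voxels) patterns, rows aligned by braille identity
    (hypothetical layout, e.g. mean delay-period patterns from odd vs. even runs).
    """
    n = half_a.shape[0]
    # Pearson r between every stimulus pattern in half A and every pattern in half B
    r = np.corrcoef(half_a, half_b)[:n, n:]
    if fisher_z:
        r = np.arctanh(np.clip(r, -0.999999, 0.999999))  # assumed Fisher z-transform
    within = np.mean(np.diag(r))                  # same braille in both halves
    between = np.mean(r[~np.eye(n, dtype=bool)])  # different braille across halves
    return within - between


rng = np.random.default_rng(0)
signal = rng.normal(size=(5, 200))                # 5 braille patterns x 200 voxels (simulated)
half_a = signal + 0.5 * rng.normal(size=signal.shape)
half_b = signal + 0.5 * rng.normal(size=signal.shape)
print(split_half_di(half_a, half_b))              # clearly positive: identity is decodable
```

A positive index means each braille pattern evokes a response that is more similar to itself across halves than to the other braille patterns; in a searchlight analysis the same function would be applied to the voxels of each sphere.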

Fig. 2: a To examine the existence of individual braille pattern information, we derived discrimination indices for individual braille patterns as the difference between within-braille and between-braille correlations, based on voxel pattern similarity using the split-half correlation analysis method (see “Methods” for details). b The distribution of retained stimulus information during the delay period of within-modal (TT and VV) tasks (discrimination index > 0, p < 0.01, right-tailed, FDR-corrected). Common neural substrates for the maintenance of braille pattern information in the TT and VV tasks were found in the superior parietal cortex. lh left hemisphere, rh right hemisphere, S superior, I inferior, A anterior, P posterior, L lateral, M medial. c The distribution of retained sample stimulus information during the delay period of cross-modal (TV and VT) tasks (discrimination index > 0, p < 0.01, right-tailed, FDR-corrected). During these tasks, broader cortical areas, including the prefrontal cortex and inferior parietal cortex, along with the superior parietal cortex, were engaged in the maintenance of braille pattern information. d Based on the results in (b) and (c), areas of overlap between the TT and VV tasks, excluding TV and VT areas, are colored cyan (TT ∩ VV ∩ TV^C ∩ VT^C), and areas of overlap between the TV and VT tasks, excluding TT and VV areas, are colored yellow (TV ∩ VT ∩ TT^C ∩ VV^C), where ^C denotes the complement of a set. Areas of overlap across all tasks are colored magenta (TT ∩ VV ∩ TV ∩ VT). Black arrows pointing to specific brain regions highlight significant activation in the targeted areas.

If supramodal representations exist, a first requirement is that the neural substrates representing working memory information should be shared across tasks. Remarkably, the superior parietal cortex retained individual braille pattern information in both the TT and VV tasks (discrimination index greater than zero, p < 0.01, right-tailed, FDR-corrected) (Fig. 2b). This maintenance of stimulus information in the superior parietal cortex was also found during the delay period of both cross-modal (TV and VT) tasks (Fig. 2c). Even when the braille representation was tracked at each time point of the delay period, a consistent tendency toward shared maintenance was observed in the superior parietal cortex (Supplementary Fig. 2). In the prefrontal cortex, however, no such common maintenance across all tasks was found (Fig. 2b and Supplementary Fig. 2a). These results show common neural substrates for working memory maintenance in the superior parietal cortex, but not in the prefrontal cortex, regardless of the processed sensory modality (Supplementary Fig. 2a), suggesting that the superior parietal cortex is a candidate neural substrate for supramodal representation in working memory.

During the cross-modal tasks, broader areas of the parietal cortex than during the within-modal tasks were engaged in the maintenance of individual braille pattern information (Fig. 2c and Supplementary Fig. 2b). Not only the superior parietal cortex but also the inferior parietal cortex showed significant decoding of braille identity during the delay period of both the TV and VT tasks (p < 0.01, right-tailed, FDR-corrected). Moreover, significant decoding of braille identity was also found in prefrontal cortical regions during these cross-modal tasks (p < 0.01, right-tailed, FDR-corrected) (Fig. 2c and Supplementary Fig. 2b). Given that braille pattern information was not represented in these cortical areas during the within-modal (TT and VV) tasks (Fig. 2b and Supplementary Fig. 2a), these results indicate that working memory maintenance in the inferior parietal and prefrontal cortical areas depends on the need to link tactile and visual features.

In addition to the neural representations in the higher-order cortical areas, sensory modality-specific neural substrates for stimulus information maintenance were also observed in the sensory cortical regions (Supplementary Fig. 2), in alignment with prior research17,19,23. During the delay period of the tactile working memory task (TT task), the discrimination indices were significantly greater than zero in the left (contralateral) somatosensory cortical areas (p < 0.01, right-tailed, FDR-corrected), while positive discrimination was evident in the visual cortical areas during the delay of the visual working memory task (VV task) (Supplementary Fig. 2a). These results show that working memory representations in the sensory cortices are flexible and contingent on the sensory modality being used, consistent with the sensory recruitment account of working memory3,5,17,18,19,20,23,25.

The results from the above whole-brain searchlight analyses suggest the presence of three distinct types of neural substrates involved in working memory maintenance: (1) regions where working memory information is consistently retained regardless of the processed sensory modality, (2) regions where working memory information is exclusively maintained during cross-modal working memory tasks, in which the integration of information from different sensory modalities is required, and (3) regions specifically responsible for retaining information depending on the sensory modality being processed. Focusing on the high-level representations of working memory, we further clarified the neural substrates of types (1) and (2). To do this, we examined the overlap in regions showing significant braille discrimination across the following conditions: all within-modal and cross-modal tasks, within-modal tasks excluding cross-modal tasks, and cross-modal tasks excluding within-modal tasks (Fig. 2d and Supplementary Fig. 2c). Consistent with the above searchlight results for each task, we identified shared neural substrates for the maintenance of braille pattern information across all tasks in the superior parietal cortical regions of the left hemisphere (p < 0.01, right-tailed, FDR-corrected) (Fig. 2d and Supplementary Fig. 2c, colored in magenta). Moreover, we found neural substrates shared exclusively across cross-modal tasks in the lateral prefrontal and inferior parietal areas of the left hemisphere (discrimination index above zero during the TV and VT tasks, p < 0.01, right-tailed, FDR-corrected; excluding areas with a significant discrimination index during the TT or VV task, p < 0.05, right-tailed, FDR-corrected) (Fig. 2d and Supplementary Fig. 2c, colored in yellow), whereas few areas were shared exclusively across within-modal tasks when excluding cross-modal overlap (Fig. 2d and Supplementary Fig. 2c, colored in cyan).
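The overlap logic can be written as boolean operations on per-task significance masks. This is a sketch under stated assumptions: each task yields a mask at the inclusion threshold (p < 0.01) and a more lenient mask at the exclusion threshold (p < 0.05); the text states the lenient exclusion threshold only for the cross-modal (yellow) map, so applying it to the cyan map is an assumption, as are the names `overlap_maps` and `mask`.

```python
import numpy as np


def overlap_maps(strict, lenient):
    """strict / lenient: dict task -> boolean array over searchlight centers, True where the
    discrimination index is significant at the inclusion (p < 0.01) or exclusion (p < 0.05) level."""
    # Magenta: TT ∩ VV ∩ TV ∩ VT at the inclusion threshold
    all_tasks = strict["TT"] & strict["VV"] & strict["TV"] & strict["VT"]
    # Yellow: TV ∩ VT ∩ TT^C ∩ VV^C, excluding anything significant at the lenient threshold
    cross_only = strict["TV"] & strict["VT"] & ~lenient["TT"] & ~lenient["VV"]
    # Cyan: TT ∩ VV ∩ TV^C ∩ VT^C (same exclusion threshold assumed)
    within_only = strict["TT"] & strict["VV"] & ~lenient["TV"] & ~lenient["VT"]
    return {"magenta": all_tasks, "yellow": cross_only, "cyan": within_only}


def mask(*values):
    """Hypothetical helper: build a boolean mask over four toy searchlight centers."""
    return np.array(values, dtype=bool)


strict = {"TT": mask(1, 0, 1, 0), "VV": mask(1, 0, 1, 0), "TV": mask(1, 1, 0, 0), "VT": mask(1, 1, 0, 0)}
lenient = {"TT": mask(1, 0, 1, 1), "VV": mask(1, 0, 1, 0), "TV": mask(1, 1, 0, 0), "VT": mask(1, 1, 0, 0)}
maps = overlap_maps(strict, lenient)
print({name: m.astype(int).tolist() for name, m in maps.items()})
```

Using a more lenient threshold for exclusion than for inclusion makes the "exclusive" maps conservative: a center is excluded even if it only weakly carries information in the other task type.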

To examine the representations of working memory in more detail, we defined regions of interest (ROIs), and derived discrimination indices from the response patterns of each ROI (Fig. 3). Given that tactile stimuli were presented on the right index finger and the searchlight results showed a more prominent tendency in the left hemisphere, we focused on the regions of the left hemisphere in this ROI analysis (Fig. 3a). Consistent with the searchlight analysis, there was significant discrimination of individual braille pattern information in superior parietal regions, the superior parietal lobule (SPL) and intraparietal sulcus (IPS), during the delay period of all within-modal and cross-modal tasks (all t(28) ≥ 3.817, p < 0.001, Cohen’s d ≥ 0.709, right-tailed, FDR-corrected) (Fig. 3b). On the other hand, the dorsolateral prefrontal cortex (dlPFC) and the angular gyrus (ANG) in the inferior parietal cortex showed differential patterns for the cross-modal versus within-modal tasks (Fig. 3c). From the neural responses of these regions, decoding of braille identity was possible during the cross-modal tasks (dlPFC: t(28) ≥ 3.287, p ≤ 0.001, Cohen’s d ≥ 0.610; ANG: t(28) ≥ 3.551, p ≤ 0.001, Cohen’s d ≥ 0.659, right-tailed, FDR-corrected), but not during the within-modal tasks (dlPFC: t(28) ≤ 1.111, p ≥ 0.138, Cohen’s d ≤ 0.206; ANG: t(28) ≤ 1.370, p ≥ 0.091, Cohen’s d ≤ 0.254, right-tailed, FDR-corrected) (Fig. 3c). Moreover, we also found significant differences in discrimination indices between the cross-modal and within-modal tasks in SPL and IPS as well as in dlPFC and ANG (main effect of modality condition in two-way ANOVA with the factors of modality condition (within-modal, cross-modal) and sample stimulus modality (tactile, visual), SPL: F(1,28) = 5.548, p = 0.026; IPS: F(1,28) = 7.863, p = 0.009; dlPFC: F(1,28) = 14.064, p = 0.001; ANG: F(1,28) = 22.065, p < 0.001) (Fig. 3b, c). 
Furthermore, there was a significant interaction between modality condition and ROI category (three-way ANOVA with modality condition (within-modal, cross-modal), sample stimulus modality (tactile, visual), and ROI category (superior parietal cortex (SPL, IPS), other higher-order regions (dlPFC, ANG)) as factors, F(1,28) = 4.743, p = 0.038) (Fig. 3b, c). These results indicate the presence of distinct types of neural substrates for working memory maintenance in higher-order cortical areas.
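For a fully within-subject design in which every factor has two levels, each ANOVA effect has one numerator degree of freedom, and its F statistic equals the squared one-sample t statistic of the corresponding per-participant contrast, giving F(1, n − 1). The sketch below uses this equivalence for the two-way (modality condition × sample stimulus modality) analysis; the array layout and the name `rm_anova_2x2` are hypothetical, and a p-value would come from the F(1, n − 1) distribution (for example `scipy.stats.f.sf(F, 1, n - 1)`), which is omitted here to keep the sketch numpy-only.

```python
import numpy as np


def rm_anova_2x2(di):
    """di: (n_subjects, 2, 2) array indexed [subject, modality_condition, sample_modality],
    modality_condition 0 = within-modal, 1 = cross-modal; sample_modality 0 = tactile, 1 = visual.
    Returns F values (df = 1, n - 1) for both main effects and their interaction."""
    n = di.shape[0]

    def f_from_contrast(c):
        # A 1-df within-subject effect: F equals the squared one-sample t of the contrast
        t = c.mean() / (c.std(ddof=1) / np.sqrt(n))
        return t ** 2

    condition = di[:, 1, :].mean(axis=1) - di[:, 0, :].mean(axis=1)    # cross- minus within-modal
    sample = di[:, :, 0].mean(axis=1) - di[:, :, 1].mean(axis=1)       # tactile minus visual sample
    interaction = (di[:, 1, 0] - di[:, 0, 0]) - (di[:, 1, 1] - di[:, 0, 1])
    return {"modality_condition": f_from_contrast(condition),
            "sample_modality": f_from_contrast(sample),
            "interaction": f_from_contrast(interaction),
            "df": (1, n - 1)}


c = np.array([1.0, 2.0, 3.0, 4.0])     # toy per-participant cross- minus within-modal effect
e = np.array([1.0, -1.0, 2.0, 0.0])    # toy per-participant tactile/visual asymmetry
di = np.zeros((4, 2, 2))
di[:, 1, 0] = c + e
di[:, 1, 1] = c - e
res = rm_anova_2x2(di)
print(res["modality_condition"], res["df"])   # approximately 15.0 (1, 3)
```

The three-way analysis with ROI category extends the same idea: each 1-df effect, including the modality condition × ROI category interaction, is a per-participant difference of differences tested against zero.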

Fig. 3: a Regions-of-interest (ROI), superior parietal lobule (SPL), intraparietal sulcus (IPS), dorsolateral prefrontal cortex (dlPFC), and angular gyrus (ANG), are displayed on inflated lateral (left panel) and posterior-dorsal (right panel) surfaces. lh left hemisphere, S superior, I inferior, A anterior, P posterior, L lateral, M medial. b The mean discrimination indices for braille identity in SPL and IPS during the delay period of each task. Both SPL and IPS showed significant discrimination of individual braille stimuli across all tasks. c The mean discrimination indices for braille identity in dlPFC and ANG during the delay period of each task. In these areas, the decoding of braille identity was possible during cross-modal (TV and VT) tasks but not during within-modal (TT and VV) tasks. d Regions-of-interest (ROI), the left (contralateral) primary somatosensory cortex (S1) and the central early visual cortex (cEVC), are displayed on inflated lateral (upper panel) and posterior-dorsal (lower) surfaces. e The mean discrimination indices for braille identity in S1 and cEVC during the delay period of each task. In S1, significant decoding of individual sample stimuli was observed during the TT and TV tasks, but not during the VV and VT tasks. In cEVC, decoding of braille identity was possible during the VV, TV, and VT tasks, but not during the TT task. *p < 0.05, **p < 0.01 (one-sample t test, right-tailed, FDR-corrected); #p < 0.05, ##p < 0.01 (two-way ANOVA, main effect of modality condition (within-modal versus cross-modal)). A full list of statistical values is provided in Supplementary Table 1. The lower, middle, and upper lines of each box represent the 25%, 50%, and 75% quartiles, respectively. The upper and lower whiskers extend to the highest and lowest data values within 1.5 times the interquartile range from the hinge. Each dot indicates the mean data value for each participant (n = 29).

In parallel with the working memory representations in higher-order cortical areas, modality-dependent representations in sensory areas were also evident. Consistent with the aforementioned searchlight results, significant discrimination of individual braille objects was found in the left primary somatosensory cortex (S1) (Fig. 3d) during working memory tasks where sample stimuli were presented tactually (TT: t(28) = 3.698, p = 0.001, Cohen’s d = 0.687, 95% CI = [0.021, Inf]; TV: t(28) = 4.637, p < 0.001, Cohen’s d = 0.861, 95% CI = [0.033, Inf], right-tailed, FDR-corrected). However, this discrimination was not observed during tasks with visual braille samples (VV: t(28) = 1.418, p = 0.084, Cohen’s d = 0.263, 95% CI = [−0.003, Inf]; VT: t(28) = 1.598, p = 0.061, Cohen’s d = 0.297, 95% CI = [−0.001, Inf], right-tailed, FDR-corrected) (Fig. 3e). On the other hand, in the central early visual cortex (cEVC) (Fig. 3d), significant decoding of individual braille objects was observed during all tasks except for the TT task (all except for TT: t(28) ≥ 2.144, p ≤ 0.020, Cohen’s d ≥ 0.398; TT: t(28) = −0.341, p = 0.632, Cohen’s d = 0.063, 95% CI = [−0.031, Inf], right-tailed, FDR-corrected) (Fig. 3e). Furthermore, a significant interaction effect between the ROI (S1, cEVC) and the sample stimulus modality (tactile, visual) was found for the discrimination indices (ANOVA, F(1,28) = 4.567, p = 0.042).
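The one-sample statistics reported here follow a simple relation: with n participants, Cohen's d = mean / SD and t = d·√n with n − 1 degrees of freedom. For example, for S1 during the TT task, 3.698 / √29 ≈ 0.687, matching the reported d (the text appears to report |d|, e.g. 0.063 for t = −0.341). For a right-tailed test, the 95% CI is one-sided, [mean − t(0.95, n − 1)·SE, ∞), which is why its upper bound is given as Inf. The sketch below, with the hypothetical name `one_sample_stats`, computes t and d from per-participant discrimination indices; p-values and the critical t would require a t-distribution routine and are omitted.

```python
import numpy as np


def one_sample_stats(di):
    """t statistic (df = n - 1) and Cohen's d of per-participant indices tested against 0."""
    di = np.asarray(di, dtype=float)
    n = di.size
    d = di.mean() / di.std(ddof=1)   # one-sample Cohen's d: mean / SD
    t = d * np.sqrt(n)               # identical to mean / (SD / sqrt(n))
    return t, d, n - 1


t, d, df = one_sample_stats([1.0, 2.0, 3.0, 4.0])   # toy data
print(t, d, df)

# Consistency check against a reported value (S1, TT task): t(28) = 3.698 with n = 29
print(round(3.698 / np.sqrt(29), 3))   # 0.687, the reported Cohen's d
```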

Additionally, to examine whether perception of the sample stimulus carries over into the neural representations during the delay period, we conducted a probe decoding analysis for a time window starting at probe onset and extending to subsequent time points. We found no significant discrimination of probe stimuli within this window during either the TT or the VV task in the parietal and prefrontal cortical regions (Supplementary Fig. 3a, b), indicating that perception of the probe stimulus leaves little decodable stimulus information in these regions. By the same logic, perception of the sample stimulus is unlikely to be the main source of the neural representations observed during the subsequent delay period, supporting the interpretation that these representations primarily reflect working memory maintenance.

These results collectively suggest that the superior parietal areas, including the SPL and IPS, serve as common neural substrates where working memory information is consistently retained, regardless of the processed sensory modality. Combined with the decoding results observed in the sensory cortical areas, this retention may contribute to the duplicated maintenance of working memory. Furthermore, our results indicate a distinct type of neural substrate in the prefrontal and inferior parietal areas, including dlPFC and ANG, where working memory information is exclusively maintained during cross-modal working memory tasks, which require the integration of information from different sensory modalities.

Supramodal representation of working memory

We identified two types of neural substrates responsible for working memory maintenance in the higher-order cortex. Next, focusing on the superior parietal cortical regions, SPL and IPS, which are common neural substrates for maintenance across all tasks, we examined whether the neural representations of the maintained information in these regions were shared across different working memory tasks. To directly compare the representations of braille identity between tasks and determine their correspondence, we derived discrimination indices based on the pattern correlations between each pair of working memory tasks (Fig. 4a). If neural representations in the superior parietal cortex are supramodal, the distinct activation patterns for individual braille stimuli should be consistent across tasks regardless of the processed modality, allowing braille identity to be decoded across tasks. Notably, we found significantly positive discrimination indices for individual braille stimuli across the two within-modal tasks (TT and VV) in the SPL and IPS (SPL: t(28) = 2.472, p = 0.010, Cohen’s d = 0.459, 95% CI = [0.020, Inf]; IPS: t(28) = 2.059, p = 0.025, Cohen’s d = 0.382, 95% CI = [0.009, Inf], right-tailed, FDR-corrected), indicating shared representations for individual braille stimuli between the TT and VV tasks (Fig. 4b). Moreover, shared representations in SPL and IPS were also identified among other tasks, including those between the cross-modal tasks (TV and VT), between TV and TT tasks, between TV and VV tasks, between VT and TT tasks, and between VT and VV tasks (all t(28) ≥ 3.176, p ≤ 0.002, Cohen’s d ≥ 0.590, right-tailed, FDR-corrected) (Fig. 4b–d).
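The cross-task index is the same within- minus between-braille contrast, but with the two patterns taken from different tasks rather than from two halves of one task. The sketch below, with the hypothetical name `cross_task_di` and without a Fisher transform, contrasts simulated data in which both tasks share a common braille code with data in which each task has its own unrelated code; only the former yields a positive index, which is the signature of a supramodal representation.

```python
import numpy as np


def cross_task_di(task_a, task_b):
    """task_a, task_b: (n_stimuli, n_voxels) delay-period patterns from two different tasks
    (e.g. TT and VV), rows aligned by braille identity."""
    n = task_a.shape[0]
    r = np.corrcoef(task_a, task_b)[:n, n:]       # braille x braille cross-task correlations
    within = np.mean(np.diag(r))                  # same braille, different task
    between = np.mean(r[~np.eye(n, dtype=bool)]) # different braille, different task
    return within - between


rng = np.random.default_rng(1)
shared = rng.normal(size=(5, 150))                # hypothetical supramodal code
tt = shared + rng.normal(size=shared.shape)       # tactile-task patterns
vv = shared + rng.normal(size=shared.shape)       # visual-task patterns
print(cross_task_di(tt, vv))                      # positive: identity generalizes across tasks

tt_only = rng.normal(size=(5, 150))               # hypothetical modality-specific codes
vv_only = rng.normal(size=(5, 150))
print(cross_task_di(tt_only, vv_only))            # near zero: no shared code
```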

Fig. 4: a To directly compare the representations of braille identity between different tasks and ascertain their correspondence, discrimination indices were derived based on within- and between-braille correlations for each pair of working memory tasks. b–d Discrimination indices for braille identity for each pair of the tasks in SPL and IPS. Significantly positive discrimination indices were found between the two within-modal tasks (TT and VV), as well as between the two cross-modal tasks (TV and VT) (b). Furthermore, positive discrimination indices were observed between task TV and each within-modal task (TV and TT; TV and VV) (c), and between task VT and each within-modal task (VT and TT; VT and VV) (d). e Cross-decoding of braille identity using multi-class classification analysis. An SVM classifier was trained on neural response patterns from one task and tested on patterns from the other task, and vice versa. The classification accuracies in both directions were then averaged. f–h Classification performance between each pair of the tasks in SPL and IPS. The dotted lines indicate the chance level. Consistent with the discrimination index results, significant cross-decoding was observed between the two within-modal tasks (TT and VV), as well as between the two cross-modal tasks (TV and VT) (f). Furthermore, significant cross-decoding was observed between task TV and each within-modal task (TV and TT; TV and VV) (g), and between task VT and each within-modal task (VT and TT; VT and VV) (h). Asterisks above each box, *p < 0.05, **p < 0.01 (one-sample t test, right-tailed, FDR-corrected). Asterisks between two boxes, **p < 0.01 (paired t test, two-tailed). A full list of statistical values is provided in Supplementary Table 2. The lower, middle, and upper lines of each box represent the 25%, 50%, and 75% quartiles, respectively. The upper and lower whiskers extend to the highest and lowest data values within 1.5 times the interquartile range from the hinge. 
Each dot indicates the mean data value for each participant (b–d: n = 29; f–h: n = 28).

To further verify these shared representations, we also conducted a multi-class classification analysis. For each pair of tasks, a support vector machine (SVM) classifier was trained on neural response patterns from one task and tested on patterns from the other task, and vice versa (Fig. 4e). Consistent with the discrimination index results, significant cross-decoding was observed between TT and VV tasks, TV and VT tasks, TV and TT tasks, TV and VV tasks, VT and TT tasks, and VT and VV tasks in SPL and IPS (all t(27) ≥ 2.404, p ≤ 0.012, Cohen’s d ≥ 0.454, right-tailed, FDR-corrected, relative to the 20% chance level) (Fig. 4f–h). Although the discrimination index between the cross-modal tasks (TV and VT) was higher than that between the within-modal tasks in the pattern similarity analysis (SPL: t(28) = −3.180, p = 0.004, Cohen’s d = 0.591, 95% CI = [−0.194, −0.042]; IPS: t(28) = −3.793, p = 0.001, Cohen’s d = 0.704, 95% CI = [−0.176, −0.053], FDR-corrected) (Fig. 4b), this difference was not observed in the multi-class classification analysis (SPL: t(27) = −0.794, p = 0.434, Cohen’s d = 0.150, 95% CI = [−3.493, 1.544]; IPS: t(27) = −1.468, p = 0.154, Cohen’s d = 0.277, 95% CI = [−4.253, 0.706]) (Fig. 4f). Moreover, across all other task comparisons, the level of cross-decoding was comparable (all |t(27)| ≤ 1.182, p ≥ 0.247, Cohen’s d ≤ 0.223, FDR-corrected) (Fig. 4c, d, g, h).
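The bidirectional cross-decoding scheme (train on one task, test on the other, then swap and average) can be sketched as follows. To keep the example dependency-free it swaps in a correlation-based nearest-centroid classifier instead of the SVM used in the study; the function names, trial counts, and simulated data are all hypothetical. With five braille patterns, chance accuracy is 1/5 = 20%, which is the reference value against which per-participant accuracies are tested.

```python
import numpy as np


def zscore_rows(X):
    # Standardize each pattern across voxels so a dot product is proportional to Pearson r
    return (X - X.mean(axis=1, keepdims=True)) / X.std(axis=1, keepdims=True)


def train_test_accuracy(X_train, y_train, X_test, y_test, classes):
    # Correlation-based nearest-centroid classifier (stand-in for the SVM in the text)
    centroids = np.stack([X_train[y_train == c].mean(axis=0) for c in classes])
    scores = zscore_rows(X_test) @ zscore_rows(centroids).T
    predicted = classes[np.argmax(scores, axis=1)]
    return np.mean(predicted == y_test)


def cross_decode(X_a, y_a, X_b, y_b, classes):
    """Train on task A / test on task B and vice versa, then average the two accuracies."""
    return 0.5 * (train_test_accuracy(X_a, y_a, X_b, y_b, classes)
                  + train_test_accuracy(X_b, y_b, X_a, y_a, classes))


rng = np.random.default_rng(2)
classes = np.arange(5)                                 # 5 braille patterns -> chance = 0.2
prototypes = rng.normal(size=(5, 150))                 # hypothetical shared code
y = np.repeat(classes, 8)                              # 8 simulated trials per pattern
X_a = prototypes[y] + rng.normal(size=(y.size, 150))   # e.g. TT trials
X_b = prototypes[y] + rng.normal(size=(y.size, 150))   # e.g. VV trials
acc = cross_decode(X_a, y, X_b, y, classes)
print(acc)                                             # well above the 0.2 chance level
```

With scikit-learn available, `sklearn.svm.SVC` could replace `train_test_accuracy` while keeping the same train/test swap and averaging.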

Additionally, unlike the supramodal representations observed in the SPL and IPS, we found no significant cross-discrimination of individual braille stimuli between the TT and VV tasks within the sensory cortical areas (S1: t(28) = −0.489, p = 0.686, Cohen’s d = 0.091, 95% CI = [−0.035, Inf]; cEVC: t(28) = −0.766, p = 0.755, Cohen’s d = 0.142, 95% CI = [−0.051, Inf], right-tailed, FDR-corrected) (Supplementary Fig. 4), indicating modality-specific rather than supramodal representations in these areas.

Collectively, these findings suggest the presence of shared representations for braille pattern information in the superior parietal cortex, irrespective of the sensory modality through which it is processed, indicating the supramodal nature of high-level working memory representation.

Additionally, because our searchlight analysis detected significant braille discrimination across all four tasks in the left precentral cortex (Fig. 2d), we also defined the left premotor cortex (left Brodmann area 6, BA6) and performed an ROI analysis to assess the discrimination of individual braille objects in this area. This region showed a different pattern from the superior parietal regions. Braille identity was discriminable in all working memory tasks (TT: t(28) = 3.474, p = 0.001, Cohen’s d = 0.645, 95% CI = [0.016, Inf]; VV: t(28) = 2.085, p = 0.023, Cohen’s d = 0.387, 95% CI = [0.005, Inf]; TV: t(28) = 7.097, p < 0.001, Cohen’s d = 1.318, 95% CI = [0.053, Inf]; VT: t(28) = 4.885, p < 0.001, Cohen’s d = 0.907, 95% CI = [0.024, Inf], right-tailed, FDR-corrected) (Supplementary Fig. 5a). However, no correspondence was found between the tactile and visual working memory tasks (TT and VV) (t(28) = 0.570, p = 0.287, Cohen’s d = 0.106, 95% CI = [−0.017, Inf], right-tailed, FDR-corrected) (Supplementary Fig. 5b). These results indicate that working memory maintenance in a region across all tasks does not necessarily imply a representation shared between the tasks.
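The effect sizes reported with these tests are consistent with Cohen's d = t/√n. For example, 3.474/√29 ≈ 0.645 for the TT task above, and |−3.180|/√29 ≈ 0.591 for the paired SPL comparison reported earlier. The sketch below assumes that convention. A paired t test is computed as a one-sample test on within-participant differences, and d appears to be reported as an absolute value. The right-tailed p-value would come from the t distribution with n − 1 degrees of freedom (for example, `scipy.stats.t.sf(t, df)`). It is omitted here to keep the sketch within the standard library.

```python
import math
from statistics import mean, stdev


def one_sample_t(values, mu=0.0):
    """Return (t, df, cohens_d) for a one-sample t test of values against mu."""
    n = len(values)
    diff_mean = mean(values) - mu
    sd = stdev(values)                   # sample SD with an n - 1 denominator
    t = diff_mean / (sd / math.sqrt(n))  # mean difference over its standard error
    d = diff_mean / sd                   # algebraically equal to t / sqrt(n)
    return t, n - 1, d


def paired_t(x, y):
    """Paired t test: a one-sample t test on the per-participant differences."""
    return one_sample_t([a - b for a, b in zip(x, y)])
```

For a decoding accuracy, `mu` would be the chance level (for example, 20 for five classes in percent). For a discrimination index, `mu` would be 0.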

Cross-modal representation of working memory

The prefrontal and inferior parietal cortical areas were engaged in the maintenance of braille pattern information during the cross-modal tasks, contrasting with their non-involvement in within-modal tasks (Figs. 2 and 3). These results suggest the presence of cross-modal working memory-specific representations in these areas, indicating a distinct form of high-level representation separate from supramodal representation.

If these cross-modal specific representations reflect the integration or association of tactile and visual stimulus information, the representations for each braille item may be common between the TV and VT tasks, irrespective of the direction of modality transition. Conversely, shared representations are unlikely to emerge between within-modal (TT and VV) tasks, where the association of tactile and visual modality features is unnecessary. To investigate these possibilities, we directly compared the neural representations of individual braille stimuli between the tasks. Consistent with the idea of tactile-visual association, we found significant cross-decoding between the TV and VT tasks in dlPFC and ANG, based on both the pattern similarity analysis (dlPFC: t(28) = 4.029, p < 0.001, Cohen’s d = 0.748, 95% CI = [0.039, Inf]; ANG: t(28) = 4.825, p < 0.001, Cohen’s d = 0.896, 95% CI = [0.070, Inf], right-tailed, FDR-corrected) (Fig. 5a) and classification analysis (dlPFC: t(27) = 2.677, p = 0.006, Cohen’s d = 0.506, 95% CI = [20.899, Inf]; ANG: t(27) = 3.817, p < 0.001, Cohen’s d = 0.721, 95% CI = [21.949, Inf], right-tailed, FDR-corrected) (Fig. 5b). However, there was no correspondence between the TT and VV tasks in either pattern similarity analysis (dlPFC: t(28) = −0.684, p = 0.750, Cohen’s d = 0.127, 95% CI = [−0.036, Inf]; ANG: t(28) = −1.161, p = 0.872, Cohen’s d = 0.216, 95% CI = [−0.047, Inf], right-tailed, FDR-corrected) (Fig. 5a) or classification analysis (dlPFC: t(27) = 0.492, p = 0.313, Cohen’s d = 0.093, 95% CI = [18.805, Inf]; ANG: t(27) = −0.965, p = 0.829, Cohen’s d = 0.182, 95% CI = [17.880, Inf], right-tailed, FDR-corrected) in these areas (Fig. 5b). 
Furthermore, significant differences were found between cross-modal and within-modal task comparisons in dlPFC (t(28) = −3.433, p = 0.002, Cohen’s d = 0.638, 95% CI = [−0.124, −0.031]) and ANG (t(28) = −4.104, p < 0.001, Cohen’s d = 0.762, 95% CI = [−0.191, −0.064]) based on the pattern similarity analysis, and in ANG (t(27) = −3.501, p = 0.002, Cohen’s d = 0.662, 95% CI = [−6.798, −1.774]) based on the classification analysis (Fig. 5). These results indicate common representations between the cross-modal tasks, regardless of the direction of modality transition, but not between the within-modal tasks. Collectively, these findings suggest that the prefrontal and inferior parietal cortex hold cross-modal representations that depend on the behavioral goal of associating features from two different sensory modalities.
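Many of the tests above are FDR-corrected. The text does not name the procedure here. This sketch assumes the Benjamini-Hochberg step-up procedure, a common choice for FDR correction. It returns adjusted p-values in the original order, which can then be compared against q = 0.05:

```python
def bh_adjust(p_values):
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])  # indices by ascending p
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from the largest p-value down to the smallest
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)  # enforce monotonicity
        adjusted[i] = running_min
    return adjusted
```

For example, `bh_adjust([0.01, 0.04, 0.03, 0.20])` gives approximately `[0.04, 0.0533, 0.0533, 0.20]`. Here only the first test would survive at q = 0.05.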

a Discrimination indices for braille identity between different tasks in dlPFC and ANG. Significantly positive discrimination indices were found between the two cross-modal tasks (TV and VT), but not between the two within-modal tasks (TT and VV) in these areas. b Classification performance between different tasks in dlPFC and ANG. The dotted lines indicate the chance level. Significant cross-decoding was observed between the two cross-modal tasks (TV and VT) but not between the two within-modal tasks (TT and VV) in these areas. Asterisks above each box, **p < 0.01 (one-sample t test, right-tailed, FDR-corrected). Asterisks between two boxes, **p < 0.01 (paired t test, two-tailed). A full list of statistical values is provided in Supplementary Table 3. The lower, middle, and upper lines of each box represent the 25%, 50%, and 75% quartiles, respectively. The upper and lower whiskers extend to the highest and lowest data values within 1.5 times the interquartile range from the hinge. Each dot indicates the mean data value for each participant (a: n = 29; b: n = 28).

To compare supramodal representations with cross-modal representations retained in different regions of the parietal and the prefrontal cortex, we examined the representational relationships between these regions. We calculated the correlations between the representational dissimilarity matrices (RDMs) of two ROIs, based on comparisons of two within-modal tasks and two cross-modal tasks (Fig. 6a). Consistent with the characteristics of supramodal representation, the degree of representational similarity between SPL and IPS was significantly greater than that observed between other ROI pairs for both within-modal (all t(28) ≥ 5.223, p < 0.001, Cohen’s d ≥ 0.970, FDR-corrected) and cross-modal (all t(28) ≥ 3.133, p ≤ 0.004, Cohen’s d ≥ 0.582, FDR-corrected) task comparisons (Fig. 6b, c). These results indicate that the regions exhibiting supramodal representations (SPL and IPS) share highly similar representational structures. In contrast, representational structures are less similar between supramodal and cross-modal regions, and between the two cross-modal regions (dlPFC and ANG).

a Representational similarity between two ROIs was measured as the correlation between their respective representational dissimilarity matrices (RDMs), based on comparisons of two within-modal tasks and two cross-modal tasks. b Representational similarities between each pair of ROIs (SPL, IPS, dlPFC, and ANG) based on the RDMs derived from the within-modal tasks (TT and VV). The level of representational similarity between SPL and IPS was higher than that of the other ROI pairs (paired t test, FDR-corrected). c Representational similarities between each pair of ROIs (SPL, IPS, dlPFC, and ANG) based on the RDMs derived from the cross-modal tasks (TV and VT). The level of representational similarity between SPL and IPS was higher than that of the other ROI pairs (paired t test, FDR-corrected). A full list of statistical values is provided in Supplementary Table 4. The colored dashed lines represent the mean level of representational similarity between SPL and IPS. The lower, middle, and upper lines of each box represent the 25%, 50%, and 75% quartiles, respectively. The upper and lower whiskers extend to the highest and lowest data values within 1.5 times the interquartile range from the hinge. Each dot indicates the mean data value for each participant (n = 29).
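The ROI-to-ROI comparison can be sketched under stated assumptions. Each cross-task RDM holds 1 − Pearson r between braille i in one task and braille j in the other task. This is a 5 × 5 matrix that is not necessarily symmetric. Two ROIs' RDMs are compared with a Pearson correlation over all 25 cells. The dissimilarity measure, the cells included, and the correlation type are assumptions, and the helper names are hypothetical.

```python
import math
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation between two equal-length vectors."""
    mx, my = mean(x), mean(y)
    dx = [a - mx for a in x]
    dy = [b - my for b in y]
    num = sum(a * b for a, b in zip(dx, dy))
    return num / math.sqrt(sum(a * a for a in dx) * sum(b * b for b in dy))


def cross_task_rdm(patterns_a, patterns_b):
    """Dissimilarity (1 - r) between braille i in task A and braille j in task B."""
    return [[1 - pearson_r(pa, pb) for pb in patterns_b] for pa in patterns_a]


def rdm_similarity(rdm_roi1, rdm_roi2):
    """Correlate two ROIs' RDMs over all cells (assumed: Pearson, full matrix)."""
    flat1 = [v for row in rdm_roi1 for v in row]
    flat2 = [v for row in rdm_roi2 for v in row]
    return pearson_r(flat1, flat2)
```

Because the RDMs are compared rather than the raw patterns, two ROIs can have high representational similarity even if their voxel counts or response amplitudes differ.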

Taken together, our results demonstrate the presence of two distinct types of high-level working memory representations in different cortical regions. The first type, supramodal representation, is consistently observed in the superior parietal cortex across all working memory tasks, irrespective of the processed sensory modality. The second type, cross-modal representation, is specifically engaged during cross-modal working memory tasks, reflects an associative property, and is found predominantly in the prefrontal and inferior parietal cortical regions. These findings suggest a framework for working memory maintenance that incorporates distinct supramodal and cross-modal high-level representations, together with sensory representations, to efficiently guide ongoing behaviors.

Discussion

Our research reveals supramodal and cross-modal forms of working memory representations within higher-order cortical regions, which may operate alongside sensory representations to guide our behaviors. By employing tactile, visual, and cross-modal delayed-match-to-sample tasks involving braille stimuli, we identified the superior parietal cortex as a common neural substrate for retaining braille stimulus information across all tasks, regardless of the sensory modalities involved. Notably, neural representations for tactile and visual braille stimuli were shared in the superior parietal cortex, indicating the supramodal nature of working memory representation. While the presence of sensory representations depended on the processed modalities, these supramodal representations were consistently observed regardless of the modalities involved. Unlike the supramodal maintenance observed in the superior parietal cortex, a distinct type of high-level working memory representation was found in the prefrontal cortex and inferior parietal cortex. Braille identity could be decoded from the neural response patterns of these regions only during cross-modal working memory tasks, not during within-modal tasks. This suggests that working memory maintenance in these areas depends on the need to associate features from two different sensory modalities. Furthermore, representations were shared between the cross-modal tasks regardless of the direction of modality transition, reflecting an associative property across sensory modalities. Collectively, our findings suggest that two distinct types of high-level representation, supramodal and cross-modal, exist in different higher-order cortical areas during working memory, alongside sensory representations. These findings provide a conceptual framework for understanding working memory maintenance, which may guide our ongoing behaviors in diverse sensory environments.

While previous studies have mostly focused on working memory maintenance involving visual stimuli, working memory in other sensory modalities has received much less attention. By employing a newly developed tactile stimulus delivery system during fMRI scanning36, we characterized working memory representations for tactile stimuli and compared them with those for visual stimuli. This comparison across sensory modalities allowed us to address two crucial aspects of working memory representation in the brain. First, by examining whether common representations exist irrespective of input sensory modality, we could determine whether high-level supramodal representations are present in working memory. Second, by testing whether some representations emerge specifically when information from two sensory modalities must be linked, we could assess the potential for cross-modal-specific representations in working memory.

We identified common neural substrates for maintaining braille identity information in the superior parietal cortex, regardless of the sensory modality of the sample or probe stimuli (Figs. 2 and 3b and Supplementary Fig. 2). Moreover, the neural response patterns of the superior parietal cortex allowed cross-decoding across every pair of working memory tasks (Fig. 4). These findings indicate the presence of a high-level supramodal representation of working memory in this region. This supramodal representation likely lacks detailed sensory information and may instead convey common abstract information12,13,15. These findings align with the early notion that retained working memory is amodal, originating from modality-specific sensory registers13. They are also consistent with recent views on abstract representation in higher-order cortical areas12. However, whereas the prefrontal cortex has traditionally been considered the primary higher-order region for working memory10,11,12,14, our results identify the superior parietal cortex as a neural substrate for supramodal representation. The presence of this supramodal representation may help resolve the problem of sensory system occupation outlined in the introduction, that is, interference when incoming stimuli engage the same sensory system that holds the retained information. Consistent with previous research17,18,19,21,23,37,38,39, we also observed the involvement of sensory cortical areas in working memory maintenance, depending on the processed sensory modalities (Fig. 3e and Supplementary Fig. 2a). The coexistence of supramodal representations and maintained sensory detail may support behavior by allowing incoming information to be compared seamlessly with the information retained in working memory.

We also identified a distinct type of high-level representation specific to cross-modal working memory tasks. Specifically, braille identity could be decoded from the response patterns of the prefrontal cortex and inferior parietal cortex only when tactile information had to be linked to its visual counterpart. This was not observed during within-modal (TT or VV) working memory tasks (Figs. 2 and 3c and Supplementary Fig. 2). Moreover, individual braille identities could be cross-decoded between the TV and VT tasks from the response patterns in the prefrontal cortex and inferior parietal cortex (Fig. 5). This indicates a representation of each braille shared between those tasks, regardless of the direction of modality transition. Collectively, these results suggest the existence of tactile-visual cross-modal representations in the prefrontal cortex and inferior parietal cortex. These representations depend on the task demand, or behavioral goal, of determining whether the braille identity presented visually during the probe phase matches the identity presented tactually during the sample phase, or vice versa. These findings align with a previous study suggesting that the involvement of the prefrontal cortex in working memory maintenance flexibly depends on behavioral goals29. By maintaining tactile-visual associative information for the same braille identity, possibly in collaboration with motor areas, this cross-modal representation may allow complex real-world information to be used accurately and rapidly to guide moment-to-moment behaviors. Further studies are needed to illuminate these proposed mechanisms.

While the present study suggests that prefrontal representations are specific to cross-modal tasks, some previous studies support the supramodal nature of frontal representations. In particular, an fMRI study showed that frontal regions such as the inferior frontal gyrus (IFG) and pre-supplementary motor area (pre-SMA) are commonly involved in maintaining tactile (vibration) and visual (flicker) stimulation frequencies during working memory40. Moreover, single-cell recordings in nonhuman primates revealed common parametric coding of tactile and auditory stimuli in pre-SMA41. What might explain these discrepancies? One possibility is that different subregions of the frontal lobe play distinct roles in working memory maintenance. Notably, the frontal regions identified in these prior studies appear distinct from the dlPFC, which is primarily associated with working memory and held cross-modal representations in our study. Furthermore, our searchlight analysis for significant discrimination of braille stimuli (Fig. 2 and Supplementary Fig. 2) also revealed small clusters in the frontal lobe, possibly within the IFG or pre-SMA. These observations raise the possibility that the inferior and/or posterior parts of the frontal cortex support supramodal representations of working memory, although these clusters were much smaller than the dlPFC. Further research is needed to clarify whether different frontal subregions have distinct roles in working memory maintenance.

The parietal cortex has been considered a multisensory region42,43,44,45. To investigate the mechanisms of multisensory integration, prior studies have primarily used tasks that require the integration of multiple sensory modalities, including tactile and visual information. Lesion studies have shown that damage to the parietal cortex impairs visual-tactile integration44,46, while neuroimaging and electrophysiological studies have demonstrated increased neural activation in this region during multisensory integration42,43,47,48. These findings align with our results indicating that the parietal cortex is involved in cross-modal tasks (Figs. 4 and 5). Notably, however, our findings further suggest that the superior and inferior parietal cortices support different types of working memory representations, indicating that these regions process distinct types of information. While the inferior parietal cortex retained working memory specifically during cross-modal tasks (Fig. 3c), the superior parietal cortex maintained working memory representations across both within-modal (tactile or visual) and cross-modal tasks (Fig. 3b). Furthermore, in the superior parietal cortex, working memory representations of the same braille identity were shared across all tasks, regardless of the sensory modality involved (Fig. 4). A recent study showed that the SPL is commonly involved in both tactile-motor and visuo-motor planning. This aligns with our finding that the SPL processes both tactile and visual stimulus information even in the absence of sensory input49.

The two types of high-level working memory representations suggested in this study may contribute to tactile-visual integration through the following two processes. First, both tactile and visual signals may activate common supramodal representations in the superior parietal cortex. The pattern similarity analysis showed a more prominent presence of these supramodal representations during cross-modal than within-modal tasks (Fig. 4b), suggesting that they are more actively engaged in cross-modal tasks. These common representations in the superior parietal cortex may serve as a bridge between different sensory modalities. However, because this high-level common representation likely reflects abstract rather than detailed sensory features, it may also need to be linked with detailed sensory representations to ensure accurate behavioral responses during the probe phase of the tasks. Second, cross-modal representations in the inferior parietal and prefrontal cortex may provide an alternative mechanism for linking the two sensory modalities, potentially compensating for limitations of supramodal representations. Tactile-visual integration during cross-modal tasks may thus be supported by a dual mechanism based on both types of high-level representations. These proposed contributions of supramodal and cross-modal representations to working memory need to be investigated further in future work.

One might argue that the observed supramodal representation is not truly supramodal but instead reflects the influence of visual imagery, even during the tactile working memory task50. However, previous studies have indicated that visual imagery primarily engages visual cortical representations51,52,53, whereas we found minimal involvement of visual areas during the TT task (Figs. 2b and 3e and Supplementary Fig. 2a). In cross-modal tasks, by contrast, working memory maintenance was observed in both visual and tactile areas (Figs. 2c and 3e), and cross-task decoding in these areas (Supplementary Fig. 4) supports the possibility of feedback from higher-order cortical regions. Hence, although we cannot entirely dismiss the possibility of visual imagery during the tactile working memory task, its influence on the shared representations appears to be negligible. Additionally, perception of the sample stimulus could influence neural representations during the delay period. This possibility is difficult to rule out completely. However, no significant discrimination of probe stimuli was found in the parietal and prefrontal cortical regions during the time period following the probe in the TT and VV tasks (Supplementary Fig. 3). This suggests that sample perception likely has minimal impact on the subsequent delay period. Finally, differences in task difficulty could produce differences between supramodal and cross-modal representations. However, participants’ performance (accuracy) was comparable across tasks, with the exception of the VV task (Supplementary Fig. 1b). This result supports the notion that the difference between supramodal and cross-modal representations was not primarily driven by differences in difficulty.

While we suggest the existence of a supramodal representation common across all working memory tasks, the limited spatial resolution of fMRI leaves unclear whether the same set of cells in the superior parietal cortex encodes working memory information across tasks. Additionally, the limited temporal resolution of fMRI makes it difficult to understand the neural mechanisms underlying the rapid transfer of information between the sensory cortex and higher-order cortical regions. Future single-cell recordings in nonhuman primates or humans may clarify the detailed mechanisms. These include the involvement of cell populations depending on the processed modality within each brain region (e.g., modality-dependent cells in the sensory cortex of each modality; bimodal cells54 in higher-order cortex) and the causal relationships between these regions.

In our results, the superior parietal cortex showed both significant working memory maintenance across the tasks (Figs. 2 and 3b and Supplementary Fig. 2) and shared representations regardless of the processed sensory modality (Fig. 4). In the premotor cortex, by contrast, we observed significant maintenance across the tasks but no shared representation between the TT and VV tasks (Fig. 2d and Supplementary Fig. 5). This result indicates that the common involvement of a neural substrate in working memory maintenance does not guarantee a shared representation. The premotor cortex has been suggested to be involved in visually guided motor planning, such as shaping hand posture to reach and grasp objects55,56,57,58, and more recently in tactually guided motor planning49. Taken together with our results, this suggests that the premotor cortex may support sensory-motor interactions, such as visually (or tactually) guided hand motion during the probe phase of our tasks.

In conclusion, our findings suggest the existence of two distinct high-level representations of working memory: supramodal representation and cross-modal representation. The orchestration of these representations may efficiently guide the achievement of behavioral goals. The supramodal representations in the superior parietal cortex, reflecting abstract information, and the cross-modal representations, which bridge specific sensory features, may be synergistically utilized with the sensory representations of working memory.

Methods

Participants

Thirty-one healthy participants (mean age 23.61 ± 0.73 years, range 18–34; 18 females) with no known cognitive deficits or neurological diseases took part in the experiment. All participants were right-handed and had normal or corrected-to-normal vision. Two participants were excluded from the data analysis due to low performance (accuracy below 0.7 in any of the working memory tasks). This left 29 participants (mean age 23.24 ± 0.68 years, range 18–34; 17 females) in the reported results. All participants provided written informed consent for the procedure. The experimental procedure was approved by the Institutional Review Boards of Seoul National University and Korea Advanced Institute of Science and Technology.

Stimuli

We used five different braille patterns as tactile and visual stimuli (Fig. 1b). Each braille pattern had a unique shape: no pattern could be matched to another by rotation, parallel translation, or symmetric transposition (mirror reflection). Every braille pattern consisted of four dots arranged within a 6-dot (3-by-2) cell. The tactile braille patterns were engraved on the top of polyoxymethylene blocks. Each tactile block measured 18 × 18 × 12 mm. Each dot was 2 mm in diameter and 1 mm in height, with a minimum spacing of 0.6 mm between dots. The tactile stimuli were presented automatically via a newly developed pneumatic tactile stimulus delivery system (pTDS)36. For the visual stimuli, we used images of the same braille pattern set, presented at a size of 7 × 7 degrees of visual angle.
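The uniqueness constraint on the stimulus set can be checked mechanically. The sketch below makes three assumptions: that “rotation” means rotation by multiples of 90°, that “symmetric transposition” means mirror reflection, and that “parallel translation” means shifting the dot set within the cell. It reduces each four-dot pattern to a canonical form and enumerates all C(6, 4) = 15 four-dot patterns in a 3-by-2 cell. Under these assumptions, the 15 patterns fall into six shape classes, so a set of five mutually distinct patterns is possible. The five patterns actually used are shown in Fig. 1b and are not reproduced here. Coordinates are illustrative (row, column) indices.

```python
from itertools import combinations

CELL = [(r, c) for r in range(3) for c in range(2)]  # 3-by-2 braille cell as (row, col)


def normalize(points):
    """Translate a dot set so its bounding box starts at (0, 0)."""
    r0 = min(r for r, _ in points)
    c0 = min(c for _, c in points)
    return tuple(sorted((r - r0, c - c0) for r, c in points))


def canonical(points):
    """Smallest normalized form over the 90-degree rotations and their mirror images."""
    forms = []
    pts = list(points)
    for _ in range(4):
        pts = [(c, -r) for r, c in pts]                       # rotate by 90 degrees
        forms.append(normalize(pts))
        forms.append(normalize([(r, -c) for r, c in pts]))    # mirror image of this rotation
    return min(forms)


def mutually_distinct(patterns):
    """True if no two patterns share a canonical form."""
    keys = [canonical(p) for p in patterns]
    return len(set(keys)) == len(keys)


all_four_dot = list(combinations(CELL, 4))
shape_classes = {canonical(p) for p in all_four_dot}
```

Comparing canonical forms, rather than testing every pair of patterns under every transformation, makes the check a simple set-size comparison.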

Experimental procedure

In the experiment, participants performed four working memory tasks in separate runs inside the fMRI scanner, using the tactile stimulus delivery system, in a session lasting about 2 h. The four tasks comprised two within-modal tasks (tactile-to-tactile task, TT, and visual-to-visual task, VV) and two cross-modal working memory tasks (tactile-to-visual task, TV, and visual-to-tactile task, VT), all based on the delayed-match-to-sample task paradigm (Fig. 1a).

Prior to the working memory tasks, participants were familiarized with the pTDS and the tactile braille stimuli. This was done to reduce the difference in recognition difficulty between tactile and visual stimuli and to obtain stable patterns. During familiarization, participants were asked to touch and recognize each braille stimulus with their right index finger. In each trial, a yellow fixation cross indicated the onset of the trial. When the fixation cross turned red (“Finger-in” cue) 1.5 s after trial onset, participants inserted their right index finger into the entrance hole to touch a braille stimulus. Finger movement was detected by a light sensor. When the fixation cross turned blue (“Finger-out” cue), 1.5 s after the sensor detected the touch, participants withdrew their finger from the hole. The maximum allowed interval was 1.5 s both between the “Finger-in” cue and sensor detection of the finger and between the “Finger-out” cue and sensor detection of withdrawal. If finger insertion was not detected within this interval, the task restarted with the next trial. If finger withdrawal was not detected within this interval, the blue fixation cross (“Finger-out” cue) was replaced by a blue square to warn the participant to remove their finger. The familiarization task consisted of 2 runs of 20 trials. It also included two additional delayed-match-to-sample trials (one per run), identical to those of the tactile-to-tactile (TT) working memory task described in detail below (Supplementary Fig. 1a). The order of the stimuli was randomized, and the number of exposures to each stimulus was counterbalanced across runs.
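The cue-and-sensor rules for a single touch can be summarized as a small decision function. This is a hypothetical sketch of the timing rules described above, not the pTDS control software. The function names are invented, and what happens after the warning square appears is not specified in the text. The same rule applies to the probe phase of the working memory tasks, which uses a longer insertion window (3.5 s); here that window is passed as a parameter.

```python
MAX_INSERT_S = 1.5    # Finger-in cue -> light-sensor detection of the finger
CONTACT_S = 1.5       # touch duration before the Finger-out cue appears
MAX_WITHDRAW_S = 1.5  # Finger-out cue -> light-sensor detection of withdrawal


def touch_outcome(insert_latency, withdraw_latency,
                  max_insert=MAX_INSERT_S, max_withdraw=MAX_WITHDRAW_S):
    """Classify one touch from sensor latencies in seconds (None = never detected)."""
    if insert_latency is None or insert_latency > max_insert:
        return "restart"  # no insertion in time: the task moves on to the next trial
    if withdraw_latency is None or withdraw_latency > max_withdraw:
        return "warning"  # the blue Finger-out cross is replaced by a blue square
    return "ok"


def finger_out_time(trial_onset, insert_latency, cue_delay=1.5, contact=CONTACT_S):
    """Finger-out cue time: Finger-in cue 1.5 s after onset, then 1.5 s after detection."""
    return trial_onset + cue_delay + insert_latency + contact
```

Keeping the windows as parameters lets the same function describe the familiarization trials, the sample phase, and the probe phase.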

Participants then performed within-modal working memory tasks (Fig. 1a, Supplementary Fig. 1a). Each trial began with a change in the fixation cross color from white to yellow. In the tactile-to-tactile (TT) task, 1.5 s after trial onset, participants touched the braille sample as guided by the “Finger-in” cue. After 1.5 s, they withdrew their finger following the “Finger-out” cue, as in the familiarization task. This was followed by a 7-s delay period, during which participants maintained the braille sample information while the fixation cross turned green. During the subsequent probe phase, participants touched the braille probe, again guided by the “Finger-in” and “Finger-out” cues. The maximum allowed duration between the “Finger-in” cue and finger detection by the sensor was 1.5 s for the sample phase and 3.5 s for the probe phase. The maximum duration between the “Finger-out” cue and finger withdrawal detection was 1.5 s. During the subsequent response phase, participants were presented with a question mark. They were asked to indicate within 3 s whether the probe matched the sample by pressing the “yes (matched)” or “no (non-matched)” button with their left hand. In the visual-to-visual (VV) task, participants maintained each visually presented braille sample during a 7-s delay and then determined whether the visual braille probe matched the sample. To account for the tactile stimulus onset delay in the TT task, the onset of the braille sample in each VV task trial was delayed by 0.5 s. The braille sample was presented for 1.5 s, followed by a 7-s delay period, a 1.5-s probe phase, and the response phase. The response phase was the same as that of the TT task. Each task consisted of 4 runs of 10 trials. The TT and VV tasks were presented alternately, with the task type of the first run counterbalanced across participants.
Half of the trials were “non-match” trials, in which the sample and probe were different braille shapes. The other half were “match” trials, in which the sample and probe were the same shape. In non-match trials, the probe was one of the other four braille shapes, and each braille pattern was presented as a non-matching probe once in each run. The inter-trial interval (ITI) varied across trials from 6.5 s to 10.5 s, with an average of 8.5 s.
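The counterbalancing constraints for one 10-trial run can be sketched as a trial-list generator. Two details are assumptions not stated in the text. First, each braille pattern is assumed to serve as the sample in exactly one match trial per run. Second, ITIs are assumed to be drawn without replacement from a fixed set of 6.5, 7.5, 8.5, 9.5, and 10.5 s, each used twice, which gives the reported 8.5 s mean. Non-match samples are drawn as a derangement of the probes, so every pattern is the non-matching probe exactly once and never its own sample.

```python
import random

BRAILLE = [0, 1, 2, 3, 4]  # hypothetical indices for the five braille patterns


def make_run(rng):
    """One 10-trial run: 5 match and 5 non-match trials (hypothetical scheme)."""
    match = [(b, b) for b in BRAILLE]  # sample equals probe
    # Non-match trials: every pattern is the probe once, and the samples form a
    # derangement of the probes (rejection sampling until no sample equals its probe).
    while True:
        samples = BRAILLE[:]
        rng.shuffle(samples)
        if all(s != p for s, p in zip(samples, BRAILLE)):
            break
    nonmatch = list(zip(samples, BRAILLE))
    trials = match + nonmatch
    rng.shuffle(trials)
    itis = [6.5, 7.5, 8.5, 9.5, 10.5] * 2  # assumed ITI set; mean is 8.5 s
    rng.shuffle(itis)
    return [{"sample": s, "probe": p, "match": s == p, "iti": iti}
            for (s, p), iti in zip(trials, itis)]
```

Passing a seeded `random.Random` instance makes each participant's trial order reproducible. For example, `make_run(random.Random(0))` always yields the same run.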

Before moving on to the cross-modal tasks, participants were simultaneously presented with each braille pattern both tactually and visually, with each pattern shown twice in a randomized order, without fMRI scanning. This procedure was implemented to facilitate smooth performance in the cross-modal tasks.

Participants then performed cross-modal working memory tasks. The task scheme was the same as in the within-modal tasks, but the sample and the probe were presented in different modalities (Fig. 1a and Supplementary Fig. 1a). In the tactile-to-visual (TV) task, the tactile sample was compared with the visual probe, and in the visual-to-tactile (VT) task, the visual sample was compared with the tactile probe.

Because the reaction time of finger withdrawal during the sample phase can affect how long the sample stimulus is touched (Supplementary Fig. 1a), we examined the reaction time of finger withdrawal in response to the “Finger-out” cue. The average withdrawal reaction time during the sample phase was below 0.6 s in both the TT and TV tasks (TT: mean 0.570 s, SEM = 0.043 s; TV: mean 0.521 s, SEM = 0.035 s). There was no significant difference between the two tasks (t(28) = 1.609, p = 0.119, paired t test) (Supplementary Fig. 1c).

fMRI acquisition

Participants were scanned on a 3 T Siemens MAGNETOM Trio located at Seoul National University. Echo-planar imaging (EPI) data were acquired using a 32-channel head coil, with an in-plane resolution of 2.8 mm × 2.8 mm and 40 slices, each with a thickness of 2.5 mm (0.25 mm inter-slice gap, repetition time (TR) = 2000 ms, echo time (TE) = 25 ms, flip angle = 90°, matrix size 76 × 76, field of view (FOV) = 210 mm). Whole brain volumes were scanned, and slices were oriented approximately parallel to the base of the temporal lobe. Anatomical images were scanned with the standard magnetization-prepared rapid-acquisition gradient echo (MPRAGE) sequence (192 sagittal slices with voxel size 1 × 1 × 1 mm3, TR = 2300 ms, TE = 2.36 ms, flip angle = 8°, matrix size 256 × 256, FOV = 256 mm) after the experimental runs of the fMRI session.
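As a consistency check on these acquisition parameters, the nominal voxel sizes and coverage follow from the field of view, matrix size, and slice parameters. This is a worked calculation added for illustration, not part of the reported protocol. It assumes that slice spacing equals slice thickness plus the inter-slice gap.

```latex
\begin{aligned}
\text{EPI in-plane voxel size} &= \frac{\text{FOV}}{\text{matrix}} = \frac{210\ \text{mm}}{76} \approx 2.76\ \text{mm} \quad (\text{reported as } 2.8\ \text{mm}),\\
\text{EPI slice spacing} &= 2.5\ \text{mm} + 0.25\ \text{mm} = 2.75\ \text{mm}, \qquad
\text{coverage} \approx 40 \times 2.75\ \text{mm} = 110\ \text{mm},\\
\text{MPRAGE voxel size} &= \frac{256\ \text{mm}}{256} = 1\ \text{mm}, \qquad
192 \times 1\ \text{mm} = 192\ \text{mm (sagittal coverage)}.
\end{aligned}
```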

Regions of interest

For the ROI-based analyses, the superior parietal lobule (SPL), intraparietal sulcus (IPS), and angular gyrus (ANG) were defined automatically from the FreeSurfer parcellation59,60 using the labels “G_pariet_sup,” “S_intrapariet_and_P_trans,” and “G_pariet_inf-Angular,” respectively. For the dorsolateral prefrontal cortex (dlPFC), the “rostralmiddlefrontal” (rostral middle frontal gyrus) label was used, and Brodmann area 6 (BA6) was derived from the “BA6_exvivo.thresh.label” file. The primary somatosensory cortex (S1) was defined by combining its subdivisions (BA3a, BA3b, BA1, and BA2), derived from the “BA3a_exvivo.thresh.label,” “BA3b_exvivo.thresh.label,” “BA1_exvivo.thresh.label,” and “BA2_exvivo.thresh.label” files. The central early visual cortex (cEVC) was defined as the overlap between anatomically defined and functionally defined early visual areas. The anatomically defined early visual areas were obtained by combining the vcAtlas labels61 “hOc1.mpm.vpnl.label” (V1), “hOc2.mpm.vpnl.label” (V2), “hOc3v.mpm.vpnl.label” (V3v), and “hOc4v.mpm.vpnl.label” (V4v). The functionally defined early visual areas were derived from a functional localizer scan in an independent set of participants (n = 38): the central part of early visual cortex was identified by contrasting alternating blocks of a central disk (5°) and an annulus (6–15°), and the area showing a significant response in more than one-third of these participants was used. All ROIs were defined in the left hemisphere except for the cEVC.
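The cEVC definition combines a union (of the anatomical labels), a frequency threshold (more than one-third of localizer participants), and an intersection. The following is a minimal sketch of that set logic, assuming each label or localizer map has already been read into a set of integer vertex indices. Reading FreeSurfer `.label` files is not shown, and all function names are hypothetical. The same `union_labels` step would apply to combining the BA3a, BA3b, BA1 and BA2 labels into S1.

```python
from collections import Counter
from fractions import Fraction


def union_labels(labels):
    """Union of vertex indices from several labels (e.g. V1, V2, V3v, V4v)."""
    combined = set()
    for vertices in labels.values():
        combined |= set(vertices)
    return combined


def frequent_vertices(localizer_masks, min_fraction=Fraction(1, 3)):
    """Vertices significant in MORE than min_fraction of localizer participants.

    Fraction keeps the 'more than one-third' comparison exact.
    """
    n = len(localizer_masks)
    counts = Counter(v for mask in localizer_masks for v in set(mask))
    return {v for v, c in counts.items() if c > n * min_fraction}


def central_evc(anatomical_labels, localizer_masks):
    """cEVC: anatomical early visual areas intersected with the central functional map."""
    return union_labels(anatomical_labels) & frequent_vertices(localizer_masks)
```

For example, with three localizer participants a vertex must appear in at least two of their maps to pass the threshold, because one of three is exactly one-third rather than more.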

fMRI data analysis

fMRI data analysis was conducted using AFNI (http://afni.nimh.nih.gov/), SUMA (AFNI surface mapper), FreeSurfer, and custom MATLAB scripts (the functions used are described in detail below). Preprocessing included slice-time correction and motion correction. Slice-time correction temporally aligned the slices within each volume to the start of the TR, using AFNI’s 3dTshift with heptic (seventh-order) Lagrange polynomial interpolation. Rigid-body motion correction was then performed with AFNI’s 3dvolreg, using the first volume of the first run as the reference. The six motion parameters estimated during motion correction were included as nuisance regressors in the subsequent GLM analysis.
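The principle behind heptic slice-time correction is that a slice acquired at some offset after the start of each TR is resampled onto the TR-start times by evaluating a seventh-order Lagrange polynomial through the eight nearest samples. The sketch below illustrates that principle. It is not AFNI's 3dTshift implementation: edge handling here simply clamps the eight-point window, which extrapolates at the ends of the series. The function names and the slice offset are hypothetical.

```python
import math


def lagrange_interp(samples, x, order=7):
    """Evaluate the order-th Lagrange polynomial through order + 1 samples near x.

    samples[i] is the value at (possibly fractional) index i. Near the ends of
    the series the window is clamped, so the polynomial extrapolates there.
    """
    n_pts = order + 1
    if len(samples) < n_pts:
        raise ValueError("series too short for the requested order")
    start = math.floor(x) - order // 2               # window roughly centred on x
    start = max(0, min(start, len(samples) - n_pts))  # clamp at the series edges
    nodes = range(start, start + n_pts)
    value = 0.0
    for i in nodes:
        weight = 1.0
        for j in nodes:
            if j != i:
                weight *= (x - j) / (i - j)
        value += weight * samples[i]
    return value


def shift_to_tr_start(series, slice_offset_s, tr_s, order=7):
    """Resample a slice's series (acquired slice_offset_s after each TR start)
    onto the TR-start times k * tr_s, i.e. fractional index k - offset / TR."""
    frac = slice_offset_s / tr_s
    return [lagrange_interp(series, k - frac, order) for k in range(len(series))]
```

Because a seventh-order Lagrange polynomial reproduces any polynomial of degree seven or lower exactly, a smooth test signal sampled at `k * TR + offset` is recovered at `k * TR` up to floating-point error.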

To derive the event-related response magnitudes of the BOLD signal during the working memory delay, we fit a standard general linear model (GLM) with a 4-s boxcar regressor in AFNI (BLOCK function of 3dDeconvolve). The β value of each voxel was estimated for a 4-s window within the delay period, from 2 s to 6 s after delay onset, to minimize contamination of the delay-period activation patterns by responses to stimulus perception, and was normalized by the average response magnitude of each run (percent signal change). In addition, the neural responses at stimulus onsets (sample and probe) and at each time point during the delay period (spaced 2 s, or 1 TR, apart) were derived in the same way but with the GAM function of 3dDeconvolve. Specifically, the value at each time point was the peak amplitude of the BOLD response elicited by the event at that time point, modeled with GAM, which represents the hemodynamic response function (HRF) as a gamma variate function. Consequently, the hemodynamic delay of the BOLD response was already accounted for in the value at each time point. For whole-brain maps of activation level, the data were smoothed by assigning to each voxel the average percent signal change across the voxels within a searchlight sphere centered on it (radius 9.8 mm, ~123 voxels), and the smoothed maps were used to derive the group-averaged surface map.
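The delay-period regressor is a 4-s boxcar, from 2 s to 6 s after delay onset, convolved with a gamma-variate HRF and sampled once per TR. The sketch below assumes AFNI's documented BLOCK4 form: the gamma variate g(t) = t^4 e^(−t) / (4^4 e^(−4)), integrated over the boxcar, with the response scaled here to a peak of 1. It only builds the regressor; it does not reproduce 3dDeconvolve's model fitting. The delay onsets and function names are hypothetical.

```python
import math


def gamma_variate(t, q=4.0):
    """g(t) = t^q exp(-t) / (q^q exp(-q)); equals 1 at its peak t = q (t in s)."""
    if t <= 0:
        return 0.0
    return t ** q * math.exp(-t) / (q ** q * math.exp(-q))


def block_basis(t, duration, q=4.0, ds=0.01):
    """Integral of g(t - s) over s in [0, min(t, duration)], by the midpoint rule."""
    upper = min(t, duration)
    if upper <= 0:
        return 0.0
    n = max(1, int(round(upper / ds)))
    step = upper / n
    return step * sum(gamma_variate(t - (k + 0.5) * step, q) for k in range(n))


def delay_regressor(delay_onsets, n_vols, tr=2.0, start=2.0, duration=4.0):
    """Boxcar from start to start + duration s after each delay onset, convolved
    with the gamma variate, scaled to peak 1, and sampled at volume times v * tr."""
    peak = max(block_basis(0.1 * i, duration) for i in range(1, 301))
    reg = []
    for v in range(n_vols):
        t = v * tr
        reg.append(sum(block_basis(t - (on + start), duration) for on in delay_onsets) / peak)
    return reg
```

Starting the boxcar 2 s after delay onset mirrors the text's rationale: the window skips the period immediately after delay onset, when the delay-period patterns would be most contaminated by responses to stimulus perception.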

The discrimination index was derived from the multi-voxel patterns in each searchlight sphere or in each ROI. For this, the four runs of each task for each participant were divided into two halves (two runs per half) in all three possible ways, and for each split, t values contrasting each event with baseline were estimated in each half of the data. To obtain activation patterns reflecting the maintained representation of each braille pattern, only trials in which the participant correctly judged whether the sample and probe matched were included. Analyses that also included incorrect trials showed the same overall pattern for the main findings reported in this paper. The t-value patterns were extracted from the voxels within each searchlight sphere or each ROI, normalized in each voxel by subtracting the mean value across all stimulus conditions, and the Pearson correlation coefficients between the t-value patterns were calculated62,63,64. The correlation values were Fisher’s z transformed and averaged across the three splits for further analyses. A discrimination index for a braille stimulus was calculated by subtracting the mean of the between-braille condition correlations from the within-braille condition correlation (Fig. 2a).
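The discrimination-index pipeline described above has four steps: split-half partitioning of runs, voxel-wise centering across conditions, Fisher-z correlation matrices, and within-minus-between contrasts. The sketch below implements these steps in plain Python. For each stimulus i, it assumes that "between-braille correlations" means the off-diagonal entries in both row i and column i of the half-A by half-B matrix. That averaging choice, and all function names, are illustrative assumptions rather than the authors' MATLAB code.

```python
import itertools
import math


def split_halves(runs):
    """All distinct ways to divide the runs into two equal halves (3 for 4 runs)."""
    runs = list(runs)
    splits = []
    for half in itertools.combinations(runs, len(runs) // 2):
        if runs[0] in half:  # keep the first run in half A so (A, B) and (B, A) are not double-counted
            splits.append((half, tuple(r for r in runs if r not in half)))
    return splits


def center_across_conditions(patterns):
    """Subtract, voxel by voxel, the mean t value across all stimulus conditions."""
    n_cond = len(patterns)
    means = [sum(col) / n_cond for col in zip(*patterns)]
    return [[v - m for v, m in zip(p, means)] for p in patterns]


def pearson(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def fisher_z_matrix(half_a, half_b, eps=1e-12):
    """z[i][j] = atanh(r) between condition i in half A and condition j in half B."""
    a, b = center_across_conditions(half_a), center_across_conditions(half_b)
    z = []
    for x in a:
        row = []
        for y in b:
            r = max(-1 + eps, min(1 - eps, pearson(x, y)))  # keep atanh finite
            row.append(math.atanh(r))
        z.append(row)
    return z


def discrimination_indices(z):
    """Per stimulus: within-braille z minus mean between-braille z (row i and column i)."""
    n = len(z)
    out = []
    for i in range(n):
        between = [z[i][j] for j in range(n) if j != i] + [z[j][i] for j in range(n) if j != i]
        out.append(z[i][i] - sum(between) / len(between))
    return out
```

In use, `fisher_z_matrix` would be computed for each of the three splits returned by `split_halves([1, 2, 3, 4])`, and the matrices averaged element-wise before `discrimination_indices` is applied, matching the order of operations in the text.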

To identify areas where working memory representations overlapped across tasks, the group-averaged discrimination index maps for each task were thresholded (p < 0.01, right-tailed, FDR-corrected). We then derived the areas of overlap between the TT and VV tasks, excluding TV and VT areas (TT ∩ VV ∩ TVᶜ ∩ VTᶜ, where ᶜ denotes the complement of a set); the areas of overlap between the TV and VT tasks, excluding TT and VV areas (TV ∩ VT ∩ TTᶜ ∩ VVᶜ); and the areas of overlap across all tasks (Fig. 2d). For the excluded areas, a threshold of p < 0.05 (FDR-corrected) was applied.
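The overlap maps are set operations on thresholded maps, with a stricter threshold for inclusion (p < 0.01) than for exclusion (p < 0.05). The sketch below assumes each task's group map is already available as a dictionary from node to FDR-adjusted p value. The data structure and function names are hypothetical.

```python
def significant(qmap, threshold):
    """Nodes whose FDR-adjusted p value is below threshold."""
    return {node for node, q in qmap.items() if q < threshold}


def overlap_maps(q, include=0.01, exclude=0.05):
    """q maps task name ('TT', 'VV', 'TV', 'VT') -> {node: FDR-adjusted p}."""
    inc = {task: significant(q[task], include) for task in q}
    exc = {task: significant(q[task], exclude) for task in q}
    within_only = (inc["TT"] & inc["VV"]) - exc["TV"] - exc["VT"]  # TT ∩ VV ∩ TVᶜ ∩ VTᶜ
    cross_only = (inc["TV"] & inc["VT"]) - exc["TT"] - exc["VV"]   # TV ∩ VT ∩ TTᶜ ∩ VVᶜ
    all_tasks = inc["TT"] & inc["VV"] & inc["TV"] & inc["VT"]
    return within_only, cross_only, all_tasks
```

Using the looser exclusion threshold removes nodes that show even moderate evidence in the excluded tasks. For example, a node with q = 0.03 in TV is excluded from the TT-and-VV-only map even though it would not pass the 0.01 inclusion threshold for TV.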

To directly compare the working memory representations of braille identity between different tasks, discrimination indices were derived from within- and between-braille condition correlations between the TT and VV, TV and VT, TV and TT, TV and VV, VT and TT, and VT and VV tasks (Fig. 4a). To further test whether the working memory representations generalized across tasks, we cross-decoded braille identity using multi-class support vector machine (SVM) classification. For the classification analysis, the t-value patterns derived for each trial were used. A multi-class SVM classifier was trained on the neural response patterns of one task and tested on those of another task, and vice versa (Fig. 4e), and the classification accuracies from the two directions were averaged. Classifiers were trained with MATLAB’s “fitcecoc” function using default parameters (linear kernel), yielding 10 binary learners, one for each pair of the five braille pattern labels, and were tested with MATLAB’s “predict” function. The number of trials for each braille was balanced in the training sets by random sampling, training and testing were repeated 100 times, and the accuracies were averaged across iterations. Data from one participant were excluded from this analysis because of insufficient correct trials (fewer than two correct trials for some braille stimuli).
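The cross-decoding scheme has several steps: one-versus-one voting over the 10 label pairs, balanced random subsampling of training trials, training on one task and testing on the other in both directions, and averaging over iterations. The sketch below shows this scaffolding in plain Python. It deliberately swaps in a simpler binary learner: each pairwise rule is a nearest-centroid rule, which is linear in the input but is NOT the linear SVM that `fitcecoc` trains. The class and function names are hypothetical.

```python
import itertools
import random


def centroid(rows):
    return [sum(col) / len(rows) for col in zip(*rows)]


class OneVsOneLinear:
    """One-vs-one multi-class classifier with majority voting.

    STAND-IN for linear SVM learners: each pairwise learner is a nearest-centroid
    rule w.x + b > 0 (linear in x), not a support vector machine.
    """

    def fit(self, X, y):
        self.classes = sorted(set(y))
        cents = {c: centroid([x for x, lab in zip(X, y) if lab == c]) for c in self.classes}
        self.learners = []
        for a, b in itertools.combinations(self.classes, 2):  # 10 pairs for 5 labels
            w = [ca - cb for ca, cb in zip(cents[a], cents[b])]
            bias = -(sum(v * v for v in cents[a]) - sum(v * v for v in cents[b])) / 2
            self.learners.append((a, b, w, bias))
        return self

    def predict(self, X):
        preds = []
        for x in X:
            votes = dict.fromkeys(self.classes, 0)
            for a, b, w, bias in self.learners:
                score = sum(wi * xi for wi, xi in zip(w, x)) + bias
                votes[a if score > 0 else b] += 1
            preds.append(max(self.classes, key=votes.get))
        return preds


def balanced_subsample(X, y, rng):
    """Randomly draw the same number of trials for every label."""
    by_label = {}
    for x, lab in zip(X, y):
        by_label.setdefault(lab, []).append(x)
    n_min = min(len(rows) for rows in by_label.values())
    Xs, ys = [], []
    for lab, rows in by_label.items():
        for x in rng.sample(rows, n_min):
            Xs.append(x)
            ys.append(lab)
    return Xs, ys


def accuracy(clf, X, y):
    return sum(p == t for p, t in zip(clf.predict(X), y)) / len(y)


def cross_decode(task_a, task_b, n_iter=100, seed=0):
    """Train on one task, test on the other, in both directions; average over iterations."""
    rng = random.Random(seed)
    accs = []
    for _ in range(n_iter):
        Xa, ya = balanced_subsample(*task_a, rng)
        Xb, yb = balanced_subsample(*task_b, rng)
        a_to_b = accuracy(OneVsOneLinear().fit(Xa, ya), *task_b)
        b_to_a = accuracy(OneVsOneLinear().fit(Xb, yb), *task_a)
        accs.append((a_to_b + b_to_a) / 2)
    return sum(accs) / len(accs)
```

With five balanced labels, chance accuracy is 1/5 = 20%, the baseline used in the statistical tests.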

Discrimination indices for each task and cross-task discrimination indices were also derived for the probe braille identity to assess potential carry-over effects of stimulus perception on neural responses at subsequent time points. For this, neural activation patterns were derived for each time point, spaced 2 s (1 TR) apart, from probe stimulus onset (0 s) to 6 s post-onset, following the same procedure used for extracting activation patterns during the working memory delay period (using the GAM function of 3dDeconvolve in AFNI). Discrimination indices were then calculated in the same manner as for sample stimulus decoding but for the probe braille identity.

The representational structure in each region was compared with that of another region using representational similarity analysis63,65. To do this, representational dissimilarity matrices (RDMs) were derived for each ROI based on Fisher’s z-transformed correlations of multivoxel patterns for each pair of braille stimuli computed between two different tasks (TT and VV; TV and VT). Subsequently, the Pearson correlation between the off-diagonal elements of the RDMs from the two ROIs was calculated as a measure of representational similarity between the regions (Fig. 6a). The correlation coefficients were Fisher’s z-transformed before averaging across participants and conducting statistical analyses.
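The cross-regional comparison described above correlates the off-diagonal entries of two ROIs' RDMs and then Fisher-transforms the result. The sketch below makes two assumptions: dissimilarity is taken as −z, and "off-diagonal" means all entries with i ≠ j, since a cross-task matrix need not be symmetric. Because both RDMs are built the same way, negating them does not change the Pearson r; a nonlinear dissimilarity such as 1 − tanh(z) would. The function names are hypothetical.

```python
import math


def off_diagonal(m):
    """All entries with i != j (cross-task matrices need not be symmetric)."""
    n = len(m)
    return [m[i][j] for i in range(n) for j in range(n) if i != j]


def pearson(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def roi_similarity(z_roi1, z_roi2):
    """Pearson r between the off-diagonal entries of two ROIs' RDMs.

    Dissimilarity is taken here as -z (an assumption).
    """
    rdm1 = [[-v for v in row] for row in z_roi1]
    rdm2 = [[-v for v in row] for row in z_roi2]
    return pearson(off_diagonal(rdm1), off_diagonal(rdm2))
```

Each participant's r would then be passed through `math.atanh` before averaging and group-level testing, as described in the text.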

Statistical analyses

We used one-sample t tests to compare the response magnitudes of BOLD signals (two-tailed), discrimination indices (right-tailed), and classification accuracies (right-tailed) against baseline levels (zero for response magnitudes and discrimination indices; the 20% chance level for classification accuracies). To compare the discrimination indices or behavioral scores among the four tasks, we conducted repeated-measures ANOVAs with Greenhouse–Geisser correction using the “fitrm” and “ranova” functions of MATLAB, followed by post hoc t tests. Paired t tests were used to compare two measures, such as Finger-out reaction times or cross-task discrimination indices/classification accuracies. To correct for multiple comparisons across the four tasks or different measures, the significance of the BOLD response magnitudes, discrimination indices, or classification accuracies was assessed using FDR-adjusted p values (Benjamini–Hochberg procedure). For one-sample t tests comparing the BOLD response magnitudes or discrimination indices against baseline levels, the p values for the four tasks were FDR corrected separately for each ROI or each node in the searchlight analysis (Figs. 1–3 and Supplementary Figs. 2 and 5a). For one-sample t tests comparing cross-task discrimination indices or cross-task classification accuracies against baseline levels, the two p values from the measures presented together in each graph were FDR corrected (Figs. 4 and 5 and Supplementary Figs. 4 and 5b–d). The p values for the paired t tests of cross-regional representational similarity were corrected across five comparisons (SPL-IPS versus other pairs of ROIs). Statistical analyses were conducted using MATLAB and AFNI. Full statistics are provided in Supplementary Tables 1–7.
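The Benjamini–Hochberg adjustment used throughout works as follows. Sort the m p values in ascending order, scale the p value of rank k by m/k, then enforce monotonicity by taking running minima from the largest rank down, capping the result at 1. The following is a minimal sketch in plain Python; the example p values are hypothetical, for instance four task-wise values within one ROI.

```python
def bh_adjust(pvals):
    """Benjamini–Hochberg adjusted p values, returned in the input order."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])  # indices from smallest to largest p
    adjusted = [0.0] * m
    running_min = 1.0  # adjusted values are capped at 1
    for rank in range(m, 0, -1):  # walk from the largest p down to enforce monotonicity
        i = order[rank - 1]
        running_min = min(running_min, pvals[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# Hypothetical p values for the four tasks in one ROI
print(bh_adjust([0.01, 0.04, 0.03, 0.005]))
```

Here the adjusted values are approximately 0.02, 0.04, 0.04 and 0.02 in input order; a test is declared significant when its adjusted value falls below the chosen FDR level.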

Reporting summary

Further information on research design is available in the Nature Portfolio Reporting Summary linked to this article.

Data availability

The processed fMRI data are available under restricted access due to limitations in the informed consent obtained from participants, and the raw fMRI and MRI data are protected and are not available due to data privacy laws. Access to and reuse of the data can be requested from the corresponding author and require approval from the Institutional Review Boards. Source data are provided with this paper.

Code availability

The custom MATLAB scripts used to analyze the functional MRI data in this study are available on GitHub at https://github.com/memcolab/Park2025NatCommu.

References

Baddeley, A. & Hitch, G. J. In Recent Advances in Learning and Motivation, Vol. 8 (ed. Bower, G. A.) 47–90 (Academic Press, 1974).

Baddeley, A. Working Memory (Clarendon Press/Oxford University Press, 1986).

Postle, B. R. Working memory as an emergent property of the mind and brain. Neuroscience 139, 23–38 (2006).

Cowan, N. Working memory underpins cognitive development, learning, and education. Educ. Psychol. Rev. 26, 197–223 (2014).

D’Esposito, M. & Postle, B. R. The cognitive neuroscience of working memory. Annu. Rev. Psychol. 66, 115–142 (2015).

Miller, E. K., Lundqvist, M. & Bastos, A. M. Working memory 2.0. Neuron 100, 463–475 (2018).

Van Ede, F. & Nobre, A. C. Turning attention inside out: how working memory serves behavior. Annu. Rev. Psychol. 74, 137–165 (2023).

Chatham, C. H. & Badre, D. Multiple gates on working memory. Curr. Opin. Behav. Sci. 1, 23–31 (2015).

Polanía, R., Paulus, W. & Nitsche, M. A. Noninvasively decoding the contents of visual working memory in the human prefrontal cortex within high-gamma oscillatory patterns. J. Cogn. Neurosci. 24, 304–314 (2012).

Curtis, C. E. & D’Esposito, M. Persistent activity in the prefrontal cortex during working memory. Trends Cogn. Sci. 7, 415–423 (2003).

Riley, M. R. & Constantinidis, C. Role of prefrontal persistent activity in working memory. Front. Syst. Neurosci. 9, 181 (2016).

Christophel, T. B., Klink, P. C., Spitzer, B., Roelfsema, P. R. & Haynes, J.-D. The distributed nature of working memory. Trends Cogn. Sci. 21, 111–124 (2017).

Atkinson, R. C. & Shiffrin, R. M. In Psychology of Learning and Motivation, Vol. 2 89–195 (Elsevier, 1968).

Goldman-Rakic, P. S. Cellular basis of working memory. Neuron 14, 477–485 (1995).

Cowan, N. The magical number 4 in short-term memory: a reconsideration of mental storage capacity. Behav. Brain Sci. 24, 87–114 (2001).

Miller, E. K. & Cohen, J. D. An integrative theory of prefrontal cortex function. Annu. Rev. Neurosci. 24, 167–202 (2001).

Harrison, S. A. & Tong, F. Decoding reveals the contents of visual working memory in early visual areas. Nature 458, 632–635 (2009).

Serences, J. T., Ester, E. F., Vogel, E. K. & Awh, E. Stimulus-specific delay activity in human primary visual cortex. Psychol. Sci. 20, 207–214 (2009).

Christophel, T. B., Hebart, M. N. & Haynes, J.-D. Decoding the contents of visual short-term memory from human visual and parietal cortex. J. Neurosci. 32, 12983–12989 (2012).

Kumar, S. et al. A brain system for auditory working memory. J. Neurosci. 36, 4492–4505 (2016).

Lee, S.-H. & Baker, C. I. Multi-voxel decoding and the topography of maintained information during visual working memory. Front. Syst. Neurosci. 10, 2 (2016).

Serences, J. T. Neural mechanisms of information storage in visual short-term memory. Vis. Res. 128, 53–67 (2016).

Schmidt, T. T. & Blankenburg, F. Brain regions that retain the spatial layout of tactile stimuli during working memory–a ‘tactospatial sketchpad’? Neuroimage 178, 531–539 (2018).

Scimeca, J. M., Kiyonaga, A. & D’Esposito, M. Reaffirming the sensory recruitment account of working memory. Trends Cogn. Sci. 22, 190–192 (2018).

Uluç, I., Schmidt, T. T., Wu, Y. & Blankenburg, F. Content-specific codes of parametric auditory working memory in humans. Neuroimage 183, 254–262 (2018).