Abstract

The increasing demand for motion tracking systems has been accelerated by advancements in virtual reality (VR) and motion reconstruction technologies. Combined with emerging innovations in the Internet of Things (IoT), these systems have unlocked transformative applications, from immersive user experiences to personalized healthcare solutions. However, conventional motion tracking systems often fall short of delivering sophisticated tracking and feedback capabilities, while systems designed for detailed motion analysis are typically costly and limited to controlled environments. This study introduces a cost-effective motion tracking system that integrates full-body motion analysis with real-time, bidirectional haptic feedback. Utilizing flexible, patch-type epidermal haptic devices alongside a remote machine‑learning framework, the system captures full‑body motion and delivers personalized, time‑synchronized feedback. Its closed‑loop design lays the groundwork for real‑time bidirectional haptic cues that accommodate user responsiveness and engagement.

Introduction

The rapid advancement of sensing-based haptic feedback interactive systems has fundamentally transformed human-digital interaction by seamlessly integrating real-time physiological monitoring with contextually responsive tactile stimulation1,2,3,4,5. These systems represent a paradigm shift from traditional unidirectional interfaces toward bidirectional platforms that simultaneously capture human behavior and physiology while delivering coordinated haptic feedback to bridge physical and virtual realms. Contemporary interactive systems typically combine various sensing modalities, including inertial, physiological, and environmental sensors, with haptic actuators to create responsive feedback loops that enhance user experience and engagement6,7. Among these sensing modalities, motion capture technologies play a particularly crucial role as they provide the foundation for understanding human intent, emotional states, and behavioral patterns essential for remote interaction8,9,10. The ability to accurately sense and interpret dynamic motion in real-time enables systems to infer user intent and facilitate emotionally resonant communication, making it indispensable for immersive applications such as virtual/augmented reality (VR/AR), social robotics, telepresence, and rehabilitation, where understanding user state is vital for responsive and empathetic system behavior4,11,12.

However, despite the significant potential of interactive systems, technical challenges remain in implementing wearable platforms that effectively integrate motion tracking and haptic feedback capabilities13,14,15. Most existing platforms are designed for specific anatomical regions, typically on the hands, arms, or torso, limiting their applicability to localized interactions that fail to capture the interconnected nature of human movement and the spatial distribution of tactile perception across the entire body3,16,17,18,19,20. This anatomical constraint fundamentally restricts the realism and immersion achievable in applications requiring full-body engagement, such as virtual reality training, rehabilitation protocols, or social telepresence experiences. Furthermore, critical challenges persist in achieving time synchronization across multiple inertial sensors, implementing effective drift correction, and enabling real-time motion classification21,22. These technical limitations have hindered the development of applications that enable genuine real-time interaction, particularly in VR contexts where users in remote locations seek to interact naturally23,24.

To address these challenges, we present a simple yet adaptable network of flexible, skin-conformal patches, each consisting of a triaxial accelerometer, haptic actuator and BLE-enabled SoC, which can be freely placed across diverse anatomical locations. Furthermore, our work employs closed-loop bidirectional interaction through precisely time-synchronized actuation, substantially reducing latency and server dependency. Our machine learning (ML) classifier processes motion data from diverse body placements, including the body, arms, and legs, to accurately identify both large-scale movement patterns and subtle, granular activities, enhancing tracking precision while also providing a deeper understanding of the interconnected nature of human motion. As demonstrated in Supplementary Table 1, our system reduces end‑to‑end latency to ~40 ms while keeping each node’s weight at ~5 g, offering improved performance over existing wearable motion capture and feedback solutions.

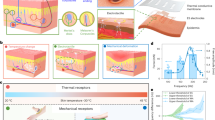

To complement our system’s low latency, we incorporated tactile haptic feedback because of its suitability for lightweight, wearable applications25. Haptic feedback mechanisms can broadly be categorized into two types: tactile and kinesthetic. Kinesthetic feedback involves bulky mechanical components or high-voltage actuators (often >300 V) that apply force to muscles or joints, making it unsuitable for wearable applications due to size, complexity, and power constraints26. In contrast, tactile feedback stimulates cutaneous mechanoreceptors through skin-level vibrations or pressure, enabling intuitive interaction without compromising device portability25. While multimodal feedback can enhance realism in specialized cases, prior work has shown that vibrotactile cues alone effectively support user engagement and perceptual awareness across diverse applications27,28,29,30,31. In this study, we focus on wearable vibrotactile platforms, which enhance interaction awareness through skin-level cues in a safe, scalable, and unobtrusive manner suitable for immersive systems.

By enabling real-time bidirectional exchange of synchronized haptic feedback between physically remote users, our system restores an essential aspect of tactile communication, accommodating intuitive awareness of each other’s movements and intent without relying on visual or verbal cues15,32. This capability represents a critical advancement for immersive applications such as social telepresence, remote physical training, and collaborative VR/AR environments33,34. We initially assessed our proposed system in VR/AR applications, where it provides real-time haptic feedback in response to user movements. By linking physical actions to corresponding tactile cues, the system supports a more natural and interactive virtual experience. Its wireless, low-power design enables prolonged use at home, while the collected motion data facilitates personalized intervention. By mapping user movements to synchronized vibration feedback, the closed-loop system facilitates mutual awareness and real-time responsiveness between users, enhancing the sense of presence and interaction in remote communication scenarios (Fig. 1a). Our work introduces a multifunctional wearable system that integrates real-time body motion sensing with flexible haptic interfaces and IoT-enabled deep learning to overcome the limitations of conventional interactive platforms. This integration supports a range of applications, including gaming, training, and remote collaboration, and contributes to the broader development of next-generation human-machine interfaces.

a The graphic illustrates the chain of operation during motion activity, depicting the transmitted signal’s virtual action process along with the classification result from the machine learning algorithm. Haptic feedback portrays stimulation of actuator for the receiver. b Optical image of the device undergoing bending, showing the flexible characteristic of the device. c Exploded view illustrates the layers comprising the device with the electrical components and supporting layers encapsulated in an elastomeric solution. d Block diagram illustrates the components of the comprehensive system, along with the wireless protocols employed for communication. e Comparison between reference arm angles obtained via marker-based pose estimation and orientation angles calculated from gravity vector analysis of triaxial accelerometer data across various arm positions. f Bland–Altman plot comparing accelerometer-derived angles with video-based reference measurements. The mean bias and limits of agreement (±2.0 s.d.) are shown as solid and dashed lines, respectively, indicating the degree of agreement between the two methods across a range of arm orientations.

Results

Device and system design

The device encompasses crucial features for on-skin applications by employing elastomeric encapsulation with materials possessing low moduli, thereby circumventing any elastic mismatches with skin tissue, and facilitating conformal, soft contact for seamless integration into daily life35,36,37,38,39. Our device, as shown in Fig. 1b, incorporates a flexible patch serving as a platform for housing both active and passive electronic components and enhancing operational stability. The electronic components consist of a battery, a triaxial accelerometer, a BLE microcontroller unit, and an actuator. Additional details into the structured layers of these components, between the elastomeric layers, are illustrated in the exploded view (Fig. 1c). Combined with the thin substrate layer, this strategically layered structure enables linear sensor sensitivity across varied motion frequencies while optimizing haptic feedback transmission (Supplementary Fig. 1).

We adopted eccentric rotating mass (ERM) actuators for practical vibrotactile feedback in wearable systems due to their mechanical simplicity, robustness, and compatibility with flexible substrates. While alternative haptic mechanisms like electro-tactile stimulation, dielectric elastomer actuators, and linear resonant actuators (LRAs) offer specific advantages, they are limited by complexity, high power consumption, or insufficient performance in skin-mounted applications40,41,42,43,44,45,46,47,48. ERM actuators provide strong, intuitive sensations in a compact form factor with efficient mounting strategies49,50,51. Despite amplitude-frequency coupling and performance variations under different operating conditions, ERMs remain the most practical solution for scalable haptic deployment52,53,54.

To ensure long-term reliability under dynamic usage conditions, we conducted 1000-cycle repeated bending tests with a 25 mm bending radius (Supplementary Fig. 2), confirming stable sensor output and preserved wireless communication integrity throughout deformation. The fabrication process (Methods section and Supplementary Fig. 3) leverages cost-effective development techniques while incorporating a 50-μm thick PET support layer that mitigates strain-induced damage to copper interconnects. Uniaxial tensile testing revealed that the PET-supported structure demonstrated a substantially increased modulus of 58.26 MPa compared to 1.16 MPa for the PDMS-only structure, effectively limiting strain propagation through the PDMS matrix and protecting embedded copper electrodes from fracture during mechanical deformation. Finite element analysis further confirmed the efficacy of this configuration in reducing mechanical stress concentrations (Supplementary Fig. 4). Our systematic analysis revealed that vibration efficiency is highly dependent on substrate thickness, with thinner (0.5 mm) substrates transmitting significantly higher vibration energy, confirming the effectiveness of our chosen configuration for optimized tactile feedback perception (Supplementary Fig. 5).

The overall structure of the multiple sensing and stimulation system depicted in Fig. 1d enables simultaneous motion acceleration measurement and programmable vibrational feedback through an integrated BLE protocol interface, supporting bidirectional interactions via synchronized sensing and tactile feedback modules (Supplementary Movie 1). Strategic sensor placement across multiple body segments facilitates precise acceleration data acquisition from distributed attachment points, with the spatial distribution of tracked body segments directly determining the motion tracking system’s resolution and degree-of-freedom capture capability55. This distributed approach enables comprehensive analysis of both subtle and dynamic movements while maintaining minimal attachment requirements essential for natural user interaction (Supplementary Fig. 6).

The modular design supports customizable positioning through medical-grade adhesive interfaces that ensure sustained adhesion, as validated through quantitative 90° peel testing of the PDMS-adhesive-skin interface (Supplementary Fig. 7). Although the acrylic-PDMS interface exhibited higher initial peel strength, the skin-silicone interface consistently showed a higher average peel force during steady-state peeling, indicating skin-safe yet stable adhesion, which is essential for consistent device performance during prolonged wear56. The biocompatibility of the tape material has been confirmed previously, with no irritation observed after 24-h continuous wear, and we likewise observed no redness, itching, or discomfort during our attachment experiments, supporting safety and comfort for extended daily use57,58.

The thermomechanical performance of the resulting electronics supports comfortable daily on-skin use without thermal discomfort, as demonstrated in Supplementary Fig. 8a, b. During data streaming, the temperature of the BLE SoC remains between 24.5 °C and 27 °C, within a comfortable range for epidermal contact. Additionally, the temperature distribution on the actuator shows minimal variation across the different haptic feedback patterns. The entire system is powered by a 3.0 V, 220 mAh coin cell battery chosen for its compact size (20 mm in diameter) and suitability for wearable applications, with all components selected to operate within the 3.0–3.3 V range, enabling direct connection without voltage regulators. Individual component consumption is low: the accelerometer consumes ~350 μA, the BLE SoC operates at ~1.4 mA during data transmission through an optimized low-power connection interval, and the ERM actuator draws up to 60 mA during actuation, though ~40 mA is typical during short-duration haptic feedback bursts. The device maintains an average consumption of 1.3 mA during Bluetooth advertising and 1.5 mA during continuous data streaming, with the actuator engaged only in response to specific user interactions (Supplementary Fig. 8c). Based on these power consumption metrics, our observations indicate that the device can continuously transmit motion activity data for ~160 h, making the platform suitable for human-machine interaction applications that require sustained, comfortable, and reliable epidermal integration.
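As a rough cross-check of the runtime figure, ideal battery life is simply capacity divided by average current. The sketch below uses only the values quoted above; it ignores cut-off voltage, self-discharge, and actuator bursts, so it is a back-of-the-envelope estimate and not the measurement procedure used in the study.

```python
def runtime_hours(capacity_mah: float, avg_current_ma: float) -> float:
    """Ideal runtime in hours, ignoring cut-off voltage and self-discharge."""
    if avg_current_ma <= 0:
        raise ValueError("average current must be positive")
    return capacity_mah / avg_current_ma


CAPACITY_MAH = 220.0    # coin cell capacity quoted in the text
STREAMING_MA = 1.5      # average current during continuous streaming
ADVERTISING_MA = 1.3    # average current during BLE advertising

streaming_h = runtime_hours(CAPACITY_MAH, STREAMING_MA)      # about 147 h
advertising_h = runtime_hours(CAPACITY_MAH, ADVERTISING_MA)  # about 169 h

# Average current that would correspond to the reported ~160 h of operation.
implied_ma = CAPACITY_MAH / 160.0                            # 1.375 mA
```

The reported ~160 h lies between the two ideal bounds, which is consistent with an average draw slightly below the 1.5 mA streaming figure.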

As illustrated in Fig. 1e, acceleration data acquired from multiple body segments enable monitoring of limb orientation by analyzing the direction of the gravity vector captured by each accelerometer. Using these gravity vectors, we tracked the relative orientation of the arm in three distinct positions and compared the results with measurements from a video-based marker tracker (Fig. 1f). During extended operation, the accelerometers showed minimal baseline drift and their sensitivity remained constant over time, ensuring reliable performance for prolonged durations (Supplementary Fig. 9). Building on this stability, we implemented a BLE communication protocol for wireless data acquisition, transmission, and synchronization, which is essential for maintaining precise timing across multiple sensors and enabling accurate spatiotemporal control of haptic feedback. The system's user interface maps paired devices via their MAC addresses and streams synchronized motion data to a server for classification, forming the foundation for coordinated feedback and responsive interaction. Two-way communication between the user interface and the server enables the server-based algorithm to access the data remotely for motion classification and the mobile devices to retrieve the corresponding feedback patterns.
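The gravity-vector orientation estimate and its comparison against a reference can be sketched as follows. This is a minimal illustration under stated assumptions: the exact angle definition used in the study is not given, so `tilt_angle_deg` (a hypothetical helper) measures the angle between a chosen sensor axis and the measured acceleration, which is only meaningful when the limb is quasi-static and gravity dominates the reading. `bland_altman` is likewise a hypothetical helper; its ±2.0 s.d. limits follow the Fig. 1f caption, and the sample angles are made up for illustration.

```python
import math
import statistics


def tilt_angle_deg(ax, ay, az, axis=(1.0, 0.0, 0.0)):
    """Angle in degrees between a sensor axis and the measured gravity vector."""
    norm_a = math.sqrt(ax * ax + ay * ay + az * az)
    norm_u = math.sqrt(sum(c * c for c in axis))
    if norm_a == 0 or norm_u == 0:
        raise ValueError("zero-length vector")
    cos_theta = (ax * axis[0] + ay * axis[1] + az * axis[2]) / (norm_a * norm_u)
    cos_theta = max(-1.0, min(1.0, cos_theta))  # guard against rounding error
    return math.degrees(math.acos(cos_theta))


def bland_altman(measured, reference, k=2.0):
    """Return (bias, lower limit, upper limit) with limits at bias +/- k * s.d."""
    diffs = [m - r for m, r in zip(measured, reference)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)  # sample standard deviation of the differences
    return bias, bias - k * sd, bias + k * sd


# Illustrative (made-up) static readings in units of g.
angle_flat = tilt_angle_deg(0.0, 0.0, 1.0)   # sensor x-axis perpendicular to gravity
angle_diag = tilt_angle_deg(1.0, 0.0, 1.0)   # sensor x-axis halfway toward gravity
agreement = bland_altman([1.0, 2.0, 3.0], [1.0, 1.0, 1.0])
```

During dynamic motion the accelerometer also senses linear acceleration, so an estimate of this kind would normally be restricted to held postures or low-pass filtered data.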

Interfacing of full-body motion-haptic networks

While our system was developed with modularity in mind, our primary design objective was to balance motion capture resolution with classification efficiency, enabling real-time data transmission and responsive haptic feedback without introducing unnecessary complexity. Scalability in wearable sensor networks has been widely recognized as a means to enhance versatility and expand application scope. For example, research on infant activity monitoring showed that increasing the number of sensing nodes improved the resolution of behavioral measurements during full-body movements59. Similarly, studies on inertial-based motion capture demonstrated that, although incorporating additional nodes further improved accuracy and enabled finer reconstruction, full-body activity estimation is sufficiently achieved with a limited number of sensors60. Building on these findings, our system offers synchronized multi-node motion acquisition while maintaining the efficiency necessary for practical, real-time feedback applications.

Multiple peripheral devices are connected and handled simultaneously through the BLE service handling of the mobile application (Fig. 2a and Methods section). We synchronized the incoming accelerometer data using a fixed-buffer method at our configured sampling rate of 50 Hz. Previous studies on multi-sensor data synchronization via mobile platforms have commonly employed single-threaded methods, where a single thread manages connections and collects data using a round-robin approach61. Similarly, our system adopts a round-robin strategy, using a central buffer to store data from each peripheral device. Because all sensors operate at the same frequency and the central buffer ensures synchronized start times, our devices were coordinated to within a single sampling period of 20 ms (Fig. 2b), with the complete motion signal detailed in Supplementary Fig. 10. Individual spikes in sensor output from isolated limb motions were also captured (Supplementary Fig. 11), demonstrating the system’s ability to differentiate and track distinct sequential limb actions.
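One way to picture the fixed-buffer, round-robin scheme described above is a central object that holds one queue per peripheral and releases a frame only when every queue holds a full buffer. The sketch below is an assumption-laden illustration, not the application's code: the class name `FixedBufferSync`, the device labels, and the buffer size are hypothetical, and the BLE notification callbacks that would call `push` are omitted.

```python
from collections import deque


class FixedBufferSync:
    """Collect samples per device and emit time-aligned frames."""

    def __init__(self, device_ids, buffer_size):
        self.buffer_size = buffer_size
        self.buffers = {dev: deque() for dev in device_ids}

    def push(self, device_id, sample):
        """Store one (x, y, z) sample; return a frame once every buffer is full."""
        self.buffers[device_id].append(sample)
        if all(len(buf) >= self.buffer_size for buf in self.buffers.values()):
            return self._pop_frame()
        return None

    def _pop_frame(self):
        # Visit each device in turn and take its oldest buffer_size samples.
        return {
            dev: [buf.popleft() for _ in range(self.buffer_size)]
            for dev, buf in self.buffers.items()
        }


# Two hypothetical nodes with a two-sample buffer (40 ms of data at 50 Hz).
sync = FixedBufferSync(["left_arm", "right_leg"], buffer_size=2)
first = sync.push("left_arm", (0.0, 0.0, 1.0))
second = sync.push("left_arm", (0.1, 0.0, 1.0))
third = sync.push("right_leg", (0.0, 0.2, 1.0))
frame = sync.push("right_leg", (0.0, 0.3, 1.0))
```

Because all nodes sample at the same rate and start together, sample `k` in each device's list nominally refers to the same sampling instant, which is what bounds the misalignment to one sampling period.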

a Diagram illustrating the synchronization of multiple peripheral devices. Each peripheral device’s connection configurations are stored in the mobile application to set the buffer size along with its location on the user’s body. b Output data of the inertial peak displays phase difference between the multiple devices. c Schematic of the triggering mechanism illustrating the configurations for the automatic recording of motion signal data. Sub-triggers consisting of a threshold and threshold meter operate in the mobile application. d Graph illustrating the manually recorded squat motion along with the segment recorded by the automatic triggering mechanism. e 3-dimensional t-SNE scatterplot comparing the manual and automatic-triggered clusters for the respective classes.

Bidirectional communication between the users relies on two classifier ports (Supplementary Fig. 12), each of which simultaneously processes signals from the full-body triggering mechanism and runs the classification model. The classification results are stored in a remote SQL database using the MySQLdb Python library and sent to the other user’s mobile device via UDP. Supplementary Fig. 13 outlines the functions and events involved in sending and retrieving these results and in activating the corresponding actuation response. Data transmission during haptic feedback does not cause false triggers, and any distortions in the motion signal are removed with a band-pass filter. Inertial signals recorded in the actuation and idle states are compared in Supplementary Fig. 14.
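The UDP hand-off of a classification result can be illustrated with Python's standard `socket` module. The message layout here (a small JSON object with `user`, `class`, and `t_ms` fields) and the function names are assumptions made for the example; the source does not specify the payload format, host, or port.

```python
import json
import socket


def pack_result(user_id: str, class_id: int, timestamp_ms: int) -> bytes:
    """Serialize one classification result as a compact JSON datagram."""
    message = {"user": user_id, "class": class_id, "t_ms": timestamp_ms}
    return json.dumps(message).encode("utf-8")


def unpack_result(payload: bytes) -> dict:
    """Decode a datagram produced by pack_result."""
    return json.loads(payload.decode("utf-8"))


def send_result(payload: bytes, host: str, port: int) -> None:
    """Fire-and-forget UDP send; delivery and ordering are not guaranteed."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(payload, (host, port))


# Hypothetical example: user 1 performed the motion labeled as class 3.
datagram = pack_result("user1", 3, 1234)
decoded = unpack_result(datagram)
```

UDP avoids connection setup and retransmission delays, which suits short, time-sensitive messages; the trade-off is that a lost datagram simply means a missed feedback cue unless the application adds its own acknowledgement.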

Automatic detection of spikes and abrupt changes in signal amplitude has been studied extensively in the analysis of physiological signals62,63,64. In the initial phase of our experiment, we collected motion data manually, capturing distinct user-initiated actions. Notably, subtle motions, such as handshakes or hugs, varied considerably across users, which could compromise the robustness of our deep-learning model during training. To improve the consistency of each motion class, we introduced a triggering mechanism (Fig. 2c) that requires users to exert a predefined degree of strength when initiating a motion. The automatic trigger also allows the interfacing application to transmit data to the server selectively, which reduces the computational load on the server. Figure 2d shows the raw signal for the squatting motion, highlighting the automatically triggered portion within the manual recording. The motion-related signal is captured promptly at the onset of excitation, while data from periods of inactivity are minimized. We further compared automatically and manually recorded signals for selected motion classes from a single user using t-distributed stochastic neighbor embedding (t-SNE) clustering (Fig. 2e), which revealed that signals captured with the active triggering mechanism exhibited reduced variance across most classes.
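A threshold-based trigger of the kind described above can be sketched in a few lines. The interpretation here is an assumption: the "threshold meter" is modeled as a counter of consecutive samples at or above the threshold, and the function name, threshold, count, and window length are hypothetical values chosen for illustration only.

```python
def triggered_segment(signal, threshold, min_count, window):
    """Return (start, segment) for the first sustained excursion, or None.

    A trigger fires when min_count consecutive samples have an absolute
    value at or above threshold; the segment then spans window samples
    starting from the first sample of that run.
    """
    run = 0
    for i, value in enumerate(signal):
        run = run + 1 if abs(value) >= threshold else 0
        if run >= min_count:
            start = i - min_count + 1
            return start, signal[start:start + window]
    return None


# Made-up single-axis trace: idle, a burst of motion, then idle again.
trace = [0.0, 0.0, 0.1, 1.2, 1.5, 1.1, 0.2, 0.0, 0.0]
hit = triggered_segment(trace, threshold=1.0, min_count=2, window=4)
miss = triggered_segment(trace, threshold=5.0, min_count=2, window=4)
```

Requiring several consecutive samples above the threshold rejects isolated noise spikes, and returning only the windowed segment is what lets the application skip transmission during inactivity.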

Real-time full-body motion tracking of sensor network

Waves produced by inertial excitation of the body have been shown to reside in the lower frequency ranges. In the signal processing stage, we employed the Fourier transform and the continuous wavelet transform (CWT) to compare spectral densities over the time domain65,66. In a previous study, objects were classified via a piezoresistive structure using both Fourier transform spectrograms and CWT features67. Those findings indicated that the CWT was better suited to analyzing complex datasets, particularly those requiring the preservation of information across both the frequency and time domains68,69. In our CWT analysis, we employed a Morse wavelet to derive low-dimensional spectro-temporal features. The parameters for the mother wavelet, specifically the gamma and time-bandwidth product, were set to 3 and 60, respectively. Notably, prior research on seizure detection has adopted similar parameter configurations when using low-dimensional features69, which suggests that these settings are applicable across diverse analytical contexts. As detailed in the Methods section and illustrated in Supplementary Fig. 15, the 10 specified classes for the full-body layout show relatively clear distinctions among motions across the accelerometer’s axes, and repetitive motions can further be distinguished by their periodic spectral density patterns.
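For readers who want to see what a Morse-wavelet CWT with these parameters involves, the following NumPy sketch evaluates the generalized Morse wavelet directly in the frequency domain. It is an illustration under assumptions, not the study's pipeline: the software used is not stated, the function `morse_cwt` is hypothetical, and the code assumes the common convention (as in MATLAB's `cwt`) that the time-bandwidth product equals β·γ, giving β = 60/3 = 20. The wavelet is normalized to a peak value of 2 so that a unit-amplitude sinusoid yields a coefficient magnitude of about 1 at its own frequency.

```python
import numpy as np


def morse_cwt(x, fs, freqs, gamma=3.0, time_bandwidth=60.0):
    """CWT of a 1-D signal using a generalized Morse wavelet.

    Returns a complex array of shape (len(freqs), len(x)).
    """
    x = np.asarray(x, dtype=float)
    n = x.size
    beta = time_bandwidth / gamma                 # assumes P^2 = beta * gamma
    omega_peak = (beta / gamma) ** (1.0 / gamma)  # peak frequency of the mother wavelet
    omega = 2.0 * np.pi * np.fft.fftfreq(n)       # radians per sample
    x_hat = np.fft.fft(x - x.mean())
    coeffs = np.empty((len(freqs), n), dtype=complex)
    for i, f in enumerate(freqs):
        # Choose the scale so the wavelet's peak sits at analysis frequency f.
        scale = omega_peak / (2.0 * np.pi * f / fs)
        w = scale * omega
        psi_hat = np.zeros(n)
        pos = w > 0                               # analytic wavelet: positive frequencies only
        # Log form of 2 * (w / omega_peak)**beta * exp(beta/gamma - w**gamma).
        psi_hat[pos] = 2.0 * np.exp(
            beta * np.log(w[pos] / omega_peak) - w[pos] ** gamma + beta / gamma
        )
        coeffs[i] = np.fft.ifft(x_hat * psi_hat)
    return coeffs


# Synthetic check: a 5 Hz cosine sampled at 50 Hz for 4 s.
fs = 50.0
t = np.arange(200) / fs
signal = np.cos(2.0 * np.pi * 5.0 * t)
freqs = np.arange(1.0, 20.5, 0.5)
scalogram = np.abs(morse_cwt(signal, fs, freqs))
peak_freq = freqs[np.argmax(scalogram.mean(axis=1))]
```

The magnitude array `scalogram` is the kind of time-frequency image that a CNN can take as input; with a 50 Hz sampling rate the analysis frequencies must stay below the 25 Hz Nyquist limit.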

A lightweight convolutional neural network with an efficient stack of convolutional layers extracted complex features from the scalograms, enabling accurate classification across different sensor attachment layouts70. Our data exhibit localized characteristics, which makes the scalogram’s ability to capture temporal and frequency features localized within the signals particularly important. Furthermore, because our selected motion classes include low-intensity activities, the ability to observe slight variations within each class proved significant for analyzing subtle differences in signal patterns (Supplementary Fig. 16). Deep learning was critical in this context, as it enabled the system to identify subtle spatiotemporal variations in sensor signals that may not be easily captured through conventional thresholding or rule-based methods. To evaluate the predictive performance of the proposed method, 10,000 samples (1000 samples × 10 actions) were split into training, validation, and test sets at a ratio of 8:1:1. Each device’s transformed scalogram passes through three convolutional layers within the CNN structure to extract features (Fig. 3a). The model’s accuracy and loss curves for the full-body attachment positions show an accuracy of ~97% on scalogram inputs (Fig. 3b). The confusion matrix further shows the classification results for a selected set of activities (Fig. 3c).
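The 8:1:1 partition of the 10,000 samples can be reproduced with a simple shuffled index split. This is a generic sketch: whether the original split was stratified by class or by participant is not stated, and the function name and seed are hypothetical.

```python
import random


def split_indices(n_samples, ratios=(8, 1, 1), seed=0):
    """Shuffle sample indices and split them into train/validation/test sets."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # seeded for a reproducible split
    total = sum(ratios)
    n_train = n_samples * ratios[0] // total
    n_val = n_samples * ratios[1] // total
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    held_out = indices[n_train + n_val:]
    return train, val, held_out


train_idx, val_idx, test_idx = split_indices(10_000)
```

With 10,000 samples this yields 8000 training, 1000 validation, and 1000 test indices with no overlap between the three sets.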

a Comprehensive diagram illustrates the process of motion data, which undergoes signal processing, feature extraction, and classification through the model's structure. b Loss minimization graph of the training and validation sets for full body motion classification, accompanied by a scatterplot illustrating the validation and training accuracy for their respective validation sets. c Confusion matrix illustrating the test set results of full body motion. d Precision–recall curves comparing four classification models on the full-body motion dataset. The Multimodal CNN model processes three separate CWT-based inputs from each axis using a multi-branch architecture. The RMS CNN model applies a root-mean-square operation across the x, y, and z-axis and generates a single scalogram image for CNN-based classification. In contrast, the SVM and Logistic Regression models directly use the raw time-series sensor data as input. e Classification accuracy of the Multimodal CNN and RMS CNN models at three sensor attachment positions: –25% (gray), 0% (black), and +25% (red) relative to the center of the limb segment. Positions are defined based on the distance from the wrist to the elbow (arm) and from the ankle to the knee (leg). f Time consumed for each trial, consisting of the time taken for the application to send the motion signal to the remote PC and the period for the computer to process and return the classification result back to the mobile device through the cloud server.

Our proposed multi-input CNN model, which utilizes CWT coefficient matrices from multiple sensors, achieved the highest area under the precision-recall curve (AUPRC) among the evaluated approaches, including RMS-based CNN, support vector machine (SVM), and logistic regression classifiers (Fig. 3d). Compared to the single-input CNN trained on a single CWT representation, our architecture consistently demonstrated superior precision-recall performance, achieving an AUPRC of 0.994. By treating each device’s CWT coefficient matrix as an individual modality, the multi-input classifier effectively leveraged complementary information across sensors, resulting in significant improvements in classification accuracy. The proposed model maintained consistently high classification accuracy across different sensor attachment positions on both the arm and leg (Fig. 3e), indicating robustness to moderate variations in device placement. The performance of the multi-input model declined when both sensor position and orientation varied, while a CNN using rotation invariant RMS features achieved over 85% accuracy, demonstrating greater robustness (Supplementary Fig. 17). This suggests that while the proposed model works best with consistent orientation, RMS-based methods offer a practical alternative when placement is inconsistent. Owing to the lightweight architecture and direct use of CWT coefficients, the proposed model achieved low-latency inference, processing and classifying incoming signals in ~40 ms during quasi-real-time operation (Fig. 3f).
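The orientation robustness of the RMS-based input can be seen from a small numerical example: combining the three axes as a root mean square gives the same value no matter how the sensor is rotated, whereas the individual axis readings change. The helper names and sample values below are hypothetical, and the rotation is limited to the z-axis for brevity.

```python
import math


def rms_across_axes(samples):
    """Collapse (x, y, z) samples into one rotation-invariant channel."""
    return [math.sqrt((x * x + y * y + z * z) / 3.0) for x, y, z in samples]


def rotate_about_z(samples, angle_deg):
    """Simulate a sensor mounted at a different orientation about its z-axis."""
    c = math.cos(math.radians(angle_deg))
    s = math.sin(math.radians(angle_deg))
    return [(c * x - s * y, s * x + c * y, z) for x, y, z in samples]


# Made-up accelerometer samples in units of g.
samples = [(0.1, 0.2, 0.98), (0.5, -0.3, 0.8)]
rotated = rotate_about_z(samples, 90.0)
rms_original = rms_across_axes(samples)
rms_rotated = rms_across_axes(rotated)
```

The invariance comes at the cost of discarding per-axis direction information, which is consistent with the per-axis multi-input model performing best when orientation is kept consistent.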

In addition to analyzing the full-body layout, we conducted a focused classification analysis with the devices placed only on the arms and legs to evaluate the contribution of individual limbs to overall activity recognition. This targeted approach achieved an accuracy of 94% by capturing nuanced variations in motion patterns unique to the arms and legs (Supplementary Fig. 18), underscoring the value of targeted sensor placement for distinguishing complex movements and capturing both isolated limb dynamics and synchronized full-body activities.

Bidirectional full-body interactive wearable network system

Upon receiving the classification result from the server, the mobile central device provides the appropriate feedback by controlling the ERM actuator in either a synchronous or recurring pattern. The interaction between the two users is shown in Fig. 4a, which illustrates the exchange of real-time feedback through programmable tactile signals. Although the feedback does not directly reproduce the sensation of physical pressure on contact, the synchronized vibration cues enhance the sense of presence and mutual awareness, prioritizing interpersonal responsiveness and shared timing over full mechanical fidelity to support dynamic, real-time communication. The predefined feedback patterns, detailed in Supplementary Fig. 19, are encoded as functions within the application. These functions use each device's labeled location and its corresponding Generic Attribute Profile (GATT) characteristic to perform write operations, as displayed in Fig. 4b. Supplementary Fig. 19 also presents the results of a perception test, in which three participants each experienced five trials per motion class, totaling 15 evaluations per class, to assess the perceptibility of the haptic cues. Supplementary Movie 2 shows faster response times with vibrotactile feedback than with visual LED indication of the received motion class. During haptic feedback, the skin’s response to the actuator’s vibrations demonstrates the miniaturized haptic platform’s ability to produce varied tactile sensations, which were further assessed using the accelerometer to measure changes in haptic magnitude at different settings (Supplementary Fig. 20). Simultaneously, the system employs an event observer to identify new classifications from the server, triggering the actuation thread and activating the stored feedback pattern based on the received classification number. Each feedback pattern is maintained for a duration of 1.5 s.
To further assess the developed system, we recorded real-time motion data and feedback output signals for each user. Figure 4c illustrates both the raw and trigger-recorded signals. The signal reading and response between the two users show the overall time required for signal transmission and computation to generate and deliver feedback to the corresponding user, demonstrating the responsiveness of the system in timely feedback exchange. To further demonstrate the real-time classification performance, system responsiveness, and feedback operation in practical usage, we provide Supplementary Movie 3, which shows quasi-real-time motion capture and feedback delivery during user interaction.
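The synchronized and cascading feedback patterns described above can be thought of as a timeline of actuator on/off events spanning the 1.5 s pattern duration. The sketch below builds such a timeline; it is a hypothetical illustration in which the function name, device labels, and the equal-slot cascading rule are assumptions, and each event stands in for what would be a BLE write to the corresponding device in a real application.

```python
def feedback_schedule(devices, mode="synchronized", duration_s=1.5):
    """Return time-sorted (time_s, device, state) events for one feedback pattern."""
    if not devices:
        return []
    events = []
    if mode == "synchronized":
        # Every actuator switches on together and stays on for the whole pattern.
        for dev in devices:
            events.append((0.0, dev, "on"))
            events.append((duration_s, dev, "off"))
    elif mode == "cascading":
        # Actuators fire one after another, each for an equal share of the pattern.
        slot = duration_s / len(devices)
        for k, dev in enumerate(devices):
            events.append((k * slot, dev, "on"))
            events.append(((k + 1) * slot, dev, "off"))
    else:
        raise ValueError(f"unknown mode: {mode!r}")
    return sorted(events)


# Hypothetical three-node layout.
nodes = ["arm", "leg", "torso"]
together = feedback_schedule(nodes, mode="synchronized")
in_turn = feedback_schedule(nodes, mode="cascading")
```

Keeping the schedule as plain data separates pattern design from transport, so the same timeline could be replayed by an actuation thread whenever the event observer reports a new classification.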

a Graphic representation of the closed-loop system featuring the exchange of motion signals and feedback between the engaging users. b Block diagram displaying the application’s handling of individual devices for operation of the feedback system. Feedback patterns for each motion type are stored in the mobile device, which can output a pulse or steady response simultaneously for each paired device. Group toggle function enables feedback in a synchronized/cascading pattern. Toggle intervals as shown on the right, illustrate the default activation times configured for each period. c Comprehensive signal output diagram recorded during the remote interaction between the users. User 1 (left) conducts the punching motion, transmitting the triggered data to the remote database for classification. The red dotted lines indicate the starting point for the feedback pattern for User 2 (right). Reciprocally, User 2’s exchange of squatting motion data to haptic feedback is also indicated through the blue dotted lines.

Discussion

This study addresses key challenges in motion tracking by introducing a cost-effective, lightweight, and flexible system composed of patch-type epidermal devices. The proposed platform enables comprehensive, full-body motion monitoring while ensuring user comfort and long-term wearability. Its conformal design accommodates diverse body regions, supporting both localized and full-body movement analysis with high accuracy. A major advantage of the system is its integration of real-time motion sensing with haptic feedback through a bidirectional communication framework. This combination enhances applications such as sports monitoring and immersive VR by providing intuitive tactile cues.

Although the developed system delivers unimodal vibrotactile feedback, this modality alone has proven effective in enhancing user perception and engagement in contexts such as physical rehabilitation and immersive virtual environments. Building on this foundation, we envision the evolution of the platform into a multimodal haptic interface, incorporating additional modalities, such as pressure and thermal feedback, to enable more functionally diverse and perceptually rich interactions. While ERM actuators inherently couple vibration frequency and amplitude, their mechanical robustness, ease of integration, and power efficiency render them advantageous for continuous wearable applications relative to other actuator types. We hypothesize that their feedback characteristics can be further optimized through systematic tuning of mechanical parameters, including mass eccentricity, mounting substrate stiffness, and encapsulation strategies. These directions will be explored in future work to improve both actuation fidelity and user-specific responsiveness71.

Based on these potential merits, we believe the platform supports unique use cases beyond conventional wearables. In rehabilitation, it can potentially be used for real-time correction of functional movements (e.g., sit-to-stand, reach-and-grasp) through synchronized motion tracking and joint-level vibrotactile feedback, promoting motor learning and patient autonomy in unsupervised settings72. Additionally, the system's compact, wireless design can facilitate remote collaboration in scenarios like tele-rehabilitation or partner training, where physical feedback improves user engagement and presence. While our current system does not explicitly estimate joint angles in an anatomical sense, the extracted angle information can potentially serve as a valuable reference for movement characterization. We believe future works could leverage these data to support more robust modeling of body kinematics and integrate joint-level feedback more precisely in real-time applications.

To further improve autonomy and responsiveness, the current system adopts a lightweight classification model and utilizes the UDP protocol to minimize latency and reduce dependency on centralized servers. Building upon this foundation, future developments will focus on embedding real-time inference directly within the device, enabling fully standalone operation and expanding its applicability to offline, mobile, or resource-constrained environments.

Methods

Ethical issues

All experimental procedures involving human subjects were conducted in accordance with the protocols approved by the Sungkyunkwan University Bioethics Committee (Institutional Review Board; IRB, approval number: SKKU 2025-03-012). Additionally, all IRB guidelines were followed, and participants provided verbal consent before the collection of data.

Device fabrication

The silicone elastomer (PDMS, Sylgard 184, Dow), mixed at a 10:1 ratio, was spin-coated onto a slide glass to serve as a flexible substrate (500 rpm for 30 s, cured on a hot plate at 95 °C for 10 min). A copper foil (thickness of 10 μm) was applied to the surface and patterned using an optical-fiber laser marker (HY-FMC20, HYOSUNG Laser). Soldering paste (SMD291AX250T5, Chip Quik) bonded the electrical components, including the BLE SoC (nRF52832, Nordic Semiconductor), antenna (2450AT14A0100T, Johanson Technology), triaxial accelerometer (ADXL335, Analog Devices), vibration ERM motor (VC0720B015F, Vybronics), and passive components (resistors, capacitors, and inductors), onto the copper traces by baking at 360 °C. Encapsulation was performed by drop-casting PDMS, the same material as the bottom layer.

Circuitry of electronics

For detecting human movements, the three output nodes of the accelerometer were connected to general-purpose input/output (GPIO) pins of the Bluetooth microcontroller. The acceleration data were passed to an internal ADC and transmitted to a user interface device at a sampling rate of 50 Hz. The wireless electronic module consists of the BLE SoC with customized firmware, which enables haptic actuation on the platform and communicates with the ML server for motion classification. For vibrational feedback, the microcontroller activated another GPIO pin to drive current into the ERM motor, with a metal-oxide-semiconductor field-effect transistor (RUM002N05T2L, ROHM Semiconductor) serving as the switch. A coin cell battery (CR2032, Toshiba) with a capacity of 220 mAh powered the entire circuit.

Sensor resolution

A power function generator and shaker table (FG100, 3B Scientific) were used to assess the sensing module’s effectiveness in capturing vibration data associated with human movements. The power generator was configured to produce sine waves at frequencies of 50 Hz, 100 Hz, and 200 Hz. The sensor was mounted on the metal plate with double-sided tape and oriented so that its z-axis was perpendicular to the surface. The z-axis output node of the accelerometer in the device was connected to a digital oscilloscope (DSOX1204A, KEYSIGHT) using 40 AWG enamel-coated copper wires so that the measurement was not affected by the vibrations.

Peel Test for adhesive characterization

To quantitatively evaluate the adhesion performance of the medical-grade double-sided adhesive tape (2477P, 3M) used for skin-device integration, 90° peel tests were conducted under controlled conditions. The tape consists of two distinct adhesive surfaces: a silicone-based adhesive designed for gentle attachment to skin, and an acrylic-based adhesive formulated for stronger bonding to substrates such as PDMS. Peel tests were performed separately for each interface to assess their mechanical stability and suitability for wearable applications. For the skin-adhesive interface, the silicone side of the tape was applied directly onto the forearm of a human subject under consistent pressure. For the device-adhesive interface, a thin PDMS sheet was placed on the same location of the arm to replicate the mechanical properties of the device substrate, and the acrylic side of the tape was adhered to it. In both cases, the non-adhered end of the tape was clamped and peeled at a constant rate perpendicular to the surface (90° angle) using a tensile testing machine (ESM-303, Mark-10). The measurements were repeated at identical anatomical positions to minimize variability.

Evaluation of vibration transmission efficiency

To investigate the effect of substrate thickness on haptic feedback transmission, we designed an experiment using an ERM actuator and an accelerometer. The ERM actuator was affixed to one side of a vibration propagation layer, and the accelerometer was attached to the opposite side. Between the actuator and sensor, substrate samples of varying thicknesses (0.5 mm, 1.0 mm, 1.5 mm, 2.0 mm, and 2.5 mm) were inserted. For each substrate thickness, the actuator was powered under identical voltage and current conditions. The resulting vibrations transmitted through the substrate were recorded by the accelerometer at a fixed sampling rate. The acceleration signals were then subjected to FFT analysis to extract the vibration energy spectra, which were quantified in units of g²/Hz.
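As an illustration of how an acceleration trace can be converted into a spectrum in g²/Hz, the sketch below computes a one-sided power spectral density with a plain FFT periodogram. It assumes NumPy is available; the function name, the 1 kHz sampling rate, and the 200 Hz test tone in the usage note are hypothetical, and the study's own processing may differ (for example, in windowing or averaging).

```python
import numpy as np


def psd_g2_per_hz(accel_g, fs):
    """One-sided periodogram of an acceleration trace given in g.

    Returns (freqs_hz, psd) with psd in g^2/Hz. No window is applied,
    which is an assumption of this sketch.
    """
    x = np.asarray(accel_g, dtype=float)
    x = x - x.mean()                      # remove the static (gravity) offset
    n = x.size
    spectrum = np.fft.rfft(x)
    psd = (np.abs(spectrum) ** 2) / (fs * n)
    # Fold negative frequencies into the positive half: double every bin
    # except DC, and except the Nyquist bin when n is even.
    if n % 2 == 0:
        psd[1:-1] *= 2
    else:
        psd[1:] *= 2
    freqs = np.fft.rfftfreq(n, d=1.0 / fs)
    return freqs, psd
```

For example, a 0.5 g sine at 200 Hz sampled at 1 kHz for one second gives a peak in the 200 Hz bin, and integrating the PSD over frequency recovers the mean-square acceleration of the tone. Comparing that integral across substrate thicknesses is one way to quantify transmitted vibration energy.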

Mechanical reliability under repeated bending deformation

We conducted a cyclic bending test with a fixed bending radius of 25 mm to evaluate the mechanical robustness and signal stability of the device under repeated deformation. The device was mounted on a tensile testing system (ESM-303, Mark-10) configured to apply repeated mechanical bending. A total of 1000 bending cycles were performed continuously under ambient conditions. During the test, the integrated 3-axis accelerometer continuously transmitted acceleration data wirelessly at a sampling rate of 50 Hz. All transmitted data were recorded in real time to monitor signal stability throughout the test. For visualization and data presentation, the recorded acceleration signals were downsampled to 1 Hz, allowing for clear observation of long-term trends without excessive data volume.

Mechanical characterization with protective layer

Uniaxial tensile testing was performed using a motorized tensile testing system (ESM-303, Mark-10) to compare the stress–strain behavior of devices with and without the PET layer. To simulate critical stress conditions on the copper traces, 0.2 mm wide copper interconnects (minimum linewidth used in the actual device) were embedded within PDMS for both test groups. In one group, the electrodes were encapsulated solely in PDMS, and in the other group, a PET film was placed beneath the encapsulated layer. For each condition, rectangular samples (50 mm × 1.1 mm × 0.05 mm) were elongated at a constant rate of 3 mm/min until a maximum strain of 1% was reached.

Finite element analysis of the mechanical stability of the device under uniaxial stretching

The commercial FEA software Abaqus (ABAQUS/CAE, Ver. 6.24) was used to evaluate the mechanical stability of the device under uniaxial stretching conditions. Two device models were considered: one with a PET layer at the bottom and one without. A traction force was applied to both lateral ends of the bottom layer in each model. The elastomer layers and copper circuit were bonded using tie constraints to prevent delamination during deformation. In the model with the PET layer, the PET was also bonded to the bottom of the device using a tie constraint. The elastomer layers were modeled using hybrid eight-node 3D solid elements (C3D8RH in Abaqus), the PET layer with eight-node 3D solid elements (C3D8R) and the copper circuit with four-node 2D shell elements (S4R). To confirm the stability of the simulation results, a mesh convergence test was conducted by gradually refining the mesh until the strain distribution stabilized. The Young’s modulus (E) and Poisson’s ratio (ν) for each material were as follows: Eelastomer = 2300 kPa, νelastomer = 0.49; Ecopper = 119 GPa, νcopper = 0.34; and EPET = 2.76 GPa, νPET = 0.33.

Characterization of the haptic device

For measurements of actuation properties on the skin surface, we used a synthetic skin with physical properties matched to those of the epidermis-dermis bilayer. PDMS (Sylgard 184, Dow) mixed at a base-to-curing-agent weight ratio of 30:1 served as the epidermal layer, with an elastic modulus of ~230 kPa and a thickness of ~1.5 mm. The dermis was made from a ~5-mm-thick slab of soft silicone elastomer (Ecoflex, Smooth-On) with an elastic modulus of ~60 kPa. To capture the vibratory response, the triaxial accelerometer (ADXL335, Analog Devices) was placed in the dermis layer, analogous to tactile receptors such as Pacinian corpuscles. Two experiments characterized the haptic interface system: the coverage region of a single actuator embedded in the haptic platform, and the vibration response as a function of the actuator's drive voltage. For the first experiment, an ERM actuator (VC0720B015F, Vybronics) encapsulated in PDMS elastomer was used to apply vibrations to the surface of the synthetic skin model. Physical signal measurements were conducted at distances of 0 cm, 1 cm, 2 cm, 3 cm, and 4 cm from the center of the actuator, with a constant operating intensity of 3.0 V. In the second experiment, a sensor embedded in the dermis layer detected physical signals at actuator intensities of 3.3 V, 2.8 V, 2.3 V, and 1.8 V. All output data were measured with a digital oscilloscope (DSOX1204A, KEYSIGHT) for both experiments.

System on a chip firmware configuration

All software components and code were developed using the SEGGER Embedded Studio IDE with the Nordic Software Development Kit version 17.1.0. All devices fabricated for the experiment were flashed with identical firmware so that the central device could handle them uniformly. With identical attribute protocols and access profiles across all peripherals, the firmware automatically initiates the sampling sequence upon establishing a connection with the mobile device.

Angle calculation and comparison

Continuous angle change for the limbs was calculated via vector-based orientation estimation from accelerometer data73. Our system computes the gravity vectors measured by the limb-mounted accelerometer and the chest-mounted reference accelerometer, first converting the raw ADC readings into gravity units via the equation:

$$a_{g} = \frac{\mathrm{ADC} - 2048}{380}$$

where 2048 is the 12-bit ADC reading at 0 g and 380 is the number of ADC counts per 1 g. After converting the three acceleration axis values to g-units, we normalize each vector to obtain the gravity direction:

$$\hat{\mathbf{g}} = \frac{(a_{x}, a_{y}, a_{z})}{\sqrt{a_{x}^{2} + a_{y}^{2} + a_{z}^{2}}}$$

Once both vectors are normalized, the angle between the two body segments is calculated using the dot product formula:

$$\theta = \arccos\left(\hat{\mathbf{g}}_{\mathrm{limb}} \cdot \hat{\mathbf{g}}_{\mathrm{chest}}\right)$$

This yields the angle between their respective gravity directions, reflecting the relative orientation of the limb with respect to the torso.
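The three steps above (ADC-to-g conversion, normalization, and the dot product) can be sketched in a few lines of Python. The constants 2048 and 380 come from the text; the function names and the clamping of the dot product against rounding error are assumptions of this sketch rather than details of the authors' implementation.

```python
import math

ADC_ZERO_G = 2048    # 12-bit ADC reading at 0 g (from the text)
COUNTS_PER_G = 380   # ADC counts per 1 g (from the text)


def adc_to_g(raw_xyz):
    """Convert raw (x, y, z) ADC counts into acceleration in g."""
    return tuple((c - ADC_ZERO_G) / COUNTS_PER_G for c in raw_xyz)


def normalize(vec):
    """Scale a 3-vector to unit length to obtain the gravity direction."""
    norm = math.sqrt(sum(v * v for v in vec))
    if norm == 0:
        raise ValueError("zero-length acceleration vector")
    return tuple(v / norm for v in vec)


def segment_angle_deg(limb_raw, chest_raw):
    """Angle in degrees between limb and chest gravity directions."""
    g_limb = normalize(adc_to_g(limb_raw))
    g_chest = normalize(adc_to_g(chest_raw))
    dot = sum(a * b for a, b in zip(g_limb, g_chest))
    dot = max(-1.0, min(1.0, dot))  # guard acos against rounding error
    return math.degrees(math.acos(dot))
```

For instance, a limb sensor reading 1 g along its x-axis and a chest sensor reading 1 g along its z-axis give perpendicular gravity directions, so the computed angle is 90°.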

To obtain reference values for elbow angles, we employed a video-based marker tracking method using circular white, red, and blue markers placed on the shoulder, elbow, and wrist, respectively. A 30 fps HD video was recorded from a fixed frontal view, and each frame was converted to hue, saturation, and value (HSV) color space to enable robust color segmentation. The largest contour within each predefined HSV range was used to identify the centroid of each marker. Elbow angles were then calculated by treating the elbow marker as the vertex between the shoulder and wrist, forming vectors from the elbow to each point, and computing the enclosed angle using the dot product. These video-derived angles served as reference measurements for evaluating the accuracy of accelerometer-based estimations.

Calibration and trigger threshold configuration

The calibration process initiates the first step of our triggering mechanism. After pairing the desired devices, users initiate calibration through the application, maintaining a stationary posture for 10 s. This establishes a baseline of absolute acceleration values, from which the system calculates the variance/standard deviation serving as a dynamic threshold (a) throughout the experiment. Real-time monitoring involves a 100 ms buffer storing absolute acceleration values, with continuous variance calculation and comparison against the predefined threshold. To enhance accuracy, we introduced two user-configurable mechanisms. A configurable constant multiplier (Vn), defaulted to 1.5, is applied to the variance in the motion detection equation. The first trigger activates when more than half of the connected devices surpass their respective threshold (Vn × a) values, initiating a 500 ms countdown timer and a counter for incrementation. The counter, serving as the second layer trigger, tracks activations within the timeframe. Upon reaching the configured value, indicative of sustained motion, 3-axis accelerometer signals for each device are recorded for 1 s and transmitted to a remote database for further classification. The 1-s recording window was determined based on empirical observations of motion durations, which showed that most relevant movements conclude within this time frame. This duration provides sufficient temporal context for classification without affecting system latency, as the trigger mechanism operates as a lightweight event flag without interrupting continuous data streaming. This comprehensive methodology seamlessly integrates calibration, adaptive thresholding, and user-configurable triggers, providing a versatile and reliable system for real-time motion event capture and analysis. The two-layer triggering mechanism ensures specificity, significantly reducing the likelihood of capturing irrelevant motion events.
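A minimal sketch of the calibration and two-layer trigger logic is given below. It assumes that variance is used as the baseline statistic, that the detector is updated once per sample (so 500 ms corresponds to 25 steps at 50 Hz), and that a count of 3 is the configured value; the names `calibrate`, `motion_detected`, `TwoLayerTrigger`, `required_count`, and `window_steps` are hypothetical and do not come from the application.

```python
from statistics import pvariance


def calibrate(stationary_magnitudes):
    """Baseline variance (a) of absolute acceleration during a still posture."""
    return pvariance(stationary_magnitudes)


def motion_detected(buffers, baselines, vn=1.5):
    """First layer: True when more than half of the devices exceed Vn * a.

    buffers holds one short window (e.g. 100 ms) of absolute
    acceleration values per device.
    """
    exceeded = sum(
        1 for buf, a in zip(buffers, baselines) if pvariance(buf) > vn * a
    )
    return exceeded > len(baselines) / 2


class TwoLayerTrigger:
    """Second layer: count first-layer activations inside a countdown window."""

    def __init__(self, baselines, vn=1.5, required_count=3, window_steps=25):
        self.baselines = baselines
        self.vn = vn
        self.required_count = required_count  # assumed configured value
        self.window_steps = window_steps      # 500 ms at 50 Hz (assumed)
        self.remaining = 0
        self.count = 0

    def update(self, buffers):
        """Feed the latest buffers; return True when recording should start."""
        if self.remaining > 0:
            self.remaining -= 1
            if self.remaining == 0:
                self.count = 0  # window expired without sustained motion
        if not motion_detected(buffers, self.baselines, self.vn):
            return False
        if self.remaining == 0:
            self.remaining = self.window_steps  # first activation opens window
        self.count += 1
        if self.count >= self.required_count:
            self.remaining = 0
            self.count = 0
            return True
        return False
```

When `update` returns True, the caller would record the 3-axis signals for 1 s and forward them for classification, as described above.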

Recording data comparison

To observe the consistency of the extracted data between the two types of recording methods, we applied t-distributed stochastic neighbor embedding (t-SNE) to cluster the 10 different motion classes. The process begins with the extraction of features from motion data, where each feature, f_i, represents the movement characteristics of a specific body part. These features are organized into a feature matrix, X, where each row corresponds to a motion sample and each column represents a feature. Mathematically, for m samples and n features, this can be represented as:

$$X = \begin{bmatrix} f_{1}^{(1)} & f_{2}^{(1)} & \cdots & f_{n}^{(1)} \\ \vdots & \vdots & \ddots & \vdots \\ f_{1}^{(m)} & f_{2}^{(m)} & \cdots & f_{n}^{(m)} \end{bmatrix}$$

This feature matrix, X, undergoes normalization to ensure that each feature contributes equally to the clustering process.

Following normalization, the t-SNE algorithm is applied to the normalized feature matrix X′ to embed the data into a lower-dimensional space while preserving local relationships. The t-SNE algorithm minimizes the Kullback-Leibler divergence between the pairwise similarities p_ij in the high-dimensional space and the pairwise similarities q_ij in the embedded space, the latter defined by the Student’s t distribution. Mathematically, the t-SNE cost function can be expressed as:

$$C = \mathrm{KL}(P \,\|\, Q) = \sum_{i} \sum_{j \neq i} p_{ij} \log \frac{p_{ij}}{q_{ij}}$$

For our analysis, we used the TSNE implementation in sklearn.manifold with perplexity, learning rate, number of iterations, and random state values of 30, 200, 1000, and 12, respectively, to produce the 3-dimensional cluster graph for the respective classes. Here, the perplexity can be expressed as:

$$\mathrm{Perp}(P_{i}) = 2^{H(P_{i})}, \qquad H(P_{i}) = -\sum_{j} p_{j|i} \log_{2} p_{j|i}$$

where the conditional probability, pj|i, is represented by the Euclidean distance between two points, i and j.
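The embedding step can be sketched with scikit-learn as follows. The perplexity, learning rate, and random state follow the values stated above; the use of `StandardScaler` for normalization and the function name `embed_motion_features` are assumptions, since the normalization method is not specified. The iteration count is left at scikit-learn's default of 1000 because the keyword for it differs between library versions.

```python
import numpy as np
from sklearn.manifold import TSNE
from sklearn.preprocessing import StandardScaler


def embed_motion_features(X, random_state=12):
    """Normalize a (samples x features) matrix and embed it in 3-D with t-SNE."""
    # Assumed normalization: zero mean and unit variance per feature.
    X_norm = StandardScaler().fit_transform(np.asarray(X, dtype=float))
    tsne = TSNE(
        n_components=3,       # 3-dimensional cluster graph
        perplexity=30,
        learning_rate=200,
        random_state=random_state,
    )
    return tsne.fit_transform(X_norm)
```

The returned array has one 3-D point per motion sample and can be colored by class label to inspect how well the classes separate. Note that the number of samples must exceed the perplexity.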

Dataset configuration

We selected 10 types of motion data for analysis: ‘Squat’, ‘Hook’, ‘Hug’, ‘Jump’, ‘Jumping jack’, ‘Pick’, ‘Punch’, ‘Turn’, and ‘Walking’. Data was collected from six participants (three male and three female, ages ranging from 24 to 30 years), with ~1000 samples gathered for each motion. Validation and test datasets were constructed, ensuring each motion had 100 samples, with an equal distribution across the participants. In addition to these full-body motions, we also acquired datasets focusing on arm-related and leg-related motions to explore region-specific characteristics. The arm-related motion set included ‘Handshake’, ‘Hook’, ‘Hug’, ‘Jump’, ‘Punch’, ‘Running’, ‘Walking’, ‘Pick’, ‘Turn’, and ‘Waving’, while the leg-related motion set included ‘Hook’, ‘Hug’, ‘Punch’, ‘Running’, ‘Walking’, ‘Squat’, ‘Chair kick’, ‘Front kick’, ‘Pick’, and ‘Turn’. The same data collection and validation procedures were applied to maintain consistency across datasets. The selected motion classes were designed to include a balanced combination of linear and non-linear movement patterns. Linear actions, such as ‘Walking’ and ‘Running’, were included as reference classes due to their relatively consistent and directionally straightforward kinematics. In contrast, the remaining motions reflect more complex, non-linear dynamics involving diverse joint coordination and directionality. This design choice enabled us to evaluate not only the classifier’s ability to distinguish between clearly separable motions, but also its robustness in recognizing subtle variations between similar patterns.

CWT feature extraction and conversion

This study considers a method for classifying 10 different actions. Participants continuously performed each task while data were recorded for two seconds. The obtained dataset includes roughly 1000 samples for each class. The recorded data were first trimmed at the end so that all elements of each dataset had equal length. Subsequently, the data were filtered in Python with a third-order band-pass filter with cutoff frequencies of 5 and 30 Hz. To extract the time-frequency information for the motion classes, the filtered three-axis data were obtained from five devices, resulting in a total of 15 channels. The channels for each axis were then concatenated to produce three time-series signals before conducting the continuous wavelet transform (CWT).

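The application of CWT can be expressed in the following equation:

$$W(a, b) = \frac{1}{\sqrt{a}} \int_{-\infty}^{\infty} x(t)\, \psi^{*}\!\left(\frac{t - b}{a}\right) dt$$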

where x(t) represents the accelerometer’s raw signal and ψ denotes the mother wavelet with the scale and time-shift factors, a and b, as parameters that allow for the analysis and decomposition of the signal at different scales and time positions. For the classification input data, illustrated in Supplementary Fig. 13, CWT data was obtained through the adaptation of an analytic Morse wavelet with symmetry parameter (γ) and time-bandwidth product values (β) of 3 and 60, respectively. The mother wavelet, ψ(t), can be expressed as:
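For reference, generalized Morse wavelets are usually specified in the frequency domain rather than in time. A commonly used form, given here as an assumption about the parameterization and not as the exact expression used in this study, is:

$$\Psi_{\beta,\gamma}(\omega) = U(\omega)\, a_{\beta,\gamma}\, \omega^{\beta} e^{-\omega^{\gamma}}, \qquad a_{\beta,\gamma} = 2 \left(\frac{e\gamma}{\beta}\right)^{\beta/\gamma}$$

where U(ω) is the unit step function, so the wavelet is analytic (zero for negative frequencies), and a_{β,γ} normalizes the peak value. In this convention the time-bandwidth product is P² = βγ, so a symmetry parameter of 3 together with a time-bandwidth product of 60 would correspond to an exponent of 20 on ω.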

Subsequently, we generated a series of 50 sample points representing the frequency range for our region of interest. The mother wavelet was applied across our configured frequency range, resulting in a comprehensive wavelet family composed of individual wavelet functions. Once the wavelet family was convolved with the raw dataset, the produced coefficient arrays were summed along the horizontal axis.

Unified CNN framework for triaxial accelerometer data fusion

Our machine learning architecture utilizes a multi-branch Convolutional Neural Network (CNN) framework to address diverse classification tasks along the x, y, and z axes of the triaxial accelerometer. Each axis is represented by an individual model: xModel, yModel, and zModel.

Each branch receives an input of shape (63, 95, 5), representing the CWT-transformed time-series data for one axis across five sensors. The branch begins with a convolutional layer employing 32 filters of size (3 × 3) with ReLU activation and same padding, followed by a (2 × 2) max-pooling layer and batch normalization. This is succeeded by a second convolutional layer with 64 filters of size (3 × 3), again using ReLU activation, batch normalization, and max pooling. A third convolutional layer with 128 filters of size (3 × 3) is then applied, followed by global average pooling to produce a compact feature vector for each axis.

To integrate multimodal information from all three axes, the output feature vectors from the xModel, yModel, and zModel branches are concatenated. This unified representation is passed through a fully connected layer with 256 units and ReLU activation, followed by a dropout layer with a rate of 0.5 to mitigate overfitting. Finally, a dense output layer with 10 units and softmax activation is used to perform multi-class motion classification. The model is trained over 200 epochs, with a batch size of 32, using categorical cross-entropy loss and the Adam optimizer with a learning rate of 10⁻⁵. Variations in hyperparameters and activation functions were tested to observe changes in validation results. Further details on hyperparameters and configurations can be found in Supplementary Table 2.
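The architecture described above can be sketched with the Keras functional API as follows. Layer sizes, the input shape, dropout rate, loss, optimizer, and learning rate follow the text; the use of TensorFlow/Keras, the `same` padding and ReLU activation on the second and third convolutions where the text does not state them, and the helper names `build_branch` and `build_model` are assumptions of this sketch.

```python
from tensorflow import keras
from tensorflow.keras import layers


def build_branch(name):
    """One per-axis branch operating on CWT maps from five sensors."""
    inp = keras.Input(shape=(63, 95, 5), name=f"{name}_input")
    x = layers.Conv2D(32, (3, 3), activation="relu", padding="same")(inp)
    x = layers.MaxPooling2D((2, 2))(x)
    x = layers.BatchNormalization()(x)
    x = layers.Conv2D(64, (3, 3), activation="relu", padding="same")(x)
    x = layers.BatchNormalization()(x)
    x = layers.MaxPooling2D((2, 2))(x)
    # Activation and padding of the third convolution are assumed.
    x = layers.Conv2D(128, (3, 3), activation="relu", padding="same")(x)
    x = layers.GlobalAveragePooling2D()(x)  # compact 128-D feature vector
    return inp, x


def build_model(num_classes=10):
    """Three branches (x, y, z) fused by concatenation and a dense head."""
    branches = [build_branch(n) for n in ("xModel", "yModel", "zModel")]
    inputs = [inp for inp, _ in branches]
    features = [feat for _, feat in branches]
    merged = layers.Concatenate()(features)
    x = layers.Dense(256, activation="relu")(merged)
    x = layers.Dropout(0.5)(x)
    out = layers.Dense(num_classes, activation="softmax")(x)
    model = keras.Model(inputs=inputs, outputs=out)
    model.compile(
        optimizer=keras.optimizers.Adam(learning_rate=1e-5),
        loss="categorical_crossentropy",
        metrics=["accuracy"],
    )
    return model
```

Training with the stated settings would then amount to calling `model.fit([x_maps, y_maps, z_maps], labels, epochs=200, batch_size=32)` with one-hot labels, where the three input arrays are hypothetical names for the per-axis CWT tensors.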

Feature extraction under varying sensor orientations

In contrast to the controlled training setup, we additionally collected data for the 10 target classes with sensors placed at different angles and positions, such as the lateral side of the wrist or the back of the leg. These changes in orientation introduced varying gravity-induced offsets across devices, which were corrected by subtracting the initial value of each axis during an idle pose. After removing these static biases, the root mean square of the triaxial acceleration was computed for each device to serve as the input of the orientation-invariant CNN model.
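The bias removal and root-mean-square step can be sketched as below. The text does not state whether the root mean square is taken across the three axes at each time step or across time, so this sketch assumes the former; the function names are hypothetical.

```python
import math


def remove_static_bias(samples, idle_sample):
    """Subtract the idle-pose (x, y, z) reading from every sample."""
    return [tuple(v - b for v, b in zip(s, idle_sample)) for s in samples]


def triaxial_rms(samples):
    """Per-sample RMS across the three axes (assumed interpretation)."""
    return [math.sqrt(sum(v * v for v in s) / 3) for s in samples]
```

Because the idle-pose offset carries the gravity component for a given mounting orientation, subtracting it before combining the axes makes the resulting single-channel signal less sensitive to how the patch is rotated on the limb.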

Sensor placement strategy

We conducted experiments by shifting the sensors to three relative positions: –25%, 0%, and +25% with respect to the midpoint of the limb segment. These values correspond to locations along the length from the wrist to the elbow on the arm and from the ankle to the knee on the leg. In each trial, sensors were placed at the same relative position on both limbs to simulate practical variations that may occur during real-world use. Approximately 15 samples per motion class were collected for each of the three positions, and these additional datasets were used solely for inference without any model retraining to evaluate the generalizability and placement tolerance of our trained classifier.

Reporting summary

Further information on research design is available in the Nature Portfolio Reporting Summary linked to this article.

Data availability

The motion data generated in this study have been deposited in the Zenodo database under the accession code [https://doi.org/10.5281/zenodo.16750783]. Source data are provided with this paper.

Code availability

Code is available at [https://doi.org/10.5281/zenodo.16750789].

References

Youn, J.-H. et al. Skin-attached haptic patch for versatile and augmented tactile interaction. Sci. Adv. 11, eadt4839 (2025).

Kwon, S. Y. et al. On-skin and tele-haptic application of mechanically decoupled taxel array on dynamically moving and soft surfaces. npj Flex. Electron. 6, 98 (2022).

Yao, K. et al. Encoding of tactile information in hand via skin-integrated wireless haptic interface. Nat. Mach. Intell. 4, 893–903 (2022).

Sun, Z., Zhu, M., Shan, X. & Lee, C. Augmented tactile-perception and haptic-feedback rings as human-machine interfaces aiming for immersive interactions. Nat. Commun. 13, 5224 (2022).

Oh, J. et al. A liquid metal based multimodal sensor and haptic feedback device for thermal and tactile sensation generation in virtual reality. Adv. Funct. Mater. 31, 2007772 (2021).

Guo, Y. et al. Active electronic skin: an interface towards ambient haptic feedback on physical surfaces. npj Flex. Electron. 8, 25 (2024).

Chen, S., Chen, Y., Yang, J., Han, T. & Yao, S. Skin-integrated stretchable actuators toward skin-compatible haptic feedback and closed-loop human-machine interactions. npj Flex. Electron. 7, 1 (2023).

Baldi, T. L., Paolocci, G., Barcelli, D. & Prattichizzo, D. Wearable haptics for remote social walking. IEEE Trans. Haptics 13, 761–776 (2020).

Bark, K. et al. Effects of vibrotactile feedback on human learning of arm motions. IEEE Trans. Neural Syst. Rehabil. Eng. 23, 51–63 (2015).

Kim, K. K. et al. A deep-learned skin sensor decoding the epicentral human motions. Nat. Commun. 11, 2149 (2020).

Kim, M., Cho, J., Lee, S. & Jung, Y. IMU sensor-based hand gesture recognition for human-machine interfaces. Sensors 19, 3827 (2019).

Kim, H. et al. Recent advances in wearable sensors and integrated functional devices for virtual and augmented reality applications. Adv. Funct. Mater. 31, 2005692 (2021).

Low, J. H., Ang, M. H. & Yeow, C. H. Customizable soft pneumatic finger actuators for hand orthotic and prosthetic applications. In Proceedings of 2015 IEEE International Conference on Rehabilitation Robotics (ICORR) 380–385 (IEEE, 2015).

Liu, Y. et al. Electronic skin from high-throughput fabrication of intrinsically stretchable lead zirconate titanate elastomer. Research 2020, 1085417 (2020).

Yang, T. H. et al. Recent advances and opportunities of active materials for haptic technologies in virtual and augmented reality. Adv. Funct. Mater. 31, 2008831 (2021).

Wen, F., Zhang, Z., He, T. & Lee, C. AI enabled sign language recognition and VR space bidirectional communication using triboelectric smart glove. Nat. Commun. 12, 5378 (2021).

Zhang, Z. et al. Deep learning-enabled triboelectric smart socks for IoT-based gait analysis and VR applications. npj Flex. Electron. 4, 29 (2020).

Zhu, M., Sun, Z. & Lee, C. Soft modular glove with multimodal sensing and augmented haptic feedback enabled by materials’ multifunctionalities. ACS Nano 16, 14097–14110 (2022).

Jung, K., Kim, S., Oh, S. & Yoon, S. H. HapMotion: motion-to-tactile framework with wearable haptic devices for immersive VR performance experience. Virtual Real. 28, 13 (2024).

Jung, Y. H., Kim, J. H. & Rogers, J. A. Skin-integrated vibrohaptic interfaces for virtual and augmented reality. Adv. Funct. Mater. 31, 2008805 (2021).

Nikolic, J. et al. A synchronized visual-inertial sensor system with FPGA pre-processing for accurate real-time SLAM. In Proceedings 2014 IEEE International Conference on Robotics and Automation (ICRA) 431–437 (IEEE, 2014).

Chen, C., Jafari, R. & Kehtarnavaz, N. A survey of depth and inertial sensor fusion for human action recognition. Multimed. Tools Appl. 76, 4405–4425 (2017).

Ratcliffe, J., Soave, F., Bryan-Kinns, N., Tokarchuk, L. & Farkhatdinov, I. Extended reality (XR) remote research: a survey of drawbacks and opportunities. In Proceedings 2021 CHI Conference on Human Factors in Computing Systems Article 527 (ACM, 2021).

Kroma, A. et al. The reality of remote extended reality research: Practical case studies and taxonomy. Front. Comput. Sci. 4, 954038 (2022).

Raza, A., Hassan, W. & Jeon, S. Pneumatically controlled wearable tactile actuator for multi-modal haptic feedback. IEEE Access 12, 59485–59499 (2024).

Vanichvoranun, N. & Yoon, S. H. A lightweight wearable multi-joint force feedback for high definition grasping in VR. In Proceedings of 2023 IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops 625–626 (VRW, 2023).

Seim, C. E., Wolf, S. L. & Starner, T. E. Wearable vibrotactile stimulation for upper extremity rehabilitation in chronic stroke: clinical feasibility trial using the VTS Glove. J. Neuroeng. Rehabil. 18, 14 (2021).

Siedenburg, K., Bürgel, M., Özgür, E., Scheicht, C. & Töpken, S. Vibrotactile enhancement of musical engagement. Sci. Rep. 14, 7764 (2024).

Turchet, L., Rosaia, R., Diodati, A. & Carner, M. Exposure to vibrotactile music improves audiometric performances in individuals with cochlear implants. Sci. Rep. 15, 20054 (2025).

Islam, M. S. & Lim, S. Vibrotactile feedback in virtual motor learning: a systematic review. Appl. Ergon. 101, 103694 (2022).

Moon, H. S., Orr, G. & Jeon, M. Hand tracking with vibrotactile feedback enhanced presence, engagement, usability, and performance in a virtual reality rhythm game. Int. J. Hum. Comput. Int. 39, 2840–2851 (2023).

Dwivedi, Y. K. et al. Metaverse beyond the hype: multidisciplinary perspectives on emerging challenges, opportunities, and agenda for research, practice and policy. Int. J. Inf. Manag. 66, 102542 (2022).

Shi, Y. & Shen, G. Haptic sensing and feedback techniques toward virtual reality. Research 7, 0333 (2024).

Yin, J., Hinchet, R., Shea, H. & Majidi, C. Wearable soft technologies for haptic sensing and feedback. Adv. Funct. Mater. 31, 2007428 (2021).

Schwartz, G. et al. Flexible polymer transistors with high pressure sensitivity for application in electronic skin and health monitoring. Nat. Commun. 4, 1859 (2013).

Sonar, H. A., Gerratt, A. P., Lacour, S. P. & Paik, J. Closed-loop haptic feedback control using a self-sensing soft pneumatic actuator skin. Soft Robot. 7, 22–29 (2019).

Cotton, D. P. J., Graz, I. M. & Lacour, S. P. A multifunctional capacitive sensor for stretchable electronic skins. IEEE Sens. J. 9, 2008–2009 (2009).

Park, Y. L., Chen, B. R. & Wood, R. J. Design and fabrication of soft artificial skin using embedded microchannels and liquid conductors. IEEE Sens. J. 12, 2711–2718 (2012).

Kim, D. et al. Squid-inspired and wirelessly controllable display for active camouflage in aquatic-environment. npj Flex. Electron. 8, 7 (2024).

Lin, W. et al. Super-resolution wearable electrotactile rendering system. Sci. Adv. 8, eabp8738 (2022).

Kajimoto, H. Electrotactile display with real-time impedance feedback using pulse width modulation. IEEE Trans. Haptics 5, 184–188 (2012).

Chai, G., Wang, H., Li, G., Sheng, X. & Zhu, X. Electrotactile feedback improves grip force control and enables object stiffness recognition while using a myoelectric hand. IEEE Trans. Neural Syst. Rehabil. Eng. 30, 1310–1320 (2022).

Ji, X. et al. Untethered feel-through haptics using 18-µm thick dielectric elastomer actuators. Adv. Funct. Mater. 31, 2006639 (2021).

Youn, J. H., Mun, H. & Kyung, K. U. A wearable soft tactile actuator with high output force for fingertip interaction. IEEE Access 9, 30206–30215 (2021).

Choi, D. S., Lee, S. H. & Kim, S. Y. Transparent and soft haptic actuator for interaction with flexible/deformable devices. IEEE Access 8, 170853–170861 (2020).

Son, J. et al. Skin-mountable vibrotactile stimulator based on laterally multilayered dielectric elastomer actuators. Adv. Funct. Mater. 33, 2213589 (2023).

Fang, Y., Guo, W. & Sheng, X. Toward a wireless wearable system for bidirectional human-machine interface with gesture recognition and vibration feedback. IEEE Sens. J. 22, 9462–9472 (2022).

Dementyev, A., Olwal, A. & Lyon, R. F. Haptics with input: back-EMF in linear resonant actuators to enable touch, pressure and environmental awareness. In Proceedings of the 33rd Annual ACM Symposium on User Interface Software and Technology, 420–429 (ACM, 2020).

Jung, Y. H. et al. A wireless haptic interface for programmable patterns of touch across large areas of the skin. Nat. Electron. 5, 374–385 (2022).

Najm, A., Banakou, D. & Michael-Grigoriou, D. Development of a modular adjustable wearable haptic device for XR applications. Virtual Worlds 3, 436–458 (2024).

Choi, S. & Kuchenbecker, K. J. Vibrotactile display: perception, technology, and applications. Proc. IEEE 101, 2093–2104 (2013).

Maereg, A. T., Nagar, A., Reid, D. & Secco, E. L. Wearable vibrotactile haptic device for stiffness discrimination during virtual interactions. Front. Robot. AI 4, 42 (2017).

Chen, J., Teo, E. H. & Yao, K. Electromechanical actuators for haptic feedback with fingertip contact. Actuators 12, 104 (2023).

Kim, J.-T. et al. Mechanics of vibrotactile sensors for applications in skin-interfaced haptic systems. Extrem. Mech. Lett. 58, 101940 (2023).

Wang, D. et al. Haptic display for virtual reality: progress and challenges. Virtual Real. Intell. Hardw. 1, 136–162 (2019).

Chung, H. U. et al. Binodal, wireless epidermal electronic systems with in-sensor analytics for neonatal intensive care. Science 363, eaau0780 (2019).

Lee, H. K. et al. Real-time deep learning-assisted mechano-acoustic system for respiratory diagnosis and multifunctional classification. npj Flex. Electron. 8, 69 (2024).

Kim, D. et al. Optimal bilayer composites for temperature-tracking wireless electronics. Nanoscale 16, 5613–5623 (2024).

Jeong, H. et al. Miniaturized wireless, skin-integrated sensor networks for quantifying full-body movement behaviors and vital signs in infants. Proc. Natl Acad. Sci. 118, e2104925118 (2021).

Wouda, F. J. et al. Time coherent full-body poses estimated using only five inertial sensors: deep versus shallow learning. Sensors 19, 3716 (2019).

Wåhslén, J., Lindh, T. & Eriksson, M. A novel approach to multi-sensor data synchronization using mobile phones. In Proceedings of Fifth International Conference on Body Area Networks, 171–174 (ACM, 2010).

Kim, S. & McNames, J. Automatic spike detection based on adaptive template matching for extracellular neural recordings. J. Neurosci. Methods 165, 165–174 (2007).

Acır, N. & Güzeliş, C. Automatic spike detection in EEG by a two-stage procedure based on support vector machines. Comput. Biol. Med. 34, 561–575 (2004).

Franke, F., Natora, M., Boucsein, C., Munk, M. H. J. & Obermayer, K. An online spike detection and spike classification algorithm capable of instantaneous resolution of overlapping spikes. J. Comput. Neurosci. 29, 127–148 (2010).

Gao, Z. et al. Automatic change detection for real-time monitoring of EEG signals. Front. Physiol. 9, 325 (2018).

Martinez-Rios, E. A., Bustamante-Bello, R., Navarro-Tuch, S. & Perez-Meana, H. Applications of the generalized morse wavelets: a review. IEEE Access 11, 667–688 (2023).

Yesilyurt, I. The application of the conditional moments analysis to gearbox fault detection-a comparative study using the spectrogram and scalogram. NDT & E Int. 37, 309–320 (2004).

So, C. et al. Epidermal piezoresistive structure with deep learning-assisted data translation. npj Flex. Electron. 6, 70 (2022).

Ayrulu-Erdem, B. & Barshan, B. Leg motion classification with artificial neural networks using wavelet-based features of gyroscope signals. Sensors 11, 1721–1743 (2011).

Yan, X., Yang, D., Lin, Z. & Vucetic, B. Significant low-dimensional spectral-temporal features for seizure detection. IEEE Trans. Neural Syst. Rehabil. Eng. 30, 668–677 (2022).

Kim, J.-H. et al. A wirelessly programmable, skin-integrated thermo-haptic stimulator system for virtual reality. Proc. Natl Acad. Sci. 121, e2404007121 (2024).

Moesgen, T., Salovaara, A., Pouta, E., Pyykko, R. & Xiao, Y. Vibrotactile motion guidance for stroke rehabilitation: a comparative study. In Proceedings of 6th International Conference on Medical and Health Informatics (ICMHI), 147–152 (ACM, 2022).

Nwaizu, H., Saatchi, R. & Burke, D. Accelerometer based human joints' range of movement measurement. In Proceedings of 10th International Symposium on Communication Systems, Networks and Digital Signal Processing (CSNDSP), 1–6 (IEEE, 2016).

Acknowledgements

S.M.W. acknowledges that this work was supported by the National Research Foundation of Korea (NRF) grant funded by the Korea government (RS-2024-00411904, IITP-2025-RS-2020-II201821, RS-2022-NR070801, RS-2025-02303342). Additionally, S.M.W. acknowledges support from the Korea Institute for Advancement of Technology (KIAT) grant funded by the Korea government (MOTIE) (RS-2024-00435693, Human Resource Development Program for Industrial Innovation (Global)). J.-Y.Y. acknowledges funding from the Alchemist Project Program (RS-2024-00422269), supported by the Ministry of Trade, Industry & Energy (MOTIE, Korea).

Author information

Contributions

S.U.P., H.K.L., H.B.K., J.K., J.-Y.Y., and S.M.W. conceived the overall research objectives and goals. H.K.L., S.M.W., D.K., W.K., J.J., B.W.L., Y.H.J., and S.P. conducted the design and engineering investigation of the devices used throughout this study. S.U.P., H.B.K., B.K., I.Y.C., and H.J. performed the software design and software validation of the comprehensive system. S.U.P., H.K.L., H.B.K., J.K., J.-Y.Y., and S.M.W. drafted the initial manuscript, and all authors contributed to the critical editing and review of the final version.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Communications thanks Kyung-In Jang, Zhengbao Yang, who co-reviewed with Weikang Lin, and the other, anonymous, reviewer(s) for their contribution to the peer review of this work. A peer review file is available.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Park, S.U., Lee, H.K., Kim, H.B. et al. Wearable interactive full-body motion tracking and haptic feedback network systems with deep learning. Nat Commun 16, 8604 (2025). https://doi.org/10.1038/s41467-025-63644-3
