Abstract

The reward prediction error (RPE) hypothesis posits that phasic dopamine (DA) activity in the ventral tegmental area (VTA) encodes the difference between expected and actual rewards to drive reinforcement learning. However, emerging evidence suggests DA may instead regulate behavioral performance. Here, we used force sensors to measure subtle movements in head-fixed mice during a Pavlovian stimulus-reward task, while recording and manipulating VTA DA activity. We identified distinct DA neuron populations tuned to forward and backward force exertion. They are active during both spontaneous and conditioned behaviors, independent of learning or reward predictability. Variations in force and licking fully account for DA dynamics traditionally attributed to RPE, including variations in firing rates related to reward magnitude, probability, and omission. Optogenetic manipulations further confirmed that DA modulates force exertion and behavioral transitions in real time, without affecting learning. Our findings challenge the RPE hypothesis and instead suggest that VTA DA neurons dynamically adjust the gain of motivated behaviors, controlling their latency, direction, and intensity during performance.

Introduction

The ventral tegmental area (VTA) is the source of the mesolimbic dopamine (DA) pathway that has been implicated in reward and motivation1. According to the influential reward prediction error (RPE) hypothesis, VTA DA neurons encode the difference between actual and predicted reward, providing a teaching signal for associative learning2,3. This model has shaped our understanding of DA’s role in associative learning for decades. However, the RPE model cannot explain many experimental results4,5,6. It has been challenged by many who propose that DA contributes to vigor, effort regulation, incentive salience, or movement kinematics, but there is no consensus on the contribution of DA to learning and behavior6,7,8,9,10.

One reason for conflicting opinions on DA function is the lack of precise and continuous behavioral measurements. Many previous studies used measures that are usually limited to discrete time stamps (e.g., licking), or temporal durations (e.g., time spent in the reward port)11,12,13,14,15. When continuous behavioral measures were used with high temporal and spatial resolution, DA activity in the substantia nigra pars compacta was found to be highly correlated with kinematic variables like head velocity, regardless of reward prediction or outcome valence, and selective stimulation of DA neurons increased movement velocity6. Recent work using sensitive measures of force also showed that VTA DA neurons represent and regulate force exertion in head-fixed mice16. These results suggest that previous observations in support of the RPE hypothesis could be explained by the contributions of DA signaling to online behavioral performance rather than learning.

In this study, we used force sensors to measure subtle movements in head-fixed mice during a Pavlovian stimulus-reward learning task previously used to study RPE signaling. Using in vivo electrophysiology and optogenetics, we showed that phasic DA activity in the VTA does not encode RPE, but is critical for modulating behavioral performance online as measured by force exertion and licking. We found two major populations of DA neurons that increased firing before forward and backward force exertion during the generation of anticipatory CRs and during spontaneous behaviors. Such force tuning is the same regardless of learning, reward predictability, or outcome valence. We also found an abrupt change in DA tuning when the mouse transitions to consummatory responding upon reward delivery, at which point phasic DA becomes more predictive of licking behavior. Based on these results, we propose a working model of phasic DA function, according to which DA modulates the adaptive gain in behavioral transition control. This model explains the pattern of phasic DA activity during Pavlovian conditioning.

Results

Force tuning in dopamine neurons

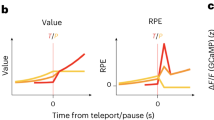

We investigated the relationship between force and VTA DA activity by training mice in a Pavlovian conditioning task while using a force-sensing head fixation apparatus (Fig. 1a and Supplementary Fig. 1)16,17,18. Mice exerted forward forces after CS presentation, reflecting their anticipatory approach behavior. The force sensors also revealed small spontaneous movements during the inter-trial interval (ITI, Fig. 1b). Spontaneous movements were present throughout training and their amplitudes remained stable, even in well-trained mice (Fig. 1c and Supplementary Fig. 1).

a Force monitoring during a stimulus-reward task. b Representative task and spontaneous force from a trained mouse (Supplementary Movie 1). c Spontaneous movements were present at all stages of training (Two-way RM ANOVA, Effect of Direction: F(1,9) = 21.6, p = 0.0012, no effect of training F(2,18) = 3.4, p = 0.054, Interaction: F(2,18) = 14.04, p = 0.0002, n = 10 mice). Spontaneous forward movements were more common at all stages of training (p < 0.0001). d VTA dopamine (DA) neurons were identified by optogenetic tagging in DAT-Cre mice, confirmed by GFP and TH co-expression. e Opponent signaling of force direction by two classes of DA neuron (Two-way ANOVA, Interaction: F(1,472) = 1218, p < 0.0001). f Forward and Backward DA populations comprised ~50% of all DA neurons. g Distributions of cross-correlation lag for Forward and Backward DA neurons with their preferred force direction. h Left: Forward DA neurons were activated during forward movements (n = 341 neurons). Right: the same neurons (same sorting order) were suppressed during backward movements. i Representative Forward DA neuron (linear regression; Forward R2 = 0.73, p = 0.001, Backward R2 = 0, p = 0.89). j Forward DA population tuning (linear regression; Forward R2 = 0.97, p < 0.0001, Backward R2 = 0.81, p = 0.0003, n = 341 neurons). k Left: Backward DA neurons were suppressed during spontaneous forward movements (n = 133 neurons). Right: same neurons (same sorting order) were activated during spontaneous movements backward. l Representative Backward DA neuron (linear regression; Forward R2 = 0.17, p = 0.22, Backward R2 = 0.81, p = 0.0004). m Backward DA population tuning (linear regression; Forward R2 = 0.63, p = 0.0061, Backward R2 = 0.88, p < 0.0001, n = 133 neurons). n DA concentration using firing rate (FR) and reuptake dynamics (K, Vmax) to predict force exertion. o Modeled DA concentration from observed Forward and Backward DA activity recapitulates force profiles (n = 341 Forward DA, 133 Backward DA; 4455 forward, 5313 backward movements). Error bars represent SEM. Source data are provided as a Source Data file.

We recorded single unit activity from the VTA in mice with moveable optrodes and used optogenetic stimulation to confirm cell type (n = 1683 single units; n = 948 from putative DA neurons; n = 98 from tagged DA neurons; Fig. 1d and Supplementary Figs. 2–4). We identified two major classes of DA neurons that exhibited distinct direction-specific tuning during spontaneous movements (Fig. 1e). They make up about 50% of recorded DA neurons (Fig. 1f). Both populations led force generation in their preferred direction (Fig. 1g). Forward DA neurons (n = 341) increased firing prior to spontaneous forward movements and decreased firing during backward movements (Fig. 1h). These neurons were tuned for spontaneous force exertion (Fig. 1i): when movements were forward, these neurons increased their firing rate and when movements were backward, they reduced their firing rate (Fig. 1j). In contrast, Backward DA neurons (n = 133) reduced firing during spontaneous forward movements and increased their firing during movements backward (Fig. 1k). Backward DA neurons were also tuned for force (Fig. 1l, m).
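The lead/lag relationship summarized in Fig. 1g can be illustrated with a lagged correlation between a firing-rate trace and a force trace. The sketch below is not the authors' analysis code: the function names `pearson` and `best_lag`, the toy signals, and the sign convention (a positive lag means firing precedes force) are assumptions made purely for illustration.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy / sqrt(sxx * syy)

def best_lag(rate, force, max_lag):
    """Lag in samples that maximizes corr(rate[t], force[t + lag])."""
    best, best_r = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        if lag >= 0:
            x, y = rate[:len(rate) - lag], force[lag:]
        else:
            x, y = rate[-lag:], force[:len(force) + lag]
        r = pearson(x, y)
        if r > best_r:
            best, best_r = lag, r
    return best

# Toy data (hypothetical): the force bump is a copy of the firing bump, delayed by 5 samples.
bump = [1.0, 2.0, 3.0, 2.0, 1.0]
rate = [0.0] * 20 + bump + [0.0] * 25
force = [0.0] * 25 + bump + [0.0] * 20
lag = best_lag(rate, force, max_lag=10)  # positive: firing leads force
```

Under this convention, a distribution of per-neuron lags centered above zero would correspond to neurons whose firing precedes force in their preferred direction.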

If the release of DA by VTA DA neurons plays a causal role in movement, it must do so through DA receptor activation in downstream neurons. To model the relationship between the firing rate of DA neurons and extracellular DA concentration, we generated a biophysical model using standard DA release and reuptake parameter values19,20. This model predicts DA concentration from the firing rates of Forward and Backward DA neurons. We assumed that these populations target different striatal regions that are involved in generating forces in opposite directions (Fig. 1n). Under the further assumption that DA concentration is proportional to exerted force, the direction of force exerted is determined by the difference in DA concentrations between the target regions. Without any parameter fitting or filtering, the model predicted the direction of exerted force and its time course (Fig. 1o and Supplementary Fig. 5).
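A release-and-reuptake model of this kind is commonly written as a firing-rate-driven release term minus Michaelis-Menten uptake. The sketch below shows that general form only. The equation, the Euler integration, the names `simulate_da` and `net_da_difference`, and every parameter value are illustrative assumptions, not the parameters or code used in the study.

```python
def simulate_da(firing_rate_hz, dt=0.001, release_per_spike=0.1, vmax=4.0, km=0.2):
    """Euler-integrate d[DA]/dt = release_per_spike * FR(t) - vmax * [DA] / (km + [DA]).

    firing_rate_hz: firing rate sampled every `dt` seconds.
    release_per_spike (uM), vmax (uM/s) and km (uM) are placeholder values.
    Returns the DA concentration trace (uM), starting from zero.
    """
    conc = 0.0
    trace = []
    for fr in firing_rate_hz:
        uptake = vmax * conc / (km + conc)
        conc = max(0.0, conc + dt * (release_per_spike * fr - uptake))
        trace.append(conc)
    return trace

def net_da_difference(forward_fr, backward_fr):
    """Forward-minus-backward DA concentration; its sign stands in for force direction."""
    fwd = simulate_da(forward_fr)
    bwd = simulate_da(backward_fr)
    return [f - b for f, b in zip(fwd, bwd)]

# Hypothetical example: the Forward population bursts while the Backward one stays at baseline.
baseline = [5.0] * 500
burst = [5.0] * 200 + [20.0] * 300
net = net_da_difference(burst, baseline)
```

In this toy setting the difference stays at zero until the burst begins and then turns positive, which is the sense in which a concentration difference between target regions could set force direction.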

Force direction and DA neuron activity during Pavlovian conditioning

To reveal force direction-related DA activity during the Pavlovian task, we took advantage of the flexibility of the force sensors and moved the location of the reward spout behind the mouth while keeping reward predictability constant (Fig. 2a). Although this change in spout location was only ~2 mm, it required a change in the direction of force exertion to access the reward (Fig. 2a and Supplementary Movie 2). Anticipatory licking rates (a conventional measure of the CR on this task) were similar for both spout positions (Fig. 2b). However, the slight change in spout position resulted in different movements generated by the mice: when the spout was moved backward by only 2 mm, mice generated more backward force and less forward force (Fig. 2c). Consequently, depending on the spout location, the force component of the CRs could be generated in different directions for the same reward (Fig. 2d). This allowed us to test whether VTA DA neurons can be modulated by force direction even when reward prediction is constant.

a Force sensors allow slight forward/backward movement (Supplementary Movie 2). b Anticipatory licking rates during “Spout in front” and “Spout behind” conditions. c Opposite force direction during same sessions. Markers indicate Forward and Backward CRs. d Top: Licking rates between Front and Behind conditions (paired two-tailed t-test, p = 0.35, n = 26 sessions). Bottom: Change in force with different spout locations (paired two-tailed t-test, p < 0.0001, n = 26 sessions). e Left: Firing rates of Forward DA and Backward DA neurons aligned to CS during Spout in Front (n = 49 Forward DA and 37 Backward DA; n = 7 mice). Right: Same neurons during Spout Behind. f Forward and Backward DA neurons burst to the CS regardless of spout position (Two-way ANOVA, no effects of Cell type (p = 0.78) or Position (p = 0.14), significant interaction: F(1,84) = 10.5, p = 0.0017). Post-hoc: Backward DA neurons fired slightly higher during Spout Behind (p = 0.0048). g Heatmaps show long latency forward (left) and backward (right) CR force. h Average force generated during forward and backward CRs (n = 15 sessions from 7 mice). i Left: Forward DA and Backward DA neuron firing from (e) aligned to Forward CRs (n = 49 Forward DA and 37 Backward DA; n = 7 mice). Right: Same neurons aligned to Backward CRs. j Opponent signaling during CR generation by Forward and Backward DA neurons (analysis of 250 ms window prior to CRs, Two-way ANOVA, effect of Direction: F(1,85) = 12.92, p = 0.0005, significant interaction between Cell group and Direction: F(1,85) = 66.9, p < 0.0001, Post-hoc: Forward DA fired more during Forward CR (p < 0.0001), Backward DA fired more during Backward CR (p = 0.0014)). k Firing rates of neurons from (e and i) aligned to spontaneous forward movements (Left) and spontaneous backward movements (Right). l Analysis of firing 250 ms before spontaneous movement (two-way ANOVA, Interaction between Direction and Cell type: F(1,84) = 103, p < 0.0001). Post-hoc: Forward DA fired more during spontaneous forward movements (p < 0.0001), Backward DA fired more during spontaneous backward movements (p < 0.0001). Error bars represent SEM. Source Data provided.

In traditional analyses of DA activity during Pavlovian conditioning, neural activity is aligned to stimulus events. However, it is well known that salient sensory events can elicit phasic DA activity at very short latency, independent of learning21,22,23,24,25. Any direction-specific DA activity during the Pavlovian task may therefore be obscured by the large, direction-independent response to the CS. Indeed, aligning Forward and Backward DA populations to the CS shows that CS activations are similar regardless of spout direction (Fig. 2e, f).

Because force CR latency varies from trial to trial, the direction selectivity of DA neurons could be revealed when we aligned their activity to CRs with longer latencies (Fig. 2g, h). Movement-related responses were therefore separated in time from the short-latency salience-related responses to the CS. Forward DA neurons showed higher activity for forward CRs than backward CRs, whereas Backward DA neurons showed higher activity for backward CRs than forward CRs (Fig. 2j). Importantly, force CR direction preference matched the direction preferences exhibited by these same cells during spontaneous forward and backward movements (Fig. 2k, l).
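The effect of the alignment event can be illustrated with a peri-event time histogram. The sketch below is a toy example under stated assumptions, not the authors' pipeline: the function `peth`, the bin size, and the synthetic spike times (one spike fired 125 ms before each CR, with CR latency varying across trials) are all hypothetical.

```python
def peth(spike_times, event_times, start, stop, bin_size):
    """Mean firing rate (Hz) per bin, from `start` to `stop` seconds around each event."""
    n_bins = int(round((stop - start) / bin_size))
    counts = [0] * n_bins
    for ev in event_times:
        for t in spike_times:
            idx = int((t - ev - start) // bin_size)
            if 0 <= idx < n_bins:
                counts[idx] += 1
    return [c / (len(event_times) * bin_size) for c in counts]

# Synthetic session: three trials whose CR latency differs from trial to trial.
cs_times = [0.0, 10.0, 20.0]
cr_latencies = [0.4, 0.7, 1.0]
cr_times = [cs + lat for cs, lat in zip(cs_times, cr_latencies)]
spikes = [cr - 0.125 for cr in cr_times]  # movement-locked spike before each CR

cs_aligned = peth(spikes, cs_times, start=-0.5, stop=1.0, bin_size=0.1)
cr_aligned = peth(spikes, cr_times, start=-0.5, stop=1.0, bin_size=0.1)
```

In this toy case the movement-locked spikes pile up in a single bin when aligned to CR onset but are smeared across bins when aligned to the CS, which is why variable CR latency makes CR alignment the more sensitive view of movement-related firing.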

We also identified two additional classes of putative DA neurons with no direction preference, which increased or decreased firing during both forward and backward spontaneous movements (Supplementary Fig. 6). A large population of unclassified neurons had relatively wide waveforms and low firing rates, unlike GABAergic VTA neurons, which have narrow waveforms with high baseline firing rates (Supplementary Fig. 3)26. These unclassified neurons were better correlated with force than with the change in force. They also showed reduced activity to the CS on trials lacking CRs (Supplementary Fig. 7), further indicating a role in generating force during both spontaneous and task-related movements.

DA activity and force generation during aversive stimuli

According to the RPE hypothesis, an unexpected aversive stimulus should produce a negative prediction error, reflected as a decrease or pause in VTA DA activity27,28. If DA activity reflects force exertion, the same relationship between DA and force should be observed regardless of whether the outcome is rewarding or aversive. To test this possibility, we delivered aversive air puffs in separate sessions (Fig. 3a)18. Unexpected air puffs resulted in a backward movement, away from the source of the air puff, followed by rebound forward movement (Fig. 3b). This pattern was observed across all mice (Fig. 3c). Latency measures confirmed the backward component had a shorter latency than the rebound forward component (Fig. 3d).

a We exposed mice to a mild air puff to the face in a separate session to test the effect of stimulus valence on phasic DA activity. b Mice respond to air puffs with a backward change in force followed by a forward change in force (rebound). c Average change in force trace across mice (n = 24 sessions). d Backward force was generated before the rebound during the air puff trials (paired two-tailed t-test, p < 0.0001, n = 24 sessions from 4 mice). e Left, Rasters for a representative Backward DA neuron and a representative Forward DA neuron showing activation at different latencies. Right, average firing rate traces for the neurons whose rasters are shown to the left. Note latency differences. f Latency for Backward DA neuron firing was lower than Forward DA (unpaired two-tailed t-test, p = 0.018, n = 41 Forward DA, n = 35 Backward DA). g Forward DA and Backward DA peak firing rates were not different (unpaired two-tailed t-test, p = 0.33, n = 41 Forward DA, n = 35 Backward DA). h Forward and Backward DA neuron firing activity was tuned for each population’s preferred force direction during air puff trials (Left, linear regression; Non-preferred, Forward DA, R2 = 0.32, Backward DA, R2 = 0.47; Right, linear regression; Preferred, Forward DA, R2 = 0.94, Backward DA, R2 = 0.92). Error bars represent SEM. Source Data provided.

The direction-selective DA neurons reflected the temporal patterns of force changes during an aversive air puff (Fig. 3e). The Backward DA population was activated first during the initial backward movement, followed by the Forward DA population that was activated prior to the onset of forward movement (Fig. 3f). Forward DA neurons and Backward DA neurons did not differ in their firing rates (Fig. 3g). Consistent with results shown in Figs. 1 and 2, Forward and Backward DA neurons maintained tuning for changes in force in their preferred direction (Fig. 3h). Our results agree with past research showing activation of DA neurons in response to aversive stimuli as well as rewarding stimuli3,6,18,22, but we now show that previous observations could be explained by distinct types of DA neurons signaling force in different directions.

Changes in reward size and probability change force exertion and DA activity

Phasic DA signaling is known to change systematically with reward size manipulations29,30. Previous studies did not quantify the behavioral changes associated with such manipulations other than licking. We found that the increase in reward size also altered movements generated by mice (Fig. 4a). Increasing reward size reduced the latency of force CRs (Fig. 4b), increased the duration of URs (Fig. 4c), and increased the mean force exerted for both the CR and the UR (Fig. 4d). DA neurons increased their firing rates to the CS and to the US when mice were receiving larger rewards (Fig. 4e–g).

a Representative changes in force on trials with different reward size. b CR latency is shorter on trials with larger reward (paired two-tailed t-test, p = 0.018, n = 7 mice). c UR duration is longer on trials with larger reward (paired two-tailed t-test, p = 0.014, n = 7 mice). d Mice exert greater force during both the CR and UR when larger rewards are delivered (Two-way ANOVA, significant main effect of Reward Size, F(1,6) = 14.63, p = 0.0087). e Example raster plots showing the effect of reward size on bursting activity of a DA neuron. f Example DA neuron showing effects of reward size on firing activity. g Mean firing rates were greater following larger rewards after both the CS and the US (Two-way ANOVA, significant main effects of Reward size F(1,57) = 6.96, p = 0.0107 and Response F(1,57) = 12.75, p = 0.0007, n = 59 neurons). h Representative change in force on rewarded trials under different levels of reward probability. i Force UR duration is extended on 50% rewarded trials (paired two-tailed t-test, p = 0.004, n = 6 mice). j Top: Greater CR force when rewards are more probable (paired two-tailed t-test, p = 0.047, n = 6 mice). Bottom: Greater changes in UR force on rewarded trials when reward is less likely (paired two-tailed t-test, p = 0.03, n = 6 mice). k Firing activity of a representative DA neuron recorded during trials with 100% and 50% reward probability. l Extra firing after lower probability rewards promotes greater force exertion. m During 50% probability trials, DA neurons show lower mean firing after the CS and greater firing after the US. When reward is more reliable, neurons show the opposite pattern (Two-way ANOVA, significant interaction between trial phase and reward probability, F(1,82) = 60.85, p < 0.0001. Post-hoc tests revealed that firing rate was higher after CS in 100% condition than 50% condition (p = 0.035), but was higher after US in 50% condition than 100% condition, p = 0.0118, n = 84 neurons). Error bars represent SEM. Source Data provided.

Prior work also found that phasic DA activity was modulated by reward probability30. Higher reward probability increases the DA signal after the CS, a finding usually interpreted as support for the RPE hypothesis. We manipulated reward probability and compared its effect on force exerted and DA activation (Fig. 4h). UR duration increased when mice received rewards only 50% of the time (Fig. 4i). Mice produced lower CR force when reward probability was 50% but generated larger UR force upon reward delivery (Fig. 4j). These patterns were accompanied by similar changes in firing rate of DA neurons (Fig. 4k, l). Firing rates after the CS were lower when the reward probability was 50%, but once mice received the reward, DA neurons showed higher activity. The opposite pattern was observed when the reward was delivered at 100% probability (Fig. 4m).

Reward omission reveals dips in DA firing and force

Reduced firing after reward omission is thought to signal a negative RPE (actual reward less than predicted)31. In well-trained mice (n = 5), we also omitted the reward after CS presentation (Fig. 5a). DA neuron populations showed reduced activity after reward omission (Fig. 5b, c). Interestingly, after reward omission, mice abruptly terminated force exertion and did not generate the additional force that is normally observed after reward delivery (Fig. 5d). The change in force was therefore negative following reward omission (Fig. 5e). The characteristic “dip” in DA neuron activity after reward omission (Fig. 5f) coincided with a clear reduction in force exertion (Fig. 5g and Supplementary Movie 3). Together, these results show that activity after the reward is consistent with DA mediating the transition to consummatory behavior and determining its persistence. In the absence of reward, DA signaling is low, and the UR is terminated early. The dip in DA contributes to pausing the ongoing behavior.

a Reward omission experiment whereby expected reward was omitted on 50% of trials. b Simultaneously recorded population of DA neurons during trials with expected reward delivery (top) and omission (bottom). Note characteristic “dip” in DA neurons during reward omission. c Mean change in firing rate for the population in (b) during rewarded and unrewarded trials shows a robust dip in activity following omission. d Mice abruptly withhold forward force exertions upon reward omission (Supplementary Movie 3). e Reward omission results in parallel “dips” in change in force and DA activity. f Reduction in firing rate after reward omission (paired two-tailed t-test, p < 0.0001, n = 28 neurons from 3 mice). g Omission resulted in a reduction in mean force in the period following reward omission (paired two-tailed t-test, p = 0.0033, n = 5 mice). h Raster plots of example neurons from mice depicting well-known phasic DA activity to surprise reward, reward predicted by tone, and omitted reward. Right: changes in force occurring in parallel with DA activity in the same session. Mice push forward to surprise rewards, to cues predicting reward, and after receiving expected reward. Mice terminate force generation upon reward omission, producing a reduction in forward force. i Bidirectional modulation of DA firing across conditions in (h) (One-way ANOVA, F(4,131) = 7.971, p < 0.0001; Post-hoc tests showed increased firing after Surprise reward, p < 0.0001, after CS in well-trained mice, p < 0.0001, in response to expected reward, p = 0.0001, and decreases after omission of expected reward, p < 0.0001; n = 84 neurons). j Mean changes in force for the same sessions (One-way ANOVA, F(4,24) = 23.16, p < 0.0001; Compared to baseline (No CS), force increased after Surprise reward, p = 0.0027, after CS in well-trained mice, p = 0.0013, in response to expected reward, p = 0.039, and significant reductions after omission of expected reward, p = 0.02; n = 10 mice). Note that patterns of force changes closely parallel changes in DA activity shown in (i). Error bars represent SEM. Source Data provided.

CR force increases during Pavlovian conditioning

DA neurons are known to shift their activity from the US to the CS during stimulus-reward learning31,32. Such changes have been explained by the RPE hypothesis, in particular by the temporal difference algorithm (Fig. 5h–j)3,31,33. We found that the development of CS-evoked DA responses during learning was accompanied by an increase in force as mice gradually produced more anticipatory behavior (Fig. 6a, b). There was a corresponding increase in DA activity (Fig. 6c). Phasic DA activity strongly predicts CR force changes (Fig. 6d). In addition to increasing CR magnitude, force CR onset time also becomes less variable with training (Fig. 6e). With training, there was a significant decrease in both force CR latency and the variance in latency (Fig. 6f, g and Supplementary Fig. 8).
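For reference, the temporal difference account of the US-to-CS shift can be reproduced with a textbook tabular TD(0) model, in which the prediction error is δ = r + γV(next) − V(current). The sketch below shows what the RPE hypothesis predicts, not an analysis from this study. The function `run_td`, the chain of ten post-CS time steps, the learning rate, and the discount factor are all assumptions, as is pinning the pre-CS value at zero on the grounds that CS timing is unpredictable.

```python
def run_td(n_trials, n_steps=10, alpha=0.1, gamma=0.98, reward=1.0, values=None):
    """Tabular TD(0) over a chain of post-CS time steps; the outcome arrives at the last step.

    Returns (values, history), where history holds (delta_at_CS, delta_at_US) per trial.
    """
    v = list(values) if values is not None else [0.0] * n_steps
    history = []
    for _ in range(n_trials):
        # Transition from the inter-trial interval (value pinned at 0) into the CS state.
        delta_cs = gamma * v[0] - 0.0
        # Unrewarded steps between CS and US: bootstrap from the next state's value.
        for i in range(n_steps - 1):
            v[i] += alpha * (gamma * v[i + 1] - v[i])
        # Outcome step: compare delivered reward with the value predicted just before it.
        delta_us = reward - v[-1]
        v[-1] += alpha * delta_us
        history.append((delta_cs, delta_us))
    return v, history

values, hist = run_td(1000)
first_cs, first_us = hist[0]    # naive animal
last_cs, last_us = hist[-1]     # after training
_, omit = run_td(1, reward=0.0, values=values)
omission_delta = omit[0][1]     # trained animal, reward withheld
```

In this model the error starts entirely at the US, migrates to the CS with training, and turns negative when an expected reward is omitted. These are the three signatures that the force measurements reported here are proposed to explain without invoking a learning signal.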

a Left: heatmap showing average population firing activity in response to the CS as a function of training session. Right: Change in force recorded during the same sessions shown at left. Force amplitude increased and latency decreased with training. b Change in force increased with training (one-way repeated-measures ANOVA, F(2.85,14.24) = 9.4, p = 0.0012). c Peak firing rate of DA neuron populations increased during training (one-way repeated-measures ANOVA, F(2.15,8.1) = 9.25, p = 0.0076). d Peak firing rate was correlated with the peak change in force recorded across all training sessions (linear regression; R2 = 0.95). e Training involves reductions in force CR latency and onset variance. Heatmaps of force for individual sessions during training (Left) and after extensive training (Right). Black tick marks indicate time of movement initiation. f Latency to force CR is reduced with training. One-way repeated-measures ANOVA (F(2.252,11.26) = 4.35, p = 0.036, n = 6 mice). g The variance of force CR onset time is reduced with training. One-way repeated-measures ANOVA (F(3.05, 15.25) = 7.33, p = 0.0028, n = 6 mice). Error bars represent SEM. Source Data provided.

DA activity determines force onset during learning

In principle, the changes in CR timing during learning can be explained by the RPE hypothesis, which equates the development of reward prediction with the probability of CR generation31,34. We found that a trial-by-trial analysis of the relationship between DA activity and behavior could disambiguate the role of DA in performance versus learning.

During intermediate training stages, mice frequently generated force CRs, though CR latency remained variable (Supplementary Fig. 8). During such sessions, the activity of many DA neurons after the CS varied with the onset time of the CR, i.e., they strongly predict when the force CR will be generated. When the force CR took longer to generate (Fig. 7a), these latency-predicting neurons showed lower firing rates than during trials when the CR was more rapidly produced (Fig. 7b). During the session, their activity did not vary with trial number, a pattern that we might expect if DA activity reflects increasing reward prediction during training (Fig. 7c, d). Overall, CR-predicting DA activity was better explained by movement onset latency (Fig. 7e and Supplementary Fig. 8) than time in session (Fig. 7f). Their activity appears to contribute to the production of the CR rather than signaling RPE (Fig. 7g and Supplementary Fig. 8). A separate population of DA neurons recorded during these same sessions was activated by the CS regardless of movement onset latency, showing no relationship between force CR latency and their firing rate (non-CR-predicting DA, Supplementary Fig. 8). The activity of this DA population was not explained by the amount of training either, as it did not vary with trial number during the session (Supplementary Fig. 8). This pattern suggests that this population may be related to CS salience rather than learning.
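The logic of this trial-by-trial comparison can be sketched by regressing firing rate on each candidate predictor and comparing the variance explained. The example below uses fabricated toy numbers, not recorded data, in which firing depends only on CR latency. The function `r_squared` and all values are hypothetical and serve only to show how the two predictors are dissociated.

```python
def r_squared(x, y):
    """Coefficient of determination for a simple linear regression of y on x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy * sxy / (sxx * syy)

# Toy session (hypothetical): CR latency fluctuates without a trend across trials,
# and firing is constructed to fall linearly with latency.
trial_number = [1, 2, 3, 4, 5, 6, 7, 8]
cr_latency_s = [0.2, 0.8, 0.5, 0.3, 0.9, 0.4, 0.7, 0.6]
firing_hz = [20.0 - 10.0 * lat for lat in cr_latency_s]

r2_latency = r_squared(cr_latency_s, firing_hz)
r2_trial = r_squared(trial_number, firing_hz)
```

A neuron whose firing is explained by latency but not by trial number, as in this constructed case, fits a performance account. A learning account would instead predict systematic change with trial number.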

a Left: Heatmap of force generated during the CR during learning, sorted by CR latency. Right: Average force during trials with short vs. long latency CRs. b Left: Raster plot of an example DA neuron from the same session in (a) same sorting. Middle: mean firing for short vs. long latency. Right: CR latency predicted firing rate (R2 = 0.7). c Left: Same force as in (a) now sorted by trial number. Right: Average force during the first and second halves of the session. d Left: Same DA neuron from (b), sorted by trial number. Middle: average firing rate during the first and second session halves. Right: Trial number did not predict firing (R2 = 0.03). e Left: Average firing rate of 94 CR-predicting DA neurons during short and long latency CRs. Right: Activity was lower for long CR latencies (paired two-tailed t-test, p < 0.0001). f Left: Mean activity of same 94 CR-predicting DA neuron firing, comparing the first and second session halves. Right: No differences between first and second session halves (paired two-tailed t-test, p = 0.36, n = 94 DA neurons). g Activity of CR-predicting neurons was predicted by CR latency (linear regression; R2 = 0.98, p < 0.0001) but not trial number (linear regression; R2 = 0.09, p = 0.29; n = 94 DA neurons). h Left: Force heatmap from a well-trained mouse; CR latency is uniform. Right: Mean force during short and long CR latencies in a well-trained mouse. i Left: Raster of an example DA neuron from session in (h) sorted by latency. Middle: Mean firing for short and long latency CRs. Right: CR latency did not predict firing rate. j Left: Mean firing rates for 218 non-predictive DA neurons during short and long CR trials. Right: No effect of CR latency on DA activity (paired two-tailed t-test, p = 0.93, n = 218 DA neurons). k No relationship between firing and CR latency or trial number in well-trained mice (linear regression, CR latency: R2 = 0.16, p = 0.69; Trial number: R2 = 0.11, p = 0.74; n = 218 DA neurons). Error bars represent SEM. Source Data provided.

With further conditioning, as force CR latencies became more uniform (Fig. 7h and Supplementary Fig. 8), DA activity in response to the CS also became less variable (Fig. 7h–j). After extensive training, the firing of most DA neurons was related to neither CR latency nor time in session (Fig. 7k), although the activity of some DA neurons still predicted CR onset latency (Supplementary Fig. 8).

Reduced DA responses to the CS in the absence of CR, independent of learning

Occasionally, even in well-trained mice, no force CR was generated. DA neurons showed dramatically reduced responses to the CS if no CR was generated, regardless of the amount of training (Fig. 8a–e). Moreover, CSs were sometimes presented while mice were already engaged in spontaneous movement bouts (Fig. 8f), which resulted in smaller changes in CR force (Fig. 8g). This was accompanied by reduced DA signaling after CS presentation (Fig. 8h) and was independent of the amount of training (Fig. 8i, j). Together, these results strengthen the connection between DA activity and CR initiation rather than reward prediction. While the CS always predicted reward in trained mice, reduced DA activity corresponded to a lack of CRs or CRs generated with reduced force.

a Top: Raster plot showing a DA neuron during trials with force CRs and corresponding force heatmap. Bottom: same neuron during trials without CRs and corresponding force. b Mean force was greater on trials with a CR (paired two-tailed t-test, p < 0.0001, n = 42 sessions). c DA firing rates were lower during trials without a CR (paired two-tailed t-test, p < 0.0001, n = 408 neurons). d Force is lower in the absence of a CR regardless of training (Two-way ANOVA; Training: F(2,97) = 14.4, p < 0.0001, Trial Type: F(1,97) = 96.36, p < 0.0001, Interaction: F(2,97) = 14.42, p < 0.0001. Post-hoc: reduced force without CR during intermediate and late stages, p < 0.0001). e DA firing is lower in the absence of a force CR regardless of training (Two-way ANOVA; Training: F(2,1057) = 29.4, p < 0.0001, Trial Type: F(1, 1057) = 81.76, p < 0.0001, Interaction: F(2, 1057) = 4.73, p = 0.009; Post-hoc: less firing during No CR trials in intermediate and late stages, p < 0.0001). f Left: Random CS presentation sometimes interrupted spontaneous movements. Right: Reduced DA responding to CS interrupting ongoing movement. g Mice generated less force if the CS interrupted ongoing movements (paired two-tailed t-test, p < 0.0001, n = 62 sessions). h Reduced DA responding to the CS if the CS interrupted ongoing movements (paired two-tailed t-test, p < 0.0001, n = 692 neurons). i Force was lower in interrupted trials across training (Two-way ANOVA; Training: F(2,114) = 28.6, p < 0.0001, Trial Type: F(1,114) = 46.58, p < 0.0001, interaction: F(2,114) = 4.18, p = 0.0176, Post-hoc: less force on Interrupted trials during intermediate (p = 0.0027) and late (p < 0.0001) stages of training). j DA firing was reduced on Interrupted trials across training (Two-way ANOVA; Training: F(2,858) = 5.724, p = 0.0034, Trial Type: F(1,858) = 116.6, p < 0.0001, interaction: F(2,858) = 3.5, p = 0.0313. Post-hoc: reductions in firing rate during interrupted trials in early (p = 0.0047), intermediate (p < 0.0001), and late (p < 0.0001) stages). Error bars represent SEM. Source Data provided.

DA signals the transition to consummatory behavior upon reward delivery

DA activation upon US delivery is often described as mediating a reward signal, but prior work has not closely inspected the behavioral changes after the US. Early in training, the URs (force exertion and licking) were variable in their onset relative to the US (Supplementary Fig. 9). On trials where the UR was delayed, aligning data to the US showed reduced force and licking UR measures and reduced DA firing activity (Supplementary Fig. 9). However, by aligning to the delayed licking UR onset rather than the US timestamp, we showed that force generation, licking, and DA firing activity were restored in magnitude (Supplementary Fig. 9). DA activity therefore signals the initiation of consummatory behavior rather than US delivery.
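The realignment described above can be sketched as follows. This is a minimal illustration, not the analysis code used in the study: the function names, the use of the first lick after the US as the UR onset, and the 250 ms counting window are all assumptions made for the example. Timestamps are in seconds and must be sorted.

```python
from bisect import bisect_left

def first_lick_after(us_time, lick_times, max_delay=5.0):
    """Return the first lick within max_delay s after the US, or None.

    Assumption: UR onset is approximated by the first lick after the US.
    """
    i = bisect_left(lick_times, us_time)
    if i < len(lick_times) and lick_times[i] - us_time <= max_delay:
        return lick_times[i]
    return None

def spike_count(spike_times, start, stop):
    """Count spikes in the half-open window [start, stop)."""
    return bisect_left(spike_times, stop) - bisect_left(spike_times, start)

def aligned_rates(spike_times, us_times, lick_times, window=0.25):
    """Per trial: (US time, UR delay, rate after US, rate after UR onset).

    Rates are in Hz, computed over `window` seconds after each alignment
    event. Trials without a detected lick are skipped.
    """
    rows = []
    for us in us_times:
        onset = first_lick_after(us, lick_times)
        if onset is None:
            continue
        us_rate = spike_count(spike_times, us, us + window) / window
        ur_rate = spike_count(spike_times, onset, onset + window) / window
        rows.append((us, onset - us, us_rate, ur_rate))
    return rows
```

On a trial with a delayed UR, the US-aligned window can miss a burst that the UR-aligned window captures, which is the kind of discrepancy the two alignments are meant to expose.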

With training, the UR (force after US delivery) becomes a continuation of the CR and cannot be clearly separated (Supplementary Fig. 9). The RPE hypothesis predicts a reduction in phasic DA in response to the US in trained mice, as the reward becomes well-predicted. While the UR force changes decreased with training, DA activity after the US did not decrease (Supplementary Fig. 9). Mice reliably produce subtle changes in licking and force generation after the US, even after extensive training. Once the CR was generated, upon detection of the sucrose reward, mice increased licking (Fig. 9a–c). DA activity just before licking strongly predicts subsequent licking UR (Fig. 9d, e). Mice also changed their force exertion pattern when the reward was delivered (Fig. 9f). Upon reward delivery, the force briefly reversed direction, resulting in a transient backward force as the mouse stopped the anticipatory approach behavior and transitioned to forward force exertion again while increasing licking behavior (Fig. 9g, h). Overall, DA neuron activation was coincident with the redirection of force but preceded consummatory behavior as measured by forward force generation and the increase in licking (Fig. 9i). DA activity after reward delivery predicts licking rate rather than either force or the integral of force (Fig. 9j and Supplementary Fig. 9). Both Forward and Backward DA neurons showed phasic increases in activity upon reward delivery (Supplementary Fig. 10), suggesting the direction-specific force tuning was only a feature of anticipatory CR reflecting approach behavior, but the same DA neurons contribute to the generation of the lick bout. In other words, upon reward feedback, these DA neurons can now adjust gain for consummatory behaviors rather than preparatory behaviors.

a Left: Heatmap of change in lick rate for an example well-trained session. Right: Average change in lick rate for the example session. b Average change in licking rate after the US (n = 6 mice). c Reward transiently increases licking rate (One-way repeated-measures ANOVA, F(1.5,7.7) = 11.44, p = 0.0065. Post-hoc: increased licking rates in first 250 ms vs. pre-reward, p = 0.0429; stable level resumes afterward, p = 0.028; n = 6 mice). d Overlaid time series of representative DA population (n = 12 neurons) and change in licking rate for a single session. DA neurons precede the increase in licking rate after reward. e Licking rate in the 1 s window after reward was predicted by firing rate in the first 250 ms after the US (linear regression, R² = 0.81; n = 5 mice). f Left: Example heatmap showing the changes in force occurring after US delivery. Right: Example of rapid force direction reversal after US delivery. g Average change in force aligned to US delivery in trained mice (n = 6 mice). h Change in force and average firing rate for a DA population (n = 12 neurons) recorded in a well-trained mouse. DA neuron activity increases prior to forward force generation. i Timing of DA activation relative to force and licking UR behaviors (One-way repeated-measures ANOVA, F(1.57,7.85) = 44.50, p < 0.0001; Post-hoc: DA neuron population activity differed in latency to peak change in licking (p = 0.015) and peak increases in force (p = 0.0012). Licking rate preceded changes in forward force (p = 0.0011). DA neurons and the brief backward force generated right after US delivery did not have different latency, p = 0.177, n = 6 mice). j Comparison of the regression coefficients between DA neurons after the reward and force and licking UR behavior (One-way repeated-measures ANOVA, F(2,12) = 19.7, p = 0.0002; Post-hoc: R² values between DA and Licking Rate were higher than those for Impulse (p = 0.0003) and change in force (p = 0.0006), n = 5 mice). Error bars represent SEM. Source Data provided.

Bidirectional optogenetic manipulation altered force exertion without affecting learning

According to the RPE hypothesis, DA serves as a teaching signal for learning35. We tested whether stimulating DA neurons in place of a sucrose reward after CS presentation would lead to learning of the CS-stimulation association, as reported previously12. We trained both DAT-Cre mice with ChR2 expression in the VTA (n = 3) and WT mice (n = 3) by delivering a tone (CS) followed by stimulation instead of sucrose reward. There was no licking CR in the absence of reward (Fig. 10a, b). After 400 trials, we introduced a sucrose reward in addition to stimulation. When the reward was delivered, stimulation did not affect the learning rate compared to controls (Fig. 10b, c). In agreement with previous work6,18, in well-trained mice, optogenetic stimulation 4 s after reward delivery also produced forward force (Fig. 10d–f).

a Top: Cre-dependent ChR2 or DIO-eYFP was injected into the VTA of DAT-Cre mice (n = 3) to excite VTA DA neurons. Bottom: To test sufficiency of VTA DA for learning, brief high-frequency stimulation (30 Hz–15 pulses) was used in place of reward for 400 trials, followed by stimulation and sucrose reward. b Stimulation did not support CS-US learning (Two-way ANOVA, significant interaction, F(38,152) = 1.752, p < 0.01, post-hoc: no significant time point differences, p > 0.05, n = 3 mice per group). c No effects on latency to initiate CRs (Two-way ANOVA, Trial Number, F(38, 152) = 5.80, p < 0.0001, no effect of Stimulation F(1,4) = 0.1, no interaction, F(38,152) = 0.9, p > 0.05, n = 3 mice per group). d Well-trained mice received DA neuron stimulation 4 s after reward, when spontaneous movements were least likely. e Example effect of DA activation on forward force generation. f Stimulation 4 s after reward produced forward force (paired two-tailed t-test, p = 0.0129, n = 6 mice). g Top: To inhibit VTA DA neurons, stGtACR2 (n = 4) was expressed in DAT-Cre mice or control WT mice (n = 6). Bottom: VTA DA neurons were inhibited during the CS-US interval. h Example backward movement resulting from inhibition of DA neurons. i Inhibition did not prevent learning but increased anticipatory licking (Two-way ANOVA; Stimulation: F(1, 160) = 25.34, p < 0.0001, Trial Number: F(19, 160) = 6.775, p < 0.0001, no interaction, F(19,160) = 1.3, p > 0.05, n = 4 stGtACR, 6 Control). j Optogenetic inhibition reduced latency to backward movement (Two-way ANOVA, Stimulation: F(1, 7) = 339.5, p < 0.0001, Trial number: F(19, 133) = 0.8, p > 0.05, no interaction F(19,133) = 0.86, n = 4 stGtACR, 5 Control). k To test for negative RPE, trained mice received 1 s of DA inhibition at reward. l Inhibition at reward did not significantly impair learning (n = 4 mice). There was no change in anticipatory licking following inhibition (paired two-sided t-test, p = 0.46, n = 30 binned rate estimates from 4 mice). Error bars represent SEM. Source Data provided.

To further test whether VTA DA signaling is necessary for learning, we used an inhibitory opsin (SIO-stGtACR236, N = 4 DAT-Cre mice, control: N = 6 WT mice) to inhibit VTA DA neurons during the CS-US interval (Fig. 10g). Inhibition produced backward movement but did not impair learning (Fig. 10h). In fact, mice licked more after inhibition of DA neurons compared to controls, contrary to what the RPE hypothesis predicts (Fig. 10i). Inhibition also reduced their latency to backward movement (Fig. 10j). According to the RPE hypothesis, if DA neurons are inhibited immediately after reward delivery, there should be a negative RPE and reduced anticipatory licking on future trials37. Contrary to this prediction, when we inhibited DA neurons at reward delivery to mimic a negative prediction error (Fig. 10k), anticipatory licking (CR) was not reduced on subsequent trials (Fig. 10l). Together, our optogenetic results show that phasic DA is neither necessary nor sufficient for stimulus-reward learning.

Discussion

We found systematic changes in force measures and the activity of VTA DA neurons during a stimulus-reward task. We identified two distinct groups of DA neurons with force tuning (Fig. 1). Force tuning is found during spontaneous movements in the ITI and during force CR production. It does not depend on learning. Altering reward size or probability, or omitting the reward, systematically alters phasic DA activity, as predicted by the RPE hypothesis, but the changes in DA activity can be explained by subtle changes in performance rather than learning (Figs. 4 and 5). DA activation after CS predicts variations in both the latency and the presence of the CR (Figs. 6–8) and UR (Fig. 9 and Supplementary Fig. 9). Finally, stimulating or suppressing DA neurons can directly affect the direction of force exertion without affecting learning (Fig. 10).

Studies have shown short-latency DA bursts in response to salient stimuli, independent of learning22,24. This has led to the proposal that there are two components of DA signaling, a short-latency salience signal and a delayed signal reflecting RPE21. We could also discern two components of phasic DA activity, though the early salience-related component can be merged with the slower component for approach behavior when CR latency is short. The first component can be evoked by a salient stimulus like the CS. Although this component can predict the latency of CR force onset, it does not show direction-specific force tuning. On the other hand, there is a second component of DA signaling with longer latency that is relatively independent of salience. This component can distinguish between forward and backward force exertion (Fig. 2i, j). Thus, aside from force tuning, most DA neurons can also signal stimulus salience. But both components of DA signaling can be explained by changes in performance rather than RPE, as they are present before learning.

Whereas the RPE model predicts gradual increases in phasic DA after CS as reward prediction grows with each reward delivery31, our single-trial analysis shows that DA activity does not increase uniformly across a session. Rather, the variations in phasic DA signaling following the CS are better explained by CR latency. Higher DA predicts earlier CR force, suggesting a faster rise to activation thresholds for CR generation (Fig. 7). Furthermore, DA activation was significantly reduced if force CRs were not generated prior to the US, regardless of training stage (Fig. 8). This suggests that increased DA signaling alters performance by initiating and speeding up responses, instead of signaling RPE.

DA response to reward and reward omission

While DA responses to the CS grow as the CR becomes more consistent, the DA response to the US does not decrease with training, contrary to the predictions of the RPE model (Supplementary Fig. 9). A recent study in mice found that DA activity aligned to the US increased with training, but did not show whether this is associated with reduced UR latency32. Our results suggest that, regardless of training, DA activity after the US promotes transition to consummatory behavior. By aligning to the reward delivery (US), we showed reduced DA activity if force and licking URs are not immediately generated after reward. Thus the putative “reward response” is actually used to generate the UR. This activity does not show force direction tuning, but reflects subtle performance changes, such as a brief pause associated with stopping forward approach (CR) as soon as the reward is detected, followed by a DA burst that predicts consummatory licking quantitatively (Fig. 9 and Supplementary Figs. 9 and 10). Thus DA is not just a reward signal; it is directly shaping ongoing action patterns. For rhythmic pattern generators with a relatively fixed frequency (e.g., licking at ~7 Hz in mice), this could mean controlling the duty cycle—the proportion of time spent licking, in agreement with our observations38,39.

A key observation that has been used to support the RPE hypothesis is the “dip” in DA firing when an expected reward does not arrive31. This dip is conventionally interpreted as a negative RPE signal. We also found a dip in DA activity following omission of predicted reward. However, this pattern could be explained by a change in performance, as mice abruptly stop force exertion after reward omission. There was a corresponding reduction in force that parallels the dip in DA activity at the expected time of reward delivery (Fig. 5).

Finally, we showed that VTA DA neurons can increase firing to rewarding and aversive stimuli, but such activity is determined by differences in the patterns of force exertion. DA activity appears to be independent of outcome valence but reflects the direction and magnitude of anticipatory approach behavior3,40. If VTA DA neurons signal RPE or reward value, they should be inhibited by the air puff. We found, however, that they were excited and modulated by the direction of movements evoked by the air puff (Fig. 3). Importantly, force tuning remains similar despite the change in outcome valence. These results are consistent with previous research demonstrating that mesolimbic DA is also responsive to aversive stimuli41.

Our results therefore contradict the RPE hypothesis of DA function. The basic assumption in traditional reinforcement learning models in general, and the RPE hypothesis in particular, is that learning is expressed directly and immediately in performance7. They therefore conflate learning with changes in performance. In addition, such models treat actions as simple, discrete choices, ignoring their spatial and temporal complexity. Recently, Lee et al. argued that a vector RPE model can explain results showing heterogeneous coding of task variables but uniform reward responses42. They suggest that DA heterogeneity reflects a high-dimensional state representation with a distributed RPE code. Our results suggest that DA signaling dynamically modulates behavior in real time, but its role is to facilitate transitions commanded by other brain areas, e.g., corticostriatal inputs. Consequently, it could play multiple roles to facilitate different behavioral demands depending on its timing.

Adaptive gain hypothesis

Our results provide support for an alternative hypothesis of DA function—the adaptive gain hypothesis43. This hypothesis posits that dopamine acts as a dynamic gain signal in the cortico-basal ganglia networks. It can adjust the transitions between states or actions in transition control systems44. In this framework, dopamine does not just signal reward or drive motivation in a static way; it shapes how behaviors unfold in real time, including their initiation, maintenance, and termination. The primary role of DA is to alter the activation function of downstream neurons, such as striatal projection neurons. Because it is a gain signal, in accord with its role as a neuromodulator, it does not unconditionally cause behavior all by itself, but works in concert with corticostriatal commands, and possibly other glutamatergic pathways, for action generation.
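As a purely illustrative toy, and not a model fitted or proposed in this study, the idea of DA as a multiplicative gain on an excitatory drive can be sketched with a linear ramp to threshold. The function name, the linear ramp, and the unit threshold are assumptions made for the example. The sketch shows that higher gain shortens the time to reach threshold, while gain alone produces no response when the drive is absent.

```python
def time_to_threshold(drive, gain, threshold=1.0):
    """Time for a linear ramp with slope gain * drive to reach threshold.

    Toy assumption: downstream activation rises linearly at a rate equal to
    the product of an excitatory drive and a DA-like gain. Returns None when
    the ramp never reaches threshold (no drive or no gain).
    """
    slope = gain * drive
    if slope <= 0:
        return None
    return threshold / slope

# Same drive, higher gain: threshold is reached sooner (shorter latency).
print(time_to_threshold(drive=1.0, gain=1.0))
print(time_to_threshold(drive=1.0, gain=2.0))

# Gain without drive does not produce a response by itself.
print(time_to_threshold(drive=0.0, gain=5.0))

# Low gain with strong drive still reaches threshold, only more slowly.
print(time_to_threshold(drive=4.0, gain=0.5))
```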

DA signaling could have two components with different timing21,24. In response to a salient stimulus, DA neurons can be activated by early-stage sensory pathways and show a short-latency burst. The early dopamine burst acts as a global alert signal, amplifying the gain on downstream circuits to promote a new behavioral transition. This “preparatory gain” boosts sensitivity or readiness, lowering the threshold for action initiation and predicting when a behavioral transition can occur (CR latency). Virtually all recorded DA neurons show this property. In principle, their uniform activation by salient stimuli allows them to act as a general preparatory gain signal that primes all potential action modules without specifying details of the action. This could be mediated by changing the excitability of many striatal neurons in response to inputs45. The short-latency response does not show force tuning, as the detailed action command with kinematic information has not been formulated. On the other hand, once a particular action has been commanded (e.g., move forward), the basal ganglia output can initiate actions, while simultaneously sending an efference copy signal that modulates DA signaling in real time46. This can be done via axon collaterals of GABAergic projection neurons from the VTA and SNr26,47. In turn, DA adjusts the gain in corticostriatal transmission. According to this hypothesis, force tuning is a consequence of the slightly delayed “execution gain” that uses an online estimate of the ongoing behavioral state to adjust the system gain, a common method used in control systems to update gain dynamically according to task demands. This model is also supported by previous work showing a role for DA in regulating effort10, movement vigor48, kinematics6, or the regulation of impulse in approach behavior18.

Although the present results provide an alternative explanation of results that are traditionally used to support the RPE hypothesis (Figs. 4–7), they do not rule out a role for DA in learning. There is a close relationship between learning and performance, though one cannot simply interpret any change in performance as learning49. Learning can be defined as long-term changes in system parameters, which are presumably implemented by long-term synaptic plasticity, rather than a transient change in system performance43. Many factors, such as motivational state, effector properties, and environmental changes could contribute to performance changes, without requiring long-term changes in system parameters. On the other hand, many forms of learning still require repetitions, similar to the more artificial repetitive stimulation patterns used in induction protocols for synaptic plasticity in in vitro studies. As adaptive gain, DA is necessary for behavioral repetition and persistence, and as such could contribute to long-term plasticity and learning. In this sense, DA plays a permissive role in learning. This account is in accord with short-term effects of DA modulation, such as altering neuronal excitability, and long-term effects of DA signaling in gating synaptic plasticity45,50,51. According to this account, changes in DA signaling during learning are due to changes in upstream neurons (e.g., from the prefrontal cortex) that directly project to DA neurons52.

Explaining previous results on DA signaling

Our results may seem to contradict a large body of work on the role of VTA DA in learning. In particular, recent studies using optogenetics have attempted to demonstrate a causal role for DA in learning12,53. It is therefore important to discuss some of the most relevant results, to see if our results shed light on their interpretation.

Steinberg et al. claimed to provide evidence for a causal role of VTA DA neurons in learning using a blocking task53. In blocking, learning of a stimulus-reward association is prevented (blocked) in the presence of an established reward predictor. Around six decades ago, this observation motivated the formulation of learning models using prediction errors, according to which existing associative strength of all available predictors reduces the RPE and hence learning about a new CS. Steinberg et al. argued that stimulation of DA neurons at the time of reward produced a positive RPE to restore learning to the blocked stimulus. However, in their optogenetic experiments, there were no controls for stimulation-induced changes in attention to the CS or US, stimulus generalization, or CR performance. Since the behavioral measure they used was just time spent in the reward port, it is unclear what the CR was or how it was affected by stimulation. Indeed, because blocking can be reversed by manipulations like spontaneous recovery, post-training reminders, and post-training extinction of the competing stimulus, some have argued that it is due to a performance deficit rather than a failure to learn54.

Saunders et al. found that optogenetically mimicking phasic DA signaling, instead of a natural reward, can artificially generate CRs. According to them, stimulation of DA neurons paired with neutral cues transforms these cues into CSs that elicit locomotion CRs. After training, the CR can start even before stimulation. These results do not necessarily support the RPE model. Saunders et al. did not use reward omission or manipulations of reward predictability to test RPE. All DA bursts were experimenter-controlled, ensuring consistent “reward” (laser stimulation) during paired trials. Although after extensive training CRs can start before stimulation onset, we cannot rule out residual effects of DA from previous trials contributing to CR production. DA can have postsynaptic effects (e.g., on excitability and GPCR-dependent intracellular signaling) that last for seconds, even when the extracellular DA has declined45. In their study, there is no evidence that the CS simply evokes a CR without any DA stimulation, since there are no CS-only probe trials. In fact, their observations are in accord with the predictions of the adaptive gain model, with the preparatory gain phase mapping onto DA bursts that create CSs and drive CRs. DA and excitatory corticostriatal drive together determine whether striatal projection neurons (e.g., those in the nucleus accumbens) reach the threshold for firing. DA can also increase in response to a salient stimulus (preparatory gain), which is sufficient for behavioral activation if the relevant top-down cortical command for approach or locomotion is present.

It should also be noted that in the Saunders et al. study TH-Cre rats were used to activate DA neurons, but TH may not be a selective marker for DA neurons. Previous work in mice has shown that TH is also expressed in non-dopaminergic neurons14. Consequently, whether the results could be attributed to the activation of DA neurons per se remains open to debate. In our optogenetic experiments, we did not observe the generation of force CRs when we stimulated DA neurons in DAT-Cre mice in place of sucrose reward. This inconsistency with the Saunders et al. study could be attributed to the different promoters (DAT vs TH) or to differences in the overall number of stimulation sessions. Only after learning of the CS−Reward association were we able to evoke movements with DA activation alone outside of the task. Our results suggest a performance-modulation role for DA once learning is established rather than one in which new CRs are generated.

Another relevant study by Iino et al. manipulated the DA dip that is normally observed following omission of predicted rewards55. DA dips detected by D2 DA receptors (D2Rs) occurred when rewards were omitted (CS− trials), disinhibiting adenosine A2A receptor (A2AR)-mediated signaling in D2-SPNs to suppress licking to the CS−. In the adaptive gain model, DA reductions can suppress ongoing behavior, enabling transitions to alternative states (e.g., pausing or withholding licking). Importantly, Iino et al. found that extinction learning did not involve DA dips or D2-SPNs. According to RPE, DA dips (negative RPE) should lead to extinction, but this prediction was not supported by their results, which suggest that DA dips are specific to discrimination tasks where a non-rewarded cue (CS−) is contrasted with a rewarded one (CS+).

Lee et al. found that inhibition of DA during reward consumption reduced the likelihood of subsequent CRs. They argued that the manipulation generated a negative RPE. According to the RPE model, negative RPE signals would lead to a progressive weakening of the association, and relearning with positive RPE signals would be required to restore the original level of performance. However, mice resumed full CR generation immediately after the end of inhibition, without relearning (Fig. 4a and Extended Data Fig. 2). This pattern does not support their claim that inhibiting DA neurons introduces a negative RPE that weakens the CS-US association, because such weakening would predict gradual relearning over subsequent CS-US pairings. Instead, it supports a performance account, in which DA modulates gain for CR generation. We also performed a similar experiment in which we used GtACR2 to inhibit DA neurons at the US. In previous work, we have shown that GtACR2 can successfully inhibit DA spiking18. We did not observe changes in subsequent licking CRs (Fig. 10k–m). As Lee et al. did not measure force exertion, it is unclear exactly how their mice changed their behavior during learning. Differences in experimental procedures (e.g., using a different opsin (NpHR)) may also account for the conflicting results.

Recent work by Cai et al. has shown that abolishing phasic DA does not abolish spontaneous locomotion in mice42. They therefore argue that phasic DA is not necessary for movement per se, but contributes to the generation of reward-guided behavior. But their results are incompatible with the RPE hypothesis, as abolishing phasic DA did not affect learning. The finding that mice can still move without significant phasic DA signaling is not surprising given the adaptive gain model. As a gain signal, DA is expected to amplify the corticostriatal drive but is not unconditionally necessary for movement. With low gain, it is still possible to generate some behaviors, given sufficient driving signal from excitatory transmission. One would simply expect behavior to be less frequent and slowed, and indeed this was precisely what Cai et al. observed. Consequently, their results also contradict the RPE model but can be explained by the adaptive gain model.

In short, although results from these studies as well as many others are often considered to support the RPE hypothesis, they can in fact be better explained by the adaptive gain model. Previous studies often neglected subtle changes in behavioral performance during learning, which can be masked by the common practice of averaging across many trials with variable movement properties. A common practice is to align DA activity to experimenter-determined timestamps for events such as the CS and US, instead of aligning to behavioral transitions. This is not surprising since previous work did not use continuous behavioral measures. As we have shown, aligning to CS and US can lead to misinterpretation, and detailed behavioral analysis based on continuous behavioral measures is therefore critical for understanding the relationship between DA signaling and behavior56,57.

Methods

Mice

All experimental procedures were approved by the Animal Care and Use Committee at Duke University (Protocol # 162-22-09). 11 DAT-ires-Cre mice, 6 DAT-Cre + Ai32 mice, 2 VGAT-Cre mice, and 8 wild-type male and female mice were used (Jackson Labs, Bar Harbor, ME). Sex was not considered in study design. DAT::Ai32 mice were generated by crossing Ai32 mice, which express channelrhodopsin (ChR2) in neurons with Cre recombinase, and DAT-Cre mice, which express Cre under the control of the dopamine transporter (DAT) promoter. Mice (2–8 months old) were group housed on a 12:12 light cycle, with experimentation occurring during the light phase. During testing, mice were put on water restriction and maintained at 85–90% of their initial body weights. They received free access to water for approximately two hours following daily experimental sessions.

Viral constructs

rAAV9.EF1α.DIO.hChR2(H134R) (Addgene plasmid # 35507) and pAAV-hSyn1-SIO-stGtACR2-FusionRed (Addgene # 105677-AAV1) were used in this study.

Surgery

Mice were anesthetized with 2.0–3.0% isoflurane and then placed into a stereotactic frame (David Kopf Instruments, Tujunga, CA) and maintained at 1.0–1.5% isoflurane for surgical procedures. A craniotomy was then drilled above the VTA (AP: 3.1–3.4 mm relative to bregma, ML: 0.4–0.6 mm relative to bregma, DV: 4.0–4.4 mm relative to brain surface). For electrophysiological recordings, drivable electrodes were placed just above the VTA (AP: 3.2–3.4 mm, ML 0.3–0.6 mm, DV, −3.8 mm) and 16-channel recording electrodes were lowered into the VTA (AP: 3.2–3.4 mm, ML 0.5 mm, DV, −4.0–4.4 mm). For optotagging experiments, an optic fiber was attached to the electrode array at an angle (~15°). For optogenetic experiments, 300 nL of DIO-ChR2 or SIO-stGtACR2 was bilaterally infused into the VTA of DAT-Cre or WT mice using a microinjector (Nanoject 3000, Drummond Scientific) at a rate of 1 nL per second. The injection pipette was left in place for 3–5 min to allow the virus to absorb into the brain tissue and prevent leakage. Custom-made optic fibers (5–6 mm length below ferrule, >70% transmittance, 105 μm core diameter) were then implanted at an angle (15°) above the VTA (AP: 3.2–3.4 mm, ML: 1.6 mm, DV: 3.8 mm). Fibers and electrodes were secured to the skull using screws and dental acrylic, and all mice were fitted with a titanium headbar implant for head fixation. All mice were allowed to recover for two weeks before beginning training on the Pavlovian task.

Histology

For brain collection, mice were anesthetized in an induction chamber with 3–5% isoflurane until immobile. Depth of anesthesia was confirmed by the absence of a toe-pinch response and by steady respiration. Under deep anesthesia, mice were euthanized by transcardial perfusion with 0.1 M phosphate-buffered saline (PBS) followed by 4% paraformaldehyde (PFA). Brains were collected to confirm viral expression as well as optic fiber and electrode placement.

To confirm placement, brains were stored in 4% PFA with 30% sucrose for 72 h. Tissue was then post-fixed for 24 h in 30% sucrose before cryostat sectioning coronally (Leica CM1850) at 60 µm. Fiber and electrode implantation sites were then verified. To confirm eYFP and FusionRed expression in DAT+ cells in the VTA of DAT-ires-Cre and DAT + Ai32 transgenic mice, sections were rinsed in 0.1 M PBS for 20 min before being placed in a PBS-based blocking solution. The solution contained 5% goat serum and 0.1% Triton X-100 and was allowed to sit at room temperature for 1 h. Sections were then incubated with a primary antibody (polyclonal rabbit anti-TH 1:500 dilution, ThermoFisher, catalog no. P21962; polyclonal chicken anti-EGFP, 1:500 dilution, Abcam, catalog no. ab13970) in blocking solution overnight at 4 °C. Sections were then rinsed in PBS for 20 min before being placed in a blocking solution with secondary antibody used to visualize DAT neurons in the VTA (goat anti-rabbit Alexa Fluor 594, 1:1000 dilution, Abcam, catalog no. ab150080; goat anti-chicken Alexa Fluor 488, 1:1000 dilution, Life Technologies, catalog no. A11039) for 1 h at room temperature. Sections were mounted and immediately coverslipped with Fluoromount G with DAPI medium (Electron Microscopy Sciences; catalog no. 17984-24). Placement was validated using an Axio Imager.V16 upright microscope (Zeiss) and fluorescent images were acquired and stitched using a Z780 inverted microscope (Zeiss).

Head-fixed behavioral system

The head-fixation device for measuring forces exerted by mice during behavioral testing and stimulation was described previously16,17. Briefly, the head was clamped via the headbar into the head-fixation frame, which contained force sensors (100 × g load-cells, RB-Phil-203, RobotShop.com). Load cells measure force by linearly translating mechanical deformations into a voltage signal. This voltage signal was then amplified using an INA125P (Texas Instruments) in a circuit configuration that allowed for bidirectional measurement of force. Load cell voltages (1 kHz sampling rate), electrophysiological data, and timestamps for licks, reward, and laser were recorded with a Cerebus data acquisition system (Blackrock Microsystems) for offline analysis. A spout connected to a reservoir with a 10% sucrose solution was positioned in front of the mouth or slightly below it (Behind condition). Reward delivery was controlled by opening a solenoid valve (161T010, NResearch, NJ) attached to the tubing connected to the spout. A capacitance-touch sensor (MPR121, AdaFruit.com) attached to the spout was used to detect licks.
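Because the load cell output is linear in the applied force, a recorded voltage trace can in principle be converted to signed force with a two-parameter calibration. The sketch below is only an illustration under stated assumptions: the calibration points, the function names, and the sign convention (positive = forward) are hypothetical and are not taken from the study.

```python
def fit_linear_calibration(volts, grams):
    """Least-squares fit of force = slope * voltage + offset.

    `volts` are amplifier readings and `grams` the known calibration loads
    (hypothetical values; negative loads stand for the backward direction).
    """
    n = len(volts)
    mean_v = sum(volts) / n
    mean_g = sum(grams) / n
    sxx = sum((v - mean_v) ** 2 for v in volts)
    sxy = sum((v - mean_v) * (g - mean_g) for v, g in zip(volts, grams))
    slope = sxy / sxx
    offset = mean_g - slope * mean_v
    return slope, offset

def volts_to_force(volts, slope, offset):
    """Convert a voltage trace to signed force using the fitted calibration."""
    return [slope * v + offset for v in volts]

# Hypothetical calibration: 2.5 V at rest, +/-1 V for +/-50 g.
slope, offset = fit_linear_calibration([1.5, 2.5, 3.5], [-50.0, 0.0, 50.0])
force_trace = volts_to_force([2.5, 3.0, 2.0], slope, offset)
```

With a bidirectional amplifier configuration, the resting voltage sits mid-range, so the fitted offset maps it to zero force and deviations in either direction map to forward or backward force.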

Pavlovian stimulus-reward task

Mice were first allowed to habituate to the head-fixed condition for 5 min for approximately 1–2 days. Once habituated to head-fixation, they were trained on approximately 70–100 trials a day. A spout that delivers 10% sucrose was positioned in front of the mouth. White noise was continually present in the background. At the beginning of each trial, a 3 kHz tone that lasted 200 ms was presented, followed by delivery of 5–30 μL of sucrose 800 ms after the end of the tone. The delay between onset of the tone and reward delivery was therefore 1 s. The intertrial interval varied randomly (4–60 s) to prevent anticipation of tone onset.

Air puffs were delivered using an EFD 1500 XL pneumatic fluid dispenser. The puff lasted 20 ms and the output tube was aimed at the face. Air puff trials were conducted as distinct sessions outside of the cue-reward sessions. There was a 5-min break between the end of a reward session and the beginning of an air puff session.

For Spout Behind sessions, the spout tip was moved slightly underneath (2 mm change in position, Supplementary Movie 2) the chin of the mice so that they had to move backwards to obtain water reward. For reward probability manipulations, sessions contained reward delivery of either 100% or 50% reward probability. For reward magnitude manipulations, sessions contained either small rewards (20 ms reward duration, 5 μL) or large rewards (60 ms reward duration, 15 μL).

Wireless in vivo electrophysiology

Drivable electrodes were single-drive movable micro-bundles of tungsten electrodes (1 × 16; 23 μm diameter) placed within a guide cannula (Innovative Neurophysiology, Inc.). Electrophysiological data were recorded using a wireless head stage (Triangle Biosystems) that was interfaced with a Blackrock Cerebus data acquisition system (Blackrock Microsystems). A digital bandpass filter was applied to the electrophysiological data (250 Hz–5 kHz) and spike timestamps and waveforms were recorded at 30 kHz. Filtered data were sorted using Offline Sorter (Plexon). A 3:1 signal-to-noise ratio and a refractory period of 800 μs or greater were required for the neural data to be used for analysis. Single units were selected based on a principal component analysis of waveforms using 2 principal components. To obtain new neurons with drivable electrodes, the electrodes were lowered by ~25 μm after each behavioral session. By comparing spike waveforms from consecutive recordings, we estimated that only about 4% of the recorded units may come from repeated recordings of the same neurons. These units were not omitted from the dataset, as it is not possible to conclusively establish that they are from the same units despite similar waveforms. All peri-event raster plots were generated using NeuroExplorer (Nex Technologies).

Optogenetic identification of VTA DA neurons

Several populations have wide waveforms and low firing rates characteristic of DA neurons (Supplementary Fig. 3). However, prior work has shown that waveforms and firing rate cannot establish neuronal identity in some VTA neurons58,59. We therefore used optogenetic tagging to confirm DA neuron identity. We attached fiber optic implants to our drivable electrodes16. Only fibers with ≥ 70% light transmittance measured through the optic fiber tip (PM120VA, ThorLabs) were used. Light (5–8 mW, 5 ms pulse width, 10–20 Hz for 1 s for ChR2) was delivered via laser (470 nm DPSS laser, Shanghai Laser & Optics) at the end of each behavioral session. Neurons were classified as tagged if each pulse of light produced a spike occurring with a latency of ≤ 7 ms and on ≥ 70% of trials (Supplementary Fig. 2). A total of 1683 neurons were recorded, of which 98 were tagged using optogenetics.
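
The tagging criterion can be illustrated with a short Python sketch. It is not the authors' analysis code: the function name is_tagged and its arguments are hypothetical, and it assumes that the 70% criterion is evaluated as the fraction of light pulses followed by a spike within 7 ms.

```python
import bisect

def is_tagged(pulse_times, spike_times, max_latency=0.007, min_fraction=0.70):
    """Return True if enough light pulses are followed by a short-latency spike.

    pulse_times, spike_times: event times in seconds.
    Assumption: the 70% criterion is the fraction of pulses with a spike
    within max_latency of pulse onset.
    """
    if not pulse_times:
        return False
    spikes = sorted(spike_times)
    hits = 0
    for p in pulse_times:
        # Index of the first spike at or after pulse onset.
        i = bisect.bisect_left(spikes, p)
        if i < len(spikes) and spikes[i] - p <= max_latency:
            hits += 1
    return hits / len(pulse_times) >= min_fraction
```

A unit that responds to 8 of 10 pulses at 4 ms latency would pass under these assumptions, whereas one responding to 6 of 10 would not.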

Classification of VTA neurons

For each unit, firing rates were estimated in a 3 s window beginning 0.5 s before the tone and lasting 3 s after the tone using 25 ms bins. Neural firing rates were only estimated on trials with the Spout in Front position and that contained CRs. Neuron firing rates were baseline-subtracted by the average of all pre-tone baseline firing rates (first 50 bins) and normalized to obtain the z-score for each unit around that baseline. These responses were concatenated along with 50 replicates of the baseline rate to generate a functional vector for each unit. The functional vectors representing the entire population were stacked together into one matrix. We then used agglomerative clustering on this matrix, which resulted in 5 different response profiles. All preprocessing was done in Matlab and clustering was performed with the function clusterdata using Euclidean distance and Ward linkage parameters. After clustering was complete, cells were manually checked for the consistency of the responses. Some neurons were found to be clustered incorrectly and were manually reassigned to the appropriate cluster. We confirmed that these functional classes corresponded to distinct waveform profiles of DA and GABAergic populations. All three DA populations are distinct from our GABAergic populations and clustered together with the confirmed tagged DA neurons (Supplementary Fig. 4). All three populations were analyzed as DA neurons in the manuscript.
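
As a rough illustration of the normalization step, the Python sketch below z-scores a binned firing-rate vector against its leading baseline bins. The original preprocessing was done in Matlab; the function name baseline_zscore is hypothetical, and the use of the baseline standard deviation as the normalizer is an assumption.

```python
from statistics import mean, pstdev

def baseline_zscore(rates, n_baseline=50):
    """Z-score binned firing rates relative to the first n_baseline bins.

    Assumption: the normalizer is the standard deviation of the baseline bins.
    """
    base = rates[:n_baseline]
    mu = mean(base)
    sd = pstdev(base)
    if sd == 0:
        raise ValueError("baseline has zero variance; z-score is undefined")
    return [(r - mu) / sd for r in rates]
```

The resulting vectors, one per unit, would then be stacked into a matrix and passed to a hierarchical clustering routine with Euclidean distance and Ward linkage.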

To classify DA neurons according to spontaneous responses, a similar approach was used. We first estimated the firing rates of all DA neurons around spontaneously generated forward and backward movements using 10 ms bins and baseline subtracted by the average of all pre-event baseline firing rates (first 50 bins). Mean firing rates during both forward and backward movements were then combined to produce a spontaneous activity vector. These vectors were assembled into a single matrix representing all DA neurons. We then used agglomerative clustering, which resulted in 6 different response profiles. All preprocessing was done in Matlab and clustering was performed with the function clusterdata using Euclidean distance and Ward linkage parameters. The resulting groups were Forward DA, Backward DA, Increasing non-selective DA, and Decreasing non-selective DA neurons, in addition to a small group of unrelated cells.

Optogenetic parameters

Optogenetic stimulation sessions were identical to the Pavlovian conditioning tasks with the electrophysiological recordings, as described above. Pulses of light (Excitation: 5–8 mW measured at the tip of the optic fiber connected to the optic implant, 5 ms pulse width, 30 Hz, 5–30 pulses; Inhibition: 5 mW, 1 s pulse width) were delivered via a laser (470 nm DPSS laser, Shanghai Laser & Optics) and controlled through an Arduino.

Force conversions

Force conversion of load cell signals was described previously16,17. Briefly, we calibrated the load cell circuits using a conversion factor (expressed in Newtons per Volt) determined from the linear relationship between known masses placed on the sensor and the resulting voltage changes. Force was determined by multiplying the voltage signal by the conversion factor to obtain a value in Newtons. Impulse was calculated using the Matlab function trapz to integrate the total area under the force curve over time from movement onset to movement termination18. The change in force was the first derivative of the force signal, calculated using the diff function in Matlab. The resulting values were divided by the bin size (1 ms) and smoothed by convolving the signal with a Gaussian filter with a standard deviation of 12 bins.
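
The conversion, integration, and differentiation steps can be sketched in plain Python as follows. This is an illustration rather than the original Matlab code: all function names are hypothetical, the kernel is truncated at four standard deviations, and the filter is renormalized at the edges of the signal, both of which are assumptions.

```python
import math

def volts_to_newtons(volts, newtons_per_volt):
    """Scale load cell voltages by the calibration factor (N/V)."""
    return [v * newtons_per_volt for v in volts]

def impulse(force, dt):
    """Trapezoidal integral of force over time (N*s), analogous to trapz."""
    return sum((force[i] + force[i + 1]) * 0.5 * dt for i in range(len(force) - 1))

def change_in_force(force, dt):
    """First difference divided by the bin size (N/s), analogous to diff."""
    return [(force[i + 1] - force[i]) / dt for i in range(len(force) - 1)]

def gaussian_smooth(signal, sd_bins):
    """Smooth with a Gaussian kernel truncated at 4 SD (an assumption).

    Weights are renormalized near the edges so that a constant input
    stays constant.
    """
    half = int(math.ceil(4 * sd_bins))
    offsets = range(-half, half + 1)
    kernel = [math.exp(-0.5 * (k / sd_bins) ** 2) for k in offsets]
    n = len(signal)
    out = []
    for i in range(n):
        num = 0.0
        den = 0.0
        for k, w in zip(offsets, kernel):
            j = i + k
            if 0 <= j < n:
                num += w * signal[j]
                den += w
        out.append(num / den)
    return out
```

With 1 kHz sampling, dt would be 0.001 s and sd_bins would be 12, matching the parameters stated above.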

Detection of movement initiation

Forward movements were defined as force exertions that exceeded a threshold of 0.5 standard deviations of force greater than 0, lasted longer than 100 ms, and were separated by at least 100 ms from another movement. Backward movements were defined as events lower than 1.5 standard deviations of all force values below 0. These events had to last longer than 100 ms and be separated by at least 500 ms. Backward movements had to occur independently of forward movements and so could not coincide with the end of any forward movement, as mice sometimes moved backward immediately after moving forward. CRs were defined as the first force exertion after the CS. URs were defined as the first exertion events occurring after the reward. During early stages of training, URs were detected using first lick onset to overcome high variability in force direction. Spontaneous movements were identified as any forward or backward movements occurring in the intervals beginning 3 s after any stimulus (tones, rewards, or air puffs) and ending 0.5 s before the next stimulus.
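
A minimal Python sketch of the forward-movement criterion is given below. It is illustrative only: the function name detect_forward_movements is hypothetical, the threshold is assumed to be 0.5 times the standard deviation of all positive force samples, and the separation rule is assumed to mean that an event is discarded when it begins less than 100 ms after the preceding supra-threshold episode ends.

```python
from statistics import pstdev

def detect_forward_movements(force, dt=0.001, k_sd=0.5, min_dur=0.1, min_gap=0.1):
    """Return onset times (s) of forward movements in a force trace.

    Assumptions: threshold = k_sd * SD of positive force samples; an event
    must follow the previous supra-threshold episode by at least min_gap.
    """
    positive = [f for f in force if f > 0]
    if len(positive) < 2:
        return []
    thr = k_sd * pstdev(positive)
    # Collect (start, end) sample indices of supra-threshold runs, end exclusive.
    runs = []
    start = None
    for i, f in enumerate(force):
        if f > thr and start is None:
            start = i
        elif f <= thr and start is not None:
            runs.append((start, i))
            start = None
    if start is not None:
        runs.append((start, len(force)))
    onsets = []
    prev_end = None
    for s, e in runs:
        long_enough = (e - s) * dt > min_dur
        isolated = prev_end is None or (s - prev_end) * dt >= min_gap
        if long_enough and isolated:
            onsets.append(s * dt)
        prev_end = e
    return onsets
```

Backward movements could be handled by the same routine applied to the sign-inverted trace with k_sd=1.5 and min_gap=0.5, followed by a separate check that the event does not coincide with the end of a forward movement.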

To test whether DA activity predicts latency, trials with force exertion between 0 and 1 s following CS presentation were selected. Firing rate on a given trial was estimated by taking the inverse of the mean inter-spike-interval for all pre-movement spikes. Trials were binned according to latency into 20 bins. Neurons whose CS activation did not exceed a z-score value of 3 for two consecutive time bins were not included in the analysis. For each CS-modulated neuron, the mean firing rates observed during trials with a specific binned latency value were assigned to that bin and averaged. R² was computed using the averaged firing rates corresponding to binned latency values (Matlab function corr).
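
The two computations in this analysis, the per-trial rate estimate and the squared correlation, can be sketched in Python as follows. The function names isi_rate and r_squared are hypothetical, and the sketch stands in for the Matlab corr call rather than reproducing it.

```python
from statistics import mean

def isi_rate(spike_times):
    """Firing rate (Hz) as the inverse of the mean inter-spike interval."""
    if len(spike_times) < 2:
        return None  # rate is undefined with fewer than two spikes
    s = sorted(spike_times)
    isis = [b - a for a, b in zip(s, s[1:])]
    return 1.0 / mean(isis)

def r_squared(x, y):
    """Squared Pearson correlation between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return (sxy * sxy) / (sxx * syy)
```

Here x would hold the binned latency values and y the averaged firing rates assigned to each latency bin.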

Training stages were defined as early, intermediate, and late by the following criteria. Early training: initial sessions with CR latency variance above the 95th percentile across all sessions recorded for the mouse. Intermediate training: sessions not classified as early but with CR latency variance above the median across all sessions. Late training: sessions with CR latency variance below the median across all sessions.

Analysis of force tuning

Recent work found that VTA DA neurons can be identified based on their responses to forward and backward movements18. Because cell responses during Pavlovian conditioning are not entirely indicative of direction tuning, we also classified VTA DA neurons according to their preferred direction by comparing the neural activity of all recorded neurons during spontaneous forward and backward movement events, which were a consistent feature of mouse behavior independent of learning (Fig. 1 and Supplementary Fig. 1). This approach allowed us to identify force tuning independently of Pavlovian conditioning. Change in force during spontaneous movements from −500 ms to +500 ms around movement initiation was used (25 ms bins). Firing rate for each neuron was binned into the same bins, creating two vectors. A cross-correlation was computed between the firing activity vector and the change in force for the neuron’s preferred direction of force exertion to determine the time shift between the two signals. Neural activity was then shifted according to the lag between its activity and change in force in the preferred direction. We next sorted the change in force vector according to magnitude and excluded outliers (below the 1st and above the 99th percentile), which contain very few replicates. We averaged neural activity obtained concurrently with forces from 10 evenly spaced, monotonically increasing force bins that spanned the range of force changes. The same procedure was used when analyzing the relationship between single-unit activity and force in the non-preferred direction.
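
The binning step at the end of this procedure can be sketched in Python as shown below. The sketch assumes that the firing vector has already been shifted by the cross-correlation lag, and it trims outliers by rank, which is an assumption about how the percentile exclusion was applied. The function name force_tuning_curve is hypothetical.

```python
import math

def force_tuning_curve(force_change, firing, n_bins=10, lo_pct=1.0, hi_pct=99.0):
    """Average firing within evenly spaced bins of change in force.

    Returns (bin_centers, mean_firing); empty bins yield None.
    Assumes firing has already been lag-shifted to align with force_change.
    """
    pairs = sorted(zip(force_change, firing))
    n = len(pairs)
    # Rank-based trimming of the lowest and highest percentiles (an assumption).
    lo = int(n * lo_pct / 100.0)
    hi = int(math.ceil(n * hi_pct / 100.0))
    kept = pairs[lo:hi]
    f_min, f_max = kept[0][0], kept[-1][0]
    width = (f_max - f_min) / n_bins
    if width == 0:
        raise ValueError("change in force has no range after trimming")
    sums = [0.0] * n_bins
    counts = [0] * n_bins
    for f, r in kept:
        # Clamp so the largest value falls in the last bin.
        idx = min(int((f - f_min) / width), n_bins - 1)
        sums[idx] += r
        counts[idx] += 1
    centers = [f_min + (i + 0.5) * width for i in range(n_bins)]
    means = [s / c if c else None for s, c in zip(sums, counts)]
    return centers, means
```

A neuron tuned to force in its preferred direction would be expected to show mean firing that rises across the ten bins.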

Cross-correlation analysis was performed in Matlab. Neuron firing was referenced to either Force or Change in force, depending on the signal best matched to the firing profile (broad modulation or bursting). 25 ms bins were used. The cross-correlogram was smoothed with a Gaussian filter with SD = 3, and the lag that corresponded to the peak of the cross-correlogram was collected for each neuron. For neurons that showed only decreasing changes in firing (DA decreasing), we used time to the minimum of the cross-correlogram as the lag.
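
The lag estimate can be illustrated with the following Python sketch. It is not the original Matlab analysis: the function names are hypothetical, the correlogram is computed on mean-subtracted vectors without further normalization, the Gaussian SD is assumed to be expressed in bins, and the maximum lag of 10 bins is an arbitrary choice for illustration.

```python
import math

def cross_correlogram(x, y, max_lag):
    """Cross-correlate mean-subtracted x against y for lags -max_lag..max_lag.

    A positive lag (in bins) means x follows y by that many bins.
    """
    n = len(x)
    mx = sum(x) / n
    my = sum(y) / n
    xc = [v - mx for v in x]
    yc = [v - my for v in y]
    lags = list(range(-max_lag, max_lag + 1))
    cc = []
    for lag in lags:
        total = 0.0
        for i in range(n):
            j = i + lag
            if 0 <= j < n:
                total += yc[i] * xc[j]
        cc.append(total)
    return lags, cc

def smooth(values, sd):
    """Gaussian smoothing with edge renormalization (SD in bins, an assumption)."""
    half = int(math.ceil(4 * sd))
    out = []
    for i in range(len(values)):
        num = 0.0
        den = 0.0
        for k in range(-half, half + 1):
            j = i + k
            if 0 <= j < len(values):
                w = math.exp(-0.5 * (k / sd) ** 2)
                num += w * values[j]
                den += w
        out.append(num / den)
    return out

def peak_lag(firing, reference, max_lag=10, sd=3.0, bin_s=0.025, use_minimum=False):
    """Lag (s) at the peak of the smoothed cross-correlogram.

    Set use_minimum=True for neurons that only decrease their firing.
    """
    lags, cc = cross_correlogram(firing, reference, max_lag)
    cc = smooth(cc, sd)
    pick = min if use_minimum else max
    idx = pick(range(len(cc)), key=lambda i: cc[i])
    return lags[idx] * bin_s
```

Here reference would be either the force or the change-in-force vector, whichever better matches the firing profile of the neuron.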

Analysis of DA activity in relation to consummatory behavior

For each trial, we calculated the lick rate and the area under the force curve (Impulse) over the 1 s window after reward. The average change in force was taken over a 500 ms window over reward. The estimated firing rate for each DA neuron was also calculated for each trial over a 250 ms window, and these estimates were averaged across the entire DA population to generate a single DA firing rate estimate per trial. Behavior variables and DA population rates were binned over all training sessions into 25 trial bins for linear regression analysis.

Licking activity for each trial was aligned to the US. Instantaneous change in licking rate was estimated by calculating the inverse of each inter-lick-interval. Each instantaneous lick rate value was given a timestamp by taking the midpoint between its corresponding two lick timestamps, and these values were binned into a time bin vector spanning −1 to +2 s from the US, with 25 ms bins. Estimated firing rates from DA neurons were determined in the same manner and were binned into the same time bins as licking. The final latency to change in lick rate for each subject was found by taking the latency from the US to the peak change in lick rate.
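
The lick-rate estimate can be sketched in Python as follows. The function names are hypothetical, lick times are assumed to be expressed in seconds relative to the US, and assigning a rate of 0 to empty bins is an assumption.

```python
def instantaneous_lick_rate(lick_times):
    """Return (midpoint_time, rate_hz) pairs, one per inter-lick interval."""
    s = sorted(lick_times)
    out = []
    for a, b in zip(s, s[1:]):
        if b > a:  # skip duplicate timestamps to avoid division by zero
            out.append(((a + b) / 2.0, 1.0 / (b - a)))
    return out

def bin_rates(samples, t_start=-1.0, t_stop=2.0, bin_s=0.025):
    """Average rate samples within fixed-width time bins.

    Assumption: bins that contain no samples are reported as 0.0.
    """
    n_bins = int(round((t_stop - t_start) / bin_s))
    sums = [0.0] * n_bins
    counts = [0] * n_bins
    for t, r in samples:
        idx = int((t - t_start) // bin_s)
        if 0 <= idx < n_bins:
            sums[idx] += r
            counts[idx] += 1
    return [s / c if c else 0.0 for s, c in zip(sums, counts)]
```

The same two functions could be applied to spike timestamps to obtain a firing rate estimate on the same time base, and the latency to the peak would be read from the index of the largest binned value.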

Computational model of DA concentration