Abstract

The Noise2Void technique is demonstrated for successful denoising of atomic resolution scanning transmission electron microscopy (STEM) images. The technique is applied to denoising atomic resolution images and videos of gold adatoms on a graphene surface within a graphene liquid-cell, with the denoised experimental data qualitatively demonstrating improved visibility of both the Au adatoms and the graphene lattice. The denoising performance is quantified by comparison to similar simulated data and the approach is found to significantly outperform both total variation and simple Gaussian blurring. Compared to other denoising methods, the Noise2Void technique has the combined advantages that it requires no manual intervention during training or denoising, no prior knowledge of the sample and is compatible with real-time data acquisition rates of at least 45 frames per second.

Similar content being viewed by others

Introduction

The latest (scanning) transmission electron microscope ((S)TEM) instruments are capable of spatial resolutions of better than 50 pm, allowing atomic structure to be resolved for many different crystal orientations1,2,3,4. Yet imaging at such high magnifications requires high electron fluence to provide sufficient signal-to-noise ratio (SNR) in the resulting images so that information is transferred5,6. Thus, the limiting factor to achieving atomic resolution (S)TEM imaging of a particular material is often it’s stability under a high-energy electron beam7,8,9. Image denoising techniques, which aim to improve the SNR of images after acquisition, have been studied for many years, with a particular focus on removing additive Gaussian white noise (AGWN)10. By improving the SNR, denoising enables information transfer to be retained while lowering the electron fluence (electrons incident per unit area)11,12. Effective denoising therefore has the potential to unlock improved atomic resolution (S)TEM imaging of systems such as metal organic frameworks and pharmaceutical crystals, where spatial information transfer is currently limited by the material’s electron beam sensitivity7.

For in situ (S)TEM imaging of dynamic processes, the requirements for excellent electron stability are further increased since the material must survive for multiple image frames13. Here, successful image denoising provides opportunities for fractionating the material’s critical electron dose across more images: either increasing the number of frames in an image series before damage is observed or increasing frame acquisition rate while retaining information transfer within the individual images.

In this work, we focus on denoising for a particular experimental challenge, one that combines demanding requirements for both spatial and temporal resolution in the STEM. This is the investigation of local atomic motion at solid-liquid interfaces; behaviour that underpins many physical processes such as wetting, adhesion, chemical etching, dissolution and solidification14,15,16. Such studies have recently become achievable in both TEM and STEM imaging modes using advanced liquid cells17,18. Imaging of volatile liquid-phase samples inside the vacuum of the TEM requires confining a thin volume between two electron transparent windows that are impermeable to liquid (Fig. 1). In these environmental cells, both the liquid and the cell windows introduce undesired distortions to the electron beam as it transmits through the sample lowering the SNR16,19. Graphene windows are thinner and less dense than commercial silicon nitride windows so introduce less unwanted scattering12,19,20. However, even the presence of the liquid alone will lower the SNR compared to the same sample imaged in vacuum11. To maintain spatial resolution in liquid-cell (S)TEM images therefore requires higher electron fluence than achieving the same resolution ex situ in the absence of liquid.

a An overview of the modified UNet architecture used for Noise2Void denoising where the inputs are noisy dual-channel (HAADF & BF) STEM video frames (left) and the outputs are the same frames denoised (right). ‘Conv2d’, ‘ConvTranspose2d’ and ‘LeakyReLU’ refer to convolutional blocks, transpose-convolution blocks and leaky ‘ReLU’ activation blocks, respectively, while ‘Concat’ and ‘AvgBlurPool’ refer to channel-wise concatenation and bilinear downsampling38, respectively. The black horizontal arrows represent UNet skip connections. b The results of a separate atom-finding step applied to the example image above. In the denoised output, 89% more adatom features are identified compared to the raw image, showing the improved performance of atom finding analysis when Noise2Void is used in a data-analysis pipeline. Inset are corresponding atomic diagrams of the magnified region, showing the graphene lattice positions with gold adatoms overlain. The blue arrows highlight gold atoms that were only detected after Noise2Void denoising was applied. Also shown is a model of the TEM liquid-cell, showing the convergent electron probe (green), the few-layer graphene windows (black) and the boron nitride spacer layer (blue).

A further challenge associated with liquid-cell TEM and STEM is the potential for electron beam-induced changes to the liquid-cell chemistry. For aqueous solutions, these radiolytic changes to the local liquid environment occur at comparatively low electron fluence21. The effects include increasing pH and the creation of chemically active free radicals, which may be only indirectly visible in the (S)TEM images as behavioural artefacts within the system under investigation22. Various methods exist to mitigate radiolytic effects by altering the solution chemistry to inhibit the concentration of radiolytic species21 yet ultimately the coupling of SNR and required image resolution determines the minimum electron dose in the liquid-cell11. Denoising offers the potential for improving the SNR of liquid-cell images post-acquisition, enabling reduction in the electron fluence and consequently reducing radiolytic changes in the liquid environment.

Deep learning techniques have advanced the field of image denoising over the last decade with improved performance, computational efficiency (in application) and by not being reliant on manual parameter tuning23. Deep learning techniques typically require training data, in the form of input and ground-truth/target pairs, to train models. Application to experimental denoising tasks like improving the SNR of (S)TEM images, therefore presents a challenge because often the ground-truth input data (noise-free equivalent of the experimental data) is not known.

There are several examples of supervised deep learning denoising techniques being successfully applied to (S)TEM images24,25,26. These have been generally found to outperform more traditional approaches such as total-variation (TV) regularisation27, non-local low-rank/sparse-representation techniques28,29 and simple filters30. However, they required extensive training from image simulations or artificially noised images, which can be computationally expensive, and once trained demonstrate limited transferability to different samples. Various examples of the application of denoising to environmental cells can be found in literature31,32,33 but the available improvement in SNR was relatively modest despite high computational complexity.

The Noise2Noise family of deep learning techniques, which includes Noise2Void, present a powerful alternative solution to the problem of lacking ground-truth (noise-free) equivalents of noisy experimental images but which has not been rigorously investigated for application to denoising of (S)TEM data. Broadly, the Noise2Noise approaches use specialised training regimes in order to utilise the noisy experimental images as both their input and ground-truth training data, thereby providing opportunities for image denoising without requiring manual intervention or extensive simulated data to train a model. The Noise2Void technique involves applying these specialised training regimes to a UNet34, which is a type of convolutional neural network (CNN) that would otherwise typically require supervised training (involving paired/labelled training data)35.

In this paper we test application of the Noise2Void denoising approach to the exemplar system of atomically resolved STEM imaging of gold adatoms in graphene liquid-cells (Fig. 1). The sample is atomically dispersed gold on few-layer graphene, imaged in situ within a graphene liquid-cell to visualise the dynamic behaviour at the solid-liquid interface (Fig. 1b). We present denoising of experimental and simulated data, and quantitatively compare denoising performance to simple Gaussian filtering and the more computationally demanding total variation approach. We demonstrate how this denoising approach can be used as an initial step to enhance the effectiveness of feature identification from atomic resolution images, as illustrated in Fig. 1b.

Results

Noise2Void implementation

To train a suitable denoising model for a particular set of images, the Noise2Void algorithm assumes that the image-noise is random and pixel-wise uncorrelated34. For each training datum, the algorithm uses the same noisy image as both input and ground-truth, thereby training the network to replicate the input at the output. Taken alone, this would simply train the model to learn unity, making it useless. The key insight is that a ‘blind-spot’ is introduced to the model, preventing it from using the value of pixel A at the input to predict the value of pixel A at the output. Each pixel value at the output must be predicted using that pixel’s neighbours (at the input), but not that pixel itself. Since pixel-wise uncorrelated information cannot, by definition, be predicted with knowledge of a pixel’s neighbourhood only, it cannot be reproduced at the model’s output. Pixel-wise correlated information in the image can however be reproduced at the output. Therefore, in attempting to reproduce the input at the output, the network reproduces the input image without pixel-wise uncorrelated noise34. The ‘blind-spot’ effect is not enforced in the network architecture, but is implemented in training. During training, the input image has a grid of pixels masked, with their intensity replaced by some other value.

The family of Noise2Noise-inspired algorithms includes the original Noise2Noise, as well as Noise2Self, Noise2Void and N2V234,36,37,38. The Noise2Void technique uses a UNet architecture35 and the ‘blind-spot’ masked pixel’s intensity is replaced with one of its neighbour’s34. However, the key novelty of the Noise2Void technique itself does not place a strict/specific constraint on the exact network architecture. N2V238 improves on Noise2Void, in part by using a modified UNet, which is the approach we adapt in this work. In N2V2, the masked pixel’s intensity is replaced not by a neighbour but with a local average38. For both Noise2Void and N2V2, the model’s training loss is calculated by determining the model’s ability to reconstruct the input image only at the masked pixels. Since each masked pixel is modified at the input, their prediction at the output can only be made based on the intensities in their local neighbourhoods, thus implementing the ‘blind-spot’ without needing to add constraints to the network architecture. To successfully apply Noise2Void to denoising of the STEM data considered in this work, here several adaptations were made to the Noise2Void techniques described previously (in the original Noise2Void and the N2V2 paper34,38). This included modifications to the input format, the network architecture and the training regime to allow application of the approach to experimental STEM images.

The Noise2Void approach is limited to only statistically uncorrelated image noise34. For in situ (S)TEM experiments, the liquid within a liquid-cell always imparts statistically uncorrelated noise onto the (S)TEM images11,39 and this can often be the dominant source of image noise. Also, as discussed earlier, it is often desirable to minimise beam-dose during in situ experiments and this also introduces uncorrelated image noise28. Therefore despite being limited to uncorrelated image noise, Noise2Void is particularly suited to in situ liquid-cell experiments.

Modification to support multiple channels

One of the advantages of the STEM imaging approach is the ability to simultaneously acquire data from different detectors. Information in these images from different detectors is often physically correlated. To illustrate the application of Noise2Void to such data sets we have acquired STEM image pairs, collecting data from the bright field (BF) and annular dark field (ADF) detectors simultaneously (see Fig. 1a). These images are generated from detector measurements acquired simultaneously during a single raster scan and contain physically correlated, yet distinct, information due to the different angular sampling of the transmitted electrons, so should be considered together to gain the best understanding of the material under investigation. Images produced from the ADF detector with high collection solid angle are often referred to as Z-contrast, being dominated by Rutherford scattering with the intensity scaling with the sample’s thickness and atomic number40. The BF detector collects small angle scattering to produce a phase contrast image or interference pattern where regular crystalline features tend to give strong contrast, although it is not directly interpretable in terms of the crystal structure of the material41. Previous implementations of Noise2Void and N2V2 in literature have only applied the technique to monochrome images (i.e. a single colour channel)34,38. As our experimental data-sets consists of time-series of image-pairs, we have modified the network to operate on both ADF and BF channels in unison by implementing the input frames as dual-channel images. This has the advantage that the network can use BF channel information to enhance its denoising of the ADF channel and vice versa.

Modification to network architecture

In this work, the UNet architecture was modified compared to the original UNet paper35 and to all previous Noise2Void papers34,38. The original UNet paper uses an encoder-decoder architecture with a depth of four, max-pooling between layers and 64 feature channels at the output of its first layer35. The original Noise2Void paper changed the UNet to have a depth of two and 32 feature channels at the output of its first layer (so a much leaner network)34. The effect of the number of layers in the UNet architecture in dictating the denoising success was tested in the range two to four layers, with four layers found to give the best performance. Therefore for all the results presented in this work, the UNet has a depth of four, with 64 feature channels at the output of the first layer.

The pooling technique was also experimented with, inspired by modifications to pooling reported in the N2V2 paper38, with the aim of optimising denoising performance. Replacing the max pooling layers of UNet with average pooling was found to improve denoising performance, likely due to the greater linearity of information transfer down the network.

A further architecture modification made here is the increase of the receptive field compared to the original Noise2Void implementation34 and the N2V238 architecture. With this change the network has more information on which to make pixel-predictions. Although increasing the number of layers in the network would also achieve this, increasing the number of layers beyond four significantly increases GPU VRAM requirements so the approach becomes harder to practically implement. Increasing the receptive-field in only the first layer was sufficient to achieve improved denoising performance. The size of the convolutional kernel in the first layer was therefore increased to 5 × 5, with 3 × 3 kernels elsewhere, whereas other networks use 3 × 3 kernels in all layers. Our modified Noise2Void architecture is illustrated schematically in Fig. 1a.

Modification to training

Our Noise2Void model was first trained on 8475 dual-channel frames from a full data set of 16,750 frames with the training data extracted by sampling every other frame. Initial results with Noise2Void produced grid-like artefacts in the denoised frames (see Fig. 2b), unwanted features which are also reported in the original Noise2Void paper34. These artefacts are reduced with the addition of a jitter to the positions of the masked pixels (px) in the training images, specifically random displacements of up to 2 px translations, and modification to the network’s upsampling approach42. This differs from the N2V2 approach, where grid-like artefacts were reduced by removing the outermost skip connection, which in this case could have limited spatial resolution.

a Example input dual-channel image (cropped). b Denoising result showing clear chequerboard artefacts where denoising was performed with an early version of our underlying modified Noise2Void UNet using standard pixel-masking (during training) and transposed convolutions for upsampling. c The final Noise2Void UNet architecture, used elsewhere in this work, with chequerboard artefacts significantly diminished. The architectures of c and b have different upsampling and jittered pixel-masking. An overview of the modified Noise2Void training algorithm can be seen in (d), with the masked pixels jittered/randomly translated by a small amount. Note that, while only annular dark field images are shown in (d), training is performed on the full dual-channel images.

Other modifications to the Noise2Void architecture were also tested while optimising the Noise2Void denoising performance, including replacing the convolution blocks with Xception43 style separable convolutions (to improve network efficiency), adding an Atrous Spatial Pyramid Pooling (ASPP) layer44 at the bottleneck and replacing every convolution block with an ASPP block, but all resulted in a poorer denoising result. Removing the outermost skip-connection compared to the original UNet was also tested as reported in N2V238, but this also resulted in worse performance.

Denoising performance—experimental data

Figure 3a shows a representative frame from the experimental dataset, consisting of a dual-channel ADF-BF image pair with the ADF image shown on the top row and the simultaneously acquired BF image on the bottom row. The aim of the denoising is to improve confidence in identification of the positions of the Au adatoms at the solid liquid interface (bright features in the ADF images and dark features in the BF images) even at low electron fluence. A secondary aim is the identification of the location of the Au adatoms with respect to the underlying graphene lattice, which requires resolving the graphene’s atomic lattice. We first consider the latter imaging challenge. Figure 3b-d compares the same experimental image pair after Gaussian denoising, TV denoising and after the Noise2Void approach. Unlike our modified dual-channel Noise2Void, Gaussian and TV denoising are not dual-channel techniques, and so are applied to each image channel separately. To more clearly highlight the different SNRs, intensity profiles are extracted from identical locations on all images demonstrating the intensity modulation resulting from the graphene lattice sampled along the \([10\bar{1}0]\) direction as shown in Fig. 3a–d. Examination of Fig. 3d shows that the UNet Noise2Void approach has revealed the underlying graphene lattice in both the BF and the ADF channel, while the periodicity is not clear in the ADF images subjected to either Gaussian blurring or TV denoising (Fig. 3b,c).

Each frame is a dual-channel image (ADF on upper row, BF on lower row). Intensity line-profiles are presented under the images showing the intensity modulations resulting from the crystal lattice of the graphene windows. The line-profiles are taken along the \([10\bar{1}0]\) direction of the graphene lattice, at the same position for all data (indicated by the green and blue arrows on ADF and BF images respectively), with a width of 1 px. From left to right the images compare the same frame a from the original experimental data used as input for all the denoisers, b after Gaussian denoising, c after denoising by the total variation technique and d after denoising by our modified Noise2Void approach. The square-root of pixel intensities are displayed for the ADF channel, while the BF images and all line-profiles are plotted on a linear scale. Across each row, intensities are scaled equally for ease of comparison. Only Noise2Void denoising recovers the periodic graphene lattice in both the BF and the ADF channels.

Once the graphene lattice is resolved, it is necessary to identify the number and position of Au adatoms on the graphene surface. Fig. 4 provides similar data to Fig. 3 but with the line profile sectioning through an Au adatom on the graphene inside the liquid-cell.

Intensity line profiles are presented under the images showing the intensity modulations. The line profiles are taken through two gold adatoms and are at the same position for all data, (indicated by the green and blue arrows on ADF and BF images, respectively) with a width of 1 px. From left to right, the images compare the same frame (a) from the original experimental data used as input for all the denoisers, b after Gaussian denoising, c after denoising by the total variation technique and d after denoising by our modified Noise2Void approach. The square root of pixel intensities is displayed for the ADF channel, though the BF images and all line profiles below are plotted linearly. Across each row, intensities are scaled equally for ease of comparison. The ADF has a much flatter background with the Noise2Void denoising, compared to the input, Gaussian blur and TV denoising, making it easier to recover the Au adatom positions.

The TV and Noise2Void denoisers produce flatter ‘background’ (Au-free graphene region) intensities in the ADF image than Gaussian blurring, which has the benefit of increasing the number of Au adatoms that are correctly identified (as shown in Fig. 1b).

A rigorous quantification of SNR in TEM images requires the underlying ‘ground-truth’ image signal be known, to accurately measure the noise. This is achievable by comparison to image simulations as demonstrated in section ‘Denoising Performance—Simulated Data’. Nonetheless, useful insights can still be gained from consideration of experimental SNR values.

We first consider Noise2Void denoising of the experimental ADF images. The background ‘noise’ includes a ‘real’ periodic signal from the graphene lattice as well as unwanted intensity variations resulting from both the presence of the liquid and background noise from the imaging system. The Z-contrast dependence of the ADF signal means the graphene lattice is only a weak modulation compared to the intensity of Au adatoms, so when seeking to identify only the Au adatom peak locations it can be considered small compared to the unwanted signals we wish to remove. In practice, Noise2Void denoising can reveal the periodic modulations of the graphene lattice in the ADF signal (as shown in Fig. 3d). However, we ignore this in the following analysis, recognising this lattice signal will result in underestimation of the SNR improvement when gold-free regions are considered as the noise reference.

The SNR for the Au adatoms measured from the ADF intensity line profile in Fig. 4 gives a value of 2 for the raw input image. This is improved to 3.3, 7.2 and 12 for Gaussian blurring, TV denoising and Noise2Void denoising, respectively (see ‘Methods’ for details of SNR quantification). Arguably more challenging than identification of the positions of well isolated individual adatoms, is the ability to resolve separate adatoms that are in close proximity. This competes with achieving a high SNR for single adatoms when using Gaussian blur so may be a limiting factor for conventional denoising approaches. Fig. S1 compares intensity line profiles where two Au adatoms are separated by 0.34 nm (~7.4 px). Quantification of the drop in the intensity of the ADF image between the adatoms, relative to the height of the least intense adatom peak reveals a drop of 57% for the original raw input image, compared to 28%, 14% and 29% for the Gaussian blur, TV and Noise2Void, respectively. Together, the data in Figs. 4 and S1 suggest that Noise2Void is the better denoiser for the ADF channel because it improves the SNR significantly from 2 to 12, while also retaining the ability to separately distinguish nearby features, which can be suppressed by other denoising methods. Examples of denoised Au nanoclusters can be seen in Fig. S4.

Compared to the ADF image channel, the denoising performance for the BF channel in Fig. 3 is harder to assess because the feature of interest (the graphene lattice) covers the whole image and there is no region that can reasonably be approximated as purely background. Considering Figs. 4 and S1, in all denoisers, but especially TV and Noise2Void, higher-frequency components have been diminished, yet it is not clear if this significantly improves the image for the extraction of the crystallographic lattice in later analyses.

The transfer of periodic crystallographic information after denoising is more easily assessed in Fourier space, as shown in Fig. 5, where the upper row is the magnitude of the fast Fourier transform (FFT) of the ADF image and the second row is the FFT-magnitude of the corresponding BF image. The input frame is shown in Fig. 5a, while Fig. 5b-d shows each denoiser’s output frame. The Gaussian denoised FFT-magnitude shows that only high spatial frequency noise has been removed from both the ADF and the BF images, as expected with this simple approach. Encouragingly, the Noise2Void approach has both suppressed the high-frequency noise and also enhanced the transfer of low spatial frequencies relative to the high spatial frequencies in both ADF and BF images. The graphene lattice appears in the FFT-magnitudes as sets of hexagonal lattice spots. These are visible in the FFT-magnitudes of the BF input image and in all the denoised BF images. However, in agreement with the real space analysis in Fig. 3, only denoising with Noise2Void reveals all six of the first-order graphene spots (corresponding to the \(\{01\bar{1}0\}\) lattice spacings) for the FFT of the ADF image.

The magnitudes of 2D fast Fourier transforms (FFTs) of the ADF and BF image channels (first row and second row, respectively) are shown with intensities plotted on a logarithmic scale. The third and fourth rows show a polar transform of the 2D FFTs, for the ADF and BF images respectively, six-way folded in the azimuthal direction with the azimuthally integrated intensity overlaid. For each row, intensities are displayed equally for ease of comparison. From left to right, the columns compare the same frame (a) from the original experimental data used as input for all the denoisers, b after Gaussian denoising, c after denoising by the total variation technique and d after denoising by our modified Noise2Void approach.

The third and fourth rows of Fig. 5 show six-fold reduced polar plots of the FFT-magnitudes, where these have been warped by a polar transformation (such that the horizontal axis now corresponds to the radial direction in the above FFT-magnitudes) and summed periodically over 60∘ (azimuthal) sections/wedges. Line profiles have then been overlain, where they display the totally azimuthally integrated intensity as a function of radius (the radial profile). In these radial profiles, each set of six-fold symmetric hexagonal graphene lattice spots reduces to a single spot. While the lowest order graphene lattice (\(01\bar{1}0\) spot) is clearly visible in the BF radial profile for all data presented, careful inspection will also reveal these spots in the ADF radial profiles. The higher order (\(2\bar{1}\bar{1}0\) type) spots can also be seen in both channels which, for the BF channel have SNR values of 9.0, 8.2, 10.7 and 16.0 for the input, Gaussian blur denoised, TV denoised and Noise2Void denoised images, respectively.

The Noise2Void ADF FFT-magnitude shows an unusual hexagonal symmetry in the information transfer, approximately corresponding to the orientation of the graphene lattice. It appears that Noise2Void has selectively suppressed regions in the FFT more distant from the lattice-points of the graphene lattice, in a manner akin to a process that is often performed manually by selective FFT filtering to enhance the visibility of a crystal lattice. Nonetheless, no component of the underlying UNet architecture is six-fold rotationally symmetric, meaning the Noise2Void model has learned this symmetry during training. While denoising of a perfect lattice can be effectively achieved with manual FFT filtering, this utilises prior knowledge of the sample’s structure to choose how and where to modify the FFT (i.e. a physics-based model). In contrast, Noise2Void has no initial knowledge of the sample and must therefore have learned the intrinsic six-fold symmetry by identifying correlations within the videos (i.e. a data-driven model). The hexagonal symmetry introduced by Noise2Void denoising accommodates the various orientations of the crystal lattice across the experimental dataset. The resemblance of the FFTs of Noise2Void’s denoised videos to what one might expect from an expert’s FFT filtering can be seen as endorsement of the ability of Noise2Void to identify and patterns in the training data and exploit that correlation to enhance denoising performance. Expert FFT filtering is often laborious (requiring adaptation whenever the sample’s orientation changes), whereas Noise2Void’s results in similar success but without manual intervention.

More generally, when applied to the whole experimental dataset, we find the Noise2Void denoised images result in 89% more successfully tracked adatoms in comparison to the raw images. This practical measure of performance demonstrates the real-world enhancement that this technique can bring to a wider data-analysis pipeline, which involved tracking 5266 adatoms (improved from 2771) across a large dataset consisting of over a hundred experimental videos.

Denoising performance—simulated data

Despite the apparent success of the Noise2Void method presented in Figs. 3, 4, 5 and S1, the lack of ground truth data for experimental images limits quantitative comparison of the different approaches. We therefore apply our Noise2Void approach to denoising of simulated STEM image pairs that closely resemble the experimental data and compare the results to noise-free simulations (see methods for further information). Three metrics are used to allow quantitative comparison; peak signal-to-noise ratio (PSNR), structural similarity (SSIM), and normalised root-mean-square error (N-RMSE). Peak signal-to-noise ratio (PSNR) and structural similarity (SSIM) indicate the similarity of the image to the ground-truth (higher is better), while normalised root-mean-square error (N-RMSE) indicate the difference from the ground-truth (lower is better). While PSNR is the most widely used metric, SSIM is more closely correlated with human perception and N-RMSE is comparatively simpler to understand45. The implementation for each can be found in scikit-image’s ‘metrics’ module46 (see ‘Methods’ section). The denoising results for simulated data with an input PSNR value of 7 dB and 6 dB for the ADF and BF channels respectively, are presented in Fig. 6 where our Noise2Void approach is again compared to Gaussian and TV denoising. We also compare to BM3D denoising47 (Fig. S6). The PSNR, SSIM and N-RMSE values are summarised in Fig. 7. This quantitative comparison shows that the Noise2Void approach gives the best denoising performance for both BF and ADF images, even for very noisy simulated input data, with all metrics significantly outperforming Gaussian blurring and the TV approach for our simulated data. Similar behaviour is seen when considering the SSIM and N-RMSE values with the noisy input data showing an order of magnitude improvement in all metrics for the ADF channel. The SSIM and N-RMSE improvements for the BF channels are also similarly significant, corresponding to 9 and 6 fold improvement factors, respectively. Qualitatively, our comparisons suggest that our Noise2Void approach can result in less significant image artefacts than TV and BM3D denoising (see Figs. 6 and S6).

Frames are dual-channel annular dark field (upper row) and bright field (second row) with input PSNR of 7 dB and 6 dB, respectively. From left to right the columns compare (a) the simulated frame without noise, b simulated frame with noise added used as input for all the denoisers, c after Gaussian denoising, d after denoising by the total variation technique and e after denoising by our modified Noise2Void approach. Below the images are plotted the intensity line-profiles extracted with a width of 1 px.

Graphs show how denoising metrics separately for ADF and BF channels (corresponding to the images shown in Fig. 6). These metrics compare the similarity of each denoiser’s output (and the noisy input) to the noise-free simulation. For PSNR and SSIM, larger values are better while for N-RMSE smaller values are better.

Denoising at higher PSNR

The experimental input images used in section ‘Denoising performance – experimental data’ have relatively high SNR (~2 in the ADF images, qualitatively corresponding to a PSNR of ~16 in the simulated ADF images, an order of magnitude higher than the input simulations in Fig. 6). This high SNR has the advantage that it provides guidance for feature identification from the raw data, yet it is highly desirable to collect raw experimental data with much poorer SNR to minimise the electron dose applied to the sample. To enable optimisation of imaging conditions and infer the minimum electron fluence for data acquisition, we now consider denoising of data for a range of different input PSNR values. The PSNR scale (when measured in dB) is logarithmic so our trained Noise2Void algorithm was applied to simulations with input PSNRs in the range from 7 dB to 20 dB. Figure 8 demonstrates denoising of input simulated ADF images with PSNR values of 16.0 and 9.9 dB, showing improvements to 30.9 and 27.5 dB, respectively, in the denoised ADF outputs. A quantitative comparison of the denoising performance for PSNR values from 7 dB to 20 dB and a comparison to TV denoising is shown in Fig. 9 (and Fig. S3). Our Noise2Void approach is shown to outperform TV denoising in the ADF channel for all input PSNRs, with the most effective denoising achieved for the ADF channel over the BF. Furthermore, the output PSNR in the denoised image remains exceptionally high across all input PSNRs, giving an improvement ratio of the logarithmic PSNR (PSNR output dB / PSNR input dB) of ~3 at the lowest input PSNR, compared to an improvement ratio of ~1.6 for the highest input PSNR, both for the ADF channel.

Frames are dual-channel annular dark field (upper row) and bright field (second row). From left to right the columns compare (a) the simulated frame without noise, b simulated frame with noise added to give PSNRs of 16 dB in the ADF and BF image channels, c after denoising of the frame in b with our modified Noise2Void approach, d simulated frame with noise added to give PSNRs of 10 dB in the ADF and BF image channels, respectively, e after denoising of the frame in (d) with our modified Noise2Void approach. Below the images are plotted the intensity line profiles extracted through four Au adatoms with a width of 1 px.

The same noise-free simulated image is used for all noisy inputs, with varying levels of AGWN added. Output PSNR as a function of input PSNR, for each denoising technique, is shown in a while three example points are shown in (b). Corresponding input and output images can be seen in Figs. S12, S13, S14.

As discussed earlier, it is experimentally desirable to minimise the electron fluence, however, this reduces the SNR. Since Fig. 9 shows that denoising performance is not a simple function of input SNR, an exact factor cannot be given for the reduction in electron fluence that our trained Noise2Void can compensate for, it depends on the input SNR. However, assuming a reasonable SNR value for the input images, such as the PSNR of 14dB shown in Fig. 9, our trained Noise2Void denoiser improves the SNR by approximately an order of magnitude and therefore reduces the necessary electron fluence by approximately an order of magnitude, for a given sample. An experimental demonstration of our denoiser’s performance at electron fluences differing by approximately an order of magnitude can be seen in Fig. S5, where two images with electron fluences of 3.17 × 105e−nm−2 s−1 and 1.67 × 106e−nm−2 s−1 are shown.

Artefact analysis

Denoising techniques risk introducing image artefacts into the denoised images. It is therefore necessary to understand the potential image artefacts from our trained Noise2Void model.

An image containing only Gaussian noise was denoised using the trained model. The output images and their FFTs (see Figs. S7, S8) show patterns that lack well defined periodicities. Another image, containing a bright point in the middle of the ADF channel, was also denoised (see Fig. S9). Together, these show that, while the denoiser does impart artefacts on the image, these do not have well-defined periodicities and are of low intensity compared to the intensities of atom-like features present in the training and simulation datasets.

Finally, holes in the graphene liquid-cell’s windows, which can be seen in the bright field channel of images after increasing electron dose by an order of magnitude to damage the graphene, were checked to ensure adatoms are not present within the defective region. This would be unphysical and therefore guaranteed to be an image artefact. The lack of adatoms in these regions (see Fig. S10) suggests that the denoiser is not generating adatoms artificially.

It is important to note that the risk of artefacts may be higher where there is not a sufficiently large number of input images for model training. Unlike TV denoising or simple Gaussian blurring therefore, our modified Noise2Void approach would not be suitable where there is only a small number of experimental images.

Comparison of denoising speed

The speed of a particular denoising method is important if it is to be applied for real time denoising as well as for efficient data processing post-acquisition. A significant speed milestone for TEM denoising is therefore matching the rate at which experimental data is acquired, allowing the method to be used to direct or assist with the experimental data collection.

The precise speed of a denoiser will depend on several factors, including disk read-write speed, processor performance, hardware utilisation etc. Nevertheless, it is informative to compare the relative speed of each denoising technique applied to the same video series. The time required for Gaussian blurring, TV and our Noise2Void technique was compared for the denoising of a single video (containing about ~100 frames) on the same hardware. The mean time-per-frame of the modified Noise2Void approach was found to be 22 ms (45 frames-per-second), only twice the 11ms required by simple Gaussian blurring, and an order of magnitude less than the 300ms required by TV. The test was repeated three times to assess the variability of the time required for each denoising approach and the results were found to vary by approximately 10%. We also found our modified Noise2Void approach to be approximately 13 times faster than BM3D denoising (see Fig. S6). All approaches are faster than the relatively slow data acquisition rates used for these liquid-cell STEM experiments (~1.9 frames-per-second). Nevertheless faster acquisition rates of 10–20 frames-per-second (50–100 ms per acquisition) are routinely used in TEM and STEM imaging, for example during instrument alignment and for analysing electron-beam sensitive samples. Noise2Void, therefore, has the advantage compared to TV denoising of being fully compatible with real-time STEM imaging, suggesting it could provide a valuable tool to assist with alignments, especially when imaging electron beam sensitive samples or where low-dose conditions are required.

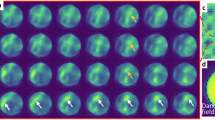

Transfer training

Training of the Noise2Void model is the slowest and most computationally intensive part of the Noise2Void denoising. We find that training requires a high-performance workstation while the denoising can be done on a standard laptop. The time required to train a Noise2Void model is highly dependent on the compute resources available and amount of training data. Approximately an hour and a half was required to train each Noise2Void model, with more training details available in the ‘Methods’ section. The ability of a trained model to be used for a new experimental data set is therefore important for the wider usability and applicability of this denoising approach. Fig. 10 shows the success of the trained Noise2Void approach when applied to a new set of experimental data. The new experimental dataset was 1230 experimental frames, which were again dual-channel images, 512 × 512 px in size, acquired on a similar but not identical sample. The data was obtained by a different microscopist, and during a different experimental session so instrumental parameters will have changed including lens aberrations, which dictate information transfer to the image. Figure 10a shows an example frame from the new experimental data. Figure 10b shows the result from our modified Noise2Void model (presented in Figs. 3 and 5 and trained on previous experimental data) when applied to denoise the new data. Fig. 10c compares this to where the original model was used as a starting point, but then further trained using a subset of the new experimental data (so overall the model had been trained on both old and new datasets). We refer to this as a ‘transfer trained’ model. Finally, we consider the model performance relative to where completely fresh models were trained only on half the new data set (Fig. 10d) and only on the complete new data set (Fig. 10e). Examination of the images and line profiles demonstrates that all approaches denoise the data effectively, with the ADF SNR ratio improved for all the denoised data. The model trained on fresh data gives a slightly improved transfer of the graphene lattice in the ADF channel after denoising, but the improvement is small demonstrating that retraining is not required as part of a workflow for similar samples.

Frames are dual-channel annular dark field (upper row) and bright field (second row). Below the images are plotted the intensity line-profiles (width of 1 px), extracted at identical locations along the armchair crystallographic direction of the underlying graphene lattice and bisecting (a) Au adatom. From left to right the columns display a) the noisy input frame, b the output using the Noise2Void model trained on previous data (used in Figs. 2-8), c the output after transfer-training the original model on this new experimental data, d, e the output using our Noise2Void network architecture but trained on this new data, using half the data as input (d) and using all of it (e).

The similarity of the denoiser outputs in Fig. 10 suggests that transfer training and training from scratch provided little improvement over the model trained only on the original dataset. This may be because the original model was generalised to an extent such that the difference between our old and new experimental data was not significant. It therefore cannot be said, for our two experimental data sets, that transfer training provided an advantage over training from scratch. However, this does not imply that the same would be true for two different datasets and where the two datasets differ sufficiently, there may well be a significant advantage to transfer training over training from scratch.

Comparison with mono-channel networks

A significant difference of this work over earlier implementations of Noise2Void is the application to dual-channel images. It is therefore interesting to consider whether this dual-channel UNet provides improved performance compared to two single-channel UNets for denoising dual-channel data. To test this, a Noise2Void denoiser was created using two separate mono-channel UNets, each trained on a single channel (ADF or BF images). Both networks are identical, except for the number of channels at the input and output, and each mono-channel model was trained for the same number of training epochs as the dual-channel model used elsewhere in this work. Comparison of the training losses for both the dual and the mono-channel networks (Fig. S11) shows that the dual-channel and ADF mono-channel plateaux after 32 epochs, but the BF mono-channel is continuing to decrease, suggesting further training is required.

While the ADF mono-channel showed similar results to the dual-channel Noise2Void denoiser, image artefacts were present in the output of the BF mono-channel denoiser, which is to be expected when the model’s training has not converged. While further training of the BF mono-channel network may remove these artefacts, it’s presence suggests that the mono-channel denoising technique requires more training compared to the dual-channel model. This would require more computing resources and is therefore disadvantageous.

The speed of the dual-channel denoiser was also compared to the mono-channel, and found to be around a factor of two faster, with frames taking on average 43 ms per frame for the mono-channel compared to 23 ms per frame for the dual-channel denoiser (both times are averages over 100 frames). This is unsurprising since for the mono-channel case there are two UNets rather than one. The combination of longer training and slower denoising speed demonstrates that the dual-channel UNet network architecture is preferred for denoising this dual-channel video dataset compared to two mono-channel UNets. Given the increasing capabilities of STEM instruments to collect large multiple-channel data sets, the demonstrated denoising approach for efficient data analysis is likely to be increasingly important.

Discussion

In this study, we have demonstrated the successful application of a modified Noise2Void architecture for denoising atomic resolution STEM dual-channel images. Neither the training nor the application of the method requires manual intervention, with no prior sample knowledge or image simulations required. The method achieved a factor of 3 improvement in PSNR and an order of magnitude reduction in N-RMSE values, as measured by application to image simulation. The success when applied to experimental data is demonstrated by the increased SNR for visibility of both isolated metal adatoms and the graphene lattice, outperforming Gaussian blurring and the TV approach. The Noise2Void methodology is found to have a mean processing time-per-frame of just 23ms (or 45fps) making it fully compatible with real time denoising of experimental STEM images, even when performing live search and alignment procedures. Denoising is highly desirable to improve the SNR of experimental data, providing opportunities for greater information recovery, for lowering the electron fluence or for taking experimental data sets at higher frame rates. These new imaging capabilities can be used to reduce the potential for electron beam-induced changes to the system or for investigating atomic-scale dynamic behaviour with improved temporal resolution. We demonstrate the potential of the technique for atomic resolution imaging of Au adatoms on graphene surrounded by liquid, a material system with a strongly crystalline component. We expect that the technique may be applicable wherever uncorrelated image noise is a significant factor, such as environmental cell or low-dose TEM/STEM.

Methods

Sample preparation

The cells were constructed using layer-by-layer transfer of 2D crystals onto a SiN support grid as described previously17,48. The cell was filled with a solution of HAuCl4 salt dissolved in organic solvent to generate atomically dispersed species of Au and Au clusters on a graphene surface surrounded by liquid. The upper and lower graphene windows are few layer graphene (3-4 layers or ~1 nm thick) and the liquid thickness is controlled by the thickness of the boron nitride spacer crystal to be 30–50 nm (Fig. 1). The samples were imaged using a double corrected GrandARM JEOL ARM300CF STEM with a convergence angle of 32 mrad, an accelerating voltage of 80 kV. Simultaneous bright field and annular dark field STEM images were acquired with acceptance angles of up to 18.7 mrad and between 46.8 mrad and 169.3 mrad, respectively. Frame sizes were 512 × 512 pixels. In total 16,950 frames were acquired as video sequences with ~ 100 frames per video.

Multislice simulations

Multislice image simulations were performed using the abTEM software49. Atomic coordinates were for a 4-layer sheet of Bernal stacked graphene, with gold atoms randomly deposited on the upper surface. Simulation parameters were matched to the JEOL GrandARM experimental data.

White noise (Gaussian) noise was added to the simulated images in Fig. 6 as additive Gaussian white noise (AGWN). PSNRs were simulated in the range of ~7–20 dB, with values of 16.4 and 16.2 for the ADF and BF channels, respectively, found to qualitatively provide the best match to the SNR of the experimental images.

Denoising

All denoising processing was performed on a Nvidia A5000 equipped workstation. Gaussian blurring was performed frame-wise and channel-independently with a standard deviation of the Gaussian kernel of σ = 1 px throughout the dataset. Kernels of σ = 2 px and σ = 0.5 px were also tested but found to give poorer performance, so are not included in the results.

Total variation denoising was performed using the Chambolle algorithm50 as implemented by scikit-image v0.20.0. This implementation takes as a parameter \(\frac{1}{\lambda }\), which was chosen as 1.0 for the ADF channel and 0.75 for the BF channel. These values were chosen as optimal after performing denoising calculations for \(\frac{1}{\lambda }\) = 0.1–2.0 in increments of 0.1.

BM3D47 denoising was performed using the Python library bm3d from the Python Package Index. Optimum sigma PSD parameters for the simulated images were found by testing values between 0.4 and 1.2 separately for each image channel. For the scaling of our simulations, values of 0.8 and 0.4 were found to be optimal for the ADF and BF image channels, respectively.

For the Noise2Void denoising, the UNet has a depth of four, with 64 feature channels at the output of the first layer. Average pooling is used, and the size of the convolutional kernel in the first layer is increased to 5 × 5 kernels with 3 × 3 kernels used elsewhere. Our modified Noise2Void model was initially trained on 8475 frames from a full data set of 16,750 frames with the training data extracted by sampling every other frame. All frames are dual-channel ADF and BF STEM images. No data augmentation was used and the Adam optimiser with a constant learning rate of 10−5 was used to train the model. Default initialisation was used for model weights. Training lasted for 32 epochs with a batch size of 12 dual-channel images. Noise2Void pixel masks were selected in a grid of pixels, with grid spacing equal to 24 px. These grid-points were then modified/jittered by randomly translating them by up to two pixels in each direction (see Fig. 2).

We also tested the performance of our modified Noise2Void model on new experimental data. We compared this to retraining of the model (transfer training), where the previously trained model was used as a starting point and this was retrained on a subset of 615 images from the new experimental data. The original and retrained model were also compared to a completely new (randomly initialised) model trained exclusively on this new data (both using 615 frames from the total data set of 1230 frames and using all the new data) (section ‘Transfer training’).

Noise metrics

Values of PSNR, SSIM and N-RMSE were calculated using scikit-image’s metrics module. SNR values were calculated by dividing the peak intensity (above the mean background) by the root-mean-square background noise.

The peak signal-to-noise ratio (PSNR), measured in decibels (dB) is calculated as

for data range R and mean-squared error MSE.

The calculation of SSIM is more involved, incorporating the luminance, contrast and structure of the images with

for images x, y where μ and σ represent the mean and standard deviations and C are small constants for numerical stability. This is fully described and justified in ref. 51.

Normalised root mean square error (N-RMSE) was calculated as

for each pixel n out of a total of N pixels, each with intensity In and where \({I}_{\min }\), \({I}_{\max }\) are the minimum and maximum intensities in the image and μI is the mean pixel intensity.

Data availability

Data supporting these findings, including experimental videos, simulated images, model weights and denoised output is made available upon reasonable request. Code for training and applying the Noise2Void models, and for generating the simulations, is made available at https://github.com/wilot/N2V-for-Au-GLC.

Code availability

Code for training and applying the Noise2Void models, and for generating the simulations, is made available at https://github.com/wilot/N2V-for-Au-GLC.

References

Erni, R., Rossell, M. D., Kisielowski, C. & Dahmen, U. Atomic-resolution imaging with a sub-50-pm electron probe. Phys. Rev. Lett. 102, 096101 (2009).

Liu, J. J. Advances and applications of atomic-resolution scanning transmission electron microscopy. Microsc. Microanal. 27, 943–995 (2021).

Morishita, S. et al. Attainment of 40.5 pm spatial resolution using 300 kV scanning transmission electron microscope equipped with fifth-order aberration corrector. Microscopy 67, 46–50 (2017).

Sawada, H. et al. STEM imaging of 47-pm-separated atomic columns by a spherical aberration-corrected electron microscope with a 300-kV cold field emission gun. J. Electron Microsc. 58, 357–361 (2009).

Rose, H. Information transfer in transmission electron microscopy. Ultramicroscopy 15, 173–191 (1984).

Lee, Z., Rose, H., Lehtinen, O., Biskupek, J. & Kaiser, U. Electron dose dependence of signal-to-noise ratio, atom contrast and resolution in transmission electron microscope images. Ultramicroscopy 145, 3–12 (2014).

Tien, E.-P. et al. Electron beam and thermal stabilities of MFM-300 (M) metal–organic frameworks. J. Mater. Chem. A 12, 24165–24174 (2024).

Ghosh, S., Kumar, P., Conrad, S., Tsapatsis, M. & Mkhoyan, K. A. Electron-beam-damage in metal organic frameworks in the TEM. Microsc. Microanal. 25, 1704–1705 (2019).

Chen, Q. et al. Imaging beam-sensitive materials by electron microscopy. Adv. Mater. 32, 1907619 (2020).

Fan, L., Zhang, F., Fan, H. & Zhang, C. Brief review of image denoising techniques. Vis. Comput. Ind. Biomed. Art. 2, 7 (2019).

de Jonge, N. Theory of the spatial resolution of (scanning) transmission electron microscopy in liquid water or ice layers. Ultramicroscopy 187, 113–125 (2018).

de Jonge, N., Houben, L., Dunin-Borkowski, R. E. & Ross, F. M. Resolution and aberration correction in liquid cell transmission electron microscopy. Nat. Rev. Mater. 4, 61–78 (2019).

Ilett, M. et al. Analysis of complex, beam-sensitive materials by transmission electron microscopy and associated techniques. Philos. Trans. R. Soc. A: Math., Phys. Eng. Sci. 378, 20190601 (2020).

Howe, J. M. & Saka, H. In situ transmission electron microscopy studies of the solid-liquid interface. MRS Bull. 29, 951–957 (2004).

Zhang, Q. et al. Atomic dynamics of electrified solid–liquid interfaces in liquid-cell TEM. Nature 630, 643–647 (2024).

Pu, S., Gong, C. & Robertson, A. W. Liquid cell transmission electron microscopy and its applications. R. Soc. Open Sci. 7, 191204 (2020).

Clark, N. et al. Tracking single adatoms in liquid in a transmission electron microscope. Nature 609, 942–947 (2022).

Wang, C., Qiao, Q., Shokuhfar, T. & Klie, R. F. High-resolution electron microscopy and spectroscopy of ferritin in biocompatible graphene liquid cells and graphene sandwiches. Adv. Mater. 26, 3410–3414 (2014).

Ross, F. M. Opportunities and challenges in liquid cell electron microscopy. Science 350, aaa9886 (2015).

Park, J. et al. Graphene liquid cell electron microscopy: progress, applications, and perspectives. ACS Nano 15, 288–308 (2021).

Abellan, P. et al. Factors influencing quantitative liquid (scanning) transmission electron microscopy. Chem. Commun. 50, 4873–4880 (2014).

Lee, J., Nicholls, D., Browning, N. D. & Mehdi, B. L. Controlling radiolysis chemistry on the nanoscale in liquid cell scanning transmission electron microscopy. Phys. Chem. Chem. Phys. 23, 17766–17773 (2021).

Tian, C. et al. Deep learning on image denoising: an overview. Neural Netw. 131, 251–275 (2020).

Wang, F., Henninen, T. R., Keller, D. & Erni, R. Noise2atom: unsupervised denoising for scanning transmission electron microscopy images. Appl. Microsc. 50, 23 (2020).

Khan, A., Lee, C.-H., Huang, P. Y. & Clark, B. K. Leveraging generative adversarial networks to create realistic scanning transmission electron microscopy images. npj Comput. Mater. 9, 85 (2023).

Ede, J. M. & Beanland, R. Improving electron micrograph signal-to-noise with an atrous convolutional encoder-decoder. Ultramicroscopy 202, 18–25 (2019).

Kawahara, K., Ishikawa, R., Sasano, S., Shibata, N. & Ikuhara, Y. Atomic-resolution STEM image denoising by total variation regularization. Microscopy 71, 302–310 (2022).

Mevenkamp, N. et al. Poisson noise removal from high-resolution stem images based on periodic block matching. Adv. Struct. Chem. Imaging 1, 3 (2015).

Yankovich, A. B. et al. Non-rigid registration and non-local principle component analysis to improve electron microscopy spectrum images. Nanotechnology 27, 364001 (2016).

Roels, J. et al. An overview of state-of-the-art image restoration in electron microscopy. J. Microsc. 271, 239–254 (2018).

Marchello, G., De Pace, C., Duro-Castano, A., Battaglia, G. & Ruiz-Pérez, L. End-to-end image analysis pipeline for liquid-phase electron microscopy. J. Microsc. 279, 242–248 (2020).

Reboul, C. F. et al. Single: Atomic-resolution structure identification of nanocrystals by graphene liquid cell em. Sci. Adv. 7, eabe6679 (2021).

Frangakis, A. S. It’s noisy out there! a review of denoising techniques in cryo-electron tomography. J. Struct. Biol. 213, 107804 (2021).

Krull, A., Buchholz, T.-O. & Jug, F. Noise2Void - Learning Denoising From Single Noisy Images. In: Proc. IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2124–2132 (2019).

Ronneberger, O., Fischer, P. & Brox, T. U-Net: Convolutional networks for biomedical image segmentation. In Navab, N., Hornegger, J., Wells, W. M. & Frangi, A. F. (eds.) Medical Image Computing and Computer-Assisted Intervention – MICCAI 2015, 234–241 (Springer International Publishing, Cham, 2015).

Lehtinen, J. et al. Noise2Noise: Learning image restoration without clean data. In Dy, J. & Krause, A. (eds.) Proc. 35th International Conference on Machine Learning, Vol. 80 of Proceedings of Machine Learning Research, 2965–2974 (PMLR, 2018).

Batson, J. & Royer, L. Noise2Self: Blind denoising by self-supervision. In Chaudhuri, K. & Salakhutdinov, R. (eds.) Proc. 36th International Conference on Machine Learning, Vol. 97 of Proceedings of Machine Learning Research, 524–533 (PMLR, 2019).

Höck, E., Buchholz, T.-O., Brachmann, A., Jug, F. & Freytag, A. N2V2 - Fixing Noise2Void checkerboard artifacts with modified sampling strategies and a tweaked network architecture. In Karlinsky, L., Michaeli, T. & Nishino, K. (eds.) Computer Vision – ECCV 2022 Workshops, 503–518 (Springer Nature Switzerland, 2023).

Welch, D. A., Faller, R., Evans, J. E. & Browning, N. D. Simulating realistic imaging conditions for in situ liquid microscopy. Ultramicroscopy 135, 36–42 (2013).

Krivanek, O. L. et al. Atom-by-atom structural and chemical analysis by annular dark-field electron microscopy. Nature 464, 571–574 (2010).

Cowley, J. Scanning transmission electron microscopy of thin specimens. Ultramicroscopy 2, 3–16 (1976).

Odena, A., Dumoulin, V. & Olah, C. Deconvolution and checkerboard artifacts. Distill https://doi.org/10.23915/distill.00003 (2016).

Chollet, F. Xception: Deep learning with depthwise separable convolutions. In Proc. IEEE Conference on Computer Vision and Pattern Recognition (CVPR) (IEEE, 2017).

Chen, L.-C., Papandreou, G., Kokkinos, I., Murphy, K. & Yuille, A. L. Deeplab: Semantic image segmentation with deep convolutional nets, atrous convolution, and fully connected crfs. IEEE Trans. Pattern Anal. Mach. Intell. 40, 834–848 (2018).

Wang, Z. & Bovik, A. C. Mean squared error: love it or leave it? A new look at signal fidelity measures. IEEE Signal Process. Mag. 26, 98–117 (2009).

van der Walt, S. et al. scikit-image: image processing in Python. PeerJ 2, e453 (2014).

Dabov, K., Foi, A., Katkovnik, V. & Egiazarian, K. Image denoising by sparse 3-d transform-domain collaborative filtering. IEEE Trans. Image Process. 16, 2080–2095 (2007).

Kelly, D. J. et al. Nanometer resolution elemental mapping in graphene-based TEM liquid cells. Nano Lett. 18, 1168–1174 (2018).

Madsen, J. & Susi, T. abTEM: Transmission electron microscopy from first principles. Open Res. Eur. 1, 13015 (2021).

Chambolle, A. An algorithm for total variation minimization and applications. J. Math. Imaging Vis. 20, 89–97 (2004).

Wang, Z., Bovik, A., Sheikh, H. & Simoncelli, E. Image quality assessment: from error visibility to structural similarity. IEEE Trans. Image Process. 13, 600–612 (2004).

Acknowledgements

The authors acknowledge funding from the European Research Council (ERC) under the European Union’s Horizon 2020 research and innovation programme (Grant ERC-2016-STG-EvoluTEM-715502 and QTWIST (no.101001515)). We also thank the Engineering and Physical Sciences Research Council (EPSRC) for funding under grants EP/Y024303, EP/S021531/1, EP/M010619/1, EP/V007033/1, EP/S030719/1, EP/V001914/1, EP/V036343/1 and EP/P009050/1 and also for the EPSRC Centre for Doctoral Training(CDT) Graphene-NOWNANO. TEM access was supported by the Henry Royce Institute for Advanced Materials, funded through EPSRC grants EP/R00661X/1, EP/S019367/1, EP/P025021/1 and EP/P025498/1. RVG. acknowledges funding from the European Quantum Flagship Project 2DSIPC (no. 820378). We thank Diamond Light Source for access and support in use of the electron Physical Science Imaging Centre (Instrument E02 and proposal numbers MG33252 and MG35552) that contributed to the results presented here.

Author information

Authors and Affiliations

Contributions

W.T. implemented the Noise2Void architecture, its modifications, performed the training, simulations, comparisons and timings. S.S.-A. and N.C. fabricated the graphene liquid cell samples. R.C. and S.S.-A. performed the TEM imaging. S.J.H. and R.G. supervised this research. W.T. wrote this manuscript and S.S.-A., N.C. and S.J.H. contributed to writing and editing this manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Thornley, W., Sullivan-Allsop, S., Cai, R. et al. Noise2Void for denoising atomic resolution scanning transmission electron microscopy images. npj Comput Mater 12, 68 (2026). https://doi.org/10.1038/s41524-025-01939-1

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41524-025-01939-1