Abstract

To address the problem that the sand cat swarm optimization (SCSO) algorithm suffers from declining convergence speed and a tendency to fall into local optima during iteration, this paper proposes a multi-strategy enhanced sand cat swarm optimization (MESCSO) algorithm that improves its ability to escape local optima and its convergence efficiency. Firstly, an improved sine mapping combined with random opposition-based learning (ISMROBL) is employed during population initialization to enhance the uniformity and diversity of initial solutions. Secondly, a nonlinear decreasing parameter is introduced to dynamically balance global exploration and local exploitation. Thirdly, generalized quadratic interpolation (GQI) is incorporated in the exploitation phase to strengthen local search, while the improved mean differential mutation (IMDM) strategy is applied in the exploration phase to enhance global search capability. Finally, accelerated opposition-based learning (AOBL) is applied to refine individual positions and improve the algorithm’s ability to escape local optima. Experimental results on 23 standard benchmark functions and the CEC2014 benchmark functions show that MESCSO outperforms nine comparison algorithms. In addition, MESCSO is tested on five constrained engineering design problems. The results demonstrate that, compared with SCSO, MESCSO yields improvements of 0.53%, 1.47%, 0.03%, 0.41%, and 0.31%, respectively, thereby confirming its effectiveness and applicability.

Introduction

With the rapid advancement of science and technology, numerous complex optimization problems have arisen in engineering domains. These problems are often characterized by high dimensionality, nonlinearity, multimodality, or discreteness. Traditional optimization methods, however, are typically limited by problem structure and scale, resulting in suboptimal performance in practical scenarios. Specifically, conventional approaches often involve high computational complexity and slow convergence, making it difficult to efficiently locate global optima within a reasonable timeframe when dealing with large-scale or highly complex problems1,2. Furthermore, in practice, constructing accurate mathematical models is often challenging and the optimization process is frequently subject to various constraints. These challenges further expose the limitations of traditional techniques and hinder their ability to deliver fast and high-precision solutions.

Metaheuristic algorithms (MAs) are a class of optimization methods inspired by natural phenomena, biological swarm behavior, or human social activities. These algorithms typically exhibit strong global search capabilities and do not rely on the specific mathematical characteristics or precise models of the problem. As a result, they are well-suited for solving high-dimensional, nonlinear, multimodal, discrete, and complex optimization problems with constraints. Owing to their flexibility and robustness, MAs are particularly effective in addressing complex scenarios commonly encountered in practical engineering problems.

MAs can generally be categorized into four groups based on their inspiration sources or operating mechanisms: evolutionary-based algorithms, swarm-based algorithms, physical-based algorithms, and human-based algorithms.

Evolutionary-based algorithms are inspired by biological evolution theory. These algorithms simulate natural selection, reproduction, and mutation processes to perform optimization. The most representative example is the Genetic Algorithm (GA)3, which is grounded in Darwin’s theory of evolution and applies selection, crossover, and mutation to simulate natural evolution. Related algorithms include Evolutionary Strategy (ES)4, Genetic Programming (GP)5, Differential Evolution (DE)6, and Evolutionary Programming (EP)7.

Swarm-based algorithms simulate collective behaviors and cooperative mechanisms observed in biological swarms. Particle Swarm Optimization (PSO)8 simulates the foraging behavior of birds, the Sparrow Search Algorithm (SSA)9 simulates the foraging and vigilance of sparrow populations, the Dragonfly Algorithm (DA)10 simulates dragonfly predation and migration, and the Firefly Algorithm (FA)11 is based on the mutual attraction among fireflies via light signals. Other notable swarm-based algorithms include Sled Dog Optimizer (SDO)12, Snow Geese Algorithm (SGA)13, Red-billed Blue Magpie Optimizer (RBMO)14, and Blood-Sucking Leech Optimizer (BSLO)15.

Physical-based algorithms are inspired by fundamental principles in physics, such as mechanics, thermodynamics, and electromagnetism. These methods simulate the physical properties and dynamic behaviors of particles or substances to address optimization problems. Simulated Annealing (SA)16 is a classic example that emulates the annealing process in metallurgy, where temperature decreases gradually to achieve system stability. Similar algorithms include Atom Search Optimization (ASO)17, Gravitational Search Algorithm (GSA)18, Chernobyl Disaster Optimizer (CDO)19, Energy Valley Optimizer (EVO)20, Nuclear Reaction Optimization (NRO)21, and Electromagnetic Field Optimization (EFO)22.

Human-based algorithms are inspired by interactions, decision-making, and social phenomena in human societies. These algorithms simulate dynamic processes in collective human behavior. Brain Storm Optimization (BSO)23 simulates the brainstorming process, the Queuing Search Algorithm (QSA)24 draws inspiration from human queuing behavior, and Teaching-Learning-Based Optimization (TLBO)25 simulates the interactions between teachers and learners in a classroom. Similar algorithms include Mountaineering Team-Based Optimization (MTBO)26, Poor And Rich Optimization (PRO)27, Gaining Sharing Knowledge Based Algorithm (GSK)28, Gold Rush Optimizer (GRO)29, and Cognitive Learning Optimizer (CLO)30.

In recent years, due to the growing complexity and diversity of real-world optimization problems, the field of metaheuristic optimization has undergone significant advancements. Numerous novel algorithms have been proposed to improve global search capability, convergence speed, and solution robustness. The Frigatebird Optimizer (FBO)31 simulates the food-snatching and predatory behaviors of frigatebirds. It operates in two stages: the first emulates the disturbance behavior used to steal food from other seabirds, enabling global exploration; the second simulates diving attacks to guide individuals in converging toward the optimal solution, thereby facilitating local exploitation. The Gyro Fireworks Algorithm (GFA)32 is inspired by the firing process of a gyro-style firework and adopts a multi-stage search strategy. During early iterations, the algorithm assumes a fully fueled firework, enabling extensive global exploration via Lévy flight and spiral motion. As the “fuel” depletes in later iterations, the search narrows with spiral convergence toward optimal solutions, thereby enhancing local refinement. The Fishing Cat Optimizer (FCO)33 divides the search process into four stages, reflecting the ambush and hunting strategies of fishing cats. In the early phase, it simulates waiting and sensing behaviors to perform alternating expansive and contractive global exploration. In the later phase, it simulates diving and capturing behaviors to conduct a similarly alternating local exploitation strategy. Through this four-phase dynamic mechanism, FCO33 achieves an effective balance between exploration and exploitation, thereby enhancing search efficiency and the capability to avoid local optima.

Although MAs have demonstrated broad applicability in solving complex non-linear and multimodal problems, their optimization performance varies significantly across different application scenarios. According to the “No Free Lunch” (NFL) theorem34, no single algorithm can achieve optimal performance on all types of problems. As a result, continuously exploring and incorporating more adaptive improvement strategies has become essential for enhancing the generality and practical value of metaheuristic algorithms. This research direction has gradually emerged as a core trend in the development and advancement of metaheuristics in recent years35.

The Sand Cat Swarm Optimization algorithm (SCSO)36 is a swarm-based metaheuristic algorithm proposed by Amir Seyyedabbasi et al. in 2022. SCSO simulates the hunting behavior of sand cats in the wild, in particular their keen perception of low-frequency sounds and their ability to lock onto prey. By modeling the two phases of detecting and attacking prey, it balances global exploration and local exploitation. Several improved variants have since been proposed. Peng et al. proposed a Multi-strategy Integrated Sand Cat Swarm Optimization Algorithm (MSCSO)37, which integrates a good point set strategy, dynamic nonlinear adjustment of the search range, and an alert mechanism from the Sparrow Search Algorithm to enhance global search ability. Li et al. proposed an improved version of SCSO, called CWXSCSO38, which employs a new dynamic exponential factor, an elite decentralization strategy, and a crossbar strategy to enhance the global optimization capability. Cai et al. proposed an Improved Sand Cat Swarm Optimization Based on Lens Opposition-based Learning and Sparrow Search Algorithm (LSSCSO)39, which combines dynamic spiral search with lens opposition-based learning to broaden the search range and improve population diversity. Zhang et al. proposed an Improved Multi-Strategy Sand Cat Optimization Algorithm (IMSCSO)40, which incorporates fitness-distance balancing based on roulette selection, population perturbation, and a best-worst mutation mechanism to enhance search performance and avoid premature convergence. These improved SCSO algorithms are summarized in Table 1.

Although numerous MAs have been widely applied to complex optimization problems, their performance still varies significantly across different tasks, indicating that their adaptability and generalization capabilities require further improvement. In recent years, strategy fusion and mechanism coordination have emerged as key research directions for enhancing algorithmic performance35. To this end, this paper proposes a Multi-Strategy Enhanced Sand Cat Swarm Optimization Algorithm (MESCSO) aimed at comprehensively improving optimization effectiveness.

The enhancement strategies in the MESCSO algorithm focus on five main aspects. Firstly, to improve the quality of the initial population, an improved sine mapping is combined with a random opposition-based learning mechanism during initialization. This strategy enhances population diversity and broadens the search space for potential optimal solutions. Secondly, a new nonlinear decreasing parameter is designed to dynamically regulate the search, improving adaptability and enabling better coordination between exploration and exploitation at different stages. Thirdly, a generalized quadratic interpolation (GQI) strategy is introduced during the exploitation phase, allowing individuals to escape local regions and approach the global optimum more efficiently. Fourthly, an improved mean differential mutation (IMDM) strategy is employed in the exploration phase, which leverages average differences among individuals to enhance global search capability in high-dimensional complex spaces and prevent premature convergence. Finally, an accelerated opposition-based learning (AOBL) mechanism is applied after each iteration with a probability determined by a random threshold to refine population positions. Combined with a greedy selection strategy, it preserves individuals with better fitness, thereby improving both population diversity and overall search performance.

Through the integration of the five aforementioned strategies, the global exploration ability of the MESCSO algorithm is enhanced and its convergence performance is improved. To comprehensively evaluate its optimization capability, extensive experiments were conducted on 23 standard benchmark functions and the CEC2014 benchmark functions. The experimental analysis includes statistical results, convergence curves, box plots, and the Wilcoxon rank-sum test. Furthermore, to verify the practical applicability of the proposed algorithm in engineering optimization, five representative engineering problems were selected for testing. The experimental results demonstrate that the MESCSO algorithm performs well in solving complex optimization problems.

The main contributions of this paper are as follows:

1. The ISMROBL strategy is employed to enhance the population diversity of sand cats, thereby improving the algorithm’s global optimization capability.

2. An IMDM strategy is adopted during the exploration phase to expand the search space and further strengthen global exploration.

3. In the exploitation phase, a GQI strategy is incorporated to help sand cat individuals escape local optima and locate better solutions, enhancing the algorithm’s search performance.

4. An AOBL mechanism is introduced after each iteration to dynamically correct individual positions according to the probabilities. When trapped in local optima, the MESCSO algorithm applies opposition-based random reinitialization to improve its ability to escape.

5. The proposed MESCSO algorithm is evaluated and compared with the other nine algorithms, demonstrating superior optimization performance.

The remainder of this paper is organized as follows: “The sand cat swarm optimization algorithm (SCSO)” section reviews the fundamental principles of the SCSO algorithm, and “The multi-strategy enhanced sand cat swarm optimization algorithm (MESCSO)” section presents the improvement strategies of the MESCSO algorithm. “Experimental results and analysis” section reports the experimental results on 23 standard benchmark functions and the CEC2014 benchmark functions. “Structural engineering optimization problems” section evaluates the performance of the MESCSO algorithm on five constrained engineering design problems. Finally, “Conclusions” section concludes this paper.

The sand cat swarm optimization algorithm (SCSO)

Initialize population

In the initialization phase of the SCSO algorithm, the problem dimension d and the number of sand cat individuals m must first be defined. The position of each sand cat is represented as \(X = (x_1, x_2, \dots , x_d)\), where each component \(x_i\) must be located between the lower and upper boundaries. By generating independent random values for each dimension within the search space, an initial solution matrix of size \(m \times d\) is formed, as shown in Eq. (1). Each individual in this initial population is then evaluated using the fitness function, and the results are ranked to identify and record the position of the best-performing sand cat. In subsequent generations, the remaining sand cats iteratively approach this optimal position or explore its vicinity, aiming to improve the overall solution quality. If the optimal solution found in the current generation outperforms that of the previous generation, it is stored as the new global best. Otherwise, the global best remains unchanged.

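\[x_{i,j} = lb_j + rand(0,1) \times (ub_j - lb_j), \quad i = 1, \dots , m,\ j = 1, \dots , d \qquad (1)\]

where ub and lb represent the upper and lower limits of the search area, and rand(0, 1) is a random number between 0 and 1.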
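The initialization described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the function names, the per-dimension bound lists, and the `sphere` objective are assumptions introduced for the example.

```python
import random

def init_population(m, d, lb, ub, rng):
    """Return m random positions of dimension d with lb[j] <= x[j] <= ub[j]."""
    return [[lb[j] + rng.random() * (ub[j] - lb[j]) for j in range(d)]
            for _ in range(m)]

def sphere(x):
    """Hypothetical test objective (not from the paper): sum of squares."""
    return sum(v * v for v in x)

def best_of(population, fitness):
    """Return (best_position, best_fitness) for a minimization problem."""
    best = min(population, key=fitness)
    return best, fitness(best)

rng = random.Random(0)  # fixed seed so the sketch is reproducible
pop = init_population(m=5, d=3, lb=[-10.0] * 3, ub=[10.0] * 3, rng=rng)
best, f_best = best_of(pop, sphere)
```

Here `pop` plays the role of the \(m \times d\) solution matrix, and `best` is the position that the remaining sand cats would approach in the next generation.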

Exploration phase

During the exploration phase, sand cats rely on their high sensitivity to low-frequency sound waves to detect the location of prey. Their sensitivity to low-frequency signals is defined within a specific range denoted as \(r_G\). To quantify this auditory sensitivity, a parameter \(S_M\) is introduced to represent the maximum detectable range of the sound, and its value is set to 2, which indicates that the sensitivity gradually decays from 2 kHz to 0. As the number of iterations t increases, the sensitivity is decreased linearly according to Eq. (2).

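\[r_G = S_M - \frac{S_M \times t}{T} \qquad (2)\]

where t represents the current iteration number, and T denotes the maximum number of iterations. Based on this, a global control factor R is defined to balance the processes of exploration and exploitation.

\[R = 2 \times r_G \times rand(0,1) - r_G \qquad (3)\]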

The actual sensitivity radius r of each sand cat is determined by the following equation.

\[r = r_G \times rand(0,1) \qquad (4)\]

Subsequently, each sand cat updates its position \(X(t+1)\) for the next generation by comparing its current position \(X_c(t)\) with the current best candidate position \(X_{bc}(t)\). The position update is defined as:

\[X(t+1) = r \cdot \left( X_{bc}(t) - rand(0,1) \cdot X_c(t)\right) \qquad (5)\]
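The exploration quantities can be sketched as follows, assuming the standard SCSO formulation: \(r_G\) decreases linearly from \(S_M\) to 0, R is uniform in \([-r_G, r_G]\), and \(r = r_G \times rand(0,1)\). Drawing a fresh random number per dimension in the position update is an assumption of this sketch, as are the function names.

```python
import random

S_M = 2.0  # maximum sensitivity, as stated in the text

def sensitivity(t, T, s_m=S_M):
    """Linearly decreasing r_G: s_m at t = 0 and 0 at t = T."""
    return s_m - s_m * t / T

def control_factor(r_g, rng):
    """Global control factor R, drawn uniformly from [-r_G, r_G]."""
    return 2.0 * r_g * rng.random() - r_g

def explore(x_c, x_bc, r_g, rng):
    """Exploration move X(t+1) = r * (X_bc - rand * X_c), with r = r_G * rand."""
    r = r_g * rng.random()
    return [r * (bc - rng.random() * c) for c, bc in zip(x_c, x_bc)]
```

Because r shrinks together with \(r_G\), the exploration step becomes progressively smaller as t approaches T.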

Exploitation phase

In the exploitation phase, it is assumed that the sand cat’s hunting range is represented as a circular area. Each sand cat randomly generates an attack angle \(\theta\) using a roulette wheel selection mechanism. The value of \(\theta\) is randomly selected within the range of \(0^\circ\) to \(360^\circ\). This angle determines the direction in which the sand cat moves within the circular region. To describe the distance between the sand cat and its prey, Eq. (6) is defined as follows:

\[X_{rand} = \left| rand(0,1) \cdot X_b(t) - X_c(t)\right| \qquad (6)\]

where \(X_{rand}\) represents the distance between the current best position \(X_b(t)\) and the current position \(X_c(t)\) of each sand cat in generation t. Then, based on the auditory sensitivity radius r and the randomly generated angle \(\theta\), the updated position of the sand cat in generation \(t +1\), denoted as \(X(t+1)\), is obtained as follows:

\[X(t+1) = X_b(t) - r \cdot X_{rand} \cdot \cos (\theta ) \qquad (7)\]

By introducing random angles and a cosine factor, sand cats are enabled to move and hunt in various directions. As the iteration progresses, the sand cats continuously reduce their search radius and attempt to lock onto prey more precisely. This mechanism enhances the diversity of the algorithm and effectively suppresses premature convergence.
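A minimal Python sketch of this exploitation move is given below, assuming the standard SCSO form in which the new position is the best position minus \(r \cdot X_{rand} \cdot \cos \theta\). The angle is drawn uniformly from \(0^\circ\) to \(360^\circ\) as a simple stand-in for roulette wheel selection; that substitution and the function name are assumptions of the example.

```python
import math
import random

def exploit(x_c, x_b, r, rng):
    """Exploitation move X(t+1) = X_b - r * X_rand * cos(theta)."""
    # Uniform angle in degrees; a stand-in for roulette wheel selection.
    theta = math.radians(rng.uniform(0.0, 360.0))
    new_pos = []
    for c, b in zip(x_c, x_b):
        x_rand = abs(rng.random() * b - c)  # randomized distance to the best position
        new_pos.append(b - r * x_rand * math.cos(theta))
    return new_pos
```

With r = 0 the sand cat lands exactly on the best position, which matches the description of the search radius shrinking as the iterations progress.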

Bridging phase

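The SCSO algorithm achieves seamless switching between prey-searching and prey-attacking modes through a global control factor R, as defined in Eq. (3). When \(|R| \le 1\), the sand cat performs a prey-attacking operation (exploitation phase). Otherwise, it remains in the prey-searching operation (exploration phase). The specific position update rule is given in Eq. (8).

\[X(t+1) = {\left\{ \begin{array}{ll} X_b(t) - r \cdot X_{rand} \cdot \cos (\theta ), &{} |R| \le 1 \\ r \cdot \left( X_{bc}(t) - rand(0,1) \cdot X_c(t)\right) , &{} |R| > 1 \end{array}\right. } \qquad (8)\]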

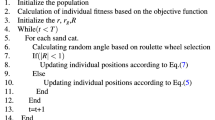

Through the above mechanism, the SCSO algorithm can dynamically adjust its search strategy at different stages and maintain a relatively fast convergence rate. The corresponding pseudo-code is presented in Algorithm 1.

Algorithm 1 Pseudo-code of the SCSO algorithm
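The overall loop can be sketched in Python as follows. This is a compact illustration of the phases described in this section under several stated assumptions, not a reproduction of Algorithm 1: the global best is used as the best candidate position in both phases, out-of-range positions are clipped to the bounds, the attack angle is drawn uniformly, and the `sphere` objective is a hypothetical test function.

```python
import math
import random

def sphere(x):
    """Hypothetical test objective (not from the paper): sum of squares."""
    return sum(v * v for v in x)

def scso(fitness, lb, ub, m=30, T=200, s_m=2.0, seed=0):
    """Minimal SCSO loop for minimization; returns (best_position, best_fitness)."""
    rng = random.Random(seed)
    d = len(lb)

    def clip(x):
        # Boundary handling by clipping is an assumption of this sketch.
        return [min(max(v, lb[j]), ub[j]) for j, v in enumerate(x)]

    pop = [[lb[j] + rng.random() * (ub[j] - lb[j]) for j in range(d)]
           for _ in range(m)]
    best = min(pop, key=fitness)[:]
    f_best = fitness(best)

    for t in range(T):
        r_g = s_m - s_m * t / T                    # linearly decreasing sensitivity
        for i, x_c in enumerate(pop):
            r = r_g * rng.random()                 # sensitivity radius of this cat
            R = 2.0 * r_g * rng.random() - r_g     # global control factor
            if abs(R) <= 1.0:                      # exploitation: attack the prey
                theta = math.radians(rng.uniform(0.0, 360.0))
                new = [b - r * abs(rng.random() * b - c) * math.cos(theta)
                       for c, b in zip(x_c, best)]
            else:                                  # exploration: search for prey
                new = [r * (b - rng.random() * c) for c, b in zip(x_c, best)]
            pop[i] = clip(new)
        cand = min(pop, key=fitness)
        f_cand = fitness(cand)
        if f_cand < f_best:                        # keep the global best only if improved
            best, f_best = cand[:], f_cand
    return best, f_best

best, f_best = scso(sphere, [-10.0] * 5, [10.0] * 5, m=20, T=100, seed=1)
```

The global best is updated only when a generation produces a strictly better solution, so `f_best` never increases over the run.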

The multi-strategy enhanced sand cat swarm optimization algorithm (MESCSO)

ISMROBL population diversity

Since the SCSO algorithm adopts a purely random strategy during the population initialization phase, it may produce an uneven distribution of individuals, thereby reducing the diversity and quality of the initial population and degrading the convergence performance of the algorithm. The initial position of each individual in the population plays a crucial role in determining the optimization effectiveness of swarm intelligence algorithms. Compared to traditional random generation based solely on probability, chaotic mapping is characterized by randomness, nonrepetitiveness, and unpredictability, which can help ensure better uniformity in population distribution. Based on this, the MESCSO algorithm incorporates chaotic mapping during population initialization to enhance the diversity of potential solutions. Chaotic mappings introduce nonlinear dynamic behavior, which strengthens the exploration ability and robustness of swarm intelligence algorithms and helps avoid premature convergence. Commonly used chaotic mappings include logistic mapping, tent mapping, cubic mapping, and sine mapping.

Sine mapping is widely used among chaotic mappings due to its simple structure and high computational efficiency, but its uneven probability density distribution limits its performance to some extent. To overcome this shortcoming, this paper improves the sine mapping to enhance its performance in terms of spatial traversal, population diversity, and uniformity of phase plane distribution. The improved sine chaotic mapping is shown in Eq. (9).

In Eq. (9), the initial values of \(a_i\) and \(b_i\) are randomly selected from the interval (0,1). The control parameters are set as \(k_1=5\), \(k_2=3\), and \(k_3=4\), and \(\mu =0.5\) denotes the nonlinear coupling strength coefficient. The variable \(y_{i+1}\) represents the normalized chaotic sequence generated by the chaotic mapping, and the operator “mod” denotes the modulo operation. To map the chaotic sequence to the actual solution space, it is necessary to perform scaling and translation so that the sequence falls within the defined problem domain [lb, ub]. The transformation equation is given as follows:

\[X_{sine} = lb + y_{i+1} \times (ub - lb)\]

where \(X_{sine}\) is a candidate solution generated using the improved sine mapping.
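The scaling step can be illustrated with a short Python sketch. Note that it uses the classical sine map \(y_{k+1} = a \sin (\pi y_k)\) as a stand-in, not the improved mapping of Eq. (9), whose coupled form with \(k_1\), \(k_2\), \(k_3\), and \(\mu\) is not reproduced here. The function names and the seed value `y0` are assumptions.

```python
import math

def sine_map_sequence(y0, n, a=1.0):
    """Classical sine map y_{k+1} = a * sin(pi * y_k); stays in [0, 1] for 0 < a <= 1."""
    seq, y = [], y0
    for _ in range(n):
        y = a * math.sin(math.pi * y)
        seq.append(y)
    return seq

def scale_to_bounds(y, lb, ub):
    """Map a normalized value y in [0, 1] to the interval [lb, ub]."""
    return lb + y * (ub - lb)

def chaotic_population(m, d, lb, ub, y0=0.37):
    """Fill an m x d population from one chaotic sequence, scaled per dimension."""
    seq = sine_map_sequence(y0, m * d)
    return [[scale_to_bounds(seq[i * d + j], lb[j], ub[j]) for j in range(d)]
            for i in range(m)]

pop = chaotic_population(4, 3, [-5.0] * 3, [5.0] * 3)
```

Any normalized chaotic sequence can be passed through `scale_to_bounds` in the same way, so replacing the classical map with an improved one only changes `sine_map_sequence`.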

The core idea of Opposition-Based Learning (OBL)41 is to compare the current solution with its corresponding opposite solution and select the better one to guide the next generation of evolution, thereby reducing the probability of falling into local optima. The basic formulation of OBL can be expressed as:

\[X_{OBL} = lb + ub - X\]

However, the original OBL strategy lacks sufficient randomness, which may lead to performance degradation in complex or high-dimensional search spaces. In such cases, the opposition solutions may suffer from limited diversity due to their uniform distribution. To address this issue, this paper introduces a random disturbance term to enhance the diversity and exploration ability of opposition solutions, resulting in the formulation of the Random Opposition-Based Learning (ROBL) strategy42, as described below:

\[X_{ROBL} = lb + ub - rand(0,1) \times X\]

where \(X_{ROBL}\) is a candidate solution generated using the ROBL strategy.
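The difference between the two opposition schemes can be sketched in Python. The sketch assumes the commonly used forms, \(lb + ub - X\) for OBL and \(lb + ub - rand \times X\) for ROBL. Clipping the random opposite back into the bounds is an added assumption, as are the function names.

```python
import random

def opposite(x, lb, ub):
    """Classical OBL: reflect each coordinate through the centre of [lb, ub]."""
    return [lb[j] + ub[j] - v for j, v in enumerate(x)]

def random_opposite(x, lb, ub, rng):
    """ROBL-style opposite lb + ub - rand * x, clipped back into the bounds."""
    out = []
    for j, v in enumerate(x):
        o = lb[j] + ub[j] - rng.random() * v
        out.append(min(max(o, lb[j]), ub[j]))
    return out
```

For a fixed position, `opposite` always returns the same point, whereas `random_opposite` returns a different point on each call, which is the source of the extra diversity discussed above.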

The ISMROBL population diversity strategy is as follows: first, the improved sine chaotic mapping strategy is employed to initialize the population. Then, the ROBL strategy is applied to generate opposition individuals. By comparing the fitness values of the original individuals and their opposites, the top N individuals with the best fitness are selected to form the final initial population. This initialization strategy enables the algorithm to begin the evolutionary process with individuals of higher fitness, thereby accelerating convergence.
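The final selection step of this strategy can be sketched as follows for a minimization problem. The population and opposite positions below are made-up illustrative values, and `sphere` is a hypothetical objective; only the merge-and-keep-the-best-N logic reflects the description above.

```python
def sphere(x):
    """Hypothetical test objective (not from the paper): sum of squares."""
    return sum(v * v for v in x)

def select_top_n(population, opposites, fitness, n):
    """Merge both sets and keep the n positions with the lowest fitness."""
    merged = population + opposites
    return sorted(merged, key=fitness)[:n]

pop = [[3.0, 4.0], [1.0, 1.0], [-6.0, 2.0]]      # fitness 25, 2, 40
opp = [[-3.0, -1.0], [0.5, -0.5], [6.0, -6.0]]   # fitness 10, 0.5, 72
initial = select_top_n(pop, opp, sphere, n=3)
```

In this example two of the three survivors come from the opposite set, so the run starts from a better population than the original one alone would provide.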

Nonlinear decreasing parameter

In the SCSO algorithm, the convergence factor \(r_G\) is gradually reduced from 2 to 0 in a linearly decreasing manner. However, this approach starts to gradually shift to local search only in the middle of the iteration, which results in low search efficiency and limited optimization effect. To address this limitation, this paper proposes a nonlinear decreasing parameter to enhance the exploration ability of the algorithm in the global search space. Compared with the SCSO algorithm, the improved algorithm maintains a stable exploration intensity at the beginning of the iterations to avoid missing potentially critical regions, and accelerates convergence in the middle and late stages to improve local exploitation efficiency. This nonlinear decreasing parameter helps to better balance global exploration and local exploitation during the whole iteration process, and its mathematical expression is as follows:
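To illustrate the qualitative behaviour described above, the sketch below compares the linear schedule of SCSO with a hypothetical cosine-shaped schedule. The cosine form is only a stand-in chosen because it stays high early and drops faster later. It is not the expression proposed in this paper, and both function names are assumptions.

```python
import math

def r_g_linear(t, T, s_m=2.0):
    """Linear schedule used by the original SCSO."""
    return s_m * (1.0 - t / T)

def r_g_cosine(t, T, s_m=2.0):
    """Hypothetical nonlinear schedule (not the paper's formula)."""
    return s_m * math.cos(0.5 * math.pi * t / T)

# Compare both schedules at the start, middle, and end of a 500-iteration run.
samples = [(t, r_g_linear(t, 500), r_g_cosine(t, 500)) for t in (0, 250, 500)]
```

Both schedules start at 2 and end at (numerically) 0, but the nonlinear one keeps a larger value through the early iterations and then falls more steeply.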

GQI strategy

Polynomial interpolation is a method that estimates a local minimum of the objective function by constructing a polynomial based on the function values at several specific points. However, traditional quadratic interpolation methods typically require three selected points, and their accuracy and performance are highly dependent on the distribution of these points. If the selected points are poorly distributed, the interpolation accuracy may degrade significantly, and the algorithm may waste computational resources in ineffective regions of the search space.

To address these shortcomings, this paper introduces a Generalized Quadratic Interpolation (GQI) strategy43, which constructs a quadratic model based on any three distinct points. This enables more accurate estimation of the local minimum of the objective function. The GQI method provides more explicit guidance for local search, thereby improving search efficiency and solution quality. The formulation of GQI for obtaining the minimum of the interpolation function under all conditions is given as follows:

where \(x_i\), \(x_j\), and \(x_k\) represent any three selected points. \(x_i'\), \(x_j'\), and \(x_k'\) denote the updated positions of these points obtained through interpolation. \(f(x_i)\), \(f(x_j)\), and \(f(x_k)\) are the fitness values corresponding to the three selected points. \(x^*\) denotes the optimal position obtained using the GQI strategy.
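For orientation, the classical three-point quadratic interpolation that GQI generalizes can be sketched in Python. This is the textbook one-dimensional formula, not the generalized version used in this paper: it returns the vertex of the parabola through the three points, which is a minimum only when the parabola opens upward, and it simply reports failure in the degenerate case. The function name and the `eps` tolerance are assumptions.

```python
def quadratic_interpolation_vertex(xi, xj, xk, fi, fj, fk, eps=1e-12):
    """Vertex of the parabola through (xi, fi), (xj, fj), (xk, fk); None if degenerate."""
    num = (xj**2 - xk**2) * fi + (xk**2 - xi**2) * fj + (xi**2 - xj**2) * fk
    den = (xj - xk) * fi + (xk - xi) * fj + (xi - xj) * fk
    if abs(den) < eps:  # collinear or repeated points: no unique vertex
        return None
    return 0.5 * num / den

# f(x) = (x - 2)^2 + 1 sampled at x = 0, 1, 4 gives f = 5, 2, 5.
x_star = quadratic_interpolation_vertex(0.0, 1.0, 4.0, 5.0, 2.0, 5.0)
```

For an exactly quadratic function the vertex is recovered from any three distinct sample points, which is why poorly distributed points matter mainly for functions that are only approximately quadratic.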

In the MESCSO algorithm, the GQI strategy is employed to update the sand cat population in order to enhance local exploitation. A random number p between 0 and 1 is generated. When \(p>0.5\), the exploitation phase follows the formulas in the original SCSO algorithm. When \(p<0.5\), the GQI strategy is applied. The position update based on the GQI strategy is given as follows:

where n follows a standard normal distribution, and m is a randomly selected integer within the interval [1, d]. Equation (16) represents the GQI function, which is used to determine the minimum of the interpolated function constructed by \((X_{b}(t),\ f(X_{b}(t)))\), \((X_{r1}(t),\ f(X_{r1}(t)))\), and \((X_{r2}(t),\ f(X_{r2}(t)))\). Here, \(X_{r1}(t)\) and \(X_{r2}(t)\) are the positions of two different individuals randomly selected from the current population, and they are distinct from the position \(X_{b}(t)\).

IMDM strategy

To address the issues of premature convergence and insufficient population diversity in the SCSO algorithm, this paper builds upon the Mean Differential Mutation (MDM) strategy proposed by Wang et al.44 and introduces further improvements. The MDM strategy is inspired by the concepts of DE and Mean Particle Swarm Optimization (MeanPSO)45, and has demonstrated promising results in practical applications. However, MDM is limited by the directionality of initial differences and the use of mean values, which may hinder the algorithm’s ability to escape from local optima.

To further improve population diversity and global search performance, this paper proposes an improved version of MDM, referred to as IMDM. Based on the original difference vector guided by the mean, IMDM enhances the incorporation of global population information, allowing the search direction to be adaptively adjusted according to the current population state. In addition, a self-adaptive control factor is introduced into the update and mean-guided difference process. IMDM can dynamically regulate the search strength and direction based on the current population state, thereby reducing the risk of premature convergence to local optima.

In the MESCSO algorithm, the update of each sand cat is guided not only by its own historical best position Y(t) and the global best position \(P_{g}(t)\), but also by the population mean position \(P_{m}(t)\). Compared with the original mechanism, the IMDM strategy improves the algorithm’s ability to escape local optima. Moreover, it better leverages both individual and population information, thus enhancing population diversity at both the individual and group levels. The mathematical formulation of the IMDM strategy during the exploration phase of sand cats is expressed as follows:

where \(r_1\), \(r_2\), and \(r_3\) are random numbers within the range [0, 1]. \(c_1\) and \(c_2\) are two important control parameters called acceleration coefficients with a value of 1.4. \(c_3\) is the mutation coefficient with a value of 0.55. \(w_{max}\) and \(w_{min}\) represent the maximum and minimum values of the inertia weight, respectively.

AOBL update mechanism

In swarm intelligence optimization algorithms, the search process typically consists of two stages: exploration and exploitation. The position update strategies employed in each stage differ in nature. To further enhance the global search capability and convergence efficiency of the algorithm, this paper introduces an Accelerated Opposition-Based Learning (AOBL) mechanism46 following the basic position update. AOBL is used to refine the current candidate solutions. This mechanism provides greater flexibility in the early stages of the algorithm, thereby enhancing global exploration capability and preventing premature convergence. In the later stages, it gradually reduces exploration and increases exploitation ability, improving the overall optimization performance and achieving a dynamic balance between exploration and exploitation.

Specifically, after completing the standard position update in the exploration or exploitation phase, a random number \(rand \in [0,1]\) is generated. If \(rand<0.5\), the AOBL mechanism is triggered. The corresponding opposite position is then calculated as a candidate replacement for the current updated position. The position correction is performed as follows:

where \(r_4\) and \(r_5\) are random numbers within the range [0, 1]. \(X_{AOBL}\) is a candidate solution generated using the AOBL mechanism.

To achieve a dynamic balance between exploration and exploitation, an acceleration coefficient \(e_{ac}\) is introduced. This coefficient gradually decreases with the number of generations, thereby regulating the influence of the AOBL mechanism. The corresponding calculation formula is as follows:

where \(e_{max}\) and \(e_{min}\) represent the maximum and minimum values of the acceleration coefficient, respectively. In this paper, the values are set as \(e_{max}=1\) and \(e_{min}=10^{-5}\).

Finally, to ensure solution quality, a greedy selection strategy is employed. The updated position \(X_{AOBL}\), obtained through the AOBL mechanism, is compared with the original updated position X based on fitness. If the fitness of \(X_{AOBL}\) is better, it is adopted as the final updated position. Otherwise, the original position X is retained. This mechanism enhances the algorithm’s ability to escape local optima while ensuring that the optimization process improves steadily.
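The probabilistic trigger and the greedy selection can be sketched in Python as shown below. The AOBL formula itself is not reproduced: the opposite candidate is produced by a caller-supplied function `make_opposite`, which is a hypothetical placeholder, and the example uses the plain opposite \(lb + ub - X\) in its place. The `sphere` objective is likewise an assumption.

```python
import random

def sphere(x):
    """Hypothetical test objective (not from the paper): sum of squares."""
    return sum(v * v for v in x)

def greedy_refine(x, fitness, make_opposite, rng, p_trigger=0.5):
    """With probability p_trigger, build an opposite candidate and keep it only if better."""
    if rng.random() >= p_trigger:  # mechanism not triggered for this individual
        return x
    candidate = make_opposite(x)
    return candidate if fitness(candidate) < fitness(x) else x

# Stand-in opposite on the interval [-10, 4]; not the AOBL formula.
plain_opposite = lambda x: [-10.0 + 4.0 - v for v in x]
rng = random.Random(0)
kept = greedy_refine([3.0], sphere, plain_opposite, rng, p_trigger=1.0)       # opposite is -9, worse
replaced = greedy_refine([-9.0], sphere, plain_opposite, rng, p_trigger=1.0)  # opposite is 3, better
```

Because the candidate is accepted only when it improves the fitness, applying the mechanism can never make an individual worse, whatever opposition formula is plugged in.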

MESCSO algorithm flowchart and pseudocode

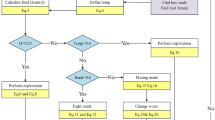

The pseudocode of the MESCSO algorithm is presented in Algorithm 2, and its overall flow chart is illustrated in Fig. 1.

Algorithm 2 Pseudo-code of the MESCSO algorithm

Time complexity analysis

The time complexity of the proposed MESCSO algorithm is primarily influenced by the population size (N), the dimensionality of the optimization problem (dim), the maximum number of iterations (T), and the cost of evaluating the objective function (C). The overall computational complexity comprises several components, including the initialization phase and the iterative update process, which incorporates multiple enhancement strategies. The specific time complexity analysis is as follows:

1. Parameter initialization takes O(1).

2. Generating the initial population positions takes \(O(N\times dim)\).

3. The ISMROBL strategy adds a fitness evaluation cost of \(O(N\times C)\).

4. Updating the positions of the sand cats during prey searching and attacking takes \(O(T\times N\times dim)\).

5. Objective function evaluation costs \(O(T\times N\times C)\) for the algorithm itself and a further \(O(T\times N\times C)\) for the GQI strategy.

6. The AOBL mechanism is triggered probabilistically. Since AOBL is executed with a probability of 0.5 per iteration, its expected computational cost is \(O(0.5\times T\times N\times dim)\) for position generation and \(O(0.5\times T\times N\times C)\) for fitness evaluation.

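Combining all components, the total time complexity of the MESCSO algorithm is expressed as:

\[O(1) + O(N\times dim) + O(N\times C) + O(T\times N\times dim) + 2\,O(T\times N\times C) + O(0.5\times T\times N\times dim) + O(0.5\times T\times N\times C) = O(T\times N\times (dim + C))\]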

Although MESCSO introduces a moderate computational overhead as a result of incorporating multiple enhancement strategies, this increase is justified by the significant improvements in optimization performance, as demonstrated through comparative experiments with SCSO presented in Section “Experimental Results and Analysis”.

Experimental results and analysis

All experiments in this paper were conducted on a computer equipped with an AMD 7940H processor, 16 GB memory, and a 64-bit Windows 11 operating system, using MATLAB 2023b.

To evaluate the overall performance of the MESCSO algorithm, 23 standard benchmark functions and CEC2014 benchmark functions were selected. These benchmark functions cover a variety of optimization problems, including unimodal, multi-modal, and high-dimensional noisy types, which provide a more comprehensive assessment of the algorithm’s adaptability and performance across diverse problem scenarios.

To better demonstrate the optimization capability of MESCSO, it was compared with several well-known metaheuristic algorithms, including the Sand Cat Swarm Optimization (SCSO)36, Arithmetic Optimization Algorithm (AOA)47, Subtraction-Average-Based Optimizer (SABO)48, Whale Optimization Algorithm (WOA)49, Beluga Whale Optimization (BWO)50, Dung Beetle Optimizer (DBO)51, Harris Hawks Optimization (HHO)52, Kepler Optimization Algorithm (KOA)53, and Sine Cosine Algorithm (SCA)54. The parameter settings for these algorithms are listed in Table 2.

Flow chart of the MESCSO algorithm.

Experiments on the 23 standard benchmark functions

As shown in Table 3, a total of 23 standard benchmark functions were selected in this paper, including 7 unimodal functions, 6 multimodal functions, and 10 fixed-dimension multimodal functions. In the definitions, F represents the function itself, dim denotes the dimensionality of the function, Range indicates the search space, and \(F_{min}\) refers to the known global optimum value. For the experimental setup, the population size N was set to 30, the problem dimension dim was set to 30 and 500, and the maximum number of iterations T was 500. To comprehensively evaluate the performance of the algorithms, MESCSO and the nine comparison algorithms were each executed independently 30 times on each function. The average fitness and standard deviation of each algorithm were recorded.

Experimental results and analysis of 23 standard benchmark functions

Table 4 presents the statistical results of the 10 algorithms on the 23 standard benchmark functions. For clarity, the best average fitness value and standard deviation are shown in bold. For functions F1-F4 at dimensions 30 and 500, MESCSO achieved the theoretical optimum in all cases. Among the other algorithms, only AOA reached the theoretical optimum on the 30-dimensional function F2. For functions F5-F6 and F12-F13, MESCSO was second only to BWO and outperformed the other comparison algorithms. On function F7, MESCSO achieved the best average fitness. On function F8, MESCSO was outperformed by HHO and BWO but still outperformed the rest. MESCSO also reached the theoretical optimum on functions F9-F11, along with SCSO, BWO, SABO, and HHO. Functions F14-F23 are relatively simple, making it easier to find good solutions; even so, MESCSO found better fitness values on these functions than the other algorithms.

However, the statistical results in Table 4 alone are not sufficient to fully demonstrate the optimization performance of the MESCSO algorithm across all 23 standard benchmark functions. To more intuitively compare the optimization capability of MESCSO algorithm, Figs. 2, 3 and 4 illustrate the convergence curves of MESCSO algorithm and the other nine comparison algorithms on the 23 standard benchmark functions.

As shown in the figures, MESCSO exhibits strong convergence ability on functions F1-F4 and F9-F11, quickly reaching the theoretical optimum. Although SCSO, BWO, SABO, and HHO also found the optimal solutions on functions F9-F11, MESCSO achieved the fastest convergence rate. For functions F5-F6 and F12-F13, MESCSO converges slightly more slowly, but it outperforms HHO and ranks just below BWO in terms of accuracy. On functions F14-F23, all algorithms obtain relatively good fitness values, indicating their general effectiveness on simpler problems. However, MESCSO consistently achieves better fitness values than the others. Considering both the statistical results and the convergence curves, MESCSO demonstrates superior and more stable performance overall.

Convergence behavior analysis

To investigate differences in convergence behavior across algorithms and assess their impact on solution performance, a series of experiments were carried out to examine the convergence characteristics of MESCSO and SCSO. Several representative functions from the 23 standard benchmark functions were selected, each with a dimensionality of 30.

As shown in Fig. 5, the convergence results are presented in five columns, each representing a distinct analytical aspect. The first column visualizes the structure of the objective function’s search space, offering insight into the function’s inherent complexity and associated global optimization challenges. The second column displays the historical search trajectories of the population throughout the search space. MESCSO demonstrates a wide and evenly distributed pattern, highlighting its robust global exploration capacity.

The third column tracks the evolution of the population’s average fitness across iterations. MESCSO shows a more rapid and stable decline, converging earlier and more reliably. This indicates that MESCSO effectively exploits promising regions once identified. In contrast, SCSO shows an initial decline followed by oscillations and occasional rebounds, indicating susceptibility to local optima and unstable convergence. Notably, for function F8, both algorithms exhibit significant fluctuations in average fitness, which may facilitate the exploration of potential optima in highly complex landscapes.

The fourth column illustrates the evolution of individual trajectories in the population’s first dimension. SCSO individuals show slight early-stage fluctuations but quickly converge, implying premature convergence to local optima. MESCSO, on the other hand, maintains a broader trajectory range with more pronounced jumps, indicating enhanced capability to escape local traps and explore a wider solution space. The final column presents the convergence curves of the algorithms. MESCSO demonstrates superior overall convergence accuracy, with a more stable convergence trajectory. These results confirm that MESCSO exhibits significantly better optimization performance compared to the original SCSO algorithm.

Analysis of the Wilcoxon rank sum test results

The Wilcoxon rank sum test is a nonparametric statistical test commonly used to evaluate the statistical differences between improved algorithms and baseline algorithms. Although Table 4 provides the average fitness and standard deviation for each algorithm, it does not offer a sufficiently rigorous basis for determining the relative superiority of MESCSO over the other optimization algorithms. Therefore, the Wilcoxon rank sum test is employed for further verification and evaluation. In this experiment, the significance level is set at 5%. If the resulting p-value is less than 5%, the performance difference between MESCSO and the comparison algorithm on a given benchmark function is statistically significant. Table 5 presents the Wilcoxon rank sum test results of MESCSO versus the other nine algorithms across the 23 standard benchmark functions. In the table, the symbols “+”, “-”, and “=” indicate that MESCSO performs better than, worse than, or equal to the corresponding comparison algorithm, respectively, on a given function.
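To make the decision rule concrete, the following sketch computes a two-sided rank sum p-value using the normal approximation. It is a simplified illustration: it applies neither a tie correction nor a continuity correction, so statistical packages that use exact or corrected variants may return slightly different values. The function name `rank_sum_p_value` and the sample data are hypothetical.

```python
import math
from typing import Sequence


def rank_sum_p_value(a: Sequence[float], b: Sequence[float]) -> float:
    """Two-sided Wilcoxon rank sum p-value (normal approximation).

    Simplified sketch: no tie correction, no continuity correction.
    """
    n1, n2 = len(a), len(b)
    pooled = sorted(list(a) + list(b))

    # Assign each distinct value the mean of the ranks it occupies.
    rank_of = {}
    i = 0
    while i < len(pooled):
        j = i
        while j < len(pooled) and pooled[j] == pooled[i]:
            j += 1
        rank_of[pooled[i]] = (i + 1 + j) / 2.0  # mean of ranks i+1 .. j
        i = j

    w = sum(rank_of[v] for v in a)  # rank sum of the first sample
    mean_w = n1 * (n1 + n2 + 1) / 2.0
    sd_w = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12.0)
    z = (w - mean_w) / sd_w
    return math.erfc(abs(z) / math.sqrt(2.0))  # two-sided tail probability


# Hypothetical final fitness values from 30 runs of two algorithms.
runs_a = [float(k) for k in range(30)]         # clearly lower values
runs_b = [100.0 + k for k in range(30)]        # clearly higher values
significant = rank_sum_p_value(runs_a, runs_b) < 0.05

# Two algorithms that always reach the same optimum yield p = 1.
identical = rank_sum_p_value([0.0] * 30, [0.0] * 30)
```

The second call shows why p-values of 1 arise when every run of both algorithms returns the same value: the rank sum then equals its expected value exactly.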

As shown in Table 5, most of the p-values are below 5%, indicating significant differences between MESCSO and the majority of the comparison algorithms. However, some p-values exceed 5%, suggesting that the optimization performance of MESCSO on those functions is not significantly different from that of the other algorithms. For functions F9-F11, many p-values equal 1, indicating that the algorithms generally found the same optimal values on these functions, resulting in no significant differences. On other functions, MESCSO outperforms most of the comparison algorithms. According to the Wilcoxon rank sum test, MESCSO generally yields better results; the main exception is BWO, which shows slightly better performance on a few simple functions. Nevertheless, MESCSO outperforms BWO on more than half of the benchmark functions.

Overall, the Wilcoxon test results confirm that MESCSO outperforms most algorithms on the majority of benchmark functions, especially in comparison to the SCSO algorithm, demonstrating stronger convergence and optimization capabilities. This also validates the effectiveness of the improvements proposed in this paper, including ISMROBL, GQI, IMDM, and AOBL mechanisms. Compared with BWO algorithm, MESCSO algorithm performs better on more than half of the benchmark functions, further highlighting its superiority. In summary, the MESCSO algorithm demonstrates superior global search ability and stability across the 23 standard benchmark functions, with improvements that significantly enhance the performance of the SCSO algorithm.

Experiments on the CEC2014 benchmark functions

To more comprehensively evaluate the optimization performance of the MESCSO algorithm, in addition to the 23 standard benchmark functions used in the preliminary experiments, this paper further employs the more challenging CEC2014 benchmark functions for performance validation. Table 6 lists the specific functions used in this experiment. In terms of experimental settings, the population size for each optimization algorithm was set to \(N=30\), the maximum number of iterations was \(T=500\), and the dimensionality of the search space was fixed at \(dim=10\). To ensure statistical significance and result stability, each algorithm was independently executed 30 times. Finally, the average fitness and standard deviation were used as evaluation metrics to comprehensively assess the performance of each algorithm on different test functions.

Experimental results and analysis of CEC2014 benchmark functions

Table 7 summarizes the statistical results of the 10 algorithms on the CEC2014 benchmark functions. For clarity, the best average fitness values and standard deviations are shown in bold. According to the table, MESCSO demonstrates superior overall performance across the 30 CEC2014 benchmark functions, outperforming SCSO and the other eight comparison algorithms. For the basic unimodal functions CEC1-CEC3, MESCSO not only achieves better average fitness values but also maintains smaller standard deviations in most cases, showing good convergence accuracy and stability. Although its standard deviation on function CEC2 was slightly higher than that of BWO, MESCSO still maintained strong optimization performance. For the simple multimodal functions CEC4-CEC8, MESCSO exhibited strong global search capability, achieving better average fitness on many functions. This indicates its ability to escape local optima on functions with many local extrema, despite relatively weaker stability in some cases. On the hybrid composition functions CEC9-CEC16, MESCSO continued to perform well, maintaining the leading average fitness among all comparison algorithms. However, it showed larger standard deviations on some functions. For the more challenging composition functions CEC17-CEC30, MESCSO achieved superior performance on most functions, especially on CEC17, CEC21, CEC23, CEC26, and CEC29, where it obtained both the best average fitness and the smallest standard deviation, verifying its strong convergence ability and robustness under high-complexity conditions. MESCSO performed slightly worse than SABO and HHO on function CEC18, ranked behind SCA on functions CEC22 and CEC24, and fell behind SCSO, HHO, and BWO on function CEC28, but it still showed excellent performance overall. On function CEC30, MESCSO ranked just behind DBO, yet remained strongly competitive.

Figure 6 illustrates the convergence curves of MESCSO and nine comparison algorithms on the CEC2014 benchmark functions. As shown in the figure, MESCSO algorithm exhibits better convergence performance. On the unimodal functions CEC1-CEC3, MESCSO algorithm quickly approaches the global optimum and continues optimizing to reach relatively low fitness values. In contrast, the SCSO algorithm tends to fall into local optima during the optimization process and lacks an effective mechanism for escaping. The convergence curves of other comparison algorithms are also inferior to that of MESCSO algorithm.

On simple multimodal functions, MESCSO demonstrates strong global search ability, with convergence curves that typically descend more rapidly. This allows the algorithm to enter promising regions early in the search process and continuously approach the global optimum through iterative evolution. This performance can be attributed to the introduction of the ISMROBL, GQI, IMDM, and AOBL mechanisms, which enhance the algorithm’s ability to escape from local optima in complex search spaces.

Convergence curves of standard benchmark functions (F1–F13) of MESCSO algorithm with \(dim=30\).

Convergence curves of standard benchmark functions (F1–F13) of MESCSO algorithm with \(dim=500\).

Convergence curves of standard benchmark functions (F14–F23) of MESCSO algorithm.

Convergence behaviour of MESCSO and SCSO.

The convergence curves on functions CEC4-CEC17 show that MESCSO finds better solutions on many functions and converges faster. Although many algorithms struggle to converge effectively on the complex, high-dimensional functions CEC17-CEC30 due to premature convergence, MESCSO still locates better positions on functions CEC17, CEC20, CEC21, CEC25, CEC27, and CEC29. This leads to better convergence toward the global optimum and further demonstrates MESCSO’s strong search dynamics and its ability to escape local traps and approach global solutions in complex optimization scenarios.

Box plot results and analysis

The box plot is a commonly used statistical visualization method that displays the distribution characteristics of a dataset through five key statistics: the minimum, first quartile, median, third quartile, and maximum. The upper and lower boundaries of the box represent the third quartile and the first quartile, respectively, with the horizontal line inside the box indicating the median. The whiskers extend to the minimum and maximum values within the data range. In addition, outliers are marked in the plot, providing an intuitive reflection of data dispersion, symmetry, and stability.
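As an illustration of these statistics, the sketch below computes the quartiles, whisker ends, and outliers for one set of run results. The 1.5 × IQR rule for flagging outliers is a common plotting convention assumed here; the text does not state which rule the figures use. The function name `box_plot_stats` and the sample values are hypothetical.

```python
import statistics
from typing import Dict, List, Sequence, Union


def box_plot_stats(
    values: Sequence[float], whisker: float = 1.5
) -> Dict[str, Union[float, List[float]]]:
    """Quartiles, whisker ends, and outliers for a box plot.

    Points farther than `whisker` * IQR from the box are treated as
    outliers (assumed convention); whiskers end at the most extreme
    values that are not outliers.
    """
    q1, median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    low_fence = q1 - whisker * iqr
    high_fence = q3 + whisker * iqr
    inliers = [v for v in values if low_fence <= v <= high_fence]
    outliers = [v for v in values if v < low_fence or v > high_fence]
    return {
        "whisker_low": min(inliers),
        "q1": q1,
        "median": median,
        "q3": q3,
        "whisker_high": max(inliers),
        "outliers": outliers,
    }


# Hypothetical fitness values from six runs; one run stagnated at 100.
stats = box_plot_stats([1, 2, 3, 4, 5, 100])
```

A narrow box (small `q3 - q1`) with short whiskers and no outliers corresponds to the concentrated, stable behaviour that box plots are used to reveal.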

Figure 7 illustrates the box plots of the fitness values obtained by MESCSO and nine comparison algorithms after 30 independent runs on the CEC2014 benchmark functions. Through visual comparison, it is evident that MESCSO algorithm demonstrates smaller interquartile ranges and lower median lines on most test functions, indicating more concentrated results, lower variability, and significantly better stability. For instance, on functions such as CEC1, CEC3, CEC4, CEC5, CEC7, CEC17, CEC21, and CEC29, MESCSO not only achieves the lowest median values but also exhibits significantly narrower extreme value ranges compared to other algorithms. This demonstrates the MESCSO algorithm’s ability to consistently deliver high-quality solutions with stable performance across unimodal, multimodal, and complex hybrid functions. In contrast, the SCSO and other algorithms exhibit wider boxes and longer whiskers on multiple test functions, suggesting greater variability and instability across runs, possibly due to premature convergence or stagnation. In particular, on functions such as CEC2, CEC10, CEC11, CEC12, CEC28, and CEC30, many comparison algorithms exhibit serious outliers and long tails. Nevertheless, MESCSO still maintains a relatively concentrated trend.

Overall, the analysis of the box plots further verifies the comprehensive advantages of the MESCSO algorithm in terms of fitness value distribution, convergence stability, and optimal solution acquisition capability, demonstrating its strong reliability and practical applicability across multiple independent runs.

Analysis of the Wilcoxon rank sum test results

Based on the above analysis, the MESCSO algorithm achieved promising results on the CEC2014 benchmark functions. Table 8 presents the Wilcoxon rank sum test results comparing MESCSO with nine other optimization algorithms across the CEC2014 benchmark functions. The significance level was set to 5%. The bottom of the table provides a summary of the “+/-/=” counts, indicating the number of functions on which MESCSO performed significantly better than, worse than, or equivalently to each comparison algorithm.

From the overall statistical results, MESCSO exhibited statistically significant performance differences compared to most comparison algorithms, with the majority of p-values below 5%. Specifically, in comparison with SABO, SCA, KOA, and BWO, MESCSO demonstrated notably better optimization performance on 27 functions, 28 functions, and 30 functions, highlighting its superior global optimization capability. Additionally, MESCSO outperformed AOA, HHO, and WOA on 19 functions, 24 functions, and 25 functions, respectively, indicating its adaptability across diverse types of optimization problems, including unimodal, multimodal, and hybrid composite functions, along with strong solution quality. Compared to SCSO, MESCSO showed clear advantages on 14 functions, slight inferiority on only one, and comparable performance on the remaining 15, confirming that the proposed improvements enhance both search accuracy and exploration capability.

In summary, the Wilcoxon rank sum test results clearly demonstrated that MESCSO outperforms several mainstream optimization algorithms on many benchmark functions with statistically significant advantages. These results further validate the effectiveness of the integrated strategies including ISMROBL, GQI, IMDM, and AOBL mechanism in enhancing convergence speed, improving global exploration ability, and suppressing premature convergence.

Convergence curves of CEC2014 benchmark functions (F1–F13) of MESCSO algorithm with \(dim=10\).

Box plots of CEC2014 benchmark functions of MESCSO algorithm with \(dim=10\).

Structural engineering optimization problems

To further investigate the practical applicability of the MESCSO algorithm, this section evaluates its performance on five representative structural engineering optimization problems. These problems include pressure vessel design, welded beam design, multi-disc clutch braking design, tension/compression spring design, and cantilever beam design. For these five different constrained optimization problems, the population size N was set at 30 and the maximum number of iterations T was 500. For each problem, MESCSO is run 30 times independently. In this section, the best and statistical results of different algorithms are used for comparison. A simple and commonly used penalty method is applied to solve the constrained optimization problems. The penalized objective function \(f_p\) is expressed as follows:

where \(\lambda\) is a penalty factor which is set as \(10^{20}\) in this paper. For each constraint \(g_k\), if it is violated, then \(\delta _k=1\); otherwise, \(\delta _k=0\).
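A minimal sketch of such a penalized objective is given below. The exact form of the penalty term is not reproduced in this text, so two assumptions are made and should be read as such: each constraint is written as \(g_k(x) \le 0\), and a violated constraint contributes its squared value. The function name `penalized_objective` and the toy problem are hypothetical.

```python
from typing import Callable, Sequence

Vector = Sequence[float]


def penalized_objective(
    f: Callable[[Vector], float],
    constraints: Sequence[Callable[[Vector], float]],
    x: Vector,
    lam: float = 1e20,
) -> float:
    """Static-penalty objective f_p(x) = f(x) + lam * sum(delta_k * g_k(x)^2).

    Assumptions (not confirmed by the source): constraints have the form
    g_k(x) <= 0, and the violation is weighted by its square.
    """
    penalty = 0.0
    for g in constraints:
        value = g(x)
        delta = 1 if value > 0 else 0  # delta_k = 1 only when g_k is violated
        penalty += delta * value ** 2
    return f(x) + lam * penalty


# Toy problem: minimize x[0] subject to x[0] >= 1, i.e. 1 - x[0] <= 0.
objective = lambda x: x[0]
limits = [lambda x: 1.0 - x[0]]

feasible_value = penalized_objective(objective, limits, [2.0])
infeasible_value = penalized_objective(objective, limits, [0.5])
```

With a penalty factor as large as \(10^{20}\), any violation dominates the objective, so infeasible candidates lose every fitness comparison against feasible ones.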

Pressure vessel design problem

The primary objective of the pressure vessel design problem is to minimize the total manufacturing cost of the vessel while satisfying multiple engineering constraints. A schematic diagram of the pressure vessel is shown in Fig. 8. This problem involves four decision variables: the shell thickness \(T_s\), head thickness \(T_h\), inner radius R, and vessel length L.

Schematic diagram of the pressure vessel design.

The mathematical formulation of the pressure vessel design problem is as follows.

Consider:

Objective function:

Subject to:

Variable Range:

The experimental results for the pressure vessel design problem are presented in Table 9, demonstrating that the MESCSO algorithm performs effectively in solving engineering optimization problems. As shown in the table, the MESCSO algorithm obtained the following optimal solution: \(T_s = 0.778267\), \(T_h= 0.384764\), \(R= 40.323219\), and \(L= 199.950488\), with a minimum cost of 5885.90733. Among the other comparison algorithms, six produced cost values greater than 6000, while two yielded costs below 6000. Therefore, the MESCSO algorithm achieved the lowest cost among all methods, indicating its superior optimization performance for this problem.
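The reported solution can be checked against the formulation commonly used for this benchmark in the literature. The display equations are not reproduced in this text, so the coefficients below are taken from that widely used formulation and are an assumption about what the paper uses; the function names are hypothetical.

```python
import math
from typing import List


def pressure_vessel_cost(ts: float, th: float, r: float, l: float) -> float:
    """Cost function of the commonly used pressure vessel benchmark."""
    return (
        0.6224 * ts * r * l
        + 1.7781 * th * r ** 2
        + 3.1661 * ts ** 2 * l
        + 19.84 * ts ** 2 * r
    )


def pressure_vessel_constraints(
    ts: float, th: float, r: float, l: float
) -> List[float]:
    """Constraint values g_k; a design is feasible when every g_k <= 0."""
    return [
        -ts + 0.0193 * r,
        -th + 0.00954 * r,
        -math.pi * r ** 2 * l - (4.0 / 3.0) * math.pi * r ** 3 + 1296000.0,
        l - 240.0,
    ]


# Solution reported for MESCSO in the text.
reported = (0.778267, 0.384764, 40.323219, 199.950488)
cost = pressure_vessel_cost(*reported)
g_values = pressure_vessel_constraints(*reported)
```

Under this assumed formulation the computed cost agrees with the reported minimum of 5885.90733 to within rounding of the published variables, and the first two constraints are very close to their bounds.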

Welded beam design problem

The welded beam design problem is a commonly encountered and challenging issue in structural engineering. The primary objective of this problem is to minimize the fabrication cost of the welded beam while satisfying a series of structural and mechanical constraints. A schematic diagram of the welded beam is illustrated in Fig. 9. This problem involves four decision variables: weld width h, connecting beam length l, beam height t, and connecting beam thickness b.

Schematic diagram of the welded beam design.

The mathematical formulation of the welded beam design problem is as follows.

Consider:

Objective function:

Subject to:

where:

Variable Range:

The results of the welded beam design problem are presented in Table 10. Using the MESCSO algorithm, the optimal values of the decision variables were obtained as follows: weld width \(h = 0.198704\), connecting beam length \(l = 3.339804\), beam height \(t = 9.192051\), and connecting beam thickness \(b = 0.198833\). Compared to the other algorithms, MESCSO achieved the lowest cost, with a final objective value of 1.670363. Many algorithms yielded minimum values greater than 1.7, whereas MESCSO produced the smallest value. This indicates that the MESCSO algorithm provides the best optimization performance on this problem.

Multi-disc clutch braking problem

The design optimization of a multi-disc clutch braking system is a representative constrained optimization problem in the field of automotive engineering. The primary objective is to minimize the system mass while ensuring braking performance, thereby enhancing overall vehicle energy efficiency and operational stability. This problem involves five key design variables: inner diameter \(r_i\), outer diameter \(r_o\), brake disc thickness t, driving force F, and surface friction coefficient z. The structural configuration of the system is illustrated in Fig. 10.

Schematic diagram of the multi-disc clutch braking problem.

The mathematical formulation of the multi-disc clutch braking problem is as follows.

Consider:

Objective function:

Subject to:

where:

Variable Range:

The experimental results for the multi-disc clutch braking problem are presented in Table 11. As shown in the table, the MESCSO algorithm yielded an inner diameter \(r_i = 69.999\), an outer diameter \(r_o = 90\), brake disc thickness \(t = 1\), driving force \(F = 735.016\), and surface friction coefficient \(z = 2\). Compared to the other algorithms, MESCSO achieved the lowest mass, with a final objective value of 0.235242. Among the seven algorithms tested for this problem, MESCSO demonstrated the best optimization performance.

Tension/compression spring design problem

The structural optimization of a tension/compression spring aims to minimize the mass of the spring while satisfying a set of mechanical performance and geometric constraints, thereby improving system efficiency and structural compactness. This problem involves three primary design variables: wire diameter d, average coil diameter D, and effective coil number N. A schematic diagram of the spring is illustrated in Fig. 11.

Schematic diagram of the tension/compression spring design.

The mathematical formulation of the tension/compression spring design problem is as follows.

Consider:

Objective function:

Subject to:

Variable Range:

The experimental results of various optimization algorithms for the tension/compression spring design problem are summarized in Table 12. As shown, the MESCSO algorithm achieved a well-balanced solution across all three design variables, with values of \(d= 0.051713\), \(D = 0.357294\), and \(N=11.255436\). The corresponding optimal objective function value was 0.012665, which was the smallest among all the algorithms, demonstrating the MESCSO algorithm’s superior performance in this problem.

Cantilever beam design problem

The primary objective of the cantilever beam design problem is to minimize the total weight of the beam by adjusting the dimensional parameters of its structural components while satisfying a set of design constraints. In this problem, the design variables typically consist of the widths or heights of five hollow square cross-section beam elements, with each variable representing the size of a specific beam segment. All beam segments share the same wall thickness. A schematic diagram of the cantilever beam model is shown in Fig. 12.

Schematic diagram of the cantilever beam design.

The mathematical formulation of the cantilever beam design problem is as follows.

Consider:

Objective function:

Subject to:

Variable Range:

The experimental results for the cantilever beam design problem are presented in Table 13. As shown in the table, the MESCSO algorithm produced optimal widths or heights for the five equal-thickness hollow square sections as 6.036097, 5.309212, 4.478850, 3.501063, and 2.148696, respectively, resulting in a minimum total weight of 1.339972. In this problem, the MESCSO algorithm demonstrated superior optimization performance compared to the other algorithms.
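As a consistency check, the reported widths can be evaluated under the formulation commonly used for this benchmark: a weight proportional to the sum of the five variables and a single deflection constraint. The display equations are not reproduced in this text, so the coefficients are taken from that common formulation and are an assumption; the function names are hypothetical.

```python
from typing import Sequence


def cantilever_weight(x: Sequence[float]) -> float:
    """Weight of the five-segment beam in the commonly used benchmark."""
    return 0.0624 * sum(x)


def cantilever_constraint(x: Sequence[float]) -> float:
    """Deflection constraint g(x); the design is feasible when g(x) <= 0."""
    coefficients = (61.0, 37.0, 19.0, 7.0, 1.0)
    return sum(c / xi ** 3 for c, xi in zip(coefficients, x)) - 1.0


# Widths reported for MESCSO in the text.
reported = (6.036097, 5.309212, 4.478850, 3.501063, 2.148696)
weight = cantilever_weight(reported)
g_value = cantilever_constraint(reported)
```

Under this assumed formulation the weight reproduces the reported 1.339972, and the constraint value is approximately zero, which indicates that the reported design lies on the constraint boundary, as expected for a minimum-weight solution.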

Conclusions

This paper proposes a Multi-Strategy Enhanced Sand Cat Swarm Optimization (MESCSO) algorithm, which effectively addresses several limitations of the original Sand Cat Swarm Optimization (SCSO) algorithm, including poor exploration capability in the later stages, a tendency to fall into local optima, and reduced convergence speed. Firstly, during population initialization, an improved sine mapping and random opposition-based learning (ISMROBL) is introduced to enhance the uniformity and diversity of initial solutions, preventing the population from prematurely converging to a local region in the early phase. Secondly, a nonlinear decreasing parameter is proposed to dynamically balance global exploration and local exploitation. In addition, the introduction of the generalized quadratic interpolation (GQI) strategy significantly strengthens local search capabilities, improving search accuracy around local optima and enhancing exploitation efficiency. The improved mean differential mutation (IMDM) strategy further improves global exploration ability and enhances the algorithm’s capacity to escape local optima. Finally, to improve overall convergence stability, an accelerated opposition-based learning (AOBL) mechanism combined with a greedy selection strategy is probabilistically applied after each iteration based on a random threshold. When triggered, this mechanism adjusts individual positions to enhance the algorithm’s ability to escape local optima and improve convergence performance. To comprehensively validate the performance of the MESCSO algorithm, extensive comparative experiments were conducted against nine mainstream metaheuristic algorithms on 23 standard benchmark functions with 30 and 500 dimensions and the 10-dimensional CEC2014 benchmark functions. Experimental results show that MESCSO demonstrates superior search accuracy and convergence speed across a wide range of test cases. 
Furthermore, five typical constrained structural engineering design problems were used to verify the MESCSO algorithm’s practical adaptability and applicability. The results confirm that MESCSO consistently achieves better optimization outcomes than the compared algorithms.

In summary, compared to the original SCSO and other related algorithms, MESCSO, through the integration of ISMROBL, the nonlinear decreasing parameter, GQI, IMDM, and AOBL, effectively overcomes the deficiencies of insufficient exploration, local stagnation, and slow convergence in the later stages of SCSO. It significantly enhances global exploration, local exploitation precision, and overall algorithmic stability, thereby achieving a comprehensive performance improvement.

Despite the demonstrated advantages of MESCSO, some limitations remain to be addressed in future research. Firstly, the integration of multiple enhancement strategies introduces additional computational overhead, potentially reducing the algorithm’s efficiency on large-scale or real-time optimization problems. Secondly, despite the improved dynamic balance enabled by nonlinear parameter control, certain strategies (e.g., ISMROBL and AOBL) require manual parameter tuning, which may limit the algorithm’s adaptability across diverse problem domains. To address these limitations, we will further optimize the algorithm structure and the adaptive parameter adjustment strategy to enhance the generalization performance and stability of MESCSO in complex optimization environments, with particular attention to the stability issues that remain. In addition, we will extend MESCSO to practical applications, including but not limited to UAV 3D path planning, text clustering analysis, feature selection optimization, parameter estimation, image segmentation, and fault diagnosis. We will also explore the deep integration of MESCSO with advanced machine learning techniques to tune the hyper-parameters of machine learning models, which will further expand the application prospects and practical value of the algorithm.

Data availability

Data are contained within the article.

References

Long, W., Wu, T., Liang, X. & Xu, S. Solving high-dimensional global optimization problems using an improved sine cosine algorithm. Expert Syst. Appl. 123, 108–126. https://doi.org/10.1016/j.eswa.2018.11.032 (2019).

Zhou, C., Zhao, H. & Xu, S. High-efficient sample point transform algorithm for large-scale complex optimization. Comput. Methods Appl. Mech. Eng. 432, 117451. https://doi.org/10.1016/j.cma.2024.117451 (2024).

Holland, J. H. Genetic algorithms. Sci. Am. 267, 66–73 (1992).

Beyer, H.-G. & Schwefel, H.-P. Evolution strategies – A comprehensive introduction. Nat. Comput. 1, 3–52. https://doi.org/10.1023/A:1015059928466 (2002).

Banzhaf, W., Koza, J., Ryan, C., Spector, L. & Jacob, C. Genetic programming. IEEE Intell. Syst. Appl. 15, 74–84. https://doi.org/10.1109/5254.846288 (2000).

Storn, R. & Price, K. Differential evolution-a simple and efficient heuristic for global optimization over continuous spaces. J. Global Optim. 11, 341–359. https://doi.org/10.1023/A:1008202821328 (1997).

Sinha, N., Chakrabarti, R. & Chattopadhyay, P. K. Evolutionary programming techniques for economic load dispatch. IEEE Trans. Evol. Comput. 7, 83–94. https://doi.org/10.1109/TEVC.2002.806788 (2003).

Kennedy, J. & Eberhart, R. Particle swarm optimization. In Proceedings of ICNN’95-International Conference on Neural Networks, vol. 4, 1942–1948 (IEEE, 1995).

Xue, J. & Shen, B. A novel swarm intelligence optimization approach: Sparrow search algorithm. Syst. Sci. Control Eng. 8, 22–34. https://doi.org/10.1080/21642583.2019.1708830 (2020).

Mirjalili, S. Dragonfly algorithm: A new meta-heuristic optimization technique for solving single-objective, discrete, and multi-objective problems. Neural Comput. Appl. 27, 1053–1073. https://doi.org/10.1007/s00521-015-1920-1 (2016).

Fister, I., Fister, I. Jr., Yang, X.-S. & Brest, J. A comprehensive review of firefly algorithms. Swarm Evol. Comput. 13, 34–46. https://doi.org/10.1016/j.swevo.2013.06.001 (2013).

Hu, G., Cheng, M., Houssein, E. H., Hussien, A. G. & Abualigah, L. SDO: A novel sled dog-inspired optimizer for solving engineering problems. Adv. Eng. Inform. 62, 102783. https://doi.org/10.1016/j.aei.2024.102783 (2024).

Tian, A.-Q., Liu, F.-F. & Lv, H.-X. Snow geese algorithm: A novel migration-inspired meta-heuristic algorithm for constrained engineering optimization problems. Appl. Math. Model. 126, 327–347. https://doi.org/10.1016/j.apm.2023.10.045 (2024).

Fu, S. et al. Red-billed blue magpie optimizer: A novel metaheuristic algorithm for 2D/3D UAV path planning and engineering design problems. Artif. Intell. Rev. 57, 134. https://doi.org/10.1007/s10462-024-10716-3 (2024).

Bai, J. et al. Blood-sucking leech optimizer. Adv. Eng. Softw. 195, 103696. https://doi.org/10.1016/j.advengsoft.2024.10369 (2024).

Kirkpatrick, S., Gelatt, C. D. & Vecchi, M. P. Optimization by simulated annealing. Science 220, 671–680. https://doi.org/10.1126/science.220.4598.671 (1983).

Zhao, W., Wang, L. & Zhang, Z. A novel atom search optimization for dispersion coefficient estimation in groundwater. Futur. Gener. Comput. Syst. 91, 601–610. https://doi.org/10.1016/j.future.2018.05.037 (2019).

Rashedi, E., Nezamabadi-Pour, H. & Saryazdi, S. GSA: A gravitational search algorithm. Inf. Sci. 179, 2232–2248. https://doi.org/10.1016/j.ins.2009.03.004 (2009).

Shehadeh, H. A. Chernobyl disaster optimizer (CDO): A novel meta-heuristic method for global optimization. Neural Comput. Appl. 35, 10733–10749. https://doi.org/10.1007/s00521-023-08261-1 (2023).

Azizi, M., Aickelin, U., Khorshidi, H. & Baghalzadeh Shishehgarkhaneh, M. Energy valley optimizer: A novel metaheuristic algorithm for global and engineering optimization. Sci. Rep. 13, 226. https://doi.org/10.1038/s41598-022-27344-y (2023).

Wei, Z., Huang, C., Wang, X., Han, T. & Li, Y. Nuclear reaction optimization: A novel and powerful physics-based algorithm for global optimization. IEEE Access 7, 66084–66109. https://doi.org/10.1109/ACCESS.2019.2918406 (2019).

Abedinpourshotorban, H., Shamsuddin, S. M., Beheshti, Z. & Jawawi, D. N. Electromagnetic field optimization: A physics-inspired metaheuristic optimization algorithm. Swarm Evol. Comput. 26, 8–22. https://doi.org/10.1016/j.swevo.2015.07.002 (2016).

Shi, Y. Brain storm optimization algorithm. In Advances in Swarm Intelligence: Second International Conference, ICSI 2011, Chongqing, China, June 12–15, 2011, Proceedings, Part I 2 303–309. https://doi.org/10.1007/978-3-642-21515-5_36 (Springer, 2011).

Zhang, J., Xiao, M., Gao, L. & Pan, Q. Queuing search algorithm: A novel metaheuristic algorithm for solving engineering optimization problems. Appl. Math. Model. 63, 464–490. https://doi.org/10.1016/j.apm.2018.06.036 (2018).

Rao, R. V., Savsani, V. J. & Vakharia, D. Teaching-learning-based optimization: An optimization method for continuous non-linear large scale problems. Inf. Sci. 183, 1–15. https://doi.org/10.1016/j.ins.2011.08.006 (2012).

Faridmehr, I., Nehdi, M. L., Davoudkhani, I. F. & Poolad, A. Mountaineering team-based optimization: A novel human-based metaheuristic algorithm. Mathematics 11, 1273. https://doi.org/10.3390/math11051273 (2023).

Moosavi, S. H. S. & Bardsiri, V. K. Poor and rich optimization algorithm: A new human-based and multi populations algorithm. Eng. Appl. Artif. Intell. 86, 165–181. https://doi.org/10.1016/j.engappai.2019.08.025 (2019).

Mohamed, A. W., Hadi, A. A. & Mohamed, A. K. Gaining-sharing knowledge based algorithm for solving optimization problems: A novel nature-inspired algorithm. Int. J. Mach. Learn. Cybern. 11, 1501–1529. https://doi.org/10.1007/s13042-019-01053-x (2020).

Zolfi, K. Gold rush optimizer: A new population-based metaheuristic algorithm. Oper. Res. Decis. 33, 113–150. https://doi.org/10.37190/ord230108 (2023).

Javed, S. T., Zafar, K. & Younas, I. Imitation-based cognitive learning optimizer for solving numerical and engineering optimization problems. Cogn. Syst. Res. 86, 101237. https://doi.org/10.1016/j.cogsys.2024.101237 (2024).

Wang, X. Frigatebird optimizer: A novel metaheuristic algorithm. Phys. Scr. 99, 125233. https://doi.org/10.1088/1402-4896/ad8e0e (2024).

Wang, X. Gyro fireworks algorithm: A new metaheuristic algorithm. AIP Adv. https://doi.org/10.1063/5.0213886 (2024).

Wang, X. Fishing cat optimizer: A novel metaheuristic technique. Eng. Comput. 42, 780–833. https://doi.org/10.1108/EC-10-2024-0904 (2025).

Wolpert, D. H. & Macready, W. G. No free lunch theorems for optimization. IEEE Trans. Evol. Comput. 1, 67–82. https://doi.org/10.1109/4235.585893 (1997).

Velasco, L., Guerrero, H. & Hospitaler, A. A literature review and critical analysis of metaheuristics recently developed. Arch. Comput. Methods Eng. 31, 125–146. https://doi.org/10.1007/s11831-023-09975-0 (2024).

Seyyedabbasi, A. & Kiani, F. Sand cat swarm optimization: A nature-inspired algorithm to solve global optimization problems. Eng. Comput. 39, 2627–2651. https://doi.org/10.1007/s00366-022-01604-x (2023).

Peng, H. et al. A modified sand cat swarm optimization algorithm based on multi-strategy fusion and its application in engineering problems. Mathematics 12, 2153. https://doi.org/10.3390/math12142153 (2024).

Li, Y., Yu, Q. & Du, Z. Sand cat swarm optimization algorithm and its application integrating elite decentralization and crossbar strategy. Sci. Rep. 14, 8927. https://doi.org/10.1038/s41598-024-59597-0 (2024).

Cai, Y., Guo, C. & Chen, X. An improved sand cat swarm optimization with lens opposition-based learning and sparrow search algorithm. Sci. Rep. 14, 20690. https://doi.org/10.1038/s41598-024-71581-2 (2024).

Zhang, K., He, Y., Wang, Y. & Sun, C. Improved multi-strategy sand cat swarm optimization for solving global optimization. Biomimetics 9, 280. https://doi.org/10.3390/biomimetics9050280 (2024).

Tizhoosh, H. R. Opposition-based learning: A new scheme for machine intelligence. In International Conference on Computational Intelligence for Modelling, Control and Automation and International Conference on Intelligent Agents, Web Technologies and Internet Commerce (CIMCA-IAWTIC’06), vol. 1, 695–701. https://doi.org/10.1109/CIMCA.2005.1631345 (IEEE, 2005).

Mohapatra, S. & Mohapatra, P. Fast random opposition-based learning golden jackal optimization algorithm. Knowl. Based Syst. 275, 110679. https://doi.org/10.1016/j.knosys.2023.110679 (2023).

Zhao, W. et al. Quadratic interpolation optimization (QIO): A new optimization algorithm based on generalized quadratic interpolation and its applications to real-world engineering problems. Comput. Methods Appl. Mech. Eng. 417, 116446. https://doi.org/10.1016/j.cma.2023.116446 (2023).

Lin, M., Wang, Z., Wang, F. & Chen, D. Improved simplified particle swarm optimization based on piecewise nonlinear acceleration coefficients and mean differential mutation strategy. IEEE Access 8, 92842–92860. https://doi.org/10.1109/ACCESS.2020.2994984 (2020).

Deep, K. & Bansal, J. C. Mean particle swarm optimisation for function optimisation. Int. J. Comput. Intell. Stud. 1, 72–92. https://doi.org/10.1504/IJCIStudies.2009.025339 (2009).

Yuzgec, U. Accelerated opposition learning based chaotic single candidate optimization algorithm: A new alternative to population-based heuristics. Knowl. Based Syst. 314, 113169. https://doi.org/10.1016/j.knosys.2025.113169 (2025).

Abualigah, L., Diabat, A., Mirjalili, S., Abd Elaziz, M. & Gandomi, A. H. The arithmetic optimization algorithm. Comput. Methods Appl. Mech. Eng. 376, 113609. https://doi.org/10.1016/j.cma.2020.113609 (2021).

Trojovský, P. & Dehghani, M. Subtraction-average-based optimizer: A new swarm-inspired metaheuristic algorithm for solving optimization problems. Biomimetics 8, 149. https://doi.org/10.3390/biomimetics8020149 (2023).

Mirjalili, S. & Lewis, A. The whale optimization algorithm. Adv. Eng. Softw. 95, 51–67. https://doi.org/10.1016/j.advengsoft.2016.01.008 (2016).

Zhong, C., Li, G. & Meng, Z. Beluga whale optimization: A novel nature-inspired metaheuristic algorithm. Knowl. Based Syst. 251, 109215. https://doi.org/10.1016/j.knosys.2022.109215 (2022).

Xue, J. & Shen, B. Dung beetle optimizer: A new meta-heuristic algorithm for global optimization. J. Supercomput. 79, 7305–7336. https://doi.org/10.1007/s11227-022-04959-6 (2023).

Heidari, A. A. et al. Harris hawks optimization: Algorithm and applications. Futur. Gener. Comput. Syst. 97, 849–872. https://doi.org/10.1016/j.future.2019.02.028 (2019).

Abdel-Basset, M., Mohamed, R., Azeem, S. A. A., Jameel, M. & Abouhawwash, M. Kepler optimization algorithm: A new metaheuristic algorithm inspired by Kepler’s laws of planetary motion. Knowl. Based Syst. 268, 110454. https://doi.org/10.1016/j.knosys.2023.110454 (2023).

Mirjalili, S. SCA: A sine cosine algorithm for solving optimization problems. Knowl. Based Syst. 96, 120–133. https://doi.org/10.1016/j.knosys.2015.12.022 (2016).

He, Q. & Wang, L. An effective co-evolutionary particle swarm optimization for constrained engineering design problems. Eng. Appl. Artif. Intell. 20, 89–99. https://doi.org/10.1016/j.engappai.2006.03.003 (2007).

He, Q. & Wang, L. A hybrid particle swarm optimization with a feasibility-based rule for constrained optimization. Appl. Math. Comput. 186, 1407–1422. https://doi.org/10.1016/j.amc.2006.07.134 (2007).

Mirjalili, S., Mirjalili, S. M. & Lewis, A. Grey wolf optimizer. Adv. Eng. Softw. 69, 46–61. https://doi.org/10.1016/j.advengsoft.2013.12.007 (2014).

Mirjalili, S., Mirjalili, S. M. & Hatamlou, A. Multi-verse optimizer: A nature-inspired algorithm for global optimization. Neural Comput. Appl. 27, 495–513. https://doi.org/10.1007/s00521-015-1870-7 (2016).

MiarNaeimi, F., Azizyan, G. & Rashki, M. Horse herd optimization algorithm: A nature-inspired algorithm for high-dimensional optimization problems. Knowl. Based Syst. 213, 106711. https://doi.org/10.1016/j.knosys.2020.106711 (2021).

Jia, H. et al. Multi-strategy remora optimization algorithm for solving multi-extremum problems. J. Comput. Des. Eng. 10, 1315–1349. https://doi.org/10.1093/jcde/qwad04 (2023).

Gupta, S. & Deep, K. Improved sine cosine algorithm with crossover scheme for global optimization. Knowl. Based Syst. 165, 374–406. https://doi.org/10.1016/j.knosys.2018.12.00 (2019).

Lin, M., Wang, Z. & Zheng, W. Hybrid particle swarm-differential evolution algorithm and its engineering applications. Soft. Comput. 27, 16983–17010. https://doi.org/10.1007/s00500-023-09025-8 (2023).

Chopra, N. & Ansari, M. M. Golden jackal optimization: A novel nature-inspired optimizer for engineering applications. Expert Syst. Appl. 198, 116924. https://doi.org/10.1016/j.eswa.2022.116924 (2022).

Babalik, A., Cinar, A. C. & Kiran, M. S. A modification of tree-seed algorithm using Deb’s rules for constrained optimization. Appl. Soft Comput. 63, 289–305. https://doi.org/10.1016/j.asoc.2017.10.013 (2018).

Dhiman, G. & Kumar, V. Spotted hyena optimizer: A novel bio-inspired based metaheuristic technique for engineering applications. Adv. Eng. Softw. 114, 48–70. https://doi.org/10.1016/j.advengsoft.2017.05.014 (2017).

Pierezan, J. & Coelho, L. D. S. Coyote optimization algorithm: A new metaheuristic for global optimization problems. In 2018 IEEE Congress on Evolutionary Computation (CEC) 1–8. https://doi.org/10.1109/CEC.2018.8477769 (IEEE, 2018).

Li, S., Chen, H., Wang, M., Heidari, A. A. & Mirjalili, S. Slime mould algorithm: A new method for stochastic optimization. Futur. Gener. Comput. Syst. 111, 300–323. https://doi.org/10.1016/j.future.2020.03.055 (2020).

Kaveh, A. & Khayatazad, M. A new meta-heuristic method: Ray optimization. Comput. Struct. 112, 283–294. https://doi.org/10.1016/j.compstruc.2012.09.00 (2012).

Agushaka, J. O., Ezugwu, A. E. & Abualigah, L. Dwarf mongoose optimization algorithm. Comput. Methods Appl. Mech. Eng. 391, 114570. https://doi.org/10.1016/j.cma.2022.114570 (2022).

Houssein, E. H., Oliva, D., Samee, N. A., Mahmoud, N. F. & Emam, M. M. Liver cancer algorithm: A novel bio-inspired optimizer. Comput. Biol. Med. 165, 107389. https://doi.org/10.1016/j.compbiomed.2023.107389 (2023).

Eskandar, H., Sadollah, A., Bahreininejad, A. & Hamdi, M. Water cycle algorithm – A novel metaheuristic optimization method for solving constrained engineering optimization problems. Comput. Struct. 110, 151–166. https://doi.org/10.1016/j.compstruc.2012.07.010 (2012).

Tanabe, R. & Fukunaga, A. S. Improving the search performance of SHADE using linear population size reduction. In 2014 IEEE Congress on Evolutionary Computation (CEC) 1658–1665. https://doi.org/10.1109/CEC.2014.6900380 (IEEE, 2014).

Mirjalili, S. Moth-flame optimization algorithm: A novel nature-inspired heuristic paradigm. Knowl. Based Syst. 89, 228–249. https://doi.org/10.1016/j.knosys.2015.07.006 (2015).

Lee, K. S. & Geem, Z. W. A new meta-heuristic algorithm for continuous engineering optimization: Harmony search theory and practice. Comput. Methods Appl. Mech. Eng. 194, 3902–3933. https://doi.org/10.1016/j.cma.2004.09.007 (2005).

Hayyolalam, V. & Kazem, A. A. P. Black widow optimization algorithm: A novel meta-heuristic approach for solving engineering optimization problems. Eng. Appl. Artif. Intell. 87, 103249. https://doi.org/10.1016/j.engappai.2019.103249 (2020).

Song, M. et al. Modified Harris Hawks optimization algorithm with exploration factor and random walk strategy. Comput. Intell. Neurosci. 2022, 4673665. https://doi.org/10.1155/2022/4673665 (2022).

Gomes, G. F., Cunha, S. S. & Ancelotti, A. C. A sunflower optimization (SFO) algorithm applied to damage identification on laminated composite plates. Eng. Comput. 35, 619–626. https://doi.org/10.1007/s00366-018-0620-8 (2019).