Abstract

Ensuring reliability under normal operating conditions remains a critical challenge in modern industrial systems, where life testing is constrained by time, cost, and safety considerations. This paper proposes a Weibull reliability model analyzed via partially accelerated life tests under normal operating environments, integrated with an improved adaptive progressive censoring scheme to maximize information while minimizing testing duration. The developed methodology bridges the gap between traditional accelerated life testing and realistic operating conditions, enabling precise inference on reliability indices without excessive extrapolation. We derive closed-form expressions for the likelihood function, parameter estimators, and Fisher information, establishing robust inferential procedures for both complete and censored samples. Symmetrical Bayesian frameworks are used for parameter estimation, providing approximate confidence intervals and Bayesian credible intervals to measure uncertainty. A thorough simulation study investigates the finite-sample efficiency of the estimators under various censoring levels and stress profiles. Furthermore, two real datasets, light-emitting diode (LED) lifetime data and insulating liquid breakdown times, are analyzed, illustrating the model’s ability to capture complex failure mechanisms and outperform competing Weibull-based schemes in terms of goodness-of-fit and predictive reliability. The proposed framework not only enhances the flexibility of Weibull reliability analysis but also offers practical guidelines for reliability engineers aiming to balance cost, test duration, and information efficiency.

Similar content being viewed by others

Introduction

In the contemporary fast-paced technological landscape, manufacturers encounter significant competition, compelling them to develop products of high reliability. However, assessing the reliability of these products presents challenges, as failures often require extended periods to occur, which is a crucial aspect of evaluating product lifespan. To address this issue, researchers employ accelerated life tests (ALTs) to assess product durability rapidly. In ALTs, products are subjected to extreme conditions, such as elevated temperatures, excessive voltage, or increased pressure, to hasten the occurrence of failures. By analyzing this data, researchers can forecast the product’s reliability under normal usage conditions. Although there are various methodologies for conducting ALTs, the most prevalent approaches include constant-stress and step-stress ALTs.

In constant-stress ALTs, all test items are kept under the same high-stress conditions until they fail. For more details about constant-stress ALTs, studies by Watkins and John1, Fan and Yu2, Kumar et al.3, Wu et al.4, and Elwahab5, provide detailed insights. For step-stress ALTs, stress levels are raised gradually at fixed times or after a certain number of failures. For step-stress ALTs, works by Tang et al.6, Lee et al.7, Hakamipour8, and Bakouch et al.9, among others, are recommended. To effectively predict a product’s reliability under normal usage conditions utilizing ALTs, a complete understanding of the life-stress model is critical. This model clarifies the relationship between a product’s lifespan and varying stress levels. However, in instances where this relationship remains unclear, the application of partially ALTs (PALTs) is warranted. This study specifically concentrates on constant-stress PALTs , in which items are subjected exclusively to either normal or accelerated testing conditions, thereby avoiding the complexities associated with stress-model investigation. Cheng and Wang10, Ismail11, Nassar and Alam12, Nassr and Elharoun13, Nassar et al.14, Fathi et al.15, and Yao and Gui16, among others, have conducted studies into constant-stress methodologies. Prior work has examined estimation for various lifetime models mainly via classical and Bayesian methods. However, most studies concentrate on distributional parameters, with little to no treatment of reliability measures. Moreover, the literature largely relies on conventional progressive Type-II censoring (PTIIC) scheme and its variants, with minimal attention to newer flexible schemes like improved adaptive progressive Type-II censoring (IAPTIIC), the primary focus of this study.

In constant-stress PALTs, units are run at fixed stress levels (use and accelerated levels) to obtain failure information quickly while preserving relevance to use conditions. In practice, time, cost, and safety constraints mean that not all units can be observed to failure, so the test must be paired with a censoring plan that governs removals and stopping rules. Censoring plans represent a fundamental approach to reducing both testing duration and associated costs. In practical experimental settings, the acquisition of complete lifetime data can pose significant challenges due to factors such as imposed study deadlines or the premature removal of units to conserve resources. The two principal censoring methodologies employed are Type-I censoring, in which tests are terminated after a predetermined period, and Type-II censoring, where tests are concluded following a specified number of failures. A widely employed and flexible method of censoring in reliability studies is the PTIIC scheme. This scheme enables researchers to withdraw a predetermined number of surviving test items throughout the experiment. The withdrawn items, which have not yet experienced failure, may subsequently be used for additional tests or analyzed independently. See for more details Asgharzadeh17, Rastogi and Tripathi18, Wu and Gui19, Abo-Kasem et al.20, and Khalifa et al.21.

A more advanced censoring method, referred to as the adaptive PTIIC (APTIIC )scheme, was developed by Ng et al.22. This approach seeks to enhance the accuracy of statistical inference methodologies by addressing limitations present in a previously proposed hybrid progressive censoring plan proposed by Kundu and Joarder23. In their design, the testing process concludes at a random time, determined by either a pre-set time limit or the occurrence of a specific number of failures, whichever happens first. This approach frequently leads to an insufficient number of observed failures for analysis.

Ng et al.22 observed that the APTIIC strategy exhibits optimal efficacy in the absence of a designated testing deadline; however, for products requiring high reliability, this approach may result in excessively lengthy experiments, thereby making it impractical. For more detail regarding the APTIIC plan, one can refer to Dutta et al.24, Lv et al.25, Sharma and Kumar26, Kumari et al.27. To mitigate this limitation, Yan et al.28 proposed an enhanced version termed the IAPTIIC scheme. The IAPTIIC strategy ensures that testing concludes with a predetermined endpoint, thereby avoiding the issue of extended experiments while still enabling researchers to gather a suitable number of failures. By effectively balancing time constraints with objectives related to failure counts, IAPTIIC presents a more viable solution for the testing of durable products. Recently, some authors considered the IAPTIIC plan in their investigation, see for example Dutta and Kayal29, Dutta et al.30, and Nassar et al.31. In the subsequent section, we will discuss in detail the IAPTIIC plan in conjunction with constant-stress PALTs.

From an experimental perspective, the proposed censoring design is most feasible in laboratory settings where multiple units can be tested simultaneously and where intermediate removals of surviving items are logistically manageable. Such conditions commonly occur in accelerated life tests of electronic components, material specimens, or chemical samples, where units can be monitored in batches under controlled stress environments. However, in large-scale field studies or industrial setups with limited testing chambers, human resources, or high unit-removal costs, the full implementation of IAPTIIC plan may be challenging. In these cases, traditional censoring schemes such as Type-I or conventional progressive censoring may provide more practical alternatives, albeit at some cost in statistical efficiency. This trade-off underscores the importance of tailoring censoring designs to the specific experimental constraints while leveraging the advantages of IAPTIIC scheme whenever feasible.

In practical reliability analysis, the selection of an appropriate statistical model to characterize failure-time data is of main importance. The precision of the results is significantly influenced by how well the selected model fits the observed data. Among the numerous models available, the Weibull distribution is distinguished as a widely utilized choice. Its flexibility renders it particularly suitable for modeling failure patterns across various domains, including but not limited to engineering and communication systems. The Weibull distribution is remarkably versatile, capable of modeling failure data exhibiting constant, increasing, or decreasing failure rates over time. This flexibility has established it as a popular choice across various studies, such as Bhattacharya and Bhattacharjee32, Starling et al.33, Hussain et al.34, Kazempoor et al.35, and Yu et al.36. Assume that the lifetime of a component under normal operating conditions, denoted by the random variable Y, follows the Weibull distribution. The respective probability density function (PDF) and cumulative distribution function (CDF) of Y can be written as:

where \(\phi\) and \(\vartheta\) are the scale and shape parameters, respectively.

The cumulative distribution function related to the random variable X is as follows

This study focuses on analyzing two critical reliability measures associated with the Weibull distribution, namely the reliability function (RF) and the hazard rate function (HRF). Under normal operating conditions, the RF and HRF for the Weibull distribution can be expressed, respectively, as follows

This study integrates constant-stress PALTs with the IAPTIIC plan to efficiently collect failure data for long-lasting products, significantly reducing testing time and costs when lifetimes follow the Weibull distribution. We are motivated to complete this work for many reasons, as follows:

-

1.

The first is that the constant-stress PALTs are particularly advantageous for highly reliable products, as traditional testing methodologies can be extremely lengthy. The constant-stress PALTs mitigate this issue by subjecting items to both normal and high-stress conditions, thereby accelerating failure observations while maintaining realistic testing durations.

-

2.

The second is that the IAPTIIC plan enhances statistical inference efficiency by ensuring an adequate number of failures are observed within a predefined time frame, thereby overcoming the limitations of prior censoring approaches, such as the APTIIC plan.

-

3.

The third key point is the significance of the Weibull distribution in life testing and reliability analysis, which has garnered considerable attention from reliability engineers and statisticians alike.

In addition, two specific gaps in the literature motivate our study:

-

1.

To the best of our knowledge, no prior work has analyzed the Weibull distribution under constant-stress PALTs when combined with the IAPTIIC scheme. Existing studies on constant-stress PALTs typically adopt conventional single-stage or multi-stage censoring plans, not the more flexible IAPTIIC design.

-

2.

Most available studies on the Weibull model emphasize estimating distributional parameters and the acceleration factor, while providing no point or interval estimation for reliability measures.

In the current study, we focus on estimating the Weibull parameters, the acceleration factor which quantifies how stress accelerates failures, and the RF and HRF under normal operating conditions, these reliability measures have received limited attention in earlier studies. To achieve this, we employ two statistical approaches: the classical method using maximum likelihood estimation for point estimates and approximate confidence intervals (ACIs), and the Bayesian approach, which utilizes posterior estimates derived via the squared error loss function and supported by Markov Chain Monte Carlo (MCMC) procedure. The proposed methods are precisely tested through simulation experiments across various scenarios to evaluate their accuracy. Additionally, we validate our approach with two real-world case studies, demonstrating its practical applicability and confirming the suitability of the Weibull model for real-life data.

The remaining parts of this study are structured as follows: Section “Model description and test methodology” provides a detailed explanation of the IAPTIIC plan within the framework of constant-stress PALTs, along with the structural functions of the Weibull distribution under accelerated conditions. In Sect. “Classical point and interval procedures”, we present the maximum likelihood estimators (MLEs) and approximate confidence intervals (ACIs) for the unknown parameters, RF, and HRF under normal operating conditions. Section “Bayesian estimation” focuses on Bayesian estimation, including point estimates and Bayes credible intervals (BCIs). Section “Monte Carlo comparisons” summarizes the design and results of the simulation study, evaluating the performance of the proposed methods. Section “Real accelerated applications” applies these methodologies to two real-world data sets, showcasing their practical applicability. Key observations and insights from the study are presented in Sect. “Concluding remarks”.

Model description and test methodology

In the context of constant-stress PALTs, suppose we have a total of n test units. These units are divided into two samples as follows:

-

1.

The first sample consists of \(n_1\) units, randomly selected from the n test units, which are tested under normal operating conditions. It is assumed that the lifetime of each unit in this group follows the Weibull distribution with PDF and CDF given, respectively, by (1) and (2).

-

2.

The second sample includes the remaining \(n_2 = n - n_1\) units, which are subjected to accelerated conditions. In this case, the HRF of a test unit can be derived using the relation \(H_2(y;\varvec{\theta }) = \lambda H_{1}(y;\phi ,\vartheta )\), where \(\lambda >1\) is the acceleration factor using proportional hazards life–stress link, and \(\varvec{\theta }=(\phi ,\vartheta ,\lambda )^{\top }\) is the vector of the unknown parameters.

As a results, the RF under accelerated conditions can be derived as

$$\begin{aligned} R_2(y;\varvec{\theta })=\exp \left\{ -\int _{0}^{y}H_2(x;\varvec{\theta })dx\right\} , \end{aligned}$$where the corresponding RF and HRF, in this case, follow

$$\begin{aligned} H_2(y;\varvec{\theta })=\phi \lambda \vartheta y^{\vartheta -1}, y>0, \phi ,\vartheta>0, \lambda >1 \end{aligned}$$(4)and

$$\begin{aligned} R_2(y;\varvec{\theta })=e^{-\phi \lambda y^{\vartheta }}. \end{aligned}$$The CDF and PDF of the Weibull distribution at accelerated conditions can be written, respectively, as

$$\begin{aligned} G_{2}(y;\varvec{\theta })=1-e^{-\phi \lambda y^{\vartheta }} \end{aligned}$$(5)and

$$\begin{aligned} g_{2}(y;\varvec{\theta })= \phi \lambda \vartheta y^{\vartheta -1}e^{-\phi \lambda y^{\vartheta }}. \end{aligned}$$(6)

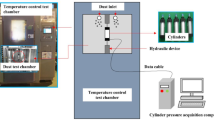

For each test sample, the units are tested under the IAPTIIC scheme. Let \(m_j < n_j, j=1,2\) represent the target number of failures to be acquired, with a predefined removal pattern \((S_{j1}, \dots , S_{jm_j})\). The experimenter sets two time thresholds, \(T_{j1} < T_{j2}\), which define the experimental time frame, testing may extend beyond \(T_{j1}\) but must terminate by \(T_{j2}\). Thus, the IAPTIIC sampling plan within the framework of constant-stress PALT is implemented as follows: At each observed failure time \(Y_{ji}\), where \(j = 1, 2\) and \(i = 1, \dots , m_j\), the experimenter removes \(S_{ji}\) surviving units from the remaining functional units. The experiment continues until one of the following three terminal scenarios occurs: Case I: If \(Y_{jm_j} < T_{j1}\), the experiment terminates at \(Y_{jm_j}\) with all remaining units removed at that time, yielding the conventional PTIIC sample. Case II: If \(Y_{jr_{j}}< T_{j1} < Y_{jr_{j}+1}\) for \(r_{j} < m_j\) represent the number of failures before time \(T_{j1}\), an adaptive adjustment is applied, where removals are stopped between \(T_{j1}\) and \(Y_{jm_j}\) by setting \(S_{jr_j+1} = \cdots = S_{jm_j-1} = 0\), and the experiment proceeds until \(Y_{jm_j}\) is observed, generating the APTIIC sample. Case III: If \(Y_{jm_j} > T_{j2}\), the study concludes at \(T_{j2}\), with \(d_j\) observed failures satisfying \(Y_{jd_j} \le T_{j2} < Y_{jd_j+1}\), removals stopped after \(T_{j1}\) (\(S_{jr_j+1} = \cdots = S_{jd_j} = 0\)), and the final withdrawal given by \(S_j^* = n_j - d_j - \sum _{i=1}^{r_j} S_{ij}\). Figure 1 represents the schematic representation of the different three cases of the IAPTIIC scheme with constant-stress PALTs.

Let \(\underline{\textbf{y}}=(y_{j1},y_{j2}, \dots , y_{jd_{j}}), j=1,2\) be the observed two IAPTIIC samples with constant-stress PALTs. Then, the joint likelihood function in this case can be written, without constant terms, as

where

Possible cases of the IAPTIIC scheme with constant-stress PALTs.

Classical point and interval procedures

In this section, we discuss the point and interval estimations for the model parameters, acceleration factor, and the two reliability metrics under usage circumstances conditions.

Point estimation

Let \((y_{11}, y_{12}, \dots , y_{1d_1})\) represent an observed IAPTIIC sample obtained from the Weibull population under normal operating circumstances, with the PDF and CDF given by (1) and (2), respectively. Furthermore, let \((y_{21}, y_{22}, \dots , y_{2d_2})\) denote an observed IAPTIIC sample collected from the Weibull population under accelerated conditions, with the CDF and PDF described by (5) and (6), respectively. Based on these samples, the likelihood function of the unknown parameter vector \(\varvec{\theta }\) can be expressed using (7) as follows

where \(B=B_{1}+B_{2}\) and \(\varphi _{j}(\underline{\textbf{y}};\vartheta )=\sum _{i=1}^{B_{j}}y_{ji}^{\vartheta }+\sum _{i=1}^{D_{j}}S_{ji}y_{ji}^{\vartheta }+S_{j}^{\star }\tau _{j}^{\vartheta }.\) The log-likelihood function of (8) takes the form

To find the MLEs of the parameters \(\phi\), \(\lambda\), and \(\vartheta\), we take the partial derivatives of the log-likelihood function given by (9) with respect to each parameter and solve the resulting system of equations as

and

where \(\varphi _{j}^{\star }(\underline{\textbf{y}};\vartheta )=\sum _{i=1}^{B_{j}}y_{ji}^{\vartheta }\log (y_{ji})+\sum _{i=1}^{D_{j}}S_{ji}y_{ji}^{\vartheta }\log (y_{ji}) +S_{j}^{\star }\tau _{j}^{\vartheta }\log (\tau _{j})\). From the normal equations in (10) and (11), the MLEs of the unknown parameters \(\lambda\) and \(\phi\), can be acquired explicitly, after some simplifications, as

and

By replacing the unknown parameters \(\phi\) and \(\lambda\) with their MLEs \(\hat{\phi }(\vartheta )\) and \(\hat{\lambda }(\vartheta )\) into Eq. (9), the profile log-likelihood of \(\vartheta\) can be derived after some simplifications as follows

After differentiating (15) with respect to \(\vartheta\) and equating the result to zero, the MLE of \(\vartheta\), denoted by \(\hat{\vartheta }\), can be obtained by solving the next equation iteratively, say \(\vartheta =l^{*}(\vartheta )\),

It is worth noting that the MLE \(\hat{\vartheta }\) cannot be expressed in closed form. Therefore, one can adopt the iterative procedure outlined in Pareek et al.37 to obtain \(\hat{\vartheta }\). Once \(\hat{\vartheta }\) is determined, the MLEs of the other parameters, \(\lambda\) and \(\phi\), denoted by \(\hat{\lambda }\) and \(\hat{\phi }\), can be straightforwardly computed by substituting \(\hat{\vartheta }\) into (13) and (14), respectively.

Under the invariance property of MLEs, the reliability characteristics under normal usage settings at a specific time t can be derived from (3). This is accomplished by

Interval estimation

Beyond obtaining point estimates for unknown parameters and reliability metrics under normal conditions, it is crucial to measure the uncertainty in these estimates. Interval estimation helps achieve this by providing confidence ranges that likely contain the true values at a specified confidence level \((1-\alpha )100\%\). In this study, we construct the \((1-\alpha )100\%\) ACIs based on the large-sample behavior of the MLEs. Under standard regularity conditions, the MLE vector \(\hat{\varvec{\theta }} = (\hat{\phi }, \hat{\lambda }, \hat{\vartheta })^\top\) approximately follows a normal distribution with mean \(\varvec{\theta }\) and variance covariance matrix estimated by inverting the observed Fisher information matrix, denoted by \(\varvec{\Sigma }(\hat{\varvec{\theta }})\), and given by

where the detailed expressions for the second derivatives of the log-likelihood function (9) are provided by

where \(\varphi _{j}^{\bullet }=\sum _{i=1}^{B_{j}}y_{ji}^{\vartheta }\log ^{2}(y_{ji})+\sum _{i=1}^{D_{j}}S_{ji}y_{ji}^{\vartheta }\log ^{2}(y_{ji}) +S_{j}^{\star }\tau _{j}^{\vartheta }\log ^{2}(\tau _{j})\). Accordingly, the \((1-\alpha )100\%\) ACIs of the three unknown parameters are eventually obtained as shown below

where \(z_{\alpha /2}\) refers to the upper \(\alpha /2\) percentile of the standard normal distribution.

Constructing ACIs for the RF and HRF under normal conditions requires estimating the variances related to their MLEs \(\hat{R}_{1}(t)\) and \(\hat{H}_{1}(t)\), respectively. A key challenge is determining the uncertainty in these metrics derived from the MLEs of the parameters.

The delta method offers a practical solution by approximating these variances employing: (1) The estimated variance-covariance matrix of the parameter estimates presented in (17). (2) The first-order partial derivatives of the RF and HRF with respect to the parameters. These partial derivatives are evaluated at the MLEs of the unknown parameters. Let \(\hat{\nabla }_1\) and \(\hat{\nabla }_2\) refer to the gradient vectors of the RF and HRF obtained at the MLEs of \(\phi , \lambda\) and \(\vartheta\), respectively, expressed as

where

and

The approximate variances can subsequently be derived as

Then, the \((1-\alpha )100\%\) ACIs for the RF and HRF under normal usage conditions are

Bayesian estimation

This section investigates the Bayes estimates and BCIs for the model parameters, acceleration factor, and the RF and HRF under normal usage settings within the framework of the IAPTIIC plan with constant-stress PALTs. The Bayes estimates are obtained under the assumption that the three unknown parameters are independent and by using the symmetric squared error loss function. In practice, the Bayesian method represents a substantial alternative to traditional estimation techniques, conceptualizing unknown parameters as random variables, wherein prior beliefs are updated in light of new observations. By employing this principle, the Bayesian perspective enhances estimates through iterative learning.

In constant-stress PALTs combined with the IAPTIIC plan, the effective number of observed failures is often small and uneven across stress segments. This happens because only part of the test is run at high stress, units are removed adaptively during the experiment, and early stopping at inspection times can leave few failures at normal use. Under these conditions, the classical estimators may be unstable or biased in small samples. In addition, the ACIs that rely on large-sample normality can then have poor coverage, especially for derived reliability quantities such as the SF and HRF. The Bayesian approach works well with small samples because it combines prior information with the data to stabilize estimates. Credible intervals stay reliable when failures are few, and informative priors prevent extreme or unstable results while respecting natural constraints.

By examining the likelihood function (8), it is evident that the gamma prior serves as a natural conjugate prior for the unknown parameter \(\phi\). For the parameter \(\vartheta\), although no conjugate prior exists, we adopt the gamma prior due to its compatibility with the support of \(\vartheta\) and its ability to maintain simplicity in posterior analysis. As for the acceleration factor \(\lambda\), a non-informative prior is chosen to simplify computational challenges during the implementation of MCMC procedures. Accordingly, the joint prior distribution of \(\varvec{\theta }\), can be written as

with \(\nu _{j},\omega _{j}>0, j=1,2\) are the known hyper-parameters. By merging the observed data provided by the likelihood function in (8), with prior knowledge represented in the joint prior distribution in (18), the posterior distribution of the parameter vector \(\varvec{\theta }\) is derived as

where C is the normalized constant derived as

Under the squared error loss function, the Bayes estimator of a parameter (or function of parameters) is the mean of its posterior distribution. Let \(\varpi (\varvec{\theta })\) represent any function of the unknown parameters \(\varvec{\theta }\), such as a parameter itself, the RF, or the HR. The Bayes estimator of \(\varpi (\varvec{\theta })\) is calculated as

The Bayes estimator in (20) cannot be computed directly due to the complexity of the posterior distribution. Instead, the MCMC procedures are used to approximate Bayes point estimates and construct the BCIs. The MCMC procedures are a key computational tool in Bayesian analysis, enabling numerical approximation of complex posteriors by generating samples from the target distribution. Among MCMC methods, two widely used algorithms are: Gibbs Sampling and Metropolis-Hastings (MH) algorithm. These methods are foundational in statistical modeling and reliability engineering, connecting theoretical frameworks with practical applications. To implement MCMC methods, like Gibbs or MH, we first need the full conditional distributions for each parameter. These distributions are derived from the joint posterior distribution in (19).

For the parameter \(\phi\), for example, in the vector \(\varvec{\theta }\), the full conditional distribution is proportional to the joint posterior, ignoring terms not involving \(\phi\). The explicit forms of these conditional distributions are instrumental in determining the most efficient MCMC algorithm to employ; for instance, the Gibbs sampler is appropriate when the conditionals are known, whereas the MH algorithm is employed in other cases. In our case, the full conditional distributions of \(\phi , \lambda\) and \(\vartheta\) can be derived, respectively, using the joint posterior distribution in (19) as

and

To determine if the full conditional distributions in (21)–(22) correspond to any recognized probability distributions, we examine their structure and derive the following observations :

-

1.

The full conditional distribution of the scale parameter \(\phi\) in (21) is gamma distribution with scale parameter \(\left[ \sum _{j=1}^{2}\lambda ^{j-1}\varphi _{j}(\underline{\textbf{y}};\vartheta )+\omega _{1}\right]\) and shape parameter \((B+\nu _{1})\).

-

2.

The full conditional distribution of the acceleration factor \(\lambda\) in (22) is gamma distribution with scale parameter \(\left[ \phi \varphi _{2}(\underline{\textbf{y}};\vartheta )\right]\) and shape parameter \(B_{2}\).

-

3.

The full conditional distribution of the shape parameter \(\vartheta\) given by \(K(\vartheta |\phi ,\lambda ,\underline{\textbf{y}})\) in (23) is unknown.

As a result, generating MCMC samples from the conditional distributions of the scale parameter \(\phi\) and acceleration factor \(\lambda\) can be straightforwardly achieved using standard gamma sampling algorithms. In contrast, the conditional distribution of the shape parameter \(\vartheta\) does not conform to a recognizable probability distribution.

To address this, we advocate for the adoption of the MH algorithm. A critical step in implementing the MH algorithm involves selecting a suitable proposal distribution for sampling; here, we justify the use of a normal distribution as the proposal through empirical analysis via graphical examination of the conditional posterior in (23). Accordingly, the MH-within-Gibbs algorithm is deployed to simulate MCMC samples for \(\phi\), \(\lambda\), and \(\vartheta\), enabling the derivation of Bayesian point and interval estimates for any function of these parameters. The implementation of the MH-within-Gibbs framework follows the structured sampling procedure detailed below

-

Step 1: Set initial values for \(\phi\), \(\lambda\), and \(\vartheta\) as \((\phi ^{(0)}, \lambda ^{(0)}, \vartheta ^{(0)})\equiv (\hat{\phi },\hat{\lambda },\hat{\vartheta })\).

-

Step 2: Put \(h = 1\).

-

Step 3: Generate \(\vartheta ^{(h)}\) via the MH algorithm:

-

(i)

Propose a candidate \(\vartheta ^{*}\) from \(N(\vartheta ^{(h-1)}, \hat{\sum }_{33})\).

-

(ii)

Compute \(\xi = \min \left\{ 1, \frac{K\left( \vartheta ^{*} \mid \phi ^{(h-1)},\lambda ^{(h-1)}, \underline{\textbf{y}}\right) }{K\left( \vartheta ^{(h-1)} \mid \phi ^{(h-1)},\lambda ^{(h-1)}, \underline{\textbf{y}}\right) }\right\} .\)

-

(iii)

Draw \(u \sim U(0, 1)\).

-

(iv)

Update \(\vartheta ^{(h)} = \vartheta ^{*}\) if \(u \le \xi\); otherwise, retain \(\vartheta ^{(h)} = \vartheta ^{(h-1)}\).

-

(i)

-

Step 4: Get \(\phi ^{(h)}\) from Gamma\(\left( B+\nu _{1},\left[ \sum _{j=1}^{2}[\lambda ^{(h)}]^{j-1}\varphi _{j}(\underline{\textbf{y}};\vartheta ^{(h)})+\omega _{1}\right] \right)\).

-

Step 5: Get from Gamma\(\left( B_{2},\left[ \phi ^{(h)} \varphi _{2}(\underline{\textbf{y}};\vartheta ^{(h)})\right] \right)\).

-

Step 6: At iteration h, compute the RF and HRF at normal operating conditions:

$$R_{1}^{(h)} = e^{-\phi ^{(h)} t^{\vartheta ^{(h)}}}, \quad H_{1}^{(h)} = \phi ^{(h)} \vartheta ^{(h)} t^{\vartheta ^{(h)} - 1}.$$ -

Step 7: Update the counter to \(h \leftarrow h + 1\).

-

Step 8: Repeat Steps 3–7 for \(\mathcal {M}\) iterations to obtain the MCMC samples:

$$\left\{ \phi ^{(h)}, \lambda ^{(h)}, \vartheta ^{(h)}, R_{1}^{(h)}, H_{1}^{(h)}\right\} , \quad h = 1, \dots , \mathcal {M}.$$

Then, we can compute the Bayesian estimates and BCIs from the gathered MCMC samples. To mitigate initialization bias and ensure convergence, the first \(\mathcal {A}\) iterations are discarded as a burn-in period. The posterior mean estimates for the model parameters \(\phi\) and \(\vartheta\), acceleration factor \(\lambda\), and reliability metrics \(R_{1}(t)\) and \(H_{1}(t)\) are computed, respectively, as

To construct the BCIs, the retained MCMC samples are sorted in ascending order:

The \(100(1-\alpha )\%\) BCIs are then calculated as the \(\alpha /2\) and \((1-\frac{\alpha }{2})\) quantiles of the sorted samples, as

Monte Carlo comparisons

This section presents several extensive Monte Carlo simulation studies aimed at evaluating the performance of both point and interval estimators, with a particular focus on their accuracy and reliability in estimating the parameters \(\vartheta\), \(\phi\), and \(\lambda\), as well as the reliability and hazard functions \(R_{1}(t)\) and \(H_{1}(t)\).

Simulation setups

To assess estimator performance under varying conditions, we generate 1,000 datasets from the IAPTIIC plan with constant-stress PALT sampling, using two sets of parameter settings (\(\vartheta\), \(\phi\), \(\lambda\)), namely: Set-1:(1.5, 0.5, 1.5) and Set-2:(2, 1, 2)—where \((\vartheta ,\phi )\) denote the Weibull parameters and \(\lambda\) is the acceleration factor. Simulations are conducted across multiple configurations of full sample sizes \(n_i\ (i = 1,2)\), effective sample sizes \(m_{ij}\ (i,j = 1,2)\), and threshold times \(T_{ij}\ (i,j = 1,2)\). Table 1 outlines these configurations while fixing \(T_{11} (= {0.5,\ 1.0})\), \(T_{21} (=1.0,\ 1.5)\), \(T_{12} (=0.4,\ 0.8)\), and \(T_{22} (= {0.8,\ 1.2})\). At the pre-specified time \(t = 0.1\), we evaluate the estimates of the reliability and hazard functions, \((R_1(t), H_1(t))\), under nominal operating conditions, with the true values taken as (0.98, 0.24) for Set-1 and (0.99, 0.20) for Set-2.

Following the generation of 1,000 IAPTIIC samples gathered under constant-stress PALT sampling, the MLEs (along with associated 95% ACIs) for \(\vartheta\), \(\phi\), \(\lambda\), \(R_{1}(t)\), and \(H_{1}(t)\) are obtained using the Newton-Raphson optimization algorithm using maxLik package developed by Henningsen and Toomet 38. For the Bayesian inference, two sets of hyperparameter configurations \((\nu _i, \omega _i)\) (for \(i=1,2\)) are considered for each parameter scenario.

All specified values of \((\nu _i, \omega _i),\ i = 1, 2\), corresponding to the prior distributions in Sets 1 and 2, are selected such that the resulting prior means coincide with the actual values of the Weibull parameters \(\vartheta\) and \(\phi\). Specifically:

-

Set-1:

-

Group-1: \((\nu _1, \nu _2) = (7.5, 2.5)\) and \(\omega _i = 5\) for \(i = 1, 2\);

-

Group-2: \((\nu _1, \nu _2) = (15, 5)\) and \(\omega _i = 10\) for \(i = 1, 2\).

-

-

Set-2:

-

Group-1: \((\nu _1, \nu _2) = (10, 5)\) and \(\omega _i = 5\) for \(i = 1, 2\);

-

Group-2: \((\nu _1, \nu _2) = (20, 10)\) and \(\omega _i = 10\) for \(i = 1, 2\).

-

Subsequently, \(\mathcal {M}=12,000\) samples for \(\vartheta\), \(\phi\), and \(\lambda\) are generated via the MCMC approach based on the posterior distributions (21), (22), and (23), respectively. To mitigate the effects of initial transients, the first \(\mathcal {A}=2,000\) samples are discarded as burn-in. The remaining samples, including 10,000 MCMC iterations, are then used to compute Bayesian point estimates and 95% credible intervals for \(\vartheta\), \(\phi\), \(\lambda\), \(R_{1}(t)\), and \(H_{1}(t)\) using the Metropolis-Hastings within Gibbs sampling scheme. These computations are carried out using the coda package developed by Plummer et al. 39, which facilitates posterior inference and convergence diagnostics.

In each experimental setup, the mean point estimate (MPE) for \(\vartheta\) (as an example) is given by

where \(\check{\vartheta }^{[i]}\) is the estimate of \(\vartheta\) obtained at i-th simulated sample.

Evaluate the precision of the calculated point estimators using the two metrics, namely root mean squared error (RMSE) and mean relative absolute bias (MARB), respectively, as

Evaluate the efficiency of the suggested interval estimation frameworks by computing the average interval length (AIL) and the coverage probability (CP) as follows:

where \(\textbf{D}(\cdot )\) is the indicator function, and \((\mathcal {L}(\cdot ), \mathcal {U}(\cdot ))\) denotes the estimated interval limits.

Simulation results and discussions

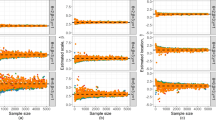

Part of the full simulation results for the point and interval estimators of \(\vartheta\), \(\phi\), \(\lambda\), \(R_{1}(t)\), and \(H_{1}(t)\) from Set 2 have been moved to the supplementary file for brevity. Based on the results presented in Tables 2, 3, 4, 5, 6, 7, 8, 9, 10 and 11, and guided by the criteria of achieving the lowest RMSE, MRAB, and AIL together with the highest CP, the following key findings are observed:

-

The proposed point and interval estimators for \(\vartheta\), \(\phi\), \(\lambda\), \(R_{1}(t)\), and \(H_{1}(t)\) exhibit satisfactory performance across both Weibull population groups, Set i for \(i=1,2\).

-

When \(n_i\) (or \(m_i\)), for \(i=1,2\), grows, it leads to improved estimator performance and confirms their consistency. A similar trend is observed when the total of the censoring scheme values, \(\sum _{j=1}^{m_i} S_{ij}\), decreases.

-

Owing to the inclusion of prior information, Bayesian point and credible interval estimation findings consistently outperform their frequentist estimation results in terms of accuracy for all parameters considered.

-

Among the two prior configurations determined in Group-i for \(i=1,2\), estimates derived from Group-2 (which has smaller variance) are generally more accurate than those obtained from Group-1, aligning with expectations.

-

As the threshold values \(T_{ij}\) for \(i,j=1,2\) increase, a notable improvement in estimation accuracy is observed: the RMSEs, MRABs, and AILs for \(\theta\), \(\mu\), \(\beta\), \(R_{1}(t)\), and \(H_{1}(t)\) consistently decrease, while their CPs exhibit an increasing trend.

-

Comparing the performance under different censoring schemes, \((S_{j1}, \dots , S_{jm_j})\), we noticed that:

-

For parameters \(\vartheta\), \(\lambda\), and \(H_{1}(t)\), the right-censoring (specified in Test [3]) provides more accurate estimation results than others;

-

For parameter \(\phi\), the middle-censoring (specified in Test [2]) provides more accurate estimation results than others.

-

For parameter \(R_{1}(t)\), the left-censoring (specified in Test [1]) provides more accurate estimation results than others.

-

-

Comparing the two Weibull population sets 1 and 2 on the estimators performance, i.e., when the values of \(\vartheta\), \(\phi\), and \(\lambda\) increased, it is noted that:

-

For parameters \(\vartheta\), \(\phi\), \(\lambda\), and \(H_{1}(t)\), their RMSE, MRAB, and AIL simulated values are increased, while the associated CP values decreased;

-

For reliability parameter \(R_{1}(t)\), its RMSE, MRAB, and AIL simulated values are decreased, while the associated CP values increased.

-

-

Using Set-1, the calculated estimates of all unknown parameters considered, in most cases, demonstrate superior performance and greater stability compared to those derived from Set-2.

-

It is observed that the estimated CPs of BCIs frequently exceed the nominal 95% level, particularly in cases of small sample sizes or heavy censoring. This conservative behavior stems from the use of diffuse priors, which inflate posterior uncertainty and result in wider intervals. Although this may reduce efficiency relative to ACIs, it effectively prevents under-coverage—an important feature in high-risk reliability applications, where underestimated uncertainty could lead to unsafe conclusions.

-

Although the simulation experiments are done with \(\vartheta \ge 1\), the proposed methodology is not restricted to this case. Since the Weibull distribution and the censoring design are mathematically valid for \(\vartheta < 1\), the estimation procedures extend naturally to decreasing failure-rate scenarios. Thus, the results presented here can be interpreted as illustrative rather than exhaustive, with the method remaining applicable across increasing, constant, and decreasing hazard structures.

-

Overall, the Bayesian framework, implemented via an MH-within-Gibbs algorithm, proves to be the most effective method for estimating the parameters of the Weibull model parameters (\(\vartheta\), \(\phi\)), the acceleration factor (\(\lambda\)), and the associated reliability and failure rate functions (\(R_{1}(t)\) and \(H_{1}(t)\)) against normal operating conditions in the constant-stress PALT sampling with IAPTIIC data.

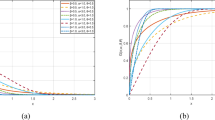

Using a data visualization tool and taking Set-1 as an example, Figure 2 displays the maximum likelihood and Bayesian estimates of \(R_{1}(t)\) and \(H_{1}(t)\) for \((n[1],n[2])=(40,30)\) under two sample allocations: \((m[1],m[2])=(20,10)\) (left panel) and \((m[1],m[2])=(30,20)\) (right panel), with \((T_{11},T_{12},T_{21},T_{22})\) set to (0.5, 1.0, 0.4, 0.8) and (1.0, 1.5, 0.8, 1.2), respectively. In each subplot, the labels \(A_i\) and \(B_i\) (\(i=1,2,3\)) on the x-axis denote the RMSE and MRAB values obtained from the MLE, Bayes (Group-1), and Bayes (Group-2) frameworks, respectively. These visualizations corroborate the numerical results for \(R_{1}(t)\) and \(H_{1}(t)\) reported in Tables 5 and 6.

Plots for RMSE and MRAB results of \(R_{1}(t)\) and \(H_{1}(t)\) when \((n[1],n[2])=(40,30)\).

Real accelerated applications

This section presents two real-data engineering applications to empirically assess the performance of the proposed estimation methods. The first dataset pertains to the failure times of white organic light-emitting diodes, while the second concerns the lifetime of micro-droplets in ambient environmental conditions.

Before examining the proposed real-world datasets, it is worth mentioning here that:

-

The light-emitting diodes data analyzed in this work are representative of reliability issues in modern illumination systems, such as automotive headlights, traffic lights, and large-scale display panels. In such applications, failure or accelerated degradation of light-emitting diodes can lead to increased maintenance costs and safety concerns. Our framework allows engineers to obtain reliable lifetime metrics under partially accelerated testing, thereby reducing testing time while maintaining inferential accuracy.

-

The insulating liquid dataset reflects a key reliability concern in high-voltage transformers, which are essential components in power generation and distribution networks. The dielectric performance of these fluids directly affects insulation integrity and transformer lifespan. By applying the proposed censoring scheme, practitioners can obtain more efficient reliability estimates, which in turn guide condition monitoring, preventive maintenance, and warranty design.

-

Thus, the proposed methodology bridges statistical theory and engineering practice, offering a robust tool for reliability assessment in diverse industrial scenarios.

Light-emitting diode data

Light-emitting diodes (LEDs), a prominent subclass of semiconductor devices, are widely integrated into modern electronic displays, including televisions and multicolor panels. An LED is composed of a thin layer of semiconductor material that has been treated to enhance its properties. When electricity is applied in the correct direction, it produces light at a specific wavelength, based on the materials used and their treatment. Table 12 presents the failure times, measured in units of thousand hours, for LEDs subjected to both normal-use and accelerated-stress conditions, with each scenario comprising 58 observed failure points. Cheng and Wang have previously explored this dataset10, and it was more recently revisited in the analyses by Nassar and Elshahhat40. To evaluate the suitability of the Weibull distribution as a potential model for these lifetime data, the Kolmogorov–Smirnov (\(\textsf {KS}\)) statistic, along with its associated P-value, is employed. From Table 12, the MLEs (with their standard errors (SEs)) and the 95% ACIs (with their interval lengths (ILs)) of the Weibull parameters \(\vartheta\) and \(\phi\) for the normal-use and stress conditions data are obtained; see Table 13. Furthermore, the KS statistics and their corresponding \(\textsf {P}\)-values for the complete datasets under each condition are 0.1659 (0.082) and 0.1541 (0.127), respectively. These results support the adequacy of the Weibull model in capturing the failure behavior of the LED devices under both operational conditions.

A further diagnostic evaluation of the Weibull fit is shown in Fig. 3, incorporating four graphical techniques that collectively assess goodness-of-fit from multiple perspectives: (i) empirical and estimated reliability lines and associated 95% ACI/BCI bounds; (ii) probability-probability (PP) lines comparing observed and fitted probabilities; (iii) quantile-quantile (QQ) lines; and (iv) a contour map of the log-likelihood function. For both normal-use and accelerated-stress datasets, the panels in Fig. 3a–c exhibit strong alignment between the empirical observations and the Weibull model, indicating an excellent fit. Lastly, the log-likelihood contour in Fig. 3d clearly demonstrates the existence and uniqueness of the fitted MLEs for the model parameters \(\vartheta\) and \(\phi\), thereby justifying their use as initial values in further inferential procedures.

Visualization panels of the Weibull model from LED datasets.

To demonstrate the feasibility of the proposed methodologies, taking \(m_{1}=m_{2}=20\), three artificial samples based on various choices of threshold levels and progressive censoring patterns \((S_{j1}, \dots , S_{jm_j}),\ j=1,2\), several IAPTIIC samples with constant-stress PALT from the LED datasets are created; see Table 14. For each generated sample, the classical estimates in addition to their 95% ACI estimates as well as the Bayes estimates in addition to their 95% BCI estimates of \(\beta ,\ \delta\), and \(R_{1}(t)\) (at distinct time \(t=0.5\)) are calculated. In Table 15, the point estimates (along with their SEs) and the interval estimates (along with their ILs) of \(\vartheta\), \(\phi\), \(\lambda\), \(R_{1}(t)\), and \(H_{1}(t)\) are reported. Due to there being no available prior information about \(\vartheta\), \(\phi\), or \(\lambda\), we set \(\nu _j\) and \(\omega _j\) for \(j=1,2\) as 0.001, which implies that the prior densities are almost improper. According to the MCMC methodology, we repeat the procedure \(\mathcal {M}=50,000\) times and discard the first \(\mathcal {A}=10,000\) iterations as burn-in. The starting values of \(\vartheta\), \(\phi\), and \(\lambda\) used to run the MCMC sampler are assumed to be their frequentist estimates.

As we anticipated, from Table 15, the Bayes estimates perform better than the estimates derived from the likelihood method in terms of their lowest St.Es, and the BCI estimates behave superior compared to those developed by the ACI method in terms of shortest ILs.

From the remaining 40,000 MCMC samples generated for each artificial dataset described in Table 14, Table 16 summarizes key posterior characteristics of \(\vartheta\), \(\phi\), \(\lambda\), \(R_{1}(t)\), and \(H_{1}(t)\). These include the posterior mean, mode, quartiles (\(\mathcal {Q}_i,\ i = 1, 2, 3\)), standard deviation (SD), and skewness (Sk.). The reported summaries support the same Bayesian findings listed in Table 15 and indicate that the distribution of the simulated iterations of \(\vartheta\) or \(\lambda\) is almost fairly symmetrical, of \(\phi\) or \(H_{1}(t)\) is almost fairly symmetrical, while that of \(R_{1}(t)\) is negatively skewed.

To examine whether the MLEs for the parameters \(\vartheta\), \(\phi\), and \(\lambda\) derived from Sample \(\mathcal {S}[1]\) are both existent and unique, we constructed the corresponding log-likelihood contour plots as illustrated in Fig. 4. These graphical representations clearly demonstrate well-defined peaks, confirming that the MLEs for \(\vartheta\), \(\phi\), and \(\lambda\) (as summarized across all samples in Table 14) exist and are also unique. Moreover, these findings corroborate the numerical results provided in Table 15. Consequently, the frequentist point estimates are selected as initial values in the MCMC iterations.

A central concern in implementing MCMC methods lies in verifying the convergence behavior of the generated chains. To address this, we retained 40,000 post-burn-in samples from each chain and utilized both trace plots and posterior density plots, shown in Fig. 5, to assess convergence and mixing. The trace plots serve as diagnostic tools for evaluating chain stability over iterations, while the density plots illustrate the smoothed posterior distributions. In each panel of Fig. 5, the posterior mean (Bayesian estimate) for each parameter—whether \(\vartheta\), \(\phi\), \(\lambda\), the reliability function \(R_{1}(t)\), or the hazard function \(H_{1}(t)\)—is marked by a solid curve, with the associated credible interval boundaries indicated by dashed lines. As observed, (i) the MCMC chains show strong evidence of convergence, and (ii) the burn-in phase, denoted \(\mathcal {A}\), is sufficiently long to eliminate dependence on the initial values, ensuring that posterior inference is based on representative draws from the stationary distribution.

Insulating liquids data

Insulating fluid performance under high-voltage stress refers to the fluid’s ability to resist electrical breakdown when subjected to intense electric fields. It is typically evaluated by measuring the time or voltage at which the fluid fails to insulate, indicating its reliability and durability in electrical equipment like transformers or capacitors. This application investigates the breakdown behavior of an insulating fluid subjected to high-voltage endurance testing under varying electrical stress conditions. Specifically, the analysis focuses on the time-to-failure, measured in seconds, as a function of applied voltage levels. The dataset, originally gathered by Nelson41, includes experimental observations collected at two fixed stress settings: 40kV, representing typical operational exposure, and 45kV, reflecting accelerated degradation conditions. In Table 17, each stress level comprises twelve recorded failure times, providing a basis for comparative reliability modeling under normal and intensified usage scenarios.

Likelihood contours of \(\vartheta\) (left), \(\phi\) (middle), and \(\lambda\) (right) from LED data.

Density (left) and Trace (right) of \(\vartheta\), \(\phi\), \(\lambda\), \(R_{1}(t)\), and \(H_{1}(t)\) from LED data.

The suitability of the Weibull distribution for modeling insulating fluid failure times is evaluated using the \(\textsf {KS}\) test, with significance assessed at the 5% level via the associated \(\textsf {P}\)-value. To achieve this, using Table 17, we estimate the Weibull parameters \(\vartheta\) and \(\phi\) using the ML method (along with their SEs) as presented in Table 18. It exhibits strong statistical evidence that the Weibull model offers an adequate fit to the insulating fluid datasets. This conclusion is visually supported by Fig. 6a–c, which shows a high degree of agreement between the observed and fitted values under both normal and accelerated stress conditions. Figure 6d confirmed that the fitted MLEs of \(\vartheta\) and \(\phi\) (provided in Table 18) exist and are unique as well as thereby justifying their use as initial values in further inferential procedures.

Visualization panels of the Weibull model from insulating fluid datasets.

To illustrate the applicability of the proposed inferential approaches, we consider the case where \(m_1 = m_2 = 8\) and generate three artificial IAPTIIC datasets under varying threshold settings and progressive designs \((S_{j1}, \dots , S_{jm_j}),\ j=1,2\), based on the insulating fluid data structure; see Table 19. For each simulated dataset, both classical and Bayesian estimates are computed for the parameters \(\beta\), \(\delta\), and the reliability function \(R_1(t)\) at \(t = 0.5\), including their corresponding 95% ACI/BCI intervals. Table 20 reports point estimates (with SEs) alongside the interval estimates (with ILs) for the parameters \(\vartheta\), \(\phi\), \(\lambda\), \(R_1(t)\), and \(H_1(t)\). In the absence of strong prior information on \(\vartheta\), \(\phi\), and \(\lambda\), we adopt vague priors by setting \(\nu _j = \omega _j = 0.001\) for \(j = 1, 2\), effectively rendering the prior distributions nearly non-informative. The MCMC algorithm is executed for \(\mathcal {M} = 50{,}000\) iterations, discarding the first \(\mathcal {A} = 10{,}000\) as burn-in, and initialized using frequentist estimates of the parameters. As anticipated, the results in Table 20 reveal that Bayesian estimation outperforms the classical approach, yielding lower standard errors, while the BCI intervals demonstrate improved precision over ACI intervals based on shorter lengths. The vital statistics (summarized in Table 21) support the same numerical findings of \(\vartheta\), \(\phi\), \(\lambda\), \(R_1(t)\), and \(H_1(t)\) (reported in Table 20).

To verify the existence and uniqueness of the MLEs for \(\vartheta\), \(\phi\), and \(\lambda\) obtained from Sample \(\mathcal {S}[1]\), several contour panels are depicted in Fig. 7. The subplots in Fig. 7 evidence support the numerical outputs presented in Table 20, lending further credibility to the estimated values and showing that all frequentist estimates of \(\vartheta\), \(\phi\), and \(\lambda\) existed and are unique. Accordingly, the corresponding MLE of \(\vartheta\), \(\phi\), or \(\lambda\) is employed as a starting point for the MCMC setup.

Likelihood contours of \(\vartheta\) (left), \(\phi\) (middle), and \(\lambda\) (right) from insulating fluid data.

In Bayesian analysis, demonstrating the convergence of MCMC chains is essential to ensure reliable posterior inference. After discarding \(\mathcal {A}\) an initial burn-in period, we retained 40,000 posterior samples from each chain for inferential analysis. Fig. 8 presents trace and density plots of \(\vartheta\), \(\phi\), \(\lambda\), \(R_{1}(t)\), and \(H_{1}(t)\). It states that the simulated MCMC chains mix well and reach convergence, and the burn-in size is adequate for eliminating transient behavior from the initial iterations.

Density (left) and Trace (right) of \(\vartheta\), \(\phi\), \(\lambda\), \(R_{1}(t)\), and \(H_{1}(t)\) from insulating fluid data.

In summary, the analysis of data from light-emitting diodes and insulating fluids confirms that the proposed Weibull lifetime model provides a practical and effective framework for engineering reliability studies. The results also emphasize the usefulness of the developed estimation procedures in real-world applications, especially under constant-stress partially accelerated life tests with an improved adaptive Type-II progressive censoring scheme, for estimating model parameters and key reliability measures.

Concluding remarks

This study addressed the challenge of acquiring meaningful failure data for highly reliable, long-lifespan products by integrating constant-stress partially accelerated life tests with an improved adaptive progressive Type-II censoring scheme. The proposed methodology balances the necessity for accelerated failure observations under stress conditions and the practical constraints of time and cost. Utilizing the Weibull distribution to model lifetimes, we estimated scale and shape parameters, the acceleration factor, the reliability function, and the hazard rate function under normal operating conditions. Classical maximum likelihood estimation provided point estimates and approximate confidence intervals, while the Bayesian framework, supported by Markov Chain Monte Carlo sampling, yielded posterior estimates and Bayesian credible intervals. Monte Carlo simulations demonstrated the superior accuracy and robustness of the Bayesian approach, particularly in scenarios characterized by small sample sizes or high censoring rates. The practicality of the proposed framework was validated through the analysis of two real-world accelerated lifetime datasets from the engineering field: one based on times to failure of 58 light-emitting diodes and the other representing the breakdown times of an insulating fluid subjected to high voltage, confirming the suitability of the Weibull model for reliability analysis. These case studies highlighted the industrial relevance of the method, offering a structured approach for engineers to evaluate product longevity without excessive testing durations. This work fills a critical gap in the reliability literature by merging the Weibull distribution with constant-stress partially accelerated life tests and an improved adaptive progressive Type-II censoring scheme, thereby enhancing life-testing efficiency for contemporary high-reliability products. Some possible limitations of the current study are: (1) we assume a Weibull lifetime model; if the real data do not follow Weibull, the estimates and confidence intervals may be inaccurate; and (2) the improved adaptive progressive Type-II censoring plan can struggle when only a few failures are observed, which can make the estimates unstable and the uncertainty large. It would be of interest to study Weibull reliability under the same adopted censoring plan with deep-learning–based approaches; see the work of Xu et al.42 for further background. Another avenue for future work is to extend the methodology to a competing risks framework and evaluate reliability estimation in that setting.

Data availability

Data is provided within the manuscript or supplementary information files

References

Watkins, A. J. & John, A. M. On constant stress accelerated life tests terminated by Type II censoring at one of the stress levels. J. Stat. Plann. Infer. 138(3), 768–786 (2008).

Fan, T. H. & Yu, C. H. Statistical inference on constant stress accelerated life tests under generalized gamma lifetime distributions. Qual. Reliab. Eng. Int. 29(5), 631–638 (2013).

Kumar, D., Nassar, M., Dey, S. & Alam, F. M. A. On estimation procedures of constant stress accelerated life test for generalized inverse Lindley distribution. Qual. Reliab. Eng. Int. 38(1), 211–228 (2022).

Wu, W., Wang, B. X., Chen, J., Miao, J. & Guan, Q. Interval estimation of the two-parameter exponential constant stress accelerated life test model under Type-II censoring. Qual. Technol. Quantit. Manag. 20(6), 751–762 (2023).

Elwahab, M. E. A., Alqasem, O. A. & Nassar, M. Analysis and applications of Nakagami constant-stress model using progressive type-II censored data. Phys. Scr. 100(3), 035206 (2025).

Tang, L. C., Sun, Y. S., Goh, T. N. & Ong, H. L. Analysis of step-stress accelerated-life-test data: A new approach. IEEE Trans. Reliab. 45(1), 69–74 (1996).

Lee, H. M., Wu, J. W. & Lei, C. L. Assessing the lifetime performance index of exponential products with step-stress accelerated life-testing data. IEEE Trans. Reliab. 62(1), 296–304 (2013).

Hakamipour, N. Comparison between constant-stress and step-stress accelerated life tests under a cost constraint for progressive type I censoring. Seq. Anal. 40(1), 17–31 (2021).

Bakouch, H. S., Moala, F. A., Alghamdi, S. & Albalawi, O. Bayesian methods for step-stress accelerated test under gamma distribution with a useful reparametrization and an industrial data application. Mathematics 12(17), 2747 (2024).

Cheng, Y. F. & Wang, F. K. Estimating the Burr XII parameters in constant-stress partially accelerated life tests under multiple censored data. Commun. Stat.-Simul. Comput. 41(9), 1711–1727 (2012).

Ismail, A. A. On designing constant-stress partially accelerated life tests under time-censoring. Strength Mater. 46, 132–139 (2014).

Nassar, M. & Alam, F. M. A. Analysis of modified Kies exponential distribution with constant stress partially accelerated life tests under type-II censoring. Mathematics 10(5), 819 (2022).

Nassr, S. G. & Elharoun, N. M. Inference for exponentiated Weibull distribution under constant stress partially accelerated life tests with multiple censored. Commun. Stat. Appl. Methods. 26(2), 131–148 (2019).

Nassar, M., Alotaibi, R. & Elshahhat, A. Reliability estimation of XLindley constant-stress partially accelerated life tests using progressively censored samples. Mathematics 11(6), 1331 (2023).

Fathi, A., Farghal, A. W. A. & Soliman, A. A. Inference on Weibull inverted exponential distribution under progressive first-failure censoring with constant-stress partially accelerated life test. Stat. Pap. 65(8), 5021–5053 (2024).

Yao, H. & Gui, W. Inference on exponentiated Rayleigh distribution with constant stress partially accelerated life tests under progressive type-II censoring. J. Appl. Stat. 52(2), 448–476 (2025).

Asgharzadeh, A. Point and interval estimation for a generalized logistic distribution under progressive type II censoring. Commun. Stat. -Theory Methods. 35(9), 1685–1702 (2006).

Rastogi, M. K. & Tripathi, Y. M. Estimating the parameters of a Burr distribution under progressive type II censoring. Stat. Methodol. 9(3), 381–391 (2012).

Wu, M. & Gui, W. Estimation and prediction for Nadarajah-Haghighi distribution under progressive type-II censoring. Symmetry 13(6), 999 (2021).

Abo-Kasem, O. E., El Saeed, A. R. & El Sayed, A. I. Optimal sampling and statistical inferences for Kumaraswamy distribution under progressive Type-II censoring schemes. Sci. Rep. 13(1), 12063 (2023).

Khalifa, E. H., Ramadan, D. A., Alqifari, H. N. & El-Desouky, B. S. Bayesian Inference for Inverse Power Exponentiated Pareto Distribution Using Progressive Type-II Censoring with Application to Flood-Level Data Analysis. Symmetry. 16(3), 309 (2024).

Ng, H. K. T., Kundu, D. & Chan, P. S. Statistical analysis of exponential lifetimes under an adaptive Type-II progressive censoring scheme. Nav. Res. Logist. 56(8), 687–698 (2009).

Kundu, D. & Joarder, A. Analysis of Type-II progressively hybrid censored data. Comput. Stat. Data Anal. 50(10), 2509–2528 (2006).

Dutta, S., Dey, S. & Kayal, S. Bayesian survival analysis of logistic exponential distribution for adaptive progressive Type-II censored data. Comput. Stat. 39(4), 2109–2155 (2024).

Lv, Q., Tian, Y. & Gui, W. Statistical inference for Gompertz distribution under adaptive type-II progressive hybrid censoring. J. Appl. Stat. 51(3), 451–480 (2024).

Sharma, H. & Kumar, P. On survival estimation of Lomax distribution under adaptive progressive type-II censoring. Stat. Trans. New Series 26(1), 51–67 (2025).

Kumari, A., Kumar, K. & Kumar, I. Bayesian and classical inference in Maxwell distribution under adaptive progressively Type-II censored data. Int. J. Syst. Assur. Eng. Manag. 15(3), 1015–1036 (2024).

Yan, W., Li, P. & Yu, Y. Statistical inference for the reliability of Burr-XII distribution under improved adaptive Type-II progressive censoring. Appl. Math. Model. 95, 38–52 (2021).

Dutta, S. & Kayal, S. Inference of a competing risks model with partially observed failure causes under improved adaptive type-II progressive censoring. Proceed. Instit. Mech. Eng., Part O: J. Risk Reliabil. 237(4), 765–780 (2023).

Dutta, S., Alqifari, H. N. & Almohaimeed, A. Bayesian and non-bayesian inference for logistic-exponential distribution using improved adaptive type-II progressively censored data. PLoS ONE 19(5), e0298638 (2024).

Nassar, M., Alotaibi, R. & Elshahhat, A. Reliability analysis at usual operating settings for Weibull Constant-stress model with improved adaptive Type-II progressively censored samples. AIMS Math. 9(7), 16931–16965 (2024).

Bhattacharya, P. & Bhattacharjee, R. A study on Weibull distribution for estimating the parameters. J. Appl Quantit Methods 5(2), 234–241 (2010).

Starling, J. K., Mastrangelo, C. & Choe, Y. Improving Weibull distribution estimation for generalized Type I censored data using modified SMOTE. Reliabil. Eng. Syst. Safety 211, 107505 (2021).

Hussain, I. et al. Comparative analysis of eight numerical methods using Weibull distribution to estimate wind power density for coastal areas in Pakistan. Energies 16(3), 1515 (2023).

Kazempoor, J., Habibirad, A., Nadi, A. A. & Borzadaran, G. R. M. Statistical inferences for the Weibull distribution under adaptive progressive type-II censoring plan and their application in wind speed data analysis. Stat., Optimiz. Inform. Comput. 11(4), 829–852 (2023).

Yu, J., Wang, Q. & Wu, C. Online monitoring of the Weibull distributed process based on progressive Type II censoring scheme. J. Comput. Appl. Math. 443, 115744 (2024).

Pareek, B., Kundu, D. & Kumar, S. On progressively censored competing risks data for Weibull distributions. Comput. Stat. Data Anal. 53(12), 4083–4094 (2009).

Henningsen, A. & Toomet, O. maxLik: A package for maximum likelihood estimation in R. Comput. Stat. 26(3), 443–458 (2011).

Plummer, M., Best, N., Cowles, K. & Vines, K. CODA: convergence diagnosis and output analysis for MCMC. R News 6(1), 7–11 (2006).

Nassar, M. & Elshahhat, A. Statistical analysis of inverse Weibull constant-stress partially accelerated life tests with adaptive progressively type I censored data. Mathematics 11, 370 (2023).

Nelson, W. B. Accelerated testing: Statistical model, test plan and data analysis (Wiley, New York, 2004).

Xu, A., Wang, R., Weng, X., Wu, Q. & Zhuang, L. Strategic integration of adaptive sampling and ensemble techniques in federated learning for aircraft engine remaining useful life prediction. Appl. Soft Comput. 175, 113067 (2025).

Acknowledgements

The authors would like to express their thanks to the editor and the six anonymous referees for helpful comments and observations. This research was funded by Princess Nourah bint Abdulrahman University Researchers Supporting Project number (PNURSP2025R50), Princess Nourah bint Abdulrahman University, Riyadh, Saudi Arabia.

Funding

This research was funded by Princess Nourah bint Abdulrahman University Researchers Supporting Project number (PNURSP2025R50), Princess Nourah bint Abdulrahman University, Riyadh, Saudi Arabia.

Author information

Authors and Affiliations

Contributions

Methodology, A. E., R. A., and M. N. ; Funding acquisition, R. A.; Software, A. E.; Supervision, R. A.; Writing–original draft, R. A. and M. N.; Writing–review and editing, A. E. and M. N.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary Information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Elshahhat, A., Alotaibi, R. & Nassar, M. Analysis of Weibull time metrics using normal operating via partially accelerated tests with improved adaptive progressive censoring and its applications. Sci Rep 15, 39548 (2025). https://doi.org/10.1038/s41598-025-23990-0

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-23990-0