Abstract

Accurate credit card fraud detection is vital for protecting financial systems and reducing economic losses. Graph neural networks (GNNs) have shown strong potential by capturing complex patterns in transaction networks. However, existing GNN-based approaches exhibit limitations in handling class imbalance, adapting to non-graph transaction data, and capturing the relative importance of features. Therefore, we propose HMOA-GNN, a novel framework for credit card fraud detection designed to handle tabular and highly imbalanced transaction data. First, the density-driven hierarchical hybrid sampling (DEHS) module balances the dataset by generating synthetic fraudulent transactions in dense regions and removing noise. Next, the metric-optimized latent space similarity graph construction (MOLS-GC) module applies metric learning to build graphs that satisfy the homophily assumption. Finally, the adversarially trained, feature-adaptive GraphSAGE-based model (AdaAdvSAGE) enhances feature aggregation through adversarial learning and adaptive feature selection. Experiments on multiple real-world datasets demonstrate the superior performance of our framework in credit card fraud detection.


Introduction

Credit card fraud, which involves the unauthorized use of credit or debit cards, including non-consensual transactions and technologically enabled card cloning1, causes direct financial losses for consumers as well as adverse consequences such as credit impairment, legal disputes, and privacy breaches, significantly disrupting their daily lives and financial stability. For financial institutions, the impact is equally severe: beyond economic losses, fraud causes reputational damage, reduced customer trust, and heightened compliance risks. In response to the growing frequency of fraud incidents, banks and payment platforms must often invest substantial resources in fraud investigations, customer compensation, and system upgrades, which drives up operational costs2. Credit card fraud has thus evolved from an individual-level threat into a systemic risk, making the development of efficient and accurate detection methods a pressing issue for financial security.

Traditional rule-based credit card fraud detection (CCFD) methods are often vulnerable to evasion, as fraudsters can imitate legitimate transaction behaviors to bypass detection systems3,4. To overcome this limitation, machine learning and deep learning approaches have been employed to uncover latent anomalous patterns within transaction processes, formulating fraud detection either as a binary classification problem or as an anomaly detection task5,6. However, most existing methods7 still analyze transactions in isolation based on static features, which limits their ability to capture sophisticated and evolving fraud strategies. In response, recent research has shifted toward modeling dependencies among transactions. Graph-based approaches8, particularly graph neural networks (GNNs), can capture both local and global interaction patterns, thereby enhancing the comprehensiveness and generalization of fraud detection systems.

Despite significant advancements in CCFD, several persistent challenges continue to limit the effectiveness and generalizability of existing approaches. One major issue lies in the extreme class imbalance inherent in transaction datasets, where fraudulent transactions represent only a minute fraction of the total data. This imbalance hinders the model’s ability to distinguish fraudulent behavior accurately9,10,11. Sampling methods can mitigate class imbalance by generating synthetic minority-class transactions or removing a portion of majority-class transactions. However, many existing methods adopt a single oversampling strategy and tend to overlook the underlying density and distributional characteristics of the data12,13,14, which may lead to the generation of noisy or redundant samples near class boundaries. In parallel, undersampling the majority class may remove informative samples, undermining the model’s ability to capture the overall data distribution15,16,17. Therefore, a hybrid sampling strategy that accounts for the distribution of transaction data is needed to address extreme class imbalance in transaction datasets.

In addition, although GNNs have shown remarkable success in modeling graph-structured data18,19,20, they are not directly applicable to conventional tabular (i.e., non-graph-structured) transaction data, which often lacks explicit graph structures21,22. Constructing an informative and task-aligned graph topology from non-graph-structured transactions is complicated by noise, heterogeneity, and potential violations of the homophily assumption23. The homophily assumption states that connected nodes in a graph are likely to share the same label. Moreover, most existing GNN models, especially those that employ GraphSAGE-style aggregation methods, adopt static feature selection and weighting mechanisms24,25, which fail to capture the varying importance of features across different transaction samples. This limitation reduces the model’s expressiveness in identifying complex and evolving fraud patterns. Therefore, constructing a transaction graph topology that adheres to the homophily assumption, uncovering latent behavioral patterns in non-graph-structured transactional data, and accounting for the varying importance of features across different transaction samples are essential for accurately identifying sophisticated fraudulent activities in real-world scenarios.

Therefore, in this paper, we propose a multi-strategy enhanced adaptive adversarial GNN framework, named HMOA-GNN, for CCFD. To mitigate potential transaction data quality degradation resulting from the reliance on a single sampling strategy, we propose a multi-stage hierarchical hybrid sampling strategy, augmented by a density-based sample generation mechanism. Here, the majority class refers to legitimate transactions, whereas the minority class refers to fraudulent transactions. By generating new samples in high-density regions of the minority class, this method enhances discriminative features of fraudulent samples while minimizing noise, leading to more accurate decision boundaries. Considering the limitations of traditional GNNs when applied to non-graph-structured transaction data, we draw inspiration from the homophily assumption23. Based on this idea, we design a metric-optimized latent space similarity graph construction method to build a transaction graph that conforms to the assumption. This graph mapping method effectively uncovers latent behavioral patterns and complex relationships in non-graph-structured transaction data, thereby extending the applicability of GNNs in fraud detection and establishing a well-founded graph-structural foundation for subsequent feature representation learning.

In addition, to overcome the inadequacy of feature aggregation mechanisms in existing GNN methods, particularly their inability to capture the relative importance of features across different transaction samples, we present an adversarially trained, feature-adaptive GraphSAGE-based model. This model employs an adversarial learning strategy to adaptively estimate the contribution of each transaction feature to the classification outcome and dynamically adjusts the feature weights, thereby improving feature utilization during aggregation. By integrating structural information from a graph constructed under the homophily assumption, the model captures complex dependencies among transaction features, while inter-layer residual connections mitigate over-smoothing, further improving its fraud detection capability.

The contributions of this work are as follows:

1. We propose HMOA-GNN, a novel framework for CCFD designed to address tabular and highly imbalanced transaction data. Specifically, it includes a Density-driven Hierarchical Hybrid Sampling (DEHS) module, a Metric-Optimized Latent Space Similarity Graph Construction (MOLS-GC) module, and an Adversarially trained, Feature-Adaptive GraphSAGE-based model (AdaAdvSAGE).

2. We propose the DEHS module, which employs a hybrid sampling strategy to hierarchically identify central and boundary samples, generate synthetic minority samples guided by local density distributions, and eliminate noisy or redundant data. This approach aims to construct a more balanced and informative training dataset, thereby alleviating the effects of extreme class imbalance.

3. To construct a transaction graph topology that aligns with the homophily assumption, we present the MOLS-GC method, which employs metric learning to refine transaction embeddings and constructs similarity graphs in the latent space, thus promoting the clustering of transactions belonging to the same class and enhancing the model’s structural representation.

4. Aiming to overcome the limitations of GraphSAGE in feature-level discrimination and overall robustness, we propose AdaAdvSAGE, which integrates adversarial training with an adaptive feature selection module and introduces inter-layer residual connections to alleviate over-smoothing in deep layers. This architecture produces more discriminative and robust node representations, thereby enhancing performance in fraud detection tasks.

The remainder of this paper is organized as follows. Section 2 introduces the research background and related work. Section 3 presents the preliminary knowledge. Section 4 describes the proposed method. Section 5 introduces the experimental design and results. Section 6 summarizes the paper.

Related works

We summarize the related work in two main areas: (1) strategies for addressing class imbalance in datasets; (2) the application of GNNs in CCFD.

Sampling methods for class imbalance

Class imbalance in transaction datasets remains a prevalent and challenging issue in CCFD tasks. Numerous sampling strategies have been proposed to mitigate it, with undersampling methods aiming to reduce the majority class while preserving the data distribution. Kumar et al.15 employed an entropy and neighborhood-based approach to eliminate low-entropy majority samples from overlapping regions, reducing redundancy. Sun et al.16 introduced a kernel-based method to remove majority samples from high-density minority areas, mitigating information loss. Zhu et al.26 employed clustering to identify and discard noisy samples based on hyperspheres around cluster centers.

Oversampling techniques increase minority samples by generating synthetic samples. Chawla et al.12 developed SMOTE, which interpolates between minority samples to balance the data. Ni et al.27 refined this with a spiral oversampling strategy to reduce overlap. Li et al.28 proposed a subspace-based method to maintain original distribution and prevent decision boundary shifts. Maldonado et al.13 introduced FW-SMOTE, using weighted Minkowski distance to address high-dimensional data.

Hybrid sampling methods combine undersampling and oversampling to leverage the strengths of both. Lin et al.29 studied the order in which the two are applied and its impact on imbalance. Guo et al.30 proposed a hybrid of Tomek links, BIRCH clustering, and B-SMOTE to improve intra-class and inter-class balance. Alamri and Ykhlef31 presented a dynamic hybrid method integrating Bagging, which adapts sampling ratios and targets hard-to-classify samples to enhance robustness.

However, existing hybrid sampling methods often suffer from limited adaptability, relying on fixed strategies that may not generalize well across datasets with varying class overlap or noise. They also risk discarding useful information or generating suboptimal samples due to insufficient consideration of data structure.

Graph neural network for credit card fraud detection

GNNs have achieved significant progress in financial fraud detection32. Inspired by Convolutional Neural Networks (CNNs), GNNs extend convolution operations to non-Euclidean graph-structured data by recursively aggregating features from neighbor nodes to update target node representations, enabling modeling of entities such as accounts and transactions33. Among them, GraphSAGE25 introduces an inductive message-passing mechanism in the spatial domain, enabling efficient representation learning for previously unseen nodes by sampling and aggregating their local neighbors. However, it treats the features of all neighbor nodes uniformly during aggregation, which constrains its capacity to capture complex dependencies among transaction features arising from diverse behavioral patterns. Li et al.34 proposed MG-HRL, a multi-view graph-based hierarchical representation learning method that models transaction networks as heterogeneous information networks with six meta-paths to mine correlations among users and employs heterogeneous hypergraph representation learning to capture high-order representations of transaction subgraphs, achieving superior performance in detecting organized money laundering groups. Yang et al.35 proposed a multiview fusion neural network (FMvPCI) that integrates multiview graph convolutional encoding with fuzzy clustering to unify protein embeddings and cluster memberships, thereby enhancing the accuracy of protein complex identification. Su et al.36 proposed an interpretable and generalizable transformer-based graph representation learning framework that integrates multi-omics data with both homogeneous and heterogeneous biological network topologies to achieve accurate cancer gene prediction across pan-cancer and cancer-specific scenarios. 
Yang et al.37 proposed a variational Bayesian learning–based link-driven attributed graph clustering method (LCAAG), which infers node cluster labels from link-level modeling and achieves superior accuracy and scalability. Liu et al.38 proposed PSAGNN, a novel model that employs phased optimization, biased perturbation, and weighted penalties to exploit interbank preferences and scale-free network properties, effectively countering feature and structural poisoning attacks for superior interbank credit rating prediction.

Despite their effectiveness in many tasks, GNNs face challenges in CCFD because tabular transaction data lack an inherent graph structure and are therefore incompatible with the input format required by GNNs. Qiao et al.39 provided a systematic review of major methods for graph construction and learning, ranging from general machine learning approaches to specific applications. However, their discussion of emerging deep learning-based GNN techniques remains insufficient. Carneiro and Zhao40 analyzed four graph construction methods based on K-nearest neighbors (KNN) and \(\epsilon\)-neighborhood (\(\epsilon\)NN) criteria. However, these graph construction approaches rely on Euclidean distance in the original high-dimensional space, where such a metric becomes ineffective at distinguishing sample proximity. Consequently, the resulting graph topology often fails to capture the intrinsic relationships within the data, hindering the construction of a transaction graph consistent with the homophily assumption.

Therefore, existing GNN-based approaches for CCFD still face limitations in capturing the complex dependencies among transaction features induced by diverse behavioral patterns. Moreover, these methods often overlook the challenges posed by high-dimensional, non-graph-structured transaction data, where traditional distance metrics become ineffective and the underlying intricate relational patterns remain insufficiently explored and modeled.

Preliminary

This section introduces definitions related to transaction data, graph structures, and message passing and aggregation, followed by the objective of the task.

Tabular transaction data

We use a dataset in which each record is a single cardholder transaction. Each record represents a distinct transaction event rather than a monetary transfer between two peer entities.

Formally, each transaction is represented as a tuple:

\[ \textbf{x} = \left( feature_1, feature_2, \dots , feature_n \right) \]

where each \(feature_i\) corresponds to an attribute such as:

- Amount: the monetary value of the purchase,
- Timestamp: the time at which the transaction occurred,
- (Optional): additional fields such as merchant category code, transaction location, device type, or card presence indicators.

A tabular transaction dataset is a collection of such records with no inherent graph or network structure. This representation allows the extraction of both static features (e.g., transaction amount, merchant category) and behavioral patterns (e.g., spending frequency, time-of-day preferences), and it serves as the foundation for downstream fraud detection tasks.

Graph-structured transaction data

We construct a graph-structured transaction dataset \(G = \left( V,E \right)\), where each node \(v_i \in V\) is associated with a feature vector \(\textbf{v}_i\), and the initial node embedding is defined as \(\textbf{h}_i^0 = \textbf{v}_i\). The embedding of node \(v_i\) at the k-th layer is denoted by \(\textbf{h}_i^k\). To enforce the homophily assumption, an undirected edge is established between two nodes if their corresponding transactions exhibit high similarity. Each transaction in the original dataset is represented as a node in the graph-structured dataset, which we refer to as a transaction node.

Message passing and aggregation

We adopt the message-passing framework from GNNs to learn representations over transaction graphs.

Let \(v_i \in V\) denote the i-th node in the graph. At each layer k, the embedding \(\textbf{h}_i^{k}\) of node \(v_i\) is updated through a two-step process: message aggregation from neighbor nodes, followed by an embedding update. The general form of the message-passing update is

where \(\mathscr {N}_{k_\textrm{NN}}(v_i)\) denotes the set of \({k_\textrm{NN}}\) nearest neighbors of node \(v_i\), \(\textrm{Agg}^{k}(\cdot )\) is a differentiable, permutation-invariant function (e.g., mean, LSTM, or pooling), \(W^{k}\) is a trainable weight matrix at layer k, \(\sigma\) is a non-linear activation function, such as ReLU.
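To make the two-step update concrete, the following is a minimal sketch of one GraphSAGE-style layer. It assumes a mean aggregator for \(\textrm{Agg}^{k}(\cdot)\), concatenation of a node's previous embedding with the aggregated neighbor message, and ReLU for \(\sigma\). These choices and the helper names (`sage_layer`, `mean_aggregate`) are illustrative assumptions, not the exact update used in HMOA-GNN; the sketch also assumes every node has at least one neighbor, as is the case in a KNN graph.

```python
from typing import Dict, List

Vector = List[float]
Matrix = List[List[float]]


def relu(v: Vector) -> Vector:
    """Element-wise ReLU, used here as the activation sigma."""
    return [max(0.0, t) for t in v]


def matvec(w: Matrix, v: Vector) -> Vector:
    """Multiply a weight matrix W^k (stored as a list of rows) by a vector."""
    return [sum(wij * vj for wij, vj in zip(row, v)) for row in w]


def mean_aggregate(vectors: List[Vector]) -> Vector:
    """Permutation-invariant mean aggregator over neighbor embeddings."""
    dim = len(vectors[0])
    return [sum(v[d] for v in vectors) / len(vectors) for d in range(dim)]


def sage_layer(h: Dict[int, Vector], neighbors: Dict[int, List[int]], w: Matrix) -> Dict[int, Vector]:
    """One layer: h_i^k = relu(W^k [h_i^{k-1} || mean of neighbor h_j^{k-1}])."""
    out = {}
    for i, h_i in h.items():
        agg = mean_aggregate([h[j] for j in neighbors[i]])  # step 1: message aggregation
        out[i] = relu(matvec(w, h_i + agg))  # step 2: list + list concatenates self and message
    return out
```

Because the mean is permutation-invariant, reordering `neighbors[i]` leaves the output unchanged, which is the property the definition of \(\textrm{Agg}^{k}(\cdot)\) requires.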

Aims

CCFD aims to identify whether a given transaction is fraudulent. This task is inherently a binary classification problem, where the goal is to learn a classifier

\[ f: \mathbb {R}^n \rightarrow [0, 1], \quad \textbf{x} \mapsto f(\textbf{x}) \]

where \(\textbf{x}\! \in \! \mathbb {R}^n\) represents the feature vector of a single transaction, \(y\! \in \! \{0, 1\}\) denotes the corresponding label, with \(y \!= \!1\) indicating a fraudulent transaction and \(y\! =\! 0\) indicating a legitimate transaction, and \(f(\textbf{x})\) denotes the model’s prediction of the likelihood that the transaction is fraudulent.

Methods

Framework of HMOA-GNN.

System model

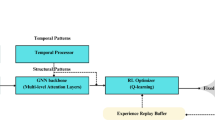

The architecture of our proposed HMOA-GNN framework, which is illustrated in Fig. 1, comprises four steps:

Step (a): Transaction dataset balancing. To address the inherent class imbalance in transaction datasets, we propose a Density-driven Hierarchical Hybrid Sampling strategy comprising two processing channels: an undersampling channel and an oversampling channel. The undersampling channel initially reduces the proportion of majority-class samples, and the resulting subset is employed to estimate the underlying density distributions of transaction samples. Guided by these density estimates, the oversampling channel synthesizes minority-class samples and applies a noise filtering process to ensure representativeness and diversity. This dual-channel hybrid sampling procedure yields a balanced transaction dataset, effectively mitigating bias introduced by skewed class distributions.

Step (b): Transaction graph construction. We develop a Metric-optimized Latent Space Similarity Graph Construction method based on a metric learning model implemented with an autoencoder architecture. The training objective integrates reconstruction loss and triplet loss to embed the balanced, tabular transaction data into a compact low-dimensional latent space. Subsequently, a KNN-based graph construction strategy is applied to the resulting pairwise distance matrix to generate edge connections between transaction records. This process transforms tabular transaction data into graph-structured representations that conform to the homophily assumption, thereby enabling downstream graph-based learning, while simultaneously mitigating the issue of distance metric degradation in high-dimensional spaces.
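The two ingredients of MOLS-GC named above, a triplet objective on latent embeddings and KNN edge construction from pairwise latent distances, can be sketched as follows. This is a minimal illustration under stated assumptions: the autoencoder and its reconstruction loss are omitted and the embeddings are taken as given, the hinge form of the triplet loss and the default margin are our assumptions, and KNN edges are symmetrized so that the result matches the undirected graph defined in the Preliminary section.

```python
import math
from typing import List, Set, Tuple

Vector = List[float]


def euclidean(a: Vector, b: Vector) -> float:
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def triplet_loss(anchor: Vector, positive: Vector, negative: Vector, margin: float = 1.0) -> float:
    """Hinge triplet loss: pull a same-class pair together, push a different-class pair apart."""
    return max(0.0, euclidean(anchor, positive) - euclidean(anchor, negative) + margin)


def knn_graph(z: List[Vector], k: int) -> Set[Tuple[int, int]]:
    """Undirected KNN graph over latent embeddings z; each edge is stored once as (i, j) with i < j."""
    edges = set()
    for i, zi in enumerate(z):
        # Rank all other nodes by latent-space distance and connect to the k closest.
        ranked = sorted((euclidean(zi, zj), j) for j, zj in enumerate(z) if j != i)
        for _, j in ranked[:k]:
            edges.add((min(i, j), max(i, j)))
    return edges
```

When the triplet term is already zero, the negative is at least `margin` farther from the anchor than the positive, so same-class transactions tend to fall within each other's KNN lists, which is the homophily property the graph is meant to have.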

Step (c): Transaction node representation learning. We propose AdaAdvSAGE, an adversarially trained and feature-adaptive GraphSAGE-based model designed for transaction node representation learning. In this model, the adaptive feature selection module assigns weights to features based on their discriminative relevance across diverse transaction nodes, resulting in weighted representations that preserve critical behavioral signals and support more effective modeling of fraudulent transaction patterns. To enhance the expressive power of graph representations, we design an adversarial training strategy that perturbs the weighted features in the direction of the loss gradient with respect to the weighted features to generate adversarial samples. These are then used alongside the original data during training, promoting the robustness of the model. To alleviate over-smoothing in deeper GNN layers, inter-layer residual connections are incorporated, facilitating the preservation of informative signals across layers. As a result, the model yields high-quality node embeddings that effectively capture aggregated multi-hop neighborhood information.
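The adversarial step described above can be illustrated with a fast-gradient-sign style perturbation. This sketch rests on several assumptions that are ours, not the paper's: a logistic scorer stands in for AdaAdvSAGE so that the input gradient of the binary cross-entropy has the closed form \((p - y)\textbf{w}\), the feature weights `a` are given rather than learned, and the perturbation uses the sign of the gradient with a hypothetical step size `eps`.

```python
import math
from typing import List

Vector = List[float]


def sigmoid(t: float) -> float:
    return 1.0 / (1.0 + math.exp(-t))


def weighted_features(x: Vector, a: Vector) -> Vector:
    """Apply hypothetical adaptive feature weights a element-wise to the raw features x."""
    return [ai * xi for ai, xi in zip(a, x)]


def input_gradient(u: Vector, y: int, w: Vector, b: float) -> Vector:
    """Gradient of BCE w.r.t. u for a stand-in logistic scorer p = sigmoid(w.u + b): (p - y) * w."""
    p = sigmoid(sum(wi * ui for wi, ui in zip(w, u)) + b)
    return [(p - y) * wi for wi in w]


def adversarial_example(u: Vector, y: int, w: Vector, b: float, eps: float) -> Vector:
    """FGSM-style step: move each weighted feature by eps along the sign of the loss gradient."""
    g = input_gradient(u, y, w, b)
    return [ui + eps * math.copysign(1.0, gi) if gi != 0 else ui for ui, gi in zip(u, g)]
```

Following the description above, the perturbed vector would be used alongside the clean weighted features `u` during training; a gradient-normalized step instead of the sign would be an equally plausible reading of "in the direction of the loss gradient".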

Step (d): Fraud detection. The learned node embedding representations are fed into a multi-layer perceptron (MLP) classifier, which is trained to infer the probability that a given transaction is fraudulent. This final classification stage operationalizes the preceding representation learning into actionable fraud detection decisions, facilitating timely and accurate identification of illicit activities.

Density-driven hierarchical hybrid sampling

Credit card transaction data are typically highly imbalanced. Conventional sampling methods often fail to distinguish informative samples from noisy ones and neglect the density structure of the minority class, leading to information loss or to overlapping synthetic samples in sparse regions. These issues degrade data representativeness and increase the risk of overfitting.

To address these issues, we propose a Density-driven Hierarchical Hybrid Sampling (DEHS) method, whose overall workflow is shown in Fig. 2. The method comprises three components, namely center selection and boundary refinement, density-driven selective sampling, and noisy sample filtering, which are organized into two distinct sampling channels. In the undersampling channel, we implement a center-selection and boundary-refinement strategy that reduces majority-class transaction samples through outlier filtering, clustering-based undersampling, and majority-class pruning, thereby preserving representative structural patterns. The resulting subset is then used to estimate the density distribution of minority-class transaction samples. In the oversampling channel, these density estimates guide the selective synthesis of additional minority-class samples in high-density regions, and a noise filtering mechanism regulates the synthesized fraudulent samples to minimize overlap while enhancing representativeness and diversity. This multi-stage, dual-channel hybrid sampling method yields a well-balanced transaction dataset, mitigating the bias and performance degradation caused by severe class imbalance.

Fig. 2: Process of the DEHS method.

Center selection and boundary refinement strategy

To mitigate the adverse effects of noise and redundancy in the transaction dataset, which can obscure class boundaries and degrade model performance, we adopt a multi-stage sample processing strategy. This strategy comprises outlier filtering, clustering-based undersampling, and majority-class pruning, aiming to preserve representative structures while improving the quality and balance of the training data.

Outlier filtering: First, we introduce a majority-class purification module based on Isolation Forest. Isolation Forest detects outliers effectively because anomalous points are isolated by fewer random splits than normal points, so removing them reduces noise and improves training data quality. The specific process is as follows:

The credit card transaction dataset D is divided by transaction type into the set of legitimate transactions \({{D}_{0}}\) and the set of fraudulent transactions \({{D}_{1}}\). Let \(x_{i}^\textrm{leg}\in {{D}_{0}}\) denote the i-th legitimate transaction. For each such transaction, the anomaly score \({{s}_{i}^\textrm{asc}}\) is computed as follows:

\[ s_{i}^{\textrm{asc}} = s\left( x_{i}^{\textrm{leg}}, n_0 \right) = 2^{-\frac{E\left[ \kappa \left( x_{i}^{\textrm{leg}} \right) \right]}{c(n_0)}} \]

where \(n_0\) is the number of legitimate transactions in \({{D}_{0}}\), and \(s\left( x_{i}^\textrm{leg},n_0 \right)\) is the anomaly score of \(x_{i}^\textrm{leg}\) in \({{D}_{0}}\), which takes values in \(\left( 0,1 \right]\). The function \(\kappa \left( x_{i}^\textrm{leg} \right)\) returns the path lengths of \(x_{i}^\textrm{leg}\) across all isolation trees, and \(E\!\left[ \kappa \!\left( x_{i}^{\textrm{leg}}\right) \right]\) is their average. The term \(c(n_0)\) is a normalization constant computed as follows:

\[ c(n_0) = 2H(n_0-1) - \frac{2(n_0-1)}{n_0} \]

where \(H(n_0-1)\) denotes the \((n_0-1)\)-th harmonic number, i.e., the sum of the reciprocals of the first \(n_0-1\) positive integers.

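In the context of Isolation Forest, \(c(n_0)\) is the average path length of an unsuccessful search in a binary search tree built from \(n_0\) points, and it is used to normalize \(E\!\left[ \kappa \!\left( x_{i}^{\textrm{leg}}\right) \right]\). For large \(n_0\), \(H(n_0-1)\) can be approximated by

\[ H(n_0-1) \approx \ln \left( n_0-1 \right) + \gamma \]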

where \(\gamma \approx 0.5772\) is the Euler–Mascheroni constant.

Then, based on the anomaly scores \({{s}_{i}^\textrm{asc}}\) computed using Eq. (4), the anomaly-score threshold \(\alpha\) is chosen so that exactly the k legitimate transactions with the highest scores exceed it (assuming no ties); these k transactions are treated as outliers.
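A minimal sketch of the scoring and top-k filtering described above follows. It assumes the average path length \(E[\kappa(x)]\) has already been obtained from a trained forest (tree construction is omitted), uses the approximation \(H(i) \approx \ln i + \gamma\) for \(n \ge 3\), and special-cases \(n = 2\), where the exact value \(c(2) = 2H(1) - 1 = 1\) is known. The function names are hypothetical.

```python
import math
from typing import List, Sequence

EULER_GAMMA = 0.5772156649015329  # Euler-Mascheroni constant


def harmonic_approx(i: int) -> float:
    """H(i) approx. ln(i) + gamma, accurate for large i."""
    return math.log(i) + EULER_GAMMA


def c_norm(n: int) -> float:
    """c(n) = 2 H(n-1) - 2 (n-1) / n: average unsuccessful-search path length in a BST of n points."""
    if n < 2:
        raise ValueError("c(n) requires at least two samples")
    if n == 2:
        return 1.0  # exact value 2*H(1) - 1; the log approximation is poor for tiny n
    return 2.0 * harmonic_approx(n - 1) - 2.0 * (n - 1) / n


def anomaly_score(mean_path_length: float, n: int) -> float:
    """s(x, n) = 2 ** (-E[kappa(x)] / c(n)); scores close to 1 indicate likely outliers."""
    return 2.0 ** (-mean_path_length / c_norm(n))


def remove_top_k_outliers(samples: Sequence, scores: Sequence[float], k: int) -> List:
    """Drop the k highest-scoring samples and keep the rest in their original order."""
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    outliers = set(ranked[:k]) if k > 0 else set()
    return [x for i, x in enumerate(samples) if i not in outliers]
```

A point whose average path length equals \(c(n)\) receives a score of exactly 0.5, and shorter paths push the score toward 1, which is why the highest-scoring legitimate transactions are the ones removed.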

Subsequently, transactions in \(D_{0}\) whose anomaly scores exceed the predefined threshold \(\alpha\) are identified as outliers and removed. This process yields the refined legitimate transaction dataset \(D_{0}^\textrm{OF}\), which can be expressed as

\[ D_{0}^{\textrm{OF}} = \left\{ x_{i}^{\textrm{leg}} \in D_{0} \mid s_{i}^{\textrm{asc}} \le \alpha \right\} \]

Clustering-based undersampling: To facilitate the selection of representative samples, we take as input the dataset \(D_{0}^{\textrm{OF}} = \{x_{1}, x_{2}, \dots , x_{n_0^\textrm{OF}}\}\), obtained from the preceding outlier filtering stage. Each \(x_{i} \in \mathbb {R}^{d}\) represents the d-dimensional feature vector of the i-th majority-class transaction. This dataset then serves as the basis for the subsequent clustering step.

We then apply K-means clustering to \(D_{0}^{\textrm{OF}}\) to group similar majority-class transactions. The algorithm partitions the input samples into k clusters \(\{C_{1}, C_{2}, \dots , C_{k}\}\) by iteratively minimizing the within-cluster sum of squared Euclidean distances \(\mathscr {J}\):

\[ \mathscr {J} = \sum _{i=1}^{n_0^{\textrm{OF}}} \sum _{j=1}^{k} r_{ij} \left\| x_{i} - \varvec{\mu }_{j} \right\| ^{2} \]

where \(r_{ij} \in \{0,1\}\) is the cluster-assignment indicator, such that \(r_{ij} = 1\) if and only if \(x_{i} \in C_{j}\), and \(\varvec{\mu }_{j} \in \mathbb {R}^{d}\) denotes the centroid of cluster \(C_{j}\).

After convergence, the representative sample \(x_j^*\) in each cluster is defined as:

Then, the set of all selected representatives forms the cluster-based representative set

\[ D_{\textrm{clu}} = \left\{ x_{1}^{*}, x_{2}^{*}, \dots , x_{k}^{*} \right\} \]

This clustering-based undersampling strategy removes most redundant samples while maintaining structural diversity and representativeness, thereby contributing to a more balanced dataset for subsequent processing.
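The clustering-based undersampling step can be sketched with Lloyd's algorithm, which alternates the assignment of \(r_{ij}\) and the centroid update to decrease \(\mathscr {J}\). Because the exact definition of \(x_j^*\) is not reproduced in this section, the sketch assumes, hypothetically, that each representative is the real sample closest to its cluster centroid; the initialization (k points sampled with a fixed seed) and the function names are likewise assumptions.

```python
import random
from typing import List, Tuple

Vector = List[float]


def sq_dist(a: Vector, b: Vector) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(x: List[Vector], k: int, iters: int = 100, seed: int = 0) -> Tuple[List[List[int]], List[Vector]]:
    """Lloyd's algorithm for J = sum_i sum_j r_ij ||x_i - mu_j||^2; returns member indices and centroids."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(x, k)]
    dim = len(x[0])
    clusters: List[List[int]] = []
    for _ in range(iters):
        # Assignment step: r_ij = 1 for the nearest centroid.
        clusters = [[] for _ in range(k)]
        for i, p in enumerate(x):
            clusters[min(range(k), key=lambda c: sq_dist(p, centroids[c]))].append(i)
        # Update step: each centroid moves to the mean of its members.
        new_centroids = []
        for j, members in enumerate(clusters):
            if members:
                new_centroids.append([sum(x[i][d] for i in members) / len(members) for d in range(dim)])
            else:
                new_centroids.append(centroids[j])  # keep an empty cluster's centroid unchanged
        if new_centroids == centroids:
            break
        centroids = new_centroids
    return clusters, centroids


def representatives(x: List[Vector], k: int, seed: int = 0) -> List[Vector]:
    """Hypothetical x_j^*: the actual sample nearest to each non-empty cluster centroid."""
    clusters, centroids = kmeans(x, k, seed=seed)
    return [x[min(m, key=lambda i: sq_dist(x[i], centroids[j]))] for j, m in enumerate(clusters) if m]
```

Choosing a real sample instead of the centroid itself keeps every retained transaction genuine, which matters when the reduced set feeds later stages such as the KNN classifier in majority-class pruning.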

Majority-class pruning: Next, to further eliminate redundant transaction samples, we adopt a one-sided selection (OSS) strategy to streamline the majority-class samples. OSS retains majority-class samples that lie close to the minority class, thereby preserving the decision boundary while reducing the noise introduced by redundant majority samples. The OSS strategy proceeds as follows:

(1) Train an initial KNN classifier: A KNN classifier is trained on all transactions and predicts sample labels based on Euclidean distance. For each sample \({{x}_{i}}\), the KNN classifier finds the k closest samples in the training set and determines the class of \({{x}_{i}}\) by majority vote.

(2) Select informative majority samples: For every majority-class sample \({{x}_i^\textrm{leg}}\!\in \!D_{\textrm{clu}}\), we check whether the KNN classifier misclassifies it as a minority sample. A majority sample misclassified as minority is likely close to minority samples and is therefore considered critical for classification. These misclassified samples form the set of boundary majority transactions \({{S}_\textrm{bd}}\), defined as

\[ S_{\textrm{bd}} = \left\{ x_{i}^{\textrm{leg}} \in D_{\textrm{clu}} \mid \operatorname {KNN}\left( x_{i}^{\textrm{leg}} \right) = \text {fraudulent} \right\} \]

where \(\operatorname {KNN}({{x}_i^\textrm{leg}})\) denotes the class label assigned to the legitimate transaction sample \({{x}_i^\textrm{leg}}\) by a KNN classifier, which assigns the label corresponding to the most frequent class among the k closest samples in the feature space. In this context, \(\text {fraudulent}\) denotes the minority class label in the dataset.

(3) Remove redundant majority samples: Majority-class samples that the KNN classifier does not misclassify (i.e., \(x\in D_{\textrm{clu}}\backslash {{S}_\textrm{bd}}\)) typically lie far from the decision boundary and are considered redundant, so they are removed to perform undersampling. Thus, the resulting set of majority transactions \({{D}_{\textrm{maj}}}\) is

\[ D_{\textrm{maj}} = D_{\textrm{clu}} \backslash \left( D_{\textrm{clu}} \backslash S_{\textrm{bd}} \right) = S_{\textrm{bd}} \]
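The three OSS steps can be sketched as a small KNN filter. This is an illustrative sketch under assumptions not stated in the text: the classifier is trained on \(D_{\textrm{clu}} \cup D_1\), each query sample is excluded from its own neighbor set (a leave-one-out choice), the integer labels are hypothetical, and k should be odd so that the two-class vote cannot tie.

```python
import math
from collections import Counter
from typing import List

Vector = List[float]
LEGIT, FRAUD = 0, 1  # hypothetical integer labels for the two classes


def knn_predict(query_idx: int, data: List[Vector], labels: List[int], k: int) -> int:
    """Majority vote among the k nearest samples by Euclidean distance, excluding the query itself."""
    q = data[query_idx]
    ranked = sorted((math.dist(q, p), i) for i, p in enumerate(data) if i != query_idx)
    votes = Counter(labels[i] for _, i in ranked[:k])
    return votes.most_common(1)[0][0]


def one_sided_selection(d_clu: List[Vector], d_1: List[Vector], k: int = 3) -> List[Vector]:
    """Return S_bd: the majority samples in d_clu that the KNN classifier labels as fraudulent."""
    data = d_clu + d_1  # the classifier sees all transactions, majority samples first
    labels = [LEGIT] * len(d_clu) + [FRAUD] * len(d_1)
    return [d_clu[i] for i in range(len(d_clu)) if knn_predict(i, data, labels, k) == FRAUD]
```

Every majority sample that the vote assigns to the majority class is dropped, so the returned list is exactly what remains after step (3).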

Density-driven selective sampling

In highly imbalanced credit card transaction datasets, the minority-class transactions in the set \(D_{1}\) are both scarce and unevenly distributed. Existing oversampling methods12,13,14 often fail to effectively exploit samples located near the decision boundary between the majority and minority classes, and their performance is further degraded by the high degree of overlap between synthetic minority samples and majority-class samples.

To overcome these limitations, we propose a density-driven selective sampling method. This method builds upon the sample density computed within \(D_{1}\), which quantifies the concentration of neighboring samples having the same class label in the feature space. A higher density value indicates that a sample is surrounded by many same-class neighbors, whereas a lower density reflects a sparser local distribution. Leveraging this density information, our method selects minority-class samples from high-density regions within \(D_{1}\) with a higher probability as base points for generating new synthetic fraudulent transaction samples.

Formally, for a sample \(x_i \in D_{1}\), its sample density \(\rho _i\) is defined as:

where k denotes the number of neighbor points considered in the sample density calculation. The function \(\textrm{dist}(x_i, x_j)\) denotes the Euclidean distance between two transaction samples \(x_i\) and \(x_j\), and is defined as

\[ \textrm{dist}(x_i, x_j) = \sqrt{\sum _{k=1}^{d} \left( x_{i,k} - x_{j,k} \right) ^{2}} \]

where d is the dimensionality of the feature vectors, and \(x_{i,k}\) and \(x_{j,k}\) denote the k-th coordinates of \(x_i\) and \(x_j\), respectively. In this calculation, \(x_i\) is the fraudulent transaction under analysis, and \(x_j\) is its j-th nearest fraudulent neighbor in \(D_{1}\), with \(x_i \ne x_j\).

Additionally, we define the density weight as a normalized measure that determines the probability of selecting a given minority-class sample for the generation of new synthetic samples. The density weight \(\tilde{w}_i\) of the i-th sample is defined as follows:

\[ \tilde{w}_i = \frac{\rho _i}{\sum _{j=1}^{N_{1}} \rho _j} \]

where \(N_{1}\) denotes the number of minority-class samples in \(D_{1}\) and \(\rho _i\) is the density value of the i-th sample. A higher density value yields a larger density weight, thereby increasing the likelihood that the corresponding sample will be selected during the new sample generation process.

To effectively steer the oversampling process toward denser regions within the minority class, a density-based weighting mechanism is incorporated into the sample selection strategy. Specifically, each sample \(x_i\) from the minority class is selected according to a probability distribution \(\mathscr {P}(x_i)\), which is proportional to its normalized density weight \(\tilde{w}_i\). The corresponding synthetic fraudulent sample \(x_{\textrm{new}}^{\textrm{frd}}\) is subsequently generated as follows:

where \(x_{\textrm{new}}^{\textrm{frd}}\) denotes a newly synthesized fraudulent transaction sample and \(x_{j}\) is a fraudulent neighbor of \(x_{i}\); the difference vector \(x_{j} - x_{i}\) spans the line segment between \(x_{i}\) and \(x_{j}\) in the original feature space. New samples are placed at random positions along this segment, and higher-density minority-class samples have a greater chance of being selected as \(x_i\).

The density-based selective sampling method generates new transaction samples by preferentially selecting minority-class transaction samples located in high-density regions. This sampling strategy reduces the redundancy typically introduced by conventional oversampling approaches.
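The selection-and-interpolation step can be sketched in NumPy as follows. Because the density formula itself is not reproduced in this section, the sketch assumes that \(\rho_i\) is the inverse of the mean Euclidean distance from \(x_i\) to its k nearest fraudulent neighbors and that the weights are normalized to sum to one. Drawing \(x_j\) uniformly from those k neighbors and using a uniform interpolation factor follows the SMOTE convention and is also an assumption; the function names are hypothetical.

```python
import numpy as np

def knn_distances(X, k):
    """Euclidean distances (Eq. (14)) from each row of X to its k nearest other rows."""
    diff = X[:, None, :] - X[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))
    np.fill_diagonal(dist, np.inf)                # enforce x_i != x_j
    idx = np.argsort(dist, axis=1)[:, :k]         # indices of the k nearest neighbours
    return np.take_along_axis(dist, idx, axis=1), idx

def density_weights(X_frd, k=5):
    """ASSUMPTION: rho_i = 1 / mean distance to the k nearest fraudulent neighbours."""
    d, _ = knn_distances(X_frd, k)
    rho = 1.0 / (d.mean(axis=1) + 1e-12)
    return rho / rho.sum()                        # normalised weights summing to 1

def density_oversample(X_frd, n_new, k=5, seed=0):
    """Draw base points x_i with probability w_i, then interpolate towards a neighbour x_j."""
    rng = np.random.default_rng(seed)
    w = density_weights(X_frd, k)
    _, nbr = knn_distances(X_frd, k)
    base = rng.choice(len(X_frd), size=n_new, p=w)          # dense points are picked more often
    partner = nbr[base, rng.integers(0, k, size=n_new)]     # one of the k neighbours of each x_i
    alpha = rng.random((n_new, 1))                          # uniform position on the segment
    return X_frd[base] + alpha * (X_frd[partner] - X_frd[base])
```

Each synthetic point is a convex combination of two real fraudulent samples, so it always lies inside the bounding box of \(D_{1}\); the density weighting only changes how often each base point is used.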

Noisy sample filtering

After generating new synthetic fraudulent transaction samples, we apply a noise filtering mechanism to ensure that these synthetic samples contribute effectively to the subsequent learning of fraudulent patterns without introducing additional noise. In this context, noise refers to synthetic samples that deviate significantly from the distribution of genuine fraudulent data and that may degrade the performance of the classifier if incorporated into training. The neighborhood \(\mathscr {N}_{k}\!\left( x_{\textrm{new}}^{\textrm{frd}}\right)\), comprising the \({k}\) nearest transactions of the synthetic sample \(x_{\textrm{new}}^{\textrm{frd}}\) based on the Euclidean distance in Eq. (14), is defined as:

where \(x_{\textrm{new}}^{\textrm{frd}}\) denotes a synthetic fraudulent sample, \(\mathscr {N}_{k}\!\left( x_{\textrm{new}}^{\textrm{frd}}\right)\) is the set of its k nearest transactions, and \(x_{k}\) is the k-th nearest transaction sample.

To quantify the proportion of fraudulent transactions within the neighborhood \(\mathscr {N}_{k}(x_{\textrm{new}}^{\textrm{frd}})\), we introduce the indicator function \(\textbf{I}_{\text {frd}}(\cdot )\), which returns 1 if a transaction is fraudulent (class \(=1\)) and 0 otherwise. The formal definition of this indicator function is as follows:

Based on this definition, the number of fraudulent transactions among the k nearest neighbors of \(x_{\textrm{new}}^{\textrm{frd}}\) is given by

where \(x_{i}\) denotes the i-th nearest transaction sample.

If a synthetic sample \(x_{\textrm{new}}^{\textrm{frd}}\) is surrounded by many minority-class points, it is considered valid and retained; otherwise, it is treated as noise and discarded. To formalize this criterion, we specify a neighbor-count threshold \(\theta _{\textrm{nbrs}}\) that quantifies the minimum number of minority neighbors required for retention. The filtering rule is given by

where \(D_{\textrm{min}}\) initially comprises the original minority-class transaction dataset \(D_{1}\).

The oversampling and filtering procedure is iteratively applied until \(D_{\textrm{min}}\) is balanced with respect to the majority class, containing an adequate number of synthetic fraudulent transaction samples for subsequent model training.
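The filtering rule and the iterative balancing loop can be sketched as below. This is a minimal illustration under stated assumptions: the neighborhood of a candidate is searched in the union of \(D_{\textrm{maj}}\) and the current \(D_{\textrm{min}}\), a candidate is retained when its fraudulent-neighbor count is at least \(\theta_{\textrm{nbrs}}\), and `generate` is a hypothetical callback standing in for the density-driven oversampler. The values of `k`, `theta`, and `max_rounds` are illustrative.

```python
import numpy as np

def fraud_neighbour_count(x_new, X_ref, y_ref, k):
    """Number of fraudulent transactions (label 1) among the k nearest neighbours of x_new."""
    dist = np.sqrt(((X_ref - x_new) ** 2).sum(axis=1))    # Euclidean distance, Eq. (14)
    nearest = np.argsort(dist)[:k]                         # the neighbourhood N_k(x_new)
    return int((y_ref[nearest] == 1).sum())                # sum of indicator values

def filter_and_balance(X_maj, X_frd, generate, k=5, theta=3, max_rounds=100):
    """Repeat oversampling + noise filtering until D_min is as large as D_maj.

    `generate(X_frd, n)` is a hypothetical callback returning n candidate samples.
    """
    X_min = X_frd.copy()                                   # D_min starts as D_1
    for _ in range(max_rounds):
        deficit = len(X_maj) - len(X_min)
        if deficit <= 0:
            break
        X_ref = np.vstack([X_maj, X_min])                  # ASSUMPTION: reference set
        y_ref = np.r_[np.zeros(len(X_maj)), np.ones(len(X_min))]
        candidates = generate(X_frd, deficit)
        kept = [x for x in candidates
                if fraud_neighbour_count(x, X_ref, y_ref, k) >= theta]
        if kept:
            X_min = np.vstack([X_min, np.array(kept)])
    return X_min
```

Requesting exactly `deficit` candidates per round means \(D_{\textrm{min}}\) can never overshoot \(D_{\textrm{maj}}\); candidates that land among legitimate transactions are discarded and regenerated in the next round.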

Therefore, by employing our proposed density-driven hierarchical hybrid sampling method, we obtain the balanced dataset \(D_{\textrm{bal}}\), which is formally defined as follows:

where the reduced majority-class dataset \(D_{\textrm{maj}}\) and the minority-class dataset \(D_{\textrm{min}}\), which contains the original fraudulent samples in \(D_{1}\) together with the retained synthetic samples, are merged to obtain a more balanced and representative dataset \(D_{\textrm{bal}}\) that is used for subsequent model training and evaluation.

The pseudo-code of the DEHS is presented in Algorithm 1. As illustrated in Algorithm 1, in the undersampling channel, the anomaly score of each transaction sample in set \(D_{0}\) is first computed. Subsequently, the center selection and boundary refinement strategy comprising outlier filtering, clustering-based undersampling, and majority-class pruning is applied to eliminate redundant majority-class samples, yielding a representative set of legitimate transactions \(D_{\textrm{maj}}\). In the oversampling channel, the sample density of each transaction in set \(D_{1}\) is computed, and corresponding density weights are calculated. Based on these weights, a sufficient number of synthetic minority-class samples are generated via a density-driven selective sampling strategy. After removing noisy samples, the refined synthetic set is combined with \(D_{1}\) to form the fraudulent transaction sample set \(D_{\textrm{min}}\). Finally, \(D_{\textrm{maj}}\) and \(D_{\textrm{min}}\) are merged to construct a balanced credit card transaction dataset \(D_{\textrm{bal}}\).

Algorithm 1. DEHS.

Metric-optimized latent space similarity graph construction

GNNs have emerged as a powerful paradigm for modeling complex relational structures and perform well across a variety of relational learning tasks. Their strength lies in leveraging the graph topology to capture high-order dependencies and to propagate information across connected nodes. In CCFD, however, transaction records are typically stored as independent tabular entries without an explicit graph structure. This lack of inherent inter-transaction connectivity prevents the direct application of GNNs and makes latent behavioral patterns and relational dependencies considerably harder to uncover. Moreover, because transaction features are high-dimensional, distance metrics lose their discriminative power (the curse of dimensionality), which hinders effective representation learning and makes it difficult to construct graph structures that conform to the homophily assumption.

To address the above challenges, we propose a Metric-Optimized Latent Space Similarity Graph Construction (MOLS-GC) method. As illustrated in Fig. 3, we first train an autoencoder and adopt its encoder as a parameterized latent mapping function \(f_\phi\), which projects the original transaction features into a latent space where intra-class distances are minimized and inter-class separations are maximized, thereby yielding semantically coherent neighborhood structures in which fraudulent and legitimate transactions are distinctly segregated. In this optimized space, we compute the pairwise distances among the embedded transaction samples to obtain a distance matrix, upon which a KNN-based graph construction strategy is applied to connect samples exhibiting high mutual similarity. This process yields a transaction graph structure that promotes structural homogeneity and inherently conforms to the homophily assumption.

Fig. 3. Process of the MOLS-GC method.

Latent space optimization via metric learning

To effectively prepare the data for graph construction, it is necessary to address metric degradation and the curse of dimensionality by performing embedding optimization, thereby shaping a latent space in which similarity relationships are both semantically meaningful and structurally coherent. To this end, we adopt a metric learning approach, which enables the model to explicitly learn a task-specific similarity structure by embedding transaction samples from the same class in close proximity while pushing apart those from different classes. This enhances class separability and produces embedding neighborhoods that are well suited for subsequent graph construction, particularly under the homophily assumption commonly leveraged in GNNs.

We define a parameterized latent mapping function \(f_\phi \!:\! \mathbb {R}^d \!\rightarrow \! \mathbb {R}^m, m \ll d\), which maps an input transaction feature vector \(x_i \in \mathbb {R}^d\) to a latent representation \(\textbf{v}_i \!=\! f_\phi (x_i) \in \mathbb {R}^m\). In this study, \(f_\phi (\cdot )\) is implemented as the encoder component of an autoencoder architecture, with the corresponding decoder \(g_\psi (\cdot )\) responsible for reconstructing the input in the original feature space. The reconstructed version of \(x_i\), denoted as \(\hat{x}_i\), is obtained as follows:

$$\begin{aligned} \hat{x}_i = g_\psi \left( f_\phi (x_i) \right) = g_\psi (\textbf{v}_i) \end{aligned}$$(23)
To promote both information preservation and discriminative capability in the learned representations, we adopt a composite training objective that integrates reconstruction loss and triplet loss. This joint optimization strategy enables the model to learn embeddings that are not only semantically meaningful but also exhibit enhanced class separability, leading to the construction of more informative neighborhood structures that support effective graph learning tailored to downstream tasks. We now detail the formulation and role of each component in our method.

- Reconstruction Loss: The reconstruction loss \(\mathscr {L}_{\textrm{rec}}\) measures the fidelity of the reconstruction relative to the original input, ensuring that the latent representations preserve the intrinsic structural properties of the transaction data. Formally, it is defined as the mean squared error (MSE)

$$\begin{aligned} \mathscr {L}_{\textrm{rec}} = \frac{1}{n} \sum _{i=1}^{n} \left\| x_i - \hat{x}_i \right\| _2^2 \end{aligned}$$(24)where \(x_i \in D_{\textrm{bal}}\) denotes the i-th input sample from the balanced dataset, \(\hat{x}_i\) is its reconstruction, and n is the total number of samples in the dataset. Minimizing \(\mathscr {L}_{\textrm{rec}}\) constrains \(f_\phi\) to preserve essential information while compressing the data into the latent space.

- Triplet Loss: The triplet loss \(\mathscr {L}_{\textrm{tri}}\) imposes a metric structure on the embedding space by minimizing the distance between samples of the same class while maximizing the distance from those of different classes. For each batch, one sample is selected as the anchor \(\textbf{v}_\textrm{a}\), samples from the same class are treated as positives \(\textbf{v}_\textrm{p}\), and samples from different classes are treated as negatives \(\textbf{v}_\textrm{n}\). The loss is given by:

$$\begin{aligned} \mathscr {L}_{\textrm{tri}} = \max \left( 0, \textrm{dist}_\textrm{cos}(\textbf{v}_\textrm{a}, \textbf{v}_\textrm{p}) - \textrm{dist}_\textrm{cos} (\textbf{v}_\textrm{a}, \textbf{v}_\textrm{n}) + \delta \right) \end{aligned}$$(25)where \(\textrm{dist}_\textrm{cos}(\cdot , \cdot )\) denotes the cosine distance metric as defined in Eq. (30), and \(\delta > 0\) is the margin. This formulation enforces that the distance between \(\textbf{v}_\textrm{a}\) and \(\textbf{v}_\textrm{p}\) is at least \(\delta\) smaller than the distance between \(\textbf{v}_\textrm{a}\) and \(\textbf{v}_\textrm{n}\), thereby reducing intra-class variance and enhancing inter-class separation.

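The final objective function combines the reconstruction and triplet losses in a weighted sum:

$$\begin{aligned} \mathscr {L} = \lambda _{\textrm{rec}} \mathscr {L}_{\textrm{rec}} + \lambda _{\textrm{tri}} \mathscr {L}_{\textrm{tri}} \end{aligned}$$(26)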
where \(\lambda _{\textrm{rec}}, \lambda _{\textrm{tri}} > 0\) are hyperparameters controlling the contribution of each loss term. This joint optimization ensures that \(f_\phi\) learns a structurally coherent and discriminative latent representation space, which facilitates the subsequent construction of transaction graphs that satisfy the homophily assumption.
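The composite objective of Eqs. (24) to (26) can be evaluated as in the NumPy sketch below. It only computes the loss value for given inputs, reconstructions, and embeddings; actually training \(f_\phi\) and \(g_\psi\) would require an automatic-differentiation framework. Averaging the triplet term over a batch of triplets, the margin value, and the default \(\lambda\) weights are illustrative assumptions, and the cosine distance is taken as \(1 - \cos\) in line with Eq. (30).

```python
import numpy as np

def cosine_distance(a, b):
    """dist_cos(a, b) = 1 - cos(a, b), computed row-wise."""
    num = (a * b).sum(axis=-1)
    den = np.linalg.norm(a, axis=-1) * np.linalg.norm(b, axis=-1) + 1e-12
    return 1.0 - num / den

def reconstruction_loss(X, X_hat):
    """Eq. (24): mean over samples of the squared L2 reconstruction error."""
    return float(((X - X_hat) ** 2).sum(axis=1).mean())

def triplet_loss(v_a, v_p, v_n, margin=0.2):
    """Eq. (25) with cosine distance, averaged over a batch of triplets."""
    hinge = cosine_distance(v_a, v_p) - cosine_distance(v_a, v_n) + margin
    return float(np.maximum(0.0, hinge).mean())

def total_loss(X, X_hat, v_a, v_p, v_n, lam_rec=1.0, lam_tri=1.0, margin=0.2):
    """Eq. (26): weighted sum of the reconstruction and triplet terms."""
    return lam_rec * reconstruction_loss(X, X_hat) + lam_tri * triplet_loss(v_a, v_p, v_n, margin)
```

A triplet whose positive is already closer to the anchor than the negative by more than the margin contributes zero, so the gradient signal concentrates on triplets that still violate the desired class separation.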

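By applying the trained encoder \(f_\phi\) to all samples in \(D_{\textrm{bal}}\), we obtain the complete set of optimized low-dimensional transaction embeddings:

$$\begin{aligned} \textbf{V} = \left\{ \textbf{v}_i = f_\phi (x_i) \mid x_i \in D_{\textrm{bal}},\ i = 1, \dots , n \right\} \end{aligned}$$(27)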
where \(\textbf{v}_i\) denotes the m-dimensional vector representation of the i-th transaction in the metric-optimized latent space.

We then define the node set \(V = \{ v_1, v_2, \dots , v_n \}\) such that there exists a bijective correspondence between \(V\) and the node embedding set \(\textbf{V} = \{ \textbf{v}_1, \textbf{v}_2, \dots , \textbf{v}_n \} \subset \mathbb {R}^m\). Each embedding \(\textbf{v}_i \in \textbf{V}\) serves as the attribute representation of the corresponding node \(v_i \in V\). The formal definition is given as follows:

KNN-based graph construction

In GNNs, the homophily assumption refers to the principle that similar nodes are more likely to be connected. This assumption is pivotal for the effective application of GNNs in CCFD. To construct a graph structure that complies with this assumption, it is essential to ensure that the edges in the graph accurately reflect semantic similarity among nodes in the embedding space. The KNN method offers a natural and efficient solution, as it locally selects the most representative neighbors based on similarity in the latent space, thereby reinforcing the homophilic structure of the graph.

Upon completion of the autoencoder training phase, the encoder \(\smash {f_{\phi }}\) generates low-dimensional latent representations \(\{\textbf{v}_i\}_{i=1}^n\) for all n samples, where \(\textbf{v}_i \in \mathbb {R}^m\) denotes the m-dimensional embedding of the i-th sample. These embeddings are subsequently utilized for similarity-based neighborhood analysis in the latent space.

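To enforce the homophily property in the constructed graph, we adopt cosine similarity as the primary similarity measure, defined as

$$\begin{aligned} \textrm{sim}_\textrm{cos}(\textbf{v}_i, \textbf{v}_j) = \frac{\textbf{v}_i \cdot \textbf{v}_j}{\Vert \textbf{v}_i \Vert _2 \, \Vert \textbf{v}_j \Vert _2} \end{aligned}$$(29)
where \(\textbf{v}_i \cdot \textbf{v}_j\) denotes the inner product between \(\textbf{v}_i\) and \(\textbf{v}_j\), and \(\Vert \cdot \Vert _2\) represents the Euclidean norm. For computational convenience, cosine similarity is transformed into a distance metric, which is formally defined as

$$\begin{aligned} \textrm{dist}_\textrm{cos}(\textbf{v}_i, \textbf{v}_j) = 1 - \textrm{sim}_\textrm{cos}(\textbf{v}_i, \textbf{v}_j) \end{aligned}$$(30)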
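yielding a symmetric distance matrix \(\textbf{D} = [\textbf{D}_{ij}] \in \mathbb {R}^{n \times n}\), where each entry is given by

$$\begin{aligned} \textbf{D}_{ij} = \textrm{dist}_\textrm{cos}(\textbf{v}_i, \textbf{v}_j), \quad i, j \in \{1, \dots , n\} \end{aligned}$$(31)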
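Based on the distance matrix \(\textbf{D}\), the k nearest neighbors of node \(v_i\), denoted by \(\mathscr {N}_{k_\textrm{NN}}(v_i)\), are defined as the set of \({k_\textrm{NN}}\) distinct nodes (excluding \(v_i\) itself) that have the smallest distances to \(v_i\). Formally, this is given by:

$$\begin{aligned} \mathscr {N}_{k_\textrm{NN}}(v_i) = \left\{ v_j \mid j \in \operatorname {argsort}_{k_\textrm{NN}} \left( \textbf{D}_{i, -i} \right) \right\} \end{aligned}$$(32)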
where \(\textbf{D}_{i, -i}\) denotes the i-th row of the distance matrix \(\textbf{D}\) with the i-th entry (the self-distance) excluded, and \(\operatorname {argsort}_{k_\textrm{NN}}(\cdot )\) returns the indices of the \({k_\textrm{NN}}\) smallest values.

The undirected edge set E is then defined according to a symmetric connectivity rule: an edge \((v_i,v_j)\) is established if and only if \(v_i \in \mathscr {N}_{k_\textrm{NN}}(v_j)\) or \(v_j \in \mathscr {N}_{k_\textrm{NN}}(v_i)\). This ensures bidirectional neighborhood consistency and enhances graph connectivity.

Finally, the graph is represented as

$$\begin{aligned} G = (V, E) \end{aligned}$$(33)
where \(V\) denotes the set of nodes, \(E\) the set of edges, and each node \(v_i\) is associated with an encoder-derived embedding \(\textbf{v}_i \in \mathbb {R}^m\).

We define the feature matrix \(\textbf{V} \in \mathbb {R}^{n \times m}\) such that the i-th row corresponds to the embedding of node \(v_i\).
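The graph-construction rule can be illustrated with the dense NumPy sketch below: it builds the cosine distance matrix, excludes self-distances, takes the \(k_\textrm{NN}\) smallest entries per row, and symmetrizes with the "or" rule. The function names are hypothetical, and the \(O(n^2)\) distance matrix is only practical for small n; at the scale of real transaction data an approximate nearest-neighbor index would likely be needed instead.

```python
import numpy as np

def cosine_distance_matrix(V):
    """D_ij = 1 - cos(v_i, v_j) for all pairs of rows of V (n x m)."""
    U = V / (np.linalg.norm(V, axis=1, keepdims=True) + 1e-12)
    return 1.0 - U @ U.T

def knn_graph(V, k_nn):
    """Undirected KNN graph: edge (i, j) if j is in N_k(i) OR i is in N_k(j)."""
    D = cosine_distance_matrix(V)
    np.fill_diagonal(D, np.inf)                     # exclude the self-distance (D_{i,-i})
    nbrs = np.argsort(D, axis=1)[:, :k_nn]          # k_nn smallest distances per row
    n = len(V)
    A = np.zeros((n, n), dtype=bool)
    A[np.repeat(np.arange(n), k_nn), nbrs.ravel()] = True   # directed kNN relation
    A = A | A.T                                     # union rule -> symmetric adjacency
    edges = {(int(i), int(j)) for i, j in zip(*np.nonzero(A)) if i < j}
    return A, edges
```

Because the union rule is used, a node can end up with more than \(k_\textrm{NN}\) neighbors (for example, a hub that appears in many other nodes' lists), but never fewer.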

The pseudo-code of the MOLS-GC is presented in Algorithm 2. As illustrated in Algorithm 2, the proposed method first defines an autoencoder architecture comprising two components: an encoder and a decoder. The balanced transaction dataset \(D_{\textrm{bal}}\) is employed to train the autoencoder, with the objective function specified in Eq. (26). During training, the network parameters are iteratively optimized until convergence. Upon completion, the trained encoder is adopted as the latent mapping function to project the transaction dataset into a low-dimensional latent space, yielding the embedded dataset \(\textbf{V}\). Subsequently, the pairwise distances among the embedded transaction samples in the low-dimensional space are computed to derive a distance matrix. On the basis of this matrix, a KNN-based graph construction procedure is employed, wherein each embedded transaction sample in \(\textbf{V}\) is treated as a graph node. Edges are then established between nodes exhibiting high mutual similarity, resulting in a graph \(G = \left( V,E \right)\) whose structural properties inherently conform to the homophily assumption.

Algorithm 2. MOLS-GC.

AdaAdvSAGE

In spectral-based GCNs41, the entire adjacency matrix and Laplacian matrix need to be stored during training, which leads to substantial memory overhead. Considering the massive volume of credit card transaction data in real-world applications, spectral-based GCN algorithms become impractical. In contrast, the spatial-based GraphSAGE25 defines convolution operations directly on the neighborhood space of nodes, thereby enabling mini-batch training and alleviating memory limitations caused by large-scale training data. However, its static feature aggregation mechanism fails to adequately capture the relative importance of features across different transaction instances, reducing the robustness of the model and simultaneously constraining detection performance.

To address this issue, inspired by GraphSAGE25, we propose AdaAdvSAGE, a GraphSAGE-based model that incorporates an adaptive feature selection module and an adversarial training mechanism, while introducing inter-layer residual connections to alleviate over-smoothing and gradient vanishing, thereby enabling robust node representation learning. As illustrated in Fig. 4, the graph-structured transaction data is first processed by an adaptive feature selection module, wherein a feature selector, implemented as a feedforward neural network, adaptively adjusts the feature selection weights. This adaptive feature selection mechanism enables the model to selectively amplify discriminative features while attenuating noisy or redundant information, thereby enhancing its capacity to detect subtle and context-specific patterns indicative of fraudulent behavior.

Subsequently, after neighbor sampling, during the message-passing and aggregation phases, each node receives information from its neighbor nodes and integrates these neighbor features with its own representation through an aggregation function to update the node embedding. Meanwhile, we incorporate an adversarial training mechanism that constructs adversarial perturbations in the latent space by following the gradient of the classification loss with respect to the node features. These perturbations are used to generate adversarial samples, guiding the model to learn more robust representations in directions where it is most vulnerable.

Furthermore, to mitigate the problem of over-smoothing in deep graph aggregation layers, we implement inter-layer residual connections between consecutive aggregation layers, wherein the output of the current layer is combined in a weighted manner with the embedding from the preceding layer, ensuring effective information propagation and preservation of discriminative features throughout the network.

Overall, the proposed AdaAdvSAGE constitutes a unified GNN model tailored specifically for CCFD on transaction graphs that conform to the homophily assumption. It ensures both discriminative power and robustness while maintaining stable information flow across network depths. In the following sections, we provide a detailed analysis of each component in sequence.

Fig. 4. Process of AdaAdvSAGE.

Adaptive feature selection

In real-world CCFD, fraudulent transactions often resemble legitimate ones, particularly near decision boundaries, challenging graph-based models. Conventional GNNs fail to fully capture the relative importance of features in different transaction samples, diluting the discriminative signal and amplifying noise.

To address this limitation, we propose an adaptive feature selection module, which dynamically evaluates and assigns importance to each feature dimension based on the node’s local context. This allows the model to adaptively emphasize discriminative features while effectively filtering out task-irrelevant noise.

We consider a graph \(G = (V, E)\), where V and E denote the node and edge sets, respectively. For a given node \(v_i \in V\), its feature vector is denoted by \(\textbf{v}_i \in \mathbb {R}^m\), where m is the dimensionality of the latent space. To enable adaptive feature selection, we introduce a learnable selector that computes feature-wise importance weights \(w_i \in [0,1]^m\) based on \(\textbf{v}_i\), using a two-layer feedforward neural network. Specifically, the computation of \(w_i\) is defined by:

$$\begin{aligned} w_i = \sigma \left( W_2 \, \delta \left( W_1 \textbf{v}_i + b_1 \right) + b_2 \right) \end{aligned}$$(34)
where \(W_1 \in \mathbb {R}^{h \times m}\) and \(W_2 \in \mathbb {R}^{m \times h}\) are the learnable weight matrices of the two linear transformations, \(b_1 \in \mathbb {R}^h\) and \(b_2 \in \mathbb {R}^m\) are the bias terms, \(\delta (\cdot )\) denotes a non-linear activation function (LeakyReLU in our implementation), and \(\sigma (\cdot )\) is an element-wise sigmoid function constraining the output to [0, 1].

The final feature representation \(\tilde{\textbf{v}}_i\) is computed by performing an element-wise product between the original feature vector \(\textbf{v}_i\) and the corresponding learned weights \(w_i\), as follows:

$$\begin{aligned} \tilde{\textbf{v}}_i = \textbf{v}_i \odot w_i \end{aligned}$$(35)
where \(\odot\) denotes the element-wise (Hadamard) product, in which each pair of corresponding entries is multiplied separately. Thus, \(\tilde{\textbf{v}}_i\) is the reweighted feature vector of node \(v_i\).

By adaptively highlighting informative, task-relevant dimensions of \(\textbf{v}_i\) while suppressing noisy or redundant ones before neighborhood aggregation, the proposed adaptive feature selection module enhances the model’s ability to capture subtle fraudulent patterns in local graph structures and improves the effectiveness of subsequent graph convolutions.
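A forward pass of the feature selector in Eqs. (34) and (35) can be sketched in NumPy as follows, applied row-wise to a batch of node embeddings. The LeakyReLU slope of 0.01 and the function names are assumptions for illustration; in the actual model, \(W_1, b_1, W_2, b_2\) are learned jointly with the rest of the network.

```python
import numpy as np

def leaky_relu(x, slope=0.01):
    """delta(.) in Eq. (34); the slope value is an assumption."""
    return np.where(x > 0, x, slope * x)

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def adaptive_feature_selection(V, W1, b1, W2, b2):
    """Row-wise w_i = sigmoid(W2 LeakyReLU(W1 v_i + b1) + b2), then v~_i = v_i * w_i.

    V: (n, m) node embeddings; W1: (h, m); b1: (h,); W2: (m, h); b2: (m,).
    """
    H = leaky_relu(V @ W1.T + b1)        # hidden layer, shape (n, h)
    W = sigmoid(H @ W2.T + b2)           # feature-wise weights in (0, 1), shape (n, m)
    return V * W, W                      # Hadamard product of Eq. (35)
```

Since every weight lies strictly between 0 and 1, the selector can only attenuate dimensions, never amplify them beyond their original magnitude; emphasis on a feature is therefore relative to the features it suppresses.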

Latent-space adversarial perturbation generation

Considering the presence of subtle and deceptive variations in transaction features, we adopt an adversarial training strategy to improve the robustness of the proposed AdaAdvSAGE model. In CCFD, fraudulent transactions often closely resemble legitimate ones, making it difficult for the model to distinguish between them, especially near decision boundaries. Although GNNs are effective at capturing relational structures, they are vulnerable to small perturbations in latent node features. By generating adversarial samples through latent-space perturbations guided by the gradient of the classification loss, the model is encouraged to learn more discriminative and robust representations. The following describes how such adversarial perturbations are constructed and incorporated into the training process.

For a target node \(v_i \in V\) with weighted feature vector \(\tilde{\textbf{v}}_i \in \mathbb {R}^m\) (as obtained in Eq. (35)), we define the training loss \(\mathscr {L}_{\textrm{BCE}}\) using the binary cross-entropy (BCE) function, which is suitable for binary classification tasks. Given the ground truth label \(y \in \{0, 1\}\) and the predicted probability \(\hat{y} \in [0, 1]\), the BCE loss is formulated as

$$\begin{aligned} \mathscr {L}_{\textrm{BCE}} = -\left[ y \log \hat{y} + (1 - y) \log (1 - \hat{y}) \right] \end{aligned}$$(36)
To construct adversarial perturbations, we compute the gradient of \(\mathscr {L}_{\textrm{BCE}}\) with respect to the weighted feature vector, denoted as \(\nabla _{\tilde{\textbf{v}}_i} \mathscr {L}_{\textrm{BCE}} \in \mathbb {R}^m\). These gradients guide the injection of adversarial perturbations into node features, thereby enhancing the model’s resilience to malicious or noisy variations in the latent space.

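The adversarial perturbation is generated following the fast gradient sign method (FGSM):

$$\begin{aligned} \Delta \tilde{\textbf{v}}_i = \varepsilon \cdot \operatorname {sign}\left( \nabla _{\tilde{\textbf{v}}_i} \mathscr {L}_{\textrm{BCE}} \right) \end{aligned}$$(37)
where \(\Delta \tilde{\textbf{v}}_i\) is the adversarial perturbation, \(\varepsilon > 0\) is a hyperparameter controlling the perturbation magnitude, and \(\operatorname {sign}(\cdot )\) is applied element-wise.

The perturbed feature vector is then given by

$$\begin{aligned} \tilde{\textbf{v}}^{\textrm{adv}}_i = \tilde{\textbf{v}}_i + \Delta \tilde{\textbf{v}}_i \end{aligned}$$(38)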
During training, both \(\tilde{\textbf{v}}_i\) and \(\tilde{\textbf{v}}^{\textrm{adv}}_i\) are fed into the subsequent message-passing and aggregation layers. This adversarial training strategy compels the model to learn under the most loss-sensitive conditions, thereby improving its resilience to adversarial perturbations and enhancing its capacity to detect anomalous or fraudulent patterns.
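To make the FGSM step concrete, the sketch below replaces the full GNN with a hypothetical stand-in: a single logistic scorer \(\hat{y} = \sigma(\theta^\top \tilde{\textbf{v}} + b)\). For this scorer, the BCE gradient with respect to the input has the closed form \((\hat{y} - y)\,\theta\), so the sign trick can be shown without an autodiff library. In AdaAdvSAGE itself, the gradient would be obtained by backpropagating through the aggregation layers and classifier; `theta`, `bias`, and `eps` here are illustrative only.

```python
import numpy as np

def fgsm_latent(v_tilde, y, theta, bias, eps=0.05):
    """FGSM on latent features for a stand-in logistic scorer p = sigmoid(theta . v + bias).

    For BCE, dL/dv = (p - y) * theta, so the perturbation is eps * sign((p - y) * theta).
    """
    p = 1.0 / (1.0 + np.exp(-(v_tilde @ theta + bias)))   # predicted fraud probability, (n,)
    grad = (p - y)[:, None] * theta[None, :]               # gradient of BCE w.r.t. v~, (n, m)
    delta = eps * np.sign(grad)                            # element-wise sign step
    return v_tilde + delta, delta                          # v~_adv and the perturbation
```

For this linear stand-in, the step shifts each logit by \(\varepsilon \sum_j |\theta_j|\) in the direction that hurts the true label, so the BCE of every sample strictly increases, which is exactly the "most loss-sensitive" condition the training strategy targets.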

Inter-layer residual connections

Considering that GraphSAGE tends to suffer from over-smoothing in deep aggregation layers, we draw inspiration from ResNet42 and introduce inter-layer residual connections between successive aggregation layers to mitigate the risk of losing discriminative information during multi-layer message propagation.

Let \(v_i \in V\) denote the i-th node in the graph, whose initial representation is defined as \(\textbf{h}^{0}_i= \textbf{z}_i\), where \(\textbf{z}_i \in \{\tilde{\textbf{v}}_i,\ \tilde{\textbf{v}}^{\textrm{adv}}_i\}\) denotes either the weighted input feature or its adversarial counterpart, as specified in Eqs. (35) and (38), respectively.

At each layer \(k=1,\ldots ,K\), AdaAdvSAGE updates node representations by aggregating messages from each node and its neighbors with the standard GraphSAGE operator (Eq. 2). Let \(\hat{\textbf{h}}_{i}^{k} \in \mathbb {R}^{d_k}\) denote the pre-residual output of the k-th message aggregation layer, where \(d_k\) is the dimensionality of the node representations at layer k. This output is computed from the previous-layer representation and the local neighborhood of node \(v_i\) as follows:

where \(\mathscr {N}_{k_\textrm{NN}}(v_i)\) denotes the set of neighbors of node \(v_i\), \(\textrm{Agg}^{k}(\cdot )\) denotes the mean aggregation function, \(W^{k}\) is a trainable weight matrix at layer k, and \(\sigma\) represents the ReLU activation function.

The post-residual representation is then obtained via a convex combination of the aggregated output and the residual connection:

where \(\gamma \in [0, 1]\) controls the strength of the residual connection. The parameter \(\gamma\) is treated as a tunable hyperparameter selected via validation.

When the input and output dimensions differ, i.e., \(d_k \ne d_{k-1}\), a linear projection is applied to align the dimensions before residual fusion:

This residual connection preserves discriminative information from lower layers and stabilizes gradient flow, thereby alleviating over-smoothing while maintaining effective cross-layer information propagation.
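One aggregation layer with its residual fusion can be sketched in NumPy as below. Two points are assumptions because the corresponding equations are not reproduced here: the update uses the concatenation form of GraphSAGE (\(\textrm{ReLU}(W^k[\textbf{h}_i^{k-1} \Vert \textrm{mean}_{j \in \mathscr{N}(v_i)} \textbf{h}_j^{k-1}])\)), and \(\gamma\) is taken to weight the skip term, i.e. \(\textbf{h}_i^k = (1-\gamma)\hat{\textbf{h}}_i^k + \gamma P^k \textbf{h}_i^{k-1}\). The projection `P` and all names are hypothetical.

```python
import numpy as np

def sage_residual_layer(H_prev, neighbours, W, gamma=0.3, P=None):
    """Mean-aggregation GraphSAGE layer followed by a convex residual fusion.

    H_prev: (n, d_prev) node representations from layer k-1.
    neighbours: list of neighbour-index lists, one per node.
    W: (d_k, 2 * d_prev) weight applied to [h_i || mean of neighbour h_j].
    P: optional (d_k, d_prev) projection, required when d_k != d_prev.
    """
    agg = np.stack([H_prev[nb].mean(axis=0) if len(nb) else np.zeros(H_prev.shape[1])
                    for nb in neighbours])                 # mean over N(v_i)
    H_hat = np.maximum(0.0, np.concatenate([H_prev, agg], axis=1) @ W.T)  # ReLU
    skip = H_prev if P is None else H_prev @ P.T           # align dimensions if needed
    return (1.0 - gamma) * H_hat + gamma * skip            # ASSUMED placement of gamma
```

Setting \(\gamma = 0\) recovers a plain GraphSAGE layer, while \(\gamma = 1\) passes the previous representation through unchanged; intermediate values trade new neighborhood information against preservation of earlier, less smoothed features.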

Adversarial training and inference

The proposed AdaAdvSAGE, a GraphSAGE-based GNN, integrates adaptive feature selection, adversarial perturbations, neighborhood aggregation, and inter-layer residual connections into a unified architecture that yields robust node representations and captures relational patterns in transaction graphs. To illustrate how these components interact, we next describe the forward pass, the training objective, and inference step by step.

For each node \(v_i \in V\), we first obtain its weighted transaction feature vector \(\tilde{\textbf{v}}_i\) using Eq. (35). The corresponding adversarial version \(\tilde{\textbf{v}}^{\textrm{adv}}_i\) is generated according to Eq. (38). Both representations are passed through a K-layer GNN composed of message aggregation and inter-layer residual connections, as described in Eqs. (40) and (41).

We process the data through the described GNN and obtain the final representation of each node, which subsequently serves as the basis for the classification task. Let \(\textbf{h}_i^K\) denote the final hidden representation of node \(v_i\) at the K-th layer. For classification, we apply two fully connected layers: the first one transforms the representation into a hidden space with a non-linear activation, while the second applies a sigmoid activation to produce the final predicted probability. Formally, the predicted probability for node \(v_i\) is computed as follows:

$$\begin{aligned} \hat{y}_i = \sigma \left( \textbf{W}_2^{\top } \phi \left( \textbf{W}_1 \textbf{h}_i^K + b_1 \right) + b_2 \right) \end{aligned}$$(42)
where \(\hat{y}_i\) denotes the predicted probability that node \(v_i\) belongs to the fraudulent transaction class; \(\textbf{W}_1 \in \mathbb {R}^{d' \times d_K}\) and \(b_1 \in \mathbb {R}^{d'}\) are the weights and bias of the first layer; \(\phi (\cdot )\) denotes a non-linear activation function such as ReLU; \(\textbf{W}_2 \in \mathbb {R}^{d'}\) and \(b_2 \in \mathbb {R}\) are the parameters of the second (output) layer; and \(\sigma (\cdot )\) is the sigmoid function, which maps the output to [0, 1]. Here, \(d_K\) denotes the dimension of the node representation \(\textbf{h}_i^K\) at the K-th GNN layer, and \(d'\) is the dimensionality of the intermediate hidden space used for classification. Note that \(d_K\) is determined by the GNN architecture, whereas \(d'\) is a tunable hyperparameter.

To enhance robustness, we adopt an adversarial training strategy, in which small perturbations are applied to each sample along the gradient direction of the loss function with respect to the input, thereby generating adversarial samples. The model is then optimized by minimizing the joint loss over clean and adversarial samples. This strategy improves the stability of the model against input perturbations while preserving accuracy on clean data, encouraging reliance on more task-relevant and robust features. Specifically, we train the model on both clean and adversarial samples using the average BCE loss:

where \(\hat{y}_i\) and \(\hat{y}^{\textrm{adv}}_i\) denote the predicted probabilities based on the clean and adversarial inputs, respectively, and \(y_i \in \{0,1\}\) is the true label.

After training, during inference, we discard the adversarial branch and compute the node representation on the clean input. Specifically, we first obtain \(\textbf{h}_i^K\), the final hidden representation of node \(v_i\) at the K-th layer. We then use the same classifier as in Eq. (42) to compute the predicted probability for fraud detection:

$$\begin{aligned} Pred_i = \sigma \left( \textbf{W}_2^{\top } \phi \left( \textbf{W}_1 \textbf{h}_i^K + b_1 \right) + b_2 \right) \end{aligned}$$(44)
The output \(Pred_i \in [0,1]\) quantifies the probability that transaction \(v_i\) is fraudulent. To obtain the final classification result, a decision threshold \(\tau\) is applied: if \(Pred_i \ge \tau\), the transaction is classified as fraudulent; otherwise, it is classified as legitimate. In practice, \(\tau\) can be set to 0.5. This completes the fraud detection pipeline from adaptive feature selection to robust prediction.
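The classification head, the averaged clean/adversarial BCE objective, and the thresholding rule can be sketched in NumPy as follows. Weighting the clean and adversarial terms equally (each by 1/2) is our reading of "average BCE loss" and is an assumption, as are the function names and the clipping constant used to keep the logarithms finite.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def classify(H_K, W1, b1, w2, b2):
    """Eq. (42): y_hat = sigmoid(w2 . ReLU(W1 h + b1) + b2) for every row h of H_K."""
    return sigmoid(np.maximum(0.0, H_K @ W1.T + b1) @ w2 + b2)

def bce(y, p, eps=1e-12):
    """Per-sample binary cross-entropy, Eq. (36)."""
    p = np.clip(p, eps, 1.0 - eps)
    return -(y * np.log(p) + (1.0 - y) * np.log(1.0 - p))

def joint_loss(y, p_clean, p_adv):
    """ASSUMED form: mean BCE over nodes, averaged over the clean and adversarial branches."""
    return float(0.5 * (bce(y, p_clean).mean() + bce(y, p_adv).mean()))

def predict_labels(p, tau=0.5):
    """Fraudulent (1) if Pred_i >= tau, otherwise legitimate (0)."""
    return (p >= tau).astype(int)
```

On highly imbalanced data, \(\tau = 0.5\) is only a default; lowering it trades precision for recall, which is often the preferred direction in fraud detection.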

The training and inference procedure for the AdaAdvSAGE model is summarized in Algorithm 3. The model comprises an adaptive feature-selection module, a GraphSAGE backbone with mean aggregation and inter-layer residual connections, an adversarial training mechanism, and a two-layer classifier. During each forward pass, the feature-selection module assigns feature-wise weights to each node to produce weighted node representations, which are then fed into a K-layer GraphSAGE to capture multi-hop neighborhood information. To improve robustness during training, adversarial samples are generated by perturbing the weighted features in the direction of the loss gradient; both clean and adversarial inputs are used jointly to optimize the network. Inter-layer residual connections between successive aggregation layers mitigate over-smoothing and help preserve discriminative signals across depths. Finally, a two-layer neural classifier operates on the resulting embeddings to output fraud-probability predictions.

Algorithm 3. AdaAdvSAGE.

Experiment

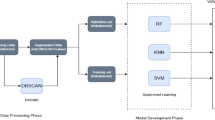

In this section, we evaluate the performance of the proposed HMOA-GNN framework using three widely recognized benchmark datasets in the domain of CCFD: the European Cardholders Transaction dataset43, the IEEE-CIS Fraud Detection dataset44, and the Simulated Credit Card Transactions dataset45.

We first describe the experimental setup, including the hardware and software environments, as well as the procedures adopted in our experiments. Subsequently, ablation studies are conducted on the three core modules of the proposed HMOA-GNN framework (DEHS, MOLS-GC, and AdaAdvSAGE) to evaluate their respective contributions to the overall performance. Then, to examine the effectiveness of the proposed DEHS method in mitigating class imbalance, we compare it against four representative data balancing techniques: random undersampling, Synthetic Minority Oversampling Technique (SMOTE)12, Histogram SMOTE (H-SMOTE)46, and K-means-based undersampling47. The performance of the HMOA-GNN framework, when combined with each of these sampling strategies, is then evaluated on the fraud detection task.

Finally, to further verify the effectiveness of the HMOA-GNN framework on CCFD tasks, we conduct a comparative analysis against several representative models in this domain. The experimental results show that our method achieves superior overall performance compared with existing CCFD approaches.

Datasets

1. European Cardholders Transaction dataset: This Kaggle dataset43 comprises 284,807 credit card transactions made by European cardholders over two days in September 2013, of which only 492 (0.17%) are fraudulent. This severe class imbalance biases models toward legitimate transactions and reduces fraud detection accuracy. The dataset contains no missing values, so no imputation or feature filtering is required.

2. IEEE-CIS Fraud Detection dataset: Provided by Vesta44, this dataset contains real e-commerce transactions from September–December 2017. It consists of a transaction table (590,540 entries, 394 features) and an identity table (41 features), yielding 435 features after merging.

3. Simulated Credit Card Transactions dataset: This dataset45 contains credit card transactions simulated between January 1, 2019, and December 31, 2020, including both legitimate and fraudulent activities. It covers 1,000 customers transacting with 800 merchants. The dataset was generated with the Sparkov Data Generation tool created by Brandon Harris, and all simulation files were merged and converted into a standardized format.
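To make the scale of the imbalance concrete, the short Python calculation below uses the European dataset figures quoted above. The 1:1 balancing target and the assumption that no legitimate rows are removed are illustrative only. DEHS also cleans the majority class, so the number of synthetic samples it actually generates would be smaller.

```python
# Class-imbalance arithmetic for the European Cardholders dataset (figures from the text).
total = 284_807
fraud = 492
legit = total - fraud

fraud_rate = fraud / total          # share of fraudulent transactions
imbalance_ratio = legit / fraud     # legitimate transactions per fraudulent one

# Assumption: a 1:1 target with no legitimate rows removed. DEHS also
# removes noisy/redundant legitimate rows, so its real count would be lower.
synthetic_needed = legit - fraud

print(f"legitimate transactions: {legit}")
print(f"fraud rate: {100 * fraud_rate:.2f}%")
print(f"imbalance ratio: {imbalance_ratio:.1f}:1")
print(f"synthetic samples for a 1:1 balance: {synthetic_needed}")
```

Roughly 578 legitimate transactions exist for every fraudulent one, so a pure oversampler would have to create several hundred times more synthetic frauds than there are real ones. This illustrates why the quality and placement of synthetic samples matters.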

Performance evaluation metrics

In fraud detection, several evaluation metrics are commonly adopted to cope with imbalanced datasets. In this study, we primarily use Recall, F1-score, and AUC, and additionally report Accuracy and Precision.

The AUC is the area under the receiver operating characteristic (ROC) curve, which plots the True Positive Rate (TPR) against the False Positive Rate (FPR) as the classification threshold varies. TPR and FPR are defined as follows:

$$\mathrm{TPR} = \frac{TP}{TP + FN}, \qquad \mathrm{FPR} = \frac{FP}{FP + TN}$$

where TP denotes true positives, FN false negatives, FP false positives, and TN true negatives. Accuracy measures the proportion of correctly classified samples in the total dataset:

$$\mathrm{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$$

Precision quantifies the proportion of predicted fraudulent transactions that are actually fraudulent:

$$\mathrm{Precision} = \frac{TP}{TP + FP}$$

Recall is the proportion of actual fraudulent transactions that the model correctly identifies. In general, failing to detect a fraudulent transaction costs more than incorrectly flagging a legitimate one, so in fraud detection a higher recall is often preferred over precision. Recall is defined as follows (it is identical to the TPR):

$$\mathrm{Recall} = \frac{TP}{TP + FN}$$

The F1-score is the harmonic mean of Precision and Recall:

$$F_1 = \frac{2 \times \mathrm{Precision} \times \mathrm{Recall}}{\mathrm{Precision} + \mathrm{Recall}}$$

Because it balances Precision and Recall, the F1-score is a robust evaluation metric that avoids extreme bias toward either one.
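The following is a minimal pure-Python sketch of the metric definitions above, useful as a reference when checking reported values. Two conventions are assumptions rather than details from the text. First, ratios with a zero denominator are reported as 0. Second, AUC is computed with the rank (Mann–Whitney) formulation, which equals the area under the ROC curve when ties count as one half.

```python
def confusion_counts(y_true, y_pred):
    """Return (TP, FP, FN, TN) for binary labels where 1 = fraud."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return tp, fp, fn, tn


def safe_div(num, den):
    # Convention (assumption): report 0 when the denominator is 0,
    # e.g. precision for a model that never predicts fraud.
    return num / den if den else 0.0


def threshold_metrics(y_true, y_pred):
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    precision = safe_div(tp, tp + fp)
    recall = safe_div(tp, tp + fn)  # identical to TPR
    return {
        "accuracy": safe_div(tp + tn, tp + tn + fp + fn),
        "precision": precision,
        "recall": recall,
        "fpr": safe_div(fp, fp + tn),
        "f1": safe_div(2 * precision * recall, precision + recall),
    }


def roc_auc(y_true, scores):
    """Probability that a random fraud outscores a random legitimate
    transaction (ties count 1/2); equal to the area under the ROC curve."""
    pos = [s for s, t in zip(scores, y_true) if t == 1]
    neg = [s for s, t in zip(scores, y_true) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


y_true = [1, 1, 0, 0, 0]
scores = [0.9, 0.4, 0.6, 0.2, 0.1]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # illustrative 0.5 threshold
m = threshold_metrics(y_true, y_pred)
auc = roc_auc(y_true, scores)
```

Under these conventions, a model that assigns every transaction the same score obtains an AUC of exactly 0.5, and a model that never predicts fraud obtains Recall and F1-score of 0. The ablation study reports this pattern for the baseline on the European dataset.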

Experimental setup

Experimental environment

All experiments were conducted on a single high-performance workstation, and we report its configuration to support reproducibility. The software environment comprised Python 3.9.13 as the primary programming language, with deep learning models implemented in PyTorch 2.1.1 and accelerated by CUDA 12.1. The hardware comprised an Intel Core i9-14900HX processor, a single NVIDIA GeForce RTX 4060 GPU with 8 GB of memory, and 64 GB of system RAM, which was sufficient for efficient training and evaluation of the proposed models.

Preprocessing

We use the DEHS method to generate synthetic fraudulent transaction samples. Legitimate and fraudulent transactions are first separated, and a center selection and boundary refinement strategy is applied to remove noisy and redundant legitimate transactions. Next, the number of synthetic samples to generate is computed, and the density of each minority-class sample is estimated to obtain its density weight. Guided by these weights, minority-class samples are selected as seeds, and each synthetic sample is created on the line segment connecting the selected sample and its nearest neighbor. The density of each generated sample is then evaluated to determine whether it constitutes noise: noisy samples are discarded and valid ones are retained. This selection–generation cycle repeats until the desired number of synthetic samples has been produced, yielding a balanced dataset.
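The DEHS oversampling loop described above can be sketched in Python as follows. This is a hypothetical illustration, not the authors' implementation. Several elements are assumptions: the density estimate (inverse mean distance to the k nearest minority samples), the noise rule (discard a candidate whose density falls below `noise_factor` times that of the sparsest original fraud sample), and the function names. The center selection and boundary refinement applied to legitimate transactions are omitted.

```python
import math
import random


def local_density(x, points, k, skip=None):
    """Density proxy (assumption): inverse mean distance to the k nearest points."""
    dists = sorted(math.dist(x, p) for i, p in enumerate(points) if i != skip)
    k = min(k, len(dists))
    return 1.0 / (sum(dists[:k]) / k + 1e-12)


def density_weighted_oversample(minority, n_new, k=3, noise_factor=0.5,
                                seed=42, max_tries=100_000):
    """Hypothetical sketch of density-guided synthetic-sample generation.

    minority: list of equal-length tuples (fraudulent transactions).
    Returns up to n_new synthetic tuples.
    """
    rng = random.Random(seed)
    n = len(minority)
    density = [local_density(x, minority, k, skip=i) for i, x in enumerate(minority)]
    # Assumption: a candidate is noise if its density is below a fraction
    # of the density of the sparsest original minority sample.
    noise_threshold = noise_factor * min(density)

    synthetic = []
    tries = 0
    while len(synthetic) < n_new and tries < max_tries:
        tries += 1
        # Denser seeds are chosen more often (choices normalizes the weights).
        i = rng.choices(range(n), weights=density)[0]
        x = minority[i]
        # Nearest minority-class neighbour of the selected seed.
        j = min((m for m in range(n) if m != i),
                key=lambda m: math.dist(x, minority[m]))
        lam = rng.random()  # position on the segment from x to its neighbour
        candidate = tuple(a + lam * (b - a) for a, b in zip(x, minority[j]))
        if local_density(candidate, minority, k) >= noise_threshold:
            synthetic.append(candidate)  # valid sample: keep it
        # Otherwise the candidate is discarded as noise and the cycle repeats.
    return synthetic


fraud = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0), (5.0, 5.0)]
new_samples = density_weighted_oversample(fraud, n_new=5)
```

Because seeds are drawn in proportion to density, the isolated point (5, 5) is rarely used as a seed, which mirrors the stated goal of generating synthetic frauds in dense regions. Every synthetic point is a convex combination of two real fraud samples.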

Data graphization

We use the MOLS-GC method to convert the non-graph-structured credit card transaction data into graph data. An autoencoder is trained on a portion of the DEHS-balanced dataset to obtain a latent mapper. The transaction data used for training and detection are then passed through the latent mapper to obtain their representations in the learned latent space. The similarity between each transaction and the others is computed in this space, and each transaction is connected to its nearest neighbors using the KNN algorithm. This produces graph-structured data suitable as GNN input.
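Below is a minimal sketch of the graph-construction step, assuming the latent vectors have already been produced by the trained encoder. Cosine similarity, the directed i → j edge convention, and the `edge_homophily` check (the fraction of edges joining same-label nodes) are illustrative choices, not details taken from MOLS-GC.

```python
import math


def cosine_similarity(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / (norm + 1e-12)


def knn_edges(latent, k):
    """Directed edges i -> j to the k most similar other nodes in latent space."""
    edges = []
    for i, zi in enumerate(latent):
        ranked = sorted(
            ((cosine_similarity(zi, zj), j) for j, zj in enumerate(latent) if j != i),
            reverse=True,
        )
        edges.extend((i, j) for _, j in ranked[:k])
    return edges


def edge_homophily(edges, labels):
    """Fraction of edges whose endpoints share a label (1.0 = fully homophilous)."""
    return sum(labels[i] == labels[j] for i, j in edges) / len(edges)


# Toy latent vectors standing in for the output of the trained encoder.
latent = [(1.0, 0.0), (0.9, 0.1), (0.0, 1.0), (0.1, 0.9)]
labels = [0, 0, 1, 1]
edges = knn_edges(latent, k=1)
h = edge_homophily(edges, labels)
```

A homophily value close to 1 is what the homophily assumption requires of the constructed graph. Comparing this value against that of a randomly wired graph is one simple way to check whether the latent space groups fraudulent transactions together.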

Training AdaAdvSAGE model

The credit card transaction data are first balanced by the DEHS method and then transformed into graph format by the MOLS-GC process, which produces latent-space representations of the transaction nodes. The resulting graph is then fed into the proposed AdaAdvSAGE model.

During training, each transaction node first undergoes adaptive feature selection, in which feature-wise weights are learned to highlight discriminative dimensions and suppress noisy or redundant information. Adversarial perturbations are then generated in the latent space by following the gradient of the classification loss with respect to the node features. Clean and adversarial samples are used jointly to optimize the model, which improves the robustness of the learned representations. Node embeddings are further updated through GraphSAGE-based neighborhood aggregation with inter-layer residual connections, which mitigate over-smoothing and help preserve discriminative signals across layers. This procedure yields the trained AdaAdvSAGE model used for CCFD.
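The adversarial step can be illustrated on a simplified model. The sketch below replaces AdaAdvSAGE with a single logistic classifier over node features and uses an FGSM-style sign step, which perturbs each feature by `epsilon` in the sign of the loss gradient. The text states only that perturbations follow the loss gradient, so the sign step, `epsilon`, and the clean/adversarial weighting `alpha` are assumptions.

```python
import math


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def sign(v):
    return (v > 0) - (v < 0)


def bce(p, y, eps=1e-12):
    """Binary cross-entropy for a single prediction."""
    return -(y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps))


def predict(w, b, x):
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def fgsm(w, b, x, y, epsilon):
    """Shift x by epsilon along the sign of dLoss/dx = (p - y) * w."""
    g = predict(w, b, x) - y
    return [xi + epsilon * sign(g * wi) for xi, wi in zip(x, w)]


def train_adversarial(X, Y, epsilon=0.1, lr=0.5, epochs=100, alpha=0.5):
    """SGD on (1 - alpha) * L(clean) + alpha * L(adversarial)."""
    w, b = [0.0] * len(X[0]), 0.0
    for _ in range(epochs):
        for x, y in zip(X, Y):
            x_adv = fgsm(w, b, x, y, epsilon)
            # Both gradients are taken at the same parameters (joint objective).
            err_clean = predict(w, b, x) - y
            err_adv = predict(w, b, x_adv) - y
            grad_w = [(1 - alpha) * err_clean * xc + alpha * err_adv * xa
                      for xc, xa in zip(x, x_adv)]
            grad_b = (1 - alpha) * err_clean + alpha * err_adv
            w = [wi - lr * gi for wi, gi in zip(w, grad_w)]
            b -= lr * grad_b
    return w, b


X = [(2.0, 0.0), (1.5, 0.5), (-2.0, 0.0), (-1.5, -0.5)]
Y = [1, 1, 0, 0]
w, b = train_adversarial(X, Y)
x_adv = fgsm(w, b, X[0], Y[0], 0.1)
```

For a logistic model the input gradient is simply (p - y) * w, so the perturbation always moves the score away from the true label. Training on both copies therefore pushes the decision boundary to keep a margin of roughly `epsilon` around each sample.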

Fraud detection

After training, the graph-structured transaction data are fed into the trained AdaAdvSAGE model. Each transaction node is first processed by the adaptive feature selection module, and its representation is then propagated through the aggregation layers with residual connections, which progressively refine the node embeddings. Finally, a two-layer classifier, implemented as a standard multi-layer perceptron, maps the embeddings to fraud probability scores, and each transaction is classified as fraudulent or legitimate according to a decision threshold. Using this fixed inference procedure throughout supports reproducibility and fair comparison.
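The inference path described above can be sketched in dependency-free Python: feature selection, K mean-aggregation layers with residual connections, a two-layer MLP, and then a threshold. All weights are hand-set toy values. Several elements are hypothetical: the sigmoid gate as the form of adaptive feature selection, the residual added as ReLU(z) + h, and the 0.5 threshold. None of them are taken from the paper's configuration.

```python
import math


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def matvec(W, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def relu(v):
    return [max(0.0, a) for a in v]


def feature_gate(x, Wg, bg):
    """Hypothetical adaptive feature selection: per-node weights in (0, 1)."""
    gates = [sigmoid(z + b) for z, b in zip(matvec(Wg, x), bg)]
    return [g * xi for g, xi in zip(gates, x)]


def sage_layer(H, adj, W_self, W_neigh):
    """Mean-aggregation GraphSAGE layer with a residual connection."""
    out = []
    for i, h in enumerate(H):
        nbrs = adj.get(i, [])
        mean = ([sum(H[j][d] for j in nbrs) / len(nbrs) for d in range(len(h))]
                if nbrs else [0.0] * len(h))
        z = [a + c for a, c in zip(matvec(W_self, h), matvec(W_neigh, mean))]
        out.append([r + hi for r, hi in zip(relu(z), h)])  # residual: ReLU(z) + h
    return out


def forward(X, adj, params):
    H = [feature_gate(x, params["Wg"], params["bg"]) for x in X]
    for W_self, W_neigh in params["layers"]:  # K = len(params["layers"])
        H = sage_layer(H, adj, W_self, W_neigh)
    probs = []
    for h in H:  # two-layer MLP classifier
        hidden = relu(matvec(params["W1"], h))
        logit = sum(w * a for w, a in zip(params["w2"], hidden)) + params["b2"]
        probs.append(sigmoid(logit))
    return probs


I2 = [[1.0, 0.0], [0.0, 1.0]]
params = {"Wg": I2, "bg": [0.0, 0.0], "layers": [(I2, I2), (I2, I2)],
          "W1": I2, "w2": [1.0, -1.0], "b2": 0.0}
X = [(0.2, 1.0), (0.1, 0.8), (1.0, 0.1)]
adj = {0: [1], 1: [0], 2: []}
probs = forward(X, adj, params)
labels = [int(p >= 0.5) for p in probs]  # assumed decision threshold of 0.5
```

Because only neighbor means and shared weights are used, the same `forward` can score nodes that were not present during training, which is the inductive property of GraphSAGE.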

Hyperparameter setting

We tuned the hyperparameters experimentally, evaluating the resulting models until the best-performing configuration was identified. The selected hyperparameter values are listed in Table 1.

Practical considerations and potential deployment

For deployment on financial transaction platforms, the HMOA-GNN framework can be encapsulated as a scalable microservice that interfaces with existing business systems through RESTful APIs or gRPC. Before reaching the detection model, transaction data undergo preprocessing and graph construction in a distributed data pipeline (e.g., message queues and stream processing frameworks), which supports stable integration with large-scale, high-velocity transaction streams. Because the AdaAdvSAGE model is inductive and scalable, the processed transactions can be routed to the deployed model for either real-time or batch detection. In real-time mode, the model generates representations from both the transaction features and the neighborhood information in the transaction graph, enabling rapid risk assessment. In batch mode, the framework supports periodic large-scale analyses that complement real-time detection and provide comprehensive risk monitoring. The system can also be integrated with existing rule-based engines or risk-control platforms through modular connectors, enabling hybrid decision support. Finally, the architecture supports horizontal scaling, which helps it withstand peak transaction volumes and adapt to the heterogeneous technical infrastructures of different financial institutions.

Ablation experiment

In our ablation studies, the baseline is a simplified variant of the HMOA-GNN framework from which all three proposed modules, namely DEHS, MOLS-GC, and AdaAdvSAGE, are removed.

Specifically, the baseline applies no mechanism to address the class imbalance inherent in the credit card transaction datasets. For graph construction, it uses a random neighbor connection strategy in which each transaction record is linked to a set of randomly selected records that are treated as its neighbors. On this graph, the baseline retains only the basic GraphSAGE-style message passing and aggregation operations, without any of the proposed enhancements. This minimal yet consistent setting ensures that observed performance gains can be attributed to the integration of the DEHS, MOLS-GC, or AdaAdvSAGE modules.
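The baseline's random neighbor strategy can be sketched as follows to show why it provides little useful structure. The label array (1% fraud) is illustrative, and `k` and the seed are assumed values.

```python
import random


def random_neighbor_edges(n, k, seed=42):
    """Baseline graph: link each node to k distinct, randomly chosen other nodes."""
    rng = random.Random(seed)
    edges = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        edges.extend((i, j) for j in rng.sample(others, k))
    return edges


labels = [1] * 10 + [0] * 990  # illustrative: 1% fraud
edges = random_neighbor_edges(len(labels), k=5)

# Share of a fraud node's neighbours that are also fraudulent.
fraud_edges = [(i, j) for i, j in edges if labels[i] == 1]
fraud_to_fraud = sum(labels[j] == 1 for _, j in fraud_edges) / len(fraud_edges)
```

Because fraudulent nodes are rare, randomly chosen neighbors are almost always legitimate (about 1% fraud in expectation here). Mean aggregation therefore mostly blends a fraud node's features with those of legitimate transactions, which is the weakness MOLS-GC is meant to address.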

By contrasting the baseline with the module-augmented variants, we are able to quantify the distinct contributions of each component to the overall performance improvement.

Ablation experiment of DEHS module

To validate the effectiveness of the DEHS module, we sampled subsets from each of the three datasets using a fixed random seed (42) to ensure reproducibility. We first examined the performance gain from adding DEHS to the baseline, thereby assessing its standalone contribution. We then evaluated the HMOA-GNN framework with and without DEHS to quantify its impact on the framework as a whole.
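The text states only that subsets were drawn with seed 42. The sketch below assumes stratified sampling, which keeps each class's share in the subset. That choice, the `fraction` parameter, and the function name are assumptions.

```python
import random


def stratified_subset(labels, fraction, seed=42):
    """Indices of a reproducible subset that preserves each class's share."""
    rng = random.Random(seed)  # dedicated generator, unaffected by other code
    by_class = {}
    for idx, y in enumerate(labels):
        by_class.setdefault(y, []).append(idx)
    chosen = []
    for y in sorted(by_class):
        idxs = by_class[y]
        m = max(1, round(fraction * len(idxs)))  # keep at least one sample per class
        chosen.extend(rng.sample(idxs, m))
    return sorted(chosen)


labels = [0] * 90 + [1] * 10
subset = stratified_subset(labels, fraction=0.5)
```

Seeding a dedicated `random.Random` instance, rather than the global generator, keeps the draw reproducible even if other code consumes random numbers in between.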

Table 2 presents the ablation results on the three benchmark datasets. Overall, DEHS yields substantial gains under extreme class imbalance, particularly on the IEEE-CIS and Simulated datasets. Without DEHS, the Baseline model almost entirely fails to recognize the minority class (e.g., both Recall and F1-score are 0 on the European and Simulated datasets). After DEHS is incorporated, Recall improves to 0.8485 on IEEE-CIS and 0.5323 on Simulated, while F1-score increases from 0 to 0.5138 and 0.4204, respectively. These results indicate that DEHS effectively alleviates the detrimental impact of severe imbalance on detection performance, although it may slightly reduce accuracy and precision under the Baseline model. Within the HMOA-GNN framework, removing DEHS leads to a substantial decline in Recall and F1-score (e.g., Recall drops from 0.8333 to 0.6061 on IEEE-CIS and from 0.6935 to 0.3065 on Simulated), further confirming the importance of DEHS in constructing a more balanced and informative training set.

In contrast, on the European dataset, Baseline and Baseline w/ DEHS produce identical results, with AUC fixed at 0.5 and all minority-class metrics equal to 0. This is likely due to the extremely sparse and indistinct distribution of the minority class in this dataset. Under such conditions, generating additional minority samples with DEHS is insufficient to form an effective decision boundary when only a simple baseline model is used. When DEHS is combined with the HMOA-GNN framework, however, its contribution becomes evident: Recall increases from 0.6863 to 0.9216 and F1-score rises from 0.8140 to 0.9307, despite a slight decrease in precision (from 1.0000 to 0.9400). This shows that the higher-quality samples provided by DEHS can be fully exploited by a framework equipped with structural modeling and adaptive feature learning.
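As a consistency check, the F1-scores reported above for HMOA-GNN on the European dataset follow from the reported precision and recall through the harmonic-mean definition of the F1-score:

```python
def f1(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


# HMOA-GNN on the European dataset, without and with DEHS (values from the text).
without_dehs = f1(1.0000, 0.6863)
with_dehs = f1(0.9400, 0.9216)
print(f"{without_dehs:.4f} {with_dehs:.4f}")  # prints "0.8140 0.9307"
```

The gain in Recall outweighs the 0.06 drop in Precision, which is why F1 rises despite the lower precision.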

In summary, DEHS is a pivotal component for alleviating class imbalance and substantially enhances the model's ability to detect the minority class. Within the full framework, its benefit is observed on all three benchmark datasets. The lack of improvement on the European dataset under the baseline model further shows that data balancing alone is inadequate for minority-class detection. Its full potential is realized only when it is combined with structural modeling strategies that enable more discriminative representation learning.

Ablation experiment of MOLS-GC module

To validate the effectiveness of the MOLS-GC module, we sampled subsets from each of the three datasets using a fixed random seed (42) to ensure reproducibility. We first integrated MOLS-GC into the baseline to assess its standalone contribution. We then compared the HMOA-GNN framework with and without MOLS-GC to evaluate its impact on the framework as a whole.