Abstract

The expansion of LEAN and small batch manufacturing demands flexible automated workstations capable of switching between sorting various wastes over time. To address this challenge, our study assesses the ability of the Segment Anything Model (SAM) family of deep learning architectures to separate highly variable objects during robotic waste sorting. The proposed two-step procedure for generic versatile visual waste sorting is based on SAM architectures (original SAM, FastSAM, MobileSAMv2, and EfficientSAM) for waste object extraction from raw images, and on classification architectures (MobileNetV2, VGG19, Dense-Net, Squeeze-Net, ResNet, and Inception-v3) for accurate waste sorting. Such a pipeline brings two key advantages that make it more applicable in industry practice: 1) it eliminates the necessity of developing dedicated waste detection and segmentation algorithms for waste object localization, and 2) it significantly reduces the time and costs required for adapting the solution to different use cases. With the proposed procedure, switching to sorting a new waste type is reduced to only two steps: the use of SAM for automatic object extraction, followed by the separation of the extracted objects into corresponding classes used to fine-tune the classifier. Validation on four use cases (floating waste, municipal waste, e-waste, and smart bins) shows robust results, with accuracy ranging from 86 to 97% when using MobileNetV2 with the SAM and FastSAM architectures. The proposed approach has a high potential to facilitate deployment, increase productivity, lower expenses, and minimize errors in robotic waste sorting while enhancing overall recycling and material utilization in the manufacturing industry.

Introduction

Waste generation represents an inevitable part of the manufacturing process. Considering that global production of municipal solid waste is expected to reach 3.40 billion tons by 2050 (ref. 1), it poses a threat to the environment and, at the same time, emerges as a pivotal resource for remanufacturing. If inadequately managed, an increase in waste volumes will put a burden on achieving UN Sustainable Development Goals (SDGs)2, such as Good Health and Well-being (SDG3), Clean Water and Sanitation (SDG6), Sustainable Cities and Communities (SDG11), Life Below Water (SDG14), and Life on Land (SDG15). If managed effectively, this surge will reduce resource scarcity3,4, decrease production costs5,6, and minimize energy dependence7. These benefits may be especially appealing to industrial enterprises, as proper waste management has a direct impact on production costs and resource expenses. To highlight the environmental benefits, proper waste management in the industry could reduce municipal solid waste disposal by more than 64% (ref. 8). Advancements on this path should strengthen remanufacturing, which is expected to reach an annual value of up to 90 billion euros by 2030, increasing employment by 65,000 new jobs in the EU alone9. To initiate this change, there is an increasing trend toward tightening the policies that regulate how industrial enterprises should treat their waste to keep technological development sustainable10. However, although LEAN and other modern philosophies of manufacturing management advocate “zero waste” as one of the top priorities in manufacturing-remanufacturing supply chains11, practice has shown that this goal is unlikely to be achieved12. Instead, companies tend to reduce and reuse waste, while at the end of the manufacturing process there remains a portion of raw or processed materials that needs to be sorted for recycling or disposal.

Although the waste sorting industry tends to be automated, in most cases a portion of waste (commonly at the end of the sorting chain) still has to be sorted manually by human operators. These waste sorting tasks and the corresponding workplaces are characterized by a limited number of materials or objects that need to be sorted. This distinguishes industrial waste sorting from the sorting of raw municipal waste, which may require accurate recognition of hundreds of different materials and products. Furthermore, with the expansion of “small batch and customized manufacturing” in developed countries, there is a growing need for flexible production lines that are capable of switching between different products with minimal effort. Considering this trend, this study focuses on the niche of industrial waste sorting, which requires more generic and adaptable solutions than traditional ones designed for fixed production lines or municipal waste.

Automating waste sorting processes with robots has proven its potential to increase productivity, lower expenses, and minimize errors while enhancing the overall efficiency of material use and recycling13,14. A typical robotic waste sorting system is shown in Fig. 1a, and it consists of a pick-and-place solution operating atop a conveyor. The robot is guided by a vision system that includes one or more cameras and an algorithm designed to comprehend image content, determine the item’s location, and execute the picking task. In these terms, the utility of the whole waste sorting system critically depends on the ability of computer vision algorithms to recognize various types of waste and on the robustness of the grippers that need to perform pick-and-place of highly variable objects15.

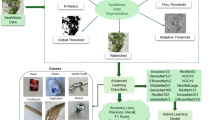

Overview of the proposed procedure for generic waste sorting.

This article primarily focuses on the advancements of robotic vision for waste sorting, a comparative overview of which is given in Table 1. Several challenges justify the need for the generic procedure proposed in this study. First, waste sorting problems may be highly specific and heterogeneous (in terms of the materials considered and the equipment used in Table 1), so computer vision algorithms need to be retrained for each specific industrial application. In terms of approaches, most studies rely on detection, instance segmentation, or machine learning, while a portion of studies combined these approaches to obtain better sorting accuracy. For the targeted waste sorting in small batch and flexible production, this means that each new switch or extension of production will require additional investments in the development and annotation of new datasets, as well as in the training of computer vision algorithms (e.g., segmentation or detection). In contrast to sorting in large municipal waste facilities, which separate waste into a limited number of material types (e.g., metal, wood, plastic, glass, and paper), the further sorting of specific metals (e.g., aluminum, copper, and brass) or specific types of plastic (polypropylene, polyvinyl chloride, polyethylene terephthalate, etc.) represents a challenge from the automation viewpoint. In these terms, the wider application of robotic solutions depends on reducing the costs and complexity of adopting the computer vision module in Fig. 1. This study identifies the segmentation and/or detection steps as a bottleneck, since the manual collection and annotation of waste data require a significant amount of time and investment. By eliminating this step, waste separation could be simplified into one step: the development of deep learning classifiers, which requires only the separation of images of specific objects/materials into corresponding class folders.
Accordingly, this study hypothesizes that the barrier to adopting robotic waste sorting in industrial setups could be significantly reduced by enabling versatile robot picking of unknown waste objects.

Related work

Previous research approached the challenge of waste sorting as a computer vision-based detection task, relying on combining feature engineering with machine learning algorithms, while the most recent studies have adopted various deep learning models and architectures to address this problem. In16, the authors introduced an automatic flexible sorting system capable of handling objects with high variability in shape, position, and class, avoiding stopping production when new items are introduced. It detects new products on the conveyor and generates online labeled real images used to re-train the deep learning network. Chen et al. designed and built a robot prototype for construction waste recycling, achieving real-time navigation, a deep learning-based detection method (under different illumination and spatial density conditions), and a 3D object pickup strategy for the accurate identification and stable grasping of waste items17. Two deep-learning techniques (convolutional neural network (CNN) and Graph-LSTM) that can recognize waste products (six classes) on a belt conveyor are presented18. A vision-based architecture for the effective sorting of parts based on shape and material properties is proposed in19, introducing a novel deep learning multi-modal approach, in which multiple parallel auto-encoders are used to extract spatio-spectral information from the RGB and multi-spectral sensors and project them in a common latent space. YOLO v8 was used to localize waste along the conveyor belt. Furthermore, a Multispectral Mixed Waste Dataset (MMWD) was produced, containing multi-spectral data of seven plastic and wood waste classes. In20, the authors developed a machine-learning procedure for the recognition of construction and demolition waste fragments from RGB images using three classifiers—CNN, gradient boosting (GB) decision trees, and multilayer perceptron leveraging selected feature extraction, enhancing classification speed and accuracy. 
To go beyond single-label waste classification, a multi-task learning architecture is proposed, based on a CNN used to simultaneously identify and locate wastes in images21. An e-waste classification model is developed to classify metallic and non-metallic fractions into broad categories of metal, PCB, plastic, and glass, using a feature vector consisting of mean intensity, standard deviation, and image-sharpness extracted from the thermograms22. Gundupalli et al. reported a system for classifying useful recyclables (e.g., iron, paper, plastic, etc.) from municipal solid waste thermal imaging samples23. A new metaheuristic method with deep transfer learning for the detection and classification of industrial waste was presented24. The model has two key phases—waste object recognition (YOLO-v5 object detector with the Harris Hawks Optimization algorithm) and waste object classification (stacked sparse autoencoder model). In25, the authors developed an optimized hybrid deep learning model (combining CNN and Deep Belief Network) for waste classification that boosted the performance to predict waste and classify it with increased accuracy. Jahanbakhshi et al. addressed the problem of classification of carrots based on various shapes (regular and irregular) to manage and control their waste, using improved CNN based on learning a pooling function by combining average pooling and max pooling26. A deep learning object detection network using X-ray images of the internal structure of waste electric and electronic equipment to separate and sort batteries is presented27. As a comparative summary of previous studies on this topic, an overview of their major differences in high-level approaches, algorithms, considered waste types, and datasets is given in Table 1.

The rest of the paper is organized as follows. The methodology for versatile waste sorting is presented in Sect. 3 “Methods”, followed by the experimental setup and results in Sect. 4 “Experiments and results”. Section 5 “Discussion” provides a discussion of the results and advantages of the proposed pipeline. The paper ends with concluding remarks and future work perspectives in Sect. 6 “Conclusion”.

Methods

In this study, the waste sorting task is split into two sub-tasks: 1) localization of waste objects on a conveyor, and 2) their classification into corresponding classes (Fig. 2). The task of waste object localization is solved using the Segment Anything architecture (SAM), of which five alternatives are considered in this study.

Architectures of deep learning algorithms used for waste sorting. a) Raw image captured from an industrial camera; b) SAM architecture; c) SAM outputs for a single detected object; d) EfficientSAM architecture; e) FasterSAM architecture; f) Cropped waste object; g) MobileNetV2 architecture; h) Sample image of municipal waste; i) SAM output for the considered sample image; j) Cropped waste objects from the considered sample image.

The base SAM is a zero-shot segmentation model developed on 11 million images and 1.1 billion segmentation masks28. Briefly, it is a foundation model that produces accurate segmentation masks from input images for a variety of input prompts. The SAM architecture is composed of three core modules: an image encoder, a prompt encoder, and a mask decoder (Fig. 2b). The image encoder generates one-time image embeddings using a Vision Transformer (ViT-H)30 pretrained with a masked auto-encoder (MAE)29. The prompt encoding modules are designed for efficient encoding of various prompt modes. The prompt is information that indicates what to segment in an image, and it can be foreground/background points, a bounding box, a mask, or text. In this study, we used point prompts, which are represented by positional encodings31 summed with learned embeddings. By combining the image embedding with the prompt encodings, the mask decoder module generates segmentation masks using prompt self-attention and cross-attention32 in two directions (from prompt to image embedding and back). The advantage of the SAM is that the image encoder needs to run only once, after which the model can be prompted multiple times using the same image embeddings (providing masks for new prompts in ~ 50 ms). This characteristic makes the SAM well suited to complex vision tasks that may need to be performed iteratively or in highly variable conditions (e.g., image annotation or perception in unseen environments).

The Fast Segment Anything (FastSAM) is proposed to address the bottleneck of the computationally expensive transformer branch, which limits real-industry SAM applications. This is achieved by decoupling the segment anything task into all-instance segmentation (input size 1024) and subsequent prompt-guided selection of regions of interest33. The first step is done using the YOLOv8-seg34 object detector, which itself contains an instance segmentation branch based on YOLACT35. The FastSAM achieved performance comparable with the original SAM, at 50 × higher run-time speed and using only 2% of the SA-1B Dataset (https://ai.meta.com/datasets/segment-anything) proposed with the original SAM architecture.

The Faster Segment Anything (FasterSAM) is proposed with the primary aim of bringing SAM to mobile devices, which is why it is also termed MobileSAM by its authors36. The MobileSAM study starts from the recommendation of the original SAM paper that the default ViT-H encoder (632 M parameters) could be replaced with a retrained lightweight alternative (e.g., ViT-L with 307 M or ViT-B with 86 M parameters), and aims to reduce the computational resources needed for training to a single GPU. Specifically, 256 A100 GPUs and 68 h are needed to train the ViT-H encoder, and 128 GPUs and multiple days are needed to train its ViT-L and ViT-B replacements, which represents a barrier for the majority of research labs to participate in and contribute to the topic. The reduction is achieved by decoupling the image encoder and the mask decoder: first, the knowledge from the heavy image encoder (ViT-H) is distilled into a lightweight one (ViT-Tiny)37, and then the original mask decoder is fine-tuned to better align with the distilled image encoder. The MobileSAM is 60 × smaller than the original SAM (with comparable performance), and 5 × faster and 7 × smaller than the concurrent FastSAM.

MobileSAMv2, as indicated, represents an improved version of MobileSAM. By adopting the YOLOv8 for efficient detection with bounding boxes, authors replaced the default grid-search point prompts with object-aware box prompts38. Overall, the authors reported a 16 × speed-up in performing the segment anything task while maintaining performance competitive to baseline SAM (3.6% performance boost for zero-shot object proposal on the LVIS dataset).

EfficientSAM extends the original SAM by using SAM-leveraged masked image pretraining (SAMI) to produce pretrained lightweight ViT backbones for the segment anything task39, in combination with the MAE pre-training method29. Briefly, the authors used the SAMI-pretrained lightweight image encoders and a mask decoder to build EfficientSAMs, and fine-tuned the models on SA-1B for the segment anything task. On this task, the EfficientSAMs outperformed MobileSAM and FastSAM by a large margin (~ 4 AP), while having comparable complexity.

As this study focuses on developing the procedure for versatile robot picking of unknown or highly damaged/deformed waste objects, the SAM is used to improve: 1) the development of the object classification dataset, and 2) the localization of waste objects that need to be picked by robots. By using the SAM, data collection and annotation for classification is reduced to the acquisition of a sample video and split of the extracted object images into corresponding class folders. Besides the expected list of classes, we added one more “Unknown” class—where the robot needs to separate unseen waste objects that, at the moment, are not considered for recycling. In this way, unknown waste objects could be segregated from the recycling process or incorporated into the classification model as a separate class afterward.
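The extraction of object images mentioned above can be illustrated with a minimal sketch. It assumes that each SAM instance mask is available as a boolean array with the same height and width as the input image (the automatic mask generator in the public `segment_anything` package returns such an array under the `segmentation` key). The function name `crop_masked_object` and the zero-valued background fill are illustrative choices, not details reported in this study.

```python
import numpy as np


def crop_masked_object(image, mask, fill=0):
    """Cut one object out of an image using its instance mask.

    image: H x W x C array (e.g., an RGB frame from the conveyor camera).
    mask:  H x W boolean array, True on the pixels of the object.
    fill:  value written to background pixels inside the crop (assumed 0).
    Returns the tight crop around the object, or None for an empty mask.
    """
    ys, xs = np.nonzero(mask)
    if ys.size == 0:
        return None  # nothing was segmented

    # Tight bounding box around the mask (end indices are exclusive).
    y0, y1 = ys.min(), ys.max() + 1
    x0, x1 = xs.min(), xs.max() + 1

    # Copy so that the original frame is left untouched.
    crop = image[y0:y1, x0:x1].copy()

    # Remove the background: every pixel outside the mask is overwritten.
    crop[~mask[y0:y1, x0:x1]] = fill
    return crop


if __name__ == "__main__":
    frame = np.full((5, 6, 3), 200, dtype=np.uint8)  # synthetic image
    obj_mask = np.zeros((5, 6), dtype=bool)
    obj_mask[1:3, 2:5] = True                        # synthetic object
    print(crop_masked_object(frame, obj_mask).shape)
```

Each returned crop could then be saved into the folder of its class, which is the only manual step required when building the classification dataset.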

By using the SAM output instance masks (Fig. 2c), each object is cropped, its background is removed, and it is forwarded to the MobileNetV2 classifier (Fig. 2f and 2g). The MobileNetV2 is a compact architecture based on an inverted residual structure, where the input and output of the residual block are thin bottleneck layers, while the intermediate expansion layer uses lightweight depthwise convolutions to filter features as a source of non-linearity40. Besides MobileNetV2, as alternative classification architectures, we also considered VGG1941, Dense-Net42, Squeeze-Net43, Inception-v344, and ResNet45. All classification models were pretrained on the ImageNet dataset46 and fine-tuned using the PyTorch framework. To increase the robustness of the considered models, we performed online augmentation (random rotation ± 30°, random flip, random crop, and Gaussian noise) with a probability of 20%, while the dataset was randomly split into training (70%), validation (15%), and test (15%) subsets. The training was done using the Adam optimization algorithm47, with the cross-entropy loss function and an initial learning rate of 1e-4 (which was decreased by a factor of 0.1 every 7 epochs).
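The data split and the learning-rate schedule described above can be made concrete with a short, framework-free sketch. The helper names `split_dataset` and `step_lr`, the fixed random seed, and the use of integer percentages are assumptions made for illustration; only the 70/15/15 proportions, the initial rate of 1e-4, the factor of 0.1, and the 7-epoch step come from the text. In PyTorch, this kind of decay is commonly obtained with `torch.optim.lr_scheduler.StepLR(optimizer, step_size=7, gamma=0.1)`, although whether that scheduler was used here is not stated.

```python
import random


def split_dataset(items, train_pct=70, val_pct=15, seed=0):
    """Randomly split items into training, validation, and test subsets.

    Percentages are integers so that subset sizes are computed exactly;
    whatever remains after training and validation becomes the test set.
    """
    items = list(items)
    random.Random(seed).shuffle(items)  # seeded for reproducibility
    n_train = len(items) * train_pct // 100
    n_val = len(items) * val_pct // 100
    train = items[:n_train]
    val = items[n_train:n_train + n_val]
    test = items[n_train + n_val:]
    return train, val, test


def step_lr(epoch, base_lr=1e-4, gamma=0.1, step_size=7):
    """Learning rate at a given (0-based) epoch under step decay."""
    return base_lr * gamma ** (epoch // step_size)


if __name__ == "__main__":
    train, val, test = split_dataset(range(2100))  # e.g., the E-waste images
    print(len(train), len(val), len(test))
    for epoch in (0, 6, 7, 14):
        print(epoch, step_lr(epoch))
```

Under this schedule the rate stays at its initial value for epochs 0 to 6 and drops by one order of magnitude at epochs 7, 14, and so on.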

As indicated in Fig. 1, one of the classes is marked as “Unknown”—because in waste sorting it is common to have occurrences of unexpected materials. Moreover, the Unknown class is used to enable the extension of the dataset and corresponding classifier once the frequency of its appearance exceeds a certain limit. At that moment, there will already be a sufficient number of unsorted objects—which can instantly be used for new training. The workflow of this continuous improvement is illustrated in the pseudo-code shown in Table 2.
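As a rough illustration of this continuous improvement loop, the sketch below collects objects classified as “Unknown” and signals when they have become frequent enough to justify relabeling and fine-tuning. It is not the pseudo-code of Table 2: the class name `UnknownClassMonitor`, the ratio-based trigger, and the default thresholds are hypothetical choices, since the text only states that retraining follows once the frequency exceeds a certain limit.

```python
class UnknownClassMonitor:
    """Tracks how often the classifier outputs the "Unknown" class."""

    def __init__(self, max_unknown_ratio=0.10, min_unknown_samples=50):
        # Both thresholds are assumed values, to be tuned per use case.
        self.max_unknown_ratio = max_unknown_ratio
        self.min_unknown_samples = min_unknown_samples
        self.total_seen = 0
        self.unknown_crops = []

    def record(self, predicted_class, crop_id):
        """Register one sorted object; keep a reference to unknown ones."""
        self.total_seen += 1
        if predicted_class == "Unknown":
            self.unknown_crops.append(crop_id)

    def retraining_due(self):
        """True when unknown objects are both numerous and frequent."""
        n_unknown = len(self.unknown_crops)
        if n_unknown < self.min_unknown_samples:
            return False
        return n_unknown / self.total_seen > self.max_unknown_ratio

    def release_for_labeling(self):
        """Hand the stored crops to an operator and reset the counters."""
        batch, self.unknown_crops = self.unknown_crops, []
        self.total_seen = 0
        return batch


if __name__ == "__main__":
    monitor = UnknownClassMonitor(max_unknown_ratio=0.25, min_unknown_samples=2)
    stream = [("Plastic", "img_001"), ("Unknown", "img_002"), ("Unknown", "img_003")]
    for label, crop_id in stream:
        monitor.record(label, crop_id)
    if monitor.retraining_due():
        # An operator would sort these crops into new class folders
        # before the classifier is fine-tuned again.
        print(monitor.release_for_labeling())
```

Keeping references to the stored crops means that, when the trigger fires, the images needed for the new class are already available and only have to be sorted into folders.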

Experiments and results

All the implementations were done by using the Python programming language, along with the PyTorch library. All the computations were performed on the Lambda workstation with the AMD Threadripper 3970X (32 cores, 3.79 GHz processor), 128 GB RAM, and two Titan RTX (24 GB) + NVLink GPUs.

We gratefully acknowledge the contributions of the following public data sources, which were used for developing our datasets and the corresponding figure in our manuscript: ZeroWaste-f48, RoboFlow Waste Conveyor49, WaRP-D50, ANTLab51, Kaggle Waste Materials classification Data dataset52, and FloW53 dataset.

To assess the proposed procedure, we developed four datasets corresponding to different use cases: a) Floating waste, b) Municipal waste, c) E-waste, and d) Smart bin waste. The Municipal waste dataset (1500 images) was developed by combining three public datasets. We randomly selected 1000 out of 4503 images from the ZeroWaste-f dataset (containing cardboard, soft plastic, rigid plastic, and metal)48, 300 out of 1518 images from the RoboFlow Waste Conveyor dataset (Purdue University) containing cardboard, glass, metal, paper, and plastic49, and 200 out of 522 images from the WaRP-D dataset containing various types of bottles (glass, plastic), cardboard, and canisters (plastic, cans)50. From each image, we extracted objects using the SAM algorithm; these were then manually sorted into four classes corresponding to the following materials: plastic, paper, metal, and glass (the remaining materials were assigned to the “Unknown” class). The E-waste dataset (2100 images) was developed using images collected by the authors at an e-recycling company in Serbia (Fig. 3). The company operators manually sorted and provided 700 pieces (of varying sizes) of each material, which were then imaged and manually split into three classes (aluminum, copper, and brass). The Smart Bins dataset (6587 images) was developed by combining two public datasets. We randomly selected 4600 out of 5199 images from the ANTLab (Politecnico di Milano) Smart Waste Bin dataset containing glass, metal, paper, and plastic objects (the remaining images were grouped into the “Unknown” class)51, and 1987 images from the Kaggle Waste Materials classification Data dataset52. The Floating waste dataset (1600 images) was developed by combining two public datasets. From the FloW dataset53 and the RoboFlow floating waste dataset54, we extracted 1000 images containing plastic bottles and 600 images containing objects classified as “Unknown”.
The metrics selected for the evaluation and comparison of the developed models were: \(\mathrm{Accuracy}=\frac{T_p+T_n}{T_p+F_p+T_n+F_n}\), \(\mathrm{Precision}=\frac{T_p}{T_p+F_p}\), \(\mathrm{Recall}=\frac{T_p}{T_p+F_n}\), and \(\mathrm{F1\ score}=2\,\frac{\mathrm{Recall}\cdot\mathrm{Precision}}{\mathrm{Recall}+\mathrm{Precision}}\), where \(T_p\) are true positive, \(T_n\) true negative, \(F_p\) false positive, and \(F_n\) false negative classifications. The obtained results are given in Table 3. In Table 4, we compare the proposed pipeline with existing object detection (using the YOLOv11 algorithm55) and instance segmentation (using the MaskRCNN algorithm56) approaches. The classification accuracy metrics shown in Table 4 were obtained through manual assessment of the YOLOv11 and MaskRCNN output objects and classes by human experts.
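For reference, the four metrics defined above can be computed directly from lists of true and predicted labels. The sketch below treats one class at a time as the positive class (one-vs-rest), which is an assumption about how the binary definitions were applied to the multi-class datasets; the function names and the convention of returning 0.0 for an empty denominator are likewise illustrative.

```python
def confusion_counts(y_true, y_pred, positive):
    """Count Tp, Fp, Tn, Fn for one class treated as the positive class."""
    tp = fp = tn = fn = 0
    for truth, pred in zip(y_true, y_pred):
        if pred == positive and truth == positive:
            tp += 1
        elif pred == positive:
            fp += 1
        elif truth == positive:
            fn += 1
        else:
            tn += 1
    return tp, fp, tn, fn


def classification_metrics(tp, fp, tn, fn):
    """Accuracy, precision, recall, and F1 score from the four counts."""
    total = tp + fp + tn + fn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = recall + precision
    f1 = 2 * recall * precision / denom if denom else 0.0
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


if __name__ == "__main__":
    # Toy labels for illustration only; these are not results from the study.
    y_true = ["plastic", "plastic", "metal", "glass", "metal", "plastic"]
    y_pred = ["plastic", "metal", "metal", "glass", "metal", "plastic"]
    for cls in ("plastic", "metal", "glass"):
        print(cls, classification_metrics(*confusion_counts(y_true, y_pred, cls)))
```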

Considered use cases from the waste sorting industry.

Discussion

In terms of scene complexity and variation in object appearance, the E-waste dataset is the most well-defined. It contains only three materials/classes, imaged on the conveyor in laboratory conditions, which resulted in the highest accuracy (97%). We emphasize that this dataset is the most relevant representation of the waste classification considered in small batch manufacturing companies. The other three datasets were created from public datasets. Although images were randomly selected, and waste object extraction from images was done automatically using the SAM, we preferred to exclude noisy or low-quality images from our datasets. The obtained accuracies were between 86 and 97% in all three cases. For the Smart bin waste, the limited number of considered waste objects (glass, metal, paper, and plastic) makes it similar to the E-waste dataset in terms of complexity and performance. Municipal waste may be considered the most complex and heterogeneous in terms of object appearance. The complexity also comes from the fact that many objects contain multiple materials (e.g., plastic bottles have paper labels). In these cases, the accuracy of the classifier is determined by the training strategy and by how the corresponding dataset is developed. We emphasize that the lowest performance was obtained on the Floating waste dataset, which may be explained by the fact that images were captured from different and sub-optimal viewpoints, ranging from overhead to horizontal (while objects were far away and only partially visible above the water) (Fig. 4).

Comparison of SAM and FastSAM outputs for different waste types.

As mentioned in the introduction, in contrast to municipal waste sorting, which has undetermined variations in the number and appearance of object classes, in the manufacturing industry there is commonly a known, limited set of materials/objects to be classified. Even in the waste sorting industry, at the end of the sorting chain (e.g., after sorting of materials into metal, wood, plastic, etc.), there is a need to perform final sorting into a certain number of classes (e.g., aluminum, copper, and steel). On the one hand, this significantly simplifies waste sorting, while on the other hand, recognition of various types of materials requires constant retraining of the algorithms listed in Table 1. As indicated in48, popular detection, segmentation, and instance segmentation algorithms (such as Mask-RCNN, TridentNet, and DeepLabV3) struggle to generalize on in-the-wild waste datasets. This is reasonable, as at the deployment stage they will be constantly exposed to unseen objects, since waste objects may be highly damaged and unrecognizable compared to the reference ones used at the training stage. As a solution to this challenge, some studies used blob detection of foreground objects (on the moving conveyor)16. However, this approach fails to separate overlapping objects (e.g., municipal waste in Fig. 3), which is the fundamental problem in waste separation. Our experiments indicate that the SAM fits this challenge well, especially in manufacturing industry-related problems (e.g., E-waste in Fig. 3). The authors of18 developed a real-time deep learning model combining CNN and Graph-LSTM for solid waste class detection and prediction, achieving high accuracy in real-world situations. Although generic, the procedure for simultaneous localization and recognition of waste types proposed in21 requires object separation in images: due to multi-label classification, each image in the dataset must have bounding box annotations and multiple labels.
The two-stage methods that combine detection and classification imply that both detection and classification modules need to be retrained in case of a new object or material class24,27. This increases the complexity of the entire process due to the necessity of additional annotation, which is intricate and time-consuming. Finally, when it comes to approaches that use only classification for computer vision-based waste sorting, they fall short in distinguishing waste objects in cases of multiple overlapping objects in a scene25,26.

Compared to the traditional approaches (object detection, object segmentation, and instance segmentation), the advantage of using the SAM + MobileNetV2 pipeline in waste sorting is two-fold. Besides easing object detection and separation, it is also well suited to speeding up data preparation during the development of image classifiers. We report these two features as the key advantages over the conventional waste sorting pipelines listed in Table 1 and Table 4. Specifically, the results of our experiments in Table 4 indicate that the proposed approach slightly outperforms conventional object detection and instance segmentation algorithms in terms of accuracy. However, we underline that retraining existing artificial intelligence systems requires significant investments in developing new datasets and very little effort in re-running existing code for model fine-tuning. Considering that, and the specificity of small batch production systems, the major contribution of the proposed pipeline is a significant reduction of the costs and complexity of developing or switching to new waste sorting use cases. In our experiments, the development of novel datasets took only a few hours and required no annotation tools. This is an important distinction from the previous approaches, which required the time-consuming development of datasets for object detection and segmentation. To ease this process and the continuous improvement of the system, we recommend incorporating one extra (“Unknown”) class, which enables the system to separate unseen waste objects that at the moment are not considered for recycling or could not be accurately classified. In this way, unknown waste objects could be segregated from recycling or forwarded to human operators for manual sorting.
At the same time, if the frequency of a specific unseen class increases above some threshold, its images could be incorporated into the fine-tuning of the classification model as a separate class. This feature is useful in situations where the occurrence of waste material varies over time, or where recycling rules may change due to some disruption in supply chain or regulation policies.

In summary, while the described procedure is developed to be generic and has notable advantages, it is important to be aware of its potential limitations. First, the validation of the proposed procedure is limited to specific applications focused on waste sorting in the manufacturing industry. Moreover, this study is focused on a specific niche, defined as “waste sorting in small batch and flexible manufacturing”. Considering the results obtained in the four use cases, the performance of the procedure decreases as the complexity and diversity of the datasets increase. This is related to the abilities of the SAM itself, as it is trained on 11 million natural images, of which only a negligible portion were waste images. Accordingly, future research may be directed toward fine-tuning the SAM to better separate municipal waste objects.

At this stage of development, we conclude that the proposed procedure, which combines SAM with the MobileNetV2 classifier, is suitable for the considered small batch manufacturing waste sorting, as confirmed on the e-recycling and smart bin waste datasets. Implementing effective waste management practices in small batch manufacturing enterprises will contribute to local and global sustainability efforts. Locally, the proposed solution will increase resource efficiency and reduce the costs associated with the disposal of manufacturing byproducts. Furthermore, an automated waste sorting procedure will reduce the risk of injury by reallocating human operators to other production sectors, increasing overall enterprise productivity. As a result, this will enhance the circular economy within micro, small, and medium-sized enterprises, whose liquidity is considered critical to the creation of sustainable societies. Globally, these advances will positively impact shifts in megatrends, such as rapid urbanization, emerging markets, climate change, and resource depletion.

Conclusion

Waste generation is an inevitable byproduct of manufacturing processes that presents a substantial challenge to environmental well-being. Recent advancements in robotic waste handling offer promising solutions to this problem, especially in the context of small batch and customized manufacturing or disassembly. In this study, we proposed a generic deep learning-based approach for versatile waste sorting and evaluated it on four different use cases: floating waste, municipal waste, e-waste, and smart bins. The experimental results showed that the proposed fusion of SAM and the MobileNetV2 classifier provides a series of advantages over previous studies based on conventional object detection and object segmentation algorithms. Specifically, our generic procedure reduces adaptation to one step: the development of a deep learning classifier, which requires only sorting images of specific objects/materials into corresponding class folders (in contrast to the time-consuming annotation of bounding boxes and semantic masks). The obtained sorting accuracy ranged from 86% on the heterogeneous floating waste dataset to 97% on the e-waste dataset, which was developed in this study as a representative use case of industrial waste sorting. We conclude that the proposed approach could be used to facilitate automation in the waste industry, increase productivity, lower expenses, and minimize errors in the robotic sorting of industrial waste57.
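
The class-folder data preparation mentioned above can be illustrated with a short Python sketch. It is an assumption-laden example rather than the code used in this study: the function name `index_class_folders`, the accepted file suffixes, and the folder names in the usage note are hypothetical. It assumes that each extracted object image has already been moved into a subfolder named after its class, which is the same layout expected by common loaders such as torchvision's `ImageFolder`.

```python
from pathlib import Path

# Assumed set of image extensions; extend as needed for a given camera setup.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".bmp"}


def index_class_folders(root):
    """Build a labelled sample list from a root folder with one subfolder per class.

    Returns (class_names, samples), where samples is a list of
    (image_path, class_index) pairs and class_index refers to class_names.
    """
    root = Path(root)
    # Class names are the subfolder names, sorted for a stable index mapping.
    class_names = sorted(p.name for p in root.iterdir() if p.is_dir())
    class_to_index = {name: i for i, name in enumerate(class_names)}

    samples = []
    for name in class_names:
        for path in sorted((root / name).rglob("*")):
            # Skip non-image files such as notes or thumbnails databases.
            if path.is_file() and path.suffix.lower() in IMAGE_SUFFIXES:
                samples.append((path, class_to_index[name]))
    return class_names, samples
```

For a hypothetical root folder containing the subfolders `cable`, `pcb`, and `Unknown`, the function returns the sorted class names together with one labelled entry per image, with no bounding boxes or masks involved.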

Data availability

The data that support the findings of this study are contained in the following public datasets: ZeroWaste-f dataset 49, RoboFlow Waste conveyor dataset (Purdue University) 50, WaRP-D dataset 51, Smart Waste Bin dataset 52, Kaggle Waste Materials classification Data dataset 53, FloW dataset 54, and RoboFlow floating waste dataset 55. Their terms of use and availability are defined by the original dataset authors. The portion of the data collected by the authors of this study is not publicly available and cannot be distributed as per agreement.

References

Wankhede, P. & Wanjari, M. Health issues and impact of waste on municipal waste handlers: A review. J. Pharm. Res. Int. 33, 577–581 (2021).

Sachs, J. D., Kroll, C., Lafortune, G., Fuller, G. & Woelm, F. Sustainable Development Report 2022. (Cambridge University Press, 2022).

Velenturf, A. P. M. & Purnell, P. Resource recovery from waste: Restoring the balance between resource scarcity and waste overload. Sustainability https://doi.org/10.3390/su9091603 (2017).

Siqueira, M. U. et al. Brazilian agro-industrial wastes as potential textile and other raw materials: A sustainable approach. Mater. Circ. Econ. 4, 9 (2022).

Horton, P., Allwood, J., Cassell, P., Edwards, C. & Tautscher, A. Material demand reduction and closed-loop recycling automotive aluminium. MRS. Adv. 3, 1393–1398 (2018).

Madhusudanan, S. & Amirtham, L. R. Optimization of construction cost using industrial wastes in alternative building material for walls. Key. Eng. Mater. 692, 1–8 (2016).

Olives, R., Ribeiro, E. & Py, X. Materials for the energy transition: Importance of recycling. In MATEC Web of Conferences vol. 379 (EDP Sciences, 2023).

European Environment Agency. Waste generation in Europe. https://www.eea.europa.eu/en/analysis/indicators/waste-generation-and-decoupling-in-europe#footnote-UHIHGTKG.

Parker, D. et al. Remanufacturing market study. (2015).

Silva, A., Rosano, M., Stocker, L. & Gorissen, L. From waste to sustainable materials management: Three case studies of the transition journey. Waste Manag. 61, 547–557 (2017).

Agyabeng-Mensah, Y., Tang, L., Afum, E., Baah, C. & Dacosta, E. Organisational identity and circular economy: Are inter and intra organisational learning, lean management and zero waste practices worth pursuing?. Sustain. Prod. Consum. 28, 648–662 (2021).

Quicker, P., Consonni, S. & Grosso, M. The zero waste utopia and the role of waste-to-energy. Waste Manag. Res. 38, 481–484 (2020).

Sarc, R. et al. Digitalisation and intelligent robotics in value chain of circular economy oriented waste management – A review. Waste Manag. 95, 476–492 (2019).

Nižetić, S., Djilali, N., Papadopoulos, A. & Rodrigues, J. J. P. C. Smart technologies for promotion of energy efficiency, utilization of sustainable resources and waste management. J. Clean. Prod. 231, 565–591 (2019).

Lu, W. & Chen, J. Computer vision for solid waste sorting: A critical review of academic research. Waste Manag. 142, 29–43 (2022).

Da Rold, A., Furiato, M., Zaki, A. M. A., Carnevale, M. & Giberti, H. Deep learning-based robotic sorter for flexible production. Proced. Comput. Sci. 217, 1579–1588 (2023).

Chen, X., Huang, H., Liu, Y., Li, J. & Liu, M. Robot for automatic waste sorting on construction sites. Autom. Constr. 141, 104387 (2022).

Li, N. & Chen, Y. Municipal solid waste classification and real-time detection using deep learning methods. Urban Clim. 49, 101462 (2023).

Konstantinidis, F. K. et al. Multi-modal sorting in plastic and wood waste streams. Resour. Conserv. Recycl. 199, 107244 (2023).

Nežerka, V., Zbíral, T. & Trejbal, J. Machine-learning-assisted classification of construction and demolition waste fragments using computer vision: Convolution versus extraction of selected features. Expert. Syst. Appl. 238, 121568 (2024).

Liang, S. & Gu, Y. A deep convolutional neural network to simultaneously localize and recognize waste types in images. Waste Manag. 126, 247–257 (2021).

Gundupalli, S. P., Hait, S. & Thakur, A. Classification of metallic and non-metallic fractions of e-waste using thermal imaging-based technique. Process. Saf. Environ. Prot. 118, 32–39 (2018).

Gundupalli, S. P., Hait, S. & Thakur, A. Multi-material classification of dry recyclables from municipal solid waste based on thermal imaging. Waste Manag. 70, 13–21 (2017).

Neelakandan, S. et al. Metaheuristics with Deep Transfer Learning Enabled Detection and classification model for industrial waste management. Chemosphere 308, 136046 (2022).

Zhang, H., Cao, H., Zhou, Y., Gu, C. & Li, D. Hybrid deep learning model for accurate classification of solid waste in the society. Urban Clim. 49, 101485 (2023).

Jahanbakhshi, A., Momeny, M., Mahmoudi, M. & Radeva, P. Waste management using an automatic sorting system for carrot fruit based on image processing technique and improved deep neural networks. Energy Rep. 7, 5248–5256 (2021).

Sterkens, W., Diaz-Romero, D., Goedemé, T., Dewulf, W. & Peeters, J. R. Detection and recognition of batteries on X-Ray images of waste electrical and electronic equipment using deep learning. Resour. Conserv. Recycl. 168, 105246 (2021).

Kirillov, A. et al. Segment anything. arXiv preprint arXiv:2304.02643 (2023).

He, K. et al. Masked autoencoders are scalable vision learners. In Proc. of the IEEE/CVF conference on computer vision and pattern recognition 16000–16009 (2022).

Dosovitskiy, A. et al. An image is worth 16x16 words: Transformers for image recognition at scale. arXiv preprint arXiv:2010.11929 (2020).

Tancik, M. et al. Fourier features let networks learn high frequency functions in low dimensional domains. Adv. Neural. Inf. Process. Syst. 33, 7537–7547 (2020).

Cao, F. & Lu, X. Self-attention technology in image segmentation. In International Conference on Intelligent Traffic Systems and Smart City (ITSSC 2021) vol. 12165 271–276 (SPIE, 2022).

Zhao, X. et al. Fast segment anything. arXiv preprint arXiv:2306.12156 (2023).

Jocher, G. YOLOv5 by Ultralytics. 2020. URL https://github.com/ultralytics/yolov5 (2023).

Bolya, D., Zhou, C., Xiao, F. & Lee, Y. J. Yolact: Real-time instance segmentation. In Proc. of the IEEE/CVF international conference on computer vision 9157–9166 (2019).

Zhang, C. et al. Faster segment anything: Towards lightweight sam for mobile applications. arXiv preprint arXiv:2306.14289 (2023).

Hinton, G. Distilling the Knowledge in a Neural Network. arXiv preprint arXiv:1503.02531 (2015).

Zhang, C. et al. Mobilesamv2: Faster segment anything to everything. arXiv preprint arXiv:2312.09579 (2023).

Xiong, Y. et al. Efficientsam: Leveraged masked image pretraining for efficient segment anything. In Proc. of the IEEE/CVF Conference on Computer Vision and Pattern Recognition 16111–16121 (2024).

Sandler, M., Howard, A., Zhu, M., Zhmoginov, A. & Chen, L.-C. Mobilenetv2: Inverted residuals and linear bottlenecks. In Proc. of the IEEE conference on computer vision and pattern recognition 4510–4520 (2018).

Simonyan, K. & Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv preprint arXiv:1409.1556 (2014).

Huang, G., Liu, Z., Van Der Maaten, L. & Weinberger, K. Q. Densely connected convolutional networks. In Proc. of the IEEE conference on computer vision and pattern recognition 4700–4708 (2017).

Iandola, F. N. et al. SqueezeNet: AlexNet-level accuracy with 50x fewer parameters and <0.5 MB model size. arXiv preprint arXiv:1602.07360 (2016).

Szegedy, C., Vanhoucke, V., Ioffe, S., Shlens, J. & Wojna, Z. Rethinking the inception architecture for computer vision. In Proc. of the IEEE conference on computer vision and pattern recognition 2818–2826 (2016).

He, K., Zhang, X., Ren, S. & Sun, J. Deep residual learning for image recognition. In Proc. of the IEEE conference on computer vision and pattern recognition 770–778 (2016).

Russakovsky, O. et al. Imagenet large scale visual recognition challenge. Int. J. Comput. Vis. 115, 211–252 (2015).

Kingma, D. P. & Ba, J. Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980 (2014).

Bashkirova, D. et al. ZeroWaste dataset: towards deformable object segmentation in cluttered scenes. In Proc. of the IEEE/CVF Conference on Computer Vision and Pattern Recognition 21147–21157 (2022).

Purdue University. Waste conveyor dataset. Roboflow Universe https://universe.roboflow.com/purdue-university-ojtm4/waste-conveyor (2023).

Yudin, D. et al. Hierarchical waste detection with weakly supervised segmentation in images from recycling plants. Eng. Appl. Artif. Intell. 128, 107542 (2024).

Longo, E. et al. Take the trash out... to the edge. Creating a Smart Waste Bin based on 5G Multi-access Edge Computing. In Proc. of the Conference on Information Technology for Social Good 55–60 (2021).

Isaac Ritharson. Waste Materials classification Data. Preprint at https://doi.org/10.34740/KAGGLE/DSV/6288406 (2023).

Cheng, Y. et al. Flow: A dataset and benchmark for floating waste detection in inland waters. In Proc. of the IEEE/CVF International Conference on Computer Vision 10953–10962 (2021).

Roboflow. floating waste dataset FINAL COLOR Image Dataset. https://universe.roboflow.com/bannari-mman-institute-of-technology/floating-waste-dataset-final-color/dataset/1.

Khanam, R. & Hussain, M. Yolov11: An overview of the key architectural enhancements. arXiv preprint arXiv:2410.17725 (2024).

He, K., Gkioxari, G., Dollár, P. & Girshick, R. Mask r-cnn. In Proc. of the IEEE international conference on computer vision 2961–2969 (2017).

Nišić, D., Lukić, B., Gordić, Z., Pantelić, U. & Vukićević, A. E-waste management in Serbia, focusing on the possibility of applying automated separation using robots. Appl. Sci. 14, 5685 (2024).

Acknowledgements

This research was supported by the Science Fund of the Republic of Serbia, #6784, Modular and versatile collaborative intelligent waste management robotic system for circular economy – CircuBot.

Funding

This research was supported by the Science Fund of the Republic of Serbia, #6784, Modular and versatile collaborative intelligent waste management robotic system for circular economy – CircuBot.

Contributions

Conceptualization: A.M.V., M.P., N.K., and K.J.; Data curation: A.M.V. and M.P.; Formal analysis: A.M.V.; Funding acquisition: A.M.V. and K.J.; Investigation: A.M.V., M.P., and N.J.; Methodology: A.M.V., M.P., N.K., and K.J.; Project administration: A.M.V., M.D., and K.J.; Resources: A.M.V., M.D., and K.J.; Software: A.M.V.; Supervision: A.M.V. and K.J.; Validation: A.M.V., M.P., and N.K.; Visualization: A.M.V.; Writing (original draft): A.M.V., M.P., and N.J.; Writing (review and editing): A.M.V., M.P., A.N., N.K., N.J., and K.J.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Vukicevic, A.M., Petrovic, M., Jurisevic, N. et al. Versatile waste sorting in small batch and flexible manufacturing industries using deep learning techniques. Sci Rep 15, 3756 (2025). https://doi.org/10.1038/s41598-025-87226-x
