Abstract

With the development of deep learning, the potential for its use in remaining useful life (RUL) has substantially increased in recent years due to the powerful data processing capabilities. However, the relationships and interdependencies of operation parameters in non-Euclidean space are ignored utilizing the current deep learning-based methods during the degradation process for engine. To address this challenge, an improved sand cat swarm optimization-assisted Graph SAmple and aggregate and gate recurrent unit (ISCSO-GraphSage-GRU) is proposed to achieve RUL prediction in this work. Firstly, the maximum information coefficient (MIC) is utilized for describing the interdependent relations of measured parameters. Building on this foundation, the constructed graph data is used as input to GraphSage-GRU so as to overcoming the shortcomings of existing deep learning methods. Additionally, this work proposed an improved sand cat swarm optimization (ISCSO) to improve the predicted performance of GraphSage-GRU, including tent mapping in population initialization and a novel adaptive approach enhance the exploration and exploitation of sand cat swarm optimization. The CMAPSS dataset is used to validate the effectiveness and advancedness of ISCSO-GraphSage-GRU, and the experimental results show that the R2 of the ISCSO-GraphSage-GRU is greater than 0.99, RMSE is less than 6.

Similar content being viewed by others

Introduction

Benefiting from the development of sensor technology, prediction and health management (PHM) techniques for complex systems (i.e. aero-engines) use the collected measured data as solution to improve the reliability and intelligence1. In aero engines, it consists of turbines, fuel/oxygen pumps, etc., are operating in complex and variable environments, which leads to complex degradation patterns. Therefore, it is essential to provide health monitoring state during operation process, based on which a prediction of the remaining useful life (RUL) can be realized, which will ensure the normal operation of the engine2. Currently, the RUL prediction for aero engines can be categorized into model-based and data-driven approaches. For the model-based method, it is designed to achieve the RUL prediction by constructing an accurate simulation model based on the physical and mathematical mechanism from engine. However, it is difficult to construct an accurate physical model for complex systems due to the diversity of measured data3. In recent years, the data-driven RUL prediction approach have shown a lot of promise. Some shallow machine learning such as random forest4, extreme learning machine5, gradient boosting decision tree6, etc. and some deep learning methods like convolutional neural network (CNN)7, recurrent neural network (RNN), have been successfully applied to the RUL prediction. Notably, the shallow machine learning methods rely heavily on human-defined features, which may obtain an invalid predicted results8. In constant, it is possible for deep learning methods to achieve adaptive feature extraction, which avoids the influence of human experience9,10,11. Among the deep learning methods, the historical information and current information can be used by RNN due to the particular network structure. Gated recurrent unit (GRU), a variation of RNN, is better at handling long sequences by incorporating several gates to control the memories in the model12. For example, Zhang et al.13 used GRU to obtain implicit degradation information from sensors based on domain knowledge and achieved accurate RUL prediction. Zhou et al.14 proposed an improved GRU to mitigate the forgetting rate, which is applied in RUL prediction. These studies have shown that the GRU have shown a lot of promise for RUL prediction.

Although it is possible for GRU to realize the RUL prediction of the engine, the co-dependence between the degradation data in the non-Euclidean space is ignored. Specifically, GRU exploit the potential relationships among different operation parameters of engines in a predefined order, ignoring the arbitrary interdependencies between data or various physical measurements of multiple sensors15. Recently, graph neural networks (GNNs) have shown potential in the field of aero-engine RUL prediction task16. This kind of methods utilize the propagation method on all nodes overlooking the order of the nodes and update the weights of aggregated neighborhood nodes, Providing the possibility for exploring arbitrary interdependencies between data or various physical measurements of multiple sensors in engines17. Indeed, GNNs have been successfully utilized in traffic fields, materials, etc. For example, Kong et al.18 verified the predicted performance of GNN with real traffic data. Reiser et al.19 introduced and summarized a roadmap for the potential and application of graph neural networks in the field of chemistry and materials. In20, GNNs were introduced to analysis the operation relationship of bearings by Yang et al. However, GNNs have some drawbacks such as high computational complexity and memory consumption, leading to limitations in computational efficiency and operational memory when dealing with engine degradation data21. As a variant of GNN, the computational efficiency and over smoothing problem of which is mitigated with GraphSAGE by sampling and aggregating neighbouring nodes. In22, GraphSAGE was used to achieve the traffic prediction so that both the dynamic spatial and temporal dependencies could be captured. Chen et al.23 used air-conditioning operation data and GraphSAGE to build a prediction model for air-conditioning energy consumption, and accurate energy consumption prediction was achieved. Zhu et al.24 used GraphSAGE to achieve the bearing fault diagnosis, which indicated that GraphSAGE have potential and application prospects intelligent diagnostic field. These studies show that GraphSAGE will hold great promise to pave the way on prognostics for the engineering data cases. However, GraphSAGE has rarely used for RUL prediction except for few results on engine.

In view of the neural network, the hyperparameters such as learning rate, number of neurons, etc. are the essential factors affecting the constructed RUL prediction model17. Traditional hyperparameter tuning methods depend on manual experience, leading to problems such as falling into local optimums, and inefficiency. In recent years, some swarm intelligence optimization algorithms have been introduced to alleviate these problems, such as genetic algorithm (GA)26, alpine skiing optimization algorithm (ASO)27, grey wolf optimization algorithm (GWO)28, Artificial Bee Colony (ABC)29, greylag goose optimization (GGO)30, puma optimizer (PO)31, football optimization algorithm (FbOA)32, liver cancer algorithm (LCA)33, parrot optimizer (PO)34, artemisinin optimization (AO)35, polar lights optimization (PLO)36, rime optimization algorithm (RIME)37 and so on. Although the hyperparameters of neural networks can be optimized by these optimization algorithms, local optimum, premature maturity, etc., can be caused. Inspired by these problems, the improvement of traditional optimization algorithms has been a hot research topic. As one of the state-of-the-art swarm intelligence optimization algorithms, sand cat swarm optimization algorithm (SCSO) is not only simple and easy to understand but also efficient. In constant to other algorithms, it controls the transitions in the exploration and exploitation phases in a balanced manner and performed well in finding good solutions with fewer parameters and operations for the hyperparameters of neural networks. There are many studies have demonstrated the effectiveness of SCSO for solving optimization problems38,39,40. Similar to other algorithms, sand cat swarm optimization algorithm (SCSO) faces a several challenges such as unhomogeneous random initialization, poor level of post-development, etc41. Hence, it is necessary to make some improvements in SCSO to improve the global search capabilities in the during the hyperparametric search process.

To alleviate the above dilemmas, an improved RUL prediction method is proposed in this paper. The proposed method has the following three major contributions:

-

1.

The maximum information coefficient (MIC) is introduced to the relations among measured parameters. Building on this foundation, GraphSAGE-GRU is proposed to capture the degradation information for engine to construct an accurate RUL prediction model.

-

2.

An improved sand cat swarm optimization algorithm (ISCSO) is proposed, which includes tent mapping in population initialization and a novel adaptive approach enhance the exploration and exploitation of sand cat swarm optimization. These are used to alleviate the problems such as unhomogeneous random initialization, poor level of post-development during the hyperparameter searching process.

-

3.

A novel RUL prediction method based on the developed GraphSAGE-GRU and ISCSO is proposed for solving the problem of acquisition of degradation information from euclidean and non-euclidean spaces. The experiments show that it has a better predictive ability.

Four sections follow this introduction. The related theories are described in Preliminary section. The proposed RUL prediction model and the ISCSO are presented in Proposed method section. The CMAPSS dataset is utilized to verify the effectiveness of the proposed algorithm in Results and discussion section. Some conclusions and future work are given in “Conclusion” section.

Preliminary

GraphSAGE

Traditional neural networks can only handle regular relations in Euclidean space, GraphSAGE is able to provide additional relationships and data interdependencies42. In the GraphSAGE, the computational efficiency and memory problems have been improved compared to GCN. Specifically, it is constructed by sampling the neighboring nodes of the graph data in the degradation samples to generate embedded representations of the nodes. In this way, the representation of the engine’s degradation information in non-Euclidean space can be realized. Similar to graph neural networks, the constructed degradation sample can be represented as:

where V and E denote the nodes set and edge in graph samples. A is the adjacency matrix, which is utilized to represent the weights of any two nodes.

Then, the constructed graph data are fed into the GraphSAGE to. Firstly, GraphSAGE can realize fixed-size sampling for the neighboring nodes of each graph node. On this basis, the aggregation function (e.g. mean, sum, LSTM, etc.) is used to realize the representation in the next layer from previous layer. The advantages of this is that the Computational efficiency and generalization of GCN are improved. The aggregation process is shown in Fig. 1.

where \(h_{a}^{{(l)}}\) and \(h_{a}^{{(l - 1)}}\) represent the relation representation results of a at l layer and l-1 layer, respectively.\(aggregate(\cdot )\) denotes the aggregation function, which aims at concatenating the target node with the neighboring nodes at the l-1 layer.\(\sigma (\cdot )\) denotes the activation function.\(N(a)\) is the set of all nodes. W and \({W^k}\) are the learnable matrix. \(h_{{a1}}^{l}\) indicates the aggregation results. \(concat(\cdot )\) is recognized as the concatenate function.

The process of graphSAGE.

Gated recurrent unit (GRU)

Considering that the degradation information from the engine can be recognized as time series data, which will show cyclical and trending patterns during the degradation process, thus, time relationship is an essential feature need to be considered. Although recurrent neural network (RNN) can be utilized to obtain the degenerate hidden relationships, it is not be directly employed due to the gradients vanishing as a variant of RNN, gated recurrent unit (GRU) is an improved network to alleviate the problem of gradients vanishing in traditional RNN. Compared to long short-term memory networks (LSTM)43, GRU has a simpler structure and faster convergence speed, which can significantly improve the training efficiency. Its structure is shown in Fig. 2. From this figure, it can be seen that the GRU includes reset gates and update gates to control the flow and memorization of historical information. the update gate determines how much historical information is introduced in the current state. The reset gate controls the current input information based on the historical state. Namely, the larger the value of the reset gate is, the more information is added into the current state. For a single GRU structure, the output hidden state of the GRU at moment t can be represented as.

where \({h_{t - 1}}\), \({h_t}\), and \(\widetilde {{{h_t}}}\)represent the hidden state at previous moment, hidden state at current moment and Intermediate hidden state, respectively. \({W_z}\), \({W_r}\) and \({W_h}\) are the learnable matrices, respectively. \({b_z}\), \({b_r}\) and \({b_h}\) denote the bias matrices. \({r_t}\) and \({z_t}\) indicate the output results of reset gate and update gate. \(\odot\) represents the dot product.

The structure of GRU.

Sand cat swarm optimization algorithm (SCSO)

Considering the fact that hyperparameters such as filtered redundant information, number of hidden layers, and number of layers of neural network can affect influence the RUL prediction performance, it is necessary to adjust the hyperparameters according to the input degraded data in order to obtain an effective RUL prediction model. Traditional hyperparameter optimisation methods are either experience- or time-consuming, which cannot guarantee the best parameter combination. As a swarm intelligence optimization algorithm, Sand Cat Swarm Optimization Algorithm is proposed inspired by the behaviour of sand cats, for the detection of low-frequency noises, sand cats are able to locate their prey both above and below the ground with its unique ability. The basic setup of the algorithm can be represented as

where \({S_M}\) is set to 2, t and tmax represent the iteration number at current moment and the max iteration.\({r_G}\) is decreased from 2 to 0 as the number of iterations increases. R is the parameter for choosing in the searching process and attack process. Notably, the sand cat enters the attack state when |R|≤1, otherwise it is the search state. r denotes the sensitivity range of each sand cat. rand is a random number between 0 and 1. The mathematical model of the attack is represented as follows

where \({P_r}\) denotes a random position around the optimal position, which is used to ensure that the sand cat can approach the optimal position. \(P_{c}^{{t+1}}\) represent the updated location. \(\theta\) is chosen randomly by roulette to avoid falling into a local optimum. When |R|>1, the sand cat searches for prey locations within its sensitivity range, and the position can be expressed as

where \(p_{b}^{t}\) denotes the optimal candidate position.

Proposed method

The engine can be considered as a complex equipment, in which the measured data is characterized by large scale, non-linearity and high dimensionality. In this work, a RUL prediction method for complex equipment based on GraphSAGE and GRU is proposed.

Adjacency matrix for graphsage

For the engine, V is organized by the sensors in the engine such as temperature, rotational speed, etc., which is determined by a fixed sliding time window. Specifically, the length of each window and its stride is set to T and S, respectively. Each input sample V of engines is recorded as xi. xi is a sub-matrix X of \(T * N\). N represents a parameter such as temperature, coulomb efficiency, etc., in one operation cycle. The parameters inside the xi represent the condition of the engines over time. This allows the degradation trends in engines to be preserved. E is composed of nonlinear relationships between different parameters at different time within a sliding time window. Notably, the nonlinear relations of these sensors are difficult to be captured using mathematical equations. Thus, the maximum information coefficient (MIC) is introduced to describe the nonlinear relations among the nodes44. Take the temperature and the speed at same time as an example, the function is defined as follows:

where \(p(x,y)\) denotes the joint probability, \(p(x),p(y)\) represent marginal probability distribution. \(MIC([P,S])\) can be expressed as

where a, b denote the number of x-axis and y-axis grids, respectively. Mutual information values can be computed on different grids, and B represents the upper limit of the grid. \(MIC([P,S])\) is recognized as the attributes of node rotational speed and pressure. The other parameters are similar as well. Notably, different edges of the same sensor are regarded as the interactions from other sensors, some edges reflect the collaboration between sensors, and there are some edges indicate disturbances during operation. In order to effectively represent these connections between sensors, it is necessary to filter redundant information while reducing information loss. It will improve the prediction precision of the RUL prediction model and the interpretability. Therefore, filtering selection method is used to select the feature edges set.

An improved sand cat swarm optimization algorithm based on multi-strategy (ISCSO)

Population initialization is an essential part of the swarm intelligence optimization algorithm. For example, a fuller coverage of the solution space can be provided by the uniform distribution rather than the random distribution. In the traditional SCSO, random distribution was used to achieve the population initialization, which is difficult to cover the entire solution space. In contrast, chaotic sequence in the solution space has the characteristics of ergodicity, randomness and regularity, which can find the search space with a higher probability than random search. In order to get better initial solution, Tent mapping is introduced to improve the solution space. Notably, Tent mapping suffer from problems easily on small loop cycles and immobile points, and the optimal solution can only be found when the optimal solution is only edge-valued. Thus, an improved Tent mapping with beta-distributed random numbers is introduced, which is expressed as45

where \(y_{i}^{j}\) and \(y_{i}^{{j+1}}\) denote the j-th dimensional and the (j + 1)-th dimensional component of the i-th soldier. \(\mu\) represents the chaos coefficient, which is defined as 2. \({b_{betarnd}}\) is the random numbers of the beta distribution. \(\delta\) is the shrinkage factor. \(q,m\) are the parameters of the beta distribution. \(\delta\), q and m are set to 0.1,3 and 4. On this basis, the positional variables of the initial population individuals are defined as

where \(w{c_j}\) and \(u{c_j}\) denote the lower and upper boundaries of hyperparameters at the j-th dimension.

Similar to other optimization algorithms, it is inevitable that SCSO suffers from slow convergence and easily falls into the localization. Thus, an adaptive decay function is proposed to improve the global search ability of SCSO during the searching process.

where \(\lambda\) is considered as the decay rate, which is set at 0.05. v is the random number in [0,2], t is the current iteration. \(\varphi\) denotes the angular frequency, which is set to \(2\pi\). Therefore, the Eq. (14) and Eq. (15) are redefined as:

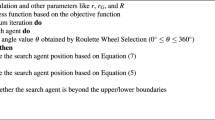

The Fig. 3 is the flow chart of ISCSO being used for hyperparameter optimization in GraphSAGE-GRU. From this Figure, it can be seen that the hyperparameters need to be optimized include the learning rate, the number of hidden layer for GRU, the number of neurons for GraphSAGE and GRU. The detailed workflow is illustrated as follows:

In the engineering problems, sliding window technique is used to obtained the local degradation relationship for engines. Then, the maximum information coefficient (MIC) is introduced to describe the nonlinear relations for different measured parameters for a sliding window. Considering that the data scales for engines are different, leading to the analytical bias, data fusion bias, etc. Thus, it is essential to make normalization of the measured data. In this work, min-max normalization is employed to implement the normalization for training datasets.

where \({x_{norm}}\), \({x_{\hbox{max} }}\) and \({x_{\hbox{min} }}\) denote the normalized data, the maximum value and the minimum value of the current measured parameter. Then, the normalized data are fed into the GraphSAGE-GRU, and the ISCSO is used to the optimization of hyperparameters for GraphSAGE-GRU. The detail are as follows:

Step 1: Initialize the parameters of ISCSO. The size of soldiers population U is set to P, the maximum iteration is given by T. The hyperparameters in the U is defined as follows:

where \(lr\) represents the learning rate for GraphSAGE-GRU, \(n{h_G}\) and \(n{h_g}\) denote the number of neurons for GraphSAGE and GRU. \(nl\) is the layer of GRU.

Step 2: Calculate the fitness function, a series of trained models are obtained by training set based on U. Then, the R2 from these trained models are used as the fitness function and the hyperparameters set are sorted according to the R2 while the optimal information for the current time is retained.

Step 3: Calculating the hyperparameters set according to Eq. (22) and Eq. (23). Building on this foundation, Calculating the R2 and replace the current soldiers if it is better than the current soldiers.

Step 4: The optimal parameters of the optimal GraphSAGE-GRU and the optimal model are outputted if the maximum iteration is reached or the threshold for R2 is satisfied, otherwise execute 1–4.

Finally, the testing set is used to predict the RUL based on optimal GraphSAGE-GRU. The number of soldiers and the max iteration are 5, 100, respectively. Besides, the weight of GraphSAGE-GRU and bias are updated by Adam and the number of training epochs is set to 100.

The flowchart of RUL prediction based on ISCSO-GraphSAGE-GRU method.

RUL prediction method based on ISCSO-GraphSAGE-GRU

The details of the proposed method are shown in Fig. 4. From this figure, it can be seen that the proposed method consists of three parts, including graph construction, acquisition of spatial-temporal degradation relations, and the optimization of hyperparameters. Firstly, fixed-size sliding window is introduced to allows the local degradation trends in engines to be preserved. Then, the maximum information coefficient (MIC) is used to describe the nonlinear relations for different measured parameters. On this basis, fixed-size sliding window is used to construct graph data. Notably, parameters at different moments within the sliding time window are used as nodes of the graph data while the MIC is used to measure the relationship between these nodes. Not all relationships between measured parameters have positive effect on the RUL prediction task. Thus, it is essential to filter out redundant edge information based on MIC value. According to the guidelines of correlation, two nodes are considered to be correlated when the normalized correlation coefficient is greater than 0.2. Besides, 30% of relationships in the filtered information are random selected, which are used to ensure diversity of information. The above information is fed into the proposed GraphSAGE-GRU so as to RUL prediction model for engines can be constructed. Moreover, considering that the RUL prediction performance can be affected by the hyperparameters of the neural network, such as the number of hidden layers, number of neurons, etc. Therefore, the ISCSO is proposed to proposed to optimize the GraphSAGE-GRU while the global search performance of SCSO is guaranteed. Finally, the optimized model is used for the RUL prediction, and the test data set is used to validate the effectiveness of the proposed method.

The flow of the proposed method.

Results and discussion

The structure of engines is complex, which leads to expensive maintenance costs. Therefore, accurate RUL prediction for operational status of aircraft engines is an effective and important safety precaution.

Data description and the evaluation metrics

The dataset used in this work is prom the commercial modular aero-propulsion system simulation (C-MAPSS)46. The schematic diagram of the engine is shown in Fig. 5. It consists of four sub-datasets composed of unit number, time stamp, three configurations, and 21 sensors. The details are shown in Table 1 and the description is listed in Table 2. In this work, FD002 and FD003 are used to validate the effectiveness of the proposed methodology. Inspired by literature47, the length of fixed-size sliding window is set to 20. And some of the MIC results for two datasets is shown in Fig. 6. From this figure, it is observed that a large number of normalized MICs are less than 0.2, which can be considered as very weakly correlated. Thus, the MIC threshold is set to 0.2 in this work is reasonable. Notably, it also can be seen that the autocorrelation of some parameters is very weak, caused by the limitations of the MIC48. This is considered as future work that can extract more degradation information.

The simulated engine diagram in CMAPSS.

The heatmap of some inputs in a window.

Moreover, three evaluation metrics are introduced to analyze the predicted performance, including r-square (R2), Root mean square error (RMSE), mean absolute percentage error (MAPE).

where \(y_{m}^{\prime }\), \({y_m}\) represent the predicted RUL values and true RUL values at the m-th degradation samples, Notably, all the experiments in this work were realized on a personal computer based on Pytorch and PyTorch Geometric, with a CPU of i7-12700 H, a GPU of NVIDIA GeForceGTX 3060, and 16G of RAM.

The effect of different metaheuristic optimization algorithms

In order to verify the effectiveness of the ISCSO, SCSO, sparrow search algorithm (SSA), whale optimization algorithm (WOA), alpine skiing optimization (ASO)47, chaotic k-best gravitational search strategy assisted grey wolf optimizer (EOCSGWO)48 and attack defense strategy assisted osprey optimization algorithm (ADSOOA)28 are firstly introduced for comparison experiment in the selected sub-datasets. The population of these methods is set to 5 and the maximum number of iterations is 100 in this experiment, and the range of hyperparameters \(lr,n{h_G},n{h_g},nl\) are in the range of [0,0.001], [32,128], [32,128] and1,4 in two datasets. The evaluation results of these methods are shown in Tables 3 and 4. From these tables, it can be seen that the ISCSO-GraphSAGE-GRU provides more optimal predicted precision compared to other optimization algorithms. In addition, the time to obtain a stable optimal solution is also provided, which shows that the proposed method is able to reduce the running time while ensuring better prediction precision. Take the FD002 as an example, ISCSO-GraphSAGE-GRU provides the second-best results for the time to obtain a stable optimal solution. But they’re close (28.94 compared to 28.76). However, R2 is improved by 0.24%, RMSE and SMAPE are reduced by 11.12% and 22.85% compared to ADSOOA-GraphSAGE-GRU, respectively. These results show that ISCSO is able to alleviate the problem of falling into local optima under the same number of iterative populations on the hyperparameter optimization issue in GraphSAGE-GRU. The predicted results of some engines assisted by different optimization algorithms in FD002 and FD003 are shown in Figs. 7 and 8. Notably, error interval boundary is defined as follows49, in which the lower bound indicates the overprediction with 8 cycles and the upper denotes the lag prediction is 13 cycles. From these figures, it can obtain more degradation information for reliable RUL prediction so that timely maintenance for engines can be performed. Furthermore, Wilcoxon rank sum test is used to make a statistical comparison, and the p-values obtained from this are listed in Table 5. Notably, p-value is greater than 0.05 indicates that no considerable differences have been discovered between the results of the two compared optimizers. Otherwise, it is considered to be statistically huge differences between the performances of the two optimizers if the p-value is less than 0.05. From this table, it can be seen that significant differences have been discovered compared to other methods, and the ISCSO-GraphSAGE-GRU has a statistically competitive performance and achieved good and significant results.

Predicted results of some engines assisted by different optimization algorithms in FD002.

Predicted results of some engines assisted by different optimization algorithms in FD003.

4.3 Compared with other algorithms

In order to verify the advancement of the ISCSO-GraphSAGE-GRU, some state-of-the-art algorithms are introduced, including CNN, LSTM, GRU, Consolidated Memory GRU (CMGRU), CNN-GRU and Transformer. To ensure fairness, all algorithms are optimized by using the proposed ISCSO. Tables 6 and 7 are the details of evaluation results of the engines and the predicted result of different methods can be seen in Figs. 9 and 10. Take the FD002 as an example, it can be seen that the ISCSO-GraphSAGE-GRU can provide better predicted results in constant to other methods, as listed in Table 6. For index R2, the result of ISCSO-GraphSAGE-GRU is higher than 0.99, meaning that it has a probability of higher than 99% to explain the reason of engine degradation, providing stable foundation for accurate prediction of the engine state. For the RMSE, the value of IMTSO-GCN is 5.7436, indicating that the predicted RUL value of IMTSO-GCN is different from the true RUL value with an average of 5.7436 cycles. The SMAPE of CMGRU is the optimal among the used traditional comparison methods. However, but value of ISCSO-GraphSAGE-GRU is still reduced by 35.55%. Among the traditional methods used in this experiment, GRU has the worst predicted performance for index R2, RMSE, and SMAPE. In contrast, the values of ISCSO-GraphSAGE-GRU are improved by3.67%, 54.95%, and 52.82%, which illustrates that the introduction of relationships between data interdependencies provides more potential degradation relationships, so as to fully analyse degradation information of engines. Notably, the predicted results of the different methods on FD003 are mostly superior to the ones on FD002, which is caused by the single degradation pattern of FD003 and the more complex degradation pattern of FD002. Nevertheless, ISCSO-GraphSAGE-GRU still shows competitive results, indicating that ISCSO-GraphSAGE-GRU in the real engine degradation process has shown in a large promise for RUL prediction issue. Figure 11 is the boxplot for different algorithms in different datasets. Using A as an example, the prediction errors of each cycles are within the range of -20-20, indicating that the GraphSAGE-GRU can provide competitive prediction results that enable accurate RUL prediction for engines. Moreover, the training and testing time of different RUL prediction methods are explored in FD002, including GraphSAGE-GRU, CMGRU, CNN, GRU, CNN-LSTM, and Transformer are simultaneously listed in Table 8. From this table, the GraphSAGE-GRU have a longer training time in constant to CNN and GRU. However, it has a shorter training time compared to CMGRU, CNN-LSTM and Transformer. Although the training time of GraphSAGE-GRU reaches 323.23s, it will not affect the RUL prediction because of the training is an offline process. Indeed, the training time will be greatly shortened when the hardware is improved. Also, all methods have a short testing time, which is less than 1 s. As the testing time of the proposed method is much less than the recording interval (10 s), its latency performance is sufficient for the RUL prediction in practice. In addition, in order to validate the generalization performance and effectiveness of the proposed method. PHM2010 dataset is introduced50. And the detailed evaluation results are listed in Table 9. From this table, it is observed that the proposed GraphSAGE-GRU could provide competitive results for R2, RMSE and SMAPE. The comparison results show that the advances and effectiveness of the propose method in other dataset.

Predicted results of some engines by using different algorithms in FD002.

Predicted results of some engines by using different algorithms in FD003.

Boxplot for different algorithms in different datasets.

Conclusion

In this work, a novel ISCSO-GraphSAGE-GRU is proposed to achieve the RUL prediction for engine based on the implicit relations from measured parameters in non-Euclidean spaces. The experimental results in CMAPSS dataset indicate the effectiveness of ISCSO-GraphSAGE-GRU. In the ISCSO-GraphSAGE-GRU, the maximum information coefficient (MIC) is used to illustrate the relationships for measured parameters of engines and achieve the construction of the graph data. The constructed graph samples are fed into the GraphSAGE-GRU to achieve the RUL prediction while the degradation information in non-European spaces such as the interdependence of measured parameters of engine is obtained. Moreover, the ISCSO is developed to improve the predicted performance of GraphSAGE-GRU. The experimental results on CMAPSS dataset indicate that the ISCSO-GraphSAGE-GRU can provide better predicted performance compared to traditional methods. In future, the proposed ISCSO-GraphSAGE-GRU will be extend explored, including real-time deployment with constrained computational resources, dynamic optimization and integration with digital twin systems for proactive maintenance. Specifically, the computational resources for embedded control devices in industrial scenarios are limited. Thus, it is address the feasibility of deploying ISCSO-GraphSAGE-GRU in real-time monitoring systems with constrained computational resources. In addition, explore the scalability and integrated with digital twins of the proposed method for larger datasets or other industrial domains, such as automotive or power plants is another of our research.

Data availability

The datasets used and/or analyzed during the current study are available from the corresponding author Ruifang Li on reasonable request via e-mail 836912005@qq.com.

References

Li, J. et al. Remaining useful life prediction of turbofan engines using CNN-LSTM-SAM approach. IEEE Sens. J. 23(9), 10241–10251 (2023).

Ren, L. et al. Aero-engine remaining useful life estimation based on multi-head networks. IEEE Trans. Instrum. Meas. 71, 1–10 (2022).

Zhang, Q., Liu, Q. & Ye, Q. An attention-based temporal convolutional network method for predicting remaining useful life of aero-engine. Eng. Appl. Artif. Intell. 127, 107241 (2024).

Alfarizi, M. G. et al. Optimized random forest model for remaining useful life prediction of experimental bearings. IEEE Trans. Ind. Inf. 19(6), 7771–7779 (2022).

Al-Khazraji, H. et al. Aircraft engines remaining useful life prediction based on a hybrid model of autoencoder and deep belief network. IEEE Access. 10, 82156–82163 (2022).

Soares, J. L. L. et al. Fault diagnosis of belt conveyor idlers based on gradient boosting decision tree. Int. J. Adv. Manuf. Technol., 1–10 (2024).

Wang, S. et al. Convolutional neural network-based hidden Markov models for rolling element bearing fault identification. Knowl. Based Syst. 144, 65–76 (2018).

Fei, X. et al. Projective parameter transfer based sparse multiple empirical kernel learning machine for diagnosis of brain disease. Neurocomputing 413, 271–283 (2020).

Wang, Z. et al. Cured memory RUL prediction of solid-state batteries combined progressive-topologia fusion health indicators. IEEE Trans. Ind. Inform. https://doi.org/10.1109/TII.2025.352857 (2024).

Pang, Y. et al. Boosting Enhanced Kriging leave-one-out cross-validation in improving model estimation and optimization. Comput. Methods Appl. Mech. Eng. 414, 116194 (2023).

Tong, H. et al. A fault identification method for animal electric shocks considering unstable contact situations in low-voltage distribution grids. IEEE Trans. Ind. Inform. https://doi.org/10.1109/TII.2025.3538132 (2025).

Guo, J., Li, D. & Du, B. A stacked ensemble method based on TCN and convolutional bi-directional GRU with multiple time windows for remaining useful life estimation. Appl. Soft Comput. 150, 111071 (2024).

Zhang, Y. et al. Health status assessment and remaining useful life prediction of aero-engine based on BiGRU and MMoE. Reliab. Eng. Syst. Saf. 220, 108263 (2022).

Zhou, J. et al. Remaining useful life prediction of bearings by a new reinforced memory GRU network. Adv. Eng. Inform. 53, 101682 (2022).

Asif, N. A. et al. Graph neural network: A comprehensive review on non-euclidean space. Ieee Access. 9, 60588–60606 (2021).

Xiao, Y. et al. Heterogeneous graph representation-driven multiplex aggregation graph neural network for remaining useful life prediction of bearings. Mech. Syst. Signal Process. 220, 111679 (2024).

Li, T. et al. The emerging graph neural networks for intelligent fault diagnostics and prognostics: A guideline and a benchmark study. Mech. Syst. Signal Process. 168, 108653 (2022).

Kong, L. et al. Traffexplainer: A framework towards GNN-based interpretable traffic prediction. IEEE Trans. Artif. Intell. (2024).

Reiser, P. et al. Graph neural networks for materials science and chemistry. Commun. Mater. 3(1), 93 (2022).

Yang, X. et al. Bearing remaining useful life prediction based on regression Shapalet and graph neural network. IEEE Trans. Instrum. Meas. 71, 1–12 (2022).

Wei, Y. & Wu, D. Remaining useful life prediction of bearings with attention-awared graph convolutional network. Adv. Eng. Inform. 58, 102143 (2023).

Liu, T. et al. GraphSAGE-based dynamic spatial–temporal graph convolutional network for traffic prediction. IEEE Trans. Intell. Transp. Syst. 24(10), 11210–11224 (2023).

Chen, Z. et al. Energy consumption prediction of cold source system based on graphsage. IFAC-PapersOnLine 54(11), 37–42 (2021).

Zhu, G., Liu, X. & Tan, L. Bearing fault diagnosis based on sparse wavelet decomposition and sparse graph connection using GraphSAGE. In 2023 IEEE 2nd International Conference on AI in Cybersecurity (ICAIC) 1–6. (IEEE, 2023).

Ma, Y. et al. A novel method for remaining useful life of solid-state lithium-ion battery based on improved CNN and health indicators derivation. Mech. Syst. Signal Process. 220, 111646 (2024).

Ren, J. et al. Genetic algorithm-assisted an improved adaboost double-layer for oil temperature prediction of TBM. Adv. Eng. Inform. 52, 101563 (2022).

Yuan, Y. et al. Multidisciplinary design optimization of dynamic positioning system for semi-submersible platform. Ocean Eng. 285, 115426 (2023).

Yuan, Y. et al. Attack-defense strategy assisted osprey optimization algorithm for PEMFC parameters identification. Renew. Energy 225, 120211 (2024).

Yuan, Y. et al. Learning-imitation strategy-assisted alpine skiing optimization for the boom of offshore drilling platform. Ocean Eng. 278, 114317 (2023).

El-Kenawy, E. S. M. et al. Greylag Goose optimization: Nature-inspired optimization algorithm. Expert Syst. Appl. 238, 122147 (2024).

Abdollahzadeh, B. et al. Puma optimizer (PO): A novel metaheuristic optimization algorithm and its application in machine learning. Clust. Comput. 1–49 (2024).

El-Kenawy, E. S. M. et al. Football optimization algorithm (FbOA): A novel metaheuristic inspired by team strategy dynamics. J. Artif. Intell. Metaheuristics 8, 21–38 (2024).

Houssein, E. H. et al. Liver cancer algorithm: A novel bio-inspired optimizer. Comput. Biol. Med. 165, 107389 (2023).

Lian, J. et al. Parrot optimizer: Algorithm and applications to medical problems. Comput. Biol. Med. 172, 108064 (2024).

Yuan, C. et al. Artemisinin optimization based on malaria therapy: Algorithm and applications to medical image segmentation. Displays 84, 102740 (2024).

Yuan, C. et al. Polar lights optimizer: Algorithm and applications in image segmentation and feature selection. Neurocomputing 607, 128427 (2024).

Su, H. et al. RIME: A physics-based optimization. Neurocomputing 532, 183–214 (2023).

Wang, L. et al. Wind turbine blade icing risk assessment considering power output predictions based on SCSO-IFCM clustering algorithm. Renew. Energy 223, 119969 (2024).

Bai, X., Liu, H. & Meng, G. Reliability prediction based on SVR and improved SCSO. In 2023 6th International Conference on Computer Network, Electronic and Automation (ICCNEA) 76–81. (IEEE, 2023).

Lin, M. et al. Rolling bearing fault diagnosis based on SCSO-LightGBM. In 2024 5th International Conference on Intelligent Computing and Human-Computer Interaction (ICHCI) 474–478. (IEEE, 2024).

Seyyedabbasi, A. & Kiani, F. Sand Cat swarm optimization: A nature-inspired algorithm to solve global optimization problems. Eng. Comput. 39(4), 2627–2651 (2023).

Liu, J., Ong, G. P. & Chen, X. GraphSAGE-based traffic speed forecasting for segment network with sparse data. IEEE Trans. Intell. Transp. Syst. 23(3), 1755–1766 (2020).

Chang, Z. H., Yuan, W. & Huang, K. Remaining useful life prediction for rolling bearings using multi-layer grid search and LSTM. Comput. Electr. Eng. 101, 108083 (2022).

Lin, G., Lin, A. & Gu, D. Using support vector regression and K-nearest neighbors for short-term traffic flow prediction based on maximal information coefficient. Inf. Sci. 608, 517–531 (2022).

Chen, L., Song, N. & Ma, Y. Harris Hawks optimization based on global cross-variation and tent mapping. J. Supercomput. 79(5), 5576–5614 (2023).

Asif, O. et al. A deep learning model for remaining useful life prediction of aircraft turbofan engine on C-MAPSS dataset. IEEE Access. 10, 95425–95440 (2022).

Yuan, Y. et al. Alpine skiing optimization: A new bio-inspired optimization algorithm. Adv. Eng. Softw. 170, 103158 (2022).

Yuan, Y. et al. Optimization of an auto drum fashioned brake using the elite opposition-based learning and chaotic k-best gravitational search strategy based grey Wolf optimizer algorithm. Appl. Soft Comput. 123, 108947 (2022).

Wang, Y. et al. Temporal convolutional network with soft thresholding and attention mechanism for machinery prognostics. J. Manuf. Syst. 60, 512–526 (2021).

Xu, A. Theory based on HMM and Bayesian analysis for predicting the remaining useful life of tools. In Fourth International Conference on Mechanical, Electronics, and Electrical and Automation Control (METMS 2024), 13163, 56–60. (SPIE, 2024).

Acknowledgements

This research work was supported by the New round of key disciplines in Henan Province (Mechanical Engineering) (Teaching and Research [2023], No. 414), National Natural Science Foundation of China (No. 52404163), Science and Technology Plan Project of Henan Province (No. 252102221003, and No.252102220079), Henan University of Technology Outstanding Youth Science Fund (J2025-3), Natural Science Foundation of Henan Polytechnic University (No. B2021-31), Fundamental Research Funds for the Universities of Henan Province (No. NSFRF220415).

Author information

Authors and Affiliations

Contributions

YL Y: Methodology, Writing-original draft, Preparation, Verification, ResourcesRF L: Formal analysis, PreparationGH W: Methodology, Formal analysisXJ L: Preparation.

Corresponding authors

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Yuan, Y., Li, R., Wang, G. et al. Improved sand cat swarm optimization algorithm assisted GraphSAGE-GRU for remaining useful life of engine. Sci Rep 15, 6935 (2025). https://doi.org/10.1038/s41598-025-91418-w

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-91418-w