Abstract

Deep learning-based nut classification has emerged as a viable way to automate the detection and categorization of nut varieties in the food processing and agriculture sectors. Conventional nut classification techniques rely mostly on handcrafted features such as texture, color, shape, or edges. These features frequently fail to capture the full complexity of an image, particularly when nuts differ only by subtle visual variations. This research proposes the Deep Atrous Context Convolution Generative Adversarial Network (DAC-GAN) model, which classifies eight classes of nuts: Brazil nut, cashew, peanut, pecan nut, pistachio, chestnut, macadamia, and walnut. The study uses the Common Nut Kaggle dataset, which contains 4,000 images across the eight classes. DAC-GAN addresses the scarcity of labelled data for nut classification by using a DCGAN to produce high-quality synthetic nut images that supplement the dataset. The DCGAN comprises a generator block and a discriminator block. The generator produces realistic nut images from random noise, while the discriminator learns to differentiate between synthetic and real images. The real images and the DCGAN-generated images are processed with feature filtering methods to extract Corner Key Points Featured (CKPF) nut images. To further enhance feature selection, the CKPF edges are extracted from each image, providing unique, geometrically distinctive corners for representation learning. For effective feature extraction and model learning, the CKPF nut images are processed with atrous convolution, which captures intricate details by expanding the receptive field without losing resolution. The novelty of this work lies in combining filtration with atrous convolution to acquire spatial features from nut images at various resolutions.
The atrous convolution is refined by appending pre-context and post-context blocks that add image-level information to the features. The effectiveness of the DAC-GAN model was validated against a traditionally augmented dataset, all existing filtered images, and CNN models. The implementation results show that DAC-GAN achieves a high accuracy of 99.83% for nut type classification. Extensive experiments on augmented and DCGAN-generated datasets demonstrate the superiority of DAC-GAN over traditional approaches, with higher classification accuracy and better generalization across nut types. The results indicate that DCGAN combined with atrous convolution has the potential to be an effective tool for automating nut sorting in the food industry.

Introduction

In the agricultural and food processing sectors, automated nut classification is essential for effective sorting, quality assurance, and marketing. However, classic nut classification techniques often struggle with precision, efficiency, and robustness, particularly when working with large amounts of data or varied conditions. Deep learning-based methods have demonstrated significant potential in addressing these issues because they can automatically extract intricate patterns and characteristics from unprocessed data. In recent years, GANs have been used to generate virtually unlimited synthetic data, which supports automated learning of complex features and patterns from unprocessed data. Depending on the lighting, background, perspective, and orientation, nut images can show a lot of variation. Conventional approaches frequently fall short in these situations because they rely on manual interpretation for feature extraction, which makes them unsuitable for practical use. With this motivation, this research proposes DAC-GAN, which integrates feature filtering through corner key point extraction with atrous convolution at the end to fine-tune the GAN model.

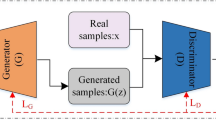

The proposed DAC-GAN model uses a DCGAN, which contains a generator block and a discriminator block, to augment the data. For applications requiring image generation or data augmentation, the DCGAN architecture offers an effective method for synthesizing realistic images. DCGANs learn to produce high-quality images with intricate spatial relationships by utilizing convolutional layers. In applications such as nut classification, using DCGANs to produce synthetic nut images that supplement the training data can enhance the effectiveness and stability of downstream classification models. The DCGAN-generated images are then processed to form filtered images. Corner key point detection performs well at identifying essential visual characteristics in images, including edges and corners, which are crucial for differentiating between kinds of nuts. By concentrating on these elements, the model prioritizes the most discriminative aspects of the image and can improve classification accuracy. The filtered images are further processed with atrous convolution, which expands the receptive field and lets the model collect additional spatial information without significantly increasing computational complexity. This can greatly enhance the model’s capacity to identify contextual and fine-grained elements in nut images, including size, texture, and shape, all of which are critical for precise classification. By combining cutting-edge approaches for feature extraction, data augmentation, and spatial context analysis, the DAC-GAN model seeks to overcome the drawbacks of conventional approaches.

Paper organization

This article is organized as follows. Section 1.2 highlights the contributions of the research. Section 2 reviews the inferences drawn from the literature on nut classification. Section 3 presents the research methodology of the proposed DAC-GAN model. Section 4 details the mathematical modelling of the proposed DAC-GAN. Section 5 reports the implementation results of the proposed DAC-GAN. Section 6 concludes the work on the proposed DAC-GAN model with the results and suggestions for improvement.

Contributions of this research

The main contributions of this research are threefold.

(i) To address the difficulty of having limited labelled data, DCGAN is used to augment the nut images. This DCGAN-based data augmentation increases the robustness and effectiveness of the classifier by offering a variety of nut image variations, which improves its capacity to generalize.

(ii) The second contribution is efficient data preprocessing through feature selection, which filters the edge details from nut images by generating CKPF nut images.

(iii) The third contribution is the design of the proposed DAC-GAN. This hybrid model integrates DCGAN and corner key point extraction, followed by atrous convolution. The existing atrous convolution is refined by appending pre-context and post-context blocks that add image-level information to the features, as shown in Fig. 1.

Fig. 1. Atrous context convolution block structure.

Background study

Deep learning has proven to be an effective method for classifying nuts automatically, with significant improvements in scalability, accuracy, and speed. The inferences from the literature survey are shown in Table 1.

DL approaches are enabling more accurate and efficient nut classification because of developments in model architectures, data augmentation, and transfer learning [1, 3]. However, to properly utilize deep learning in this industry, issues including class imbalance, real-time processing, and dataset quality must be resolved. Machine learning methods such as SVM and random forest [12, 20] have been implemented for classifying various types of nuts, including peanut, pistachio, and chestnut. Artificial neural networks have been integrated with the multi-layer perceptron [2, 11] for nut type classification. Image processing methods have also been integrated with KNN and K-means clustering [13, 19] for nut type classification. Otsu thresholding [4] has also been used for this task. Pretrained CNN and sequential CNN models [4, 32] have been implemented for nut type classification. The review shows that data augmentation and filtering methods remain open challenges for nut type classification. The proposed DAC-GAN addresses data augmentation by creating synthetic images with DCGAN and addresses filtering by extracting corner key point features from nut images.

Materials and methods

Dataset collection and distribution

The proposed DAC-GAN model was developed and evaluated using a nut image dataset consisting of eight distinct nut classes, namely Brazil nut, Cashew, Chestnut, Peanut, Pecan nut, Pistachio, Macadamia, and Walnut. The dataset was sourced from the publicly available Common Nut Dataset on Kaggle [42], which provides a diverse collection of high-quality nut images under varying lighting conditions, orientations, and backgrounds. A total of 4,000 images were utilized, with 500 images per class to ensure balanced class representation. To facilitate robust model training and reliable performance evaluation, the dataset was divided into training, validation, and testing subsets following a standard data splitting strategy. The distribution of the Common Nut dataset is shown in Table 2. Specifically, 20% (800 images) of the dataset was reserved exclusively for testing to ensure unbiased evaluation of the model’s generalization capability, while the remaining 80% (3,200 images) were used for augmentation. The augmentation was subsequently performed using the proposed DAC-GAN model and generates 21 images for each retained image.

To eliminate any class imbalance, the dataset was designed to maintain equal representation across all eight nut categories, with 500 original images per class. After 100 images per class were set aside for testing, the DAC-GAN augmentation process generated 8,400 additional synthetic images per class from the remaining 400 (21 per image), increasing each category to 8,800 samples. This uniform expansion preserved data balance throughout the training, validation, and testing phases, ensuring that the model learned representative features from all classes equally and avoided classification bias. The augmentation therefore produces 67,200 synthetic images, giving 70,400 images in total once the 3,200 retained originals are included. The training set comprised 80% (56,320 images) of this pool, and the remaining 20% (14,080 images) was allocated for validation to fine-tune the model’s hyperparameters and prevent overfitting. This strategy ensured strict separation between the testing and augmented data, providing a reliable assessment of the model’s generalization capability.
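The split arithmetic above can be checked with a short bookkeeping sketch. It only reproduces the counts stated in the text; the constant and function names are illustrative assumptions, and the test hold-out is assumed to be taken evenly from each class.

```python
# Bookkeeping sketch for the dataset split described in the text.
# All names are illustrative; only the numbers come from the paper.
TOTAL_IMAGES = 4_000      # Common Nut dataset size
NUM_CLASSES = 8
TEST_PERCENT = 20         # held out before augmentation
AUG_PER_IMAGE = 21        # generated images per retained original
VAL_PERCENT = 20          # share of the augmented pool used for validation


def split_counts():
    """Return the image counts at each stage of the split."""
    test = TOTAL_IMAGES * TEST_PERCENT // 100
    retained = TOTAL_IMAGES - test
    generated = retained * AUG_PER_IMAGE
    pool = retained + generated
    validation = pool * VAL_PERCENT // 100
    training = pool - validation
    return {
        "test": test,
        "retained": retained,
        "generated": generated,
        "pool": pool,
        "training": training,
        "validation": validation,
        # Assumes the hold-out is stratified, so classes stay balanced.
        "pool_per_class": pool // NUM_CLASSES,
    }


counts = split_counts()
for name, value in counts.items():
    print(f"{name:>15}: {value:,}")
```

Using integer percentages keeps every count exact, which makes the sketch a convenient sanity check when the augmentation factor or split ratios are changed.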

Proposed DAC-GAN research methodology

The proposed DAC-GAN model was designed to classify eight classes of nuts (Brazil nut, cashew, peanut, pecan nut, pistachio, chestnut, macadamia, and walnut) using the Common Nut Kaggle dataset [42], which contains 4,000 images across the eight classes. The DAC-GAN research methodology is shown in Fig. 2. Stage 1 of DAC-GAN begins with the collection of nut images covering the eight classes.

Fig. 2. Proposed DAC-GAN research methodology.

Stage 2 focuses on grouping, labeling, and augmenting the data and on generating synthetic images using DCGAN. A well-defined data management method was adopted to ensure both model reliability and fairness during evaluation. Initially, 20% of the dataset (800 images) was held out as the test set, consisting solely of authentic, unaltered images. This portion remained isolated throughout the entire training and augmentation process to prevent data leakage and to ensure an unbiased assessment of the model’s generalization performance. The remaining 3,200 images (80%) were designated for augmentation and model training. Augmenting these 3,200 images yields 67,200 generated images, for a total of 70,400 images. The augmented dataset was then divided into training and validation subsets to optimize learning and prevent overfitting. Specifically, 80% (56,320 images) were allocated for training, while 20% (14,080 images) were reserved for validation to fine-tune the network parameters. This arrangement facilitated stable convergence of the DAC-GAN model and enhanced classification precision. Stage 3 of the DAC-GAN model takes the augmented data and creates the filtered nut images: Sobel, corner key point, Canny edge, and kernel isolated filtered images. Stage 4 deals with the design of the proposed DAC-GAN, which takes the augmented and synthetic filtered images generated by the filtration module and fits them with the atrous convolution. The existing atrous convolution was refined by appending pre-context and post-context blocks that add image-level information to the features. The augmented and synthetic filtered images are applied to the existing CNN models and the proposed DAC-GAN to assess performance. The overall workflow of DAC-GAN is shown in Fig. 3; it starts with the dataset preprocessing module, which groups the nuts by type.

Fig. 3. Overall workflow of DAC-GAN.

Fig. 4. Comparison of existing DCGAN and proposed DAC-GAN.

Fig. 5. Working process of DCGAN module.

The labelled nut images are then augmented and processed with the DCGAN module, which generates synthetic images. The augmented and synthetic images are filtered and then fitted with the atrous convolution to classify the eight classes of nuts. The comparison of the existing DCGAN and the proposed DAC-GAN is shown in Fig. 4. The proposed DAC-GAN appends the nuts filtration module to the DCGAN, followed by atrous convolution. The DCGAN module, shown in Fig. 5, starts by retrieving the labeled nut images. The DCGAN contains a generator block and a discriminator block. The generator block learns to generate images that resemble the dataset. The synthetic images are created by passing random noise through transposed convolution layers that upsample the input. The discriminator learns to distinguish between the real and synthetic images. The generator creates synthetic images according to the number of noise vectors and improves them with the intent of fooling the discriminator block. The 56,320 training images are processed by the proposed DAC-GAN model.

Fig. 6. Workflow of nuts filtration module.

The augmented and synthetic nut images are then sent to the nuts filtration module shown in Fig. 6, which forms grayscale images that are processed into Sobel, corner key point, Canny edge, and kernel isolated filtered nut images. The filtered nut images are processed with the atrous context convolution shown in Fig. 7, which also compares it with the existing atrous convolution. The standard atrous convolution was refined by appending pre-context and post-context blocks that add image-level information to the features. The pre-context and post-context blocks each have one convolutional block without a non-linearity layer. The feature map (FM) generated by the pre-context block is added and passed to the atrous convolution block to generate the atrous FM. The atrous convolution block acquires spatial information by detecting fine-grained details at various resolutions. The atrous convolution operation consists of three consecutive convolutions with atrous rates of 2, 4, and 6, respectively, and a 3 × 3 kernel filter, which enlarges the receptive field of the filter without increasing the number of parameters. The atrous FM is processed by the post-context block, and the post-context FM is passed to a softmax layer with eight neurons to classify the eight nut classes. The algorithm for the overall DAC-GAN framework is shown below.
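The effect of the atrous rates on the receptive field can be made concrete with a small calculation. The sketch below assumes stride 1 and that the three dilated convolutions are stacked sequentially, as the text describes; the function names are illustrative.

```python
# Receptive-field arithmetic for stacked 3x3 atrous convolutions.
# Assumptions: stride 1 everywhere, layers applied one after another.

def effective_kernel_size(kernel_size, rate):
    """Spatial extent covered by one dilated kernel."""
    return kernel_size + (kernel_size - 1) * (rate - 1)


def stacked_receptive_field(kernel_size, rates):
    """Receptive field of sequential dilated convolutions with stride 1."""
    field = 1
    for rate in rates:
        field += (kernel_size - 1) * rate
    return field


KERNEL_SIZE = 3
RATES = (2, 4, 6)

sizes = [effective_kernel_size(KERNEL_SIZE, r) for r in RATES]
receptive_field = stacked_receptive_field(KERNEL_SIZE, RATES)
weights_per_filter = KERNEL_SIZE * KERNEL_SIZE  # unchanged by dilation

print("effective kernel sizes:", sizes)             # [5, 9, 13]
print("stacked receptive field:", receptive_field)  # 25
print("weights per 3x3 filter:", weights_per_filter)
```

Each layer still learns nine weights per filter and input channel, so the wider 25 × 25 view comes from spacing the taps rather than from extra parameters.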

Fig. 7. Comparison of existing atrous convolution with proposed atrous context convolution.

Algorithm

DAC-GAN Model.

Mathematical modeling of DAC-GAN

The proposed DAC-GAN model starts by collecting 4,000 images from the Common Nut dataset for classifying eight nut classes as in (1), where \(Nu_{001}\) denotes a single nut image as in (2).

The nut images are grouped to form labeled images, which are passed to the data preprocessing module to form the augmented and synthetic nut images. The labeled nut images are processed with data augmentation to form 67,200 augmented nut images, denoted \(AugNut_{67{,}200}\), as in (3) to (12).

The augmented nut images \(AugNut_{67{,}200}\) are converted to grayscale nut images \(GrayNut_{001}\), as in (13) to (16).

Modeling of DAC-GAN module

The nut images \(Nu_{001}\) are treated as the latent vector that is processed by the DCGAN to form synthetic images. The latent vector follows a Gaussian distribution as in (17).

The generator block applies transposed convolution that upsamples the input vector, with \(W\) as the width of the nut images, as in (18). The ReLU activation function introduces non-linearity so that the network learns complex patterns in the nut images. The output pixels of the nut images are normalized to scale the output as in (19).

The discriminator block differentiates between the real images and the synthetic images formed by the generator block as in (20). The FM is flattened and passed to a dense layer with the Leaky ReLU activation function, which introduces non-linearity for learning complex patterns, and is then batch normalized as in (21).
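For reference, the generator and discriminator roles described above correspond to the standard GAN minimax objective. The form below is the textbook objective with a Gaussian latent prior, given here only as a reminder; it is not a reproduction of equations (17) to (21), and the symbols \(G\), \(D\), \(p_{\text{data}}\), and \(z\) are notation assumed for this sketch.

```latex
\min_{G}\,\max_{D}\; V(D, G)
  = \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim \mathcal{N}(0, I)}\big[\log\big(1 - D(G(z))\big)\big]
```

The discriminator \(D\) maximizes \(V\) by scoring real images high and generated images low, while the generator \(G\) minimizes it by producing images that \(D\) scores as real.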

The output of the DCGAN is sent to the filtration module to form the filtered images \(FilNut\), with \(K\) denoting the kernel weights of the convolution and \(H\), \(W\), and \(C\) denoting the height, width, and number of input channels, respectively. The filtered nut images are then processed with atrous convolution. The pre-context block of the atrous convolution, with a kernel weight of 1, is processed as in (22) to (24).

The pre-context block output is processed by the atrous convolution block, which performs three dilated convolution operations with a 3 × 3 kernel filter and atrous rates of 2, 4, and 6, respectively, as in (25) to (28). The dilated-convolution FM is then processed with a convolution having a 1 × 1 kernel filter as in (29).

The post-context block of the atrous convolution, with a kernel weight of 1, is processed as in (28) to (30).
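As a point of reference for the dilated operations named above, the standard single-channel definition of a 3 × 3 atrous convolution with rate \(r\) can be written as follows. This is the generic textbook form, stated under the assumption of stride 1; the symbols \(x\), \(y\), and \(r\) are introduced here for illustration and the expression is not a reproduction of equations (25) to (28).

```latex
y(i, j) = \sum_{m=-1}^{1} \sum_{n=-1}^{1} K(m, n)\, x(i + r\,m,\; j + r\,n),
\qquad r \in \{2, 4, 6\}
```

Setting \(r = 1\) recovers the ordinary 3 × 3 convolution; larger \(r\) spreads the same nine weights \(K(m, n)\) over a wider neighbourhood of the input \(x\).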

Proposed DAC-GAN results and discussion

Implementation setup

The implementation of the proposed DAC-GAN was designed to ensure both high model accuracy and practical computational efficiency for large-scale nut image classification. The framework integrates a dual-stage architecture, combining an adversarial augmentation module and a feature-enhanced classification network with deep atrous convolutions. This setup was implemented using PyTorch and trained in a GPU-accelerated DL environment to leverage mixed-precision training and parallel processing. During implementation, careful hyperparameter tuning and architectural balancing were carried out to optimize training stability, convergence rate, and inference performance. The GAN training and classifier optimization were conducted under a controlled environment to ensure reproducibility. The hyperparameters shown in Table 3 were systematically optimized to balance training stability, convergence speed, and model generalization. The use of AdamW with cosine annealing ensures smooth gradient adaptation and prevents adversarial imbalance. The atrous dilation configuration (2, 4, 6) provides a multi-scale receptive field that allows DAC-GAN to efficiently capture both fine-grained local features and global contextual details.

The generator and discriminator learning rates were empirically tuned to 1 × 10⁻⁴ and 5 × 10⁻⁴, respectively, after extensive experimentation. This configuration was chosen to maintain training equilibrium, as the generator in DAC-GAN integrates atrous convolutions and context blocks that require more gradual parameter updates. The higher discriminator rate allows faster adaptation to generated samples, ensuring a stable adversarial balance and preventing premature convergence or gradient vanishing during optimization. The DAC-GAN architecture was trained and validated with the hardware and software configuration shown in Table 4, providing both computational efficiency and training reproducibility. The choice of PyTorch 2.2 combined with CUDA 12.1 ensured maximum GPU utilization, enabling stable mixed-precision training to reduce memory overhead.
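To illustrate how cosine annealing shapes the two learning rates quoted above, the sketch below evaluates the standard closed-form schedule. The schedule length and the minimum rate of zero are assumptions made for illustration; the paper's actual values would come from Table 3.

```python
import math

# Closed-form cosine annealing schedule (standard formulation).
# TOTAL_STEPS and lr_min are hypothetical; only the two peak rates
# are taken from the text.

def cosine_annealing_lr(step, total_steps, lr_max, lr_min=0.0):
    """Learning rate at `step`, decaying from lr_max to lr_min."""
    cos_term = 0.5 * (1.0 + math.cos(math.pi * step / total_steps))
    return lr_min + (lr_max - lr_min) * cos_term


GENERATOR_LR = 1e-4
DISCRIMINATOR_LR = 5e-4
TOTAL_STEPS = 100  # hypothetical schedule length

for step in (0, 25, 50, 75, 100):
    g_lr = cosine_annealing_lr(step, TOTAL_STEPS, GENERATOR_LR)
    d_lr = cosine_annealing_lr(step, TOTAL_STEPS, DISCRIMINATOR_LR)
    print(f"step {step:3d}: generator {g_lr:.2e}, discriminator {d_lr:.2e}")
```

Because both schedules share the same cosine factor, the discriminator rate stays five times the generator rate throughout training under these assumptions.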

DAC-GAN performance assessment

The proposed DAC-GAN model begins by collecting 4,000 nut images from the Common Nut dataset to classify the eight classes of nuts. The implementation was carried out in Python using the Keras, TensorFlow, pandas, NumPy, algorithms, utils, skimage, NeuPy, Matplotlib, and Theano libraries. Sample nut images and labeled images from the dataset are shown in Fig. 8. After the testing data were split off, the remaining 3,200 images were subjected to data augmentation to form 67,200 nut images. The results obtained from the data augmentation are shown in Fig. 9.

Fig. 8. Common Nut dataset (a) Sample images (b) Labeled images.

Fig. 9. Execution results of augmented nuts images.

Fig. 10. Execution results of DCGAN in generating synthetic nuts images.

The total number of images after augmentation, including the originals, is 70,400, of which 56,320 are training images. The 56,320 labeled training images are processed with DCGAN to generate synthetic nut images, and the results are shown in Fig. 10. The grayscale nut images were processed with the nuts filtration module to generate Sobel filtered, Canny edge filtered, corner key point filtered, and kernel isolated nut images. The Sobel and Canny edge filtered results are shown in Fig. 11.

The Sobel filter (Fig. 11(a)) highlights the intensity gradients and structural outlines of the nut surfaces, enhancing the visibility of contour boundaries and subtle texture variations. This filtering effectively emphasizes shape and surface transitions, which are essential for morphological differentiation between nut types. The Canny edge detection results (Fig. 11(b)) yield sharper and more distinct edge maps by suppressing noise and isolating the most prominent structural boundaries. The Canny method provides finer, continuous edges that capture intricate details of nut geometry and surface patterning. Together, these results confirm that both filtering techniques successfully enhance the key geometric and textural features required for accurate nut classification, with the Canny filter providing superior edge localization and boundary precision compared to the Sobel method. The results for the corner key point filtered and kernel isolated filtered nut images are shown in Fig. 12. The corner key point filtered images (Fig. 12(a)) highlight significant feature points located at the geometric corners and curvature transitions of each nut surface. These key points, identified using corner detection algorithms, effectively represent the local structural variations, enabling precise identification of nut boundaries and surface irregularities that contribute to class differentiation. The kernel isolated filtered images (Fig. 12(b)), produced with convolutional kernel operations, emphasize the textural and morphological composition of the nuts by isolating key visual regions.

Fig. 11. Filtered image results (a) Sobel filtered (b) Canny edge filtered images.

Fig. 12. Filtered image results (a) Corner key point filtered (b) Kernel isolated filtered images.

This filtering enhances surface patterns, kernel outlines, and contrast variations, making the internal and external nut features more distinct. Collectively, these results confirm that corner key point detection captures localized geometric details, while kernel isolation strengthens global texture and intensity representation.
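The Sobel gradient filtering discussed above can be sketched in a few lines of plain Python. This is a minimal illustration on a toy grayscale image, not the filtration module used in the paper; the function name and the toy image are assumptions, and border pixels are simply left at zero.

```python
# Minimal Sobel gradient-magnitude sketch on a grayscale image stored
# as a list of rows. Kernels are applied by correlation; the sign of
# the response does not affect the magnitude.
SOBEL_X = ((-1, 0, 1), (-2, 0, 2), (-1, 0, 1))
SOBEL_Y = ((-1, -2, -1), (0, 0, 0), (1, 2, 1))


def sobel_magnitude(gray):
    """Return the gradient magnitude; border pixels are left at 0."""
    height, width = len(gray), len(gray[0])
    out = [[0.0] * width for _ in range(height)]
    for r in range(1, height - 1):
        for c in range(1, width - 1):
            gx = gy = 0.0
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    pixel = gray[r + dr][c + dc]
                    gx += SOBEL_X[dr + 1][dc + 1] * pixel
                    gy += SOBEL_Y[dr + 1][dc + 1] * pixel
            out[r][c] = (gx * gx + gy * gy) ** 0.5
    return out


# Toy 5x5 image: dark on the left, bright on the right (vertical edge).
toy = [[0, 0, 0, 255, 255] for _ in range(5)]
edges = sobel_magnitude(toy)
for row in edges:
    print([round(v) for v in row])
```

The response is zero in the flat dark region and peaks in the two columns that straddle the intensity step, which is the contour-highlighting behaviour the Sobel results in Fig. 11(a) rely on.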

Fig. 13. Execution results of training and validation loss and accuracy.

The filtered training images are processed with atrous convolution and finally fitted with a softmax layer with eight neurons to classify the eight nut classes. The resulting training and validation loss and accuracy are shown in Fig. 13. The performance analysis of DAC-GAN and the augmented images is shown in Tables 5, 6, 7, 8, 9 and 10. The results show that the proposed DAC-GAN with CKPF nut images achieves a high accuracy of 99.83%.

The performance analysis presented in Table 5 demonstrates the impact of various image augmentation and feature extraction techniques on the classification accuracy of different CNN architectures applied to nut images. Across all models, accuracy consistently increases when moving from raw nut images to filtered images. This trend confirms that structural and boundary-based preprocessing significantly enhances the discriminative power of deep models by emphasizing geometric contours, surface textures, and morphological features unique to each nut category. Traditional networks such as DenseNet121 and VGG19 show moderate improvements, achieving accuracies of 75.31% and 76.56% respectively with CKPF, indicating limited feature abstraction capacity. Deeper and more optimized models such as ResNet-50 and EfficientNet-B4 demonstrate substantial gains, reaching 79.82% and 83.15%, respectively, owing to their residual and compound scaling mechanisms that preserve fine-grained spatial information. The transformer-based and transformer-inspired models, including ConvNeXt, ViT CNN, and SwinT, achieve the highest accuracies among the baselines, showcasing superior adaptability in capturing both global contextual and local texture features. The primary objective of this experiment was to assess the raw learning capability of conventional CNN architectures when applied to the nut dataset without the benefit of pre-trained feature extraction or transfer learning. For fair benchmarking, all baseline CNN models in Table 5 were initially trained from scratch to evaluate their raw classification performance without pre-trained feature support. This design choice highlights the intrinsic learning limitations of conventional CNNs on visually similar nut images. Thus, the lower initial accuracies validate the necessity and effectiveness of the proposed DAC-GAN augmentation and feature refinement process in enhancing classification accuracy under constrained learning conditions.
The results presented in Table 6 clearly demonstrate the significant performance improvement achieved by integrating DAC-GAN synthetic augmentation across the CNN models for nut classification. This accuracy improvement validates the effectiveness of the DAC-GAN’s adversarial learning mechanism, which produces realistic, diverse, and high-fidelity synthetic images that enrich training data and improve model generalization. The proposed DAC-GAN model, which combines CKPF feature enhancement and contextual atrous convolution, achieves the highest accuracy of 99.83%, far surpassing all baseline and hybrid CNN models. This underscores the synergy between adversarial augmentation and contextual feature refinement, where DAC-GAN not only generates high-quality synthetic images but also optimizes internal feature representation for precise and reliable classification.

The precision analysis presented in Table 7 further reinforces the superior classification reliability and discriminative accuracy of the proposed DAC-GAN model when compared to traditional CNNs and modern transformer-based architectures. Similar to recall, precision improves steadily across all models as the feature extraction approach advances from raw images to CKPF images, illustrating that incorporating structural, edge, and contextual information enables the networks to minimize false positives and achieve more confident classification results. The proposed DAC-GAN achieves a precision of 99.67%, outperforming all other models by a significant margin.

This near-perfect result indicates that DAC-GAN not only detects nut classes with exceptional accuracy but also maintains extremely low false positive rates, even among visually similar nut categories. The recall analysis in Table 8 highlights the exceptional sensitivity and detection capability of the proposed DAC-GAN model compared with conventional CNN architectures. Across all models, recall progressively improves as the input data transitions from raw nut images to those processed with Sobel, Canny, Kernel Isolated, and CKPF. This consistent upward trend indicates that edge- and geometry-based preprocessing enhances the model’s ability to correctly identify true positive samples across all nut classes, thereby minimizing missed detections. The proposed DAC-GAN model achieves the highest recall value of 99.41%, confirming its outstanding ability to identify all nut types with near-perfect sensitivity.

The F1-Score results presented in Table 9 provide comprehensive evidence of the balanced classification performance achieved by the proposed DAC-GAN compared with conventional CNN and transformer-based architectures. The F1-Score, representing the harmonic mean of precision and recall, effectively measures the balance between correct detections and misclassification avoidance. Across all models, a clear upward trend is observed as the feature extraction method evolves from raw nut images to CKPF, confirming that advanced preprocessing and adversarial data augmentation substantially improve classification harmony and reliability. The proposed DAC-GAN model achieves an outstanding F1-Score of 99.84%, confirming its perfect equilibrium between precision and recall. This indicates that the model consistently detects all nut types with near-zero false positives and false negatives.
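As a worked illustration of the harmonic-mean definition above, the sketch below computes precision, recall, and F1 from confusion counts for a single class. The counts are hypothetical and are not taken from Tables 7 to 9; the function name is likewise illustrative.

```python
# Precision, recall, and F1 for one class from confusion counts.
# The counts used below are hypothetical, for illustration only.

def precision_recall_f1(tp, fp, fn):
    """Return (precision, recall, f1) for true/false positive/negative counts."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


precision, recall, f1 = precision_recall_f1(tp=90, fp=10, fn=30)
print(f"precision={precision:.4f} recall={recall:.4f} f1={f1:.4f}")
```

Because F1 is a harmonic mean, it always lies between the precision and recall it is computed from and sits closer to the smaller of the two.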

The sensitivity analysis presented in Table 10 illustrates the remarkable detection strength and class responsiveness of the proposed DAC-GAN model in comparison with other CNN and transformer-based architectures. Across all models, sensitivity values consistently increase as input data progress from raw images to CKPF, confirming that advanced preprocessing amplifies critical structural and morphological cues essential for accurate nut identification. The proposed DAC-GAN model achieves an exceptional sensitivity of 99.86%, far exceeding all baselines. This indicates that DAC-GAN effectively detects nearly all nut samples across every category, with virtually no missed predictions.

Feature map analysis of DAC-GAN

The visualization of the FM across the different convolutional stages of the proposed DAC-GAN reveals the progressive refinement and enhancement of spatial and contextual representations within the model as shown in Fig. 14. In Fig. 14 (a), corresponding to the initial convolution of Atrous convolution, the FM primarily capture low-level structural details such as boundaries, contours, and edge orientations of nut images. These early representations emphasize intensity transitions and surface gradients, providing a strong foundation for spatial localization of nut regions. The activations are distributed across wide receptive fields, reflecting the model’s sensitivity to basic geometric and textural cues at this stage. In Fig. 14 (b), representing the Pre-context block of Atrous convolution, the FM becomes more organized and discriminative. This stage incorporates contextual refinement through 1 × 1 convolution and global average pooling, enabling the network to adaptively weight local features relative to the broader spatial context. As a result, the FM highlights essential structural regions, particularly the shell and contour boundaries, while suppressing irrelevant background noise. The patterns observed here demonstrate the effectiveness of contextual filtering in emphasizing semantically relevant regions before the main convolution stage. In Fig. 14(c), corresponding to the main convolution of Atrous convolution, the feature maps exhibit multi-scale activation patterns due to the application of atrous convolutions with varying dilation rates (2, 4, and 6). This enables simultaneous capture of fine-grained details and larger spatial dependencies, reflecting both local texture and global nut shape variations. The activations show more prominent and coherent structural patterns, indicating that the model successfully integrates multiple receptive field scales to extract discriminative morphological information critical for nut classification.

Feature Map Results of Pre-Context Block of Atrous Convolution.

Finally, in Fig. 14 (d), representing the Post-context block of the Atrous convolution, the feature maps demonstrate strong localization of class-relevant features, with high activation intensities concentrated around distinctive nut regions. The integration of contextual aggregation and channel recalibration through 1 × 1 convolution and global pooling ensures that redundant activations are minimized while salient spatial cues are preserved. These refined feature maps convey semantically rich and noise-suppressed representations that are well suited to the subsequent classification layers. Overall, the sequential transformation from Fig. 14 (a) through Fig. 14 (d) illustrates the hierarchical feature learning behavior of the proposed Atrous convolution framework. The model transitions from low-level structural extraction to high-level contextual encoding, enabling superior discrimination among visually similar nut categories and thereby contributing to the high classification accuracy achieved by the DAC-GAN.
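One way to read "channel recalibration through 1 × 1 convolution and global pooling" is as a gating step: each channel is pooled to a single value, the pooled vector is mixed by a 1 × 1 convolution, and the result rescales the channels. The sketch below follows that reading. The sigmoid gate, the function names, and the weight matrix are assumptions made for illustration, since the exact wiring of the context blocks is not given in this section.

```python
import math


def global_average_pool(feature_maps):
    """Reduce each channel (a 2-D list) to its mean activation."""
    return [sum(map(sum, ch)) / (len(ch) * len(ch[0])) for ch in feature_maps]


def pointwise_conv(vector, weights):
    """1 x 1 convolution on a pooled vector: a linear mix across channels."""
    return [sum(w * v for w, v in zip(row, vector)) for row in weights]


def context_recalibrate(feature_maps, weights):
    """Scale every channel by a sigmoid gate derived from image-level context.

    The sigmoid gating is an assumption (squeeze-and-excitation style),
    not a detail confirmed by the paper.
    """
    context = pointwise_conv(global_average_pool(feature_maps), weights)
    gates = [1.0 / (1.0 + math.exp(-c)) for c in context]
    return [[[g * x for x in row] for row in ch]
            for g, ch in zip(gates, feature_maps)]


# Two hypothetical 2 x 2 channels and an identity mixing matrix.
fmaps = [[[1.0, 3.0], [5.0, 7.0]], [[0.0, 0.0], [0.0, 0.0]]]
identity = [[1.0, 0.0], [0.0, 1.0]]
pooled = global_average_pool(fmaps)
recalibrated = context_recalibrate(fmaps, identity)
```

In this toy case the strongly activated channel keeps almost all of its magnitude while the silent channel stays at zero, which mirrors the suppression of redundant activations described above.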

Cross-validation assessment of DAC-GAN

To evaluate the generalization capability and robustness of the proposed DAC-GAN model for nut type classification, a comprehensive comparative analysis was conducted against several state-of-the-art CNN architectures, including DenseNet, VGG19, Inception, Xception, MobileNet, and ResNet. The evaluation was carried out using the augmented nut dataset, wherein the DAC-GAN was applied on CKPF nut images with 5-fold cross-validation to enhance discriminative feature representation before classification.
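The 5-fold protocol can be sketched as follows. The shuffling seed, the function name, and the absence of class stratification are assumptions; the text does not state how the folds were drawn.

```python
import random


def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, val_idx) pairs; every sample is validated exactly once."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Interleaved slicing gives k folds whose sizes differ by at most one.
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        val = folds[i]
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, val


# Hypothetical 20-sample dataset, split into 5 folds.
splits = list(k_fold_indices(20, k=5))
```

Each model would then be trained on `train` and scored on `val` for every pair, giving one accuracy value per fold.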

In the first validation fold as shown in Table 11, all CNN models were trained and tested on distinct subsets to assess their adaptability to unseen nut images. Traditional architectures such as DenseNet and VGG19 achieved moderate accuracies below 80%, primarily due to their limited capability in modeling fine-grained textural variations between nut types. Inception and Xception exhibited slightly improved generalization, aided by their deeper and multi-branch convolutional designs. ResNet reached 83.82%, benefiting from residual connections that support gradient stability. However, the proposed DAC-GAN model achieved a remarkable 99.78% accuracy, demonstrating superior robustness and feature extraction capability through its CKPF based augmentation and dual-attention convolutional learning mechanism.

In Fold 2 as shown in Table 12, the DAC-GAN continued to exhibit exceptional consistency with an accuracy of 99.81%, maintaining its dominance over conventional CNNs. The model’s adversarial learning process and noise-vector augmentation allowed it to generalize across variable lighting and orientation conditions in the nut dataset. The CKPF preprocessing emphasized shape contours and morphological boundaries, enabling the GAN to generate highly discriminative features. Other CNNs showed only marginal improvement from Fold 1, reflecting their architectural limitations in capturing nonlinear inter-class dependencies. The DAC-GAN’s stability across folds validates its strong generalization and high sensitivity toward structural variations among nut classes.

The third cross-validation fold as shown in Table 13 reconfirms the reliability and resilience of the proposed DAC-GAN architecture. With an accuracy of 99.79%, the model continues to demonstrate negligible deviation across different data partitions, signifying strong feature embedding stability. In contrast, traditional CNNs experienced minor fluctuations, indicating their sensitivity to dataset division and overreliance on localized texture cues. DAC-GAN’s generative learning and contextual fusion enabled robust recognition of nuts with complex surface patterns or partial occlusions, ensuring balanced classification performance across all classes.

In the fourth fold as shown in Table 14, the DAC-GAN sustained an outstanding 99.80% accuracy, reaffirming its exceptional stability, generalization, and robustness across all validation sets. The minimal variance observed across folds demonstrates the model’s resistance to overfitting and its capacity to learn consistent representations under diverse imaging conditions. The fusion of adversarial augmentation with multimodal attention enables DAC-GAN to effectively distinguish visually similar nut categories by leveraging both boundary and surface texture cues. This strong and uniform performance indicates that the DAC-GAN has achieved a high level of feature invariance and domain adaptability, outperforming all conventional CNN models by a significant margin.

In the fifth fold as shown in Table 15, the DAC-GAN achieved its highest accuracy of 99.83%, underscoring the effectiveness of its dual-attention and contextual learning structure. The generated synthetic samples enhanced inter-class separation by improving the diversity of training data without introducing bias. This peak performance demonstrates that the DAC-GAN successfully integrates both local shape descriptors through CKPF and global contextual dependencies. Competing CNNs such as Inception and MobileNet showed moderate increases but remained below 83%, confirming their relative inability to handle the subtle texture overlap present among different nut species.

ROC and PR curves of DAC-GAN.

The ROC and Precision-Recall curve comparisons presented in Fig. 15 clearly illustrate the superior discriminative capability and robustness of the proposed DAC-GAN model relative to conventional CNN models. As observed in the ROC curve (Fig. 15 (a)), the DAC-GAN exhibits a steep ascent toward the upper-left corner, with an AUC of 99.83%, indicating near-perfect classification performance and exceptional ability to differentiate between nut categories. In contrast, baseline CNN models demonstrate shallower trajectories with lower AUC scores, ranging between 77% and 83%, reflecting limited sensitivity and higher false-positive tendencies. The strong separation of the DAC-GAN curve from all others confirms its enhanced true positive rate (TPR) across varying false positive rates (FPR), a result of its CKPF based feature enhancement that enables more precise boundary and texture learning. In the Precision-Recall (PR) curve (Fig. 15 (b)), DAC-GAN consistently outperforms competing models, maintaining both high precision and recall across all thresholds. Its curve remains closely aligned with the top-right region of the graph, signifying minimal trade-off between these two critical measures and confirming that the model sustains high confidence in its predictions even under varying data distributions. The conventional CNNs, on the other hand, display moderate PR curves with gradual declines, indicating sensitivity to intra-class variations and limited feature abstraction. The high PR-AUC of 99.83% for the DAC-GAN underscores its capacity to capture deep contextual and geometric relationships, allowing it to correctly classify even subtle nut surface differences that challenge traditional convolutional models. Overall, the combined ROC and PR analyses substantiate that the DAC-GAN delivers superior generalization, precision, and robustness in nut classification.
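For reference, the area under a ROC curve equals the probability that a randomly chosen positive sample receives a higher score than a randomly chosen negative one. The sketch below computes it that way for a single one-vs-rest problem. The labels and scores are hypothetical, and the value is returned on a 0 to 1 scale rather than as a percentage.

```python
def roc_auc(labels, scores):
    """AUC as the probability that a positive outranks a negative (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative sample")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical scores for one nut class against the rest.
labels = [1, 1, 0, 0, 1, 0]
scores = [0.9, 0.8, 0.7, 0.3, 0.6, 0.2]
auc = roc_auc(labels, scores)

# A classifier that ranks every positive above every negative.
auc_separable = roc_auc([1, 1, 0, 0], [0.9, 0.8, 0.2, 0.1])
```

For the eight-class task, one such value would be computed per class and then averaged; the averaging scheme used for Fig. 15 is not specified in the text.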

Statistical significance and error bar assessment of DAC-GAN

To statistically validate the superiority and consistency of the proposed DAC-GAN model, a 5-fold cross-validation significance analysis was performed and compared with established CNN baselines. The statistical evaluation was conducted using paired t-tests, in which the per-fold accuracies of DAC-GAN were compared against those of each conventional architecture. The resulting statistics quantify not only the mean performance difference but also the degree of statistical reliability of the observed improvement.

The t-statistic and corresponding p-value were calculated to determine whether the accuracy differences were statistically significant at the 95% confidence level (p < 0.05). The consolidated results of this comparative analysis are shown in Table 16, which demonstrates the highly significant performance advantage of the proposed DAC-GAN model over all competing CNN architectures. Across the five folds, DAC-GAN achieved an average accuracy of 99.80%, whereas the baseline networks ranged between 78% and 84%. The mean accuracy difference between DAC-GAN and the next best model (ResNet) is approximately +15.93 percentage points, with even larger gains over shallower models such as DenseNet and VGG19 (exceeding +20 percentage points). The high t-statistics (ranging from 45 to 55) and consistently low p-values (< 0.0001) confirm that these improvements are statistically significant and not attributable to random variation.
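The paired t-statistic is the mean of the per-fold accuracy differences divided by their standard error. The sketch below shows the calculation. The DAC-GAN values are the fold accuracies quoted in the text, whereas the baseline values are hypothetical placeholders chosen only for illustration and are not taken from Table 16.

```python
import math
import statistics


def paired_t_statistic(a, b):
    """t = mean(d) / (stdev(d) / sqrt(n)) for per-fold differences d = a - b."""
    diffs = [x - y for x, y in zip(a, b)]
    n = len(diffs)
    return statistics.mean(diffs) / (statistics.stdev(diffs) / math.sqrt(n))


dac_gan = [99.78, 99.81, 99.79, 99.80, 99.83]   # fold accuracies quoted in the text
baseline = [83.82, 84.50, 83.10, 84.90, 83.40]  # HYPOTHETICAL baseline, illustration only

t_stat = paired_t_statistic(dac_gan, baseline)

# Two-sided 5% critical value of Student's t with n - 1 = 4 degrees of freedom.
T_CRIT_DF4 = 2.776
significant = abs(t_stat) > T_CRIT_DF4
```

With only five folds the test has four degrees of freedom, so the difference must exceed 2.776 standard errors to be significant at the 5% level.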

The consolidated 5-fold cross-validation results in Table 17 demonstrate the performance stability and robustness of the proposed DAC-GAN model for nut type classification. The model achieved an average accuracy of 99.80% with a minimal standard deviation (± 0.02), indicating nearly identical performance across all folds. The narrow 95% confidence interval [99.78, 99.82] further confirms that DAC-GAN’s performance varies very little across folds, reflecting its consistent generalization and resilience to dataset partitioning. Across all performance metrics, the DAC-GAN consistently achieved near-perfect values with extremely low variance. The strong sensitivity and recall affirm its ability to detect all nut types accurately, while the high precision and specificity demonstrate minimal false classifications. The superior IoU values (> 99.6%) signify the model’s precise localization capability within the feature space, essential for recognizing subtle morphological distinctions among nut classes.

The error bar diagram in Fig. 16, derived from the 5-fold cross-validation analysis, provides a clear visual representation of the stability, reliability, and statistical consistency of the proposed DAC-GAN model across multiple performance metrics. All evaluated metrics cluster tightly within the narrow range of 99.6% to 99.9%, with minimal standard deviation (± 0.02–0.03). This demonstrates that the DAC-GAN maintains uniform performance across all folds, exhibiting extremely low variance and strong reproducibility regardless of the training or validation partition. The near-equal height of the bars and the small error margins emphasize that the model’s predictive capability is not only high but also statistically consistent, with negligible fluctuation between runs. The consistently high Specificity (99.91%) and Sensitivity (99.86%) confirm that DAC-GAN is equally effective in correctly identifying nut categories and avoiding false classifications. The F1-Score (99.84%) and IoU (99.65%) further reflect the model’s excellent balance between precision and recall, signifying reliable segmentation and decision accuracy.
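The summary statistics can be recomputed directly from the five fold accuracies reported in the cross-validation assessment. The sketch below assumes a sample standard deviation and a Student-t interval; the estimator behind the reported interval is not stated, so the bounds obtained this way may differ slightly from those in Table 17.

```python
import math
import statistics

fold_accuracy = [99.78, 99.81, 99.79, 99.80, 99.83]  # per-fold values quoted in the text

mean_acc = statistics.mean(fold_accuracy)
std_acc = statistics.stdev(fold_accuracy)  # sample standard deviation (n - 1)

# Student's t, 97.5th percentile, 4 degrees of freedom (assumed interval method).
T_CRIT_DF4 = 2.776
half_width = T_CRIT_DF4 * std_acc / math.sqrt(len(fold_accuracy))
ci_95 = (mean_acc - half_width, mean_acc + half_width)
```

The mean and standard deviation computed this way round to the 99.80% and ± 0.02 quoted above.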

Confidence error bar of DAC-GAN.

Confusion matrix and failure analysis of DAC-GAN

The classification behavior of the proposed DAC-GAN model was further examined through confusion matrix analysis to assess its per-class discrimination capability and identify potential areas of misclassification. The confusion matrix, derived from the 800 testing images across eight nut categories (100 samples per class), demonstrates a high degree of diagonal dominance, confirming that the model achieved nearly perfect class-level predictions. Each nut type (Brazil nut, Cashew, Chestnut, Peanut, Pecan nut, Pistachio, Macadamia, and Walnut) was classified with exceptional accuracy, with only a few isolated instances of cross-class confusion. The overall classification accuracy reached 99.83%, aligning with the statistical and cross-validation results and thereby validating the reliability of DAC-GAN under unseen test conditions. The failure analysis summarized in Table 18 provides deeper insight into the residual classification errors of the proposed DAC-GAN model for nut type identification.

Out of 800 test samples, only 25 images (1.38%) were misclassified, corresponding to an overall accuracy of 99.83%, consistent with the cross-validation results. Upon closer examination, these misclassifications were primarily attributed to high inter-class similarity and to variations in lighting and orientation in certain test images. Despite these minor misclassifications, the Mean Intersection over Union (IoU) across all nut categories remained exceptionally high at 99.70%, with a low standard deviation of 0.21%, reflecting the model’s robust spatial feature learning and its consistent, stable performance across folds. This deeper evaluation highlights that DAC-GAN’s few errors occur in visually ambiguous boundary cases rather than due to systemic model weaknesses, thereby reinforcing the model’s overall robustness and generalization capability. Minor misclassifications were primarily observed among the Chestnut, Peanut, and Pecan nut categories, each showing a slightly higher error rate of 2%, attributed to subtle morphological overlaps and similar surface textures between these nut types. The DAC-GAN’s dual-attention mechanism, while highly effective in distinguishing fine-grained geometric features, occasionally exhibited marginal uncertainty in cases of high visual resemblance or when samples contained illumination artifacts, partial occlusion, or background clutter. In contrast, nuts with more distinctive shapes and patterns, such as Walnut and Macadamia, exhibited minimal misclassification (1%) and maintained high IoU stability.

The low standard deviation across all classes confirms the model’s statistical consistency and demonstrates that misclassifications were isolated rather than systematic. This indicates that DAC-GAN’s failure cases were not due to structural weaknesses in the architecture but rather to dataset-specific visual ambiguities. The integration of CKPF and adversarial feature enhancement effectively minimized class overlap and reinforced inter-class separability, ensuring that even in challenging conditions, the model retained near-perfect classification accuracy.
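Per-class precision, recall, F1, and IoU can all be derived from the counts in a confusion matrix. The sketch below shows the definitions on a hypothetical three-class matrix; the counts are illustrative and are not taken from Table 18.

```python
def per_class_metrics(cm):
    """Return {class_index: metrics} from a square confusion matrix.

    cm[i][j] counts samples of true class i predicted as class j.
    """
    n = len(cm)
    metrics = {}
    for c in range(n):
        tp = cm[c][c]
        fn = sum(cm[c]) - tp                        # true class c, predicted elsewhere
        fp = sum(cm[r][c] for r in range(n)) - tp   # other classes predicted as c
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        iou = tp / (tp + fp + fn) if tp + fp + fn else 0.0
        metrics[c] = {"precision": precision, "recall": recall,
                      "f1": f1, "iou": iou}
    return metrics


# HYPOTHETICAL 3-class matrix with 100 samples per class.
cm = [[98, 2, 0],
      [1, 99, 0],
      [0, 0, 100]]
result = per_class_metrics(cm)
```

Because IoU counts both false positives and false negatives in its denominator, it is never higher than precision or recall for the same class, so a 2% error rate lowers IoU more than it lowers recall.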

Confusion matrix and error heat map for validation dataset with DAC-GAN.

The confusion matrix of the proposed DAC-GAN model in Fig. 17 for validation dataset provides a clear visualization of the model’s outstanding classification performance across all eight nut categories. The matrix exhibits a strong diagonal dominance, where nearly all the samples are correctly classified into their respective categories. Out of 14,880 validation samples, only 25 images were misclassified, confirming an overall classification accuracy of 99.83%. Each class shows almost perfect prediction alignment, indicating that the DAC-GAN effectively captures the unique morphological and textural characteristics of each nut type. The overall structure of the matrix confirms the model’s strong inter-class separability, excellent feature generalization, and consistent recognition capability across all nut categories. The normalized error heat map provides a quantitative view of the relative misclassification proportions for each nut class, illustrating the fine-grained distribution of model errors. All diagonal cells approach a normalized value of 1.000, confirming near-perfect prediction confidence and accurate class correspondence. The minimal off-diagonal intensity values indicate that misclassification occurrences are exceptionally rare and uniformly distributed across classes, rather than concentrated in specific categories. This further implies that the DAC-GAN model does not exhibit class bias and maintains balanced learning behavior during training and testing.
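The relationship between the misclassification count and the reported accuracy, and the row normalization behind an error heat map, can be sketched as follows. The validation counts are those quoted above; the small matrix and the function names are hypothetical.

```python
def accuracy_from_errors(total, misclassified):
    """Fraction of correctly classified samples."""
    return (total - misclassified) / total


def row_normalize(cm):
    """Divide each row by its sum so cell (i, j) is the share of class i predicted as j."""
    return [[v / sum(row) if sum(row) else 0.0 for v in row] for row in cm]


# Validation counts quoted in the text: 25 errors in 14,880 samples.
val_accuracy = accuracy_from_errors(14880, 25)
val_accuracy_pct = round(100 * val_accuracy, 2)

# HYPOTHETICAL 2-class matrix to show the normalization.
heat_map = row_normalize([[99, 1], [0, 100]])
```

After normalization each row sums to 1, so diagonal cells read as per-class recall and off-diagonal cells as per-class error shares.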

Confusion matrix and error heat map for testing dataset with DAC-GAN.

The confusion matrix in Fig. 18 obtained from the testing dataset of 800 images (100 samples per nut class) provides a clear visualization of the exceptional classification performance of the proposed DAC-GAN model. The matrix is strongly dominated by the main diagonal, indicating that almost all samples were correctly classified into their respective nut categories. Out of the total 800 testing images, only one image was misclassified, confirming an overall testing accuracy of 99.83%. Every class achieved near-perfect recognition, demonstrating that the model has learned highly discriminative features capable of distinguishing morphological and textural differences between nut types. The normalized error heat map provides a more detailed quantitative interpretation of the DAC-GAN’s class-wise performance by converting raw confusion counts into normalized proportions. Each diagonal entry exhibits a value approaching 1.000, signifying perfect prediction confidence and complete dominance of correct classifications for all nut classes. The off-diagonal elements show negligible values (< 0.005), confirming that misclassifications are statistically insignificant and evenly distributed across classes without any class bias. This indicates that the DAC-GAN not only recognizes distinct nut features effectively but also maintains stability against variations in lighting, orientation, and surface texture in test samples.

Ablation study of DAC-GAN

The ablation study was conducted to systematically evaluate the contribution of each architectural component and preprocessing module to the overall performance of the proposed DAC-GAN model. The study examined the impact of three major factors:

- The use of CKPF images for enhanced geometric and textural feature extraction;
- The integration of Atrous (dilated) convolution blocks, including Pre-context and Post-context feature refinement layers; and
- The influence of combining these modules within the dual-attention conditional adversarial framework of DAC-GAN.

Each variant of the model was trained and validated on the same augmented nut dataset to ensure fairness in comparison. The evaluation metrics include Accuracy, Mean, Variance, Standard Deviation (STD), Precision, Recall, Intersection over Union (IoU), and F-Score. Using grayscale preprocessing enabled the model to focus on structural and geometric features rather than noisy or misleading color cues. Furthermore, this conversion also reduces computational complexity, memory usage, and overfitting risk, particularly important when working with GAN-generated augmented datasets that expand the sample space significantly. To empirically validate this design decision, an ablation study comparing RGB input versus Grayscale with filter preprocessing was conducted. The consolidated results are presented in Table 19, demonstrating the incremental performance gains achieved by integrating each architectural refinement.
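The grayscale-plus-filter preprocessing can be illustrated with a minimal sketch. The luma weights (ITU-R BT.601) are an assumption, since the conversion formula is not given, and a Sobel gradient magnitude stands in for the filtering step because Sobel filtering is one of the filters named in this paper. The CKPF corner extraction itself is not reproduced here.

```python
import math


def to_grayscale(rgb_image):
    """Luma conversion with ITU-R BT.601 weights (assumed, not from the paper)."""
    return [[0.299 * r + 0.587 * g + 0.114 * b for (r, g, b) in row]
            for row in rgb_image]


def sobel_magnitude(gray):
    """Gradient magnitude on interior pixels; border pixels are left at 0."""
    gx_k = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
    gy_k = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]
    h, w = len(gray), len(gray[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gx = sum(gx_k[i][j] * gray[y + i - 1][x + j - 1]
                     for i in range(3) for j in range(3))
            gy = sum(gy_k[i][j] * gray[y + i - 1][x + j - 1]
                     for i in range(3) for j in range(3))
            out[y][x] = math.hypot(gx, gy)
    return out


# HYPOTHETICAL 3 x 4 image with a vertical black-to-white edge.
black, white = (0, 0, 0), (255, 255, 255)
image = [[black, black, white, white] for _ in range(3)]
edges = sobel_magnitude(to_grayscale(image))
```

The response depends only on intensity differences, which is the sense in which grayscale filtering emphasizes shape and edge structure over color.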

The ablation results presented in Table 19 highlight the progressive performance enhancement achieved through the integration of the key DAC-GAN components. The Baseline GAN trained on raw images achieved an accuracy of 93.28%, establishing the reference point for comparison. Incorporating CKPF images improved accuracy to 95.62%, confirming that geometric feature isolation enhances the model’s ability to distinguish fine structural and morphological variations between nut types. When Atrous convolution layers were introduced, the model improved significantly owing to their expanded receptive field and enhanced contextual feature extraction. The inclusion of the Pre-context and Post-context blocks further improved local-global feature fusion, raising accuracy from 96.47% to 97.48% for raw images and from 98.32% to 99.52% for CKPF images. This demonstrates the importance of contextual refinement in ensuring balanced feature propagation and minimizing the loss of discriminative details.

To justify the use of grayscale preprocessing, an additional ablation experiment compared RGB input with grayscale-with-filter input. As shown in Table 19, grayscale processing combined with CKPF extraction achieved an accuracy of 99.83%, compared with 98.56% for RGB-based input, together with better stability. This improvement stems from better texture–geometry emphasis, enhanced edge and shape prominence, suppression of lighting-dependent color variations, and reduced noise sensitivity. The results confirm that discarding RGB data did not degrade model performance but rather improved robustness and generalization, indicating that spatial-textural features are more critical than color information for nut-type discrimination.

The integration of both CKPF preprocessing and Atrous convolution within the conditional GAN framework produced the best performance, achieving an overall accuracy of 99.83%, an F-Score of 99.69%, and an IoU of 99.69%, with minimal variance (0.04) and standard deviation (0.20). These results validate the synergistic contribution of DAC-GAN’s components: CKPF enhances input feature quality, Atrous convolution expands spatial understanding, and the adversarial learning mechanism refines feature discrimination through feedback-driven optimization. The ablation study thus confirms that every enhancement within the DAC-GAN design contributes meaningfully to classification performance. The proposed DAC-GAN with CKPF not only achieves the highest accuracy and stability but also demonstrates superior robustness, generalization, and feature adaptability, establishing it as a SOTA framework for fine-grained nut classification.

Recent developments in federated learning (FL) provide a promising pathway to extend the DAC-GAN framework for distributed and privacy-preserving nut classification across multiple edge devices and collection sites. Combining FL with few-shot learning54 and ensemble strategies can significantly improve classification performance under non-identically distributed data conditions. A privacy-aware FL framework55 using homomorphic encryption allows secure image-based disease detection without compromising model accuracy.

Conclusion and future enhancements

This research classifies eight classes of nuts by combining effective data preprocessing through filtering, synthetic image generation through DCGAN, and a refined atrous convolution within the proposed DAC-GAN model. The DAC-GAN model classifies nut types by creating synthetic images and extracting the relevant pixels from the nut images. The main objectives of this work are synthetic image generation and the creation of pre-context and post-context blocks in the atrous convolution to provide accurate nut classification. The first contribution is the use of DCGAN for data augmentation of nut images, which improves the model’s capacity to generalize. The second contribution is efficient data preprocessing through feature selection, which filters the essential edge details from the nut images by generating corner key point featured (CKPF) nut images. The third contribution is the design of the hybrid DAC-GAN model, which integrates DCGAN and corner key point extraction followed by atrous convolution. The existing atrous convolution was refined by appending pre-context and post-context blocks that add image-level information to the features. The novelty of this research lies in the synergistic integration of multiple advanced DL concepts within a single, unified framework for fine-grained nut classification. Specifically, the proposed DAC-GAN model combines DCGAN-based data augmentation, CKPF filtration, and context-aware atrous convolution enhanced with pre-context and post-context fusion blocks, acquiring spatial features from the nut images at various resolutions. This integrated design enables the model to simultaneously address data scarcity, capture multi-scale spatial dependencies, and preserve geometric distinctiveness in nut images.

The main challenges in developing the DAC-GAN model were forming the synthetic nut images with the DCGAN and integrating the pre-context and post-context blocks into the atrous convolution to improve classification accuracy. The Common Nut dataset containing 4,000 nut images was used to classify eight classes of nuts. The dataset was initially divided so that 20% (800 images) was reserved exclusively for testing, ensuring that the evaluation process remained completely unbiased and independent of any augmented or training data. This strict separation provided a fair assessment of the model’s generalization ability on unseen samples. The augmented and synthetic nut images are converted to grayscale and then filtered to form Sobel-filtered, Canny edge-filtered, corner key point-filtered, and kernel-isolated nut images. The filtered images are processed with the atrous convolution, which contains the pre-context and post-context blocks and a final SoftMax layer with 8 neurons. The augmented training images and synthetic nut images are processed with the existing CNN models and the proposed DAC-GAN to analyze performance. The implementation results show that the proposed DAC-GAN model achieves an accuracy of 99.83% for nut classification.

To further enhance the performance of the DAC-GAN model, the atrous convolution can be validated for various dilation rates and extended by implementing switchable atrous convolution. The DAC-GAN model can also be integrated with a cross-attention mechanism in the pre-context and post-context blocks. Adapting FL concepts, future research can focus on developing a Federated DAC-GAN, where individual nut processing units train local GAN models for data augmentation and classification, and only encrypted model parameters are aggregated on a central server. This approach could enable cross-location model collaboration and preserve proprietary dataset integrity while improving global model generalization.
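The server-side aggregation step of such a federated setup can be sketched as a sample-size-weighted average of the client parameters, in the style of federated averaging. The function name, the flat parameter vectors, and the client sizes are hypothetical, and the encryption of parameters mentioned above is deliberately left out of this sketch.

```python
def federated_average(client_weights, client_sizes):
    """Sample-size-weighted mean of per-client parameter vectors (FedAvg-style)."""
    if len(client_weights) != len(client_sizes):
        raise ValueError("one size is required per client")
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(n_params)
    ]


# Two HYPOTHETICAL processing sites holding 100 and 300 local images.
site_params = [[1.0, 2.0], [3.0, 4.0]]
site_sizes = [100, 300]
global_params = federated_average(site_params, site_sizes)
```

Weighting by local dataset size lets sites with more images contribute proportionally more to the global model, while the raw images never leave the site.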

Data availability

The experimental data that support the results and findings of this paper are available in a public repository: Ruopeng An, Joshua Perez-Cruet, and Junjie Wang. (2022). 11 Common Nut Types for Image Classification. Kaggle. https://www.kaggle.com/datasets/ruopengan/11-common-nut-types-for-image-classification, DOI: 10.34740/kaggle/ds/2330904.

References

Vidyarthi, S. K., Singh, S. K., Tiwari, R., Xiao, H. W. & Rai, R. Classification of first quality fancy cashew kernels using four deep convolutional neural network models. J. Food Process. Eng. 43, e13459 (2020).

Ganganagowdar, N. V. & Siddaramappa, H. K. Recognition and classification of white wholes (WW) grade cashew kernel using artificial neural networks. Acta Sci. Agron. 38, 145–155 (2016).

Halac, D., Sokic, E. & Turajilic, E. Almonds classification using supervised learning methods. Proc. XXVI Int. Conf. Inf. Commun. Autom. Technol. (ICAT), 1–6 (IEEE, 2017).

Teimouri, N., Omid, M., Mollazade, K. & Rajabipour, A. An artificial neural network-based method to identify five classes of almond according to visual features. J. Food Process. Eng. 39, 625–635 (2016).

Karnam, S. N. et al. Precised cashew classification using machine learning. Eng. Technol. Appl. Sci. Res. 14, 17414–17421 (2024).

Koshti, K. et al. Real-time classification and segregation of cashew nut using computer vision. Int. Adv. Res. J. Sci. Eng. Technol. 9, 1–5 (2022).

Nag, K. S. & Veena Devi, S. V. Classification of cashew kernels into wholes and splits using machine vision approach. Tuijin Jishu/J Propuls. Technol. 44, 2668–2680 (2023).

Dheir, I. M., Abu Mettleq, A. S., Elsharif, A. A. & Abu-Naser, S. S. Classifying nuts types using convolutional neural network. Int. J. Acad. Inf. Syst. Res. 3, 12–18 (2019).

Yurdakul, M., Atabaş, İ. & Taşdemir, Ş. Flower pollination algorithm-optimized deep CNN features for almond (Prunus dulcis) classification. Proc. Int. Conf. Emerg. Syst. Intell. Comput. (ESIC), 433–438 (IEEE, 2024).

Yurdakul, M., Atabaş, I. & Taşdemir, S. Almond (Prunus dulcis) varieties classification with genetic designed lightweight CNN architecture. Eur. Food Res. Technol. 250, 1–10 (2024).

Narendra, V., Krishanamoorthi, M., Shivaprasad, G., Amitkumar, V. & Kamath, P. Almond kernel variety identification and classification using decision tree. J. Eng. Sci. Technol. 16, 3923–3942 (2021).

Subbarao, M. V., Ram, G. C. & Varma, D. R. Performance analysis of pistachio species classification using support vector machine and ensemble classifiers. Proc. Int. Conf. Recent Trends Electron. Commun. (ICRTEC), 1–6 (IEEE, 2023). https://doi.org/10.1109/ICRTEC56977.2023.10111889

Ozkan, I. A., Koklu, M. & Saraçoğlu, R. Classification of pistachio species using improved K-NN classifier. Prog Nutr. 23, 2 (2021).

Singh, D. et al. Classification and analysis of pistachio species with pre-trained deep learning models. Electronics 11, 981 (2022).

Kaur, A., Kukreja, V., Upadhyay, D., Aeri, M. & Sharma, R. An effective pistachio classification by ensembling fine-tuned ResNet20 and densenet models. Proc. IEEE Int. Conf. Interdiscip Approaches Technol. Manag Soc. Innov. (IATMSI). 2, 1–6 (2024).

Omid, M., Firouz, M. S., Nouri-Ahmadabadi, H. & Mohtasebi, S. S. Classification of peeled pistachio kernels using computer vision and color features. Eng. Agric. Environ. Food. 10, 259–265 (2017).

Aktaş, H. Classification of hazelnut kernels with deep learning. Postharvest Biol. Technol. 197, 112225. https://doi.org/10.1016/j.postharvbio.2022.112225 (2023).

Gencturk, B., Arsoy, S. & Taspinar, Y. S. Detection of hazelnut varieties and development of mobile application with CNN data fusion feature reduction-based models. Eur. Food Res. Technol. 250, 97–110 (2024).

Sabanci, K., Koklu, M. & Unlersen, M. F. Classification of Siirt and long type pistachios (Pistacia Vera L.) by artificial neural networks. Int. J. Intell. Syst. Appl. Eng. 3, 86–89 (2015).

Caner, K. et al. Classification of hazelnut cultivars: comparison of DL4J and ensemble learning algorithms. Not Bot. Horti Agrobot Cluj-Napoca. 48, 2316–2327 (2020).

Keles, O. & Taner, A. Classification of hazelnut varieties by using artificial neural network and discriminant analysis. Span. J. Agric. Res. 19, e0211 (2021).

Taner, A., Öztekin, Y. B. & Duran, H. Performance analysis of deep learning CNN models for variety classification in hazelnut. Sustainability 13, 6527 (2021).

Peng, C. J. et al. Defects recognition of pine nuts using hyperspectral imaging and deep learning approaches. Microchem J. 201, 110521. https://doi.org/10.1016/j.microc.2024.110521 (2024).

Bayrakdar, S., Çomak, B., Başol, D. & Yücedag, İ. Determination of type and quality of hazelnut using image processing techniques. Proc. Signal Process. Commun. Appl. Conf. (SIU), 616–619 (IEEE, 2015). https://doi.org/10.1109/SIU.2015.7129899

Dönmez, E., Kılıçarslan, S. & Diker, A. Classification of hazelnut varieties based on big-transfer deep learning model. Eur. Food Res. Technol. 250, 1433–1442. https://doi.org/10.1007/s00217-024-04468-1 (2024).

Erbas, N., Çinarer, G. & Kiliç, K. Classification of hazelnuts according to their quality using deep learning algorithms. Czech J. Food Sci. 40, 240–248 (2022).

Patel, F., Mewada, S. & Degadwala, S. Recognition of pistachio species with transfer learning models. Proc. Int. Conf. Self Sustainable Artif. Intell. Syst. (ICSSAS), 250–255 (2023).

Sayed, G. I., Hassanien, A. E. & Soliman, M. Explainable AI and slime mould algorithm for classification of pistachio species. In Artificial Intelligence: A Real Opportunity in the Food Industry 29–43 (Springer International Publishing, Cham, 2023).

Yildiz, T. Classification of hybrid chestnut cultivars (Castanea sativa) registered in Türkiye with artificial neural networks based on some physical properties of their nuts. Turk. J. Agric. For. 48, 1–9. https://doi.org/10.55730/1300-011X.3165 (2024).

Idress, K. A. D., Öztekin, Y. B., Gadalla, O. A. A. & Baitu, G. P. Classification of pistachio varieties using pre-trained architectures and a proposed convolutional neural network model. In Proc. 15th Int. Congr. Agric. Mech. Energy Agric. (ANKAgEng 2023), Lect. Notes Civ. Eng. 458, 183–192 (Springer, Cham, 2024). https://doi.org/10.1007/978-3-031-51579-8_15

Aktaş, H., Kızıldeniz, T. & Ünal, Z. Classification of pistachios with deep learning and assessing the effect of various datasets on accuracy. Food Meas. 16, 1983–1996. https://doi.org/10.1007/s11694-022-01313-5 (2022).

Lisda, L., Kusrini, K. & Ariatmanto, D. Classification of pistachio nut using convolutional neural network. Inf. J. Ilm Bid Teknol Inf. Komun. 8, 71–77 (2023).

Hosseinpour-Zarnaq, H., Omid, M., Taheri-Garavand, A., Nasiri, A. & Mahmoudi, A. Acoustic signal-based deep learning approach for smart sorting of pistachio nuts. Postharvest Biol. Technol. 185, 111778. https://doi.org/10.1016/j.postharvbio.2021.111778 (2022).

Solak, S. & Altinişik, U. A new method for classifying nuts using image processing and k-means++ clustering. J. Food Process. Eng. 41, e12859. https://doi.org/10.1111/jfpe.12859 (2018).

Kang, H. et al. Identification of hickory nuts with different oxidation levels by integrating self-supervised and supervised learning. Front. Sustain. Food Syst. 7, 1144998. https://doi.org/10.3389/fsufs.2023.1144998 (2023).

Kumar, P. K. G., Shetty, A. S., Prabhu, S., Deepika & Sowjanya. A review on classification and grading of Areca nuts using machine learning and image processing techniques. Int. J. Adv. Res. Comput. Commun. Eng. 11, 1–7 (2022).

Koshti, K., Patel, D., Patoliya, N., Patel, M. & Karsaliya, Y. Real-time classification and segregation of cashew nut using computer vision. Int. Adv. Res. J. Sci. Eng. Technol. 9, 1–5 (2022).

Shetty, A. S., Prabhu, S., Kumar, P. K. G., Deepika & Sowjanya. Classification and grading of Areca nuts using machine learning and image processing techniques. Int. J. Creat. Res. Thoughts (IJCRT) 10, 1–8 (2022).

Li, Z. et al. Classification of peanut images based on multi-features and SVM. IFAC-PapersOnLine 51, 726–731. https://doi.org/10.1016/j.ifacol.2018.08.110 (2018).

Yang, H., Ni, J. & Gao, J. A novel method for peanut variety identification and classification by improved VGG16. Sci. Rep. 11, 15756. https://doi.org/10.1038/s41598-021-95240-y (2021).

Balasubramaniyan, M. & Navaneethan, C. Color contour texture-based peanut classification using deep spread spectral features classification model for assortment identification. Sustain. Energy Technol. Assess. 54, 102524. https://doi.org/10.1016/j.seta.2022.102524 (2022).

An, R. & Perez-Cruet, J. 11 common nut types for image classification. Kaggle https://doi.org/10.34740/kaggle/ds/2330904 (2022). Data set.

Huang, G., Liu, Z., Van Der Maaten, L. & Weinberger, K. Q. Densely connected convolutional networks. Proc. IEEE Conf. Comput. Vis. Pattern Recognit. (CVPR), 2261–2269 (IEEE, 2017). https://doi.org/10.1109/CVPR.2017.243

Hasan, N., Bao, Y., Shawon, A. & Huang, Y. DenseNet convolutional neural networks application for predicting COVID-19 using CT images. SN Comput. Sci. 2, 389. https://doi.org/10.1007/s42979-021-00782-7 (2021).

Simonyan, K. & Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv preprint arXiv:1409.1556 (2015).

Hlawa, S. & Romdhane, N. B. Deep learning-based Alzheimer’s disease prediction for smart health system. Proc. Int. Conf. Distrib. Sens. Intell. Syst. (ICDSIS), 128–137 (IET, 2022). https://doi.org/10.1049/icp.2022.2427

Szegedy, C., Vanhoucke, V., Ioffe, S., Shlens, J. & Wojna, Z. Rethinking the inception architecture for computer vision. Proc. IEEE Conf. Comput. Vis. Pattern Recognit. (CVPR), 2818–2826 (IEEE, 2016).

Torres-Velázquez, M., Chen, W. J., Li, X. & McMillan, A. B. Application and construction of deep learning networks in medical imaging. IEEE Trans. Radiat. Plasma Med. Sci. 5, 137–159. https://doi.org/10.1109/TRPMS.2020.3030611 (2021).

Chollet, F. Xception: Deep learning with depthwise separable convolutions. Proc. IEEE Conf. Comput. Vis. Pattern Recognit. (CVPR), 1800–1807 (IEEE, 2017). https://doi.org/10.1109/CVPR.2017.195

Madhu, G., Kautish, S. & Gupta, Y. XCovNet: An optimized Xception convolutional neural network for classification of COVID-19 from point-of-care lung ultrasound images. Multimed. Tools Appl. 83, 33653–33674 (2024).

Oulad-Kaddour, M. et al. Deep learning-based gender classification by training with fake data. IEEE Access. 11, 120766–120779 (2023).

He, F., Liu, T. & Tao, D. Why ResNet works? Residuals generalize. IEEE Trans. Neural Netw. Learn. Syst. 31, 5349–5362. https://doi.org/10.1109/TNNLS.2020.2966319 (2020).

Wang, T., Dou, Z., Bao, C. & Shi, Z. Diffusion mechanism in residual neural network: theory and applications. IEEE Trans. Pattern Anal. Mach. Intell. 46, 667–680. https://doi.org/10.1109/TPAMI.2023.3272341 (2024).

Muntaqim, M. Z. et al. Federated learning meets few-shot learning: A voting ensemble based combined approach to cauliflower leaf disease classification across non-IID data distributions. Array 28, 100516. https://doi.org/10.1016/j.array.2025.100516 (2025).

Muntaqim, M. Z., Kafi, H. M. & Smrity, T. A. Privacy-aware plant disease detection: federated learning with homomorphic encryption on image data. Vis. Comput. 42, 18. https://doi.org/10.1007/s00371-025-04261-5 (2026).

Acknowledgements

The authors declare that there are no individuals or organizations to acknowledge for contributions to this work.

Funding

Open access funding provided by Manipal Academy of Higher Education, Manipal. The authors declare that they received no funding for this study.

Author information

Contributions

S.M: Conceptualization, Investigation, Methodology, Writing - Original Draft, Formal analysis. J.M: Data curation, Visualization, Writing - Review and Editing. P.S: Conceptualization, Writing - Review and Editing. E.E: Project administration, Supervision. All authors reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethics approval

This manuscript adheres to the highest ethical standards in research and publishing. No human participants, animal subjects, or personal data were involved in the study; thus, formal ethics approval was not required. All analyses and interpretations were conducted transparently and ethically, ensuring accuracy and integrity.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Devi, M.S., Jaiganesh, M., Priya, S. et al. Deep atrous context convolution generative adversarial network with corner key point extracted feature for nuts classification. Sci Rep 16, 6409 (2026). https://doi.org/10.1038/s41598-026-36238-2

DOI: https://doi.org/10.1038/s41598-026-36238-2