Abstract

The purpose of this study is to explore how factors defined by self-determination theory, the technology acceptance model, and cognitive load theory, and the relationships among them, affect the learning engagement of rural junior high school students who use an artificial intelligence (AI)-powered adaptive learning system (ALS). The main research question asks how these factors interact to shape student engagement and how the answer can inform the implementation of AI in educational settings. A model reflecting these theories is constructed and tested with a survey administered in five rural junior high schools that use an AI-powered ALS. Path analysis and mediation effect analysis are employed to assess the effects of the various factors on student engagement. The results indicate that technology acceptance and perceived competence significantly enhance student learning engagement. In an AI-powered ALS, extraneous cognitive load plays a more important role than intrinsic cognitive load. Perceived autonomy, perceived competence, and perceived relatedness are found to positively affect technology acceptance. The mediation effects reveal that technology acceptance partially mediates the relationship between perceived competence and engagement, fully mediates the relationship between perceived relatedness and engagement, and has no mediating effect on the relationship between perceived autonomy and engagement. The study concludes that, to improve learning engagement, educational systems should focus on enhancing technology acceptance by improving system usability and learning outcomes. It further recommends fostering student competence through effective monitoring and immediate feedback on errors, and minimizing extraneous cognitive load through clearer instructions and feedback. These strategies could be pivotal in designing targeted instructional frameworks for AI-powered learning environments.


Introduction

Artificial intelligence (AI)-powered adaptive learning is a data-driven approach that applies relevant theories and methods. It aims to deliver more accurate, large-scale individualized learning (Chen et al. 2021). Imbalances in educational resources are a well-documented challenge in China’s rural regions (Gao et al. 2016). This study therefore explores a novel solution through the lens of self-determination theory (SDT), the technology acceptance model (TAM), and cognitive load theory (CLT). Integrating these frameworks is justified by the distinctive cognitive and motivational demands of an AI-powered adaptive learning system (ALS), which have been underexplored in mathematics education (e.g., Dabingaya 2022; Ingkavara et al. 2023). Secondary schools in China’s southwestern areas still lag far behind those in the affluent southeastern coastal regions in educational resources. They face a lack of access to high-quality teachers and learning materials (Li et al. 2020), other resource constraints, and a large population of left-behind children (Wang 2025). The 2024 Central Document No. 1 lists “smart education” as a core lever for rural revitalization. This echoes the Ministry of Education’s Digital-Education Action Plan unveiled at the World Digital Education Conference (WDEC) 2024. In its 2021 Five-Year Action Plan, China’s Ministry of Education permitted the reasonable use of electronic devices in schools. However, it stipulated that, in principle, screen-based instruction should not exceed 30% of total classroom time. Within this policy window, AI-powered ALS pilots are being deployed on subsidized tablets to offset teacher shortages and personalize instruction for left-behind children. Some organizations, such as the China Development Research Foundation (CDRF), have collaborated with Apple to promote the use of tablets in these regions.
AI-powered ALS on tablets give students access to tailored educational content. By providing secondary students with high-quality educational resources, they could help bridge this gap (e.g., Rane et al. 2024; Strielkowski et al. 2025).

The design and development of ALS is an important topic in the field of adaptive learning research. Strielkowski et al. (2025) reported that AI-powered ALS can deliver personalized educational experiences that address individual learner needs, cultivate more educated and better-informed citizens, spur innovation, and underpin future economic growth. Learners’ experiences with new technologies, however, play a critical role in the adoption and successful use of ALS. Researchers typically assess the efficacy of ALS through small-scale experiments conducted after system design and development—a methodological pattern that provides important insight for the present study. For example, Yuan (2025) employed an AI-powered ALS to support second-language reading and identified significant gains in learners’ reading comprehension, motivation, anxiety reduction, and cognitive load management while also highlighting students’ strong emphasis on the user experience and system functionality. Wang et al. (2020) compared Chinese eighth-grade students who used an ALS with those who did not and reported that the ALS group achieved markedly higher mathematics scores. Lee and Yeo (2022) designed an AI-powered adaptive learning chatbot to assist primary school students with mathematics; through multiple design iterations, they evaluated both its structural features and user experience and concluded that design elements such as sequential responses, informing responses, and personification significantly improve users’ perceptions and, consequently, students’ mathematics learning outcomes.

Our study investigates the relationship between the use of an AI-powered ALS and learning engagement among rural junior high school students. It explores how variables such as technology acceptance and cognitive load are related to engagement levels. The research draws on SDT, TAM, and CLT to guide the discussion of these relationships. Student engagement in learning is affected by various factors, such as students’ knowledge level, motivation, self-efficacy, and technology acceptance (e.g., Milligan et al. 2013). A substantial body of research has focused on learning engagement among junior high school students. Shao et al. (2024), for example, examined how peer relationships affect academic achievement via the sequential mediation of learning motivation and engagement. Guan et al. (2023) investigated how students’ creativity during pottery-making in a virtual reality (VR) setting influences their level of engagement. An et al. (2024) identified multiple mediating roles of intrinsic motivation and engagement between technology acceptance and self-regulated learning among Chinese junior high school students. Shi et al. (2023) analyzed the factors that shape engagement in a smart classroom environment. Taken together, these studies show that junior high school students’ engagement is a timely and important issue. Even with the advent of emerging AI technologies, however, junior high school students have relatively limited opportunities to interact independently with AI devices during class, unlike university undergraduates. Consequently, participants in previous empirical studies on ALS have been primarily higher education students (Han and Li 2024; Xie et al. 2019). Research on ALS therefore needs to shift toward secondary school students and give more attention to the factors related to the student learning process.
In addition, previous research on ALS has focused mainly on whether using an ALS improves student learning. Little research has examined the nature of learning engagement in AI-powered ALS. Accordingly, our research focuses on the factors associated with student learning engagement in this environment and on strategies for improving it.

This study targets grade seven (the first year of junior high school) mathematics. The transition from primary to secondary school is widely regarded as the most challenging stage of students’ mathematics education (Kaur et al. 2022). It entails numerous marked changes (e.g., Hammond 2015; Keay et al. 2015; Paul 2014):

- adapting to a new school environment;
- encountering more demanding mathematical content;
- coping with discontinuities between primary and secondary curricula;
- bearing a heavier academic workload;
- adjusting to altered instructional practices.

If mathematics education during this critical phase is not properly supported, students are prone to develop mathematics anxiety and lose interest in the subject (Suren and Ali Kandemir 2020). Hence, personalized guidance or strong family support is especially needed (Chen et al. 2024). In rural areas, secondary education faces persistent challenges (e.g., Wang 2025): limited access to quality educational resources, a shortage of experienced teachers, digital literacy gaps, and generally lower levels of self-directed learning. As an emerging technology, AI can help alleviate these constraints to some extent. These limitations highlight both the potential value of AI-powered ALS and the conditions on which that value depends:

(a) Personalized support independent of teacher expertise. An ALS can provide differentiated content and personalized feedback that does not rely on the proficiency of any individual teacher.
(b) Learner-dependent efficacy. The impact of an AI-powered ALS still hinges on whether students are willing and able to operate tablet interfaces, interpret automated feedback, and regulate their own learning pace.

Accordingly, this study examines not only learners’ technology acceptance and cognitive load but also the motivational factors grounded in SDT. The aim is to reveal how rural resource conditions may amplify or attenuate these influences.

Literature review and research hypotheses

Theoretical framework

This study posits that the interplay of SDT, TAM, and CLT offers a distinctive framework for understanding student engagement. SDT is widely used to understand the factors that promote or undermine intrinsic motivation, extrinsic motivation, and mental health. Its core assumptions in education include the following (Ryan and Deci 2020):

(1) More autonomous forms of motivation promote student engagement, learning, and health.
(2) Teacher and parental support for basic psychological needs can promote intrinsic motivation, although this effect can be weakened if students’ autonomy needs are constrained.

Learning engagement is largely regarded as an outcome of the motivational process, and cultivating different types of motivation helps students engage in learning activities. More autonomous forms of extrinsic motivation are associated with greater learning engagement. Recent studies on AI-powered learning indicate that perceived competence is the most powerful driver of sustained use (Ellikkal and Rajamohan 2024; Rosli and Saleh 2022). In rural implementations of ALS, both students and teachers receive limited face-to-face support and have little prior experience with the technology. Under these conditions, fulfilling all three basic psychological needs becomes especially critical. If students’ autonomy, competence, and relatedness are supported, they are therefore likely to engage more deeply in learning. Ryan and Deci (2020) argued that future SDT research should examine how the design of learning technologies affects student learning engagement. When studying student learning in AI-supported environments, technical support should also be considered.

TAM is an important theory for studying users’ acceptance and use of a specific information system. Among the cognitive constructs in TAM, perceived ease of use (PEU) and perceived usefulness (PU) are key determinants of users’ acceptance and use of information technology (Davis 1989). PEU refers to the degree to which users believe that a particular technology or system is easy to use. PU refers to the degree to which users believe that using it will improve their work efficiency and performance. Venkatesh and Bala (2008) elaborated the determinants of PEU in detail and proposed TAM3. TAM has undergone several rounds of revision, but its core variables remain PEU and PU; this study refers to them collectively as technology acceptance. When TAM is applied to AI-powered ALS, PEU and PU play important roles in whether students are willing to adopt the novel AI technology and continue to use it effectively. Recent research has shown that when students encounter new AI tools, PEU predicts PU, and PU, in turn, predicts sustained use (Wang et al. 2023). Consequently, even highly motivated learners may withdraw if the system is difficult to operate or its value is uncertain.

CLT holds that cognitive processing draws on limited cognitive resources, which are consumed whenever individuals acquire knowledge and solve problems (Sweller 1988). Sweller (2012) proposed that learners experience three kinds of cognitive load (CL) during learning: intrinsic CL, extraneous CL, and germane CL. The total CL is the sum of these three components. In novel AI-based settings, an AI-powered ALS can lower extraneous and intrinsic CL by adjusting task difficulty (Chen and Chang 2024). However, ambiguous interface instructions, insufficiently user-friendly designs, or system lags may still inflate extraneous CL and dampen learner engagement (Han et al. 2023; Koć-Januchta et al. 2022). Classroom implementation should therefore be accompanied by careful instructional design. Such design minimizes extraneous CL and enhances germane CL, which keeps total cognitive demand within students’ tolerance range and ultimately promotes greater learning efficiency and better outcomes.
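The additive view of cognitive load described above can be written compactly. The notation is ours. The capacity term C_WM is a hypothetical label for the "tolerance range" of working memory; it is not a quantity measured in this study.

```latex
\[
\mathrm{CL}_{\text{total}}
  = \mathrm{CL}_{\text{intrinsic}} + \mathrm{CL}_{\text{extraneous}} + \mathrm{CL}_{\text{germane}}
  \;\le\; C_{\text{WM}}
\]
```

Suppose the task fixes intrinsic load. Under this reading, any extraneous load removed through clearer instructions or better interface design is capacity that can be redirected to germane processing without exceeding the bound.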

Prior research has explored the interconnections among SDT, TAM, and CLT (e.g., Fathali and Okada 2018; Gkintoni et al. 2025; Maričić et al. 2024; Rosli and Saleh 2022):

(a) SDT × TAM: Fathali and Okada (2018) combined SDT and TAM to investigate learners’ intentions to engage in technology-enhanced out-of-class language learning. They showed that SDT determinants significantly predict both PU and PEU and that PU, in turn, strongly influences learners’ intention to continue using the technology.
(b) SDT × TAM × self-efficacy: Rosli and Saleh (2022) integrated SDT, TAM, and self-efficacy into a unified theoretical model to explain students’ acceptance of technology-enhanced learning. They argued that this synthesis offers richer insights into acceptance processes.
(c) CLT × TAM: Maričić et al. (2024) merged CLT with TAM and proposed a close causal relationship between the two frameworks. Their findings indicated that teachers’ perceived usability was the pivotal factor shaping attitudes toward teaching and continuous teaching intention. No significant negative correlation emerged between teachers’ cognitive load and perceived usability.

Building on this literature, we posit that when an ALS supports learners’ autonomy, competence, and relatedness, the satisfaction of these basic psychological needs enhances PEU and PU; this is the SDT–TAM linkage. PEU and PU together explain a substantial proportion of the variance in behavioral intention and subsequent engagement in technology-rich courses (e.g., Maričić et al. 2024); this is the TAM–engagement relationship. If the ALS interface or task design imposes a high extraneous cognitive load, however, the positive influence of PEU and PU on engagement may be nullified. Conversely, well-designed AI scaffolds that lower extraneous load and increase germane load can amplify the benefits of acceptance (Gkintoni et al. 2025; Maričić et al. 2024). This suggests that cognitive load may moderate the TAM–engagement link.
Accordingly, in the context of ALS, we propose a cross-framework pathway—SDT + CLT → TAM → Learning Engagement. In short, SDT explains why students are motivated to engage, TAM clarifies whether they will adopt and persist with the ALS, and CLT describes how the ALS design influences the cognitive resources required for this engagement.

Basic psychological needs

SDT is a multifaceted framework comprising several distinct mini-theories that together provide a comprehensive view of human motivation. Two of the most commonly employed mini-theories are basic psychological needs theory and organismic integration theory, which have been used, for example, to examine physical activity behavior. Basic psychological needs theory emphasizes the essential role of three innate psychological needs, namely autonomy, competence, and relatedness, in fostering high-quality motivation and well-being (Ryan and Deci 2002). Integrating the principles of SDT, and specifically basic psychological needs theory, can help educators and technologists better understand and design an AI-powered ALS. Such a system would not only enhance learning engagement but also contribute to the overall well-being and motivation of rural junior high school students. Many studies have shown that students’ basic psychological needs can affect their learning engagement. Chiu (2022) studied the online learning engagement of Hong Kong students during the COVID-19 pandemic. Using structural equation modeling (SEM), the study verified the relationship between students’ basic psychological needs and their learning engagement. The results revealed that students’ perceived autonomy, perceived competence, and perceived relatedness significantly and positively affect their behavioral, cognitive, emotional, and agentic engagement. Among these needs, perceived relatedness was the most important factor influencing behavioral, emotional, and agentic engagement, and it also significantly affected cognitive engagement. Jiang and Peng (2023) surveyed 115 massive open online course (MOOC) learners and reported that engagement significantly mediates the relationship between perceived autonomy and academic achievement. Therefore, the following three research hypotheses are proposed:

H1: Perceived autonomy can have a significant positive impact on student learning engagement in an AI-powered ALS.

H2: Perceived competence can have a significant positive impact on student learning engagement in an AI-powered ALS.

H3: Perceived relatedness can have a significant positive impact on student learning engagement in an AI-powered ALS.

The relationship between basic psychological needs and technology acceptance has also been confirmed in several studies. Fathali and Okada (2018) studied Japanese undergraduate EFL learners in a technology-enhanced learning environment. Learners’ perceived competence had a significant effect on their PEU and PU, and their perceived autonomy and perceived relatedness had a positive effect on PU. Li et al. (2024) showed that in gamified instructional settings, students’ lack of perceived competence and autonomy undermines their sense of classroom involvement. Similarly, Zhang and Zhou (2022) highlighted that in the information-and-communication-technology domain, perceived autonomy, perceived competence, and perceived relatedness each exert positive effects on learners’ intercultural knowledge, skills, and attitudes.

Accordingly, the more fully students’ basic psychological needs are met, the greater their learning engagement. The greater their perceived autonomy and relatedness, the higher their PU. The greater their perceived competence, the higher both their PEU and their PU. Based on the above literature, the following four research hypotheses are proposed:

H4: Perceived autonomy can have a significant positive impact on PU.

H5: Perceived competence can have a significant positive impact on PU.

H6: Perceived competence can have a significant positive impact on PEU.

H7: Perceived relatedness can have a significant positive impact on PU.

Technology acceptance

In various studies, PEU and PU are often examined together. TAM posits that PEU significantly affects PU (Venkatesh and Bala 2008). Beyond this theoretical support, empirical studies of college students in both online and VR learning contexts have verified the significant positive predictive effect of PEU on PU (e.g., Li et al. 2022; Tang et al. 2022). Therefore, the following hypothesis is proposed:

H8: PEU can have a significant positive impact on PU.

A growing body of research has linked technology acceptance to learning engagement. Ebadi and Raygan (2023) reported that English-as-a-foreign-language (EFL) learners’ PU strongly and significantly influences their engagement in mobile learning. In addition, both PEU and PU have emerged as key predictors of learners’ perceptions of mobile-assisted language learning (MALL). In semi-synchronous online learning environments, Gunness et al. (2023) reported that PU enhances students’ social, behavioral, cognitive, emotional, and reflective engagement.

These studies show that student learning engagement is closely related to each of the two dimensions of technology acceptance across different technology-supported environments. Accordingly, we expect that the greater students’ PEU, the greater their PU and learning engagement. We likewise expect that the greater their PU, the greater their learning engagement. Therefore, this study proposes the following two research hypotheses:

H9: PEU can have a significant positive impact on students’ learning engagement in an AI-powered ALS.

H10: PU can have a significant positive impact on students’ learning engagement in an AI-powered ALS.

Cognitive load

Some researchers have argued that cognitive load affects student learning engagement. For example, Evans et al. (2024) reported that combining load-reducing strategies with a motivating style marked by clear structure and autonomy support lowers students’ cognitive load. This, in turn, increases their engagement. Zhang et al. (2023) examined the link between teaching presence and learners’ engagement, with cognitive load as a mediator. Their results indicated that cognitive load has a detrimental effect on student participation. Dong et al. (2020) explored the relationship between cognitive load and learning engagement among Chinese secondary school students. They found that cognitive load mediates the effect of prior knowledge level on learning engagement; that is, cognitive load significantly and negatively affects students’ learning engagement. Within the SDT framework, cognitive evaluation theory (CET) holds that timely and effective feedback can enhance students’ intrinsic motivation and thus improve their learning engagement. Extraneous cognitive load may constrain intrinsic motivation and thereby negatively affect student learning engagement. On this basis, the following two research hypotheses are proposed:

H11: Intrinsic cognitive load can have a significant negative impact on students’ learning engagement in an AI-powered ALS.

H12: Extraneous cognitive load can have a significant negative impact on students’ learning engagement in an AI-powered ALS.

Mediation analysis

According to SDT, students’ basic psychological needs are closely related to their learning motivation. The more these needs are satisfied, the more willing students are to internalize their learning motivation. Learning motivation not only mediates influences on students’ learning engagement (Cai and Jia 2020) but also significantly and positively affects their PEU and PU (Rosli and Saleh 2022). Some studies have used technology acceptance as a mediator to explore the indirect effects of other external variables on student learning engagement (Li et al. 2022). The positive relationships among PEU, PU, and learning engagement were discussed above. Therefore, the following research hypotheses are proposed:

H13: In an AI-powered ALS, PEU and PU can play a mediating role between perceived autonomy and learning engagement.

H14: In an AI-powered ALS, PEU and PU can play a mediating role between perceived competence and learning engagement.

H15: In an AI-powered ALS, PEU and PU can play a mediating role between perceived relatedness and learning engagement.
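The mediation logic of H13–H15 can be made concrete with a minimal sketch: a single-mediator analysis with a percentile bootstrap confidence interval for the indirect effect a × b. This is not the authors’ analysis procedure. It assumes one mediator at a time (for example, PU between perceived autonomy and engagement), ordinary least squares on observed scores, and simulated rather than study data. All function names are hypothetical, and only the Python standard library is used.

```python
import random
from statistics import fmean


def _centered_cross(a, b):
    """Sum of cross-products of two variables after mean-centering each one."""
    ma, mb = fmean(a), fmean(b)
    return sum((ai - ma) * (bi - mb) for ai, bi in zip(a, b))


def slope(y, x):
    """OLS slope of y on a single predictor x (the a path, X -> M)."""
    return _centered_cross(x, y) / _centered_cross(x, x)


def ols2(y, x1, x2):
    """Closed-form OLS for y = c0 + b1*x1 + b2*x2; returns (c0, b1, b2)."""
    s11, s22, s12 = _centered_cross(x1, x1), _centered_cross(x2, x2), _centered_cross(x1, x2)
    s1y, s2y = _centered_cross(x1, y), _centered_cross(x2, y)
    det = s11 * s22 - s12 ** 2
    b1 = (s1y * s22 - s2y * s12) / det
    b2 = (s2y * s11 - s1y * s12) / det
    c0 = fmean(y) - b1 * fmean(x1) - b2 * fmean(x2)
    return c0, b1, b2


def indirect_effect(x, m, y):
    """a*b: slope of M on X times the slope of Y on M controlling for X."""
    a = slope(m, x)
    _, _direct, b = ols2(y, x, m)  # _direct is the direct path c'
    return a * b


def bootstrap_ci(x, m, y, n_boot=1000, level=0.95, seed=1):
    """Percentile bootstrap CI for a*b, resampling cases (students) with replacement."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(n_boot):
        idx = rng.choices(range(n), k=n)
        estimates.append(indirect_effect([x[i] for i in idx],
                                         [m[i] for i in idx],
                                         [y[i] for i in idx]))
    estimates.sort()
    lower = estimates[round((1 - level) / 2 * (n_boot - 1))]
    upper = estimates[round((1 + level) / 2 * (n_boot - 1))]
    return lower, upper


# Simulated data (not study data): true a = 0.5, b = 0.4, direct path c' = 0.2.
rng = random.Random(42)
x = [rng.gauss(0, 1) for _ in range(300)]
m = [0.5 * xi + rng.gauss(0, 1) for xi in x]
y = [0.2 * xi + 0.4 * mi + rng.gauss(0, 1) for xi, mi in zip(x, m)]
ci = bootstrap_ci(x, m, y, n_boot=400)
print(f"indirect = {indirect_effect(x, m, y):.3f}, 95% CI = ({ci[0]:.3f}, {ci[1]:.3f})")
```

A confidence interval that excludes zero is commonly read as evidence of an indirect effect. Whether the mediation is then labeled partial or full depends on whether the direct path c′ remains significant, and this sketch does not test that. With PEU and PU acting jointly, a multiple-mediator or full path model would be needed.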

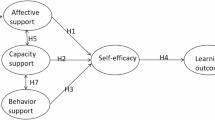

Based on the literature reviewed and the theories, we propose the research model shown in Fig. 1.

Fig. 1 Model of the factors that influence learning engagement among junior high school students in AI-powered ALS.
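Hypotheses H1–H12 can also be written as three structural equations. This is our own rendering of the hypothesized paths, assuming mean-centered observed scores, so intercepts are omitted. The abbreviations AUT, COM, REL, ICL, ECL, and ENG (perceived autonomy, competence, relatedness, intrinsic and extraneous cognitive load, and learning engagement) are introduced here for brevity, and the ζ terms are disturbances.

```latex
\[
\begin{aligned}
\mathrm{PEU} &= \gamma_{1}\,\mathrm{COM} + \zeta_{1} \\
\mathrm{PU}  &= \gamma_{2}\,\mathrm{AUT} + \gamma_{3}\,\mathrm{COM} + \gamma_{4}\,\mathrm{REL}
              + \beta_{1}\,\mathrm{PEU} + \zeta_{2} \\
\mathrm{ENG} &= \gamma_{5}\,\mathrm{AUT} + \gamma_{6}\,\mathrm{COM} + \gamma_{7}\,\mathrm{REL}
              + \beta_{2}\,\mathrm{PEU} + \beta_{3}\,\mathrm{PU}
              + \gamma_{8}\,\mathrm{ICL} + \gamma_{9}\,\mathrm{ECL} + \zeta_{3}
\end{aligned}
\]
```

The hypothesized signs follow one to one:

- H6: γ₁ > 0.
- H4, H5, H7: γ₂, γ₃, γ₄ > 0.
- H8: β₁ > 0.
- H1–H3: γ₅, γ₆, γ₇ > 0.
- H9, H10: β₂, β₃ > 0.
- H11, H12: γ₈, γ₉ < 0.

The hypotheses specify no path from perceived autonomy or relatedness to PEU. The indirect effects tested in H13–H15 therefore reduce to the following products:

```latex
\[
\begin{aligned}
\mathrm{IE}_{\mathrm{AUT}} &= \gamma_{2}\beta_{3} \\
\mathrm{IE}_{\mathrm{COM}} &= \gamma_{1}\beta_{2} + \gamma_{3}\beta_{3} + \gamma_{1}\beta_{1}\beta_{3} \\
\mathrm{IE}_{\mathrm{REL}} &= \gamma_{4}\beta_{3}
\end{aligned}
\]
```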

Methods

Participants and context

Five rural schools in southwest China were selected. All are geographically located at the border between rural areas and have similar student populations, consisting mainly of migrant workers’ children and left-behind children. Over recent decades, southwest China has experienced substantial economic growth. Nevertheless, schools in these rural areas still face multiple educational challenges, and the five focal schools in the present study exemplify these shared problems:

- Low-quality teaching remains prevalent. The schools struggle to attract and retain qualified instructors, and some incumbent teachers have not received rigorous professional training (Wang 2025).
- The large-scale out-migration of adults to urban centers has produced a high concentration of left-behind children. More than 60 million children nationwide, approximately 22% of all Chinese children, live apart from their parents (Huang and Gong 2021). The gross upper-secondary enrollment rate of these children is only 23.1% (Duan et al. 2013). In the target schools, most students live with grandparents or in dormitories and receive little parental homework support after class.
- Teacher workload is heavy. Student-to-teacher ratios in western rural schools can reach 17.21:1, and more than 14.31% of teachers teach more than twenty hours per week (Liu et al. 2014; Wu and Chen 2018).

With such large classes and intensive teaching schedules, teachers find it difficult to provide personalized guidance, while students lack individualized help at home. Chinese educational authorities and researchers therefore call for modern instructional methods and technologies, such as AI and innovative learning environments, to address these challenges (Rysbekkyzy and Jide 2021; Wang 2025). However, pronounced urban–rural disparities persist in the use of digital tools (Li and Wu 2023; Sun et al. 2024).
A large-scale survey across ten provinces revealed that rural students aged 7–18 years mainly use smartphones for entertainment and short-video viewing (Liu and Li 2023). The same pattern holds in the five schools in this study. Students are accustomed to watching short videos on phones but have no experience using tablet-based AI functions for learning. Technical difficulties were expected because students were unfamiliar with tablets. To address this, one researcher on the project team provided a week-long (two-session) training program that explained operating procedures, common issues, and AI features; the students then practiced independently and asked questions. In addition, a technical staff member was present in every tablet-equipped classroom to troubleshoot problems in real time.

Selecting these schools allows us to explore the potential of AI-powered ALS to mitigate educational disparities, and it constitutes a novel application of our theoretical framework. Schools in these areas face common challenges, including poor teacher qualifications, limited time, parents’ limited ability to support student learning, and low family economic status. This correlational study was conducted during the first semester of seventh grade, the first year of junior high school. At that point, students had just transitioned from primary school after a unified examination and thus shared a broadly similar knowledge base. Because of the gap between the primary and junior high school mathematics curricula, this transition can be challenging. Some seventh-grade students have not yet fully adapted to the difficulties of junior high school mathematics. Two classes were randomly selected from the seventh-grade cohort at each participating school, and a total of 315 students across the five schools participated in the study.
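The sampling step, in which two classes are drawn at random within each school, can be sketched as stratified random selection of intact classes. The school names, class labels, and seed below are hypothetical. The study does not report how many seventh-grade classes each school had or how the draw was performed.

```python
import random


def select_classes(classes_by_school, k=2, seed=None):
    """Draw k intact classes per school, uniformly at random and without replacement."""
    rng = random.Random(seed)  # a fixed seed makes the draw reproducible and auditable
    selected = {}
    for school, classes in classes_by_school.items():
        if len(classes) < k:
            raise ValueError(f"{school} has fewer than {k} classes")
        selected[school] = sorted(rng.sample(classes, k))
    return selected


# Hypothetical seventh-grade class lists for the five schools.
rosters = {
    "School A": ["7-1", "7-2", "7-3", "7-4"],
    "School B": ["7-1", "7-2", "7-3"],
    "School C": ["7-1", "7-2", "7-3", "7-4", "7-5"],
    "School D": ["7-1", "7-2", "7-3"],
    "School E": ["7-1", "7-2", "7-3", "7-4"],
}

chosen = select_classes(rosters, k=2, seed=2024)
for school, classes in chosen.items():
    print(school, classes)
```

Stratifying by school guarantees that every school contributes the same number of classes, which a single draw from the pooled list of all classes would not.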

These classes were equipped with tablets containing a built-in AI-powered ALS, which was used for free mathematics learning. Mathematics was chosen because it was found to be the most stressful subject for seventh-grade students and the most challenging subject for rural middle schools. Learning activities took place during after-school services, the subject-based self-study sessions that junior high schools organize after regular classes, from 4 to 5 pm. The teachers of these classes maintained order in the classroom. The students completed homework and asked questions in the AI-powered ALS on the tablets (see Fig. 2). Each class was guaranteed two tablet sessions per week, and all students who participated in the survey used the AI-powered ALS for one full academic year.

Fig. 2 Students use an AI-powered ALS for independent learning.

AI-powered ALS function and procedure

Before each after-school session, the teacher writes the learning tasks on the blackboard. These tasks are determined jointly by the mathematics teachers of all participating classes and the system developer’s research teachers before the AI-powered ALS is officially used for the semester.

The system first issues diagnostic questions through AI-powered adaptive technology. These questions test each student on various knowledge points, which the system presents as a knowledge graph. When the test is completed, the system recommends personalized learning resources and paths based on the student’s mistakes and error patterns, and the student conducts “precision learning” on this basis. In line with the learning task set in class, students can select on the knowledge graph the knowledge points in each section that they have not mastered. For each question, a video provides a targeted explanation of the related knowledge points in addition to the written analysis.
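The recommendation step can be illustrated with a minimal sketch. The unmastered knowledge points are ordered so that prerequisites come first, using the standard-library `graphlib.TopologicalSorter` (Python 3.9+). The prerequisite graph, topic names, and the 0.6 mastery cut-off are hypothetical. The source does not describe the system’s actual recommendation algorithm.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+


def learning_path(prerequisites, diagnostic_scores, threshold=0.6):
    """Order the unmastered knowledge points so that prerequisites come first.

    prerequisites: dict mapping a knowledge point to the set of points it depends on
    diagnostic_scores: dict mapping a knowledge point to its share of correct diagnostic items
    threshold: hypothetical cut-off below which a point counts as not mastered
    """
    weak = {kp for kp, score in diagnostic_scores.items() if score < threshold}
    sorter = TopologicalSorter(prerequisites)
    for kp in diagnostic_scores:
        sorter.add(kp)  # include scored points that have no listed prerequisites
    # static_order() raises graphlib.CycleError if the graph contains a cycle
    return [kp for kp in sorter.static_order() if kp in weak]


# Hypothetical grade-seven knowledge graph (a simple chain) and diagnostic results.
prerequisites = {
    "number line": {"rational numbers"},
    "absolute value": {"number line"},
    "rational-number operations": {"absolute value"},
    "algebraic expressions": {"rational-number operations"},
    "linear equations in one variable": {"algebraic expressions"},
}
scores = {
    "rational numbers": 0.9,
    "number line": 0.8,
    "absolute value": 0.4,
    "rational-number operations": 0.5,
    "algebraic expressions": 0.7,
    "linear equations in one variable": 0.3,
}
print(learning_path(prerequisites, scores))
# ['absolute value', 'rational-number operations', 'linear equations in one variable']
```

In the system described above, students choose which unmastered points to study. A prerequisite-respecting order like this one would serve as a suggestion rather than a fixed sequence.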

After “precision learning,” students enter “precision practice.” Based on each student’s current learning level, the system pushes exercises on the relevant weak knowledge points to further test and consolidate them. If a student answers a question incorrectly, further questions on the same knowledge point are pushed until the student passes. Once all of the exercises under a knowledge point are completed, the student passes it and moves on to the next knowledge point.
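The “precision practice” loop can be sketched as follows, under stated assumptions. Passing a knowledge point is modeled as a fixed number of consecutive correct answers (`pass_streak`), and `max_items` caps the items per point. Both are hypothetical parameters: the source says only that questions are pushed “until the student passes.” The `answer` callback stands in for the student.

```python
import random
from typing import Callable, Iterable


def precision_practice(weak_points: Iterable[str],
                       answer: Callable[[str], bool],
                       pass_streak: int = 2,
                       max_items: int = 20):
    """Push items on each weak point until it is passed, then move to the next point.

    Returns the answer log as (knowledge_point, correct) pairs and the set of passed points.
    pass_streak and max_items are hypothetical, not the system's documented rules.
    """
    log, passed = [], set()
    for kp in weak_points:
        streak = 0
        for _ in range(max_items):
            correct = answer(kp)
            log.append((kp, correct))
            # A wrong answer resets the streak, so more items on the same point follow.
            streak = streak + 1 if correct else 0
            if streak >= pass_streak:
                passed.add(kp)
                break
    return log, passed


# Usage with a simulated student who answers each item correctly with probability 0.7.
rng = random.Random(0)
log, passed = precision_practice(["absolute value", "linear equations in one variable"],
                                 answer=lambda kp: rng.random() < 0.7)
print(len(log), sorted(passed))
```

The cap prevents an endless loop for a student who keeps answering incorrectly. A real system might instead route such a student back to “precision learning” or flag the point for the teacher.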

Finally, the system analyzes each student’s answers from the first three stages to determine the student’s mastery of the current knowledge. It marks on the knowledge graph what the student has and has not mastered, which helps students, teachers, and parents understand the student’s progress more precisely (see Fig. 3). All of the students and teachers agreed to participate in this study.
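A minimal sketch of the final mastery summary pools the logged answers from all stages and computes accuracy per knowledge point. It then flags points at or above a cut-off as mastered. The 0.8 threshold and the simple proportion-correct rule are assumptions. Adaptive systems often use probabilistic models such as Bayesian knowledge tracing instead, and the source does not say which model this system uses.

```python
from collections import defaultdict


def mastery_report(answer_log, threshold=0.8):
    """Per-point accuracy over all logged answers, plus a mastered/not-mastered flag.

    answer_log: iterable of (knowledge_point, correct) pairs pooled from all stages
    threshold: hypothetical accuracy cut-off for 'mastered'
    """
    counts = defaultdict(lambda: [0, 0])  # knowledge point -> [correct, attempted]
    for kp, correct in answer_log:
        counts[kp][0] += int(correct)
        counts[kp][1] += 1
    return {kp: {"accuracy": c / n, "mastered": c / n >= threshold}
            for kp, (c, n) in counts.items()}


# Hypothetical pooled log for one student.
log = [("absolute value", True), ("absolute value", False), ("absolute value", True),
       ("absolute value", True), ("absolute value", True),
       ("linear equations in one variable", False), ("linear equations in one variable", True)]
for kp, status in mastery_report(log).items():
    print(kp, status)
```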

Fig. 3 AI-powered ALS function and procedure.

Measures

This study used questionnaires to collect, collate, and analyze data on junior high school students’ learning engagement when supported by an AI-powered ALS. The questionnaire data were used to refine the model of factors and to test the research hypotheses. The questionnaire was designed in the following steps.

First, the literature was reviewed, and candidate scales were screened against three main criteria: (a) the research context should be similar to that of this study; (b) the study should be published in an authoritative journal; and (c) the study should have been conducted in 2010 or later.

Second, the study utilized expert consultation and peer discussion. By consulting with experts, the most suitable scale for this study was selected from the screened scales and translated into Chinese. During the translation process, five rounds of expert consultation and peer discussion were conducted on the expression of the relevant questions, and final agreement was reached regarding the deletion and revision of irrelevant, repetitive, controversial, and unclear questions. After the design of the scale was completed, demographic questions related to the students’ personal information were added to form the questionnaire.

Finally, small-scale interviews were conducted. To ensure the validity of the content of the questionnaire, three graduate students, two junior high school mathematics teachers, and four junior high school students were selected after expert consultation and peer discussion to complete the questionnaire and were subsequently interviewed. The questionnaire questions were revised according to their feedback.

Learning engagement measures

In this study, learning engagement is classified into four measures: behavioral engagement, cognitive engagement, emotional engagement, and agentic engagement. Because the study was conducted in the context of an AI-powered ALS, each measure is operationally defined on the basis of its definition in the literature and the study context (see Appendix A).

The learning engagement questionnaire has two main parts: (1) background information on the students, such as gender, school, teachers’ attitudes, and parents’ attitudes, which is used for descriptive statistics and difference analysis of junior high school students’ learning engagement in the AI-powered ALS; and (2) a Likert-scale learning engagement questionnaire. After literature screening, expert consultation, peer discussion, and small-scale interviews, the sources and items of the final scale were determined, as shown in Appendix B.

The behavioral, cognitive, and emotional engagement items of the learning engagement scale are mainly based on the mathematics and science learning engagement scale developed by Wang et al. (2016); the items for these three dimensions were translated and adapted to the actual conditions of this study. The agentic engagement items are based on the agentic engagement measure proposed by Reeve (2013) (see Appendix B).

Learning engagement influencing factors scale

The learning engagement influencing factors scale includes items measuring seven variables: perceived autonomy, perceived competence, perceived relatedness, PEU, PU, extraneous cognitive load, and intrinsic cognitive load. Because few studies have examined AI-powered ALS, scales developed in exactly this context are scarce, so we drew on scales used in similar contexts. Mobile learning shares key features with the present context: students learn on mobile terminals (here, tablets), so the hardware is similar, and research on mobile learning is relatively mature. Scales from mobile learning studies were therefore the main reference source. The perceived autonomy and perceived relatedness items were selected from the scale used in the mobile learning evaluation study by Nikou and Economides (2017). The perceived competence items were based on the scale of Nikou and Economides (2017) and on this study’s operational definition of perceived competence, supplemented by several mobile-learning-related perceived competence items from Jeno et al. (2019). The PEU and PU items combine the scales used by Park et al. (2012) and Liu et al. (2010): repeated items were removed, and items closely matching this study’s operational definitions were retained. The intrinsic and extraneous cognitive load items were adapted mainly from the corresponding items of the cognitive load scale developed by Leppink et al. (2013), as shown in Appendix C.

Data collection and analysis

Quantitative data

The students completed paper-based questionnaires on site. To minimize self-report bias, we adopted several procedural remedies designed to mitigate common-method and social desirability biases (Podsakoff et al. 2003). All questionnaires were completed anonymously: printed booklets were distributed, students were given ample time to respond in the classroom, and items tapping predictors and outcomes were placed in separate sections. The written instructions—and verbal reminders from the administering researchers—emphasized that “there are no right or wrong answers” and that responses would remain confidential, thereby encouraging students to answer truthfully. These precautions reduce the likelihood of serious common-method bias, but the mono-method nature of the data remains a limitation. To address this, we randomly sampled students and teachers for interviews, and two research assistants completed an observation report during every lesson, allowing us to triangulate student engagement through multiple data sources.

A total of 302 questionnaires were collected. To increase the validity and accuracy of the data, we excluded 59 questionnaires that had missing or multiple entries in the scale sections or that were completed in an unusually short time. Ultimately, 243 valid questionnaires were retained, a validity rate of approximately 80.5%. SEM was used for path analysis and model building. SEM requires an adequate sample size; in general, larger samples yield more stable estimates. In practice, however, collecting a larger sample was difficult because the participants’ learning with the AI-powered ALS was affected by the epidemic. Huang (2009) suggested that a sample size of at least 100–150 is required to achieve convergent and discriminant validity. Breckler and Berman (1991), after extensive empirical research, concluded that in SEM a sample size of more than 200 can be regarded as medium-sized and can yield stable results. We also carried out a Monte Carlo-based power analysis (Wolf et al. 2013), generating 1000 Monte Carlo replications with the empirically obtained standardized loadings and path coefficients as population values. We compared two sample size conditions: N = 200 and N = 240 (our realized N = 243). The criterion for adequate power was ≥0.80 to detect each structural path at α = 0.05. The root mean square error of approximation (RMSEA) close-fit test (null = 0.05, alternative = 0.08) achieved power = 0.86 at N = 240, and the coverage of the 95% confidence intervals was within 0.93–0.96 for all parameters, indicating satisfactory parameter recovery. With our realized sample (N = 243), the study is therefore adequately powered (≥0.80) to detect all medium-sized or larger effects specified in the model and to reject ill-fitting models at the desired close-fit threshold.
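The logic of a Monte Carlo power analysis can be shown on a deliberately simplified case. The sketch below is not the full-SEM simulation reported above: it assumes a single standardized path (β = 0.3, taken here as a medium effect) estimated by OLS regression, and it uses a large-sample z critical value instead of an exact t test. The function name `mc_power` and its defaults are illustrative.

```python
import numpy as np


def mc_power(beta, n, reps=1000, crit=1.96, seed=2013):
    """Monte Carlo power for one standardized path beta at sample size n.

    Each replication draws data from the population model
    y = beta * x + e (var(x) = var(y) = 1), re-estimates the slope by OLS,
    and records whether |slope / SE| exceeds the two-sided 5% critical
    value (large-sample z approximation). Power = share of rejections.
    """
    rng = np.random.default_rng(seed)
    rejections = 0
    for _ in range(reps):
        x = rng.standard_normal(n)
        y = beta * x + np.sqrt(1.0 - beta**2) * rng.standard_normal(n)
        X = np.column_stack([np.ones(n), x])
        coef, *_ = np.linalg.lstsq(X, y, rcond=None)
        resid = y - X @ coef
        sigma2 = resid @ resid / (n - 2)
        se = np.sqrt(sigma2 * np.linalg.inv(X.T @ X)[1, 1])
        rejections += int(abs(coef[1] / se) > crit)
    return rejections / reps


for n in (200, 240):
    # power for beta = 0.3 and the empirical type I error rate for beta = 0
    print(n, mc_power(0.3, n), mc_power(0.0, n))
print(f"valid questionnaires: {243 / 302:.1%}")  # 243 of 302 retained
```

Running the same routine with β = 0 checks that the rejection rate stays near the nominal α, which is the counterpart of the coverage check described in the text.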

In addition, we reviewed the sample sizes used in comparable studies. Wang et al. (2020) recruited 200 Chinese lower-secondary students to examine mathematics learning with an ALS. Rosli and Saleh (2022) surveyed 303 students in a technology-enhanced learning setting and used SEM to construct a unified model combining SDT, self-efficacy, and TAM. In a technology-enhanced, out-of-class language-learning context, Fathali and Okada (2018) surveyed 162 learners to develop a theoretical model grounded in TAM and SDT. Ebadi and Raygan (2023) surveyed 223 students to build a conceptual model relating facilitating conditions, PEU, PU, and perceptions of mobile learning. Collectively, these studies indicate that the sample size of the present study is adequate for SEM analysis. Moreover, all of the students who completed the questionnaire had used the AI-powered ALS for an entire academic year.

The data were analyzed as follows. First, SPSS 26.0 and AMOS 26.0 were used to assess the validity and reliability of the questionnaire data. Two validity testing methods were used: exploratory factor analysis (EFA) and confirmatory factor analysis (CFA). All scale items were entered into the EFA, some items were deleted according to its results, and the retained items were then subjected to CFA. Items with satisfactory validity in the CFA were retained, and these items were finally subjected to dimension-level and overall reliability tests. Second, the SEM fit was tested. The structural model was built according to the theoretical model, and each fit index of the estimated model was checked against its reference value; if the requirements were not met, the model was modified according to the modification indices. Finally, the research hypotheses and mediation effects were tested. Hypotheses were tested by examining the p-values of the unstandardized path coefficients and the magnitudes and signs of the standardized path coefficients. The mediation analysis used the product-of-coefficients approach, with 1000 bootstrap resamples and the bias-corrected percentile bootstrap method to estimate the 95% confidence intervals.
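The bias-corrected percentile bootstrap for an indirect effect can be sketched as follows. This is a simplified stand-in for the AMOS analysis: it assumes observed (not latent) variables and a single mediator, estimates path a (X → M) and path b (M → Y, controlling for X) by OLS, and takes their product as the indirect effect. The data in the usage example are simulated, and all function names are illustrative.

```python
import numpy as np
from statistics import NormalDist


def ols(y, *predictors):
    """OLS coefficients, intercept first."""
    X = np.column_stack([np.ones(len(y)), *predictors])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef


def indirect_effect(x, m, y):
    a = ols(m, x)[1]      # path X -> M
    b = ols(y, x, m)[2]   # path M -> Y, controlling for X
    return a * b          # product of coefficients


def bc_bootstrap_ci(x, m, y, n_boot=1000, level=0.95, seed=1):
    """Point estimate and bias-corrected percentile bootstrap CI of a*b."""
    rng = np.random.default_rng(seed)
    n = len(x)
    est = indirect_effect(x, m, y)
    boots = np.empty(n_boot)
    for i in range(n_boot):
        idx = rng.integers(0, n, n)  # resample cases with replacement
        boots[i] = indirect_effect(x[idx], m[idx], y[idx])
    nd = NormalDist()
    # Bias-correction constant z0 from the share of draws below the estimate
    # (clipped so that inv_cdf stays finite).
    p = np.clip(np.mean(boots < est), 1 / n_boot, 1 - 1 / n_boot)
    z0 = nd.inv_cdf(p)
    z = nd.inv_cdf(1 - (1 - level) / 2)
    # Shifted percentiles: Phi(2*z0 - z) and Phi(2*z0 + z).
    lo, hi = np.quantile(boots, [nd.cdf(2 * z0 - z), nd.cdf(2 * z0 + z)])
    return est, lo, hi


rng = np.random.default_rng(7)
n = 243
x = rng.standard_normal(n)
m = 0.5 * x + rng.standard_normal(n)
y = 0.2 * x + 0.4 * m + rng.standard_normal(n)
est, lo, hi = bc_bootstrap_ci(x, m, y)
print(f"indirect = {est:.3f}, 95% BC CI [{lo:.3f}, {hi:.3f}]")
```

An indirect effect is judged significant when the interval excludes zero.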

Qualitative data

The researchers randomly selected six students from each of the five participating schools (30 interviewees in total) for one-to-one semistructured interviews and also interviewed the mathematics teachers of the focal classes (five teachers in total). Guided by our research aims, we developed 14 interview questions in three categories. The first category explored the relationships among students’ perceived autonomy, perceived competence, perceived relatedness, and engagement (e.g., “When using the AI learning tablet, are you willing to invest time, effort, and energy to study proactively? Why?”). The second category asked about students’ acceptance of the new AI technology and its link to engagement (e.g., “Do you find the AI learning tablet easy and convenient to use? Does this influence the effort you put into learning? Why?”). The third category elicited students’ views on the design of the AI-based ALS (e.g., “Can you describe your experiences and feelings while learning mathematics with the AI learning tablet, for instance, your interactions with teachers and classmates, the learning workflow, or the device’s content and functions?”). The complete interview protocol is presented in Appendix E.

The teachers received a parallel schedule that explored student engagement and practical issues from the instructors’ perspective, for example, “What changes would make your students more willing to engage with the AI learning tablet?” and “Do you have any additional comments or suggestions on its use?” During every lesson, two research assistants conducted classroom observations, and their field notes were added to the qualitative dataset. All qualitative data were analyzed via directed content analysis (Hsieh and Shannon 2005). This method, which is commonly used to verify or extend an existing theoretical framework, follows a structured, theory-driven coding process. To ensure coding reliability, a second researcher cross-checked each critical step of the analysis.

Findings

Validity and reliability testing of the measurement model

EFA was carried out on all items of the influencing-factor dimensions and on the items retained in the junior high school students’ learning engagement scale for the AI-powered ALS. First, the Kaiser–Meyer–Olkin (KMO) test and Bartlett’s test of sphericity were performed. The KMO value reached 0.915, and Bartlett’s test was significant, indicating that the data were suitable for factor analysis. The EFA results (see Appendix D) reveal 10 components, with a total variance explained of 75.069%. Contrary to the constructed theoretical model, the PEU and PU items loaded on the same component. This indicates that the two dimensions are highly correlated, so PEU and PU were combined into a single dimension, technology acceptance, in the subsequent analysis.
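Both preliminary checks can be computed directly from the item correlation matrix. The sketch below uses the standard formulas: Bartlett’s statistic χ² = −(n − 1 − (2p + 5)/6) ln|R| with p(p − 1)/2 degrees of freedom, and KMO as the ratio of summed squared correlations to summed squared correlations plus squared partial correlations. It returns the statistic and degrees of freedom only (a p-value would need a chi-square distribution function, e.g., from SciPy). The two-factor data are simulated for illustration and are not the study’s data.

```python
import numpy as np


def kmo_and_bartlett(data):
    """KMO and Bartlett's sphericity statistic for an (n x p) item matrix."""
    n, p = data.shape
    R = np.corrcoef(data, rowvar=False)
    # Bartlett's test of sphericity (H0: R is an identity matrix).
    _, logdet = np.linalg.slogdet(R)
    chi2 = -(n - 1 - (2 * p + 5) / 6) * logdet
    df = p * (p - 1) / 2
    # KMO: zero-order correlations versus partial correlations from R^-1.
    Rinv = np.linalg.inv(R)
    d = np.sqrt(np.diag(Rinv))
    partial = -Rinv / np.outer(d, d)
    off = ~np.eye(p, dtype=bool)  # off-diagonal elements only
    r2 = np.sum(R[off] ** 2)
    q2 = np.sum(partial[off] ** 2)
    kmo = r2 / (r2 + q2)
    return kmo, chi2, df


# Simulated data: two factors, three items each, loadings of 0.8.
rng = np.random.default_rng(0)
n = 300
f1, f2 = rng.standard_normal(n), rng.standard_normal(n)
items = [0.8 * f + 0.6 * rng.standard_normal(n) for f in (f1, f1, f1, f2, f2, f2)]
data = np.column_stack(items)
kmo, chi2, df = kmo_and_bartlett(data)
print(f"KMO = {kmo:.3f}, Bartlett chi2 = {chi2:.1f}, df = {df:.0f}")
```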

Because PEU and PU were combined, the technology acceptance dimension contains many items, so its measurement was analyzed further in AMOS 26.0. The factor loadings of the technology acceptance items range from 0.63 to 0.86, all above 0.6 and below 0.95, so all loadings meet the requirements (see Fig. 4). However, the structural validity test yields χ2/df = 5.609 > 3 and RMSEA = 0.138 > 0.08; neither fit index meets its reference value, so further modification is needed. The model was therefore modified according to the modification indices (MI), and the observed variables involved in paths with larger MI values were deleted. Following the MI table, the four items PE1, PE4, PU3, and PU4, associated with error terms e1, e3, e7, and e8, respectively, were removed, and the four items PE3, PE5, PU1, and PU2 were retained (see Table 1). The revised construct validity data are shown in Table 2. After modification, all fit indices of this measurement model meet the reference values, demonstrating good construct validity.
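The two reported fit values can be cross-checked with the usual RMSEA formula, RMSEA = √(max(χ² − df, 0) / (df(N − 1))). The sketch assumes N = 243 and the (N − 1) convention; under these assumptions RMSEA depends only on the ratio χ²/df, so the df = 20 used below is an arbitrary placeholder, not the study’s value.

```python
import math


def rmsea(chi2, df, n):
    """RMSEA using the (N - 1) convention; 0 when chi2 <= df."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


def meets_reference(chi2, df, n, max_ratio=3.0, max_rmsea=0.08):
    """Return (chi2/df <= max_ratio, RMSEA <= max_rmsea)."""
    return chi2 / df <= max_ratio, rmsea(chi2, df, n) <= max_rmsea


# Placeholder df = 20; only chi2/df = 5.609 and N = 243 matter here.
df = 20
chi2 = 5.609 * df
print(round(rmsea(chi2, df, 243), 3))  # 0.138
print(meets_reference(chi2, df, 243))  # (False, False)
```

The recovered RMSEA of 0.138 matches the reported value, so the two indices are mutually consistent under these assumptions.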

The CFA results for technology acceptance.

CFA was then performed on all retained items of the learning engagement scale and its influencing factors. Convergent validity was examined first. Table 3 shows that all retained items are significant at the 0.001 level. The factor loadings range from 0.648 to 0.933, all within 0.6–0.95. The average variance extracted (AVE) values of the dimensions range from 0.542 to 0.806, all greater than 0.5, and the composite reliability (CR) values range from 0.777 to 0.926, all greater than 0.7. These results demonstrate that the scale has good convergent validity.
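AVE and CR are computed from the standardized loadings of each dimension: AVE is the mean squared loading, and CR is (Σλ)² / ((Σλ)² + Σ(1 − λ²)). The sketch applies these standard formulas to three hypothetical loadings; it does not reproduce any dimension of the study.

```python
def ave_and_cr(loadings):
    """AVE and composite reliability from standardized factor loadings.

    AVE = mean of squared loadings.
    CR  = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances),
    where each item's error variance is 1 - loading^2.
    """
    squared = [lam * lam for lam in loadings]
    ave = sum(squared) / len(loadings)
    total = sum(loadings) ** 2
    cr = total / (total + sum(1 - s for s in squared))
    return ave, cr


ave, cr = ave_and_cr([0.80, 0.75, 0.70])  # hypothetical loadings
print(f"AVE = {ave:.3f} (> 0.5), CR = {cr:.3f} (> 0.7)")
```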

The discriminant validity of the whole scale was then tested. The square roots of the AVE for the dimensions are 0.759, 0.827, 0.824, 0.746, 0.804, 0.736, 0.776, 0.802, 0.898, and 0.877 (see Table 4). For each dimension, the square root of the AVE is greater than its correlations with the other dimensions, indicating that the dimensions are empirically distinct and that the scale has good discriminant validity.
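This comparison (the Fornell–Larcker criterion) is easy to automate. In the sketch below, the √AVE values are the first three listed above, while the correlation matrix is hypothetical, because the inter-dimension correlations appear only in Table 4.

```python
import numpy as np


def fornell_larcker(sqrt_ave, corr):
    """Return, per dimension, whether sqrt(AVE) exceeds every absolute
    correlation between that dimension and the other dimensions."""
    sqrt_ave = np.asarray(sqrt_ave, dtype=float)
    off_diag = np.array(corr, dtype=float)
    np.fill_diagonal(off_diag, 0.0)  # ignore each dimension's self-correlation
    return sqrt_ave > np.abs(off_diag).max(axis=1)


sqrt_ave = [0.759, 0.827, 0.824]  # first three values listed in the text
corr = [[1.00, 0.45, 0.38],       # hypothetical correlations
        [0.45, 1.00, 0.52],
        [0.38, 0.52, 1.00]]
print(fornell_larcker(sqrt_ave, corr))
```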

Reliability was then tested for the items that passed the validity tests, and the Cronbach’s α results are shown in Table 5. The Cronbach’s α of each dimension ranges from 0.759 to 0.925, and the overall reliability of the scale reaches 0.901. All dimensions show good reliability except perceived relatedness, whose reliability is nevertheless within the acceptable range; overall, the scale is reliable.
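Cronbach’s α compares the sum of the item variances with the variance of the total score: α = k/(k − 1) · (1 − Σσᵢ² / σ²ₜₒₜₐₗ). The sketch implements this standard formula with sample variances; the three-respondent data set is a toy example chosen so that the result can be checked by hand (α = 2/3).

```python
import numpy as np


def cronbach_alpha(items):
    """Cronbach's alpha for an (n respondents x k items) score matrix.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of total score),
    using sample variances (ddof = 1).
    """
    items = np.asarray(items, dtype=float)
    k = items.shape[1]
    item_var = items.var(axis=0, ddof=1).sum()
    total_var = items.sum(axis=1).var(ddof=1)
    return k / (k - 1) * (1 - item_var / total_var)


scores = [[1, 1],
          [2, 3],
          [3, 2]]  # toy data: 3 respondents, 2 items
print(round(cronbach_alpha(scores), 3))
```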

To obtain a more complete picture of the students’ responses, the mean and standard deviation of each dimension and of each item were calculated. The mean scores for perceived autonomy, perceived competence, perceived relatedness, and technology acceptance, and for every item in these dimensions, are higher than 3. This indicates that the students generally perceived their needs for autonomy, competence, and relatedness as satisfied in the AI-powered ALS and that they showed a high degree of acceptance of the system. The means of both intrinsic and extraneous cognitive load are lower than 3, but the mean of intrinsic cognitive load is close to 3, indicating that the students generally perceived the tasks as relatively difficult and felt that the instructions and feedback in the AI-powered ALS were not always clear.

Goodness-of-fit test of the structural model

Second-order model validation

The right part of the model, i.e., learning engagement and its four measures, forms a second-order model. Second-order models are mainly used to simplify the structural dimensions in SEM. In this study, learning engagement comprises four dimensions: behavioral, cognitive, emotional, and agentic engagement. Without a second-order model, each hypothesized effect on learning engagement would have to be specified as four separate paths, one to each engagement dimension, producing a redundant structural model. A second-order model was therefore used for model validation.

However, a second-order model simplifies the structural model at the cost of some loss of model fit, so it is appropriate only when this loss is small. Two criteria are usually used to judge whether a second-order model can replace the first-order model: whether the second-order structure has theoretical support and how large the target coefficient (T) of the model is. In this study, the four measures of learning engagement have been widely recognized and used by researchers, so the second-order model has theoretical support. In addition, the target coefficient is T = 0.976, very close to 1, which supports using the second-order model instead of the first-order model. Having passed this second-order validation, the structural model was then tested for goodness-of-fit.
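For reference, the target coefficient is conventionally defined as the ratio of the chi-square of the first-order model, in which the four engagement factors correlate freely, to that of the more restricted second-order model. Because the second-order model cannot fit better than the first-order model, T is bounded above by 1. The subscript notation below is ours, not the original study’s.

```latex
T = \frac{\chi^2_{\text{first-order}}}{\chi^2_{\text{second-order}}}, \qquad T \le 1,
\qquad T = 0.976 \;\Rightarrow\; \chi^2_{\text{second-order}} = \frac{\chi^2_{\text{first-order}}}{0.976} \approx 1.025\,\chi^2_{\text{first-order}}
```

Under this definition, the reported value means that imposing the second-order factor raises the chi-square by only about 2.5%.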

Model running and goodness-of-fit validation

Based on the reliability and validity tests and the second-order model validation, the model comprises 10 dimensions, with technology acceptance combining PEU and PU. Six dimensions (intrinsic cognitive load, extraneous cognitive load, perceived autonomy, perceived competence, perceived relatedness, and technology acceptance) are the factors that influence junior high school students’ learning engagement in the AI-powered ALS, and four dimensions (behavioral, cognitive, emotional, and agentic engagement) are the measures of learning engagement. Each dimension (latent variable) has 3–4 measurement items (observed variables), which meets the SEM requirement of at least 3 observed variables per latent variable. The estimation results show that χ2/df, RMSEA, SRMR, CFI, IFI, and TLI all meet their reference values, indicating that the structural model is an acceptable research model (see Table 6).
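CFI, TLI, and IFI all compare the fitted model with a baseline (independence) model. The sketch implements their standard definitions; the chi-square and df values in the example are hypothetical, because Table 6 is not reproduced here. A cutoff of 0.90 is commonly used for these indices, but the thresholds applied in this study are those listed in Table 6.

```python
def incremental_fit(chi2_m, df_m, chi2_b, df_b):
    """CFI, TLI, and IFI from the target model (m) and baseline model (b)."""
    d_m = max(chi2_m - df_m, 0.0)   # noncentrality of the target model
    d_b = max(chi2_b - df_b, d_m)   # noncentrality of the baseline model
    cfi = 1.0 - d_m / d_b if d_b > 0 else 1.0
    tli = (chi2_b / df_b - chi2_m / df_m) / (chi2_b / df_b - 1.0)
    ifi = (chi2_b - chi2_m) / (chi2_b - df_m)
    return cfi, tli, ifi


# Hypothetical values: target model chi2 = 100 (df = 50),
# baseline model chi2 = 1000 (df = 60).
cfi, tli, ifi = incremental_fit(100.0, 50, 1000.0, 60)
print(f"CFI = {cfi:.3f}, TLI = {tli:.3f}, IFI = {ifi:.3f}")
```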

Research hypotheses test

Research hypotheses revision

In the initial theoretical framework, 15 research hypotheses were formulated to explore distinct facets of user interaction with AI-powered ALS. The discriminant validity test showed that PEU and PU, two constructs expected to influence learning engagement independently, were more highly correlated than is acceptable. This suggests that, in our empirical data, the two constructs are not clearly distinct. To maintain the integrity of the structural model and align it with the theoretical underpinnings of TAM, these constructs were unified into a single dimension termed “technology acceptance.” Consequently, the original hypotheses H9 and H10, which addressed these constructs separately, were merged into a composite hypothesis, now H7, reflecting their combined influence on learning engagement.

Similarly, hypotheses H5 and H6, which pertained to the impact of PC on PEU and PU, respectively, were also consolidated. This was based on the empirical observation that PC uniformly affected the newly defined technology acceptance dimension. Thus, a revised H5 was formulated to encapsulate this unified relationship. The original hypotheses H4, H7, H13, H14, and H15 also underwent terminological adjustments to replace PU and the dual expressions of PEU and PU with technology acceptance. These alterations, resulting in revised hypotheses H4, H6, H10, H11, and H12, were necessary to accurately represent the findings of our discriminant validity assessment and to ensure conceptual consistency throughout the study (as shown in Table 7).

These methodological amendments have streamlined our hypotheses and ensured that the structural model is congruent with both theoretical expectations and the observed empirical relationships. This adjustment addresses the overlap in the constructs and aligns the hypotheses with the validated model of user engagement within AI-powered adaptive learning environments.

Test for the relationship between variables

Because the revised model showed acceptable goodness-of-fit, AMOS 26.0 was used to conduct path analysis on its structural dimensions and to test the research hypotheses derived from the theoretically proposed relationships between the variables. The results in Table 8 support hypotheses H2, H4, H5, H6, H7, and H9, whereas H1, H3, and H8 are not supported.

Mediation effect test

In the constructed influencing factor model, technology acceptance may mediate the effects of perceived autonomy, perceived competence, and perceived relatedness on learning engagement. Therefore, the relationships among these three basic psychological needs, technology acceptance, and learning engagement were analyzed further.

The product-of-coefficients approach, together with bootstrap estimates of the lower and upper limits of the 95% confidence intervals, was used to test the significance of the direct, indirect, and total effects. The results for perceived autonomy (Table 9) indicate that technology acceptance does not mediate the relationship between perceived autonomy and learning engagement; thus, H10 is not supported. The results for perceived competence (Table 10) indicate that technology acceptance partially mediates the relationship between perceived competence and learning engagement; thus, H11 is supported. A comparison of the indirect and direct effects of perceived competence shows no significant difference between them. The results for perceived relatedness (Table 11) indicate that technology acceptance fully mediates the relationship between perceived relatedness and learning engagement; thus, H12 is supported.
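The three outcomes reported above follow the common decision rule based on bootstrap confidence intervals: no mediation if the indirect-effect CI contains zero; partial mediation if both the indirect and direct CIs exclude zero; and full mediation if only the indirect CI excludes zero. The sketch encodes that rule; the intervals in the example are hypothetical and are not the values in Tables 9–11.

```python
def excludes_zero(ci):
    """True if a (lower, upper) confidence interval does not contain 0."""
    lower, upper = ci
    return lower > 0 or upper < 0


def classify_mediation(indirect_ci, direct_ci):
    """Label a mediation result from bootstrap CIs of the indirect and direct effects."""
    if not excludes_zero(indirect_ci):
        return "no mediation"
    if excludes_zero(direct_ci):
        return "partial mediation"
    return "full mediation"


# Hypothetical confidence intervals, chosen only to show the three outcomes.
print(classify_mediation((-0.02, 0.08), (0.05, 0.30)))  # no mediation
print(classify_mediation((0.04, 0.20), (0.10, 0.35)))   # partial mediation
print(classify_mediation((0.03, 0.15), (-0.05, 0.12)))  # full mediation
```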

Model validation results

Taken together, the path analysis and mediation tests show that perceived competence and technology acceptance have significant positive effects on learning engagement; extraneous cognitive load has a significant negative effect on learning engagement; technology acceptance mediates the effects of perceived competence and perceived relatedness on learning engagement; and perceived autonomy and intrinsic cognitive load have no direct or indirect effect on learning engagement. Nevertheless, perceived autonomy, competence, and relatedness all have positive effects on technology acceptance.

We also conducted a round of expert review in which domain experts evaluated the components of the model: the model was sent to a panel of experts for feedback on its validity, and a series of expert interviews was used to reach consensus. The research-validated model of the factors that influence junior high school students’ learning engagement in the AI-powered ALS is shown in Fig. 5. Among all of the influencing factors, technology acceptance has the greatest positive effect on learning engagement, and extraneous cognitive load has a smaller but significant negative effect. Among the three basic psychological needs, neither the direct nor the indirect path from perceived autonomy to learning engagement is significant. Perceived competence affects learning engagement both directly and indirectly through technology acceptance; its direct and indirect effects are the largest of the three needs, and the difference between them is not significant. Perceived relatedness, in contrast, affects learning engagement mainly indirectly through technology acceptance, although the point estimate of this indirect effect is small. Finally, intrinsic cognitive load has no significant effect on learning engagement.

Verified model of the factors that affect learning engagement.

Qualitative data results

The qualitative data analysis drew on the steps proposed by Assarroudi et al. (2018), partially modified as follows: (1) presetting themes based on SDT, TAM, and CLT; (2) familiarizing ourselves with the data; (3) defining themes; (4) coding; (5) reviewing codes; (6) summarizing themes; and (7) producing the report. In total, 19 codes were generated. From the SDT perspective, the themes included perceived autonomy (four codes: personalized guidance, personal needs, self-directed learning, and curiosity), perceived competence (two codes: self-efficacy and challenge), and perceived relatedness (two codes: interactive discussion and a sense of closeness).

Excerpt 1:

(1) Personalized guidance: I feel that the AI learning tablet is basically like a one-to-one tutoring class outside school (teacher interview).

(2) Personal needs: (a) The AI learning system recommends one type of problem to students who do not understand it and a different type of problem to those who do. (b) We can see that every student is working on a different set of questions (classroom observation).

(3) Self-directed learning: In my spare time, I like to study on my own and follow my own learning schedule.

(4) Curiosity: (a) When we first started using it, I was curious and wanted to explore it. (b) When the technician opened the cabinet holding the tablets, the students all rushed forward to grab one; they were clearly curious and very interested (classroom observation).

(5) Self-efficacy: Most of the time, I can complete the tasks.

(6) Challenge: I am willing to address this problem as a challenge.

(7) Interactive discussion: (a) In this kind of class, I can discuss things with my classmates. (b) Because I do less lecturing in this mode, I have fewer discussions with students, and sometimes I worry whether they have truly learned it (teacher interview).

(8) Sense of closeness: If the teacher is also present, I can raise my hand to ask questions and feel a bit closer to the teacher.

From the TAM perspective, the themes are PEU (codes: “device login and updates,” “drawing/handwriting operations,” and “recognition function”) and PU (codes: “learning assistance,” “knowledge consolidation,” and “learning improvement”).

Excerpt 2:

(1) Device login and updates: (a) During one update, we spent quite a while flustered and trying to sort things out. (b) At the start of today’s class, the software was updated, so the students could not use it at first; the classroom was slightly chaotic, and the technician stood by to step in quickly to fix it (classroom observation).

(2) Drawing/handwriting operations: (a) Drawing tools, such as the set square and compass, are much easier to use. (b) The drawing tool is well designed: students can draw directly inside the software instead of sketching on paper and then taking a photo (teacher interview).

(3) Recognition function: (a) I hope that the system can improve its accuracy in recognizing characters. (b) A student told the technician in class that the software could not correctly recognize his handwriting (classroom observation).

(4) Learning assistance: (a) After a problem is finished, the worked solution can be read, and the analysis is very detailed. (b) If students answer a question incorrectly or do not know how to solve it, then the text explanations and video tutorials help them learn (teacher interview).

(5) Knowledge consolidation: (a) This helps me cement the knowledge point more firmly. (b) Teachers and technicians collaborate to set the day’s learning tasks, which helps students consolidate what they learned that day (classroom observation).

(6) Learning improvement: (a) Because my grades have improved, I am even more motivated to keep progressing. (b) With respect to the review that we performed before class the next day, students performed better than before; compared with when no one at home could help them solve problems, their learning has indeed improved (teacher interview).

From the CLT perspective, the data encompass intrinsic CL, as represented by the codes “tasks too difficult” and “excessive text or overly long videos,” and extraneous CL, as represented by the codes “inaccurate feedback,” “unclear instructions,” and “unclear explanations.”

Excerpt 3:

(1) Tasks too difficult: “I think the problems it pushes to me are too hard—I really don’t want to do them”.

(2) Excessive text or overly long videos: (a) There is too much text in the questions, and it is tiring to read. (b) Some students do not have the patience to finish the videos (classroom observation).

(3) Inaccurate feedback: I worked on it for a long time and felt sure that I was right, but it said that I was wrong.

(4) Unclear instructions: Sometimes, if you accidentally tap somewhere, it exits, and you do not know how to get back.

(5) Unclear explanations: Sometimes the explanation is not clear, which can be stressful.

Discussion

Basic psychological needs (SDT) and learning engagement

For the first research question, concerning the relationship between SDT and learning engagement, the study revealed a significant association between students’ perceived competence and their engagement in the AI-powered ALS. This echoes Ryan and Deci’s (2002) assertion that competence is the most universal energizer of intrinsic motivation and aligns with recent AI learning studies in which competence was the strongest predictor of persistent use (Ellikkal and Rajamohan 2024; Rosli and Saleh 2022). Self-efficacy, an important indicator of perceived competence, has been shown to be the main factor positively correlated with learning engagement, with higher self-efficacy generally accompanying greater engagement (Rosli and Saleh 2022). Challenging tasks can also increase student learning engagement (Li et al. 2022), suggesting that, within the limits of students’ CL, more challenging learning tasks are associated with higher self-efficacy and engagement. The interview data echoed this pattern; as one student remarked, “Some of the questions on the AI tablet are challenging, so I feel like attempting them.”

However, the associations of perceived autonomy and perceived relatedness with learning engagement were not statistically significant. For autonomy, one possible reason is that the independent learning ability of Chinese junior high school students in mathematics is polarized (Chen et al. 2024): some students struggle to identify their own learning needs and still require assistance from teachers. Some students prefer to act autonomously, whereas others prefer a controlled learning environment and may feel overwhelmed when confronted with a more autonomous one. Our interviews indeed revealed divergent views: one student said, “This feels like one-to-one tutoring; I can focus on what I don’t know instead of repeating what I already understand,” whereas another commented, “I still prefer the teacher’s instruction; I don’t like studying on my own.” Pooling data from students with such contrasting preferences may have offset opposing effects, yielding a nonsignificant overall association between perceived autonomy and learning engagement in the AI-powered ALS. Perceived relatedness had a positive but nonsignificant effect on engagement. It is possible that when students sense connection and support from teachers and peers, they mainly gain additional enjoyment (Roca and Gagne 2008) rather than markedly higher engagement, a pattern also observed in tightly scripted digital environments where choices are prestructured (Chiu 2022).

Cognitive load and learning engagement

With respect to the second research question, only extraneous CL dampened engagement; intrinsic CL had no significant effect. Extraneous CL stems from poor instructional design. In the present system, unclear prompts, feedback, and explanations, largely caused by imperfect handwriting recognition and other functional shortcomings, were the principal sources of extraneous CL, as repeatedly noted in the student interviews (e.g., “The software couldn’t recognize my handwriting”). This finding aligns with previous work showing that clear guidance and good user interface (UI) design can reduce CL and increase engagement (Han et al. 2023; Koć-Januchta et al. 2022).

Intrinsic CL had no significant negative effect on engagement. One plausible explanation is the adaptive system’s weakness-diagnosis knowledge map, which not only visualizes students’ status but also helps them organize the relationships among knowledge points, thereby supporting knowledge construction. In this sense, the system offers a scaffolding effect (e.g., Dong et al. 2020) that may mitigate any adverse impact of intrinsic load on engagement. As one student put it, “I think the questions assigned to me are pretty good; some aren’t especially difficult, though they’re not exactly easy either, but I feel I can complete them. Some of the questions are ones I previously got wrong.” A teacher also remarked, “Before class, we set up the learning content (on an AI tablet): some of it reviews what the students learned today, and some recaps the week’s material. The system can automatically link and push related questions to them.”

Student technology acceptance and engagement

To address the third research question, this study sheds light on how technology acceptance may act as a gateway to learning engagement in rural educational contexts, in interplay with cognitive load factors and the intrinsic motivation described by SDT. This finding supports the value of our integrated theoretical approach and calls for refined AI-powered ALS designs that cater to rural students’ specific educational needs. More specifically, the results suggest that when students perceive an AI-powered ALS positively, their engagement also tends to be higher. The interview data support this view: one student stated, “The AI tablet helps me learn mathematics,” and another commented, “I think using the AI tablet is very useful.”

Within the AI-powered ALS, a notable positive association was observed between the junior high school students’ technology acceptance and their learning engagement. Technology acceptance was, in turn, closely related to all three basic psychological needs: perceived autonomy, perceived competence, and perceived relatedness each had a significant positive effect on technology acceptance. Moreover, technology acceptance partially mediated the relationship between perceived competence and learning engagement and fully mediated the relationship between perceived relatedness and learning engagement, but it did not mediate the relationship between perceived autonomy and engagement. Importantly, because these paths are estimated from correlational data, they do not establish causation; the data indicate only that students with higher perceived competence and relatedness also tend to report higher technology acceptance, which is in turn associated with greater learning engagement. As one student remarked, “I find this learning method really novel; it’s my first time encountering it, so I’m willing to spend some time on it,” while another added, “The system generates questions specifically on the problems I don’t yet understand; because I find that helpful, I enjoy using it.”
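The distinction between partial and full mediation can be illustrated with a minimal Python sketch. It estimates the three paths of a single-mediator model (a: competence → acceptance; b: acceptance → engagement, controlling for competence; c′: the direct competence → engagement path) by ordinary least squares and judges the indirect effect a × b and the direct effect c′ with 95% percentile bootstrap intervals. This is a hypothetical illustration under stated assumptions: the variable names, simulated coefficients, sample size, and bootstrap settings are invented, and neither the data nor the estimation procedure is the study's own.

```python
import random

def mean(values):
    return sum(values) / len(values)

def slope(x, y):
    """OLS slope of y regressed on x (intercept handled by centering)."""
    mx, my = mean(x), mean(y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    return sxy / sxx

def two_slopes(x1, x2, y):
    """OLS coefficients (b1, b2) of y regressed on x1 and x2, via centered normal equations."""
    m1, m2, my = mean(x1), mean(x2), mean(y)
    d1 = [v - m1 for v in x1]
    d2 = [v - m2 for v in x2]
    dy = [v - my for v in y]
    s11 = sum(u * u for u in d1)
    s22 = sum(v * v for v in d2)
    s12 = sum(u * v for u, v in zip(d1, d2))
    s1y = sum(u * w for u, w in zip(d1, dy))
    s2y = sum(v * w for v, w in zip(d2, dy))
    det = s11 * s22 - s12 ** 2
    return (s22 * s1y - s12 * s2y) / det, (s11 * s2y - s12 * s1y) / det

def paths(x, m, y):
    """Return (a, b, c_prime, c) for the single-mediator model X -> M -> Y."""
    a = slope(x, m)                   # X -> M
    c_prime, b = two_slopes(x, m, y)  # Y ~ X + M: direct effect c' and M -> Y path b
    c = slope(x, y)                   # total effect; for OLS, c == c_prime + a * b
    return a, b, c_prime, c

def bootstrap_ci(x, m, y, n_boot=1000, seed=0):
    """95% percentile bootstrap CIs for the indirect (a*b) and direct (c') effects."""
    rng = random.Random(seed)
    n = len(x)
    indirect, direct = [], []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # resample cases with replacement
        a, b, c_prime, _ = paths([x[i] for i in idx], [m[i] for i in idx], [y[i] for i in idx])
        indirect.append(a * b)
        direct.append(c_prime)

    def ci(vals):
        vals = sorted(vals)
        return vals[int(0.025 * len(vals))], vals[int(0.975 * len(vals)) - 1]

    return ci(indirect), ci(direct)

def excludes_zero(interval):
    lo, hi = interval
    return lo > 0 or hi < 0

def classify(indirect_sig, direct_sig):
    """Partial: indirect and direct both significant; full: only indirect; else none."""
    if not indirect_sig:
        return "no mediation"
    return "partial mediation" if direct_sig else "full mediation"

# Hypothetical simulated data (not the study's data): acceptance carries competence's whole effect.
rng = random.Random(42)
competence = [rng.gauss(0, 1) for _ in range(200)]
acceptance = [0.8 * v + rng.gauss(0, 0.5) for v in competence]
engagement = [0.7 * v + rng.gauss(0, 0.5) for v in acceptance]

a, b, c_prime, c = paths(competence, acceptance, engagement)
ind_ci, dir_ci = bootstrap_ci(competence, acceptance, engagement)
print(f"indirect a*b = {a * b:.3f}, direct c' = {c_prime:.3f}, total c = {c:.3f}")
print(classify(excludes_zero(ind_ci), excludes_zero(dir_ci)))
```

With these simulated values, the true indirect effect is 0.8 × 0.7 = 0.56 and the true direct effect is zero, so a run would typically be classified as full mediation, although the exact estimates depend on the random draw. For a single-mediator OLS model, the total effect c equals c′ + a × b exactly, which offers a convenient sanity check.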

In an AI-powered ALS, students’ needs center on using the system to remedy their weak knowledge points; when these needs are met, that is, when their weak points are strengthened, students perceive that the system has produced good learning results (Hassan et al. 2021; Zhang et al. 2019). Students with a high sense of self-efficacy tend to hold more positive views of both how easy the system is to operate and the learning gains it produces, which corresponds to higher PEU and PU (Chen et al. 2021; Jeno et al. 2019; Rosli and Saleh 2022).

The findings of this study underscore the pivotal role of technology acceptance in shaping learning engagement within AI-powered ALS. As postulated by Davis (1989) and subsequent TAM-related research, PEU and PU are fundamental determinants of technology adoption (Wang et al. 2023). In the rural educational context of our study, this translates into a close correspondence between how students perceive and interact with the AI-powered ALS and how engaged they are. This echoes the research of Venkatesh et al. (2003), which highlights technology acceptance as an important predictor of technology use. Importantly, this study extends the TAM discourse by showing how the system’s usability and functional benefits (or lack thereof) correlate with the engagement of rural students, a demographic often underrepresented in the technology acceptance literature.

Theoretical implications

The present study contributes to the theoretical landscape by illuminating the interplay between SDT and TAM in the domain of rural education technology. While reinforcing the central tenet of SDT, namely that the satisfaction of basic psychological needs is essential for learning engagement, our findings also reveal a nuanced picture. Specifically, the significant association between perceived competence and learning engagement echoes the emphasis that SDT places on the need for competence as a driver of intrinsic motivation (Ryan and Deci 2002). The study also clarifies the links between motivation and acceptance: our data suggest that competence-supportive AI feedback is associated with higher PU, which may function as a motivational amplifier. This refines SDT by suggesting that need satisfaction may translate into engagement only when learners perceive clear instrumental value in the technology. However, the lack of significant findings for autonomy and relatedness points to a context-dependent expression of these needs, which may qualify the applicability of SDT across diverse educational settings (Chiu 2022). In addition, this study suggests a reconceptualization of CL. The nonsignificant intrinsic load path is consistent with the possibility that adaptive algorithms, by regulating task difficulty, effectively “outsource” the management of intrinsic CL to the system, a nuance that CLT models rarely capture. Furthermore, by integrating TAM into this framework, our research underscores the vital role of technology acceptance, particularly PEU and PU, in mediating the relationships between students’ satisfaction of the competence and relatedness needs and their engagement with AI-powered ALS (Davis 1989). This integration not only expands TAM by situating it within the broader motivational framework provided by SDT but also offers a more comprehensive understanding of the factors that influence engagement in educational technology (Nikou and Economides 2017). These insights point to the need for future theoretical models to account for the complexities of technological integration in learning environments, especially in underrepresented contexts such as rural education.

Practical implications for rural ALS deployment

Given the distinctive features of rural education, including its context, limited teacher resources, and varying levels of digital literacy, this study advances five practical implications designed to make the “competence → acceptance → engagement” pathway actionable while minimizing students’ CL. These implications are relevant not only to the present setting but may also inform AI-powered adaptive learning initiatives in rural schools elsewhere. (1) Given the strong association between technology acceptance and learning engagement, an AI-powered ALS should be designed to maximize ease of use and usefulness. In rural settings, where many students start with low digital literacy, progressive step-by-step guidance is needed, and it becomes even more critical if students are expected to use the system independently at home. (2) To enhance perceived competence and PU, particularly for students who receive no help with mathematics at home, the system should collect students’ incorrect answers as they work and classify them by knowledge point. If the system offers only an analysis of errors and no opportunity for review, its benefits are likely to be short-lived, and substantial improvement is difficult to achieve because forgetting is rapid (Jiang et al. 2018). (3) To enhance perceived relatedness, a learning exchange community can be built into the system, where students share their learning experiences, insights, and points of confusion and strengthen their interaction with, and sense of belonging to, their teachers and classmates through online channels (Liu et al. 2010). (4) Teachers should be provided with a “one-to-many” support dashboard so that they continue to play a pivotal role. The system’s back end should generate tiered alert lists that let teachers identify priority students for intervention in just ten minutes each day, making more efficient use of limited instructional time. This arrangement both deepens teachers’ insight into students’ learning progress and ensures that learners with weaker self-regulation receive adequate support. (5) To reduce students’ extraneous CL, the accuracy of handwriting recognition should be further improved so that correct answers are less often misjudged (Dong et al. 2020). Instant feedback is a very important feature of the AI-powered ALS, but handwriting misrecognition, and the resulting misjudgment of answers, can waste students’ learning time, affect their mood, and dampen their self-confidence and pride, all of which hinder engagement. The system’s user interface also needs to be improved so that its instructions are clear and accidental taps are less likely to cause problems, such as unexpectedly exiting the current task (Chen et al. 2021).
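Recommendations (2) and (4) can be illustrated with a small Python sketch in which a single log of answer attempts feeds both a per-student error book, grouped by knowledge point with scheduled reviews, and a tiered alert list for teachers. Everything here is hypothetical: the record fields, the expanding review intervals (1, 2, 4, 7, and 15 days, a common heuristic rather than a schedule taken from Jiang et al. 2018), and the error-rate thresholds for the tiers are illustrative assumptions, not features of the system studied.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical expanding review intervals, in days after an error (an assumption,
# not a schedule reported by the study or derived from Jiang et al. 2018).
REVIEW_INTERVALS = (1, 2, 4, 7, 15)

@dataclass
class Attempt:
    """One answer attempt; all field names are hypothetical."""
    student: str
    knowledge_point: str
    question_id: str
    correct: bool
    day: date

def error_book(attempts):
    """Group each student's wrong answers by knowledge point, with review dates."""
    book = defaultdict(lambda: defaultdict(list))
    for a in attempts:
        if not a.correct:
            reviews = [a.day + timedelta(days=d) for d in REVIEW_INTERVALS]
            book[a.student][a.knowledge_point].append((a.question_id, reviews))
    return book

def due_today(book, student, today):
    """Sorted IDs of the student's wrong questions scheduled for review on `today`."""
    return sorted(
        qid
        for entries in book.get(student, {}).values()
        for qid, reviews in entries
        if today in reviews
    )

def alert_tiers(attempts, high=0.5, medium=0.3):
    """Tier students by error rate (hypothetical thresholds); tier 1 = intervene first."""
    totals, wrong = defaultdict(int), defaultdict(int)
    for a in attempts:
        totals[a.student] += 1
        if not a.correct:
            wrong[a.student] += 1
    tiers = {1: [], 2: [], 3: []}
    for student in sorted(totals):
        rate = wrong[student] / totals[student]
        tier = 1 if rate >= high else 2 if rate >= medium else 3
        tiers[tier].append(student)
    return tiers

# Usage with a tiny made-up log.
d0 = date(2024, 3, 1)
log = [
    Attempt("S1", "linear equations", "q1", False, d0),
    Attempt("S1", "linear equations", "q2", True, d0),
    Attempt("S1", "fractions", "q3", False, d0),
    Attempt("S2", "fractions", "q3", True, d0),
    Attempt("S2", "fractions", "q4", True, d0),
]
book = error_book(log)
print(due_today(book, "S1", d0 + timedelta(days=2)))  # ['q1', 'q3']
print(alert_tiers(log))  # {1: ['S1'], 2: [], 3: ['S2']}
```

Deriving both views from one attempt log keeps the student-facing error book and the teacher dashboard consistent with each other; a deployed system would likely need richer mastery estimates per knowledge point than a raw error rate.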

Accordingly, for practitioners, this study emphasizes the necessity of developing an AI-powered ALS that is both user-friendly and pedagogically sound. The system’s ability to meet students’ psychological needs and provide a seamless user experience may largely determine its success. Educational technologists and curriculum designers should therefore consider these aspects in tandem to optimize learning outcomes in rural contexts (Lan et al. 2019).

Conclusion

This research not only explores the associations among the variables but also integrates distinct theoretical perspectives into a comprehensive model of how certain factors relate to learning engagement in rural contexts. This integrated approach helps fill a gap in the literature and has practical implications for the design of educational technology. Although the correlational design precludes definitive conclusions about causality, the identified associations provide valuable insights for developing more engaging educational technology. Grounded in the real-world application of an AI-powered ALS in rural schools, the study offers a comprehensive analysis of the factors associated with learning engagement. Considering the characteristics of student learning in a technological environment, it proposes targeted improvement strategies and recommendations from the perspective of learning engagement.