Abstract

This study investigates how job insecurity affects employees’ cybersecurity behavior by highlighting the mediating role of job stress and the moderating function of self-efficacy in AI use. Drawing on multi-wave data (N = 373) from working adults in South Korea, the research employs a three-time-point design to address longstanding questions about whether perceived threats to employment stability influence compliance with organizational security protocols. Contrary to initial expectations, job insecurity alone did not exert a direct impact on cybersecurity behavior; rather, its detrimental effect was fully conveyed through elevated job stress. These findings indicate that uncertainty surrounding future employment consumes employees’ emotional and cognitive resources, thereby undermining their ability to maintain consistent vigilance in detecting or preventing cyber threats. Additionally, the results reveal that self-efficacy in AI use significantly moderates the link between job insecurity and job stress, suggesting that employees who feel confident in their AI-related competencies experience lower stress under conditions of heightened job insecurity. By synthesizing insights from conservation of resources theory and the job demands-resources model, the study advances both theoretical and empirical understanding of how job-related insecurities and personal technological capabilities collectively shape cybersecurity engagement. The outcomes emphasize the importance of interventions that both alleviate employee stress and enhance skill sets in emerging digital tools, ultimately safeguarding organizational data in fast-changing technological contexts.

Introduction

In contemporary organizations, the widespread adoption of sophisticated digital systems and AI-based tools has rendered cybersecurity behavior a critical priority. Organizations rely on advanced information systems to improve operational efficiency and manage critical data, but these same systems present new vulnerabilities and heightened exposure to cyber threats (Alsharida et al., 2023; Califf et al., 2020). While digital innovations promise substantial benefits, they also create openings for malicious attacks and data breaches, making employees’ vigilance in adhering to security measures essential for safeguarding corporate assets (Ifinedo, 2012; Khan et al., 2023). As these technologies evolve at an unprecedented pace, the people who uphold comprehensive cybersecurity practices become essential defenders of organizational continuity (Alanazi et al., 2022; Alsharida et al., 2023; McCormac et al., 2017).

Despite considerable scholarly attention on cybersecurity, much of the early focus fell on technical solutions or top-down policies, with relatively less investigation into the human drivers of secure behavior (Kim and Kim, 2024a). However, emerging findings underline a variety of individual- and organization-level factors—such as security awareness, organizational climate, personal motivations, and leadership—that collectively shape an employee’s willingness to comply with security standards (Alanazi et al., 2022; Alsharida et al., 2023; Pfleeger and Caputo, 2012). Such research highlights that protective conduct cannot be explained solely by the existence of advanced firewalls or encryption; instead, cybersecurity adherence is contingent upon employees’ psychological states and the contextual elements that either reinforce or undermine vigilant practices.

Nevertheless, existing studies rarely examine how job insecurity affects cybersecurity behavior. While prior work has explored work overload (Kim and Kim, 2024a), the effect of fear of job loss on employees’ adherence to security standards remains understudied. As AI-driven transformations intensify and job roles become more uncertain (Nam, 2019; Brougham and Haar, 2020), it is critical to understand whether job insecurity discourages employees from allocating the mental resources necessary for cyber vigilance (Alanazi et al., 2022; Khan et al., 2023).

Another gap pertains to the insufficient examination of the psychological mechanisms and contextual moderators that might explain or mitigate the negative effects of job insecurity on cybersecurity behavior (Bazzoli and Probst, 2022; Shoss et al., 2023; Shoss, 2017). Studies have sporadically proposed indirect pathways, yet the role of employees’ internal affective states—such as job stress—in translating perceived insecurity into suboptimal security actions remains underexplored. Investigating why, and through which mediators, job insecurity diminishes cybersecurity engagement is essential for crafting effective interventions. Given that job stress can deplete the mental bandwidth required for consistent security practices, this variable likely serves as a key psychological bridge linking feelings of insecurity to security-related lapses (Halbesleben et al., 2014).

A third notable gap is the scarcity of empirical work examining how AI-specific constructs, such as employees’ confidence in navigating AI tools, can buffer the detrimental effects of stress on cybersecurity behavior. Although AI implementation is widely recognized as a driver of organizational transformation, research has not adequately explored self-efficacy in AI use as a moderator of the stress-behavior relationship (Bandura, 2012; Kim et al., 2024a; Kim and Lee, 2025). This oversight is significant because employees who believe they can skillfully utilize AI technologies may maintain their cybersecurity practices even under stressful conditions, as their technological competence provides the cognitive resources needed for security vigilance. Hence, clarifying whether—and how—AI-related self-efficacy helps employees maintain cybersecurity behaviors despite experiencing stress is vital for understanding variance in security outcomes.

Building on these three research gaps, the current study integrates key principles from the conservation of resources (COR) theory and the job demands-resources (JD-R) model to illuminate how job insecurity, job stress, and AI self-efficacy jointly shape cybersecurity behavior. COR theory emphasizes the depletion and protection of personal resources when employees confront threats like job loss (Hobfoll, 1989, 2011), while the JD-R model clarifies how job demands and resources interact to influence employee well-being and behavior (Bakker and Demerouti, 2017). By linking these two perspectives, this research provides novel insights into why employees under perceived job instability may neglect cybersecurity practices through stress-induced resource depletion and how self-efficacy in AI use can preserve security behaviors even when stress levels are high.

This study advances the literature on cybersecurity behavior by highlighting job insecurity as a previously understudied yet potentially important antecedent, thereby expanding the discourse beyond more commonly examined stressors such as work overload. It further illuminates the underlying psychological process linking job insecurity to cybersecurity behavior by introducing job stress as a key mediator, offering a robust explanation of why perceived threats to employment stability translate into diminished security compliance. In addition, the research broadens theoretical insights by identifying self-efficacy in AI use as a crucial moderator that can preserve cybersecurity behavior despite high stress levels, thereby uncovering how employees with greater technological competence can maintain security vigilance even under adverse psychological conditions. Lastly, by integrating principles from both the COR theory and the JD-R model, this paper provides a comprehensive framework that clarifies how contextual stressors, internal psychological states, and personal capabilities jointly influence vital organizational outcomes in the rapidly changing digital workplace.

Theory and hypotheses

Job insecurity and cybersecurity behavior

We posit that job insecurity diminishes employee cybersecurity behavior. In today’s fast-moving employment arena, which is shaped by ongoing technological progress, intensifying global competition, and fluctuating economic patterns, job insecurity, defined as the perceived possibility of job discontinuation and the accompanying worries (Bazzoli and Probst, 2022; Lin et al., 2021; Shoss et al., 2023), has emerged as a critical psychosocial challenge in organizational settings. Although job insecurity has received considerable attention in occupational health and organizational psychology, its consequences for workplace behaviors, and for cybersecurity behavior in particular, warrant closer examination (Bazzoli and Probst, 2022; Lin et al., 2021; Shoss et al., 2023).

Cybersecurity behavior encompasses both mandatory compliance with protocols and proactive protective engagement beyond minimum requirements. While organizations typically mandate basic security compliance, such as password protocols and data handling procedures, effective cybersecurity increasingly depends on employees’ proactive vigilance and voluntary efforts to identify, prevent, and respond to evolving threats (D’Arcy and Lowry, 2019; Posey et al., 2013). This distinction is crucial: mandatory compliance may be maintained through organizational controls and sanctions, whereas the proactive dimension of cybersecurity behavior (staying alert to new threats, promptly reporting suspicious activities, voluntarily updating security knowledge, and going beyond minimum requirements in protecting organizational assets) requires sustained cognitive engagement and intrinsic motivation (Bulgurcu et al., 2010; Herath and Rao, 2009). These proactive security behaviors demand considerable cognitive diligence and mark the difference between mere compliance and genuine security effectiveness (Alanazi et al., 2022; McCormac et al., 2017). When an employee’s mental energy is largely consumed by thoughts about job insecurity, fewer attentional and cognitive resources may be available for the actions that robust cybersecurity behavior requires (Kennison and Chan-Tin, 2020; Khan et al., 2023). This inward focus on job-related threats can thus leave individuals more prone to cybersecurity missteps and, in turn, leave the organization vulnerable.

In exploring how job insecurity diminishes cybersecurity behavior, we draw upon the COR theory (Hobfoll, 1989, 2011) and the JD-R model (Bakker and Demerouti, 2017) as our primary theoretical lenses.

First, COR theory posits that individuals strive to protect the resources they need to cope with demands (Hobfoll, 1989, 2011). Job insecurity threatens several such resources at once, including employment, income, and status, and because anticipated losses weigh more heavily than equivalent gains, its psychological impact is disproportionate (De Witte et al., 2016). When employees perceive job instability, they redirect cognitive resources toward self-preservation rather than discretionary behaviors such as proactive security engagement. While basic compliance may persist because of sanctions, voluntary security vigilance, such as monitoring vulnerabilities and reporting threats, requires sustained attention that stressed employees may be unable to maintain (Califf et al., 2020). This resource diversion exemplifies how job insecurity shifts attention from organizational security toward immediate job-preservation concerns.

Within the context of cybersecurity, while basic compliance with security protocols may be maintained due to organizational mandates and potential sanctions, the proactive aspects of cybersecurity—such as voluntary participation in security training, vigilant monitoring of system vulnerabilities, and prompt reporting of potential threats—require sustained attention and discretionary effort (Califf et al., 2020). However, in an atmosphere characterized by job insecurity, employees may shift their attention to meeting short-term, visibility-enhancing tasks—i.e., assignments they believe demonstrate their ongoing value to the organization. As a result, they may reduce their engagement in proactive security behaviors, maintaining only the minimum required compliance while neglecting the voluntary vigilance that characterizes effective cybersecurity (Nam, 2019). This departure from security-related vigilance exemplifies how job insecurity can divert crucial resources (such as vigilance, time, and emotional well-being) away from safeguarding activities and toward coping mechanisms intended to offset perceived job threats.

Second, while acknowledging that job insecurity is not a traditional job demand in the JD-R model, we position it as a hindrance stressor that functions similarly to job demands in depleting employees’ resources (Vander Elst et al., 2016). The JD-R model’s extended framework recognizes that job-related threats and hindrance stressors can tax employees’ capacity in ways comparable to traditional job demands (Crawford et al., 2010). According to JD-R, the balance between demands—stressful aspects of work—and resources—facilitators of goal attainment—shapes both well-being and work behavior (Crawford et al., 2010). Job insecurity, as a hindrance stressor, creates psychological demands that require continuous emotional and cognitive processing, thereby functioning as a resource-depleting force in the workplace (Vander Elst et al., 2016). If the organization or the individual cannot augment resources (for instance, through reassurance, managerial support, or skill-building), the employee’s overall resource pool becomes insufficient. This lack of resources and ongoing stress exposure can impede behaviors that require sustained commitment, careful monitoring, and additional mental bandwidth—exactly the traits necessary to maintain reliable cybersecurity practices (Califf et al., 2020).

By integrating these two theoretical perspectives, we propose that job insecurity—as both a threat to resource loss (COR) and a hindrance stressor (JD-R)—undermines employees’ capacity and motivation to engage in cybersecurity behaviors, particularly the proactive dimensions that require discretionary effort. The COR theory illuminates why job insecurity triggers resource conservation strategies that redirect attention away from voluntary security behaviors, while the JD-R model’s extended framework explains how job insecurity, though not a traditional demand, creates comparable resource depletion through its role as a persistent workplace stressor.

Empirical investigations reinforce these conceptual frameworks by illustrating behavioral patterns that emerge when job insecurity is present. For example, workers experiencing job insecurity may display lower motivation to engage in proactive cybersecurity behaviors beyond mandatory requirements, including taking part in extra training or promptly reporting security issues (Lam et al., 2015). Whenever employees sense instability in their roles, they may become less committed to the organization’s continued prosperity and thus be less willing to exceed their defined responsibilities to strengthen cybersecurity (Bazzoli and Probst, 2022; Shoss et al., 2023). Furthermore, job insecurity has been empirically linked to increased risk-taking (Bazzoli and Probst, 2022; Shoss et al., 2023; Probst and Brubaker, 2001), suggesting that concerned employees might engage in workarounds that technically comply with security mandates while compromising their spirit (Kennison and Chan-Tin, 2020). When people fear for their job tenure, their immediate priorities may favor speed or productivity, resulting in minimal compliance rather than proactive security engagement (Kennison and Chan-Tin, 2020; Khan et al., 2023). Job insecurity additionally lowers organizational loyalty and identification (Sverke et al., 2002), which can dampen voluntary participation in cybersecurity protocols and raise the possibility of internal security breaches (Hadlington, 2017; Li et al., 2019).

Alternative mechanisms may exist. Employees facing job insecurity might anticipate exit and reduce citizenship behaviors, including security engagement (Sverke et al., 2002). However, we argue stress-mediation represents the primary pathway because: (1) empirical evidence shows job insecurity affects behaviors primarily through strain rather than withdrawal (Cheng and Chan, 2008); (2) cybersecurity encompasses both mandatory and voluntary elements, making complete withdrawal unlikely; (3) resource depletion explains why even committed employees struggle with security behaviors under insecurity-induced stress.

Based on this foundation, we propose:

Hypothesis 1: Job insecurity will have a negative total effect on cybersecurity behavior.

Job insecurity and job stress

In the present study, we further argue that job insecurity elevates job stress. Job stress occurs when perceived demands exceed coping resources, eliciting negative emotional and physiological responses (Lazarus and Folkman, 1984; Beehr et al., 1976). Unlike acute crises, chronic stress accumulates from persistent challenges, such as excessive workloads, role conflicts, and uncertain environments, depleting resources and reducing performance (Cooper and Marshall, 1976; Ganster and Rosen, 2013). Contemporary technological shifts and restructuring heighten stress by undermining employees’ control and stability (Brougham and Haar, 2020). High stress correlates with burnout, reduced engagement, and diminished capacity for tasks requiring vigilance, such as cybersecurity compliance (Califf et al., 2020).

Drawing on our integrated theoretical framework of COR theory and the JD-R model, we elucidate the pathways by which job insecurity fosters higher job stress among workers.

First, COR theory explains how job insecurity can amplify job stress. Job insecurity represents a prototypical threat of resource loss, as it jeopardizes multiple valued resources, including income, professional identity, social support networks, and career advancement opportunities (De Witte et al., 2016; Hobfoll, 2001). COR theory emphasizes that resource loss is disproportionately more salient than resource gain: the threat of losing a resource has a stronger psychological impact than the prospect of acquiring one (Hobfoll, 2001). Accordingly, the anticipation of impending unemployment (i.e., job insecurity) can profoundly disrupt an individual’s psychological health, overshadowing any marginal benefit of potentially securing alternative employment. Moreover, COR theory posits that resource loss can precipitate further losses in a spiraling sequence, as a diminished resource pool hampers an individual’s ability to recover from the initial strain (Hobfoll, 2001). Applied to job insecurity, the specter of job loss can drain emotional and cognitive reserves, making the stress that originates from such insecurity harder to manage.

Second, the JD-R model, in its extended form, recognizes job insecurity as a hindrance stressor that creates psychological demands comparable to traditional job demands (Vander Elst et al., 2016). While not a job demand per se, job insecurity functions as a chronic stressor requiring continuous cognitive and emotional processing to manage the uncertainty and threat it represents. Moreover, the JD-R model highlights that elevated hindrance stressors, coupled with diminished job resources, culminate in increased strain and stress (Bakker and Demerouti, 2007). Under conditions of job insecurity, individuals might lack tangible or intangible job resources—including social support networks or substantial workplace autonomy—to mitigate the stress emerging from uncertain employment prospects. Such an unfavorably imbalanced relationship between job demands and job resources fosters a surge in job stress.

The integration of these two theoretical perspectives reveals complementary mechanisms linking job insecurity to job stress. COR theory emphasizes the stress-inducing nature of threatened resource loss, while the JD-R model’s extended framework explains how job insecurity, as a hindrance stressor, taxes employees’ coping capacity when resources are insufficient. Together, they provide a robust theoretical foundation for understanding why job insecurity reliably produces heightened job stress.

Based on these principles, the current research articulates the following hypothesis:

Hypothesis 2: Job insecurity will increase job stress.

Job stress and cybersecurity behavior

We propose that job stress will decrease the level of cybersecurity behavior that individuals exhibit. As contemporary workplaces become ever more digitally driven, cybersecurity has become an important element of organizational protocols. Nevertheless, the influence of job stress on cybersecurity behavior remains an intriguing yet underexplored facet of this context. Analyzing how these two variables relate can yield critical insights into how organizations can sustain a robust digital defense.

First, COR theory offers a useful lens for understanding how job stress drains critical personal resources, such as cognitive focus, emotional resilience, and energy, that employees need to sustain both mandatory compliance and proactive engagement with organizational security policies (Hobfoll, 1989, 2011). In the realm of cybersecurity, where continuous vigilance and thorough adherence to protocols are essential, employees under heightened stress may find it difficult to dedicate the attention and effort required to go beyond basic compliance. While stressed employees may maintain minimal adherence to avoid sanctions, they often lack the cognitive resources needed for the proactive vigilance that characterizes effective cybersecurity, such as carefully scrutinizing emails for phishing attempts, promptly installing security updates, or actively monitoring for unusual system behavior (Halbesleben et al., 2014). Consequently, higher stress levels can lead to a decline from comprehensive security engagement to mere compliance, or even to lapses in basic security practices.

Second, the JD-R model provides a complementary viewpoint by illustrating how elevated job stress, arising from factors like work overload, conflict, or fear of failure, strains an individual’s ability to maintain proactive and voluntary tasks (Bakker and Demerouti, 2017). Although basic cybersecurity activities—such as using mandated passwords—are not inherently optional from an organizational standpoint, the quality and thoroughness of security behavior vary significantly based on available cognitive resources. Stressed employees may technically comply with password requirements but choose easily guessable passwords, or they may delay security updates to avoid work interruptions. Because stress diverts mental and emotional resources to immediate concerns, the proactive and vigilant aspects of security behavior are more likely to be compromised (Califf et al., 2020).

When these two perspectives are integrated, they clarify how job stress diminishes employees’ capacity and motivation to engage in comprehensive cybersecurity practices. COR theory illuminates the depletion of cognitive, emotional, and motivational resources under stress, while JD-R demonstrates how stress creates an imbalance where immediate demands overshadow important security behaviors, particularly those requiring proactive effort beyond basic compliance. This dual theoretical lens provides a comprehensive understanding of how stress erodes the quality and consistency of cybersecurity behavior.

Hence, the following hypothesis is proposed.

Hypothesis 3: Job stress will diminish cybersecurity behavior.

The mediating role of job stress in the job insecurity–cybersecurity behavior link

A central objective of this article is to examine the mediating role of job stress in connecting job insecurity with cybersecurity behavior. Building on our integrated COR-JD-R framework, we propose that job stress serves as the critical psychological mechanism through which job insecurity influences cybersecurity behavior, particularly its proactive dimensions.

From the COR theory perspective, job insecurity triggers a resource loss spiral that manifests as job stress, which subsequently impairs employees’ capacity to engage in resource-intensive behaviors like cybersecurity compliance (Hobfoll, 2011). The threat of job loss initiates a conservation mode where employees experience heightened stress as they attempt to protect their remaining resources. This stress state, characterized by resource depletion, leaves insufficient cognitive and emotional resources for maintaining vigilant cybersecurity practices.

The JD-R model complements this view by explaining how job insecurity, as a hindrance stressor, creates job stress that disrupts the balance needed for discretionary positive behaviors (Crawford et al., 2010). When employees experience stress from job insecurity, they lack the psychological resources to engage fully in behaviors that, while organizationally important, do not directly address their immediate concerns about job security. The stress arising from job insecurity thus channels employees’ limited resources away from cybersecurity behaviors toward more pressing self-preservation activities.

This mediating mechanism reflects the process by which contextual threats (job insecurity) are internalized as psychological strain (job stress), which then manifests as behavioral outcomes (reduced cybersecurity behavior). Job insecurity does not automatically result in poor cybersecurity practices; rather, it is the stress response—the depletion of resources and the psychological strain—that serves as the proximal cause of diminished security compliance. Without the intervening stress response, employees might maintain their security behaviors despite job uncertainty. However, when job insecurity generates stress, it creates a psychological state incompatible with the sustained attention and effort required for cybersecurity vigilance.

Hence, the following hypothesis is proposed:

Hypothesis 4: Job stress will mediate the relationship between job insecurity and cybersecurity behavior.
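The mediation logic behind Hypotheses 1 to 4 can be illustrated with a minimal product-of-coefficients sketch. The data below are simulated purely for illustration and are not the study's data; the function names (`ols`, `mediation_effects`) and the effect sizes used to generate the data are assumptions, and this section does not state which estimation procedure the study itself used. In a linear model estimated by ordinary least squares, the total effect of job insecurity on cybersecurity behavior equals the direct effect plus the indirect effect transmitted through job stress.

```python
import numpy as np

def ols(y, *predictors):
    """Return OLS coefficients [intercept, b1, b2, ...] for y regressed on the predictors."""
    X = np.column_stack([np.ones(len(y)), *predictors])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef

def mediation_effects(x, m, y):
    """Decompose the effect of x on y into direct and indirect (via m) components."""
    a = ols(m, x)[1]                # path a: x -> m
    _, c_prime, b = ols(y, x, m)    # direct effect of x, and path b: m -> y
    c = ols(y, x)[1]                # total effect of x on y
    return {"a": a, "b": b, "direct": c_prime, "indirect": a * b, "total": c}

# Simulated illustration data (hypothetical effect sizes, NOT the study's data).
rng = np.random.default_rng(0)
n = 373
job_insecurity = rng.normal(size=n)
job_stress = 0.4 * job_insecurity + rng.normal(size=n)
cyber_behavior = -0.3 * job_stress + rng.normal(size=n)  # no direct path simulated

effects = mediation_effects(job_insecurity, job_stress, cyber_behavior)
for name, value in effects.items():
    print(f"{name:>8}: {value: .3f}")
```

In applied work, the indirect effect is commonly tested with a bootstrapped confidence interval rather than by inspecting the point estimate alone; that step is omitted here to keep the sketch short.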

The moderating influence of self-efficacy in AI use in the job stress–cybersecurity behavior link

The current paper suggests that employees’ self-efficacy in AI use will act as a protective moderator, buffering the negative impact of job stress on cybersecurity behavior. In Bandura’s (1977) conceptualization, self-efficacy refers to an individual’s confidence in their capability to perform the behaviors required to achieve particular performance objectives. Researchers have applied this concept widely, including to technology use. Building on Compeau and Higgins (1995), who defined computer self-efficacy as an individual’s perception of their competence in using computers to complete tasks, we extend the idea to self-efficacy in AI use. This construct denotes an individual’s belief in their ability to adopt and effectively use AI-related technologies in their professional responsibilities (Beane and Leonardi, 2022; Berente et al., 2021; Dwivedi et al., 2021; Huang and Rust, 2018; Jia et al., 2023; Weber et al., 2023). In other words, it captures the confidence people have in their ability to proficiently use, interpret, and manipulate AI platforms, tools, or processes, whether in a workplace context or other spheres (Dwivedi et al., 2021; Kim et al., 2024a; Kim et al., 2024b; Kim and Lee, 2025; Seeber et al., 2020).

Three considerations justify self-efficacy in AI use as our moderator. First, contemporary cybersecurity systems incorporate AI components, such as machine learning-based threat detection and automated responses, making AI competence directly relevant (Sarker et al., 2021). Second, unlike general self-efficacy, domain-specific AI self-efficacy reflects technological competencies that directly facilitate the execution of security tasks. Third, confidence with AI differentiates how employees adapt to automated environments (Dwivedi et al., 2021).

Those employees characterized by high self-efficacy in AI use tend to be more inclined to adopt AI tools, conceive of them as mechanisms to advance performance levels, and be self-assured in learning new AI-related competencies. Consequently, they possess a superior capacity for managing uncertainties and challenges linked with AI introductions in organizational milieus.

Integrating Bandura’s self-efficacy theory with our COR-JD-R framework, we propose that self-efficacy in AI use serves as a critical personal resource that buffers against the detrimental effects of job stress on cybersecurity behavior, particularly preserving the proactive aspects that are most vulnerable to stress-induced degradation.

First, from the COR theory perspective, self-efficacy in AI use represents a valuable personal resource that helps employees maintain performance even when other resources are depleted by stress (Hobfoll, 1989, 2001). When employees experience high job stress, their cognitive and emotional resources become strained, typically leading to a retreat from proactive behaviors toward minimal compliance or even non-compliance with security protocols. However, employees with high AI self-efficacy possess an additional resource reservoir—their technological competence—that can compensate for stress-induced resource depletion. This technological confidence enables them to maintain not just basic compliance but also the proactive security behaviors that distinguish effective from merely adequate cybersecurity. Their familiarity with digital systems means that security tasks require less cognitive effort, allowing them to maintain vigilant security practices even when general cognitive resources are taxed by stress.

Second, within the JD-R framework, self-efficacy in AI use functions as a personal resource that helps employees cope with the demands created by job stress (Bakker and Demerouti, 2007). Personal resources in the JD-R model are aspects of the self that are linked to resiliency and refer to individuals’ sense of their ability to control and impact their environment successfully (Xanthopoulou et al., 2007). When job stress threatens to reduce security behaviors to mere compliance, those with high AI self-efficacy can draw upon their technological skills to maintain comprehensive security engagement. Their confidence in using AI and digital tools translates into greater efficiency in performing cybersecurity behaviors, allowing them to sustain proactive security practices—such as actively monitoring for threats, promptly updating security protocols, and thoroughly vetting suspicious communications—even when stressed.

Furthermore, employees with high AI self-efficacy are likely to perceive cybersecurity tasks differently than those with low AI self-efficacy. Rather than viewing security protocols as additional burdens that compound their stress, they may see them as integral parts of their technological work environment that they are well-equipped to handle. This cognitive reframing, enabled by their confidence in technological domains, helps maintain their engagement with both mandatory and voluntary security practices even under stressful conditions. Their self-efficacy provides not just the skills but also the motivational foundation to persist with comprehensive security behaviors when stressed, as they view security challenges as opportunities to apply their technological expertise rather than as additional stressors (Bandura, 1997).

Importantly, the moderating effect of AI self-efficacy is particularly relevant for maintaining the proactive aspects of cybersecurity behavior under stress. While organizational mandates and potential sanctions may ensure basic compliance regardless of stress levels, it is the voluntary, proactive security behaviors—those that require sustained attention, initiative, and engagement—that are most vulnerable to stress-induced degradation. Employees with high AI self-efficacy are better positioned to maintain these crucial proactive behaviors because their technological competence reduces the effort required for security vigilance and provides them with the confidence to engage with evolving security challenges even when experiencing stress.

Based on these arguments, we put forth this hypothesis:

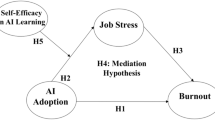

Hypothesis 5: Self-efficacy in AI use will buffer the negative effect of job stress on cybersecurity behavior, such that the negative relationship between job stress and cybersecurity behavior will be weaker for employees with high AI self-efficacy than for those with low AI self-efficacy (please see Fig. 1).

Theoretical model.

Methods

Participants and procedure

In this investigation, data were collected from a cohort of working adults, all 20 years of age or older, employed in a broad range of companies across South Korea. The study adopted a three-wave approach, wherein participants responded at three separate points in time. Potential participants were identified through a well-established online research service known for maintaining a sizeable panel of roughly 5.52 million registered respondents. As part of the platform’s enrollment procedure, individuals disclosed their current employment status; moreover, they were required to validate their identity by submitting authenticated mobile phone numbers or email addresses. The use of online surveys to obtain representative, heterogeneous samples has been affirmed in prior scholarly work (Landers and Behrend, 2015).

A central aim of this study design was to secure repeated measures from employed individuals in South Korean enterprises at three distinct intervals, thereby minimizing biases often tied to cross-sectional or single-wave data gathering. The online platform’s management tools enabled the research team to meticulously track participant engagement across each stage, ensuring consistency of respondent participation. To guarantee that participants had adequate time for thoughtful responses, the survey at each wave remained open for roughly 2–3 days, with 4- to 5-week intervals separating these data-collection windows. Furthermore, to enhance data credibility and prevent spurious or hasty submissions, the hosting research firm utilized various protective measures—such as geo-IP filtering—to identify suspiciously rapid survey completions and safeguard the reliability of the dataset.

Prospective respondents were approached directly by the research firm, which clearly communicated that participation was fully voluntary and that all collected data would remain confidential and would be used strictly for academic purposes. In alignment with prevailing ethical standards, participants provided informed consent before proceeding to the questionnaire items. As a token of gratitude, each fully participating individual received a financial honorarium in the range of US$8 to US$9 upon finishing the survey.

To reduce the likelihood of sampling bias, the research firm employed a stratified random sampling technique by segmenting the overall participant pool into demographic and professional strata (e.g., gender, age, position title, educational background, and industry) and then randomly selecting respondents from each stratum. Additionally, the survey provider consistently monitored participant engagement throughout the different data-collection waves, making certain that the same individuals contributed to each time point.

In the initial wave of data collection, the study captured responses from 729 employees, whereas the second wave involved 507 respondents, and the third wave garnered 376. Following these three collection phases, the researchers ran a thorough data-cleaning procedure to eliminate surveys with incomplete or inconsistent answers. Ultimately, 373 participants provided fully complete data at all three waves, yielding a final response rate of 51.17%. Our attrition rate from Wave 1 (n = 729) to Wave 3 (n = 376) was 48.4%, which falls within the expected range for three-wave organizational studies. To ensure that attrition did not bias our results, we conducted Little’s MCAR test, which was non-significant (χ² = 47.32, df = 42, p = 0.264), suggesting that data were missing completely at random. Additionally, logistic regression analysis revealed no significant differences between completers and non-completers on key demographic variables or Wave 1 measures of job insecurity (p > 0.05).
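The retention and attrition percentages reported above follow from simple arithmetic on the wave counts. A minimal Python sketch of that calculation is given here; the helper name `pct` and the variable names are illustrative and do not come from the study.

```python
# Wave counts reported in the text.
wave1_n = 729   # respondents at Wave 1
wave3_n = 376   # respondents at Wave 3
final_n = 373   # complete cases after data cleaning


def pct(part: int, whole: int) -> float:
    """Return part/whole as a percentage rounded to two decimals."""
    return round(100 * part / whole, 2)


# Share of Wave 1 respondents retained in the final sample.
retention_final = pct(final_n, wave1_n)
# Share of Wave 1 respondents lost by Wave 3.
attrition_w1_w3 = pct(wave1_n - wave3_n, wave1_n)

print(retention_final, attrition_w1_w3)
```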

The sample size was determined through an a priori power analysis using G*Power 3.1.9.7 (Faul et al., 2009). For our primary analysis involving moderated mediation with structural equation modeling (SEM), we conducted a power analysis for linear multiple regression with the following parameters: effect size f² = 0.15 (medium effect), α error probability = 0.05, power (1-β error probability) = 0.95, and number of predictors = 8 (including control variables, main effects, interaction term, and mediator). This analysis indicated a required sample size of 160 participants. The effect size of f² = 0.15 was chosen based on meta-analytic evidence of job insecurity’s effects on workplace outcomes (Cheng and Chan, 2008; Sverke et al., 2002), which typically show medium effect sizes. The high power criterion (0.95) was selected given the practical importance of understanding cybersecurity behaviors in organizational contexts.

However, considering the complexity of our three-wave design and anticipated attrition rates, we aimed for a larger initial sample. Based on longitudinal studies reporting average attrition rates of 30–50% across multiple waves (Goodman and Blum, 1996), we targeted an initial sample of approximately 400–500 participants to ensure adequate power for our final analyses. Additionally, we followed Barclay et al.’s (1995) recommendation to secure a minimum of 10 observations per variable. With 22 observed variables in our model, this guideline suggested a minimum of 220 participants.

Our final sample of 373 participants who completed all three waves exceeds both the G*Power calculation (n = 160) and the 10-per-variable guideline (n = 220), providing adequate statistical power for testing our hypothesized relationships, including the moderated mediation model. This sample size also aligns with recommendations for SEM, which suggest 200–400 cases for models of moderate complexity (Kline, 2016).
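The sample-size reasoning above can be checked with a short Python sketch. The 10-per-variable rule and the reported figures come from the text. The attrition-inflation helper is a common rule of thumb that we assume here for illustration; it is not necessarily the calculation the authors used to arrive at their 400–500 target.

```python
import math

required_n = 160     # G*Power result reported in the text
observed_vars = 22   # 5 + 5 + 6 + 6 scale items
final_n = 373        # complete cases across all three waves

# Barclay et al.'s (1995) guideline: at least 10 observations per variable.
rule_of_ten = 10 * observed_vars


def inflate_for_attrition(n_needed: int, attrition: float) -> int:
    """Initial sample needed so that n_needed cases remain after attrition.

    Hypothetical helper: assumes a single overall attrition proportion.
    """
    return math.ceil(n_needed / (1 - attrition))


# Stricter of the two minimums, inflated for 30% and 50% attrition.
minimum_n = max(required_n, rule_of_ten)
initial_targets = [inflate_for_attrition(minimum_n, a) for a in (0.30, 0.50)]

print(rule_of_ten, initial_targets, final_n >= minimum_n)
```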

The multi-stage stratified random sampling procedure used by the research firm was conducted as follows:

First, industries were grouped into eight major categories based on the Korean Standard Industrial Classification (KSIC): (1) Manufacturing (21.4% of final sample); (2) Services including retail, hospitality, and personal services (13.9%); (3) Construction (13.2%); (4) Health and welfare (16.9%); (5) Information services and telecommunications (7.5%); (6) Education (16.1%); (7) Financial/insurance (2.1%); and (8) Others including consulting, advertising, and professional services (8.8%). This categorization ensured representation across South Korea’s major economic sectors. The eight-category industry classification was chosen to balance granularity with practical considerations. While more detailed classifications exist, consolidating industries into these major groups allowed for sufficient sample sizes within each stratum while still capturing the diversity of work contexts where cybersecurity behaviors and AI adoption patterns might differ. For instance, manufacturing and construction were kept separate due to their distinct technological environments, while various service industries were grouped together as they share similar customer-facing orientations and digital infrastructure requirements.

Second, within each industry stratum, the research firm further stratified participants by: (a) Gender (targeting 50% male, 50% female to reflect workforce demographics); (b) Age groups (20–29, 30–39, 40–49, 50–59 years); (c) Position levels (staff, assistant manager, manager/deputy general manager, department/general manager or above); and (d) Educational background (high school or below, community college, bachelor’s degree, master’s degree or higher).

Third, the research firm calculated proportional sample sizes for each stratum based on Korean workforce statistics from the Korean Statistical Information Service (KOSIS). Random selection was then conducted within each stratum using the firm’s participant database numbering system, with every nth participant selected based on the required sample size for that stratum. This multi-stage approach ensured that our final sample (N = 373) maintained representativeness across multiple dimensions relevant to our research questions about job insecurity and technology use in the workplace.
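The third stage combines proportional allocation with selecting every nth panel member. A minimal Python sketch of that general technique follows. The stratum shares, panel IDs, and function names are hypothetical, and the research firm's actual procedure may differ in its details.

```python
import math
import random


def proportional_allocation(shares: dict[str, float], total_n: int) -> dict[str, int]:
    """Split total_n across strata in proportion to their population shares.

    Uses the largest-remainder method so the allocations sum to total_n.
    """
    raw = {name: share * total_n for name, share in shares.items()}
    alloc = {name: math.floor(value) for name, value in raw.items()}
    leftover = total_n - sum(alloc.values())
    by_remainder = sorted(raw, key=lambda name: raw[name] - alloc[name], reverse=True)
    for name in by_remainder[:leftover]:
        alloc[name] += 1
    return alloc


def systematic_sample(ids: list[int], n: int, rng: random.Random) -> list[int]:
    """Pick every k-th id after a random start, where k = len(ids) // n."""
    step = len(ids) // n
    start = rng.randrange(step)
    return ids[start::step][:n]


# Hypothetical population shares for three strata (not the study's values).
shares = {"manufacturing": 0.5, "services": 0.3, "education": 0.2}
alloc = proportional_allocation(shares, total_n=10)

# Hypothetical panel of 100 numbered members within one stratum.
sample = systematic_sample(list(range(100)), n=5, rng=random.Random(0))
print(alloc, sample)
```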

While our stratified sampling approach enhanced representativeness, we acknowledge certain limitations. The consolidation of industries into eight categories may mask some sector-specific variations in job insecurity or AI adoption. Additionally, our sampling frame was limited to participants registered with the online research platform, which may underrepresent certain demographic groups, such as blue-collar workers in manufacturing or older employees less comfortable with online surveys (please see Table 1).

Measures

At the first time point, participants reported their levels of job insecurity and self-efficacy in AI use. At the second time point, participants were asked about their degree of job stress. At the third time point, data on cybersecurity behavior were collected. All constructs were measured using multi-item scales anchored on a five-point Likert format.

Job insecurity (time point 1)

The current study evaluated job insecurity by utilizing five items from Kraimer et al.’s (2005) measure. The items in this study included: “If my current organization were facing economic problems, my job would be the first to go,” “I will be able to keep my present job as long as I wish (reverse coded),” “I am confident that I will be able to work for my organization as long as I wish (reverse coded),” “Regardless of economic conditions, I will have a job at my current organization (reverse coded),” and “My job is not a secure one.” The Cronbach’s α value was 0.897.
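Reverse coding and Cronbach's α can be illustrated with a small Python sketch. It assumes the five-point response format described above and uses the standard α formula; the toy responses are invented for demonstration and are not study data.

```python
from statistics import variance


def reverse_code(score: int, low: int = 1, high: int = 5) -> int:
    """Flip a Likert response so that all items point in the same direction."""
    return low + high - score


def cronbach_alpha(items: list[list[float]]) -> float:
    """Cronbach's alpha, where items[i][j] is respondent j's score on item i.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of total score)
    """
    k = len(items)
    totals = [sum(responses) for responses in zip(*items)]
    item_var_sum = sum(variance(item) for item in items)
    return k / (k - 1) * (1 - item_var_sum / variance(totals))


# Toy data: three items answered identically by five respondents, so the
# items are perfectly consistent and alpha reaches its maximum.
toy_items = [[1, 2, 3, 4, 5], [1, 2, 3, 4, 5], [1, 2, 3, 4, 5]]
alpha = cronbach_alpha(toy_items)
print(reverse_code(4), round(alpha, 3))
```

In practice, the reverse-coded items would be flipped before α is computed, so that higher scores consistently indicate greater job insecurity.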

Self-efficacy in AI use (time point 1)

To assess self-efficacy in AI use, we utilized the self-efficacy in AI use scale that adapted Bandura’s (2006) self-efficacy measure in the context of AI. This scale has been utilized in previous studies (Kim et al., 2024a, 2024b; Kim and Lee, 2025). The full items include: “I am confident in my ability to utilize artificial intelligence technology appropriately in my work when it is introduced,” “I am able to utilize artificial intelligence technology to perform my job well, even when the situation is challenging,” “I maintain the requisite competence to effectively integrate artificial intelligence technologies into my work processes,” “I am adept at overcoming various occupational challenges by leveraging artificial intelligence technologies throughout the course of my work,” and “Through the utilization of artificial intelligence technologies, I am able to successfully achieve the objectives set forth in my professional endeavors.” The Cronbach’s α value was 0.936.

Job stress (time point 2)

To measure job stress, this study used six items adapted from job stress scales in prior research (DeJoy et al., 2010; Kim and Kim, 2024b; Motowidlo et al., 1986). Sample items included: “At work, I felt stressed during the last 30 days,” “At work, I felt anxious during the last 30 days,” and “At work, I felt frustrated during the last 30 days.” The value of Cronbach’s alpha in this research was 0.899.

Cybersecurity behavior (time point 3)

This study measured cybersecurity behavior with six items derived from previously validated research (Bulgurcu et al., 2010; Kim and Kim, 2024a; Padayachee, 2012). The complete list of items included: “I maintain appropriate privacy/security settings, including on computers I use at work,” “I keep the antivirus software on my work computer updated,” “I observe and respond appropriately to unusual behavior/reactions on my work computer (e.g., computer slowdown or freeze, pop-up windows, and others),” “On computers I use at work, I don’t open attachments or links to unfamiliar URLs in emails from people I do not know,” “I don’t send sensitive information (account numbers, passwords, social security numbers, etc.) via email or social media from my work computer,” and “I back up important files on my work computer.” The Cronbach’s alpha value was 0.863.

Control variables

In line with the recommendations from prior studies (Bulgurcu et al., 2010; Kim and Kim, 2024a; Ifinedo, 2012), we included controls for various demographic and professional attributes—namely tenure, gender, occupational position, and educational level—to rule out their potential confounding effects on cybersecurity behavior. These control variables were collected during the first wave.

Statistical analysis

Following the data collection process, a Pearson correlation analysis was undertaken using SPSS version 26 to examine the interrelationships among the key constructs at the composite level. For hypothesis testing, we employed full SEM with latent variables using AMOS version 26 with maximum likelihood estimation. Specifically, we used item-level data where each latent construct was measured by its respective items: job insecurity (five items), self-efficacy in AI use (five items), job stress (six items), and cybersecurity behavior (six items). This approach allows for the simultaneous estimation of measurement error and structural relationships, providing more accurate parameter estimates than path analysis with aggregated variables (Anderson and Gerbing, 1988).

The subsequent analysis followed Anderson and Gerbing’s (1988) two-step approach for SEM. First, we conducted confirmatory factor analysis (CFA) to validate the measurement model with all 22 items loading on their respective four latent constructs. After establishing adequate measurement properties (including convergent and discriminant validity), we tested the structural model, examining the hypothesized relationships among the latent variables. This two-step SEM approach is superior to path analysis with composite scores as it accounts for measurement error and provides more accurate estimates of structural relationships (Hair et al., 2014).

Following contemporary recommendations for mediation analysis (Hayes, 2013), we tested both the total effect model (job insecurity → cybersecurity behavior) and the mediated model (job insecurity → job stress → cybersecurity behavior). This approach allows us to distinguish between the total effect of job insecurity on cybersecurity behavior and its direct effect when job stress is included as a mediator.

Model fit was judged using several indices, including the Comparative Fit Index (CFI), the Tucker–Lewis Index (TLI), and the Root Mean Square Error of Approximation (RMSEA). Based on widely accepted benchmarks, values exceeding 0.90 for both CFI and TLI, together with an RMSEA lower than 0.06, indicate satisfactory model fit. Additionally, to test the mediation hypothesis, we utilized bootstrapping with a 95% bias-corrected confidence interval (CI). Following Shrout and Bolger’s (2002) methodological guidance, a CI that does not include zero indicates a significant indirect effect at the 0.05 level.

Given the non-significant bivariate correlation between job insecurity and cybersecurity behavior, we followed Shrout and Bolger’s (2002) recommendations for testing mediation in the absence of strong direct effects. This approach is particularly appropriate when theoretical rationale suggests that the relationship between variables operates through intervening mechanisms rather than directly. We used bootstrapping with 10,000 samples to test the significance of indirect effects, as this method does not require significant zero-order correlations and provides robust estimates even with suppression effects present (Hayes, 2013).

For testing moderation effects (Hypothesis 5), we employed the product indicator approach using mean-centered variables to create the interaction term (Aiken and West, 1991). Simple slope analysis was conducted to probe significant interactions by examining the relationship between the predictor and outcome at high (+1 SD) and low (−1 SD) levels of the moderator. The significance of slope differences was tested using the Johnson-Neyman technique to identify regions of significance (Hayes, 2013).

Results

Descriptive statistics

The correlation analysis examined the interrelations among job insecurity, self-efficacy in artificial intelligence use, job stress, and cybersecurity behavior; not every pair was significantly related. The outcomes are displayed in Table 2.

The bivariate correlation between job insecurity and cybersecurity behavior was non-significant (r = −0.08, p > 0.05), which initially raised questions about the viability of further analysis. However, contemporary mediation analysis recognizes that significant indirect effects can exist even without significant zero-order correlations, particularly when suppression effects or opposing pathways are present (MacKinnon et al., 2000; Shrout and Bolger, 2002). We therefore proceeded with our planned analyses while remaining cognizant of this initial non-significant relationship.

Measurement model

We evaluated the discriminant validity of the four key research constructs (job insecurity, self-efficacy in artificial intelligence use, job stress, and cybersecurity behavior) by executing a CFA on all scale items to assess the measurement model’s fit.

The measurement model included four latent variables with their respective indicators. Job insecurity was measured by five items (e.g., “If my current organization were facing economic problems, my job would be the first to go”), self-efficacy in AI use by five items (e.g., “I am confident in my ability to utilize artificial intelligence technology appropriately in my work”), job stress by six items (e.g., “At work, I felt stressed during the last 30 days”), and cybersecurity behavior by six items (e.g., “I maintain appropriate privacy/security settings on computers I use at work”). All items were included in the SEM analysis, with each item loading on its respective latent construct. The measurement model showed excellent fit with all standardized factor loadings exceeding 0.70 and significant at p < 0.001.

We performed a sequence of chi-square difference tests, contrasting the four-factor model (incorporating job insecurity, self-efficacy in artificial intelligence use, job stress, and cybersecurity behavior) with multiple alternative models. These included a three-factor model (χ² (df = 197) = 965.663, CFI = 0.848, TLI = 0.822, and RMSEA = 0.102), a two-factor model (χ² (df = 199) = 1700.849, CFI = 0.703, TLI = 0.655, and RMSEA = 0.142), and a one-factor model (χ² (df = 200) = 2371.848, CFI = 0.571, TLI = 0.504, and RMSEA = 0.171). The fit indices obtained demonstrated that the four-factor model delivered the most favorable fit indicators, registering χ² (df = 194) = 338.196, CFI = 0.971, TLI = 0.966, and RMSEA = 0.045. Successive chi-square difference tests confirmed that these four constructs presented adequate discriminant validity.
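The reported RMSEA values can be recomputed from each model's χ², degrees of freedom, and the sample size. The Python sketch below uses the common point-estimate formula with N − 1 in the denominator. That variant is an assumption on our part, since software packages differ on N versus N − 1, although the choice does not change the rounded values here.

```python
import math


def rmsea(chi2: float, df: int, n: int) -> float:
    """RMSEA point estimate: sqrt(max(chi2 - df, 0) / (df * (n - 1)))."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


N = 373  # final sample size reported in the text

# (chi-square, df) pairs for the competing measurement models, as reported.
models = {
    "four-factor": (338.196, 194),
    "three-factor": (965.663, 197),
    "two-factor": (1700.849, 199),
    "one-factor": (2371.848, 200),
}

rmsea_values = {name: round(rmsea(chi2, df, N), 3) for name, (chi2, df) in models.items()}
for name, value in rmsea_values.items():
    print(f"{name}: RMSEA = {value}")
```

Under these assumptions, the recomputed values agree with the RMSEA figures reported for all four models.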

Before testing our structural model, we examined whether to proceed despite the non-significant correlation between job insecurity and cybersecurity behavior. Following recent methodological guidance (Rucker et al., 2011), we proceeded for three reasons: (1) a strong theoretical rationale suggesting mediated rather than direct effects, (2) the possibility of suppression effects that mask relationships in bivariate correlations, and (3) the contemporary understanding that mediation can exist without significant total effects. Our subsequent results validated this decision by revealing a significant indirect effect through job stress.

Structural model

We specified a moderated mediation model to test our hypotheses. This model integrated both mediation and moderation. On the mediation side, the effect of job insecurity on cybersecurity behavior was transmitted via job stress. On the moderation side, self-efficacy in artificial intelligence use served as a contextual factor attenuating the negative impact of job stress on cybersecurity behavior.

For the moderation analysis, the interaction term was created by multiplying job stress and self-efficacy in artificial intelligence use. Before this multiplication, we mean-centered each variable to reduce multi-collinearity (Brace et al., 2003). Mean-centering lowers the nonessential correlation between the product term and its component variables, which makes the lower-order coefficients easier to interpret at the mean of the other variable.
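The effect of mean-centering on the product term can be shown with a toy example. The Python sketch below compares the correlation between a predictor and its interaction term before and after centering. The scores are invented for illustration and the function names are our own.

```python
from statistics import fmean


def center(values: list[float]) -> list[float]:
    """Subtract the mean from every value."""
    mu = fmean(values)
    return [v - mu for v in values]


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation computed from centered cross-products."""
    xc, yc = center(x), center(y)
    num = sum(a * b for a, b in zip(xc, yc))
    den = (sum(a * a for a in xc) * sum(b * b for b in yc)) ** 0.5
    return num / den


# Invented scores for five respondents (not study data).
stress = [1, 2, 3, 4, 5]
efficacy = [2, 1, 3, 1, 2]

# Product term built from raw scores versus mean-centered scores.
raw_product = [s * e for s, e in zip(stress, efficacy)]
centered_product = [s * e for s, e in zip(center(stress), center(efficacy))]

r_raw = pearson(stress, raw_product)
r_centered = pearson(center(stress), centered_product)
print(round(r_raw, 3), round(r_centered, 3))
```

In this toy case the predictor correlates substantially with the raw product term but is essentially uncorrelated with the centered one. Real data will rarely show such a clean contrast.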

In order to quantify any likelihood of multi-collinearity bias, the present investigation measured variance inflation factors (VIFs) and tolerances, in line with Brace et al. (2003). VIF values are frequently utilized in regression-based approaches (including SEM) (Hair et al., 2014; Kline, 2016) to detect multi-collinearity, which involves substantial correlations among predictor variables and can produce unstable or biased regression coefficient estimates (Alin, 2010). If the VIF value for a predictor variable exceeds 5 or 10, researchers often interpret that as serious multi-collinearity (Hair et al., 2014; Kline, 2016). In our model, both job stress and self-efficacy in artificial intelligence use had VIFs of 1.003, lying well below these usual thresholds. Accordingly, this finding suggests that multi-collinearity posed no major difficulties in our structural modeling, indicating stable, reliable estimates for these predictors as they relate to cybersecurity behavior.

Reporting VIF values for the structural model supports the reliability of the observed relationships among the study constructs and reduces the possibility of alternative accounts. In SEM in particular, multi-collinearity can distort parameter estimates and hamper the detection of meaningful effects (Kline, 2016). Openly reporting these values also enhances the reproducibility and transparency of the analysis, allowing other scholars to judge the soundness of our findings and to draw comparisons with other studies. Likewise, the tolerance statistics for job stress and self-efficacy in artificial intelligence use were 0.997. Because the VIFs remained far below 10 and the tolerances far above 0.2, both predictors were largely unaffected by multi-collinearity.
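Tolerance is the reciprocal of VIF, so the two reported statistics can be cross-checked directly. The Python sketch below also derives the correlation that a VIF of 1.003 would imply if the VIF regression contained only the two predictors. That two-predictor setup is an assumption made for illustration, not something the text states.

```python
def tolerance_from_vif(vif: float) -> float:
    """Tolerance is defined as 1 / VIF."""
    return 1.0 / vif


def vif_two_predictors(r: float) -> float:
    """With exactly two predictors, VIF = 1 / (1 - r**2)."""
    return 1.0 / (1.0 - r ** 2)


reported_vif = 1.003
tolerance = round(tolerance_from_vif(reported_vif), 3)

# Implied absolute correlation between the two predictors,
# valid only under the two-predictor assumption stated above.
implied_r = (1.0 - 1.0 / reported_vif) ** 0.5

print(tolerance, round(implied_r, 3))
```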

Results of the mediation analysis

To test our hypotheses, we employed a two-step approach. First, we examined the total effect of job insecurity on cybersecurity behavior without the mediator. This total effect fell just short of conventional significance (β = −0.105, p = 0.051) and therefore offered, at most, marginal support for Hypothesis 1.

Second, we tested the full mediation model by including job stress. In this model, the direct effect of job insecurity on cybersecurity behavior became negligible and non-significant (β = −0.027, p > 0.05), while the indirect effect through job stress was significant (indirect effect = −0.078, 95% CI = [−0.130, −0.026]).

To pinpoint the most appropriate mediation framework, we ran a chi-square difference test comparing a full mediation model to a partial mediation model. The full mediation design mirrored the partial mediation design, with the exception that the direct path between job insecurity and cybersecurity behavior was absent. Both the full and partial mediation models showed adequate fit: for the full mediation model, χ² = 342.005 (df = 209), CFI = 0.961, TLI = 0.953, and RMSEA = 0.041; and for the partial mediation model, χ² = 339.414 (df = 208), CFI = 0.962, TLI = 0.954, and RMSEA = 0.041.

Nonetheless, the chi-square difference test supported the full mediation model because the chi-square difference (Δχ² [1] = 2.591, p > 0.05) between the two designs was non-significant. This implies that job insecurity affects cybersecurity behavior indirectly, as opposed to exerting a direct influence, with job stress serving as the intervening variable. The study further included control variables (e.g., tenure, gender, educational level, and occupational position) with respect to cybersecurity behavior. Results indicated that tenure and educational level were statistically relevant, whereas neither gender nor position produced significant effects.
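The chi-square difference test for these nested models can be reproduced from the reported fit statistics. The Python sketch below uses the closed-form upper-tail probability for a chi-square variate with one degree of freedom, so it applies only to comparisons that differ by a single path. The function name is our own.

```python
import math


def chi2_sf_df1(x: float) -> float:
    """Upper-tail p-value for chi-square with 1 df: erfc(sqrt(x / 2))."""
    return math.erfc(math.sqrt(x / 2.0))


# (chi-square, df) for the two structural models, as reported in the text.
full_mediation = (342.005, 209)
partial_mediation = (339.414, 208)

delta_chi2 = round(full_mediation[0] - partial_mediation[0], 3)
delta_df = full_mediation[1] - partial_mediation[1]
p_value = chi2_sf_df1(delta_chi2)

print(delta_chi2, delta_df, round(p_value, 3))
```

Because the p-value exceeds 0.05, dropping the direct path does not significantly worsen fit, which is the basis for preferring the more parsimonious full mediation model.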

Once controls were integrated, job insecurity was not significantly related to cybersecurity behavior (β = −0.027, p > 0.05). This non-significant direct path in the partial mediation model reinforced the choice of the full mediation model as the preferred specification. Consequently, Hypothesis 1 was rejected: the mechanism linking job insecurity to cybersecurity behavior operates through an indirect channel, namely job stress, rather than through a straightforward, direct link.

The findings substantiated Hypothesis 2, demonstrating that job insecurity significantly intensifies job stress (β = 0.293, p < 0.001), as well as Hypothesis 3, showing that job stress exerts a meaningful, diminishing impact on cybersecurity behavior (β = −0.265, p < 0.001).

While our cybersecurity behavior measure encompasses both mandatory compliance and proactive engagement elements, post-hoc examination of individual items suggests differential impacts. Items reflecting proactive behaviors (e.g., “observing and responding to unusual behavior,” “backing up important files”) showed stronger negative relationships with job stress (average r = −0.26) compared to mandatory compliance items (e.g., “maintaining privacy settings,” “not opening unfamiliar attachments”; average r = −0.18). Although these differences were not formally tested due to measurement limitations, they suggest that stress particularly undermines the voluntary, resource-intensive aspects of cybersecurity behavior. These outcomes are displayed in Table 3 and Fig. 2.

***p < 0.001; all values are standardized. The dashed line indicates a non-significant path.

Bootstrapping

To assess Hypothesis 4, which predicted that job stress mediates the relationship between job insecurity and cybersecurity behavior, we used bootstrapping with a large number of resamples (n = 10,000), conforming to Shrout and Bolger’s (2002) recommendations. For the indirect effect through job stress to be deemed statistically significant, the 95% bias-corrected CI for the mean indirect effect must not include zero (Shrout and Bolger, 2002).

The 95% bias-corrected CI for the mean indirect effect did not include zero (95% CI = [−0.130, −0.026]), indicating that the mediation effect of job stress was significant. This supports Hypothesis 4. The complete breakdown of direct, indirect, and total effects from job insecurity to cybersecurity behavior is laid out in Table 4.
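The logic of a bias-corrected bootstrap CI for an indirect effect can be sketched in Python. This is a simplified illustration rather than the authors' AMOS procedure: it uses ordinary least squares on observed variables instead of latent-variable SEM, simulated data with arbitrary effect sizes, and 1,000 resamples instead of 10,000 to keep the run short. All names are hypothetical.

```python
import random
from statistics import NormalDist, fmean


def slope(x, y):
    """OLS slope of y on x."""
    mx, my = fmean(x), fmean(y)
    sxx = sum((a - mx) ** 2 for a in x)
    return sum((a - mx) * (b - my) for a, b in zip(x, y)) / sxx


def indirect_effect(x, m, y):
    """a*b: a is the slope of m on x, b the partial slope of y on m given x."""
    a = slope(x, m)
    c = slope(x, y)
    mx, mm, my = fmean(x), fmean(m), fmean(y)
    # Residualize m and y on x; regressing the residuals gives the partial slope.
    m_res = [mi - mm - a * (xi - mx) for xi, mi in zip(x, m)]
    y_res = [yi - my - c * (xi - mx) for xi, yi in zip(x, y)]
    return a * slope(m_res, y_res)


def bc_bootstrap_ci(x, m, y, n_boot=1000, level=0.95, seed=1):
    """Bias-corrected percentile bootstrap CI for the indirect effect."""
    rng = random.Random(seed)
    n = len(x)
    est = indirect_effect(x, m, y)
    boots = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # resample cases with replacement
        boots.append(indirect_effect([x[i] for i in idx],
                                     [m[i] for i in idx],
                                     [y[i] for i in idx]))
    boots.sort()
    nd = NormalDist()
    prop = sum(b < est for b in boots) / n_boot
    prop = min(max(prop, 1 / n_boot), 1 - 1 / n_boot)  # keep inv_cdf finite
    z0 = nd.inv_cdf(prop)                               # bias-correction constant
    z = nd.inv_cdf(1 - (1 - level) / 2)
    lo_q, hi_q = nd.cdf(2 * z0 - z), nd.cdf(2 * z0 + z)
    lo = boots[min(int(lo_q * n_boot), n_boot - 1)]
    hi = boots[min(int(hi_q * n_boot), n_boot - 1)]
    return est, (lo, hi)


# Simulated data with an arbitrary true indirect effect of 0.5 * -0.5 = -0.25.
sim = random.Random(42)
x = [sim.gauss(0, 1) for _ in range(300)]
m = [0.5 * xi + sim.gauss(0, 1) for xi in x]
y = [-0.5 * mi + sim.gauss(0, 1) for mi in m]

est, (lo, hi) = bc_bootstrap_ci(x, m, y)
print(f"indirect = {est:.3f}, 95% BC CI = [{lo:.3f}, {hi:.3f}]")
```

As in the decision rule described above, the indirect effect is treated as significant when the resulting interval excludes zero.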

In summary, our analyses revealed: (1) a marginally significant total effect of job insecurity on cybersecurity behavior (β = −0.105, p = 0.051), (2) a non-significant direct effect when job stress was included (β = −0.027, p > 0.05), and (3) a significant indirect effect through job stress (β = −0.078, p < 0.01). This pattern supports full mediation, indicating that job insecurity influences cybersecurity behavior entirely through its impact on job stress.
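The three effects summarized above are linked by the standard decomposition for a single-mediator path model: the indirect effect is the product of the two constituent paths, and the total effect is the sum of the direct and indirect effects. A short Python check using the reported standardized coefficients:

```python
# Standardized coefficients reported in the text.
a_path = 0.293    # job insecurity -> job stress
b_path = -0.265   # job stress -> cybersecurity behavior
direct = -0.027   # job insecurity -> cybersecurity behavior, mediator included

# Product-of-coefficients indirect effect and the implied total effect.
indirect = round(a_path * b_path, 3)
total = round(direct + indirect, 3)

print(indirect, total)
```

Both values match the reported indirect effect (−0.078) and total effect (−0.105), so the reported estimates are internally consistent.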

Results of the moderation analysis

To test Hypothesis 5, which predicted that self-efficacy in AI use would moderate the relationship between job stress and cybersecurity behavior, we examined the interaction term between job stress and AI self-efficacy. Our analysis revealed that this interaction term was significant (β = 0.258, p < 0.001), supporting Hypothesis 5. Specifically, the negative relationship between job stress and cybersecurity behavior was weaker for employees with high AI self-efficacy than for those with low AI self-efficacy. This pattern indicates that AI self-efficacy serves as a protective buffer, helping employees maintain cybersecurity behaviors even when experiencing elevated stress levels.

Simple slope analysis

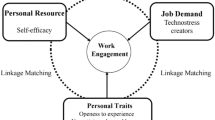

To further examine the nature of the moderation effect, we conducted simple slope analysis following Aiken and West (1991). We tested the relationship between job stress and cybersecurity behavior at high (+1 SD) and low (−1 SD) levels of AI self-efficacy. The results revealed that the negative relationship between job stress and cybersecurity behavior was significant for employees with low AI self-efficacy (β = −0.384, p < 0.001), but this relationship was attenuated and did not reach conventional significance for employees with high AI self-efficacy (β = −0.101, p = 0.058). The difference between these slopes was statistically significant (t = 3.67, p < 0.001), confirming the buffering effect of AI self-efficacy. As illustrated in Fig. 3, employees with high AI self-efficacy maintained relatively stable cybersecurity behavior levels even under high stress conditions, whereas those with low AI self-efficacy showed substantial deterioration in cybersecurity behavior as stress increased.
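The simple slope computation in the Aiken and West (1991) approach can be sketched as follows. With a mean-centered moderator, the slope of the outcome on the predictor at a given moderator value is the predictor's coefficient plus the interaction coefficient times that value. The coefficients below are hypothetical and are not intended to reproduce the study's estimates.

```python
def simple_slope(b_pred: float, b_inter: float, moderator_value: float) -> float:
    """Slope of the outcome on the predictor at a centered moderator value."""
    return b_pred + b_inter * moderator_value


# Hypothetical unstandardized coefficients, chosen only for illustration.
b_stress = -0.25       # effect of job stress at the moderator mean
b_interaction = 0.15   # job stress x AI self-efficacy product term
sd_moderator = 1.0     # standard deviation of the centered moderator

low_slope = simple_slope(b_stress, b_interaction, -sd_moderator)
high_slope = simple_slope(b_stress, b_interaction, +sd_moderator)

print(round(low_slope, 2), round(high_slope, 2))
```

A positive interaction coefficient makes the negative slope shallower at higher moderator values, which is the buffering pattern described above.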

The graph shows the relationship between job stress and cybersecurity behavior at high (+1 SD) and low (−1 SD) levels of AI self-efficacy. Simple slopes analysis revealed a significant negative relationship for low AI self-efficacy (β = −0.384, p < 0.001) and a non-significant relationship for high AI self-efficacy (β = −0.101, p = 0.058).

Figure 3 presents the interaction pattern, clearly showing how AI self-efficacy moderates the stress–cybersecurity behavior relationship. The diverging slopes indicate that the detrimental effect of job stress on cybersecurity behavior is significantly weaker for employees with high AI self-efficacy.

Discussion

This study clarifies how job insecurity affects cybersecurity behavior through job stress and identifies AI self-efficacy’s buffering role. By examining how job insecurity, job stress, AI self-efficacy, and cybersecurity behavior interrelate, this research provides nuanced perspectives on the intersection of psychological strain, advanced technological environments, and employees’ protective conduct. Below, we discuss our findings in the context of the hypotheses and previous scholarly work.

Hypothesis 1: The non-significant direct influence of job insecurity on cybersecurity behavior

Our results for Hypothesis 1 showed a marginally significant total effect of job insecurity on cybersecurity behavior (β = −0.105, p = 0.051), which was weaker than anticipated. This aligns with recent meta-analytic evidence suggesting that job insecurity’s behavioral effects are often modest and primarily operate through intervening mechanisms (Sverke et al., 2019). When we included job stress as a mediator in our model, the direct effect of job insecurity on cybersecurity behavior became negligible and non-significant (β = −0.027, p > 0.05), while the indirect effect through job stress was significant (β = −0.078, p < 0.01). This pattern of results—a marginally significant total effect that becomes non-significant when the mediator is included, coupled with a significant indirect effect—indicates full mediation (Baron and Kenny, 1986; Hayes, 2013). This suggests that job insecurity does not directly influence cybersecurity behavior but rather operates entirely through its effect on job stress.

One plausible explanation grounded in COR theory (Hobfoll, 1989) is that while job insecurity can indeed threaten one’s psychological resources, employees may not always channel their anxiety into either neglect or over-performance regarding security measures. The non-significant direct effect may also reflect the complex nature of cybersecurity behavior, which encompasses both mandatory compliance and voluntary proactive engagement. While job insecurity might reduce proactive security efforts, employees may maintain basic compliance to avoid sanctions, resulting in a mixed effect that appears non-significant in aggregate. This interpretation is supported by our full mediation findings, which show that when job insecurity creates stress, it then significantly reduces cybersecurity behavior. The initial non-significant correlation thus masks a more nuanced indirect relationship that becomes apparent only when examining the mediating role of job stress.

The non-significant bivariate correlation between job insecurity and cybersecurity behavior (r = −0.08), combined with a marginally significant total effect in SEM (β = −0.105, p = 0.051) and a significant indirect effect through job stress, illustrates an important methodological point: a weak overall association does not rule out a meaningful indirect effect. A related pattern, often called inconsistent mediation or suppression, occurs when the direct and indirect effects have different signs or when the mediator accounts for variance that suppresses the direct relationship (MacKinnon et al., 2000). In our data, the direct (β = −0.027) and indirect (β = −0.078) estimates are both negative, so opposing signs are not the source of the weak overall association. Rather than indicating the absence of a relationship, this pattern suggests that job insecurity’s influence on cybersecurity behavior operates entirely through psychological mechanisms, specifically job stress, rather than through a direct pathway.

Our finding of non-significant direct effects but significant indirect effects through job stress also helps rule out alternative mechanisms. If employees were primarily withdrawing effort due to anticipated exit, we would expect to see significant direct effects of job insecurity on cybersecurity behavior, potentially mediated by reduced organizational identification or motivation. Instead, our full mediation through job stress suggests that resource depletion, rather than motivational withdrawal, is the primary mechanism. This aligns with meta-analytic evidence showing that job insecurity’s behavioral effects operate predominantly through stress and strain rather than through reduced commitment or identification (Cheng and Chan, 2008).

Hypotheses 2 and 3: Job insecurity increases job stress, and job stress reduces cybersecurity behavior

Consistent with COR theory and our integrated theoretical framework, our findings showed that job insecurity significantly heightened employees’ job stress (H2), which, in turn, negatively impacted cybersecurity behavior (H3). These results validate the idea that perceived threats to continued employment are a salient driver of resource depletion, leading to strain that curtails the cognitive energy and motivation required for sustained cyber-safe routines (Halbesleben et al., 2014). Our findings suggest that job stress particularly undermines the proactive aspects of cybersecurity behavior, that is, the voluntary efforts that go beyond minimum compliance to actively protect organizational assets. Indeed, tasks such as actively monitoring for new threats, promptly reporting suspicious activities, and staying updated on evolving security protocols demand constant vigilance. Under stress, employees tend to divert focus toward more pressing or immediate job-retention issues, which reduces their engagement from comprehensive security participation to minimal compliance or even results in compliance failures. This outcome aligns with earlier studies suggesting that psychological strain can erode proactive or compliance-oriented behaviors in technologically demanding contexts (Califf et al., 2020).

Hypothesis 4: The mediating role of job stress in the job insecurity–cybersecurity behavior relationship

Our data supported a full mediation model in which the effect of job insecurity on cybersecurity behavior was carried entirely by job stress. This means that job insecurity did not by itself yield a significant direct path to cyber-protective conduct; rather, its adverse effect emerged only when employees experienced elevated levels of stress. The result extends previous research that generally recognized the negative impact of job insecurity on well-being and performance (Nam, 2019), but did not always account for the specific mechanism linking these constructs to cybersecurity practices. Grounded in COR theory, the finding implies that when employees worry about potential job loss, the depletion of resources (e.g., emotional stability, focused attention) manifests as heightened job stress, which ultimately disrupts their ability to maintain comprehensive cybersecurity engagement, particularly the proactive behaviors that require discretionary effort. Notably, because no direct effect remained after including job stress in the structural model, the study emphasizes that perceived job instability alone does not invariably translate into riskier cyber conduct; rather, it is the stressful “interpretation” and internalization of that insecurity that diminishes employees’ security-related actions.

Hypothesis 5: Self-efficacy in AI use moderates the influence of job stress on cybersecurity behavior

Our results showed that self-efficacy in AI use significantly moderated the link between job stress and cybersecurity behavior, thus supporting our Hypothesis 5. Employees who reported stronger confidence in their AI-related competencies maintained higher levels of cybersecurity behavior even when experiencing elevated job stress. This aligns with the perspective that domain-specific self-efficacy can operate as a psychological resource, preserving performance capacity even when other resources are depleted (Bandura, 2012; Kim et al., 2024a). Specifically, our findings suggest that AI self-efficacy is particularly important for maintaining the proactive aspects of cybersecurity behavior under stress—those voluntary efforts that are most vulnerable to stress-induced degradation. As depicted in Fig. 3, the moderating effect of AI self-efficacy demonstrates a classic buffering pattern. While employees with low AI self-efficacy show a steep decline in cybersecurity behavior as stress increases (from 4.12 to 3.28 on our 5-point scale), those with high AI self-efficacy maintain relatively consistent cybersecurity behavior levels (from 4.08 to 3.87) even under high stress conditions.

By contrast, employees with low self-efficacy in AI use showed a stronger negative relationship between stress and cybersecurity behavior, suggesting that they lack the technological resources needed to maintain comprehensive security practices under stressful conditions. For these employees, stress appears to reduce their security efforts from proactive engagement to mere compliance, or even below compliance levels, as they struggle to manage security tasks that feel cognitively demanding without the benefit of strong technological competencies.

The simple slope analysis provides nuanced insights into how AI self-efficacy operates as a buffer. The significant slope for low AI self-efficacy (β = −0.384) indicates that these employees experience approximately a 0.38 standard deviation decrease in cybersecurity behavior for each standard deviation increase in job stress. In contrast, the non-significant slope for high AI self-efficacy (β = −0.101) suggests that these employees’ cybersecurity behaviors remain relatively stable despite experiencing stress. This 74% reduction in the stress-behavior relationship magnitude (from −0.384 to −0.101) demonstrates the substantial protective effect of AI self-efficacy.
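The arithmetic behind the 74% figure can be reproduced in a few lines. The sketch below uses only the two simple slopes reported above. The function names and the linear form slope(W) = b1 + b3·W, with the moderator evaluated at −1 and +1 SD, are assumptions introduced for illustration; the implied b1 and b3 values are not estimates reported in the study.

```python
# Standardized simple slopes of job stress on cybersecurity behavior,
# as reported in the text.
SLOPE_LOW = -0.384   # AI self-efficacy at -1 SD
SLOPE_HIGH = -0.101  # AI self-efficacy at +1 SD


def relative_reduction(low: float, high: float) -> float:
    """Proportional shrinkage in slope magnitude from the low to the high group."""
    return (abs(low) - abs(high)) / abs(low)


def implied_coefficients(low: float, high: float) -> tuple[float, float]:
    """Recover b1 and b3 assuming slope(W) = b1 + b3 * W with W = -1 and W = +1.

    The linear form and the standardized moderator are assumptions made
    for illustration; the source does not report b1 or b3.
    """
    b1 = (low + high) / 2   # slope at the mean of the moderator
    b3 = (high - low) / 2   # change in slope per 1 SD of the moderator
    return b1, b3


reduction = relative_reduction(SLOPE_LOW, SLOPE_HIGH)
b1, b3 = implied_coefficients(SLOPE_LOW, SLOPE_HIGH)

print(f"Reduction in slope magnitude: {reduction:.0%}")
print(f"Implied slope at mean AI self-efficacy (b1): {b1:.4f}")
print(f"Implied interaction coefficient (b3): {b3:.4f}")
```

Under these assumptions, a negative b1 combined with a positive b3 is the numerical signature of the buffering pattern: the stress slope becomes less negative as AI self-efficacy rises.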

This finding addresses the important theoretical question of where AI self-efficacy exerts its protective influence in the job insecurity–job stress–cybersecurity behavior chain. Rather than preventing stress formation (which would occur regardless of technological competence when job security is threatened), AI self-efficacy helps employees maintain critical security behaviors despite experiencing stress. This positioning is theoretically more coherent: job insecurity, as a general employment threat, would universally generate stress, whereas the ability to cope with that stress while maintaining specific technological behaviors would depend on relevant competencies such as AI self-efficacy.

Synthesis and theoretical considerations

Taken together, these results illustrate a nuanced process model where job insecurity in a rapidly transforming, AI-rich environment influences cybersecurity behavior through a two-stage process. First, job insecurity generates psychological stress as employees grapple with threats to their employment stability—a relationship that appears universal regardless of technological competencies. Second, this stress typically undermines cybersecurity behavior, particularly its proactive dimensions, by depleting the cognitive and emotional resources needed for comprehensive security engagement. However, this second link is critically moderated by AI self-efficacy, which provides a technological resource buffer that helps maintain both compliance and proactive security behaviors even under stress. By positioning AI self-efficacy as a second-stage moderator, our model recognizes both the inevitability of stress responses to job threats and the importance of specific competencies in maintaining performance despite that stress. Importantly, our findings suggest that AI self-efficacy is particularly critical for preserving proactive security behaviors—those voluntary, discretionary efforts that distinguish truly effective cybersecurity from mere compliance. While organizational mandates may ensure basic compliance even under stress, it is the proactive dimension that AI self-efficacy most effectively protects, highlighting the strategic value of technological competencies in maintaining comprehensive security engagement.
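The two-stage process described above matches what is commonly called a second-stage moderated mediation model. A minimal sketch follows, assuming linear paths and omitting intercepts and control variables. The symbols are introduced here for illustration and are not the authors' notation: X is job insecurity, M is job stress, W is AI self-efficacy, and Y is cybersecurity behavior.

```latex
\begin{aligned}
M &= a\,X + \varepsilon_M \\
Y &= c'\,X + b_1\,M + b_2\,W + b_3\,(M \times W) + \varepsilon_Y \\
\text{indirect effect of } X \text{ on } Y \text{ at } W &= a\,(b_1 + b_3 W)
\end{aligned}
```

In this form, the first stage (a) does not depend on W, which corresponds to stress arising regardless of technological competence. The second stage (b_1 + b_3 W) weakens as W increases when b_1 is negative and b_3 is positive, which corresponds to the buffering role attributed to AI self-efficacy.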

Theoretical implications

This study advances the body of knowledge on cybersecurity behavior, job insecurity, and related psychological processes through four major theoretical contributions.

First, by foregrounding job insecurity as a critical antecedent of cybersecurity behavior, this investigation responds to recent calls for greater integration of social and psychological dimensions into cybersecurity research (Brougham and Haar, 2020; Shoss, 2017). Although earlier works explored how workload and other stressors adversely affect employees’ adherence to security protocols, scholarship had yet to thoroughly examine the impact of uncertainty about continued employment on cyber-related conduct (Kim and Kim, 2024a). By demonstrating that employees who feel insecure about their job stability may withhold the cognitive and emotional resources needed for comprehensive security measures, this study pushes theoretical boundaries in occupational psychology, where job insecurity has traditionally been linked to outcomes such as turnover intentions or lowered job performance but not systematically tied to cybersecurity compliance. This expanded scope emphasizes the importance of recognizing that technological upheavals, including rapid AI-driven innovations, inherently foster greater job instability, thereby heightening the salience of job insecurity for security-related behavior.

In addition, by positioning job insecurity as a hindrance stressor rather than a traditional job demand within the JD-R framework, this study contributes to the evolving understanding of how different types of workplace stressors influence employee outcomes. This reconceptualization acknowledges that job insecurity represents a psychological perception arising from various organizational and environmental factors, rather than an objective job characteristic. This distinction is theoretically important as it recognizes the subjective nature of job insecurity while still accounting for its resource-depleting effects comparable to traditional job demands.

Second, this research contributes to cybersecurity literature by distinguishing between mandatory compliance and proactive engagement in security behaviors. While previous research often treated cybersecurity behavior as a unitary construct, our findings suggest that stress differentially impacts these two dimensions. This distinction has important theoretical implications, as it suggests that different psychological mechanisms may govern compliance (driven by external mandates and sanctions) versus proactive engagement (driven by intrinsic motivation and available cognitive resources). This nuanced view aligns with broader organizational behavior literature on in-role versus extra-role behaviors (Organ, 1988) and extends it to the cybersecurity domain.