Abstract

Second language writing is a challenging skill, often hindered by high cognitive demands and writing anxiety, both of which negatively affect learner confidence and performance. This highlights the need to investigate how emerging AI tools can support and improve this process. Accordingly, this study adopts a mixed-methods design to examine the influence of AI chatbots on English language learners’ writing proficiency, with a focus on writing anxiety, perceived usefulness, and perceived writing outcomes. Quantitative analyses investigated the predictive role of perceived usefulness and anxiety, alongside moderating variables such as gender, academic level, English proficiency, frequency of AI use, and self-reported confidence. The qualitative component involved thematic analysis of open-ended responses to explore learners’ perceptions of the benefits and challenges of AI chatbot use in writing, as well as their intentions to continue using these tools. Data were collected from 387 Saudi university students with prior experience using AI chatbots for English writing tasks. Results indicated that perceived usefulness significantly predicted improved writing outcomes, while writing anxiety remained a relevant factor. Demographic variables such as gender and academic level showed no significant effects, whereas English proficiency and frequency of AI tool use influenced learner perceptions. Thematic findings highlighted benefits including fluency enhancement, idea generation, speed, and personalized feedback, while concerns centered on over-reliance and repetitive responses. These findings underscore the importance of considering learners’ perceived usefulness when integrating AI chatbots into writing instruction. Adaptive designs are needed to address varying proficiency levels and to support learners with persistent anxiety or avoidance behaviors. Moreover, understanding the drivers of frequent AI tool use, such as task relevance and feedback quality, can inform more effective implementation.
Educators should promote balanced use of AI tools to enhance writing development while preserving learners’ independent writing skills.


Introduction

Second language (L2) writing presents distinctive challenges: learners must not only master the mechanics of writing but also navigate cultural and idiomatic expressions specific to English. Writing in an L2 involves a complex interplay of linguistic, cognitive, and affective factors, which can exacerbate feelings of anxiety and hinder performance (Bailey & Almusharraf, 2022; Baker & Boonkit, 2004; Maarof & Murat, 2013). This anxiety is compounded by avoidance behavior, in which learners may deliberately avoid writing tasks to escape the discomfort associated with these negative emotions (Cheng, 2004). Such behaviors can significantly impede the development of writing proficiency and overall language acquisition.

L2 students often struggle with anxiety, particularly when it comes to academic writing assignments (Cheng, 2004; Horwitz et al., 1986). Concerns about making grammatical errors, communicating ideas effectively, and meeting instructors’ expectations can create a significant barrier to successful language production (Bailey & Almusharraf, 2022). This anxiety not only undermines learners’ confidence but also impedes their meaningful engagement in academic writing activities (Cheng, 2004; Daly, 1978). Nevertheless, research on the impact of L2 writing anxiety on learners’ performance and written output has been limited (MacIntyre & Wang, 2022; Papi et al., 2022). Only in the last few years have researchers begun investigating the relationship between L2 writing anxiety, writing tasks, task implementation, and learners’ written output (Abdi Tabari et al., 2024).

To tackle this challenge, researchers and educators have explored the potential of Artificial Intelligence (AI) chatbot technology as a viable solution (Chen, 2022; Hsu et al., 2023). AI chatbots, powered by natural language processing algorithms, can simulate human-like dialogue and offer learners personalized feedback and support (Imran & Almusharraf, 2023). Through interactive writing exercises and immediate, non-judgmental assistance, AI chatbots can create a more supportive learning environment (Bettayeb et al., 2024). Research suggests that when learners perceive AI tools as useful, they are more likely to engage with them, which can lead to improved learning outcomes (e.g., Chen et al., 2020; Fokides & Atsikpasi, 2018; Yan, 2023; Yang et al., 2024). In addition to writing anxiety and perceived usefulness, individual differences such as gender, academic level, language proficiency, and frequency of AI use may shape learners’ experiences with, and benefits from, AI-assisted learning (Almusharraf & Bailey, 2023, 2025; Tsai et al., 2024; Wei et al., 2023). A comprehensive understanding of these factors is needed to inform effective integration of AI chatbots into L2 writing instruction.

Although existing studies have examined the general impact of AI chatbots on language learning (e.g., Chen et al., 2020; Okonkwo & Ade-Ibijola, 2021; Wang et al., 2022; Yang & Kyun, 2022), limited attention has been given to the specific interplay between learners’ writing anxiety, perceived usefulness of AI tools, and perceived writing outcomes within the context of L2 writing instruction. Writing anxiety, as an affective variable, has been shown to influence learners’ willingness to engage in writing tasks and their overall performance (Cheng, 2004). Meanwhile, the Technology Acceptance Model (TAM) (Davis, 1989) underscores perceived usefulness as a critical determinant of technology adoption and learning success, a relationship that has been supported in digital language learning contexts (Chen et al., 2020; Fokides & Atsikpasi, 2018; Kelly et al., 2022; Liu & Ma, 2023). Despite the potential for these constructs to interact in complex ways, there is a paucity of studies that examine them collectively in AI-assisted L2 writing environments. While initial research, such as that by Aydin and Zeinolabedini (2024), suggests that AI tools can enhance motivation and alleviate anxiety, the specific dynamics of this relationship remain largely unexplored.

To address this gap, the present study aims to offer a comprehensive analysis of how these key variables interact, contributing to a deeper understanding of the cognitive and affective factors that shape learners’ experiences with AI-assisted writing. Additionally, the study examines demographic factors (e.g., gender, university level) and learner characteristics (e.g., English proficiency, self-reported confidence, frequency of AI tool use) given prior evidence that these factors can influence both technology perceptions and affective responses (Gasaymeh et al., 2024; Sanusi et al., 2022; Stöhr et al., 2024; Yeh et al., 2021; Yilmaz et al., 2023). By doing so, the current research provides valuable implications for scholars and educators engaged in technology-enhanced language instruction, particularly in L2 writing contexts. Accordingly, this study is guided by the following research questions:

RQ1. What are the levels of learners’ anxiety, the perceived usefulness of AI chatbots, and their perceived writing outcomes?

RQ2. How do perceived usefulness and writing anxiety, as predictors, contribute to the variance in learners’ perceived writing outcomes when utilizing AI chatbots?

RQ3. How do demographic factors such as gender and university level correlate with anxiety, perceived usefulness, and perceived writing outcomes?

RQ4. How do English proficiency level, self-reported confidence, and frequency of AI tool use correlate with anxiety, perceived usefulness, and perceived writing outcomes?

RQ5. How do learners perceive the benefits and challenges of using AI chatbots for language learning, and how do these perceptions influence their intentions to continue using these chatbots for developing English writing skills?

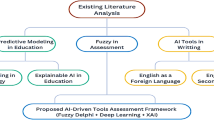

Literature review

The integration of AI chatbots into language learning, particularly in the context of L2 writing, has garnered significant attention in recent research. This section explores the intersection of three key areas: L2 writing anxiety, the role of conversational AI chatbots in L2 writing, and the application of the TAM in understanding learners’ perceptions and outcomes. This literature review critically examines these dimensions, laying the groundwork for a comprehensive analysis of AI chatbot usage in L2 writing.

L2 writing anxiety

Many L2 learners experience writing anxiety, which significantly hinders language development (Aydin & Zeinolabedini, 2024; Huang et al., 2024; Okonkwo & Ade-Ibijola, 2021; Yılmaz & Üstünel, 2025). This anxiety often arises from difficulties with grammar, vocabulary, and organization (Huang et al., 2024) and leads to heightened apprehension when composing in a foreign language (Papi & Khajavy, 2023; Yılmaz & Üstünel, 2025). Its consequences include avoidance of writing tasks (Papi & Khajavy, 2023), reduced motivation (Aydin & Zeinolabedini, 2024), and diminished self-efficacy (Rahimi & Fathi, 2022).

Grounded in Horwitz et al.’s (1986) foreign language anxiety framework, L2 writing anxiety involves negative self-perceptions, apprehension, and avoidance behaviors in response to writing demands. To capture this construct, Cheng (2004) developed the Second Language Writing Anxiety Inventory (SLWAI), which comprises three subscales: somatic anxiety (physiological arousal), cognitive anxiety (worry about negative evaluation), and avoidance behavior (resistance to writing).

The present study focuses on cognitive anxiety and avoidance due to their pronounced impact on L2 writing. Cognitive anxiety reflects persistent worries about inadequacy, mistakes, and negative feedback, which undermine confidence and risk-taking in writing (Cheng, 2004). Cheng further identifies it as a causal factor in poor performance. Avoidance behavior, meanwhile, reflects deliberate efforts to evade writing tasks through procrastination, reluctance to participate, or avoiding writing-intensive courses (Cheng, 2004).

Research confirms that high writing anxiety negatively affects performance (Okonkwo & Ade-Ibijola, 2021; Yılmaz & Üstünel, 2025). Waer (2021) emphasizes the emotional and academic costs of both internal factors (e.g., fear of criticism) and external ones (e.g., instructional practices, evaluative comments). Daly (1978) distinguishes between low-anxiety writers, who often find joy in writing, and high-anxiety writers, who avoid practice and feedback, limiting skill development. Consequently, anxious writers’ texts are shorter, less developed, and contain more errors (Liu & Ni, 2015; Sabti et al., 2019). This avoidance cycle further impedes overall progress in English writing competence.

L2 writing and conversational AI chatbots

Proficient writing skills are critical for L2 learners to communicate ideas effectively, achieve academic success, and excel professionally (Yoon, 2011). Yet, L2 writing instruction often faces constraints such as limited time and the demand for individualized feedback. Technology integration in English language classrooms can mitigate these challenges and enhance learning (Barrot, 2023; Rahimi & Fathi, 2022; Söğüt, 2024; Wang et al., 2022).

Conversational AI chatbots, notably ChatGPT, provide personalized, immediate, and interactive support that can improve proficiency and reduce writing anxiety (Liu & Ma, 2023). Studies demonstrate that such tools facilitate coherent and cohesive writing by offering instant feedback, grammatically correct alternatives, and opportunities for reflection on writing strengths and weaknesses (Barrot, 2023; Liu et al., 2021; Yan, 2023; Tseng & Warschauer, 2023). Imran and Almusharraf’s (2023) review identifies five benefits of ChatGPT in academic writing: efficiency, idea generation, language translation, error reduction, and collaboration.

By enabling learners to compare their work with AI-generated outputs, chatbots foster awareness of proficiency, vocabulary, and style, promoting targeted revisions (Liu et al., 2021; Song & Song, 2023; Tsai et al., 2024). When combined with teacher instruction, they can further enhance learning outcomes (Yang & Kyun, 2022; Wang et al., 2022). Chen et al. (2020) found that timely, personalized chatbot feedback significantly improved student performance.

Beyond corrective feedback, AI chatbots can tutor learners by suggesting prompts, organizing ideas, and enhancing clarity, particularly for those struggling with structure (Song & Song, 2023). Immediate feedback can reduce evaluation-related anxiety (Okonkwo & Ade-Ibijola, 2021) and increase motivation (Aydin & Zeinolabedini, 2024). By simulating real-life conversational contexts, chatbots create safe, engaging spaces for experimentation (Godwin-Jones, 2021; Nazari et al., 2021; Tseng & Warschauer, 2023), prevent writing blocks (Zhao, 2023), and support high-quality text production (Guo et al., 2022). Tsai et al. (2024) found that ChatGPT-assisted revisions shifted score distributions toward higher grades, with the greatest gains among initially low-performing students, suggesting a particular advantage for those facing greater writing challenges.

While AI tools are designed to enhance writing skills and alleviate common challenges faced by learners, emerging evidence suggests a complex relationship between their use and writing anxiety. Aydin and Zeinolabedini (2024) observed that the integration of AI tools in educational contexts not only enhances student motivation but also helps alleviate anxiety associated with writing tasks. In a related study, Yılmaz and Üstünel (2025) examined how participants’ perceptions of AI in language learning correlate with their levels of writing anxiety in an L2. Their findings, derived from Spearman’s rank-order correlation test, revealed a weak positive relationship between these two variables that was not statistically significant (rs(133) = 0.132, p = 0.128). This indicates that while there may be some connection between perceptions of AI usage and writing anxiety, the evidence is not strong enough to warrant definitive conclusions.

In contrast, Yu (2024) identified a significant positive correlation between the frequency of AI-assisted writing and writing anxiety among Chinese university students. This suggests that, contrary to the intended supportive role of AI, frequent use may be associated with heightened anxiety for some learners. Approaching the relationship from the other direction, Liu (2024) found that students with higher levels of writing anxiety are more likely to seek AI assistance in their writing tasks. This indicates a complex dynamic in which anxiety may drive the use of AI as a coping mechanism.

Additionally, Shen and Tao (2025) found that self-efficacy in using AI for writing could help mitigate writing anxiety. Their research suggests that learners who feel confident in their ability to use AI tools experience lower levels of anxiety, emphasizing the importance of fostering positive attitudes toward AI in educational contexts. These insights highlight the multifaceted relationship between AI tools, writing anxiety, and learners’ perceptions, suggesting that while AI can be a valuable resource, its impact on anxiety levels may vary depending on individual experiences and confidence in using these technologies.

The literature also highlights potential limitations and concerns regarding the use of AI-assisted writing tools such as ChatGPT. Key issues include risks of cheating and compromised academic integrity (Kostka & Toncelli, 2023), impeded language learning and development (Alharbi, 2023; Yan, 2023), potential for plagiarism (Alharbi, 2023; Yan, 2023), and over-reliance on AI at the expense of critical thinking skills (Barrot, 2023; Teng, 2024). Experts caution that while ChatGPT provides valuable writing assistance, it cannot fully replicate the expertise and nuanced understanding of human instructors, particularly in identifying and addressing complex errors such as those related to deep structure and pragmatic aspects of writing (Al-Garaady & Mahyoob, 2023).

Therefore, the successful integration of AI-powered writing assistance chatbots requires a thoughtful approach. Learners need to craft prompts based on an understanding of the technology’s capabilities and limitations in order to elicit high-quality, relevant text, and then critically evaluate and selectively incorporate the generated content into their own writing (Liu et al., 2021). Instructors, in turn, must navigate the fine line between encouraging students to use these chatbots effectively as learning aids and ensuring that their use does not cross into unacceptable academic practices (Tseng & Warschauer, 2023).

The technology acceptance model

The TAM, introduced by Fred Davis in 1989, is a foundational framework in the field of information systems that aims to explain and predict user behavior towards technology adoption. TAM suggests that an individual’s intention to use a technology, and their subsequent actual usage, is primarily determined by two key factors: perceived usefulness and perceived ease of use. Perceived usefulness refers to the degree to which a person believes that using a particular system would improve their job performance, while perceived ease of use is the extent to which a person believes that using the system would require minimal effort (Davis, 1989).

In this study, the focus was solely on perceived usefulness. Perceived usefulness has consistently been shown to be a strong predictor of technology adoption and user satisfaction in various contexts (Yan, 2023; Yang et al., 2024). By concentrating on perceived usefulness, the aim was to capture participants’ perceptions of the practical value and effectiveness of AI chatbots in enhancing their L2 writing skills, which was the central focus of this research. The TAM posits that when learners regard educational technologies, such as conversational AI chatbots, as useful, they are more likely to use these resources, thereby enhancing their learning outcomes (Yan, 2023; Yang et al., 2024). This relationship is grounded in the idea that technologies that effectively support learners’ goals and improve performance are more readily accepted and used. Notably, research by Chen et al. (2020) and Fokides and Atsikpasi (2018) has demonstrated substantial positive correlations between the perceived usefulness of AI and learning achievement. Moreover, Liu and Ma (2023) found that L2 students who recognized the usefulness of ChatGPT for extracurricular language learning were more likely to engage regularly with this tool. This suggests that recognizing the usefulness of AI chatbots can not only increase their usage but also facilitate their broader integration into language education frameworks.

Furthermore, Kelly et al. (2022) identified perceived usefulness as a critical determinant of the behavioral intention to employ AI chatbots. This behavioral intention reflects how likely individuals are to adopt and continue using these technologies based on their perceived benefits. In addition, an individual’s attitude, which signifies their inherent predisposition toward a particular activity, markedly affects their behavioral intentions. Chen et al. (2020) found a significant positive correlation between attitude and variables such as perceived ease of use, perceived usefulness, and familiarity with AI chatbots. These factors collectively play a pivotal role in shaping future intentions to engage with these technologies.

To conclude, the TAM provides a useful lens for understanding learners’ interaction with AI chatbots in language learning contexts, with perceived usefulness playing a central role in shaping users’ behavioral intentions and technology adoption (Davis, 1989). In parallel, research on L2 writing has shown that writing anxiety, particularly cognitive anxiety, can hinder learners’ engagement and performance (Cheng, 2004). These cognitive and affective dimensions are not independent: learners’ anxiety levels may shape their perceptions of AI tools’ usefulness, while positive perceptions may, in turn, alleviate anxiety. Existing studies suggest that AI tools can both improve writing performance and reduce anxiety (Chen et al., 2020; Fokides & Atsikpasi, 2018), yet findings remain mixed (Aydin & Zeinolabedini, 2024; Liu, 2024; Yu, 2024). This study integrates TAM with the construct of writing anxiety to explore their interaction in AI-assisted writing environments, addressing an underexamined intersection and offering a richer framework for understanding how such tools influence learners’ perceived writing outcomes.

Methods

Research design

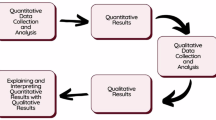

This study employed a mixed-methods approach to investigate the multifaceted impacts of AI chatbots on language learning, particularly focusing on writing proficiency. A mixed-methods approach was chosen to capture both the measurable relationships among key variables and the in-depth learner experiences that quantitative data alone could not reveal. The quantitative component examined levels of anxiety, perceived usefulness, and perceived writing outcomes of AI chatbots among learners. It also examined how perceived usefulness and writing anxiety as predictors contribute to the variance in learners’ perceived writing outcomes when utilizing AI chatbots and how various participant characteristics, including demographic factors (e.g., gender, university level) and other relevant variables such as English proficiency, frequency of AI tool usage, and self-reported confidence, moderated these relationships.

Additionally, the qualitative component investigated learners’ perceptions of the benefits and challenges of using AI chatbots and how these perceptions influenced their intentions to continue using these tools for developing English writing skills. Both datasets were collected concurrently and analyzed separately. Integration occurred at the interpretation stage, where findings were compared and synthesized to triangulate results and enhance the validity of the conclusions.

Participants

The participants of this study were English language learners from two public universities in Riyadh, Saudi Arabia, who had experience using AI chatbots for writing assistance. The sample included 387 participants (342 females and 45 males), selected through purposive sampling. Purposive sampling was employed to include participants with prior experience in AI-assisted writing, ensuring that the data collected were directly relevant to the study’s focus on L2 writing in AI-supported contexts, while also capturing variation in proficiency levels, ages, and academic backgrounds. Eligible participants were identified through coordination with instructors and academic departments and invited to take part in the study. Most participants were between 19 and 24 years old, with an average age of 22.9 years (SD = 3.76). Academically, the participants’ grade point averages (GPAs) ranged from 2.14 to 5.00, with an average of 4.26 (SD = 0.53). Participants self-reported their English proficiency on a scale ranging from 1 (beginner) to 10 (expert), with an average score of 7.39 (SD = 1.72). They came from diverse academic majors, including Engineering and Technology, Business and Economics, Health and Science, Language and Linguistics, and Media and Communication. The sample consisted of 18 first-year, 27 second-year, 78 third-year, and 264 fourth-year students.

Although the sample was predominantly female, it nonetheless spanned a broad cross-section of Saudi L2 learners in terms of age, academic performance, and program of study. This diversity supports the relevance of the findings to Saudi university students engaged in English language learning, although the skewed gender distribution and purposive sampling from two universities limit how far the results can be generalized to the broader population.

AI chatbot usage and data cleaning protocol

A total of 396 participants completed the survey; nine were excluded because they reported no prior AI-assisted writing experience or were identified as outliers (via Mahalanobis distance and Cook’s distance). The final sample consisted of 387 participants, all of whom had engaged in AI-assisted writing. Assumption checks indicated that the latent factor indicators met normality criteria, with skewness and kurtosis values within the acceptable range of -1.0 to +1.0 (George, 2011). Sampling adequacy was high (KMO = 0.953), and Bartlett’s Test of Sphericity was significant, χ²(253) = 5731.377, p < 0.001, confirming sufficient intercorrelation among variables. Communalities exceeded the recommended 0.50 threshold (Kline, 2015), supporting the suitability of the data for parametric analysis.

Participants were provided with examples of AI tools such as ChatGPT, Poe, and others, but no distinction was made between specific tools during the study. This approach allowed participants to choose a chatbot they were familiar with or preferred, reflecting authentic, real-world usage patterns. While this design introduces variability in the specific tools used, the focus of the study was on the general effects of AI chatbot assistance on L2 writing, rather than on comparing specific platforms.

The questionnaire

The data collection instrument comprised three sections (see supplementary material accompanying this paper). The first section was designed to elicit demographic information from the participants, including their gender, age, academic field of study, university level, frequency of AI usage, self-reported English language proficiency scores, and self-reported confidence level.

The second section incorporated a series of closed-ended items utilizing a Likert-type scale format. This approach aimed to quantify the participants’ perspectives on the variables of interest, ensuring the scales were contextually relevant to current L2 instructional practices and aligned with the broader trends observed in language education research. All items within the questionnaire employed a five-point Likert-type scale, with response options ranging from “never” (1) to “always” (5).
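Because every item used the same five-point format, responses can be coded numerically and averaged into one score per construct. The Python sketch below shows one common way of doing this. Only the endpoint labels “never” (1) and “always” (5) come from the instrument; the intermediate labels, the item names, and the mean-based scoring rule are illustrative assumptions rather than the study’s documented procedure.

```python
from statistics import fmean

# Only "never" and "always" are given in the study; the middle labels are assumed.
LIKERT_CODES = {"never": 1, "rarely": 2, "sometimes": 3, "often": 4, "always": 5}


def composite_score(responses, item_names):
    """Mean of one respondent's coded answers to the items of a construct.

    responses: dict mapping (hypothetical) item names to response labels.
    """
    return fmean(LIKERT_CODES[responses[name].lower()] for name in item_names)


# Hypothetical respondent answering three anxiety items.
respondent = {"anx_1": "Never", "anx_2": "Often", "anx_3": "Sometimes"}
print(composite_score(respondent, ["anx_1", "anx_2", "anx_3"]))
```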

The scale measuring the perceived improved writing outcome of using AI chatbots in L2 writing included 11 items and was derived from an extensive review of previous research on pre-writing, while-writing, and post-writing strategies (Bailey & Almusharraf, 2022; Baker & Boonkit, 2004; Maarof & Murat, 2013). The 11 items address how AI chatbots can support pre-writing, while-writing, and post-writing strategies, thereby enhancing writing proficiency outcomes. In the pre-writing stage, AI chatbots help with vocabulary acquisition and idea generation. During the while-writing stage, they provide real-time feedback, correct errors, offer style suggestions, assist with grammar, and enable comparisons with AI-generated examples to improve the writing process. In the post-writing stage, AI chatbots contribute to overall writing improvement, help overcome language barriers, provide relevant resources for refinement, and enhance writing skills through reflective feedback. Together, these items capture learners’ perceptions of AI chatbot support across all three writing stages.

The 11 items measuring perceived usefulness were informed by Davis’s (1989) TAM and a recent study by Almusharraf and Bailey (2025) on the use of machine translation tools in language learning. One original item from Almusharraf and Bailey’s (2025) study stated, “Machine translation websites are helpful for doing English assignments.” This was adapted for the current study to read, “AI Chatbots (e.g., ChatGPT, Poe) are useful for English assignments.” Other items were aligned with current debates in educational contexts (Imran & Almusharraf, 2023; Teng, 2024; Tseng & Warschauer, 2023), focusing on AI’s usefulness in providing personalized feedback, adapting to individual writing needs, enhancing motivation and engagement, reducing cognitive load, and minimizing the effort required for writing tasks.

The writing anxiety scale comprised 10 items adapted from Cheng’s (2004) cognitive anxiety and avoidance behavior scale. Some items were retained in their original form, such as “I’m afraid that the other students would deride my English composition if they read it,” while others were modified. For instance, an original item stated, “I usually do my best to avoid writing English compositions,” and was adapted to, “I do my best to avoid writing English compositions.”

Table 1 presents the internal consistency of the three key variables measured in the study. All three demonstrated very strong internal consistency, as indicated by high Cronbach’s alpha values, supporting the reliability of the measures used in the study.
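For readers less familiar with the index, Cronbach’s alpha for a scale of k items is α = k/(k − 1) · (1 − Σσ²ᵢ / σ²ₜ), where σ²ᵢ is the variance of item i and σ²ₜ is the variance of respondents’ total scores. The minimal Python sketch below computes it from raw item responses; the function name and toy data are hypothetical and do not reproduce the values in Table 1.

```python
from statistics import variance  # sample variance (n - 1 denominator)


def cronbach_alpha(items):
    """Cronbach's alpha.

    items: one list of responses per item; every list covers the same
    respondents in the same order.
    """
    k = len(items)
    totals = [sum(scores) for scores in zip(*items)]  # each respondent's total score
    item_variance_sum = sum(variance(item) for item in items)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


# Hypothetical two-item scale answered by four respondents.
print(cronbach_alpha([[1, 2, 3, 4], [2, 1, 4, 3]]))
```

Using the same variance definition for items and totals matters only for consistency; the n − 1 factors cancel in the ratio.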

The third section included three open-ended questions designed to collect qualitative data on participants’ in-depth experiences and thoughts:

1. What benefits have you gained from using AI chatbots (ChatGPT, Poe, etc.) for English writing?
2. What challenges have you faced when using AI chatbots (ChatGPT, Poe, etc.) for English writing?
3. Do you plan to continue to use AI chatbots (ChatGPT, Poe, etc.) for English writing? Why or why not?

To ensure the validity of the questionnaire, it was evaluated by four doctoral-level instructors with specialization and extensive teaching experience in Applied Linguistics. Based on the experts’ feedback, several revisions were made to enhance the clarity, coherence, and relevance of the questionnaire items. Items identified as ambiguous were reworded to improve precision and ensure they were easily understood by the target population. Redundant items (those that overlapped in content or measured the same construct without adding value) were either revised for distinction or removed entirely to reduce respondent fatigue and improve the overall efficiency of the instrument. These modifications aimed to enhance both the content validity and the reliability of the questionnaire prior to pilot testing.

Following this expert validation, a pilot test was conducted using a convenience sample of 20 participants. This sample size was deemed appropriate for a preliminary assessment, as it allowed for the identification of potential issues related to item clarity, response time, and overall survey functionality without requiring the resources of a full-scale administration. Participants reported that the survey was easy to navigate and that the items were clear and understandable. Minor adjustments were made based on their feedback, including rewording two items to improve clarity and modifying the Likert scale layout for greater consistency. Additionally, the average completion time was recorded to ensure that the length was manageable and did not contribute to survey fatigue. These refinements enhanced the instrument’s usability and ensured that it would yield reliable data during the main study.

Furthermore, the validity of the survey was supported by the results of the Exploratory Factor Analyses (EFA) conducted on the various constructs measured. The factor loadings for the “Improved Writing Outcome” items ranged from 0.61 to 0.81, with communalities between 0.43 and 0.66, indicating a good underlying factor structure. Similarly, the EFA for “Perceived Usefulness” revealed distinct factors with loadings between 0.63 and 0.73 and communalities ranging from 0.55 to 0.85, further validating the construct. For “Cognitive Writing Anxiety,” factor loadings ranged from 0.53 to 0.86, with communalities between 0.63 and 0.81, while the “Avoidance” items exhibited high factor loadings (0.84 to 0.91) and communalities (0.70 to 0.83). These results support good construct validity, indicating that the items measure the intended dimensions.
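As a reminder of how the two reported statistics relate, an item’s communality is the sum of its squared loadings on the retained factors, h²ᵢ = Σⱼ λ²ᵢⱼ, so in a one-factor solution it is simply the squared loading. The short Python sketch below illustrates this with hypothetical loadings. It assumes an orthogonal (uncorrelated-factor) solution; with an oblique rotation, the pattern loadings alone do not sum to the communality.

```python
def communalities(loadings):
    """Communality of each item: sum of squared loadings across factors.

    loadings: one row per item, one column per retained factor.
    Assumes an orthogonal solution (uncorrelated factors).
    """
    return [sum(value ** 2 for value in row) for row in loadings]


# Hypothetical loadings for three items on two orthogonal factors.
example = [
    [0.80, 0.10],
    [0.70, 0.20],
    [0.15, 0.85],
]
for item, h2 in enumerate(communalities(example), start=1):
    print(f"item {item}: h2 = {h2:.3f}")
```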

Questionnaire administration

The questionnaire was administered electronically through Google Forms. Students were informed that the questionnaire was designed for research intended to inform pedagogical practices involving generative AI. They were allotted 20 minutes to complete the survey, based on the pilot study, in which participants typically needed 15 to 20 minutes to finish all items. Participation was anonymous, and students were told that they could withdraw from the survey at any time without penalty. No attrition was recorded: every participant who began the survey completed it in full.

Data analysis

The data analysis for this study used both quantitative and qualitative methods to examine the impact of AI chatbots on L2 writing comprehensively. The quantitative data from the closed-ended survey items were analyzed using the Statistical Package for the Social Sciences (SPSS). Descriptive statistics (means and standard deviations) were computed to summarize the data. Correlation analyses were also performed to explore the relationships among variables such as the perceived usefulness of AI chatbots, improved writing outcomes, and anxiety levels.
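SPSS computes these statistics directly. The Python sketch below only illustrates what they are, using the standard library. The composite scores are hypothetical; each one is assumed to be the mean of a respondent's Likert items for a construct. The standard deviation is the sample value, which uses the n − 1 denominator.

```python
import math
import statistics

# Hypothetical composite scores (1-5 scale) for six respondents.
usefulness = [3.2, 4.0, 2.8, 4.6, 3.6, 4.2]
outcome = [3.0, 4.2, 2.6, 4.4, 3.8, 4.0]


def describe(scores: list[float]) -> tuple[float, float]:
    """Return the mean and the sample standard deviation (n - 1 denominator)."""
    return statistics.mean(scores), statistics.stdev(scores)


def pearson_r(x: list[float], y: list[float]) -> float:
    """Pearson product-moment correlation between two equal-length score lists."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length lists with at least two scores")
    mean_x, mean_y = statistics.mean(x), statistics.mean(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant variable")
    return cov / math.sqrt(var_x * var_y)


m, sd = describe(usefulness)
print(f"Perceived usefulness: M = {m:.2f}, SD = {sd:.2f}")
print(f"r(usefulness, outcome) = {pearson_r(usefulness, outcome):.2f}")
```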

The study used multiple regression analysis to examine the relationship between two predictor variables (Writing Anxiety and Perceived Usefulness) and a single outcome variable (Improved Writing Outcome). The analysis aimed to determine how much of the variance in the outcome variable the predictors explained. It involved three steps. First, R² was calculated. Second, an F-test was conducted to evaluate overall model significance. Third, the coefficient for each predictor was estimated and tested for statistical significance. This allowed the researcher to assess both the combined and the individual effects of Writing Anxiety and Perceived Usefulness on Improved Writing Outcome.
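The sketch below shows, under simplifying assumptions, how the quantities named above are obtained for a model with two predictors. These are the ordinary least-squares coefficients, R², and the overall F statistic with df1 = k and df2 = n − k − 1. It uses only the Python standard library and unstandardized variables, and it is not the SPSS procedure itself. Coefficient p-values, which require standard errors and the t distribution, are omitted. The data are hypothetical.

```python
def solve_linear(a: list[list[float]], b: list[float]) -> list[float]:
    """Solve a small linear system by Gaussian elimination with partial pivoting."""
    size = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(size):
        pivot = max(range(col, size), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        if abs(m[col][col]) < 1e-12:
            raise ValueError("singular system: predictors may be collinear")
        for r in range(col + 1, size):
            factor = m[r][col] / m[col][col]
            for c in range(col, size + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * size
    for r in range(size - 1, -1, -1):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, size))
        x[r] = (m[r][size] - tail) / m[r][r]
    return x


def ols_two_predictors(x1, x2, y):
    """Fit y = b0 + b1*x1 + b2*x2; return coefficients, R^2, F, and (df1, df2)."""
    n, k = len(y), 2
    if not (len(x1) == len(x2) == n) or n <= k + 1:
        raise ValueError("need equal-length inputs with n > k + 1")
    rows = [[1.0, a, b] for a, b in zip(x1, x2)]  # intercept, x1, x2
    # Normal equations: (X'X) b = X'y
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(3)]
    coef = solve_linear(xtx, xty)
    fitted = [sum(c * v for c, v in zip(coef, r)) for r in rows]
    y_mean = sum(y) / n
    ss_res = sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))
    ss_tot = sum((yi - y_mean) ** 2 for yi in y)
    if ss_tot == 0:
        raise ValueError("outcome has no variance")
    r2 = 1 - ss_res / ss_tot
    df1, df2 = k, n - k - 1
    # Overall F-test: explained variance per df over residual variance per df.
    f_stat = float("inf") if ss_res == 0 else ((ss_tot - ss_res) / df1) / (ss_res / df2)
    return coef, r2, f_stat, (df1, df2)


# Hypothetical scores: anxiety (x1), usefulness (x2), writing outcome (y).
anxiety = [2.0, 3.5, 2.5, 4.0, 3.0, 1.5, 2.8, 3.2]
usefulness = [3.0, 4.2, 3.5, 4.5, 3.8, 2.5, 3.6, 4.0]
outcome = [3.1, 4.0, 3.6, 4.4, 3.9, 2.7, 3.5, 4.1]
coef, r2, f_stat, df = ols_two_predictors(anxiety, usefulness, outcome)
print(f"b = {[round(c, 2) for c in coef]}, R2 = {r2:.2f}, F{df} = {f_stat:.2f}")
```

With n = 387 respondents and k = 2 predictors, the degrees of freedom are df1 = 2 and df2 = 387 − 2 − 1 = 384. The F statistic is therefore reported as F(2, 384).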

The qualitative data from the open-ended survey questions were subjected to thematic analysis to gain deeper insight into learners’ perceptions and experiences. The analysis followed Braun and Clarke’s (2006) six-phase framework: familiarization with the data, generating initial codes, searching for themes, reviewing themes, defining and naming themes, and producing the report. An inductive, data-driven approach was adopted, so that themes emerged from participants’ responses rather than from predetermined categories. The responses were read and re-read, and initial codes were generated to capture the key ideas, attitudes, and concerns that participants expressed. These codes were then grouped by conceptual similarity, and candidate themes were developed by identifying patterns across related codes. Themes were refined through iterative review to ensure internal coherence and distinctiveness, and each theme was given a clear definition. Detailed documentation of the thematic analysis is provided in the supplementary material accompanying this paper.

To ensure the reliability of the qualitative analysis, interrater reliability between two coders was assessed using Cohen’s kappa. Both coders independently coded a random 30% subset of the data. This yielded κ = 0.84, which falls within the “almost perfect” range (0.81–1.00) of Landis and Koch’s (1977) benchmarks. Discrepancies were resolved through discussion, and the coding scheme was refined before the full dataset was analyzed.
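Cohen’s kappa corrects observed agreement for the agreement expected by chance: κ = (p_o − p_e)/(1 − p_e). The sketch below computes κ for two coders’ labels and maps the value onto Landis and Koch’s (1977) descriptive bands. The theme labels and codings are hypothetical, and the sketch assumes that each segment receives exactly one code from each coder.

```python
from collections import Counter


def cohens_kappa(coder_a: list[str], coder_b: list[str]) -> float:
    """Cohen's kappa for two coders who each assigned one code per segment."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same, non-empty set of segments")
    n = len(coder_a)
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    # Chance agreement: product of the two coders' marginal proportions, summed over codes.
    expected = sum(freq_a[c] * freq_b[c] for c in set(freq_a) | set(freq_b)) / n ** 2
    if expected == 1:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (observed - expected) / (1 - expected)


def landis_koch_label(kappa: float) -> str:
    """Descriptive band from Landis and Koch (1977)."""
    if kappa < 0:
        return "poor"
    bands = [(0.20, "slight"), (0.40, "fair"), (0.60, "moderate"), (0.80, "substantial")]
    for upper, label in bands:
        if kappa <= upper:
            return label
    return "almost perfect"


# Hypothetical codes assigned by two coders to eight response segments.
coder_1 = ["speed", "feedback", "vocab", "speed", "support", "vocab", "feedback", "speed"]
coder_2 = ["speed", "feedback", "vocab", "support", "support", "vocab", "feedback", "speed"]
kappa_value = cohens_kappa(coder_1, coder_2)
print(f"kappa = {kappa_value:.2f} ({landis_koch_label(kappa_value)})")  # kappa = 0.83 (almost perfect)
```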

Results

The results section of this study presents findings on learners’ experiences and perceptions of AI chatbots for enhancing English writing skills. The data, derived from a survey of 387 participants, offer insights into the psychological and practical impacts of these tools on language learning. Below, the findings are organized into five subsections, each addressing a specific research question to provide a comprehensive understanding of the different dimensions of AI tool usage in language learning contexts.

Levels of anxiety, perceived usefulness, and writing outcomes

Research Question 1 concerns learners’ anxiety levels, the perceived usefulness of AI chatbots, and perceived writing outcomes. On these measures, the survey data indicate varied perceptions among participants. As shown in Table 2, the mean score for perceived writing outcomes was 3.79, reflecting a positive evaluation of AI tools for enhancing writing skills. The perceived usefulness of these chatbots was also rated favorably (M = 3.59), indicating that participants generally regard AI as beneficial for their writing. In contrast, writing anxiety had a lower mean of 2.84, suggesting that learners experience moderate writing anxiety.

Participants’ perceptions of the usefulness of AI chatbots and of their contribution to writing outcomes were generally positive. Participants reported that AI chatbots such as ChatGPT and Poe improved their writing skills (M = 3.81, SD = 1.14) and helped them overcome language barriers (M = 3.84, SD = 1.13). The chatbots were also seen as effective in correcting errors (M = 3.84, SD = 1.15) and providing valuable feedback (M = 3.71, SD = 1.21). Ratings were similar for offering helpful suggestions (M = 3.84, SD = 1.15) and providing useful resources (M = 3.73, SD = 1.20). Participants also reported that their writing improved after receiving feedback (M = 3.61, SD = 1.24) and that they learned new English words and phrases (M = 3.82, SD = 1.18). The highest writing-outcome rating was for enabling the expression of more ideas in English (M = 4.00, SD = 1.07). Regarding perceived usefulness, participants found AI chatbots particularly useful for writing English essays (M = 4.04, SD = 1.07) and English assignments (M = 4.02, SD = 1.07), and for saving time (M = 4.23, SD = 0.96). Usefulness ratings were lower, however, for using AI chatbots to minimize the need for writing (M = 3.13, SD = 1.31) and to reduce reliance on one’s own English proficiency (M = 2.89, SD = 1.36). Overall, participants viewed AI chatbots as helpful tools for enhancing writing skills and supporting language learning, with high ratings for their utility across a range of writing-related tasks.

Participants also reported varying levels of concern and behavior related to writing anxiety (see Table 3). The highest anxiety rating concerned worry about receiving a poor grade (M = 3.97, SD = 1.19), indicating that participants “usually” to “always” experience this concern. Participants reported low-to-moderate tendencies to avoid writing tasks, with the following mean scores:
avoiding writing compositions (M = 2.32, SD = 1.36);
selecting alternatives to writing (M = 2.57, SD = 1.39);
avoiding situations that require writing (M = 2.19, SD = 1.29).
The lowest score was for excusing oneself from writing tasks (M = 2.01, SD = 1.34). These findings indicate that cognitive aspects of writing anxiety are prevalent among participants. Avoidance behaviors occur less frequently, although they remain a notable response to writing-related stress.

Impact of usefulness and anxiety on writing outcomes

A multiple regression analysis was conducted to examine the contributions of writing anxiety and perceived usefulness to improved writing outcomes among learners using AI chatbots. The model showed strong explanatory power. Together, the predictors accounted for 67% of the variance in improved writing outcomes (R² = 0.67), a large effect size. The overall regression model was significant, F(2, 384) = 400.63, p < 0.001. The individual predictors are reported in Table 4. Perceived usefulness emerged as a strong and statistically significant contributor to improved writing outcomes (β = 0.52, p < 0.001), whereas writing anxiety was not a significant predictor (β = –0.08, p = 0.09). The relatively high β value for perceived usefulness indicates a large effect. This suggests that learners’ perceptions of the tool’s utility play a far more influential role in shaping their perceived writing outcomes than their anxiety levels do. In other words, a substantial portion of the variance in perceived writing improvement is accounted for by how useful participants find AI chatbots. This highlights the importance of technological acceptance, relative to affective barriers such as anxiety, in this context.
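As a rough check on the effect-size interpretation, the sketch below converts R² to Cohen’s f² = R²/(1 − R²). Cohen’s conventional benchmarks for f² are 0.02 (small), 0.15 (medium), and 0.35 (large). The sketch also computes adjusted R² for n cases and k predictors, for comparison only. The inputs mirror the values reported above (R² = 0.67, n = 387, k = 2).

```python
def cohens_f2(r2: float) -> float:
    """Cohen's f-squared for the variance explained by a set of predictors."""
    if not 0 <= r2 < 1:
        raise ValueError("R^2 must be in [0, 1)")
    return r2 / (1 - r2)


def f2_label(f2: float) -> str:
    """Cohen's conventional benchmarks for f-squared."""
    if f2 >= 0.35:
        return "large"
    if f2 >= 0.15:
        return "medium"
    if f2 >= 0.02:
        return "small"
    return "negligible"


def adjusted_r2(r2: float, n: int, k: int) -> float:
    """R^2 penalized for the number of predictors k, given n cases."""
    return 1 - (1 - r2) * (n - 1) / (n - k - 1)


r2, n, k = 0.67, 387, 2
f2 = cohens_f2(r2)
print(round(f2, 2), f2_label(f2))  # 2.03 large
print(f"adjusted R2 = {adjusted_r2(r2, n, k):.3f}")
```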

The correlations in Table 5 also support this result. Improved writing outcome has a strong, positive, and statistically significant correlation with perceived usefulness (r = 0.82, p < 0.001). By Cohen’s (2013) standards, this is a large effect size, indicating that participants who find AI chatbots useful are more likely to report improved writing. Writing anxiety also correlates positively with both improved writing outcomes (r = 0.25, p < 0.001) and perceived usefulness (r = 0.36, p < 0.001). By the same standards, the former is a small-to-medium effect and the latter a medium effect (Cohen, 2013). This suggests that higher writing anxiety is associated with greater recognition of the usefulness of AI tools. It is also associated with a greater likelihood of reporting improved writing outcomes.
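Cohen’s conventional benchmarks for correlation coefficients are |r| = 0.10 (small), 0.30 (medium), and 0.50 (large). The short helper below applies these thresholds to the three correlations just reported. A value that falls between two benchmarks is often described as lying between the two labels (e.g., small-to-medium).

```python
def cohen_r_label(r: float) -> str:
    """Label the magnitude of a correlation using Cohen's benchmarks."""
    size = abs(r)
    if size >= 0.50:
        return "large"
    if size >= 0.30:
        return "medium"
    if size >= 0.10:
        return "small"
    return "negligible"


table_5 = {
    "usefulness-outcome": 0.82,
    "anxiety-outcome": 0.25,
    "anxiety-usefulness": 0.36,
}
for pair, r in table_5.items():
    print(f"{pair}: r = {r:.2f} -> {cohen_r_label(r)}")
```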

Influence of demographic factors

To answer the third research question, the effects of gender and university level were analyzed; these were the only demographic factors examined in the study. The results revealed minimal differences across these subgroups. Both gender and university level showed negligible associations with the outcomes (eta values ranging from 0.03 to 0.10). ANOVA results likewise indicated no statistically significant differences across university levels (first, second, third, and fourth year). This held for improved writing outcome, perceived usefulness, and writing anxiety. For all three variables, the p-values exceeded the conventional 0.05 significance level, suggesting that university level did not significantly influence these outcomes. Detailed subgroup descriptive statistics were not calculated. However, the overall data suggested consistent patterns across demographic groups, with no evident trends or disparities in learner responses. This indicates that gender and academic level did not meaningfully affect learners’ experiences or perceptions of AI chatbot use in writing.
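For reference, the sketch below shows how a one-way ANOVA F statistic and the eta coefficient are obtained from grouped scores. Eta is the square root of η² = SS_between/SS_total. The year-level groups and scores are hypothetical. The p-value, which SPSS derives from the F distribution, is not computed here.

```python
import statistics


def one_way_anova(groups: list[list[float]]) -> tuple[float, tuple[int, int], float]:
    """Return (F, (df_between, df_within), eta) for k independent groups."""
    scores = [x for g in groups for x in g]
    n, k = len(scores), len(groups)
    if k < 2 or any(len(g) == 0 for g in groups) or n <= k:
        raise ValueError("need at least two non-empty groups and n > k")
    grand_mean = statistics.mean(scores)
    ss_between = sum(len(g) * (statistics.mean(g) - grand_mean) ** 2 for g in groups)
    ss_total = sum((x - grand_mean) ** 2 for x in scores)
    ss_within = ss_total - ss_between
    if ss_within <= 0:
        raise ValueError("no within-group variance; F is undefined")
    df_between, df_within = k - 1, n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    eta = (ss_between / ss_total) ** 0.5  # square root of eta-squared
    return f_stat, (df_between, df_within), eta


# Hypothetical perceived-usefulness scores by year of study (first to fourth).
year_groups = [
    [3.4, 3.8, 3.6],
    [3.5, 3.9, 3.3, 3.7],
    [3.6, 3.4, 3.8],
    [3.7, 3.5, 3.6, 3.9],
]
f_stat, df, eta = one_way_anova(year_groups)
print(f"F{df} = {f_stat:.2f}, eta = {eta:.2f}")
```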

English proficiency, self-reported confidence, and frequency of AI tool usage

The correlation analysis in Table 5 shows that English proficiency level is negatively correlated with perceived usefulness (r = −0.16, p = 0.001) and writing anxiety (r = −0.25, p < 0.001). Higher proficiency is thus associated with lower perceived usefulness of AI chatbots and lower writing anxiety. Confidence is not significantly correlated with improved writing outcome (r = −0.02, p = 0.78) or perceived usefulness (r = −0.05, p = 0.33). However, confidence shows a moderate negative correlation with writing anxiety (r = −0.34, p < 0.001), suggesting that higher confidence is associated with lower writing anxiety. Frequency of usage, in contrast, shows moderate positive correlations with both improved writing outcome (r = 0.38, p < 0.001) and perceived usefulness (r = 0.39, p < 0.001). More frequent use of AI chatbots is therefore associated with better perceived writing outcomes and higher perceived usefulness.
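Significance tests for these correlations are based on the statistic t = r√((n − 2)/(1 − r²)), which has n − 2 degrees of freedom. The sketch below computes t for three of the reported correlations. Because n is in the hundreds, it approximates the two-sided p-value with a standard normal distribution instead of the exact t distribution. It assumes n = 387 for every pair, that is, no missing responses. The section does not state this, so the resulting p-values are illustrative only.

```python
import math
from statistics import NormalDist


def r_to_t(r: float, n: int) -> float:
    """t statistic for H0: rho = 0, with n - 2 degrees of freedom."""
    if not -1 < r < 1 or n < 3:
        raise ValueError("need -1 < r < 1 and n >= 3")
    return r * math.sqrt((n - 2) / (1 - r ** 2))


def approx_p_two_sided(r: float, n: int) -> float:
    """Two-sided p-value using a standard normal in place of t(n - 2); large n only."""
    t = r_to_t(r, n)
    return 2 * (1 - NormalDist().cdf(abs(t)))


n = 387  # assumption: complete data for every correlation
for label, r in [("proficiency-usefulness", -0.16),
                 ("confidence-anxiety", -0.34),
                 ("frequency-usefulness", 0.39)]:
    print(f"{label}: t = {r_to_t(r, n):.2f}, p ~ {approx_p_two_sided(r, n):.4f}")
```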

Perceptions of benefits, challenges, and intention to use

To answer the fifth research question, learners’ qualitative accounts of the benefits and challenges of using AI chatbots for language learning were explored. The thematic analysis also examined how these perceptions shaped learners’ intentions to continue using chatbots to improve their English writing. It thereby provides a detailed account of user experiences and expectations.

First, the analysis synthesized a range of user experiences, underscoring the main benefits that AI chatbots offer for enhancing writing proficiency. The key themes were speed and convenience, immediate feedback, vocabulary and grammar reinforcement, customized learning experiences, and additional academic support.

AI chatbots offered rapid access to information and instant feedback, making the writing process more efficient and less time-consuming. One user noted, “It’s saved me lots of time and effort, helping me analyze things more easily than searching online.” The chatbots’ instant corrections and suggestions helped users learn quickly from their mistakes and improve their writing. As one respondent mentioned, “I always use it to give me feedback on my writing if my instructor isn’t available.”

AI chatbots also helped users expand their vocabulary and reinforce grammar rules by introducing new words, providing definitions, and demonstrating correct usage in context. A user shared, “I’ve started learning more vocabulary because the bot uses a variety of terms and has improved my grammar and punctuation.” Participants also described personalized learning experiences tailored to their individual needs and skill levels, which they felt made learning more effective. One user remarked, “The chatbot exposed me to new vocabulary and encouraged me to use new words in my writing.”

Furthermore, AI chatbots provided substantial academic support by assisting with paraphrasing, summarizing, and evaluating writing, which participants viewed as important for academic success. As one student put it, “It’s like having a tutor available 24/7.”

Despite these benefits, participants reported several challenges in using AI chatbots for language learning. The key themes were repetitive responses and lack of originality, inaccuracies and misinformation, difficulty with complex language and terminology, dependency and over-reliance, and technical and financial limitations.

Many learners reported repetitive or redundant responses as a major issue, and they felt this hindered their learning of new language structures and writing styles. Users expressed this concern, saying, “Sometimes there is repetition,” and “It could repeat the same idea over and over.” Inaccuracies and misinformation also posed significant problems. One user noted, “Sometimes we find misinformation, so you should not rely on it completely.” Another added, “One of the most exasperating challenges I’ve faced when using AI is that it sometimes provides me with inaccurate information.”

In addition, AI chatbots struggled with complex language and idiomatic expressions, which led to confusion and required simplifications or multiple queries for clarification. Feedback included, “Sometimes it uses complex terms that are not understood,” and “The AI chatbot may provide a response that is unrelated to my topic.” Participants were also concerned that dependency on AI could limit critical thinking and self-learning. One user stated, “Reliance on using AI chatbot may limit the person’s ability to think and brainstorm.” Technical issues and the cost of advanced features further restricted access to full functionality. Users reported “Slow response time” and noted that “Good AI tools need money and subscriptions.”

User intentions to continue using AI chatbots for English writing varied, influenced by their experiences with the technology. Most users planned to continue due to the benefits in vocabulary expansion, writing assistance, and time efficiency. One user highlighted, “Yes, because it helps me improve my writing skills and adds creative ideas,” and others affirmed, “Yes, it’s very helpful!”, “Yes, is non-judgmental and supportive”, and “Yes, it encourages continuous learning and curiosity.” However, some users hesitated or decided against further use due to dissatisfaction with the support provided and concerns about accuracy, as one noted, “I don’t think so… it shows wrong information sometimes.” Additionally, there was a preference among some users for relying on personal effort rather than technology, emphasizing independent skill development. One user expressed, “I prefer to depend entirely on myself and my own efforts,” highlighting the importance of self-directed learning, while another noted, “Yes and no, depending completely on it is wrong and will weaken my writing, thinking abilities so I will use it only for rephrasing my work or sentences for better word choice.”

Discussion

The findings of this study offer valuable insights into learners’ perceptions of and experiences with AI chatbots for English writing. The survey data revealed diverse perceptions among participants. The mean score of 3.79 for perceived writing outcomes indicates a generally positive evaluation of AI tools for enhancing writing skills. In line with previous research, participants viewed AI chatbots such as ChatGPT and Poe as particularly effective in improving writing quality, providing valuable feedback, correcting errors, and offering helpful suggestions (Barrot, 2023; Liu et al., 2021; Rahimi & Fathi, 2022; Söğüt, 2024; Wang et al., 2022; Yan, 2023; Yılmaz & Üstünel, 2025). Notably, participants in the current study reported substantial perceived improvements in their ability to express ideas and to overcome language barriers.

The perceived usefulness of these chatbots also received a favorable mean rating of 3.59. Participants considered AI beneficial for saving time and for supporting various writing tasks, which makes these tools valuable aids in the writing process. Within the TAM framework (Davis, 1989), this perception aligns directly with the view that perceived usefulness is a primary driver of technology adoption and sustained use. The strong positive correlation between perceived usefulness and writing outcomes in this study reinforces TAM’s core proposition: learners are more likely to integrate and benefit from a tool when they believe it will enhance their performance.

This finding underscores the motivational role of perceived usefulness in shaping learner engagement and perceived progress, even when anxiety is present. Qualitative responses supported this relationship. Many participants noted that AI chatbots helped them generate ideas, improve fluency, and receive timely feedback, and these factors increased their confidence and sense of progress. Writing anxiety did not significantly predict writing outcomes, but its persistence suggests that affective barriers remain relevant in technology-mediated writing. This aligns with previous research showing that technological tools can mitigate certain writing challenges but do not eliminate them (Barrot, 2023; Liu et al., 2021; Yan, 2023; Yılmaz & Üstünel, 2025). Addressing anxiety through targeted instructional strategies therefore remains important, alongside promoting the perceived usefulness of AI tools.

However, the study also highlighted concerns about over-reliance on AI chatbots. Participants acknowledged the benefits of AI chatbots, yet they gave lower ratings to items about minimizing the need for writing and reducing reliance on their own English proficiency. Numerous researchers have raised similar concerns (e.g., Al-Garaady & Mahyoob, 2023; Alharbi, 2023; Barrot, 2023; Kostka & Toncelli, 2023; Teng, 2024; Yan, 2023). Importantly, learners themselves recognize that AI chatbots, however helpful, should not replace the deeper cognitive processes essential for language learning. Over-reliance on AI tools may limit learners’ opportunities for independent problem-solving, reflection, and active language production. These processes are critical for developing long-term writing proficiency and linguistic competence (Barrot, 2023; Teng, 2024). These insights highlight the need for a balanced pedagogical approach, in which AI chatbots serve as complementary tools rather than substitutes for core learning activities. Such a balance lets learners benefit from AI without undermining their ability to internalize language structures, develop original thought, and cultivate essential academic writing skills.

The study also found a mean writing anxiety score of 2.84, suggesting that learners experience moderate anxiety when writing. This finding aligns with previous research on the persistent challenges L2 writers face in overcoming writing anxiety (Cheng, 2004; Daly, 1978; Liu & Ni, 2015; Rahimi & Fathi, 2022; Sabti et al., 2019; Waer, 2021; Yılmaz & Üstünel, 2025). Notably, cognitive aspects of writing anxiety are widespread among participants; these include worry, self-doubt, and negative thoughts about writing. Avoidance behaviors, such as procrastination or avoiding writing tasks altogether, are less common. Many learners therefore experience considerable worry and distress about writing without necessarily resorting to avoidance as a primary coping mechanism. However, avoidance behaviors are still present, though less frequent, which marks them as a notable, if secondary, response to writing-related stress. This dual pattern suggests that learners may struggle internally with anxiety yet often push through these feelings rather than avoiding writing tasks entirely. This has implications for how writing anxiety is addressed in educational settings.

Moreover, the results suggest that perceived usefulness outweighs writing anxiety in predicting learners’ perceived writing outcomes. The significant coefficient for perceived usefulness (p < 0.001) indicates that it contributes substantially to the prediction of improved writing outcomes. Writing anxiety, by contrast, does not contribute significantly to the model (p = 0.09). This highlights the importance of perceived usefulness in determining how effective AI chatbots are for enhancing writing skills.

This suggests that the functional value learners attribute to AI chatbots may mediate or even overshadow the influence of cognitive anxiety on performance. Drawing on Cheng’s (2004) conceptualization, cognitive anxiety, marked by worry and negative self-assessment, often leads to avoidance behaviors that hinder performance. However, in the current context, AI chatbots may buffer against such avoidance tendencies by providing immediate, nonjudgmental feedback and reducing the cognitive load associated with generating and organizing ideas. This supportive interaction likely enables learners to engage more fully with the writing task, thereby diminishing the performance impact of anxiety.

One possible explanation for the non-significant contribution of writing anxiety lies in the context of the current study, in which participants interacted with AI writing tools. The supportive features of these tools may alleviate writing anxiety. This would allow learners to focus on the writing process without the usual stressors of traditional writing tasks (Aydin & Zeinolabedini, 2024). Furthermore, the perceived usefulness of AI chatbots may give users a sense of confidence that helps them overcome their anxieties. As noted by Chen et al. (2020) and Fokides and Atsikpasi (2018), the perceived usefulness of AI writing tools strongly predicts improved learning outcomes. This suggests that learners who believe these tools can enhance their writing are more likely to engage positively with the writing process.

The study also examined the role of English proficiency. English proficiency level had small, negative, and statistically significant correlations with both perceived usefulness (r = −0.16, p = 0.001) and writing anxiety (r = −0.25, p < 0.001). Higher proficiency is thus associated with lower perceived usefulness of AI tools and with lower writing anxiety. This is consistent with previous research indicating that more proficient L2 writers may find AI tools less useful than their less proficient counterparts do (Almusharraf & Bailey, 2023; Tsai et al., 2024; Wei et al., 2023).

Research examining the influence of demographic factors and academic levels on the adoption of AI tools presents varied findings. Yilmaz et al. (2023) found overall positive perceptions of ChatGPT among university students, with males reporting higher self-efficacy and no grade-level differences. Males also rated themselves as more confident in AI knowledge (Yeh et al., 2021) and viewed AI suggestions as more valuable, while females rated AI-generated information as less useful (Araujo et al., 2020). Stöhr et al. (2024) found that male students reported higher usage, more positive attitudes, and less concern with AI chatbots.

The current study observed similar mean scores across genders and university levels. This suggests that students, regardless of gender or year of study, perceive the impact of AI chatbots on their writing, and the associated anxiety, in a consistent way. This consistency could indicate that students at all stages of their university education broadly share the benefits and challenges of using AI tools for writing. Similarly, Gasaymeh et al. (2024) reported that gender and educational level have minimal effects on familiarity, concerns, and perceived benefits related to generative AI writing tools. As these tools become increasingly integrated into academic settings, differences in technology adoption among demographic groups may continue to diminish.

Furthermore, the negligible effects observed in the present study could also be attributed to methodological factors. The sample composition was relatively homogeneous, predominantly female (88.4%) and largely composed of fourth-year students (68.2%), with small subgroup sizes for males (n = 45) and first-year students (n = 18). Such uneven distributions restrict variability and reduce the statistical power to detect small subgroup differences (Cohen, 2013). Moreover, any potential demographic influences may be indirect, operating through mediating factors such as prior AI experience, language proficiency, or frequency of chatbot use; when these proximal predictors are accounted for, the direct contribution of demographic characteristics may appear minimal. The minimal impact of demographic factors on learning outcomes aligns with broader educational research, suggesting that learners’ competencies in AI education might play a more significant role than demographic variables (Sanusi et al., 2022).
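To illustrate the power concern, the sketch below uses a normal approximation to estimate the power of a two-sided, two-sample comparison of means at α = .05. It uses the gender subgroup sizes reported here: 45 males and, by subtraction from 387, 342 females. The standardized differences tried are hypothetical: d = 0.2 and d = 0.5, Cohen’s small and medium benchmarks. An exact calculation would use the noncentral t distribution instead.

```python
from statistics import NormalDist


def approx_power_two_groups(d: float, n1: int, n2: int, alpha: float = 0.05) -> float:
    """Normal-approximation power of a two-sided, two-sample test for effect size d.

    Ignores the negligible chance of rejecting in the wrong tail.
    """
    if n1 < 2 or n2 < 2 or not 0 < alpha < 1:
        raise ValueError("need n1, n2 >= 2 and 0 < alpha < 1")
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)
    shift = abs(d) * (n1 * n2 / (n1 + n2)) ** 0.5  # expected z under the alternative
    return 1 - z.cdf(z_crit - shift)


n_total, n_male = 387, 45
n_female = n_total - n_male
for d in (0.2, 0.5):
    power = approx_power_two_groups(d, n_male, n_female)
    print(f"d = {d}: power ~ {power:.2f}")
```

Under these assumptions, a small gender difference (d = 0.2) would be detected only about one time in four. A medium difference (d = 0.5) would be detected far more reliably.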

The results also indicate that confidence is associated with lower writing anxiety but is not associated with perceived improvements in writing or with the perceived usefulness of AI chatbots. Conversely, more frequent use of AI chatbots is associated with better perceived writing outcomes and higher perceived usefulness. This suggests that regular engagement with these chatbots may play an important role in perceived writing improvement. The finding is consistent with Stöhr et al. (2024), who highlighted the importance of frequent engagement with AI chatbots in shaping students’ perceptions and learning outcomes.

Moreover, some studies, such as Yu (2024), suggest that frequent use of AI tools may inadvertently increase writing anxiety. Others, such as Liu (2024), indicate that students with greater anxiety may turn to AI for support. This presents a complex dynamic. On the one hand, increased reliance on AI tools may heighten anxiety for some learners; on the other, learners experiencing anxiety may seek out AI assistance as a coping mechanism.

This dual perspective indicates that the relationship between AI tool use and writing anxiety is not straightforward. Frequent use may be associated with better writing performance, but it may also be a response to existing anxiety rather than a solution to it. The current study thus suggests that proficiency level and frequency of technology use may be more influential than demographic variables in shaping perceived usefulness and learning outcomes in AI-assisted language learning. This complexity underscores the need for further research to disentangle these relationships and to understand how AI tools can be optimized to support learners in diverse contexts.

The thematic analysis of user experiences further illuminated the benefits and challenges of using AI chatbots for language learning. The key benefits identified were speed and convenience, immediate feedback, vocabulary and grammar reinforcement, customized learning experiences, and additional academic support. These findings are consistent with existing research on the potential of AI-powered language learning chatbots to enhance writing skills and provide personalized support (Barrot, 2023; Liu et al., 2021; Rahimi & Fathi, 2022; Söğüt, 2024; Wang et al., 2022; Yan, 2023). Conversational AI tools that integrate functions such as translation, paraphrasing, and grammar checking appear to be perceived as especially useful. Such tools can serve as valuable thinking partners, offering personalized support tailored to individual needs (Tseng & Warschauer, 2023). This personalized assistance may boost learners’ confidence and motivation in English writing tasks, reduce cognitive load, and alleviate stress, thereby streamlining the writing process. Consequently, learners who perceive these chatbots as useful are likely to experience improvements in their writing proficiency and overall language skills.

However, the study also identified several challenges, including repetitive responses, lack of originality, inaccuracies and misinformation, difficulty handling complex language and terminology, dependency and over-reliance, as well as technical and financial limitations. These challenges underscore the need for continued refinement and improvement of AI-based language learning chatbots to address the complexities of language acquisition and writing development, as emphasized in previous research (e.g., Kostka & Toncelli, 2023; Alharbi, 2023; Teng, 2024; Yan, 2023). Ultimately, addressing these challenges will be crucial for maximizing the potential benefits of AI chatbots in educational settings.

Furthermore, the thematic analysis pointed to minimal differences across gender and academic level. Students in all demographic groups cited similar benefits (e.g., improved fluency, speed, and idea generation) and similar concerns (e.g., over-reliance, repetitive responses). This convergence may indicate that learners with different profiles broadly share the benefits and challenges of AI-assisted writing. It may also indicate that English proficiency and frequency of AI tool use shape perceptions and outcomes more than gender or academic level do.

In sum, this study contributes to the growing field of AI-assisted language learning with empirical evidence from 387 EFL learners on how they engage with AI chatbots for writing support. Crucially, it identifies the perceived usefulness of AI chatbots as a strong predictor of improved writing outcomes. It also shows that moderate writing anxiety persists among learners who use AI chatbots, which challenges assumptions about the affective benefits of AI tools. Moreover, the findings on the cognitive and avoidance dimensions of anxiety highlight the psychological complexities of AI use in writing, especially among learners with lower confidence or proficiency. By clarifying the relationships among writing anxiety, perceived usefulness, and writing outcomes, this study addresses a key empirical gap. It also offers educators data-driven guidance for integrating AI tools without diminishing essential language acquisition processes. Balancing AI’s benefits against the cognitive rigor of writing instruction is a critical step toward pedagogically sound, technology-enhanced language learning environments.

Implications

The findings of this study offer significant insights into the integration and impact of AI chatbots in English language learning and carry several pedagogical and research implications. Pedagogically, educators and developers should create more customized AI chatbots that address individual learning needs across proficiency levels. Such customization could range from foundational grammar assistance for beginners to more targeted feedback on style and coherence for advanced learners. Barrot (2023) suggests that a beneficial approach to using ChatGPT in second language writing instruction is to have students first produce their own original texts and then refine them with the language model. This approach aligns with the need for tailored AI chatbots because it balances the cultivation of independent writing abilities with the benefits of AI support.

Additionally, the findings have important implications for learners themselves. Students can optimize their use of AI chatbots independently by treating them as scaffolding tools (seeking feedback, clarifying grammar and structure, and brainstorming ideas) while remaining actively engaged in the cognitive processes of writing. Developing learners’ awareness of when and how to rely on AI tools is essential for fostering autonomy and preventing overdependence. Furthermore, given the link between confidence and reduced anxiety, pedagogical strategies should use AI chatbots to bolster learner confidence through personalized feedback that not only corrects errors but also affirms learners’ strengths, thereby enhancing their self-efficacy. Finally, given the association between frequent AI tool use, better writing outcomes, and higher perceived usefulness, it would be valuable to investigate what drives frequent use and how it interacts with other factors such as motivation, task complexity, and writing-related feedback.

Furthermore, the finding that cognitive anxiety significantly outweighs avoidance behaviors suggests that interventions should first address negative thought patterns while also equipping learners with strategies to prevent task avoidance. Educators are therefore encouraged to design support systems that help students manage cognitive stress and persevere in their writing, blending cognitive support with practical techniques that counter avoidance. By addressing both the mental and behavioral dimensions of writing anxiety, educators can more effectively strengthen writing skills and alleviate learner stress.

From an ethical standpoint, institutions should establish clear guidelines that promote responsible AI use, including transparency about the capabilities and limitations of AI tools, academic integrity policies, and data privacy protections. Such frameworks not only safeguard learners but also help build trust in AI-assisted learning environments.

On the research front, future studies should explore the various dimensions of perceived usefulness and identify the specific AI tool features that students find most beneficial, which could inform the enhancement of these technologies. Research should also assess how perceived usefulness varies across contexts and cultures to determine whether the effectiveness of AI chatbots generalizes globally. Although this study indicated that anxiety did not significantly affect writing outcomes, the moderate anxiety levels observed suggest an area ripe for further investigation, for example by incorporating anxiety-reduction training into AI chatbots. Additionally, the negative correlation between English proficiency and the perceived usefulness of AI tools points to a need for adaptive learning technologies that tailor their functions to learners’ skill levels. Future research could focus on developing dynamic AI systems that adjust their feedback and support to user proficiency, ensuring relevance and sustained engagement. Moreover, given the minimal influence of demographic factors such as gender and academic level, further research could examine intrinsic factors, such as motivation or learning strategies, that might influence the effectiveness of AI chatbots in learning. Lastly, although this study was conducted in a Saudi university context, its insights may apply to broader EFL settings, particularly in regions where AI technologies are increasingly integrated into education. However, future research should account for cultural, linguistic, and technological differences to validate and adapt these findings to diverse educational systems.

Conclusion

This study directly addresses a critical gap in the literature by examining how learners’ writing anxiety, perceived usefulness, and perceived writing outcomes interact in the context of AI-assisted L2 writing. While previous research has explored the general impact of AI chatbots on language learning (e.g., Chen et al., 2020; Okonkwo & Ade-Ibijola, 2021; Wang et al., 2022; Yang & Kyun, 2022), few studies have investigated this specific interplay of psychological and experiential factors in L2 writing instruction. Using a mixed-methods approach, this study uncovered significant relationships between these variables, demonstrating that perceived usefulness strongly predicts writing outcomes and that higher English proficiency is associated with lower anxiety and reduced reliance on AI tools. Frequent chatbot users also reported greater writing improvements, emphasizing the role of sustained engagement.

Complementing these results, the thematic analysis of learners’ perceptions offered deeper insights into how AI chatbots are both empowering and limiting: while participants appreciated the speed, clarity, and language support provided by chatbots, concerns emerged around over-reliance and reduced cognitive engagement. Together, these results offer a more holistic understanding of how learners experience AI integration in writing tasks.

Study limitations

One significant limitation is the lack of an experimental design to measure whether and how AI chatbots improve writing performance. While this research highlights participants’ perceptions of the role chatbots play in alleviating writing anxiety, perceptions alone cannot reliably predict actual improvements in writing skills. An experimental study would provide direct evidence of the effectiveness of chatbots in enhancing writing performance and would allow a more comprehensive understanding of their impact. The sample was also markedly skewed by gender (342 females and only 45 males), which may introduce bias into the findings. Additionally, while AI chatbots were found to mitigate some aspects of writing anxiety, the relationship among anxiety, proficiency, and perceived usefulness is complex and warrants further exploration. Another limitation lies in the study’s reliance on self-reported data, including self-reported English proficiency, which may be influenced by participants’ subjective perceptions and may not accurately reflect their actual language abilities. Finally, the cross-sectional design limits the ability to draw causal inferences, and the findings may not generalize to other populations or educational contexts.

In summary, while this study highlights the promising role of AI chatbots in supporting L2 writing, it also emphasizes the need to integrate AI tools thoughtfully alongside traditional instructional methods to support cognitive engagement and skill development. Future research should incorporate experimental design, examine the long-term impact of AI use, explore diverse linguistic and cultural contexts, and address the complex challenges learners face to ensure more inclusive and effective implementations. By acknowledging these limitations and pursuing these future directions, we can develop ethically guided educational frameworks that leverage AI not only as a technological aid but also as a transformative tool in multilingual writing education.

Data availability

The supplementary materials include a file containing statistical analyses and output tables, along with detailed documentation of the qualitative thematic analysis (theme definitions, coding categories, and sample data excerpts). The full raw dataset is available from the author upon reasonable request.

References

Abdi Tabari M, Khajavy GH, Goetze J (2024) Mapping the interactions between task sequencing, anxiety, and enjoyment in L2 writing development. J Second Lang Writ 65:101116. https://doi.org/10.1016/j.jslw.2024.101116

Al-Garaady J, Mahyoob M (2023) ChatGPT’s capabilities in spotting and analyzing writing errors experienced by EFL learners. Arab World Engl J 9:3–17. https://doi.org/10.24093/awej/call9.1

Alharbi W (2023) AI in the foreign language classroom: A pedagogical overview of automated writing assistance tools. Educ Res Int 2023(1):4253331. https://doi.org/10.1155/2023/4253331

Almusharraf A, Bailey D (2023) Machine translation in language acquisition: A study on EFL students' perceptions and practices in Saudi Arabia and South Korea. J Comput Assist Learn 39(6):1988–2003. https://doi.org/10.1111/jcal.12857

Almusharraf A, Bailey D (2025) Predicting attitude, use, and future intentions with translation websites through the TAM framework: A multicultural study among Saudi and South Korean language learners. Comput Assist Lang Learn 38(5–6):1249–1276. https://doi.org/10.1080/09588221.2023.2275141

Araujo T, Helberger N, Kruikemeier S, de Vreese CH (2020) In AI we trust? Perceptions about automated decision-making by artificial intelligence. AI Soc 35(3):611–623. https://doi.org/10.1007/s00146-019-00931-w

Aydin S, Zeinolabedini M (2024) Integrating artificial intelligence into foreign language learning: Learners’ perspectives [ERIC Document No. ED662814]. ERIC. https://files.eric.ed.gov/fulltext/ED662814.pdf

Bailey DR, Almusharraf NM (2022) A structural equation model of second language writing strategies and their influence on anxiety, proficiency, and perceived benefits with online writing. Educ Inf Technol 27:1115–1131. https://doi.org/10.1007/s10639-022-11045-0

Baker W, Boonkit K (2004) Learning strategies in reading and writing: EAP contexts. RELC J 35(3):299–328. https://doi.org/10.1177/0033688205052143

Barrot JS (2023) Using ChatGPT for second language writing: Pitfalls and potentials. Assess Writ 57:100745. https://doi.org/10.1016/j.asw.2023.100745

Bettayeb AM, Abu Talib M, Sobhe Altayasinah AZ, Dakalbab F (2024) Exploring the impact of ChatGPT: Conversational AI in education. Front Educ 9:1379796. https://doi.org/10.3389/feduc.2024.1379796

Braun V, Clarke V (2006) Using thematic analysis in psychology. Qual Res Psychol 3(2):77–101. https://doi.org/10.1191/1478088706qp063oa

Chen H-L, Widarso GV, Sutrisno H (2020) A chatbot for learning Chinese: Learning achievement and technology acceptance. J Educ Comput Res 58(6):1161–1189. https://doi.org/10.1177/0735633120929622

Chen YC (2022) Effects of technology-enhanced language learning on reducing EFL learners’ public speaking anxiety. Comput Assist Lang Learn 37(4):789–813. https://doi.org/10.1080/09588221.2022.2055083

Cheng YS (2004) A measure of second language writing anxiety: Scale development and preliminary validation. J Second Lang Writ 13(4):313–335. https://doi.org/10.1016/j.jslw.2004.07.001

Cohen J (2013) Statistical power analysis for the behavioral sciences (2nd ed.). Routledge

Daly JA (1978) Writing apprehension and writing competency. J Educ Res 72(1):10–14. https://doi.org/10.1080/00220671.1978.10885110

Davis FD (1989) Perceived usefulness, perceived ease of use, and user acceptance of information technology. MIS Q 13(3):319–340. https://doi.org/10.2307/249008

Fokides E, Atsikpasi P (2018) Development of a model for explaining the learning outcomes when using 3D virtual environments in informal learning settings. Educ Inf Technol 23(5):2265–2287. https://doi.org/10.1007/s10639-018-9719-1

Gasaymeh A-MM, Beirat MA, Abu Qbeita AA (2024) University students’ insights of generative artificial intelligence (AI) writing tools. Educ Sci 14(10):1062. https://doi.org/10.3390/educsci14101062

George D (2011) SPSS for Windows step by step: A simple study guide and reference, 17.0 update (10th ed.). Pearson Education

Guo K, Wang J, Chu SKW (2022) Using chatbots to scaffold EFL students’ argumentative writing. Assess Writ 54:100666. https://doi.org/10.1016/j.asw.2022.100666

Horwitz EK, Horwitz MB, Cope J (1986) Foreign language classroom anxiety. Mod Lang J 70(2):125–132. https://doi.org/10.2307/327317

Hsu TC, Chang C, Jen TH (2023) Artificial intelligence image recognition using self-regulation learning strategies: Effects on vocabulary acquisition, learning anxiety, and learning behaviours of English language learners. Interact Learn Environ 1–19. https://doi.org/10.1080/10494820.2023.2165508

Huang X, Xu W, Li F, Zhang Y (2024) A meta-analysis of effects of automated writing evaluation on anxiety, motivation, and second language writing skills. Asia-Pac Educ Res 33:957–976. https://doi.org/10.1007/s40299-024-00865-y

Imran M, Almusharraf N (2023) Analyzing the role of ChatGPT as a writing assistant at higher education level: A systematic review of the literature. Contemp Educ Technol 15(4):ep464. https://doi.org/10.30935/cedtech/13605

Kelly S, Kaye SA, Oviedo-Trespalacios O (2022) A multi-industry analysis of the future use of AI chatbots. Hum Behav Emerg Technol 2022(1):2552099. https://doi.org/10.1155/2022/2552099

Kline RB (2015) Principles and practice of structural equation modeling (4th ed.). Guilford Publications

Kostka I, Toncelli R (2023) Exploring applications of ChatGPT to English language teaching: Opportunities, challenges, and recommendations. TESL-EJ 27(3):n3. https://doi.org/10.55593/ej.27107int