Abstract

This corpus-based study investigates the extent to which ChatGPT converges or diverges from human practices in the construction of research article (RA) titles in the field of medicine. Two parallel corpora were compiled: one consisting of 300 human-authored RA titles from high-impact medical journals (The Lancet, JAMA, and The BMJ), and another comprising 300 ChatGPT-generated titles based on the abstracts of those same articles. Titles were analysed quantitatively and qualitatively in terms of length, form, syntactic structure, and content focus. Descriptive statistics, independent samples t-tests, and chi-square analyses were used to compare means and frequencies of the observed features. Results revealed a close alignment between the two corpora. ChatGPT generated titles that closely mirrored human-authored titles in length, preference for multi-unit forms, and dominance of nominal constructions. At the content level, titles of both corpora were largely methods-focused, though ChatGPT produced proportionally more dataset- and results-oriented titles. These findings suggest that ChatGPT has the capacity to reproduce disciplinary conventions in medical RA titling with considerable accuracy. Meanwhile, subtle differences point to its tendency towards formulaicity and limited stylistic flexibility. The study contributes to ongoing discussions of GAI in academic writing, underscoring both the potential of GAI tools to model disciplinary conventions and the need for critical awareness when incorporating them into EAP pedagogy. The study also offers implications for EAP instruction, genre analysis, and future research on GAI-assisted academic writing.

Introduction

The RA title is not merely a linguistic label but a rhetorical act central to the practice of science and dissemination of knowledge (Soler 2011; Hao 2024), serving as the first point of contact between a text and its prospective readers (Orbay et al. 2025). Concise yet rhetorically dense, RA titles both summarise content and position research within disciplinary discourse communities (Soler 2011; Cleland 2025). They signal research topics, methods, and sometimes results, while also performing persuasive functions that enhance visibility and accessibility in an increasingly competitive publishing landscape (Goodman 2010; Hyland and Zou 2022; Lehmann 2022; Cleland 2025). For these reasons, the study of RA titles has attracted extensive scholarly attention within genre analysis, English for Specific Purposes (ESP), English for Academic Purposes (EAP), and academic writing pedagogy, with research examining their structural, syntactic, and rhetorical features across disciplinary and cultural contexts (e.g., Martín and León-Pérez 2024; Matsubara 2024; Perdomo and Morales 2024; Wang et al. 2024; Brett 2025; Cleland 2025; Orbay et al. 2025).

At the same time, recent and disruptive advances in generative artificial intelligence (GAI), particularly large language models (LLMs) that generate texts in response to user prompts, are increasingly impacting higher education and reshaping the landscape of scientific research and academic writing (Nguyen et al. 2024; Ou et al. 2024; Zou et al. 2025). Chat generative pre-trained transformer (ChatGPT), as one of the leading GAI-powered tools, has attracted considerable attention in academic writing for its advanced natural language processing capabilities and efficiency in generating human-like texts (Tsai et al. 2024). ChatGPT has rapidly become a versatile writing assistance tool capable of performing a multitude of language-based tasks that range from relatively simple to more complex ones (Meniado et al. 2024). Beyond generating topics, ChatGPT can develop outlines, provide high-quality corrective feedback on grammar and vocabulary, deconstruct complex texts, and suggest strategies for improving clarity, readability, and cohesion (Barret and Pack 2023; Kaebnick et al. 2023; Lund et al. 2023; Tsai et al. 2024).

Within the context of academic writing and publishing, GAI has the potential to revolutionise the scholarly publication landscape and streamline multiple stages of the research process and science communication (Hosseini and Horbach 2023; Kaebnick et al. 2023; Lozić and Štular 2023; Lund et al. 2023). For authors, ChatGPT, when used responsibly, can offer a range of practical benefits, such as assisting with the formulation of research ideas, identifying research gaps, synthesising large volumes of data, revising and proofreading manuscripts, translating or paraphrasing texts, identifying suitable journals for submission, and ensuring alignment with journal conventions (Cotton et al. 2024; Gilat and Cole 2023; Ingley and Pack 2023; Jiao et al. 2023; Kaebnick et al. 2023; Karakose 2023; Lozić and Štular 2023; Özçeli̇k 2023; Nguyen et al. 2024). For editors and peer reviewers, ChatGPT can provide tangible benefits by automating tedious routine tasks (Gilat and Cole 2023; Ebadi et al. 2025), detecting instances of plagiarised content (Huang and Tan 2023; Schulz et al. 2022), promoting greater consistency in peer review (Hosseini and Horbach 2023; Schulz et al. 2022), and generating constructive feedback (Liang et al. 2024; Marrella et al. 2025).

As the field is still in its infancy, a rapidly growing body of research on GAI and academic writing has only recently begun to take shape. This emerging scholarship has examined a multitude of subjects, including the potential uses of ChatGPT in academic writing, particularly L2 writing, as both a writing assistant and an assessment tool (e.g., Lozić and Štular 2023; Mizumoto and Eguchi 2023; Nurseha 2023; Özçeli̇k 2023; Yan 2023; Nguyen et al. 2024; Pham and Le 2024; Zou et al. 2025), as well as the experiences and perceptions of language teachers and learners on the appropriate uses of GAI in the writing process (e.g., Barret and Pack 2023; Nguyen 2023; Xiao and Zhi 2023; Madden et al. 2025).

More specifically, very few studies have directly compared ChatGPT-generated texts with those authored by humans, with a primary focus on extended academic genres such as essays (e.g., Jiang and Hyland 2025a, 2025b, 2025c), bachelor’s and master’s theses (e.g., Nowacki and Wrochna 2025), and abstracts (e.g., Gao et al. 2023; Huang and Deng 2025; Zhang and Zhang 2025). Taken together, these studies suggest that ChatGPT is capable of producing extended academic texts that approximate human writing at a macro-discourse level, displaying structural coherence and functional coverage of key genre moves.

Despite these insights, existing research has overwhelmingly focused on extended academic genres, leaving the generation of highly compressed and convention-sensitive micro-genres such as RA titles largely underexplored. In contrast to longer genres, RA titles distil complex research content into a highly conventionalised and disciplinarily situated form, where redundancy and elaboration are necessarily minimised. Under such constraints, even relatively small lexical, syntactic, or rhetorical variations can carry disproportionate communicative weight and thus become analytically salient. Beyond their formal properties, RA titles function as the primary point of entry between a study and its potential readership, playing a central role in indexing, visibility, and initial evaluation within academic publishing. This function is particularly pronounced in medical research, the disciplinary context examined in the present study, where titling practices are shaped by strong expectations of precision, transparency, and evidential accountability.

From a genre-analysis perspective, RA titles therefore provide a sensitive site for examining whether GAI systems align with established disciplinary conventions, tend to amplify recurrent patterns, or produce surface-level approximations that diverge from human-authored norms. Against this backdrop, the present study addresses an underexplored area in the literature by systematically comparing human-authored and ChatGPT-generated medical RA titles across a range of micro-level features, notably length, form, syntactic structure, and content focus. By foregrounding a highly conventionalised academic micro-genre, the study extends existing research on GAI in academic writing beyond longer text types and offers empirical insight into ChatGPT’s handling of constrained disciplinary discourse. The findings further inform ongoing discussions in EAP by elucidating how GAI-generated titles may interact with established genre norms, with implications for genre awareness and pedagogical practice.

Literature review

Research article titles

Swales’ (1990) observation that titles were little considered has inspired a growing body of scholarship into titleology, revealing disciplinary variations, new trends, and evolving norms (Goodman 2010; Salager-Meyer et al. 2017; Xiang and Li 2020; Heßler and Ziegler 2023; Jiang and Hyland 2023; Martín and León-Pérez 2024; Brett 2025). Specifically, extant research into medical titling practices suggests that medical RA titles are highly conventionalised and rhetorically intricate forms that have language-specific features. The most frequently examined of these features is length (e.g., Jiang and Hyland 2023; Hao 2024; Martín and León-Pérez 2024), with findings showing that medical titles tend to be longer than those in other disciplines. This characteristic has been linked with more downloads and higher citation rates (Habibzadeh and Yadollahie 2010; Heßler and Ziegler 2022). Other linguistic features that have been examined are the syntactic form and semantic content of titles (Busch-Lauer 2000; Goodman et al. 2001; Kerans et al. 2020; Hao 2024; Matsubara 2024). The findings have shown that medical RA titles tend to be colonic, informative, nominal, and methods-oriented. Medical RA titles do not merely function as a summary of the research reported but act as a stand-alone text indicating its clinical relevance (Goodman 2000). This underpins the epistemic function of RA titles in medical discourse, mediating between research production and its professional applications.

GAI in academic writing

As one of the most advanced and versatile LLMs, ChatGPT operates through a transformer-based architecture and a two-stage training process comprising pre-training and fine-tuning (Cotton et al. 2024; Hai 2023). The transformer model employs self-attention mechanisms to capture syntactic and semantic relationships within language, enabling the model to produce coherent and contextually appropriate responses based on user prompts (Ray 2023; Chang et al. 2024). During pre-training, ChatGPT acquires general linguistic patterns and word knowledge from extensive text corpora, while the fine-tuning phase adapts the model for specific communicative tasks through reinforcement learning from human feedback (RLHF), ensuring greater alignment with user expectations and genre-specific conventions (Hai 2023; Howard et al. 2023; Chang et al. 2024). These mechanisms underpin ChatGPT’s ability to generate human-like academic texts.

Existing research comparing human-authored and ChatGPT-generated academic texts, albeit limited, has concentrated largely on metadiscourse use in argumentative essays and abstracts. This line of enquiry suggests that ChatGPT can reproduce macro-level features of academic discourse with considerable fluency. However, the model is less able to reflect the rhetorical diversity and interpersonal linguistic complexities characteristic of human academic writing. For instance, Jiang and Hyland (2025a, 2025b, 2025c) examined engagement markers in argumentative essays written by students and those generated by ChatGPT. Their findings indicate that while it can generate structurally coherent essays, ChatGPT exhibits a limited ability to build interactional arguments and to display the rhetorical flexibility and evaluative complexity found in student-authored essays. Similarly, Zhang and Zhang (2025) explored disciplinary variations in metadiscourse use in RA abstracts generated by ChatGPT and those written by human authors. They observed broad similarities in overall discourse functions, but notable differences in frequencies and distributions. Relatedly, Huang and Deng (2025) investigated ChatGPT’s use of shell nouns when generating abstracts for dissertations. They reported that ChatGPT-generated abstracts were more promotional and repetitive, but with less authorial visibility. Recent work has also examined AI-generated language in educational contexts by comparing AI output with human feedback or writing support, further reflecting a growing interest in evaluating AI performance against human benchmarks (Alnemrat et al. 2025).

Despite these advances, research has largely overlooked shorter, rhetorically dense genres such as RA titles. As the first point of contact between a text and prospective readers, RA titles play a critical role in positioning and promoting the article in a highly competitive academic publishing landscape. This role is all the more critical in medicine, a field where the rhetorical and the formal interact. Medical RA titles therefore provide a revealing lens for examining the extent to which GAI can replicate human rhetorical practices that privilege informativeness and precision. Examining human and GAI-generated RA titles not only addresses a notable void in the literature but also raises critical pedagogical questions about whether ChatGPT might reproduce or reinforce formulaic tendencies in academic writing within EAP contexts.

Therefore, the present study seeks to fill this gap by comparing human-authored and ChatGPT-generated medical RA titles across multiple micro-level features, notably length, form, syntactic structure, and content focus. In doing so, it contributes to the understanding of ChatGPT’s potential in academic writing and underscores the need for critical engagement with GAI as both a resource and a challenge for genre pedagogy.

Methods

Data collection and corpus

This comparative analysis examines two corpora of medical RA titles to uncover the extent to which ChatGPT converges with or diverges from human titling practices. The first corpus consists of 300 RA titles selected from three leading general medical journals, namely The Lancet, The BMJ, and JAMA, each represented by 100 titles. These journals were selected not only for their high impact but also because prior genre-based research has highlighted their highly conventionalised RA titling practices, particularly the frequent use of nominal constructions and multi-unit titles (Goodman et al. 2001; Busch-Lauer 2000; Kerans et al. 2020). As such, they exemplify dominant genre norms in medical research publishing and provide a robust site for examining micro-level features such as title length, form, syntactic structure, and content focus. Limiting the dataset to three journals with well-established and relatively homogeneous titling conventions allowed us to reduce editorial and disciplinary variability while ensuring sufficient data to identify stable genre patterns.

To account for potential journal-specific influences on title features, we reviewed the submission guidelines of each journal regarding title word limits, punctuation, formatting conventions, and AI policy. All three journals permit colon-separated multi-unit titles and nominal or verbal constructions, impose modest word limits (ranging from 15 to 20 words), and require clear disclosure of AI use if applied. Specifically, The Lancet allows AI only for language improvements with full disclosure; The BMJ recommends informative titles with optional subtitles indicating study design; and JAMA requires concise, specific titles and discourages undeclared AI-generated content. None of the titles in our dataset indicated AI-assisted generation, minimizing the likelihood that human-authored titles were influenced by AI. These considerations guided the selection of titles and ensured consistency and transparency in the dataset.

Only original RAs were included; reviews, perspectives, editorials, and other article types were excluded to maintain comparability in genre and micro-level features of titles. Titles were collected starting from the first issue of 2022 and proceeding chronologically until 100 original research articles were obtained from each journal, yielding the 300 human-authored titles (HATs) in the first corpus.

The second, parallel corpus consists of 300 medical RA titles generated by ChatGPT. For each human-authored title, ChatGPT was prompted to generate a parallel title by providing the article’s abstract as input and requesting an appropriate RA title for it. This procedure yielded 300 ChatGPT-generated titles (CGTs), ensuring correspondence between the two corpora. Overall, the two corpora comprised a total of 600 RA titles. Details of the two corpora are provided in Table 1.

Prompting procedure

To generate the second corpus of RA titles, ChatGPT was used as a text-generation tool under controlled prompting conditions. All titles were generated using the same ChatGPT version (ChatGPT 4.0), accessed via the OpenAI web interface. No custom fine-tuning or parameter adjustment was applied during text generation, since such adjustment is not available in the free GPT-4.0 web interface.

For each human-authored RA title in the corpus, ChatGPT was prompted once to generate a parallel title using the corresponding article abstract as input. The prompt was reused verbatim across all 300 generations to ensure consistency and minimise variability introduced by prompt phrasing. To reduce potential bias introduced by prompt wording, the prompt was intentionally framed in neutral, genre-oriented terms, without referencing specific stylistic features, structural patterns, or evaluative criteria (e.g. length, phrasing, or rhetorical strategies). This design aimed to elicit titles based on ChatGPT’s internalised representation of medical RA titling conventions rather than steering outputs toward predetermined forms. The full prompt text was as follows:

“You are an expert academic author. Please generate a concise and informative title for the following medical research abstract. Ensure the title is accurate, follows typical conventions for medical research articles, and is suitable for publication in a high-impact medical journal.”

The abstract of the corresponding article was then provided immediately after the prompt. Only one title was generated per abstract, and no iterative prompting, regeneration, or post-selection was conducted. This one-shot prompting approach was adopted to avoid introducing researcher bias through selective choice among multiple outputs.

Analytical framework

Based on analytical frameworks identified in the literature, the two corpora of medical RA titles were examined in terms of length, form, syntactic structure, and content focus. To analyse title length, we used Microsoft Word to count the number of words in each title, treating abbreviations, acronyms, and hyphenated words as single words (see Table 2).

To avoid conflating the number of units in titles with their syntactic structure as observed in previous studies (e.g., Haggan 2004; Soler 2007; Cheng et al. 2012; Morales et al. 2020; Martín and León-Pérez 2024), we followed a top-down approach. First, titles were classified by form into single-unit and multi-unit by counting their units. Single-unit titles consist of only one segment or clause, whereas multi-unit titles are composed of two disparate segments linked by a colon (see Table 3).
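The counting and form-classification rules described above can be sketched in a few lines of Python. This is only an illustration, not the procedure used in the study (word counts were obtained in Microsoft Word and form was coded manually). The function names and the two example titles are hypothetical, and the sketch assumes that a unit boundary is a colon followed by whitespace, so that a colon inside a ratio such as "2:1" is not treated as a separator.

```python
import re


def count_words(title: str) -> int:
    """Count whitespace-separated tokens.

    Hyphenated words, abbreviations, and acronyms contain no internal
    whitespace, so each is counted as a single word.
    """
    return len(title.split())


def title_form(title: str) -> str:
    """Return 'multi-unit' if a colon plus whitespace splits the title
    into two non-empty segments, otherwise 'single-unit'.

    Assumption: a colon with no following space (e.g. '2:1') is not a
    unit boundary.
    """
    parts = re.split(r":\s+", title.strip(), maxsplit=1)
    if len(parts) == 2 and parts[0].strip() and parts[1].strip():
        return "multi-unit"
    return "single-unit"


# Hypothetical titles, invented purely for illustration.
examples = [
    "Effect of drug X on blood pressure: a randomised controlled trial",
    "Long-term outcomes of drug X in adults with hypertension",
]

for t in examples:
    print(count_words(t), title_form(t), "|", t)
```

A rule-based pass of this kind could serve as a first check before manual coding; borderline cases, such as titles split by a dash or a question mark rather than a colon, would still need human judgement.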

Second, single-unit and multi-unit titles were each examined separately to identify their syntactic structures (see Table 4). Single-unit titles were further categorised into nominal and verbal constructions. Nominal titles consist mainly of one or more nouns with optional modifiers. Verbal titles begin with a verb in the -ing form followed by objects or modifiers. Multi-unit titles, on the other hand, were further divided into compound constructions consisting of nominal-nominal and verbal-nominal constructions.

For the analysis of title content focus, we followed the typology developed by Goodman et al. (2001). Based on this framework, titles were classified into six types: topic only, methods, results, dataset, methods-results, and methods-dataset. Descriptions and examples of each type are provided in Table 5.

Coding and analysis

The coding and classification of the data were conducted manually by the two researchers. Prior to any discussion, both researchers coded all 600 titles independently using a predefined scheme for title form, syntactic form, and content focus. Inter-rater reliability was assessed using Cohen’s Kappa for each coding level across the full dataset. Perfect agreement was obtained for form (κ = 1.00), with no disagreements recorded across the 600 RA titles. For syntactic form, nine cases of disagreement were identified, yielding a κ value of 0.94. This high agreement for form and syntactic structure is attributable to the discrete and mutually exclusive nature of their categories. For content focus, 36 disagreements were observed, corresponding to a κ value of 0.82. Following the reliability assessment, all disagreements were revisited and resolved through discussion until full consensus was reached. The consensus coding was subsequently used for all quantitative and qualitative analyses. This procedure ensured both the reliability of the coding scheme and the analytical consistency of the final dataset.
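For readers unfamiliar with the statistic, the following minimal sketch shows how Cohen’s Kappa corrects raw agreement for agreement expected by chance. It is not the authors’ procedure, and the two label lists are hypothetical toy data rather than codes from the corpus; the reported κ values cannot be reproduced without the full coding sheets.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items.

    kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed
    proportion of agreement and p_e is the agreement expected by
    chance from each rater's marginal label frequencies.
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must label the same non-empty item set")
    n = len(rater_a)
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    categories = set(freq_a) | set(freq_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in categories) / (n * n)
    if p_e == 1.0:
        # Both raters used one identical category throughout.
        return 1.0
    return (p_o - p_e) / (1 - p_e)


# Hypothetical toy codes for ten titles (not taken from the study).
coder_1 = ["methods"] * 5 + ["results"] * 5
coder_2 = ["methods"] * 4 + ["results"] * 6

print(round(cohens_kappa(coder_1, coder_2), 2))
```

In this toy case the coders agree on nine of ten titles, yet chance agreement is 0.5, so κ is lower than the raw agreement rate. The same logic explains why 36 disagreements out of 600 correspond to a κ below the raw agreement of 94%.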

Statistical analyses were performed using IBM SPSS Statistics (Version 27). An independent-samples t-test was used to examine differences in mean title length between human-authored and ChatGPT-generated titles. In addition, chi-square tests were conducted to compare the frequency and distributions of title form, syntactic form, and content focus across the two corpora.

Results and discussion

Title length

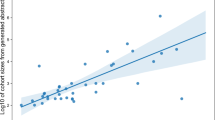

Table 6 presents the distribution of title length across the two corpora. In total, 11,930 title words were analysed, with 6035 words in human-authored titles and 5895 words in ChatGPT-generated titles. The mean title length was 20.1 words (SD = 5.68) for the human corpus and 19.6 words (SD = 3.31) for the ChatGPT corpus. An independent samples t-test, assuming unequal variances, indicated that this difference was not statistically significant, t(480.9) = 1.23, p = 0.219. The mean difference of 0.47 words had a 95% confidence interval of −0.28 to 1.21. The corresponding Cohen’s d was 0.10, with a 95% confidence interval of −0.06 to 0.26, suggesting a negligible effect size. These results indicate that human-authored and ChatGPT-generated titles are broadly comparable in length.
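The unequal-variance (Welch) t-test and Cohen’s d can be approximated from the summary statistics alone. The sketch below is an illustration under stated assumptions, not the SPSS analysis itself: it takes the means as the reported word totals divided by 300, uses the rounded standard deviations given above, and computes d with the pooled standard deviation. The function name is hypothetical.

```python
import math


def welch_from_summary(m1, s1, n1, m2, s2, n2):
    """Welch's t, Welch-Satterthwaite df, and Cohen's d from summary stats."""
    v1, v2 = s1 ** 2 / n1, s2 ** 2 / n2          # squared standard errors
    t = (m1 - m2) / math.sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    pooled_sd = math.sqrt(
        ((n1 - 1) * s1 ** 2 + (n2 - 1) * s2 ** 2) / (n1 + n2 - 2)
    )
    d = (m1 - m2) / pooled_sd
    return t, df, d


# Means derived from the reported totals; SDs as reported (rounded).
t, df, d = welch_from_summary(6035 / 300, 5.68, 300, 5895 / 300, 3.31, 300)
print(f"t = {t:.2f}, df = {df:.1f}, d = {d:.2f}")
```

Because the inputs are rounded, the recomputed degrees of freedom differ slightly from the SPSS output, but t and the mean difference should agree to two decimal places.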

The similarity between human-authored and ChatGPT-generated medical RA titles in terms of length is noteworthy, particularly given previous research reporting diachronic trends towards longer, more informative titles in the medical sciences (Jiang and Hyland 2023; Martín and León-Pérez 2024). ChatGPT’s close alignment with human authors in this regard indicates that the model has internalised prevailing length-related conventions, reflecting disciplinary expectations for titles that are sufficiently extended to communicate key research aims, methods, and findings. Simultaneously, the absence of statistically significant differences in title length between the human corpus and the ChatGPT corpus suggests that ChatGPT does not extend beyond genre-specific norms but instead reflects them in ways that may contribute to their reinforcement. At first glance, this alignment indicates that AI can serve as a reliable tool for replicating normative length patterns, which may support efficiency in writing and adherence to journal standards.

However, this convergence has broader rhetorical and disciplinary implications. By consistently producing titles that conform to dominant length conventions, ChatGPT may inadvertently reinforce homogenization within the field. Over time, repeated reliance on AI-assisted titling could reduce rhetorical variations, diminishing opportunities for creativity or nuanced differentiation among research studies. In particular, the tension between informativeness and conciseness, a key aspect of effective RA titles, may be subtly constrained if AI outputs encourage formulaic lengths rather than prompting authors to tailor titles to specific research contributions or audiences.

Also, the alignment raises questions about rhetorical stagnation. While longer, informative titles enhance clarity and discoverability, they may also promote over-inflation of methodological detail at the expense of stylistic diversity or interpretive nuance. In extreme cases, this could lead to a proliferation of titles that are technically correct but stylistically uniform, limiting the communicative diversity that underpins dynamic scholarly discourse.

From a pedagogical and EAP perspective, these findings underscore the importance of cultivating GAI literacy. Students and early-career researchers should be encouraged to critically assess AI-generated titles, reflecting on whether length choices optimally serve both disciplinary conventions and rhetorical aims. Educators might emphasise strategies such as iterative prompting, manual refinement, and comparative evaluation of human and AI-generated titles to preserve variation and foster strategic decision-making. In this way, ChatGPT can function not as a replacement for human judgment but as a collaborative tool that supports awareness of genre norms while promoting reflective, context-sensitive authorship.

Title form

As shown in Table 7, both corpora favored multi-unit titles, although their distributions varied significantly. ChatGPT produced a higher proportion of multi-unit titles (275, 91.7%) compared to human authors (249, 83%), whereas human authors employed a greater proportion of single-unit titles (51, 17%) than ChatGPT (25, 8.3%). A Pearson chi-square test of independence indicated that this difference was statistically significant, χ²(1, N = 600) = 10.19, p = 0.001. All expected cell counts exceeded 5, confirming the validity of the chi-square test; the minimum expected count was 38. The corresponding effect size, Cramér’s V = 0.13, 95% CI [0.049, 0.208], suggests a small but meaningful association between title type (ChatGPT-generated vs. human-authored) and title form. These results indicate that ChatGPT exhibits a stronger tendency towards multi-unit constructions compared to human authors.
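The Pearson chi-square statistic, its p-value, and Cramér’s V can be recomputed directly from the four counts reported above. The sketch below is an illustration only (the study used SPSS). It assumes a 2 × 2 table with no continuity correction, and it relies on the identity that, for one degree of freedom, the upper-tail probability equals erfc(√(χ²/2)). The function name is hypothetical.

```python
import math


def chi_square_2x2(table):
    """Pearson chi-square (no continuity correction) for a 2x2 table.

    Returns (chi2, p, cramers_v, min_expected).
    """
    (a, b), (c, d) = table
    n = a + b + c + d
    row_totals = (a + b, c + d)
    col_totals = (a + c, b + d)
    expected = [[r * col / n for col in col_totals] for r in row_totals]
    chi2 = sum(
        (obs - exp) ** 2 / exp
        for obs_row, exp_row in zip(table, expected)
        for obs, exp in zip(obs_row, exp_row)
    )
    p = math.erfc(math.sqrt(chi2 / 2))   # exact upper tail for df = 1
    cramers_v = math.sqrt(chi2 / n)      # min(rows, cols) - 1 = 1 here
    min_expected = min(min(row) for row in expected)
    return chi2, p, cramers_v, min_expected


# Rows: ChatGPT, human; columns: multi-unit, single-unit (counts as reported).
chi2, p, v, min_exp = chi_square_2x2([[275, 25], [249, 51]])
print(f"chi2 = {chi2:.2f}, p = {p:.3f}, V = {v:.2f}, min expected = {min_exp:.0f}")
```

Reporting the minimum expected count alongside the statistic, as the authors do, makes it easy to confirm that the large-sample approximation behind the test is appropriate.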

ChatGPT’s marked preference for multi-unit titles aligns with previous research highlighting the trend towards greater informativeness in medical RA titles. Heßler and Ziegler (2023), for instance, found multi-unit titles in around 98% of articles in The BMJ and The Lancet. Similarly, Kerans et al. (2020) reported that The Lancet featured no single-unit titles, with 93.5% of their medical corpus comprising multi-unit constructions. These patterns reflect the generic conventions of scholarly writing, a tendency noted earlier by Dillon (1981) and Perry (1985), who associate “titular colonicity” (or multi-unit titles) with both scholarly productivity and rhetorical distinctiveness. In medical RAs, multi-unit titles balance informativeness with reader engagement: one segment communicates core content, while the other attracts attention (Sword 2012; Eva 2013). Hyland and Zou (2022) similarly note that such structures increase informational density by elaboration or specification, allowing authors to foreground multiple research aspects and differentiate their work from related studies.

ChatGPT’s consistent production of multi-unit titles likely reflects the training data it has been exposed to, which over-represents such forms. While this demonstrates its capacity to internalise disciplinary norms, it also raises critical concerns. By systematically favoring elaborate, multi-unit constructions, ChatGPT may reinforce dominant conventions and homogenise title forms, potentially limiting rhetorical diversity. By contrast, human authors retain some flexibility, sometimes producing concise, single-unit titles that prioritise readability, memorability, or rhetorical impact (Hartley 2005; Paiva et al. 2012; Hyland and Zou 2022). Over-reliance on AI-generated titles could therefore lead to rhetorical stagnation, where creativity, conciseness, or alternative structuring strategies are underutilised.

These findings also have implications for pedagogy and GAI literacy. Rather than simply adopting AI outputs, researchers and students should critically evaluate the rhetorical trade-offs inherent in multi-unit versus single-unit forms. Educators can guide learners to consider whether AI-generated titles appropriately balance informativeness with engagement, whether they risk overloading methodological detail, and whether the choices serve the communicative and disciplinary purpose of the study. In this way, ChatGPT functions as a supportive tool that reinforces genre conventions while requiring deliberate human oversight to preserve stylistic variation, rhetorical nuance, and disciplinary diversity.

Title syntactic structure

Single-unit titles

As shown in Table 8, single-unit titles were realised only as noun phrases in both corpora. This uniformity is noteworthy given the range of syntactic alternatives available for formulating RA titles, including the verbal, full-sentence, and interrogative constructions identified in previous studies (Haggan 2004; Soler 2007).

The predominance of nominal constructions in single-unit titles across both corpora echoes previous research findings. Busch-Lauer (2000), for instance, found that approximately 95% of single-unit titles in medical English RAs were nominals. Similarly, Ball (2009) reported a general trend towards nominal titling practices in medical RA titles. This pattern has also been observed across a range of other disciplines (e.g., Perdomo and Morales 2024; Jiang and Jiang 2023; Diao 2021; Morales et al. 2020; Soler 2007; Haggan 2004), suggesting that nominal constructions represent a conventional and widely adopted feature of RA titles. As Wang et al. (2024) observe, the prevalence of nominal titles can be attributed to their capacity to economically condense complex information through modifiers while clearly delineating the focus of the research.

ChatGPT’s replication of nominal constructions demonstrates its capacity to internalise conventional syntactic patterns. While this supports clarity and the efficient communication of complex research content, it also highlights a potential constraint of AI-assisted titling; the reliance on nominals may limit exploration of alternative syntactic forms, such as verbal phrases or interrogatives, which human authors occasionally employ to add emphasis, narrative variation, or rhetorical nuance.

From a pedagogical perspective, these findings suggest that users of GAI should critically assess syntactic choices. In EAP contexts, learners can use ChatGPT outputs as a baseline, refining or diversifying constructions to achieve desired rhetorical effects, maintain reader engagement, and avoid formulaic expression. In this way, AI can assist in following disciplinary conventions while leaving room for deliberate human syntactic decisions.

Multi-unit titles

As in the case of single-unit titles, both human authors and ChatGPT overwhelmingly favoured nominal constructions when producing multi-unit titles (see Table 9), with the two corpora displaying strikingly similar distributions. Specifically, nominal-nominal constructions accounted for 96% of ChatGPT titles and 95.6% of human titles, while verbal-nominal constructions comprised 4% and 4.4% of the respective corpora. A chi-square test of independence confirmed that these distributions did not differ significantly, χ²(1, N = 524) = 0.057, p = 0.812. All expected cell counts exceeded 5, confirming the validity of the chi-square test; the minimum expected count was 10.45. The corresponding effect size, Cramér’s V = 0.010, 95% CI [0.002, 0.109], indicates a negligible association between title type and syntactic structure.

These findings are consistent with previous research demonstrating the predominance of nominal constructions in RA titles in medicine and across disciplines (Perdomo and Morales 2024; Diao 2021; Morales et al. 2020; Jalilifar 2010; Gesuato 2008; Busch-Lauer 2000). In a comparable study of Turkish RAs, Demir (2023) reported that nominal-nominal constructions accounted for more than 85% of titles in medicine and 100% of titles in engineering. This suggests a global trend towards the use of nominal constructions in both single-unit and multi-unit RA titles, irrespective of culture and discipline.

The dominance of nominal-nominal forms reflects their communicative significance in academic discourse, as they enable authors to maximise informativeness while maintaining structural economy (Hyland and Zou 2022; Wang et al. 2024). ChatGPT’s close mirroring of this pattern suggests a strong attunement to medical titling conventions, indicating that ChatGPT has internalised not only surface-level length conventions but also deeper syntactic preferences. By contrast, verbal-nominal constructions appeared only marginally in both corpora, a finding that aligns with Busch-Lauer’s (2000) observation that medical RA titles typically avoid stylistic variation in favour of unemotional and information-dense formulations. ChatGPT’s close replication of this tendency with near-identical proportions to human authors suggests alignment with prevailing genre practices.

Overall, the parallel distributions observed across the two corpora point to ChatGPT’s close alignment with human syntactic preferences in multi-unit titles. Yet, this convergence invites reflection on broader implications. While this alignment demonstrates ChatGPT’s utility in producing convention-compliant titles, it also highlights potential risks for disciplinary discourse. Multi-unit nominal constructions, by packing extensive methodological and content information, may inadvertently encourage over-inflation of detail or reduce the prominence of interpretive or conceptual framing. In practice, this could contribute to titles that are formally correct but rhetorically dense, potentially limiting readability or nuanced signaling of study aims.

Title content focus

Analysis of title content focus (see Table 10) reveals significant differences between human-authored and ChatGPT-generated titles, χ²(5, N = 600) = 126.54, p = 0.001. However, two cells (16.7%) had expected counts below the recommended threshold of 5 (minimum expected count = 0.50), indicating a violation of the chi-square test assumptions. To address this, bootstrap methods were employed to provide robust estimates. The effect size, measured by Cramér’s V, was 0.459 (95% CI [0.424, 0.495]), indicating a strong association between title type and content focus despite the assumption violation.
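The reported effect size and minimum expected count can be retraced by hand. The worked sketch below uses the standard definition of Cramér’s V and takes the reported χ² at face value rather than recomputing it. The symbols r and c (numbers of rows and columns) are introduced here, and a 2 × 6 table is assumed from the five degrees of freedom and the two corpora of 300 titles each.

```latex
% Cramér's V for an r x c table (here r = 2, c = 6, so min(r-1, c-1) = 1)
V = \sqrt{\frac{\chi^{2}}{N \cdot \min(r-1,\; c-1)}}
  = \sqrt{\frac{126.54}{600 \times 1}}
  \approx 0.459

% Smallest expected count: a category with a single title across both corpora
E_{\min} = \frac{\text{row total} \times \text{column total}}{N}
         = \frac{300 \times 1}{600}
         = 0.50

% Share of cells with expected counts below 5
\frac{2}{12} \approx 16.7\%
```

The minimum expected count of 0.50 is what one would obtain for a content category that occurs only once in the combined data, which matches the single explicit results title discussed in this section.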

Specifically, both human authors and ChatGPT markedly favored methods titles, accounting for more than three-quarters of each corpus (76.7% and 81.3%, respectively). This finding is consistent with medical RA titling conventions identified in previous studies. According to Busch-Lauer (2000) and Goodman et al. (2001), medical RA titles are expected to clearly convey the subject matter of the RA while providing sufficient details about study design and methods. Likewise, Siegel et al. (2006) caution that topic-only titles may limit readers’ ability to discern the novelty of the study, whereas informative titles that include methodological details enable readers to assess more quickly whether the RA is relevant to their interests. Therefore, by foregrounding methods-oriented forms, both human and ChatGPT-generated titles conform to medical RA titling conventions, reflecting the epistemological value placed on methodological transparency in medical research reporting (Goodman et al. 2001; Siegel et al. 2006).

Nevertheless, closer examination reveals nuanced divergences that carry important rhetorical and epistemic implications. Specifically, human authors produced proportionally more topic-only titles (16.3% vs. 7.3%) and more titles combining methods with datasets (4% vs. 2%). These choices suggest deliberate rhetorical strategies, where human authors balance the need for methodological specificity with concise topical framing or the foregrounding of key data resources. Such variation reflects a human capacity to negotiate disciplinary conventions, authorial voice, and journal-specific stylistic preferences, enabling subtle signaling of novelty, emphasis, or audience orientation.

ChatGPT, by contrast, generated relatively more dataset-focused titles (5.7% vs. 2.7%), more methods-results combinations (3.3% vs. 0.3%), and one explicit results title, which was absent from the human corpus. This pattern suggests that the model tends to prioritise empirical detail and outcome specificity, likely influenced by its training on large-scale medical corpora, where methodological transparency and empirical reporting dominate. While this tendency can enhance informativeness and precision, it also introduces potential risks: by systematically amplifying dominant content patterns, ChatGPT may inadvertently reinforce homogenised rhetorical structures, limit alternative framing strategies, and contribute to a subtle narrowing of stylistic and epistemic diversity within the discipline.

The presence of methods-results combinations and explicit results-reporting titles in the ChatGPT corpus, albeit minimal (3.6% combined), is particularly noteworthy. Previous research indicates that such constructions are relatively uncommon but can effectively signal novelty or highlight key findings (Aronson 2009). ChatGPT’s occasional adoption of these forms raises questions about whether the model is capable of productive rhetorical expansion or whether these forms reflect overgeneralization from its training data, privileging empirically dense constructions at the expense of concise or interpretive title formats.

Overall, the content-focus analysis illustrates both the capabilities and limitations of ChatGPT as a scholarly writing tool. While ChatGPT demonstrates remarkable alignment with disciplinary norms, its outputs may contribute to the standardization of title content, privileging methodological transparency over conceptual framing, and potentially constraining reader engagement or interpretive flexibility. In EAP and pedagogical contexts, this highlights the need for advanced GAI literacy, which entails not only awareness of potential biases but also active intervention to ensure rhetorical diversity. Learners and authors should critically evaluate whether AI-generated titles maintain a balance between informational density, conceptual emphasis, and readability, selectively modifying content focus to preserve clarity, novelty, and disciplinary appropriateness.

Conclusion

This study compares human-authored RA titles in high-impact medical journals to those generated by ChatGPT across length, form, syntactic structure, and content focus. Overall, the results reveal a high degree of convergence between human authors and ChatGPT in the construction of titles. Median title length is broadly comparable, with no statistically significant differences between human- and ChatGPT-generated titles. Both sets of titles show a strong preference for multi-unit forms, within which nominal–nominal constructions dominate. In terms of information content, methods titles account for most titles in both corpora, although ChatGPT generates proportionally more results-focused titles than human authors. These similarities suggest that ChatGPT can reproduce established medical titling conventions with considerable accuracy, likely reflecting its exposure to large-scale medical corpora and internalised genre regularities.

However, this apparent alignment warrants critical reflection. Across all levels of analysis, ChatGPT shows a strong tendency to adhere to prevailing norms which, if uncritically adopted, may reinforce dominant conventions and contribute to homogenization of disciplinary discourse. Its consistent preference for multi-unit, nominal, and methods-focused titles highlights the risk of rhetorical stagnation, potentially limiting stylistic diversity and reducing opportunities for concision, creative phrasing, or alternative rhetorical strategies. Moreover, the relative emphasis on methodological details and explicit results, while informative, may foreground particular reporting practices embedded in the model’s training data.

From an EAP perspective, these findings underscore the need for more advanced forms of GAI literacy. Instructors and authors should not only maintain critical awareness of AI-generated texts but also actively evaluate whether such outputs align with communicative goals, audience expectations, and stylistic considerations. Pedagogical guidance should therefore include discussion of alternative titling strategies, the implications of rigid adherence to conventions, and the ways AI outputs may subtly shape disciplinary writing norms. By combining AI assistance with informed human judgment, writers can leverage ChatGPT’s capacity to model conventions while mitigating the risks of formulaic or homogenised output.

In sum, ChatGPT demonstrates a robust ability to mirror human practices in medical RA titles, yet this strength also presents a tension: close alignment with genre norms may inadvertently constrain rhetorical variation and reinforce entrenched patterns. Responsible use in both academic practice and pedagogy therefore requires careful human oversight, critical engagement, and explicit instruction in balancing conformity with innovation.

While this study offers important insights into the similarities and differences between human-authored and ChatGPT-generated medical RA titles, several limitations should be acknowledged. First, the analysis is restricted to titles from a single discipline, which may not capture variations across disciplines with different rhetorical and stylistic conventions. Titles in the humanities, for example, often privilege creativity and metaphor (e.g., Haggan 2004), whereas those in the sciences tend to emphasise precision and methodology (e.g., Busch-Lauer 2000). Future studies should expand the scope of analysis to encompass a broader disciplinary range to assess whether ChatGPT adapts equally well across knowledge domains.

Second, ChatGPT-generated titles were produced using article abstracts as input rather than full manuscripts and through a single, non-iterative prompting procedure. While abstracts encapsulate the core aims, methods, and findings of a study, they do not always reflect the full scope, nuance, or rhetorical positioning of the complete manuscript. Moreover, this one-shot prompting approach may not fully capture how researchers interact with GAI tools in authentic writing contexts, which often involve iterative prompting, revision, and human intervention. Future research could address this limitation by examining title generation based on full-text inputs and by exploring how different prompting strategies and revision cycles shape the rhetorical features of GAI-generated titles.

Third, the study focuses on a single GAI system (ChatGPT) and does not compare outputs across different models or versions. Given the rapid development of LLMs and the documented variability across systems and updates, the findings should be interpreted as model-specific rather than generalisable to all GAI tools or model iterations. Comparative studies examining multiple models or successive versions of the same system would offer a more comprehensive understanding of GAI performance in academic titling.

Fourth, the study did not incorporate blind human evaluations of title naturalness, clarity, or perceived appropriateness. While genre- and corpus-based methods allow for systematic comparisons of formal and rhetorical features, complementary perceptual evaluations by disciplinary experts could provide additional insights into how GAI-generated titles are perceived by human readers.

Finally, potential domain-specific biases inherent in the training data of LLMs cannot be ruled out. ChatGPT’s exposure to large volumes of biomedical and medical texts may influence its reproduction of dominant disciplinary conventions while marginalising less common or emerging titling practices. Such biases may shape the patterns observed in the generated titles and warrant further investigation across disciplines with differing writing conventions.

Data Availability

Data will be available upon reasonable request from the corresponding author.

References

Alnemrat A, Aldamen H, Almashour M, Al-Deaibes M, AlSharefeen R (2025) AI vs. teacher feedback on EFL argumentative writing: a quantitative study. Front Educ 10:2025. https://doi.org/10.3389/feduc.2025.1614673

Aronson J (2009) When I use a word … Declarative titles. QJM: Int J Med 103(3):207–209. https://doi.org/10.1093/qjmed/hcp084

Ball R (2009) Scholarly communication in transition: The use of question marks in the titles of scientific articles in medicine, life sciences and physics 1966–2005. Scientometrics 79(3):667–679. https://doi.org/10.1007/s11192-007-1984-5

Brett DF (2025) Titles in archaeology research articles: A corpus-based comparison with other disciplines. J Engl Academic Purp 77:1–11. https://doi.org/10.1016/j.jeap.2025.101545

Busch-Lauer I-A (2000) Titles of English and German research papers in medicine and linguistics theses and research articles. In A. Trosborg (Ed.), Analysing Professional Genres (pp. 77–94). John Benjamins B.V

Chang Y, Wang X, Wang J, Wu Y, Yang L, Zhu K, Chen H, Yi X, Wang C, Wang Y, Ye W, Zhang Y, Chang Y, Yu PS, Yang Q, Xie X (2024) A survey on evaluation of large language models. ACM Trans Intell Syst Technol 15(3):39. https://doi.org/10.1145/3641289

Cheng SW, Kuo C-W, Kuo C-H (2012) Research article titles in applied linguistics. J Academic Lang Learn 6(1):A1–A14

Cleland J (2025) ‘What’s in a name?’ Writing an effective and engaging article title. Med Teach 47(6):909–910. https://doi.org/10.1080/0142159X.2025.2488697

Cotton DRE, Cotton PA, Shipway JR (2024) Chatting and cheating: Ensuring academic integrity in the era of ChatGPT. Innov Educ Teach Int 61(2):228–239. https://doi.org/10.1080/14703297.2023.2190148

Demir D (2023) Syntactic structures of Turkish research article titles in medicine and engineering. Afr Educ Res J 11(3):403–412

Diao J (2021) A lexical and syntactic study of research article titles in Library Science and Scientometrics. Scientometrics. https://doi.org/10.1007/s11192-021-04018-6

Dillon JT (1981) The emergence of the colon: An empirical correlate of scholarship. Am Psychologist 36(8):879–884. https://doi.org/10.1037/0003-066X.36.8.879

Ebadi S, Nejadghanbar H, Salman AR, Khosravi H (2025) Exploring the impact of generative AI on peer review: Insights from journal reviewers. J Academic Ethics 23(3):1383–1397. https://doi.org/10.1007/s10805-025-09604-4

Gao CA, Howard FM, Markov NS, Dyer EC, Ramesh S, Luo Y, Pearson AT (2023) Comparing scientific abstracts generated by ChatGPT to real abstracts with detectors and blinded human reviewers. npj Digital Med 6(1):75. https://doi.org/10.1038/s41746-023-00819-6

Gesuato S (2008) Encoding of information in titles: Academic practices across four genres in linguistics. In C. Taylor (Ed.), Ecolingua: The Role of E-corpora in Translation and Language Learning (pp. 127–157). EUT - Edizioni Università di Trieste

Gilat R, Cole BJ (2023) How will artificial intelligence affect scientific writing, reviewing and editing? The future is here …. Arthroscopy 39(5):1119–1120. https://doi.org/10.1016/j.arthro.2023.01.014

Goodman NW (2000) Survey of active verbs in the titles of clinical trial reports. BMJ 320:914–915. https://doi.org/10.1136/bmj.320.7239.914

Goodman NW (2010) Novel tools constitute a paradigm: How title words in medical journal articles have changed since 1970. Write Stuff 19(4):269–271

Goodman RA, Thacker SB, Siegel PZ (2001) What’s in a title? A descriptive study of article titles in peer-reviewed medical journals. Sci Editor 24(3):75–78

Habibzadeh F, Yadollahie M (2010) Are shorter article titles more attractive for citations? Cross-sectional study of 22 scientific journals. Croatian Med J (CMJ) 51(2):165–170. https://doi.org/10.3325/cmj.2010.51.165

Haggan M (2004) Research paper titles in literature, linguistics and science: dimensions of attraction. J Pragmat 36(2):293–317. https://doi.org/10.1016/s0378-2166(03)00090-0

Hai HN (2023) ChatGPT: The evolution of natural language processing. Authorea Preprints

Hao J (2024) Titles in research articles and doctoral dissertations: Cross-disciplinary and cross-generic perspectives. Scientometrics 129(4):2285–2307. https://doi.org/10.1007/s11192-024-04941-4

Hartley J (2005) To attract or to inform: What are titles for? J Tech Writ Commun 35(2):203–213

Heßler N, Ziegler A (2022) Evidence-based recommendations for increasing the citation frequency of original articles. Scientometrics 127(6):3367–3381. https://doi.org/10.1007/s11192-022-04378-7

Heßler N, Ziegler A (2023) Content and form of original research articles in general major medical journals. PLoS One 18(6):e0287677. https://doi.org/10.1371/journal.pone.0287677

Hosseini M, Horbach SPJM (2023) Fighting reviewer fatigue or amplifying bias? Considerations and recommendations for use of ChatGPT and other large language models in scholarly peer review. Res Integr Peer Rev 8(1):4. https://doi.org/10.1186/s41073-023-00133-5

Howard A, Hope W, Gerada A (2023) ChatGPT and antimicrobial advice: the end of the consulting infection doctor? Lancet Infect Dis 23(4):405–406. https://doi.org/10.1016/S1473-3099(23)00113-5

Huang J, Tan M (2023) The role of ChatGPT in scientific communication: Writing better scientific review articles. Am J Cancer Res 13(4):1148–1154

Huang L, Deng J (2025) “This dissertation intricately explores…”: ChatGPT’s shell noun use in rephrasing dissertation abstracts. System 129. https://doi.org/10.1016/j.system.2024.103578

Hyland K, Zou H (2022) Titles in research articles. J Engl Academic Purp 56:1–13. https://doi.org/10.1016/j.jeap.2022.101094

Ingley SJ, Pack A (2023) Leveraging AI tools to develop the writer rather than the writing. Trends Ecol Evolution 38(9):785–787. https://doi.org/10.1016/j.tree.2023.05.007

Jalilifar A (2010) Writing titles in applied linguistics: A comparative study of theses and research articles. Taiwan Int ESP J 2(1):29–54

Jiang FK, Hyland K (2023) Titles in research articles: Changes across time and discipline. Learned Publ 36(2):239–248. https://doi.org/10.1002/leap.1498

Jiang F, Hyland K (2025a) Does ChatGPT write like a student? engagement markers in argumentative essays. Writ Commun 42(3):463–492. https://doi.org/10.1177/07410883251328311

Jiang F, Hyland K (2025b) Metadiscursive nouns in academic argument: ChatGPT vs student practices. J Engl Academic Purp 75:101514. https://doi.org/10.1016/j.jeap.2025.101514

Jiang F, Hyland K (2025c) Rhetorical distinctions: Comparing metadiscourse in essays by ChatGPT and students. Engl Specif Purp 79:17–29. https://doi.org/10.1016/j.esp.2025.03.001

Jiang GK, Jiang Y (2023) More diversity, more complexity, but more flexibility: research article titles in TESOL Quarterly, 1967–2022. Scientometrics 128(7):3959–3980. https://doi.org/10.1007/s11192-023-04738-x

Jiao W, Wang W, Huang J-t, Wang X, Shi S, Tu Z (2023) Is ChatGPT a good translator? Yes with GPT-4 as the engine. arXiv preprint arXiv:2301.08745

Kaebnick GE, Magnus DC, Kao A, Hosseini M, Resnik D, Dubljević V, Rentmeester C, Gordijn B (2023) Editors’ statement on the responsible use of generative artificial intelligence technologies in scholarly journal publishing. Bioethics 37(9):825–828. https://doi.org/10.1111/bioe.13220

Karakose T (2023) The utility of ChatGPT in educational research—Potential opportunities and pitfalls. Educ Process: Int J 12(2):7–13

Kerans ME, Marshall J, Murray A, Sabaté S (2020) Research article title content and form in high-ranked international clinical medicine journals. Engl Specif Purp 60:127–139. https://doi.org/10.1016/j.esp.2020.06.001

Lehmann PS (2022) What’s in a name? On journal article titles. ACJS Today 50(5):8–11

Liang W, Zhang Y, Cao H, Wang B, Ding DY, Yang X, Vodrahalli K, He S, Smith DS, Yin Y, McFarland DA, Zou J (2024) Can large language models provide useful feedback on research papers? A large-scale empirical analysis. NEJM AI 1(8). https://doi.org/10.1056/AIoa2400196

Lozić E, Štular B (2023) Fluent but not factual: A comparative analysis of ChatGPT and other AI chatbots’ proficiency and originality in scientific writing for humanities. Future Internet 15(10):336

Lund BD, Wang T, Mannuru NR, Nie B, Shimray S, Wang Z (2023) ChatGPT and a new academic reality: Artificial intelligence-written research papers and the ethics of the large language models in scholarly publishing. J Assoc Inf Sci Technol 74(5):570–581. https://doi.org/10.1002/asi.24750

Madden ON, Gordon S, Chambers R, Daley J-L, Foster D, Ewan M (2025) Teachers’ practices and perceptions of technology and ChatGPT in foreign language teaching in Jamaica. Int J Lang Instr 4(3):1–32. https://doi.org/10.54855/ijli.25421

Marrella D, Jiang S, Ipaktchi K, Liverneaux P (2025) Comparing AI-generated and human peer reviews: A study on 11 articles. Hand Surg Rehab 44(4). https://doi.org/10.1016/j.hansur.2025.102225

Martín P, León-Pérez I (2024) The evolution of sub-disciplinary linguistic trends: A diachronic study of biomedical research article titles. J Engl Res Publ Purp 5(1-2):60–82

Matsubara S (2024) Crafting informative titles in medical articles to enhance the comprehension of the study findings. JMA J 7(3):410–414. https://doi.org/10.31662/jmaj.2024-0023

Meniado JC, Huyen DTT, Panyadilokpong N, Lertkomolwit P (2024) Using ChatGPT for second language writing: Experiences and perceptions of EFL learners in Thailand and Vietnam. Computers and Education: Art Intell 7. https://doi.org/10.1016/j.caeai.2024.100313

Mizumoto A, Eguchi M (2023) Exploring the potential of using an AI language model for automated essay scoring. Res Methods Appl Linguist 2(2). https://doi.org/10.1016/j.rmal.2023.100050

Morales OA, Perdomo B, Cassany D, Tovar RM, Izarra É (2020) Linguistic structures and functions of thesis and dissertation titles in Dentistry. Lebende Sprache 65(1):49–73. https://doi.org/10.1515/les-2020-0003

Nguyen A, Hong Y, Dang B, Huang X (2024) Human-AI collaboration patterns in AI-assisted academic writing. Stud High Educ 49(5):847–864. https://doi.org/10.1080/03075079.2024.2323593

Nguyen TTH (2023) EFL teachers’ perspectives toward the use of ChatGPT in writing classes: A case study at Van Lang University. Int J Lang Instr 2(3):1–47. https://doi.org/10.54855/ijli.23231

Nowacki L, Wrochna AE (2025) ChatGPT theses. Identifying distinctive markers in AI-generated versus human-created texts: A multimodal analysis in university education. E-Learn Digit Med 0(0). https://doi.org/10.1177/20427530251331083

Nurseha I (2023) EFL teachers’ perspectives on how AI writing tools affect the structure and substance of students’ writing. JEET, J Engl Educ Technol 4(3):189–211. https://doi.org/10.59689/jeet.v4i03.127

Orbay K, Fernando AM, Orbay M (2025) Short, but how short? Analysis of educational research titles. SAGE Open 15(1):1–18. https://doi.org/10.1177/21582440251320538

Ou AW, Khuder B, Franzetti S, Negretti R (2024) Conceptualising and cultivating critical GAI literacy in doctoral academic writing. J Second Lang Writing 66. https://doi.org/10.1016/j.jslw.2024.101156

Özçelik NP (2023) A comparative analysis of proofreading capabilities: Language experts vs. ChatGPT. Int Stud Educ Sci 147:147–161

Paiva CE, Lima JPDSN, Paiva BSR (2012) Articles with short titles describing the results are cited more often. Clinics 67(5):509–513. https://doi.org/10.6061/clinics/2012(05)17

Perdomo B, Morales OA (2024) Titles of architecture research articles in English and Spanish: Cross-language genre analysis for disciplinary writing. 3L: Lang, Linguist, Lit 30(4):344–360. https://doi.org/10.17576/3L-2024-3004-23

Perry JA (1985) The Dillon hypothesis of titular colonicity: An empirical test from the ecological sciences. J Am Soc Inf Sci 36(4):251–258. https://doi.org/10.1002/asi.4630360405

Pham VPH, Le AQ (2024) ChatGPT in language learning: Perspectives from Vietnamese students in Vietnam and the USA. Int J Lang Instr 3(2):59–72. https://doi.org/10.54855/ijli.24325

Ray PP (2023) ChatGPT: A comprehensive review on background, applications, key challenges, bias, ethics, limitations and future scope. Internet Things Cyber-Phys Syst 3:121–154. https://doi.org/10.1016/j.iotcps.2023.04.003

Salager-Meyer F, Lewin BA, Briceño ML (2017) Neutral, risky or provocative? Trends in titling practices in complementary and alternative medicine articles (1995–2016). Rev de Leng para Fines Específicos (LFE) 23(2):263–289. https://doi.org/10.20420/rlfe.2017.182

Schulz R, Barnett A, Bernard R, Brown NJL, Byrne JA, Eckmann P, Gazda MA, Kilicoglu H, Prager EM, Salholz-Hillel M, ter Riet G, Vines T, Vorland CJ, Zhuang H, Bandrowski A, Weissgerber TL (2022) Is the future of peer review automated? BMC Res Notes 15(1):203. https://doi.org/10.1186/s13104-022-06080-6

Siegel PZ, Thacker SB, Goodman RA, Gillespie C (2006) Titles of articles in peer-reviewed journals lack information on study design: A structured review of contributions to four leading medical journals, 1995 and 2001. Sci Editor 29(6):183–185

Soler V (2007) Writing titles in science: An exploratory study. Engl Specif Purp 26(1):90–102. https://doi.org/10.1016/j.esp.2006.08.001

Soler V (2011) Comparative and contrastive observations on scientific titles written in English and Spanish. Engl Specif Purp 30(2):124–137. https://doi.org/10.1016/j.esp.2010.09.002

Swales JM (1990) Genre analysis: English in academic and research settings. Cambridge University Press

Tsai C-Y, Lin Y-T, Brown IK (2024) Impacts of ChatGPT-assisted writing for EFL English majors: Feasibility and challenges. Educ Info Technol 29(17). https://doi.org/10.1007/s10639-024-12722-y

Wang Z, Jabar MAA, Jalis FMM (2024) Cross-disciplinary analysis of the syntactic and lexical features of Chinese master thesis titles. Theory Pr Lang Stud 14(9):2760–2772

Xiang X, Li J (2020) A diachronic comparative study of research article titles in linguistics and literature journals. Scientometrics 122(2):847–866. https://doi.org/10.1007/s11192-019-03329-z

Xiao Y, Zhi Y (2023) An exploratory study of EFL learners’ use of ChatGPT for language learning tasks: Experience and perceptions. Languages 8(3). https://doi.org/10.3390/languages8030212

Yan D (2023) Impact of ChatGPT on learners in a L2 writing practicum: An exploratory investigation. Educ Inf Technol 28(11):13943–13967. https://doi.org/10.1007/s10639-023-11742-4

Zhang M, Zhang J (2025) Disciplinary variation of metadiscourse: A comparison of human-written and ChatGPT-generated English research article abstracts. J Engl Academic Purp 76. https://doi.org/10.1016/j.jeap.2025.101540

Zou M, Kong D, Lee I (2025) Doctoral student’s strategy use in GAI chatbot-assisted L2 writing: An activity theory perspective. J Engl Academic Purp 76. https://doi.org/10.1016/j.jeap.2025.101521

Funding

Open access funding provided by The Science, Technology & Innovation Funding Authority (STDF) in cooperation with The Egyptian Knowledge Bank (EKB).

Author information

Contributions

The two authors contributed equally to this work. SKMI: Conceptualisation, Methodology, Writing - original draft. ZAZM: Resources, Formal Analysis, Writing - review & editing. All authors were involved in collecting, coding, and interpreting the data for this article.

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethical approval

This article does not contain any studies with human participants performed by any of the authors.

Informed consent

This article does not contain any studies with human participants performed by any of the authors.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Ibrahim, S.K.M., Mahmoud, Z.A.Z. Generative AI in academic writing: a comparison of human-authored and ChatGPT-generated research article titles. Humanit Soc Sci Commun 13, 394 (2026). https://doi.org/10.1057/s41599-026-06956-z

DOI: https://doi.org/10.1057/s41599-026-06956-z