Abstract

Prostate cancer is a leading cause of male cancer mortality, and early, accurate diagnosis is critical. Artificial intelligence (AI), including machine learning, deep learning, and radiomics, enhances detection, characterization, and treatment assessment across TRUS, mp-MRI, and PSMA PET/CT. AI models achieve high accuracy, often matching experts, improving small-lesion detection, and supporting risk stratification. Challenges remain in data quality, generalization, clinical integration, and ethics, with future prospects in multi-omics, explainable AI, and workflow-embedded decision support.


Introduction

Prostate cancer is a major threat to the health of elderly men. According to GLOBOCAN 2022 data, there are over 1.4 million new prostate cancer (PCa) cases and approximately 370,000 deaths worldwide each year. The incidence rate is significantly higher in developed countries than in developing countries, and the age at diagnosis is trending younger1,2. Recent epidemiologic studies further clarify this age shift. Early-onset prostate cancer, commonly defined as diagnosis at ≤55 years of age, is now recognized as a distinct clinical entity and accounts for a meaningful proportion of prostate cancer cases in Western populations1,2. Global analyses of adolescents and young adults (15–40 years) also show that, since 1990, prostate cancer incidence has risen steadily across all young age strata, by approximately 2% per year on average1,2. Consistent with these observations, population-based cancer registry data from Jiangsu Province in China demonstrate that the age-standardized incidence has increased most rapidly among men aged 0–59 years, accompanied by a decrease in the age-standardized mean age at diagnosis and an increasing proportion of cases in younger age groups1,2. The clinical outcome of PCa is closely related to the timing of diagnosis1,2. The 5-year survival rate of patients with early localized PCa can reach 99%, while that of patients with metastatic PCa drops sharply to less than 30%. Early, accurate detection and risk stratification of PCa are therefore essential for formulating individualized treatment plans and reducing mortality3,4.

Diagnostic imaging is central to the clinical management of PCa, and each modality has its own strengths and weaknesses. Transrectal ultrasound (TRUS) is a commonly used screening method because it is real-time and inexpensive, but it cannot identify small lesions and depends on the operator’s experience. Multiparametric MRI (mp-MRI) has high soft-tissue resolution and clearly displays the prostate capsule, seminal vesicles, and surrounding blood vessels, making it the “gold standard” for diagnosing clinically significant prostate cancer (csPCa); however, image reading is time-consuming, and agreement among radiologists from different centers is low (Kappa value: 0.4–0.6)5,6. Positron emission tomography/computed tomography (PET/CT) can accurately detect PCa metastatic lesions using tracers that target prostate-specific membrane antigen (PSMA), but it cannot identify small lesions ≤5 mm, and its high equipment cost makes it difficult to deploy in primary hospitals.
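The Kappa value quoted above measures agreement between readers beyond what chance alone would produce. As a minimal sketch of how such a value is computed for two readers, assuming binary per-case reads (the function name and the ten example reads are hypothetical and not taken from the cited studies):

```python
from collections import Counter


def cohen_kappa(reader_a, reader_b):
    """Cohen's kappa for two readers rating the same cases."""
    if len(reader_a) != len(reader_b) or not reader_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(reader_a)
    # Observed agreement: fraction of cases where both readers give the same label.
    p_observed = sum(a == b for a, b in zip(reader_a, reader_b)) / n
    # Chance agreement: product of the two readers' marginal label frequencies.
    freq_a, freq_b = Counter(reader_a), Counter(reader_b)
    p_chance = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if p_chance == 1.0:
        return 1.0  # both readers used one identical label for every case
    return (p_observed - p_chance) / (1 - p_chance)


# Hypothetical reads on ten cases (1 = suspicious for csPCa, 0 = not suspicious).
reader_a = [1, 1, 0, 0, 1, 0, 1, 0, 0, 1]
reader_b = [1, 0, 0, 0, 1, 1, 1, 0, 1, 1]
kappa = cohen_kappa(reader_a, reader_b)
print(f"kappa = {kappa:.2f}")
```

In this toy example the readers agree on 7 of 10 cases, but half of that agreement is expected by chance, so kappa is about 0.4, at the lower end of the range reported for mp-MRI reading.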

With the rapid evolution of artificial intelligence (AI), particularly machine learning and deep learning, there is growing evidence that AI can enhance every step of imaging-based diagnosis of prostate cancer7,8. In ultrasound and elastography, AI-driven detection and radiomics models improve lesion conspicuity, reduce operator dependence, and assist decision-making in patients with PSA levels in the gray zone. In multiparametric MRI (mp-MRI), AI algorithms enable fully automatic prostate and zonal segmentation, standardized assessment of clinically significant prostate cancer (csPCa), and accurate risk stratification, in some multi-center studies achieving performance that is non-inferior or complementary to expert radiologists while reducing reading time and inter-reader variability. For PSMA-based PET/CT, AI models can automatically segment metabolically active lesions, quantify whole-body tumor burden, and support earlier and more objective assessment of treatment response. Against this background, the present review systematically summarizes the current applications and clinical impact of AI across major diagnostic imaging modalities in PCa, analyzes key technical and translational challenges, and outlines future directions for integrating robust, explainable AI systems into routine prostate cancer care.

From a technical perspective, current AI systems are designed to work on top of conventional imaging modalities rather than replace them. In routine practice, ultrasound, mp-MRI, and PSMA positron emission tomography/computed tomography (PSMA PET/CT) are acquired according to standard clinical protocols, and the resulting DICOM images are fed into AI pipelines that follow three main steps. First, images are pre-processed, and the prostate and surrounding structures are segmented either manually, semi-automatically, or by deep-learning–based contouring. Second, quantitative information is extracted from these images: traditional radiomics approaches compute hundreds of handcrafted intensity, texture, and shape features, whereas deep learning models learn hierarchical image representations directly from the pixel data. Third, these imaging-derived features are combined with established diagnostic information—such as PI-RADS assessment, PSA level, or PSMA uptake—and used to train supervised machine-learning or deep-learning models against reference standards (biopsy or prostatectomy histopathology). At inference time, the trained model processes new MRI/ultrasound/PET-CT studies in parallel with conventional reading, automatically highlighting suspicious regions, generating malignancy probabilities or risk scores, and suggesting biopsy targets or treatment-response categories. In this way, AI augments traditional MRI, ultrasound, and PET/CT by providing quantitative, reproducible, and workflow-integrated decision support that can be interpreted alongside existing imaging criteria and clinical judgment.
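To make the second step more concrete, the sketch below computes three simple first-order intensity features (mean, variance, and histogram entropy) inside a segmented region. It is only an illustration under stated assumptions: images and masks are plain nested lists, the function name and the 8-bin discretisation are hypothetical choices, and real radiomics pipelines compute many more texture and shape features.

```python
import math
from collections import Counter


def first_order_features(image, mask, n_bins=8):
    """Simple intensity features over the pixels where mask is non-zero."""
    values = [px for img_row, mask_row in zip(image, mask)
              for px, m in zip(img_row, mask_row) if m]
    if not values:
        raise ValueError("mask selects no pixels")
    n = len(values)
    mean = sum(values) / n
    variance = sum((v - mean) ** 2 for v in values) / n
    lo, hi = min(values), max(values)
    # Discretise intensities into equal-width bins before computing entropy;
    # a constant region falls back to a bin width of 1.0.
    width = (hi - lo) / n_bins or 1.0
    bins = Counter(min(int((v - lo) / width), n_bins - 1) for v in values)
    entropy = -sum((c / n) * math.log2(c / n) for c in bins.values())
    return {"mean": mean, "variance": variance, "entropy": entropy}


# Toy 3x3 "image" with a four-pixel region of interest (values are made up).
image = [[10, 10, 99],
         [30, 30, 99],
         [99, 99, 99]]
mask = [[1, 1, 0],
        [1, 1, 0],
        [0, 0, 0]]
features = first_order_features(image, mask)
print(features)
```

Feature vectors of this kind, one per lesion, are what the third step combines with clinical variables to train a classifier.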

In contrast to most existing reviews, which typically focus on a single imaging modality or emphasize algorithmic performance metrics in isolation, the present work is designed to provide a multimodal and system-level perspective on AI in prostate cancer imaging. First, we integrate evidence across all major diagnostic imaging techniques—ultrasound (including gray-scale, elastography, and multimodal TRUS), mp-MRI, PSMA-based PET/CT, and emerging image-fusion approaches—along the entire clinical pathway from initial screening and biopsy guidance to metastatic staging and treatment-response evaluation. Second, we synthesize methodological and translational limitations within a structured “data–model–clinic” framework and, informed by recent clinical trial data, discuss scenario-specific deployment strategies for outpatient screening, inpatient diagnosis, and post-treatment surveillance. Third, we devote dedicated sections to ethical, regulatory, and medico-legal considerations, including data governance, algorithmic bias, risk-based regulation, and allocation of responsibility among stakeholders, which are often only briefly mentioned or omitted in prior overviews. By explicitly combining multimodal technical advances with clinical workflow integration and governance perspectives, this review aims to offer a distinctive and practical roadmap for the safe and trustworthy implementation of AI-assisted diagnostic imaging in prostate cancer.

Application of artificial intelligence in mainstream diagnostic imaging modalities for prostate cancer

Ultrasound imaging

Ultrasound imaging is the most commonly used screening tool for PCa diagnosis. However, PCa lesions in gray-scale ultrasound images often appear as hypoechoic areas, which overlap heavily with the imaging features of benign prostatic hyperplasia (BPH) and prostatitis, resulting in a specificity of only 55–65% for traditional ultrasound diagnosis. Fig. 1 illustrates a typical workflow of ultrasound-based radiomics analysis for prostate cancer, including lesion segmentation, extraction of multiple texture features, feature selection, and subsequent statistical or machine-learning modeling. Similar pipelines have been implemented in clinical TRUS studies. Han et al. developed a computer-aided diagnosis system that combined multiresolution autocorrelation texture features with clinical descriptors (tumor location and shape) and used a support vector machine classifier, achieving approximately 92–96% sensitivity and 90–95% specificity for detecting cancerous tissue on TRUS images7,8. Huang et al. later proposed a texture feature-based classification method on 342 transrectal ultrasound images using optical-density conversion, local binarization, and Gaussian Markov random fields to derive fused texture features, and their SVM model reached an accuracy of 70.9%, sensitivity of 70.0%, and specificity of 71.7% for differentiating malignant from non-malignant prostate tissue7,8. The steps in Fig. 1 summarize the key stages shared by these ultrasound radiomics approaches. Through feature learning and pattern recognition on ultrasound images, AI technology has improved lesion detection and qualitative diagnosis, with two main application directions:

a Image segmentation. b Feature extraction and selection. c SPSS analysis.

Deep learning-based automatic lesion detection

To address the limitations of conventional ultrasound lesion detection, in which manual selection of the region of interest (ROI) is time-consuming and prone to missed lesions, convolutional neural network (CNN)-based object-detection algorithms using multi-scale feature fusion can automatically localize the prostate gland and generate real-time annotations of suspicious lesion areas9,10. Wang constructed a YOLOv5s model based on TRUS images and incorporated an attention mechanism to improve feature extraction and recognition of hypoechoic PCa lesions. The detection sensitivity for PCa lesions was 92.3%, with only 3.1 false positives per case, and the detection time for a single image was only 0.8 s, much shorter than that of two intermediate-level radiologists. Wang et al. developed a radiomics-based prediction model using whole-gland TRUS video clips; 851 texture features were extracted and reduced by LASSO, and support vector machine and random forest classifiers achieved AUCs of approximately 0.75–0.78 for distinguishing PCa from benign lesions in the validation and test cohorts, performing slightly better than MRI-based assessment by senior radiologists9,10. Sun et al. subsequently proposed a multi-institutional three-dimensional convolutional neural network (3D P-Net) trained on standardized grayscale TRUS videos of the entire prostate; the model achieved an AUC up to 0.90 in the training cohort and maintained high diagnostic accuracy in independent internal and external validation cohorts, while reducing unnecessary biopsies compared with conventional TRUS scoring systems9,10. In addition, Zhang et al. constructed several machine-learning models that integrated multimodal transrectal ultrasound parameters with PSA-related indicators; their best-performing artificial neural network yielded an AUC of 0.855 with 80% sensitivity and 88.6% specificity for predicting clinically significant PCa, underscoring the value of combining ultrasound with clinical variables9,10. Collectively, these clinical studies demonstrate that AI-based lesion detection and risk prediction using TRUS or multimodal ultrasound can outperform or complement conventional imaging assessment and provide real-time decision support in prostate cancer screening and biopsy workflows. Data augmentation was also used to counter the poor model robustness caused by artifacts in ultrasound images; detection sensitivity remained at 89.8% in the external validation set, providing a reliable auxiliary detection tool for ultrasound physicians in primary hospitals.

Radiomics-based benign-malignant differentiation

In addition to lesion detection, AI can extract quantitative features from ultrasound images through radiomics and combine them with machine learning algorithms to build benign-malignant differentiation models. Li analyzed ultrasound images of 586 patients undergoing TRUS-guided biopsy, extracted 126 radiomic features, retained 12 important features after LASSO feature selection, and constructed a random forest model11,12. The area under the receiver operating characteristic curve (AUROC) of this model for differentiating PCa from BPH reached 0.89, with a sensitivity of 87.1% and a specificity of 83.3%, significantly better than conventional ultrasound (0.72) and serum PSA testing (0.68). Further subgroup analysis showed that the AUROC of the model remained 0.83 in patients in the PSA gray zone (4–10 ng/mL)13,14. This addresses a clinical need: the misdiagnosis rate of traditional methods for patients in the PSA gray zone is as high as 40%, whereas that of the AI model is below 15%.
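Because sensitivity, specificity, and AUROC recur throughout this review, a short sketch of how they are derived from model scores may help. It assumes binary reference labels (1 = PCa, 0 = benign) and a score per patient; the function names, the 0.5 threshold, and the eight example scores are hypothetical and unrelated to the cited study.

```python
def sensitivity_specificity(labels, scores, threshold):
    """Sensitivity and specificity when scores >= threshold are called positive."""
    tp = sum(1 for y, s in zip(labels, scores) if y == 1 and s >= threshold)
    fn = sum(1 for y, s in zip(labels, scores) if y == 1 and s < threshold)
    tn = sum(1 for y, s in zip(labels, scores) if y == 0 and s < threshold)
    fp = sum(1 for y, s in zip(labels, scores) if y == 0 and s >= threshold)
    return tp / (tp + fn), tn / (tn + fp)


def auroc(labels, scores):
    """AUROC as the probability that a random positive case outscores a
    random negative case, with ties counted as one half."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > q) + 0.5 * (p == q) for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical malignancy scores for four PCa and four benign cases.
labels = [1, 1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.6, 0.4, 0.7, 0.3, 0.2, 0.1]
sens, spec = sensitivity_specificity(labels, scores, threshold=0.5)
auc = auroc(labels, scores)
print(f"sensitivity={sens:.2f} specificity={spec:.2f} AUROC={auc:.3f}")
```

Sensitivity and specificity depend on the chosen threshold, whereas AUROC summarises ranking quality across all thresholds, which is why studies usually report all three.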

Elastography is an extension of ultrasound that assists diagnosis by measuring tissue stiffness, and AI has further improved its diagnostic accuracy. In 2021, Zhang et al. established a CNN model using Shear Wave Elastography (SWE) images that integrated stiffness values and texture features and achieved an AUROC of 0.91 for distinguishing PCa from benign lesions15,16. The detection rate of high-risk PCa with a Gleason score ≥8 was 94.5%, providing a new quantitative index for PCa risk stratification.

Magnetic resonance imaging

Multi-parameter MRI (mp-MRI) is the “gold standard” for PCa diagnosis, usually including T2-weighted imaging (T2WI), Diffusion-Weighted Imaging (DWI), Dynamic Contrast-Enhanced MRI (DCE-MRI), and Diffusion Tensor Imaging (DTI) sequences. It can reflect the characteristics of prostate tissue from multiple dimensions, such as anatomical structure, water molecule diffusion, and vascular perfusion. However, mp-MRI image reading requires integrating multi-sequence information, which has high requirements for the professional level of physicians, and the diagnostic consistency among different centers is low (especially for small lesions ≤1 cm)17,18. The application of AI technology in mp-MRI has developed from single-sequence analysis to multi-sequence fusion models, with the core goals of realizing automatic prostate segmentation, accurate csPCa identification, and clinical parameter prediction. The specific applications are as follows:

Automatic segmentation of prostate and its zones

The prostate is divided into the Peripheral Zone (PZ), Transition Zone (TZ), and Central Zone (CZ). Of all PCa, 70% originates in the PZ and 30% in the TZ. However, TZ lesions are more likely to be missed because their appearance overlaps heavily with that of BPH. Traditional manual segmentation requires surgeons to outline MRI images slice by slice, which takes about 20 min per case, and segmentation consistency is low (Kappa value of only 0.5–0.7)19,20. Deep learning-based segmentation algorithms achieve pixel-level semantic segmentation through an encoder-decoder architecture, significantly improving the efficiency and accuracy of segmentation, as shown in Fig. 2.

The first column is the prostate image, the second column is the ground truth of the prostate, the third column is the segmentation result of MPU-Net, the fourth and fifth columns are the PZ and TZ segmented by MPU-Net, respectively. The four rows of images represent four different cases. The first two rows show MRI modality, and the last two rows show ADC modality. Both modalities are used in the actual segmentation process. MPU-Net Morphology-Preserving U-Net, PZ Peripheral Zone, TZ Transition Zone, MRI Magnetic Resonance Imaging, ADC Apparent Diffusion Coefficient.

Chen constructed a U-Net++ model using 1000 mp-MRI cases, with residual connections to avoid vanishing gradients in deep networks and a Dice loss function to optimize segmentation boundaries. The Dice Similarity Coefficient (DSC) of this model for the entire prostate, PZ, and TZ was significantly higher than that of the classic CNN model, and the segmentation time for a single case was only 1.2 min21,22. In addition, the model remained robust across MRI datasets acquired at different field strengths, mitigating the loss of applicability caused by image differences between devices. In 2023, Zhao et al. added an attention gating module to this model; by improving feature extraction at TZ boundaries, the segmentation DSC of the TZ reached 0.90. AI-based TZ segmentation is the basis for accurate segmentation of TZ lesions: clinical reports show that radiomic analysis of the AI-segmented TZ increases the detection rate of TZ PCa by 20–30%.
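For reference, the DSC between a predicted mask A and a reference mask B is 2|A∩B| / (|A| + |B|), ranging from 0 (no overlap) to 1 (identical masks). A minimal sketch, assuming binary masks stored as nested lists; the function name and the toy masks are illustrative and not from the cited studies:

```python
def dice_coefficient(pred, truth):
    """Dice Similarity Coefficient between two binary 2D masks."""
    pred_flat = [bool(v) for row in pred for v in row]
    truth_flat = [bool(v) for row in truth for v in row]
    if len(pred_flat) != len(truth_flat):
        raise ValueError("masks must have the same number of pixels")
    intersection = sum(p and t for p, t in zip(pred_flat, truth_flat))
    total = sum(pred_flat) + sum(truth_flat)
    # Two empty masks are treated as a perfect match by convention.
    return 1.0 if total == 0 else 2 * intersection / total


# Toy masks: the prediction covers 4 pixels, the reference 3, and 3 overlap.
pred = [[0, 1, 1],
        [0, 1, 1],
        [0, 0, 0]]
truth = [[0, 1, 1],
         [0, 1, 0],
         [0, 0, 0]]
dsc = dice_coefficient(pred, truth)
print(f"DSC = {dsc:.3f}")
```

A Dice loss for training is typically 1 minus a differentiable version of this quantity computed on predicted probabilities rather than hard masks.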

Accurate identification and risk stratification of csPCa

Clinically, csPCa is mainly treated with intervention, while indolent PCa is mainly under active surveillance. Accurate identification of csPCa is the key to avoiding over-treatment and missed diagnosis23,24. AI models can improve the diagnostic performance of csPCa by integrating multi-sequence features of mp-MRI, which are mainly divided into CNN-based end-to-end identification models and radiomics-based prediction models.

In terms of end-to-end models, Liu et al. proposed a Transformer-based multi-sequence fusion model (ViT-MRI). The T2WI, DWI, and DCE-MRI sequences were input separately into the Vision Transformer (ViT) encoder, a cross-attention mechanism fused the multi-modal features, and the model output a probability map of csPCa25. A total of 1500 patients were included in this study. In the test set, the model identified csPCa with an AUROC of 0.94, a sensitivity of 91.2%, and a specificity of 88.5%, significantly better than three senior radiologists (AUROC: 0.85–0.88). Further analysis found that the model detected 87.3% of small csPCa ≤1 cm, whereas the average detection rate of physicians was 72.1%, indicating that in this study the AI outperformed human readers in detecting small lesions, as shown in Fig. 3.

The figure shows the performance of the ViT-MRI model compared with three senior radiologists in detecting clinically significant prostate cancer (csPCa)37. Panels A–F display example prediction results and corresponding ADC maps. The yellow lines indicate the AI-predicted lesion boundaries, which are highly consistent with the manual annotations (A–F) performed by experienced genitourinary radiologists based on pathological results.

Tumor activity evaluation based on dynamic MRI

DCE-MRI measures changes in tissue blood perfusion by dynamically injecting contrast agents, reflecting the state of tumor angiogenesis. Traditional DCE requires manual selection of ROI and complex calculation of quantitative parameters. AI technology can automatically extract the DCE time-intensity curve (TIC) for quantitative analysis, thereby making an objective evaluation of tumor activity26,27.

Huang et al. established a long short-term memory (LSTM) model based on 600 cases of DCE-MRI data, using the learned dynamic features of the TIC to automatically classify TIC types and predict Ktrans and kep values. The model classified TIC types with an accuracy of 93.5%, and the Pearson correlation coefficients between the predicted Ktrans and kep values and the manually calculated values were 0.91 and 0.89, respectively. Further analysis found that the Ktrans value predicted by AI was positively correlated with the Gleason score of PCa, and the risk of biochemical recurrence in patients with Ktrans >0.8 min⁻¹ was 3.2 times that of patients with Ktrans ≤0.8 min⁻¹, suggesting that AI-quantified DCE parameters have potential value in PCa prognosis evaluation28,29.
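For readers less familiar with these parameters, Ktrans and kep are conventionally defined through the standard Tofts pharmacokinetic model. The source does not state which model the cited study used, so the relation below is given only as the usual definition, with C_t the tissue contrast concentration, C_p the plasma concentration (arterial input function), and v_e the extravascular extracellular volume fraction:

```latex
C_t(t) = K^{\mathrm{trans}} \int_0^t C_p(\tau)\, e^{-k_{ep}\,(t-\tau)}\, d\tau,
\qquad
k_{ep} = \frac{K^{\mathrm{trans}}}{v_e}
```

Under this model, Ktrans (in min⁻¹) describes transfer of contrast agent from plasma into tissue and kep describes its return, which is why both are read as markers of perfusion and vascular permeability.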

Technical breakthroughs in PET/CT for metastatic lesion detection and treatment response evaluation

PET/CT combines tumor-specific molecular tracers to achieve whole-body evaluation of PCa, playing an irreplaceable role in metastatic lesion detection and recurrence monitoring. However, traditional PET/CT image reading often relies on physicians’ subjective judgment of metabolically increased areas, which is significantly less effective in detecting small metastatic lesions (≤5 mm), and the quantitative analysis is relatively subjective30,31. By automatically segmenting metabolically abnormal areas and quantitatively analyzing tracer uptake characteristics, AI has effectively improved the diagnostic efficiency of PET/CT, with the main applications as follows:

Deep learning-based automatic detection of metastatic lesions

PCa metastasis is common in bones, lymph nodes, and visceral organs. Bone metastatic lesions appear as tracer accumulation in PET/CT, but they are easily confused with physiological uptake; lymph node metastatic lesions are often missed due to their small size. The CNN-based multi-modal fusion detection model can effectively solve the above problems.

In 2023, Kim et al. constructed a 3D-UNet model based on 800 cases of 68Ga-PSMA-11 PET/CT, including 320 patients with metastasis and 480 patients without metastasis. The model realized automatic detection of bone metastatic lesions and lymph node metastatic lesions by inputting 3D images of PET and CT simultaneously32,33. In this study, the sensitivity and specificity of the model for bone metastatic lesions were 90.5% and 92.3% respectively, and those for lymph node metastatic lesions were 88.1% and 91.7% respectively, which were higher than those of 2 nuclear medicine physicians. The model can automatically calculate parameters such as SUV max and metabolic tumor volume (MTV) of metastatic lesions, providing support for the objective evaluation of metastatic burden. Studies have confirmed that the MTV calculated by AI is inversely correlated with the progression-free survival of PCa patients (HR = 1.85, P < 0.001).
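As an illustration of the two quantities mentioned above, the sketch below derives SUV max and MTV from the SUV values of voxels in a candidate lesion region. The fixed-fraction threshold (41% of SUV max) is an assumption chosen for illustration, since the source does not specify how the cited model delineates lesions; the function name and all numbers are hypothetical.

```python
def suv_max_and_mtv(suv_voxels, voxel_volume_ml, fraction_of_max=0.41):
    """Return (SUV max, metabolic tumor volume in mL) for one lesion region.

    MTV is taken as the volume of voxels whose SUV is at least
    fraction_of_max * SUV max; this threshold rule is an assumption.
    """
    values = list(suv_voxels)
    if not values:
        raise ValueError("lesion region contains no voxels")
    suv_max = max(values)
    cutoff = fraction_of_max * suv_max
    n_above = sum(1 for v in values if v >= cutoff)
    return suv_max, n_above * voxel_volume_ml


# Made-up SUVs for six voxels, each with a volume of 0.5 mL.
lesion_suvs = [1.0, 2.0, 5.0, 8.0, 10.0, 3.0]
suv_max, mtv_ml = suv_max_and_mtv(lesion_suvs, voxel_volume_ml=0.5)
print(f"SUV max = {suv_max}, MTV = {mtv_ml} mL")
```

Summing MTV over all detected lesions gives a whole-body tumor burden, which is the kind of quantity the survival analysis above relates to progression-free survival.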

Dynamic evaluation of treatment response

After PCa patients receive endocrine therapy, chemotherapy, or radiotherapy, PET/CT is required to evaluate the treatment response. The traditional method for evaluating PCa treatment response includes evaluating the therapeutic effect based on the rate of change of SUV max before and after treatment34,35, which has disadvantages such as strong subjectivity and inability to evaluate the response early. AI models can predict and accurately evaluate the treatment response early based on the characteristic changes of PET/CT before and after treatment.

Park conducted a prospective study of 400 patients with metastatic PCa undergoing endocrine therapy, who underwent 68Ga-PSMA-11 PET/CT before treatment and at 1 month and 3 months after treatment. A contrastive learning model based on a Siamese network predicted treatment response by comparing metabolic features before and after treatment. Using the data at 1 month after treatment, the model predicted the 3-month treatment response with an AUROC of 0.90, significantly better than the traditional method based on the rate of change of SUV max (0.75)36,37. When applied to patients, the model could identify non-responders early, providing a basis for timely adjustment of treatment plans, avoiding adverse reactions caused by ineffective treatment, and reducing resource waste.

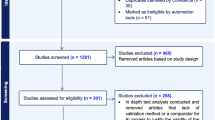

Clinical trial evidence for AI-assisted diagnostic imaging of prostate cancer

Several clinical and near-clinical studies have evaluated AI-assisted prostate MRI in settings that closely resemble routine practice. In a simulated clinical deployment study including men undergoing bi-parametric MRI and subsequent MRI–ultrasound fusion biopsy, a fully automatic U-net–based deep learning system for clinically significant prostate cancer (csPCa) detection achieved sensitivity and specificity comparable to radiologist PI-RADS assessment, and the combination of AI output with PI-RADS scores improved positive predictive value without compromising negative predictive value36,37. In a large international, paired, non-inferiority study (PI-CAI) involving 10,207 MRI examinations from 9129 patients, an AI system showed higher sensitivity for csPCa detection than the average radiologist and non-inferior specificity compared with both radiologists and multidisciplinary standard-of-care decisions36,37. Clinical evaluation of a fully automated diagnostic AI software integrated into the PACS environment demonstrated an area under the ROC curve of about 0.80 and sensitivity around 85% for csPCa at a PI-RADS ≥ 4 threshold, with performance comparable to meta-analytic estimates for expert PI-RADS readers36,37. A recent two-center study assessing AI assistance for radiologists with different experience levels showed that AI support increased lesion-level sensitivity and area under the curve for less-experienced readers, modestly improved performance for experienced readers, reduced reading time, and improved inter-reader agreement36,37. Taken together, these clinical data indicate that AI systems can match or even surpass expert readers in csPCa detection, enhance efficiency, and reduce variability, providing important context for the limitations and challenges discussed in the following section.

Despite these promising advancements across ultrasound, MRI, PET/CT, and early clinical deployment studies, several key obstacles still hinder the widespread, standardized, and equitable implementation of AI-assisted diagnostic imaging for prostate cancer in everyday practice. In the next section, we therefore move from “what is currently possible” to “what is still needed”, focusing on critical challenges related to data quality and sharing, model generalization and robustness, clinical workflow integration and physician trust, as well as the broader ethical and regulatory environment.

Key challenges in the application of artificial intelligence in prostate cancer diagnostic imaging

Building on the application examples and clinical studies outlined above, AI has already achieved remarkable results in the imaging analysis of PCa diagnosis; however, it still needs to overcome many problems on the path from experimental research to large-scale clinical application, including issues related to image data acquisition, technical model implementation, clinical adoption, and ethics. These require systematic planning and resolution, as shown in Fig. 4.

A Model input data. B Model architecture. C Model application.

Dual bottlenecks of data quality and quantity

The performance of AI models is highly dependent on the “quantity” and “quality” of training data, and there are three core problems in PCa diagnostic imaging data:

First, the amount of data is insufficient, and the distribution is uneven. High-quality annotated multi-modal imaging data is scarce—most studies have a training set size of only 500–1000 cases, which are concentrated in tertiary hospitals38,39, and the proportion of data from primary hospitals is less than 10%. However, the heterogeneity of PCa requires models to be trained on large-scale and diverse datasets; otherwise, “overfitting” is likely to occur. For example, an MRI AI model trained on 1000 cases of data from tertiary hospitals had its AUROC drop from 0.93 to 0.78 in the validation set of primary hospitals. This is mainly because the image resolution and artifact types of MRI equipment in primary hospitals are different from those in tertiary hospitals, leading to the failure of the model’s feature extraction40,41.

Second, data annotation is subjective and inconsistent. Image annotation requires radiologists to manually outline lesions and label regions based on pathological results, which takes about 1–2 h for a single case of multi-modal imaging. However, the consistency of annotations among different physicians is very low. Such annotation differences can have a fatal impact on model training—if the same lesion in the training set is labeled as both “benign” and “malignant”, the model will learn incorrect features, resulting in a decrease in diagnostic specificity42,43. In 2023, a multi-center study showed that the diagnostic AUROC of AI models trained on data independently annotated by 3 physicians was lower than that of models trained on consensus-annotated data, indicating that the accuracy of annotation is also crucial.

Third, data privacy and sharing restrictions exist. PCa imaging data contains patients’ private information, and cross-center data sharing faces strict supervision due to regulations such as the Personal Information Protection Law and Guidelines for Medical Data Security44,45. At present, most studies use single-center data, lacking external validation with multi-center, cross-device, and cross-population data, resulting in insufficient model generalization. A 2024 systematic review showed that only 18% of AI + PCa imaging studies included validation data from 3 or more centers, and only 9% of studies included populations of different races. However, the prostate anatomical structure and PCa imaging features vary among different racial populations, which further limits the universality of the models.

Insufficient model generalization and robustness

Generalization and robustness are the core prerequisites for the clinical application of AI, but current AI models for PCa diagnostic imaging have obvious shortcomings in these two aspects.

One problem is “domain shift” caused by differences in equipment and scanning parameters. MRI/PET equipment from different manufacturers and different scanning parameters can produce different imaging features for the same patient, that is, different “data domains”. Most AI models are trained on datasets from a single manufacturer with a single set of parameters46,47, so they cannot adapt to domain shift. For example, a csPCa identification model trained on Siemens 3.0T MRI had its AUROC drop from 0.94 to 0.76 and its false positive rate rise from 5.2% to 18.3% when applied to GE 1.5T MRI data. The fundamental reason is that the features learned by the model are bound to specific equipment parameters rather than the essential pathological features of the lesions.

Robustness to image noise and artifacts is weak. Clinical images inevitably contain artifacts, and current AI models are sensitive to such noise. Huang input MRI images with different artifact intensities into a well-trained MRI AI model and found that when the artifact intensity increased by 20%, the lesion detection sensitivity of the model decreased from 91.2% to 72.5%, and the specificity decreased from 88.5% to 65.3%48,49. The root cause is that the training data contains relatively few samples with artifacts, so the model has not learned to distinguish artifacts from lesions, which leads it to mistake artifacts for lesions or to miss lesions masked by artifacts.

The model’s ability to handle rare cases is insufficient. The imaging features of rare subtypes of PCa are significantly different from those of common acinar carcinoma, but there are very few samples of rare subtypes in the existing training data, resulting in a misdiagnosis rate of more than 60% for such cases by AI models. In addition, complex cases with other concurrent diseases also become “blind spots” in model diagnosis due to the scarcity of samples.

Problems of clinical adaptability and physician trust

Even if an AI model performs excellently, it will be difficult to implement if it cannot be integrated into the clinical workflow and gain the trust of physicians. Currently, there are three main problems:

The form of model output is disconnected from clinical needs. Most AI models output technical indicators such as “lesion probability map” and “AUROC value”, while clinicians need more intuitive and interpretable diagnostic suggestions50,51. A 2024 survey of 200 radiologists showed that only 35% of physicians believed that the output form of current AI models “conforms to clinical habits”, and 62% of physicians hoped that the models could directly generate “drafts of imaging diagnostic reports” and label key evidence.

The lack of “explainability” of the model leads to low physician trust. Currently, the most performant AI models are mostly “black-box” models, which cannot explain “why this region is determined to be a malignant lesion”. The model may make a diagnosis based on a certain texture feature of the lesion, but physicians cannot know the pathological significance of this feature52,53. If the model’s diagnosis is inconsistent with the physician’s experience, the physician is more likely to reject the model’s result. A clinical trial study showed that when the AI model’s diagnosis was consistent with that of the physician, the adoption rate by the physician was 92%; when they were inconsistent, the adoption rate was only 18%54,55. The core reason is that physicians cannot understand the model’s decision-making logic. This “distrust” will lead to the marginalization of AI models, making them unable to play an auxiliary role.

The model does not consider the diversity of clinical scenarios. Different clinical scenarios have different requirements for AI models: outpatient screening requires rapid exclusion of benign lesions, inpatient diagnosis requires accurate identification of csPCa, and post-operative follow-up requires focused detection of recurrent lesions56,57. However, most current AI models are “general-purpose” and not optimized for specific scenarios. For example, when an AI model designed for screening is used for post-operative follow-up, its sensitivity drops to 70% because most recurrent lesions are small, and this level cannot meet clinical needs. In addition, the operational skills of physicians and the equipment conditions in primary hospitals can affect the quality of model input data, resulting in unstable model performance.

To facilitate an integrated view of the current barriers and future priorities, the major challenges and corresponding research directions for AI-assisted diagnostic imaging in prostate cancer are summarized in Table 1.

Future prospects of artificial intelligence in prostate cancer diagnostic imaging

Building on the key challenges summarized in the previous section, and combined with the development trend of AI technology and clinical needs, the future development of AI for PCa diagnostic imaging will focus on the four directions of “data optimization, technological innovation, clinical integration, and system improvement”, promoting the transformation of technology from the “laboratory” to the “clinic” and achieving the goals of accurate diagnosis and individualized treatment.

Construction of high-quality and shareable multi-modal datasets

Solving the data bottleneck is the basis for improving the performance of AI models. In the future, data optimization will be achieved through three strategies, and the architecture of the federated learning model is shown in Fig. 5:

Local model parameters and coefficients are shared with the global model, which merges the local model parameters and coefficients and then shares them back with the local models (adapted/redrawn from published work and publicly available data79).

Promote the construction of multi-center data alliances. Led by national or regional health departments, unite tertiary hospitals, primary hospitals, and scientific research institutions to build a PCa diagnostic imaging data alliance, and formulate unified data collection standards and annotation specifications58,59. The European Union has launched the “PCa-AI Data Hub” project, integrating multi-modal data from 50 hospitals in 20 countries, and opening it to qualified R&D institutions after standardized processing. Such alliances can achieve the accumulation of data “quantity” and improve data “diversity” through multi-center data, reducing model bias caused by regional, equipment, and population differences.

Advance semi-supervised/unsupervised learning and synthetic data technology. To reduce high annotation costs, semi-supervised learning and unsupervised learning will become the main technical directions60,61. Li established an MRI AI model using semi-supervised learning, training it with 200 annotated and 800 unannotated samples. The diagnostic AUROC reached 0.92, similar to that of the fully supervised model, while substantially reducing annotation costs. In addition, synthetic data technology can supplement data for rare cases. Wang used GANs to generate 1000 MRI cases of neuroendocrine PCa. After these data were added to the training set, the diagnostic AUROC of the model for this subtype increased to 0.85, alleviating the scarcity of rare-case samples.

Establish a privacy-preserving data sharing system. Use federated learning to realize “models move while data stays”—each medical institution keeps its local data in-house and only uploads the gradient parameters of model training to a central server. The server aggregates the parameters to update the model and then distributes it back to each medical institution. During this process, no raw data is transmitted62,63, fundamentally ensuring privacy. In 2024, the “Prostate Cancer AI Diagnosis Alliance” in China constructed a three-dimensional multi-center AI model based on federated learning, aggregating 5000 cases of data from 15 hospitals. The diagnostic AUROC of the model was 0.95, and no data privacy leakage occurred. Technologies such as differential privacy and homomorphic encryption will also be continuously explored to balance data sharing and privacy protection.
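The aggregation step described above can be sketched in a few lines. This is a minimal illustration only, assuming a FedAvg-style weighted average in which each hospital applies its own gradient locally and sends back the resulting parameter vector; the function names, the learning rate, the hospital labels, and the sample counts are all hypothetical and are not taken from the cited alliance model.

```python
from typing import List, Sequence


def local_update(global_weights: Sequence[float],
                 local_gradient: Sequence[float],
                 lr: float = 0.1) -> List[float]:
    """One gradient step computed inside a hospital; raw images never leave it."""
    return [w - lr * g for w, g in zip(global_weights, local_gradient)]


def federated_average(client_weights: Sequence[Sequence[float]],
                      client_sizes: Sequence[int]) -> List[float]:
    """Merge client parameter vectors, weighting each by its local sample count."""
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need exactly one sample count per client")
    total = sum(client_sizes)
    merged = [0.0] * len(client_weights[0])
    for weights, n in zip(client_weights, client_sizes):
        for i, w in enumerate(weights):
            merged[i] += w * n / total
    return merged


# Hypothetical round with two hospitals: (local gradient, number of local cases).
global_model = [0.0, 0.0]
hospitals = {
    "hospital_a": ([1.0, -2.0], 300),
    "hospital_b": ([3.0, 0.0], 100),
}
updates = [local_update(global_model, grad) for grad, _ in hospitals.values()]
sizes = [n for _, n in hospitals.values()]
global_model = federated_average(updates, sizes)  # redistributed to all sites
```

Only the parameter vectors cross institutional boundaries in this sketch. Shared updates can still leak information in principle, which is why the text also points to differential privacy and homomorphic encryption.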

Technical level

Technological innovation is the core driving force for the clinical implementation of AI models. In the future, four key technical directions will be focused on to solve the current problems of model generalization, explainability, and scenario adaptability:

In-depth integration of explainable AI (XAI) technology

To overcome the trust issue of “black-box” models, XAI will develop from “post-hoc explanation” to “pre-hoc design”, giving the model a transparent decision-making process. On the one hand, the regions the model focuses on will be visualized: using improved variants of Gradient-Weighted Class Activation Mapping (Grad-CAM)64,65, the main regions the model relies on when classifying a lesion as malignant are marked on MRI images with heatmaps and associated with pathological features, helping physicians understand the model’s decision-making logic. In 2024, a team proposed an XAI-assisted diagnosis system that, while outputting the csPCa risk score, automatically generates a “feature-pathology” association report, such as “The model determines a high risk based on the texture heterogeneity of the lesion and the degree of diffusion restriction (ADC value), which is consistent with the pathological feature of a Gleason score of 8”. This increased physicians’ trust in the model from 35% (traditional models) to 78%66,67. On the other hand, “intrinsically explainable” lightweight models will be developed: compared with complex Transformers or deep CNNs, ensemble models based on decision trees and simple CNNs achieve explainability through explicit feature weights and decision rules while still performing well. For example, an ultrasound AI model based on a lightweight CNN outputs the weight of each feature, allowing physicians to grasp the core basis for the model’s judgment intuitively, and the model can run in real time on low-configuration equipment in primary hospitals.
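For readers unfamiliar with how a Grad-CAM heatmap is formed, the core computation can be sketched as follows. This shows the standard Grad-CAM recipe rather than the improved variants cited above. Each feature map of the last convolutional layer is weighted by the spatial average of the gradient of the class score with respect to that map, the weighted maps are summed, and negative values are clipped. The tiny 2×2 arrays are made-up numbers standing in for real network activations and gradients.

```python
from typing import List

Matrix = List[List[float]]


def grad_cam(feature_maps: List[Matrix], gradients: List[Matrix]) -> Matrix:
    """Combine K feature maps into one heatmap scaled to [0, 1], Grad-CAM style."""
    height, width = len(feature_maps[0]), len(feature_maps[0][0])
    heat = [[0.0] * width for _ in range(height)]
    for fmap, grad in zip(feature_maps, gradients):
        # Channel weight: global average of d(class score)/d(feature map).
        cells = [g for row in grad for g in row]
        alpha = sum(cells) / len(cells)
        for r in range(height):
            for c in range(width):
                heat[r][c] += alpha * fmap[r][c]
    # ReLU keeps only regions that push the class score up.
    heat = [[max(0.0, v) for v in row] for row in heat]
    peak = max(max(row) for row in heat)
    if peak > 0:
        heat = [[v / peak for v in row] for row in heat]
    return heat


# Hypothetical activations and gradients for two channels of a 2x2 layer.
feature_maps = [[[1.0, 0.0], [0.0, 2.0]], [[0.0, 4.0], [0.0, 0.0]]]
gradients = [[[1.0, 1.0], [1.0, 1.0]], [[-0.5, -0.5], [-0.5, -0.5]]]
heatmap = grad_cam(feature_maps, gradients)
```

In practice the heatmap is upsampled to the image resolution and overlaid on the MRI slice. Linking the highlighted region to a pathological feature, as in the report described above, is a separate step that this sketch does not cover.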

Domain adaptation and robustness optimization technology

Domain adaptation (DA) and robustness training will be the core. For domain adaptation, transfer learning will be used so that the model can adjust to datasets acquired with different equipment and scanning parameters: the model is trained on the “source domain” and learns the feature distribution of the “target domain” through domain adversarial training, which reduces the performance degradation caused by equipment differences. In 2024, Kim et al. constructed an MRI AI model based on Domain-Adversarial Neural Networks (DANN), and the AUROC difference of the model between the source domain and target domain data decreased from 0.18 to 0.05, achieving stable performance across different equipment68,69. Universal feature extraction technology can enable the model to learn the “essential features” of lesions rather than “superficial features” bound to equipment, further improving generalization. In addition, to improve robustness, data augmentation algorithms will be used to simulate the noise and artifacts common in clinical practice, so that during training the model learns to distinguish these interfering features from lesions.
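The augmentation idea in the last sentence can be illustrated with a deliberately simplified sketch. It assumes a 2D image stored as nested lists with intensities in [0, 1], models noise as additive Gaussian noise, and imitates a motion-like ghost by adding an attenuated copy of the image shifted along the row direction. Real MRI artifacts are more complex, and the function name and all parameter values here are hypothetical.

```python
import random
from typing import List, Optional

Image = List[List[float]]


def add_noise_and_ghost(image: Image,
                        noise_sd: float = 0.05,
                        ghost_shift: int = 2,
                        ghost_strength: float = 0.3,
                        seed: Optional[int] = None) -> Image:
    """Return a corrupted copy of `image`; the input is left unchanged."""
    rng = random.Random(seed)
    height = len(image)
    corrupted = []
    for r, row in enumerate(image):
        # Ghost source: the same image shifted by `ghost_shift` rows (wrapping).
        ghost_row = image[(r - ghost_shift) % height]
        new_row = []
        for value, ghost in zip(row, ghost_row):
            v = value + ghost_strength * ghost + rng.gauss(0.0, noise_sd)
            new_row.append(min(1.0, max(0.0, v)))  # clip to the valid range
        corrupted.append(new_row)
    return corrupted


# Hypothetical 4x4 image of uniform intensity, augmented with a fixed seed.
image = [[0.5] * 4 for _ in range(4)]
augmented = add_noise_and_ghost(image, seed=0)
```

During training, each image would be passed through such a function with randomly drawn strengths, so that the network sees clean and corrupted versions of the same lesions.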

Development of scenario-specific customized models

In the future, AI models will shift from “general-purpose” to “scenario-customized” designs tailored to specific clinical problems. Outpatient screening scenario: Focus on high specificity, integrating ultrasound and short MRI sequences (T2WI + DWI) to quickly generate a “benign possibility” score. Patients with a score > 90% are advised to have regular follow-up without further examination70,71. In 2024, a primary hospital in China trialed a screening AI model, which had a specificity of up to 92% and reduced the unnecessary biopsy rate by 30%, significantly alleviating the medical burden on patients. Inpatient diagnosis scenario: Focus on accurate judgment of csPCa, integrating multi-modal information to generate a “csPCa risk level” and a “suggested biopsy area”, and labeling the relationship between the lesion and surrounding structures to support surgical planning. Post-operative follow-up scenario: Focus on the detection of small recurrent lesions, automatically labeling suspicious recurrent lesions by comparing imaging features before and after treatment, with a sensitivity of over 90%. At the same time, developing customized models for special populations is also a popular direction. For example, for elderly patients, enhancing the model’s ability to learn lesion features against the background of glandular atrophy increased the diagnostic AUROC by 0.12 compared with the general model72,73; the customized model for patients in the PSA gray zone combines radiomic features and clinical parameters to reduce the misdiagnosis rate to 8%.

Construction of multi-omics data integration models

In the future, radiomics AI models will break through the limitation of relying on imaging data alone and build diagnostic or prognostic prediction models based on multi-dimensional information such as “radiomics + genomics + clinical data”. On the one hand, in imaging diagnosis, integrating imaging features and genetic markers to identify csPCa can improve the accuracy of differential diagnosis. For example, a multi-omics AI model that integrates mp-MRI radiomic features and PCA3 gene expression levels to identify csPCa has an AUROC of 0.97, which is 0.03 higher than that of diagnostic models based on imaging alone74,75. On the other hand, in imaging prognostic prediction, integrating imaging features, genomic information, and clinical parameters to predict patients’ biochemical recurrence-free survival (bRFS) and overall survival (OS) provides support for personalized treatment. For example, a multi-omics prognostic model constructed by a research team in 2024 can accurately predict patients’ 1-year and 3-year bRFS, and recommend treatment plans for patients based on the prediction results. Clinical trials found that this can increase the 3-year bRFS rate of high-risk patients by 15%. In addition, relationships between imaging and molecular features can be discovered. For example, Ktrans derived from DCE-MRI was found to be positively correlated with the expression level of the VEGF gene (r = 0.82), so imaging features can serve as a non-invasive indicator of tumor angiogenesis, providing a means of monitoring the efficacy of precise targeted therapy for PCa.

However, translating such multi-omics models from proof-of-concept studies into clinically usable tools requires more rigorous validation and standardization than many current single-modality radiomics pipelines. First, model development and evaluation should follow explicit standards, including pre-specification of endpoints and statistical analysis plans, transparent reporting of feature preprocessing and selection, strict separation of training, validation, and independent test sets, and external validation in multi-center cohorts with diverse patient populations. For prognostic applications, calibration analysis and decision-curve analysis are essential to ensure that predicted risks of biochemical recurrence or overall survival are accurate and clinically actionable rather than merely statistically significant76.

Second, reproducibility across different imaging devices and acquisition protocols is a critical bottleneck for multi-omics integration. Variations in scanner vendor, field strength, sequence parameters, and reconstruction algorithms can substantially alter radiomic features and destabilize multi-omics signatures, especially when they are combined with genomic markers77. For prostate MRI and PSMA PET/CT, harmonized acquisition protocols, phantom-based cross-vendor calibration and statistical feature-harmonization techniques, together with robustness testing on heterogeneous multi-center datasets, will be necessary prerequisites before a multi-omics model can be deployed as a reliable decision-support tool. Finally, because multi-omics AI systems that combine imaging, genomic, and clinical variables are likely to be classified as high-risk AI/ML-based Software as a Medical Device (SaMD), their entire lifecycle needs to be aligned with emerging international regulatory benchmarks78. Key elements include adherence to good machine-learning practice, pre-market evaluation based on well-controlled clinical evidence, clearly defined mechanisms for post-market performance monitoring and model updating, and change-control procedures consistent with frameworks such as the U.S. FDA’s AI/ML-based SaMD action plan. Incorporating these requirements at the design stage of multi-omics pipelines will improve reproducibility across sites, facilitate regulatory review, and ultimately accelerate the safe clinical translation of AI-assisted precision diagnosis in PCa.
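The decision-curve analysis recommended above for prognostic models rests on a single quantity, the net benefit at a chosen risk threshold p_t: NB = TP/N − (FP/N) · p_t/(1 − p_t). The sketch below computes it for a model and for the “treat all” reference strategy. The outcome labels and predicted risks are invented for illustration and do not come from any cited study.

```python
from typing import Sequence


def net_benefit(y_true: Sequence[int], y_prob: Sequence[float],
                threshold: float) -> float:
    """Net benefit of acting on every patient whose predicted risk >= threshold."""
    if not 0.0 < threshold < 1.0:
        raise ValueError("threshold must lie strictly between 0 and 1")
    n = len(y_true)
    tp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 1)
    fp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 0)
    return tp / n - (fp / n) * threshold / (1.0 - threshold)


def net_benefit_treat_all(y_true: Sequence[int], threshold: float) -> float:
    """Reference strategy: act on every patient regardless of the model."""
    prevalence = sum(y_true) / len(y_true)
    return prevalence - (1.0 - prevalence) * threshold / (1.0 - threshold)


# Hypothetical cohort: 1 = biochemical recurrence, 0 = no recurrence.
y_true = [1, 1, 0, 0, 0, 1, 0, 0, 0, 0]
y_prob = [0.9, 0.7, 0.6, 0.2, 0.1, 0.3, 0.4, 0.05, 0.15, 0.25]
nb_model = net_benefit(y_true, y_prob, threshold=0.5)
nb_all = net_benefit_treat_all(y_true, threshold=0.5)
```

A decision curve repeats this calculation over a range of clinically plausible thresholds. A model is useful at a given threshold only if its net benefit exceeds both the treat-all value and zero, which is the net benefit of treating no one.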

Clinical integration level

The ultimate value of AI technology lies in serving clinical practice. In the future, in-depth integration of AI and clinical practice will be achieved through process optimization, training system construction, and patient participation. The flowchart of AI embedded in the clinical workflow of prostate cancer diagnosis is shown in Fig. 6.

Flowchart of AI Embedded in the Clinical Workflow of Prostate Cancer Diagnosis (adapted/redrawn from published work and publicly available data92).

AI-assisted system embedded in clinical workflow

In the future, AI systems will transform from “isolated tools” to “seamless assistants” embedded in the original clinical workflow. During an ultrasound examination, AI reads the examination images in real time. When AI identifies a suspicious lesion, it automatically prompts the doctor to rotate the probe and generates a preliminary diagnosis in real time. During mp-MRI image reading79, AI first performs automatic prostate zone segmentation and csPCa risk analysis, marking high-risk areas with heatmaps. On this basis, physicians only need to review the areas within the heatmaps instead of checking each frame one by one, reducing the image reading time from the original 30 min to 10 min. In addition, AI systems will be embedded in hospital information systems (HIS), Picture Archiving and Communication Systems (PACS), and pathology information systems (PIS), automatically importing patients’ clinical history, imaging data, and pathological reports without the need for physicians to input them one by one. In 2024, after the application of an AI system in a tertiary hospital, the time consumed for PCa diagnosis was reduced from 72 h to 24 h, and patient satisfaction increased by 40%.

Beyond conceptual workflow diagrams, several multi-center and prospective studies have demonstrated that AI systems for prostate cancer imaging can be embedded into real-world clinical pathways. In prostate MRI, the international PI-CAI study evaluated an AI system for csPCa detection in a paired, routine-like reading setting involving more than 10,000 examinations from over 9000 patients, and showed that AI-assisted assessment achieved higher sensitivity than the average radiologist and non-inferior specificity compared with multidisciplinary standard-of-care decisions, supporting its feasibility as a plug-in to existing MRI reporting workflows80. In China, the Prostate Cancer AI Diagnosis Alliance has built a three-dimensional diagnostic model using a federated learning framework on 5000 cases from 15 hospitals; the model is trained by aggregating local gradient updates while raw data remain within each institution’s firewall, and achieves an AUROC of 0.95 across participating centers without any recorded privacy breaches, illustrating that cross-hospital AI deployment can be integrated into PACS/HIS environments in a privacy-preserving manner. Together with prospective multi-center studies of TRUS-based 3D convolutional neural networks that reduced unnecessary biopsies while maintaining high sensitivity for csPCa in routine ultrasound workflows81, these initiatives provide concrete evidence that AI-assisted systems can be operationalized within diverse clinical settings, thereby reinforcing the translational relevance and practical feasibility of the workflow-integration strategies discussed in this section.

Improvement of physician training systems for AI application capabilities

To enhance physicians’ understanding and application of AI technology, hierarchical training content will be designed: For senior radiologists, the training will focus on the performance boundaries of AI models, methods for interpreting XAI results, and how to use clinical experience to correct diagnostic errors of AI. For mid-career and young physicians, the content will be expanded to include the principles of AI technology and the impact of data quality on AI models, improving their ability to judge AI results independently. For physicians in primary hospitals, the training will mainly cover the operation process of AI systems and solutions to common equipment problems in AI applications. In addition, through the “AI-physician” case discussion mechanism, physicians can accumulate experience in diagnosing diseases using AI technology. After training, the adoption rate of AI models by physicians has been significantly improved82,83. In 2024, a provincial training program on the application of AI technology in radiology, ultrasound, pathology, and other fields was launched, covering 100 primary hospitals. After the training, the adoption rate of AI models by physicians increased from 35% to 82%, and the diagnostic consistency Kappa value rose from 0.65 to 0.81.
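The agreement figure quoted above is a kappa statistic, which corrects raw agreement for the agreement expected by chance: κ = (p_o − p_e) / (1 − p_e). The source does not state which kappa variant was used, so the sketch below assumes Cohen's kappa for two raters. The two reading lists are invented for illustration.

```python
from typing import Hashable, Sequence


def cohen_kappa(rater_a: Sequence[Hashable], rater_b: Sequence[Hashable]) -> float:
    """Chance-corrected agreement between two raters on the same cases."""
    if not rater_a or len(rater_a) != len(rater_b):
        raise ValueError("both raters must label the same non-empty case list")
    n = len(rater_a)
    # Observed agreement: fraction of cases with identical labels.
    observed = sum(1 for a, b in zip(rater_a, rater_b) if a == b) / n
    # Expected agreement if the raters labeled independently at their own rates.
    labels = set(rater_a) | set(rater_b)
    expected = sum((list(rater_a).count(label) / n) *
                   (list(rater_b).count(label) / n) for label in labels)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical readings: 1 = suspicious for csPCa, 0 = not suspicious.
physician = [1, 1, 1, 0, 0, 0, 1, 0]
ai_model = [1, 1, 0, 0, 0, 0, 1, 1]
kappa = cohen_kappa(physician, ai_model)
```

Here the two readers agree on 6 of 8 cases (p_o = 0.75), but half of that agreement would be expected by chance (p_e = 0.5), so kappa is 0.5.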

Patient-participated collaborative decision-making model

AI technology can be integrated into the patient-participated decision-making process. Through “patient-friendly” reports, patients can understand the diagnostic process—for example, in addition to professional terminology, the AI-generated diagnostic report includes a plain-language explanation, options for treatment plans, and the risk-benefit ratio of each plan84. Moreover, AI provides personalized treatment recommendations based on the patient’s age, underlying diseases, and living habits, helping patients make joint decisions with doctors85. In 2024, a clinical study observed that the treatment satisfaction rate under the “AI-assisted + doctor-patient joint decision-making” model was 92%, and the compliance with post-treatment follow-up increased by 35%, which was significantly higher than the traditional “physician-led” model86. While these data, technical, and workflow strategies are essential to enhance the performance and usability of AI systems, their safe and equitable deployment ultimately depends on robust ethical norms and regulatory frameworks.

Ethical and regulatory level

From a governance perspective, the ethical and regulatory issues surrounding AI for PCa imaging can be considered along three complementary dimensions: ethical norms and data governance, formal regulatory supervision, and allocation of legal liability. To ensure the safe application of AI technology, it is necessary to establish an ethical and regulatory system covering the entire lifecycle of “R&D → approval → application → iteration”.

Refinement and implementation of ethical norms

At the algorithm level, on the one hand, universal guidelines for the collection, use, and sharing of medical imaging data will be established, such as specifying data de-identification standards, informed consent procedures, and implementing a data usage recording mechanism. In 2024, China issued the Ethical Guidelines for Medical Artificial Intelligence Data, which clearly stipulates that AI developers can only use clinical data after passing ethical review, and must sign a confidentiality agreement for data sharing; otherwise, the maximum fine can reach 5 million yuan87,88. On the other hand, procedures for detecting and correcting algorithmic bias will be formulated. For example, AI models are required to pass fairness reviews for diverse populations before being approved. If the diagnostic performance for certain populations is much lower than the average, the model must be adjusted. In addition, a periodic bias monitoring system will be established for AI models that are continuously iterated and used in clinical practice, with timely adjustments made when necessary.

In practical terms, algorithmic bias detection and correction can be operationalized at three levels: datasets, models, and clinical outcomes. At the dataset level, developers should routinely audit the composition of training, validation, and test sets by key subgroups (e.g., age, race or ethnicity where applicable, comorbidities, and hospital level) and document any under-represented populations. At the model level, performance metrics such as sensitivity, specificity, false-negative rate, and calibration should be reported in a stratified fashion across these subgroups, with pre-defined fairness thresholds beyond which the model is considered biased. If substantial disparities are detected, mitigation strategies—such as targeted data augmentation or re-sampling, re-weighting of under-represented groups, group-specific decision thresholds, or re-training with fairness constraints—should be triggered and documented as part of an algorithmic impact assessment. At the outcome level, institutions are encouraged to establish multidisciplinary ethics or fairness committees that periodically review real-world performance dashboards, investigate systematic discrepancies in error patterns, and mandate corrective updates, consistent with the requirements for continuous bias monitoring in China’s Ethical Guidelines for Medical Artificial Intelligence Data (2024).
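The model-level audit described above can be sketched as a stratified metric report with a pre-defined disparity threshold. This is an illustration under stated assumptions: the subgroup names, the 0.10 sensitivity gap used as the fairness threshold, and the toy records are hypothetical, and a real audit would also report calibration and confidence intervals.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Optional, Tuple

Record = Tuple[str, int, int]  # (subgroup, true label, predicted label)


def stratified_metrics(records: Iterable[Record]) -> Dict[str, Dict[str, Optional[float]]]:
    """Sensitivity and specificity computed separately for each subgroup."""
    counts = defaultdict(lambda: {"tp": 0, "fn": 0, "tn": 0, "fp": 0})
    for group, y_true, y_pred in records:
        if y_true:
            counts[group]["tp" if y_pred else "fn"] += 1
        else:
            counts[group]["fp" if y_pred else "tn"] += 1
    report = {}
    for group, c in counts.items():
        pos, neg = c["tp"] + c["fn"], c["tn"] + c["fp"]
        report[group] = {
            "sensitivity": c["tp"] / pos if pos else None,
            "specificity": c["tn"] / neg if neg else None,
            "n": float(pos + neg),
        }
    return report


def flag_disparities(report, metric: str = "sensitivity",
                     max_gap: float = 0.10) -> List[str]:
    """Subgroups whose metric trails the best subgroup by more than max_gap."""
    values = {g: m[metric] for g, m in report.items() if m[metric] is not None}
    best = max(values.values())
    return sorted(g for g, v in values.items() if best - v > max_gap)


# Hypothetical test-set results stratified by hospital level.
records = ([("tertiary", 1, 1)] * 4 + [("tertiary", 0, 0)] * 2 +
           [("primary", 1, 1)] * 2 + [("primary", 1, 0)] * 2 +
           [("primary", 0, 0), ("primary", 0, 1)])
report = stratified_metrics(records)
flagged = flag_disparities(report)
```

A flagged subgroup would then trigger one of the mitigation strategies listed above, and the decision would be recorded in the algorithmic impact assessment.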

Standardization and dynamization of regulatory systems

From the perspective of regulatory requirements, international cooperation among regulatory authorities will be strengthened to form a global consensus and further unify the review requirements for AI-assisted PCa diagnostic imaging systems, such as requirements for clinical trial verification data, performance standards, and safety requirements. A “risk-based hierarchical supervision” mechanism will be established: low-risk models can go through a simplified approval process, while high-risk models require large-scale and rigorous clinical trials. At the dynamic supervision level, considering the iterative update characteristics of AI models, a mechanism of “real-time monitoring → regular evaluation → dynamic update” will be established. For example, AI developers are required to report every 6 months the number of clinical cases in which the AI model has been applied. The regulatory authority judges whether the model performance is stable based on this information89. If performance degradation occurs, the developer must submit a safety certificate for the federated learning-based iterative optimization algorithm, so that iterative updates do not introduce new vulnerabilities.

Recent international regulatory developments provide more concrete benchmarks for these requirements. In the European Union, the AI Act, which entered into force in 2024, classifies AI systems that are components of medical devices or standalone diagnostic software as “high-risk” and mandates a documented risk-management system, robust data governance, detailed technical documentation, appropriate human oversight, and post-market monitoring for such systems. These obligations apply directly to AI-based prostate MRI, ultrasound, and PET/CT decision-support tools and require manufacturers to demonstrate dataset representativeness and actively manage algorithmic bias throughout the lifecycle. In China, the Ethical Guidelines for Medical Artificial Intelligence Data (2024), together with emerging guidance on the clinical application of medical AI, similarly emphasize prior ethical review, strict de-identification and data-security requirements, and explicit procedures for fairness review and bias correction before large-scale deployment90. Aligning PCa imaging AI systems with these high-risk regulatory frameworks “by design”—from data collection and model development to clinical validation and iterative updating—will facilitate cross-jurisdictional approval and help ensure that technical performance gains are matched by robust protection of patient rights and equity.

Clarification of liability definition mechanisms

Legal provisions will be formulated to clarify the liability for AI diagnostic errors: If the error is caused by problems with the model itself, the AI R&D institution shall bear the main liability; if the error is caused by the hospital’s failure to use the model in accordance with requirements, the hospital shall bear the main liability; if the error is caused by the physician’s failure to review the AI results, the physician shall bear the main liability. A mediation mechanism for AI medical disputes will also be established. In 2024, a country passed the Medical Artificial Intelligence Liability Regulations, which clearly defined the standards for liability attribution in AI medical disputes for the first time, reducing the dispute rate of clinical applications using AI-assisted diagnostic systems by 60%91.

Discussion

AI technology is beginning to tangibly reshape clinical decision-making in prostate cancer diagnostic imaging92. By coupling with ultrasound, mp-MRI, and PSMA-based PET/CT, AI systems can automatically highlight suspicious regions, quantify tumor burden, and generate standardized risk scores that feed directly into biopsy, treatment, and follow-up strategies. In prospective and routine-like settings, AI-assisted MRI reading has reduced reporting time from about 30 min to roughly 10 min per examination (an approximate two-thirds reduction) while maintaining, and in some cases improving, clinically significant prostate cancer (csPCa) detection performance compared with senior radiologists and increasing inter-reader agreement. Embedding AI into hospital information and PACS systems has shortened the overall diagnostic turnaround for suspected PCa from around 72 h to 24 h and has been associated with patient satisfaction gains of about 40%93. In ultrasound workflows, three-dimensional convolutional neural network models trained on multi-center TRUS videos have preserved high sensitivity for csPCa while reducing unnecessary biopsies, and federated-learning models trained on thousands of cases from multiple hospitals have achieved AUROCs close to 0.95 across centers without sharing raw data. For systemic therapy, AI models that compare pre- and post-treatment PSMA PET/CT scans can predict 3-month treatment response based on 1-month imaging with AUROCs around 0.90, enabling earlier identification of non-responders and more timely adjustment of endocrine or systemic regimens94. Together, these findings indicate that AI is moving from an experimental proof-of-concept to a practical assistant that accelerates workflows, standardizes image interpretation, and supports faster, evidence-based clinical decisions in both tertiary and primary-care settings.

At the same time, current AI applications still face substantial obstacles, including data scarcity and imbalance, limited model generalization and robustness, imperfect clinical adaptability, and incomplete ethical and regulatory safeguards. Addressing these issues will require coordinated efforts to build large, diverse multi-center datasets; develop explainable and domain-adaptive algorithms; design scenario-specific models that integrate seamlessly into AI–physician–patient collaborative decision-making; and implement full-lifecycle regulatory oversight and bias monitoring. Looking forward, as multi-omics data fusion matures, edge computing becomes more widely available, and shared decision-making models are more broadly implemented, AI is expected to evolve into an indispensable tool for precision diagnosis, individualized treatment planning, dynamic efficacy monitoring, and prognostic prediction in prostate cancer. The experience accumulated from AI deployment in prostate cancer diagnostic imaging will, in turn, inform imaging-based AI strategies for other malignancies and help shape the next generation of intelligent oncologic imaging.

Data availability

No datasets were generated or analysed during the current study.

References

Bray, F. et al. Global cancer statistics 2022: GLOBOCAN estimates of incidence and mortality worldwide for 36 cancers in 185 countries. CA Cancer J. Clin. 74, 229–263 (2024).

Salinas, C. A., Tsodikov, A., Ishak-Howard, M. & Cooney, K. A. Prostate cancer in young men: an important clinical entity. Nat. Rev. Urol. 11, 317–323 (2014).

Bleyer, A., Spreafico, F. & Barr, R. Prostate cancer in young men: an emerging young adult and older adolescent challenge. Cancer 126, 46–57 (2020).

Zhou, H. et al. Exploring prostate cancer incidence trends and age change in cancer registration areas of Jiangsu Province, China, 2009 to 2019. Curr. Oncol. 31, 5516–5527 (2024).

Schelb, P. et al. Simulated clinical deployment of fully automatic deep learning for clinical prostate MRI assessment. Eur. Radiol. 31, 302–313 (2021).

Belue, M. J. et al. External validation of an artificial intelligence algorithm using biparametric MRI and its simulated integration with conventional PI-RADS for prostate cancer detection. Acad. Radiol. 32, 3813–3823 (2025).

Arif Albulushi, F. et al. Advancements and challenges in cardiac amyloidosis imaging: a comprehensive review of novel techniques and clinical applications. Curr. Probl. Cardiol. 49, 9 (2024).

NZA, B., XTL, A., LM, C., ZQF, B. & YSS, A. Application of deep learning to establish a diagnostic model of breast lesions using two-dimensional grayscale ultrasound imaging. Clin. Imaging 79, 56–63 (2021).

Bai, S. et al. The role of artificial intelligence in colorectal cancer and polyp detection: a systematic review. J. Clin. Oncol. 43, 47–47 (2025).

Baltzer, P. A. T. & Clauser, P. Applications of artificial intelligence in prostate cancer imaging. Curr. Opin. Urol. 31, 416–423 (2021).

Bansal, R. & Aggarwal, B. Prospective evaluation of an affordable and portable artificial intelligence-based modality for breast cancer screening in women across breast densities. J. Clin. Oncol. 40, e13585 (2022).

Barat, M. et al. CT and MRI of abdominal cancers: current trends and perspectives in the era of radiomics and artificial intelligence. Jpn. J. Radiol. 42, 246–260 (2024).

Bayerl, N. et al. Assessment of a fully-automated diagnostic AI software in prostate MRI: clinical evaluation and histopathological correlation. Eur. J. Radiol. 181, 111790 (2024).

Han, S. M., Lee, H. J. & Choi, J. Y. Computer-aided prostate cancer detection using texture features and clinical features in ultrasound image. J. Digit. Imaging 21, S121–S133 (2008).

Huang, X., Chen, M., Liu, P. & Du, Y. Texture feature-based classification on transrectal ultrasound image for prostatic cancer detection. Comput. Math. Methods Med. 2020, 7359375 (2020).

Bera, K., Braman, N., Gupta, A., Velcheti, V. & Madabhushi, A. Predicting cancer outcomes with radiomics and artificial intelligence in radiology. Nat. Rev. Clin. Oncol. 19, 132–146 (2021).

Bharathi, P. G. et al. Artificial intelligence-driven model for prostate cancer staging with PSMA PET: a study integrating radiomics features and SVM classifier. J. Nucl. Med. 65, 1 (2024).

Wang, K. et al. Machine learning prediction of prostate cancer from transrectal ultrasound video clips. Front. Oncol. 12, 948662 (2022).

Sun, Y. K. et al. Three-dimensional convolutional neural network model to identify clinically significant prostate cancer in transrectal ultrasound videos: a prospective, multi-institutional, diagnostic study. EClinicalMedicine 60, 102027 (2023).

Zhang, M. et al. Value of machine learning-based transrectal multimodal ultrasound combined with PSA-related indicators in the diagnosis of clinically significant prostate cancer. Front. Endocrinol. 14, 1137322 (2023).

Brodie, A. et al. Artificial intelligence in urological oncology: an update and future applications. Urol. Oncol. 39, 379–399 (2021).

Canellas, R., Kohli, M. & Westphalen, A. The evidence for using artificial intelligence to enhance prostate cancer MR imaging. Curr. Oncol. Rep. 25, 1–8 (2023).

Cellina, M. et al. Artificial intelligence in the era of precision oncological imaging. Technol. Cancer Res. Treat. 21, 15330338221141793 (2022).

Chassagnon, G. et al. Artificial intelligence in lung cancer: current applications and perspectives. Jpn. J. Radiol. 41, 235–244 (2023).

Chaurasia, A. K. M., Greatbatch, C. J. B. M. S. H. M. & Hewitt, A. W. Diagnostic accuracy of artificial intelligence in glaucoma screening and clinical practice. J. Glaucoma 31, 15 (2022).

Chen, W., Yao, M., Zhu, Z., Sun, Y. & Han, X. The application research of AI image recognition and processing technology in the early diagnosis of COVID-19. BMC Med. Imaging 22, 1–10 (2022).

Chidambaram, S., Sounderajah, V., Maynard, N. & Markar, S. R. Diagnostic performance of artificial intelligence-centred systems in the diagnosis and postoperative surveillance of upper gastrointestinal malignancies using computed tomography imaging: a systematic review and meta-analysis of diagnostic accuracy. Ann. Surg. Oncol. 29, 1977–1990 (2022).

Decharatanachart, P., Chaiteerakij, R., Tiyarattanachai, T. & Treeprasertsuk, S. Application of artificial intelligence in chronic liver diseases: a systematic review and meta-analysis. BMC Gastroenterol. 21, 10 (2021).

Eisazadeh, R., Shahbazi-Akbari, M., Mirshahvalad, S. A., Pirich, C. & Beheshti, M. Application of artificial intelligence in oncologic molecular PET-imaging: a narrative review on beyond C-MET and other less-commonly used radiotracers. Semin. Nucl. Med. 54, 293–301 (2024).

Wu, B. et al. Modality preserving U-Net for segmentation of multimodal medical images. Quant. Imaging Med. Surg. 13, 5242–5257 (2023).

Eminaga, O., Loening, A., Lu, A., Brooks, J. D. & Rubin, D. Detection of prostate cancer and determination of its significance using explainable artificial intelligence. J. Clin. Oncol. 38, 5555–5555 (2020).

Filippi, M. et al. Present and future of the diagnostic work-up of multiple sclerosis: the imaging perspective. J. Neurol. 270, 1286–1299 (2023).

Fowler, G. E. et al. Artificial intelligence as a diagnostic aid in cross-sectional radiological imaging of surgical pathology in the abdominopelvic cavity: a systematic review. BMJ Open 13, e064739 (2023).

Guha, A. et al. Artificial intelligence applications in cardio-oncology: a comprehensive review. Curr. Cardiol. Rep. 27, 56 (2025).

Gupta, R. et al. Artificial intelligence-driven digital cytology-based cervical cancer screening: is the time ripe to adopt this disruptive technology in resource-constrained settings? A literature review. J. Digit. Imaging 36, 1643–1652 (2023).

Ha, H. K. Editorial for "Deep-learning-based artificial intelligence for PI-RADS classification to assist multiparametric prostate MRI interpretation: a development study". J. Magn. Reson. Imaging 52, 1508–1509 (2020).

Zhu, L. et al. Fully automated detection and localization of clinically significant prostate cancer on MR images using a cascaded convolutional neural network. Front. Oncol. 12, 958065 (2022).

Hamid, M. T. R., Mumin, N. A., Hamid, S. A. & Rahmat, K. Application of artificial intelligence (AI) system in opportunistic screening and diagnostic population in a middle-income nation. Curr. Med. Imaging Rev. 20, e15734056280191 (2024).

Hasani, N. et al. Application of artificial intelligence in molecular imaging of rare diseases: is the future bright? J. Nucl. Med. 63, 2 (2022).

Helal, M. et al. Validation of artificial intelligence contrast mammography in diagnosis of breast cancer: relationship to histopathological results. Eur. J. Radiol. 173, 111392 (2024).

Hong, K. et al. Artificial intelligence for early gastric cancer boundary recognition in NBI and nF-NBI endoscopic images. Scand. J. Gastroenterol. 60, 624–634 (2025).

Hsiao, Y. et al. Application of artificial intelligence-driven endoscopic screening and diagnosis of gastric cancer. World J. Gastroenterol. 27, 2979–2993 (2021).

Al-Rawi, N. et al. The effectiveness of artificial intelligence in detection of oral cancer. Int. Dent. J. 72, 436–447 (2022).

Ilhan, B., Guneri, P. & Wilder-Smith, P. The contribution of artificial intelligence to reducing the diagnostic delay in oral cancer. Oral Oncol. 116, 105254 (2021).

Jairam, M. P. & Ha, R. D. A review of artificial intelligence in mammography. Clin. Imaging 88, 36–44 (2022).

Khosravi, P., Lysandrou, M., Eljalby, M., Li, Q. & Hajirasouliha, I. A deep learning approach to diagnostic classification of prostate cancer using pathology-radiology fusion. J. Magn. Reson. Imaging 54, 462–471 (2021).

Kocak, B., Baessler, B., Cuocolo, R., Mercaldo, N. & Daniel, P. D. S. Trends and statistics of artificial intelligence and radiomics research in radiology, nuclear medicine, and medical imaging: bibliometric analysis. Eur. Radiol. 33, 7542–7555 (2023).

Koett, M., Melchior, F., Artamonova, N., Bektic, J. & Heidegger, I. Redefining prostate cancer care: innovations and future directions in active surveillance. Curr. Opin. Urol. 35, 439–446 (2025).

Kufel, J. et al. Application of artificial intelligence in diagnosing COVID-19 disease symptoms on chest X-rays: a review. Int. J. Med. Sci. 19, 1743–1752 (2022).

Twilt, J. J. et al. AI-assisted vs unassisted identification of prostate cancer in magnetic resonance images. JAMA Netw. Open 8, e2515672 (2025).

Sun, Z. et al. Assessing the performance of artificial intelligence assistance for prostate MRI: a two-center study involving radiologists with different experience levels. J. Magn. Reson. Imaging 61, 2234–2245 (2025).

Priester, A. et al. Prediction and mapping of intraprostatic tumor extent with artificial intelligence. Eur. Urol. Open Sci. 54, 20–27 (2023).

Kwee, T. C. & Kwee, R. M. Workload of diagnostic radiologists in the foreseeable future based on recent (2024) scientific advances: updated growth expectations. Eur. J. Radiol. 187, 112103 (2025).

Lai, B. et al. Artificial intelligence in cancer pathology: challenge to meet increasing demands of precision medicine. Int. J. Oncol. 63, 107 (2023).

Law, M., Seah, J. & Shih, G. Artificial intelligence and medical imaging: applications, challenges and solutions. Med. J. Aust. 214, 450–452.e1 (2021).

Lawal, I. O., Ndlovu, H., Kgatle, M., Mokoala, K. M. G. & Sathekge, M. M. Prognostic value of PSMA PET/CT in prostate cancer. Semin. Nucl. Med. 54, 14 (2024).

Le, Y. et al. Progress in the clinical application of artificial intelligence for left ventricle analysis in cardiac magnetic resonance. Rev. Cardiovasc. Med. 25, 447 (2024).

Lecointre, L. et al. Artificial intelligence-enhanced magnetic resonance imaging-based pre-operative staging in patients with endometrial cancer. Int. J. Gynecol. Cancer 35, 100017 (2025).

Li, J. et al. Diagnostic CT of colorectal cancer with artificial intelligence iterative reconstruction: a clinical evaluation. Eur. J. Radiol. 171, 111301 (2024).

Lomer, N. B. et al. MRI-based radiomics for predicting prostate cancer grade groups: a systematic review and meta-analysis of diagnostic test accuracy studies. Acad. Radiol. 32, 3429–3452 (2025).

Mahmood, H., Shaban, M., Rajpoot, N. & Khurram, S. A. Artificial intelligence-based methods in head and neck cancer diagnosis: an overview. Br. J. Cancer 124, 1934–1940 (2021).

Majumder, A. & Sen, D. Artificial intelligence in cancer diagnostics and therapy: current perspectives. Indian J. Cancer 58, 481–492 (2021).

Margail, C. et al. Imaging quality of an artificial intelligence denoising algorithm: validation in 68Ga PSMA-11 PET for patients with biochemical recurrence of prostate cancer. EJNMMI Res. 13, 50 (2023).

MD, A. K. et al. Is there a role of artificial intelligence in preclinical imaging? Semin. Nucl. Med. 53, 7 (2023).

Mehralivand, S. et al. A cascaded deep learning-based artificial intelligence algorithm for automated lesion detection and classification on biparametric prostate magnetic resonance imaging. Acad. Radiol. 29, 1159–1168 (2022).

Miao, K. & Miao, J. Diagnosis and prognosis of stroke using artificial intelligence and imaging (P11-5.018). Neurology 100, 2 (2023).

Mohammadzadeh, I. et al. Application of artificial intelligence in forecasting survival in high-grade glioma: systematic review and meta-analysis involving 79,638 participants. Neurosurg. Rev. 48, 1–13 (2025).

Moro, F. et al. Role of artificial intelligence applied to ultrasound in gynecology oncology: a systematic review. Int. J. Cancer 155, 14 (2024).

Nakamoto, Y., Kitajima, K., Toriihara, A., Nakajo, M. & Hirata, K. Recent topics of the clinical utility of PET/MRI in oncology and neuroscience. Ann. Nucl. Med. 36, 798–803 (2022).

Padhani, A. R., Godtman, R. A. & Schoots, I. G. Key learning on the promise and limitations of MRI in prostate cancer screening. Eur. Radiol. 34, 6168–6174 (2024).

Penzkofer, T., Padhani, A. R., Turkbey, B., Haider, M. A. & Barentsz, J. ESUR/ESUI position paper: developing artificial intelligence for precision diagnosis of prostate cancer using magnetic resonance imaging. Eur. Radiol. 31, 9567–9578 (2021).

Roest, C. et al. Multimodal AI combining clinical and imaging inputs improves prostate cancer detection. Invest. Radiol. 59, 854–860 (2024).

Rothberg, M. B. et al. The role of novel imaging in prostate cancer focal therapy: treatment and follow-up. Curr. Opin. Urol. 32, 231–238 (2022).

Sachpekidis, V., Papadopoulou, S. L., Kantartzi, V., Styliadis, I. & Nihoyannopoulos, P. Use of artificial intelligence for real-time automatic quantification of left ventricular ejection fraction by a novel handheld ultrasound device. Eur. Heart J. Cardiovasc. Imaging 23, jeab289.005 (2022).

Safarian, A. et al. Impact of [18F]FDG PET/CT radiomics and artificial intelligence in clinical decision making in lung cancer: its current role. Semin. Nucl. Med. 55, 156–166 (2025).

Saha, A. et al. Artificial intelligence and radiologists in prostate cancer detection on MRI (PI-CAI): an international, paired, non-inferiority, confirmatory study. Lancet Oncol. 25, 879–887 (2024).

Seah, J., Brady, Z., Ewert, K. & Law, M. Artificial intelligence in medical imaging: implications for patient radiation safety. Br. J. Radiol. 94, 20210406 (2021).

Sibic, O. et al. Diagnosis of acute appendicitis with machine learning-based computed tomography: diagnostic reliability and role in clinical management. J. Laparoendosc. Adv. Surg. Tech. 35, 313–317 (2025).

Loftus, T. J. et al. Federated learning for preserving data privacy in collaborative healthcare research. Digit. Health 8, 20552076221134455 (2022).

Strzelecki, M. et al. Artificial intelligence in the detection of skin cancer: state of the art. Clin. Dermatol. 42, 280–295 (2024).

Gunashekar, D. D. et al. Explainable AI for CNN-based prostate tumor segmentation in multiparametric MRI correlated to whole mount histopathology. Radiat. Oncol. 17, 65 (2022).

Thomas, M. et al. Use of artificial intelligence in the detection of primary prostate cancer in multiparametric MRI with its clinical outcomes: a protocol for a systematic review and meta-analysis. BMJ Open 13, e074009 (2023).

Tonozuka, R. et al. Deep learning analysis for the detection of pancreatic cancer on endosonographic images: a pilot study. J. Hepatobiliary Pancreat. Sci. 28, 95–104 (2021).

Uema, R. et al. A novel artificial intelligence-based endoscopic ultrasonography diagnostic system for diagnosing the invasion depth of early gastric cancer. J. Gastroenterol. 59, 543–555 (2024).

Vazzano, J., Johansson, D. & Hu, A. Z. Evaluation of a computer-aided detection software for prostate cancer prediction: excellent diagnostic accuracy independent of preanalytical factors. Lab Investig. 103, 100257 (2023).

Wang, Y., Lin, W. & Zhuang, L. L. Advances in artificial intelligence for the diagnosis and treatment of ovarian cancer (review). Oncol. Rep. 51, 46 (2024).

Wang, Y., Xu, Z., Tang, L., Zhang, Q. & Chen, M. The clinical application of artificial intelligence assisted contrast-enhanced ultrasound on BI-RADS category 4 breast lesions. Acad. Radiol. 30, S104–S113 (2023).

Woernle, A. et al. Picture perfect: the status of image quality in prostate MRI. J. Magn. Reson. Imaging 59, 1930–1952 (2024).

Xiao, Z., Ji, D., Li, F., Li, Z. & Bao, Z. Application of artificial intelligence in early gastric cancer diagnosis. Digestion 103, 69–75 (2022).