Abstract

The increasing importance of early prediction of student performance has led to research into machine learning models that can assess student outcomes more accurately. This study aimed to develop a new predictive model based on machine learning algorithms that evaluates student performance, identifies the key variables that influence success, and serves as an early warning system to detect potential academic failure and suggest interventions. A questionnaire was developed to collect data from the students. Four machine learning algorithms, C5.0, CART, Support Vector Machine (SVM) and Random Forest, were used to analyze the data. The effectiveness of each algorithm was evaluated with a focus on predictive accuracy. Among the four algorithms, Random Forest achieved the most consistent results in the cross-validation metrics. However, C5.0 provided higher accuracy on the test set and CART showed the highest training performance; these conflicting results are analyzed in more detail in the Discussion section. Based on these findings, a new classification model is proposed that includes the variables that most strongly influence student success. The proposed model provides a tool for the early identification of at-risk students and can support formative assessment. By identifying students who are likely to fail, it creates opportunities for timely interventions to improve their academic outcomes. It is expected to help educators respond more effectively to student needs, promote equity in the classroom, and provide cost-effective solutions for education policymakers.

Introduction

Machine learning is an interdisciplinary field that has emerged through the use of mathematics and statistics, and encompasses many disciplines1. There are successful examples in many areas, such as business, health, industry and education. Intelligent systems that emerge from these disciplines make human life easier and contribute positively to forward-looking decision-making processes2. The use of machine learning in education has increased. Machine learning and academic performance estimation methods are often used to improve student success3,4,5,6.

By storing education data securely, countries with modern education systems can make the best use of these data to plan for the future and develop new education strategies7. These countries make predictions about future periods by using models derived from data and learning approaches8,9. Recent studies have shown that systematic data collection and analysis are becoming increasingly important, especially in student achievement assessments such as PISA and TIMSS10. Many factors influence the academic success of students. The effects of socioeconomic status, age/class status, life satisfaction, immigration status, teacher support, reliance on social media, early childhood education status, and grade repetition on student achievement have been demonstrated11,12,13,14,15,16.

Predicting students’ academic performance is a popular topic in educational research17. Identifying the variables that lead to student failure and improving them are important topics for investigation. With the development of new approaches based on machine learning, this topic has become increasingly attractive to researchers, and numerous studies on the prediction of academic success have been published. The main distinguishing feature of this study is that it identifies the importance of variables outside the course for student success and develops a model based on these variables. The selection of variables was guided by both empirical and theoretical perspectives. For example, the inclusion of indicators such as the number of books in the home and parents’ education is based on Bourdieu’s theory of cultural capital18, which states that students from a culturally enriched environment are more likely to succeed academically. Bronfenbrenner’s ecological systems theory19 also emphasizes the multi-layered influence of the home, school and peer environment on student development. Time spent on physical activity or perceptions of school climate can be understood within this framework as proximal processes that influence academic outcomes. These theories help to interpret the results of machine learning and explain why certain variables are prioritized. Furthermore, the model presented here can serve as an early intervention mechanism that helps new students entering the system who are at risk of failure to succeed. Suggestions for increasing student success can also be developed by identifying and addressing deficits in the variables that most strongly affect it.

Machine learning algorithms used in the study

In this study, four machine learning algorithms were used to identify the conditions that have the greatest impact on students’ performance and to predict their future success. A review of the literature shows that logistic regression provides successful results for datasets with fewer variables. However, because of the large number of variables in this study, C5.0, CART, SVM and Random Forest were favored; these machine learning algorithms are reported to perform better in the literature20,21,22. Although analyses were conducted with other algorithms as well, we retained the four that provided the best comparable results. Another important reason for selecting these algorithms is their simplicity and applicability.

The Classification and Regression Tree (CART) algorithm23 creates binary decision trees by splitting data into subgroups based on the target variable24,25. It uses criteria such as the Gini measure for categorical targets and the sum of squared errors for numerical targets. A large initial tree is pruned to minimize misclassification, often with 10-fold cross-validation26. Advantages include transparency and ease of understanding, but it may suffer from instability and high variance27.

The C5.0 algorithm28 is an improvement over C4.5 that handles both categorical and continuous values, prunes trees, and generates rules. It can perform multiple splits per node, provides robustness against overfitting, and handles missing data. Limitations include reduced performance on very large datasets and potential classification issues with weighted variables.

SVM29 is a supervised learning method that separates classes using an optimal hyperplane. It can handle linear, non-linear, and multi-class classification problems30 and copes well with high-dimensional and unstructured data. Disadvantages include difficulty in kernel selection, long training times for large datasets, and interpretability challenges31,32.

Random Forest33 combines bagging and the random subspace method to build multiple decision trees from random subsets of the data. Trees differ because of randomization in root node and split selection. Widely used in educational data mining, it offers robustness and ease of implementation, although there is no fixed optimal number of trees.

These algorithms have frequently been used in previous EDM research because they are less complex and easier to implement and interpret34,35,36.
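The Gini criterion that CART uses to choose a split can be illustrated with a minimal Python sketch. This is not the study's R implementation: the toy data, the names `books` and `outcome`, and the one-vs-rest handling of categories are assumptions made purely for illustration.

```python
from collections import Counter

def gini(labels):
    """Gini impurity of a list of class labels: 1 - sum(p_k^2)."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def weighted_gini(left, right):
    """Impurity of a binary split, weighted by the size of each child node."""
    n = len(left) + len(right)
    return len(left) / n * gini(left) + len(right) / n * gini(right)

def best_binary_split(values, labels):
    """Try each category as a one-vs-rest split; return (category, impurity)."""
    best = None
    for category in sorted(set(values)):
        left = [y for x, y in zip(values, labels) if x == category]
        right = [y for x, y in zip(values, labels) if x != category]
        if not left or not right:
            continue  # a split must send samples to both children
        score = weighted_gini(left, right)
        if best is None or score < best[1]:
            best = (category, score)
    return best

# Hypothetical toy data: one categorical survey item and a pass/fail label.
books = ["few", "few", "many", "many", "some", "some"]
outcome = ["fail", "fail", "pass", "pass", "pass", "fail"]
split = best_binary_split(books, outcome)
```

A lower weighted impurity means purer child nodes. A full CART implementation would apply this search recursively over all predictors and then prune the resulting tree.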

Related works

Prior research on educational data mining and learning analytics has explored multiple prediction tasks, which can be grouped into four main themes: (1) prediction of optimal learning materials and methods, (2) academic performance prediction, (3) dropout prediction and early warning systems, and (4) student classification and success factors.

Prediction of optimal learning materials and methods

Several studies have aimed to identify the most suitable study resources or learning strategies for students. For example, the study in ref. 37 used the k-NN algorithm to determine the appropriate level of study material based on students’ pretest performance, while ref. 38 employed decision trees to recommend study methods using demographic and academic variables. Similarly, ref. 39 leveraged various classification algorithms to predict how socio-demographic factors influence learning motivation. These works highlight the potential of machine learning in tailoring instruction to individual needs but often rely on relatively narrow sets of features and lack cross-validation across diverse contexts.

Academic performance prediction

A substantial body of research has focused on predicting students’ academic success using demographic, behavioral, and performance data. Approaches include decision trees, support vector machines, artificial neural networks, and ensemble methods40,41,42,43,44,45,46,47,48,49,50,51,52. For example, the study in ref. 41 compared multiple algorithms for predicting performance in Portuguese and mathematics courses, while ref. 47 conducted a large-scale comparison of supervised learning techniques for exam prediction. Although these studies demonstrate strong predictive capabilities, many rely on single-institution datasets, limiting generalizability.

Dropout prediction and early warning systems

Dropout prediction has been addressed using models such as Naïve Bayes, Random Forests, and logistic regression53,54,55,56,57,58,59,60. Large-scale efforts such as ref. 55, which analyzed over 165,000 students, have identified behavioral indicators such as attendance and engagement as strong predictors of attrition. Early warning systems57,58,59 integrate such predictors to intervene before dropout occurs. However, challenges remain in adapting models to different educational contexts and addressing class imbalance in dropout versus retention cases.

Student classification and success factors

Other works focus on classifying students into performance categories or identifying key determinants of success61,62,63,64,65,66. For instance, the study in ref. 59 used Elastic Net and Random Forest to rank socio-economic and demographic variables by importance, while ref. 55 built classification models for over 10,000 students using a combination of decision trees and neural networks. These studies contribute valuable insights into the drivers of student performance but vary widely in methodological rigor and in their handling of multicollinearity among features.

Summary and research gap

Across these themes, prior work demonstrates the versatility of machine learning in educational contexts but also reveals common limitations:

- Limited external validation, often relying on single datasets.
- Insufficient handling of class imbalance and multicollinearity, which can distort feature importance.
- Lack of sociocultural and psychometric justification for certain variables.

The present study addresses these gaps by integrating multiple algorithms, applying data balancing techniques, and providing a psychometric rationale for feature selection, thereby enhancing both the robustness and interpretability of the model.

Method

The aim of this study is to develop a new classification model based on machine learning algorithms to assess student performance. The questionnaire used in this study was developed in five steps. First, the questionnaire was designed and planned, and expert opinions were obtained. A pretest was then conducted with 30 students. After the experts were consulted again, the questionnaire was finalized and the data were collected. Finally, the data were analyzed and reported67. After lower secondary (middle) school, students in Turkey take an exam prepared by the Ministry of Education. Students who achieve a high score in this exam go on to upper secondary schools known as “qualified schools”. Students who do not score enough points for these schools are enrolled in the nearest schools in their region.

Prior to data collection, a power analysis was conducted using G*Power 3.1 to determine the minimum required sample size for the planned analyses. Assuming a small-to-medium effect size of 0.15, an alpha level of .05, a power of .95, and 84 predictor variables, the required sample size was estimated to be 410 participants. Thus, the final sample of 613 students exceeded the required threshold, providing adequate power to detect statistically significant relationships among the study variables. Of these students, 307 attended the qualified schools and 306 attended the nearest schools. Data were collected from homogeneous groups as part of preliminary studies and experimental research processes. The researchers informed the students of the importance of the study, the survey form was then sent to the students’ Internet accounts, and the data were collected via Google Forms. No missing data were found when the data were reviewed. When selecting the sample, the diversity of socioeconomic and geographical conditions among the participating students was considered. Data were collected from students living in the same urban conditions in five central districts of Antalya. The questionnaire was administered in schools that admit students on the basis of either the High School Entrance Examination score or the student’s GPA. Students attending schools that admit on the basis of exam scores were classified as successful, while those attending schools that admit on the basis of GPA were classified as unsuccessful. Predictions were then made with the C5.0, CART, Support Vector Machine and Random Forest algorithms, which are known to work well with categorical data such as those obtained from the students.

Based on the analysis with the Random Forest algorithm, which made the most successful predictions, a new classification model consisting of the main variables that influence student success was proposed. The proposed model is intended to contribute to formative assessments and can be used as an early warning system, so that a student’s likely failure can be identified early and turned into success. In light of these studies, the research questions are as follows:

Question 1: What are the most important variables influencing student success?

Question 2: Which machine-learning algorithm is the most successful in predicting student performance according to the assessment criteria?

Question 3: Can an early warning system be created using a model for student failure?

Research model

The questionnaire covered variables such as “parents’ level of education,” “amount of monthly income,” “number of books owned,” “learning method,” “student’s attitude towards classes,” “time spent on sports,” “interest in robotics and coding,” “mother’s and father’s occupation,” “the student’s physical equipment,” “the climate of the school where the student is located,” and “the qualification of the teachers in the school,” as well as the students’ life perspective67. The “Boruta” package68 in R was used to find the most important variables that affect student performance, based on the survey data from a total of 613 students. The variables used are listed in Table 1. In this way, the most important variables affecting student achievement were determined, answering the first research question.

The second research question asked which algorithm was the most successful in predicting student achievement. Because the questionnaire responses were categorical, the Random Forest, Support Vector Machine, CART and C5.0 machine learning algorithms, which are known to work well with categorical data, were used. First, the data were divided into an 80% training set and a 20% test set. After the algorithms were run, tables were created based on the evaluation criteria of the models. To obtain more reliable accuracy estimates, cross-validation was performed, and the algorithm that made the most successful predictions was identified.

The third research question asked whether an early warning system could be built in which student failure is detected early and deficiencies are addressed. For this purpose, models were developed from the selected variables, and the item coefficients were obtained from the algorithm that made the most successful predictions. Based on these coefficients, students were classified as successful or unsuccessful. For successful students, the sum of the coefficients corresponding to their answers plus the constant of 0.076 was above 0.50; for unsuccessful students, it was below this value. This rule was used to build an application that detects deficiencies and serves as an early warning system. The visual flow of this study is shown in Fig. 1.

Fig. 1. Visual flow of the research process.

Figure 1 is a flow diagram of the research process, showing the sequential stages from data collection to model interpretation. The steps are: (1) collection of raw student performance data from institutional databases, (2) preprocessing involving cleaning, missing value handling, and encoding of categorical variables, (3) application of feature selection to identify the most relevant predictors, (4) model training with the C5.0, CART, SVM, and Random Forest algorithms, (5) model evaluation using cross-validation and performance metrics, and (6) interpretation of predictive outputs to inform early intervention strategies. The diagram illustrates the integrated and systematic approach adopted to ensure transparency, reproducibility, and actionable educational insights.

Research sample

Data were collected from 613 students studying in the central districts of Antalya Province, Turkey. Purposive sampling was used. The study can be divided into three phases.

In the first phase, a comprehensive investigation was conducted to identify the variables that influence student achievement. A survey questionnaire was developed based on pilot applications and expert opinions. The most important variables were identified with an R package from the questionnaire data, and the analysis continued with these variables.

In the second phase, the data were divided into training and test sets based on the identified variables. The C5.0, CART, Support Vector Machine and Random Forest algorithms were used to determine the percentage of successful predictions based on specific evaluation criteria. Accuracy was further assessed using cross-validation. The model was created using the algorithm that made the most successful predictions.

In the third phase, the coefficients of the model created using the most successful algorithm were determined. Students whose score was above a certain value were labelled as successful, and students whose score fell below it were labelled as unsuccessful. An early warning system can be established by identifying the deficits of unsuccessful students.

Data analysis

Before model training, we addressed two potential issues that can influence feature importance in ensemble methods such as Random Forest: multicollinearity and class imbalance. To detect multicollinearity, we computed the Variance Inflation Factor (VIF) for all predictor variables and excluded those with VIF values greater than 5, following established guidelines69. Additionally, we examined the correlation matrix and removed one variable from each pair with an absolute correlation coefficient above 0.85 to minimize redundancy. Regarding class imbalance, we assessed the distribution of the target classes and found that the minority class represented less than 30% of the total sample. To mitigate this imbalance, we applied the Synthetic Minority Oversampling Technique (SMOTE)70 to the training dataset. This procedure generated synthetic examples for the minority class, with the aim of improving classifier performance and stabilizing feature importance rankings.

Then, the “Boruta” package in R was used to identify the most important variables influencing student success. Boruta, which can be used together with any of the classification algorithms, was run in R for feature selection. By adding randomness to the system, Boruta can reduce the misleading effects of random fluctuations and correlations arising from random samples. Its technical features and its consideration of algorithm accuracy when the number of variables is at an optimal level are among the reasons why this package was preferred71. To determine feature importance, a shuffled copy (shadow feature) of every feature was first created. A random forest classifier was then trained on the extended dataset, and the importance of each feature was measured by the mean decrease in accuracy. At each iteration, the algorithm checked whether a real feature had higher importance than the best of the shadow features (shadowMax) and removed the features deemed unimportant. The algorithm stops when every feature has been either confirmed or rejected, or when the maximum number of runs is reached. For the dataset used in this study, 54 of the 84 features were eliminated by this feature selection method, and 30 features were retained. The values for the feature selection are shown in Fig. 2. Information on the data and the determination of the variables is shown in Table 2.
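The correlation-based redundancy check at the start of this section (dropping one variable from each pair with an absolute correlation above 0.85) can be sketched in plain Python. This is an illustrative sketch rather than the authors' R code: the column names and values are hypothetical, and keeping the first-encountered variable of a correlated pair is an assumption, since the text does not say which member of a pair was removed.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length numeric lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0  # a constant column is treated as uncorrelated
    return sxy / sqrt(sxx * syy)

def drop_redundant(columns, threshold=0.85):
    """Keep features in order; skip any whose |r| with a kept feature exceeds threshold."""
    kept = []
    for name in columns:
        if all(abs(pearson(columns[name], columns[k])) <= threshold for k in kept):
            kept.append(name)
    return kept

# Hypothetical ordinal-coded survey items (not the study's data).
data = {
    "mother_edu": [1, 2, 3, 4, 5, 3],
    "father_edu": [1, 2, 3, 4, 5, 4],   # nearly duplicates mother_edu
    "sleep_hours": [7, 5, 8, 6, 7, 6],
}
kept = drop_redundant(data)
```

Here `father_edu` is dropped because its correlation with `mother_edu` is about 0.96, while `sleep_hours` is retained. Pearson correlation on ordinal codes is itself a simplification; a rank-based coefficient may be more appropriate for questionnaire data.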

Fig. 2. Selecting independent variables.

Figure 2 presents the ranked importance of predictor variables in the trained Random Forest model, as determined by the mean decrease in accuracy. Each colored boxplot represents the distribution of importance scores across multiple trees in the forest, with green indicating the highest-contributing variables, yellow representing moderately important variables, red showing lower-importance variables, and blue representing the least influential ones. Variables at the top of the plot contribute the most to model performance, highlighting key predictors to prioritize in educational interventions.

To determine which algorithm makes the most accurate prediction, the data were first divided into an 80% training set and a 20% test set. The four algorithms were then run in R. The results, including the accuracy metrics on the training set for Random Forest, CART, SVM and C5.0, are listed in Table 3.

Table 3 shows that the accuracy achieved by the algorithms for the training dataset was 76.62% for Random Forest, 73.11% for SVM, 81.1% for C5.0, and 81.67% for the CART algorithm. It can be seen that the CART algorithm has the highest precision value, Random Forest algorithm has the highest recall value, and CART algorithm has the highest F-score and kappa value. Once the data were obtained, the metrics of the test datasets for the classifiers were calculated. The metrics of the training and test datasets were compared. The comparison results are presented in Table 4.

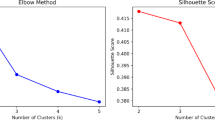

Table 4 shows that the algorithms achieved a test-set accuracy of 73.7% for Random Forest, 68.8% for SVM, 75.4% for C5.0, and 65.5% for CART. The C5.0 algorithm has the highest values for precision, recall, F-score and kappa, making it the most successful model on the test-set metrics. To ensure a robust performance evaluation, we employed a 10-fold cross-validation strategy for all models. The dataset was randomly partitioned into ten equal folds; in each iteration, nine folds were used for training and one for testing. This process was repeated ten times to average out the variance due to data partitioning. This method provides a more reliable estimate of model generalizability than a single train-test split, which can be subject to sampling bias. The accuracy rates obtained using this method are shown in Figs. 3, 4, 5 and 6.
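The 10-fold partitioning described above can be sketched in a few lines of Python. The helper names (`k_fold_indices`, `cross_validated_score`) and the `fit_and_score` callback are hypothetical, and the sketch is not the R procedure used in the study; it only shows how each sample ends up in the test fold exactly once.

```python
import random

def k_fold_indices(n_samples, k=10, seed=0):
    """Yield (train, test) index lists for k-fold cross-validation."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)        # random, reproducible partition
    folds = [indices[i::k] for i in range(k)]   # k folds of near-equal size
    for i, test in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test

def cross_validated_score(n_samples, fit_and_score, k=10, seed=0):
    """Average a user-supplied score over the k train/test splits.

    fit_and_score(train, test) is assumed to train a model on the train
    indices and return its score (e.g. accuracy) on the test indices.
    """
    scores = [fit_and_score(train, test)
              for train, test in k_fold_indices(n_samples, k, seed)]
    return sum(scores) / len(scores)

# With 613 students, the ten folds contain 61 or 62 students each.
splits = list(k_fold_indices(613, k=10))
```

Because 613 is not divisible by ten, the folds are only approximately equal; any model-fitting routine can be plugged in through `fit_and_score`.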

Fig. 3. Random Forest algorithm cross-validation results.

Figure 3 plots cross-validation accuracy as a function of the number of randomly selected predictors in the Random Forest model. Accuracy peaks when three predictors are used, indicating an optimal balance between model complexity and predictive performance. The gradual plateau beyond three predictors suggests that adding more variables yields diminishing returns, reinforcing the importance of feature selection in preventing overfitting and enhancing generalizability.

Fig. 4. Support Vector Machine algorithm cross-validation results.

Figure 4 shows the relationship between model accuracy and the cost parameter in SVM. Accuracy peaks at low cost values (around 0–0.2) and gradually declines as the cost increases, indicating that overly high cost values may lead to reduced generalization performance.

Fig. 5. CART algorithm cross-validation results.

Figure 5 shows the effect of the complexity parameter on classification accuracy under cross-validation. Accuracy increased up to a complexity parameter of approximately 0.023, after which performance declined steadily, indicating potential overfitting at higher complexity values.

Fig. 6. C5.0 algorithm cross-validation results.

Figure 6 shows how the cross-validation accuracy of the C5.0 algorithm changes with the number of boosting iterations. Accuracy remained higher without winnowing across all iterations.

The cross-validation results, which give a more reliable estimate of each algorithm’s performance than a single split, show that the Random Forest algorithm achieved the highest rate of correct classification. A comparison of the metrics of the algorithms after cross-validation is presented in Table 5.

When all metrics were analyzed after cross-validation, the Random Forest algorithm achieved the highest values for accuracy, precision, recall, F-score, and kappa, although it was not the most successful on the training and test sets. To statistically compare the performances of the four algorithms, we conducted a Friedman test with a significance level of α = 0.05. For post hoc analysis of pairwise differences in performance, the Nemenyi test was planned.
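As an illustration of how a Friedman test compares several classifiers, the following Python sketch computes the test statistic from per-fold accuracies. The fold accuracies are invented for the example and are not the study's results, and the statistic is the basic form without a correction for ties.

```python
def average_ranks(row):
    """Rank the values in a row from 1 (lowest), averaging the ranks of ties."""
    order = sorted(range(len(row)), key=lambda i: row[i])
    ranks = [0.0] * len(row)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and row[order[j + 1]] == row[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1            # mean of 1-based positions i..j
        for t in range(i, j + 1):
            ranks[order[t]] = avg
        i = j + 1
    return ranks

def friedman_statistic(scores):
    """Friedman chi-square for n blocks (rows) and k treatments (columns)."""
    n, k = len(scores), len(scores[0])
    rank_sums = [0.0] * k
    for row in scores:
        for col, rank in enumerate(average_ranks(row)):
            rank_sums[col] += rank
    return 12.0 / (n * k * (k + 1)) * sum(r * r for r in rank_sums) - 3.0 * n * (k + 1)

# Hypothetical per-fold accuracies; columns: Random Forest, SVM, CART, C5.0.
fold_accuracy = [
    [0.74, 0.68, 0.69, 0.73],
    [0.75, 0.67, 0.68, 0.72],
    [0.73, 0.69, 0.67, 0.74],
    [0.76, 0.66, 0.70, 0.73],
    [0.74, 0.68, 0.69, 0.72],
]
chi_square = friedman_statistic(fold_accuracy)
CRITICAL_DF3_ALPHA05 = 7.815  # chi-square critical value for k - 1 = 3 df, alpha = 0.05
significant = chi_square > CRITICAL_DF3_ALPHA05
```

The chi-square approximation is rough for so few blocks. A significant Friedman result indicates only that at least one classifier differs; identifying which pairs differ requires a post hoc procedure such as the Nemenyi test.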

Results

As Table 5 shows, the training set accuracy of the CART algorithm was 81.67%, the test set accuracy was 65.2%, and the cross-validation accuracy was 68.5%. The training set accuracy of the C5.0 algorithm was 81.1%, the test set accuracy was 75.4%, and the cross-validation accuracy was 73.0%. The training set accuracy of the Random Forest algorithm was 72.26%, the test set accuracy was 73.4%, and the cross-validation accuracy was 74.3%. The training set accuracy of the SVM algorithm was 73.11%, the test set accuracy was 68.8%, and the cross-validation accuracy was 68.0%. The metrics obtained from the algorithms are shown in Fig. 7.

Fig. 7. Comparison of metrics of machine learning algorithms after cross-validation.

The evaluation of algorithm performance revealed that different models performed better on different subsets and metrics. Although the CART algorithm achieved the highest accuracy on the training dataset (81.67%), its performance declined markedly on the test dataset (65.5%), indicating potential overfitting. The C5.0 algorithm showed the best results on the test set, with the highest F1-score and kappa values. However, the Random Forest algorithm demonstrated the most balanced and consistent performance across all three stages: training, testing, and 10-fold cross-validation. This consistency, combined with its compatibility with Boruta-based feature selection, supports its selection as the most robust model for early warning purposes. To compare the performances of the four algorithms, we conducted a Friedman test, which revealed statistically significant differences among the classifiers (χ² = 26.96, p < 0.001). A full post hoc Nemenyi test was not performed, so pairwise differences were not formally tested; however, inspection of the accuracy values together with the significant Friedman result suggests that the Random Forest model outperforms CART and SVM. These findings indicate that the differences among the algorithms are not only practical but also statistically meaningful. The estimation coefficients of the model created using the Random Forest algorithm were calculated, and the results are presented in Table 6.

A new prediction model

The proposed model is based on the prediction coefficients in Table 6, applied to the answers chosen by the students, and is presented in Eq. (1).

$$Y = 0.076 + \sum_{i=1}^{n} \mathrm{Coeff}_i \times \mathrm{Item}_i \qquad (1)$$

where $Y$ is the predicted student outcome, 0.076 is the constant term, $\mathrm{Item}_i$ is the $i$th item (e.g., parental education, cultural possessions), $\mathrm{Coeff}_i$ is the weight (coefficient) of the $i$th item, and $n$ is the total number of items selected by Boruta.

If the total score obtained from the answers was greater than or equal to 0.50, the student was classified as successful; otherwise, the student was classified as unsuccessful.
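The decision rule above can be written as a short Python sketch. Only the constant 0.076 and the threshold 0.50 come from the text; the item names, coefficient values and student responses below are hypothetical placeholders (the actual coefficients are those reported in Table 6), and the score is assumed to be the constant plus the coefficient-weighted sum of item values.

```python
CONSTANT = 0.076   # constant term reported in the text
THRESHOLD = 0.50   # classification cut-off reported in the text

def predict_outcome(item_values, coefficients,
                    constant=CONSTANT, threshold=THRESHOLD):
    """Return (score, label) for one student.

    item_values and coefficients map item names to numbers; the score is
    the constant plus the coefficient-weighted sum of the item values.
    """
    score = constant + sum(coefficients[name] * value
                           for name, value in item_values.items())
    label = "successful" if score >= threshold else "unsuccessful"
    return score, label

# Hypothetical coefficients and responses, for illustration only.
coefficients = {
    "parental_education": 0.12,
    "books_at_home": 0.10,
    "reading_self_concept": 0.08,
}
student_a = {"parental_education": 2, "books_at_home": 2, "reading_self_concept": 1}
student_b = {"parental_education": 1, "books_at_home": 1, "reading_self_concept": 1}

score_a, label_a = predict_outcome(student_a, coefficients)
score_b, label_b = predict_outcome(student_b, coefficients)
```

In an early warning setting, the items that contribute least to a student's score point to the deficits that an intervention could target.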

The proposed model was applied to 30 students who would take the exam the following year, and the proportion of correct school-type predictions was similar to the accuracy of the Random Forest algorithm, which provides an initial validation of the model. The resulting confusion matrix is presented in Table 7.

The metrics listed in Table 8 were obtained from the data in Table 7.
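For readers who want to see how such metrics follow from a two-class confusion matrix, a minimal Python sketch is given here. The counts in the example are hypothetical and are not the values in Table 7; the function assumes that none of the denominators is zero.

```python
def classification_metrics(tp, fp, fn, tn):
    """Accuracy, precision, recall, F1 and Cohen's kappa from a 2x2 confusion matrix."""
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    # Agreement expected by chance, from the row and column marginals.
    expected = (((tp + fp) / total) * ((tp + fn) / total)
                + ((fn + tn) / total) * ((fp + tn) / total))
    kappa = (accuracy - expected) / (1 - expected)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1, "kappa": kappa}

# Hypothetical counts: 40 true positives, 10 false positives,
# 20 false negatives, 30 true negatives.
metrics = classification_metrics(tp=40, fp=10, fn=20, tn=30)
```

Kappa corrects accuracy for the agreement expected by chance, which is why it is reported alongside accuracy when comparing classifiers.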

Tables 7 and 8 show that the results of the validation set were consistent with the prediction metrics of the model proposed in this study.

Discussion

This study sought to answer these three research questions. To answer the first question, we sought to determine the variables that influence student performance. First, a literature review was conducted and an item pool of approximately 300 variables that affected student achievement was created. When developing the questionnaire, the number of items was reduced to 84 based on prior use and expert opinion. Data were collected using the developed questionnaire, and the 30 most important variables influencing student success were identified through the Random Forest algorithm. These variables covered a wide range of factors, including social interaction (e.g., participation in school activities, time with friends, use of social media), family and socioeconomic background (e.g., parental education and occupation, family income, cultural resources), and personal characteristics and habits (e.g., self-perception, problem-solving skills, physical activity, sleep, and study routines). The Random Forest model, with Boruta feature selection, highlighted several high-impact predictors. Parental education level emerged as one of the strongest, reflecting the role of family academic support. Cultural possessions at home, such as books and artworks, also indicated the richness of the educational environment. In addition, self-concept of reading was found to be critical, showing that students who believe in their reading ability tend to achieve higher outcomes. Conversely, the perceived difficulty of the PISA test was negatively associated with success, consistent with cognitive load theory: students who find the test overly difficult are more likely to underperform due to stress or low self-efficacy. These findings highlight that academic success is influenced by both cognitive and sociocultural factors. They also suggest that non-test indicators may be powerful tools for supporting and improving student performance. 
The patterns observed in this study can be interpreted using established theoretical frameworks. For instance, the predictive power of variables such as parental education, number of books at home, and cultural possessions aligns with Bourdieu’s17 theory of cultural capital. According to this perspective, students raised in environments rich in cultural and intellectual resources are more likely to develop dispositions and competencies valued by formal education systems. Moreover, variables such as school climate and teacher-student interaction can be explained within Bronfenbrenner’s18 ecological systems theory, which emphasizes the influence of proximal environmental systems on child development. These theoretical perspectives help clarify why non-academic factors, such as home resources, reading enjoyment, and perception of difficulty, emerged as strong predictors in machine learning models. At the same time, ref. 72 suggests that proficiency in the language of instruction is closely tied to students’ prior linguistic competence. Students who develop strong literacy skills in their home language are better positioned to transfer these skills to a second language, thereby enhancing their performance in reading-intensive subjects.

From a psychometric perspective, differences in achievement linked to cultural and linguistic backgrounds may also reflect differential item functioning (DIF), where certain test items are more or less difficult for specific groups due to cultural familiarity rather than true differences in ability73. These factors highlight the importance of considering measurement invariance in educational assessments to ensure that observed differences are not solely artifacts of the assessment process but reflect genuine variations in underlying competencies.

The study in ref. 74 identified factors affecting student success in four main areas: Communication, Learning Opportunities, Appropriate Supervision, and Family Stress. That study focused on the extracurricular factors that affect students’ academic success. Previous work36,75,76 sought to determine the factors that influence the academic success of 600 9th grade students (300 girls and 300 boys) and found that socioeconomic factors had a significant effect on student achievement. In the present study, the socioeconomic status of the students was likewise found to have a significant impact on student achievement; however, the most influential factor appears to be the number of books owned by the student. The study in ref. 77 analyzed data on students attending public schools in Brazil, collected before the start of the school year and at the beginning of the second semester. According to its results, the most important variables for predicting student performance were grades and attendance. In addition, school, students’ age, and the environment of the students were important variables for predicting student stress.

The second research question concerned which machine learning algorithm best predicted student success. Because the answers to the questionnaire items were categorical, analyses were performed with the CART, C5.0, SVM, and Random Forest algorithms, which are known to work well with categorical data78. The preprocessing steps taken to handle multicollinearity and class imbalance were critical to ensuring the robustness of the Random Forest feature importance results. By removing highly correlated or collinear variables, we reduced redundancy and prevented inflated importance scores for predictors that share substantial variance79. Balancing the target class distribution using SMOTE further contributed to model stability, as imbalanced data can bias the algorithm toward the majority class, thereby skewing the ranking of predictive features. The fact that the overall ranking of key predictors remained consistent before and after these adjustments strengthens the confidence in our findings and suggests that the identified influential variables are not artifacts of data imbalance or collinearity. Of the 613 records, 80% were assigned to the training set and 20% to the test set; after the models were trained, predictions were made for the test set. In these predictions, the Random Forest, SVM, C5.0, and CART algorithms achieved accuracy rates of 75.61%, 68.80%, 68.47%, and 65.25%, respectively. Cross-validation was then performed to obtain a more reliable estimate of accuracy. The cross-validation results showed that the Random Forest, C5.0, SVM, and CART algorithms had accuracies of 74.3%, 73%, 69.2%, and 59.5%, respectively. Although the Random Forest algorithm was ultimately selected as the most suitable model for early warning purposes, this decision was not based solely on a single performance metric.
In fact, while CART exhibited the highest accuracy on the training dataset and C5.0 outperformed the others in test set metrics such as F1-score and kappa, Random Forest demonstrated the most balanced and consistent performance across all evaluation stages, including cross-validation. Its relatively low risk of overfitting, stable generalization ability, and compatibility with the Boruta feature selection method make it the most robust candidate. These trade-offs were carefully considered to ensure not only predictive power, but also model stability for practical implementation. Although the Random Forest algorithm achieved the highest overall performance in terms of accuracy and consistency, it is often criticized for its lack of interpretability. This can be a significant limitation in educational settings, where transparency and the ability to trace how decisions are made are crucial. To address this, we complemented the Random Forest results with Boruta-based variable importance analysis, which allows educators and policymakers to understand which features most strongly influence predictions. Moreover, despite their lower predictive power, models such as CART and C5.0 offer decision rules and visual pathways that may be more appropriate when interpretability is of primary concern. Thus, model choice should balance predictive performance with transparency based on the application context.
The study in ref. 80 estimated student success using decision tree and random forest algorithms based on data on students’ grades, demographic characteristics, and marital status. In that analysis, the decision tree algorithm was found to be more successful than the random forest algorithm, with an accuracy rate of 66.9%. The study in ref. 81 compared the accuracy of the K-Nearest Neighbour and Support Vector Machine algorithms on data from 395 students. The Support Vector Machine algorithm had an accuracy of 96%, whereas the K-Nearest Neighbour algorithm had an accuracy of 95%. The study in ref. 82 used logistic regression, k-nearest neighbor, support vector machine, XGBoost and Naive Bayes algorithms. In that study, conducted in Portugal using data from school grades and surveys, the support vector machine algorithm was found to be the most accurate estimation algorithm, with a prediction accuracy of 96.89%. In the present study, by contrast, the random forest algorithm exhibited the highest accuracy.
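The comparison above relies on accuracy, F1-score and kappa, all of which can be derived from a confusion matrix. The following Python sketch shows one way to compute them for a binary successful/unsuccessful task. It is illustrative only: the class and function names are assumptions, and the counts are hypothetical values chosen to match the approximate size of a 20% test split of 613 records, not results from the study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionMatrix:
    """Counts for a binary task in which 'successful' is the positive class."""
    tp: int  # successful students predicted as successful
    fp: int  # unsuccessful students predicted as successful
    fn: int  # successful students predicted as unsuccessful
    tn: int  # unsuccessful students predicted as unsuccessful

    @property
    def total(self) -> int:
        return self.tp + self.fp + self.fn + self.tn


def accuracy(cm: ConfusionMatrix) -> float:
    """Share of all predictions that are correct."""
    return (cm.tp + cm.tn) / cm.total


def f1_score(cm: ConfusionMatrix) -> float:
    """Harmonic mean of precision and recall for the positive class."""
    denominator = 2 * cm.tp + cm.fp + cm.fn
    return 2 * cm.tp / denominator if denominator else 0.0


def cohen_kappa(cm: ConfusionMatrix) -> float:
    """Agreement between predictions and labels, corrected for chance."""
    n = cm.total
    observed = (cm.tp + cm.tn) / n
    # Chance agreement is estimated from the row and column totals.
    expected = (
        (cm.tp + cm.fp) * (cm.tp + cm.fn)
        + (cm.tn + cm.fn) * (cm.tn + cm.fp)
    ) / (n * n)
    return (observed - expected) / (1 - expected) if expected != 1 else 0.0


# Hypothetical counts for a 123-record test set (not taken from the study).
example = ConfusionMatrix(tp=50, fp=15, fn=16, tn=42)

if __name__ == "__main__":
    print(f"accuracy = {accuracy(example):.3f}")
    print(f"F1-score = {f1_score(example):.3f}")
    print(f"kappa    = {cohen_kappa(example):.3f}")
```

Because kappa subtracts the agreement expected by chance, two models with similar accuracy can differ noticeably in kappa, which is one reason to compare several metrics rather than accuracy alone.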

The third question aimed to predict student failure at an early stage by calculating prediction coefficients with the Random Forest model. The coefficients corresponding to the responses chosen by a student were summed together with a constant value of 0.076, and a total score above 0.50 was classified as successful. Based on this rule, a simple program was developed in Excel: the student’s total score is the sum of the constant and the coefficients for the student’s responses to the variables, and the program predicts whether the student will be successful or unsuccessful. Furthermore, because the program shows under what conditions a student can be successful, the deficiencies of a new student entering the system can be identified and addressed, so that the system serves as an early warning system. It is assumed that the coefficient predictions can provide such a system with additional information.
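The scoring rule described above can be sketched in a few lines of Python. Only the constant (0.076) and the threshold (0.50) come from the text; the variable names, response options and coefficient values below are hypothetical placeholders, and the study's own program was built in Excel, so this is an illustration of the rule rather than the authors' implementation.

```python
from typing import List, Mapping

CONSTANT = 0.076   # constant value reported in the text
THRESHOLD = 0.50   # total scores above this value are classed as successful

# Hypothetical coefficients keyed by (variable, chosen response).
# These values are placeholders for illustration, not the study's coefficients.
COEFFICIENTS = {
    ("parental_education", "university"): 0.120,
    ("parental_education", "primary"): -0.040,
    ("books_at_home", "more_than_100"): 0.150,
    ("books_at_home", "fewer_than_10"): -0.060,
    ("reading_self_concept", "high"): 0.200,
    ("reading_self_concept", "low"): -0.090,
}


def total_score(responses: Mapping[str, str]) -> float:
    """Sum the constant and the coefficient of each chosen response."""
    return CONSTANT + sum(
        COEFFICIENTS.get((variable, choice), 0.0)
        for variable, choice in responses.items()
    )


def predict_success(responses: Mapping[str, str]) -> bool:
    """Apply the 0.50 decision threshold to the total score."""
    return total_score(responses) > THRESHOLD


def risk_factors(responses: Mapping[str, str]) -> List[str]:
    """List the variables whose chosen response lowers the score."""
    return sorted(
        variable
        for variable, choice in responses.items()
        if COEFFICIENTS.get((variable, choice), 0.0) < 0
    )


if __name__ == "__main__":
    student = {
        "parental_education": "primary",
        "books_at_home": "fewer_than_10",
        "reading_self_concept": "high",
    }
    print(round(total_score(student), 3), predict_success(student))
    print(risk_factors(student))
```

Listing the responses with negative weights is one simple way to turn the prediction into an early warning: it points to the conditions that would need to change for the predicted outcome to improve.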

On the other hand, certain coefficients, such as the negative weight associated with PhD-level parental education, appear counterintuitive when compared to the prior literature. This anomaly can be explained by multiple factors. First, the sample size for these subgroups was relatively small, possibly leading to unstable predictions. Second, contextual dynamics, such as the pressure imposed by highly educated parents or their limited availability due to professional obligations, could inadvertently hinder student outcomes. We advise interpreting these specific coefficients with caution, as they may not reflect universal causal relationships, but rather localized or sample-specific effects.

The study was conducted using data collected from 613 students in the central district of Antalya. More generalizable results on students’ achievement levels could be obtained with a larger and more varied sample. Because the data were collected online during the pandemic, it is not known whether the questions were answered candidly, and the extent to which distance learning affected student success is also unknown. Both issues merit more comprehensive analysis. Working with other machine learning algorithms could clarify which classification model evaluates student performance most accurately. The dataset could also be divided into three parts, so that comparing the accuracy of the training, test, and validation sets would allow the results to be assessed without cross-validation. The significance of the identified factors can be verified by collecting the data again and by expanding the set of factors that influence student success. Students’ past performance could be assessed alongside the results of this study through a logistic regression analysis of the marks obtained in oral and written examinations, so that students’ future achievement could be examined in two ways. If software is developed on the basis of this study, students could complete the questionnaire online and receive information about their predicted success. In the case of predicted failure, the reasons could be analyzed so that the software serves as an early warning system, and a tailored plan could be created to help the student succeed. Such an approach may prove more effective in this area.
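As an illustration of the three-part division mentioned above, the following Python sketch splits record indices into training, test and validation sets while keeping class proportions similar in each part. The 60/20/20 proportions, the function name and the label counts are assumptions made for the example; only the total of 613 records comes from the study.

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def stratified_three_way_split(
    labels: Sequence[str],
    fractions: Tuple[float, float, float] = (0.6, 0.2, 0.2),  # assumed proportions
    seed: int = 0,
) -> Tuple[List[int], List[int], List[int]]:
    """Return (train, test, validation) index lists with similar class mix."""
    rng = random.Random(seed)

    # Group record indices by class label.
    by_class: Dict[str, List[int]] = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    train: List[int] = []
    test: List[int] = []
    validation: List[int] = []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = round(len(indices) * fractions[0])
        n_test = round(len(indices) * fractions[1])
        train.extend(indices[:n_train])
        test.extend(indices[n_train:n_train + n_test])
        # Whatever remains in this class goes to the validation set.
        validation.extend(indices[n_train + n_test:])
    return train, test, validation


# Hypothetical label vector with the same size as the study sample (613).
labels = ["successful"] * 340 + ["unsuccessful"] * 273
train, test, validation = stratified_three_way_split(labels)

if __name__ == "__main__":
    print(len(train), len(test), len(validation))
```

Splitting within each class, rather than over the whole dataset at once, keeps the share of successful and unsuccessful students comparable across the three parts, so that differences in accuracy are less likely to reflect differences in class balance.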

The model proposed in this study is expected to support teachers in the formative assessment process and, through the actions it prompts, to help improve students’ academic performance. By enabling early identification of academic failure and timely intervention, it may also offer education policymakers a solution that is efficient in terms of cost and resources. The model is further expected to help teachers meet students’ needs more quickly and to promote equal opportunities in the classroom, since a student who is struggling academically can be supported during the learning process rather than after it. From a decision-maker’s perspective, integrating the system into educational processes could allow a more efficient use of educational resources and reduce failure rates through timely interventions. As the system is strengthened by data analysis and teacher feedback, it could contribute to the modernization of education policy and the establishment of more effective decision-making mechanisms.

Study limitations

This study has some limitations. First, the study was limited to data from students attending qualifying schools and schools closest to their homes in the central districts of a single province. The data collection process can be expanded by including data from all the provinces. In addition, student responses to the questionnaire developed in this study were limited by their ability to use digital resources.

This study collected data only from first-year high school and 8th grade middle school students; future work could therefore examine other grade levels. In addition, the data were self-reported, which is a limitation inherent to the study design. Although this study focused on base-level algorithms for transparency and interpretability, future research may benefit from experimenting with meta-ensemble strategies, such as voting or stacking, which have the potential to integrate the strengths of multiple models and enhance predictive performance in educational early warning systems.

This study relied on self-reported data, which may have been influenced by social desirability bias or recall inaccuracies. In addition, the sample was drawn entirely from the urban districts of a single province in Turkey, which potentially limits the generalizability of the findings. Future research should aim to replicate the model using a more diverse, nationally representative sample and explore the inclusion of behavioral or administrative data sources to reduce response bias.

A key limitation of this study lies in its external validation process, which was conducted on a relatively small sample of only 30 students. Although this provided a preliminary indication of the model’s generalizability, the restricted sample size substantially limits the statistical power and the reliability of the validation outcomes. To strengthen the robustness and applicability of future research, we strongly recommend the adoption of more rigorous validation strategies, such as k-fold cross-validation, stratified sampling, or the use of independent datasets drawn from diverse regions and educational contexts. These approaches would better support generalizable conclusions and provide stronger evidence for the broader applicability of early warning systems in diverse educational settings.
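To make the effect of a 30-student validation sample concrete, the sketch below computes a Wilson score confidence interval for an accuracy estimate. The interval method is a standard choice introduced here for illustration, not a procedure reported in the study, and the count of 22 correct predictions is a hypothetical value.

```python
import math
from typing import Tuple


def wilson_interval(correct: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a proportion (z = 1.96 gives roughly 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = correct / n
    denominator = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denominator
    half_width = (
        z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denominator
    )
    return center - half_width, center + half_width


# Hypothetical outcome: 22 of 30 validation students classified correctly.
small_sample = wilson_interval(22, 30)
# The same accuracy observed on a hypothetical sample 100 times larger.
large_sample = wilson_interval(2200, 3000)

if __name__ == "__main__":
    print("n = 30:   %.3f to %.3f" % small_sample)
    print("n = 3000: %.3f to %.3f" % large_sample)
```

Under these assumed counts, the interval for 30 students spans roughly 0.56 to 0.86, so the validation result alone cannot distinguish a moderately accurate model from a highly accurate one; the same observed accuracy on a much larger sample yields a far narrower interval.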

Data availability

The datasets generated and analyzed during the current study are available from the corresponding author upon reasonable request.

References

Hastie, T. et al. The Elements of Statistical Learning: Data Mining, Inference and Prediction 2nd edn (Springer, 2009).

Alpaydin, E. Introduction To Machine Learning (MIT Press, 2014).

Agarwal, P. K., Bain, P. M. & Chamberlain, R. W. The value of applied research: retrieval practice improves classroom learning and recommendations from a teacher, a principal, and a scientist. Educational Psychol. Rev. 24 (3), 437–448. https://doi.org/10.1007/s10648-012-9210-2 (2012).

Baniata, L. H., Kang, S., Alsharaiah, M. A. & Baniata, M. H. Advanced deep learning model for predicting the academic performances of students in educational institutions. Appl Sci. 14 (5), 1963. https://doi.org/10.3390/app14051963 (2024).

Airlangga, G. Predicting student performance using deep learning models: A comparative study of MLP, CNN, BiLSTM, and LSTM with attention. Malcom J. 4 (4), 37–49. https://doi.org/10.57152/malcom.v4i4.1668 (2024).

Alnasyan, B. The power of deep learning techniques for predicting academic performance: A review of 46 studies (2019–2023). Discover Educ. 3 (1), 12. https://doi.org/10.1016/j.dise.2024.100123 (2024).

OECD. Trends Shaping Education 2022 https://doi.org/10.1787/6ae8771a-en (OECD Publishing, 2022).

Adekitan, A. I. & Noma-Osaghae, E. Data mining approach to predicting the performance of first year student in a university using the admission requirements. Educ. Inform. Technol. 24, 1527–1543. https://doi.org/10.1007/s10639-018-9839-7 (2019).

OECD. AI and the Future of Skills, Volume 1: Capabilities and Assessments, Educational Research and Innovation. https://doi.org/10.1787/5ee71f34-en (OECD Publishing, 2021).

Gamazo, A. & Martínez-Abad, F. An exploration of factors linked to academic performance in PISA 2018 through data mining techniques. Front. Psychol. 11, 575167. https://doi.org/10.3389/fpsyg.2020.575167 (2020).

Karakolidis, A., Pitsia, V. & Emvalotis, A. Examining students’ achievement in mathematics: a multilevel analysis of the programme for 2012 international student assessment (PISA) data for Greece. Int. J. Educ. Res. 79, 106–115. https://doi.org/10.1016/j.ijer.2016.05.013 (2016).

Pholphirul, P. Pre-primary education and long-term education performance: evidence from programme for international student assessment (PISA), in Thailand. J. Early Child. Res. 15, 410–432. (2016).

Malakeh, Z. et al. Correlation between psychological factors, academic performance, and social media addiction: Model-based testing. Behav. Inf. Technol. 41 (8), 1583–1595. https://doi.org/10.1080/0144929X.2021.1891460 (2022).

Feraco, T. et al. An integrated model of school students’ academic achievement and life satisfaction. Linking soft skills, extracurricular activities, self-regulated learning, motivation, and emotions. Eur. J. Psychol. Educ. 38, 109–130. https://doi.org/10.1007/s10212-022-00601-4 (2023).

Affuso, G. et al. Effects of teacher support, parental monitoring, motivation, and self-efficacy on academic performance over time. Eur. J. Psychol. Educ. 38, 1–23. https://doi.org/10.1007/s10212-021-00594-6 (2023).

Shahiri, A. M., Husain, W. & Rashid, N. A. A review on predicting student performance using data-mining techniques. Procedia Comput. Sci. https://doi.org/10.1016/j.procs.2015.12.157 (2015).

Bourdieu, P. The forms of capital. In (ed Richardson, J.) Handbook of Theory and Research for the Sociology of Education 241–258. (Greenwood, 1986).

Bronfenbrenner, U. The Ecology of Human Development: Experiments by Nature and Design (Harvard University Press, 1979).

Yadav, S., Bharadway, B. & Pal, S. Mining education data to predict student retention: A comparative study. Int. J. Comput. Sci. Inf. Secur. 10 (2), 113–117. (2012).

Adekitan, I. & Salau, O. Toward an improved learning process: the relevance of ethnicity to the data mining prediction of students’ performance. SN Appl. Sci. 2, 8. https://doi.org/10.1007/s42452-019-1752-1 (2020).

Lottering, R., Hans, R. & Lall, M. A machine-learning approach to identifying students at risk of dropout: A case study. Int. J. Adv. Comput. Sci. Appl. 11 (10), 417–422. https://doi.org/10.14569/IJACSA.2020.0111052 (2020).

Breiman, L., Friedman, J., Olshen, R. A. & Stone, C. J. Classification and Regression Trees 1st edn. https://doi.org/10.1201/9781315139470 (Chapman and Hall/CRC, 1984).

Witten, I. & Frank, E. Data Mining: Practical Machine Learning Tools and Techniques 2nd edn (Morgan Kaufmann Publishers, 2005).

Larose, D. Discovering Knowledge in Data: an Introduction To Data Mining (Wiley, 2005).

Loh, W. Classification and regression trees. WIREs Data Min. Knowl. Discov. 1 (January), 14–23 (2011).

Wu, X. & Kumar, V. Top Ten Algorithms in Data Mining (CRC Press, 2009).

Pujari, A. Data Mining Techniques 45 (Universities Press (India) Private Limited, 2008).

Vapnik, V. Statistical Learning Theory (John Wiley and Sons, Inc., 1998).

Chu, F., Jin, G. & Wang, L. Cancer diagnosis and protein secondary structure prediction using support vector machines. In (ed Wang, L.) Support Vector Machines: Theory and Applications 243–363. (Springer-Verlag, 2005).

Fedorovici, L. & Dragan, F. Comparison between a neural network and an SVM and Zernike moments based blob recognition modules. 6th IEEE International Symposium on Applied Computational Intelligence and Informatics (SACI). (Timisoara, 2009).

Auria, L. & Moro, R. A. Support Vector Machines (SVM) as a Technique for Solvency Analysis (DIW Berlin, 2008).

Breiman, L. Random forests. Mach. Learn. 45, 5–32. https://doi.org/10.1023/A:1010933404324 (2001).

Shih, B. Y. & Lee, W. I. Application of the nearest neighbor algorithm to create an adaptive online learning system. In The 31st Annual Frontiers in Education Conference. Impact on Engineering and Science Education. Conference Proceedings (cat. No. 01CH37193) 1 T3F-10. (IEEE, 2001).

Lünich, M. Explainable artificial intelligence for academic performance prediction in higher education. In Proceedings of the 2024 ACM Conference on Fairness, Accountability, and Transparency 1–12. https://doi.org/10.1145/3616415.3623301 (ACM, 2024).

Kesgin, K., Kiraz, S., Kosunalp, S. & Stoycheva, B. Beyond performance: explaining and ensuring fairness in student academic performance prediction with machine learning. Appl. Sci. 15 (15), 8409. https://doi.org/10.3390/app15158409 (2025).

Saqr, M. Why explainable AI May not be enough: predictions and mispredictions in student performance models. Smart Learn. Environ. 11 (1), 14. https://doi.org/10.1186/s40561-024-00343-4 (2024).

Al-Radaideh, Q. A., Al-Shawakfa, E. M. & Al-Najjar, M. I. Mining students data using decision trees. The 2006 International Arab Conference on Information Technology. (2006).

Helal, S. et al. Predicting academic performance by considering student heterogeneity. Knowl. Based Syst. 161, 134–146. https://doi.org/10.1016/j.knosys.2018.07.042 (2018).

Minaei-Bidgoli, B. K. Predicting student performance: an application of data mining methods using an educational web-based system. In 33rd Annual Frontiers in Education 1, T2A-13. (IEEE, 2003).

Cortez, P. & Silva, A. M. G. Using data mining to predict secondary school student performance. In A. Brito, & J. Teixeira (Eds.), Proceedings of 5th Annual Future Business Technology Conference 5–12. (Porto, 2008).

Ramaswami, M. & Bhaskaran, R. A CHAID based performance prediction model in educational data mining. Int. J. Comput. Sci. Issues 7 https://doi.org/10.48550/arXiv.1002.1144 (2010).

Kabra, R. & Bichkar, R. Performance prediction of engineering students using decision trees. Int. J. Comput. Appl. 36 (11), 8–12 (2012).

Ahmed, A. & Elaraby, I. Data mining: A prediction for student performance using classification method. World J. Comput. Application Technol. 2 (2), 43–47 (2014).

Daud, A. & Abbas, F. Predicting student performance using advanced learning analytics. International World Wide Web Conference Committee (IW3C2). 415–421. (2017).

Lu, O. H. T. et al. Applying learning analytics for the early prediction of students’ academic performance in blended learning. J. Educational Technol. Soc. 21 (2), 220–232 (2018). http://www.jstor.org/stable/26388400

Tomasevic, N., Gvozdenovic, N. & Vraneš, S. Overview and comparison of supervised data mining techniques for student exam performance prediction. Comput. Educ. 143, 103676. https://doi.org/10.1016/j.compedu.2019.103676 (2019).

Aydoğdu, Ş. Predicting students’ final performance using artificial neural networks in online learning environments. Educ. Inform. Technol. 25 (3), 1913–1927 (2020).

Dabhade, P. et al. Educational data mining for predicting students’ academic performance using machine learning algorithms. Mater. Today: Proc. 47, 5260–5267 (2021).

Chen, Y. & Zhai, L. Comparative study of student performance prediction using machine learning. Educ. Inform. Technol. 28, 12039–12057. https://doi.org/10.1007/s10639-023-11672-1 (2023).

Hussain, S. & Khan, M. Q. Student-Performulator: Predicting students’ academic performance at secondary and intermediate level using machine learning. Ann. Data Sci. 10, 637–655. https://doi.org/10.1007/s40745-021-00341-0 (2023).

Guanin-Fajardo, J., Guaña-Moya, J. & Casillas, J. Predicting the academic success of college students using machine learning techniques. Data 9 (4), 60. https://doi.org/10.3390/data9040060 (2024).

Villar, A. & Andrade, C. Supervised machine-learning algorithms for predicting student dropout and academic success: A comparative study. Discovering Artif. Intell. 1–24. (2024).

Kotsiantis, S., Pierrakeas, C. & Pintelas, P. Preventing student dropout in distance learning systems using machine learning techniques. 7th International Conference on Knowledge-based Intelligent Information & Engineering Systems 2774 267–274. (Springer-Verlag, 2003).

Chung, J. Y. & Lee, S. Dropout early warning systems for high school students using machine learning. Child Youth Serv. Rev. 96, 346–353 (2019).

Aina, C., Baici, E., Casalone, G. & Pastore, F. Determinants of university dropout: A review of socio-economic literature. Socio Econ. Plann. Sci. 101102. (2021).

Chen, Y. et al. Identifying at-risk students based on a phased prediction model. Knowl. Inf. Syst. 62, 987–1003. https://doi.org/10.1007/s10115-019-01374-x (2020).

Plak, S., Cornelisz, I., Meeter, M. & van Klaveren, C. Early warning systems for more effective student counselling in higher education: evidence from a Dutch field experiment. High. Educ. Q. 76 (1), 131–152 (2022).

Yağcı, M. Educational data mining: prediction of students’ academic performance using machine learning algorithms. Smart Learn. Environ. 9, 11. https://doi.org/10.1186/s40561-022-00192-z (2022).

Alamgir, Z., Akram, H., Karim, S. & Wali, A. Enhancing student performance prediction via educational data mining on academic data. Inf. Educ. 23 (1), 1–24. https://doi.org/10.15388/infedu.2024.04 (2024).

Baradwaj, B. & Pal, S. Mining educational data to analyze student performance. Int. J. Adv. Comput. Sci. Appl. 2 (6) (2011).

Kabakchieva, D., Stefanova, K. & Kisimov, V. Analyzing university data to determine student profiles and predict performance. Proceedings of the 4th International Conference on Educational Data Mining (EDM 2011) 347–348. (Eindhoven, 2011).

Kaur, P., Singh, M. & Josan, G. S. Classification and prediction based data mining algorithms to predict slow learners in education sector. Procedia Comput. Sci. 57, 500–508 (2015).

Saa, A. A. Educational data mining & student performance prediction. Int. J. Adv. Comput. Sci. Appl. 7 (5) (2016).

Pallathadka, H. et al. Classification and prediction of student performance data using various machine learning algorithms. Mater. Today Proc. 80 (3), 3782–3785. https://doi.org/10.1016/j.matpr.2021.07.382 (2023).

Matz, S. C. et al. Using machine learning to predict student retention from socio-demographic characteristics and app-based engagement metrics. Sci. Rep. 13, 5705. https://doi.org/10.1038/s41598-023-32484-w (2023).

Czaja, R. & Blair, J. Designing Surveys https://doi.org/10.4135/9781412983877 (Pine Forge, 2005).

Escribano, R., Treviño, E., Nussbaum, M., Irribarra, D. T. & Carrasco, D. How much does the quality of teaching vary at under-performing schools? Evidence from classroom observations in Chile. Int. J. Educ. Dev. 72, 102125. https://doi.org/10.1016/j.ijedudev.2019.102125 (2020).

Kursa, M. B. & Rudnicki, W. R. Feature selection with the Boruta package. J. Stat. Softw. 36 (11), 1–13 (2010). http://www.jstatsoft.org/v36/i11/

O’Brien, R. M. A caution regarding rules of thumb for variance inflation factors. Qual. Quant. 41 (5), 673–690. https://doi.org/10.1007/s11135-006-9018-6 (2007).

Chawla, N. V., Bowyer, K. W., Hall, L. O. & Kegelmeyer, W. P. SMOTE: synthetic minority Over-sampling technique. J. Artif. Intell. Res. 16, 321–357. https://doi.org/10.1613/jair.953 (2002).

Kohavi, R. & John, G. Wrappers for feature subset selection. Artif. Intell. 97, 273–324 (1997).

Mushtaq, I. & Khan, S. N. Factors affecting students’ academic performance. Global J. Manage. Bus. Res. 12 (9), 17–22 (2012).

Cummins, J. Cognitive/academic Language proficiency, linguistic interdependence, the optimum age question and some other matters. Working Papers Biling. 19, 121–129 (1979).

Zumbo, B. D. A Handbook on the Theory and Methods of Differential Item Functioning (DIF): Logistic Regression Modeling as a Unitary Framework for Binary and Likert-Type (Ordinal) Item Scores (Directorate of Human Resources Research and Evaluation, Department of National Defense, 1999).

Farooq, M. S., Chaudhry, A. H., Shafiq, M. & Berhanu, G. Factors affecting students’ academic performance quality: A case of secondary school level. J. Qual. Technol. Manage. 7 (2), 1–14 (2011).

Ahmed, W. Machine learning-based academic performance prediction: ensemble regression models for quality education delivery. Sci. Rep. 15 (1). https://doi.org/10.1038/s41598-025-12353-4 (2025).

Fernandes, E. et al. Educational data mining: predictive analysis of academic performance of public school students in the capital region of Brazil. J. Bus. Res. 94, 335–343 (2019).

López-Zambrano, J., Torralbo, J. A. L. & Romero, C. Early prediction of student learning performance through data mining: A systematic review. Psicothema 33 (3), 456 (2021).

Dormann, C. F. et al. Collinearity: A review of methods to deal with it and a simulation study evaluating their performance. Ecography 36 (1), 27–46. https://doi.org/10.1111/j.1600-0587.2012.07348.x (2013).

Kaunang, F. J. & Rotikan, R. Students’ academic performance prediction using data mining. 2018 Third International Conference on Informatics and Computing (ICIC) 1–5. https://doi.org/10.1109/IAC.2018.8780547 (2018).

Al-Shehri, H. et al. Student performance prediction using support vector machine and K-Nearest neighbor. IEEE 30th Can. Conf. Electr. Comput. Eng. (CCECE). 2017, 1–4. https://doi.org/10.1109/CCECE.2017.7946847 (2017).

Khan, A. & Ghosh, S. K. Student performance analysis and prediction in classroom learning: review of educational data mining studies. Educ. Inform. Technol. 26 (1), 205–240 (2021).

Author information

Contributions

B.B.A. and S.K. conceptualised the study. S.K., N.A. and A.S. analysed the data. B.B.A., S.K. and N.A. contributed in writing the paper and interpreting the results. All authors approved the final version of the paper.

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethics approval and consent to participate

All procedures performed in this study involving human participants were in accordance with the ethical standards of the institutional and/or national research committee and with the 1964 Helsinki Declaration and its later amendments or comparable ethical standards. This study was approved by the Social and Human Sciences Scientific Research and Publication Ethics Committee of Akdeniz University (dated: 29.03.2021 and E. 62830). For participants who were minors, informed consent was obtained from their parents and/or legal guardians prior to participation in the study.

Consent for publication

The participants provided informed consent for the use of anonymized data in the publication.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Alkan, B.B., Kuzucuk, S., Alkan, N. et al. Using machine learning to predict student outcomes for early intervention and formative assessment. Sci Rep 15, 39797 (2025). https://doi.org/10.1038/s41598-025-23409-w

DOI: https://doi.org/10.1038/s41598-025-23409-w