Abstract

Metaheuristic algorithms inspired by natural phenomena have become indispensable tools for addressing complex, high-dimensional, and multimodal optimization problems. Nevertheless, many existing approaches suffer from premature convergence, stagnation, and an inadequate balance between exploration and exploitation, which limits their effectiveness on challenging benchmark problems. This study introduces the Swift Flight Optimizer (SFO), a novel bio-inspired optimization algorithm grounded in the adaptive flight dynamics of swift birds. The novelty of SFO lies in its biologically motivated multi-mode framework, which employs a glide mode for global exploration, a target mode for directed exploitation, and a micro mode for local refinement, augmented with a stagnation-aware reinitialization strategy. This design sustains population diversity, alleviates premature convergence, and enhances adaptability across high-dimensional search landscapes. The efficacy of SFO was rigorously assessed using the IEEE CEC2017 benchmark suite. Experimental findings reveal that SFO attained the best average fitness on 21 of 30 test functions at 10 dimensions and on 11 of 30 test functions at 100 dimensions, while exhibiting accelerated convergence and a robust exploration–exploitation balance. Comparative evaluations against 13 state-of-the-art optimizers, including PSO, GWO, WOA, and EMBGO, further demonstrate the superior performance of SFO in terms of convergence speed, solution quality, and robustness. Collectively, these results establish SFO as a novel and competitive metaheuristic framework with significant potential for solving large-scale, multimodal, and high-dimensional optimization problems.


Introduction

Metaheuristics are algorithms developed to solve hard optimization problems for which classical methods are inefficient. They are particularly effective on large, complex, highly nonlinear search spaces, where classical optimization algorithms become impractical because of their high computational cost1. Metaheuristics are high‑level frameworks that steer heuristic search so as to balance exploration and exploitation of the solution space, with the objective of obtaining high‑quality solutions within realistic computational budgets. Most of these algorithms are inspired by natural processes and phenomena, which provide robust strategies for vast and difficult problem domains2.

The field of optimization metaheuristics is remarkably diverse, encompassing algorithms inspired by natural processes, cognition, and engineered systems3. One of the oldest groups is evolutionary algorithms (EAs)4, which includes differential evolution (DE)5 as well as recent contributions such as Logarithmic Mean‑Based Optimization (LMO)6, which seeks to promote convergence through novel recombination schemes.

Another major class is swarm intelligence (SI) algorithms7, which simulate the behavior of social organisms. Classic examples are particle swarm optimization (PSO)8 and ant colony optimization (ACO)9, while newer members include the Puma Optimizer (PO)10 and the Walrus Optimization Algorithm (WOA)11. These algorithms rely on population‑based cooperation to balance exploration and exploitation in complex search landscapes. A further group of methods is based on physics‑inspired principles, such as the gravitational search algorithm (GSA)12, which simulates physical forces to guide candidate solutions. More recently, algorithms such as the Enzyme Action Optimizer (EAO)13 have enriched this domain by modeling biochemical reaction dynamics, providing new mechanisms for escaping local optima and accelerating convergence.

Recent progress in metaheuristic research has introduced advanced nature-inspired algorithms that extend beyond these classical frameworks. A notable contribution is the Woodpecker Mating Algorithm (WMA), which models the drumming-based mating behavior of woodpeckers14. Enhanced variants, such as the Opposition-based Woodpecker Mating Algorithm (OWMA), integrate opposition-based learning and local memory mechanisms to strengthen the balance between exploration and exploitation, thereby achieving superior performance across diverse optimization problems15. Parallel and hardware-accelerated implementations have also been proposed. For instance, the GPU-based Woodpecker Mating Algorithm (GWMA) employs a CUDA-parallelized framework that substantially reduces computational time, reportedly achieving up to an 80-fold speedup over the sequential WMA while preserving solution accuracy on the IEEE CEC2017 benchmark suite16.

Beyond the WMA family, recent algorithms have further diversified the landscape of bio-inspired optimization. For example, the Wolf–Bird Optimizer (WBO) draws on interspecies dynamics to model competitive and cooperative behaviors and has demonstrated strong performance in continuous optimization tasks17. In parallel, comprehensive surveys have systematically catalogued emerging metaheuristics, evaluating their convergence properties, performance trends, and application domains18. Likewise, an extensive review19 has provided an updated taxonomy of metaheuristics while identifying persistent challenges and outlining avenues for future algorithmic development. Collectively, these contributions underscore both the rapid pace of methodological innovation in the field and the need for novel, biologically grounded algorithms such as the proposed Swift Flight Optimizer (SFO).

Metaheuristics are used extensively in applications including engineering design optimization, financial forecasting, supply-chain logistics and scheduling, bioinformatics, machine learning, and network configuration3,20. Because they handle multimodal, nonlinear, and high‑dimensional optimization problems flexibly and robustly, they are valuable both in theoretical research and in solving practical real‑world problems21. More recent applications include bio‑inspired algorithms for unmanned aerial vehicles (UAVs) and drones, such as multi‑UAV path planning22 and search‑and‑rescue mission optimization23, further attesting to their breadth and growing impact in autonomous aerial systems.

However, metaheuristic approaches also share several shortcomings: vulnerability to premature convergence, a persistent difficulty in balancing exploration and exploitation, and reliance on careful parameter tuning. Furthermore, according to the No‑Free‑Lunch theorem24, no optimization algorithm performs best on all possible problems. As a result, the choice of a suitable metaheuristic depends on the specific attributes, constraints, and needs of the problem at hand. Consequently, research on new optimization schemes that can more effectively handle the wide spectrum of challenging problems encountered in engineering and other applications remains ongoing25.

Recent advances in bio-inspired metaheuristic optimization have seen the emergence of numerous algorithms that address the limitations of classical methods, particularly in high-dimensional and multimodal search spaces. For instance, the Improved Planet Optimization Algorithm hybridized with the Reptile Search Algorithm has been successfully employed in complex segmentation tasks, showing how hybrid strategies can significantly enhance search performance in medical imaging26. Similarly, the Puma Optimizer (PO) presents a novel predation-inspired strategy, integrating aggressive pursuit mechanisms into machine learning pipelines for superior convergence27. Continuing the trend toward synergistic design, the Synergistic Swarm Optimization Algorithm introduced a cooperative architecture that dynamically adjusts between exploration and exploitation, emphasizing adaptability in dynamic landscapes28. In multi-objective domains, the Guided Epsilon-Dominance Arithmetic Optimization Algorithm has demonstrated high efficiency in engineering design by improving convergence reliability across Pareto fronts29. Among single-agent local search models, the Non-Monopolize Search (NO) algorithm proposed a lightweight and effective mechanism that focuses on selective intensification in promising areas while maintaining overall search diversity30. From a biological standpoint, novel animal behaviors continue to drive metaheuristic innovation, as exemplified by the Pufferfish Optimization Algorithm, which models the defensive inflation and threat-response mechanisms of pufferfish to guide search dynamics31. Hybridization is another major theme in recent literature. For example, the Improved Prairie Dog Optimization combined with the Dwarf Mongoose Optimizer offers a collaborative framework that leverages interspecies behavioral metaphors to escape local optima and improve convergence reliability32.
Likewise, the Modified Crayfish Optimization Algorithm has been tailored to engineering problems, highlighting the effectiveness of structural adjustments to baseline algorithms33. Interestingly, metaphor-less algorithms have also shown great promise. The Far and Near Optimization (FNO) model employs a simple yet effective distance-based update mechanism that forgoes biological metaphor in favor of mathematical clarity and computational efficiency34. Meanwhile, the Stork Optimization Algorithm applies avian migratory behavior to sustainable production planning35, in line with the avian, biologically inspired design of SFO. The Teaching–Learning-Based Optimization (TLBO) algorithm remains an influential educational metaphor-based technique, often serving as a baseline for comparative analyses of metaheuristic performance36. Among classical and hybrid swarm intelligence methods, the Particle Swarm Optimization (PSO) algorithm continues to be extensively studied and applied across domains due to its simplicity and convergence properties37. Human behavior has also been adopted in optimizer design, as seen in the Sculptor Optimization Algorithm, which mimics iterative refinement and creative decision-making processes akin to human sculpting38. Additionally, the Social Spider Optimization Algorithm integrates cooperative foraging and vibration-based communication strategies, showing strong capabilities in multimodal landscapes39. Similarly, the Whale Optimization Algorithm (WOA) continues to receive attention, with recent surveys highlighting its mathematical foundation, variants, and successful application cases40. Lastly, the Animal Migration Optimization (AMO) algorithm encapsulates large-scale group movement dynamics, enabling broad exploration and adaptive response to environmental gradients, which directly aligns with the mode-switching and population diversity control mechanisms embedded within SFO41.

The primary purpose of the SFO is to overcome persistent limitations of existing metaheuristics, including premature convergence, stagnation in local optima, and an unstable balance between exploration and exploitation. While many algorithms perform adequately on low- and medium-dimensional problems, their effectiveness often declines on high-dimensional, multimodal, or composite benchmarks. SFO addresses these challenges through an adaptive search mechanism inspired by the agile flight dynamics of swift birds. It employs three coordinated modes: glide for global exploration, target for focused exploitation, and micro for localized refinement. Combined with a stagnation-aware reinitialization strategy, this design maintains population diversity, accelerates convergence, and enhances robustness across diverse optimization problems, thereby justifying the development of SFO as a novel and competitive bio-inspired algorithm.

This study develops a novel bio-inspired optimization algorithm, the SFO, based on the agile flight behavior and hunting strategies of swift birds (Fig. 1). SFO is tailored to complex, high-dimensional optimization problems of the kind commonly found in engineering and other real-world applications. It draws on the swift's remarkable agility in scanning large environments efficiently, targeting prey precisely, and performing rapid, precise maneuvers under changing environmental conditions42,43. SFO mimics these behaviors through three complementary search modes (glide, target, and micro) and transitions dynamically between them based on search feedback. This biologically inspired design balances exploration and exploitation while maintaining population diversity, allowing the algorithm to adapt to a broad range of optimization landscapes with a robustness and flexibility akin to that of swift flight43.

Picture of a swift bird44.

Beyond its biological inspiration, SFO is designed to address major issues that arise in high-dimensional optimization. Two main computational issues motivate its construction. First, high-dimensional optimization often runs into the curse of dimensionality, so that in large search spaces standard optimizers suffer slow and erratic convergence. Second, many real‑world objective functions are multimodal, discontinuous, or have noisy gradients, further complicating the search process3. SFO tackles these challenges by maintaining a population of candidate solutions that collectively survey multiple regions of the decision space. Through its three adaptive flight modes (glide, target, and micro), SFO combines stochastic perturbations with dynamic mode switching to escape local minima. This cooperative, biologically inspired mechanism allows SFO to balance intensified exploitation of promising regions with wide exploration of complex landscapes, thereby improving robustness and convergence in high‑dimensional problems.

By effectively addressing these challenges, SFO demonstrates strong adaptability and advanced problem‑solving capabilities. Its design allows it to handle a wide spectrum of complex optimization tasks, positioning it as a promising approach for engineering and other real‑world applications. The principal contributions of this study are as follows:

-

The Swift Flight Optimizer (SFO) is introduced as the first bio-inspired metaheuristic algorithm derived from the adaptive flight dynamics of swift birds, establishing a novel paradigm for multi-mode search strategies in optimization.

-

A three-mode computational framework is formulated, consisting of a glide mode for global exploration, a target mode for directed exploitation, and a micro mode for localized refinement. This framework is further reinforced with a stagnation-aware reinitialization mechanism that preserves population diversity and mitigates premature convergence.

-

A comprehensive empirical evaluation is conducted using the IEEE CEC2017 benchmark suite, covering multiple dimensional settings and problem categories, and including comparisons against thirteen state-of-the-art algorithms.

-

Experimental analysis demonstrates that SFO consistently achieves accelerated convergence, a balanced exploration–exploitation dynamic, and enhanced robustness, confirming its effectiveness for tackling large-scale, multimodal, and high-dimensional optimization problems.

The rest of this paper is organized as follows. Section “Related work” reviews existing bio‑inspired and nature‑inspired optimization algorithms and categorizes them according to their behavioral inspiration. Section “Swift Flight Optimizer (SFO) background theory” introduces the Swift Flight Optimizer (SFO), detailing its biological foundation in swift bird flight dynamics, its mathematical formulation, and its adaptive exploration–exploitation strategies. Section “Results” describes the experimental setup, including benchmark functions, parameter settings, evaluation metrics, and the set of compared optimization algorithms; it also presents a comparative performance analysis of SFO against state‑of‑the‑art optimizers across diverse benchmark suites. Finally, Section “Discussion” concludes the paper by summarizing the main findings and outlining promising directions for future research.

Related work

Optimization algorithms are fundamental in computational research, offering powerful methods for tackling complex problems across a wide variety of fields. Many of these methods draw inspiration from natural and social phenomena, converting biological, physical, chemical, and behavioral principles into efficient computational strategies45. Their richness stems from numerous sources of inspiration, such as animal behaviors, plant dynamics, and physical processes3. This diversity has produced algorithms that are not only effective but also robust and adaptable to the multi‑dimensional and intricate challenges encountered in optimization tasks. Each algorithm emulates particular natural mechanisms, which provide distinctive means of locating optimal solutions in changing, high-dimensional search spaces46. By mimicking natural processes, these algorithms carry out exploration, exploitation, and evolutionary transitions that capture the essence of the underlying real-world mechanisms3. Table 1 classifies state-of-the-art (SOTA) optimization algorithms according to their conceptual inspiration and dominant methodological approach.

Evolutionary-based algorithms follow Darwinian principles of evolution, successively refining populations of candidate solutions to achieve superior performance. The Genetic Algorithm (GA)47 and Differential Evolution (DE)5 mimic processes such as selection, crossover, mutation, and reproduction. These algorithms maintain a population that explores the search space broadly, so that solutions gradually refine themselves in promising regions under evolutionary pressure. Owing to their balance between convergence and diversity, they solve a large class of intricate optimization problems efficiently.

Swarm-based algorithms exploit the collective intelligence and decentralized decision-making of social animals. For example, Particle Swarm Optimization (PSO)48 models bird flocking and fish schooling, whereas Ant Colony Optimization (ACO)9 uses pheromone laying to guide search paths. The Artificial Bee Colony (ABC)49, Whale Optimization Algorithm (WOA)50, Ant Lion Optimizer (ALO)46, Jellyfish Search (JS)51, and Bacteria Phototaxis Optimizer (BPO)52 further diversify swarm behaviors for optimization purposes. Newer variants such as EMBGO53, GPSOM54, NSM‑AHA55, GWOCSA56, and QSHO57 introduce multi‑strategy, survivorship, hybrid, or quantum-augmentation mechanisms, enabling effective exploration across the whole search space as well as efficient exploitation of promising solutions. This inherent collective behavior provides flexibility in exploring large, high‑dimensional search spaces while keeping computational costs low. A distinctive subgroup of swarm‑based algorithms is inspired by cooperative predatory behaviors. The Grey Wolf Optimizer (GWO)24 mimics hierarchical predation involving chasing and encircling strategies, whereas the Harris Hawks Optimizer58 mimics the strategic pouncing of hawks. Newer approaches such as the Cheetah Optimizer (CO)59 and the Meerkat Optimization Algorithm (MOA)60 model rapid chasing combined with surveillance and synchronized predation. These optimizers demonstrate how modeling social behavior and predation strategies yields optimizers with strong global exploration combined with sharp local exploitation.

Physics‑based algorithms draw their inspiration from the physical laws governing natural phenomena. Simulated Annealing61 imitates the annealing of metals to escape local optima during optimization, while the Gravitational Search Algorithm (GSA)62 is inspired by Newtonian gravitation, which attracts agents toward optima. The Multi‑Verse Optimizer (MVO)63 uses concepts from cosmology, and the Black Hole Algorithm (BH)64 imitates the gravitational pull of black holes. These methods use familiar physical analogies to balance exploration and exploitation in complex landscapes.

Chemical and biochemical algorithms map reaction dynamics onto search strategies. Atom Search Optimization (ASO)65 mimics atomic motion and bonding forces, Chemical Reaction Optimization (CRO)66 reflects chemical reaction dynamics, and Nuclear Reaction Optimization (NRO)67 mimics nuclear-level processes. These methods typically use energy states and reaction pathways to diversify the search while steadily improving solutions, which makes them suitable for hard engineering and design problems.

Biology-inspired optimizers are based on microorganisms or biological processes other than typical swarm behavior. The Enzyme Action Optimizer (EAO)13 abstracts enzymatic catalysis so that candidate solutions are adaptively modified. The Pufferfish Optimization Algorithm (POA)68 draws on the defensive behaviors of pufferfish, while the Artificial Protozoa Optimizer (APO)69 mimics the foraging and reproduction cycle of protozoa. These algorithms exploit biological diversity and adaptability to achieve robust search with effective convergence.

System‑based approaches model interactions within ecological or natural systems. The Water Cycle Algorithm (WCA)70 simulates evaporation, precipitation, and runoff to explore and exploit the search space, while the Snow Ablation Optimizer (SAO)71 uses melting and accumulation dynamics to guide solution updates. By abstracting such natural processes, these methods can efficiently handle multimodal and dynamic optimization challenges.

Math‑based algorithms are derived from mathematical operators and functions. The Arithmetic Optimization Algorithm (AOA)72 employs arithmetic operators to guide movement, Chaos Game Optimization (CGO)73 uses chaotic maps and fractals to maintain diversity, and the Sine Cosine Algorithm (SCA)74 uses the oscillatory patterns of sine and cosine functions to balance exploration and exploitation. Owing to their mathematical origins, these algorithms are simple to implement and tune for a wide range of optimization problems.

Human-oriented algorithms model human behavior and knowledge transfer. The Chef‑Based Optimization Algorithm (CBOA)75 reflects the successive skill refinement of chefs, while Cultural Algorithms (CA)76 mimic the evolution of cultural knowledge over generations. Both are iterative, learning- and adaptation-oriented approaches that are versatile and easy to apply across many applications.

Despite the considerable variety of metaheuristic and evolutionary algorithms, they share significant limitations, including premature convergence, low adaptability in changing environments, and a strong need for extensive parameter tuning3. Classical evolutionary algorithms often suffer exponentially growing computational costs, which reduce their efficiency on very large or highly complicated search spaces. Moreover, gradient-based approaches perform poorly on highly nonlinear or discontinuous problems, which makes flexible, gradient-free optimization strategies essential3.

Recent studies have introduced advanced multi-strategy metaheuristics to improve the balance between exploration and exploitation and to enhance convergence reliability. The Artificial Lemming Algorithm (ALA) combines four behavioral patterns: migration, burrowing, foraging, and predator evasion, all regulated by an adaptive energy factor that sustains population diversity and solution accuracy77. The Multi-Strategy Enhanced Arithmetic Optimization Algorithm (MSEAOA) extends the original AOA through mechanisms such as good point set initialization, cooperative learning strategies, and perturbation-based refinements, resulting in faster and more precise convergence78. Similarly, the Multi-Strategy Cooperative Augmented Crayfish Optimization Algorithm (MCOA) incorporates adaptive cooperation strategies and dynamic parameter control, yielding stable performance on CEC benchmarks and demonstrating practical effectiveness in engineering tasks, including unmanned aerial vehicle path planning79.

These weaknesses notably reduce the effectiveness of metaheuristic algorithms on high-dimensional or strongly nonlinear optimization problems. Premature convergence, a key deficiency, occurs when an algorithm fails to sustain sufficient diversity in its population, particularly in multimodal search landscapes80. As a result, the search becomes trapped in local optima and fails to reach the global optimum81. Slow adaptation to dynamic environments is another key deficiency, since most algorithms apply fixed exploration and exploitation behaviors82. This rigidity limits their ability to handle changing objectives or constraints and makes them less suitable for real-world problems83.

Metaheuristic algorithms are also subject to several other drawbacks that hinder their universal use. Most rely heavily on parameter tuning (for example, of mutation rates, crossover probabilities, and weighting coefficients), which is time-consuming and limits generalizability83. Scalability is also restricted by the “curse of dimensionality”: the volume of the search space, and with it the computational expense, grows exponentially with problem dimension, making large-scale optimization inefficient80. Moreover, applications involving highly nonlinear, discontinuous, or noisy objective functions, for which gradient-based approaches are unsuitable, call for strong gradient-free approaches82. Many algorithms are also less robust in multimodal landscapes, converging early on local optima instead of exploring multiple regions simultaneously82. Finally, many rely on problem-specific adaptations, which limits their transferability across different optimization tasks80.

A key advantage of the Swift Flight Optimizer (SFO) is its adaptive mode switching. The algorithm switches dynamically among gliding, targeting, and micro-adjustment modes to achieve more stable convergence behavior than most state-of-the-art optimizers. These characteristics make it well suited to hard, high-dimensional, and multimodal optimization problems.

By drawing on the flight dynamics and adaptive behavior of swift birds, SFO compensates for significant weaknesses of conventional metaheuristics and offers a robust nature-inspired method for a wide range of challenging optimization problems. The following section presents the mathematical formulation of SFO and its application to engineering design examples.

Swift Flight Optimizer (SFO) background theory

Originality and distinct features of SFO

The Swift Flight Optimizer (SFO) introduces a set of conceptual and mathematical innovations that clearly differentiate it from existing swarm intelligence algorithms. To the best of our knowledge, it represents the first metaheuristic inspired by the flight dynamics of swift birds, whose distinctive behaviors are modeled through three complementary modes: glide, target, and micro. Within this structure, the glide mode facilitates large-scale exploration across the search space, the target mode enables directed exploitation toward promising solutions, and the micro mode provides localized refinements around elite candidates. This tri-modal framework reflects the natural adaptability of swifts, allowing the algorithm to sustain balance between global and local search processes.

Unlike classical swarm optimizers such as PSO, ACO, and ABC, which rely on a single update equation, or more recent methods such as BPO, GPSOM, EMBGO, and QSHO, which introduce partial multi-strategy enhancements, SFO integrates a multi-mode adaptive structure within a unified framework. This structure is reinforced by a stagnation-aware reinitialization mechanism that restores population diversity when premature convergence occurs, thereby maintaining balance between exploration and exploitation even in high-dimensional search spaces.
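The exact reinitialization rule is not given in this part of the paper, so the following is only a minimal, hypothetical sketch of one common way a stagnation-aware restart can be implemented. It assumes minimization, a per-agent counter of iterations without improvement, and uniform re-sampling within the bounds once the counter reaches a patience threshold; the function name `reinitialize_stagnant` and the parameter `patience` are illustrative and not taken from SFO.

```python
import numpy as np

def reinitialize_stagnant(positions, fitness, stall_counts, lower, upper,
                          objective, patience=20, rng=None):
    """Hypothetical stagnation-aware reinitialization (minimization assumed).

    Agents whose fitness has not improved for `patience` iterations are
    re-sampled uniformly inside [lower, upper]; the current best agent is
    always kept so the elite solution is never lost. Arrays are updated in
    place and also returned for convenience.
    """
    rng = np.random.default_rng() if rng is None else rng
    stalled = stall_counts >= patience            # mask of stagnant agents
    stalled[np.argmin(fitness)] = False           # protect the elite agent
    n_stalled = int(stalled.sum())
    if n_stalled > 0:
        dim = positions.shape[1]
        positions[stalled] = rng.uniform(lower, upper, size=(n_stalled, dim))
        fitness[stalled] = np.apply_along_axis(objective, 1, positions[stalled])
        stall_counts[stalled] = 0                 # restart the stall counters
    return positions, fitness, stall_counts
```

In a full optimizer loop, the counter of each agent would be incremented whenever it fails to improve and reset when it does; that bookkeeping is likewise an assumption of this sketch. Protecting the best agent is a design choice that keeps diversity restoration from discarding the incumbent solution.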

Table 2 summarizes the conceptual and methodological distinctions between SFO and representative swarm-based algorithms. As shown in Table 2, classical swarm algorithms such as PSO, ACO, and ABC are based on single updating equations and exhibit weak stagnation recovery mechanisms. Mid-generation algorithms such as GWO and WOA improve search adaptability through hierarchical and encircling behaviors but remain vulnerable to premature convergence. Recent developments, including GPSOM, EMBGO, and QSHO, have introduced group-based multi-strategy updates, dual-stage global–local search, and quantum-enhanced dynamics, respectively. While these methods demonstrate stronger adaptability, they do not employ explicit stagnation recovery or full adaptive mode switching. In contrast, the proposed SFO integrates a three-mode adaptive structure inspired by swift flight dynamics, coupled with a stagnation-aware reinitialization mechanism. These features establish SFO as both conceptually novel and formulaically distinct within the swarm intelligence domain.

Biological inspiration

The proposed Swift Flight Optimizer (SFO) draws its basic inspiration from the adaptive flight behavior of the common swift (Apus apus), which is renowned for its exceptional aerial endurance and mobility84. Unlike most bird species, which land to rest or forage, swifts remain airborne for months at a stretch, gliding, foraging in directed flight, and making stabilizing corrections instead of landing84. This continuous flight strikes a balance between long-distance travel and real-time control, making swifts a fitting biological model for optimization frameworks that require exploration, exploitation, and stability in complex search landscapes. During high-altitude migration or foraging, swifts rely primarily on energy-efficient gliding to cover large distances with low metabolic effort. This is made possible by their narrow, crescent-shaped wings and streamlined bodies, which allow them to exploit rising air in thermals and atmospheric updrafts43. In optimization terms, SFO mimics this behavior in its glide mode, in which agents make large inertial moves for global exploration, much as the bird travels long distances at low cost in search of favorable environmental cues.

When environmental stimuli such as clusters of insects are perceived, swifts switch from passive gliding to active high-speed pursuit. This foraging behavior is marked by rapid direction reversals and acceleration toward moving prey, guided by sensory input and fine motor control85. SFO inherits this behavior in its target mode, in which agents abruptly redirect their trajectories toward the best solutions found so far, improving convergence accuracy in promising subregions. This allows the optimizer to mimic the bird's ability to exploit ephemeral opportunities in its environment promptly. Under turbulent or unstable airflow, swifts display remarkable control through fine-scale wing and tail maneuvers. These micro-movements stabilize their position in flight and prevent aerodynamic instability86. Inspired by this, SFO implements a micro mode that performs localized fine-tuning in the neighborhood of elite solutions, permitting in-depth exploitation of regions with narrow optima or gently sloping fitness landscapes. This adaptive control is intended to prevent oscillation or stagnation near optima. Altogether, swift flight mechanics (long-distance gliding, directed movement toward targets, and fine-grained local maneuvering) provide a biologically inspired foundation for a multi-modal optimization method. SFO simulates these behaviors computationally as three complementary search modes, glide, target, and micro, each serving a specific purpose in balancing exploration and exploitation throughout the optimization run. As illustrated in Fig. 2, the glide mode supports wide exploration through extended randomized steps, the target mode directs the search toward promising regions, and the micro mode conducts fine refinements in the vicinity of optimal solutions. This biologically inspired design enables SFO to solve high-dimensional, multimodal, and dynamic optimization problems effectively, with minimal parameter tuning and increased adaptability.

Illustration of the three flight modes in the proposed Swift Flight Optimizer (SFO).

Mathematical model

The Swift Flight Optimizer (SFO) is a bio-inspired metaheuristic algorithm that mimics the dynamic flight behaviors of swift birds across three distinct modes: glide, target, and micro. Each mode is modeled by a separate velocity update strategy, reflecting the birds’ adaptive navigation and search behaviors in their natural environment. Swifts often glide across long distances with minimal energy expenditure, exploring vast regions of the sky. This behavior is modeled by stochastic movement influenced by inertial momentum, Gaussian noise, and Lévy flight-based exploration.

Equation 1 updates the velocity of agent \({\varvec{i}}\) in Glide Mode:

where \({v}_{i}^{t+1}\) is the updated velocity, \({x}_{i}^{t}\) is the current position, \(\omega\) is the inertia weight, \(\alpha\) and \(\beta\) are scaling factors for the Gaussian and Lévy components, \(N(0,I)\) is a vector of standard normal values, and \({\varvec{L}}\) is the Lévy step vector computed using Mantegna's algorithm. Equation 2 defines the Lévy step components \({L}_{j}\):

where \({u}_{j}\sim N\left(0,{\sigma }_{u}^{2}\right)\) is a zero-mean normal variable with variance \({\sigma }_{u}^{2}\), \({v}_{j}\sim N\left(0,1\right)\) is a standard normal variable, and \(\beta \in (0, 2]\) is a stability parameter (commonly set to 1.5).

Equation 3 gives the scale parameter \({\sigma }_{u}\):

where \(\Gamma \left(.\right)\) is the gamma function. This mechanism mimics the swift bird’s erratic long-glide movements, helping the algorithm escape local optima and maintain search diversity across complex landscapes. When a swift identifies a food source or landing spot, it rapidly transitions into targeted flight. This behavior is translated into the optimizer by guiding agents toward the global best solution found so far, while preserving swarm diversity.
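The glide update can be sketched in Python with NumPy as follows. The Lévy step uses the standard form of Mantegna's algorithm, \(L_j = u_j / |v_j|^{1/\beta}\), with \(\sigma_u\) computed from the gamma function. The velocity rule itself is an assumption: an inertia term plus a Gaussian term plus a scaled Lévy term, followed by \(x \leftarrow x + v\). The text uses \(\beta\) both as the Lévy scaling factor and as the stability index, so the sketch calls the scaling factor `levy_scale` and keeps `beta` for the stability index. All function names are hypothetical.

```python
import math
import numpy as np

def mantegna_sigma(beta: float) -> float:
    """Scale sigma_u of Mantegna's algorithm for stability index beta in (0, 2]."""
    num = math.gamma(1 + beta) * math.sin(math.pi * beta / 2)
    den = math.gamma((1 + beta) / 2) * beta * 2 ** ((beta - 1) / 2)
    return (num / den) ** (1 / beta)

def levy_step(dim: int, beta: float, rng: np.random.Generator) -> np.ndarray:
    """Lévy step vector L with components L_j = u_j / |v_j|**(1/beta)."""
    u = rng.normal(0.0, mantegna_sigma(beta), size=dim)  # u_j ~ N(0, sigma_u^2)
    v = rng.normal(0.0, 1.0, size=dim)                   # v_j ~ N(0, 1)
    return u / np.abs(v) ** (1 / beta)

def glide_update(x, vel, omega, alpha, levy_scale, beta, rng):
    """Assumed glide rule: inertia + Gaussian noise + scaled Lévy step, then move."""
    gauss = rng.standard_normal(x.shape)                 # N(0, I)
    vel_new = omega * vel + alpha * gauss + levy_scale * levy_step(x.size, beta, rng)
    return x + vel_new, vel_new
```

For example, `glide_update(x, vel, 0.5, 0.5, 0.1, 1.5, np.random.default_rng(0))` returns the new position and velocity of one agent. The value 0.1 for `levy_scale` is illustrative only. The heavy tail of the Lévy step occasionally produces very long moves, which is what lets a gliding agent jump out of a local basin.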

The velocity in Target Mode is updated as in (4):

where \({p}_{best}\) is the personal best position of agent \(i\), \({g}_{best}\) is the global best position among all agents, \({\psi }_{1}\) and \({\psi }_{2}\) are acceleration coefficients, and \({r}_{1}, {r}_{2}\) are random scalars drawn uniformly from \([0, 1]\). This model captures the goal-directed descent of swifts toward known high-quality regions of the decision space. On final approach, swifts make fine, micro-level wing adjustments for high-precision control. SFO implements this behavior as a local exploitation strategy around the best-known solution, in which the position is perturbed directly by a Gaussian step with an adaptive step size, as in (5).

where ϵ ≪ 1 is a small step size.

This stage performs local refinement near high-quality optima and improves convergence accuracy, akin to the swift's fine body control when catching prey in mid-air or landing on narrow surfaces.
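The Target and Micro updates can be sketched in the same way, under two stated assumptions. First, the target rule is written in the PSO style implied by the personal-best, global-best, and acceleration-coefficient terms, with an inertia term \(\omega v\) included by assumption. Second, the micro rule adds Gaussian noise of size \(\epsilon\) to the global best, using a fixed \(\epsilon\) because the adaptive schedule is not specified. The function names are hypothetical.

```python
import numpy as np

def target_update(x, vel, pbest, gbest, omega, psi1, psi2, rng):
    """Assumed PSO-style target rule (Eq. 4): inertia plus pulls toward pbest and gbest."""
    r1, r2 = rng.random(), rng.random()          # random scalars, uniform on [0, 1)
    vel_new = omega * vel + psi1 * r1 * (pbest - x) + psi2 * r2 * (gbest - x)
    return x + vel_new, vel_new

def micro_update(gbest, eps, rng):
    """Assumed micro rule (Eq. 5): Gaussian jitter of size eps around the global best."""
    return gbest + eps * rng.standard_normal(gbest.shape)
```

The sketch draws `r1` and `r2` as single scalars per agent, following the wording of the text. Many PSO variants instead draw one random number per dimension, which decorrelates the pull across coordinates.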

Mode switching strategy and stagnation avoidance

A critical strength of the Swift Flight Optimizer (SFO) lies in its adaptive mode-switching mechanism and stagnation avoidance strategy, which together ensure effective navigation of complex, multimodal search spaces. Inspired by the flexible and responsive flight behavior of swift birds, the algorithm dynamically transitions between distinct behavioral phases (Glide Mode, Target Mode, and Micro Mode) to balance exploration and exploitation based on real-time feedback from the fitness landscape. Transitions between modes are governed by adaptive rules based on recent improvement history:

-

Glide Mode is activated when exploration is required. It generates large, random movements using Lévy flights and stochastic noise. When no improvement (IMPR) occurs in Glide Mode, the glide fail counter (GFC) is incremented by one. If GFC exceeds four and the Glide–Target cycle counter (GTC) remains below a predefined threshold (typically 30), the algorithm switches to Target Mode.

-

Target Mode focuses on exploitation by guiding agents toward the global best solution with smaller, controlled steps. When no IMPR occurs in Target Mode, the target fail counter (TFC) is incremented by one. If TFC exceeds ten and GTC is below the predefined threshold, the algorithm switches back to Glide Mode to encourage broader exploration.

-

Micro Mode is a local fine-tuning strategy for deeper exploitation. It is invoked when Target Mode and Glide Mode have been cycled more than 30 times without satisfactory improvement and no stagnation is detected. When the stagnation counter (SC) exceeds 20 iterations and the reset count (RstC) is below 5, the population is reinitialized to restore diversity.

SFO also tracks the number of Glide–Target cycles (GTC) to monitor behavioral changes. When this count exceeds a predefined threshold (e.g., 30) and no further improvement occurs, Micro Mode is activated for fine-grained exploitation. This layered, phase-dependent response loosely mirrors the flexibility of real swift foraging behavior. To avoid premature convergence, SFO maintains a stagnation counter that tracks improvement of the global best across the entire swarm. When the best fitness has not improved for more than 20 iterations and the reset count is below 5, the population is reinitialized. This acts as a controlled restart that restores diversity and allows the search space to be explored again. The stagnation-aware reinitialization therefore serves two purposes:

-

Escape from local optima

-

Maintenance of population diversity, both essential for robust performance in high-dimensional and dynamic optimization search space.
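The switching rules above can be expressed as a small state machine. The sketch below is hypothetical and rests on several assumptions:

- the counters are kept per agent;
- an improving move resets the fail counters;
- each Glide/Target switch increments GTC;
- once the cycle threshold is exhausted, the agent stays in Micro Mode.

The swarm-level restart test is kept separate because SC counts iterations without improvement of the global best.

```python
from dataclasses import dataclass

GLIDE, TARGET, MICRO = "glide", "target", "micro"

@dataclass
class ModeController:
    """Hypothetical per-agent sketch of the SFO mode-switching rules."""
    glide_fail_limit: int = 4    # Glide -> Target once GFC > 4
    target_fail_limit: int = 10  # Target -> Glide once TFC > 10
    cycle_limit: int = 30        # GTC threshold; beyond it the agent enters Micro
    mode: str = GLIDE
    gfc: int = 0                 # glide fail counter
    tfc: int = 0                 # target fail counter
    gtc: int = 0                 # Glide-Target cycle counter

    def step(self, improved: bool) -> str:
        """Record the outcome of one move and return the mode for the next move."""
        if improved:
            self.gfc = self.tfc = 0  # assumption: a success clears the fail counters
        elif self.mode == GLIDE:
            self.gfc += 1
            if self.gfc > self.glide_fail_limit:
                self._switch(TARGET)
        elif self.mode == TARGET:
            self.tfc += 1
            if self.tfc > self.target_fail_limit:
                self._switch(GLIDE)
        return self.mode  # Micro mode is kept once entered (assumption)

    def _switch(self, new_mode: str) -> None:
        self.gfc = self.tfc = 0
        if self.gtc < self.cycle_limit:
            self.mode, self.gtc = new_mode, self.gtc + 1
        else:
            self.mode = MICRO  # too many unproductive Glide/Target cycles

def should_reinitialize(sc: int, rstc: int, sc_limit: int = 20, max_resets: int = 5) -> bool:
    """Swarm-level restart test: SC > 20 iterations and RstC < 5."""
    return sc > sc_limit and rstc < max_resets
```

With the default thresholds, a fresh controller stays in Glide for four failed moves and switches to Target on the fifth.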

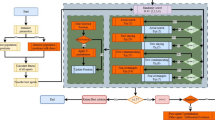

By combining biologically inspired flight behaviors with adaptive control logic, SFO is designed to avoid common pitfalls of metaheuristic search, such as premature convergence and unproductive oscillations. The complete workflow of SFO, including the mode-switching and stagnation-handling mechanisms, is illustrated in Fig. 3.

SFO Flowchart with stagnation handling and mode-switching mechanism.

Avoidance of local optima

One of the principal challenges in population-based metaheuristics is premature convergence to local optima. SFO addresses this limitation through several mechanisms. First, its three-mode design (glide, target, and micro-refinement) enables adaptive transition from global exploration to fine-grained exploitation, reducing the likelihood of early stagnation. Second, the glide phase incorporates stochastic perturbations, including Lévy-distributed steps, which introduce long-range moves that expand the search frontier and help escape local basins. Third, a stagnation-aware reinitialization strategy actively restores diversity when no improvement is observed over several iterations, thereby preventing population collapse. Finally, adaptive switching thresholds ensure that the transition between modes is not purely deterministic but guided by fitness improvement signals, which enhances resilience against entrapment in narrow valleys. Collectively, these mechanisms contribute to SFO’s robustness in navigating multimodal and high-dimensional landscapes, ensuring a sustained balance between exploration and exploitation.

Exploration–exploitation balance

Maintaining an appropriate balance between exploration and exploitation is a defining requirement of high-performing metaheuristics. In SFO, this balance is embedded in its design. The glide phase promotes exploration through stochastic updates and Lévy perturbations, which broaden the search frontier and enhance coverage of the solution space. The target phase intensifies exploitation by steering agents toward elite solutions and consolidating improvements around promising basins. The micro-refinement phase further sharpens exploitation by applying local adjustments to refine solution accuracy. Adaptive mode switching regulates transitions among these phases, ensuring that exploration does not diminish too early and exploitation does not dominate prematurely. In addition, stagnation-aware reseeding reinstates exploration when diversity loss is detected. The effectiveness of this architecture is reflected in the convergence profiles, which exhibit rapid early descent followed by stable refinement, as well as in oscillatory patterns of exploration–exploitation dynamics that signify successful reactivation of diversity when stagnation occurs. These features collectively sustain a dynamic equilibrium between global search and local intensification across diverse problem classes and dimensional settings.

SFO algorithm analysis

Algorithm 1 illustrates the core workflow of the Swift Flight Optimizer (SFO).

-

Step 1 The algorithm begins with the initialization phase, where a population of particles (candidate solutions) is randomly generated, and initial velocities are assigned.

-

Step 2 The fitness of each particle is evaluated to determine both the individual best positions (pbest) and the global best solution (gbest) within the population.

-

Step 3 The main optimization loop iterates for a fixed number of iterations (TMax).

-

Step 4 In each iteration, every particle undergoes a behavioral update governed by the adaptive flight modes of the swift bird.

-

Step 5 The algorithm dynamically switches among three flight behaviors (Glide, Target, and Micro) based on rules that detect stagnation or improvement in each particle's search trajectory.

-

Step 6 Depending on the current mode, the velocity and position of the particle are updated using the corresponding mathematical model: long exploratory movements in Glide, goal-oriented updates in Target, or fine-grained refinements in Micro mode.

-

Step 7 After movement, the fitness is re-evaluated, and the personal and global bests are updated if improved solutions are found.

-

Step 8 This process is repeated until all particles have been updated, and the best solution found so far is recorded at every iteration.

-

Step 9 After a set maximum number of iterations is reached, gbest (the best global solution) is returned as the final result of the optimization routine.
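Steps 1–9 can be assembled into a compact, self-contained Python skeleton. This is a hypothetical sketch, not the authors' Algorithm 1. The following choices are assumptions:

- the exact update forms;
- per-agent fail counters measured on personal-best improvement;
- velocities initialized within 10% of the range;
- elitist restarts that keep pbest and gbest.

Bounds are scalars, and fitness is minimized.

```python
import math
import numpy as np

def levy(dim, beta, rng):
    """Lévy step via Mantegna's algorithm with stability index beta."""
    sigma = (math.gamma(1 + beta) * math.sin(math.pi * beta / 2)
             / (math.gamma((1 + beta) / 2) * beta * 2 ** ((beta - 1) / 2))) ** (1 / beta)
    return rng.normal(0.0, sigma, dim) / np.abs(rng.normal(0.0, 1.0, dim)) ** (1 / beta)

def sfo_sketch(f, lb, ub, dim, n=50, t_max=1000, w=0.5, c1=1.5, c2=1.5,
               alpha=0.5, levy_scale=0.1, eps=1e-3, beta=1.5, seed=0):
    """Hypothetical SFO skeleton following Steps 1-9 (minimization, scalar bounds)."""
    rng = np.random.default_rng(seed)
    # Step 1: random positions; velocities drawn within 10% of the range (assumption).
    x = rng.uniform(lb, ub, (n, dim))
    v = 0.1 * rng.uniform(lb - ub, ub - lb, (n, dim))
    # Step 2: evaluate, then set personal and global bests.
    pbest = x.copy()
    pfit = np.array([f(xi) for xi in x])
    g = int(np.argmin(pfit))
    gbest, gfit = pbest[g].copy(), pfit[g]
    mode = np.zeros(n, dtype=int)    # 0 = Glide, 1 = Target, 2 = Micro
    fails = np.zeros(n, dtype=int)   # per-agent fail counter (GFC in Glide, TFC in Target)
    cycles = np.zeros(n, dtype=int)  # per-agent Glide-Target cycle counter (GTC)
    sc, rstc, history = 0, 0, []     # stagnation counter, reset count, best-so-far log
    for _ in range(t_max):           # Step 3: main loop
        prev_gfit = gfit
        for i in range(n):           # Step 4: update every agent
            if mode[i] == 0:         # Step 6, Glide: inertia + Gaussian + Lévy
                v[i] = (w * v[i] + alpha * rng.standard_normal(dim)
                        + levy_scale * levy(dim, beta, rng))
                x[i] = x[i] + v[i]
            elif mode[i] == 1:       # Step 6, Target: PSO-style pull to pbest/gbest
                r1, r2 = rng.random(), rng.random()
                v[i] = w * v[i] + c1 * r1 * (pbest[i] - x[i]) + c2 * r2 * (gbest - x[i])
                x[i] = x[i] + v[i]
            else:                    # Step 6, Micro: small jitter around gbest
                x[i] = gbest + eps * rng.standard_normal(dim)
            x[i] = np.clip(x[i], lb, ub)
            fi = f(x[i])             # Step 7: re-evaluate and update bests
            if fi < pfit[i]:
                pbest[i], pfit[i], fails[i] = x[i], fi, 0
            else:
                fails[i] += 1
            if fi < gfit:
                gbest, gfit = x[i].copy(), fi
            # Step 5: switch modes with the thresholds 4, 10 and 30 from the text.
            limit = 4 if mode[i] == 0 else 10
            if mode[i] < 2 and fails[i] > limit:
                fails[i] = 0
                if cycles[i] < 30:
                    mode[i], cycles[i] = 1 - mode[i], cycles[i] + 1
                else:
                    mode[i] = 2
        # Stagnation-aware restart: SC > 20 and RstC < 5 (pbest/gbest are kept).
        sc = 0 if gfit < prev_gfit else sc + 1
        if sc > 20 and rstc < 5:
            x = rng.uniform(lb, ub, (n, dim))
            v = np.zeros((n, dim))
            mode[:], fails[:], cycles[:] = 0, 0, 0
            sc, rstc = 0, rstc + 1
        history.append(gfit)         # Step 8: record the best-so-far fitness
    return gbest, gfit, history      # Step 9
```

Because the restart keeps pbest and gbest, the recorded best-so-far curve is non-increasing even when the population is reseeded. Usage: `sfo_sketch(lambda z: float(np.sum(z ** 2)), -10.0, 10.0, 5, n=20, t_max=100)`.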

The pseudocode reflects the adaptive, multi-mode coordination of SFO, which requires minimal parameter adjustment to remain robust across varied search landscapes.

Swift Flight Optimizer.

SFO computational complexity

The computational complexity of the Swift Flight Optimizer (SFO) can be assessed by examining its key operational stages: population initialization, fitness evaluation, mode-dependent updates, and iterative tracking of the best solutions. Each stage contributes to the overall computational load as follows.

The initialization phase generates a population of n particles in a d-dimensional search space. Each particle is initialized with random position and velocity vectors, giving a time complexity of \(O(nd)\). In every iteration, the fitness function is evaluated for each particle to update the personal and global bests. Assuming the fitness function requires \(O(F)\) time per particle, evaluating the entire population costs \(O(nF)\) per iteration. Let T denote the maximum number of iterations. The total fitness evaluation cost over all iterations is then \(O(TnF)\). Each particle updates its velocity and position according to its current behavioral mode (Glide, Target, or Micro) using different stochastic and adaptive models. These updates are linear in the dimension d, which gives \(O(d)\) per particle, \(O(nd)\) per iteration for all \(n\) particles, and \(O(Tnd)\) over T iterations. Combining the initialization, evaluation, and update stages, the total computational complexity (TCC) of SFO is expressed as in (6):

\[{\text{TCC}}_{\text{SFO}} = O(nd) + O(TnF) + O(Tnd) = O\big(Tn(F + d)\big) \tag{6}\]

Equation 6 indicates that the runtime of SFO scales linearly with the number of particles \(n\), the problem dimensionality d, and the number of iterations T. This makes SFO suitable for scalable high-dimensional optimization problems, especially where fitness evaluation dominates the computational cost.
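As a worked example, the settings used in the experiments are 50 agents and 1000 iterations at 10D, and 100 agents and 10,000 iterations at 100D. Plugging them into the \(Tn\) and \(Tnd\) terms of Eq. 6 gives the figures below. The calculation counts one fitness evaluation per agent per iteration and ignores the \(n\) initial evaluations:

```latex
\begin{aligned}
\text{10D:}\quad  & Tn = 1000 \times 50 = 5 \times 10^{4}\ \text{evaluations}, & Tnd &= 5 \times 10^{4} \times 10 = 5 \times 10^{5}\ \text{coordinate updates},\\
\text{100D:}\quad & Tn = 10\,000 \times 100 = 10^{6}\ \text{evaluations}, & Tnd &= 10^{6} \times 100 = 10^{8}\ \text{coordinate updates}.
\end{aligned}
```

The 100D setting therefore uses 20 times as many fitness evaluations per run as the 10D setting. Evaluating a CEC2017 function reads every coordinate, so \(F\) grows at least linearly with \(d\), and the \(TnF\) term dominates the per-run cost.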

Results

This section describes the experimental setup, including the benchmark functions, compared algorithms, parameter settings, and evaluation metrics.

Benchmark description

The IEEE CEC2017 benchmark suite is a widely recognized set of 30 test functions curated by the IEEE Congress on Evolutionary Computation to rigorously evaluate the performance of optimization algorithms. These functions are categorized into four main types: unimodal (Uni.), multimodal (Multi.), hybrid (Hybr.), and composition (Comp.) functions, each presenting unique challenges to test various aspects of algorithmic behavior, as shown in Table 3. Unimodal functions are designed to assess the exploitation capabilities of algorithms in smooth landscapes, while multimodal functions test exploration abilities by presenting numerous local optima. Hybrid functions combine characteristics of both unimodal and multimodal functions, challenging algorithms to balance exploration and exploitation. Composition functions, the most complex category, are formed by blending multiple basic functions to simulate highly irregular and rugged search spaces, reflecting real-world problem complexity. Together, this benchmark suite provides a comprehensive and systematic framework for benchmarking an algorithm's efficiency, adaptability, and robustness in solving high-dimensional and diverse optimization problems.

Computational environment

All simulations and comparative experiments were conducted on a workstation equipped with an Intel Core i7 processor (3.2 GHz, 16 cores), 16 GB RAM, and an NVIDIA GeForce RTX 3070 GPU with 16 GB VRAM. The operating system environment was Ubuntu Linux 20.04 LTS (64-bit). The algorithms were implemented in Python 3.10 using scientific computing libraries including NumPy 1.24, SciPy 1.10, and Matplotlib 3.7 for visualization. Random number generation was controlled through fixed seeds to ensure reproducibility. Benchmark functions from the IEEE CEC2017 test suite were evaluated under identical conditions across all algorithms.

Compared optimizers

Table 4 summarizes the 13 contemporary, widely cited optimization techniques against which the performance of SFO was assessed.

Evaluation metrics

The mean and standard deviation of the final fitness values over independent runs were used to assess each algorithm statistically. The mean reflects average performance, while the standard deviation indicates how consistent the results are across runs.
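A minimal NumPy sketch of how these statistics could be computed, together with the per-function counts of best average fitness reported in the results. Three choices are assumptions: the sample standard deviation (`ddof=1`), the tie rule that credits every algorithm whose mean equals the best, and the hypothetical array layout `(runs, functions)`.

```python
import numpy as np

def summarize(results):
    """Mean and sample standard deviation of final fitness over independent runs.

    results maps an algorithm name to an array of shape (runs, functions).
    """
    return {alg: (r.mean(axis=0), r.std(axis=0, ddof=1)) for alg, r in results.items()}

def count_wins(summary, tol=0.0):
    """Number of functions on which each algorithm attains the best (lowest) mean."""
    algs = list(summary)
    means = np.stack([summary[a][0] for a in algs])  # shape (algorithms, functions)
    best = means.min(axis=0)
    wins = means <= best + tol                       # ties credit every tied algorithm
    return {a: int(w.sum()) for a, w in zip(algs, wins)}
```

Under this tie rule, the win counts of all algorithms can sum to more than the number of functions.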

Parameters settings

For the experiments with the proposed Swift Flight Optimizer (SFO) on the IEEE CEC2017 benchmark functions, the population size (n) was fixed at 50 agents and the maximum number of iterations at 1000 to accommodate the complexity of the benchmark landscapes. To further examine the algorithm's scalability and robustness in high-dimensional regimes, additional experiments were conducted on 100-dimensional problems. In this setting, the population size was fixed at 100 agents and the maximum number of iterations was increased to 10,000. The algorithm parameters were set as follows: inertia weight \(w = 0.5\), cognitive coefficient \(c_1 = 1.5\), and social coefficient \(c_2 = 1.5\). These settings were chosen to provide sufficiently strong exploration and a rigorous test of convergence behavior, adaptability, and final effectiveness on difficult multimodal and large-scale optimization problems. The parameter settings for each algorithm are listed in Table 5 for transparency and reproducibility.

Performance analysis on IEEE CEC2017 benchmark functions

The performance of the proposed Swift Flight Optimizer (SFO) was extensively validated on the IEEE CEC2017 benchmark suite under different dimensional scenarios. Two scenarios were considered: 10-dimensional (10D) problems with 50 agents and 1000 iterations, and 100-dimensional (100D) problems with 100 agents and 10,000 iterations. Together, these experiments assess the optimizer's robustness, scalability, and adaptability on problems of mixed complexity.

On the 10D functions, SFO performs strongly across a broad range of test functions. As summarized in Table 6, SFO achieved the best average fitness on 21 of the 30 benchmark functions, outperforming the competing methods. Notably, SFO performed well on many of the more challenging hybrid and composition functions, where premature convergence often hinders other optimizers. The other algorithms were less competitive. EMBGO achieved the best performance on 9 functions and GTO on 4, while classical optimizers such as PSO, FDA, MVO, GWO, and HGSO managed only 3 functions combined. These counts sum to more than 30, which implies tied best values on some functions. The remaining algorithms, including WOA, MFO, SCA, WSO, SHO, and HLOA, did not achieve the best result on any function. These results highlight SFO's ability to navigate complex, multimodal search spaces efficiently, avoiding stagnation and converging quickly toward high-quality solutions.

When scaled to high-dimensional settings (100D, with 100 particles and 10,000 iterations), SFO continued to demonstrate competitive performance, though with expected challenges in scalability. As reported in Table 7, SFO achieved top rankings in 11 out of 30 benchmark functions, placing it among the strongest algorithms for high-dimensional optimization.

While its dominance was not as pronounced as in the 10D case, SFO maintained robustness in handling multimodal, hybrid, and composition functions, where traditional algorithms often struggle. Competing algorithms such as EMBGO and FDA exhibited stronger performance in selected high-dimensional problems, yet SFO’s consistent ranking across functions underscores its balance between exploration and exploitation even as the dimensionality increases. Importantly, the results demonstrate that while performance margins narrow in 100D settings, SFO remains a scalable and reliable optimizer capable of addressing large and complex search spaces.

The comparison between the 10D and 100D results highlights two key aspects of SFO's design. First, its adaptive switching mechanism enables rapid convergence in moderate-dimensional landscapes, leading to superior performance against both classical and modern metaheuristics. Second, in high-dimensional settings its dominance decreases, but SFO remains competitive without extensive parameter tuning, a valuable trait for large-scale real-world optimization. This scalability suggests that SFO is robust and could be deployed in dynamic, high-dimensional applications such as UAV search-and-rescue missions or large-scale engineering optimization tasks.

Visual analysis of SFO

Sensitivity analysis of SFO parameters

As illustrated in Fig. 4, the sensitivity analysis of SFO shows that it maintains consistent performance on most of the tested functions, reliably achieving competitive fitness values across a broad range of parameter configurations. Functions such as F2, F3, and F4 show high stability. On these functions the optimizer converges to optimal or near-optimal solutions with minimal sensitivity to variations in the number of agents, the cognitive and social coefficients (c₁, c₂), the Gaussian scaling (α), the Lévy scaling (β), and the inertia weight (w). Convergence is particularly stable for moderate parameter values (e.g., \(w = 0.5\), \(c_{1} = c_{2} = 1.45\), \(\alpha = 0.5\), and \(\beta = 0.57\)), which require minimal fine-tuning.

Sensitivity heatmaps for SFO parameters on CEC2017 benchmark functions F1–F4 at dim = 10.

In contrast, Function F1 displays notable sensitivity, where the optimizer’s performance significantly depends on the choice of parameter settings. For F1, extreme values such as high inertia (w = 0.9) or overly aggressive exploration (β = 2.0) can result in performance degradation, and in some cases, lead to unstable or divergent search behavior. Nevertheless, under well-balanced configurations, SFO is still capable of converging toward optimal regions. Overall, the optimizer demonstrates strong robustness and convergence characteristics, with only slight to moderate sensitivity in select functions, affirming its reliability with limited tuning requirements across diverse optimization landscapes.
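One heatmap panel of this kind could be produced by sweeping two parameters over a grid and averaging the final fitness over several seeded runs. In the sketch below, the callable `optimize(a, b, seed)` is hypothetical and stands in for one SFO run with the two swept parameters set to `a` and `b`. The grids and the number of runs are illustrative assumptions, and plotting (e.g. with Matplotlib) is omitted.

```python
import itertools
import numpy as np

def sensitivity_grid(optimize, grid_a, grid_b, runs=5):
    """Mean final fitness over a 2-D parameter grid (one heatmap panel).

    optimize(a, b, seed) -> final best fitness of one run (hypothetical callable).
    """
    heat = np.empty((len(grid_a), len(grid_b)))
    for (i, a), (j, b) in itertools.product(enumerate(grid_a), enumerate(grid_b)):
        # Average over seeds so that each cell reflects the parameters, not one lucky run.
        heat[i, j] = np.mean([optimize(a, b, seed) for seed in range(runs)])
    return heat
```

For instance, sweeping `w` over `[0.1, 0.5, 0.9]` against `beta` over `[1.0, 1.5, 2.0]` yields a 3×3 matrix that can be drawn directly as a heatmap. Cells where the mean rises sharply correspond to the sensitive configurations described for F1.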

SFO search history, trajectory, and average fitness analysis

Figures 5 and 6 comprehensively illustrate the performance of the proposed Swift Flight Optimizer (SFO) on the CEC2017 benchmark functions F1–F10. Each row in these figures presents four key perspectives: the 3D surface of the objective function (first column), the spatial search history of the particles (second column), the trajectory of the first particle over iterations (third column), and the average fitness convergence curve (fourth column).

Search history, trajectory of the first agent, and average fitness of SFO optimizer over selected functions F1, F2, F3, F4 and F5 of IEEE CEC2017 functions.

Search history, trajectory of the first agent, and average fitness of SFO optimizer over selected functions F6, F7, F8, F9 and F10 of IEEE CEC2017 functions.

From Fig. 5, it is evident that for functions F1–F5, SFO demonstrates a clear two-phase behavior. Initially, the search is widely exploratory, as reflected by the diverse distribution of particle positions in the second column and large positional changes in the trajectory plots. As the iterations progress, the algorithm transitions smoothly into an exploitation phase, with the first particle converging toward the optimal region. The average fitness plots confirm this behavior, exhibiting rapid and stable convergence within the first 200 iterations, especially for F1, F2, and F3, where the optimizer reaches extremely low objective values, confirming high precision. Figure 6 presents the optimizer’s performance on the more rugged and multimodal functions F6–F10. Even under these challenging conditions, SFO maintains its adaptive behavior. The search history plots continue to show a dense cluster of particle positions around the minima, while the trajectory plots reveal a gradual dampening of movements signifying stabilization near global optima. The fitness curves further confirm this trend, with a consistent and monotonic decrease across most functions, despite minor oscillations in functions such as F7 and F8, due to their highly repetitive landscapes.

Moreover, Fig. 7 illustrates the 3D trajectories of the first particle of SFO on the CEC2017 benchmark functions F1–F9. On F1–F6, the optimizer exhibits dynamic, adaptive search behavior with a well-balanced transition between exploration and exploitation. In the early stages, the trajectory plots show wide movements, indicating that the particle begins with a broad exploration phase that scans large areas of the search space. As optimization proceeds, the particle's path becomes increasingly refined, with trajectory steps shrinking and converging toward specific regions of the landscape. This shift reflects a transition into a focused exploitation phase. The optimizer identifies promising regions early and intensifies its search in their vicinity while avoiding premature convergence. The gradual reduction in movement magnitude further indicates that SFO guides the particle from global exploration toward high-precision convergence near the global optimum. For F7–F9, the trajectories highlight the optimizer's capability to maintain adaptive search dynamics in more complex and rugged landscapes. On F7 and F8, the search pattern is relatively scattered but directed: initial steps explore the function surface broadly, followed by a gradual narrowing of the trajectory. This behavior illustrates SFO's robustness in avoiding stagnation and adapting to functions with multiple local optima.

3D trajectories of the first particle of SFO on CEC2017 benchmark functions F1–F9.

SFO exploration and exploitation analysis

The exploration–exploitation balance of the SFO can be visually assessed through the exploration–exploitation ratio95,96, and it is expressed as:

where \({x}_{ij}\) represents the value of the jth dimension for the ith individual. Figure 8 provides a comprehensive visualization of the dynamic exploration–exploitation behavior exhibited by the Swift Flight Optimizer (SFO) across all 30 benchmark functions in the CEC2017 suite at 10-dimensional settings. In the early iterations of most functions, SFO exhibits a high level of exploration activity, effectively searching diverse regions of the decision space. This wide exploration is instrumental in escaping local optima and identifying promising areas. As the algorithm progresses, a gradual transition from exploration to exploitation is observed, where SFO begins to focus its search around the most promising regions discovered. This balance is clearly maintained in most functions, where exploitation becomes dominant in the later stages of the optimization process. Such behavior contributes to both convergence reliability and solution refinement.
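The sketch below assumes the widely used median-based, dimension-wise diversity measure, which matches the \(x_{ij}\) notation above: \(\mathrm{Div}=\frac{1}{D}\sum_{j}\frac{1}{n}\sum_{i}|\mathrm{median}(x^{j})-x_{ij}|\). From it, the exploration percentage is \(100\,\mathrm{Div}/\mathrm{Div}_{\max}\) and the exploitation percentage is \(100\,|\mathrm{Div}-\mathrm{Div}_{\max}|/\mathrm{Div}_{\max}\), where \(\mathrm{Div}_{\max}\) is the largest diversity observed during the run. The function names are hypothetical.

```python
import numpy as np

def diversity(pop: np.ndarray) -> float:
    """Dimension-wise diversity: mean absolute deviation from the per-dimension median.

    pop has shape (n, D); pop[i, j] is x_ij, the j-th dimension of the i-th individual.
    """
    med = np.median(pop, axis=0)              # median(x^j) for each dimension j
    return float(np.mean(np.abs(pop - med)))  # average over individuals i and dimensions j

def xpl_xpt(div_history):
    """Exploration and exploitation percentages per iteration, relative to max diversity."""
    div = np.asarray(div_history, dtype=float)
    div_max = div.max()                       # assumes at least one nonzero diversity value
    xpl = 100.0 * div / div_max
    xpt = 100.0 * np.abs(div - div_max) / div_max
    return xpl, xpt
```

Calling `diversity` on the population after every iteration and passing the resulting list to `xpl_xpt` gives two curves that always sum to 100%. A curve like those in Fig. 8 therefore shows only how the split between exploration and exploitation shifts over the run.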

Exploration–exploitation ratio for SFO on CEC2017 suite with 10D.

Across F1–F30, SFO generally maintains this adaptive trade-off, adjusting exploration pressure while intensifying exploitation, particularly on unimodal and hybrid functions. This regulated transition is essential for fast convergence and high-precision solutions, and it reflects SFO's robustness and adaptability on complex optimization problems.

Convergence analysis of SFO with competitive optimizers

Figures 9 and 10 present the convergence behavior of the competing algorithms on the IEEE CEC2017 benchmark functions in 10 dimensions. The results in Fig. 9 show that SFO frequently outperforms its competitors in both convergence speed and final solution quality.

Convergence curve analysis over CEC2017 benchmark functions (F1–F15), Dim = 10.

Convergence curve analysis over CEC2017 benchmark functions (F16–F30), Dim = 10.

For the unimodal functions F1–F3 and the multimodal function F5, SFO achieves the fastest and most stable convergence, demonstrating strong exploitation and the ability to reach near-optimal solutions in considerably fewer iterations. On F4, SFO remains competitive, performing on par with the best competitors throughout the search.

Functions F6–F12 cover the remaining simple multimodal functions (F6–F10) and the first hybrid functions (F11–F12). On these, SFO converges best on F6, F8, F10, and F11 and remains competitive on F7 and F9. In challenging cases such as F8 and F9, most algorithms (e.g., MFO, SCA, MVO) stagnate prematurely due to limited exploration, whereas SFO continues to improve steadily. On F12, SFO achieves the best final solution, outperforming all other algorithms tested.

On the hybrid functions F13–F15, SFO demonstrates a strong balance between exploration and exploitation, achieving the best convergence on F13 and F14 and top performance on F15. Across most functions, classical algorithms such as GWO, WOA, and PSO tend to stagnate early, while SFO continues to improve until the final iterations. These results highlight the robustness, adaptability, and effectiveness of SFO on diverse and complex optimization problems in the CEC2017 suite.

Figure 10 illustrates the convergence behavior of the competing algorithms on functions F16–F30 of the IEEE CEC2017 benchmark suite in 10 dimensions. The performance of SFO varies across this set of functions.

For several functions, such as F18, F20, and F23, SFO converges competitively, often closely tracking or outperforming conventional algorithms such as PSO, WOA, and GWO. On F20 and F23 in particular, SFO converges consistently with low variability and reaches good final fitness values. On F16, F17, and F24, however, it performs worse than the best schemes, such as EMBGO and PSO. This indicates difficulty in navigating the rugged landscapes of certain hybrid and composition functions. On F19, F21, F25, and F26, SFO converges more slowly and attains higher final fitness values, which suggests that its exploration mechanisms need improvement to handle highly rugged or deceptive search landscapes. Despite these limitations, SFO shows moderate resilience and remains competitive on F22, F27, and F28, where it performs on par with several baseline optimizers. On the most complex functions, F29 and F30, SFO does not match the convergence quality of algorithms such as EMBGO, GWO, and PSO, which converge faster and reach more precise solutions.

Analysis of box plot results

Figures 11 and 12 show box plots of all results, together with the convergence curves of the best results, for SFO and MFO, SCA, MVO, HGSO, GTO, PSO, WOA, and EMBGO. The plots cover the 30 IEEE CEC2017 benchmark functions with Dim = 10 over 1,000 iterations.

Boxplots for SFO and nine other algorithms on the IEEE CEC2017 benchmark functions (F1–F15).

Boxplots for SFO and nine other algorithms on the IEEE CEC2017 benchmark functions (F16–F30).

According to Fig. 11, the box-plot distributions illustrate the performance of the competing algorithms on the IEEE CEC2017 benchmark functions F1–F15 in 10-dimensional search spaces. SFO shows strong and stable performance on several functions. It achieves the lowest median fitness and narrow interquartile ranges on F1, F2, F3, F4, and F11, which indicates high robustness and accuracy. On simple multimodal functions such as F5, F6, and F10, SFO remains competitive, although some competitors, notably GTO and EMBGO, achieve slightly lower quartiles. On more challenging multimodal and hybrid functions (F7, F8, F9, and F12), SFO performs comparably to the top algorithms but shows a slightly wider spread in some cases, reflecting higher variance in its final solutions. On the hybrid functions F13 and F15, SFO remains competitive, with performance close to the best methods. On F14, however, it shows a much larger interquartile range and a higher median, suggesting difficulty in fine-tuning on this function. Overall, the box-plot analysis shows that SFO ranks among the best performers on most of F1–F15, with particularly strong results on low-to-moderate complexity landscapes, while certain hybrid functions leave room for improvement.

For the remaining functions, F16–F30, Fig. 12 shows that SFO remains competitive in several cases but varies more on challenging landscapes. On F16, F17, and F20, SFO achieves relatively low median fitness values, close to those of the best-performing algorithms, with a narrow spread indicating stable convergence. On the complex functions F18 and F19, SFO delivers moderate performance, outperforming stagnating algorithms such as MFO and GTO but trailing the best solutions obtained by EMBGO and WOA. On F21, F22, and F23, SFO behaves consistently and ranks among the competitive algorithms, although the small gap to the leaders suggests room for further refinement of exploitation. On the composition functions F24, F25, and F26, SFO produces solid median values, yet its quartile ranges indicate slightly higher variance than the best methods. Performance on F27–F29 is mixed: SFO remains competitive on F27, whereas on F28 and F29 several competitors achieve lower and more consistent fitness values. On F30, the most complex composition function, SFO delivers moderate results but is clearly outperformed by algorithms that achieve much smaller median fitness values.
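The box-plot comparisons above rest on a few order statistics of the final fitness values collected over independent runs. As a minimal sketch, the median, quartiles, and interquartile range can be computed per algorithm and used to order the algorithms on one function. The run values and labels below are hypothetical, the tie-breaking by interquartile range is an assumption rather than the procedure used in this study, and quartile conventions differ between tools (this sketch uses the `inclusive` method of `statistics.quantiles`).

```python
from statistics import median, quantiles


def box_stats(final_fitness):
    """Median, first/third quartiles and interquartile range of final fitness values."""
    q1, _, q3 = quantiles(final_fitness, n=4, method="inclusive")
    return {"median": median(final_fitness), "q1": q1, "q3": q3, "iqr": q3 - q1}


def rank_by_median(results):
    """Order algorithms by median final fitness (lower is better), breaking ties by IQR."""
    stats = {name: box_stats(values) for name, values in results.items()}
    return sorted(stats, key=lambda name: (stats[name]["median"], stats[name]["iqr"]))


# Hypothetical final fitness values from five independent runs on one function.
results = {
    "SFO": [100.0, 101.0, 102.0, 103.0, 104.0],
    "PSO": [100.0, 105.0, 110.0, 115.0, 120.0],
    "GWO": [99.0, 100.0, 102.0, 150.0, 200.0],
}
print(box_stats(results["SFO"]))  # {'median': 102.0, 'q1': 101.0, 'q3': 103.0, 'iqr': 2.0}
print(rank_by_median(results))    # ['SFO', 'GWO', 'PSO']
```

In this toy example SFO and GWO share the same median, and the much narrower box of SFO is what separates them, mirroring how spread and median are read together in Figs. 11 and 12.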

Discussion

The experimental results presented in Section “Results” demonstrate the competitiveness of the proposed Swift Flight Optimizer (SFO) across diverse benchmark functions. This section discusses these findings in detail, highlighting the advantages and limitations of SFO relative to existing algorithms, analyzing specific shortcomings to guide future research, and outlining the broader implications of adopting SFO in real-world optimization tasks. To explain the internal dynamics behind these results, we first examine how the algorithm balances exploration and exploitation across the stages of the search. The adaptive three-mode structure of SFO governs the transition from global exploration to local exploitation: early iterations are dominated by broad search movements that ensure extensive coverage and mitigate premature convergence; these give way to increasingly directional updates that concentrate the population around promising basins; and final localized refinements improve solution accuracy. This staged progression maintains a dynamic balance between exploration and exploitation across runs. On some functions (e.g., F16 and F21), the exploration–exploitation curves show oscillatory patterns caused by the stagnation-aware reinitialization mechanism, which deliberately injects diversity when convergence stalls. Although this intervention temporarily perturbs the search and may distort the fitness progression, it restores diversity and sustains long-term convergence. Beyond this exploration–exploitation interplay, the convergence trajectories provide further evidence of how the adaptive design shapes the optimizer’s long-term behavior. The convergence of SFO is characterized by a rapid initial descent, a mid-phase of gradual improvement without stagnation, and a late stage of steady refinement rather than premature flatlining.
This profile reflects the effectiveness of the multi-mode switching strategy and the stagnation-aware reinitialization in sustaining progress beyond the plateau stage common in classical swarm algorithms. The glide mechanism strongly influences these dynamics: its parameterization governs the breadth of early exploratory moves and therefore the algorithm’s ability to reach unexplored regions of the search space. Well-tuned glide parameters improve basin discovery and delay stagnation, whereas poorly chosen settings may restrict exploration and induce premature convergence. Glide therefore plays a pivotal role in shaping the convergence trajectory and in preserving the balance between global search and local refinement. The influence of these mechanisms is especially evident when performance is compared across problem dimensionalities, where the structure of the search space makes diversity management more important. Across dimensional settings, SFO adapts consistently to the structural properties of the search space. In low- to moderate-dimensional problems, the glide phase efficiently identifies promising basins, the target phase consolidates improvements before stochastic noise dominates, and the micro-refinement operator exploits local curvature to reach low terminal errors with limited variance across runs. These outcomes indicate that the control architecture generalizes effectively across unimodal, multimodal, and hybrid landscapes. In high-dimensional scenarios, where the exponential growth of the search space and heterogeneous curvature pose substantial challenges, SFO preserves its early convergence speed and avoids premature population collapse. However, its relative advantage diminishes on ill-conditioned or highly composite functions, where directional updates may fail to resolve narrow valleys unless diversity is reintroduced frequently.
This suggests that scalability constraints stem less from the intrinsic design of the search operators than from the frequency and efficiency of the diversity-restoring mechanisms, which become increasingly critical as dimensionality grows. Sensitivity analysis provides complementary insight by clarifying how strongly performance depends on the calibration of key control parameters, and it shows that SFO’s performance is closely tied to that calibration. Population size strongly affects convergence reliability: larger populations enhance diversity but incur higher computational cost. The cognitive coefficient (\({\psi }_{1}\)) amplifies individual exploration, whereas the social coefficient (\({\psi }_{2}\)) stabilizes collective convergence. The inertia weight (\(w\)) governs the search dynamics: higher values sustain exploration, while lower values accelerate exploitation but risk stagnation. The stochastic scaling factors (\(\alpha , \beta\)) regulate perturbation intensity, trading basin discovery against local precision. These results indicate that robust performance arises from balanced parameterization rather than extreme settings, underscoring the importance of a controlled exploration–exploitation trade-off.
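The parameter roles described above can be made concrete with a velocity-based update in the style of particle swarm optimization. SFO’s own update equations are not restated in this section, so the sketch below is an illustrative assumption and not the SFO update rule: `w`, `psi1`, and `psi2` play the inertia, cognitive, and social roles named in the text, while `alpha` and `beta` are hypothetically modeled as the initial scale and decay exponent of a Gaussian perturbation. The sphere test function and the function name `pso_style_search` are likewise introduced only for illustration.

```python
import random


def sphere(x):
    """Simple unimodal test function with minimum 0 at the origin."""
    return sum(xi * xi for xi in x)


def pso_style_search(f, dim=5, pop=20, iters=200, w=0.7, psi1=1.5, psi2=1.5,
                     alpha=0.1, beta=2.0, bounds=(-5.0, 5.0), seed=0):
    """Hypothetical velocity-based search (NOT the SFO update rule).

    w: inertia weight; psi1/psi2: pull towards each individual's best and the
    population best; a Gaussian perturbation with standard deviation
    alpha * (1 - t / iters) ** beta adds noise that shrinks over the run
    (a larger beta shrinks it faster).
    """
    rng = random.Random(seed)
    lo, hi = bounds
    x = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(pop)]
    v = [[0.0] * dim for _ in range(pop)]
    pbest = [xi[:] for xi in x]
    pbest_f = [f(xi) for xi in x]
    g = min(range(pop), key=lambda i: pbest_f[i])
    gbest, gbest_f = pbest[g][:], pbest_f[g]
    history = [gbest_f]  # best-so-far fitness, one entry per iteration
    for t in range(iters):
        sigma = alpha * (1.0 - t / iters) ** beta
        for i in range(pop):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                v[i][d] = (w * v[i][d]
                           + psi1 * r1 * (pbest[i][d] - x[i][d])
                           + psi2 * r2 * (gbest[d] - x[i][d]))
                x[i][d] += v[i][d] + rng.gauss(0.0, sigma)
                x[i][d] = min(max(x[i][d], lo), hi)  # clamp to the search box
            fx = f(x[i])
            if fx < pbest_f[i]:
                pbest[i], pbest_f[i] = x[i][:], fx
                if fx < gbest_f:
                    gbest, gbest_f = x[i][:], fx
        history.append(gbest_f)
    return gbest, gbest_f, history


for w in (0.4, 0.7, 0.9):
    _, best, _ = pso_style_search(sphere, w=w)
    print(f"w={w}: best fitness {best:.3e}")
```

Sweeping one parameter at a time with the others fixed, as in the loop above, is a simple one-factor sensitivity check; averaging over several seeds would be needed before drawing conclusions from it.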

Synthesizing these observations, the principal strengths of the optimizer can be delineated as follows:

- Multi-mode balance: Glide, target, and micro phases jointly sustain exploration and exploitation.
- Diversity preservation: Stagnation-aware reseeding prevents premature convergence.
- Robust outcomes: Stable performance with low variance across independent runs.
- Wide applicability: Effective across diverse benchmark classes without heavy tuning.
- Efficiency: Rapid convergence with low computational overhead and scalability to high dimensions.

Conversely, certain constraints must be acknowledged to provide a balanced and critical evaluation of the method:

- Parameter sensitivity: Performance depends on glide and control coefficients, requiring careful calibration.
- Computational overhead: Diversity-restoring operations add cost, especially in high-dimensional settings.
- Scaling constraints: Effectiveness diminishes on ill-conditioned or highly composite functions.
- Switching complexity: The multi-mode framework relies on multiple transition conditions, which may complicate parameterization and reduce robustness across diverse problems.
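The switching and reseeding logic behind these strengths and limitations can be illustrated with a small sketch. The exact SFO transition conditions are not restated in this section, so everything below is a hypothetical realization: an iteration-fraction schedule that moves from glide to target to micro mode (the 0.4/0.4 fractions are assumed), a monitor that flags stagnation after a fixed number of non-improving iterations (`patience` is assumed), and a step that reseeds the worst fraction of the population. Each of these thresholds is one more parameter to calibrate, which is the burden the switching-complexity point refers to.

```python
import random


def select_mode(t, iters, glide_frac=0.4, target_frac=0.4):
    """Hypothetical schedule: glide early, target mid-run, micro at the end."""
    progress = t / iters
    if progress < glide_frac:
        return "glide"
    if progress < glide_frac + target_frac:
        return "target"
    return "micro"


class StagnationMonitor:
    """Flags stagnation after `patience` iterations without improvement (minimization)."""

    def __init__(self, patience=20, tol=1e-12):
        self.patience = patience
        self.tol = tol
        self.best = float("inf")
        self.stalled = 0

    def update(self, best_fitness):
        """Record the current best fitness; return True when a reseed is due."""
        if best_fitness < self.best - self.tol:
            self.best = best_fitness
            self.stalled = 0
        else:
            self.stalled += 1
        if self.stalled >= self.patience:
            self.stalled = 0  # restart the count after triggering a reseed
            return True
        return False


def reinitialize_worst(population, fitness, frac, bounds, rng):
    """Replace the worst `frac` of individuals with uniform random points.

    Returns the indices that were reset; the caller must re-evaluate their fitness.
    """
    lo, hi = bounds
    n_reset = max(1, int(frac * len(population)))
    order = sorted(range(len(population)), key=lambda i: fitness[i], reverse=True)
    worst = order[:n_reset]
    for i in worst:
        population[i] = [rng.uniform(lo, hi) for _ in population[i]]
    return worst


rng = random.Random(1)
print([select_mode(t, 100) for t in (0, 39, 40, 79, 80, 99)])
# ['glide', 'glide', 'target', 'target', 'micro', 'micro']
monitor = StagnationMonitor(patience=3)
print([monitor.update(f) for f in (5.0, 4.0, 4.0, 4.0, 4.0, 3.0)])
# [False, False, False, False, True, False]
pop = [[0.0, 0.0], [1.0, 1.0], [2.0, 2.0], [3.0, 3.0]]
print(reinitialize_worst(pop, [0.0, 2.0, 8.0, 18.0], 0.5, (-1.0, 1.0), rng))
# [3, 2]
```

Only the worst individuals are reseeded here, so the best solutions survive the reset; this is one reasonable design choice for injecting diversity without discarding progress, not necessarily the one used in SFO.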

Taken collectively, the findings substantiate the capacity of SFO to address fundamental challenges in global optimization, including the preservation of diversity, the mitigation of premature convergence, and the attainment of stable solutions across varied problem classes. At the same time, they identify methodological aspects such as parameter sensitivity, switching complexity, and scalability that require refinement to further enhance the algorithm’s robustness and generalizability.

Conclusions

This paper introduces a novel bio-inspired optimization algorithm, the Swift Flight Optimizer (SFO), motivated by the adaptive flight behaviors of swifts in nature. By simulating glide, target, and micro flight modes, SFO maintains a dynamic balance between exploration and exploitation, much as swifts adapt their flight strategies for long-range travel and precise hunting maneuvers.

Experimental evaluation on the IEEE CEC2017 benchmark suite demonstrated the robustness and competitiveness of SFO. At 10 dimensions, SFO achieved the best average fitness on 21 of 30 functions, and at 100 dimensions it ranked first on 11 functions, securing top positions across unimodal, multimodal, hybrid, and composition functions. These results confirm SFO’s ability to achieve fast convergence, high-quality solutions, and stability in complex optimization landscapes. Compared with 13 state-of-the-art optimizers, including PSO, GWO, WOA, and EMBGO, SFO outperformed or matched their results on many functions, underscoring its effectiveness and resilience in both low- and high-dimensional problems.

Key contributions of this work include the introduction of SFO as the first swift-inspired optimizer, the development of a multi-mode adaptive framework that balances exploration and exploitation, the incorporation of a stagnation-aware reinitialization mechanism to maintain diversity, and a comprehensive comparative evaluation that demonstrated superior convergence and solution quality against 13 state-of-the-art algorithms on the IEEE CEC2017 benchmark suite.

Despite these promising results, SFO has several limitations. Its performance remains sensitive to parameter settings, particularly those governing the transitions between exploration and exploitation phases, which may require careful tuning for different problem classes. Although the stagnation-aware reinitialization mechanism enhances diversity and reduces the risk of premature convergence, it also introduces additional computational overhead that may affect scalability on very high-dimensional problems.

Building on these findings and limitations, future work on SFO will focus on expanding its applicability and enhancing its capabilities. A promising extension is multi-objective optimization, where SFO could manage trade-offs among conflicting objectives, enabling broader use in complex real-world problems. Adaptive parameter control and population diversity management may be improved through hybridization with other metaheuristics or by incorporating machine learning techniques such as deep neural networks and reinforcement learning. For large-scale, high-dimensional optimization, distributed or parallelized SFO implementations may provide significant gains in scalability and computational efficiency. Finally, applying SFO to dynamic and time-varying optimization problems, including UAV thermal search-and-rescue and industrial processes, offers an opportunity to demonstrate and enhance its real-time adaptability. These directions highlight both theoretical opportunities and pathways to broaden SFO’s range of scientific and engineering applications.

Data availability

All relevant data are within the manuscript. The collection and analysis method complied with the terms and conditions for the source of the data.

References

Sharma, M. & Kaur, P. A comprehensive analysis of nature-inspired meta-heuristic techniques for feature selection problem. Arch. Comput. Methods Eng. 28(3), 1103–1127. https://doi.org/10.1007/s11831-020-09412-6 (2021).

Wang, Z. & Schafer, B. C. Machine learning to set meta-heuristic specific parameters for high-level synthesis design space exploration. In 2020 57th ACM/IEEE Design Automation Conference (DAC) 1–6 (2020). https://doi.org/10.1109/DAC18072.2020.9218674.

Dokeroglu, T., Sevinc, E., Kucukyilmaz, T. & Cosar, A. A survey on new generation metaheuristic algorithms. Comput. Ind. Eng. 137, 106040. https://doi.org/10.1016/j.cie.2019.106040 (2019).

Vikhar, P. Evolutionary algorithms: A critical review and its future prospects. 261–265 (2016).

Storn, R. & Price, K. Differential evolution–A simple and efficient heuristic for global optimization over continuous spaces. J. Glob. Optim. 11(4), 341–359. https://doi.org/10.1023/A:1008202821328 (1997).

Dagal, I. et al. Logarithmic mean optimization a metaheuristic algorithm for global and case specific energy optimization. Sci. Rep. 15(1), 18155. https://doi.org/10.1038/s41598-025-00594-2 (2025).

Sotomayor, M., Pérez-Castrillo, D. & Castiglione, F. Complex Social and Behavioral Systems: Game Theory and Agent-Based Models (2020).

Wang, D., Tan, D. & Liu, L. Particle swarm optimization algorithm: An overview. Soft Comput. 22(2), 387–408. https://doi.org/10.1007/s00500-016-2474-6 (2018).

Dorigo, M. & Stützle, T. Ant colony optimization: Overview and recent advances. In Handbook of Metaheuristics (eds Gendreau, M. & Potvin, J. Y.) 227–263 (Springer, 2010).