Abstract

The construction industry faces pressing challenges, including persistent labor shortages, hazardous working conditions, and stagnating productivity gains. Simultaneously, the field of humanoid robotics has matured from early experimental platforms to advanced systems capable of dynamic locomotion, dexterous manipulation, and partial autonomy. This paper examines how humanoid robots, with anthropomorphic designs suited to human-centric environments, might revolutionize future construction processes. It outlines the challenges unique to humanoid robots in construction, such as perceptual robustness, adaptive locomotion, human-level dexterity, continual learning, and human–robot collaboration. It also discusses non-technical factors that could affect the adoption and implementation of humanoid robots in construction, such as workforce implications, safety, and ethical considerations. These challenges may emerge when translating humanoid capabilities to active construction sites because of unstructured and dynamic settings, unpredictable task sequences, frequent interactions with human trades, and nascent regulatory frameworks. Drawing on a breadth of literature, this paper presents future milestones ranging from near-term technical advances to longer-term visions of scalable, fully integrated robotic ecosystems. Its main contribution is a comprehensive roadmap for humanoid robot integration in construction that addresses both technical challenges, such as adaptive locomotion, and ethical considerations, such as workforce impacts. By fostering interdisciplinary collaboration, it aims to position humanoid robots as transformative assets for advancing safety, efficiency, and sustainability in the industry.

Introduction

Recent breakthroughs in robotics and artificial intelligence (AI), particularly in the last 3 years, have enabled the development of next-generation humanoid robots with enhanced mobility, dexterity, and autonomy. Characterized by anthropomorphic morphology and bipedal locomotion, these systems promise to operate in spaces originally designed for humans, leveraging existing infrastructures and tools in ways that conventional wheeled or tracked robots cannot1,2. While early humanoids, such as Honda’s ASIMO, primarily served as research platforms to explore bipedal locomotion and interactive behaviors3, newer entrants like Boston Dynamics’ Atlas or Tesla’s Optimus seek to expand into more practical and industrial applications4,5 (Fig. 1).

Humanoid robots have gained momentum: (a) ASIMO from Honda, image source6; (b) Digit from Agility Robotics, image source7, used with permission; (c, d) Atlas HD and New Atlas, image source8, used with permission; (e) DR01, image source9, used with permission; (f) Optimus from Tesla, image source10.

In parallel, the construction industry faces critical challenges, among them a chronic labor shortage, stricter safety requirements, productivity declines, and time and cost overruns11,12,13. Construction projects often take place in environments with rapidly changing layouts, hazardous working conditions, and a high degree of unpredictability in day-to-day tasks14,15. Unlike sectors such as manufacturing, where automation has already led to dramatic gains in output and quality16,17, construction remains less automated due to the inherent variability in building processes and the significant customization required in each project phase18,19. These factors have led to a strong and still growing interest in the potential of humanoid robots to assume roles traditionally performed by on-site human workers, tackling repetitive, physically demanding, or hazardous tasks, and thus alleviating labor constraints and improving overall site safety20,21,22.

There have been reservations about adopting humanoid robots in the construction industry. One such argument holds that the humanoid morphology is not optimal for many construction activities, such as payload handling23,24. We argue, however, that adopting humanoid robots presents unique practical and scientific opportunities for transforming the entire industry, for three reasons. First, the human-centered design of construction tools and spaces means that a robot with similar anthropometrics and dexterous capabilities can, in principle, use the same infrastructure without requiring substantial redesign of work environments or equipment21,25. Second, a bipedal system is capable of traversing uneven, partially completed surfaces, climbing stairs and ladders, and navigating scaffolding, i.e., tasks that are relatively straightforward for humans but typically challenging for wheeled machines26,27. Third, the technology community has shown a growing interest in developing platforms and methods for humanoid robots28,29,30. These resources can be leveraged to enhance humanoid robots with AI-driven perception algorithms that harness 3D mapping, multimodal sensors, and real-time object recognition to adapt to evolving site conditions31,32. The ability of humanoid robots to operate flexibly in dynamic environments underpins a vision of seamless human–robot collaboration, where machines provide support alongside skilled workers, thereby reducing physical strain and improving efficiency and safety outcomes33,34.

The potential economic, safety, and societal benefits of humanoid robots in construction are significant. Humanoid robots show great potential to automate material handling and transport, assembly, inspection, and work that must be done in hazardous places. By addressing labor shortages, optimizing material handling, and mitigating hazards, these systems can contribute to a safer, more productive, and more sustainable industry35,36. Nevertheless, the realization of humanoid robot deployments in construction is still in its infancy. The demands of advanced perception, dexterity, robust locomotion, generalizable policies, high payload capacity, sufficient battery life, and intuitive human–robot interaction pose formidable engineering and algorithmic challenges22. Even as cutting-edge research demonstrates increasingly agile and capable humanoids in controlled or semi-structured contexts, such as laboratory settings or manufacturing cells, substantial work is needed to adapt these technologies to the more unpredictable and harsh conditions of active construction sites. In addition, introducing autonomous or semi-autonomous humanoids into a workforce raises questions about workforce displacement, ethical considerations, and regulatory frameworks, all of which require careful and ongoing discussion among researchers, policymakers, and industry stakeholders37,38. This paper aims to develop an in-depth discussion of the challenges and opportunities of humanoid robotics for the construction industry and to propose a research roadmap for the next decade.

State of the art

Recent developments in humanoid robotics reflect a rapidly maturing field that has moved from proof-of-concept bipedal walkers to sophisticated platforms capable of dynamic locomotion, dexterous manipulation, and partial autonomy. Although the roots of humanoid robotics stretch back several decades, contemporary efforts have benefitted immensely from advances in actuation, sensing, and control theory. As researchers continue to explore new materials, mechanical architectures, and AI frameworks, humanoid robots are increasingly seen as potential game-changers in unstructured and human-centered domains such as construction, disaster response, and healthcare39,40,41.

Major platforms and their capabilities

Early examples, including Honda’s ASIMO and KAIST’s HUBO, primarily demonstrated the viability of bipedal locomotion and rudimentary interactive behaviors3,42. As shown in Table 1, their designs focused on maintaining balance under moderate disturbances, performing simple gestures, and navigating flat surfaces. These pioneering systems set crucial foundations for gait control, power management, and anthropomorphic design. In the past decade, however, a new wave of platforms has emerged with increasingly robust mobility and advanced manipulation abilities. Boston Dynamics’ Atlas, for instance, employs high-torque electrical actuators and model predictive control to enable agile motions such as jumping, backflips, and traversing rough terrain. These capabilities are complemented by recent advancements in reinforcement learning frameworks, which allow robots like Atlas to adapt locomotion strategies dynamically to unstructured environments4,71. Similarly, Tesla’s Optimus project, though still in early development, aims to merge large-scale reinforcement learning with advanced servomotors, aspiring to produce cost-efficient humanoids for industrial and consumer applications5. Examples of humanoid robots are shown in Table 2.

Research into locomotion spans beyond simply achieving upright bipedal movement. The emphasis lies in robust adaptation to diverse terrains and disturbances without sacrificing overall efficiency73,74. Classical approaches like the Zero Moment Point (ZMP) framework remain influential, as they define stable gaits by ensuring that the ZMP, the ground point at which the horizontal moments of gravity and inertial forces cancel, stays within the support polygon formed by the robot’s feet75,76. However, more contemporary work employs deep reinforcement learning to train walking policies in simulation, allowing robots to master dynamic locomotion skills under varied scenarios77,78. Such policies are then transferred to hardware, requiring careful tuning to accommodate real-world factors such as actuator backlash, sensor noise, or friction inconsistencies79,80. Balancing these computationally demanding methods against real-time constraints remains an ongoing challenge, necessitating hardware accelerators or algorithmic optimizations.
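
The ZMP stability criterion mentioned above can be illustrated with a minimal sketch. It assumes the cart-table (linear inverted pendulum) approximation, in which the center of mass stays at a constant height and the ZMP is obtained as p = c − (z_c / g)·c̈, and it then tests whether that point lies inside a convex support polygon. The function names, the rectangular footprint, and all numerical values are illustrative assumptions rather than parameters of any platform discussed in this paper.

```python
from typing import List, Tuple

G = 9.81  # gravitational acceleration, m/s^2

Point = Tuple[float, float]


def zmp_cart_table(com_xy: Point, com_acc_xy: Point, com_height: float) -> Point:
    """ZMP under the cart-table model: p = c - (z_c / g) * c_ddot."""
    k = com_height / G
    return (com_xy[0] - k * com_acc_xy[0], com_xy[1] - k * com_acc_xy[1])


def inside_convex_polygon(p: Point, polygon: List[Point]) -> bool:
    """True if p lies inside or on a convex polygon listed counter-clockwise."""
    n = len(polygon)
    for i in range(n):
        ax, ay = polygon[i]
        bx, by = polygon[(i + 1) % n]
        # A negative cross product means p is to the right of edge a->b (outside).
        cross = (bx - ax) * (p[1] - ay) - (by - ay) * (p[0] - ax)
        if cross < 0.0:
            return False
    return True


# Hypothetical single-support footprint in metres, counter-clockwise.
FOOT = [(-0.10, -0.05), (0.15, -0.05), (0.15, 0.05), (-0.10, 0.05)]

# Same CoM position, two assumed forward accelerations (m/s^2).
gentle = zmp_cart_table((0.02, 0.0), (0.5, 0.0), com_height=0.9)
harsh = zmp_cart_table((0.02, 0.0), (3.0, 0.0), com_height=0.9)

print("gentle acceleration stable:", inside_convex_polygon(gentle, FOOT))
print("harsh acceleration stable:", inside_convex_polygon(harsh, FOOT))
```

In this toy setting the larger acceleration pushes the ZMP behind the heel, which is the situation a ZMP-based gait generator is designed to avoid, for instance by shortening the step or slowing the motion.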

Another core domain is manipulation. Since humanoid robots are intended to function in spaces and with tools designed for humans, researchers focus on end-effector designs, soft or flexible actuators, and multi-modal sensing. Tactile sensors embedded in finger pads, high-resolution force-torque sensors at the wrist, and visual-haptic fusion all aim to enable skillful handling of objects of varied sizes and fragility81,82. Cutting-edge labs have also explored imitation learning and zero-shot learning, allowing humanoids to quickly adapt to unfamiliar tasks based on limited demonstrations62,83. This convergence of sensor-driven control, machine learning, and ergonomically inspired hardware is critical to achieving human-like dexterity, as tasks such as wire routing, bolt tightening, and part assembly can be substantially more complex than the standardized manipulations seen in industrial robotics84,85.

Finally, perception and AI integration remain pivotal. Current humanoids typically carry multiple sensor modalities, stereo or Red Green Blue-Depth (RGB-D) cameras, Light Detection and Ranging (LiDAR), Inertial Measurement Units (IMUs), and sometimes radar, to map their surroundings, identify objects, and track their own poses86,87. AI algorithms for object detection and semantic scene parsing often leverage deep neural networks, which have shown remarkable progress on benchmarks88,89. Nevertheless, these capabilities are largely validated in controlled environments, and real-world performance can deteriorate under variable lighting, dust, or clutter. Fragility, high deployment costs, and the absence of large-scale real-world training datasets continue to hamper the widespread adoption of humanoids outside research institutes or specialized industry pilot projects90,91.

Unique challenges for construction robotics

Construction sites, by definition, are unstructured, large-scale environments in flux. Unlike factories with fixed robotic cells, construction areas feature partially built walls, scaffolding in constant motion, and daily rearrangements of heavy equipment92,93. Tasks like pouring concrete, stacking materials, or installing steel beams can drastically alter navigable paths, making frequent re-mapping and localization crucial. Dust, glare, and inclement weather degrade sensor performance, while uneven terrain heightens the risk of slips or falls85,94. Traditional solutions that rely on static reference markers or carefully planned routes often falter when confronted with such perpetual variability.

A further complication arises from the diversity of tasks on-site. Some are repetitive, like carrying bricks or painting walls, while others demand extreme precision, such as plumbing or electrical installations. The order of operations can also shift unexpectedly: if a critical material delivery is delayed, teams might reprioritize tasks and disrupt the planned workflow49,95. For a humanoid robot to be effective, it must seamlessly adapt to these evolving conditions, modulating its skill sets and planning horizons. However, existing control algorithms and scheduling systems often assume a degree of task predictability ill-matched to the sporadic nature of construction96,97. This necessitates robust task switching, flexible scheduling, and possibly real-time learning or human-in-the-loop planning.

Cooperative interactions with human workers further complicate matters. Construction sites frequently host tradespeople of varied specializations (electricians, pipefitters, carpenters), each operating with different tools, safety protocols, and daily objectives98,99,100. Robots have to navigate the same shared environment, sometimes needing to coordinate tasks such as material handoffs, floor layout changes, or collaborative lift-and-fit operations. Ensuring the robot can detect and respond to human gestures, maintain safe distances, and provide clear communication channels is paramount101,102. These interaction layers far exceed those of conventional manufacturing, where robots are often caged or structured to avoid close human–robot proximity.

All of these technical complexities compound when one factors in regulatory and liability frameworks103. Construction sites are subject to stringent occupational safety rules, such as OSHA standards in the U.S. or EN directives in Europe, that protect workers from falls, equipment malfunctions, and respiratory hazards104,105,106. Introducing a humanoid robot that traverses scaffolding or wields power tools raises new questions about accountability for accidents. If a robot’s sensor fails and it collides with a worker or structural element, does fault lie with the machine’s operator, manufacturer, or site supervisor107,108? Equally pressing are insurance premiums and certification processes, which typically assume risk profiles suited to standard equipment. Evolving these frameworks to encompass humanoid robotics in open, dynamic sites remains an ongoing pursuit109,110,111.

Beyond these environmental and regulatory challenges, the mechanical and energy limitations of current humanoid robots remain major barriers to practical deployment. Despite impressive advances in actuation and control, humanoids continue to struggle with joint elasticity, vibration damping, and precision in ankle and wrist mechanisms, all of which affect balance and dexterous tool use. Their payload capacity is relatively low. For example, Boston Dynamics’ Atlas (≈ 89 kg) can safely lift only about 11 kg, making heavy material handling impractical on most sites. Similarly, battery endurance typically ranges between 30 and 90 min of continuous operation, far below the duration required for daily construction tasks. Table 2 summarizes representative metrics for current state-of-the-art humanoids, including battery endurance and payload-to-mass ratios. Most commercial and research platforms operate for 1–2 h per charge, with battery packs ranging between 1.5 and 3.0 kWh and overall mass above 40 kg. Their effective payloads rarely exceed 20–25 kg.
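
The arithmetic behind these figures can be made explicit with a short sketch. It computes the payload-to-mass ratio from the Atlas numbers quoted above and the average power draw implied by a given pack energy and runtime. How the ends of the quoted ranges are paired, and the 8 h shift used in the last line, are assumptions made purely for illustration; they are not measurements from Table 2.

```python
import math


def payload_to_mass_ratio(payload_kg: float, robot_mass_kg: float) -> float:
    """Fraction of its own mass that the robot can carry."""
    return payload_kg / robot_mass_kg


def mean_power_kw(pack_energy_kwh: float, runtime_h: float) -> float:
    """Average electrical power implied by draining a full pack over the runtime."""
    return pack_energy_kwh / runtime_h


def charges_per_shift(shift_h: float, runtime_h: float) -> int:
    """Number of full charges (or battery swaps) needed to cover one shift."""
    return math.ceil(shift_h / runtime_h)


# Figures quoted in the text: Atlas at about 89 kg lifting about 11 kg.
print(f"Atlas payload-to-mass ratio: {payload_to_mass_ratio(11, 89):.3f}")

# Assumed pairings of the quoted ranges (1.5-3.0 kWh, 1-2 h per charge).
print(f"Low-draw case:  {mean_power_kw(1.5, 2.0):.2f} kW")
print(f"High-draw case: {mean_power_kw(3.0, 1.0):.2f} kW")

# Hypothetical 8 h shift with 1.5 h of runtime per charge.
print(f"Charges per shift: {charges_per_shift(8.0, 1.5)}")
```

Under these assumptions the payload-to-mass ratio is roughly 0.12, and a single shift would require several charges or battery swaps, which is consistent with the endurance gap described above.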

Energy efficiency is a critical bottleneck: dynamic locomotion, balance correction, and actuation of multiple high-torque joints demand continuous power, leading to rapid depletion. To address this, ongoing research explores energy-efficient grippers and compliant end-effectors capable of passive holding or variable stiffness control, minimizing active power draw during sustained manipulation. Recent designs use tendon-driven underactuation or electro-permanent magnetic couplers to hold payloads with minimal current once grasped, while others integrate variable-stiffness actuators (VSAs) that store and release mechanical energy through elastic components. These strategies are particularly valuable for field operations where power access is unreliable, such as remote or partially completed construction sites, because they enable robots to sustain manipulation tasks without constant high-power actuation.

In addition, limited autonomy constrains field usability: many humanoids still rely on external motion-capture systems or pre-planned trajectories for navigation and manipulation. The combination of low payload-to-mass ratios, short battery life, and limited endurance in harsh weather means current humanoid systems remain primarily research prototypes rather than deployable field assets. Nevertheless, ongoing mechatronic innovations, including lightweight composite materials, variable-stiffness actuators, energy-dense batteries, and hybrid locomotion mechanisms, are steadily narrowing this gap. Continued collaboration between roboticists and civil engineers will be crucial to achieving the mechanical resilience and energy efficiency necessary for sustained operation in real-world construction environments.

Existing efforts for advancing construction robotics

Despite these formidable obstacles, the potential benefits of humanoid robots in construction remain too significant to overlook. If such systems can be effectively adapted to the realities of construction environments, they could eventually undertake physically strenuous or hazardous tasks, thereby improving worker safety, reducing operational costs, and shortening project durations112,113. Achieving these outcomes, however, depends on targeted interdisciplinary research that marries advanced perception (able to handle high levels of environmental noise and clutter) with locomotion strategies suitable for uneven, partially assembled floors93,94,114. Efforts to develop more energy-efficient systems are equally critical, as construction tasks frequently run for extended shifts in remote settings where frequent battery swaps might be impractical115,116,117. Additionally, cooperative task planning must be refined to facilitate robust, dynamic teaming between human specialists and robotic assistants, bridging the gap between theoretical multi-robot coordination algorithms and tangible job-site collaboration61,102,103,118,119. Addressing these gaps will entail consistent collaboration between roboticists, civil engineers, site managers, and policymakers, ensuring that hardware design, control algorithms, and regulatory measures evolve in tandem120,121. Only through such interdisciplinary synergy can humanoid robots progress from captivating laboratory demos to essential, reliable players on tomorrow’s construction sites.

Opportunities and application areas

The inherent anthropomorphic design and advanced locomotive and manipulation capabilities of humanoid robots are expected to pave the way for a range of construction-related applications. By capitalizing on the capacity to traverse sites built for human workers and to handle tools intended for human hands, humanoid robots may uniquely address several persistent challenges in the construction sector73,94,98,122. This section outlines four promising avenues (material handling and transport, assembly and installation, inspection and quality control, and demolition in hazardous contexts) where humanoid robots could deliver appreciable value if current technological hurdles can be surmounted.

Material handling and transport

Material handling within construction projects is a labor-intensive, repetitive, and often injury-prone activity123. Manual transport of heavy loads or bulky materials, such as bricks, drywall, or piping, not only consumes significant labor resources but also exposes workers to musculoskeletal hazards124. Humanoid robots, equipped with bipedal locomotion and dexterous manipulators, present an opportunity to automate these tasks in environments not well-suited for traditional wheeled or track-based robotic platforms125,126 (Fig. 2).

By maintaining an upright posture, humanoids can navigate through tight corridors, climb stairs, and move across uneven surfaces with greater ease than comparable wheeled systems94,114. Their human-like reach and dexterity enable the handling of irregularly shaped or fragile materials that might otherwise require specialized grippers or custom apparatuses57,122. When augmented with advanced AI-based perception, these robots could dynamically adapt to fluctuating site conditions, avoiding obstacles, rerouting in response to temporary blockages, and adjusting force output to accommodate varying loads55,56,57,81. This adaptability could help minimize logistics bottlenecks, reduce physical strain on human workers, and improve overall site productivity12,96,127.

Assembly and installation

Construction projects rely on precise and timely assembly tasks, ranging from rough framing to the installation of finishing elements such as fixtures, wiring, and insulation128. The high variability and custom nature of these tasks make them especially challenging to automate with traditional industrial robots, which are typically confined to structured environments34,50. Humanoid robots, however, could leverage their anthropomorphic dimensions and dexterous end-effectors to utilize standard hand tools, such as drills, wrenches, and hammers, and to navigate within partially completed structures without major alterations to the site98,122,129,130,131.

Emerging research in dexterous manipulation, particularly work employing multi-sensor integration and AI-driven motion planning, strengthens the feasibility of humanoid robots performing fine-grained installation tasks82,122,132. Advanced force feedback and tactile sensing can enable nuanced control for fastening bolts, positioning ducts, or aligning modular components, thereby reducing the margin of error57,133,134 (Fig. 3). Moreover, real-time coordination with Building Information Modeling (BIM) systems could provide the robot with geometric references and scheduling data, enabling dynamic adjustments to reflect on-site changes or design updates135,136.

Dexterous hands for pipe assembly. Image source: Informatics, Cobots and Intelligent Construction Lab, University of Florida.

Inspection and quality control

Ensuring structural integrity and compliance with specifications is paramount to successful construction outcomes20,36. Typically, inspectors must traverse scaffolding, maneuver through narrow spaces, or climb to significant heights to perform detailed evaluations of welds, joints, or installations, a task often rendered hazardous by unstable or confined environments14,137. Humanoid robots, by virtue of their bipedal balance and full-body mobility, can ascend and navigate scaffolding or ladder-like structures without extensive site reconfiguration78,138.

Integrating high-resolution visual, thermal, or even ultrasonic sensors into the humanoid’s upper torso or head module can facilitate detailed, non-destructive testing of critical components63,139,140. With the aid of AI-based object detection and anomaly classification, robots can highlight potential defects, such as cracks, misalignments, or thermal irregularities, in real-time, communicating these findings to human supervisors through a shared digital platform141,142,143,144. This continuous data-driven inspection process has the potential to enhance quality control, reduce rework, and bolster site safety by limiting the need for human workers to undertake dangerous assessments145,146.

Demolition and hazardous work

Demolition tasks and hazardous operations, such as handling toxic materials, cutting through unstable structures, or performing cleanup in disaster zones, pose significant risks to human workers147,148,149. While specialized demolition machinery exists, many aspects of dismantling or decommissioning a structure still rely on manual labor, especially for tasks involving restricted spaces or partial structural collapses150,151. Humanoid robots equipped with reinforced end effectors and ruggedized body shells could navigate precarious areas, wield standard demolition tools, and perform partial teardown operations more safely than human crews152,153 (Fig. 4).

Moreover, teleoperation modes allow human operators to guide the robot’s actions from a safe distance, leveraging onboard cameras and force feedback for situational awareness95,155,156. This dual mode of operation, autonomous for routine tasks and teleoperated for complex or delicate procedures, enables continuous adjustments to unplanned circumstances (e.g., unexpected debris shifts) while ensuring human expertise remains in the loop157,158. Such hybrid solutions could drastically reduce injuries in demolition work and related hazardous applications like asbestos removal, while also contributing to faster site turnover159,160,161.

Taken together, these use cases illustrate the breadth of potential value that humanoid robots may bring to construction sites. Whether assisting in material logistics or performing nuanced installation tasks, the ability of humanoid robots to replicate human mobility and tool usage gives them a distinct edge over conventional robotic solutions22,162,163. However, realizing these capabilities on a large scale will require concomitant progress in perception, locomotion stability, power management, and human–robot interaction, as described in subsequent sections of this paper. The successful integration of humanoid robots in construction will thus demand a concerted, multidisciplinary effort that involves not only roboticists and AI researchers but also construction professionals, safety experts, and policymakers92,96,107,120,121,164,165.

Technical challenges and considerations

Humanoid robot deployments in construction settings demand robust engineering and advanced algorithms capable of handling the inherent unpredictability of unstructured, dynamic worksites. Many of the technical breakthroughs enabling humanoid robots to walk, perceive, and manipulate objects remain optimized for laboratory or factory conditions, where variables are more contained1,2,22,38. In contrast, construction tasks confront robots with moving equipment, workers, and ever-changing site layouts that stress-test existing perception and control methods166. In addition, the versatility and dynamics of construction call for the ability to learn generalizable policies or to learn continually167,168. This section addresses the substantial technical hurdles and considerations required for implementing humanoid robots in dynamic construction environments.

Long and deep perception

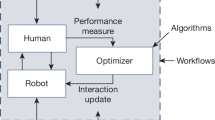

Humanoid robots in construction must operate in highly dynamic settings where the tasks and the layout evolve across overlapping phases, such as excavation, foundation work, framing, and finishing137,169,170. Each phase introduces fresh contexts and spatial configurations (partially completed structures, temporary scaffolding, newly placed rebar), which can radically alter navigable paths and obscure visual markers. Moreover, tasks like installing formwork, routing electrical conduits, and fitting concrete panels are interdependent, so an error in one task may cascade into complications in subsequent steps171. Standard perception pipelines, which often assume static or slowly shifting environments, are ill-prepared for these fast-paced changes. Consequently, a robust construction robot must not only detect and localize existing walls or equipment but also predict how they might evolve over the course of a single workday, especially in response to crane movements, material deliveries, or partial installations118,153,172. We call this new type of perception “long perception” (see Fig. 5). Balancing this predictive element in long perception with immediate situational awareness is essential for safe, efficient navigation and task execution in such fluid settings.

Another reason why many existing perception systems struggle lies in the fundamental 3D complexity of construction sites. Unlike functionally “2.5D” indoor environments, construction areas contain overhead cranes and suspended loads, trenches and rebar protrusions underfoot, and partial floors or scaffolding at intermediate levels174. Even advanced algorithms for object detection or semantic mapping can be flummoxed by moving workers, occluded objects, or intense dust from cutting and drilling operations. To address these challenges, research increasingly emphasizes multi-sensor fusion, integrating streams from LiDAR, stereo cameras, inertial measurement units (IMUs), and potentially radar or ultrasonic sensors175,176. Fusing these data sources in real time makes it possible to build dense 3D representations, often leveraging SLAM (Simultaneous Localization and Mapping) techniques that incorporate factor graphs or graph optimization for scalability164,175,177,178. The efficacy of these pipelines is tempered by high noise levels, partial occlusions, and reflective materials, prompting the need for robust outlier rejection and continuous sensor calibration, which we call “deep perception” capability (see Fig. 5).
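
As a deliberately simplified illustration of multi-sensor fusion with outlier rejection, the sketch below fuses range estimates to a single obstacle from several sensors. It is not a SLAM or factor-graph implementation: it only shows a median-absolute-deviation (MAD) gate followed by inverse-variance weighting. The function name, sensor labels, noise levels, and gate thresholds are all assumptions chosen for the example.

```python
import math
import statistics
from typing import Dict, List, Tuple


def fuse_ranges(
    readings: Dict[str, Tuple[float, float]],
    gate: float = 3.0,
    min_scale_m: float = 0.05,
) -> Tuple[float, float, List[str]]:
    """Fuse (range_m, std_m) readings; return (fused_m, fused_std_m, rejected)."""
    values = [r for r, _ in readings.values()]
    med = statistics.median(values)
    mad = statistics.median([abs(v - med) for v in values])
    # 1.4826 * MAD approximates a standard deviation for Gaussian noise;
    # the floor avoids a zero-width gate when readings nearly coincide.
    scale = max(1.4826 * mad, min_scale_m)

    kept = {k: v for k, v in readings.items() if abs(v[0] - med) <= gate * scale}
    rejected = [k for k in readings if k not in kept]

    # Inverse-variance weighting: more precise sensors count for more.
    weights = {k: 1.0 / (std ** 2) for k, (_, std) in kept.items()}
    total = sum(weights.values())
    fused = sum(weights[k] * kept[k][0] for k in kept) / total
    return fused, math.sqrt(1.0 / total), rejected


# Hypothetical readings: the ultrasonic sensor returns an early echo from dust.
readings = {
    "lidar": (4.02, 0.02),
    "stereo": (4.10, 0.10),
    "radar": (3.95, 0.15),
    "ultrasonic": (1.20, 0.05),
}
fused, fused_std, rejected = fuse_ranges(readings)
print(f"fused range: {fused:.3f} m (std {fused_std:.3f} m), rejected: {rejected}")
```

A full pipeline would apply the same idea inside the estimator itself, for example through robust cost functions on factor-graph residuals, rather than on isolated scalar ranges.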

New methods are needed to further enhance perception to reach the proposed “long and deep perception”. One direction is to seek algorithmic innovations in SLAM and visual odometry pipelines, implementing context-aware filtering and redundant sensor arrays to bolster reliability. Other solutions turn to machine learning architectures, including transformer models and self-supervised networks, which can extrapolate structural cues even if large portions of the scene are obscured114,164. Recent advances in Vision Transformers (ViTs) and multimodal fusion techniques offer promising avenues for enhancing perception pipelines by enabling robust object detection and semantic understanding under challenging conditions like dust or occlusion. For instance, newly poured concrete, with its uniform texture, can break traditional feature-based matching; machine learning-driven approaches can infer likely boundaries or shapes even without distinct key points. Additionally, in-situ sensing networks should be explored as a means of offloading part of the perception burden, for example by installing IoT beacons or drone-mounted LiDAR scanners that provide globally consistent point clouds for the robot to fuse with its local observations179,180. These external sensors also help track fast-moving or occluded objects, including workers wearing sensor-embedded vests102,181,182.

Still, adopting external sensor infrastructure creates its own set of challenges and trade-offs. Installation and maintenance of site-wide LiDAR scanners or beacons can be expensive, and connectivity dropouts may cause the robot to revert to onboard perception in precisely the adverse conditions that prompted external assistance in the first place183,184. A typical scenario is a robot tasked with moving drywall panels through a corridor where dust clouds and irregular lighting impair camera-based SLAM. A global LiDAR network could offer updated environmental maps, enabling more reliable route planning. Yet if part of the network fails or misaligns due to vibrations or weather, the robot might have to rely on noisy onboard measurements to avoid collisions185,186. These contingencies underscore the need for fallback strategies, robust sensor fusion, and adaptive algorithms that gracefully degrade in performance rather than fail abruptly.
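
One way to picture graceful degradation is as an explicit fallback policy that lowers the robot's speed limit as perception quality drops instead of halting at the first fault. The sketch below is a hypothetical policy, not a description of any deployed system: the `PerceptionStatus` fields, the staleness threshold, and the idea of scaling speed by an onboard confidence score are all assumptions introduced for illustration.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class PerceptionStatus:
    external_link_ok: bool     # site-wide LiDAR/beacon network reachable
    external_age_s: float      # seconds since the last external map update
    onboard_confidence: float  # 0..1, hypothetical onboard SLAM quality score


def select_mode(
    status: PerceptionStatus,
    max_speed_mps: float = 1.0,
    stale_after_s: float = 2.0,
    min_confidence: float = 0.2,
) -> Tuple[str, float]:
    """Return (perception mode, speed limit in m/s) for the current status."""
    if status.external_link_ok and status.external_age_s <= stale_after_s:
        # Fresh global map available: fuse it with onboard data, full speed.
        return "external+onboard", max_speed_mps
    if status.onboard_confidence >= min_confidence:
        # Degraded: onboard sensing only, speed scaled by its confidence.
        return "onboard-only", max_speed_mps * status.onboard_confidence
    # Neither source is trustworthy: stop and wait for recovery or an operator.
    return "safe-stop", 0.0


# Drywall-corridor scenario from the text, with assumed numbers.
print(select_mode(PerceptionStatus(True, 0.5, 0.4)))
print(select_mode(PerceptionStatus(False, 30.0, 0.4)))
print(select_mode(PerceptionStatus(False, 30.0, 0.1)))
```

The intermediate "onboard-only" state is what distinguishes graceful degradation from abrupt failure: the robot keeps working at reduced speed while the external network is unavailable.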

All-terrain locomotion and mobility

Humanoid robots on construction sites confront enormous variability in terrain, ranging from soft mud and loose gravel to scattered debris and uneven ground levels. Unlike controlled indoor environments where paths are flat and predictable, construction terrains often shift daily due to excavation or deliveries, and even small obstacles such as wood offcuts or cable spools can jeopardize stability. Furthermore, certain tasks may require ascending scaffolding that sways under load or navigating partial structures with little margin for error. These factors make it difficult to precompute a reliable gait or route; instead, real-time adjustments in friction, slope, and support compliance become essential for safe locomotion. The combined threat of stumbling, tipping, or encountering abrupt changes in surface inclination underscores the critical importance of robust gait planning, active balance control, and adaptive body posture.

Recent approaches (Fig. 6) to tackling uneven terrain rely on predictive footstep planning informed by real-time sensor data, sometimes known as foothold adaptation. By estimating ground properties before each step, via force sensors or exteroceptive measurements, robots can adjust stance width, gait cycle duration, and foot placement to minimize slips and stumbles187,188. Additional techniques incorporate tactile or distributed pressure sensors in the feet, allowing for partial compliance or “give” in each step as the robot encounters irregularities. Similarly, on scaffolding or partial floor segments, advanced balance algorithms using Zero Moment Point (ZMP) or Model Predictive Control (MPC) frameworks can integrate scaffold compliance data and micro-vibrations into the center-of-mass trajectory planning46,47,76. Fall recovery mechanisms, such as reflex stepping or using transient handholds, provide fallback strategies if the robot’s predictions fail in the face of unexpected perturbations. Researchers also explore controlled fall approaches to mitigate damage or injury when all other countermeasures fail.
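As a simplified illustration of the ZMP criterion mentioned above, the sketch below uses the linear inverted pendulum model, in which the ZMP along one horizontal axis is the center-of-mass position minus (height / g) times the center-of-mass acceleration. The rectangular support region and the safety `margin` are assumptions made for brevity; on a compliant scaffold one might plausibly enlarge the margin, but that choice is not taken from the cited studies.

```python
G = 9.81  # gravitational acceleration (m/s^2)


def zmp_lipm(com_pos: float, com_acc: float, com_height: float) -> float:
    """ZMP along one horizontal axis under the linear inverted pendulum model."""
    return com_pos - (com_height / G) * com_acc


def zmp_is_safe(zmp_x: float, zmp_y: float,
                x_range: tuple, y_range: tuple,
                margin: float = 0.02) -> bool:
    """True if the ZMP lies inside a rectangular support region
    shrunk by `margin` metres on every side."""
    (x_lo, x_hi), (y_lo, y_hi) = x_range, y_range
    return (x_lo + margin <= zmp_x <= x_hi - margin
            and y_lo + margin <= zmp_y <= y_hi - margin)
```

A planner could call `zmp_is_safe` on each candidate center-of-mass trajectory sample and reject footsteps for which the check fails.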

In parallel, a key focus is refining gait control algorithms for bipedal robots to be both agile and resilient194,195,196. While ZMP-based control has traditionally anchored stable walking, it can be too conservative for cluttered sites demanding quick evasive maneuvers around mobile equipment. MPC, by contrast, anticipates future states over a short horizon, enabling rapid footstep reconfiguration when terrain or obstacles change suddenly. Hybrid locomotion paradigms blend reactive reflex loops, which handle micro-disturbances at the millisecond timescale, with higher-level predictive models that plan footsteps over seconds, striking a balance between responsiveness and long-term stability. Others propose bipedal-hybrid systems that incorporate wheeled or tracked “feet”, switching between walking in complex vertical areas (e.g., ladders, tight corners) and rolling on flat surfaces for efficiency193,197. However, such designs often complicate transitions between different types of ground and can compromise the anthropomorphic advantages needed to climb or work in human-designed spaces.
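The reactive layer of such a hybrid scheme needs a fast test for when a corrective step is required. One widely used quantity, which is not named in the text above and is introduced here only as an illustrative assumption, is the instantaneous capture point of the linear inverted pendulum model: the point on the ground where the robot would have to step to come to rest. The sketch treats a single horizontal axis, and the foot extents are hypothetical.

```python
import math

G = 9.81  # gravitational acceleration (m/s^2)


def capture_point(com_pos: float, com_vel: float, com_height: float) -> float:
    """Instantaneous capture point along one axis (linear inverted pendulum)."""
    omega = math.sqrt(G / com_height)  # natural frequency of the pendulum
    return com_pos + com_vel / omega


def needs_reflex_step(com_pos: float, com_vel: float, com_height: float,
                      foot_min: float, foot_max: float) -> bool:
    """A reflex step is triggered when the capture point leaves the
    current support extent [foot_min, foot_max]."""
    xi = capture_point(com_pos, com_vel, com_height)
    return not (foot_min <= xi <= foot_max)
```

Because the check is a closed-form expression, it can run in a millisecond-scale loop while a slower predictive planner replans footsteps over a longer horizon.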

Another crucial consideration is energy consumption and safety. Continual balance corrections, high-torque actuators, and heavy onboard computational loads can rapidly deplete batteries, particularly if the robot is also tasked with carrying substantial payloads like tools or building materials115,116,117. Construction sites often lack convenient recharging infrastructure, pushing engineers toward solutions such as swappable battery packs, lightweight composite materials, and energy-aware motion planning. From a safety standpoint, geofencing or high-level supervisory controls can override locomotion routines if the robot drifts into restricted zones or if environmental conditions degrade too severely. Combined with sensor redundancy and anomaly detection in mechanical components, these safety protocols aim to prevent catastrophic falls or collisions. Ultimately, locomotion in construction is a delicate interplay of hardware robustness, real-time motion intelligence, and fail-safe mechanisms, an interplay that must be meticulously designed to handle the unpredictability of large-scale building projects.
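A supervisory override of the kind described above can be sketched as a simple geofence check. The polygon representation of the permitted zone, the battery threshold `min_battery`, and the three command strings are hypothetical; a deployed system would draw the zone from site plans and feed the result into its locomotion controller.

```python
def point_in_polygon(x: float, y: float, polygon: list) -> bool:
    """Ray-casting test: count how many polygon edges a ray
    cast in the +x direction from (x, y) crosses."""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        if (y1 > y) != (y2 > y):  # edge straddles the ray's height
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def supervise(x: float, y: float, allowed_zone: list,
              battery_frac: float, min_battery: float = 0.15) -> str:
    """High-level override: stop outside the geofence,
    head for the dock when the battery is low, otherwise continue."""
    if not point_in_polygon(x, y, allowed_zone):
        return "stop"
    if battery_frac < min_battery:
        return "return_to_dock"
    return "continue"
```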

Human-level manipulation dexterity

Humanoid robots in construction must contend with an extraordinary variety of objects, tools, and materials in environments far more chaotic than standard industrial settings. Unlike the controlled or semi-structured layouts seen in factories, construction sites are rife with partially assembled frameworks, irregularly shaped components, and materials ranging from fine electrical wiring to massive steel beams128,198,199,200. Manipulation tasks thus oscillate between precision (e.g., carefully threading cables through conduits) and brute force (e.g., prying or lifting heavy panels), often within tight clearances. Moreover, dust, debris, and temperature fluctuations can degrade sensor performance and mechanical reliability201,202,203. These factors underscore the acute need for end-effectors and control algorithms specifically tailored to support robust, adaptive manipulation. Critically, many essential tasks, including tying rebar intersections, wire fishing, pipe fitting, applying sealants, and welding, require the kind of dexterous finger control normally attributed to human hands. When performed manually, these detailed tasks impose significant strain on workers’ wrists and fingertips, reinforcing the potential value of anthropomorphic robotic solutions122,204,205.

Recent advances seek to address these challenges by developing modular, reconfigurable end-effectors, which combine anthropomorphic multi-fingered hands for dexterous tasks with interchangeable tool attachments for heavy-duty operations54,206. Such designs might feature interchangeable “fingers” optimized for high-friction grasps or specialized couplings that replicate the clamping power of pliers and wrenches207. In parallel, a growing emphasis on force-torque sensing at the wrist and fingertips enables real-time adjustment of grip force, reducing the risk of accidental material damage or dropped loads208,209. For instance, when tying rebar intersections, the robot may rely on tactile feedback to sense the tension in the wire; likewise, careful cable routing demands sufficiently low grip force to avoid pinching or severing insulation55,57,210. Another dimension is visual servoing for tasks like pipe fitting, where minute alignment errors can cause leaks or structural weaknesses. By integrating depth imaging and force feedback, the robot can iteratively adjust the pipe’s angle until the desired tolerance is achieved.
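The real-time grip adjustment described above can be sketched as a bounded update rule driven by a tactile slip signal. All force values and step sizes are hypothetical placeholders, and the boolean `slip_detected` stands in for whatever slip estimate a real fingertip sensor would provide.

```python
def update_grip_force(force_n: float, slip_detected: bool,
                      f_min: float = 2.0, f_max: float = 15.0,
                      step_up: float = 1.0, relax: float = 0.2) -> float:
    """One control tick of slip-based grip regulation.

    Tighten quickly when slip is sensed, relax slowly otherwise, and
    clamp to [f_min, f_max] so a cable is neither dropped nor pinched.
    """
    if slip_detected:
        force_n += step_up
    else:
        force_n -= relax
    return min(max(force_n, f_min), f_max)
```

The asymmetric step sizes are a deliberate design choice in this sketch: a dropped load is treated as more costly than a few ticks of slightly excessive grip, while the upper clamp protects fragile items such as cable insulation.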

However, most state-of-the-art dexterous hands such as the Allegro, Shadow, and DLR-HIT designs remain mechanically fragile and limited in payload capacity, as they were developed primarily for research in precision manipulation rather than forceful, high-torque tasks (Fig. 7). Their lightweight structures and intricate actuation mechanisms make them unsuitable for repetitive or high-impact operations common in construction environments. This limitation highlights the urgent need for construction-grade end-effectors that blend human-like dexterity with industrial-level durability, possibly through hybrid actuation systems, compliant materials, and protective housing against dust and vibration.

In addition to dexterity, several of the hand designs listed in Table 3 employ actuation strategies that enhance energy efficiency, an increasingly important factor for field operations with limited power access217. For instance, tendon- and cable-driven mechanisms (e.g., DLR Hand II, Schunk SVH) reduce motor count and enable remote actuation, lowering joint-level inertia and overall energy use compared to fully motorized servo configurations. Pneumatic and hybrid systems (e.g., Biomimetic and Shadow Hands) further improve force-to-weight ratios and can store potential energy through compliant materials, allowing energy recycling during grasp release.

Recent research also explores variable stiffness actuators (VSA) and underactuated tendon hands, which dynamically adjust stiffness or share actuators across multiple joints, yielding substantial energy savings for long-duration manipulation tasks. Such designs are particularly relevant in off-grid or partially powered construction environments where humanoid robots must operate for extended periods between battery charges.

On the control side, hybrid position-force strategies and compliance control methods are instrumental for ensuring safe, reliable manipulation in unstructured environments218,219. By blending position-based path planning with real-time force feedback, these algorithms allow the robot to deviate from purely rigid trajectories and “feel out” uncertain tasks. For example, if a drill bit enters a hole at a slightly incorrect angle, the controller can adjust torque and posture to prevent binding. Meanwhile, compliance control can assist with tasks like inserting pre-bent piping into tight wall cavities, accommodating slight geometric variations204. Researchers also experiment with learning-based manipulation, including reinforcement learning or imitation learning frameworks, which can speed the acquisition of new dexterous skills131,220,221. In some prototypes, robots train in physics-rich simulations to master wire handling, rebar tying, or pipe fitting, subsequently refining these skills in real-world conditions.
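A minimal sketch of hybrid position-force control follows, assuming a Cartesian velocity command and a per-axis selection vector that assigns each axis to either position or force regulation. The gains `kp` and `kf` and the drilling example are illustrative assumptions and are not drawn from the cited controllers.

```python
def hybrid_command(pos_err: list, force_err: list, selection: list,
                   kp: float = 2.0, kf: float = 0.01) -> list:
    """Per-axis velocity command for hybrid position-force control.

    selection[i] == 1: axis i tracks position (uses pos_err, metres).
    selection[i] == 0: axis i regulates force (uses force_err, newtons).
    """
    return [s * kp * pe + (1 - s) * kf * fe
            for s, pe, fe in zip(selection, pos_err, force_err)]


# Drilling along z: x and y hold the hole position, z regulates thrust force.
cmd = hybrid_command(pos_err=[0.01, 0.0, 0.02],
                     force_err=[0.0, 0.0, -5.0],
                     selection=[1, 1, 0])
```

Here the z-axis ignores its position error entirely and backs off because the measured thrust exceeds the target, which is the behavior that lets a controller avoid binding a misaligned drill bit.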

Efforts to improve manipulation robustness also hinge on multi-modal sensor integration, fusing visual, depth, force, and tactile data into cohesive situational awareness222,223 (Fig. 8). Systems that exploit state-of-the-art semantic segmentation can distinguish between a partially obstructed rebar segment and background clutter, while model predictive controllers anticipate how contact forces will evolve if the object shifts unexpectedly. By combining such sensor fusion with advanced planning algorithms, future humanoids may reliably perform delicate tasks currently deemed too repetitive or risky for unassisted human labor224,225. Although notable progress has been made, the complexity and unpredictability of construction sites mean that dexterous, foolproof manipulation, particularly for intricate tasks like wiring, plumbing, and rebar tying, remains an open frontier, motivating deeper research into integrated hardware-software architectures, high-fidelity simulation, and adaptive learning mechanisms.

Multi-modal sensing is used to improve robot dexterity. Image source: Informatics, Cobots and Intelligent Construction Lab, University of Florida.

Transferability and generalizability

A major appeal of humanoid robots in construction lies in their promise of flexible redeployment across diverse tasks and project sites without demanding excessive reprogramming. Yet achieving robust transferability remains exceedingly challenging. Construction projects vary widely in their architectural designs, structural materials, and building codes, while even within a single site, day-to-day changes can drastically alter the robot’s operational domain226,227,228. A robot that learns to carry drywall up a scaffold in one setting may struggle in another environment229, where the scaffold sway dynamics differ or the drywall dimensions deviate from prior assumptions. Without effective knowledge transfer, the time and effort required to recalibrate models and retune controllers for each new situation can easily erode the cost and productivity benefits of a humanoid platform230,231,232,233.

To address these issues, researchers are increasingly exploring domain adaptation and meta-learning techniques that help bridge the gap between a “source domain” (e.g., a training facility or simulation) and a “target domain” (a live construction site). Domain adaptation often employs adversarial methods that encourage feature extractors to learn environment-invariant representations, thus minimizing performance degradation when exposed to novel lighting conditions, partial occlusions, or different building materials234,235,236. Meanwhile, meta-learning or few-shot learning paradigms train the robot to rapidly update its skills with minimal on-site data, reducing the overhead of re-labeled demonstrations or manual controller tweaking237,238,239. In practice, this might take the form of a humanoid robot refining its drilling parameters after observing or mimicking a human worker for just a few minutes rather than requiring a full day of reprogramming.

Another promising avenue is the foundation model approach, where large-scale pre-trained networks capture broad sensorimotor priors from massive simulated and real-world datasets. By fine-tuning such a model with a comparatively small domain-specific dataset, robots can adapt to new tasks or layouts swiftly240. As shown in Fig. 9, the proposed foundation model for transferability of robotic manipulation skills integrates perceptual encoding, cross-domain policy adaptation, and low-level control refinement. While the conceptual basis draws from the framework proposed by Fu et al.241, the present model extends it through multimodal learning components and cross-environment generalization mechanisms tailored for construction-oriented manipulation tasks. This approach is bolstered by cloud-based knowledge repositories, wherein multiple deployed robots share training logs and best practices. For example, if a robot successfully masters the insertion of specialized fasteners in a high-rise project, it can upload its policy updates to a central server; a second robot can then download and adapt these updates to a mid-rise residential job242,243. This collective learning accelerates deployment, promotes standardization in skill representation, and distributes best practices widely across geographically dispersed projects.

Foundation model for transferability of robot manipulation skills. Image source: Informatics, Cobots and Intelligent Construction Lab, University of Florida.

Crucially, the success of these techniques hinges on the availability of diverse, high-quality datasets that capture the breadth of construction scenarios, from extreme weather conditions to partially collapsed or partially built structures244. Building such datasets often requires specialized site mapping, sensor instrumentation, and data annotation processes. The field is consequently placing emphasis on simulation-to-reality (sim2real) protocols, wherein robots train extensively in highly realistic virtual environments, including artificially introduced noise and variations, and then transition to real sites with minimal performance drop245,246. Combined with robust sensor fusion and advanced planning methods, these efforts aim to make humanoid robots not only capable of complex tasks in a single setup but also adaptable enough to be redeployed fluidly across the multi-faceted, ever-changing tapestry of modern construction.
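The "artificially introduced noise and variations" in sim2real training are often realized through domain randomization, in which physical and sensing parameters are resampled for every simulated episode. The sketch below shows only the sampling step; the parameter names and ranges are hypothetical and would need to be calibrated against measurements from real sites.

```python
import random

# Hypothetical randomization ranges (lower bound, upper bound).
RANGES = {
    "friction": (0.3, 1.0),            # ground friction coefficient
    "payload_kg": (0.0, 20.0),         # carried load
    "lidar_noise_std_m": (0.0, 0.05),  # range noise, e.g. from dust
    "light_level": (0.1, 1.0),         # relative scene illumination
}


def sample_episode_params(rng: random.Random) -> dict:
    """Draw one set of simulation parameters, uniformly within each range."""
    return {name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()}


rng = random.Random(0)  # fixed seed so training runs are reproducible
episodes = [sample_episode_params(rng) for _ in range(100)]
```

Each dictionary would be passed to the simulator before an episode starts, so that the learned policy cannot overfit to one particular friction value or noise level.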

Continual learning

Continual learning is an essential yet challenging aspect of deploying humanoid robots in construction, where the environment and tasks evolve over time. Unlike static factory floors, a construction site transforms daily as structures rise, materials get relocated, and new subcontractors bring different tools and processes. Moreover, local building codes and safety regulations can shift across jurisdictions or even mid-project, necessitating on-the-fly adjustments to the robot’s operational parameters. A single, monolithic model trained prior to deployment is unlikely to remain viable amid these ongoing changes. Instead, the robot must incrementally update its perception and motion policies to cope with new tasks, unfamiliar site layouts, or revised safety constraints without losing the knowledge it has already acquired. Achieving this “continual learning” paradigm is particularly difficult because naive attempts to retrain a model on recent data often lead to “catastrophic forgetting,” where previously learned competencies degrade in the face of new information247,248.

To address this, robotics researchers are developing safe, on-site learning methods that allow incremental adaptation without risking accidents. One technique involves simulation-driven pretraining, wherein the robot refines a baseline policy in a virtual environment that mirrors real-world variability249,250,251,252. Once deployed, the robot continues to gather field data but updates its model in a “shadow mode”, executing new or partially trained policies only in simulation or at low-risk times, such as after work hours or on specially designated test sections of the site253,254. This practice dramatically reduces the chance of failures that could compromise worker safety or project timelines. Another strategy focuses on transfer reinforcement learning255,256,257, which leverages each job site’s feedback (e.g., scaffolding sway, ground irregularities) as a domain adaptation signal, gradually narrowing the gap between simulated assumptions and reality. Importantly, robust fallback policies are retained for critical tasks, ensuring the robot can revert to proven maneuvers if its newly acquired skills falter.
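The "shadow mode" idea can be sketched as a promotion gate: a candidate policy is scored offline on logged or simulated episodes and replaces the proven baseline only if it is clearly better and never worse in the worst case. The scoring scale, the minimum episode count, and the margin are hypothetical choices for illustration.

```python
from statistics import mean


def choose_policy(candidate_scores: list, baseline_scores: list,
                  min_episodes: int = 20, margin: float = 0.02) -> str:
    """Decide which policy may run on the live site.

    The candidate is promoted only if it has enough shadow-mode episodes,
    beats the baseline's mean score by `margin`, and its worst episode is
    no worse than the baseline's worst. Otherwise the fallback is kept.
    """
    if len(candidate_scores) < min_episodes:
        return "baseline"
    better_on_average = mean(candidate_scores) >= mean(baseline_scores) + margin
    safe_worst_case = min(candidate_scores) >= min(baseline_scores)
    return "candidate" if better_on_average and safe_worst_case else "baseline"
```

The worst-case condition reflects the safety emphasis of the text: a policy that is better on average but occasionally fails badly stays in shadow mode.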

Under the hood, much of continual learning relies on regularization and dynamic scheduling to mitigate catastrophic forgetting while incorporating fresh information. For instance, techniques like L2 regularization (often employed in neural networks) limit how drastically the model’s parameters can deviate from their well-established values258,259. This encourages small, incremental changes aligned with the robot’s past experience rather than radical overhauls that risk erasing learned behaviors. A learning rate scheduler can also be employed: the robot might increase its learning rate only after confirming that certain new data are relevant, then reduce the rate once adaptation is complete260,261. Advanced approaches include elastic weight consolidation (EWC)262, where parameters most critical to existing tasks are protected during retraining, and progressive networks263, which allocate new neural pathways for novel tasks while preserving the old pathways for previously mastered skills. By carefully combining these strategies, humanoid robots can gradually absorb fresh site data and changed project conditions (such as updated building regulations or a sudden switch from steel frames to cross-laminated timber) while continuing to perform established tasks effectively.
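The relationship between plain L2 anchoring and EWC can be made concrete with a short sketch. In the standard EWC formulation, the new-task loss is augmented by a quadratic penalty that weights each parameter's drift from its previous value by an importance estimate (the diagonal of the Fisher information); setting every importance to 1 recovers an L2 penalty toward the old parameters. The flat-list parameter representation and the numerical values are illustrative assumptions.

```python
def ewc_loss(task_loss: float, params: list, anchor_params: list,
             fisher: list, lam: float) -> float:
    """New-task loss plus the EWC penalty

        (lam / 2) * sum_i F_i * (theta_i - theta_i_old)^2

    where F_i estimates how important parameter i was for earlier tasks.
    """
    penalty = sum(f * (p - a) ** 2
                  for f, p, a in zip(fisher, params, anchor_params))
    return task_loss + 0.5 * lam * penalty


# The first parameter mattered for an old skill (large F), the second barely did.
total = ewc_loss(task_loss=1.0,
                 params=[1.0, 2.0], anchor_params=[0.0, 2.0],
                 fisher=[4.0, 0.1], lam=0.5)
```

Moving the first parameter is penalized heavily, whereas the second could change almost freely, which is how important weights are protected during retraining.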

Power and payload constraints

The dual demands of hauling hefty payloads while maintaining extended operational durations create a persistent challenge for humanoid robots in construction. Because bipedal walking inherently consumes more energy than rolling or static platforms115,116,264,265, these robots require high-torque actuators that rapidly deplete battery reserves. At the same time, tasks such as lifting concrete blocks or steel beams push designers toward more powerful motors, yet every additional kilogram in mechanical structure and batteries increases overall mass, a vicious cycle of weight versus capability. For instance, a robot strong enough to move large panels might carry heavier battery packs, cutting into its total operation time and limiting how far it can travel before recharging. This trade-off becomes even more pressing in remote or sprawling worksites where easy access to charging stations is not guaranteed.

To mitigate these constraints, researchers and engineers have explored solutions that range from battery swap stations, where robots dock to quickly exchange depleted power units for fresh ones, to partial or full tethered power configurations that sidestep onboard energy storage. In environments with minimal movement needs, tethered approaches can drastically boost uptime; however, cables may impede a humanoid’s ability to climb scaffolding or squeeze into confined spaces. Meanwhile, large-scale projects might adopt a fleet approach266,267, deploying multiple robots in parallel rotations: as soon as one unit’s battery runs low, it is replaced by a freshly charged counterpart, ensuring near-continuous coverage. This strategy allows site managers to keep tasks moving without long robot downtimes, albeit at the expense of purchasing multiple, often costly, humanoid platforms.
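The fleet rotation strategy lends itself to a back-of-the-envelope sizing rule. Assuming every robot alternates between a fixed working period and a fixed recharge (or battery swap) period, one continuously staffed task slot needs the ceiling of (runtime + recharge) / runtime robots. The durations below are hypothetical and ignore travel time to the charger.

```python
import math


def robots_for_continuous_coverage(runtime_h: float, recharge_h: float,
                                   active_slots: int = 1) -> int:
    """Robots needed so that `active_slots` tasks are always staffed,
    assuming fixed runtime and recharge (or swap) durations."""
    per_slot = math.ceil((runtime_h + recharge_h) / runtime_h)
    return per_slot * active_slots


# 2 h of work per charge and 3 h to recharge: three robots per task slot.
n_recharge = robots_for_continuous_coverage(2.0, 3.0)
# A 6-minute battery swap instead of a full recharge: two robots per slot.
n_swap = robots_for_continuous_coverage(2.0, 0.1)
```

Under these assumptions, shortening the off-duty period through battery swapping reduces the number of costly platforms required for the same coverage.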

Beyond operational planning, emerging innovations in battery technology and alternative power sources hold promise for alleviating the power-versus-payload dilemma. Next-generation lithium-sulfur or solid-state batteries may offer superior energy density and thermal stability, reducing the weight penalty for high-capacity storage268,269,270. Other initiatives investigate fuel cells271,272,273,274, which can yield longer runtimes and faster refueling, or supercapacitors that deliver bursts of high current for transient heavy lifts. Researchers also examine energy-aware motion planning algorithms that optimize each step’s torque usage or exploit momentum to reduce power draw275,276,277. Although no single technology provides a universal fix, a judicious mix of advanced batteries, clever mechanical design, and operational strategies can expand humanoid robots’ effective working window and their utility on active construction sites.

Human–robot interaction

Integrating humanoid robots into construction sites demands more than just advanced hardware and software; it requires seamless interaction with human workers who navigate the same dynamic spaces (Fig. 10). One of the most significant challenges lies in ensuring that robots and humans share a situational awareness of ongoing tasks, potential hazards, and each other’s movement37,137,155,278,279. Because a construction site may involve multiple trades working concurrently, the robot needs real-time updates on who is welding in one area, who is carrying materials in another, and how structural features are changing with each completed phase. Without this shared cognitive map, misunderstandings can arise: a worker might inadvertently walk into the robot’s path, or the robot might begin a task in a zone where critical safety checks have not yet been performed.

Human–robot teaming will be a main form of automation in the future: (a) Fire Fighters and Cassie Blue Walks Through Fire. Image source: Michigan Robotics: Dynamic Legged Locomotion Lab, used with permission; (b) 4NE-1 from NEURA Robotics shown in a conceptual promotional image illustrating potential industrial applications. Image source280,281; (c) Atlas HD handing a tool bag to a human worker. Image source4, used with permission.

Addressing these issues involves developing robust communication channels and intuitive interfaces for collaboration. Robots can integrate with Building Information Modeling (BIM) data and site management software to stay informed of scheduling changes or hazard alerts, while workers might receive visual or audible cues when a robot is approaching200,282,283,284. For instance, wearable devices or tablet interfaces can display the robot’s current status or path, helping humans anticipate movements and coordinate tasks. In this context, human acceptance of humanoid robots depends less on affective or emotional engagement and more on trust, predictability, safety, and perceived usefulness. Studies of technology adoption have shown that frameworks such as the Technology Acceptance Model (TAM3)285 and the Unified Theory of Acceptance and Use of Technology (UTAUT)286 provide better lenses for understanding how construction professionals evaluate robotic systems. Key factors such as performance expectancy, effort expectancy, and facilitating conditions directly influence whether workers view the robot as a reliable teammate or as a disruptive element on-site. Accordingly, ensuring transparent robot behavior, legible motion patterns, and reliable safety boundaries is essential to fostering trust and reducing anxiety during collaboration287,288,289,290. Gesture recognition and voice command systems can also provide user-friendly methods for directing the robot33,60,291, especially when hands-on tools are involved or when rapid, situationally adaptive instructions are needed.

Underlying these interfaces is a framework of safety protocols designed to prevent accidents and collisions. Many humanoid robots incorporate advanced sensor arrays (e.g., LiDAR, depth cameras, ultrasonic sensors) to detect nearby personnel and obstacles, triggering real-time collision avoidance routines if something encroaches on the robot’s workspace. In congested areas, robots may slow or reduce their working radius until humans clear the zone107. Some companies are even experimenting with cognitive load sensing that gauges how intensely a worker is focused on a particular task, adjusting the robot’s behavior accordingly to minimize distraction or surprise. Regulatory bodies and standards organizations, meanwhile, are starting to formulate guidelines on permissible robot interactions, protective equipment, and co-working protocols. Ultimately, as construction humanoids become more autonomous, the depth and sophistication of their human–robot interaction capabilities will determine whether they coexist harmoniously with workers or remain relegated to strictly controlled niches.
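The behavior of slowing down until humans clear the zone can be sketched as a distance-based speed limit, loosely in the spirit of the speed and separation monitoring concept used for collaborative robots. The stop distance, slow-down distance, and maximum speed are hypothetical values, not thresholds taken from any standard or from the cited work.

```python
def allowed_speed(distance_m: float, stop_dist: float = 0.5,
                  slow_dist: float = 2.0, v_max: float = 1.2) -> float:
    """Maximum permitted robot speed (m/s) given the distance
    to the nearest detected person.

    Full stop inside `stop_dist`, full speed beyond `slow_dist`,
    and a linear ramp in between.
    """
    if distance_m <= stop_dist:
        return 0.0
    if distance_m >= slow_dist:
        return v_max
    return v_max * (distance_m - stop_dist) / (slow_dist - stop_dist)
```

A linear ramp is used here only for simplicity; its main benefit is that the robot's deceleration is gradual and therefore predictable to nearby workers.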

Beyond technology: socioeconomic and ethical implications

Understanding the non-technical aspects of adopting humanoid robots in construction is as crucial as the technical perspectives to ensure successful integration. Beyond engineering challenges, the broader socioeconomic and ethical dimensions shape the feasibility, acceptance, and long-term sustainability of these technologies within the industry. This section considers several key aspects: the workforce implications, where we explore potential job displacement versus upskilling opportunities; safety issues, weighing the reduction of human risk against new risks introduced by robots; economic feasibility, analyzing the balance between initial investments and anticipated gains; public perception and acceptance, which determine how these technologies are received and integrated by workers and communities; and ethical frameworks and policy development, crucial for addressing liability, privacy, and data security concerns. Together, these considerations offer a holistic view of the complex interplay between technological advancements and their societal impacts in the construction sector.

Workforce implications

Humanoid robots in construction promise both increased productivity and a safer working environment, but they also raise concerns about how existing jobs might be affected. A major worry is that widespread automation could lead to job displacement, particularly for tasks requiring repetitive or physically taxing manual labor292,293,294. Given the global shortage of skilled construction labor, some industry stakeholders argue that robots could fill the gap rather than displace existing workers. Examples from industries like automotive manufacturing demonstrate how automation can coexist with human labor by creating new roles in robot operation, maintenance, and system integration, highlighting opportunities for upskilling rather than displacement. Nonetheless, apprehension persists: Will introducing robots for tasks like material handling, assembly, or site inspection diminish opportunities for entry-level laborers? And how will experienced tradespeople adjust if robots begin taking on highly specialized activities? These questions underscore the delicate balance between tapping robotics’ potential and safeguarding the livelihoods of the human workforce.

To address such concerns, many experts point to historical precedents in other industries295,296,297,298. The automotive sector, for example, saw widespread fear of worker displacement when assembly-line robots became commonplace299,300. Yet automation ultimately catalyzed the creation of new roles such as robot programmers, maintenance technicians, and systems integrators, alongside upskilling or reskilling initiatives aimed at the existing workforce128,301. Construction can follow suit by framing robots as enablers that augment human capabilities rather than pure labor replacements. This might include equipping experienced tradespeople with the knowledge and certifications needed to supervise robotic platforms, troubleshoot errors, or calibrate specialized tools. Government agencies and trade unions can collaborate to develop short-course training, online modules, or apprenticeship-style programs where workers learn basic robotics literacy, safety protocols, and multi-robot fleet management. Such initiatives would not only preserve jobs but potentially elevate the sector’s skill floor, fostering a more technologically adept labor force.

In practical terms, construction workers might gain new pathways to transition into “robot managers,” monitoring multiple robots and intervening when anomalies occur. Some might become certified robotic tool specialists, advising on the best end-effectors for given tasks. Vocational institutions could offer micro-credentials recognized by both construction firms and robotics manufacturers, ensuring standardized skill sets across regions. Over time, a carefully orchestrated approach based on stakeholder dialogue, well-funded training programs, and accessible upskilling resources can help shift the narrative from job loss to workforce enhancement. By embracing robots as collaborative partners, the industry can expand its talent pipeline, retain seasoned experts who adopt new technical competencies, and draw younger workers eager to work with cutting-edge technologies.

Safety

Humanoid robots have the potential to dramatically reduce the most dangerous aspects of construction labor. Whether it is lifting heavy materials, operating in cramped or poorly ventilated conditions, or scaling scaffolds at precarious heights, robots can take on tasks associated with high injury rates and long-term health problems. For instance, back injuries from continuous heavy lifting are a prominent issue in construction302,303, and robotic assistance could alleviate such chronic strain. Similarly, in demolition or hazardous material removal (e.g., asbestos), sending a robot in place of a human significantly lowers the risk of exposure. By leveraging advanced sensors, real-time feedback loops, and precise motor control, these machines can perform intricate tasks without succumbing to fatigue or momentary lapses in attention, i.e., factors that account for many on-site accidents.

However, the introduction of humanoid robots also brings novel risks. Collisions are a prime concern, particularly in congested sites where visibility can be poor and workers are frequently crossing paths96,105,108. Software vulnerabilities, ranging from bugs that cause erratic movements to hacking attempts that disrupt coordination, pose another layer of risk. If a critical control algorithm malfunctions during a lift or scaffold climb, the consequences could be catastrophic for both the robot and surrounding personnel. Thus, while the robotic hardware might limit human exposure to direct hazards, its misuse or malfunction can create new dangers. Confronting these realities calls for robust safety standards tailored specifically to humanoid forms operating in dynamic, unstructured settings.

One path forward lies in adapting and extending existing industrial safety certifications (e.g., ISO 10218304, ANSI/RIA standards305) to the unique context of construction humanoids. Unlike stationary robotic arms in manufacturing cells, bipedal robots have to navigate multi-level worksites, requiring continuous collision avoidance, fallback procedures, and foolproof emergency stop mechanisms138,139,306. Standards organizations, in tandem with manufacturers and government regulators, could develop performance benchmarks that measure a robot’s stability under unexpected impacts, sensor redundancy in harsh climates, and resilience against cyber intrusions. Additionally, well-documented safety protocols should be as integral to a project as standard engineering specifications to establish how humans and robots share corridors, signal who has right-of-way, and under what conditions a robot must yield. Implementing such guidelines alongside worker education, thorough risk assessments, and frequent on-site drills can ensure that the safety advantages of humanoid robots are realized without introducing unmanageable new threats.

Economic feasibility

The economic feasibility of deploying humanoid robots in construction hinges on balancing the high initial capital expenditure (sensors, actuators, and advanced control hardware) against prospective labor savings and productivity gains over time. Even before considering large-scale production, individual components can be costly: high-torque motors and joint mechanisms must be durable enough for rugged environments, while LiDAR and depth cameras often dominate sensor budgets307. For smaller contractors working on low-margin projects, such upfront costs can be prohibitive, reinforcing the perception that robotics adoption may only be viable for major construction firms. Moreover, ongoing variable costs like battery replacements, software updates, and additional insurance premiums introduce complexities into long-term financial planning.

In response, industry stakeholders should undertake cost–benefit analyses that weigh capital investment and maintenance expenses against anticipated operational savings. For large-scale projects with substantial labor shortages or extensive repetitive tasks, a well-deployed humanoid robot fleet can cut down on delays, reduce rework, and improve overall site efficiency, thereby recouping the initial purchase costs more quickly. Conversely, in smaller construction jobs with fewer repetitive tasks, the return on investment may be less straightforward, making robotic adoption a tougher sell. In some regions, public funding or R&D subsidies are beginning to play a role; government grants or innovation tax credits can offset part of the financial burden, especially when pilot programs yield safety or environmental benefits. Over time, as the scale of production ramps up and cost-reduction strategies (e.g., standardized hardware modules) become more widespread, the economic threshold for integrating humanoid robotics will likely lower further.
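A cost–benefit analysis of this kind can start from two standard quantities, the simple payback period and the net present value (NPV). The sketch below is illustrative: the function names and parameters are assumptions introduced here, and it deliberately ignores taxes, subsidies, and residual value.

```python
def payback_years(capex: float, annual_savings: float, annual_opex: float) -> float:
    """Years needed for net annual savings to repay the purchase cost."""
    net = annual_savings - annual_opex
    if net <= 0:
        return float("inf")  # the investment never pays back
    return capex / net


def npv(capex: float, annual_savings: float, annual_opex: float,
        years: int, discount_rate: float) -> float:
    """Net present value of constant net savings over a fixed horizon."""
    net = annual_savings - annual_opex
    discounted = sum(net / (1 + discount_rate) ** t for t in range(1, years + 1))
    return discounted - capex
```

A positive NPV over the planning horizon suggests that the fleet recoups its cost under the stated assumptions. Grants or tax credits of the kind mentioned above could be modeled by reducing `capex`.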

Another angle to consider is the flexibility and reusability of a humanoid robot across multiple sites. An expensive platform might be justified if it can be transported between projects and configured for a range of tasks, thereby amortizing costs over numerous job sites. Contractors might also pursue leasing or sharing models, where robots are rented during peak demand periods and returned afterward, offloading maintenance responsibilities to the provider. Ultimately, robust financial modeling requires a nuanced look at project scale, location, labor availability, and specific construction tasks. By assembling reliable data from existing pilot deployments and early adopters, the industry can refine its ROI projections and move toward more data-driven decision-making on robotic investments.
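The choice between owning and leasing can be framed as a break-even utilization: the number of working days per year above which ownership becomes cheaper than renting. The sketch below assumes straight-line amortization and a flat daily lease rate. Both are simplifications, and all names are hypothetical.

```python
def breakeven_days_per_year(capex: float, service_life_years: float,
                            annual_maintenance: float,
                            lease_rate_per_day: float) -> float:
    """Days of use per year at which owning and leasing cost the same."""
    if service_life_years <= 0 or lease_rate_per_day <= 0:
        raise ValueError("service life and lease rate must be positive")
    # Straight-line amortization of the purchase price plus yearly upkeep.
    annual_ownership_cost = capex / service_life_years + annual_maintenance
    return annual_ownership_cost / lease_rate_per_day
```

A contractor expecting fewer working days than this threshold would, under these assumptions, be better served by a leasing or sharing model.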

Public perception and acceptance

The proliferation of robots on construction sites invariably triggers apprehension about job security and broader societal impacts. Much like the debates surrounding self-driving cars and warehouse robotics308,309,310, concerns arise that humanoid robots might replace human workers, eroding the social fabric of manual trades. This fear is compounded by the fact that humanoid form factors, by design, evoke comparisons to human capabilities, potentially heightening anxiety around displacement. Beyond job fears, there are emotional and psychological barriers: encountering a life-sized, moving machine in a hard hat can be off-putting, especially for workers unaccustomed to robot collaborators.

Addressing these concerns demands community engagement and transparent communication. One effective strategy involves demonstration projects that showcase not only the robot’s capabilities but also how it collaborates safely and efficiently with human teams. Public open houses at large construction sites, livestreamed tests on social media, and candid Q&A sessions can demystify the technology, offering workers and the local community a chance to see the robots in action. Lessons from the self-driving car industry underscore the importance of staged rollouts, starting with lower-risk tasks and gradually increasing autonomy as public trust builds. Surveys and case studies indicate that acceptance grows when people can observe tangible benefits, such as fewer workplace injuries and quicker project completions109,311,312, and can confirm that robots assist or complement human labor rather than replace it.

From an implementation standpoint, forging partnerships with trade unions or vocational schools can further legitimize robotics in the construction realm. Early involvement of key stakeholders helps articulate realistic job augmentation scenarios and fosters credibility in how these technologies are deployed. As the novelty wears off and robots reliably perform tasks that are either ordinary or hazardous, perceptions typically shift from fear to pragmatism, with humanoid machines viewed as advanced tools that, like any other equipment, require proper training, oversight, and respect for operational limits.

Ethical frameworks & policy development

Alongside economic and social considerations, the ethics of humanoid robotics in construction come to the fore in scenarios involving accidents or data privacy287,313. Liability represents a particularly thorny issue. If a robot malfunctions or collides with infrastructure, who bears responsibility: its operator, the manufacturer, the site manager, or the software developer? The legal landscape grows even more complex if software errors or hacked systems cause project delays or safety incidents. Moreover, many humanoid robots rely on extensive sensing capabilities (e.g., cameras, microphones, thermal imagers) that can inadvertently capture private conversations or sensitive site information314,315. This surveillance potential demands stringent data management and consent protocols, lest it infringe on worker privacy or corporate intellectual property.

To navigate such complexities, policymakers and industry bodies should call for formalized standards akin to established industrial robotics safety guidelines but adapted for mobile, humanoid systems in unstructured environments. This effort should include drafting procedures for verifying real-time sensor reliability, implementing secure communication channels to mitigate hacking risks, and setting performance benchmarks for safe, autonomous decision-making. Jurisdictions may also incorporate “ethical audits” into project approval processes, requiring documentation of how the robot’s data logs are stored, how collision avoidance has been tested, and who is accountable for system upgrades. Additionally, localized variations in labor laws and safety regulations mean that solutions cannot be simply copy-pasted from one market to another; the policy framework must be malleable enough to account for cultural, legal, and infrastructural differences worldwide.

Looking ahead, strategic policy measures could expedite responsible adoption by offering liability protections when robots are used within strict operational boundaries or by granting tax incentives for companies that invest in robust safety systems. Governments might also introduce licensing schemes to certify that both robots and their operators meet core competency standards, analogous to drivers and vehicles in the automotive sector104,316. Through a combination of clear guidelines, enforceable regulations, and cross-industry collaboration, ethical frameworks can foster an environment in which humanoid robots bring transformative benefits to construction sites without undermining worker rights, public safety, or personal privacy.

Proposed future research directions

Building on the preceding discussion of the challenges facing humanoid robots in construction, we propose a three-stage roadmap for the research community and the industry to realize a sustainable humanoid robot-centered ecosystem in the next decade (Fig. 11).

Fig. 11: The proposed 10-year roadmap for construction humanoid robots.

Short-term milestone (< 3 years)

In the immediate future, researchers and industry stakeholders should focus on refining key perception and locomotion components that underpin humanoid robot performance in construction. Specifically, enhanced SLAM and sensor fusion methods need to be adapted for dynamic, cluttered environments, where partially built structures and moving equipment can foil conventional algorithms. Achieving “long and deep perception” requires integrating predictive modeling with real-time sensor fusion to anticipate dynamic changes in site layouts caused by shifting materials or machinery movement throughout the workday. Additionally, more resilient outlier rejection methods and real-time calibration are critical, as construction sites generate high levels of dust, glare, and occlusions.
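As one simple illustration of outlier rejection, spurious range readings (for example, returns from airborne dust) can be filtered with a median-based rule before they reach the SLAM pipeline. The sketch below uses the median absolute deviation (MAD). This is a generic robust-statistics technique chosen here for illustration and is not a method proposed in the cited literature. The function name and the threshold `k` are assumptions.

```python
from statistics import median


def reject_outliers(ranges: list[float], k: float = 3.0) -> list[float]:
    """Keep readings within k robust standard deviations of the median."""
    if not ranges:
        return []
    med = median(ranges)
    mad = median(abs(r - med) for r in ranges)
    if mad == 0:
        # Spread cannot be estimated; return the readings unchanged.
        return list(ranges)
    # 1.4826 scales the MAD to a standard deviation for Gaussian noise.
    threshold = k * 1.4826 * mad
    return [r for r in ranges if abs(r - med) <= threshold]
```

Because the median and the MAD are barely affected by a few extreme values, a single dust-induced return does not inflate the threshold the way it would with a mean and standard deviation.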

On the locomotion front, stable walking on uneven terrain remains a top priority. Even slight miscalculations on mud, loose gravel, or debris can result in falls that jeopardize both the robot and surrounding personnel. Research efforts should thus focus on advanced control schemes such as model predictive control for footstep planning and reflex loops for quick disturbance rejection that adapt to changing friction conditions in real time. Field-testing these locomotion solutions through pilot programs or smaller-scale testbeds will provide valuable feedback on their robustness. Collaborations with sensor manufacturers could accelerate innovation by integrating specialized LiDARs or depth cameras designed to cope with construction dust and harsh lighting. Likewise, partnering with AI laboratories can help expedite data-driven perception pipelines, generating algorithms optimized for real-time SLAM and multi-modal sensor fusion specifically tuned to the demands of large-scale building sites.
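A full model predictive footstep planner is beyond the scope of a short example, but the idea behind a reflex loop can be illustrated with the instantaneous capture point of the linear inverted pendulum model, a much simpler and widely used balance criterion. The sketch below is one-dimensional and assumes a constant center-of-mass height. The function names and the support-interval parameters are hypothetical.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2


def capture_point(com_pos_m: float, com_vel_mps: float, com_height_m: float) -> float:
    """Point on the ground where the robot must step to come to rest."""
    omega = math.sqrt(G / com_height_m)  # natural frequency of the pendulum
    return com_pos_m + com_vel_mps / omega


def needs_recovery_step(com_pos_m: float, com_vel_mps: float, com_height_m: float,
                        support_min_m: float, support_max_m: float) -> bool:
    """True if the capture point has left the current support interval."""
    cp = capture_point(com_pos_m, com_vel_mps, com_height_m)
    return not (support_min_m <= cp <= support_max_m)
```

If a push or a slip on loose gravel moves the capture point outside the foot's support interval, the reflex loop would trigger a recovery step toward that point. Adapting to friction changes would require additional constraints that this sketch omits.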

Short-term milestones could also include systematic benchmarking of prototypes in controlled but realistic mock-up sites. These environments would mimic a subset of construction hazards, such as partial scaffolding, busy foot traffic, and temporary obstacles, allowing researchers to evaluate whether their perception and locomotion modules maintain high safety and reliability metrics. Ultimately, these smaller-scale tests will inform the broader community of best practices for sensor placement, fall recovery strategies, and specialized hardware configurations needed to achieve baseline stability and perception in the field.

Mid-term milestone (3–5 years)

Over the mid-term horizon, the primary goal expands to improving manipulation and dexterity, enabling humanoid robots to tackle a broader range of construction tasks. While basic manipulation might be demonstrated in the short term, advanced capabilities, such as tying rebar intersections, fitting pipes with sealants, or installing delicate electrical components, require more sophisticated end-effectors and force-control algorithms. Designs that incorporate tactile sensors and interchangeable tool interfaces can help robots switch between precision tasks and heavier operations without extensive reconfiguration. By leveraging real-time learning methods, the robot can adapt its grip strength, insertion angle, and motion profile based on feedback from the environment, reducing the likelihood of damage to materials or tools.
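The feedback-driven grip adaptation described above can be illustrated with a very small rule: tighten when the tactile sensor reports slip, relax gradually otherwise, and never leave a safe force range. This is a hand-written heuristic rather than a learned controller, and the force limits and gain values are illustrative assumptions.

```python
def update_grip_force(force_n: float, slip_detected: bool,
                      min_n: float = 2.0, max_n: float = 40.0,
                      step_up: float = 1.25, relax: float = 0.98) -> float:
    """Return the next grip force command in newtons."""
    if slip_detected:
        force_n *= step_up  # tighten quickly to stop the object slipping
    else:
        force_n *= relax    # ease off slowly to avoid crushing the part
    # Clamp to the range considered safe for the tool and the material.
    return max(min_n, min(max_n, force_n))
```

Called once per control cycle, the rule settles near the smallest force that prevents slip. A learning-based method would replace the fixed gains with values adapted from experience.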

During this phase, large-scale data collection from the first 3 years of testing will be critical. Robust datasets capturing varied construction scenarios (e.g., different materials, weather conditions, and site layouts) can inform more accurate AI-driven manipulation strategies. These data resources will aid in building “manipulation intelligence,” where the robot’s end-effectors recognize subtle tactile cues or partial occlusions, automatically adjusting grip force or trajectory mid-task. Prototypes that combine advanced computer vision (e.g., semantic segmentation of scaffolding elements) with responsive force control can serve as a benchmark for dexterous humanoid operation in the construction domain.

Additionally, the integration of standardized tool interfaces such as quick-change systems is a key research focus. By developing universal connectors, the robot can swap out end-effectors for specialized tasks (e.g., drilling, welding, or fastening), thus broadening its functional range. This modularity reduces the downtime associated with manual adjustments and lessens the need for multiple specialized robots on a single site. Pilot-scale projects might explore multi-robot teams, where one robot loads the correct tool attachment while another performs the task, showcasing coordinated dexterity and paving the way for more efficient on-site workflows.
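On the software side, a universal connector implies a common interface that every end-effector implements, so that the robot's task logic does not change when the tool does. The sketch below shows one way this might look. The `EndEffector` protocol, the `Drill` class, and the `ToolChanger` class are hypothetical names introduced for illustration and do not describe any existing quick-change system.

```python
from typing import Protocol


class EndEffector(Protocol):
    """Interface that every interchangeable tool is assumed to provide."""
    name: str

    def run(self, target: str) -> str: ...


class Drill:
    name = "drill"

    def run(self, target: str) -> str:
        return f"drilled {target}"


class ToolChanger:
    """Keeps a rack of registered tools and tracks the attached one."""

    def __init__(self) -> None:
        self._rack: dict[str, EndEffector] = {}
        self.attached: EndEffector | None = None

    def register(self, tool: EndEffector) -> None:
        self._rack[tool.name] = tool

    def attach(self, name: str) -> None:
        if name not in self._rack:
            raise KeyError(f"no tool named {name!r} in the rack")
        self.attached = self._rack[name]

    def run(self, target: str) -> str:
        if self.attached is None:
            raise RuntimeError("no tool attached")
        return self.attached.run(target)
```

Adding a welding or fastening tool would then require only a new class with the same two members, leaving the task-level code untouched.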

Long-term milestone (5–10 years)

Looking 5–10 years into the future, the aspiration is to establish a methodology for generalizability that allows humanoid robots to seamlessly adapt to new construction sites and tasks. Current systems often require extensive reprogramming or retraining whenever the robot is moved from one context to another. By contrast, scalable AI models, possibly leveraging deep reinforcement learning, meta-learning, and advanced domain adaptation techniques, could enable a true “plug-and-play” mode of operation. In this scenario, a robot arriving at an unfamiliar site would download relevant structural data, calibrate its perception pipelines to local lighting and dust conditions, and begin work with minimal human intervention. This level of flexibility would not only cut deployment costs but also unleash the full potential of humanoids as generalist machines capable of bridging the myriad tasks encountered in construction.

Another significant thrust at this stage involves deepening human–robot interaction (HRI) to make collaboration between crews and robots more intuitive and fluid. Developments might include wearable interfaces that provide real-time feedback on the robot’s intentions or gestures, enabling workers to coordinate tasks without halting their own progress. Advanced natural language processing could allow voice commands and dialogues, supporting on-the-fly revisions to work orders or quick clarifications about site conditions. Critically, as robots progress toward full or partial autonomy, policies and practices must evolve to ensure smooth role delineation and safe co-working arrangements. Future cross-industry AI models may share knowledge from other automation sectors (logistics, healthcare, manufacturing), thus enriching construction robots’ skill sets and accelerating iterative improvements.

Continuous efforts