Abstract

To improve the quality and computational efficiency of image segmentation, and to overcome the limitations of the traditional K-means algorithm—such as sensitivity to initial cluster centers and susceptibility to local optima—this study proposes an Improved Dung Beetle Optimization (IDBO) algorithm and its application to K-means-based image segmentation. First, Latin Hypercube Sampling (LHS) is employed to initialize the population, enhancing diversity and uniformity in the search space and preventing premature convergence in early iterations. Second, a hybrid position updating strategy, combining a nonlinear decision factor with a competition mechanism, dynamically balances global exploration and local exploitation, improving adaptability across different search stages. Third, the Cauchy inverse cumulative distribution operator and tangent flight operator are integrated to perform dynamic perturbation and fine-tuning on optimal individuals, strengthening local exploitation and enhancing the ability to escape local optima. Comprehensive experiments on standard benchmark functions demonstrate that IDBO outperforms the original DBO and other comparative algorithms in convergence speed, optimization accuracy, and stability. The algorithm is further applied to optimize K-means clustering for image segmentation. Quantitative metrics, including Mean Squared Error (MSE) and Peak Signal-to-Noise Ratio (PSNR), confirm that IDBO-based segmentation achieves higher accuracy, better edge preservation, and improved texture fidelity. Additionally, an ablation study isolates the contributions of each enhancement strategy, demonstrating their complementary effects and validating the superiority of the integrated IDBO framework. These results highlight the potential of combining intelligent optimization and clustering algorithms to develop adaptive, high-performance image segmentation techniques.


Introduction

Image segmentation is one of the core research areas in the field of computer vision and image processing1,2. Its goal is to partition an image into several non-overlapping and internally homogeneous regions based on the similarity of pixel characteristics such as grayscale, spatial texture, and geometric shape3,4. Within each region, pixels share similar attributes, while differences between regions are maximized. High-quality image segmentation not only enhances the accuracy of subsequent tasks such as image recognition and analysis but also has extensive applications in medical image analysis, object detection, intelligent transportation, and remote sensing image processing5,6.

Among the many image segmentation methods, the K-means clustering algorithm7,8 is widely used due to its simplicity, computational efficiency, and ease of implementation. The algorithm segments an image by minimizing the sum of squared distances between pixels and their respective cluster centers9. However, traditional K-means has notable limitations10: its performance is highly sensitive to the selection of initial cluster centers, and different initializations may yield substantially different clustering outcomes. As a result, K-means is prone to converging to local optima, which can negatively impact segmentation accuracy and stability.

To overcome these drawbacks, researchers have integrated K-means with intelligent optimization algorithms to enhance the selection of initial cluster centers and improve global search capabilities11. For example, Das et al. 12 combined the Levy–Cauchy Arithmetic Optimization Algorithm (LCAOA) with K-means to improve initialization and segmentation accuracy; however, its performance remained limited when processing noisy images in different color spaces. Song et al. 13 introduced Gaussian functions and adaptive scale operators to improve algorithm robustness, achieving superior performance on noisy images, though results were less satisfactory on low-contrast or simple-texture images. Liang et al. 14 pointed out that clustering-based segmentation methods still depend heavily on initial cluster centers and are unsuitable for images with simple backgrounds or minimal color variation.

To further improve global optimization performance, Xue et al. 15 proposed the Dung Beetle Optimization (DBO) algorithm, which mimics the dung beetle’s behaviors of foraging, rolling, and nesting to achieve high search efficiency and fast convergence. Nevertheless, the original DBO still suffers from premature convergence and limited optimization accuracy. To address these issues, several researchers have proposed various improvements. For instance, some studies introduced a nonlinear weighted golden sine strategy into the rolling behavior to improve convergence speed and precision16, while others integrated the follower position updating mechanism from the Sparrow Search Algorithm into DBO, enhancing global optimization performance and successfully applying the improved algorithm to automotive collision simulations17. Mehmood et al.18 enhanced the Aquila Optimizer by incorporating multiple chaotic maps into its exploitation phase, demonstrating superior performance on benchmark functions and effective parameter estimation for electro-hydraulic systems, while highlighting improved robustness under noise and potential sensitivity to parameter tuning. Mehmood et al.18 proposed multiple chaotic variants of the Young’s double slit experiment (YDSE) optimizer by integrating ten chaotic maps through different mechanisms, demonstrating that the Gauss-map-based variant significantly outperforms several state-of-the-art optimizers on benchmark functions and in modeling electrically stimulated muscle systems.

In summary, existing improvements have enhanced DBO’s stability and applicability to a certain extent; however, challenges remain in solving high-dimensional and complex optimization problems, where convergence accuracy and speed are still insufficient, and the risk of local optima persists. To address these limitations, this paper proposes an Improved Dung Beetle Optimization (IDBO) algorithm, aiming to further strengthen both global exploration and local exploitation capabilities. The proposed IDBO is then applied to optimize parameters of the K-means algorithm for image segmentation. Comprehensive simulation experiments on 12 benchmark test functions, the Wilcoxon Rank-Sum Test, and the CEC2021 benchmark suite verify the proposed algorithm’s superior performance in terms of convergence speed, optimization accuracy, and robustness. Furthermore, the IDBO-optimized K-means algorithm is applied to various image segmentation tasks, demonstrating its effectiveness and superiority in improving segmentation accuracy and stability. This research provides a new approach for enhancing the performance of traditional clustering algorithms and establishes a theoretical and practical foundation for applying intelligent optimization techniques in image segmentation and other computer vision applications.

Theoretical foundation

Principle of K-means algorithm

When K-means is applied to image segmentation, the target image is first abstracted into a set of data sample points represented as d-dimensional vectors20, denoted \(M=\left( {{M_1},{M_2}, \cdots ,{M_d}} \right)\), and K initial cluster centers, \(C=\left( {{C_1},{C_2}, \cdots ,{C_K}} \right)\), are selected from this set. Each \({M_i}(i=1,2, \cdots ,d)\) is then assigned to one of the K clusters according to the minimum Euclidean distance, and the objective function is denoted as 21:

The smaller D is, the higher the similarity of the data within each cluster and the better the clustering. The cluster center update in K-means is expressed as 22:

where \({n_i}\) is the number of data points in the i-th cluster. The iteration continues until the K cluster centers no longer change, change by less than a small tolerance, or the stopping condition is met, at which point the clustering result is obtained.
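The assignment, objective, and center update described above can be sketched in a few lines of Python. This is a minimal illustration that assumes the standard K-means formulation (sum of squared Euclidean distances for D, and the cluster mean for the center update); the function names `assign`, `objective`, and `update_centers` are hypothetical and not taken from the paper.

```python
def sq_dist(p, q):
    """Squared Euclidean distance between two points."""
    return sum((a - b) ** 2 for a, b in zip(p, q))

def assign(points, centers):
    """Label each point with the index of its nearest center."""
    return [min(range(len(centers)), key=lambda j: sq_dist(p, centers[j]))
            for p in points]

def objective(points, centers, labels):
    """Objective D: total squared distance of points to their assigned center."""
    return sum(sq_dist(p, centers[l]) for p, l in zip(points, labels))

def update_centers(points, centers, labels):
    """Replace each center by the mean of its n_i assigned points."""
    new_centers = []
    for j, old in enumerate(centers):
        members = [p for p, l in zip(points, labels) if l == j]
        if not members:                 # assumption: an empty cluster keeps its center
            new_centers.append(list(old))
            continue
        n_j = len(members)
        new_centers.append([sum(col) / n_j for col in zip(*members)])
    return new_centers

points = [(0.0, 0.0), (0.0, 2.0), (10.0, 0.0), (10.0, 2.0)]
centers = [(1.0, 1.0), (9.0, 1.0)]
labels = assign(points, centers)
d_before = objective(points, centers, labels)
centers = update_centers(points, centers, labels)
d_after = objective(points, centers, labels)
```

In this toy example one update step moves the centers to the cluster means and lowers D, which is the behavior the stopping rule relies on.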

Dung beetle optimization algorithm

Ball rolling and dancing behavior

The Dung Beetle Optimization (DBO) algorithm was inspired by the natural behaviors of dung beetles, including ball-rolling, breeding, foraging, and stealing, and was designed with four distinct update rules to guide the search for the global optimum23.

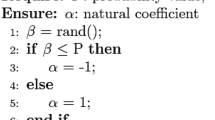

During the ball-rolling process, dung beetles rely on celestial cues such as the sun’s position or wind direction to maintain a straight-line trajectory while rolling their dung balls. To simulate this rolling behavior, dung beetles in the algorithm move across the entire search space along a specific direction. In this process, the position of the rolling beetle is continuously updated, and the mathematical model describing the rolling behavior can be expressed as 24:

where t denotes the current iteration number, \({x_i}(t)\) denotes the position of the i-th dung beetle at the t-th iteration, \(k \in \left( {0,0.2} \right]\) is a constant deflection coefficient, b is a constant in \(\left( {0,1} \right)\), and a is a natural coefficient that takes the value 1 or -1: given a probability value \(\lambda\), a is 1 if \(\lambda <rand(1)\) and -1 otherwise. \({X^w}\) denotes the global worst position, and \(\Delta x\) simulates the variation of light intensity.

When a dung beetle encounters an obstacle that prevents it from moving forward, it needs to reorient itself by dancing to a new route. The tangent function is introduced to simulate the dung beetle determining a new rolling direction by dancing. The dung beetle dancing behavior position is updated as follows:

where \(\theta \in [0,\pi ]\) denotes the deflection angle. When \(\theta \in \left\{ {0,\pi /2,\pi } \right\}\), \(\tan \theta\) is either zero or undefined, so the position of the dung beetle is not updated.
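The rolling and dancing updates can be sketched as follows. The sketch assumes the update rules of the original DBO formulation, namely \(x_i(t+1)=x_i(t)+a\,k\,x_i(t-1)+b\,\Delta x\) with \(\Delta x=|x_i(t)-X^w|\) for rolling and \(x_i(t+1)=x_i(t)+\tan \theta \,|x_i(t)-x_i(t-1)|\) for dancing. The default values of `k`, `b`, and `lam` are illustrative assumptions, and the function names are hypothetical.

```python
import math
import random

def roll(x, x_prev, x_worst, k=0.1, b=0.3, lam=0.1, rng=random):
    """Ball-rolling step: x(t+1) = x(t) + a*k*x(t-1) + b*|x(t) - X^w|."""
    a = 1.0 if lam < rng.random() else -1.0   # natural coefficient, +1 or -1
    return [xi + a * k * xp + b * abs(xi - xw)
            for xi, xp, xw in zip(x, x_prev, x_worst)]

def dance(x, x_prev, theta):
    """Dancing step: x(t+1) = x(t) + tan(theta)*|x(t) - x(t-1)|."""
    singular = (0.0, math.pi / 2.0, math.pi)
    if any(math.isclose(theta, s, abs_tol=1e-12) for s in singular):
        return list(x)                        # position is left unchanged
    return [xi + math.tan(theta) * abs(xi - xp) for xi, xp in zip(x, x_prev)]

rolled = roll([1.0, -2.0], [0.5, -1.5], [4.0, 4.0], rng=random.Random(0))
turned = dance([1.0, 2.0], [0.0, 0.0], math.pi / 4.0)
```

Passing a seeded `random.Random` instance as `rng` keeps a run reproducible, which is convenient when comparing variants of the update rule.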

Reproductive behavior

In nature, dung balls are rolled to a safe place and hidden by dung beetles. To provide a safe environment for their offspring, choosing a suitable spawning site is very important for dung beetles. Inspired by this, a boundary selection strategy is proposed to model the area where female dung beetles lay their eggs, which is defined as 25:

where \({X^*}\) denotes the current local optimal position, \(L{b^*}\) and \(U{b^*}\) denote the lower and upper bounds of the spawning region, \(R=1 - t/{T_{\hbox{max} }}\), \({T_{\hbox{max} }}\) denotes the maximum number of iterations, and \({L_b}\) and \({U_b}\) denote the lower and upper bounds of the search space, respectively.

The boundary of the spawning area is adjusted dynamically with the number of iterations; consequently, the position of each brood ball also changes during the iteration process, as shown in Eq. (6).

where \({B_i}(t)\) is the position of the i-th brood ball at the t-th iteration, and \({b_1}\) and \({b_2}\) denote two independent random vectors of size \(1 \times D\).
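A minimal sketch of the spawning region and brood ball update follows. It assumes the boundary rule of the original DBO formulation, \(Lb^*=\max (X^*(1-R),Lb)\) and \(Ub^*=\min (X^*(1+R),Ub)\), and the update \(B_i(t+1)=X^*+b_1(B_i(t)-Lb^*)+b_2(B_i(t)-Ub^*)\). Clamping the result to the spawning region is an additional assumption, and the function names are hypothetical.

```python
import random

def spawning_bounds(x_star, lb, ub, t, t_max):
    """Shrinking region around the local best X*, with R = 1 - t / T_max."""
    r = 1.0 - t / t_max
    lo = [max(xs * (1.0 - r), l) for xs, l in zip(x_star, lb)]
    hi = [min(xs * (1.0 + r), u) for xs, u in zip(x_star, ub)]
    return lo, hi

def brood_update(ball, x_star, lo, hi, rng=random):
    """B(t+1) = X* + b1*(B(t) - Lb*) + b2*(B(t) - Ub*), kept inside [Lb*, Ub*]."""
    new_ball = []
    for bi, xs, l, h in zip(ball, x_star, lo, hi):
        b1, b2 = rng.random(), rng.random()   # independent random coefficients
        value = xs + b1 * (bi - l) + b2 * (bi - h)
        new_ball.append(min(max(value, l), h))
    return new_ball

# Positive coordinates are used here so that Lb* <= Ub* holds.
lo_mid, hi_mid = spawning_bounds([2.0], [-5.0], [5.0], t=50, t_max=100)
lo_start, hi_start = spawning_bounds([2.0], [-5.0], [5.0], t=0, t_max=100)
new_ball = brood_update([2.5], [2.0], lo_mid, hi_mid, rng=random.Random(0))
```

The region is widest at the first iteration and contracts toward \(X^*\) as t approaches \(T_{\max}\), which is what makes the brood balls concentrate around the local best late in the run.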

Foraging behavior

The foraging process of dung beetles in nature was simulated and the optimal foraging area was established to guide the dung beetles to forage. The boundary of the optimal foraging area is defined as 26:

where \({X^b}\) is the global best position, \(L{b^b}\) and \(U{b^b}\) denote the lower and upper bounds of the best foraging area. Therefore, the position of the small dung beetle is updated as follows:

where \({x_i}(t)\) denotes the position of the i-th small dung beetle at the t-th iteration, \({C_1}\) denotes a random number obeying the normal distribution, and \({C_2}\) denotes a random vector with elements in \(\left( {0,1} \right)\).
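The foraging step can be sketched in the same way. The sketch assumes the rule of the original DBO formulation, with the optimal foraging area \(Lb^b=\max (X^b(1-R),Lb)\), \(Ub^b=\min (X^b(1+R),Ub)\) and the update \(x_i(t+1)=x_i(t)+C_1(x_i(t)-Lb^b)+C_2(x_i(t)-Ub^b)\). Clamping to the search space and the function names are assumptions made for illustration.

```python
import random

def foraging_bounds(x_best, lb, ub, t, t_max):
    """Optimal foraging area around the global best X^b, with R = 1 - t / T_max."""
    r = 1.0 - t / t_max
    lo = [max(xb * (1.0 - r), l) for xb, l in zip(x_best, lb)]
    hi = [min(xb * (1.0 + r), u) for xb, u in zip(x_best, ub)]
    return lo, hi

def forage(x, lo_b, hi_b, lb, ub, rng=random):
    """x(t+1) = x(t) + C1*(x(t) - Lb^b) + C2*(x(t) - Ub^b), kept inside [Lb, Ub]."""
    c1 = rng.gauss(0.0, 1.0)                  # C1: normally distributed scalar
    moved = []
    for xi, l, h, g_lo, g_hi in zip(x, lo_b, hi_b, lb, ub):
        c2 = rng.random()                     # C2: uniform random component
        value = xi + c1 * (xi - l) + c2 * (xi - h)
        moved.append(min(max(value, g_lo), g_hi))
    return moved

lb, ub = [-10.0], [10.0]
lo_b, hi_b = foraging_bounds([4.0], lb, ub, t=50, t_max=100)
moved = forage([3.0], lo_b, hi_b, lb, ub, rng=random.Random(0))
```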

Theft

Some dung beetles will steal dung balls from other dung beetles, and the location update can be described as:

where \({x_i}(t)\) denotes the position of the i-th stealing dung beetle at the t-th iteration, g is a random vector of size \(1 \times D\) obeying the normal distribution, and S denotes a constant.
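A short sketch of the stealing update is given below. It assumes the rule of the original DBO formulation, \(x_i(t+1)=X^b+S\cdot g\cdot (|x_i(t)-X^*|+|x_i(t)-X^b|)\); the default `s=0.5` and the function name `steal` are illustrative assumptions.

```python
import random

def steal(x, x_local, x_best, s=0.5, rng=random):
    """x(t+1) = X^b + S * g * (|x(t) - X*| + |x(t) - X^b|), g ~ N(0, 1)."""
    return [xb + s * rng.gauss(0.0, 1.0) * (abs(xi - xl) + abs(xi - xb))
            for xi, xl, xb in zip(x, x_local, x_best)]

thief = steal([1.0, 2.0], [0.5, 1.5], [0.0, 0.0], rng=random.Random(0))
at_best = steal([3.0, 3.0], [3.0, 3.0], [3.0, 3.0], rng=random.Random(0))
```

The step is centered on the global best and its spread scales with the thief's distance from both the local and the global best, so a thief that already sits on both positions does not move.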

Improving the Dung beetle optimization algorithm

Latin hypercube sampling

Population initialization directly affects the optimization accuracy and convergence speed of the algorithm. The standard dung beetle optimization algorithm initializes the population with a pseudo-random function, which has limitations in practice: when the variable space is large and complex, it may fail to generate an initial population that is sufficiently uniform over the whole space. The algorithm may then over-concentrate on some regions and ignore other potentially favorable ones, which reduces the overall optimization efficiency.

Latin Hypercube Sampling (LHS) is a stratified sampling method whose main feature is the high uniformity of the extracted samples27. Because it covers the space more evenly than simple random sampling, it is widely used for population initialization in intelligent algorithms. Assuming that N samples are drawn from a D-dimensional vector space, the steps of Latin hypercube sampling are as follows28:

a) Determine the dimension D of the vector space and the number of samples N to be drawn, where each variable satisfies \({x^j} \in \left[ {lb,ub} \right],j=1,2, \cdots ,D\).

b) Divide the domain \(\left[ {lb,ub} \right]\) of each variable \({x^j}\) into N equal intervals, i.e., \(lb=x_{0}^{j}<x_{1}^{j}< \cdots <x_{i}^{j}< \cdots <x_{N}^{j}=ub\), so that the original hypercube is divided into \({N^D}\) small hypercubes.

c) Generate an \(N \times D\) matrix A in which each column is a random permutation of the N equal intervals.

d) Each row of matrix A corresponds to a selected small hypercube, and N samples are obtained by generating one random sample within each selected small hypercube29.
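The four steps above can be sketched as a small population initializer. This is a minimal illustration that assumes the same bounds \([lb,ub]\) in every dimension; the function name `lhs_population` is hypothetical.

```python
import random

def lhs_population(n, dim, lb, ub, rng=random):
    """Draw n points in [lb, ub]^dim with one point per stratum in each dimension."""
    width = (ub - lb) / n                     # step b): n equal intervals
    columns = []
    for _ in range(dim):
        strata = list(range(n))
        rng.shuffle(strata)                   # step c): one permutation per column
        # step d): one uniform sample inside each selected interval
        columns.append([lb + (s + rng.random()) * width for s in strata])
    # row i of the result is the i-th individual of the population
    return [[columns[j][i] for j in range(dim)] for i in range(n)]

population = lhs_population(10, 3, 0.0, 10.0, rng=random.Random(0))
```

Because each column uses every interval exactly once, projecting the population onto any single axis gives one individual per interval, which is the uniformity property that plain random initialization lacks.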

Figure 1 presents the comparative results used to verify the effectiveness of the Latin Hypercube Sampling (LHS) initialization strategy. The LHS initialization method is compared with random initialization30, Tent chaotic mapping initialization31, and PWLCM chaotic mapping initialization32. The comparison results clearly demonstrate that the LHS-based initialization generates a more uniform population distribution across the search space than the other methods. The accompanying histogram provides an intuitive visualization of the differences among the initialization strategies, and the statistical results further confirm that LHS initialization achieves a well-balanced and evenly distributed sampling within the search space.

Distribution of initialization. (a) LHS initialization, (b) Random initialization, (c) Tent chaotic mapping initialization, (d) PWLCM chaotic mapping initialization.

Hybrid location update strategy

Nonlinear decision factor

Whether an algorithm can reasonably balance global search and local exploitation directly affects its optimization performance33. In the DBO algorithm, the dung beetle generates an initial solution randomly in the solution space, which guides the dung beetle’s position update. Rolling dung beetles can help the population converge to the foraging area in the early stage and accelerate the convergence of the algorithm. The position update is as follows:

The constant R is a decision factor that controls the balance between the algorithm’s global search and local exploitation capabilities. A constant decision factor is not suitable for complex, high-dimensional real-world engineering problems. Therefore, this paper uses a nonlinear decision factor. In the early stage it decreases slowly, which increases the search probability of the ball-rolling dung beetles and helps the algorithm converge quickly; in the late stage it decreases rapidly, which increases the probability of dung beetle searching and helps the algorithm jump out of local optima. The nonlinear decision factor is formulated as follows34:

where \({R_{v0}}\) is the initial value of the decision factor, taken as 1 in this study, and k is the model control factor that governs the decay speed: the larger the value of k, the slower the decay. Repeated experiments showed that the algorithm performs best when k is set to 10.

Competition mechanism

The Imperialist Competitive Algorithm, proposed in 2007, contains important mechanisms such as assimilation, revolution, and flipping35. Inspired by it, a competition mechanism is introduced between the ball-rolling dung beetles and the stealers. At each iteration, a stealer randomly selects a ball-rolling dung beetle and moves a certain distance toward its position to carry out “preparation for stealing”, which is similar to the “assimilation” mechanism in the Imperialist Competitive Algorithm. While moving, the stealer may also be attracted by other dung beetles, resulting in a direction jump. Its position is updated as follows:

where \(R_{{Rand}}^{\tau }\) is the position of a randomly selected ball-rolling dung beetle at the \(\tau\)-th iteration, p is a random number between 0 and \(2 \times \left\| {T_{i}^{\tau } - R_{{Rand}}^{\tau }} \right\|\), and q is the direction-jump coefficient, expressed as:

where \(\varphi\) is a random number between \(- \frac{\pi }{4}\) and \(\frac{\pi }{4}\); \(\xi\) is a random number between 0 and 1.

When a stealer succeeds in stealing the dung ball of a ball-rolling dung beetle, the identities of the two are swapped, similar to the “inversion” mechanism in competitive algorithms, according to the following rules:

where \(switch\) indicates that a position swap operation is performed; \(empty\) indicates that no operation is performed.

By introducing the competition mechanism, the stealer’s position update makes use of the position information of the ball-rolling dung beetles. This strengthens information exchange, mitigates the homogenization of individuals during convergence, and further optimizes the population structure. As a result, the mechanism not only accelerates convergence but also enhances the algorithm’s global search capability and reduces the risk of falling into local optima.

Cauchy inverse cumulative distribution operator combined with tangent flight operator

The selection of the Cauchy inverse cumulative distribution operator and the tangent flight operator is based on the inherent limitations of the original DBO’s local exploitation phase. For the foraging behavior of young dung beetles, the need to balance “precision approaching the optimal solution” and “avoiding local stagnation” requires an operator with adaptive step-size adjustment capability. The Cauchy inverse cumulative distribution operator is selected for its heavy-tailed distribution characteristic, which enables dynamic step-size reduction during the approach to the optimal position, ensuring both convergence efficiency and exploration breadth. Meanwhile, the tangent flight operator is chosen due to its strong random direction perturbation ability, which can break the spatial clustering of individuals in the late iteration stage. This combination of operators is designed to address the trade-off between local exploitation accuracy and global exploration capability, a key challenge in metaheuristic algorithms for complex optimization problems.

When the small dung beetle searches toward the global optimum, its position update is more random, which makes the algorithm’s convergence speed and convergence accuracy decrease, resulting in the inability to obtain the global optimum solution. To further improve the ability of the algorithm to jump out of the local optimum, the Cauchy inverse cumulative distribution operator is introduced into the dung beetle’s foraging behavior. The position is updated as:

The foraging behavior primarily guides the young dung beetles to move toward the global optimal position. However, since the optimal position contains limited food resources, dung beetles located farther away cannot achieve rapid foraging. To address this issue, the Cauchy inverse cumulative distribution operator is introduced. This operator enables the dung beetles to gradually reduce the distance between themselves and the food source, thereby shrinking the overall step size and allowing the population to reach the optimal fitness value more efficiently.

In the later stages of iteration, dung beetle individuals tend to rapidly assimilate and cluster near the optimal position, which may cause the population to become trapped in local optima. To overcome this, the tangent flight operator is adopted as a proportional factor to control the step size. This operator helps balance the exploration and exploitation processes, enhances the convergence performance of the DBO algorithm, and avoids accuracy degradation.

The local random walk is performed based on the product of the tangent flight operator and the distance between dung beetles and the food source, which is effective for spatial exploration and helps prevent the algorithm from falling into local optima23. The corresponding position update formula is expressed as:

where \({X^b}(t)\) denotes the optimal position of the individual in the t-th iteration, \({H^b}(t)\) denotes the position after a tangent flight perturbation to the optimal position \({X^b}(t)\), v is a random number uniformly distributed within \(\left[ {0,1} \right]\), and d is the dimension of the function24.
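The two operators can be illustrated with a short sketch. The Cauchy inverse cumulative distribution function used here is the standard one, \(F^{-1}(p)=x_0+\gamma \tan (\pi (p-1/2))\), and the tangent flight step is taken as \(\tan (v\pi /2)\) with v uniform in \([0,1]\). How the paper combines these terms in its update formulas is not reproduced: the perturbation in `greedy_perturb`, including the scaling by the dimension d, is a hypothetical stand-in that only demonstrates a greedy acceptance rule.

```python
import math
import random

def cauchy_inverse_cdf(p, x0=0.0, gamma=1.0):
    """Quantile function of the Cauchy distribution for p in (0, 1)."""
    return x0 + gamma * math.tan(math.pi * (p - 0.5))

def tangent_flight(v):
    """Tangent flight step for v in [0, 1): small most of the time, occasionally large."""
    return math.tan(v * math.pi / 2.0)

def greedy_perturb(x_best, fitness, rng=random):
    """Perturb the best position and keep the candidate only if it is better."""
    d = len(x_best)
    step = tangent_flight(rng.random()) / d   # hypothetical scaling by dimension d
    candidate = [xj + step * xj for xj in x_best]
    return candidate if fitness(candidate) < fitness(x_best) else list(x_best)

def sphere(x):
    """Simple test function with minimum 0 at the origin."""
    return sum(v * v for v in x)

median = cauchy_inverse_cdf(0.5)
upper_quartile = cauchy_inverse_cdf(0.75)
kept = greedy_perturb([1.0, -2.0], sphere, rng=random.Random(0))
```

Both operators are heavy-tailed, so most steps are small while an occasional long jump remains possible; the greedy rule guarantees that the stored best position never gets worse.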

Figure 2 shows the IDBO algorithm flowchart.

IDBO Algorithm Flowchart.

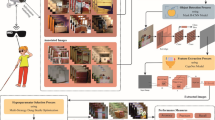

IDBO optimized K-means for image segmentation

For image segmentation, the target image is first treated as a set M of data sample points in a d-dimensional space, and m data points are randomly selected from it as the initial cluster centers. The remaining data points in M are then assigned to the m classes. Let \({M_i}\) be the i-th data point in M and \({C_j}\) the j-th cluster center; data point \({M_i}\) is assigned to class j when \(\left\| {{M_i} - {C_j}} \right\|\) is minimal over all centers. The fitness function of IDBO is the loss function minimized by the K-means algorithm, denoted as:

Specifically, IDBO optimized K-means for image segmentation is performed as follows:

Step1: Set the initial parameters of IDBO algorithm; take the minimum loss function as the fitness function of the algorithm and calculate the fitness;

Step2: Generate the clustering center;

Step3: Cluster the pixels according to the minimum Euclidean distance;

Step4: Take the pixel mean of each category as the new clustering center;

Step5: Determine whether the convergence condition is satisfied, i.e., whether the cluster centers no longer change; if satisfied, output the result; otherwise return to Step2.

When IDBO reaches the maximum number of iterations, the result of the optimization search is used to initialize the cluster centers of the subsequent K-means run. This overcomes the sensitivity of K-means to the initialization of the cluster centers and further improves its performance.
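The overall pipeline can be sketched as follows: a candidate solution encodes all cluster centers in one flat vector, its fitness is the K-means loss, and the best candidate found seeds the K-means iterations. In this sketch a plain random search stands in for IDBO, so it illustrates only the interface between the optimizer and K-means, not the optimizer itself. The names `decode`, `kmeans_loss`, `kmeans`, and `segment` are hypothetical.

```python
import random

def sq_dist(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))

def decode(vector, k):
    """Split a flat candidate vector into k cluster centers."""
    d = len(vector) // k
    return [vector[i * d:(i + 1) * d] for i in range(k)]

def kmeans_loss(pixels, centers):
    """Fitness: sum of squared distances from each pixel to its nearest center."""
    return sum(min(sq_dist(p, c) for c in centers) for p in pixels)

def kmeans(pixels, centers, max_iter=100):
    """Steps 3 to 5: assign pixels, recompute means, stop when centers are unchanged."""
    for _ in range(max_iter):
        groups = [[] for _ in centers]
        for p in pixels:
            nearest = min(range(len(centers)), key=lambda j: sq_dist(p, centers[j]))
            groups[nearest].append(p)
        updated = [[sum(col) / len(g) for col in zip(*g)] if g else list(c)
                   for g, c in zip(groups, centers)]
        if updated == centers:
            break
        centers = updated
    return centers

def segment(pixels, k, n_candidates=50, rng=random):
    """Pick the best of several candidate initializations, then refine with K-means."""
    d = len(pixels[0])
    low = min(min(p) for p in pixels)
    high = max(max(p) for p in pixels)
    best = None
    for _ in range(n_candidates):             # stand-in for the IDBO search loop
        cand = [rng.uniform(low, high) for _ in range(k * d)]
        if best is None or kmeans_loss(pixels, decode(cand, k)) < kmeans_loss(pixels, decode(best, k)):
            best = cand
    return kmeans(pixels, decode(best, k))

gray_pixels = [[10.0], [12.0], [11.0], [200.0], [202.0], [201.0]]
final_centers = segment(gray_pixels, 2, rng=random.Random(1))
```

Swapping the random search for a population-based optimizer only requires replacing the candidate loop in `segment`; the fitness function and the K-means refinement stay the same.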

Time complexity

Time complexity reflects how the execution time of an algorithm changes as the data size grows, and is usually measured as the growth rate as a function of the input size. Let the population size be N, the number of iterations be T, and the dimension be D. Let the time complexity of the population initialization phase be \(O(N)\), that of updating the population position be \(O(TN)\), and that of the local search phase be \(O(TND)\). The time complexity of the standard DBO algorithm is then \(O(N)+O(TN)+O(TND)=O(TND)\).

IDBO improves DBO in three ways. Latin hypercube sampling only replaces the initialization and does not alter the update formulas of the global search phase, so the complexity of initialization is still \(O(N)\). The hybrid position update strategy introduced in the global search phase36 only changes the allocation of the population and the way the updates are executed, not the structure of the algorithm itself, so the complexity is still \(O(TN)\). The Cauchy inverse cumulative distribution operator combined with tangent flight, executed under the greedy principle, adds \(O(T)\) computation, which does not change the order of magnitude37; the rest of this stage is the same as in DBO, so its time complexity remains \(O(TND)\). The total time complexity of IDBO is therefore \(O(N)+O(TN)+O(TND)=O(TND)\).

In summary, the total time complexity of image segmentation for IDBO optimized K-means is still \(O(N)+O(TN)+O(TND)=O(TND)\).

Numerical experiments

Model parameter settings and baseline functions

To evaluate the performance of the proposed Improved Dung Beetle Optimization (IDBO) algorithm, a series of five comparative simulation experiments were conducted against several well-established optimization algorithms, including the original DBO, GWO, BWO, PSO, MODBO, and MSDBO. The experimental design is as follows:

Experiment 1: Analyze the effectiveness of the three improvement strategies integrated into the IDBO algorithm.

Experiment 2: Compare the optimization performance of IDBO with six other intelligent optimization algorithms under different dimensions.

Experiment 3: Perform the Wilcoxon rank-sum test to evaluate the statistical significance of the performance differences between IDBO and the other six swarm intelligence algorithms.

Experiment 4: Utilize CEC2021 benchmark functions to further verify the stability and robustness of the IDBO algorithm when handling complex optimization tasks.

Experiment 5: Compare the performance and effectiveness of IDBO and other algorithms when applied to K-means-based image segmentation optimization.

All experiments were conducted under a 64-bit Windows 10 operating system with an Intel(R) Core(TM) i5-7200U CPU at 2.70 GHz. To validate the performance improvement of DBO achieved by the proposed strategies, 12 benchmark test functions were selected38. Among them, F1–F7 are unimodal functions used to test the local exploitation capability of the algorithms, while F8–F12 are multimodal functions used to evaluate their global exploration ability.

To ensure fairness and consistency in performance evaluation, all algorithms were configured with a population size of 30, a maximum of 500 iterations, and dimensional settings of 30, 100, and 500 39,40. Each experiment was independently executed 30 times, and the results were evaluated using three indicators: the mean (mean), standard deviation (std), and best value (best)14. Specifically, the best and mean values indicate the optimization accuracy and capability, while the standard deviation (std) reflects the stability of the algorithm41.

In addition to the original DBO, three widely used optimization algorithms and two recently developed algorithms were selected for comparison. The parameters of each algorithm were configured according to their respective references, as summarized in Table 1.

Performance effectiveness analysis of progressive strategies

To verify the effectiveness of the proposed improvement strategies in enhancing the performance of the Dung Beetle Optimization (DBO) algorithm, three variant algorithms were designed for comparison:

IDBO1: DBO improved only with the Latin Hypercube Sampling (LHS) strategy.

IDBO2: DBO improved only with the hybrid update strategy combining nonlinear decision factors and a competitive mechanism.

IDBO3: DBO improved only with the Cauchy inverse cumulative distribution operator and the tangent flight operator.

The experimental parameter settings were consistent with those described in Sect. 3.1.

As shown in Table 2, both IDBO3 and the fully improved IDBO algorithm achieved theoretical optimal values for the best, mean, and standard deviation metrics on functions F1–F3, indicating that the integration of the Cauchy inverse cumulative distribution and tangent flight operators effectively enhances the optimization performance of the algorithm. For function F6, IDBO1 achieved the best and mean values among all variants, though its standard deviation was not optimal, suggesting a limitation in stability for this particular strategy. Overall, IDBO3 outperformed the original DBO algorithm, demonstrating that incorporating the Cauchy and tangent flight mechanisms helps the algorithm escape local optima, effectively balancing global exploration and local exploitation, and improving robustness42.

Furthermore, IDBO1 and IDBO2 achieved significantly better performance than the original DBO algorithm across all three evaluation metrics on functions F1–F4, confirming that both the LHS initialization and hybrid update strategy substantially enhance the optimization accuracy and search efficiency of the algorithm43,44.

In summary, the comprehensive comparison across 12 benchmark functions shows that the fully improved IDBO algorithm, which integrates all proposed strategies, consistently outperforms the other four variants, thereby fully validating the effectiveness and superiority of the proposed improvement mechanisms.

Figure 3 shows the convergence curves of the five algorithms. It can be observed that IDBO1 exhibits a convergence trend similar to the original DBO; however, its convergence accuracy is improved, and the average optimization performance is more stable. Compared with DBO, the IDBO2 algorithm demonstrates further improvement in both average convergence accuracy and standard deviation, indicating that it effectively balances global exploration and local exploitation. For IDBO3, it is evident that the incorporation of the Cauchy inverse cumulative distribution operator and the tangent flight operator significantly enhances the algorithm’s ability to escape from local optima45, resulting in faster convergence toward the global optimum and improved overall search performance.

Convergence curve of IDBO with different improvement strategies.

Performance comparison experiments with other swarm intelligence algorithms

To further verify the superior performance of the IDBO algorithm, it was compared with GWO, BWO, PSO, DBO, MODBO, and MSDBO on 12 benchmark test functions, with dimensions set to 30, 100, and 500, and other parameters configured as in Sect. 3.1. The comparison results are shown in Table 3.

For functions F1–F7, IDBO achieved the best performance across all evaluation metrics compared to the other algorithms. Under the 30-dimensional condition for F3, IDBO reached the optimal value of 0, with a mean value of 0, outperforming DBO. For functions F8–F12, the convergence accuracy of IDBO was generally able to reach the theoretical optimum of 0.

Under 100-dimensional conditions, all algorithms showed some performance degradation, but IDBO was least affected by the increase in dimensionality, maintaining a significant accuracy advantage. In particular, for F1–F4, F6, and F8, all evaluation metrics achieved the theoretical minimum, with no regression in accuracy. Compared to other swarm intelligence algorithms, the average convergence accuracy of IDBO remained superior, demonstrating its strong optimization capability. Additionally, the standard deviation of IDBO was smaller than that of the other metaheuristic algorithms, indicating better stability and ability to escape local optima. Among the other algorithms, only GWO was able to reach the theoretical value for F8, while their optimization performance on the remaining benchmark functions was relatively poor.

Under the high-dimensional condition of 500 dimensions, IDBO outperformed all other algorithms in all precision metrics. Its mean values were significantly better than those of the other algorithms, verifying IDBO’s excellent optimization accuracy, and its smaller std values confirmed the algorithm’s robustness.

In summary, based on the mean values, IDBO demonstrates higher solution accuracy and faster convergence speed; based on the standard deviation, it exhibits superior global search ability and excellent optimization stability. IDBO can quickly converge to high precision on unimodal functions, while for multimodal functions, it avoids local optima and premature convergence, maintaining reliable global search performance.

Figure 4 shows the convergence curves of the IDBO algorithm and the other six algorithms on the 12 benchmark test functions. IDBO clearly converges faster and reaches higher accuracy across the 12 test functions, further verifying its performance on these problems.

Convergence curves of different algorithms.

Wilcoxon rank sum test

To further compare the IDBO algorithm with the other algorithms, the Wilcoxon rank sum test was performed46,47. Each algorithm was run independently 30 times, and the rank sum test was applied to the run results at a significance level of 0.05: if \(p<0.05\), the two algorithms are significantly different; otherwise, the two algorithms are comparable overall. The symbols “+”, “=”, and “-” indicate that the performance of the IDBO algorithm is better than, equal to, and inferior to that of the compared algorithm, respectively. NaN indicates that the results of the two algorithms are too similar for the difference to be significant.
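The test procedure can be illustrated with a minimal, standard-library sketch of the two-sided Wilcoxon rank sum test under the normal approximation. The function name `rank_sum_test` is hypothetical, and the simplifications (average ranks for ties, no tie correction of the variance) are assumptions of this sketch rather than details taken from the experiments; SciPy's `scipy.stats.ranksums` offers a library implementation of the same test.

```python
import math
from typing import Sequence, Tuple


def rank_sum_test(a: Sequence[float], b: Sequence[float]) -> Tuple[float, float]:
    """Two-sided Wilcoxon rank sum test (normal approximation).

    Returns (z, p). Tied values receive average ranks; the variance is
    not corrected for ties, which is a simplification of this sketch.
    """
    n1, n2 = len(a), len(b)
    # Pool both samples, remembering which group each value came from.
    pooled = sorted([(v, 0) for v in a] + [(v, 1) for v in b])
    ranks = [0.0] * len(pooled)
    i = 0
    while i < len(pooled):
        j = i
        while j + 1 < len(pooled) and pooled[j + 1][0] == pooled[i][0]:
            j += 1
        avg = (i + j) / 2 + 1  # average of the 1-based ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[k] = avg
        i = j + 1
    # Rank sum of the first sample and its null-hypothesis moments.
    w = sum(r for r, (_, group) in zip(ranks, pooled) if group == 0)
    mean_w = n1 * (n1 + n2 + 1) / 2
    sd_w = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (w - mean_w) / sd_w
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided p-value
    return z, p


if __name__ == "__main__":
    # Hypothetical final fitness values from 30 independent runs each.
    runs_a = [0.001 * i for i in range(30)]
    runs_b = [1.0 + 0.01 * i for i in range(30)]
    z, p = rank_sum_test(runs_a, runs_b)
    print("significant at 0.05:", p < 0.05)
```

With 30 runs per algorithm the normal approximation is usually considered adequate; when every run of both algorithms returns the same value, a tie-corrected variance becomes zero and the statistic is undefined, which is one way a NaN entry can arise.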

Table 4 shows that the p-value of the rank sum test for most of the algorithms is less than 0.05, indicating that the difference in the performance of the IDBO algorithm over the other six algorithms is significant.

Experimental analysis of CEC2021 test functions

To further verify the feasibility and robustness of the IDBO algorithm, it is tested on the CEC2021 benchmark functions. Ten functions of the CEC2021 benchmark suite are selected for testing, where dimension, population size, maximum number of iterations. The experimental results are shown in Table 5, which indicates that IDBO has a clear advantage in overall performance. In terms of both convergence speed and accuracy on unimodal functions and global optimization ability on multimodal and composition functions, IDBO achieves better results than the other six comparative algorithms. In high-dimensional complex optimization problems in particular, IDBO effectively balances global exploration and local exploitation and shows strong stability, convergence, and robustness. In conclusion, the experimental results support the feasibility and superior performance of the IDBO algorithm on different types of optimization problems2,6,15,25.

IDBO optimized K-means for image segmentation experiments

To verify the feasibility and effectiveness of the IDBO-optimized K-means algorithm for image segmentation, classical images from the standard test dataset are selected for experimental verification. The parameters are the same as in the experiments of Sect. 3.1: the population size is 30 and the maximum number of iterations is 500. For the K-means part, the number of cluster centers for each segmented image is set to 4, and each image is tested independently 30 times. Segmentation quality is measured by the mean square error (\({\sigma _{MSE}}\)) and the peak signal-to-noise ratio (\({P_{PSNR}}\)). The smaller \({\sigma _{MSE}}\) is, the better the quality of the segmented image; the larger \({P_{PSNR}}\) is, the more similar the segmented image is to the original image48,49.
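As a rough illustration of how one candidate solution can be scored in this setup, the sketch below treats an individual as a vector of four gray-level cluster centers and uses the within-cluster sum of squared errors as its fitness. The objective, the function names (`assign_labels`, `sse_fitness`, `kmeans_refine`), the sample gray levels, and the restriction to scalar gray levels are all assumptions made for illustration; the objective actually optimized by IDBO-K may differ.

```python
from typing import List, Sequence


def assign_labels(pixels: Sequence[float], centers: Sequence[float]) -> List[int]:
    """Return, for every gray level, the index of its nearest center."""
    return [
        min(range(len(centers)), key=lambda k: (p - centers[k]) ** 2)
        for p in pixels
    ]


def sse_fitness(pixels: Sequence[float], centers: Sequence[float]) -> float:
    """Within-cluster sum of squared errors (assumed fitness; lower is better)."""
    labels = assign_labels(pixels, centers)
    return float(sum((p - centers[k]) ** 2 for p, k in zip(pixels, labels)))


def kmeans_refine(pixels: Sequence[float], centers: Sequence[float],
                  iterations: int = 10) -> List[float]:
    """Standard K-means updates starting from the given centers."""
    centers = [float(c) for c in centers]
    for _ in range(iterations):
        labels = assign_labels(pixels, centers)
        for k in range(len(centers)):
            members = [p for p, lab in zip(pixels, labels) if lab == k]
            if members:  # keep an empty cluster's center unchanged
                centers[k] = sum(members) / len(members)
    return centers


if __name__ == "__main__":
    # Hypothetical gray levels and a hypothetical optimizer-proposed start.
    gray = [10, 12, 11, 100, 102, 200, 198, 250, 252]
    start = [0, 90, 190, 255]
    refined = kmeans_refine(gray, start)
    print(refined, sse_fitness(gray, start), sse_fitness(gray, refined))
```

In such a scheme the metaheuristic searches over the center vector and K-means refines the best candidate, so the quality of the final partition depends less on a single random initialization.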

\(f\left( {x,y} \right)\) and \(\check f (x,y)\) in Eq. (18) are the images corresponding to before and after image segmentation, respectively.
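For reference, the two metrics are commonly defined as follows. This is a sketch of the standard definitions, assuming an 8-bit grayscale image of size \(M \times N\) with peak value 255; the symbols \(M\) and \(N\) and the peak value are assumptions introduced here and may not match the notation of the numbered equations.

\[
{\sigma _{MSE}} = \frac{1}{MN}\sum\limits_{x = 1}^{M} \sum\limits_{y = 1}^{N} {\left[ f\left( {x,y} \right) - \check f\left( {x,y} \right) \right]^2}
\]

\[
{P_{PSNR}} = 10\,{\log _{10}}\left( \frac{255^2}{\sigma _{MSE}} \right)
\]

Under these definitions, a smaller \({\sigma _{MSE}}\) directly yields a larger \({P_{PSNR}}\), so the two metrics rank segmentations of the same image identically.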

Comparison of the Swan image before and after segmentation (image available under the CC BY 4.0 license; the dataset can be accessed at https://github.com/BIDS/BSDS500).

As shown in Fig. 5, there are obvious differences in the segmentation results of the different algorithms. GWO-K, BWO-K and PSO-K suffer from a serious “exposure” phenomenon during segmentation, i.e., some areas are too bright and the edges are blurred, which leads to unclear boundaries between the target and the background. MSDBO-K alleviates this problem to a certain extent, but the shadow and the water surface are still misclassified as the same category, which shows insufficient adaptability to complex lighting and texture features. In contrast, the IDBO-K algorithm has significant advantages in image detail preservation and region boundary recognition: its segmentation results accurately extract the head and neck contours of the swan and effectively eliminate background interference, achieving a clear separation between the swan body and the water surface while maintaining the structural integrity of the swan. This shows that the IDBO-K algorithm has stronger feature expression ability and robustness in target segmentation under complex backgrounds.

Comparison of the Cameraman image before and after segmentation (image available under the CC BY 4.0 license; the dataset can be accessed at https://github.com/BIDS/BSDS500).

As shown in Fig. 6, the IDBO-K algorithm demonstrates superior visual quality and structural fidelity in image segmentation. The segmented results clearly preserve the overall contours of the subject, with natural edge transitions and no obvious breaks or blurring. Simultaneously, portions of the background texture are well maintained, effectively preventing excessive smoothing or loss of texture information during segmentation. Compared to other algorithms, the results produced by IDBO-K exhibit the lowest color deviation and the most balanced brightness, providing a clear advantage in terms of overall visual consistency and regional distinction.

Comparison of the Rice image before and after segmentation (image available under the CC BY 4.0 license; the dataset can be accessed at https://github.com/BIDS/BSDS500).

As shown in Fig. 7, there are notable differences in the performance of various algorithms on Rice image segmentation. Except for IDBO-K, the other algorithms all exhibit varying degrees of overexposure, characterized by excessive bright regions and blurred edge details, which weakens the contrast between the rice grains and the background and results in unclear segmentation boundaries. This indicates that these algorithms have limited stability when handling images with high-brightness textures and uneven grayscale distributions.

Although IDBO-K achieves a relatively better overall segmentation, preserving the clear contours of the rice grains, some limitations remain: certain background texture details are misclassified as part of the target, leading to reduced segmentation accuracy in local regions. This suggests that, for images with complex background textures or subtle grayscale variations, IDBO-K still requires further optimization in feature discrimination and class boundary determination. Nonetheless, overall, IDBO-K outperforms the other algorithms in exposure suppression and preservation of primary contours, demonstrating strong global optimization capability and detail retention performance.

Comparison of the Tulip image before and after segmentation (image available under the CC BY 4.0 license; the dataset can be accessed at https://github.com/BIDS/BSDS500).

As shown in Fig. 8, there are significant differences in the performance of various algorithms on the flower image segmentation task. GWO-K, PSO-K, and MSDBO-K exhibit varying degrees of overexposure, resulting in excessively bright regions, loss of detail, blurred petal edges, and unclear texture layers. While K-means and BWO-K show slight improvements in brightness control, they still perform poorly in texture detail segmentation, failing to effectively capture the fine structures of the petals, leading to relatively flat overall segmentation results.

In comparison, MODBO-K shows some improvement in overall segmentation quality, preserving the main contours and primary regions of the flower, with reduced overexposure, though it still falls short of achieving ideal brightness and contrast balance. IDBO-K, building on this, further optimizes segmentation quality, demonstrating better regional consistency and structural integrity. The segmented results clearly reflect the shape and layers of the petals, with more distinct separation between foreground and background.

However, compared to the original image, IDBO-K still shows some color reproduction limitations, indicating that further improvement is needed for handling color transitions and texture information under complex lighting conditions. Overall, IDBO-K outperforms the other algorithms in terms of overall visual quality and segmentation accuracy, demonstrating strong stability and generalization capability50,51.

The four types of images are segmented in the same comparison test, and the segmentation results are objectively evaluated by two numerical indices, namely the mean square error and the peak signal-to-noise ratio; the evaluation and comparison data are shown in Table 6. When the K-means algorithm segments the first three classical images, its \({\sigma _{MSE}}\) values are all the largest and its \({P_{PSNR}}\) values are all the smallest. For the Swan, Lena, and Cameraman images, the \({\sigma _{MSE}}\) and \({P_{PSNR}}\) obtained after segmentation by GWO-K, BWO-K and PSO-K are greatly improved compared to the K-means algorithm, while IDBO-K obtains the smallest \({\sigma _{MSE}}\) and the largest \({P_{PSNR}}\) on all three images. This shows that the algorithm can better adapt and optimize the clustering and segmentation process for grayscale images with large changes in gray levels or complex backgrounds. However, when segmenting the last two classical images, the \({\sigma _{MSE}}\) of IDBO-K increases and its \({P_{PSNR}}\) decreases compared to the first three images, which means that the segmentation effect is not optimal for these images, which require very high precision. Therefore, IDBO-K is more effective in segmenting grayscale images with large changes in gray levels or more complex backgrounds, which indicates its feasibility and superiority for such images24,36,38,43,52.
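The two indices can be computed with a few lines of code. The sketch below is a minimal standard-library version, assuming 8-bit grayscale images stored as nested lists and a peak value of 255; the function names `mse` and `psnr` and the sample pixel values are illustrative, not taken from the experiments.

```python
import math
from typing import Sequence


def mse(original: Sequence[Sequence[float]],
        segmented: Sequence[Sequence[float]]) -> float:
    """Mean square error between two images of identical shape."""
    total = 0.0
    count = 0
    for row_o, row_s in zip(original, segmented):
        for o, s in zip(row_o, row_s):
            total += (o - s) ** 2
            count += 1
    return total / count


def psnr(original: Sequence[Sequence[float]],
         segmented: Sequence[Sequence[float]],
         peak: float = 255.0) -> float:
    """Peak signal-to-noise ratio in dB; infinite for identical images."""
    err = mse(original, segmented)
    if err == 0:
        return math.inf
    return 10.0 * math.log10(peak ** 2 / err)


if __name__ == "__main__":
    # Hypothetical 2x2 image and its segmented (quantized) version.
    img = [[0, 255], [255, 0]]
    seg = [[0, 255], [255, 10]]
    print(mse(img, seg), psnr(img, seg))
```

Because the segmented image replaces each pixel with its cluster center, the mean square error here measures the quantization error of the clustering, which is why a better set of centers lowers \({\sigma _{MSE}}\) and raises \({P_{PSNR}}\) together.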

Ablation experiment

Experiment design

To quantitatively verify the individual contributions of each enhancement strategy in the Improved Dung Beetle Optimization (IDBO) algorithm, this section conducts ablation experiments. The experiments are based on 12 benchmark functions (F1–F7 as unimodal functions, F8–F12 as multimodal functions) and the CEC2021 benchmark suite, with parameter settings (population size = 30, maximum iterations = 500, dimensions = 30/100/500) consistent with those in Sect. 3.1. Evaluation metrics include the best optimization value (Best), mean value (Mean), and standard deviation (Std) to comprehensively reflect optimization accuracy and stability.

To systematically evaluate the contribution of each proposed enhancement strategy, five algorithm variants were designed, with the original Dung Beetle Optimization (DBO) serving as the baseline. Specifically, DBO-Baseline represents the unmodified DBO algorithm; DBO-LHS incorporates only the Latin Hypercube Sampling (LHS) initialization; DBO-HPS integrates solely the hybrid position updating strategy, which combines a nonlinear decision factor with a competition mechanism; DBO-CTO employs only the local exploitation operators, namely the Cauchy inverse cumulative distribution operator and the tangent flight operator; and IDBO-Full represents the complete improved algorithm, integrating all three strategies. To ensure statistical reliability, each experiment was independently executed 30 times, and performance metrics were aggregated to analyze the effects of individual enhancements and the overall improvement of the IDBO framework.
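To make the initialization used by the DBO-LHS variant concrete, the following is a minimal sketch of Latin Hypercube Sampling for a box-constrained search space. The function name `lhs_population`, the shared scalar bounds, and the use of uniform jitter inside each stratum are assumptions of this sketch; the exact LHS variant used in IDBO may differ.

```python
import math
import random
from typing import List, Optional


def lhs_population(n: int, dim: int, lower: float, upper: float,
                   rng: Optional[random.Random] = None) -> List[List[float]]:
    """Draw n points in [lower, upper]^dim by Latin Hypercube Sampling.

    Each dimension is split into n equal strata, and every stratum is
    used exactly once per dimension.
    """
    rng = rng or random.Random()
    width = (upper - lower) / n
    population = [[0.0] * dim for _ in range(n)]
    for d in range(dim):
        strata = list(range(n))
        rng.shuffle(strata)  # independent random pairing per dimension
        for i, s in enumerate(strata):
            # Uniform position inside stratum s of dimension d.
            population[i][d] = lower + (s + rng.random()) * width
    return population


if __name__ == "__main__":
    pop = lhs_population(30, 5, -100.0, 100.0, random.Random(1))
    width = 200.0 / 30
    first_dim_strata = sorted(math.floor((ind[0] + 100.0) / width) for ind in pop)
    print(first_dim_strata == list(range(30)))
```

Compared with plain uniform sampling, this construction guarantees that the projection of the population onto every coordinate axis covers all strata, which is the uniformity property the variant relies on.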

Results on benchmark functions

The ablation study on unimodal functions (30D) was conducted to evaluate the local exploitation capability of each enhancement strategy, with the results summarized in Table 7. Compared to the baseline, DBO-LHS achieves an average reduction of 32.7% in the Mean error and 28.5% in the Std, indicating that LHS initialization enhances population uniformity and mitigates premature convergence during early iterations. DBO-HPS further improves performance, reducing the Mean error by 41.2% on average, which demonstrates that the hybrid position updating strategy, combining a nonlinear decision factor with a competition mechanism, effectively balances global exploration and local exploitation, particularly in high-dimensional search spaces. DBO-CTO attains a Mean error reduction of 48.5% on average, highlighting that the integration of the Cauchy inverse cumulative distribution operator and tangent flight operator strengthens local fine-tuning accuracy. Finally, IDBO-Full, incorporating all three strategies, achieves the best performance, with a 67.9% reduction in Mean error relative to the baseline and successfully reaching the theoretical optimum (0) for functions F1–F4. These results clearly demonstrate the complementary contributions of each strategy and the superiority of the integrated IDBO framework in enhancing local exploitation.
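The reported percentages can be read as average relative reductions of the Mean error with respect to the baseline. The text does not state the exact aggregation, so the sketch below assumes a per-function relative reduction averaged over functions with a nonzero baseline; the function name `mean_error_reduction` and the sample values are hypothetical.

```python
from statistics import mean
from typing import Dict


def mean_error_reduction(baseline: Dict[str, float],
                         variant: Dict[str, float]) -> float:
    """Average percentage reduction of the Mean error across functions.

    Functions whose baseline error is exactly zero are skipped because
    the relative reduction is undefined for them.
    """
    reductions = [
        100.0 * (baseline[name] - variant[name]) / baseline[name]
        for name in baseline
        if baseline[name] != 0.0
    ]
    return mean(reductions)


if __name__ == "__main__":
    # Hypothetical Mean errors, not values from Table 7.
    base = {"F1": 2.0, "F2": 4.0}
    var = {"F1": 1.0, "F2": 3.0}
    print(mean_error_reduction(base, var))
```

Averaging relative rather than absolute reductions keeps functions whose errors differ by many orders of magnitude from dominating the summary figure.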

The ablation study on multimodal functions was conducted to evaluate the global exploration capability and the ability to escape local optima, with results presented in Table 8. DBO-LHS achieves an average reduction of 21.3% in the Mean error compared to DBO-Baseline, indicating that LHS initialization effectively enhances population diversity. DBO-HPS further improves performance with a 35.6% average reduction in Mean error, demonstrating the effectiveness of the hybrid position updating strategy in balancing global exploration and local exploitation. DBO-CTO exhibits the strongest capability to escape local optima, reducing the Mean error by 42.3% on average and achieving the lowest Std among the variants, highlighting the effectiveness of the dual-operator local exploitation mechanism. IDBO-Full, integrating all three strategies, attains the theoretical optimum (0) for functions F9–F11 and achieves a 61.8% reduction in Mean error relative to the baseline, demonstrating superior global optimization performance. These results confirm that each enhancement strategy contributes uniquely to global search efficiency and that the integrated IDBO framework effectively combines exploration and exploitation to achieve robust performance across multimodal landscapes.
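The escape mechanism attributed to DBO-CTO can be illustrated with the Cauchy inverse cumulative distribution function, \(F^{-1}(u) = x_0 + \gamma \tan\left(\pi (u - 0.5)\right)\), whose heavy tails occasionally produce long jumps. The sketch below is only an assumed form of such a perturbation with greedy acceptance: the function names, the step scale of 0.1 times the search range, and the clamping to bounds are illustrative choices, and the tangent flight operator is not sketched here.

```python
import math
import random
from typing import Callable, List, Optional, Sequence


def cauchy_quantile(u: float, loc: float = 0.0, scale: float = 1.0) -> float:
    """Inverse CDF of the Cauchy distribution for u in (0, 1)."""
    return loc + scale * math.tan(math.pi * (u - 0.5))


def perturb_best(best: Sequence[float],
                 fitness: Callable[[Sequence[float]], float],
                 lower: float, upper: float,
                 scale: float = 0.1,
                 rng: Optional[random.Random] = None) -> List[float]:
    """Perturb the best individual with Cauchy steps; keep it only if better."""
    rng = rng or random.Random()
    candidate = []
    for x in best:
        # Heavy-tailed step, scaled to the width of the search range.
        step = cauchy_quantile(rng.random(), scale=scale * (upper - lower))
        candidate.append(min(upper, max(lower, x + step)))
    # Greedy acceptance: never return a worse solution than the input.
    return candidate if fitness(candidate) < fitness(best) else list(best)


if __name__ == "__main__":
    def sphere(v: Sequence[float]) -> float:
        return sum(c * c for c in v)

    start = [1.0, -2.0]
    improved = perturb_best(start, sphere, -100.0, 100.0, rng=random.Random(0))
    print(sphere(improved) <= sphere(start))
```

Most sampled steps are small, which supports fine-tuning near the current optimum, while the occasional large step gives the best individual a chance to leave a local basin.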

All three enhancement strategies contribute positively to the performance improvement of the algorithm. Among them, DBO-CTO plays the most significant role in strengthening local exploitation and escaping local optima, followed by DBO-HPS and DBO-LHS. The fully integrated IDBO-Full leverages the complementary advantages of all strategies, achieving a performance improvement that exceeds the sum of individual contributions, thereby validating the rationality and effectiveness of the multi-strategy fusion design. Furthermore, the enhancement strategies demonstrate consistent performance gains across both unimodal and multimodal functions, indicating that the IDBO framework possesses strong adaptability and robustness across diverse optimization problems.

Conclusion and outlook

To address the limitations of the traditional K-means clustering algorithm, which is highly sensitive to the selection of initial cluster centers, and the Dung Beetle Optimization (DBO) algorithm, which suffers from low optimization accuracy and a tendency to become trapped in local optima in complex optimization problems, this study proposes an improved version of DBO, termed the Improved Dung Beetle Optimization (IDBO) algorithm.

Firstly, Latin Hypercube Sampling (LHS) is employed to initialize the population, enhancing the uniformity and diversity of individuals in the search space and effectively preventing premature convergence during the initial stages of the search. Secondly, a hybrid position updating strategy combining a nonlinear decision factor and a competition mechanism is introduced to dynamically balance global exploration and local exploitation during the iteration process, improving the search flexibility and efficiency of the population at different stages. Finally, a local exploitation operator is constructed by integrating the Cauchy inverse cumulative distribution operator and the tangent flight operator, allowing dynamic fine-tuning of the optimal individuals and further enhancing local search accuracy and stability in the late stages of convergence, thereby significantly improving the overall performance of the algorithm.

Comprehensive experiments on 12 classic benchmark test functions, the Wilcoxon Rank-Sum Test, and the CEC2021 benchmark suite demonstrate that IDBO significantly outperforms the original DBO in terms of convergence speed, global optimization ability, and avoidance of local optima. Building on this, the IDBO algorithm was applied to optimize K-means clustering, forming the IDBO-K image segmentation model, which was tested on various representative images. Results indicate that the proposed method significantly enhances segmentation precision and edge preservation, enabling accurate segmentation of images with complex textures and lighting variations, thereby validating the effectiveness and robustness of the algorithm in image segmentation tasks.

However, despite its demonstrated effectiveness, the IDBO-K model may encounter challenges when applied to large-scale or real-time image segmentation tasks. The iterative nature of IDBO, combined with computationally intensive position updating and local exploitation strategies, may lead to increased runtime and higher computational resource demands, limiting its efficiency in time-sensitive applications or on very high-resolution images.

Future research will focus on integrating machine learning and deep learning techniques to further improve the DBO algorithm, aiming to enhance its adaptability, generalization, and computational efficiency in high-dimensional and large-scale scenarios. Specifically, optimizing parallelization strategies, reducing iteration costs, and incorporating predictive models could expand the potential applications of IDBO in real-time image processing, feature extraction, and pattern recognition while maintaining segmentation accuracy and robustness.

Data availability

The data used in this study are derived from the BSDS500 dataset, which is publicly available under the terms of the Creative Commons Attribution 4.0 International License (CC BY 4.0). The dataset can be accessed through the following GitHub repository: https://github.com/BIDS/BSDS500. This dataset includes a variety of high-resolution images and ground truth annotations for image segmentation tasks. The images are used in accordance with the CC BY 4.0 license, which allows for sharing, adaptation, and redistribution with appropriate attribution.

References

Gao, Y. et al. Medical image segmentation: A comprehensive review of deep Learning-Based methods. Tomography 11, 52 (2025).

Wiley, V. & Lucas, T. Computer vision and image processing: a paper review. Int. J. Artif. Intell. Res. 2, 29–36 (2018).

Ashour, L. S. et al. Non-overlapping Patch-Based Pre-trained CNN for breast cancer classification. Iraqi J. Comput. Sci. Math. 6, 29 (2025).

Chen, E., Ting, H. N., Chuah, J. H. & Zhao, J. Segmentation of overlapping cells in cervical cytology images: A survey. IEEE Access. 12, 114170–114189 (2024).

Obuchowicz, R., Strzelecki, M. & Piórkowski, A. MDPI, Vol. 16 1870 (2024).

Xu, Y. et al. Advances in medical image segmentation: A comprehensive review of traditional, deep learning and hybrid approaches. Bioengineering 11, 1034 (2024).

Li, Y., Song, X., Tu, Y. & Liu, M. GAPBAS: Genetic algorithm-based privacy budget allocation strategy in differential privacy K-means clustering algorithm. Computers Secur. 139, 103697 (2024).

Liao, J., Wu, X., Wu, Y. & Shu, J. K-NNDP: K-means algorithm based on nearest neighbor density peak optimization and outlier removal. Knowl. Based Syst. 294, 111742 (2024).

Chai, X. et al. Image segmentation based on the optimized K-Means algorithm with the improved hybrid grey Wolf optimization: application in ore particle size detection. Sensors 25, 2785 (2025).

Zhao, J., Bao, Y., Li, D. & Guan, X. An improved K-Means algorithm based on contour similarity. Mathematics 12, 2211 (2024).

Premkumar, M. et al. Augmented weighted K-means grey Wolf optimizer: an enhanced metaheuristic algorithm for data clustering problems. Sci. Rep. 14, 5434 (2024).

Das, A., Namtirtha, A. & Dutta, A. Lévy–Cauchy arithmetic optimization algorithm combined with rough K-means for image segmentation. Appl. Soft Comput. 140, 110268 (2023).

Song, Y., Peng, G., Sun, D. & Xie, X. Active contours driven by Gaussian function and adaptive-scale local correntropy-based K-means clustering for fast image segmentation. Sig. Process. 174, 107625 (2020).

Belete, B. A., Gelmecha, D. J. & Singh, R. S. Enhancing colour image encryption through parameters optimization of memristive hyperchaotic system with CPSO algorithm and LSAIM. Imaging Sci. J. 73 (6), 681–701 (2025).

Xue, J. & Shen, B. Dung beetle optimizer: A new meta-heuristic algorithm for global optimization. J. Supercomputing. 79, 7305–7336 (2023).

Lu, M., Wang, Y., Qiu, S., Li, N. & Shi, Y. in Proceedings of the 2024 3rd International Symposium on Control Engineering and Robotics. 498–504.

Yong, X., Bicong, S. & Yi, Z. Application of an improved pelican optimization algorithm based on comprehensive strategy in PV parameter identification. Sci. Rep. 15, 27931 (2025).

Mehmood, K. et al. Design of chaos induced Aquila optimizer for parameter estimation of electro-hydraulic control system. Comput. Model. Eng. Sci. 143, 1809–1841 (2025).

Mehmood, K. et al. Design of chaotic young’s double Slit experiment optimization heuristics for identification of nonlinear muscle model with key term separation. Chaos Solitons Fractals. 189, 115636 (2024).

Zheng, X., Lei, Q., Yao, R., Gong, Y. & Yin, Q. Image segmentation based on adaptive K-means algorithm. EURASIP Journal on Image and Video Processing 1–10 (2018).

Yao, H., Duan, Q., Li, D. & Wang, J. An improved K-means clustering algorithm for fish image segmentation. Math. Comput. Model. 58, 790–798 (2013).

Ruban, I. et al. Methods of Uavs images segmentation based on k-means and a genetic algorithm. Eastern-European J. Enterp. Technologies 118 (2022).

Mao, Z. et al. A multi-strategy enhanced Dung beetle algorithm for solving real-world engineering problems. Artif. Intell. Rev. 58, 253 (2025).

Fang, R. et al. Dung beetle optimization algorithm based on improved Multi-Strategy fusion. Electronics 14, 197 (2025).

Ma, Z., Liu, S. & Xu, L. Enhancing dung beetle optimization algorithm with hybrid multi-strategy and its engineering applications. Neural Comput. Applic. 37, 19123–19175 (2025).

Liu, L. et al. A full-coverage path planning method for an orchard mower based on the Dung beetle optimization algorithm. Agriculture 14, 865 (2024).

Huang, B., Yang, G., Lei, J. & Wang, X. A partitioned conditioned Latin hypercube sampling method considering Spatial heterogeneity in digital soil mapping. Sci. Rep. 15, 12851 (2025).

Pandey, N. K. & Satyam, N. Role of finer particles in rheological characterization of debris flows: insights from Western Himalayas. Indian Geotech. J. 55, 2308–2324 (2025).

Phromphan, P., Suvisuthikasame, J., Kaewmongkol, M., Chanpichitwanich, W. & Sleesongsom, S. A new Latin hypercube sampling with maximum diversity factor for reliability-based design optimization of HLM. Symmetry 16, 901 (2024).

Al-Daraiseh, A. et al. Cryptographic grade chaotic random number generator based on tent-map. J. Sens. Actuator Networks. 12, 73 (2023).

Chen, Q. et al. Spontaneous coal combustion temperature prediction based on an improved grey Wolf optimizer-gated recurrent unit model. Energy 314, 133980 (2025).

Motwakel, A. et al. Chaotic mapping Lion optimization Algorithm-Based node localization approach for wireless sensor networks. Sensors 23, 8699 (2023).

Deng, W. et al. Multi-strategy quantum differential evolution algorithm with cooperative co-evolution and hybrid search for capacitated vehicle routing. IEEE Trans. Intell. Transp. Syst. 26, 18460–18470 (2025).

Khoshaba, F. S., Kareem, S. W. & Hawezi, R. S. Swallow search algorithm (SWSO): A swarm intelligence optimization approach inspired by swallow bird behavior. Computers 14, 345 (2025).

Munteanu, A. Words, Meaning and Vocabulary: An introduction to modern English lexicology (2025).

Givhan, C. A. Design and analysis of a dual antenna vector tracking software defined receiver for robust navigation and mitigation of spoofing threats. (2025).

Hemayat, S., Baharlou, S. M., Sergienko, A. & Ndao, A. Efficient Inverse Design of Plasmonic Patch Nanoantennas using Deep Learning. arXiv preprint arXiv:2407.03607 (2024).

Etesami, R., Madadi, M. & Keynia, F. A new improved fruit fly optimization algorithm based on particle swarm optimization algorithm for function optimization problems. Journal Mahani Math. Res. Center 13 (2024).

Cuevas, E., González-Sánchez, O. A., Delgado-Castañeda, N., Zaldívar, D. & Rodríguez-Vazquez, A. A novel metaheuristic algorithm using structured population and virtual particles. J. Supercomputing. 81, 1–90 (2025).

Thapliyal, S. & Kumar, N. Comprehensive performance metric for bio-inspired optimizers: a generation-wise population convergence approach toward global optimality. Iran J. Comput. Sci. 8, 843–891 (2025).

Jia, H., Wen, Q., Wang, Y. & Mirjalili, S. Catch fish optimization algorithm: a new human behavior algorithm for solving clustering problems. Cluster Comput. 27, 13295–13332 (2024).

Wang, X., Wei, P. & Li, Y. Enhanced secretary bird optimization algorithm with multi-strategy fusion and Cauchy–Gaussian crossover. Sci. Rep. 15, 23163 (2025).

Escobar-Cuevas, H., Cuevas, E., Avila, K. & Avalos, O. An advanced initialization technique for metaheuristic optimization: a fusion of Latin hypercube sampling and evolutionary behaviors. Comput. Appl. Math. 43, 234 (2024).

Wang, Z. et al. Improved Latin hypercube sampling initialization-based Whale optimization algorithm for COVID-19 X-ray multi-threshold image segmentation. Sci. Rep. 14, 13239 (2024).

Wu, L., Wu, J. & Wang, T. The improved grasshopper optimization algorithm with cauchy mutation strategy and random weight operator for solving optimization problems. Evol. Intel. 17, 1751–1781 (2024).

Gu, W. & Wang, F. A multi-strategy improved Dung beetle optimisation algorithm and its application. Cluster Comput. 28, 49 (2025).

Lyu, L., Jiang, H. & Yang, F. Improved Dung beetle optimizer algorithm with multi-strategy for global optimization and UAV 3D path planning. IEEE Access. 12, 69240–69257 (2024).

Jia, H. et al. Improved artificial rabbits algorithm for global optimization and multi-level thresholding color image segmentation. Artif. Intell. Rev. 58, 55 (2024).

Zhang, B. et al. Denoising Swin transformer and perceptual peak signal-to-noise ratio for low-dose CT image denoising. Measurement 227, 114303 (2024).

OuYanga, K. et al. Escape.

Tu, K. & Cheng, J. Enhanced Dung beetle optimization algorithm and its application in 3D UAV path planning. Electron. Res. Archive 33, 2618–2667 (2025).

Hu, Y., Zhu, L. & Zhao, H. Multistage threshold segmentation method based on improved electric eel foraging optimization. Mathematics 13, 1212 (2025).

Funding

This work was supported by the National Natural Science Foundation of China (Grant Nos. 52261044 and 52074283) and the National Key Research and Development Program of China (Grant No. 2020YFA0711802).

Author information

Contributions

Author Contributions: Ning Li: Writing – review & editing, Validation, Supervision, Project administration, Methodology, Investigation, Data curation, Conceptualization. Yan Luo: Writing – review & editing, Software, Methodology, Formal analysis, Data curation, Conceptualization. Zhiqiang Feng: Writing – review & editing, Writing – original draft, Validation, Software, Funding acquisition, Data curation. Hu Qu: Formal analysis, Methodology, Investigation, Data curation, Conceptualization. Zixuan Feng: Methodology, Validation, Software.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Li, N., Luo, Y., Feng, Z. et al. Optimized K-means algorithm for image segmentation based on improved dung beetle algorithm. Sci Rep 16, 11187 (2026). https://doi.org/10.1038/s41598-026-38438-2

DOI: https://doi.org/10.1038/s41598-026-38438-2