Abstract

Accurate real-time dense mapping with precise scale and localization is crucial for autonomous robot navigation, particularly in dynamic environments. However, existing methods often rely on a single sensor type and lack robustness to dynamic scenes. To address these challenges, a generalized framework for real-time, scale-aware dense mapping with dynamic robustness is proposed in this paper, referred to as SDMFusion. SDMFusion supports monocular, stereo, and RGB-D cameras and is built upon the ORB-SLAM3 system. Three core modules are integrated into SDMFusion. The scale-depth optimization module recovers the absolute scale for monocular and refines the depth maps. The dynamic feature rejection module segments dynamic objects, combining geometric constraints and moving consistency checks to facilitate dynamic feature rejection. The real-time anti-dynamic reconstruction module generates high-quality dense maps of static regions using optimized depth, dynamic masks, and camera poses. Extensive experiments on KITTI, TUM RGB-D, BONN RGB-D, and real-world datasets validate the effectiveness of our approach. The results demonstrate that SDMFusion achieves superior overall performance in accuracy and robustness compared to ORB-SLAM3 and other advanced dynamic SLAM methods. Furthermore, our method effectively eliminates dynamic regions from the dense maps.

Similar content being viewed by others

Introduction

Simultaneous Localization and Mapping (SLAM) serves as a fundamental technique for autonomous robot navigation. SLAM systems are categorized into LiDAR and visual variants based on the type of sensor used. Compared with LiDAR, visual SLAM provides advantages such as compact sensors, lower cost, and richer texture information. These features make it well-suited for applications in low-cost robotics, unmanned aerial vehicles (UAVs), and augmented reality.

Despite these benefits, visual SLAM faces several challenges in real-world scenarios. First, to meet lightweight and low-cost requirements, many consumer platforms are equipped with only monocular cameras. This prevents the recovery of absolute scale and limits localization accuracy in GNSS-denied environments. Second, dynamic objects often interfere with feature extraction and matching, causing pose estimation drift. Third, dense maps are crucial for advanced robotic functions, such as obstacle avoidance and autonomous navigation. However, most visual SLAM systems produce only sparse maps, which are inadequate for such tasks.

Various approaches have been proposed to address these issues. Monocular depth estimation using deep learning techniques has been proposed as a solution to scale ambiguity. These methods enable absolute scale recovery and dense reconstruction without the need for external sensors1,2,3,4. However, most of them assume static scenes, ignore dynamic objects, and typically support only monocular input. In parallel, multiple strategies5,6,7,8,9,10,11,12,13,14 have been developed to improve robustness in dynamic environments by eliminating dynamic regions. Yet, most existing methods are limited to RGB-D inputs. Even systems that support multiple input types, such as DynaSLAM11, fail to recover absolute scale or perform dense mapping for monocular.

To address these challenges, this paper presents SDMFusion, a comprehensive framework enabling real-time, scale-aware dense mapping with enhanced dynamic robustness. It is compatible with monocular, stereo, and RGB-D cameras. The primary contributions of this study are outlined as follows:

-

(1)

A scale-depth optimization method that leverages DepthAnythingV2 to recover precise absolute scale for monocular and refine depth maps for stereo and RGB-D.

-

(2)

A dynamic feature rejection strategy that incorporates YOLO11s-seg, geometric constraints, and a feature-level moving consistency check to accurately reject dynamic features.

-

(3)

A generalized framework named SDMFusion that enables high-quality, real-time, scale-aware dense mapping with dynamic robustness across all camera types.

The structure of this paper is as follows. Section 2 provides a review of related work. Section 3 introduces the proposed methodology. Section 4 presents the analysis of experimental results, and Sect. 5 offers the concluding remarks.

Related work

Monocular depth estimation for SLAM

Scale uncertainty is a common issue in monocular SLAM. To address this, various studies15,16,17 have introduced auxiliary sensors such as IMUs, stereo cameras, or depth sensors to obtain absolute scale. However, for cost and size considerations, recent research has increasingly focused on recovering absolute scale using monocular images alone. Among these efforts, monocular depth estimation has become a key technique.

Early works, such as CNN-SLAM1, introduced CNN-predicted dense depth maps into monocular SLAM. These predictions were combined with SLAM measurements to enhance performance, particularly in low-texture regions. In CNN-SVO18, predicted depth initialized the feature depth variance and mean during mapping. DVSO19 incorporated a two-stage optimization framework and fused predicted depth as virtual stereo measurements into DSO20. Pose, uncertainty, and predicted depth were incorporated into D3VO21 to improve both tracking and optimization. Several other methods further explored this direction. Steenbeek et al.2 combined SLAM with CNN-based depth estimation to achieve real-time dense mapping and scale calibration on UAVs. Yin et al.22 employed deep convolutional neural fields for scale recovery and depth estimation. Tiwari et al.23 explored joint optimization of depth and pose. Sun et al.24 enhanced visual odometry using monocular depth estimation, but provided only relative depth. Luo et al.3 fused monocular SLAM with an adaptive online depth predictor to improve heterogeneous scene reconstruction. DRM-SLAM4 proposed a depth fusion scheme using CNNs for robust depth prediction and absolute scale recovery.

Despite promising results, many of these monocular depth estimation methods exhibit limited absolute accuracy, especially in complex scenes. To improve this, recent models25,26,27,28,29,30,31,32,33,34,35,36,37,38,39,40,41 have used techniques such as multi-scale modeling, ranked regression, domain adaptation, diffusion mechanisms, and multi-tasking. Among them, DepthAnythingV241 has emerged as a leading model due to high accuracy, strong generalization ability, and inference efficiency. In this work, a scale-depth optimization module is constructed based on DepthAnythingV2 to recover absolute scale and refine depth. Moreover, most existing methods still assume static scenes, leading to degraded performance in dynamic environments.

Dynamic SLAM

Conventional SLAM methods typically assume a static environment. However, in real-world settings with pedestrians, vehicles, or animals, this assumption is often violated. Dynamic objects contaminate feature extraction and reduce localization accuracy. Thus, precise identification and removal of dynamic regions are essential for robust and accurate SLAM.

Dynamic SLAM techniques are generally divided into geometric and deep learning-based approaches. Geometric methods identify dynamic features without deep learning. Some5,7 utilize moving consistency, recognizing dynamic features by evaluating deviations from camera motion. However, these rely on static assumptions during pose estimation and require accurate motion models. This leads to a well-known “chicken-or-egg” problem8. Besides, Sun et al.42 used the homography matrix between frames alongside RANSAC for dynamic object segmentation. However, when dynamic objects dominate the frame, static features are often mistakenly removed. To address this, DM-SLAM43 proposed DLRSAC, assuming that static features are spatially well-distributed, applying a grid-based model to distinguish dynamics. Similarly, Sun et al.44 created a foreground motion model to separate dynamic and static features. ReFusion6 employed alignment residuals to detect dynamic regions and perform dense reconstruction in such environments.

Dynamic SLAM performance has markedly improved with the rise of deep learning. Compared with traditional techniques, deep learning models offer greater scene adaptability and more accurate dynamic object extraction. For example, DS-SLAM10 integrated moving consistency check and SegNet45, enabling dynamic feature removal. Although effective for highly dynamic objects, its performance depends heavily on motion detection and can mistakenly eliminate static features. DynaSLAM11 employed Mask-RCNN46 and multi-view geometry for dynamic feature identification and removal. It performs well in complex scenarios but is computationally intensive. RDS-SLAM47 introduced parallel semantic and optimization threads into ORB-SLAM317, supporting SegNet and Mask-RCNN to filter dynamics. Although near real-time performance is achieved, the localization accuracy remains relatively low. Anebarassane et al.48 integrated YOLOv8-seg49 into ORB-SLAM3, optimizing segmentation for real-time operation. Detect-SLAM12 used SSD50 for object detection with ORB-SLAM216, processing only keyframes for efficiency. DO-SLAM13 incorporated YOLOv549 and polar geometric constraints into ORB-SLAM2. YOLO-SLAM14 enhanced ORB-SLAM2 using a lightweight YOLOv349 variant and new geometric constraints. Liu et al.51 combined YOLOv5 and geometric constraints within the ORB-SLAM2 framework to mitigate the impact of dynamic objects. Liu et al.52 incorporated a depth-aware point-line attentional graph neural network and RGB-D sensing into ORB-SLAM3 to enhance robustness against dynamic objects and low-texture regions. Despite the promising results of the above methods, most are centered around RGB-D inputs. Some support monocular or stereo but lack absolute scale recovery and dense reconstruction for monocular, limiting their practical application.

Methods

Framework

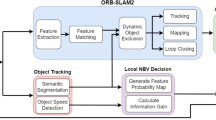

SDMFusion is a scale-aware dense SLAM framework with dynamic robustness built upon ORB-SLAM317. The system incorporates three modules: the scale-depth optimization module, the dynamic feature rejection module, and the real-time anti-dynamic dense reconstruction module. As illustrated in Fig. 1, the system adopts a modular design for flexible integration. Firstly, in the scale-depth optimization module, DepthAnythingV241 is used to generate dense depth maps from RGB or grayscale images. For monocular, these depth maps provide the absolute scale necessary for accurate localization and dense reconstruction. For RGB-D and stereo, the raw depth data can be refined and completed. Secondly, the dynamic feature rejection module employs YOLO11s-seg49 for real-time instance segmentation. Dynamic masks are then generated and passed to the tracking module based on object category and spatial relationships. Based on the masks, ORB features53 are categorized into static and dynamic sets. The dynamic features are then evaluated through a moving consistency check, and only truly dynamic features are rejected. Finally, the real-time anti-dynamic dense reconstruction module constructs dense maps using keyframes’ optimized depth maps, camera poses, and dynamic masks. Detailed technical aspects of each module are described below.

The architecture of SDMFusion.

Scale depth optimization

This module provides an absolute scale for monocular and refines depth maps for RGB-D and stereo. The module is built on DepthAnythingV241, which enhances accuracy and robustness using synthetic data, larger model capacity, and large-scale pseudo-labeled images.

The original implementation of DepthAnythingV2 is based on the PyTorch framework. To ensure real-time operation, the pre-trained model is optimized with NVIDIA TensorRT (TensorRT 8.6, https://github.com/NVIDIA/TensorRT). Model inference has been re-implemented using TensorRT’s inference engine. We build the TensorRT engine for the DepthAnythingV2 pre-trained model following open-source implementations54. Specifically, we first export the Open Neural Network Exchange (ONNX) model from the official DepthAnythingV2 codebase, then compile it into a TensorRT engine using the open-source framework54. The resulting TensorRT engine conducts inference using FP16 precision with an input resolution of 518 × 518 pixels. In this study, to adapt to varying scenarios, both indoor and outdoor pre-trained metric models are converted and deployed separately.

DepthAnythingV2 processes RGB or grayscale images and outputs corresponding depth maps. In monocular mode, the predicted depth is used directly for absolute scale recovery and dense reconstruction. The method for recovering the absolute scale is intentionally simple: the predicted depth map is treated as pseudo-depth for RGB-D initialization. Specifically, for each input frame, the system first predicts a depth map and generates a corresponding segmentation mask. Both are then combined with the RGB image and passed to the RGB-D tracking function for map initialization and subsequent pose optimization. The RGB-D tracking function first converts the RGB image into a grayscale image and then extracts ORB features from it. Subsequently, dynamic features are filtered out by combining the segmentation mask with moving consistency check. Next, the remaining static features are subsequently rectified. Using the predicted depth map, the horizontal coordinate of the matching feature in the hypothetical right image is computed for each feature. These features are then assigned to an image grid. Subsequently, map initialization is performed. For each static feature, the corresponding 3D world coordinates are obtained by back-projecting the feature using its associated predicted depth value, as defined in Eq. (3). This process constructs the initial 3D map. At this point, absolute scale recovery for monocular SLAM has been achieved based on the predicted depth map.

Comparison of raw and refined depth maps.

In stereo mode, the Semi-Global Block Matching (SGBM)55 algorithm is first used for generating the initial depth image. A global scale factor \(\:S\) is then computed as the sum of predicted depth divided by the sum of initial depth for valid regions, as defined in Eq. (1). The valid regions are defined as the intersection of valid pixels present in both the predicted and initial depth maps. For stereo outdoor scenes, the valid pixels are defined as 3 m to 50 m and 0.05 m to 80 m for initial and predicted depth maps, respectively. Invalid regions in the initial depth map are subsequently filled by multiplying the predicted depth values by the global scale factor \(\:S\), as defined in Eq. (2). In RGB-D mode, the sensor provides raw depth maps, which are often noisy or incomplete. The same refinement method is applied as in stereo mode. For RGB-D indoor scenes, the valid pixels are defined as 0.05 m to 8 m and 0.05 m to 20 m for raw and predicted depth maps, respectively. Assuming that the initial, predicted, and refined depth maps are denoted as \(\:{D}_{init}\), \(\:{D}_{pred}\), and \(\:{D}_{refined}\), respectively, then

Where \(\:S\) represents the scale factor, \(\:sum\left(\right)\) refers to the accumulation, \(\:valid\left(\right)\) denotes the extraction of valid values, [] stands for the regional indexing, and \(\:p\) denotes a pixel coordinate. Figure 2 shows that the refined depth is more complete and smoother, with clearer details.

Dynamic feature rejection

YOLO49 has gained widespread use in segmentation and detection due to its efficiency and high accuracy. For handling dynamic objects efficiently, YOLO11s-seg is introduced. The open-source YOLO11s-seg pre-trained model and configuration were directly utilized, which was trained on the COCO-Seg dataset, including 80 classes. Specifically, the model was configured with an input resolution of 640 × 640 pixels, a confidence threshold of 0.25, an IoU threshold of 0.7, and non-maximum suppression (NMS) disabled. With low computational overhead, YOLO11s-seg ensures high accuracy while meeting the real-time processing needs of SLAM systems.

To align with dynamic SLAM, the original category labels of YOLO11 have been reclassified according to dynamic attributes. Most existing methods tend to concentrate only on objects that are intrinsically dynamic, like pedestrians and vehicles. However, objects like chairs and keyboards, which are potentially dynamic, can also be displaced by external forces. If such objects are not processed, the robustness and accuracy may be negatively affected. Therefore, a three-level dynamic attribute classification mechanism is proposed in this paper to achieve accurate dynamic feature rejection. As shown in Table 1, objects are categorized into static, potentially dynamic, and dynamic based on dynamic attributes. Dynamic objects are defined as those capable of active motion, including people, various cars, and animals. Potentially dynamic objects are those that can be influenced by dynamic objects to move, such as chairs, laptops, and cell phones. Static objects are those that generally remain stationary, like refrigerators, microwaves, and toilets. After the initial classification, the potentially dynamic objects are further determined based on their spatial positions. Any potentially dynamic object located near dynamics is classified as dynamic, while others are treated as static. Based on this rule, the final dynamic mask is generated to identify the dynamic regions. The tracking thread then receives this mask for the removal of dynamic features.

After masks are passed to the tracking thread, dynamic features are further filtered. The core mechanism is a feature-level moving consistency check method. The check process consists of five main steps. Firstly, the correspondence of features between frames is calculated by Lucas Kanade (LK) optical flow pyramid matching. The pyramid level is set to 5. Secondly, matching pairs at the edges or in a 3 × 3 neighborhood with excessively large pixel differences are eliminated. Specifically, features located within 5 pixels of the edges, or the sum of absolute pixel differences in a 3 × 3 neighborhood surpasses 2120, are designated as outliers. Thirdly, the RANSAC algorithm is applied to estimate the fundamental matrix using inlier matching pairs, configured with a distance threshold of 0.1 pixels and a confidence of 0.99. Fourthly, based on the matrix, the epipolar line is generated. Finally, if the distance of a feature from the epipolar line exceeds 1, it is classified as dynamic. Unlike region-level rejection, this method allows retention of static features within potentially dynamic or dynamic objects. For example, if a person turns head, the head may exhibit motion, while the torso remains static. Applying indiscriminate elimination to the person would discard numerous stable features, degrading tracking accuracy and robustness. Therefore, to only reject features exhibiting motion, the moving consistency of each feature within dynamic regions is checked individually. This strategy effectively suppresses dynamic interference while maximizing both the quantity and quality of static features. However, dynamic objects may dominate most of images, causing the fundamental matrix estimation to fail. In such cases, our algorithm directly rejects all dynamic features based on the dynamic masks.

Figure 3 presents a comparison of feature extraction, validating the effectiveness and accuracy of the proposed approach. And YOLO11-SLAM3 denotes that ORB-SLAM3 incorporates YOLO11s-seg-based regional-level dynamic feature rejection. It can be observed that the original ORB-SLAM3 retains lots of dynamic features. The YOLO11-SLAM3 removes dynamic features but also erroneously eliminates stable features within dynamic objects. In contrast, the proposed method accurately distinguishes between dynamic and static features. Static features, such as those in the static torso of the person on the left, are successfully preserved. Only truly dynamic features, such as those in the human on the right and the head of the person on the left, are rejected.

The features of the three different methods.

Real-time anti-dynamic dense reconstruction

Most visual SLAM methods produce only sparse maps, which are insufficient for complex applications like obstacle avoidance or navigation. Moreover, repeated observations of moving objects across multiple frames in dynamic environments often result in ghosting or artifacts. To address these issues, a static region-driven dense mapping mechanism is proposed in this paper. Dynamic pixels are excluded using dynamic masks before reconstruction, and only the static parts are reconstructed.

The reconstruction in the module is carried out as outlined below. Firstly, only keyframes are used for reconstruction to ensure map integrity and to control data size. Static pixel filtering is then applied to each keyframe. In each keyframe, pixels labeled as static are retained. Subsequently, 3D projection and reconstruction are carried out based on the static pixels. Using the extrinsic parameters, color images, and depth maps, static pixels are transformed into a point cloud. The point cloud is then integrated into the global map. By merging point clouds from all keyframes, a complete reconstruction of the static environment can be obtained. Finally, voxel filtering is applied to the global map to reduce data redundancy, preserve structures, and improve mapping efficiency. The back-projection of static pixels to the point cloud is defined in Eq. (3).

Where \(\:\left(u,v\right)\) represents the pixel coordinates, \(\:D\left(u,v\right)\) denotes the depth at pixel \(\:\left(u,v\right)\), \(\:{X}_{w}\) stands for the world coordinates corresponding to the pixel \(\:\left(u,v\right)\), \(\:K\) denotes the camera intrinsic matrix, and \(\:{T}_{wc}\) denotes the camera extrinsic matrix. \(\:K\) is a 3 × 3 matrix that describes the camera’s focal lengths and principal point coordinates. Combined with the depth value, it enables the transformation of pixel coordinates into the camera coordinate system. \(\:{T}_{wc}\) is a 4 × 4 homogeneous transformation matrix, composed of a 3 × 3 rotation matrix and a 3 × 1 translation vector, which represents the transformation from the camera coordinate system to the world coordinate system. The parameters for our experimental setup were as follows. For the outdoor KITTI dataset, the point density was established at 10 cm. For the indoor BONN RGB-D and TUM RGB-D datasets, a density of 1 cm was employed. Consequently, the leaf size of the voxel filter was configured to 10 cm and 1 cm for the outdoor and indoor scenarios, respectively. The values for MeanK and the standard deviation multiplier threshold were set to 50 and 1.

Results

Implementation and experiment setup

Datasets and metrics

Three representative datasets are selected in this paper for evaluation. The first is KITTI56, a low-dynamic outdoor dataset, including natural dynamic conditions such as vehicle movement. The other two datasets are highly dynamic indoor datasets, TUM RGB-D57 and BONN RGB-D6. They contain a variety of scenarios involving human activity, occlusions, and non-rigid body changes, posing greater challenges. The Absolute Trajectory Error (ATE) and Relative Pose Error (RPE) are employed as primary metrics to assess the accuracy. The RPE comprises both translational and rotational components. Therefore, the ATE, translational RPE, and rotational RPE Root Mean Square Error (RMSE) are used to further measure the trajectory’s accuracy and stability. The ATE RMSE is defined in Eq. (4).

Where \(\:N\) represents the number of frames, \(\:{T}_{gt,i}\) represents the ground truth pose of the \(\:i\)-th frame, \(\:{T}_{est,i}\) denotes the estimated pose of the \(\:i\)-th frame, and \(\:trans\)() refers to taking the translation part of the pose. The translational RPE and rotational RPE RMSE are defined in Eqs. (5) and (6).

Where \(\:{P}_{i}\) denotes the estimated pose at timestamp \(\:i\), \(\:{Q}_{i}\) represents the ground truth pose at timestamp \(\:i\), \(\:\varDelta\:t\) denotes the time interval, \(\:trans\)() refers to taking the translational component of the pose, and \(\:angle\)() refers to taking the rotational component of the pose. The TUM evaluation tool57 (https://cvg.cit.tum.de/data/datasets/rgbd-dataset/tools) was used for evaluations. In terms of dense maps, since ground truth dense maps are unavailable in public datasets, qualitative visual comparisons are used to evaluate reconstruction quality.

Implementation details

All experiments were performed on a desktop computer with an Intel Core i9-14900KF CPU, an NVIDIA RTX 4080 SUPER GPU and 32GB memory. Timing experiments were also conducted on a Jetson AGX Orin. The system was built on the ORB-SLAM317, using both C + + and Python. Our method was compared against four representative SLAM approaches: ORB-SLAM3, DS-SLAM10, DynaSLAM11, and RDS-SLAM47. For ORB-SLAM3, DS-SLAM, DynaSLAM, and our method, we executed five independent runs for each sequence, and then calculated both the mean and standard deviation (S.D.) of RMSE. We also evaluated the robustness of each method by calculating the success rates. A run was considered successful if the trajectory data were successfully stored and the corresponding evaluation results were output. For RDS-SLAM, we directly cited the results from the publication. Note that the RDS-SLAM paper conducted experiments on a GeForce RTX 2080Ti GPU, while our experiments were performed on an NVIDIA RTX 4080 SUPER GPU. This difference limits a direct comparison with the results reported in their paper, mainly due to variations in processing speed. RDS-SLAM includes a semantic thread and a semantic-based optimization thread, which run in parallel with other threads. Consequently, the tracking thread does not need to wait for semantic information. This design can improve tracking speed. However, it cannot guarantee that semantic information is available for every frame. In general, higher computational performance enables the acquisition of more semantic information. If RDS-SLAM were executed in our environment, it would likely obtain more semantic information and achieve higher tracking accuracy. Unfortunately, we were unable to successfully deploy RDS-SLAM on our system. Therefore, we can only cite the results from their original publication. The mean and S.D. are shown in this paper, which indicate the robustness and stability of the system. For the mean values, the best results are highlighted in bold in the tables. Notably, all trajectories are evaluated without post-hoc scale correction to better reflect each method’s scale recovery capabilities. Avoiding post-hoc scale correction is essential to preserve each algorithm’s inherent scale estimation, rather than aligning it to the ground truth. This ensures that the scale-aware capabilities of different methods can be fairly evaluated.

KITTI dataset

The KITTI dataset56 includes stereo sequences captured by in-vehicle equipment in outdoor environments. The setup is characterized by a baseline of 54 cm, 10 Hz operating frequency, and 1241 × 376 resolution. Dynamic elements are included in the sequences but occupy a small portion of frames. Tables 2, 3 and 4 present the quantitative comparison results of ORB-SLAM317, DynaSLAM11, and our method for 11 sequences with publicly available ground truth. All results were obtained through our own evaluations. To adapt to the TUM evaluation tool57, the ground truth and timestamp files were converted to TUM format.

As we can see from Tables 2, 3 and 4, for monocular, SDMFusion significantly reduces ATE and Translational RPE RMSE across all sequences. Additionally, in sequence 05, containing numerous dynamic objects, SDMFusion successfully rejects dynamic features and avoids trajectory drift. For Rotational RPE RMSE, ORB-SLAM3, DynaSLAM, and our method, each has its respective strengths and limitations. These improvements are primarily attributed to the scale-depth optimization and dynamic feature rejection strategies. In terms of Stereo ATE and Translational RPE RMSE, ORB-SLAM3, DynaSLAM, and SDMFusion achieved the best performance on 1, 5, and 5 sequences, respectively. For Rotational RPE RMSE, DynaSLAM and SDMFusion performed best on 2 and 9 sequences, respectively. This validates the efficacy and precision of the dynamic feature rejection method proposed. In terms of success rates, only the monocular configuration of DynaSLAM failed once on sequence 02, resulting in an 80% success rate. All other configurations achieved a 100% success rate. In summary, while both our method and DynaSLAM demonstrate high accuracy, the proposed approach exhibits significantly superior robustness. Furthermore, Fig. 4 illustrates the ATE trajectory comparison results between SDMFusion and ORB-SLAM3 across four KITTI sequences.

ATE for ORB-SLAM3 and SDMFusion on four sequences of the KITTI dataset.

Dense maps for ORB-SLAM3 and SDMFusion on three sequences of the KITTI dataset.

Figure 4 shows that for stereo, SDMFusion provides slight improvements over ORB-SLAM3 in low-dynamic outdoor environments. However, for sequence 05, our method achieves a more significant improvement because of more dynamic elements. In the monocular case, our method yields clearly visible improvements. Furthermore, Fig. 5 shows the dense reconstruction results using both our method and ORB-SLAM3. To ensure a fair comparison, the SGBM55 stereo dense reconstruction method was incorporated into ORB-SLAM3. The results indicate that SDMFusion can accurately remove dynamic regions, preserving only static regions for reconstruction. Consequently, our maps are denser and more complete.

TUM RGB-D dataset

The TUM RGB-D Dataset57 is a popular indoor dataset in dynamic SLAM, with all sequences recorded at 640 × 480 resolution. In this paper, the dynamic sequences are chosen for experiments. In this section, our method is compared with ORB-SLAM317 and several advanced dynamic SLAM methods, including DS-SLAM10, DynaSLAM11, and RDS-SLAM47. Tables 5, 6 and 7 shows the quantitative comparison results. The results of ORB-SLAM3, DS-SLAM and DynaSLAM are obtained through our evaluations, and the results of RDS-SLAM are cited from the paper.

The results show that in the monocular setting, for ATE and Translational RPE RMSE, SDMFusion achieves the best performance on 8 sequences. DynaSLAM’s best performance on the fr3_sitting_rpy sequence is misleading. It stems from an incomplete trajectory, as evidenced by a keyframe file only one-fifth the size of ORB-SLAM3’s or our method’s. This indicates tracking failures, further supported by two crashes in five runs. Moreover, it should be noted that for some sequences, such as fr3_sitting_rpy and fr3_sitting_static, ORB-SLAM3 achieves satisfactory performance. However, ORB-SLAM3 does not actually recover trajectories with absolute scale. The limited camera motion and the recovered small-scale passively suppress the errors. For Rotational RPE RMSE, ORB-SLAM3, DynaSLAM, and SDMFusion achieved the best performance on three sequences, respectively. The best performance of SDMFusion demonstrates the efficacy and accuracy of our dynamic feature rejection and absolute scale recovery strategy.

Under the RGB-D configuration, for ATE and Translational RPE RMSE, SDMFusion achieved the best performance on 2 sequences and the second-best on 4 sequences. Furthermore, it attained the smallest sum of mean errors across the nine sequences, indicating its overall superior performance. ORB-SLAM3 performed optimally on two low-dynamic sequences but exhibited significantly poor accuracy in high-dynamic scenarios. While DS-SLAM achieved the best result on one low-dynamic sequence, it yielded exceedingly large errors on the fr3_walking_rpy and fr3_walking_xyz sequences. DynaSLAM demonstrated accuracy comparable to SDMFusion, achieving optimal results on four sequences. However, its overall inferior performance on low-dynamic sequences resulted in slightly worse overall performance compared to our method. For Rotational RPE RMSE, ORB-SLAM3, DS-SLAM, DynaSLAM, and our method achieved the best performance on 2, 1, 2, and 4 sequences, respectively. In terms of robustness, the monocular configuration of DynaSLAM achieved success rates of only 60%, 0%, and 20% on the fr3_sitting_rpy, fr3_sitting_static, and fr3_walking_static sequences, respectively. The RGB-D configuration of DynaSLAM attained an 80% success rate on both the fr2_desk_with_person and fr3_walking_halfsphere sequences. All other tested configurations achieved a 100% success rate. In summary, while both our method and DynaSLAM demonstrate high accuracy, the proposed approach exhibits significantly superior robustness.

ATE for ORB-SLAM3 and SDMFusion on four sequences of the TUM RGB-D dataset.

Moreover, Fig. 6 presents the ATE trajectory comparison results between SDMFusion and ORB-SLAM3 in four high-dynamic sequences. It can be seen that the trajectories of SDMFusion are closer to ground truth for both monocular and RGB-D, indicating superior accuracy and consistency. The reconstruction results of ORB-SLAM3 and SDMFusion on four high-dynamic sequences are also presented in Fig. 7. For a fair comparison, an RGB-D dense mapping module was added to ORB-SLAM3, and RGB-D reconstruction results were compared. The results indicate that ORB-SLAM3 produces ghosting artifacts from moving objects. Thus, the static scene is heavily occluded, and the geometry of the scene exhibits drift. In contrast, SDMFusion successfully constructs a complete dense map of the static environment without ghosting or drift. The reconstructed map exhibits higher completeness, more accurate geometric preservation, and significantly improved visual quality compared to ORB-SLAM3.

Dense maps for SDMFusion and ORB-SLAM3 on four sequences of the TUM RGB-D dataset.

BONN RGB-D dataset

The BONN RGB-D dataset6 is another important dataset that has been widely adopted in dynamic SLAM research. The dataset includes 2 static and 24 dynamic sequences with a resolution of 640 × 480, encompassing diverse motions like box lifting and balloon interaction. Each sequence is accompanied by high-precision groundtruth trajectories acquired using the Optitrack Prime 13 motion capture system. The evaluation results are summarized in Tables 8, 9 and 10. All results were obtained through our own evaluations. In Tables 8, 9 and 10, the obstructing_box and nonobstructing_box will be shortened as o_box and no_box.

The results show that for the monocular configuration, ORB-SLAM3, DynaSLAM, and our method achieved the best ATE RMSE on 1, 4, and 19 sequences, respectively. Regarding Translational RPE RMSE, ORB-SLAM3, DynaSLAM, and our method attained optimal performance on 7, 16, and 1 sequence(s), respectively. As for Rotational RPE RMSE, ORB-SLAM3, DynaSLAM, and our method yielded the best results on 2, 10, and 12 sequences, respectively. Under the RGB-D configuration, DS-SLAM, DynaSLAM, and our method achieved the best ATE RMSE on 1, 13, and 10 sequences, respectively. For Translational RPE RMSE, DS-SLAM, DynaSLAM, and our method obtained optimal performance on 1, 12, and 13 sequences, respectively. In terms of Rotational RPE RMSE, DS-SLAM, DynaSLAM, and our method demonstrated the best performance on 3, 7, and 14 sequences, respectively. Particularly, we observed that our method does not perform well on the moving_o_box series sequences. In the moving_o_box sequence scenario, the moving box occupies more than 80% of the image area across consecutive frames, and no other dynamic objects are present in the scene. As a result, our method incorrectly interprets the entire scene as static and fails to perform dynamic rejection. Consequently, our approach experiences tracking drift. Therefore, our method becomes ineffective in the absence of identifiable dynamic objects within the image. In terms of robustness, the monocular configuration of DynaSLAM achieved a success rate of only 20% on the balloon, balloon2, and crowd3 sequences. On the crowd2, person_tracking, synchronous, and synchronous2 sequences, it attained success rates of 40%, 60%, 0%, and 80%, respectively. The RGB-D configuration of DynaSLAM attained an 80% success rate on the balloon, moving_o_box2, placing_no_box3, and placing_o_box sequences. All other tested configurations achieved a 100% success rate. In summary, while both our method and DynaSLAM demonstrate high accuracy, the proposed approach exhibits significantly superior robustness.

ATE for SDMFusion and ORB-SLAM3 on four sequences of the BONN RGB-D dataset.

Moreover, Fig. 8 presents the ATE for SDMFusion and ORB-SLAM3 for four representative sequences. The results show that more accurate trajectories are achieved by the proposed method for both monocular and RGB-D. To further compare the reconstruction quality, the RGB-D dense mapping module was integrated into ORB-SLAM3. Figure 9 shows the reconstruction results for ORB-SLAM3 and our method across four sequences. The findings indicate that ORB-SLAM3’s reconstruction is characterized by obvious character ghosting, misplacement of static objects, and alterations of scene geometry. For example, the position of the small yellow car is clearly deviated. In contrast, a clear and complete dense map is constructed by the proposed method, without ghosting or drifting problems. The scene geometry is fully restored, and more details are achieved, such as the lattice structure on the left.

Dense maps for SDMFusion and ORB-SLAM3 on four sequences of the BONN RGB-D dataset.

Test device and real environments.

Real dataset

To further assess performance in real-world dynamic scenes, two sequences were captured. Recording was performed using a micro-drone equipped with an Intel RealSense D435i camera. The experiments were conducted via remote control flight within a small office at the School of Aeronautics and Astronautics, Zhejiang University. The two sequences featured varying human activities and motions. The hardware device and real environments for experiments are shown in Fig. 10. Furthermore, Fig. 11 compares the reconstruction results of ORB-SLAM317 and SDMFusion. As shown in the figure, the reconstruction produced by ORB-SLAM3 retained numerous human silhouettes and exhibited noticeable trajectory drift. Specifically, several spurious red points appeared on the right, indicating incorrect mapping of environments. In contrast, dynamic elements were accurately excluded by our method, and only the static environment was reconstructed. Moreover, no drift was observed in the map, demonstrating enhanced stability and robustness of our method in real-world dynamic environments.

Dense maps for ORB-SLAM3 and SDMFusion on the real dataset.

Ablation study

To clarify the specific contributions of each module, ablation experiments are designed in this section. Dynamic sequences from the TUM RGB-D dataset57 are selected for the ablation experiments. Since monocular depth estimation is mainly useful for monocular localization, the results for monocular are presented in this section. Five comparison configurations are set. w/o Depth indicates that the monocular depth estimation module is removed. w/o Segment indicates that instance segmentation is removed, and only the moving consistency check is retained for dynamic feature rejection. w/o Check indicates that the moving consistency check is removed, relying solely on instance segmentation for dynamic feature rejection. w/o Spatial-Reclass represents that the spatial-proximity reclassification is removed. Full represents the complete version of the proposed method. Tables 11, 12 and 13 present the results, and bold font is used to denote the best accuracy.

The results show that Full achieves the overall highest accuracy, verifying that each module is essential for improving tracking performance. For ATE and Translational RPE RMSE, the Full configuration achieved the best performance on 8 sequences and the second-best on 1 sequence. Regarding Rotational RPE RMSE, it attained the best performance on 4 sequences and the second-best on 5 sequences. Specifically, w/o Depth cannot recover the absolute scale, leading to a significant accuracy reduction, especially when substantial camera displacement. Although satisfactory results for fr3_sitting_static and fr3_walking_static sequences, this is primarily attributed to limited camera movement. w/o Segment significantly degrades the performance in highly dynamic sequences, indicating that traditional methods are inadequate for complex dynamic scenes. w/o Check and w/o Spatial-Reclass achieve the closest overall performance to Full. However, the occasional deletion of static features and retention of moving features lead to slight degradation in both accuracy and robustness. Besides, in scenarios with drastic dynamic changes, intermittent tracking interruptions are encountered during operation due to fewer static features. Therefore, all modules are important for the system.

Runtime analysis

To validate the real-time performance, we measured the per-frame latency of the core modules and the end-to-end pipeline on the desktop computer and Jetson AGX Orin. ORB-SLAM3 was still selected as the baseline for comparison. Note that for the monocular and RGB-D configurations, the fr3_walking_xyz sequence was used to evaluate the time. For the stereo configuration, sequence 01 was used for evaluation. The results are shown in Table 14. On the desktop computer, the end-to-end pipeline required approximately 61-67ms per frame, corresponding to about 15 FPS. While less efficient than ORB-SLAM3, our method nevertheless demonstrated real-time performance. However, on the Jetson AGX Orin, the processing time increased to approximately 250-280ms per frame (about 4 FPS). Consequently, our current method does not achieve real-time performance on the embedded platform, which represents a key direction for future improvement. However, ORB-SLAM3 still maintained a real-time performance of 12.5 ~ 28 FPS.

Furthermore, a comparative analysis of the depth estimation module was conducted to evaluate the performance before and after TensorRT acceleration. The results are shown in Table 15. As shown in the results, the inference time of the original DepthAnythingV2 is significantly influenced by image resolution. The inference time on KITTI increases approximately 50 ms on the desktop and 1180 ms on Jetson, compared to that on BONN RGB-D and TUM RGB-D datasets. After TensorRT acceleration, the disparity in inference time across different image resolutions is reduced to less than 2 ms, indicating nearly resolution-invariant performance. Furthermore, on the desktop, TensorRT acceleration reduces inference time by 7.27 ms and 56.76 ms, corresponding to efficiency improvements of 25.71% and 72.98%, respectively. On the Jetson platform, the inference time is reduced by 265.99 ms and 1442.95 ms, achieving efficiency gains of 65.90% and 91.29%, respectively.

Discussion

This paper introduces SDMFusion, a comprehensive framework enabling real-time, scale-aware dense mapping with enhanced dynamic robustness. It is compatible with monocular, stereo, and RGB-D cameras. Built on ORB-SLAM3, the absolute scale for monocular is first obtained by incorporating DepthAnythingV2, which also provides refined depth for stereo and RGB-D. Subsequently, YOLO11s-seg, geometric constraints, and moving consistency check are combined to enable efficient and accurate dynamic feature rejection. Finally, a real-time anti-dynamic dense reconstruction module is integrated to generate dynamic-interference-free dense maps in all modes. Extensive experiments demonstrated that SDMFusion can achieve real-time, high-precision, and scale-aware dense reconstruction of static environments in various dynamic scenarios. These experimental results confirm the generality, robustness, and practical value of the proposed method.

Nevertheless, several issues remain that warrant further investigation. Firstly, a performance gap still exists between monocular and stereo/RGB-D, primarily due to limitations in depth estimation accuracy. Future improvements may be explored by integrating enhanced monocular depth prediction algorithms or introducing alternative scale recovery schemes. Secondly, semantically dense mapping methods could be studied to support more advanced navigation and interaction. Thirdly, the proposed method continues to face challenges in scenarios dominated by moving objects. Feature detection strategies emphasizing static regions and matching algorithms reliant on sparse features can be developed. Lastly, efforts will be made to adapt the system for deployment on edge devices like NVIDIA Jetson Orin NX, enabling real-world applications in mobile robotics.

Data availability

The datasets supporting the findings of this study include: (1) The KITTI, TUM RGB-D and BONN RGB-D datasets, publicly available at https://www.cvlibs.net/datasets/kitti/eval_odometry.php, https://cvg.cit.tum.de/data/datasets/rgbd-dataset/download and https://www.ipb.uni-bonn.de/data/rgbd-dynamic-dataset/index.html, respectively; and (2) two self-collected real datasets, were captured by the authors, which are available from the first author upon reasonable request via email: 11924038@zju.edu.cn.

References

Tateno, K., Tombari, F., Laina, I. & Navab, N. Cnn-slam: Real-time dense monocular slam with learned depth prediction. in Proceedings of the IEEE conference on computer vision and pattern recognition. 6243–6252.

Steenbeek, A. & Nex, F. CNN-based dense monocular visual SLAM for real-time UAV exploration in emergency conditions. Drones 6, 79 (2022).

Luo, H. et al. Real-time dense monocular SLAM with online adapted depth prediction network. IEEE Trans. Multimedia. 21, 470–483 (2018).

Ye, X. et al. DRM-SLAM: Towards dense reconstruction of monocular SLAM with scene depth fusion. Neurocomputing 396, 76–91 (2020).

Lee, S., Son, C. Y. & Kim, H. J. Robust real-time RGB-D visual odometry in dynamic environments via rigid motion model. in IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). 6891–6898 (IEEE). 6891–6898 (IEEE). (2019).

Palazzolo, E., Behley, J., Lottes, P., Giguere, P. & Stachniss, C. ReFusion: 3D reconstruction in dynamic environments for RGB-D cameras exploiting residuals. in 2019 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). 7855–7862 (IEEE).

Wang, R., Wan, W., Wang, Y. & Di, K. A new RGB-D SLAM method with moving object detection for dynamic indoor scenes. Remote Sens. 11, 1143 (2019).

Saputra, M. R. U., Markham, A. & Trigoni, N. Visual SLAM and structure from motion in dynamic environments: A survey. ACM Comput. Surv. 51, 1–36 (2018).

Runz, M., Buffier, M., Agapito, L. & Maskfusion Real-time recognition, tracking and reconstruction of multiple moving objects. in IEEE international symposium on mixed and augmented reality (ISMAR). 10–20 (IEEE). 10–20 (IEEE). (2018).

Yu, C. et al. DS-SLAM: A semantic visual SLAM towards dynamic environments. in. IEEE/RSJ international conference on intelligent robots and systems (IROS). 1168–1174 (IEEE). (2018).

Bescos, B., Fácil, J. M., Civera, J. & Neira, J. DynaSLAM: Tracking, mapping, and inpainting in dynamic scenes. IEEE robotics and automation letters 3, 4076–4083 (2018).

Zhong, F., Wang, S., Zhang, Z. & Wang, Y. Detect-SLAM: Making object detection and SLAM mutually beneficial. in IEEE winter conference on applications of computer vision (WACV). 1001–1010 (IEEE). 1001–1010 (IEEE). (2018).

Wei, Y., Zhou, B., Duan, Y., Liu, J. & An, D. DO-SLAM: Research and application of semantic SLAM system towards dynamic environments based on object detection. Appl. Intell. 53, 30009–30026 (2023).

Wu, W. et al. YOLO-SLAM: A semantic SLAM system towards dynamic environment with geometric constraint. Neural Comput. Appl. 34, 6011–6026 (2022).

Qin, T., Li, P. & Shen, S. Vins-mono: A robust and versatile monocular visual-inertial state estimator. IEEE Trans. Robot. 34, 1004–1020 (2018).

Mur-Artal, R. & Tardós, J. D. Orb-slam2: An open-source slam system for monocular, stereo, and rgb-d cameras. IEEE Trans. Robot. 33, 1255–1262 (2017).

Campos, C., Elvira, R., Rodríguez, J. J. G., Montiel, J. M. & Tardós, J. D. Orb-slam3: An accurate open-source library for visual, visual–inertial, and multimap slam. IEEE Trans. Robot. 37, 1874–1890 (2021).

Loo, S. Y., Amiri, A. J., Mashohor, S., Tang, S. H. & Zhang, H. CNN-SVO: Improving the mapping in semi-direct visual odometry using single-image depth prediction. in 2019 International conference on robotics and automation (ICRA). 5218–5223 (IEEE).

Yang, N., Wang, R., Stuckler, J. & Cremers, D. Deep virtual stereo odometry: Leveraging deep depth prediction for monocular direct sparse odometry. in Proceedings of the European conference on computer vision (ECCV). 817–833.

Engel, J., Koltun, V. & Cremers, D. Direct sparse odometry. IEEE Trans. Pattern Anal. Mach. Intell. 40, 611–625 (2017).

Yang, N., Stumberg, L., Wang, R. & Cremers, D. D3vo: Deep depth, deep pose and deep uncertainty for monocular visual odometry. in Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 1281–1292.

Yin, X., Wang, X., Du, X. & Chen, Q. Scale recovery for monocular visual odometry using depth estimated with deep convolutional neural fields. in Proceedings of the IEEE international conference on computer vision. 5870–5878.

Tiwari, L. et al. Pseudo rgb-d for self-improving monocular slam and depth prediction. in European conference on computer vision. 437–455 (Springer).

Sun, L. et al. Improving monocular visual odometry using learned depth. IEEE Trans. Robot. 38, 3173–3186 (2022).

Bhat, S. F., Alhashim, I., Wonka, P. & Adabins Depth estimation using adaptive bins. in Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 4009–4018.

Bhat, S. F. et al. Zero-shot transfer by combining relative and metric depth. arXiv preprint arXiv:2302.12288 (2023).

Eigen, D., Puhrsch, C. & Fergus, R. Depth map prediction from a single image using a multi-scale deep network. Advances neural Inform. Process. systems. 27, 2366-2374 (2014).

Fu, H., Gong, M., Wang, C., Batmanghelich, K. & Tao, D. Deep ordinal regression network for monocular depth estimation. in Proceedings of the IEEE conference on computer vision and pattern recognition. 2002–2011.

Guizilini, V., Vasiljevic, I., Chen, D., Ambruș, R. & Gaidon, A. Towards zero-shot scale-aware monocular depth estimation. in Proceedings of the IEEE/CVF International Conference on Computer Vision. 9233–9243.

Gui, M. et al. DepthFM: Fast Generative Monocular Depth Estimation with Flow Matching. in Proceedings of the AAAI Conference on Artificial Intelligence. 3203–3211.

Yin, W. et al. Metric3d: Towards zero-shot metric 3d prediction from a single image. in Proceedings of the IEEE/CVF international conference on computer vision. 9043–9053.

Hu, M. et al. Metric3d v2: A versatile monocular geometric foundation model for zero-shot metric depth and surface normal estimation. IEEE Trans. Pattern Anal. Mach. Intell. https://doi.org/10.1109/TPAMI.2024.3444912 (2024).

Ke, B. et al. Repurposing diffusion-based image generators for monocular depth estimation. in Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 9492–9502.

Li, Z., Bhat, S. F., Wonka, P. & Patchfusion An end-to-end tile-based framework for high-resolution monocular metric depth estimation. in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 10016–10025.

Piccinelli, L. et al. UniDepth: Universal monocular metric depth estimation. in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 10106–10116.

Ranftl, R., Lasinger, K., Hafner, D., Schindler, K. & Koltun, V. Towards robust monocular depth estimation: Mixing datasets for zero-shot cross-dataset transfer. IEEE Trans. Pattern Anal. Mach. Intell. 44, 1623–1637 (2020).

Shao, S. et al. Normal-distance assisted monocular depth estimation. in Proceedings of the IEEE/CVF International Conference on Computer Vision. 7931–7940.

Shao, S. et al. Iebins: Iterative elastic bins for monocular depth estimation. Adv. Neural. Inf. Process. Syst. 36, 53025–53037 (2023).

Wofk, D. et al. Fast monocular depth estimation on embedded systems. in International Conference on Robotics and Automation (ICRA). 6101–6108 (IEEE). 6101–6108 (IEEE). (2019).

Yang, L. et al. Depth anything: Unleashing the power of large-scale unlabeled data. in Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 10371–10381.

Yang, L. et al. Depth anything v2. Adv. Neural Inf. Process. Syst. 37, 21875–21911 (2024).

Sun, Y., Liu, M. & Meng, M.-H. Improving RGB-D SLAM in dynamic environments: A motion removal approach. Robot. Auton. Syst. 89, 110–122 (2017).

Lu, X., Wang, H., Tang, S., Huang, H. & Li, C. DM-SLAM: Monocular SLAM in dynamic environments. Appl. Sci. 10, 4252 (2020).

Sun, Y., Liu, M. & Meng, M.-H. Motion removal for reliable RGB-D SLAM in dynamic environments. Robot. Auton. Syst. 108, 115–128 (2018).

Badrinarayanan, V., Kendall, A. & Cipolla, R. Segnet: A deep convolutional encoder-decoder architecture for image segmentation. IEEE transactions on pattern analysis and machine intelligence 39, 2481–2495 (2017).

He, K., Gkioxari, G., Dollár, P. & Girshick, R. Mask r-cnn. in Proceedings of the IEEE international conference on computer vision. 2961–2969.

Liu, Y. & Miura, J. RDS-SLAM: Real-time dynamic SLAM using semantic segmentation methods. IEEE Access 9, 23772–23785 (2021).

Anebarassane, Y., Kumar, D. & Enhancing ORB-SLAM3 with YOLO-based semantic segmentation in robotic navigation. in IEEE World Conference on Applied Intelligence and Computing (AIC). 874–879 (IEEE). 874–879 (IEEE). (2023).

Redmon, J., Divvala, S., Girshick, R. & Farhadi, A. You only look once: Unified, real-time object detection. in Proceedings of the IEEE conference on computer vision and pattern recognition. 779–788.

Liu, W. et al. Ssd: Single shot multibox detector. in European conference on computer vision. 21–37 (Springer).

Liu, Y., Wang, Y., Zhang, H. & Li, Q. Geometric constraints and semantic optimization SLAM algorithm for dynamic scenarios. Sci. Rep. 15, 31864 (2025).

Liu, X., Zhang, X., Yan, X., Liu, P. & Lao, H. RGB-D camera and graph neural network-based SLAM for dynamic and low-texture environments. Sci. Rep. 15, 28214 (2025).

Rublee, E., Rabaud, V., Konolige, K. & Bradski, G. ORB: An efficient alternative to SIFT or SURF. in 2011 International conference on computer vision. 2564–2571 (Ieee).

spacewalk01. depth-anything-tensorrt, (2024). https://github.com/spacewalk01/depth-anything-tensorrt

Hirschmuller, H. Stereo processing by semiglobal matching and mutual information. IEEE Trans. Pattern Anal. Mach. Intell. 30, 328–341 (2007).

Geiger, A., Lenz, P. & Urtasun, R. Are we ready for autonomous driving? the kitti vision benchmark suite. in IEEE conference on computer vision and pattern recognition. 3354–3361 (IEEE). 3354–3361 (IEEE). (2012).

Sturm, J., Engelhard, N., Endres, F. & Burgard, W. & Cremers, D. A benchmark for the evaluation of RGB-D SLAM systems. in 2012 IEEE/RSJ international conference on intelligent robots and systems. 573–580 (IEEE).

Acknowledgements

This research was funded by the “Pioneer” R&D Program of Zhejiang, grant number 2023C03007.

Funding

The “Pioneer” R&D Program of Zhejiang, grant number 2023C03007.

Author information

Authors and Affiliations

Contributions

Conceptualization, Nuo Cen and Yao Zheng; Data curation, Yao Zheng; Formal analysis, Nuo Cen and Tuck-Whye Wong; Funding acquisition, Yao Zheng; Investigation, Nuo Cen and Yi Xu; Methodology, Nuo Cen; Project administration, Yao Zheng; Resources, Yao Zheng; Software, Nuo Cen; Supervision, Yi Xu; Validation, Nuo Cen and Yi Xu; Visualization, Nuo Cen, Yi Xu and Tuck-Whye Wong; Writing – original draft, Nuo Cen; Writing – review & editing, Nuo Cen, Yi Xu, Tuck-Whye Wong and Yao Zheng. All authors have read and agreed to the published version of the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Cen, N., Xu, Y., Wong, TW. et al. Scale aware dense dynamic SLAM for monocular, stereo and RGBD cameras. Sci Rep 16, 10285 (2026). https://doi.org/10.1038/s41598-026-41208-9

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-026-41208-9