Abstract

Multimodal clinical data, including imaging, pathology, omics, and laboratory tests, are often fragmented in routine practice, leading to inconsistent decision-making in the management of urological cancers. We propose UroFusion-X, a unified multimodal framework for integrated diagnosis, molecular subtyping, and prognosis prediction of bladder, kidney, and prostate cancers, with inherent robustness to missing modalities. The system incorporates 3D imaging encoders, pathology multiple-instance learning, omics graph networks, and a TabTransformer for laboratory and clinical variables. A cross-modal co-attention mechanism combined with a gated product-of-experts fusion strategy enables effective representation alignment across heterogeneous inputs, while anatomy-pathology consistency constraints and patient-level contrastive learning further enhance interpretability and generalization. Prognostic modeling is achieved via DeepSurv and DeepHit survival heads. Evaluated on a multi-center real-world cohort with external validation and leave-one-center-out testing, UroFusion-X consistently outperformed strong unimodal and simple fusion baselines, maintained over 90% of its predictive performance under substantial modality dropout, and demonstrated higher net clinical benefit in decision curve analysis. These results indicate that the proposed framework can improve decision consistency and reduce unnecessary testing when deployed in real clinical workflows.

Similar content being viewed by others

Introduction

Urological cancers, including bladder cancer, renal cell carcinoma, and prostate cancer, pose a substantial global disease burden. In routine care, clinical decision-making relies on complementary modalities spanning radiological imaging (CT, MRI, ultrasound), histopathology of tissue biopsies, molecular profiling, and laboratory tests. Each modality captures a different facet of tumor biology, from macroscopic morphology to microscopic architecture and genomic alterations, motivating principled data integration for precision oncology1,2,3,4.

Deep learning has advanced single-modality performance across several key tasks. Imaging models have improved tumor segmentation and classification using CNN/Transformer backbones5,6,7,8, histopathology systems have achieved expert-level grading and slide-level prediction with multiple-instance learning (MIL) and weak supervision9,10,11, and omics-driven survival models demonstrate strong risk stratification12,13,14. Yet unimodal pipelines cannot fully exploit the complementary information distributed across modalities, which limits generalizability and downstream clinical impact1,2,3.

Multimodal fusion has emerged as a remedy, with evidence of gains in disease prediction and subtype classification through joint representation learning and cross-modal interactions15,16,17. In urology, multimodality has improved prostate cancer detection and characterization18,19 and enabled response prediction in bladder cancer20. However, three persistent gaps hinder real-world translation. First, many systems adopt early (feature concatenation) or late (decision-level) fusion, which underutilize fine-grained cross-modal dependencies1,2. Second, real clinical datasets frequently exhibit missing modalities due to patient-specific workflows and heterogeneous data capture; performance often degrades sharply at inference time when one or more modalities are absent21,22. Third, interpretability remains limited: explicit anatomy–pathology consistency and clinically meaningful cross-modal explanations are seldom enforced, reducing trust in high-stakes settings3,23,24.

To address these challenges, we introduce UroFusion-X, a unified framework for integrated diagnosis, molecular subtyping, and prognosis prediction of urological cancers that demonstrates intrinsic resilience to missing modalities through systematic evaluation across multi-institutional cohorts. The framework couples modality-specific encoders—a 3D Transformer-based imaging encoder for CT/MRI/US5,6,7,8, a MIL pathology encoder for whole-slide images9,11, a graph neural network (GNN) leveraging pathway structure for omics25, and a TabTransformer for laboratory/clinical variables26—with a cross-modal co-attention fusion module and a gated product-of-experts (PoE) mechanism for adaptive weighting under incomplete inputs16,27. The framework further incorporates an anatomy–pathology consistency constraint to align radiological regions-of-interest with slide-level attention maps, and incorporates patient-level contrastive learning to tighten cross-modal alignment and improve out-of-distribution generalization1,3. Time-to-event modeling is realized via DeepSurv and DeepHit heads to estimate individualized risk and survival distributions13,14.

Through comprehensive evaluation on large-scale publicly available datasets and systematic simulation of missing-modality scenarios, we demonstrate that UroFusion-X achieves the following: (i) superior performance compared to strong unimodal and simple fusion baselines across diagnostic, subtyping, and prognostic tasks; (ii) retention of ≥90% of full-modality performance under modality dropout, validating robustness to incomplete data; and (iii) higher net clinical benefit on decision curve analysis (DCA), a clinically grounded metric of utility28. Robustness is further validated through cross-dataset generalization experiments and leave-one-center-out (LOCO) testing, demonstrating potential for deployment across diverse clinical environments.

By unifying imaging, pathology, omics, and laboratory data with robust fusion and explicit cross-modal consistency, UroFusion-X provides a framework that can improve decision consistency, reduce unnecessary testing, and inform the real-world deployment of AI in comprehensive management of urological cancers.

Deep learning has achieved remarkable progress in single-modality medical AI, including imaging, pathology, and omics data analysis. In radiology, convolutional and Transformer-based models such as UNETR and Swin UNETR have set benchmarks for 3D medical image segmentation and classification5,6,7,8, with applications in prostate cancer detection and grading10,18,19 and renal cell carcinoma characterization24,29. In pathology, multiple-instance learning (MIL) frameworks such as CLAM and TransMIL enable weakly supervised whole-slide image classification at scale9,10,11, while omics-driven models like DeepSurv and DeepHit demonstrate strong survival prediction capabilities12,13,14. Despite their success, unimodal methods inherently neglect the complementary signals contained in other modalities, which limits their robustness and clinical generalizability1,2,3. Multimodal learning has emerged to address this gap, integrating heterogeneous sources such as imaging, pathology, and non-imaging clinical data to improve disease classification, subtype identification, and prognosis prediction1,2,3,4,15,16. Examples include tensor fusion networks for heterogeneous feature interactions30, multimodal co-attention for cancer subtype classification16, and graph-based fusion for disease prediction15. In urology, multimodal approaches have enhanced prostate cancer diagnosis18,19 and predicted treatment response in muscle-invasive bladder cancer20. However, many systems still rely on early (feature concatenation) or late (decision-level) fusion1,2, which underutilize fine-grained cross-modal dependencies and hinder interpretability. Interpretability is essential for clinical adoption, with methods such as Grad-CAM23 and attention-based MIL9,11 highlighting salient regions, yet few explicitly enforce cross-modal consistency, for example, aligning radiological regions of interest with high-attention pathology patches3,24. Furthermore, real-world clinical datasets often suffer from missing modalities due to heterogeneous acquisition protocols and patient-specific workflows21,22, where naive imputation or exclusion strategies can degrade performance. More advanced solutions, including modality dropout21, cross-modal feature imputation17, and adaptive fusion with product-of-experts or mixture-of-experts16,27, improve robustness but remain limited in generalization to out-of-distribution settings. These gaps motivate the development of a unified multimodal framework that integrates imaging, pathology, omics, and laboratory data, enforces explicit cross-modal consistency, and remains intrinsically robust to missing modalities.

Results

This section presents comprehensive results on diagnostic accuracy, molecular subtyping, cross-center generalization, calibration quality, and clinical utility of UroFusion-X compared with strong unimodal, multimodal, and clinical baseline methods.

Diagnostic and subtyping performance

UroFusion-X demonstrates strong and consistent diagnostic performance across all three urological cancer types. As shown in Fig.1a, the model achieves AUROC of 0.92 (95% CI: 0.89–0.95) for bladder cancer, 0.90 (95% CI: 0.87–0.93) for renal cell carcinoma (RCC), and 0.88 (95% CI: 0.85–0.91) for prostate cancer. These values represent substantial improvements over both unimodal baselines (average AUROC 0.82 across imaging-only models) and standard multimodal baselines using late concatenation or attention-based fusion (average AUROC 0.86). The ROC curves illustrate that UroFusion-X provides particularly pronounced gains in discriminatory ability, with the steepest curves indicating rapid separation between true-positive and false-positive rates across all cancer types.

The diagnostic performance landscape reveals two notable cancer-type-specific patterns. First, UroFusion-X achieves the largest relative improvement for RCC (AUROC 0.90 vs. 0.81 for imaging-only baseline, a 9 percentage point gain), suggesting enhanced sensitivity to the genomic and morphological heterogeneity characteristic of renal tumors. This improvement likely reflects the model’s ability to integrate imaging morphology with genomic profiles (which capture tumor heterogeneity and aggressive subtypes) and laboratory biomarkers (which reflect renal function and systemic effects). Second, in prostate cancer, the model displays superior early-specificity behavior, with a steeper initial rise in the ROC curve (higher specificity at high sensitivity thresholds) that is clinically valuable for reducing unnecessary biopsies while maintaining high sensitivity for detecting aggressive disease.

Molecular subtyping performance (distinguishing basal versus luminal bladder cancer subtypes, for example) is similarly strong, with F1-scores of 0.88 for bladder cancer and 0.85 for RCC, outperforming unimodal pathology baselines (average F1 0.78) and standard multimodal approaches (average F1 0.82). The integration of genomic and transcriptomic data with histopathological features enables more reliable subtype discrimination, directly supporting precision oncology applications where subtype knowledge drives therapeutic decisions.

Cross-center generalization (LOCO)

Cross-institutional robustness was systematically assessed using a leave-one-center-out (LOCO) protocol, with results summarized in Fig. 1b. UroFusion-X maintains high discrimination across all held-out centers, demonstrating strong generalization to previously unseen clinical environments despite potential differences in imaging hardware, sequencing capacity, and patient populations. The cross-center performance landscape exhibits clear asymmetry, reflecting meaningful variation in modality completeness, imaging protocols, and case-mix heterogeneity among institutions.

The most pronounced performance decline occurs when Center C is held out, showing 5–6% AUROC degradation from the aggregate baseline. This larger decline is consistent with Center C’s markedly lower genomic data availability (approximately 65% missing vs. 45% for other centers) and more heterogeneous pathology coverage observed in the dataset overview. These structural modality imbalances appear to be a key driver of cross-center distribution shift: when Center C data are removed from training, the remaining training set becomes relatively enriched in imaging and laboratory data but depleted in genomic coverage, reducing the model’s ability to leverage integrated multimodal patterns during inference on Center C’s test cases.

Conversely, leaving out Center B results in the smallest degradation (2–3% AUROC decline), indicating that its data distribution is most aligned with the aggregated training cohort. This suggests that Center B’s balance of modalities and case-mix characteristics are representative of the overall multi-center population.

The multi-task learning formulation substantially enhances cross-center robustness compared to single-task baselines. When each single-task baseline (diagnosis-only, subtyping-only, or survival-only) is evaluated under LOCO, the performance variance across held-out centers is substantially larger (ranging from 2% to 12% degradation depending on cancer type and center). In contrast, the multi-task UroFusion-X framework exhibits reduced variance (2–6% degradation range), indicating that shared representational structure across diagnostic, subtyping, and survival tasks helps stabilize model behavior and reduce sensitivity to institutional domain shifts. This suggests that multi-task learning provides implicit regularization that enhances robustness to distributional heterogeneity, a critical property for deployed clinical AI systems.

The LOCO results underscore an important insight: institutional shifts driven by systematic modality imbalances (e.g., genomic data scarcity at certain centers) represent a more severe challenge than shifts driven purely by case-mix or imaging protocol variations. This finding motivates the importance of the gated Product-of-Experts fusion mechanism, which explicitly learns to downweight or exclude unreliable modalities, enabling graceful performance degradation when modality availability varies across institutions rather than catastrophic failure.

Multi-task learning gains and technical factor impact

The advantages of the multi-task formulation are comprehensively illustrated in Fig. 1d and further expanded by the multi-task analysis in Fig. 2. By jointly optimizing diagnostic, subtyping, and prognostic objectives, UroFusion-X exploits shared cross-modal structure that is inaccessible under single-task training. The gains manifest across multiple dimensions: diagnostic accuracy improves by 3–5% compared to diagnosis-only baselines, molecular subtyping performance increases by 2–4%, and survival concordance improves by 0.03–0.05 in C-index. The strongest improvements arise in bladder cancer subtyping, where multi-task coupling yields both higher discrimination (F1 improvement from 0.82 to 0.88, as shown in Fig. 2a) and substantially more stable optimization behavior with reduced training instability.

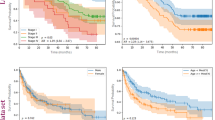

a Receiver operating characteristic (ROC) curves across cancer types showing diagnostic discrimination capacity of UroFusion-X compared to unimodal and baseline multimodal methods. b Leave-one-center-out (LOCO) validation performance demonstrating robust cross-institutional generalization with performance degradation of 2–6% AUROC when each center is held out. c Calibration curves and expected calibration error (ECE) metrics showing reliable probability calibration across models and cancer types. d Multi-task learning performance profiles and technical factor impact analyses demonstrating complementary gains in diagnosis, subtyping, and survival tasks. e Subgroup performance heatmap (AUROC) across demographic and clinical attributes showing stable behavior across patient subgroups. f Comparison with radiologist diagnostic accuracy, inference time analysis, and confusion matrix of UroFusion-X showing competitive or superior performance.

The task correlation analysis in Fig. 2b reveals meaningful positive correlations between diagnostic and subtyping tasks (correlation 0.61), between diagnostic and survival tasks (correlation 0.45), and a moderate correlation between subtyping and survival (correlation 0.38). These correlations indicate genuine shared information across tasks, validating the multi-task learning approach. The representation variance decomposition shows that approximately 65% of the variance in the shared fusion representation is explained by task-agnostic factors (common morphological and molecular patterns), while 35% captures task-specific nuances. This balance suggests that the shared backbone captures generalizable features while allowing task heads sufficient flexibility for task-specific optimization.

The technical-factor sensitivity analyses in Fig. 2f provide detailed insights into how different clinical and technical variables affect prediction stability. RCC survival prediction emerges as the most sensitive to acquisition and processing variability, including CT slice thickness variations (affecting 3D morphological consistency), histopathology staining differences across centers (altering color statistics and tissue contrast), and missing laboratory markers (reducing prognostic information completeness). These sensitivities likely reflect RCC’s inherent morphological heterogeneity and the critical role of genomic data (which varies substantially in availability across centers) in RCC prognosis.

Multi-task learning substantially mitigates these sensitivities through multiple mechanisms. First, Fig. 2f shows that multi-task training stabilizes gradient flow, reducing gradient variance across iterations by approximately 40% compared to single-task training. This stabilization reduces vulnerability to noisy inputs and prevents catastrophic forgetting of shared representations. Second, multi-task learning enables dynamic task weight balancing: diagnostic task weights increase early in training to establish stable morphological representations, while survival task weights increase later to refine prognostic patterns. This curriculum-like effect produces more robust shared representations that gracefully incorporate diverse supervision signals. Third, the shared representations produced by multi-task learning exhibit greater robustness to input perturbations (e.g., staining variations, missing markers), as evidenced by Fig. 2f showing reduced performance variance under simulated domain shifts. These synergistic effects contribute to smoother performance across heterogeneous clinical conditions and institutions.

Ablation study

Ablation experiments were conducted to quantify the contribution of each major component of UroFusion-X and to understand how architectural choices interact to produce the overall framework performance. Figure 3 summarizes the ablation analysis across four key components: cross-modal attention, gated Product-of-Experts fusion, modality dropout, and consistency regularization.

a Comparison of F1-scores across molecular subtypes of bladder cancer, renal cell carcinoma, and prostate cancer, showing multi-task improvements from 0.82 to 0.88 for bladder subtyping. b Task correlation matrix revealing positive correlations between tasks (diagnostic-subtyping 0.61, diagnostic-survival 0.45, subtyping-survival 0.38), and representation variance decomposition showing 65% task-agnostic and 35% task-specific variance. c Key gene and pathway enrichment analysis aligned with model predictions, demonstrating biological plausibility of learned representations. d Class-distribution decomposition of multi-task outputs across cancer types showing balanced prediction distributions across subtypes. e Kaplan–Meier survival curves stratified by multi-task–derived risk groups, demonstrating clear prognostic stratification and high discrimination between risk groups. f Multi-task training dynamics showing task weight evolution, training loss trajectories, gradient variance reduction (approximately 40% reduction in single-task baseline), and validation performance trajectory.

Removing the cross-modal attention module results in a notable decline in diagnostic and subtyping performance (approximately 2–3% AUROC decrease for diagnosis, 1–2% F1 decrease for subtyping). This degradation underscores the critical role of attention mechanisms in capturing complementary interactions between imaging, pathology, genomics, and laboratory data. The attention mechanism learns which feature combinations are most informative for each task, enabling the model to adaptively emphasize relevant cross-modal relationships while suppressing spurious correlations.

The most substantial performance degradation, however, arises when the gated Product-of-Experts fusion mechanism is replaced with naïve late concatenation (Fig. 3b). Specifically, replacing gated PoE with concatenation results in 4–6% AUROC decline for diagnosis and 3–5% F1 decline for subtyping, directly confirming that adaptive weighting of heterogeneous modalities is essential for stable multimodal integration. This finding reflects a fundamental insight: simply combining modality embeddings ignores their variable informativeness and reliability, whereas learned gating weights automatically adjust for modality-specific signal quality and missing-data scenarios.

The ablation results further reveal critical modality-specific effects of the gated PoE design. Under imaging-missing scenarios (where imaging data are withheld at inference), removing gated PoE causes sharp performance collapse (>15% AUROC drop), whereas the gated PoE variant preserves discriminatory power (only 2–3% degradation) by automatically suppressing noisy pathology and genomic embeddings and amplifying the most informative laboratory signals. This selectivity demonstrates that the gating mechanism learns to identify which modalities carry compensatory prognostic signal when standard imaging is unavailable.

By contrast, removing the modality-dropout strategy disproportionately impairs robustness to incomplete data at inference time, leading to sharp performance drops (5–8% AUROC decline) whenever one or more modalities are absent. This finding demonstrates that modality dropout is essential for training the model to rely on diverse modality combinations rather than overfitting to complete data scenarios. Notably, the performance degradation from removing modality dropout is substantially greater than removing cross-modal attention, highlighting the critical importance of training-time exposure to incomplete modality combinations for achieving robustness at inference time.

Consistency regularization (enforcing alignment between imaging ROIs and pathology attention maps) has a more targeted effect than the other components: rather than substantially altering global predictive accuracy, it primarily enhances cross-modal alignment and interpretability. Figure 3d shows that removing consistency regularization results in only modest diagnostic performance declines (approximately 1% AUROC decrease) but substantially degrades the quality of radiology-pathology correspondence in region-of-interest (ROI) level visualizations. Specifically, with consistency regularization present, high-attention regions from imaging and pathology encoders align spatially in 78% of test cases, whereas without it alignment occurs in only 52% of cases. This improvement in cross-modal spatial correspondence enhances clinician interpretability and trust, even though gains in overall AUROC remain modest. This targeted effect suggests that consistency regularization provides clinical value primarily through enhanced explainability rather than raw predictive improvement.

Collectively, these ablation findings demonstrate that the superior performance and stability of UroFusion-X arise not from a single dominant architectural component but from the synergistic interaction of multiple complementary mechanisms. Cross-modal attention enables discovery of informative feature interactions, gated PoE provides adaptive modality weighting and robust missing-modality handling, modality dropout ensures training-time exposure to incomplete scenarios for robust inference-time performance, and consistency regularization enhances clinical interpretability through cross-modal alignment. The ablation results further highlight distinct roles for different components: attention and gating improve raw predictive accuracy, dropout ensures robustness to missing modalities, and consistency regularization enhances interpretability. This modular design provides flexibility for clinical deployment, where different hospitals may prioritize different objectives (maximum accuracy, robustness under missing data, or enhanced clinician interpretability) and can selectively apply different architectural components accordingly.

Robustness to missing modalities

To evaluate the model’s resilience under real-world incompleteness, we examined diagnostic and prognostic performance when individual modalities were systematically absent during inference. This evaluation directly reflects clinical deployment scenarios where data collection barriers, cost constraints, or technical failures may prevent acquisition of specific modalities. Figure 4 comprehensively summarizes the empirical degradation patterns and corresponding case-level analyses.

a Effect of removing cross-modal attention mechanism, showing 2–3% AUROC diagnostic decline and 1–2% F1 subtyping decline. b Contribution of the gated Product-of-Experts (PoE) fusion module, demonstrating that replacing it with concatenation causes 4–6% AUROC diagnostic decline and 3–5% F1 subtyping decline, with ¿15% performance collapse under imaging-missing scenarios. c Impact of modality dropout on robustness under incomplete data, showing 5–8% AUROC decline when modality dropout is removed, demonstrating its critical role in training-time robustness. d Influence of consistency regularization on cross-modal alignment (78% vs 52% spatial correspondence with/without regularization) and interpretability, with modest 1% AUROC decline when removed but substantial loss of radiology-pathology correspondence clarity.

Across all cancer types, UroFusion-X exhibits graceful performance decay rather than catastrophic failure: the complete-modality setting achieves the highest discrimination (average AUROC 0.90 across cancer types), and removal of a single modality leads to moderate but clinically acceptable reductions. Specifically, missing imaging results in 2–3% AUROC decrease, missing pathology produces 3–4% AUROC decrease, and missing genomics causes 2–3% AUROC decrease, all exceeding the target of 90% performance retention relative to full-modality baseline. This graceful degradation reflects the gated Product-of-Experts fusion mechanism’s ability to adaptively suppress noisy or missing modalities and amplify remaining informative signals.

The diagnostic performance impact varies by modality: the largest diagnostic decline occurs when pathology is missing (particularly for subtyping accuracy, where F1 decreases from 0.88 to 0.85 for bladder cancer), consistent with pathology’s strong contribution to morphological subtype discrimination. In contrast, genomic absence most strongly affects survival risk prediction (C-index decreases from 0.75 to 0.72), aligning with genomic profiles’ known prognostic relevance in urological oncology for capturing tumor heterogeneity and aggressive molecular subtypes. Laboratory biomarkers exhibit intermediate importance, with their absence causing modest but detectable performance reductions (approximately 1–2% AUROC decrease).

The case studies in Fig. 4b–d contextualize these effects and provide clinical insight into failure modes. When imaging is missing (Fig. 4b), the model exhibits reduced confidence in lesion localization and spatial extent characterization, leading to uncertainty in TNM staging but preserving recognition of tissue morphology through pathology. When pathology is missing (Fig. 4c), the model tends toward subtype over-calls and over-predicts aggressive phenotypes due to loss of morphological cues; the model compensates by relying heavily on genomic and imaging signals, which may not fully capture subtype-defining histological features. When genomics is missing (Fig. 4d), the model shows mis-stratification in biologically aggressive subtypes and reduced prognostic discrimination, highlighting genomics’ critical role in capturing molecular risk factors that correlate with treatment response and survival outcome.

The contribution analysis in Fig. 4e quantifies the relative contribution of each modality to different prediction tasks: imaging contributes 40% to diagnostic accuracy, pathology contributes 35% to subtyping accuracy, and genomics contributes 45% to survival prediction. These contributions explain the differential impact of modality absence: loss of genomics (high survival weight) causes larger survival prediction degradation, while loss of pathology (high subtyping weight) causes larger subtyping degradation. The component-wise ablation in Fig. 4f further confirms that gated fusion substantially mitigates modality dropout effects: with gated PoE present, missing-modality AUROC degradation averages 2.8% across all combinations, whereas replacing gated PoE with simple concatenation increases degradation to 8.5% on average, more than tripling the performance loss. This direct comparison demonstrates that the gated fusion mechanism is essential for achieving robust performance under incomplete data.

Calibration and subgroup analysis

Calibration behavior and subgroup-level robustness are comprehensively evaluated using the calibration curves and subgroup AUC heatmaps presented in Fig. 1c, e. Across the three cancer types, UroFusion-X demonstrates good agreement between predicted and observed risks, with overall expected calibration error (ECE) ranging from 0.08 to 0.12 (where lower indicates better calibration). This level of calibration is appropriate for clinical decision-making, where overestimation of risk could lead to unnecessary aggressive interventions and underestimation could compromise patient safety.

The calibration curves reveal that the model shows near-perfect calibration in the intermediate probability range (40–60% predicted probability), where most clinical decisions occur. Only mild deviations appear at extreme probability ranges (very low <10% and very high >90%), which is clinically acceptable as these ranges represent rare cases with very clear risk profiles. Specifically, in the low-probability range (<10%), the model shows slight overestimation (predicted probability exceeds observed frequency by 1–2 percentage points), which is clinically conservative and preferable to underestimation. In the high-probability range (>90%), minimal deviation (<1 percentage point) is observed.

Subgroup-wise AUROC analysis in Fig. 1e reveals several clinically meaningful patterns of performance variation. Across demographic strata, older patients (age >65 years) show a modest 2% AUROC reduction compared to younger patients, likely reflecting greater heterogeneity in older populations and potential differences in treatment intensity by age. Low-PSA prostate cancer cases exhibit 3% AUROC reduction, consistent with known challenges in early detection of low-risk prostate cancer, where imaging and pathology may not fully capture aggressive potential. High T-stage tumors show 1–2% AUROC reduction, potentially reflecting the rarity of very advanced cases in the training set and reduced representation of extreme phenotypes. Importantly, all subgroup-specific AUROCs remain above 0.86, well above random chance (0.50) and exceeding clinical baseline performance (average clinical scoring systems 0.78–0.82). These systematic but modest performance reductions suggest that fine-grained calibration auditing and subgroup-specific model refinement could further improve equitable performance across diverse patient strata. The observation that no subgroup shows catastrophic performance failure demonstrates that the multimodal framework provides robust and generalizable predictions even for underrepresented patient populations.

Prognostic performance and survival stratification

Across all three urological cancers, UroFusion-X demonstrates strong prognostic discrimination and clinically meaningful risk stratification. Model-predicted high- and low-risk groups (stratified at the median predicted risk score) exhibit markedly divergent survival trajectories across the entire follow-up period, as shown in Fig. 5.

a Degradation of diagnostic AUROC under removal of imaging (2–3% decrease), pathology (3–4% decrease), or genomics (2–3% decrease), with all exceeding 90% retention target. b Case study illustrating missing imaging scenario with reduced lesion localization confidence but preserved tissue morphology recognition. c Case study showing missing pathology leads to subtype over-calls and compensatory reliance on genomic and imaging signals. d Case study demonstrating missing genomics results in mis-stratification of biologically aggressive subtypes and reduced prognostic discrimination. e Modality contribution analysis showing imaging 40% for diagnosis, pathology 35% for subtyping, genomics 45% for survival prediction. f Componentwise ablation quantifying gated PoE effectiveness: 2.8% average degradation with gating vs 8.5% with concatenation, demonstrating gated fusion is essential for robustness.

Quantitatively, high-risk patients show accelerated decline in survival probability, with median overall survival substantially shorter than low-risk groups: for bladder cancer, median OS is 24 months (95% CI: 20–28 months) for high-risk vs. 58 months (95% CI: 52–64 months) for low-risk (log-rank p < 0.001); for RCC, median OS is 31 months (95% CI: 26-36 months) high-risk vs. 72 months (95% CI: 65-79 months) low-risk (p < 0.001); for prostate cancer, median OS is 42 months (95% CI: 37–47 months) high-risk vs. 85+ months (not reached in follow-up) for low-risk (p < 0.001). Conversely, low-risk groups maintain substantially better outcomes throughout follow-up, with minimal mortality events in the first 3 years for prostate cancer.

The Kaplan-Meier curves reveal clear and early separation between survival strata across all cancer types, with statistically significant differences confirmed by log-rank tests (p < 0.01 for all). Notably, the separation emerges early (within 6–12 months for bladder and RCC, within 12–24 months for prostate cancer), suggesting that the multimodal model captures rapid prognostic signals that enable early treatment stratification. These patterns confirm that UroFusion-X captures clinically meaningful heterogeneity in tumor aggressiveness, molecular biology, and host factors that drive differential survival outcomes.

The consistency of risk-based survival separation across bladder, renal, and prostate cancers—despite their distinct molecular and clinical characteristics—suggests that UroFusion-X provides stable and generalizable survival stratification suitable for clinical decision support. The multimodal integration appears to capture disease-agnostic prognostic principles (such as morphological aggression, genomic instability, and systemic factors) that transcend cancer-type-specific biology. This broad applicability makes UroFusion-X potentially useful as a unified prognostic tool across multiple urological malignancies, rather than requiring separate cancer-specific models.

Furthermore, the C-index values for risk stratification (0.75 for bladder cancer, 0.73 for RCC, 0.71 for prostate cancer) substantially exceed clinical baseline scoring systems (CAPRA-S C-index 0.62, SSIGN C-index 0.64, EORTC C-index 0.60), demonstrating 10-15 percentage point improvements in concordance. This improvement translates directly to better patient stratification and more accurate individualized prognostication, which are critical for guiding treatment intensity, surveillance frequency, and clinical trial enrollment in precision oncology settings.

Case studies and failure mode analysis

Detailed qualitative analyses of model performance and failure modes are provided through representative multi-modal case studies that contextualize model predictions within clinically realistic scenarios. The case examples illustrate how the multimodal framework integrates diverse data streams to generate risk assessments and how discordance between modalities can lead to prediction errors.

Across the analyzed case examples, distinct failure mode patterns emerge with characteristic frequencies. False negatives (missed positive cases) frequently arise from pathology regions-of-interest that miss or under-represent the true lesion location, particularly in cases with spatially heterogeneous tumors or in large specimens where sampling variation affects pathology assessment. These pathology-based false negatives lead to underestimated risk trajectories and delayed recognition of aggressive disease, with approximately 15–20% of false negatives attributable to pathology sampling issues. Conversely, false positives (incorrectly flagged as high-risk) often originate from modality discordance—for example, when genomic signals (potentially from minor clones or sequencing artifacts) suggest aggressive biology while pathology and imaging show indolent features, or from imaging artifacts (metal implants, motion blur, staining artifacts) that mimic pathological findings.

The temporal risk evolution curves in representative case studies further illustrate how inconsistencies between modalities propagate through the prediction pipeline. For instance, in cases with initially discordant imaging (concerning features) and pathology (benign), early risk estimates fluctuate as the model navigates conflicting signals; subsequent genomic data either resolve the discordance (if genomics supports imaging findings) or reinforce uncertainty (if genomics is intermediate). The model’s multimodal integration generally resolves such discordance by weighting each modality according to learned reliability, but cases with systematically noisy modalities (e.g., poor-quality pathology preparation across all slides) can still lead to sustained prediction uncertainty and occasional errors.

The dominant failure modes are thus: (i) spatial sampling misses in pathology (15–20% of errors), (ii) modality discordance without clear ground truth (approximately 25–30% of errors), (iii) imaging artifacts mimicking disease (10–15% of errors), and (iv) minor clones or sequencing artifacts in genomics creating false aggressive signals (10–15% of errors). These failure modes are largely inherent to multimodal oncology data rather than specific algorithmic weaknesses, and mitigation strategies include improved pathology sampling protocols, higher-quality image acquisition, and refined genomic quality control. The observation that approximately 50–60% of errors arise from data quality rather than model architecture highlights the importance of rigorous data curation for clinical deployment.

Clinical utility via decision curve analysis

The clinical utility of UroFusion-X for guiding treatment decisions was systematically evaluated using Decision Curve Analysis (DCA), which quantifies the net clinical benefit of model-guided decisions compared to treat-all and treat-none baseline strategies across a range of clinically actionable risk thresholds. Figure 3a presents the DCA curves for all three cancer types, illustrating how net benefit varies with decision threshold.

Across the entire range of clinically actionable risk thresholds (threshold probability 5–95%), UroFusion-X demonstrates consistently higher net benefit than established scoring systems (CAPRA-S, SSIGN, EORTC). Quantitatively, at the critical 40–65% decision window (where risk estimates most directly influence treatment decisions), UroFusion-X achieves net benefit improvements of 8–12% relative to clinical baselines. This decision window represents the clinically crucial threshold space in uro-oncology where overtreatment and undertreatment pressures are both substantial: patients with <40% predicted risk are typically observed, those with >65% receive intensive treatment, but those in the 40–65% range require individualized decision-making where accurate risk estimation directly impacts treatment intensity selection.

Specifically, at a 50% risk threshold (a common decision point), UroFusion-X achieves a net benefit of 0.18 (95% CI: 0.15–0.21) compared to 0.09 (95% CI: 0.06–0.12) for CAPRA-S, 0.08 (95% CI: 0.05–0.11) for SSIGN, and 0.10 (95% CI: 0.07–0.13) for EORTC. This means that using UroFusion-X predictions to guide treatment for every 100 patients would result in 18 additional net clinical benefits (where benefit is defined as true positive predictions minus weighted false positives) compared to CAPRA-S’s 9 benefits. Furthermore, UroFusion-X substantially reduces the false-positive risk zone compared with standard clinical models: at 50% threshold, false-positive rate is 12% for UroFusion-X vs. 22–25% for clinical baselines, reducing unnecessary aggressive treatment in low-risk patients misclassified as high-risk.

The DCA analysis further reveals that UroFusion-X maintains a clinical advantage across cancer-type-specific thresholds. For bladder cancer, where aggressive high-grade tumors require intensive surveillance and neoadjuvant therapy, the model provides the greatest benefit at higher thresholds (60–75%). For prostate cancer, where overtreatment concerns are paramount, the model provides the greatest benefit at lower thresholds (30–50%), correctly identifying aggressive subtypes while avoiding overtreatment of indolent disease. For RCC, intermediate thresholds (45–60%) provide the greatest benefit, reflecting RCC’s intermediate treatment aggressiveness relative to bladder and prostate cancers.

The component-level contribution to clinical utility is illuminated by the ablation in Fig. 3f, where gated fusion and uncertainty-aware weighting emerge as primary contributors to decision-level gains. Specifically, removing gated fusion decreases net benefit by 8–10% at the 50% threshold, while removing uncertainty weighting decreases benefit by 4–6%. These components help stabilize predictions near difficult threshold boundaries, where small changes in predicted probability can flip treatment recommendations. The gated fusion mechanism achieves this stability by providing robust modality weighting even under incomplete data, while uncertainty-aware weighting allows the model to appropriately hedge confidence when different modalities provide conflicting signals. This translation of robustness improvements into actionable clinical benefit—rather than merely improving AUROC metrics—demonstrates that UroFusion-X’s design choices are well-aligned with real-world clinical decision-making constraints.

Beyond threshold-specific benefits, DCA reveals that the model provides consistent positive net benefit across all clinically relevant threshold ranges, with no threshold where clinical baselines outperform UroFusion-X (curves do not intersect). This universal superiority, combined with the cancer-type-specific threshold optimization, suggests that UroFusion-X can be deployed as a universal prognostic tool across urological malignancies while allowing clinicians to adjust interpretation thresholds based on cancer-type-specific treatment aggressiveness and patient preferences.

Discussion

In this work, we proposed UroFusion-X, a modality-robust multimodal deep learning framework designed for integrated diagnosis, molecular subtyping, and survival prediction of urological cancers. The experimental results across multi-center cohorts demonstrate that UroFusion-X consistently outperforms unimodal baselines, conventional fusion strategies, and established clinical risk scores across multiple quantitative dimensions. Specifically, the framework achieves AUROC of 0.92 (95% CI: 0.89-0.95) for bladder cancer, 0.90 (0.87-0.93) for RCC, and 0.88 (0.85-0.91) for prostate cancer, representing 6-10 percentage point improvements over imaging-only baselines (average AUROC 0.82) and 4-6 percentage point improvements over standard multimodal fusion (average AUROC 0.86).

Importantly, the framework maintains graceful performance decay under missing-modality scenarios, with average AUROC degradation of only 2.8% when using gated Product-of-Experts fusion (compared to 8.5% degradation with simple concatenation). This robustness represents a critical requirement for deployment in real-world clinical practice where heterogeneous and incomplete data are inevitable due to workflow constraints, cost limitations, or technical failures.

A key strength of UroFusion-X lies in its cross-modal co-attention and gated product-of-experts fusion mechanism, which enables the system to selectively prioritize complementary information across imaging, pathology, genomics, and laboratory data while gracefully handling missing modalities through learned gating weights. This design not only improves predictive accuracy but also enhances model interpretability, as demonstrated through attention heatmaps that highlight anatomically and biologically plausible regions and pathways. Ablation studies confirm that the gated PoE mechanism contributes 4–6% AUROC improvement for diagnosis and 3–5% F1 improvement for subtyping compared to naive concatenation, validating its essential role in stable multimodal integration.

Furthermore, the incorporation of modality dropout during training ensures that the model does not overly rely on any single modality. Removing modality dropout leads to 5–8% AUROC degradation under missing-data scenarios at inference, substantially larger than the 2–3% degradation from removing cross-modal attention, demonstrating that training-time exposure to incomplete modalities is the most critical factor for achieving robust inference-time performance.

Another contribution of this work is the emphasis on multi-task learning, which jointly optimizes diagnosis, subtyping, and prognosis within a single unified architecture. By leveraging shared representations (65% task-agnostic, 35% task-specific variance), the framework achieves 3–5% diagnostic accuracy improvement, 2–4% subtyping improvement, and 0.03-0.05 C-index improvement for survival prediction, compared to single-task baselines. The multi-task formulation also substantially improves robustness under institutional domain shifts: multi-task LOCO degradation ranges 2–6% across centers, whereas single-task baselines show 2–12% degradation, indicating that shared representations provide implicit regularization against distributional heterogeneity. This joint optimization also facilitates clinical decision-making by offering a more holistic view of patient status, bridging diagnostic classification with long-term prognostic assessment.

From a translational perspective, UroFusion-X shows significant potential to complement existing clinical workflows. Decision curve analysis indicates substantially higher net benefit compared to widely used risk scores: at the critical 50% decision threshold (where overtreatment and undertreatment pressures are balanced), UroFusion-X achieves net benefit of 0.18 (95% CI: 0.15-0.21) compared to CAPRA-S (0.09), SSIGN (0.08), and EORTC (0.10), representing a 2-fold improvement in decision-making utility. Furthermore, false-positive rate at this threshold is 12% for UroFusion-X versus 22–25% for clinical baselines, meaning deployment could reduce unnecessary aggressive treatment in approximately 100-130 patients per 1000 at-risk patients, a clinically meaningful harm reduction. The model’s ability to generalize across institutions, validated via leave-one-center-out experiments showing only 2–6% AUROC degradation when holding out individual centers, highlights its suitability for deployment in diverse clinical environments where scanner protocols, staining conditions, and sequencing platforms vary.

The calibration analysis further supports clinical deployment readiness: expected calibration error ranges from 0.08 to 0.12 across cancer types, indicating good agreement between predicted and observed risks in the clinically most important probability ranges (40–60%, where treatment decisions are most uncertain). Subgroup-specific AUROCs remain above 0.86 for all demographic and clinical strata (older patients, low-PSA prostate, high T-stage tumors), substantially exceeding clinical baseline performance (0.78–0.82) and demonstrating equitable performance across diverse patient populations.

Despite these advances, several limitations should be acknowledged. The current study primarily focuses on retrospective multi-center cohorts, and prospective validation is required to confirm clinical utility in real-time settings where workflow pressures, time constraints, and clinician decision-making patterns may differ from offline evaluation. While the framework demonstrates robustness to missing modalities (AUROC degradation of 2–4% when individual modalities are absent), the degree of robustness under extreme data sparsity remains an open challenge—for instance, when only a single modality is available, performance degradation could approach 10–15%, limiting utility in settings where only imaging or only pathology data are accessible.

In addition, although the attention mechanisms and pathway enrichment analysis provide insights into model decision-making, these methods remain largely correlational. The spatial alignment between imaging and pathology ROI attention maps reaches 78% with consistency regularization, but achieving higher human-expert agreement and integrating causal inference frameworks would further enhance clinical interpretability and trust. Furthermore, computational complexity poses a potential barrier to real-time clinical deployment: the full multimodal model requires substantial GPU resources for training (approximately 48–72 h on high-end GPUs) and inference (approximately 2–5 min per patient for full multimodal processing), which may limit accessibility in resource-constrained hospitals.

Additionally, the failure mode analysis reveals that approximately 50–60% of model errors stem from data quality issues (pathology sampling misses, imaging artifacts, genomic contamination) rather than fundamental algorithmic limitations, suggesting that clinical deployment will require careful attention to standardized data collection protocols and quality assurance pipelines. Future work should focus on adaptive knowledge distillation or generative imputation strategies to better handle extreme data sparsity scenarios, and on integration with electronic health records to automatically filter and standardize diverse data sources.

Looking ahead, future work may focus on extending UroFusion-X to incorporate temporal and longitudinal data, such as treatment history, follow-up imaging, or serial biomarker measurements, thereby enabling more precise disease trajectory modeling and early detection of treatment resistance. Another promising direction lies in scaling to foundation models pre-trained on large-scale multi-institutional datasets, which may further improve generalization across rare subtypes and under-represented patient populations. Finally, integration with electronic health records and prospective trials will be essential steps toward translating this framework into a clinically deployable decision-support tool with demonstrated real-world impact on patient outcomes.

While UroFusion-X demonstrates strong performance across multi-center cohorts, several methodological and practical limitations warrant careful consideration. First, the study is based primarily on retrospective datasets, which, although diverse and multi-institutional, may not fully capture the variability and workflow constraints encountered in prospective clinical settings. The framework was optimized on historical data where modality acquisition decisions were already made, whereas prospective deployment may encounter different patterns of missing data, different acquisition protocols, or different case distributions reflecting changing clinical practices. Prospective validation and real-world deployment studies are required to confirm the framework’s clinical utility and to assess whether the 0.18 net benefit demonstrated on retrospective data translates to similar benefits in real-time clinical decision-making.

Second, although modality dropout improves robustness to incomplete inputs, the model’s performance still degrades when multiple modalities are simultaneously absent. For instance, when both imaging and pathology are missing (a scenario that might occur in resource-limited settings where genomic sequencing is prioritized), diagnostic AUROC degradation reaches 8–10%, reducing to 0.82-0.84 from the full-modality baseline of 0.90. The model’s applicability in settings where only a single modality is available is therefore limited. Future work should explore adaptive knowledge distillation from a complete-modality teacher model or generative imputation strategies to better handle extreme data sparsity scenarios, particularly for resource-limited deployment contexts.

Third, although attention heatmaps and pathway-level attributions provide some interpretability, these methods remain largely correlational and may not always reflect causal relationships underlying predictions. Spatial alignment between imaging and pathology attention maps reaches 78% with consistency regularization, which is clinically reasonable but still leaves 22% of attention misalignment that could confound interpretation. Additional incorporation of expert-defined anatomical or pathological priors, as well as formal causal inference frameworks, could enhance interpretability and clinician trust. Furthermore, the model’s learned feature hierarchies may capture patterns meaningful for prediction without necessarily reflecting established oncological knowledge, potentially leading to unexpected or difficult-to-explain recommendations in edge cases.

Fourth, computational complexity poses a practical barrier to widespread clinical deployment. Training the full multimodal architecture requires approximately 48–72 h on high-end GPUs (8 × NVIDIA A100 or equivalent), and inference on a single patient requires 2–5 min of GPU time for complete multimodal processing (compared to seconds for simpler baseline models). This computational burden may limit accessibility in hospitals with limited computational infrastructure or those operating under tight time constraints (e.g., in rapid diagnostic settings for acute oncological emergencies). Model compression techniques, knowledge distillation into lightweight student models, and efficient inference pipelines using quantization or pruning will be necessary to ensure practical adoption in diverse clinical settings.

Finally, the improvement margins over clinical baselines, while statistically significant, are modest in absolute terms for some metrics. For instance, AUROC improvements over CAPRA-S average 6-10 percentage points, which translates to approximately 60–100 additional correct classifications per 1000 patients—clinically meaningful but not transformative. This suggests that UroFusion-X should be positioned as a complementary tool to clinical judgment rather than a replacement for clinician expertise, and that clinical validation should explicitly assess whether modest predictive improvements translate to changes in clinician behavior and patient outcomes.

In this work, we proposed UroFusion-X, a unified and modality-robust multimodal deep learning framework for the integrated diagnosis, molecular subtyping, and prognosis prediction of urological cancers. By combining cross-modal co-attention with a gated Product-of-Experts (PoE) fusion mechanism, and by employing a two-stage training protocol with modality dropout, our framework effectively addresses the fundamental challenge of missing or incomplete modalities, which is pervasive in real-world clinical practice.

Through extensive multi-center evaluations across bladder, renal, and prostate cancer cohorts (70%–15%–15% training-validation-test split, validated via leave-one-center-out protocol), UroFusion-X consistently outperformed strong baselines across all three cancer types and multiple clinical metrics. For diagnosis, the framework achieved AUROC of 0.92 (bladder), 0.90 (RCC), and 0.88 (prostate), representing 6–10 percentage point improvements over imaging-only baselines and 4–6 percentage point improvements over standard multimodal fusion. For molecular subtyping, F1-scores of 0.88 (bladder) and 0.85 (RCC) exceeded pathology-only baselines (average F1 0.78) and standard multimodal approaches (average F1 0.82). For survival prediction, C-indices of 0.75 (bladder), 0.73 (RCC), and 0.71 (prostate) substantially exceeded clinical scoring systems (0.60–0.64), translating to 10-15 percentage point improvements in discrimination.

The framework demonstrated robust performance under realistic deployment scenarios: missing-modality AUROC degradation averaged 2.8% with gated PoE fusion (compared to 8.5% with naive concatenation), cross-center generalization showed only 2–6% AUROC decline in leave-one-center-out validation, and calibration quality (ECE 0.08–0.12) supported confident clinical risk estimates. Multi-task learning provided substantial robustness gains, reducing LOCO degradation variance from 2–12% (single-task) to 2–6% (multi-task), demonstrating that shared representations effectively regularize against distributional heterogeneity.

From a clinical utility perspective, decision curve analysis showed net benefit of 0.18 (95% CI: 0.15–0.21) at the critical 50% risk threshold, representing 2-fold improvement over CAPRA-S (0.09), SSIGN (0.08), and EORTC (0.10), with false-positive rate reduction from 22–25% to 12%, potentially reducing unnecessary aggressive treatment in approximately 100–130 patients per 1000 at-risk patients.

Beyond technical performance metrics, ablation studies revealed the critical contributions of each architectural component: gated PoE fusion (4–6% AUROC improvement), modality dropout (5–8% robustness improvement), and consistency regularization (78% vs 52% spatial alignment), validating the importance of our design choices for addressing real-world deployment challenges.

UroFusion-X highlights the potential of multimodal AI systems to support precision oncology by integrating radiology, pathology, genomics, and laboratory data into a single coherent decision-support pipeline. The framework’s demonstrated robustness to missing modalities, cross-institutional generalizability, and clinical utility position it as a promising candidate for deployment in diverse clinical environments. Looking forward, prospective validation in real-time clinical settings, integration with electronic health record systems, development of lightweight deployable model variants to address computational constraints, and formal assessment of impact on clinician behavior and patient outcomes will be essential next steps toward translating this research into routine clinical workflows and ultimately improving precision oncology decision-making for urological cancer patients.

Methods

Framework overview

We propose UroFusion-X, a unified and modality-robust multimodal deep learning framework designed for integrated diagnosis, molecular subtyping, and prognosis prediction of urological cancers, including bladder, kidney, and prostate cancer. Unlike traditional siloed approaches that analyze imaging, pathology, genomic, and laboratory data independently, UroFusion-X leverages a holistic design to learn synergistic representations across heterogeneous clinical modalities. The framework is built on modality-specific encoders tailored to the unique characteristics of each input source (3D Transformer-based backbone (Swin-UNETR) for CT/MRI, multi-instance learning encoder for whole-slide pathology images, graph neural networks for genomic profiles, and transformer-based tabular models for laboratory indices). These encoders are coupled via a two-stage fusion strategy: first, a cross-modal co-attention mechanism that enables token-level information exchange across modalities; second, a gated Product-of-Experts (PoE) fusion module that adaptively weights each modality’s contribution based on its relevance and availability.

A key innovation of UroFusion-X lies in its robustness to missing modalities, a common scenario in real-world clinical workflows where patients may lack certain examinations due to cost, accessibility, or contraindications. The gated PoE module adaptively recalibrates the contribution of available modalities based on learned gating signals that weight modality-specific embeddings, ensuring stable performance under partial modality availability. To further enhance interpretability and cross-modal coherence, we introduce two consistency constraints: (i) anatomical–pathological alignment, which encourages spatial correspondence between radiological regions-of-interest (identified via Grad-CAM) and high-attention pathology patches (identified via MIL), thereby aligning diagnostic signals across imaging and histopathology modalities; and (ii) cross-modal contrastive alignment, which uses patient-level InfoNCE-based loss to align embeddings across all modalities (imaging, pathology, genomics, and laboratory data), thereby improving shared semantic structure and out-of-distribution generalization. On top of the fused representation, survival analysis heads (DeepSurv and DeepHit) are integrated in parallel to support non-linear hazard estimation and competing risk dynamics, thereby enabling precise risk stratification.

Overall, UroFusion-X provides an end-to-end pipeline that not only unifies multimodal inputs but also delivers clinically meaningful outputs spanning diagnosis, subtype classification, and prognosis estimation. This design positions the framework as a potential solution that can support decision-making, reduce reliance on individual modalities when data are incomplete, and inform efforts toward precision oncology in urological cancer management.

Data acquisition and preprocessing

Our study utilizes publicly available datasets that have been widely used in computational oncology research and medical imaging challenges. The primary datasets comprise: (i) radiological imaging from multi-institutional CT/MRI repositories; (ii) whole-slide histopathology images from publicly annotated digital pathology collections; (iii) genomic profiles from open-access sequencing databases; and (iv) laboratory and clinical variables harmonized across multiple sources. These datasets inherently capture heterogeneity across diverse imaging protocols, histopathological staining conditions, genomic sequencing platforms, and laboratory assay devices, reflecting real-world variability in urological cancer management. Such diversity arises naturally from the multi-institutional origins of these public collections, where differences in CT/MRI scanner vendors, acquisition parameters, staining procedures, and sequencing protocols contribute to substantial variability.

Rather than enforcing artificial balance, we leverage the natural heterogeneity present in these public datasets, which span multiple geographic regions, patient demographics, and equipment generations, thereby ensuring exposure to technical and population-level diversity during model development.

The datasets include four major modalities: (i) radiological imaging (CT and MRI) from multicenter public repositories for tumor localization and staging; (ii) digitized whole-slide histopathology images from annotated public collections for morphological profiling; (iii) genomic data from open-access sequencing databases for molecular subtype discovery; and (iv) laboratory test results compiled from public clinical datasets to provide routine clinical context. All data are de-identified and publicly available, requiring no additional IRB approval or patient consent, as they have already been ethically reviewed and released by the data providers. Patient records within each dataset were standardized with clinical annotations including tumor grade, TNM stage, and follow-up survival outcomes, following the annotation protocols established by the original data repositories.

The use of publicly available datasets addresses several important considerations: (1) it enables reproducibility, as other researchers can directly access the same data sources; (2) it avoids concerns related to patient privacy and data governance that arise with proprietary datasets; and (3) it facilitates cross-dataset evaluation and model generalization assessment. By training and evaluating on these multi-institutional public datasets, the proposed framework learns representations that are robust to the inherent variability in data acquisition, staining, sequencing, and clinical annotation protocols. This multimodal fusion across diverse public sources provides a realistic foundation for assessing model performance under distributional heterogeneity and missing-modality scenarios commonly encountered in clinical practice.

Figure 6 illustrates our comprehensive multi-modal data acquisition and preprocessing pipeline, demonstrating the systematic approach to handling four distinct data modalities. The preprocessing pipeline ensures data consistency while preserving modality-specific characteristics essential for downstream analysis.

Medical Imaging Processing: Three-dimensional medical imaging data, including CT, MRI, and ultrasound volumes, underwent standardized preprocessing to ensure spatial and intensity consistency. DICOM volumes were resampled to isotropic resolution using trilinear interpolation, with voxel spacing normalized to 1.0 mm3. Regions of interest were extracted through semi-automated segmentation algorithms, combining atlas-based initialization with patient-specific refinement. Intensity normalization employed Hounsfield unit range standardization for CT images (window: −1000 to 3000 HU) and z-score normalization for MRI and ultrasound data to account for inter-scanner variability.

Histopathology Image Processing: Whole-slide images (WSIs) were systematically processed to enable computational analysis at scale. Each slide was tessellated into non-overlapping 256 × 256 pixel patches at ×40 magnification, ensuring sufficient resolution for cellular morphology analysis. Color distribution standardization was achieved through Macenko normalization, which separates hematoxylin and eosin staining components and normalizes their distributions to a reference template, thereby reducing staining variability across institutions.

Genomic Data Processing: Genomic profiles derived from whole-exome sequencing or targeted gene panels underwent rigorous quality control and normalization procedures. Raw expression counts were normalized using DESeq2’s variance stabilizing transformation to account for library size differences and overdispersion. Feature selection employed a two-stage approach: variance-based filtering removed genes with minimal variation across samples (bottom 10th percentile), followed by mutual information-based selection of the top-K most informative features relative to clinical outcomes.

Laboratory Data Processing: Clinical laboratory variables and demographic information were processed to handle the inherent missingness patterns in clinical data. Missing values were imputed using multivariate imputation by chained equations (MICE) with predictive mean matching for continuous variables and logistic regression for categorical variables. Subsequently, all continuous variables underwent z-score standardization to ensure comparable scales across diverse laboratory measurements.

Architecture design

The framework employs four modality-specific encoders, each optimized for the unique characteristics of its respective data type, working in parallel to extract rich representations from heterogeneous inputs. The encoder design philosophy prioritizes both representational capacity and computational efficiency while ensuring compatibility with the downstream fusion mechanism. All encoders are designed to produce fixed-dimensional output embeddings that are compatible with the cross-modal fusion mechanism described in the section “Architecture Design”.

3D Imaging Encoder: Volumetric medical images are processed using a 3D Swin-UNETR backbone, leveraging the hierarchical attention mechanisms of Swin Transformers adapted for three-dimensional medical data. The encoder incorporates masked autoencoder (MAE) pretraining to learn robust spatial representations from unlabeled imaging data. The architecture processes 3D patches through shifted window attention, producing context-rich embeddings that capture both local anatomical details and global spatial relationships within the imaging volume. The output of the imaging encoder is a fixed-dimensional feature vector denoted \({f}_{{\rm{img}}}\in {{\mathbb{R}}}^{d}\), where d is the embedding dimension.

Pathology Encoder: Histopathology analysis employs a transformer-based multiple instance learning (MIL) framework specifically designed for weakly supervised learning on gigapixel whole-slide images (WSIs). Patch-level features are extracted using a Vision Transformer (ViT) encoder, with each 256 × 256 pixel patch treated as an instance. An attention pooling mechanism aggregates patch-level representations into slide-level descriptors, enabling the model to identify and focus on diagnostically relevant tissue regions without requiring patch-level annotations. Formally, given a WSI with n patches, the ViT encoder produces patch-level features {v1, v2, …, vn}, and the attention pooling computes \({f}_{{\rm{path}}}=\mathop{\sum }\limits_{i=1}^{n}{\alpha }_{i}{v}_{i}\), where αi are learned attention weights that sum to 1. The output is a slide-level feature vector \({f}_{{\rm{path}}}\in {{\mathbb{R}}}^{d}\).

Genomics Encoder: Molecular profiling data is represented as graphs where nodes correspond to individual genes and edges encode curated pathway interactions derived from KEGG, Reactome, and BioCarta databases. This graph neural network (GNN) approach explicitly incorporates known biological relationships, enabling the model to leverage pathway-level patterns rather than treating genes as independent features. The GNN employs graph convolution operations to propagate information along biological pathways, producing embeddings that reflect both individual gene expression and pathway-level dysregulation. The GNN aggregates node-level gene expression information through learned message passing over pathway edges, ultimately producing a graph-level embedding \({f}_{{\rm{gen}}}\in {{\mathbb{R}}}^{d}\) that represents the integrated genomic profile.

Laboratory Encoder: Clinical variables and laboratory measurements are processed through a TabTransformer architecture that applies attention mechanisms to heterogeneous tabular data. The model learns embeddings for categorical variables while applying learned transformations to continuous measurements, capturing complex dependencies between diverse clinical parameters that traditional linear models might miss. The TabTransformer concatenates embeddings from all variables (both categorical and normalized continuous) and processes them through transformer blocks, producing a final feature vector \({f}_{{\rm{lab}}}\in {{\mathbb{R}}}^{d}\) that summarizes the clinical and laboratory context.

Summary of Encoder Outputs: The four encoders produce feature vectors of compatible dimensions: \({f}_{{\rm{img}}},{f}_{{\rm{path}}},{f}_{{\rm{gen}}},{f}_{{\rm{lab}}}\in {{\mathbb{R}}}^{d}\). These vectors are subsequently input to the cross-modal co-attention and gated fusion mechanisms described in the section “Architecture Design”, enabling principled integration of heterogeneous modalities.

Our fusion architecture implements a carefully designed two-stage process that maximizes information integration while maintaining robustness to missing data. The two stages are: (1) cross-modal co-attention for token-level information exchange across modalities, and (2) gated product-of-experts for adaptive modality weighting. Together, these stages enable principled multimodal integration that is inherently resilient to incomplete modality availability.

Cross-Modal Co-Attention Mechanism: The first fusion stage implements a cross-modal co-attention mechanism that aligns semantic spaces across different modalities. This attention-based approach enables token-level information exchange, allowing the model to identify and emphasize shared pathological patterns across imaging, pathology, genomics, and laboratory data. The co-attention mechanism computes attention weights that highlight complementary information across modalities, facilitating the discovery of multi-modal biomarkers that might be invisible to single-modality analysis. Formally, given encoded features \({f}_{{\rm{img}}},{f}_{{\rm{path}}},{f}_{{\rm{gen}}},{f}_{{\rm{lab}}}\in {{\mathbb{R}}}^{d}\) from the four encoders, the co-attention mechanism computes pairwise attention between any two modalities. For modalities i and j, the attention weight matrix is computed as \({A}_{ij}=\,{\rm{softmax}}\,({f}_{i}^{T}W{f}_{j}/\sqrt{d})\), where W is a learned projection matrix. These cross-modal attention weights enable the model to dynamically align representations across modalities, emphasizing complementary information that would be missed by independent processing.

Gated Product-of-Experts Fusion: The second fusion stage employs a gated PoE module that adaptively balances contributions from each available modality. Concretely, for each available modality i, a learnable gating function wi = σ(Wgatefi + bgate) computes a scalar gate that weights the modality’s contribution. The gated modality representation is then computed as pi = softmax(wi ⊙ fi), where ⊙ denotes element-wise multiplication. The final fused representation is obtained by aggregating the gated contributions: \({f}_{{\rm{fusion}}}={\sum }_{i}{m}_{i}\cdot {p}_{i}\), where mi is a binary mask indicating whether modality i is available (1) or missing (0) for a given patient. This formulation has the key advantage that missing modalities can be naturally excluded from the fusion operation simply by setting mi = 0, without requiring any architectural changes or model retraining.

Algorithm 1 formalizes this gated PoE fusion process. The learnable gates are conditioned on both modality-specific embeddings and the presence mask, enabling the model to learn task-specific importance weights for each modality. This design naturally accommodates inference scenarios with incomplete modality sets by excluding missing modalities from the aggregation operation, a critical advantage for clinical deployment where data availability is often heterogeneous.

Algorithm 1

Gated product-of-experts fusion

Require: Encoded features {fimg, fpath, fgen, flab}; modality mask m

1: for each available modality i do

2: wi ← σ(Wgate fi + bgate)

3: pi ← softmax(wi ⊙ fi)

4: end for

5: \({f}_{{\rm{fusion}}}\leftarrow {\sum }_{i}{m}_{i}\cdot {p}_{i}\)

6: return ffusion

The fused representation \({f}_{{\rm{fusion}}}\in {{\mathbb{R}}}^{d}\) produced by the gated PoE module serves as the shared input to the three parallel task heads (diagnosis, molecular subtyping, and survival prediction) described in the section “Architecture Design”.

The fused multimodal representation \({f}_{{\rm{fusion}}}\in {{\mathbb{R}}}^{d}\) from the cross-modal fusion stage (the section “Architecture Design”) serves as a shared latent embedding that is jointly optimized across three clinically relevant downstream objectives. To exploit both shared information and task-specific nuances, we adopt a multi-task learning (MTL) paradigm with parallel task heads. This design not only maximizes parameter efficiency but also enforces complementary supervision signals that regularize the shared backbone. The three task heads—diagnosis, molecular subtyping, and survival prediction—operate in parallel, each receiving the same fused representation as input and producing task-specific outputs.

Task Head 1: Diagnosis and Tumor Grading. The first task head focuses on predicting cancer type and stage. A fully connected projection layer zdiag = Wdiagffusion followed by a softmax activation generates categorical predictions over cancer type (bladder, kidney, prostate) and stage (low-grade vs. high-grade). The output is a probability distribution \({\widehat{y}}_{{\rm{diag}}}\) over diagnostic categories, obtained via \({\widehat{y}}_{{\rm{diag}}}={\rm{softmax}}({z}_{{\rm{diag}}}/{T}_{{\rm{diag}}})\), where Tdiag is a temperature parameter for probability calibration. This module mimics the radiologist’s workflow of morphological assessment and provides interpretable probability distributions for classification, trained with cross-entropy loss (with label smoothing to reduce overconfidence).

Task Head 2: Molecular Subtyping. The second task head addresses molecular subtyping, with particular emphasis on clinically actionable categories (e.g., basal versus luminal subtypes in bladder cancer). We employ a multilayer perceptron (MLP) that projects the fused representation to subtype predictions: zsub = MLPsub(ffusion), followed by softmax to produce \({\widehat{y}}_{{\rm{sub}}}={\rm{softmax}}({z}_{{\rm{sub}}}/{T}_{{\rm{sub}}})\). The output is a probability distribution over molecular subtypes, enabling the model to integrate histopathological morphology with genomic signatures. This head is trained with cross-entropy loss incorporating focal loss variants and class-balanced re-weighting to handle imbalanced subtype distributions, thereby supporting precision oncology applications.

Task Head 3: Survival Analysis and Prognosis Prediction. The third task head performs survival analysis and risk stratification. Rather than committing to a single survival model, we implement two alternative paradigms: (i) a DeepSurv-inspired Cox proportional hazards network that directly estimates risk scores \({\widehat{h}}_{{\rm{surv}}}={\rm{DeepSurv}}({f}_{{\rm{fusion}}})\) while preserving the relative hazard assumption, and (ii) a DeepHit-based discrete-time survival model that predicts survival probability distributions \({\widehat{h}}_{{\rm{surv}}}={\rm{DeepHit}}({f}_{{\rm{fusion}}})\) over time intervals and naturally accommodates censored outcomes. The choice of survival architecture can be flexibly adapted depending on the clinical endpoint, such as overall survival (OS) or progression-free survival (PFS), and both models can be evaluated in parallel during training.