Abstract

This paper presents a comprehensive, task-centric synthesis that reframes the development of Oracle bone inscriptions (OBI) information processing as a coevolution between methodologies and their underlying data substrate. Critical challenges and potential research directions are discussed for building trustworthy, efficient OBI information processing systems that function as true partners for frontline researchers, enabling them to contribute to key debates on the social structure and periodization of the pre-Qin period.

Similar content being viewed by others

Introduction

Oracle Bone Inscriptions (OBI) represent the earliest known system of ancient Chinese writing, dating primarily to the late Shang dynasty (c. 1300–1046 BCE), which were typically incised on turtle plastrons and ox scapulae for pyromantic divination1. Studies on OBI offer unparalleled insights into the phonology, morphology, and semantics of archaic Chinese. Beyond their profound linguistic value, the divinatory texts constitute an invaluable primary source for understanding the history, religion, governance, and societal structure of China’s Bronze Age.

The methodology of OBI processing has always been a unique and formidable set of challenges rooted in the artifacts’ physical nature and the script’s intrinsic properties. Foremost among these is the severe fragmentation of the OBI. The majority of bones and plastrons were broken after thousands of years of natural weathering and man-made destruction, scattering related texts across massive fragments. The monumental task of fragment rejoining, a complex, high-dimensional puzzle, is often a prerequisite for coherent semantic analysis, which, prior to the popularization of image processing technologies, relied exclusively on decades of painstaking manual assembly by oracle bone experts2. Moreover, at the paleographic level, the script is characterized by a high degree of allography, with a single character possessing numerous morphological variants, and glyph similarity, where distinct oracle bone characters bear a strong visual resemblance3. Meanwhile, the specific noises in the OBI rubbings, including stroke-broken, bone-cracked, abnormal edges, and dense white regions4, further challenge the recognition of oracle bone characters. Lastly, the ultimate goal of OBI processing is to decipher them. Since the first discovery of oracle bones in 1899, over 4,500 character classes have been excavated, with only about one-third of them successfully deciphered or interpreted. One major obstacle lies in the scarcity of the corpus and the narrow contextual scope. The vast majority of OBI texts are divinatory records, which are highly formulaic and restricted in semantic space. A related difficulty is the pictographic and ideographic nature of many graphs. While their iconographic logic is sometimes apparent, the original visual metaphor is often lost to time or stylized beyond intuitive recognition. This forces their decipherment to rely heavily on comparative paleography, i.e., tracing a glyph’s evolution forward to more stable forms in bronze inscriptions or bamboo slips, which is often speculative5.

Driven by advances in artificial intelligence (AI) technology, especially the proliferation of large generative models, the field of OBI processing is continuously evolving and also changing traditional OBI experts’ perspectives. In recent years, a large number of OBI papers that cross various techniques have been published (Fig. 1), which, to a certain extent, may obscure the truly pivotal points. Due to interdisciplinary issues, the key questions that the archeological linguists or historians focus on may have a large gap with computer scientists or AI algorithm experts. Therefore, conducting a thorough survey in the OBI field is of great significance, which will help gain a comprehensive understanding of the relevant literature and shed light on the future development direction.

Results cover the last twenty years, from 2005 to 2024. We can observe a relatively stable publication output before 2017. Between 2017 and 2022, as deep learning techniques gained widespread adoption, the number of OBI studies increased steadily. More recently, with the advancement of large language models, the volume of relevant articles has shown rapid acceleration, reflecting growing interdisciplinary interest in large language models across different OBI tasks.

Related surveys

Although a large number of OBI studies have been carried out, comprehensive review articles that synthesize and critically analyze these works remain conspicuously scarce. Table 1 summarizes the existing surveys in the field. Xing et al.6 provided an overview of OBI detection models, such as Faster R-CNN7, SSD8, YOLO9, RFBnet10, and RefineDet11, to select an appropriate architecture for OBI detection that achieves the speed/memory/accuracy balance for a given platform. Diao et al.12 gave a survey on ancient script image recognition methods in 2025. They categorized existing studies by script type and analyzed the corresponding recognition methods, highlighting both their differences and shared strategies. Challenges unique to ancient script recognition and recent solutions, including few-shot learning and noise-robust techniques, are also reviewed. Recently, Li et al.13 presented a structured survey of the current landscape of oracle character recognition (OrCR) research, including an overview of the primary benchmark datasets and digital resources available for OrCR, as well as a review of contemporary research methodologies with their respective utilities, limitations, and applicability.

To the best of our knowledge, the aforementioned articles are the only existing surveys related to the OBI research. However, they have narrowly focused on a single facet, i.e., the recognition task, overlooking the systematic integrity and intrinsic interdisciplinarity of OBI studies. Some key tasks and new emerging areas in OBI research are not included. For example, OBI dataset construction, OBI rejoining, OBI classification, OBI deciphering, and many related up-to-date AI techniques have all been intensively researched in recent years. These emerging topics represent new trends in OBI processing, but they are missing from almost all current OBI surveys.

Considering the limitations of the current OBI surveys described above, a comprehensive and up-to-date survey, including not only traditional OBI processing measures but also whole-process OBI processing tasks from excavation to digital preservation, is greatly needed for a better top-down understanding of the history, current state-of-the-art, and future trend of the field. This survey is written to fulfill that need.

Survey contribution and novelty

This survey makes the following unique contributions:

-

Comprehensive Review: It provides a thorough review of the existing OBI processing techniques with surveyed OBI papers several times more than those of the previous surveys, highlighting both data and methodology perspectives across different tasks.

-

Integrating Emerging Technologies: It situates current OBI processing methods within the context of emerging technologies such as deep learning, generative models, and large language models.

-

Identification of Challenges: It discusses the key challenges and limitations in the current state of OBI research, especially the applicability of the current evaluation approaches.

-

Guiding Future Research: Several promising research directions for future works are identified and detailed, encompassing a variety of aspects such as text-to-OBI generation, specific evaluation metric design, multimodal learning for OBI deciphering, and 3D reconstruction of OBI fragments.

Survey methodology and organization

To ensure a holistic and systematic review of the literature on OBI processing, a clear and structured survey methodology is important. The methodologies for conducting a thorough survey, including literature search, selection criteria, taxonomy, analysis, and synthesis, are summarized as follows.

-

Literature search: Considering the several major fields related to OBI processing, such as archeology, philology, computer science, signal processing, and linguistics, etc., we utilize mainstream academic databases such as Google Scholar, IEEE Xplore, ACM Digital Library, SpringerLink, and Web of Science as the searching platform. Besides, we focus on prominent international and Chinese journals in the fields of multimedia, computer vision, artificial intelligence, and historic preservation, such as IEEE Transactions on Image Processing, Pattern Recognition, npj Heritage Science, and conferences like ICLR, ACM MM, CVPR, ICCV, ACL, AAAI, etc. Moreover, we use a combination of keywords related to OBI, such as “oracle bone inscriptions", “oracle character recognition", “rejoining oracle bone inscriptions", “oracle bone inscription datasets","deep learning in OBI processing", and “deciphering OBI" to search the relevant papers.

-

Selection criteria: The inclusion criteria include topic relevance, methodology, novelty, impact, etc. The exclusion criteria include language, study quality, publication type, redundancy, etc.

-

Taxonomy: We categorize the papers according to the OBI datasets, OBI processing-related techniques, and task-specific OBI processing works.

-

Analysis: We present a systematic review of OBI information processing studies, charting the evolution from traditional methodologies to modern AI-driven approaches. We conduct a task-by-task introduction and analysis while highlighting the distinct characteristics of the OBI datasets derived from these tasks.

-

Synthesis: Finally, based on the current challenges in the digitization, preservation, and interpretation of paleography, and in conjunction with the technological trajectory of AI, we overview future research and development trends in this field.

We confine our survey pool to encompass technical papers published in the last 20 years, i.e., 2005–2025. Note that this does not limit the citation and reference of the work of early experts and scholars, considering the long-lasting characteristic of OBI research. We include more OBI tasks and related papers that are considered important but have not been included in existing OBI surveys. The taxonomy of this survey is shown in Fig. 2. First, we outline four key stages in the development of OBI research, each embodying distinct methodology designs, data formats, and technical challenges. This historical perspective not only situates the current state of the field but also clarifies the motivations for the directions explored in later sections of this survey. Second, we review the existing OBI datasets for specific tasks. Third, we summarize mainstream OBI preprocessing methodologies, including traditional image processing and generative model-based data augmentation, and review OBI processing methods for different tasks, including OBI recognition, OBI rejoining, OBI classification, and OBI deciphering. Fourth, the evaluation of OBI processing methods in different tasks is discussed, with a comparison of their advantages and disadvantages. Fifth, we summarize challenges and outlooks for future trends in OBI processing, and finally, we conclude the whole paper.

*The website reference of YinQiWenYuandetection and OBI-IJDH are (https://jgw.aynu.edu.cn/home/down/detail/index.html?sysid=3) and (http://www.ihpc.se.ritsumei.ac.jp/OBIdataseIJDH.zip), respectively.

Evolving paradigms of OBI processing and discussion

The evolution of OBI information processing has undergone a series of significant paradigm shifts (Fig. 3), reflecting a deeper integration of frontier AI techniques with expert-calibrated cognitive processes. In this section, we outline four key stages in the development of OBI processing studies, each embodying distinct mainstream methodologies, effectiveness analyses, and technical challenges. This historical perspective not only situates the current state of the field but also clarifies the motivations for the directions explored in later sections of this survey.

We show some representative research directions in each stage.

Stage 1: Traditional expert-led methodologies

In the early days, the processing of OBI was conducted mainly by manually collating and proofreading ancient books. Scholars such as Yirong Wang, Guowei Wang, Zhenyu Luo, Moruo Guo, Houxuan Hu, Xigui Qiu, Tianshu Huang, Dekuan Huang, and Zhenhao Song have made outstanding contributions in both theoretical14,15 and practical16,17 aspects of the field. At that time, after being unearthed, the publication of oracle bone materials typically involved reproducing the inscriptions through ink rubbings, tracings, or photographs, and then compiling these reproductions into printed volumes. Among them,18 remains the most comprehensive catalog to date, containing the largest number of oracle bones ever published, complete with plates, transcriptions, and indexes. Based on these, the deciphering and interpretation of the script constitute the primary task of early oracle bone studies.

Beyond paleographic deciphering, significant progress has also been made in the chronological and periodization studies of oracle bone inscriptions. Zuobin Dong19 made a landmark contribution, dividing 273 years of oracle bone materials into five chronological phases. Later, modern scholars revised this framework by first classifying the inscriptions based on script features and other characteristics and then determining the temporal affiliation of each class, proposing a new two-lineage hypothesis to replace the traditional five-period model18. Other specialized studies, such as the research on the graphical system and the morphological formation system of OBI20,21, encompass both intrinsic analyses of ancient character systems and diachronic explorations, contributing significantly to our understanding of the structural and evolutionary mechanisms of early Chinese writing.

However, the traditional expert-led methodology that dominated early oracle bone research, while foundational, was inherently constrained by human limitations. The primary drawback was a profound lack of efficiency. Progress was painstakingly slow, as every task, from deciphering individual characters and transcribing inscriptions to manually searching for and matching fragmented pieces, depended entirely on the laborious, time-consuming efforts of a few highly trained scholars. Furthermore, this approach suffered from low reproducibility and high subjectivity. Interpretations often relied heavily on an individual expert’s intuition, memory, and accumulated private knowledge. This tacit knowledge was difficult to formalize or transfer, making it challenging for other researchers to independently verify findings or systematically build upon previous work, creating a significant bottleneck for scaling research and validating results objectively.

Stage 2: Computer-aided digital image processing

This stage, from the 1990s to around 2012, marked a transformative era for digital image processing. Research in the OBI domain primarily focused on image enhancement22, restoration (e.g., histogram equalization and spatial filtering), and introduced local feature descriptors, such as scale-invariant feature transform (SIFT) and histogram of oriented gradients (HOG)23 (http://digital.library.wisc.edu/1793/67547). These algorithms allowed computers to improve OBI image quality for downstream tasks and identify distinctive structures across images despite changes in scale, rotation, or illumination.

Wang et al. (https://nlpr.ia.ac.cn/2006papers/gjhy/gh75%20.pdf) utilized the wavelet transform to remove noise of different defective rubbings of oracle bone. In24, the author proposed a mesh point feature extraction algorithm for neat oracle bone rubbings, which utilizes coarse mesh relative address, resulting in a decent recognition performance improvement. Liu et al.25 presented a local boundary descriptor with a two-dimensional fragment contour matching algorithm based on angular sequence. Wang et al.26 proposed an Euclidean distance-based contour matching approach for extracting and tracking contour information from oracle shell images. Furthermore, at a time when open-source awareness was still in its infancy, demonstrating data ownership and integrity, especially for sensitive data such as OBI, was crucial for safeguarding cultural heritage. Liu et al.27 designed a wavelet watermarking embedding and extraction pipeline for OBI images, which exhibits strong robustness to photo brush tampering and image clipping.

Nevertheless, algorithms at this stage are predominantly susceptible to noise and degradation, which often struggle to distinguish genuine character strokes and the stochastic background noise common in rubbings, such as cracks, erosion, and uneven ink distribution. Furthermore, this stage suffered from a profound semantic gap. These methods treated characters merely as rigid geometric patterns rather than semantic entities, relying on hand-crafted features that lacked the representational capacity to handle intra-class variance.

Stage 3: Data-driven deep representation and feature learning

The advent of deep convolutional neural networks (DCNNs)7,28,29 marked a pivotal shift in OBI processing: moving from expert-centric manual procedures to data-driven automatic frameworks. These models achieved strong performance in tasks such as OBI character recognition30,31,32, rejoining33,34,35,36, classification37,38,39, and retrieval40,41,42, laying the foundation for the subsequent construction of large-scale OBI databases.

This stage of research begins to combine low-level directional features with mid- and high-level encodings within a CNN framework to better capture the stroke characteristics of OBI characters43,44,45, which is further improved by Transformer architectures46,47,48. This shift enables the modeling of long-range structural dependencies, which is particularly crucial for OBI, where self-attention mechanisms allow models to infer complete glyph structures despite significant physical degradation or fragmentation. A similar multiscale feature integration scheme49 was also proposed to align features across disparate domains to facilitate cross-modal OBI retrieval. Meanwhile, to combat the severe data scarcity of rare characters, adversarial data augmentation (e.g., AGTGAN50, Oracle-P15k3) was employed to generate synthetic samples, transferring styles between handprinted glyphs and authentic rubbings. For zero-shot and open-set recognition, frameworks like RZCR51 and ACCID52 detect sub-character components (radicals) first and utilize knowledge graphs or DCNNs to infer the identity of novel characters based on structural composition rules. Then, with the emergence of self-supervised learning (e.g., pre-training on large-scale, unlabeled ancient script corpora), models can now acquire robust paleographic intuition without relying solely on scarce expert-annotated labels, effectively mitigating the long-tail distribution problem in OBI datasets53. In the OBI rejoining task, researchers34,54 employed a dedicated deep neural network to serve as a binary classifier to verify texture continuity after preliminary edge matching.

Although significant progress has been made at this stage, there still exist many challenges. First, the distribution of OBI data is extremely imbalanced. Most undeciphered characters often appear only once or survive solely in fragmentary rubbings. Existing deep learning models readily overfit or completely fail on tail categories. Although generative AI-based data augmentation techniques have been exploited to perform style transfer between handprinted sketches and rubbings, the quality and diversity of synthetic samples still fall short of faithfully substituting genuine exemplars. Second, current recognition models are predominantly closed-set systems, which are prone to making mistakes when confronted with unknown characters. Endowing the classifier with a principled rejection capability and enabling it to infer unseen characters through reasoning constitutes the foremost technical bottleneck. Furthermore, models in this era predominantly rely on unimodal visual features, lacking comprehension of the text semantic content within divinatory inscriptions, which hinders further decipherment of OBI characters.

Stage 4: Large multimodal model-based cross-modality perception and interpretation

Recently, the rapid advancement of large multimodal models (LMMs)55,56 (https://openai.com/gpt-5/and https://blog.google/products/gemini/gemini-3/) has led to a paradigm shift in many fields57,58,59, exhibiting unprecedented potential with capabilities in task automation, methodological innovation, and interactive application development. These models have fundamentally transformed OBI research by integrating multiple modalities, enabling cross-modality perception and interpretation for OBIs. The maturation of vision-language alignment (e.g., contrastive learning) allows the field to move beyond isolated character recognition toward contextual decipherment, where the model integrates visual glyph features with divinatory textual semantics, closely mimicking the multi-source evidentiary approach used by human experts. As a prominent example of this trend, OBI-Bench2 has for the first time evaluated 23 LMMs in five main OBI processing tasks, including recognition, rejoining, classification, retrieval, and deciphering, and demonstrated the potential of LMMs in processing tasks demanding expert-level domain knowledge and cognition. Afterwards, specific visual-language OBI models fine-tuned from current foundation models, such as OracleSage60, OracleFusion61, and Peng et al.’s model62, extract hierarchical visual information (glyph structure) to assist the reasoning reliability of LMMs for deciphering OBI characters visually and textually. The recently proposed OracleAgent63, an agent system designed for structured management and retrieval of OBI-related information, integrates multiple OBI analysis tools linked by large language models (LLMs), achieving efficient character, document, interpretive text, and image retrieval. These approaches are effective for familiar or clean OBIs but struggle with noise-contaminated, original, cross-modal OBI inputs. Some pictograph-centric deciphering frameworks limit their applicability, since the major part of the undeciphered OBI is ideographic. Meanwhile, due to the lack of a large-scale, comprehensive OBI corpus, these LMM architectures do not perform well on surface-level pattern matching and static knowledge retrieval, let alone dynamic hypothesis generation and multi-step logical reasoning64.

In this view, this limitation may catalyze the development of chain-of-thought (CoT) reasoning-based OBI deciphering. Furthermore, from a learning method perspective, reinforcement learning-enhanced multimodal reasoning demonstrated by DeepSeek-R155 can become another research direction to foster a new line of multimodal reasoning work for OBI processing. Taken together, the OBI processing evolution from conventional expert-led laborious pipelines to emerging multimodal processors outlines a clear trajectory toward more trustworthy, adaptive, all-round expert-friendly AI systems. In the following sections, we provide a detailed analysis of each stage, from data to methodology and evaluation metric, as well as the emerging research directions that shape the future of OBI processing.

Data sources for OBI information processing

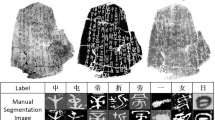

Data source forms the foundation for conducting OBI research. OBI processing from excavation to digital preservation typically involves the processes of data augmentation, recognition, rejoining, classification, and the ultimate decipherment, as suggested by OBI-Bench2. Basically, the OBI datasets are used to train and test automatic models in the respective tasks, whereas emerging OBI datasets are generally designed for some specific OBI processing problems and applications. Since our objective is to conduct a comprehensive search to encompass the full landscape of the field, we do not explicitly set specific criteria for dataset filtering. In general, we mainly imposed constraints on the temporal scope so that only datasets published in the past ten years (i.e., 2015-2025), representing the era of data-driven OBI processing with clear task orientation, are included. Besides, we excluded those internal or private datasets with no technical documentation or accessibility for research comparison. Fig. 2 provides an overview of 36 datasets proposed for four main OBI processing tasks: recognition, rejoining, classification/retrieval, and deciphering. Information, including the publication year, appearance type, data source, the number of samples and classes, image resolution, and availability, as well as the file format, is summarized. Detailed introduction of these datasets and their data sources can be found below. Figures 4 and 5 show some visualization examples.

The image displays fi ve common appearances of OBI from excavation to synthesis, including original oracle bones, inked rubbings, oracle bone fragments, cropped samples, and handprinted OBI characters.

Licensed under CC BY 4.0 (http://creativecommons.org/licenses/by/4.0/).

Online resources

There are many online OBI resources available for public learning and research, which also serve as the main data sources for constructing OBI datasets and promoting computational paleography. Here, we briefly introduce nine representative web platforms. Figure 6 visualizes their interfaces.

-

Yin Qi Wen Yuan (https://jgw.aynu.edu.cn/home/) is a comprehensive and open-access knowledge-sharing platform for OBI studies, which is maintained by Anyang Normal University. It serves as a crucial data source by integrating three main components, i.e., a character database, a collection of oracle bone inscriptions, and a library of academic literature, housing an extensive collection that includes 154 types of OBI publications, 239,902 images of oracle bones, and 35,585 published research works. It offers advanced visualization and search tools, allowing for tracing their appearances across various inscriptions and examining their form and context of use.

-

Xiao Xue Tang (https://xiaoxue.iis.sinica.edu.tw/) is a major scholarly resource chronicling the evolution of Chinese character forms and sounds, which is jointly developed and maintained by the Department of Chinese Literature at National Taiwan University and the Digital Culture Center at Academia Sinica. This platform allows researchers to query and compare the various forms of a character as it evolved, beginning from its earliest appearances in OBI, making it an invaluable tool and data repository for paleographic analysis.

-

Guo Xue Da Shi (https://www.guoxuedashi.com/) is a large-scale, comprehensive online database dedicated to Chinese classical studies and traditional culture. Its key utility for an OBI review lies in its extensive dictionary and character database. The site hosts digitized versions of critical paleographic reference works, most notably an oracle bone script dictionary, a compilation of bronze inscriptions, Shuowen Jiezi, and the Kangxi dictionary.

-

Zhui Yu Lian Zhu (https://www.fdgwz.org.cn/ZhuiHeLab/Home), established by the Zhui He Lab at Fudan University, is a specialized database that stores a growing collection of over 6,600 instances of oracle bone fragment splicing. It provides essential metadata, including the original publication and fragment numbers of the joined pieces, the source of the splicing information, and the name of the scholar credited with the rejoining.

-

Omniglot (https://www.omniglot.com/chinese/jiaguwen.htm) is an online encyclopedia of writing systems and languages. Its page on “Oracle Bone Script” serves as a tertiary, introductory data source. It provides a concise summary of the script’s history, its function in divination, and a comparative chart of selected character examples with their modern equivalents and meanings. It also functions as an aggregator of external links to more specialized academic websites.

-

Multi-function Chinese Character Database (https://humanum.arts.cuhk.edu.hk/Lexis/lexi-mf/), hosted by the Humanum Computing Research Center at The Chinese University of Hong Kong, is a comprehensive lexicographical and etymological resource. It provides an extensive collection of ancient character forms, including a dedicated oracle bone component table and numerous OBI glyphs. The database links these ancient forms to their modern counterparts, offering detailed etymological analyses, component breakdowns, and phonetic information, making it an invaluable tool for tracing the morphological evolution of characters from the Shang Dynasty.

-

Yin Xu OBI Database (https://obid.ancientbooks.cn/), contributed mainly by Prof. Nianfu Chen, contains 59,591 oracle bones and 143,856 divination inscriptions. Each item consists of a description section and an interpretation section, while recording the classification of the oracle bone inscriptions, the specific source and citation, as well as the group of the diviner.

-

Chinese Etymology (https://hanziyuan.net), created by Richard Sears (also known as “Uncle Hanzi”), is a comprehensive digital etymological database, compiling a massive collection of ancient Chinese character forms, including over 31,000 oracle bone characters, over 24,000 bronze characters, and over 49,000 seal characters.

-

OBI AI Collaborative Platform (https://www.jgwlbq.org.cn/home) is an advanced database and online research platform developed in collaboration with partners such as Tencent and academic institutions. It collects approximately 1,430,000 oracle bone single characters, 4,184 lemmata, and nearly 680,000 identified glyphs. It allows users to search for a character and retrieve its modern equivalent, meanings, and evolutionary path.

All nine platforms generally include functions such as glyph-based oracle bone character search and modern interpretation reference.

Print resources

Books and monographs on OBI have long served as the principal source through which the public studied these materials before database technologies matured. Below, we provide a brief overview of representative materials from five key perspectives, including record, hand copy, transcription, periodization, and lexicon.

-

Record: The most famous record of OBI from the last century was compiled by Prof. Moruo Guo and is known as the “Collections of oracle-bone inscriptions"14. It is a monumental 13-volume compendium that represents a milestone in OBI studies. It systematically organizes 41,956 items, including rubbings, photographs, and transcriptions drawn from the first 80 years of oracle bone discoveries.

-

Facsimile: “Corpus of Oracle-Bone Facsimiles”18, edited by Prof. Tianshu Huang, is the largest published archive of inscribed plastromancy remains, housing 70,659 fragmentary pieces that are all redrawn from the clearest extant rubrics or photographs. Each facsimile is tagged for class, optimal exemplar, previous joins, and duplicates, and is paired on the same opening with transcription and concordance indices, yielding a consultable reference for script evolution and divinatory syntax.

-

Transcription: “Collection of Explanations on Oracle-Bone Inscriptions”16, contributed by Prof. Xingwu Yu, assembles over 4600 oracle-bone glyphs with all major scholarly glosses. It contains radical, glyph type summary table, and Pinyin indices, offering a systematic benchmark for subsequent studies of Shang script morphology.

-

Periodization: “Categorization and time division of the Oracle inscriptions for the king in Yin ruins”65, written by Prof. Tianshu Huang, applies rigorous typology to split the corpus into twenty font-based classes, embedding each within a two-lineage development theory. By integrating joins and calendrical algorithms, this book validates the relative sequence of Zu, Bin Zu, and Li Lei inscriptions, supplying a replicable methodology now exported to bronze chronology, geographical investigation, and Shang syntax studies.

-

Lexicon: “New Compendium of Oracle-Bone Characters”66, edited by Prof. Zhao Liu, digitizes thousands of high-resolution Shang glyph images by automated cropping and binarization, while Western-Zhou forms are hand-traced and then scanned. Integrating over 100 previous collections from Heji onward, the volume incorporates the latest decipherments and joins, offering the most comprehensive and systematically arranged paleographic dataset available for quantitative and diachronic studies of early Chinese writing.

OBI Datasets for Recognition

In OBI recognition datasets, each image is densely annotated with axis-aligned or rotated boxes that localize every character region. By converting rubbings into precisely labeled imagery, they provide the training substrate for convolutional neural network (CNN)7,9, Transformer67, and SAM-based68 detectors that can isolate characters amid complex fracture lines, background noise, and overlapping impressions. The resulting models not only accelerate the digital archiving for downstream tasks such as interpretation but also provide an objective, reproducible metric (e.g., mean average precision (mAP), intersection over union (IoU)) with which to benchmark new archivers against expert-drawn gold standards.

-

YinQiWenYuandetection (https://jgw.aynu.edu.cn/home/down/detail/index.html?sysid=3): It is released on the Yin Qi Wen Yuan website, which contains 9823 rubbing images and 9134 annotation files that include the regional coordinates of individual characters in each image.

-

OracleBone-800033: It contains 7824 OBI rubbing images from ref. 14 and 128,770 annotated character instances, with the characteristics of being highly imbalanced and sparse. A deep learning-based scene text detection algorithm is first employed to roughly predict the bounding box of every single character. Then the experts specify the sequence of each character at the sentence-level for fine-grained annotation.

-

ACCID52: ACCID was constructed to satisfy both radical-level and character-level annotations to satisfy the requirements of the above-mentioned methods.

-

O2BR2: O2BR consists of 800 original oracle bone images and 4211 bounding boxes. Its source contents were downloaded from the open museum led by the Institute of History and Philology in Academia Sinica under an appropriate license. Two domain experts and one senior OBI scholar were involved in the annotation and quality assessment processes.

OBI datasets for rejoining

Normally, an OBI rejoining dataset contains assembled high-resolution rubbings and expert-verified splice correspondences. By providing positive and negative edge labels between pieces, it enables machine-learning models to learn fracture contours, inscription continuity, and stratigraphic compatibility, dramatically reducing the search space for human specialists. Such a dataset also supplies calibrated benchmarks for evaluating automated rejoining algorithms or models, thereby accelerating the reconstruction of complete OBI and subsequent historical, linguistic, and paleographic analysis. Below, we list the existing OBI rejoining datasets.

-

OB-Rejoin34: Its rejoining results are manually retrieved from books such as “Collections of Oracle-Bone Inscriptions”, “Catalog of Oracle Bone Rejoinings”, and “The Fifth Collection of Oracle Bone Rejoinings”, as well as multiple digital repositories. It contains 998 rubbing images covering various writing styles with 249 pairs of known rejoinings. Specifically, only the top and bottom borders of the OB fragments are stroked in this work. Six domain experts and two senior OBI scholars participated in the annotation process using a digital drawing tablet with commercial photo editing software.

-

COBD54: COBD contains 480 groups (960 images) of joinable oracle bone contour curve images and 1083 unvalidated OB rubbing images. Each group includes two OB rubbing images: the upper and lower OB fragment rubbing images.

-

OBI-rejoin2: OBI-rejoin comprises 200 complete OBI pieces with 483 rejoinable fragments scraped from the website of the Pre-Qin Research Office at the Institute of History and the Chinese Academy of Social Sciences. Four OBI experts were involved in the annotation process. To reproduce the authentic conditions of oracle bone fractures, annotators manually tear the complete version on appropriate borders, utilizing their domain expertise to induce central or peripheral fractures. Its open-source nature provides a verifiable channel for the subsequent development of rejoining algorithms.

-

OBFI35: OBFI, with a total of 5,374 oracle bone images, consists of four subdatasets, i.e., ZLC, BZ, LS, and DJ, covering yellowing, high contrast, low contrast, and grayscale scenarios, respectively. It also includes more than 138,855 target area images, with 115,893 unrejoinable and 22,962 rejoinable target area images.

OBI datasets for classification and retrieval

Different from recognition datasets, OBI classification datasets are centered on holistic, image-level labels rather than localized annotations. Currently, the dominant classification and retrieval standards are formulated based on the semantic meaning of the oracle bone characters. Below, we introduce some representative datasets. Figure 7 shows the class distribution with a typical long-tail characteristic that exists in current OBI datasets.

-

Oracle-20k43: The first large-scale OBI dataset, Oracle-20k, is composed of 20,039 oracle character instances belonging to 261 categories collected from the website Chinese Etymology. Its characters are a handprinted version extracted and modified by later generations from original OBI rubbings.

-

OBC30669: OBC306, collected from eight authoritative oracle bone publications, is the largest existing OBI dataset, which contains 309,551 samples classified into a total of 306 classes. However, the number of samples in each class varies significantly, ranging from 1 to 25,898, leading to a long-tailed distribution.

-

Oracle-AYNU44: Oracle-AYNU consists of 2583 categories covering 39,062 instances with 2 to 287 instances per category. All of them are binary images and are fixed to a size of 64 × 64.

-

HWOBC70: HWOBC is a handprinted oracle bone character dataset, containing 83,245 single-character samples belonging to 3,881 categories. Each of them is fixed to 400 × 400 and follows a special font named AYJGW, which possesses a high intra-class similarity.

-

Oracle-50k71: Oracle-50k, sourced from three digital OBI websites, consists of 59,081 instances divided into 2668 classes, where a long-tail distribution exists, making its classification problem naturally a few-shot learning problem.

-

OBI-IJDH (http://www.ihpc.se.ritsumei.ac.jp/OBIdataseIJDH.zip): It contains 655 OBI character images divided by 29 categories with mixed Japanese and English labels.

-

Oracle-25072: Oracle-250 consists of 250 most frequently used characters in OBI. Due to the uneven distribution of various categories, the authors adopt ata augmentation algorithms to balance the sample size of the original data. At last, the total number of samples is 92,160.

-

Radical-14872: Radical-148 is constructed based on 148 main component types in OBI. The author invited 700 volunteers to handwrite some OBI components to balance the sample size, resulting in 108,989 samples.

-

OBI12573: OBI125 includes 4257 OBIs that belong to 125 classes, obtained by scanning and archiving the OBIs in the collection of the Shanghai Museum (Volume I)74.

-

OBI-10030: OBI-100 contains 100 character classes, covering various types of characters, such as animals, plants, humanity, society, etc., with a total of 4748 samples.

-

Oracle-24175: Oracle-241, sampled from the Yin Qi Wen Yuan website, contains 78,565 handprinted and scanned rubbing characters of 241 categories.

-

ORCD76: ORCD is an oracle radical character dataset, containing 1288 rubbing samples and 5412 handprinted oracle radicals in 64 categories.

-

OCCD76: OCCD is collected from image synthesis technology and online handwritten OBI collection. The total number of samples is 62,186 images and 1320 categories. It contains 54,876 characters composed of single radicals and 7310 characters copied from existing oracle-combined characters in the font library.

-

OracleRC51: OracleRC, sourced from ref. 77, includes 2005 OBI characters that can be categorized into 202 radicals and 14 structural relations. All radicals and structural relations were annotated by eight linguists.

-

Oracle-MNIST78: Oracle-MNIST dataset, originated from the Yin Qi Wen Yuan website, contains 30,222 28 × 28 grayscale oracle bone character images from 10 classes. It is relatively class-balanced, ranging from 3399 instances to 2,328 instances per class. Its MNIST-aligned format makes it easy to conduct benchmarking on existing classifiers and algorithms.

-

OBI component 2079: This dataset was designed for a component-level retrieval task, namely, using an OBI component to retrieve all characters containing this component. It comprises 9245 OBI characters and 1012 component images, covering 20 distinct components.

First row from left to right: OBC306, Oracle-241, and Oracle-50k. Second row from left to right: OBI125, HUST-OBC, and EVOBC.

OBI datasets for deciphering

OBI decipherment datasets normally pair cropped OBI glyph images with their modern Chinese equivalents or textual glosses, providing the supervised signal needed to bridge the historical orthographic gap. By aligning un-deciphered characters with known characters or semantic annotations, they enable sequence-to-sequence or contrastive-learning models to discover semantic and structural regularities, yielding candidate readings for previously opaque OBI characters.

-

OBI-ECC80: OBI-ECC, sourced from the Guo Xue Da Shi website, consists of images of the evolution of existing Chinese characters, including OBIs, bronze inscriptions, seal script, official script, and regular script. It has 972 categories, with each category containing 5 images.

-

EVOBC81: EVOBC contains six evolutionary stages of the Chinese character, including oracle bone characters, bronze inscriptions, seal script, spring and autumn characters, warring states characters, and clerical script. Its oracle bone character part consists of 75,681 images representing 3077 distinct character categories.

-

HUST-OBC82: HUST-OBC encompasses a total of 77,064 images of 1588 individual deciphered scripts and 62,989 images of 9411 undeciphered characters. Its alignment with modern Chinese characters facilitates the qualitative evaluation of interpretation models.

-

ACCP83: ACCP is expanded upon EVOBC and HUST-OBC to encompass characters from another two periods. Concretely, 9354 characters (1662 AD–1722 AD) from the Kangxi dictionary and 88,899 regular characters are added.

-

OracleSem60: OracleSem is a semantically enriched OBI dataset based on HUST-OBS and EVOBC, which incorporates rich semantic annotations that capture multiple layers of linguistic information. It provides detailed annotations for each character about its pictographic composition, structural organization, and semantic evolution pathway. The OracleSem dataset comprises 1762 OBI characters, with each character associated with 10 to 20 images.

-

GEVOBC84: GEVOBC is a graph-based evolutionary OBI dataset transformed from ref. 80. It contains 3780 Chinese character images classed into 756 groups, where each group contains five evolutionary shapes of an image. Each image is first processed with key point extraction, followed by node connection based on its strokes.

-

PD-OBS62: PD-OBS is a pictographic deciphering oracle bone script (OBS) dataset with multiple sources, comprising 47,157 Chinese characters annotated with OBS images and detailed radical-pictographic analysis texts. Among these, 3,173 and 10,968 characters are associated with OBS image and clerical script images, respectively.

-

PictOBI-20k5: PictOBI-20k was designed to evaluate large multimodal models (LMMs) on the visual decipherment tasks of pictographic OBI characters, i.e., correlating OBI characters with real scenes. It comprises 15,175 different OBI character images from 80 pictographs and 4833 corresponding object images with various question-answer pairs constructed for evaluating LMMs.

Emerging OBI datasets

Beyond the aforementioned traditional OBI processing tasks, numerous studies have also proposed relevant datasets targeting specific issues in OBI research. For example, since most OBI images are in the rubbing form that suffers from severe image degradation caused by limited shooting conditions, constructing a high-quality oracle character dataset becomes rather challenging. Shi et al.85 proposed RCRN, an OBI character image dataset containing 1467 and 139 noisy-clean image pairs, for OBI character image restoration. Later, Li et al.3 introduced Oracle-P15k, a structure-aligned OBI dataset with 14,542 images across 239 classes that covers four common noises in OBI rubbings for OBI character generation and denoising, significantly increasing the scale of OBI datasets for training generative models. Another development direction for OBI datasets is multimodality. Most existing OBI datasets only focus on or are annotated in one or a few dimensions, limiting the potential utility of their application. To this end, Li et al.86 presented an oracle bone inscriptions multi-modal dataset (OBIMD), which includes annotation information for 10,077 pieces of oracle bones. Specifically, it contains detection boxes (coordinates), character categories, transcriptions, corresponding inscription groups, and reading sequences in the groups of each oracle bone character, providing comprehensive annotations. Similarly, RMOBS61 comprises over 20k entries across 900 deciphered OBS characters with pictographic components. Each entry consists of an oracle glyph, a concept, the key components, and a bounding box layout, facilitating fine-grained OBI decipherment model training. Overall, we anticipate that additional OBI datasets tailored to specific tasks will be released in the future, and we especially hope to see large-scale, open-source, and sentence-level OBI corpora that will facilitate the training of dedicated LLMs for the field of OBI and ancient text.

Observations

We further summarize the core characteristics for the aforementioned 36 OBI datasets based on labels, utilities, strengths, and limitations in Table 3. Please note that this does not imply any superiority or inferiority between these datasets. Instead, it provides researchers with some suggestions from the perspectives of ease of use, verifiability, and reproducibility. Here, we make the following observations.

First, the current OBI dataset ecosystem is characterized by a trade-off between scale and granularity. Large-scale datasets such as OBC30669 and HWOBC70 provide sufficient data volume for deep learning but often lack the fine-grained structural or semantic annotations required for higher-order reasoning. Conversely, datasets like OBIMD86 and OracleSem60 offer radical-level and component insights but at a much smaller scale, often limited to a few hundred categories. Second, the long-tail distribution remains the most persistent limitation across the field, reflecting the historical reality of the divination corpus. Third, the lack of original bone images (as opposed to rubbings) in datasets like HUST-OBC82 and EVOBC81 limits the deployment of models in actual archeological excavations where rubbings have not yet been produced. Overall, the efficacy of OBI processing is fundamentally constrained by the data substrate quality. Researchers must navigate three primary visual challenges: authentic noise, resolution constraints, and distribution imbalance.

OBI processing tasks and approaches

In this section, we merely review those AI technique-based OBI processing approaches. From a methodological perspective, the early expert-led empirical methods were relatively uniform, i.e., literature consultation or genealogical comparison, as mentioned above.

OBI preprocessing: data augmentation and synthesis

The deployment of data-driven deep learning models in OBI analysis is fundamentally constrained by the quality and quantity of available training data. Unlike general computer vision tasks supported by massive, clean datasets, OBI research faces unique challenges that render data augmentation not merely beneficial, but strictly necessary. These challenges stem from three primary dimensions: physical degradation, image fidelity, and statistical distribution2,6,13,87.

First, after enduring millennia of burial and exposure, OBI artifacts have suffered extensive natural erosion and anthropogenic damage. The majority of excavated materials exist as fragmented remnants rather than complete bones, resulting in severe semantic discontinuity and information loss, where crucial glyphs are often partially missing or obliterated. Second, in the early stages, the primary method for storing and disseminating OBI was the rubbing, which often suffers from low image fidelity. These images are frequently characterized by complex background noise and texture artifacts4,69, as shown in Fig. 8. A critical challenge lies in the visual ambiguity between genuine character strokes and accidental surface cracks or chipping. Without robust augmentation to simulate these noise patterns, models struggle to disentangle semantic features from background interference. Third, current OBI datasets generally suffer from the long-tailed distribution characteristic, exhibiting extreme class imbalance. Direct training on such skewed data causes overfitting to head classes while failing to generalize to tail classes. To address these problems, researchers have resorted to refining and expanding the dataset through various methods such as geometric transformations88,89,90, noise injection6,91, U-Net architecture92,93, and generative synthesis (e.g., generative adversarial networks (GAN)73,94,95 and diffusion models3,96,97).

This figure illustrates four typical noise types: stroke-broken, bone-cracked, abnormal edges, and dense white regions.

Traditional data augmentation methods employ rotation, scaling, flipping, and affine transformations to simulate variations in the spatial arrangement and writing styles of inscriptions. In88,90, the authors further applied cutting, brightness changing, and contrast adjustment to increase the diversity of data. Besides, Gaussian noise addition is also used to simulate the distortion in the OBI rubbing images. However, such operations fail to introduce substantive semantic variations or novel stroke patterns, and heuristic noise injection is often too simplistic to model the complex, non-stationary degradation in real rubbings, potentially leading the model to learn irrelevant artifacts. Orc-Bert71 leveraged a self-supervised BERT model pre-trained on large unlabeled Chinese character datasets to generate sample-wise augmented samples. Afterwards, concatenated with Gaussian noise, the model further performed point-wise displacement to improve diversity. Subsequently, the emergence of generative models marked a paradigm shift in data augmentation. These models enabled the synthesis of high-quality, realistic samples with controllable attributes, going beyond simple geometric variations. Yue et al.73 proposed a dynamic data augmentation strategy that utilizes the Wasserstein GAN-GP model98 to learn and generate a new OBI dataset, followed by a dynamic selector, to keep the dataset content balanced. In94, a CycleGAN-based OBI data generation strategy was proposed, which learns the mapping between the glyph image data domain and the real sample data domain. Similarly, Gao et al.95 proposed a two-stage decomposition GAN to augment OBI samples exclusively from existing data, which learns an unidirectional mapping to transform low-quality samples into high-quality ones while ensuring enhanced realism and diversity. More recently, Li et al.3 proposed a controllable pseudo OBI image generator by incorporating glyphs and styles through a stable diffusion-based image translation architecture for expanding the tail data. Specifically, users can take those easily accessible rubbing images as a noise style and transfer the handprinted version of scarce OBI characters to realistic noisy versions.

In addition to expanding the number of training samples, researchers also propose to restore the OBI rubbing images through denoising and inpainting techniques to enhance their recognizability. Figure 9 visualizes some OBI denoising and inpainting results. Yang et al.92 formulated a large kernel convolutional attention-based U-Net framework to restore OBI images, which consists of two modified U-Net networks that perform the edge inpainting and overall image inpainting function, respectively. In ref. 99, Wang et al. introduced a two-stage GAN-based model that incorporates a dual discriminator structure to capture both global and local image information. A spatial attention mechanism and a multi-level fusion loss function are proposed to keep the accuracy of restoration. In ref. 93, the authors designed a U-Net-based cross-modal data homogenization module to unify heterogeneous data representations, which transfers rubbings into handwritings to bypass the problem of rubbing recognition. Diao et al.100 presented a glyph extraction-driven image generation network for high-precision OBI restoration, leveraging character glyphs as supplementary information to address complex degradations while preserving the original glyph structures. Similarly, Zhang et al.90 developed a structure-aware diffusion model for OBI restoration, which contains an adaptive dynamic adjustment mechanism to perceive the hierarchical structure of the reconstructed image. Li et al.4 proposed OBIFormer, a fast attentive denoising framework for OBI images, which employs channel-wise self-attention, glyph extraction, and a selective kernel feature fusion module, achieving superior denoising results on multiple OBI datasets while being computationally efficient. Moreover, Orpaint97 leverages the reverse generative capabilities of diffusion models and integrates the visual state space (VSS) block101, achieving significant reductions in time and computational costs compared to those U-Net architectures with multi-head self-attention, serving as an efficient and cost-effective solution for OBI inpainting.

Performance visualization of different OBI denoising methods trained on the RCRN (blue)85 and Oracle-P15K (green) datasets, adapted from ref. 3. Right: Comparison of different OBI inpainting models on the irregular mask, adapted from ref. 99. Licensed under CC BY 4.0 (http://creativecommons.org/licenses/by/4.0/).

OBI recognition

Generally, after being unearthed, an OBI fragment will undergo recognition to extract independent characters, thereby proceeding to downstream tasks. Here, we follow the standard object recognition definition102,103 that an OBI recognition task should include both oracle character localization and identification (Fig. 10). This also distinguishes this subsection from the OBI classification. Specifically, we introduce both traditional pattern recognition-based methods and modern deep learning-based methods.

Traditional pattern recognition

Early pattern recognition approaches are anchored in handcrafted feature extraction and classical classifiers, including structural descriptors, stroke-level graphs, directional histograms, and elastic matching. For example, Zhou et al.104 regarded the OBI character as a non-directional graph and extracted its topological properties to make a two-stage recognition. Meng et al.87 proposed a four-directional scan labeling to reduce the noise in the OBI rubbings, and developed a template matching-based OBI recognition method. In refs. 105,106, they further utilized line features and compared similarities in Hough space. Qu et al.107 developed a recognition method by analyzing the topological features of OBI, such as topological feature points, connected domains, and genus. However, their reliance on laboriously tuned parameters and brittle stroke segmentation routines has limited scalability to the glyph variability exhibited by tens of thousands of unearthed fragments.

Deep representation learning-based recognition

Driven by the rapid advances in deep learning-based generic object recognition, studies in the OBI domain have also shifted toward finer-grained goals that simultaneously localize and categorize every character instance in an image, generating bounding boxes together with confidence scores that quantify the likelihood of each character’s presence6,13,108. Over the past ten years, a large number of automatic OBI recognition approaches combining models such as Fast R-CNN109, single-shot detector (SSD)8, YOLO9, and their variants, have been proposed.

In ref. 110, the authors proposed to detect OBI characters using Faster R-CNN7, while using multi-scale feature fusion to improve recognition capabilities. Meng et al.111 remoulded SSD, enabling it to fit images with higher resolution and to adapt to the recognition of small characters. Liu et al.112 redesigned the sizes and ratios of the anchor box according to the data characteristics by using K-means clustering. To stabilize features and suppress noise, a spatial pyramid block was proposed, offering more robust recognition results. Fujikawa et al.31 implemented YOLO-v3 tiny to perform a rough recognition first and used MobileNet to further inspect the unrecognized areas. Following the multiple optimized versions of YOLO-v3, Wang et al.113, Wen et al.32, Zhen et al.114, and Meng et al.115 further applied the YOLO-v4, YOLO-v5, and YOLO-v8 to OBI recognition tasks, respectively, significantly enhancing the recognition speed and accuracy. More recently, Xiong et al.116 proposed FDW-YOLO, an improved YOLO-v12 for OBI detection, which has a feature focusing diffusion pyramid network design to enhance the integration of multi-scale features. Together, a dynamic mixed convolution block is designed to reconstruct the network structure, helping the model adaptively extract and fuse effective information under complex backgrounds. The resulting FDW-YOLO surpasses vanilla YOLO-v12 in both F1-score and mAP50. Beyond these, Li et al.117 utilized oracle character prototypes and contrastive loss as supervisory signals, cyclically decoupling Oracle features and isolating information-rich character structure features, which effectively mitigates the influence of noise on OBI recognition. Different from mainstream approaches, Fu et al.118 performed OBI detection with the employment of information from multilabel annotations rather than single-location information. A pseudo-label predictor is plugged after the backbone network for learning the particular structure prior to each inscription, thereby improving the recognition precision. In ref. 119, Tao et al. leveraged the OBI font library dataset as prior knowledge to enhance feature extraction in the detection network through clustering-based representation learning.

Furthermore, researchers have also studied the radical-based recognition task since radicals are important components for composing characters and expressing semantics. In ref. 76, Lin et al. combined the maximally stable extremal regions algorithm to generate single radical data annotation on OBIs and then integrated multi-scale features to implicitly extract radical features for radical detection. Diao et al.52 pre-defined 14 categories of character structures, such as single-body, surrounding, left-right, and up-down structures, and adopted a divide-and-conquer recognition strategy by decomposing characters into radicals for benchmarking zero-shot OBI character recognition methods.

Despite these advances, challenges still remain. In the full pipeline of OBI processing, recognition, as an intermediate step, is now governed more by data distribution, pre-processing fidelity, and morphological diversity than by model architecture. Currently, incremental recognition network refinement may no longer deliver the interpretability that OBI experts demand in practice.

OBI rejoining

Unlike other types of OBI processing tasks that have achieved unprecedented results with the advancement of AI technologies, rejoining studies have been painstakingly laborious up until now. Figure 11 shows four common rejoining bases. Existing OBI rejoining methods can be divided into two categories: contour-matching-based methods and deep-learning-based methods.

Licensed under CC BY 4.0 (http://creativecommons.org/licenses/by/4.0/).

Contour matching-based methods

Early rejoining methods typically employ geometric heuristics and manually extract contour features for matching. In refs. 22,26, Wang et al. proposed an algorithm for paragraph-by-paragraph search and matching of contour fragments for fracture phenomena, as well as a matching technique based on shape function operations. Specifically, contour fragments are described by the Freeman chain code, then matched through cascaded Fourier-moment-shape descriptors to propose joins. After the feature calculation, the oracle bone fragments to be rejoined and the candidate ones can be denoted by eigenvectors Fr and Fc. Then, a function is employed to calculate their similarity:

where FN denotes the length of the eigenvector. Based on this similarity score, an OBI rejoining system can determine the connectivity between two fragments. Later, Tian et al.120 further improved the curve matching process and introduced a partial-to-global curve matching algorithm. An OBI fragment is first labeled red by experts to distinguish the contour curves. Then, position correction is applied to unify dimensions and orientation. At the feature extraction stage, the inclination angle and horizontal distance are recorded as the basic local features of the curves. To obtain the global features descriptor, the authors further extract the global discrete points by collecting coordinate points, then denoising them with Gaussian smoothing. At last, a Pearson correlation analysis is adopted to compare the closeness of the two sets of characteristic vectors, where a curve fitting degree analysis algorithm is included to narrow down the number of candidate curves. Moreover, in ref. 33, Zhang et al. formulated the OBI rejoining task as a time series comparison task and transformed the stroked borderline curves into numerical time series. A simple tolerance difference similarity measure was devised for differentiating two time series.

Deep learning-assisted methods

Most of the deep learning-based OBI rejoining approaches share the same procedures as traditional methods in contour representation that utilize similar edge coordinate matching algorithms, while differing in the subsequent discrimination process. For example, in ref. 36, the authors proposed a deep rejoining scheme for automatic OBI rejoining, where an edge equal-distance rejoining method was first used to locate the matching position of the edges of two fragment images, and then a CNN was used to evaluate the similarity of texture in the target area image. Similarly, Zhang et al.121 proposed an internal similarity network to automatically rejoin the OBI fragment image. At the beginning, an edge equidistant matching algorithm was given to search for similar coordinate sequences of edge segment pairs. Then, an internal similarity pooling layer was employed to compute the internal similarity of the convolution feature gradient map. Recently, Zhang et al.35 further enhanced the effectiveness of edge matching by proposing a longest similar edge segment algorithm and a complete image rejoining method. Yuan et al.54 presented SFF-Siam, which includes a similarity feature fusion (SFF) module and a backbone feature extraction network to combine inputs and evaluate the similarity, respectively. Different from the aforementioned approaches, S3-Net34 combines GAN and the Siamese network for data augmentation and measuring the similarity of contour curves to rejoin the OBI fragments.

Furthermore, we include a very related OBI duplicates discovery task, which can be regarded as a matching task involving fragments of the same structure, represented by Zhang et al.’s work122. They designed a progressive OBI duplicate discovery framework that combines low-level keypoint matching with text-centric content-based matching to refine and rank the candidate OBI duplicates with semantic awareness. Specifically, two unsupervised methods, Superpoint123 and Lightglue124, were used for keypoint extraction and mapping, respectively. Afterwards, affine transformations were applied in each image pair for coordinate alignment. Finally, after localizing the OBI characters in each image, a Siamese network was used to compute the content similarity to discover duplicates.

OBI classification and retrieval

OBI Classification is normally to learn a mapping function \({f}_{\theta }:{\mathcal{X}}\to {\mathcal{Y}}\), predicting the character category y for a given input OBI image x. Given a query image, OBI retrieval aims to rank candidate images from a database based on their semantic or visual similarity to a query image. These tasks are particularly challenging due to the characteristics such as various appearances of OBI data and long-tailed distribution.

Supervised deep learning

The early OBI classification methods basically adopt the same models as those used in general image classification. As a pioneering work, Guo et al.43 combined a Gabor-related low-level representation and a sparse-encoder-related mid-level representation for OBI characters, which is also complementary to CNN-based models. Huang et al.69, Liu et al.45, and Fu et al.30 benchmarked traditional classification models including AlexNet125, VGG126, ResNet-5028, ResNet-10128, and Inception127, on a large-scale OBI dataset (OBC306) and a self-curated damaged OBI dataset, which serves as baselines for the development of subsequent algorithms. Chen et al.38 designed a two-step oracle materials classification, where shield grain and tooth grain are detected to divide multiple areas on an OBI image. A CNN is then used to extract the features of each region for final classification. Gao and Liang89 utilized the isomorphism and symmetry invariance of OBIs to distinguish complicated oracle variants, thereby ensuring the classification accuracy. In addition, Jiang et al.128 proposed OraclePoints, which represent OBI images as hybrid neural representations combining image and point-set features, making it easier and more effective to distinguish characters from noise. OraclePoints can be easily integrated with existing models, facilitating both classification and retrieval tasks.

Meanwhile, OBI retrieval approaches mainly focus on deep metric learning, which extends the classification paradigm by optimizing distance metrics. Liu and Wang129 combined deep neural networks (DNN) and K-means clustering technology for the retrieval of OBI images. Ding et al.40 adopted a Siamese network-based image retrieval method to learn feature representations of similar and dissimilar images. To overcome the vast morphological gap accumulated over millennia, Wu et al.49 proposed a cross-font OBI image retrieval network, which employs a Siamese framework to extract deep features from character images with various fonts, exploring structure clues in different resolutions by multi-scale feature integration.

Zero-shot and few-shot learning

Essentially, OBI suffers from the problem of data limitation and imbalance, which therefore should be taken as a zero-shot or few-shot learning task. Wu et al.130 introduced OracleGCD, a generalized category discovery (GCD)-based framework that simultaneously handles the recognition of known characters and the discovery and clustering of novel categories. The authors proposed a stroke-aware asymmetric view augmentation mechanism and a logit-based confidence-guided mechanism for feature enhancement and enabling supervised contrastive learning with virtual labels, respectively. In ref. 131, Hu et al. presented Ora-NSC, a semi-supervised approach for OBI fragment classification that integrates mean teacher with FixMatch, utilizing the exponential moving average strategy to enhance the accuracy and stability of pseudo-label prediction. Liu et al.53 proposed a self-supervised learning approach with contrastive masked frequency modeling for OBI classification, named OBI-CMF, which can extract and learn both global and local features of OBI images. Besides, an inter-domain supervision is achieved by learning OBI features in the spatial and frequency domains, which significantly improves the robustness and generalization ability in OBI classification. Besides, mainstream OBI information systems are based on a collection of databases, which limits retrieval to known and paired OBI characters organized by experts. To reduce such reliance, Gao et al.41 presented a method named linking unknown characters (LUC) for searching arbitrary OBI characters, including both deciphered and undeciphered. Specifically, they designed a domain-aware embedding module to narrow the gap between glyph and scanned data, enhancing the utilization of radical prototypes of OBI characters. The core feature aggregation and dimension lifting layers are employed to get a unified and diverse representation for matching. Similar to the recognition task, researchers utilize radical information to facilitate OBI classification and retrieval. Diao et al.51 proposed a zero-shot OBI recognition framework via radical-based reasoning, which includes a radical information extractor and a knowledge graph-based character reasoner for OBI characters to search for their corresponding modern Chinese versions. Similarly, to support a finer-grained OBI deciphering, Hu et al.79 proposed a component-level OBI retrieval task, i.e., using an OBI component to retrieve all OBI characters containing this component. A dual-stream attention-based model and two types of triplets based on components and characters were designed to model the relationships between components and characters.

Cross-modal learning

To mitigate the problem of the scarcity of OBI data, Wang et al.75 proposed a structure-texture separation network (STSN) for joint disentanglement, transformation, adaptation, and recognition of different forms of OBI data, making full use of the majority of handwritten characters and performing cross-modal generation to enhance the discriminative ability of the learned features. The recent OracleAgent63 further brought OBI retrieval to cross-modal vision-text scenarios. Benefit from the adopted agentic architecture and the extensive OBI knowledge base, OracleAgent can achieve diverse retrieval tasks such as character tracing and type finding using natural language.

OBI deciphering

Deciphering OBI remains a formidable challenge due to its abstract nature, structural diversity, and scarcity of glyphs, as well as the absence of contextual information. While the emergence of generative models has greatly changed the situation. Current OBI deciphering methods can be classified into three categories based on their objectives (Fig. 12), i.e., modern Chinese alignment-based methods, visual content alignment-based methods, and text interpretation-based methods.

From left to right: modern Chinese alignment-based deciphering, visual content alignment-based deciphering, and text interpretation-based deciphering..

Modern Chinese alignment-based deciphering

Different from the early recognition-based OBI deciphering, these methods normally employ generative models such as GAN or diffusion models to link OBI characters with modern Chinese. Chang et al.132 proposed Sundial-GAN, which, for the first time, utilizes the capability of multi-GANs to realize a simulation of the ancient character evolutionary process, i.e., transferring an OBI character to its modern Chinese versions. Following this, Guan et al.133 trained a conditional diffusion model named OBSD that utilizes seen categories of OBI characters as a conditional input to generate corresponding images of its modern counterpart. Benefiting from the proposed localized structural sampling technique, OBSD outperforms previous GAN-based frameworks in generation fidelity and recognizability. To make up for the lack of semantic alignment in OBSD, Wu et al.134 presented DCSD-OBI, which incorporates both OBI images and modern Chinese text as dual conditions while integrating visual and semantic information to enhance structural reconstruction and semantic alignment during the reverse diffusion process.

In addition to the above generative model-based methods, there are also alternative approaches that utilize other feature representations for alignment. For example, in ref. 91, Zhang et al. took an unknown OBI character as a query and used an auto-encoder to retrieve suitable image representations in feature space, where subsequent fonts, such as seal character and modern Chinese, are also embedded. In ref. 80, the authors utilized the Siamese network in few-shot learning with binary cross-entropy loss to predict the evolution rules flow of Chinese characters. Wang et al.83 proposed the Puzzle Pieces Picker (P3) method, which deconstructs OBI into foundational strokes and radicals first and then reconstructs them into their modern counterparts through a Transformer model, explicitly utilizing the structural relationships between ancient and modern characters. Similarly, Hu et al.135 proposed a component-level OBI segmentation model that processes OBI characters and OBI components independently to perform accurate segmentation, thereby accelerating the understanding and deciphering process. In ref. 49, the authors utilized historical font intermediaries to overcome glyph gaps and enable effective decipherment of OBI characters. Inspired by the similarity of the elements throughout the evolutionary process, Jiao et al.84 employed a graph-based representation for OBI and modern characters, wherein nodes correspond to key structural points of a character, and edges define their spatial relationships. They demonstrated a higher similarity of graph-based representation than image-based representation in the same character family, providing valuable data support for the semantic reasoning of undeciphered OBI characters. However, over thousands of years, originally pictorial lines may undergo straightening, simplification, or distortion for the sake of writing efficiency, causing the character to lose its original semantics. This happens especially in those undeciphered OBI characters, challenging the practical application of these approaches. Moreover, most failed generation cases targeting modern Chinese characters consist of meaningless strokes, offering little substantive assistance in speculating about the interpretation of OBIs.

Visual content alignment-based deciphering

Compared to pure character-aligned deciphering, visual content-aligned deciphering can rectify semantic drift, creating a direct cognitive bridge that bypasses the confusing errors introduced during thousands of years of stroke evolution. As a pioneering work, Qiao et al.136 conducted a comprehensive human study to show whether participants could indeed make better sense of an oracle glyph subjected to a proper visual guide. Meanwhile, a conditional visual generation task based on an oracle glyph and its semantic meaning was designed to circumvent model training in the presence of a fatal lack of oracle data. V-Oracle137 applied principles of pictographic character formation and framed the OBI deciphering task as a visual question-answering (VQA) problem. The progressive three-stage training paradigm enables V-Oracle to perform advanced reasoning with pictographic explanations. Li et al.61 proposed OracleFusion, which leverages the LMM with enhanced spatial awareness reasoning to ensure a more structured and interpretable representation of oracle glyphs. They also introduced a glyph structure-constrained semantic typography method to generate object-form deciphering results with both semantic integrity and spatial accuracy. More recently, Chen et al. constructed a visual perception benchmark, PictOBI-20K5, to evaluate the visual decipherment ability of general-purpose LMMs on pictographic OBI characters. Besides, a subjective annotation was conducted to investigate the consistency of the reference point between humans and LMMs in visual alignment, offering valuable insights for optimizing the attention mechanism in visual decipherment.

Text interpretation-based deciphering

The most recognized and straightforward deciphering objective is to generate text interpretation. As the first initial attempt, Chen et al.2 introduced OBI-Bench and conducted evaluations systematically on 23 LMMs for examining their potential OBI deciphering abilities. Experiments on OBI characters with different frequencies and formation principles (e.g., pictograph, ideogram, and radical-based variants) reveal the performance map of LMMs, pointing out the direction for subsequent research. At the same time, Jiang et al.60 proposed OracleSage, a cross-modal framework built upon LLaVA-1.5138 for OBI deciphering that integrates hierarchical visual understanding with graph-based semantic reasoning to enhance the plausibility of interpretations. In ref. 62, Peng et al. proposed an interpretable OBI decipherment method based on large vision-language models (LVLM), which combines radical analysis and pictograph-semantic understanding to bridge the gap between glyphs and meanings of OBI, further enhancing the reliability of the OBI deciphering process. More recently, Li et al.63 presented OracleAgent, an LLM-empowered agentic system for structured management and retrieval of OBI-related information. Benefiting from the constructed large OBI knowledge base and the integration of multiple OBI analysis tools, OracleAgent can generate reliable character explanations and meet the diverse needs of public users.

Evaluation of OBI processing models

Objective metrics

The evaluation protocol of OBI processing models normally depends on the task type.

OBI recognition